WO2015033501A1 - Video receiving device, video recognition method, and additional information display system - Google Patents

Video receiving device, video recognition method, and additional information display system

- Publication number

- WO2015033501A1 (PCT/JP2014/003548)

- Authority

- WO

- WIPO (PCT)

- Prior art keywords

- video

- information

- recognition

- video recognition

- content

Classifications

-

- H—ELECTRICITY

- H04—ELECTRIC COMMUNICATION TECHNIQUE

- H04N—PICTORIAL COMMUNICATION, e.g. TELEVISION

- H04N21/00—Selective content distribution, e.g. interactive television or video on demand [VOD]

- H04N21/40—Client devices specifically adapted for the reception of or interaction with content, e.g. set-top-box [STB]; Operations thereof

- H04N21/43—Processing of content or additional data, e.g. demultiplexing additional data from a digital video stream; Elementary client operations, e.g. monitoring of home network or synchronising decoder's clock; Client middleware

- H04N21/431—Generation of visual interfaces for content selection or interaction; Content or additional data rendering

- H04N21/4312—Generation of visual interfaces for content selection or interaction; Content or additional data rendering involving specific graphical features, e.g. screen layout, special fonts or colors, blinking icons, highlights or animations

- H04N21/4316—Generation of visual interfaces for content selection or interaction; Content or additional data rendering involving specific graphical features, e.g. screen layout, special fonts or colors, blinking icons, highlights or animations for displaying supplemental content in a region of the screen, e.g. an advertisement in a separate window

-

- G—PHYSICS

- G06—COMPUTING; CALCULATING OR COUNTING

- G06V—IMAGE OR VIDEO RECOGNITION OR UNDERSTANDING

- G06V20/00—Scenes; Scene-specific elements

- G06V20/40—Scenes; Scene-specific elements in video content

-

- G—PHYSICS

- G06—COMPUTING; CALCULATING OR COUNTING

- G06V—IMAGE OR VIDEO RECOGNITION OR UNDERSTANDING

- G06V20/00—Scenes; Scene-specific elements

- G06V20/40—Scenes; Scene-specific elements in video content

- G06V20/46—Extracting features or characteristics from the video content, e.g. video fingerprints, representative shots or key frames

-

- H—ELECTRICITY

- H04—ELECTRIC COMMUNICATION TECHNIQUE

- H04N—PICTORIAL COMMUNICATION, e.g. TELEVISION

- H04N21/00—Selective content distribution, e.g. interactive television or video on demand [VOD]

- H04N21/20—Servers specifically adapted for the distribution of content, e.g. VOD servers; Operations thereof

- H04N21/23—Processing of content or additional data; Elementary server operations; Server middleware

- H04N21/234—Processing of video elementary streams, e.g. splicing of video streams, manipulating MPEG-4 scene graphs

- H04N21/23424—Processing of video elementary streams, e.g. splicing of video streams, manipulating MPEG-4 scene graphs involving splicing one content stream with another content stream, e.g. for inserting or substituting an advertisement

-

- H—ELECTRICITY

- H04—ELECTRIC COMMUNICATION TECHNIQUE

- H04N—PICTORIAL COMMUNICATION, e.g. TELEVISION

- H04N21/00—Selective content distribution, e.g. interactive television or video on demand [VOD]

- H04N21/40—Client devices specifically adapted for the reception of or interaction with content, e.g. set-top-box [STB]; Operations thereof

- H04N21/47—End-user applications

- H04N21/472—End-user interface for requesting content, additional data or services; End-user interface for interacting with content, e.g. for content reservation or setting reminders, for requesting event notification, for manipulating displayed content

- H04N21/4722—End-user interface for requesting content, additional data or services; End-user interface for interacting with content, e.g. for content reservation or setting reminders, for requesting event notification, for manipulating displayed content for requesting additional data associated with the content

-

- H—ELECTRICITY

- H04—ELECTRIC COMMUNICATION TECHNIQUE

- H04N—PICTORIAL COMMUNICATION, e.g. TELEVISION

- H04N21/00—Selective content distribution, e.g. interactive television or video on demand [VOD]

- H04N21/40—Client devices specifically adapted for the reception of or interaction with content, e.g. set-top-box [STB]; Operations thereof

- H04N21/47—End-user applications

- H04N21/472—End-user interface for requesting content, additional data or services; End-user interface for interacting with content, e.g. for content reservation or setting reminders, for requesting event notification, for manipulating displayed content

- H04N21/4722—End-user interface for requesting content, additional data or services; End-user interface for interacting with content, e.g. for content reservation or setting reminders, for requesting event notification, for manipulating displayed content for requesting additional data associated with the content

- H04N21/4725—End-user interface for requesting content, additional data or services; End-user interface for interacting with content, e.g. for content reservation or setting reminders, for requesting event notification, for manipulating displayed content for requesting additional data associated with the content using interactive regions of the image, e.g. hot spots

-

- H—ELECTRICITY

- H04—ELECTRIC COMMUNICATION TECHNIQUE

- H04N—PICTORIAL COMMUNICATION, e.g. TELEVISION

- H04N21/00—Selective content distribution, e.g. interactive television or video on demand [VOD]

- H04N21/40—Client devices specifically adapted for the reception of or interaction with content, e.g. set-top-box [STB]; Operations thereof

- H04N21/47—End-user applications

- H04N21/482—End-user interface for program selection

- H04N21/4828—End-user interface for program selection for searching program descriptors

-

- H—ELECTRICITY

- H04—ELECTRIC COMMUNICATION TECHNIQUE

- H04N—PICTORIAL COMMUNICATION, e.g. TELEVISION

- H04N21/00—Selective content distribution, e.g. interactive television or video on demand [VOD]

- H04N21/40—Client devices specifically adapted for the reception of or interaction with content, e.g. set-top-box [STB]; Operations thereof

- H04N21/47—End-user applications

- H04N21/485—End-user interface for client configuration

- H04N21/4856—End-user interface for client configuration for language selection, e.g. for the menu or subtitles

-

- H—ELECTRICITY

- H04—ELECTRIC COMMUNICATION TECHNIQUE

- H04N—PICTORIAL COMMUNICATION, e.g. TELEVISION

- H04N21/00—Selective content distribution, e.g. interactive television or video on demand [VOD]

- H04N21/40—Client devices specifically adapted for the reception of or interaction with content, e.g. set-top-box [STB]; Operations thereof

- H04N21/47—End-user applications

- H04N21/488—Data services, e.g. news ticker

- H04N21/4884—Data services, e.g. news ticker for displaying subtitles

-

- H—ELECTRICITY

- H04—ELECTRIC COMMUNICATION TECHNIQUE

- H04N—PICTORIAL COMMUNICATION, e.g. TELEVISION

- H04N21/00—Selective content distribution, e.g. interactive television or video on demand [VOD]

- H04N21/40—Client devices specifically adapted for the reception of or interaction with content, e.g. set-top-box [STB]; Operations thereof

- H04N21/47—End-user applications

- H04N21/488—Data services, e.g. news ticker

- H04N21/4888—Data services, e.g. news ticker for displaying teletext characters

-

- H—ELECTRICITY

- H04—ELECTRIC COMMUNICATION TECHNIQUE

- H04N—PICTORIAL COMMUNICATION, e.g. TELEVISION

- H04N21/00—Selective content distribution, e.g. interactive television or video on demand [VOD]

- H04N21/80—Generation or processing of content or additional data by content creator independently of the distribution process; Content per se

- H04N21/81—Monomedia components thereof

- H04N21/8126—Monomedia components thereof involving additional data, e.g. news, sports, stocks, weather forecasts

- H04N21/8133—Monomedia components thereof involving additional data, e.g. news, sports, stocks, weather forecasts specifically related to the content, e.g. biography of the actors in a movie, detailed information about an article seen in a video program

-

- H—ELECTRICITY

- H04—ELECTRIC COMMUNICATION TECHNIQUE

- H04N—PICTORIAL COMMUNICATION, e.g. TELEVISION

- H04N21/00—Selective content distribution, e.g. interactive television or video on demand [VOD]

- H04N21/80—Generation or processing of content or additional data by content creator independently of the distribution process; Content per se

- H04N21/83—Generation or processing of protective or descriptive data associated with content; Content structuring

- H04N21/835—Generation of protective data, e.g. certificates

- H04N21/8352—Generation of protective data, e.g. certificates involving content or source identification data, e.g. Unique Material Identifier [UMID]

-

- H—ELECTRICITY

- H04—ELECTRIC COMMUNICATION TECHNIQUE

- H04N—PICTORIAL COMMUNICATION, e.g. TELEVISION

- H04N21/00—Selective content distribution, e.g. interactive television or video on demand [VOD]

- H04N21/80—Generation or processing of content or additional data by content creator independently of the distribution process; Content per se

- H04N21/83—Generation or processing of protective or descriptive data associated with content; Content structuring

- H04N21/835—Generation of protective data, e.g. certificates

- H04N21/8358—Generation of protective data, e.g. certificates involving watermark

-

- H—ELECTRICITY

- H04—ELECTRIC COMMUNICATION TECHNIQUE

- H04N—PICTORIAL COMMUNICATION, e.g. TELEVISION

- H04N21/00—Selective content distribution, e.g. interactive television or video on demand [VOD]

- H04N21/80—Generation or processing of content or additional data by content creator independently of the distribution process; Content per se

- H04N21/85—Assembly of content; Generation of multimedia applications

- H04N21/858—Linking data to content, e.g. by linking an URL to a video object, by creating a hotspot

- H04N21/8586—Linking data to content, e.g. by linking an URL to a video object, by creating a hotspot by using a URL

-

- H—ELECTRICITY

- H04—ELECTRIC COMMUNICATION TECHNIQUE

- H04N—PICTORIAL COMMUNICATION, e.g. TELEVISION

- H04N5/00—Details of television systems

- H04N5/222—Studio circuitry; Studio devices; Studio equipment

- H04N5/262—Studio circuits, e.g. for mixing, switching-over, change of character of image, other special effects ; Cameras specially adapted for the electronic generation of special effects

- H04N5/265—Mixing

-

- H—ELECTRICITY

- H04—ELECTRIC COMMUNICATION TECHNIQUE

- H04N—PICTORIAL COMMUNICATION, e.g. TELEVISION

- H04N21/00—Selective content distribution, e.g. interactive television or video on demand [VOD]

- H04N21/20—Servers specifically adapted for the distribution of content, e.g. VOD servers; Operations thereof

- H04N21/23—Processing of content or additional data; Elementary server operations; Server middleware

- H04N21/237—Communication with additional data server

-

- H—ELECTRICITY

- H04—ELECTRIC COMMUNICATION TECHNIQUE

- H04N—PICTORIAL COMMUNICATION, e.g. TELEVISION

- H04N21/00—Selective content distribution, e.g. interactive television or video on demand [VOD]

- H04N21/40—Client devices specifically adapted for the reception of or interaction with content, e.g. set-top-box [STB]; Operations thereof

- H04N21/43—Processing of content or additional data, e.g. demultiplexing additional data from a digital video stream; Elementary client operations, e.g. monitoring of home network or synchronising decoder's clock; Client middleware

- H04N21/437—Interfacing the upstream path of the transmission network, e.g. for transmitting client requests to a VOD server

Definitions

- This disclosure relates to a video receiving apparatus that acquires additional information related to a video signal input from the outside and superimposes the additional information on the video signal.

- Patent Document 1 discloses a data processing system.

- In that system, a client device transmits video data over a network to a server device serving as a video recognition device and requests video recognition processing.

- The server device performs video recognition on the received video data and transmits the recognition result back to the client device over the network.

- This disclosure provides a video receiving apparatus, a video recognition method, and an additional information display system that are effective for acquiring additional information related to an externally input video signal and superimposing the acquired additional information on the video signal.

- The video reception device of the present disclosure is configured to transmit and receive data via a communication network. It includes an input unit, a video extraction unit, a video recognition area setting unit, a control unit, and an additional information display control unit.

- The input unit is configured to receive a video signal output from an externally installed video transmission device, together with content-related information that includes feature information indicating characteristics of the video signal.

- The video extraction unit is configured to extract, from the video signal, a partial video for video recognition processing.

- The video recognition area setting unit is configured to set a video recognition area in the partial video based on the feature information included in the content-related information.

- The control unit is configured to perform control for three steps: transmitting content recognition information to a video recognition device connected to the communication network to request video recognition processing, acquiring the result of that processing from the video recognition device, and acquiring additional information based on that result from an additional information distribution device connected to the communication network.

- The additional information display control unit is configured to generate content recognition information in the video recognition area of the partial video.

- The video recognition method of the present disclosure is performed in a video reception device configured to transmit and receive data via a communication network. In this method, a partial video for video recognition processing is obtained from an externally input video signal.

- The additional information display system of the present disclosure includes a video reception device, a video recognition device, and an additional information distribution device, which are configured to transmit and receive data to and from each other via a communication network.

- The video reception device includes an input unit, a video extraction unit, a video recognition area setting unit, a control unit, and an additional information display control unit.

- The input unit is configured to receive a video signal output from an externally installed video transmission device, together with content-related information that includes feature information indicating characteristics of the video signal.

- The video extraction unit is configured to extract, from the video signal, a partial video for video recognition.

- The video recognition area setting unit is configured to set a video recognition area in the partial video based on the feature information included in the content-related information.

- The control unit is configured to perform control for three steps, each via the communication network: transmitting content recognition information to the video recognition device to request video recognition processing, acquiring the result of that processing from the video recognition device, and acquiring additional information based on that result from the additional information distribution device.

- The additional information display control unit is configured to generate content recognition information in the video recognition area of the partial video.

- The video recognition device is configured to perform video recognition processing on the content recognition information received via the communication network. It then transmits the result of that processing to the video reception device via the communication network.

- The additional information distribution device is configured to transmit additional information corresponding to the video recognition result, received via the communication network, to the video reception device via the communication network.
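The request/response flow among the three devices described above can be sketched as follows. This is a minimal in-memory sketch, not the disclosed implementation. The communication network is replaced by direct method calls. The class names `VideoRecognitionServer` and `AdditionalInfoServer`, the function `receiver_step`, and the string identifiers are all hypothetical.

```python
from dataclasses import dataclass, field
from typing import Dict, Optional


@dataclass
class VideoRecognitionServer:
    """Hypothetical stand-in for the video recognition device."""
    # Maps a fingerprint to the analysis information (here just a content ID).
    index: Dict[str, str] = field(default_factory=dict)

    def recognize(self, fingerprint: str) -> Optional[str]:
        return self.index.get(fingerprint)


@dataclass
class AdditionalInfoServer:
    """Hypothetical stand-in for the additional information distribution device."""
    # Maps a content ID to the additional information to display.
    catalog: Dict[str, str] = field(default_factory=dict)

    def lookup(self, content_id: str) -> Optional[str]:
        return self.catalog.get(content_id)


def receiver_step(
    fingerprint: str,
    recognizer: VideoRecognitionServer,
    distributor: AdditionalInfoServer,
) -> Optional[str]:
    """One round trip performed by the video reception device's control unit."""
    content_id = recognizer.recognize(fingerprint)  # video recognition request and result
    if content_id is None:
        return None  # content not identified, so there is nothing to superimpose
    return distributor.lookup(content_id)  # additional information for display


rec = VideoRecognitionServer({"fp-001": "cm-coffee"})
dist = AdditionalInfoServer({"cm-coffee": "Coupon: 10% off"})
print(receiver_step("fp-001", rec, dist))  # prints: Coupon: 10% off
```

The two servers are kept as separate objects to mirror the separation of the video recognition device from the additional information distribution device. Either could be replaced by a networked client without changing `receiver_step`.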

- FIG. 1 is a diagram schematically showing an example of the configuration of the additional information display system in the first embodiment.

- FIG. 2 is a block diagram schematically showing an example of the configuration of the video recognition apparatus and the video reception apparatus in the first embodiment.

- FIG. 3A is a diagram schematically showing an example of a video displayed on the display unit of the video receiving device in the first exemplary embodiment.

- FIG. 3B is a diagram schematically illustrating another example of the video displayed on the display unit of the video receiving device in the first exemplary embodiment.

- FIG. 4A is a diagram schematically showing an example of a video recognition area set in a video by the video recognition area setting unit of the video reception device in the first exemplary embodiment.

- FIG. 4B is a diagram schematically illustrating an example of a fingerprint generation area set based on the video recognition area set by the video recognition area setting unit in the video recognition apparatus according to Embodiment 1.

- FIG. 4C is a diagram schematically illustrating an example of a video recognition area set over the entire video in the video reception device.

- FIG. 4D is a diagram schematically illustrating an example of a fingerprint generation area set over the entire video in the video recognition device.

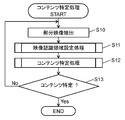

- FIG. 5 is a flowchart schematically showing the operation of the content specifying process performed by the video receiving device in the first embodiment.

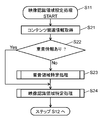

- FIG. 6 is a flowchart schematically showing the operation of video recognition area setting processing performed by the video receiving apparatus in the first embodiment.

- FIG. 7 is a flowchart schematically showing the operation of the overlapping area specifying process performed by the video receiving apparatus in the first embodiment.

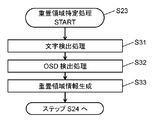

- FIG. 8 is a flowchart schematically showing the operation of the video recognition area specifying process performed by the video receiving apparatus in the first embodiment.

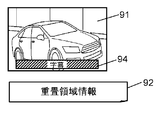

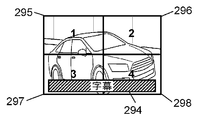

- FIG. 9A is a diagram schematically illustrating an example of a video signal output from the video transmission device and input to the video reception device, and superimposition region information.

- FIG. 9B is a diagram schematically illustrating an example of a video recognition area set by the video recognition area setting unit of the video reception device in the first exemplary embodiment.

- FIG. 9C is a diagram schematically illustrating an example of a display position of additional information displayed on the display unit of the video reception device in the first exemplary embodiment.


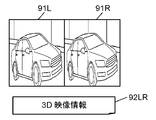

- FIG. 9D is a diagram schematically illustrating an example of a 3D video signal output from the video transmission apparatus and input to the video reception apparatus.

- FIG. 9E is a diagram schematically illustrating an example of a video recognition area set in the 3D video signal by the video recognition area setting unit of the video reception device in the first exemplary embodiment.

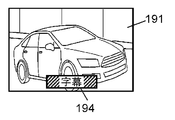

- FIG. 10A is a diagram schematically illustrating an example of a video signal 191 output from the video transmission device and input to the video reception device.

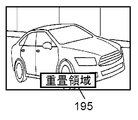

- FIG. 10B is a diagram schematically illustrating an example of a superimposition region set by the video recognition area setting unit of the video reception device in the first exemplary embodiment.

- FIG. 10C is a diagram schematically illustrating an example of a video recognition area set by the video recognition area setting unit of the video reception device in the first exemplary embodiment.

- FIG. 10D is a diagram schematically illustrating an example of a display position of additional information displayed on the display unit of the video reception device in the first exemplary embodiment.

- FIG. 11A is a diagram schematically illustrating an example of a video signal output from the video transmission device and input to the video reception device.

- FIG. 11B is a diagram schematically illustrating an example of a video recognition candidate area set by the video recognition area setting unit of the video reception device in the first exemplary embodiment.

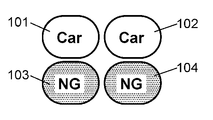

- FIG. 11C is a diagram schematically illustrating an example of a result of content identification processing acquired from the video recognition device by the video reception device in the first exemplary embodiment.

- FIG. 11D is a diagram schematically illustrating an example of a display position of additional information displayed on the display unit of the video reception device in the first exemplary embodiment.

- FIG. 12 is a flowchart schematically showing an operation of content identification processing performed by the video reception device in the first embodiment.

- FIG. 13 is a block diagram schematically illustrating an example of a configuration of a video recognition device and a video reception device according to another embodiment.

- FIG. 1 is a diagram schematically showing an example of a configuration of an additional information display system 10 according to the first embodiment.

- The additional information display system 10 includes a broadcast station 12, an STB (Set Top Box) 14 serving as a video transmission device, a video recognition device 20, an additional information distribution device 30, and a video reception device 40.

- The additional information display system 10 is a communication system that relies on the video recognition technology of the video recognition device 20. It identifies which content the video received by the video reception device 40 belongs to, acquires additional information related to that content from the additional information distribution device 30, and displays it on the video reception device 40.

- The video reception device 40, the video recognition device 20, and the additional information distribution device 30 are connected to each other via the communication network 16.

- The communication network 16 may be wired, wireless, or a mixture of both.

- The communication network 16 is, for example, the Internet, but may instead be an intranet, a commercial line, another communication line, or a mixture of these.

- The video reception device 40 and the STB 14 are connected to each other via a communication interface.

- The communication interface is, for example, HDMI (registered trademark) (High-Definition Multimedia Interface). It may instead be a video cable or a wireless connection such as Wi-Fi (registered trademark), Bluetooth (registered trademark), or a wireless LAN (Local Area Network).

- The broadcast station 12 is a transmission device configured to transmit (broadcast) a broadcast signal.

- The broadcast station 12 converts a television program, consisting of a program body and commercial messages (CMs), into a video signal. It then superimposes the video signal on the broadcast signal and broadcasts it.

- The program body and the CMs alternate with each other over time.

- Hereinafter, the program body and the CMs are referred to as “content”.

- In other words, the broadcast station 12 broadcasts content that changes over time.

- The transmission device is not limited to the broadcast station 12. It may be any device that transmits or broadcasts content that changes over time.

- The broadcast station 12 may also broadcast or transmit, together with the video signal, information indicating characteristics of the content and of its video signal (hereinafter referred to as “feature information”).

- Information that includes the feature information is referred to as “content-related information”.

- Content with distinctive features includes, for example:
  - 3D (three-dimensional) video content (hereinafter also referred to as “3D video”; its video signal is referred to as a “3D video signal”);
  - content whose video has subtitles superimposed on it.

- The content-related information for 3D video may include, for example, feature information on the transmission method of the 3D video signal. Such methods include side-by-side, top-and-bottom, line-by-line, frame-sequential, and so on.
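FIG. 9E shows a video recognition area set in a 3D video signal, but the text here does not say which region is chosen. One plausible reading is to confine recognition to a single view of the frame-packed picture, so that the fingerprint resembles that of the corresponding 2D content. The sketch below is an assumption, not the disclosed method:

- the function `first_view` and the method strings are hypothetical;
- choosing the left, top, or even-line view is also an assumption;
- frames are modeled as lists of rows of grayscale values.

```python
from typing import List

Frame = List[List[int]]  # rows of grayscale pixel values


def first_view(frame: Frame, method: str) -> Frame:
    """Return one eye's view of a frame-packed 3D frame (assumed: left/top/even lines)."""
    if method == "side_by_side":
        half = len(frame[0]) // 2
        return [row[:half] for row in frame]  # left half of every row
    if method == "top_and_bottom":
        return frame[: len(frame) // 2]  # upper half of the rows
    if method == "line_by_line":
        return frame[::2]  # even-numbered rows
    if method in ("frame_sequential", "2d"):
        # Each frame already holds a single view; the caller selects every other frame.
        return frame
    raise ValueError(f"unknown 3D transmission method: {method!r}")
```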

- The content-related information for subtitles may include, for example, feature information on whether subtitles are present, their position and size, and their color and font.

- Feature information on the broadcast format of the broadcast signal may also be included in the content-related information.

- The STB 14 is a receiver (tuner) configured to receive the broadcast signal broadcast by the broadcast station 12 and extract the video signal.

- The STB 14 may have a function of decoding the received video signal.

- The STB 14 receives the channel the user selects from among the channels broadcast by the broadcast station 12. It outputs the video signal of that channel via a communication interface (for example, HDMI (registered trademark)).

- The STB 14 can also receive the content-related information and output it to the video reception device 40.

- The video transmission device is not limited to the STB 14. It may be, for example, a recording device that has a recording function, a broadcast signal receiving function, and a video signal output function.

- The STB 14 may also be configured to detect characteristics of the content, generate content-related information including feature information indicating those characteristics, and output it to the video reception device 40. For example, when 3D video content is broadcast from the broadcast station 12, the STB 14 may detect the 3D video signal transmission method and generate feature information indicating the detected method. It then outputs this feature information to the video reception device 40. Alternatively, when content whose video has subtitles superimposed on it is broadcast from the broadcast station 12, the STB 14 may detect the subtitles and generate feature information indicating their display position and display size. It then outputs this feature information to the video reception device 40.

- When the STB 14 itself superimposes a caption or an OSD (On Screen Display) on the video signal, it may be configured to generate feature information on the display position and display size of the caption or OSD. It then outputs that feature information to the video reception device 40.

- A video transmission device such as the STB 14 may detect features in the video signal broadcast from the broadcast station 12, generate feature information, and output it. Such feature information is also treated as part of the content-related information.

- The video reception device 40 is configured to display, on the display unit 54, video based on an externally input video signal. It is, for example, a television receiver.

- The video reception device 40 is connected to the communication network 16. Through it, the video reception device 40 can exchange data with the video recognition device 20 and the additional information distribution device 30.

- The video reception device 40 in the present embodiment is configured as follows.

- The video reception device 40 uses the video recognition device 20 to perform content specifying processing (content specifying processing based on video recognition processing). The processing applies to the video signal input from the video transmission device (for example, the STB 14) via the communication interface.

- The content specifying process is a process for specifying the content represented by the video signal.

- The video reception device 40 receives the analysis information that the video recognition device 20 transmits as the result of the content specifying process. Based on that analysis information, it acquires additional information related to the content (for example, advertisement information) from the additional information distribution device 30. It then superimposes the acquired additional information on the video signal and displays it on the display unit 54.

- Specifically, the video reception device 40 periodically extracts a partial video by cutting out part of the input video signal. It then generates content recognition information from the partial video and transmits it to the video recognition device 20 via the communication network 16. The transmission is accompanied by a request for content specifying processing based on video recognition processing (hereinafter also referred to as a “video recognition request”).

- The video reception device 40 then acquires the result (analysis information) of the content specifying process for that content recognition information from the video recognition device 20 via the communication network 16.

- It then acquires additional information related to the acquired result (analysis information) from the additional information distribution device 30 via the communication network 16.

- Finally, an image based on the acquired additional information (shown as “additional information 51” in FIG. 1) is superimposed on the video being displayed on the display unit 54. These operations are described in detail later.
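The periodic extraction step can be illustrated with a small generator. The text does not specify how often a partial video is taken or how long it is. The parameters `period` and `length` (both counted in frames) and the name `partial_videos` are hypothetical.

```python
from typing import Iterable, Iterator, List, TypeVar

T = TypeVar("T")


def partial_videos(frames: Iterable[T], period: int, length: int) -> Iterator[List[T]]:
    """Yield `length` consecutive frames from the start of every `period`-frame window.

    The parameter check runs when iteration starts, since this is a generator.
    """
    if not 0 < length <= period:
        raise ValueError("require 0 < length <= period")
    clip: List[T] = []
    for index, frame in enumerate(frames):
        if index % period < length:  # frame falls inside the sampled part of the window
            clip.append(frame)
            if len(clip) == length:
                yield clip
                clip = []  # start a fresh list so the yielded clip is not mutated
```

For example, with `period=4` and `length=2`, frames 0 to 9 yield the clips `[0, 1]`, `[4, 5]`, and `[8, 9]`. A clip left incomplete at the end of the stream is discarded.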

- The video reception device 40 can also receive the content-related information output from the STB 14.

- The content-related information may include feature information indicating the transmission method of the 3D video signal.

- It may also include feature information indicating the location (coordinates) and size of the area on which subtitles are superimposed.

- It may also include feature information indicating the location (coordinates) or size of the area on which a caption or OSD is superimposed.
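The content-related information may give the coordinates and size of a caption or OSD overlay. When it does, the recognition area can be placed so that it avoids that overlay. For example, an OSD added by the STB is not present in the video signal that the video recognition device fingerprints.

The rule below is a hypothetical illustration: it picks the largest full-width or full-height band of the frame that does not touch the overlay. The disclosure's own overlapping-area and recognition-area specifying processes (FIGS. 7 and 8) may differ. The names `recognition_area` and `Rect` are hypothetical.

```python
from typing import Optional, Tuple

Rect = Tuple[int, int, int, int]  # (x, y, width, height) in pixels


def recognition_area(width: int, height: int, overlay: Optional[Rect]) -> Rect:
    """Largest band of the frame that does not overlap the overlay rectangle."""
    if overlay is None:
        return (0, 0, width, height)  # no overlay: use the whole frame
    ox, oy, ow, oh = overlay
    # Clamp the overlay rectangle to the frame.
    x0, y0 = max(0, ox), max(0, oy)
    x1, y1 = min(width, ox + ow), min(height, oy + oh)
    if x0 >= x1 or y0 >= y1:
        return (0, 0, width, height)  # overlay lies entirely outside the frame
    candidates = [
        (0, 0, width, y0),            # band above the overlay
        (0, y1, width, height - y1),  # band below the overlay
        (0, 0, x0, height),           # band left of the overlay
        (x1, 0, width - x1, height),  # band right of the overlay
    ]
    return max(candidates, key=lambda r: r[2] * r[3])
```

For a subtitle strip across the bottom of a 1920x1080 frame, `recognition_area(1920, 1080, (0, 900, 1920, 180))` returns `(0, 0, 1920, 900)`, the area above the strip.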

- The content recognition information is information used to recognize video, such as a fingerprint.

- A fingerprint is a hash value of each image constituting the partial video or moving image.

- The content recognition information may be any information (data) usable for video recognition processing; it is not limited to a fingerprint (hash value).
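The text specifies only that the fingerprint is a hash value of each image, not which hash. The sketch below substitutes a simple average hash (aHash), a common perceptual-hash technique, computed only inside the recognition area. It is an assumption for illustration, not the disclosed hash. The names `average_hash` and `fingerprint`, the 8x8 grid, and the grayscale frame model are hypothetical.

```python
from typing import List, Tuple

Frame = List[List[int]]           # grayscale rows, values 0-255
Rect = Tuple[int, int, int, int]  # (x, y, width, height)


def average_hash(frame: Frame, area: Rect, grid: int = 8) -> int:
    """grid*grid-bit hash of `area`: a bit is 1 where a cell is brighter than the mean."""
    x, y, w, h = area
    if w < grid or h < grid:
        raise ValueError("area smaller than the hash grid")
    cells = []
    for gy in range(grid):
        for gx in range(grid):
            # Average the pixels that fall into this grid cell (never empty when w, h >= grid).
            r0, r1 = y + gy * h // grid, y + (gy + 1) * h // grid
            c0, c1 = x + gx * w // grid, x + (gx + 1) * w // grid
            block = [frame[r][c] for r in range(r0, r1) for c in range(c0, c1)]
            cells.append(sum(block) / len(block))
    mean = sum(cells) / len(cells)
    bits = 0
    for value in cells:  # row-major order, first cell becomes the most significant bit
        bits = (bits << 1) | (1 if value > mean else 0)
    return bits


def fingerprint(partial_video: List[Frame], area: Rect) -> List[int]:
    """One hash per image in the partial video."""
    return [average_hash(frame, area) for frame in partial_video]
```

A cryptographic hash of the raw pixels changes completely under any pixel change. An average hash, by contrast, tends to flip only a few bits under small brightness or compression changes, so it pairs naturally with a distance-based lookup.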

- in this embodiment, the additional information is described as “advertising information related to content”.

- however, the additional information is not limited to advertising information.

- for example, it may be tourism information, historical information, person profile information, a URL (Uniform Resource Locator), public gazette information, information about a program being broadcast, or social information such as Twitter (registered trademark).




- the video recognition device 20 is a server device connected to the communication network 16 and is a Web site that performs content specifying processing based on video recognition processing.

- the content specifying process is a process of performing the video recognition process based on the received fingerprint and specifying the content represented by the fingerprint based on the result of the video recognition process.

- the video recognition device 20 performs analysis on the content broadcast by the broadcast station 12 and video recognition processing using the result of the analysis.

- the video recognition device 20 acquires substantially all content broadcast from the broadcast station 12. Then, the acquired content is analyzed, and the time, capacity, broadcast format, content, genre, characters, time table, etc. of the content are examined to generate analysis information. In addition, the video recognition device 20 creates a fingerprint from the video signal of the content acquired from the broadcast station 12.

- the video recognition device 20 may acquire the content in either of two ways:
  - by receiving the broadcast signal from the broadcast station 12;
  - by receiving a video signal transmitted from the broadcast station 12 over a dedicated video line or the like.

  The analysis may be performed automatically, for example, or manually by an operator.

- the analysis information, which is the analysis result, is stored in the storage unit 23 of the video recognition device 20 together with information related to the content.

- when the video recognition device 20 receives a video recognition request accompanied by a fingerprint from the video reception device 40 via the communication network 16, it collates that fingerprint against the fingerprints generated in advance and stored in the storage unit 23 (video recognition processing). It then identifies the content corresponding to the fingerprint. In this way, the video recognition device 20 performs content identification processing, which determines from which content the partial video underlying the received fingerprint was created. It then reads the analysis result (analysis information) related to the identified content from the storage unit 23. Finally, it returns that information to the video reception device 40 via the communication network 16 as the result of the content identification processing.


- the video recognition processing (content identification processing based on the video recognition processing) by such a method is also referred to as “ACR (Automatic Content Recognition)”.

- the additional information distribution device 30 is a server device connected to the communication network 16 and is a Web site (advertisement distribution site) that holds and distributes advertisement information of various products.

- the additional information distribution device 30 receives, via the communication network 16, the result of the content identification processing transmitted from the video reception device 40. This result is the analysis information that the video reception device 40 acquired from the video recognition device 20. The additional information distribution device 30 then transmits additional information related to that result (analysis information) to the video receiver 40 via the communication network 16.

- This additional information is, for example, advertisement information related to the content specified by the content specifying process.

- FIG. 2 is a block diagram schematically showing an example of the configuration of the video recognition device 20 and the video reception device 40 in the first embodiment.

- FIG. 2 shows the main circuit blocks related to the operation described in this embodiment. Functions and circuit blocks related to other operations are omitted so that the operation described in the present embodiment is easier to understand.

- each circuit block shown in FIG. 2 may be composed of an independent circuit. Alternatively, it may be realized by a processor executing a program created to implement one or more of the circuit blocks shown in FIG. 2.

- the video recognition device 20 is a server device including an HTTP (Hypertext Transfer Protocol) transmission / reception unit 21, a search unit 22, and a storage unit 23.

- the video recognition device 20 is configured to provide a content identification processing service based on the video recognition processing to the video reception device 40 via the communication network 16.

- the HTTP transmission / reception unit 21 is a communication interface, for example, a communication adapter conforming to the Ethernet (registered trademark) standard.

- the HTTP transmission / reception unit 21 is configured to be able to transmit / receive data to / from the video reception device 40 via the communication network 16.

- the storage unit 23 is a storage device composed of, for example, an HDD (Hard Disk Drive) or the like.

- the storage unit 23 is configured to store a fingerprint of content broadcast from the broadcasting station 12 and analysis information of an analysis result for the content in association with the content. This fingerprint is, for example, a hash value of each image constituting the moving image.

- for each piece of content (for example, a program main body or a CM), the storage unit 23 stores the fingerprint and the analysis result (analysis information) in association with each other. This storage takes place with a slight time delay (for example, 10 seconds) from the broadcast.

- the analysis result (analysis information) may include, for example, a program title, a CM title, a program outline, a CM outline, a character, a location related to a video, a URL, and the like.

- when the search unit 22 receives a video recognition request accompanied by a fingerprint from the video receiving device 40 via the HTTP transmission / reception unit 21, it performs content identification processing based on video recognition processing using that fingerprint. It then returns the result (analysis information) to the video reception device 40 via the HTTP transmission / reception unit 21.

- the search unit 22 receives a video recognition request with a fingerprint transmitted from the video reception device 40 via the communication network 16 and the HTTP transmission / reception unit 21.

- the received fingerprint is collated with the fingerprint stored in the storage unit 23, and a fingerprint corresponding to the received fingerprint is searched (video recognition process).

- the content corresponding to the fingerprint specified by the search result is set as the content corresponding to the received fingerprint (content specifying process).

- the search unit 22 specifies content corresponding to the received fingerprint.

- the search unit 22 reads the analysis result (analysis information) associated with the identified content from the storage unit 23. It returns this result to the video receiver 40 via the HTTP transmission / reception unit 21 and the communication network 16.
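The search steps above (collate, identify, return analysis information) can be sketched as follows. The sketch assumes each stored fingerprint is a list of per-frame integer hashes. It also assumes that "corresponding" means a small mean Hamming distance at the best temporal alignment. The distance measure, the `max_distance` threshold, and every name here are hypothetical. The publication does not specify how the collation is scored.

```python
from dataclasses import dataclass
from typing import Dict, List, Optional, Sequence


@dataclass
class AnalysisInfo:
    """Hypothetical subset of the analysis information kept in storage unit 23."""
    title: str
    outline: str


def hamming(a: int, b: int) -> int:
    """Number of differing bits between two frame hashes."""
    return bin(a ^ b).count("1")


def best_alignment_distance(query: Sequence[int], stored: Sequence[int]) -> float:
    """Smallest mean per-frame Hamming distance over every position of the
    query inside the stored fingerprint (inf if the query does not fit)."""
    if not query or len(query) > len(stored):
        return float("inf")
    best = float("inf")
    for offset in range(len(stored) - len(query) + 1):
        total = sum(hamming(q, stored[offset + i]) for i, q in enumerate(query))
        best = min(best, total / len(query))
    return best


def identify_content(
    query: Sequence[int],
    fingerprints: Dict[str, List[int]],
    analysis: Dict[str, AnalysisInfo],
    max_distance: float = 2.0,
) -> Optional[AnalysisInfo]:
    """Collation (video recognition) followed by content identification.
    Returns None when no stored fingerprint is close enough."""
    best_id, best_d = None, float("inf")
    for content_id, stored in fingerprints.items():
        d = best_alignment_distance(query, stored)
        if d < best_d:
            best_id, best_d = content_id, d
    if best_id is None or best_d > max_distance:
        return None  # identification failed
    return analysis[best_id]
```

Returning `None` on failure mirrors the later description, where the video recognition device 20 may send a fixed value such as “NG” when the content cannot be identified.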

- the video receiver 40 includes the following blocks:
  - a control unit 41;
  - an HTTP transmission / reception unit 42;
  - an operation signal reception unit 43;
  - a video output unit 44;
  - a display unit 54;
  - a video extraction unit 45;
  - an additional information display control unit 46;
  - a storage unit 47;
  - a video recognition area setting unit 66;
  - a video input unit 48.

- the video receiving device 40 uses the video recognition device 20 to perform content identification processing based on video recognition processing, and operates as follows:
  - it acquires analysis information, the result of that processing, from the video recognition device 20;
  - it acquires additional information related to the analysis information (for example, advertisement information) from the additional information distribution device 30;
  - it displays an image based on the additional information (for example, advertisement information related to the video) on the display unit 54, superimposed on the video (content) based on the received video signal.

- the HTTP transmission / reception unit 42 is a communication interface, for example, a communication adapter that conforms to the Ethernet (registered trademark) standard.

- the HTTP transmission / reception unit 42 is configured to be able to transmit / receive data to / from the video recognition device 20 via the communication network 16.

- the operation signal reception unit 43 is configured to receive an operation signal (an operation signal for the video reception device 40). The signal is transmitted by an operation unit (not shown) that has accepted a user operation, such as a remote control device (hereinafter abbreviated as “remote control”).

- the operation signal reception unit 43 may also be configured to receive a signal from a remote control having a gyro sensor. That remote control transmits the signal based on a physical variation occurring in it.

- the video input unit 48 is a receiving circuit and a decoder. It includes a receiving unit 49 configured to receive a broadcast signal transmitted by a broadcasting station. It also includes an input unit 65 configured to input a video signal and content-related information output from a video transmitting device (for example, the STB 14).

- the video signal received by the video input unit 48 includes content (program body and CM, etc.) that changes over time.

- the receiving unit 49 is configured to receive a broadcast signal transmitted from the broadcast station 12 via an antenna (not shown) or the like.

- the input unit 65 is an interface configured to input a video signal and content related information output from a video transmission device installed outside.

- the input unit 65 is configured to conform to, for example, the HDMI (registered trademark) standard, and can receive a video signal and content related information transmitted from the video transmission device via the HDMI (registered trademark).

- the video transmission device is, for example, the STB 14, but may be a video recording / playback device or the like.

- the input unit 65 may also be configured to receive a video signal and content-related information transmitted via a video cable, or transmitted by wireless communication.

- the video output unit 44 has a function of controlling the display unit 54. It controls the display unit 54 based on the video signal input from the video input unit 48 so that a video based on that signal is displayed on the display unit 54. When additional information is input from the control unit 41, the video output unit 44 superimposes an image based on the additional information on the video being displayed on the display unit 54.

- the display unit 54 is a display configured to display an image based on an image signal, and is, for example, an LCD (Liquid Crystal Display).

- the display unit 54 may be a PDP (Plasma Display Panel), an OLED (Organic Electro Luminescence Display), or the like.

- the additional information display control unit 46 is configured to perform display control of additional information. Specifically, it generates a fingerprint from the partial video extracted by the video extraction unit 45. It then requests the video recognition device 20 to perform content identification processing based on the generated fingerprint (content identification processing based on video recognition processing). This fingerprint is, for example, a hash value of each image constituting the partial video.

- the additional information display control unit 46 generates a fingerprint in the effective area (video recognition area or video recognition candidate area) set by the video recognition area setting unit 66. Details of these processes will be described later.

- the additional information display control unit 46 also determines where on the video being displayed on the display unit 54 the image (or character string) based on the acquired additional information is to be superimposed. It bases this determination on the result of the setting process performed by the video recognition area setting unit 66, and outputs an instruction based on the determination to the control unit 41.

- the video extraction unit 45 is configured to extract a partial video from the video signal input from the video input unit 48.

- the video extraction unit 45 is configured to extract a partial video that is a part of the video signal for a predetermined time from the video signal at a predetermined cycle. For example, if the predetermined period is 3 seconds and the predetermined time is 3 seconds, the video extraction unit 45 repeats the operation of extracting a partial video of 3 seconds every 3 seconds from the video signal. That is, the operation of continuously extracting the partial video for 3 seconds from the video signal every 3 seconds without a gap is repeated. For example, if the predetermined period is 15 seconds and the predetermined time is 3 seconds, the video extraction unit 45 repeats the operation of extracting a partial video of 3 seconds every 15 seconds from the video signal.

- the partial video extracted from the video signal is not limited to extraction in units of seconds.

- the partial video may be extracted with a predetermined number of frames at the video frame rate.
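The period and duration arithmetic in the extraction examples can be checked with a short sketch. It works in frames, so it covers both the seconds-based and the frame-count-based variants. The 30 fps frame rate and the function names are assumptions made for illustration.

```python
from typing import List, Tuple


def seconds_to_frames(seconds: float, fps: float) -> int:
    """Convert a duration in seconds to a whole number of frames."""
    return round(seconds * fps)


def extraction_windows(total_frames: int, period: int, length: int) -> List[Tuple[int, int]]:
    """Half-open [start, end) frame ranges of the partial videos: one window of
    `length` frames starting every `period` frames, kept only if it fits."""
    if period <= 0 or length <= 0:
        raise ValueError("period and length must be positive")
    return [(s, s + length) for s in range(0, total_frames - length + 1, period)]


if __name__ == "__main__":
    fps = 30  # assumed frame rate
    total = seconds_to_frames(30, fps)  # a 30-second stretch of video
    three = seconds_to_frames(3, fps)
    fifteen = seconds_to_frames(15, fps)
    # Period 3 s, length 3 s: back-to-back windows with no gap.
    print(extraction_windows(total, three, three)[:3])  # [(0, 90), (90, 180), (180, 270)]
    # Period 15 s, length 3 s: one 3-second window every 15 seconds.
    print(extraction_windows(total, fifteen, three))    # [(0, 90), (450, 540)]
```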

- the video recognition area setting unit 66 performs a video recognition area setting process on the partial video extracted by the video extraction unit 45.

- specifically, the video recognition area setting unit 66 detects superimposition information, such as subtitles and an OSD (On Screen Display), superimposed on the partial video. It then sets an effective area, either based on the detection result or based on the feature information included in the content-related information.

- the effective area is a video recognition area or a video recognition candidate area. That is, the video recognition area setting unit 66 sets the video recognition area or the video recognition candidate area based on the detection result of the superimposition information or based on the feature information included in the content related information.

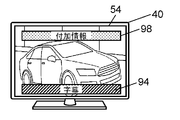

- FIG. 3A is a diagram schematically showing an example of a video displayed on the display unit 54 of the video receiving device 40 in the first exemplary embodiment.

- FIG. 3B is a diagram schematically showing another example of a video displayed on the display unit 54 of the video receiving device 40 in the first exemplary embodiment.

- FIG. 3A shows an example in which the STB 14 outputs the video signal received from the broadcast station 12 to the video receiving device 40 as it is, and the video 50 based on the video signal is displayed on the display unit 54 of the video receiving device 40.

- FIG. 3B shows an example in which the STB 14 superimposes the caption 94 on the video signal received from the broadcast station 12 and outputs it to the video receiver 40. The video based on that signal (the video 50 with the caption 94 superimposed) is displayed on the display unit 54.

- some video transmission apparatuses, such as the STB 14, can superimpose subtitles, an OSD, and the like onto the video signal transmitted from the broadcasting station 12, on the apparatus side.

- subtitles, OSDs, and the like that are superposed on video signals by the video transmission device are also referred to as “superimposition information”.

- for example, when the STB 14 superimposes the caption 94 on the video signal transmitted from the broadcasting station 12 and outputs it, the display unit 54 of the video receiver 40 shows the caption 94 over the video 50, as in FIG. 3B.

- however, the video of the same content that the video recognition device 20 acquires from the broadcast station 12 is the video 50 without the caption 94, as shown in FIG. 3A.

- thus, suppose a video transmission device such as the STB 14 superimposes superimposition information, such as the caption 94, on the video signal transmitted from the broadcasting station 12. The video displayed on the display unit 54 of the video reception device 40 then differs in what it shows from the video that the video recognition device 20 acquires from the broadcast station 12, even though both are the same content.

- therefore, the video recognition area setting unit 66 performs the video recognition area setting process, using one of two approaches:
  - it detects the display position and display size of the superimposition information and sets a video recognition area in the region excluding that information;
  - it sets a video recognition area based on the feature information included in the content-related information.

  A fingerprint is then generated within the video recognition area.

- the video reception device 40 calculates the hash value of each image constituting the partial video in the video recognition area or the video recognition candidate area to obtain a fingerprint.

- the caption 94 shown in FIG. 3B is merely an example of superimposition information. The display position and display size of superimposition information are not limited to those of the caption 94 shown in FIG. 3B. The same applies to the captions shown in other drawings.

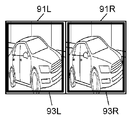

- FIG. 4A is a diagram schematically showing an example of the video recognition area 71 set in the video 50 by the video recognition area setting unit 66 of the video receiving apparatus 40 in the first embodiment.

- FIG. 4B is a diagram schematically illustrating an example of a fingerprint generation area set in the video recognition device 20 in the first exemplary embodiment. This area is based on the video recognition area 71 set by the video recognition area setting unit 66.

- FIG. 4C is a diagram schematically illustrating an example of the video recognition area 72 set on the entire surface of the video 50 in the video receiving device 40.

- FIG. 4D is a diagram schematically illustrating an example of a fingerprint generation area set on the entire surface of the video 50 in the video recognition device 20.

- in FIGS. 4A to 4D, the video recognition areas 71 and 72, which are fingerprint generation areas, are indicated by bold lines. These bold lines are not actually displayed on the display unit 54.

- FIG. 4A shows an example in which the STB 14 superimposes a caption 94, which is an example of superimposition information, on the video 50, and the video recognition area setting unit 66 sets the video recognition area 71 in an area excluding the caption 94.

- FIG. 4C shows an example in which the video recognition area 72 is set in an area including the subtitle 94 (the entire surface of the video 50) even though the STB 14 superimposes the subtitle 94 on the video 50. At this time, the subtitle 94 is not superimposed on the video 50 acquired from the broadcast station 12 by the video recognition device 20 as shown in FIGS. 4B and 4D.

- FIG. 4C is shown only for comparison with the operation example of FIG. 4A. It does not indicate that the video recognition area setting unit 66 sets a video recognition area on the entire surface of the video 50 despite having detected superimposition information such as the caption 94.

- in the example shown in FIG. 4C, the additional information display control unit 46 generates a fingerprint in the area including the caption 94.

- the video recognition device 20 generates a fingerprint in the video recognition area 72 of the video 50 as shown in FIG. 4D. There is no caption 94 in this area. For this reason, the fingerprint generated by the video receiving device 40 and the fingerprint generated by the video recognizing device 20 do not match each other, and the content identifying process may fail in the video recognizing device 20.

- in contrast, in the example shown in FIG. 4A, the video recognition area setting unit 66 obtains the display area of superimposition information such as the caption 94 (hereinafter referred to as the “superimposition area”). It either detects this area or specifies it from the feature information in the content-related information. It then sets the video recognition area 71 in an area excluding the superimposition area.

- the additional information display control unit 46 of the video receiver 40 then generates a fingerprint in the video recognition area 71, which excludes the superimposition area.

- the video recognition device 20 generates a fingerprint in a video recognition area 71 similar to that of the video reception device 40.

- as a result, this configuration yields two benefits compared with the example shown in FIGS. 4C and 4D:
  - the fingerprint generated by the video receiving device 40 and the fingerprint generated by the video recognition device 20 are relatively more likely to match;
  - the content identification processing in the video recognition device 20 is relatively more likely to succeed.
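The effect described for FIGS. 4A to 4D can be illustrated with a toy example. The sketch uses a simple average hash, which is an illustrative assumption and not the publication's hash function. It also assumes a caption that occupies the bottom row of a tiny 4x4 frame. Hashing the whole frame gives different values on the two sides. Hashing only a recognition area that excludes the caption gives identical values. The frame contents and helper names are hypothetical.

```python
from typing import List, Sequence

Frame = List[List[int]]  # assumed: grayscale luminance values, 0-255


def average_hash(frame: Sequence[Sequence[int]]) -> int:
    """Illustrative per-image hash: one bit per pixel, set when >= frame mean."""
    pixels = [p for row in frame for p in row]
    mean = sum(pixels) / len(pixels)
    bits = 0
    for p in pixels:
        bits = (bits << 1) | int(p >= mean)
    return bits


def crop(frame: Frame, top: int, left: int, height: int, width: int) -> Frame:
    """Pixels inside a rectangular video recognition area."""
    return [row[left:left + width] for row in frame[top:top + height]]


# Frame as acquired by the video recognition device 20 from the broadcast station.
broadcast: Frame = [
    [10, 20, 200, 210],
    [15, 25, 205, 215],
    [12, 22, 202, 212],
    [30, 40, 50, 60],
]
# Same frame as displayed by the video receiver 40, with a caption in the bottom row.
displayed: Frame = [row[:] for row in broadcast[:3]] + [[255, 255, 255, 255]]

# Recognition area excluding the caption: top 3 rows, full width.
area = dict(top=0, left=0, height=3, width=4)

full_match = average_hash(broadcast) == average_hash(displayed)
area_match = average_hash(crop(broadcast, **area)) == average_hash(crop(displayed, **area))
print(full_match, area_match)  # False True
```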

- the region where the fingerprint is generated in the video recognition device 20 is set based on the video recognition region set by the video recognition region setting unit 66.

- the video receiving apparatus 40 may be configured to transmit information indicating the video recognition area set by the video recognition area setting unit 66 to the video recognition apparatus 20 together with a fingerprint, for example.
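One way to carry the video recognition area alongside the fingerprint is a small structured request body. The JSON encoding, the field names (`fingerprint`, `area`, `x`, `y`, `width`, `height`), and the helper names are all hypothetical. The publication only says the area information may be sent together with the fingerprint.

```python
import json
from dataclasses import asdict, dataclass
from typing import List


@dataclass
class RecognitionArea:
    """Rectangle in pixel coordinates of the displayed video (assumed convention)."""
    x: int
    y: int
    width: int
    height: int


@dataclass
class VideoRecognitionRequest:
    fingerprint: List[int]  # per-frame hash values of the partial video
    area: RecognitionArea   # area in which the fingerprint was generated


def encode_request(req: VideoRecognitionRequest) -> str:
    """Serialize for the HTTP body sent by the video receiver 40."""
    return json.dumps(asdict(req), sort_keys=True)


def decode_request(body: str) -> VideoRecognitionRequest:
    """Parse on the video recognition device 20, which can then generate its own
    fingerprint in the same area of the broadcast video before collating."""
    data = json.loads(body)
    return VideoRecognitionRequest(
        fingerprint=list(data["fingerprint"]),
        area=RecognitionArea(**data["area"]),
    )


if __name__ == "__main__":
    req = VideoRecognitionRequest([6, 1, 3], RecognitionArea(0, 0, 1920, 900))
    body = encode_request(req)
    print(body)
    assert decode_request(body) == req
```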

- the storage unit 47 is a storage device configured by, for example, a nonvolatile memory.

- the storage unit 47 stores the following:
  - program meta information, such as an electronic program guide (EPG), received by the video input unit 48;
  - additional information acquired from the additional information distribution device 30 via the HTTP transmission / reception unit 42;
  - display control information for the additional information.


- the display control information for the additional information is information for controlling the display of the additional information, and includes information indicating the display period of the additional information, for example.
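The following is a minimal sketch of how a receiver might apply the display period in the display control information. It assumes the period is given as a start time plus a duration. This representation and the field names are assumptions, not taken from the publication.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta


@dataclass
class DisplayControlInfo:
    """Hypothetical display control information for one item of additional information."""
    start: datetime      # when the additional information may first be shown
    duration: timedelta  # how long it stays visible

    def should_display(self, now: datetime) -> bool:
        """True while `now` lies inside the half-open display period."""
        return self.start <= now < self.start + self.duration


if __name__ == "__main__":
    info = DisplayControlInfo(datetime(2014, 7, 1, 20, 0, 0), timedelta(seconds=15))
    print(info.should_display(datetime(2014, 7, 1, 20, 0, 10)))  # True
    print(info.should_display(datetime(2014, 7, 1, 20, 0, 15)))  # False
```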

- the control unit 41 is configured to control each circuit block included in the video reception device 40.

- the control unit 41 consists of, for example, the following:
  - a nonvolatile memory, such as a ROM, that stores programs (application programs and the like);
  - a CPU that executes the programs;
  - a volatile memory, such as a RAM, that temporarily stores data, parameters, and the like while the CPU executes a program.

- examples of control performed by the control unit 41 include the following.

- the control unit 41 acquires content related information input together with the video signal.

- the video extraction unit 45 is controlled so that the video extraction unit 45 extracts partial videos from the video signal at a predetermined cycle.

- each circuit block is controlled to perform two steps:
  - the video recognition area setting unit 66 sets a video recognition area for the extracted partial video from the feature information included in the content-related information;
  - the additional information display control unit 46 generates (calculates) a fingerprint of the partial video within that video recognition area.

- each circuit block is controlled so that the fingerprint is transmitted to the video recognition device 20, together with a video recognition request, via the HTTP transmission / reception unit 42 and the communication network 16. This requests the video recognition device 20 to perform content identification processing (content identification processing based on video recognition processing) on the fingerprint.

- each circuit block is controlled to perform two acquisitions, both via the communication network 16 and the HTTP transmission / reception unit 42:
  - the result (analysis information) of the content identification processing for the fingerprint, from the video recognition device 20;
  - additional information based on that result (analysis information), from the additional information distribution device 30.

- the acquired additional information is stored in the storage unit 47 and output to the video output unit 44, and each circuit block is controlled so that the additional information is superimposed on the video being displayed on the display unit 54 and displayed.

- when the additional information display control unit 46 determines that the additional information is not to be displayed, the control unit 41 controls each circuit block so that the additional information being displayed on the display unit 54 is hidden.

- when the video reception device 40 requests the video recognition device 20 to perform content identification processing based on video recognition processing, it creates a signal (data) indicating the request. The additional information display system 10 may be configured so that this signal is transmitted to the video recognition device 20 as the video recognition request.

- alternatively, such a signal (data) need not be transmitted. The video reception device 40 and the video recognition device 20 may agree in advance that simply transmitting a fingerprint to the video recognition device 20 constitutes a request for content identification processing based on video recognition processing.

- the video reception device 40 performs content specifying processing on the video signal using the video recognition device 20. Then, additional information 51 (for example, advertisement information) related to the result of the content specifying process is acquired from the additional information distribution device 30, and the acquired additional information 51 is superimposed on the video signal and displayed on the display unit 54. In addition, the video receiver 40 displays or hides the acquired additional information 51 (for example, advertisement information) according to the display control information of the additional information acquired together with the additional information 51.


- in this way, the video receiving device 40 generates the fingerprint from the partial video within one of the following areas, both set by the video recognition area setting unit 66:
  - the video recognition area set based on the feature information included in the content-related information;
  - the video recognition area or video recognition candidate area set through the video recognition area setting process.

- FIG. 5 is a flowchart schematically showing the operation of the content specifying process performed by the video reception device 40 in the first embodiment.

- the video extraction unit 45 of the video reception device 40 extracts a partial video from the video signal output from the STB 14 and input to the input unit 65 (step S10).

- the video recognition area setting unit 66 performs a video recognition area setting process (step S11).

- in the video recognition area setting process, the video recognition area setting unit 66 takes one of two approaches:
  - it sets the video recognition area based on the feature information included in the content-related information;
  - it detects, in the partial video extracted by the video extraction unit 45, the region in which superimposition information is displayed (superimposition area), and sets a video recognition area or video recognition candidate area in the region excluding it.

  Details of the video recognition area setting process will be described later.

- the additional information display control unit 46 performs the following content specifying process (step S12).

- the additional information display control unit 46 generates a fingerprint based on the partial video extracted by the video extraction unit 45 and the video recognition area or video recognition candidate area set by the video recognition area setting unit 66.

- the generated fingerprint is transmitted to the video recognition apparatus 20 via the HTTP transmission / reception unit 42 according to an instruction from the control unit 41.

- the video recognition device 20 performs content identification processing based on the video recognition processing using the fingerprint, and sends analysis information (result of content identification processing) related to the identified content to the video reception device 40 via the communication network 16. Send.

- when the content cannot be identified, the video recognition device 20 may operate to transmit specific information such as “NG” or “0” to the video reception device 40 as the analysis information.

- the control unit 41 controls each circuit block so as to receive the analysis information (result of content identification processing) transmitted from the video recognition device 20 and transfer it to the additional information display control unit 46.

- the analysis information is stored in the storage unit 47. Details of these content specifying processes will be described later.

- the additional information display control unit 46 determines from the acquired analysis information whether the content specifying process has succeeded (whether the content has been specified from the fingerprint) (step S13).

- when it is determined in step S13 that the content identification processing has succeeded (Yes), the control unit 41 acts on an instruction from the additional information display control unit 46. It controls each circuit block so that the analysis information received from the video recognition device 20 is transmitted to the additional information distribution device 30 via the HTTP transmission / reception unit 42 and the communication network 16.

- the additional information distribution device 30 transmits additional information related to the received analysis information to the video reception device 40 through the communication network 16.

- the control unit 41 controls each circuit block so that the additional information transmitted from the additional information distribution device 30 is received via the HTTP transmission / reception unit 42.

- the control unit 41 then controls each circuit block so that the received additional information is transferred to the video output unit 44. Based on an instruction from the additional information display control unit 46, that information is displayed superimposed on the video being displayed on the display unit 54. The series of processing then ends.

- when a URL is included in the analysis information acquired from the video recognition device 20, each device may be configured as follows. The control unit 41 accesses the additional information distribution device 30 by designating that URL. It then acquires the information associated with the URL from the additional information distribution device 30 as the additional information related to the analysis information.

- when it is determined in step S13 that the content identification processing has failed (No), the video reception device 40 repeats steps S10 to S13 described above for the next partial video.

- In step S13, the additional information display control unit 46 may be configured to determine that the content specifying process has succeeded when, for example, the video recognition device 20 returns the same analysis result (result of the content specifying process) for a predetermined number (for example, three) of consecutive partial videos.
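
- The consecutive-result rule in step S13 can be sketched as follows. This is a minimal illustration, not the apparatus's actual implementation: the function name `content_identified`, the representation of each analysis result as a content ID string (or `None` when no content was matched), and the default streak of three (taken from the example in the text) are assumptions.

```python
from typing import Iterable, Optional


def content_identified(results: Iterable[Optional[str]],
                       required_streak: int = 3) -> Optional[str]:
    """Hypothetical success check for step S13.

    `results` holds the analysis result returned for each consecutive
    partial video (a content ID, or None if nothing matched). Returns the
    content ID once the same non-None ID has been returned
    `required_streak` times in a row; otherwise returns None.
    """
    streak = 0
    previous: Optional[str] = None
    for result in results:
        if result is not None and result == previous:
            streak += 1          # same result as the previous partial video
        elif result is not None:
            streak = 1           # a new candidate starts a fresh streak
        else:
            streak = 0           # no match for this partial video
        previous = result
        if streak >= required_streak:
            return result        # treated as a successful content specification
    return None                  # keep repeating steps S10 to S13
```

Requiring agreement across several partial videos trades a short delay for robustness against a single spurious match.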

- The video receiver 40 repeats this series of operations.

- Next, the video recognition area setting process in step S11 will be described.

- the video receiving device 40 performs the video recognition area setting process in order to increase the accuracy of the content specifying process related to the video signal input from the input unit 65.

- the video receiver 40 examines the characteristics of the video signal.

- the outline of the video recognition area setting process is as follows.

- The video receiving device 40 first detects whether content-related information including feature information (for example, feature information indicating the presence or absence of the caption 94, the display position and display size of the caption 94, the transmission method of a 3D video signal, etc.) is input to the video receiver 40 via the input unit 65 together with the video signal. If the feature information is input, the video reception device 40 sets a video recognition area using that information, generates a fingerprint in the video recognition area, and performs the content specifying process.

- If the feature information is not input, the video receiver 40 detects the features of the video signal (for example, the presence or absence of the caption 94, and the display position and display size of the caption 94) from the video signal itself, and then performs the content specifying process based on the detection result.

- FIG. 6 is a flowchart schematically showing the operation of the video recognition area setting process performed by the video receiver 40 in the first embodiment.

- the video recognition area setting unit 66 of the video receiving device 40 first acquires content related information related to the video signal input from the STB 14 to the video receiving device 40 via the input unit 65 (step S21).

- Next, the video recognition area setting unit 66 determines whether the content-related information includes feature information related to the display area of superimposition information such as the subtitles 94 and OSD (information indicating the display position, display size, etc.; hereinafter “superimposition area information”) (step S22).

- When it is determined in step S22 that the content-related information does not include superimposition area information, that no content-related information exists, or that the feature information included in the content-related information is feature information related to 3D video (No), the video recognition area setting unit 66 performs the superimposition area specifying process (step S23).

- The superimposition area specifying process detects the caption 94, OSD, and the like (superimposition information) in the video signal input to the video receiver 40 via the input unit 65, and specifies the display position and display size of that superimposition information (the superimposition area). Details of the superimposition area specifying process will be described later.

- Next, the video recognition area setting unit 66 performs the video recognition area specifying process using the information on the superimposition area (superimposition area information) specified by the superimposition area specifying process in step S23 (step S24).

- The video recognition area specifying process specifies a video recognition area or a video recognition candidate area using the superimposition area information. Details of the video recognition area specifying process will be described later.

- When the superimposition area information is detected in the content-related information in step S22 (Yes), the video recognition area setting unit 66 performs the video recognition area specifying process using that superimposition area information (step S24).

- When the video recognition area specifying process in step S24 is completed, the video recognition area setting process ends and the process proceeds to step S12 in FIG.
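
- Steps S21 to S24 amount to a single branch on whether superimposition area information is available. The sketch below is hypothetical: `ContentRelatedInfo`, its fields, and the two callables standing in for the superimposition area specifying process (step S23) and the video recognition area specifying process (step S24) are illustrative names, not taken from the source.

```python
from dataclasses import dataclass
from typing import Callable, List, Optional, Tuple

Rect = Tuple[int, int, int, int]  # (x, y, width, height) in pixels


@dataclass
class ContentRelatedInfo:
    # Hypothetical container for the feature information acquired in step S21.
    superimposition_areas: Optional[List[Rect]] = None  # caption/OSD display areas
    video_3d_method: Optional[str] = None               # e.g. "side_by_side"


def video_recognition_area_setting(
    info: Optional[ContentRelatedInfo],
    frame: object,
    specify_superimposition_areas: Callable[[object], List[Rect]],
    specify_recognition_area: Callable[[object, List[Rect]], object],
) -> object:
    # Step S22: does the content-related information carry superimposition area information?
    if info is not None and info.superimposition_areas is not None:
        areas = info.superimposition_areas             # Yes: use it directly, skipping step S23
    else:
        areas = specify_superimposition_areas(frame)   # No: step S23, detect from the video itself
    # Step S24: video recognition area specifying process
    return specify_recognition_area(frame, areas)
```

Passing the two processes in as callables keeps the branch testable on its own; when superimposition area information arrives with the video signal, the detector is never invoked, which corresponds to omitting step S23.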

- When the superimposition area information is included in the content-related information, the video recognition area setting unit 66 can grasp the display position, display size, and the like of superimposition information such as the caption 94 with relatively high accuracy.

- In that case, the superimposition area specifying process in step S23 can be omitted.

- Likewise, when the content-related information includes feature information indicating the transmission method of a 3D video signal, the video recognition area setting unit 66 can set the video recognition area appropriately based on that transmission method. Therefore, the content specifying process (the content specifying process based on the video recognition area specifying process) and the acquisition of additional information can be performed faster and with higher accuracy.
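
- The source does not say how the video recognition area is derived from the 3D transmission method. One plausible reading, offered here purely as an assumption, is that for frame-packed formats such as side-by-side or top-and-bottom only one of the two packed views is fingerprinted, so that the fingerprint covers a single view rather than two views placed next to each other. The method strings and the function name below are hypothetical.

```python
from typing import Tuple

Rect = Tuple[int, int, int, int]  # (x, y, width, height)


def recognition_area_for_3d(width: int, height: int, method: str) -> Rect:
    """Hypothetical mapping from a 3D transmission method to a video
    recognition area that covers only one of the two packed views."""
    if method == "side_by_side":      # left/right views packed horizontally
        return (0, 0, width // 2, height)
    if method == "top_and_bottom":    # views packed vertically
        return (0, 0, width, height // 2)
    return (0, 0, width, height)      # 2D or unknown method: use the whole frame
```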

- Next, the superimposition area specifying process in step S23, which is performed when the superimposition area information is not included in the content-related information or when no content-related information is input to the video reception device 40, will be described.

- FIG. 7 is a flowchart schematically showing the operation of the superimposition area specifying process performed by the video reception device 40 in the first embodiment.

- First, the video recognition area setting unit 66 performs character detection processing on the partial video extracted by the video extraction unit 45 (step S31).

- The character detection processing can be performed using, for example, OCR (Optical Character Reader).

- the video recognition area setting unit 66 performs OSD detection processing by pattern matching processing (step S32).

- the OSD detection process is a process for detecting the display area (display position and display size) of the OSD.

- In the pattern matching process, for example, the OSD image of the STB 14 is stored in advance in the storage unit 47 as a template image, and the partial video is compared with the template image to search whether the template image (OSD image) is included in the partial video.
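
- The OSD detection in step S32 is described only as pattern matching against a template stored in the storage unit 47. As one concrete instance, the sketch below uses exhaustive template matching with a sum of absolute differences (SAD) score on grayscale frames held as nested lists; the SAD metric, the mean-difference threshold, and the function name `find_template` are assumptions chosen for illustration.

```python
from typing import List, Optional, Tuple

Image = List[List[int]]           # grayscale pixels, row-major
Rect = Tuple[int, int, int, int]  # (x, y, width, height)


def find_template(frame: Image, template: Image,
                  max_mean_abs_diff: float = 8.0) -> Optional[Rect]:
    """Slide `template` over `frame` and return the best-matching area
    (x, y, w, h) if its mean absolute pixel difference is within the
    threshold; otherwise return None (OSD not found)."""
    fh, fw = len(frame), len(frame[0])
    th, tw = len(template), len(template[0])
    best: Optional[Rect] = None
    best_score = float("inf")
    for y in range(fh - th + 1):
        for x in range(fw - tw + 1):
            sad = 0  # sum of absolute differences at this offset
            for ty in range(th):
                row, trow = frame[y + ty], template[ty]
                for tx in range(tw):
                    sad += abs(row[x + tx] - trow[tx])
            if sad < best_score:
                best_score, best = sad, (x, y, tw, th)
    if best is not None and best_score / (tw * th) <= max_mean_abs_diff:
        return best  # display position and display size of the detected OSD
    return None
```

A practical implementation would more likely rely on an optimized routine such as OpenCV's `cv2.matchTemplate`, possibly with a normalized correlation score; the explicit loops here only make the search visible.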

- When characters are detected by the character detection process in step S31, the video recognition area setting unit 66 sets the detected area (the area where the caption 94 is displayed) as a superimposition area; when an OSD is detected by the OSD detection process in step S32, it likewise sets the detected area (the area where the OSD image is displayed) as a superimposition area. It then generates superimposition area information indicating the display position and display size of the superimposition area (step S33). Thereafter, the process proceeds to step S24 in FIG. 6.

- the video reception device 40 may be configured to perform only one of the character detection process in step S31 and the pattern matching process in step S32.
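
- Step S33 turns the detection results of steps S31 and S32 into superimposition area information. A minimal sketch follows, assuming (hypothetically) that the character detection step returns one bounding box per recognized character and that the caption area is the rectangle enclosing all of them. Either input may be empty, which covers the configuration in which only one of the two detection processes is performed; the function names are illustrative.

```python
from typing import List, Optional, Sequence, Tuple

Rect = Tuple[int, int, int, int]  # (x, y, width, height)


def bounding_rect(boxes: Sequence[Rect]) -> Optional[Rect]:
    """Smallest rectangle enclosing all boxes, or None if there are none."""
    if not boxes:
        return None
    x0 = min(x for x, _, _, _ in boxes)
    y0 = min(y for _, y, _, _ in boxes)
    x1 = max(x + w for x, _, w, _ in boxes)
    y1 = max(y + h for _, y, _, h in boxes)
    return (x0, y0, x1 - x0, y1 - y0)


def superimposition_area_info(char_boxes: Sequence[Rect] = (),
                              osd_area: Optional[Rect] = None) -> List[Rect]:
    """Hypothetical step S33: collect the display position and size of
    each detected superimposition area."""
    areas: List[Rect] = []
    caption_area = bounding_rect(char_boxes)  # result of step S31 (caption 94)
    if caption_area is not None:
        areas.append(caption_area)
    if osd_area is not None:                  # result of step S32 (OSD)
        areas.append(osd_area)
    return areas
```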

- Next, the video recognition area specifying process performed in step S24 will be described.

- FIG. 8 is a flowchart schematically showing the operation of the video recognition area specifying process performed by the video receiver 40 in the first embodiment.

- First, the video recognition area setting unit 66 determines whether the superimposition area information was acquired in step S22 or specified in step S23 (step S41).

- When it is determined in step S41 that the superimposition area information has been acquired or specified (Yes), the video recognition area setting unit 66 performs a non-superimposition area specifying process based on either the superimposition area information included in the content-related information acquired in step S22 or the superimposition area information specified in step S23 (step S44).

- The non-superimposition area specifying process specifies the superimposition area in the partial video based on the superimposition area information, and thereby specifies the area excluding the superimposition area (hereinafter also referred to as the “non-superimposition area”). Details of the non-superimposition area specifying process will be described later.

- The video recognition area setting unit 66 then sets the video recognition area within the non-superimposition area specified by the non-superimposition area specifying process in step S44, and generates information representing the video recognition area (step S45).

- the information representing the video recognition area generated in step S45 may be transmitted from the video reception device 40 to the video recognition device 20 together with the fingerprint.

- The video recognition area may coincide with the non-superimposition area or may be a part of it. That is, the video recognition area may be set to the entire partial video excluding the superimposition area, or to only a part of the partial video excluding the superimposition area. The video recognition area is desirably set as appropriate for the required accuracy of the content specifying process, the specifications of the video receiving device 40, and the like.
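
- Steps S44 and S45 can be illustrated on a pixel mask. In the hypothetical sketch below, each superimposition area is a rectangle `(x, y, width, height)`, and the non-superimposition area is every pixel outside those rectangles. As one example of setting the video recognition area to only part of the non-superimposition area, the recognition area is taken as the tallest horizontal band of rows containing no superimposed pixels; that band rule and all function names are assumptions, not the method of the source.

```python
from typing import List, Optional, Sequence, Tuple

Rect = Tuple[int, int, int, int]  # (x, y, width, height)
Mask = List[List[bool]]           # True = non-superimposition pixel


def non_superimposition_mask(width: int, height: int,
                             areas: Sequence[Rect]) -> Mask:
    """Step S44 (sketch): mark every pixel outside the superimposition areas."""
    mask = [[True] * width for _ in range(height)]
    for x, y, w, h in areas:
        for yy in range(max(0, y), min(height, y + h)):
            for xx in range(max(0, x), min(width, x + w)):
                mask[yy][xx] = False
    return mask


def largest_clear_band(mask: Mask) -> Optional[Tuple[int, int]]:
    """Return (first_row, row_count) of the longest run of fully clear rows."""
    best_start, best_len = 0, 0
    start: Optional[int] = None
    for y, row in enumerate(mask + [[False]]):  # sentinel row closes the last run
        if all(row):
            if start is None:
                start = y
        elif start is not None:
            if y - start > best_len:
                best_start, best_len = start, y - start
            start = None
    return (best_start, best_len) if best_len else None


def video_recognition_area(width: int, height: int,
                           areas: Sequence[Rect]) -> Optional[Rect]:
    """Step S45 (sketch): a full-width band inside the non-superimposition area."""
    band = largest_clear_band(non_superimposition_mask(width, height, areas))
    if band is None:
        return None
    y, h = band
    return (0, y, width, h)
```

For a 4-pixel-wide, 10-row frame with a caption covering rows 7 and 8, the sketch selects rows 0 to 6 as the video recognition area.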

- When it is determined in step S41 that the superimposition area information has not been acquired or specified (No), the video recognition area setting unit 66 performs a video recognition candidate area setting process (step S42).

- the video recognition candidate area setting process is a process of dividing a partial video into a plurality of areas.

- Each area into which the partial video is divided by the video recognition candidate area setting process is also referred to as a “video recognition candidate area”.

- the video recognition candidate area setting process is performed for the following reason.

- When it is determined in step S41 that the superimposition area information has not been acquired or specified, it is difficult for the video reception device 40 to determine whether this is because no superimposition information is superimposed on the video signal, or because the superimposition area information could not be acquired or specified even though superimposition information is superimposed on the video signal.

- Therefore, the partial video is divided into a plurality of areas, a fingerprint is generated in each area (each video recognition candidate area), and the content specifying process is performed for each area.

- This is because, if the partial video is divided into a plurality of areas such that it is separated into areas where superimposition information is superimposed and areas where it is not, then even if the content specifying process may fail for an area where superimposition information is superimposed (a video recognition candidate area containing superimposition information), the possibility that the content specifying process succeeds can be made relatively high for an area where superimposition information is not superimposed (a video recognition candidate area without superimposition information). Details of the video recognition candidate area setting process will be described later.
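
- The video recognition candidate area setting process (step S42) and the per-area content specifying process can be sketched as a grid split followed by one lookup per area. Everything here is illustrative: the 2 x 2 default grid, the function names, the pluggable `fingerprint` function, and the dictionary standing in for the content database of the video recognition device 20 are assumptions, and the overall result is simply the first area that yields a match.

```python
from typing import Callable, Dict, Hashable, List, Optional, Tuple

Rect = Tuple[int, int, int, int]  # (x, y, width, height)
Image = List[List[int]]           # grayscale pixels, row-major
Pixels = Tuple[Tuple[int, ...], ...]


def candidate_areas(width: int, height: int, cols: int = 2, rows: int = 2) -> List[Rect]:
    """Hypothetical step S42: divide the partial video into rows x cols areas."""
    areas = []
    for r in range(rows):
        for c in range(cols):
            x0, x1 = c * width // cols, (c + 1) * width // cols
            y0, y1 = r * height // rows, (r + 1) * height // rows
            areas.append((x0, y0, x1 - x0, y1 - y0))
    return areas


def crop(frame: Image, area: Rect) -> Pixels:
    """Cut one candidate area out of the frame as a hashable tuple of rows."""
    x, y, w, h = area
    return tuple(tuple(row[x:x + w]) for row in frame[y:y + h])


def identify_per_area(frame: Image, areas: List[Rect],
                      fingerprint: Callable[[Pixels], Hashable],
                      lookup: Dict[Hashable, str]) -> Dict[Rect, Optional[str]]:
    """Generate a fingerprint in each candidate area and look it up separately."""
    return {area: lookup.get(fingerprint(crop(frame, area))) for area in areas}


def specify_content(results: Dict[Rect, Optional[str]]) -> Optional[str]:
    """Succeed if any candidate area was identified (first match wins)."""
    return next((cid for cid in results.values() if cid is not None), None)
```

Because each area is identified independently, a caption confined to the lower half spoils only the lower candidate areas, while the upper areas can still match, which is the effect the passage above relies on.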

- the video recognition area setting unit 66 generates information representing the video recognition candidate area set in the video recognition candidate area setting process in step S42, and outputs the information to the additional information display control unit 46 (step S43). Thereafter, the process proceeds to step S12 in FIG.

- the information representing the video recognition candidate area generated in step S43 may be transmitted from the video reception device 40 to the video recognition device 20 together with the fingerprint.

- Next, the non-superimposition area specifying process performed in step S44 will be described with reference to FIGS. 9A to 11C.

- FIG. 9A is a diagram schematically illustrating an example of the video signal 91 output from the video transmission device and input to the video reception device 40 and the superimposition area information 92.

- FIG. 9B is a diagram schematically illustrating an example of the video recognition area 93 set by the video recognition area setting unit 66 of the video reception device 40 in the first exemplary embodiment.

- FIG. 9C is a diagram schematically illustrating an example of a display position of the additional information 98 displayed on the display unit 54 of the video reception device 40 in the first exemplary embodiment.

- the video reception device 40 may acquire content-related information via the communication network 16, for example.

- In the example of FIG. 9A, a video signal 91, in which the caption 94 has been superimposed on the STB 14 side onto a video signal broadcast from the broadcasting station 12, and content-related information including the superimposition area information 92 of the caption 94 are input from the STB 14 to the video receiver 40.

- the video recognition area setting unit 66 specifies a superimposition area (for example, a display area of the caption 94) based on the acquired superimposition area information 92. Then, an area excluding the superimposition area (for example, an area excluding the caption 94) is specified as a non-superimposition area (non-superimposition area specifying process in step S44).

- the video recognition area setting unit 66 sets the video recognition area 93 in the non-superimposition area (step S45).