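WO2011125352A1 - Information processing system, operation input device, information processing device, information processing method, program, and information storage medium - Google Patents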


- Publication number

- WO2011125352A1 (international application PCT/JP2011/050443)

- Authority

- WO

- WIPO (PCT)

- Prior art keywords

- display

- touch sensor

- display surface

- region

- options

Classifications

- G—PHYSICS
  - G06—COMPUTING; CALCULATING OR COUNTING
    - G06F—ELECTRIC DIGITAL DATA PROCESSING
      - G06F3/00—Input arrangements for transferring data to be processed into a form capable of being handled by the computer; Output arrangements for transferring data from processing unit to output unit, e.g. interface arrangements
        - G06F3/01—Input arrangements or combined input and output arrangements for interaction between user and computer
          - G06F3/03—Arrangements for converting the position or the displacement of a member into a coded form
            - G06F3/041—Digitisers, e.g. for touch screens or touch pads, characterised by the transducing means
              - G06F3/0412—Digitisers structurally integrated in a display
          - G06F3/048—Interaction techniques based on graphical user interfaces [GUI]
            - G06F3/0484—Interaction techniques based on graphical user interfaces [GUI] for the control of specific functions or operations, e.g. selecting or manipulating an object, an image or a displayed text element, setting a parameter value or selecting a range
              - G06F3/0485—Scrolling or panning
            - G06F3/0487—Interaction techniques based on graphical user interfaces [GUI] using specific features provided by the input device, e.g. functions controlled by the rotation of a mouse with dual sensing arrangements, or of the nature of the input device, e.g. tap gestures based on pressure sensed by a digitiser
              - G06F3/0488—Interaction techniques based on graphical user interfaces [GUI] using specific features provided by the input device, e.g. functions controlled by the rotation of a mouse with dual sensing arrangements, or of the nature of the input device, e.g. tap gestures based on pressure sensed by a digitiser using a touch-screen or digitiser, e.g. input of commands through traced gestures
                - G06F3/04886—Interaction techniques based on graphical user interfaces [GUI] using specific features provided by the input device, e.g. functions controlled by the rotation of a mouse with dual sensing arrangements, or of the nature of the input device, e.g. tap gestures based on pressure sensed by a digitiser using a touch-screen or digitiser, e.g. input of commands through traced gestures by partitioning the display area of the touch-screen or the surface of the digitising tablet into independently controllable areas, e.g. virtual keyboards or menus

Definitions

- The present invention relates to an information processing system, an operation input device, an information processing device, an information processing method, a program, and an information storage medium.

- There are portable game devices on which a user plays a game by operating a touch panel (touch screen) that includes a display unit and a touch sensor.

- Patent Document 1 describes a technique in which a display and a separate touch display are arranged side by side, and touching a menu displayed on the touch display switches the content shown on the display.

- In such a touch panel, however, the display surface of the display unit coincides with the detection surface of the touch sensor. Therefore, when the user traces a finger or stylus from outside the touch panel to its inside (or from inside to outside), this operation cannot be distinguished from a tracing operation from the edge of the touch panel to its inside (or from the inside to the edge).

- Consequently, in conventional information processing systems the range of operations that can be detected on the touch panel is limited, and so is the range of processes that can be executed.

- In addition, images of options to be touched by the user, such as icons and buttons, may be displayed in a row along the edge of the display surface of the display unit so that they do not impair the visibility of the information displayed on it.

- However, because the display surface of the display unit and the detection surface of the touch sensor coincide, a touch near the edge of the touch panel may or may not be detected, depending on whether the touch lands on the touch panel or just outside it.

- The present invention has been made in view of the above problems, and one of its objects is to provide an information processing system, an operation input device, an information processing apparatus, an information processing method, a program, and an information storage medium capable of widening the range of processes executed on the basis of touch operations.

- Another object of the present invention is to provide an information processing device, an operation input device, an information processing system, an information processing method, a program, and an information storage medium capable of making effective use of the display surface of the display unit while maintaining the operability of the user's touch operations.

- An information processing system according to the present invention includes: a display unit; a touch sensor that detects the position of an object on a detection surface, the detection surface being provided in a region including an inner region, which occupies at least part of the display surface of the display unit, and an outer region, which is a region outside the display surface adjacent to the inner region; and process execution means for executing a process based on both a position detected by the touch sensor corresponding to a position in the inner region and a position detected by the touch sensor corresponding to a position in the outer region.

- An operation input device according to the present invention includes a display unit and a touch sensor that detects the position of an object on a detection surface, the detection surface being provided in a region including an inner region, which occupies at least part of the display surface of the display unit, and an outer region, which is a region outside the display surface adjacent to the inner region. The operation input device outputs data corresponding to the detection results of the touch sensor to process execution means that executes a process based on a position detected by the touch sensor corresponding to a position in the inner region and a position detected by the touch sensor corresponding to a position in the outer region.

- An information processing apparatus according to the present invention includes process execution means for executing a process based on a position corresponding to a position in an inner region and a position corresponding to a position in an outer region, both detected by a touch sensor that detects the position of an object on a detection surface. The detection surface is provided in a region including the inner region, which occupies at least part of the display surface of a display unit, and the outer region, which is a region outside the display surface adjacent to the inner region.

- An information processing method according to the present invention includes a process execution step of executing a process based on a position corresponding to a position in an inner region and a position corresponding to a position in an outer region, both detected by a touch sensor that detects the position of an object on a detection surface. The detection surface is provided in a region including the inner region, which occupies at least part of the display surface of a display unit, and the outer region, which is a region outside the display surface adjacent to the inner region.

- A program according to the present invention causes a computer to function as process execution means for executing a process based on a position corresponding to a position in an inner region and a position corresponding to a position in an outer region, both detected by a touch sensor that detects the position of an object on a detection surface. The detection surface is provided in a region including the inner region, which occupies at least part of the display surface of a display unit, and the outer region, which is a region outside the display surface adjacent to the inner region.

- An information storage medium according to the present invention is a computer-readable information storage medium storing a program that causes a computer to function as process execution means for executing a process based on a position corresponding to a position in an inner region and a position corresponding to a position in an outer region, both detected by a touch sensor that detects the position of an object on a detection surface. The detection surface is provided in a region including the inner region, which occupies at least part of the display surface of a display unit, and the outer region, which is a region outside the display surface adjacent to the inner region.

- According to the present invention, processes can be executed based on both positions corresponding to positions within the display surface and positions corresponding to positions in the outer region outside the display surface. This widens the range of processes that can be executed.

- In one aspect of the present invention, the touch sensor sequentially detects the position of the object, and the process execution means executes a process based on the history of positions detected by the touch sensor. This makes it possible to execute processes corresponding to an operation that moves the object from the inner region to the outer region and to an operation that moves it from the outer region to the inner region.

- The process execution means may execute different processes depending on whether the position history detected by the touch sensor includes both positions corresponding to positions in the inner region and positions corresponding to positions in the outer region, or only positions corresponding to positions in the inner region. This further widens the range of processes that can be executed.
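The branching on the position history described above can be illustrated with a short Python sketch. It is an illustration under stated assumptions, not the patent's implementation: it assumes the touch sensor reports (x, y) samples in sensor coordinates, that the inner region is an axis-aligned rectangle, and that every reported sample lies on the detection surface, so any sample outside the inner region counts as an outer-region sample. The names `Rect`, `classify_history`, `handle_history`, and the returned process labels are hypothetical.

```python
from dataclasses import dataclass
from typing import Iterable, Tuple

Point = Tuple[float, float]


@dataclass(frozen=True)
class Rect:
    """Axis-aligned rectangle in touch-sensor coordinates (hypothetical)."""
    left: float
    top: float
    right: float
    bottom: float

    def contains(self, p: Point) -> bool:
        x, y = p
        return self.left <= x < self.right and self.top <= y < self.bottom


def classify_history(history: Iterable[Point], inner: Rect) -> str:
    """Report which regions a position history visited.

    Every sample is assumed to lie on the detection surface, so a sample
    outside the inner region is counted as an outer-region sample.
    """
    in_inner = in_outer = False
    for p in history:
        if inner.contains(p):
            in_inner = True
        else:
            in_outer = True
    if in_inner and in_outer:
        return "both"
    if in_inner:
        return "inner_only"
    if in_outer:
        return "outer_only"
    return "empty"


def handle_history(history: Iterable[Point], inner: Rect) -> str:
    """Pick a (hypothetical) process: histories that include both regions
    get a different process than histories confined to the inner region."""
    kind = classify_history(history, inner)
    if kind == "both":
        return "edge_crossing_process"
    if kind == "inner_only":
        return "ordinary_touch_process"
    return "no_process"
```

For example, with the inner region `Rect(10, 10, 110, 70)`, the history `[(5, 40), (15, 40)]` starts outside the display and ends inside it, so it is classified as `"both"`, while `[(20, 20), (30, 30)]` is `"inner_only"`.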

- The process execution means may execute a predetermined process when, after the touch sensor has detected a position corresponding to a position in one of the inner region and the outer region, it detects a position corresponding to a position in the other. This makes it possible to execute a process in response to the object moving across the boundary between the inner region and the outer region.

- The process execution means may also execute a process of displaying information at a position on the display unit that is specified based on the position detected by the touch sensor. This makes it possible to display information based on detected positions both within the display surface and in the outer region outside it.
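A streaming version of the boundary-crossing check might look like the following Python sketch, which compares each new sample with the previous one and reports a crossing when the two fall in different regions. The class `BoundaryCrossingDetector`, its `feed` and `release` methods, and the event labels are hypothetical, and the sketch assumes for illustration that the inner region is a rectangle in sensor coordinates.

```python
from dataclasses import dataclass
from typing import Optional, Tuple

Point = Tuple[float, float]


@dataclass(frozen=True)
class Rect:
    """Inner region as an axis-aligned rectangle (hypothetical)."""
    left: float
    top: float
    right: float
    bottom: float

    def contains(self, p: Point) -> bool:
        x, y = p
        return self.left <= x < self.right and self.top <= y < self.bottom


class BoundaryCrossingDetector:
    """Reports when consecutive samples fall on opposite sides of the
    inner/outer boundary."""

    def __init__(self, inner: Rect) -> None:
        self.inner = inner
        self._prev_inside: Optional[bool] = None

    def feed(self, p: Point) -> Optional[str]:
        """Process one sample; return a crossing event or None."""
        inside = self.inner.contains(p)
        prev, self._prev_inside = self._prev_inside, inside
        if prev is None or prev == inside:
            return None
        return "outer_to_inner" if inside else "inner_to_outer"

    def release(self) -> None:
        # Call when the object leaves the detection surface so that the
        # next touch starts a fresh stroke.
        self._prev_inside = None
```

Resetting on release is a design choice of this sketch: it prevents a new touch that happens to land in the other region from being reported as a crossing.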

- Another information processing apparatus according to the present invention includes: display process execution means for displaying a plurality of options side by side along an edge of the display surface of a display unit; and selection process execution means for executing a process corresponding to at least one of the plurality of options, specified based on a position detected by a touch sensor that detects the position of an object on a detection surface provided in a region including an inner region, which is at least part of the display surface, and an outer region, which is a region outside the display surface adjacent to the inner region.

- Another operation input device according to the present invention includes a display unit and a touch sensor that detects the position of an object on a detection surface provided in a region including an inner region, which is at least part of the display surface of the display unit, and an outer region, which is a region outside the display surface adjacent to the inner region. The operation input device outputs data indicating the detection results of the touch sensor to an information processing apparatus that includes display process execution means for displaying a plurality of options side by side along an edge of the display surface of the display unit, and selection process execution means for executing a process corresponding to at least one of the plurality of options specified based on a position detected by the touch sensor.

- Another information processing system according to the present invention includes: a display unit; a touch sensor that detects the position of an object on a detection surface provided in a region including an inner region, which is at least part of the display surface of the display unit, and an outer region, which is a region outside the display surface adjacent to the inner region; display process execution means for displaying a plurality of options side by side along an edge of the display surface of the display unit; and selection process execution means for executing a process corresponding to at least one of the plurality of options specified based on a position detected by the touch sensor.

- Another information processing method according to the present invention includes: a display process execution step of displaying a plurality of options side by side along an edge of the display surface of a display unit; and an option-corresponding process execution step of executing a process corresponding to at least one of the plurality of options, specified based on a position detected by a touch sensor that detects the position of an object on a detection surface provided in a region including an inner region, which is at least part of the display surface, and an outer region, which is a region outside the display surface adjacent to the inner region.

- Another program according to the present invention causes a computer to function as: display process execution means for displaying a plurality of options side by side along an edge of the display surface of a display unit; and selection process execution means for executing a process corresponding to at least one of the plurality of options, specified based on a position detected by a touch sensor that detects the position of an object on a detection surface provided in a region including an inner region, which is at least part of the display surface, and an outer region, which is a region outside the display surface adjacent to the inner region.

- Another information storage medium according to the present invention is a computer-readable information storage medium storing a program that causes a computer to function as: display process execution means for displaying a plurality of options side by side along an edge of the display surface of a display unit; and selection process execution means for executing a process corresponding to at least one of the plurality of options, specified based on a position detected by a touch sensor that detects the position of an object on a detection surface provided in a region including an inner region, which is at least part of the display surface, and an outer region, which is a region outside the display surface adjacent to the inner region.

- According to the present invention, the display surface of the display unit can be used effectively while the operability of the user's touch operations is maintained.

- In one aspect of the present invention, when the touch sensor produces a detection result corresponding to the user moving the object along a direction connecting the center of the display surface and the edge along which the plurality of options are displayed, the selection process execution means executes a process based on at least one of the displayed options, specified based on the distance between each option and the position of the object. In this way, through the intuitive operation of moving the object along the direction connecting the center of the display surface and its edge, the user can have a process executed based on the option specified from the distance between the position of the object and the option.
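The distance-based selection above could be sketched as follows, assuming the options are laid out along the right edge of the display with known center points, and treating a move as being "toward the edge" when its rightward component dominates. The labels "browser" and "interrupt" come from FIG. 5; the third label, the coordinates, and the names `Option`, `nearest_option`, and `select_on_edgeward_move` are hypothetical.

```python
import math
from dataclasses import dataclass
from typing import Optional, Sequence, Tuple

Point = Tuple[float, float]


@dataclass(frozen=True)
class Option:
    label: str
    center: Point  # where the option is drawn, in display coordinates


def nearest_option(options: Sequence[Option], p: Point) -> Optional[Option]:
    """Return the option whose center is closest to p (Euclidean distance)."""
    if not options:
        return None
    return min(options, key=lambda o: math.dist(o.center, p))


def select_on_edgeward_move(options: Sequence[Option], prev: Point,
                            cur: Point) -> Optional[Option]:
    """Specify an option only for a move from the display center toward the
    right edge, i.e. one whose rightward component dominates."""
    dx, dy = cur[0] - prev[0], cur[1] - prev[1]
    if dx <= 0 or abs(dx) <= abs(dy):
        return None
    return nearest_option(options, cur)
```

For example, with options centered at (900, 100), (900, 200), and (900, 300), a rightward move ending at (600, 120) specifies the top option, while a leftward or mostly vertical move specifies nothing.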

- In one aspect of the present invention, the display process execution means displays the plurality of options side by side along the edge of the display surface when the touch sensor detects a position corresponding to that edge. This makes it possible to display the options along the edge in response to the user bringing the object into contact with the edge of the display surface.

- In one aspect of the present invention, the display process execution means displays, on the display unit, the option specified based on the relationship between the position detected by the touch sensor and the positions at which the options are displayed, in a manner different from the other options. This presents the specified option to the user in an easy-to-understand way.

- In this aspect, when the touch sensor produces a detection result corresponding to the user moving the object in a direction along the edge of the display surface on which the plurality of options are displayed side by side, the display process execution means may change, according to that detection result, which option is displayed in a manner different from the others. The user can thus change the specified option by moving the object.

- In one aspect of the present invention, the display process execution means displays the plurality of options side by side along the edge of the display surface when the touch sensor detects a position corresponding to that edge. After the options have been displayed, the display process execution means displays the option specified based on the relationship between the position detected by the touch sensor and the positions at which the options are displayed in a manner different from the other options, and the selection process execution means responds to movement of the object along the direction connecting the central part of the display surface and the edge along which the plurality of options are displayed side by side.

- In one aspect of the present invention, the options are icons corresponding to programs, and the selection process execution means executes a process of starting the program corresponding to the specified option.

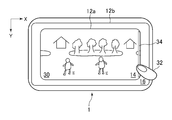

- FIG. 1 shows a first usage example of the portable game machine according to this embodiment.

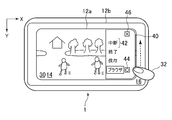

- The figure shows a second usage example of the portable game machine according to this embodiment.

- FIG. 1 is a perspective view showing an example of the appearance of an information processing system according to an embodiment of the present invention (in this embodiment, for example, a portable game machine 1).

- The housing 10 of the portable game machine 1 is, as a whole, a substantially rectangular flat plate, and a touch panel 12 is provided on its front surface.

- The touch panel 12 is substantially rectangular and includes a display unit (display 12a) and a touch sensor 12b.

- The display 12a may be any of various image display devices, such as a liquid crystal display panel or an organic EL display panel.

- The touch sensor 12b is disposed so as to overlap the display 12a and has a substantially rectangular detection surface whose shape corresponds to the display surface of the display 12a.

- The touch sensor 12b detects, at predetermined time intervals, contact of an object such as the user's finger or a stylus with the detection surface, and detects the contact position of the object.

- The touch sensor 12b may be of any type, such as capacitive, pressure-sensitive, or optical, as long as it can detect the position of an object on the detection surface.

- In this embodiment, the display 12a and the touch sensor 12b differ in size: the touch sensor 12b is slightly larger than the display 12a.

- The display 12a and the touch sensor 12b are arranged in the housing 10 so that the center of the display 12a is slightly below and to the right of the center of the touch sensor 12b.

- Alternatively, the touch sensor 12b and the display 12a may be arranged in the housing 10 so that their centers coincide.

- Hereinafter, the touch sensor area in which the display 12a and the touch sensor 12b overlap, that is, the area occupying the display surface of the display 12a, is referred to as the inner region 14, and the touch sensor area outside the display surface of the display 12a and adjacent to the inner region 14 is referred to as the outer region 16.
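The geometry above, a display surface sitting at an offset inside a slightly larger detection surface, can be modeled as in the following Python sketch, which classifies a sensor position as belonging to the inner region 14 or the outer region 16 and translates it into display coordinates. The source gives no dimensions; the sizes, offsets, and the names `TouchGeometry`, `region_of`, and `to_display` are illustrative assumptions.

```python
from dataclasses import dataclass
from typing import Optional, Tuple

Point = Tuple[float, float]


@dataclass(frozen=True)
class TouchGeometry:
    """Display surface placed inside a larger touch-sensor detection surface.

    All values are in touch-sensor units with the origin at the sensor's
    top-left corner; x grows rightward and y grows downward.
    """
    sensor_w: float
    sensor_h: float
    display_x: float  # left edge of the display surface on the sensor
    display_y: float  # top edge of the display surface on the sensor
    display_w: float
    display_h: float

    def region_of(self, p: Point) -> Optional[str]:
        """Return 'inner', 'outer', or None if p is off the detection surface."""
        x, y = p
        if not (0 <= x < self.sensor_w and 0 <= y < self.sensor_h):
            return None
        if (self.display_x <= x < self.display_x + self.display_w
                and self.display_y <= y < self.display_y + self.display_h):
            return "inner"
        return "outer"

    def to_display(self, p: Point) -> Point:
        """Translate a sensor position into display-surface coordinates.

        Positions in the outer region map to coordinates that are negative
        or beyond the display size; callers may clamp them if needed.
        """
        x, y = p
        return (x - self.display_x, y - self.display_y)
```

With `TouchGeometry(1000, 600, 30, 40, 960, 544)`, the display's center (510, 312) lies slightly below and to the right of the sensor's center (500, 300), matching the arrangement described above; a touch at (10, 300) is in the outer region and maps to display coordinates (-20, 260).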

- Various operation members for receiving the user's operation inputs, such as buttons and switches, and an imaging unit such as a digital camera may be disposed on the front, back, or side surfaces of the housing 10 of the portable game machine 1.

- FIG. 2 is a block diagram showing an example of the internal configuration of the portable game machine 1 shown in FIG. 1.

- The portable game machine 1 includes a control unit 20, a storage unit 22, and an image processing unit 24.

- The control unit 20 is, for example, a CPU, and executes various kinds of information processing according to programs stored in the storage unit 22.

- The storage unit 22 is, for example, a memory element such as RAM or ROM, or a disk device, and stores the programs executed by the control unit 20 and various data.

- The storage unit 22 also functions as work memory for the control unit 20.

- The image processing unit 24 includes, for example, a GPU and a frame buffer memory, and draws the image to be displayed on the display 12a in accordance with instructions output from the control unit 20.

- Specifically, the frame buffer memory of the image processing unit 24 corresponds to the display area of the display 12a, and the GPU writes an image into it at predetermined intervals in accordance with instructions from the control unit 20.

- The image written into the frame buffer memory is converted into a video signal at a predetermined timing and displayed on the display 12a.

- FIG. 3 is a functional block diagram showing an example of functions realized by the portable game machine 1 according to the present embodiment.

- Functionally, the portable game machine 1 includes a detection result receiving unit 26 and a process execution unit 28.

- The detection result receiving unit 26 is realized mainly by the touch sensor 12b and the control unit 20.

- The process execution unit 28 is realized mainly by the control unit 20 and the image processing unit 24.

- These elements are realized by the control unit 20 of the portable game machine 1, which is a computer, executing a program installed in the portable game machine 1.

- This program is supplied to the portable game machine 1 via a computer-readable information transmission medium such as a CD-ROM or DVD-ROM, or via a communication network such as the Internet.

- The detection result receiving unit 26 receives the detection results of the touch sensor 12b.

- In this embodiment, the touch sensor 12b outputs a detection result corresponding to the contact position of the object to the detection result receiving unit 26 at predetermined time intervals.

- The detection result receiving unit 26 thus sequentially receives, at each predetermined interval, contact position data corresponding to the contact position of the object detected by the touch sensor 12b.

- The process execution unit 28 executes various processes using the detection results received by the detection result receiving unit 26. Specifically, the process execution unit 28 determines the content of the user's operation input from the detection results of the touch sensor 12b (for example, contact position data) for the position of an object such as the user's finger or a stylus. It then executes the process corresponding to the determined operation input and presents the result to the user by displaying it on the display 12a.
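The hand-off from the detection result receiving unit 26 to the process execution unit 28 can be sketched as a small buffer that accumulates samples delivered at a fixed interval and passes each finished stroke to a callback. This is a hypothetical design, not the one in the source: it assumes that a sampling period with no contact is reported as `None`, and the names `DetectionResultReceiver`, `receive`, and `on_stroke_end` are invented for illustration.

```python
from collections import deque
from typing import Callable, Deque, List, Optional, Tuple

Point = Tuple[float, float]


class DetectionResultReceiver:
    """Buffers contact-position samples and hands finished strokes onward.

    receive() is expected to be called once per sampling period. A sample of
    None (no contact detected) ends the current stroke. The deque is bounded,
    so a very long stroke keeps only its most recent max_len samples.
    """

    def __init__(self, on_stroke_end: Callable[[List[Point]], None],
                 max_len: int = 256) -> None:
        self.history: Deque[Point] = deque(maxlen=max_len)
        self.on_stroke_end = on_stroke_end

    def receive(self, sample: Optional[Point]) -> None:
        if sample is not None:
            self.history.append(sample)
        elif self.history:
            # The object was lifted: pass the completed stroke to the
            # process-execution side, then start a fresh history.
            self.on_stroke_end(list(self.history))
            self.history.clear()
```

Handing over whole strokes suits decisions that depend on the complete position history. The usage examples in this document also react while the finger is still moving, which would call for forwarding each sample as it arrives as well.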

- In this embodiment, the detection surface of the touch sensor 12b also extends into the outer region 16 adjacent to the inner region 14 corresponding to the display 12a. It is therefore possible to detect that the user has traced the detection surface of the touch sensor 12b with an object such as a finger or a stylus (hereinafter, a slide operation) from outside the display 12a to inside it, or from inside to outside. Thus, according to this embodiment, the range of touch operations that can be detected is wider than when the display surface of the display 12a coincides with the detection surface of the touch sensor 12b.

- In addition, the edge of the touch sensor 12b of the portable game machine 1 according to this embodiment is easier to operate than on a portable game machine in which a frame member is provided outside the touch sensor 12b.

- FIGS. 4A and 4B are diagrams illustrating a first usage example of the portable game machine 1.

- In the first usage example, as shown in FIG. 4A, a game screen 30 is displayed on the display 12a.

- When the user touches the game screen 30 with a finger 32, the detection result receiving unit 26 receives contact position data corresponding to the contact position of the finger 32, and the process execution unit 28 executes a process according to that contact position data.

- A strip-shaped indicator image 34 extending horizontally along the edge of the display 12a is arranged at the upper edge of the display 12a.

- When the user slides the finger 32 across the indicator image 34, the touch sensor 12b sequentially detects, at predetermined time intervals, the position at which the finger 32 touches the detection surface.

- The detection result receiving unit 26 sequentially receives a series of contact position data corresponding to the detected contact positions. Based on the received series of contact position data, the process execution unit 28 then determines that a slide operation of the finger 32 across the indicator image 34 from the outer region 16 to the inner region 14 has been performed.
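The recognition step above, deciding that the finger slid across the indicator image 34 from the outer region 16 into the inner region 14, might be sketched as follows for an indicator strip along the top edge of the display. The coordinate layout, the rule that the stroke must stay within the strip's horizontal span, and the names `TopIndicator` and `crossed_indicator_downward` are assumptions made for illustration.

```python
from dataclasses import dataclass
from typing import Sequence, Tuple

Point = Tuple[float, float]


@dataclass(frozen=True)
class TopIndicator:
    """Strip-shaped indicator along the top edge of the display (hypothetical).

    Coordinates are touch-sensor units; y grows downward. The display's top
    edge is at display_top, and the strip occupies
    [left, right) x [display_top, display_top + height).
    """
    left: float
    right: float
    display_top: float
    height: float


def crossed_indicator_downward(stroke: Sequence[Point],
                               ind: TopIndicator) -> bool:
    """True if the stroke starts in the outer region above the display and
    later reaches the inner region below the indicator strip, staying within
    the strip's horizontal span while it does so."""
    if not stroke:
        return False
    x0, y0 = stroke[0]
    if not (y0 < ind.display_top and ind.left <= x0 < ind.right):
        return False
    strip_bottom = ind.display_top + ind.height
    for x, y in stroke[1:]:
        if not (ind.left <= x < ind.right):
            return False
        if y >= strip_bottom:
            return True
    return False
```

The accepted case is one that a touch sensor the same size as the display could not recognize: the stroke begins above the display, at a position only the larger detection surface can report. A stroke that begins on the display itself is rejected.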

- The process execution unit 28 then displays an operation panel image 36 corresponding to the indicator image 34 in the upper portion of the display 12a, as shown in FIG. 4B.

- The operation panel image 36 includes buttons 38, each corresponding to a predetermined process.

- When the user touches one of the buttons 38, the process execution unit 28 executes the process corresponding to the touched button 38.

- In this way, the process execution unit 28 executes a process based on positions in the inner region 14 and positions in the outer region 16.

- That is, the process execution unit 28 executes a process based on the history of detected positions.

- In the first usage example, the user can cause the operation panel image 36 to be displayed near the position of the indicator image 34 by touching the detection surface of the touch sensor 12b with the finger 32 and sliding it across the indicator image 34 displayed at the edge of the display 12a.

- The indicator image 34 also shows the user, in an easy-to-understand way, the operation to perform in order to display the operation panel image 36.

- The user can control the display of the game screen 30 through the intuitive operation of sliding the finger 32 across the edge of the display 12a.

- Moreover, making the indicator image 34 narrow increases the area of the display 12a that can be used for the game screen 30, so the display surface of the display 12a can be used effectively.

- The process execution unit 28 may display the operation panel image 36 on the display 12a so that it moves at the same speed as the sliding finger 32. Because the slide operation is then linked to how the operation panel image 36 appears, the user gets an intuitive feeling of pulling the operation panel image 36 out from the edge of the screen of the display 12a.
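Linking the panel to the finger, as described above, can be reduced to making the panel's visible height equal to how far the finger has traveled past the top edge of the display, clamped to the panel's full height. This minimal sketch assumes a downward y axis shared by the finger position and the display's top edge; the function name `panel_reveal` is hypothetical, and the source does not specify what happens if the finger is lifted partway.

```python
def panel_reveal(finger_y: float, display_top: float,
                 panel_height: float) -> float:
    """Visible height of an operation panel pulled down from the top edge.

    The panel's lower edge is kept at the finger's y position, so the panel
    moves exactly as fast as the finger slides. y grows downward.
    """
    return float(max(0.0, min(panel_height, finger_y - display_top)))


# Finger positions sampled at a fixed interval while sliding downward.
samples = [30, 45, 70, 120, 180]
print([panel_reveal(y, display_top=40, panel_height=100) for y in samples])
# [0.0, 5.0, 30.0, 80.0, 100.0]
```

Because the reveal is a pure function of the current finger position, it can be recomputed on every detection result without keeping extra animation state.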

- The process execution unit 28 may also display the operation panel image 36 on the display 12a in response to a slide operation of the finger 32 from the inner region 14 to the outer region 16.

- The indicator image 34 may instead be arranged on the left, right, or lower side of the display 12a, in which case the operation panel image 36 may be displayed at a position corresponding to the display position of the indicator image 34.

- FIGS. 5A, 5B, and 5C are diagrams illustrating a second usage example of the portable game machine 1.

- the game screen 30 is displayed on the display 12a.

- the process execution unit 28 executes a process according to the touched position.

- in FIGS. 5A to 5C, the rightward horizontal direction is the X-axis direction and the downward vertical direction is the Y-axis direction.

- a strip-shaped indicator image 34 extending in the vertical direction along the edge of the display 12a is arranged.

- the process execution unit 28 displays a menu image 40 corresponding to the indicator image 34 on the right side of the game screen 30, as shown in FIG. 5B.

- the menu image 40 includes a plurality of options 42 (for example, character strings indicating processing contents) to be selected by the user.

- the options 42 are displayed side by side along the edge of the display surface of the display 12a.

- the process execution unit 28 displays the options 42 side by side along the edge of the display surface of the display 12a.

- the touch position of the finger 32 corresponding to the display operation of the menu image 40 may be a position in the inner area 14 or a position in the outer area 16.

- the process execution unit 28 displays (highlights) the option 42 having the shortest distance from the position corresponding to the contact position data received by the detection result receiving unit 26 in a manner different from the other options 42.

- a frame is displayed around the option 42 to be highlighted (in the example of FIG. 5B, the character string “browser”), and a selection icon 44 indicating that this option 42 is selected is displayed to its right. The color of the highlighted option 42 may also be changed.

- the touch sensor 12b detects the contact position of the finger 32 at predetermined intervals, and the detection result receiving unit 26 sequentially receives the contact position data corresponding to the contact position of the finger 32. Each time the detection result receiving unit 26 receives contact position data, the process execution unit 28 compares it with the contact position data received immediately before to identify the moving direction of the finger 32. When the moving direction of the finger 32 is determined to be vertical (Y-axis direction), the process execution unit 28 identifies the option 42 to be highlighted based on the received contact position data and updates the menu image 40 so that this option 42 is highlighted. In the example of FIG. 5C, the option 42 corresponding to the character string “interrupt” is highlighted.

- the highlighted option 42 changes accordingly.

- the process execution unit 28 may change the highlighted option 42 according to the detection result.

- when the process execution unit 28 determines that the moving direction of the finger 32 is horizontal (X-axis direction), it executes the process corresponding to the highlighted option 42. In the example of FIG. 5C, the process execution unit 28 executes the interrupt process.

- the options 42 are displayed right up to the edge of the display 12a. Even when an option 42 is hidden under the finger 32 and the finger 32 extends beyond the display 12a, the position of the finger 32 can still be detected because the touch sensor 12b is also provided in the outer region 16. Thus, in the second usage example, the display surface of the display 12a can be used effectively while the operability of the user's touch operations is maintained.

- the process execution unit 28 may highlight an option 42 specified based on its distance from the position corresponding to the contact position data received by the detection result receiving unit 26 (for example, an option 42 whose distance from that position is within a predetermined range). Further, when the user slides the finger 32 from the right edge of the display 12a in a direction away from the center, the process execution unit 28 may execute the process corresponding to the option 42 highlighted at that moment.

- the process execution unit 28 may update the display content of the display 12a so as to erase the menu image 40.

- the arrangement, shape, and the like of the options 42 in the menu image 40 in the second usage example are not limited to the above example.

- the option 42 may be an image such as an icon.

- the second usage example may be applied to an operation panel in, for example, a music player or a photo viewer.

- each option 42 is, for example, a character string or icon corresponding to an operation in a music player, a photo viewer, or the like.

- the second usage example may be applied to, for example, a control panel for performing various settings.

- each option 42 is, for example, a character string or icon corresponding to the setting item.

- the process execution unit 28 may display menu items frequently used by the user as the options 42 in the menu image 40.
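The menu interaction of the second usage example (highlight the nearest option 42 while the finger moves vertically, execute the highlighted option when the finger moves horizontally) can be sketched as below. This is a hypothetical illustration: the names `EdgeMenu`, `nearest_option`, and `movement_axis`, the option y coordinates, the extra "exit" option, and the rule that the dominant axis of the displacement between two consecutive samples decides the direction are all assumptions; the text only says that consecutive contact positions are compared.

```python
from typing import Callable, List, Optional, Sequence, Tuple

Point = Tuple[float, float]  # (x, y): x grows rightward, y grows downward
Option = Tuple[str, float]   # (label, y coordinate of the option's centre)


def nearest_option(options: Sequence[Option], y: float) -> str:
    """Label of the option whose centre is vertically closest to y."""
    return min(options, key=lambda opt: abs(opt[1] - y))[0]


def movement_axis(prev: Point, cur: Point) -> Optional[str]:
    """Classify the move between two consecutive samples as 'x', 'y', or None."""
    dx, dy = cur[0] - prev[0], cur[1] - prev[1]
    if dx == 0 and dy == 0:
        return None
    return "x" if abs(dx) > abs(dy) else "y"


class EdgeMenu:
    """Hypothetical model of menu image 40 with options 42 along the right edge."""

    def __init__(self, options: Sequence[Option], execute: Callable[[str], None]) -> None:
        self.options = options
        self.execute = execute
        self.highlighted: Optional[str] = None
        self.prev: Optional[Point] = None
        self.closed = False

    def on_sample(self, p: Point) -> None:
        if self.closed:
            return
        axis = None if self.prev is None else movement_axis(self.prev, p)
        if self.prev is None or axis == "y":
            # First contact or vertical movement: highlight the nearest option.
            self.highlighted = nearest_option(self.options, p[1])
        elif axis == "x" and self.highlighted is not None:
            # Horizontal movement: run the highlighted option and close the menu.
            self.execute(self.highlighted)
            self.closed = True
        self.prev = p


executed: List[str] = []
menu = EdgeMenu([("browser", 100.0), ("interrupt", 160.0), ("exit", 220.0)], executed.append)
for sample in [(470.0, 105.0), (471.0, 150.0), (471.0, 165.0), (430.0, 166.0)]:
    menu.on_sample(sample)
print(menu.highlighted, executed)  # interrupt ['interrupt']
```

Closing the menu after one execution avoids running the option again on every later horizontal sample; the distance-within-a-range variant mentioned above could replace `nearest_option` without changing the rest.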

- FIG. 6 is a diagram illustrating a third usage example of the portable game machine 1.

- a game screen 30 similar to that shown in FIG. 5A is displayed on the display 12a.

- the process execution unit 28 executes a process related to the game according to the touched position.

- the detection result receiving unit 26 receives a series of contact position data corresponding to this operation. Based on this contact position data, the process execution unit 28 moves the game screen 30 to the left, as shown in FIG. 6, and displays the system setting screen 48 of the portable game machine 1 in the right-hand area of the display 12a. When the user touches the system setting screen 48 with the finger 32 or the like, the process execution unit 28 executes a process related to the system settings of the portable game machine 1 according to the touched position.

- a presentation effect can be realized in which the screen of a program currently being executed, such as an application program, is moved aside and the screen of another program (for example, an operating system program) is displayed.

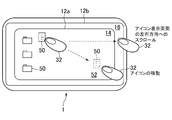

- FIG. 7 is a diagram illustrating a fourth usage example of the portable game machine 1.

- an icon display screen 52 including a plurality of icons 50 is displayed on the display 12a.

- the process execution unit 28 moves the touched icon 50 to the position to which the finger 32 is moved (drag and drop).

- the process execution unit 28 scrolls the icon display screen 52 itself to the left.

- the process execution unit 28 stops scrolling the icon display screen 52.

- the process execution unit 28 executes different processes depending on whether the history of positions detected by the touch sensor 12b includes both a position corresponding to a position in the inner region 14 and a position corresponding to a position in the outer region 16, or includes only positions corresponding to positions in the inner region 14.

- because the touch sensor 12b is also provided in the outer region 16, it outputs different detection results when the finger 32 moves within the inner region 14 and when the finger 32 moves from the inner region 14 to the outer region 16. The process execution unit 28 can therefore execute different processes in these two cases.

- the process execution unit 28 may scroll the icon display screen 52 itself to the left in page units corresponding to the size of the display 12a.
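The fourth usage example (and claim 3) distinguishes a slide whose position history stays in the inner region 14 from one that also reaches the outer region 16. A minimal sketch, assuming axis-aligned rectangles for the display surface and the detection surface with hypothetical coordinates; the names `Rect`, `region`, and `classify_history` are illustrative, and mapping the two cases to drag and drop versus page scrolling is an assumption drawn from the surrounding bullets.

```python
from dataclasses import dataclass
from typing import Iterable, Tuple

Point = Tuple[float, float]


@dataclass(frozen=True)
class Rect:
    left: float
    top: float
    right: float
    bottom: float

    def contains(self, p: Point) -> bool:
        return self.left <= p[0] <= self.right and self.top <= p[1] <= self.bottom


# Hypothetical geometry: the detection surface of touch sensor 12b extends
# 20 units beyond the display surface (inner region 14) on every side.
DISPLAY = Rect(0.0, 0.0, 480.0, 272.0)
SENSOR = Rect(-20.0, -20.0, 500.0, 292.0)


def region(p: Point) -> str:
    """'inner' (region 14), 'outer' (region 16), or 'none' (off the sensor)."""
    if DISPLAY.contains(p):
        return "inner"
    if SENSOR.contains(p):
        return "outer"
    return "none"


def classify_history(history: Iterable[Point]) -> str:
    """Pick a process from the regions visited during one slide."""
    visited = {region(p) for p in history}
    if {"inner", "outer"} <= visited:
        return "scroll_page"    # assumed: scroll icon display screen 52 by a page
    if visited == {"inner"}:
        return "drag_and_drop"  # assumed: move the touched icon 50
    return "ignore"


print(classify_history([(100.0, 100.0), (300.0, 120.0)]))                  # drag_and_drop
print(classify_history([(100.0, 100.0), (470.0, 120.0), (495.0, 120.0)]))  # scroll_page
```

Because `SENSOR` is an arbitrary rectangle, a protrusion width that differs per side of the display can be modelled by changing its four edges.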

- FIG. 8 is a diagram illustrating a first application example of the present embodiment.

- the index information 56 is arranged in a line in the vertical direction on the right side of the display 12a.

- the marks 58 are arranged in a line in the vertical direction in the outer region 16 on the right side of the display 12a.

- the index information 56 (for example, characters) and the marks 58 correspond one-to-one, and each piece of index information 56 is arranged side by side with its corresponding mark 58.

- a plurality of pieces of personal information including a person's name and telephone number are registered in advance.

- when the user touches a position in the outer region 16 on the right side of the display 12a with the finger 32, the process execution unit 28 highlights the index information 56 closest to that position and displays on the display 12a the information corresponding to the highlighted index information 56 (for example, a list of the personal information registered in the portable information terminal 54 whose last name begins with the highlighted letter).

- when the user slides the finger 32 along the direction in which the marks 58 are arranged, the process execution unit 28 changes the highlighted index information 56 to the one closest to the position of the finger 32 and updates the content displayed on the display 12a accordingly.

- since the user can select the index information 56 from outside the screen of the display 12a, the area right up to the edge of the display 12a can be used to display the index information 56.

- the process execution unit 28 may highlight the index information 56 closest to the touched position and update the display content of the display 12a so that the information corresponding to the highlighted index information 56 is displayed on the display 12a. In this application example, the marks 58 need not be disposed in the outer region 16 on the right side of the display 12a.
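The first application example can be sketched as a nearest-index lookup followed by a filter on the registered personal information. This assumes, hypothetically, that the index information 56 is the letters A to Z spaced evenly down the right edge and that each record is a (last name, phone number) pair; the layout constants, the helper names, and the sample names and numbers are placeholders, not values from the source.

```python
import string
from typing import List, Sequence, Tuple

Contact = Tuple[str, str]  # (last name, phone number); hypothetical record layout

# Hypothetical layout of index information 56: letters A-Z, one every 10 units,
# with the centre of "A" at y = 10.
INDEX_LETTERS = string.ascii_uppercase
INDEX_TOP = 10.0
INDEX_SPACING = 10.0


def nearest_index(y: float) -> str:
    """Letter of the index entry whose centre is vertically closest to y."""
    i = min(range(len(INDEX_LETTERS)),
            key=lambda k: abs(INDEX_TOP + k * INDEX_SPACING - y))
    return INDEX_LETTERS[i]


def contacts_for(letter: str, contacts: Sequence[Contact]) -> List[Contact]:
    """Registered entries whose last name starts with the highlighted letter."""
    return [c for c in contacts if c[0][:1].upper() == letter]


contacts = [("Sato", "555-0100"), ("Suzuki", "555-0101"), ("Tanaka", "555-0102")]
letter = nearest_index(193.0)  # a touch in the outer region 16 beside "S"
print(letter, contacts_for(letter, contacts))
# S [('Sato', '555-0100'), ('Suzuki', '555-0101')]
```

Calling `nearest_index` on every contact sample during a slide gives the behaviour in which the highlighted index follows the finger along the marks 58.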

- FIG. 9A, 9B, and 9C are diagrams illustrating a second application example of the portable information terminal 54.

- in the portable information terminal 54 shown in FIG. 9A, a strip-shaped indicator image 34 extending horizontally along the edge of the display 12a is arranged at the lower side of the screen. When the user touches the indicator image 34 with the finger 32 and slides the finger 32 from the lower edge of the display 12a toward the center, the process execution unit 28 displays the first-stage portion of the operation panel image 36 on the display 12a, as shown in FIG. 9B.

- the process execution unit 28 changes the display content of the display 12a so that the indicator image 34 extends in the vertical direction to become the operation panel image 36, for example.

- an operation panel image 36 in which the second-stage portion is arranged below the first-stage portion is displayed on the display 12a, as shown in FIG. 9C.

- the process execution unit 28 controls the display 12a so that the operation panel image 36 disappears from the display 12a.

- the process execution unit 28 may display a transition in which the operation panel image 36 appears from the edge of the display 12a where the indicator image 34 is arranged.

- the process execution unit 28 may display the first-stage portion of the operation panel image 36 on the display 12a in response to one slide operation and, when it receives the same slide operation again, display the operation panel image 36 up to the second-stage portion on the display 12a.

- the process execution unit 28 may control, according to the movement amount (or movement speed) of the finger 32 in the slide operation, whether to display only the first-stage portion of the operation panel image 36 or the operation panel image 36 up to the second-stage portion on the display 12a.

- the contents displayed as the first-stage portion and the second-stage portion of the operation panel image 36 may be divided according to a predetermined priority order.

- FIGS. 9A, 9B, and 9C show an example of an operation during playback of moving image content.

- both the content displayed as the first-stage portion of the operation panel image 36 and the content displayed as the second-stage portion relate to moving image playback.

- information representing the current playback status of the moving image, such as the current chapter, elapsed time, and timeline, appears in the first-stage portion, and function buttons such as pause, play, stop, fast forward, fast rewind, repeat, and help appear in the second-stage portion.

- the portable information terminal 54 of this application example may change the contents displayed in the first stage part and the second stage part and the setting of the function buttons according to the setting operation received from the user.

- the process execution unit 28 may control the display 12a so that the operation panel image 36 disappears from the display 12a. Further, when the user touches an area outside the operation panel image 36, the process execution unit 28 may control the display 12a so that the operation panel image 36 disappears from the display 12a. Further, when the user slides the finger 32 in the direction opposite to the direction in which the operation panel image 36 was brought out, the process execution unit 28 may control the display 12a so that the operation panel image 36 disappears from the display 12a.

- the process execution unit 28 may control the display 12a so that, according to the movement amount (or movement speed) of the finger 32 in the slide operation, the operation panel image 36 disappears from the display 12a step by step, first the second-stage portion and then the first-stage portion.

- the user's slide operation when the operation panel image 36 is displayed on the display 12a is not limited to the above-described operation.

- the process execution unit 28 may display the operation panel image 36 on the display 12a in response to, for example, an operation of a button provided outside the display 12a, a slide operation of the finger 32 from the lower edge of the display 12a toward the center at a position outside the indicator image 34, or a slide operation into the display 12a from the touch sensor area outside the display 12a.
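Choosing how many stages of the operation panel image 36 to show from the movement amount of the slide, and removing them in reverse order (second stage first) on a slide back, can be sketched as a simple threshold count. The threshold values and function names are hypothetical; the text gives no values and allows movement speed in place of distance. For the reveal, `travel` is assumed to be the upward distance from the starting point (start y minus current y, with y growing downward); for dismissal, it is the downward distance.

```python
from typing import Sequence

# Hypothetical thresholds (sensor units) at which each stage of the panel appears.
STAGE_THRESHOLDS: Sequence[float] = (40.0, 120.0)


def visible_stages(travel: float, thresholds: Sequence[float] = STAGE_THRESHOLDS) -> int:
    """Stages to show after an upward slide of `travel` units from the lower edge.

    0 = hidden, 1 = first-stage portion only, 2 = up to the second-stage portion.
    """
    return sum(1 for t in thresholds if travel >= t)


def stages_after_dismiss(current: int, travel: float,
                         thresholds: Sequence[float] = STAGE_THRESHOLDS) -> int:
    """Hide stages in reverse order (second stage first) for a downward slide."""
    return max(0, current - visible_stages(travel, thresholds))


print([visible_stages(d) for d in (10.0, 50.0, 150.0)])              # [0, 1, 2]
print(stages_after_dismiss(2, 50.0), stages_after_dismiss(2, 150.0))  # 1 0
```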

- the present invention is not limited to the embodiment, usage examples, and application examples described above.

- some of the functions described in the above-described embodiments, usage examples, and application examples may be combined.

- the following operations can be performed by combining the first usage example and the second usage example.

- the process execution unit 28 may highlight the option 42 with the shortest distance from the position corresponding to the contact position data received by the detection result receiving unit 26. The user may then slide the finger 32 further to the left without lifting it, and the process execution unit 28 may execute the process corresponding to the highlighted option 42. In this way, the user can display the menu image 40 and select an option 42 in one continuous operation without lifting the finger 32 from the display 12a.

- the process execution unit 28 may display, on the display surface of the display 12a, a straight line or curve obtained by interpolating the positions indicated by the contact position data that the detection result receiving unit 26 sequentially receives in response to the user's slide operation on the detection surface of the touch sensor 12b.

- the process execution unit 28 may display, on the display surface of the display 12a, a line obtained by interpolating positions that include those indicated by contact position data corresponding to positions in the outer region 16.

- the touch sensor 12b may detect both the contact position of the object and the pressing force.

- the touch sensor 12b need not detect the position of an object only when the object touches the detection surface; it may also detect the position of the object relative to the detection surface when the object comes within a detectable range of the detection surface.

- the width of the touch sensor 12b protruding from the display 12a may be different for each side of the display 12a.

- the touch sensor 12b need not protrude from the display 12a on every side of the display 12a.

- the touch sensor 12b need not cover the entire display surface of the display 12a.

- the display 12a may be disposed closer to the housing 10 than the touch sensor 12b, or the touch sensor 12b may be disposed closer to the housing 10 than the display 12a.

- the present embodiment may be applied to an information processing system other than the portable game machine 1.

- the present embodiment may be applied to an information processing system in which the operation input device including the touch panel 12 and the information processing device functioning as the detection result receiving unit 26 and the process execution unit 28 are in separate housings and are connected by a cable or the like.
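The variation in which a line interpolating the sequentially received contact positions is displayed can be sketched with piecewise-linear interpolation. The text allows a straight line or a curve; linear interpolation is simply the easiest choice here, and the step size, coordinate system (display from (0, 0) to (width, height), outer region at negative or larger coordinates), and function names are assumptions. Samples in the outer region 16 are kept when building the line, and only the part that falls on the display surface is returned for drawing.

```python
import math
from typing import List, Sequence, Tuple

Point = Tuple[float, float]


def interpolate(samples: Sequence[Point], step: float = 5.0) -> List[Point]:
    """Piecewise-linear interpolation: gaps between output points are at most `step`."""
    if not samples:
        return []
    out: List[Point] = [samples[0]]
    for (x0, y0), (x1, y1) in zip(samples, samples[1:]):
        n = max(1, math.ceil(math.hypot(x1 - x0, y1 - y0) / step))
        for k in range(1, n + 1):
            t = k / n
            out.append((x0 + (x1 - x0) * t, y0 + (y1 - y0) * t))
    return out


def on_display(points: Sequence[Point], width: float, height: float) -> List[Point]:
    """Keep only the points that fall on the display surface (0..width, 0..height)."""
    return [(x, y) for x, y in points if 0 <= x <= width and 0 <= y <= height]


# The first sample lies in the outer region 16 (x < 0) but still shapes the line.
line = interpolate([(-10.0, 0.0), (10.0, 0.0)], step=5.0)
print(on_display(line, 480.0, 272.0))  # [(0.0, 0.0), (5.0, 0.0), (10.0, 0.0)]
```

A spline such as Catmull-Rom could replace `interpolate` to draw a curve instead of straight segments; the clipping step would stay the same.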

Abstract

Description

Usage examples of the portable game machine 1 according to the present embodiment are described below.

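FIGS. 5A, 5B, and 5C are diagrams illustrating a second usage example of the portable game machine 1. In the portable game machine 1 shown in FIG. 5A, a game screen 30 is displayed on the display 12a. When the user touches the game screen 30 with a finger 32 or the like, the process execution unit 28 executes a process corresponding to the touched position. In FIGS. 5A, 5B, and 5C, the rightward horizontal direction is the X-axis direction and the downward vertical direction is the Y-axis direction.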

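FIG. 6 is a diagram illustrating a third usage example of the portable game machine 1. In the third usage example, in the initial state, a game screen 30 similar to that of FIG. 5A, for example, is displayed on the display 12a. When the user touches the game screen 30 with the finger 32 or the like, the process execution unit 28 executes a game-related process corresponding to the touched position.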

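FIG. 7 is a diagram illustrating a fourth usage example of the portable game machine 1. In the portable game machine 1 shown in FIG. 7, an icon display screen 52 including a plurality of icons 50 is displayed on the display 12a.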

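Here, an application example in which the present embodiment is applied to a portable information terminal 54 such as a mobile phone is described. FIG. 8 is a diagram illustrating a first application example of the present embodiment. In the portable information terminal 54 shown in FIG. 8, index information 56 is arranged in a vertical line on the right side of the display 12a, and marks 58 are arranged in a vertical line in the outer region 16 on the right side of the display 12a. In this application example, the index information 56 (for example, characters) and the marks 58 correspond one-to-one, and each piece of index information 56 is arranged side by side with its corresponding mark 58. In addition, a plurality of pieces of personal information, each including a person's name, telephone number, and the like, are registered in advance in the storage unit of the portable information terminal 54 shown in FIG. 8.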

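Here, an application example in which the present embodiment is applied to a portable information terminal 54 having a moving image playback function is described.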

Claims (22)

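- An information processing system comprising:

a display unit;

a touch sensor that detects a position of an object on a detection surface, the detection surface being provided in a region including an inner region occupying at least part of a display surface of the display unit and an outer region that is adjacent to the inner region and lies outside the display surface; and

process execution means for executing a process based on a position detected by the touch sensor that corresponds to a position in the inner region and a position detected by the touch sensor that corresponds to a position in the outer region.

- The information processing system according to claim 1, wherein

the touch sensor sequentially detects the position of the object, and

the process execution means executes a process based on a history of positions detected by the touch sensor.

- The information processing system according to claim 2, wherein the process execution means executes different processes when the history of positions detected by the touch sensor includes both a position corresponding to a position in the inner region and a position corresponding to a position in the outer region and when the history of positions detected by the touch sensor includes only positions corresponding to positions in the inner region.

- The information processing system according to claim 2, wherein the process execution means executes a predetermined process when, after the touch sensor detects a position corresponding to one of a position in the inner region and a position in the outer region, the touch sensor detects a position corresponding to the other.

- The information processing system according to claim 1, wherein the process execution means executes a process of displaying information at a position in the display unit specified based on a position detected by the touch sensor.

- An operation input device comprising:

a display unit; and

a touch sensor that detects a position of an object on a detection surface, the detection surface being provided in a region including an inner region occupying at least part of a display surface of the display unit and an outer region that is adjacent to the inner region and lies outside the display surface,

wherein the touch sensor outputs data corresponding to a detection result of the touch sensor to process execution means that executes a process based on a position detected by the touch sensor that corresponds to a position in the inner region and a position detected by the touch sensor that corresponds to a position in the outer region.

- An information processing device comprising:

process execution means for executing a process based on a position corresponding to a position in an inner region and a position corresponding to a position in an outer region, the positions being detected by a touch sensor that detects a position of an object on a detection surface, the detection surface being provided in a region including the inner region, which occupies at least part of a display surface of a display unit, and the outer region, which is adjacent to the inner region and lies outside the display surface.

- An information processing method comprising:

a process execution step of executing a process based on a position corresponding to a position in an inner region and a position corresponding to a position in an outer region, the positions being detected by a touch sensor that detects a position of an object on a detection surface, the detection surface being provided in a region including the inner region, which occupies at least part of a display surface of a display unit, and the outer region, which is adjacent to the inner region and lies outside the display surface.

- A program causing a computer to function as:

process execution means for executing a process based on a position corresponding to a position in an inner region and a position corresponding to a position in an outer region, the positions being detected by a touch sensor that detects a position of an object on a detection surface, the detection surface being provided in a region including the inner region, which occupies at least part of a display surface of a display unit, and the outer region, which is adjacent to the inner region and lies outside the display surface.

- A computer-readable information storage medium storing a program causing a computer to function as:

process execution means for executing a process based on a position corresponding to a position in an inner region and a position corresponding to a position in an outer region, the positions being detected by a touch sensor that detects a position of an object on a detection surface, the detection surface being provided in a region including the inner region, which occupies at least part of a display surface of a display unit, and the outer region, which is adjacent to the inner region and lies outside the display surface.

- An information processing device comprising:

display processing execution means for displaying a plurality of options side by side along an edge of a display surface of a display unit; and

selection processing execution means for executing a process corresponding to at least one of the plurality of options, the at least one option being specified based on a position detected by a touch sensor that detects a position of an object on a detection surface, the detection surface being provided in a region including an inner region that is at least part of the display surface and an outer region that is adjacent to the inner region and lies outside the display surface.

- The information processing device according to claim 11, wherein, when the touch sensor detects a detection result corresponding to movement of the object whose position is detected by the touch sensor along a direction connecting a central portion of the display surface and the edge of the display surface along which the plurality of options are displayed, the selection processing execution means executes a process based on at least one of the plurality of options displayed on the display surface, the at least one option being specified based on a distance between the options and the position of the object.

- The information processing device according to claim 11, wherein, when the touch sensor detects a position corresponding to an edge of the display surface, the display processing execution means displays the plurality of options side by side along that edge of the display surface.

- The information processing device according to claim 11, wherein the display processing execution means displays, on the display unit, an option specified based on a relationship between a position detected by the touch sensor and the positions at which the options are displayed, in a manner different from the other options.

- The information processing device according to claim 14, wherein, when the touch sensor detects a detection result corresponding to movement of the object by a user in a direction along the edge of the display surface along which the plurality of options are displayed, the display processing execution means changes, according to the detection result, the option displayed in a manner different from the other options.

- The information processing device according to claim 11, wherein

the display processing execution means displays the plurality of options side by side along an edge of the display surface when the touch sensor detects a position corresponding to that edge of the display surface,

after the plurality of options are displayed side by side on the display unit, the display processing execution means displays, on the display unit, an option specified based on a relationship between a position detected by the touch sensor and the positions at which the options are displayed, in a manner different from the other options, and

when the touch sensor detects a detection result corresponding to movement of the object by a user along a direction connecting a central portion of the display surface and the edge of the display surface along which the plurality of options are displayed, the selection processing execution means executes a process based on the option displayed in the manner different from the other options.

- The information processing device according to claim 11, wherein

the options are icons corresponding to programs, and

the selection processing execution means executes a process of starting the program corresponding to the specified option.

- An operation input device comprising:

a display unit; and

a touch sensor that detects a position of an object on a detection surface, the detection surface being provided in a region including an inner region that is at least part of a display surface of the display unit and an outer region that is adjacent to the inner region and lies outside the display surface,

wherein the touch sensor outputs data indicating a detection result to an information processing device including: display processing execution means for displaying a plurality of options side by side along an edge of the display surface of the display unit; and selection processing execution means for executing a process corresponding to at least one of the plurality of options, the at least one option being specified based on a position detected by a touch sensor that detects a position of an object on a detection surface provided in a region including an inner region that is at least part of the display surface and an outer region that is adjacent to the inner region and lies outside the display surface.

- An information processing system comprising:

a display unit;

a touch sensor that detects a position of an object on a detection surface, the detection surface being provided in a region including an inner region that is at least part of a display surface of the display unit and an outer region that is adjacent to the inner region and lies outside the display surface;

display processing execution means for displaying a plurality of options side by side along an edge of the display surface of the display unit; and

selection processing execution means for executing a process corresponding to at least one of the plurality of options, the at least one option being specified based on a position detected by a touch sensor that detects a position of an object on a detection surface provided in a region including an inner region that is at least part of the display surface and an outer region that is adjacent to the inner region and lies outside the display surface.

- An information processing method comprising:

a display processing execution step of displaying a plurality of options side by side along an edge of a display surface of a display unit; and

a selection processing execution step of executing a process corresponding to at least one of the plurality of options, the at least one option being specified based on a position detected by a touch sensor that detects a position of an object on a detection surface, the detection surface being provided in a region including an inner region that is at least part of the display surface and an outer region that is adjacent to the inner region and lies outside the display surface.

- A program causing a computer to function as:

display processing execution means for displaying a plurality of options side by side along an edge of a display surface of a display unit; and

selection processing execution means for executing a process corresponding to at least one of the plurality of options, the at least one option being specified based on a position detected by a touch sensor that detects a position of an object on a detection surface, the detection surface being provided in a region including an inner region that is at least part of the display surface and an outer region that is adjacent to the inner region and lies outside the display surface.

- A computer-readable information storage medium storing a program causing a computer to function as:

display processing execution means for displaying a plurality of options side by side along an edge of a display surface of a display unit; and

selection processing execution means for executing a process corresponding to at least one of the plurality of options, the at least one option being specified based on a position detected by a touch sensor that detects a position of an object on a detection surface, the detection surface being provided in a region including an inner region that is at least part of the display surface and an outer region that is adjacent to the inner region and lies outside the display surface.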

Priority Applications (4)

| Application Number | Priority Date | Filing Date | Title |
|---|---|---|---|
| EP11765254.5A EP2557484B1 (en) | 2010-04-09 | 2011-01-13 | Information processing system, operation input device, information processing device, information processing method, program and information storage medium |
| CN201180028059.6A CN102934067B (zh) | 2010-04-09 | 2011-01-13 | Information processing system, operation input device, information processing device, information processing method |
| US13/639,612 US20130088450A1 (en) | 2010-04-09 | 2011-01-13 | Information processing system, operation input device, information processing device, information processing method, program, and information storage medium |
| KR1020127029104A KR101455690B1 (ko) | 2010-04-09 | 2011-01-13 | Information processing system, operation input device, information processing device, information processing method, program, and information storage medium |

Applications Claiming Priority (4)

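| Application Number | Priority Date | Filing Date | Title |
|---|---|---|---|
| JP2010-090931 | 2010-04-09 | | |
| JP2010090932A JP5653062B2 (ja) | 2010-04-09 | 2010-04-09 | Information processing device, operation input device, information processing system, information processing method, program, and information storage medium |
| JP2010090931A JP5529616B2 (ja) | 2010-04-09 | 2010-04-09 | Information processing system, operation input device, information processing device, information processing method, program, and information storage medium |
| JP2010-090932 | 2010-04-09 | | |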

Publications (1)

| Publication Number | Publication Date |
|---|---|
| WO2011125352A1 true WO2011125352A1 (ja) | 2011-10-13 |

Family ID=44762319

Family Applications (1)

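| Application Number | Title | Priority Date | Filing Date |
|---|---|---|---|
| PCT/JP2011/050443 WO2011125352A1 (ja) | Information processing system, operation input device, information processing device, information processing method, program, and information storage medium | 2010-04-09 | 2011-01-13 |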

Country Status (5)

| Country | Link |
|---|---|
| US (1) | US20130088450A1 (ja) |
| EP (1) | EP2557484B1 (ja) |
| KR (1) | KR101455690B1 (ja) |
| CN (1) | CN102934067B (ja) |
| WO (1) | WO2011125352A1 (ja) |


Families Citing this family (22)

| Publication number | Priority date | Publication date | Assignee | Title |
|---|---|---|---|---|
| US20140223381A1 (en) * | 2011-05-23 | 2014-08-07 | Microsoft Corporation | Invisible control |
| WO2012167735A1 (zh) * | 2011-06-07 | 2012-12-13 | 联想(北京)有限公司 | Electronic device, touch input method, and control method |
| US9417754B2 (en) | 2011-08-05 | 2016-08-16 | P4tents1, LLC | User interface system, method, and computer program product |
| KR101924835B1 (ko) * | 2011-10-10 | 2018-12-05 | 삼성전자주식회사 | Method and apparatus for operating a function of a touch device |
| US20130167059A1 (en) * | 2011-12-21 | 2013-06-27 | New Commerce Solutions Inc. | User interface for displaying and refining search results |
| US10248278B2 (en) * | 2011-12-30 | 2019-04-02 | Nokia Technologies Oy | Method and apparatus for intuitive multitasking |
| EP2735955B1 (en) * | 2012-11-21 | 2018-08-15 | Océ-Technologies B.V. | Method and system for selecting a digital object on a user interface screen |
| CN110413175A (zh) * | 2013-07-12 | 2019-11-05 | 索尼公司 | Information processing device, information processing method, and non-transitory computer-readable medium |
| US10451874B2 (en) | 2013-09-25 | 2019-10-22 | Seiko Epson Corporation | Image display device, method of controlling image display device, computer program, and image display system |
| JP6334125B2 (ja) * | 2013-10-11 | 2018-05-30 | 任天堂株式会社 | Display control program, display control device, display control system, and display control method |
| USD749117S1 (en) * | 2013-11-25 | 2016-02-09 | Tencent Technology (Shenzhen) Company Limited | Graphical user interface for a portion of a display screen |
| USD733745S1 (en) * | 2013-11-25 | 2015-07-07 | Tencent Technology (Shenzhen) Company Limited | Portion of a display screen with graphical user interface |
| CN108733302B (zh) * | 2014-03-27 | 2020-11-06 | 原相科技股份有限公司 | Gesture triggering method |
| KR20160098752A (ko) * | 2015-02-11 | 2016-08-19 | 삼성전자주식회사 | Display apparatus, display method, and computer-readable recording medium |
| KR20160117098A (ko) * | 2015-03-31 | 2016-10-10 | 삼성전자주식회사 | Electronic device and display method thereof |
| CN106325726B (zh) * | 2015-06-30 | 2019-12-13 | 中强光电股份有限公司 | Touch interaction method |
| KR102481878B1 (ko) * | 2015-10-12 | 2022-12-28 | 삼성전자주식회사 | Portable device and screen display method of portable device |
| US20170195736A1 (en) * | 2015-12-31 | 2017-07-06 | Opentv, Inc. | Systems and methods for enabling transitions between items of content |
| WO2019073546A1 (ja) * | 2017-10-11 | 2019-04-18 | 三菱電機株式会社 | Operation input device, information processing system, and operation determination method |
| US11409430B2 (en) * | 2018-11-02 | 2022-08-09 | Benjamin Firooz Ghassabian | Screen stabilizer |
| JP7212071B2 (ja) * | 2019-01-24 | 2023-01-24 | 株式会社ソニー・インタラクティブエンタテインメント | Information processing device, control method for information processing device, and program |
| US10908811B1 (en) * | 2019-12-17 | 2021-02-02 | Dell Products, L.P. | System and method for improving a graphical menu |

Citations (5)

| Publication number | Priority date | Publication date | Assignee | Title |
|---|---|---|---|---|
| JP2003087673A (ja) | 2001-09-06 | 2003-03-20 | Sony Corp | Video display device |
| JP2005321964A (ja) * | 2004-05-07 | 2005-11-17 | Pentax Corp | Input device with touch panel |
| JP2008532185A (ja) * | 2005-03-04 | 2008-08-14 | アップル インコーポレイテッド | Handheld electronic device with multi-touch sensing device |
| JP2009042993A (ja) * | 2007-08-08 | 2009-02-26 | Hitachi Ltd | Screen display device |
| JP2010049679A (ja) * | 2008-08-22 | 2010-03-04 | Fuji Xerox Co Ltd | Information processing apparatus, information processing method, and computer program |

Family Cites Families (12)

| Publication number | Priority date | Publication date | Assignee | Title |
|---|---|---|---|---|
| JPH0452723A (ja) * | 1990-06-14 | 1992-02-20 | Sony Corp | Coordinate data input device |
| JP4686870B2 (ja) * | 2001-02-28 | 2011-05-25 | ソニー株式会社 | Portable information terminal device, information processing method, program recording medium, and program |
| US7656393B2 (en) * | 2005-03-04 | 2010-02-02 | Apple Inc. | Electronic device having display and surrounding touch sensitive bezel for user interface and control |
| CN101133385B (zh) * | 2005-03-04 | 2014-05-07 | 苹果公司 | Handheld electronic device, handheld device, and operating method thereof |
| US20090278806A1 (en) * | 2008-05-06 | 2009-11-12 | Matias Gonzalo Duarte | Extended touch-sensitive control area for electronic device |
| US8564543B2 (en) * | 2006-09-11 | 2013-10-22 | Apple Inc. | Media player with imaged based browsing |
| JP2008204402A (ja) * | 2007-02-22 | 2008-09-04 | Eastman Kodak Co | User interface device |
| TWI417764B (zh) * | 2007-10-01 | 2013-12-01 | Giga Byte Comm Inc | A control method and a device for performing a switching function of a touch screen of a hand-held electronic device |
| US20100031202A1 (en) * | 2008-08-04 | 2010-02-04 | Microsoft Corporation | User-defined gesture set for surface computing |
| KR20100078295A (ko) * | 2008-12-30 | 2010-07-08 | 삼성전자주식회사 | Method and apparatus for controlling operation of a portable terminal using heterogeneous touch areas |
| US9965165B2 (en) * | 2010-02-19 | 2018-05-08 | Microsoft Technology Licensing, Llc | Multi-finger gestures |
| US9310994B2 (en) * | 2010-02-19 | 2016-04-12 | Microsoft Technology Licensing, Llc | Use of bezel as an input mechanism |


2011

- 2011-01-13 EP EP11765254.5A patent/EP2557484B1/en active Active

- 2011-01-13 WO PCT/JP2011/050443 patent/WO2011125352A1/ja active Application Filing

- 2011-01-13 CN CN201180028059.6A patent/CN102934067B/zh active Active

- 2011-01-13 US US13/639,612 patent/US20130088450A1/en not_active Abandoned

- 2011-01-13 KR KR1020127029104A patent/KR101455690B1/ko active IP Right Grant


Non-Patent Citations (1)

| Title |
|---|
| See also references of EP2557484A4 |

Cited By (4)

| Publication number | Priority date | Publication date | Assignee | Title |
|---|---|---|---|---|
| WO2013170233A1 (en) * | 2012-05-11 | 2013-11-14 | Perceptive Pixel Inc. | Overscan display device and method of using the same |
| US9098192B2 (en) | 2012-05-11 | 2015-08-04 | Perceptive Pixel, Inc. | Overscan display device and method of using the same |
| EP2930594A4 (en) * | 2012-12-06 | 2016-07-13 | Nippon Electric Glass Co | DISPLAY DEVICE |
| TWI578199B (zh) * | 2012-12-06 | 2017-04-11 | Nippon Electric Glass Co | Display device |

Also Published As

| Publication number | Publication date |
|---|---|
| EP2557484A1 (en) | 2013-02-13 |
| CN102934067A (zh) | 2013-02-13 |
| CN102934067B (zh) | 2016-07-13 |
| EP2557484B1 (en) | 2017-12-06 |
| EP2557484A4 (en) | 2015-12-02 |
| KR20130005300A (ko) | 2013-01-15 |
| KR101455690B1 (ko) | 2014-11-03 |
| US20130088450A1 (en) | 2013-04-11 |


Legal Events

| Date | Code | Title | Description |
|---|---|---|---|
| | WWE | WIPO information: entry into national phase | Ref document number: 201180028059.6; Country of ref document: CN |
| | 121 | EP: the EPO has been informed by WIPO that EP was designated in this application | Ref document number: 11765254; Country of ref document: EP; Kind code of ref document: A1 |
| | WWE | WIPO information: entry into national phase | Ref document number: 13639612; Country of ref document: US. Ref document number: 2011765254; Country of ref document: EP |
| | NENP | Non-entry into the national phase | Ref country code: DE |
| | ENP | Entry into the national phase | Ref document number: 20127029104; Country of ref document: KR; Kind code of ref document: A |