EP0976030B1 - Instruction folding in a stack-based processor - Google Patents

Instruction folding in a stack-based processor

- Publication number

- EP0976030B1

- Authority

- EP

- European Patent Office

- Prior art keywords

- stack

- instruction

- virtual machine

- operand

- value

- Prior art date

- Legal status (The legal status is an assumption and is not a legal conclusion. Google has not performed a legal analysis and makes no representation as to the accuracy of the status listed.)

- Expired - Lifetime

Classifications

-

- G—PHYSICS

- G06—COMPUTING; CALCULATING OR COUNTING

- G06F—ELECTRIC DIGITAL DATA PROCESSING

- G06F9/00—Arrangements for program control, e.g. control units

- G06F9/06—Arrangements for program control, e.g. control units using stored programs, i.e. using an internal store of processing equipment to receive or retain programs

- G06F9/30—Arrangements for executing machine instructions, e.g. instruction decode

-

- G—PHYSICS

- G06—COMPUTING; CALCULATING OR COUNTING

- G06F—ELECTRIC DIGITAL DATA PROCESSING

- G06F9/00—Arrangements for program control, e.g. control units

- G06F9/06—Arrangements for program control, e.g. control units using stored programs, i.e. using an internal store of processing equipment to receive or retain programs

- G06F9/30—Arrangements for executing machine instructions, e.g. instruction decode

- G06F9/30003—Arrangements for executing specific machine instructions

- G06F9/30007—Arrangements for executing specific machine instructions to perform operations on data operands

- G06F9/30021—Compare instructions, e.g. Greater-Than, Equal-To, MINMAX

-

- G—PHYSICS

- G06—COMPUTING; CALCULATING OR COUNTING

- G06F—ELECTRIC DIGITAL DATA PROCESSING

- G06F12/00—Accessing, addressing or allocating within memory systems or architectures

- G06F12/02—Addressing or allocation; Relocation

- G06F12/08—Addressing or allocation; Relocation in hierarchically structured memory systems, e.g. virtual memory systems

- G06F12/0802—Addressing of a memory level in which the access to the desired data or data block requires associative addressing means, e.g. caches

- G06F12/0875—Addressing of a memory level in which the access to the desired data or data block requires associative addressing means, e.g. caches with dedicated cache, e.g. instruction or stack

-

- G—PHYSICS

- G06—COMPUTING; CALCULATING OR COUNTING

- G06F—ELECTRIC DIGITAL DATA PROCESSING

- G06F9/00—Arrangements for program control, e.g. control units

- G06F9/06—Arrangements for program control, e.g. control units using stored programs, i.e. using an internal store of processing equipment to receive or retain programs

- G06F9/30—Arrangements for executing machine instructions, e.g. instruction decode

- G06F9/30098—Register arrangements

- G06F9/3012—Organisation of register space, e.g. banked or distributed register file

- G06F9/30134—Register stacks; shift registers

-

- G—PHYSICS

- G06—COMPUTING; CALCULATING OR COUNTING

- G06F—ELECTRIC DIGITAL DATA PROCESSING

- G06F9/00—Arrangements for program control, e.g. control units

- G06F9/06—Arrangements for program control, e.g. control units using stored programs, i.e. using an internal store of processing equipment to receive or retain programs

- G06F9/30—Arrangements for executing machine instructions, e.g. instruction decode

- G06F9/3017—Runtime instruction translation, e.g. macros

-

- G—PHYSICS

- G06—COMPUTING; CALCULATING OR COUNTING

- G06F—ELECTRIC DIGITAL DATA PROCESSING

- G06F9/00—Arrangements for program control, e.g. control units

- G06F9/06—Arrangements for program control, e.g. control units using stored programs, i.e. using an internal store of processing equipment to receive or retain programs

- G06F9/30—Arrangements for executing machine instructions, e.g. instruction decode

- G06F9/3017—Runtime instruction translation, e.g. macros

- G06F9/30174—Runtime instruction translation, e.g. macros for non-native instruction set, e.g. Javabyte, legacy code

-

- G—PHYSICS

- G06—COMPUTING; CALCULATING OR COUNTING

- G06F—ELECTRIC DIGITAL DATA PROCESSING

- G06F9/00—Arrangements for program control, e.g. control units

- G06F9/06—Arrangements for program control, e.g. control units using stored programs, i.e. using an internal store of processing equipment to receive or retain programs

- G06F9/30—Arrangements for executing machine instructions, e.g. instruction decode

- G06F9/30181—Instruction operation extension or modification

-

- G—PHYSICS

- G06—COMPUTING; CALCULATING OR COUNTING

- G06F—ELECTRIC DIGITAL DATA PROCESSING

- G06F9/00—Arrangements for program control, e.g. control units

- G06F9/06—Arrangements for program control, e.g. control units using stored programs, i.e. using an internal store of processing equipment to receive or retain programs

- G06F9/30—Arrangements for executing machine instructions, e.g. instruction decode

- G06F9/34—Addressing or accessing the instruction operand or the result ; Formation of operand address; Addressing modes

- G06F9/345—Addressing or accessing the instruction operand or the result ; Formation of operand address; Addressing modes of multiple operands or results

-

- G—PHYSICS

- G06—COMPUTING; CALCULATING OR COUNTING

- G06F—ELECTRIC DIGITAL DATA PROCESSING

- G06F9/00—Arrangements for program control, e.g. control units

- G06F9/06—Arrangements for program control, e.g. control units using stored programs, i.e. using an internal store of processing equipment to receive or retain programs

- G06F9/30—Arrangements for executing machine instructions, e.g. instruction decode

- G06F9/38—Concurrent instruction execution, e.g. pipeline, look ahead

- G06F9/3877—Concurrent instruction execution, e.g. pipeline, look ahead using a slave processor, e.g. coprocessor

- G06F9/3879—Concurrent instruction execution, e.g. pipeline, look ahead using a slave processor, e.g. coprocessor for non-native instruction execution, e.g. executing a command; for Java instruction set

-

- G—PHYSICS

- G06—COMPUTING; CALCULATING OR COUNTING

- G06F—ELECTRIC DIGITAL DATA PROCESSING

- G06F9/00—Arrangements for program control, e.g. control units

- G06F9/06—Arrangements for program control, e.g. control units using stored programs, i.e. using an internal store of processing equipment to receive or retain programs

- G06F9/44—Arrangements for executing specific programs

- G06F9/445—Program loading or initiating

- G06F9/44589—Program code verification, e.g. Java bytecode verification, proof-carrying code

-

- G—PHYSICS

- G06—COMPUTING; CALCULATING OR COUNTING

- G06F—ELECTRIC DIGITAL DATA PROCESSING

- G06F9/00—Arrangements for program control, e.g. control units

- G06F9/06—Arrangements for program control, e.g. control units using stored programs, i.e. using an internal store of processing equipment to receive or retain programs

- G06F9/44—Arrangements for executing specific programs

- G06F9/448—Execution paradigms, e.g. implementations of programming paradigms

- G06F9/4482—Procedural

- G06F9/4484—Executing subprograms

-

- G—PHYSICS

- G06—COMPUTING; CALCULATING OR COUNTING

- G06F—ELECTRIC DIGITAL DATA PROCESSING

- G06F9/00—Arrangements for program control, e.g. control units

- G06F9/06—Arrangements for program control, e.g. control units using stored programs, i.e. using an internal store of processing equipment to receive or retain programs

- G06F9/44—Arrangements for executing specific programs

- G06F9/448—Execution paradigms, e.g. implementations of programming paradigms

- G06F9/4488—Object-oriented

- G06F9/449—Object-oriented method invocation or resolution

-

- G—PHYSICS

- G06—COMPUTING; CALCULATING OR COUNTING

- G06F—ELECTRIC DIGITAL DATA PROCESSING

- G06F9/00—Arrangements for program control, e.g. control units

- G06F9/06—Arrangements for program control, e.g. control units using stored programs, i.e. using an internal store of processing equipment to receive or retain programs

- G06F9/44—Arrangements for executing specific programs

- G06F9/455—Emulation; Interpretation; Software simulation, e.g. virtualisation or emulation of application or operating system execution engines

- G06F9/45504—Abstract machines for programme code execution, e.g. Java virtual machine [JVM], interpreters, emulators

-

- G—PHYSICS

- G06—COMPUTING; CALCULATING OR COUNTING

- G06F—ELECTRIC DIGITAL DATA PROCESSING

- G06F2212/00—Indexing scheme relating to accessing, addressing or allocation within memory systems or architectures

- G06F2212/45—Caching of specific data in cache memory

- G06F2212/451—Stack data

-

- Y—GENERAL TAGGING OF NEW TECHNOLOGICAL DEVELOPMENTS; GENERAL TAGGING OF CROSS-SECTIONAL TECHNOLOGIES SPANNING OVER SEVERAL SECTIONS OF THE IPC; TECHNICAL SUBJECTS COVERED BY FORMER USPC CROSS-REFERENCE ART COLLECTIONS [XRACs] AND DIGESTS

- Y02—TECHNOLOGIES OR APPLICATIONS FOR MITIGATION OR ADAPTATION AGAINST CLIMATE CHANGE

- Y02D—CLIMATE CHANGE MITIGATION TECHNOLOGIES IN INFORMATION AND COMMUNICATION TECHNOLOGIES [ICT], I.E. INFORMATION AND COMMUNICATION TECHNOLOGIES AIMING AT THE REDUCTION OF THEIR OWN ENERGY USE

- Y02D10/00—Energy efficient computing, e.g. low power processors, power management or thermal management

Definitions

- The present invention relates to instruction decoders for a stack machine, and in particular, to methods and apparatus for folding a sequence of multiple instructions into a single folded operation.

- In addition to the public carrier network or Internet, many corporations and other businesses are shifting their internal information systems onto an intranet as a way of more effectively sharing information within a corporate or private network.

- the basic infrastructure for an intranet is an internal network connecting servers and desktops, which may or may not be connected to the Internet through a firewall. These intranets provide services to desktops via standard open network protocols which are well established in the industry. Intranets provide many benefits to the enterprises which employ them, such as simplified internal information management and improved internal communication using the browser paradigm. Integrating Internet technologies with a company's enterprise infrastructure and legacy systems also leverages existing technology investment for the party employing an intranet.

- intranets and the Internet are closely related, with intranets being used for internal and secure communications within the business and the Internet being used for external transactions between the business and the outside world.

- the term "networks" includes both the Internet and intranets. However, the distinction between the Internet and an intranet should be borne in mind where applicable.

- JAVA is a trademark of Sun Microsystems of Mountain View, CA.

- the JAVA programming language resulted from programming efforts which initially were intended to be coded in the C++ programming language; therefore, the JAVA programming language has many commonalities with the C++ programming language.

- the JAVA programming language is a simple, object-oriented, distributed, interpreted yet high performance, robust yet safe, secure, dynamic, architecture neutral, portable, and multi-threaded language.

- the JAVA programming language has emerged as the programming language of choice for the Internet as many large hardware and software companies have licensed it from Sun Microsystems.

- the JAVA programming language and environment are designed to solve a number of problems in modern programming practice.

- the JAVA programming language omits many rarely used, poorly understood, and confusing features of the C++ programming language. These omitted features primarily consist of operator overloading, multiple inheritance, and extensive automatic coercions.

- the JAVA programming language includes automatic garbage collection that simplifies the task of programming because it is no longer necessary to allocate and free memory as in the C programming language.

- the JAVA programming language restricts the use of pointers as defined in the C programming language, and instead has true arrays in which array bounds are explicitly checked, thereby eliminating vulnerability to many viruses and nasty bugs.

- the JAVA programming language includes objective-C interfaces and specific exception handlers.

- the JAVA programming language has an extensive library of routines for coping easily with TCP/IP protocol (Transmission Control Protocol based on Internet protocol), HTTP (Hypertext Transfer Protocol) and FTP (File Transfer Protocol).

- the JAVA programming language is intended to be used in networked/distributed environments.

- the JAVA programming language enables the construction of virus-free, tamper-free systems.

- the authentication techniques are based on public-key encryption.

- WO-A-94/27214 discloses a prior art method for decoding a sequence of guest instructions that eliminates the overhead of decoding and dispatching individual instructions in the sequence.

- EP-A-0 071 028 discloses a prior art instruction substitution mechanism to avoid Address Generate Interlock (AGI) problems associated with certain multiple instruction sequences.

- a JAVA virtual machine is a stack-oriented abstract computing machine, which, like a physical computing machine, has an instruction set and uses various storage areas.

- a JAVA virtual machine need not understand the JAVA programming language; instead it understands a class file format.

- a class file includes JAVA virtual machine instructions (or bytecodes) and a symbol table, as well as other ancillary information. Programs written in the JAVA programming language (or in other languages) may be compiled to produce a sequence of JAVA virtual machine instructions.

- instructions typically operate on data at the top of an operand stack.

- One or more first instructions such as a load from local variable instruction, are executed to push operand data onto the operand stack as a precursor to execution of an instruction which immediately follows such instruction(s).

- the instruction which follows e.g., an add operation, pops operand data from the top of the stack, operates on the operand data, and pushes a result onto the operand stack, replacing the operand data at the top of the operand stack.

- a suitably configured instruction decoder allows the folding away of instructions pushing an operand onto the top of a stack merely as a precursor to a second instruction which operates on the top of stack operand.

- the instruction decoder identifies foldable instruction sequences (typically 2, 3, or 4 instructions) and supplies an execution unit with an equivalent folded operation (typically a single operation) thereby reducing processing cycles otherwise required for execution of multiple operations corresponding to the multiple instructions of the folded instruction sequence.

- an instruction sequence including a pair of load instructions for loading integer operands from local variables to the top of stack

- an add instruction for popping the integer operands off the stack, adding them, and placing the result at the top of stack

- a store instruction for popping the result from the stack and storing the result in a local variable
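The load, load, add, store sequence described above can be compared with its folded equivalent in a minimal sketch. The mnemonics `iload`, `iadd`, and `istore` follow JAVA virtual machine naming, but the tuple encoding and the functions `run_unfolded` and `run_folded` are hypothetical and only illustrate the idea; they are not the patented decoder.

```python
def run_unfolded(program, local_vars):
    """Execute each instruction separately against an operand stack.

    Returns the number of operations executed (one per instruction).
    """
    stack, ops = [], 0
    for opcode, arg in program:
        if opcode == "iload":      # push a local variable onto the stack
            stack.append(local_vars[arg])
        elif opcode == "iadd":     # pop two operands, push their sum
            b, a = stack.pop(), stack.pop()
            stack.append(a + b)
        elif opcode == "istore":   # pop the result into a local variable
            local_vars[arg] = stack.pop()
        else:
            raise ValueError(f"unknown opcode {opcode!r}")
        ops += 1
    return ops


def run_folded(src1, src2, dst, local_vars):
    """One folded operation: read both sources and write the destination
    directly in randomly accessible local variable storage."""
    local_vars[dst] = local_vars[src1] + local_vars[src2]
    return 1
```

Under these assumptions both forms leave local variable storage in the same state, but the unfolded form executes four operations and the folded form executes one.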

- an apparatus for a virtual machine instruction processor wherein instructions generally source operands from, and target a result to, uppermost entries of an operand stack

- the apparatus comprising: a virtual machine instruction store; an operand stack; a data store wherein the data store includes local variable storage; an execution unit; and a virtual machine instruction decoder coupled to the virtual machine instruction store to identify a foldable sequence of virtual machine instructions represented therein, the foldable sequence including first and second virtual machine instructions, the first instruction for pushing a first operand value onto the operand stack from the data store merely as a first source operand for a second instruction, the virtual machine instruction decoder coupled to supply the execution unit with a single folded operation equivalent to the foldable sequence and including a first operand address identifier selective for the first operand value in the data store, thereby obviating an explicit operation corresponding to the first virtual machine instruction wherein said execution unit uses said first operand address identifier to access said first operand value from said data store.

- the instruction decoder supplies the execution unit with an operation identifier and operand address identifier corresponding to the first instruction only.

- the instruction decoder further identifies a third instruction in the foldable sequence.

- This third instruction is for pushing a second operand value onto the operand stack from the data store merely as a second source operand for the second instruction.

- the single folded operation is equivalent to the foldable sequence and includes a second operand address identifier selective for the second operand value in the data store, thereby obviating an explicit operation corresponding to the third instruction.

- the instruction decoder further identifies a fourth instruction in the foldable sequence.

- This fourth instruction is for popping a result of the second instruction from the operand stack and storing the result in a result location of the data store.

- the single folded operation is equivalent to the foldable sequence and includes a destination address identifier selective for the result location in the data store, thereby obviating an explicit operation corresponding to the fourth instruction.
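As a rough software sketch of the decoder behaviour described above, the function below looks at up to four instructions and, if they form a foldable sequence, returns one operation carrying operand and destination address identifiers. The `Operation` tuple, the `local[n]` and `stack` identifier strings, and the opcode sets are assumptions made for illustration; the source does not specify an encoding.

```python
from typing import NamedTuple, Optional

# Hypothetical opcode classes; a real decoder would cover many more opcodes.
LOADS, OPS, STORES = {"iload"}, {"iadd", "isub"}, {"istore"}


class Operation(NamedTuple):
    opcode: str
    src1: Optional[str]   # first operand address identifier
    src2: Optional[str]   # second operand address identifier
    dst: Optional[str]    # destination address identifier
    consumed: int         # number of virtual machine instructions covered


def local(index):
    return f"local[{index}]"


def decode(instrs, pc=0):
    """Decode at a valid pc, folding 2, 3, or 4 instructions when possible."""
    window = instrs[pc:pc + 4]
    i, loads = 0, []
    # Up to two leading loads may be folded away.
    while i < len(window) and i < 2 and window[i][0] in LOADS:
        loads.append(local(window[i][1]))
        i += 1
    if loads and i < len(window) and window[i][0] in OPS:
        opcode = window[i][0]
        i += 1
        dst = "stack"  # default: result goes to the top of the operand stack
        if i < len(window) and window[i][0] in STORES:
            dst = local(window[i][1])  # trailing store folded away as well
            i += 1
        # An operand that was not loaded still comes from the operand stack.
        src1, src2 = ["stack"] * (2 - len(loads)) + loads
        return Operation(opcode, src1, src2, dst, i)
    # Not foldable: supply an operation for the first instruction only.
    opcode, arg = window[0]
    if opcode in LOADS:
        return Operation(opcode, local(arg), None, "stack", 1)
    if opcode in STORES:
        return Operation(opcode, "stack", None, local(arg), 1)
    return Operation(opcode, "stack", "stack", "stack", 1)
```

For example, `decode([("iload", 0), ("iload", 1), ("iadd", None), ("istore", 2)])` yields a single `iadd` operation whose sources are `local[0]` and `local[1]` and whose destination is `local[2]`.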

- the instruction decoder includes normal and folded decode paths and switching means.

- the switching means are responsive to the folded decode path for selecting operation, operand, and destination identifiers from the folded decode path in response to a fold indication therefrom, and for otherwise selecting operation, operand, and destination identifiers from the normal decode path.

- the apparatus is for a virtual machine instruction processor wherein instructions generally source operands from, and target a result to, uppermost entries of an operand stack.

- the virtual machine instruction processor is a hardware virtual machine instruction processor and the instruction decoder includes decode logic.

- the virtual machine instruction processor includes a just-in-time compiler implementation and the instruction decoder includes software executable on a hardware processor.

- the hardware processor includes the execution unit.

- the virtual machine instruction processor includes a bytecode interpreter implementation and the instruction decoder includes software executable on a hardware processor.

- the hardware processor includes the execution unit.

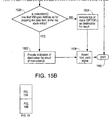

- a method for decoding virtual machine instructions in a virtual machine instruction processor wherein instructions generally source operands from, and target a result to, uppermost entries of an operand stack, the method comprising: (a) determining if a first virtual machine instruction of a virtual machine instruction sequence is an instruction for pushing a first operand value onto the operand stack from a data store merely as a first source operand for a second virtual machine instruction; and if the result of the (a) determining is affirmative, supplying an execution unit with a single folded operation equivalent to a foldable sequence comprising the first and second virtual machine instructions, the single folded operation including a first operand identifier selective for the first operand value, thereby obviating an explicit operation corresponding to the first instruction wherein said execution unit uses said first operand address identifier to access said first operand value from said data store.

- the method includes supplying, if the result of the (a) determining is negative, the execution unit with an operation equivalent to the first instruction in the virtual machine instruction sequence.

- the method includes (b) determining if a third instruction of the virtual machine instruction sequence is an instruction for popping a result value of the second instruction from the operand stack and storing the result value in a result location of the data store and, if the result of the (b) determining is affirmative, further including a result identifier selective for the result location with the equivalent single folded operation, thereby further obviating an explicit operation corresponding to the third instruction.

- if the result of the (b) determining is negative, the method includes a result identifier selective for a top location of the operand stack with the equivalent single folded operation.

- the (a) determining and the (b) determining are performed substantially in parallel.

- a stack-based virtual machine implementation includes a randomly-accessible operand stack representation, a randomly-accessible local variable storage representation, and a virtual machine instruction decoder for selectively decoding virtual machine instructions and folding together a selected sequence thereof to eliminate unnecessary temporary storage of operands on the operand stack.

- the stack-based virtual machine implementation (1) is a hardware virtual machine instruction processor including a hardware stack cache, a hardware instruction decoder, and an execution unit or (2) includes software encoded in a computer readable medium and executable on a hardware processor.

- in the hardware virtual machine instruction processor embodiment, (a) the randomly-accessible operand stack and local variable storage representations at least partially reside in the hardware stack cache, and (b) the virtual machine instruction decoder includes the hardware instruction decoder coupled to provide the execution unit with opcode, operand, and result identifiers respectively selective for a hardware virtual machine instruction processor operation and for locations in the hardware stack cache as a single hardware virtual machine instruction processor operation equivalent to the selected sequence of virtual machine instructions.

- the randomly-accessible operand stack and local variable storage representations at least partially reside in registers of the hardware processor

- the virtual machine instruction decoder is at least partially implemented in the software

- the virtual machine instruction decoder is coupled to provide opcode, operand, and result identifiers respectively selective for a hardware processor operation and for locations in the registers as a single hardware processor operation equivalent to the selected sequence of virtual machine instructions.

- a hardware virtual machine instruction decoder includes a normal decode path, a fold decode path, and switching means.

- the fold decode path is for decoding a sequence of virtual machine instructions and, if the sequence is foldable, supplying (a) a single operation identifier, (b) one or more operand identifiers; and (c) a destination identifier, which are together equivalent to the sequence of virtual machine instructions.

- the switching means is responsive to the folded decode path for selecting operation, operand, and destination identifiers from the folded decode path in response to a fold indication therefrom, and otherwise selecting operation, operand, and destination identifiers from the normal decode path.
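The relationship between the two decode paths and the switching means can be sketched in software as follows. This is only an illustration under stated assumptions: the function names `normal_path`, `fold_path`, and `select_decode` are hypothetical, the fold path here recognises only a load followed by an arithmetic instruction, and a hardware decoder would presumably evaluate both paths concurrently rather than one after the other.

```python
def normal_path(instrs):
    """Decode only the first instruction; returns (operation, operands, destination)."""
    opcode, arg = instrs[0]
    if opcode == "iload":
        return (opcode, [f"local[{arg}]"], "stack")
    if opcode == "istore":
        return (opcode, ["stack"], f"local[{arg}]")
    return (opcode, ["stack", "stack"], "stack")


def fold_path(instrs):
    """Return (fold_indication, decoded); decoded is None when nothing folds."""
    if (len(instrs) >= 2 and instrs[0][0] == "iload"
            and instrs[1][0] in ("iadd", "isub")):
        folded = (instrs[1][0], ["stack", f"local[{instrs[0][1]}]"], "stack")
        return True, folded
    return False, None


def select_decode(instrs):
    """Switching means: take the fold path's identifiers when it signals a fold,
    otherwise take the identifiers from the normal path."""
    fold_indication, folded = fold_path(instrs)
    normal = normal_path(instrs)
    return folded if fold_indication else normal
```

Keeping the normal path always available means a sequence that is not foldable costs nothing extra to decode; the fold indication simply selects between two results.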

- Figure 1 illustrates one embodiment of a virtual machine instruction hardware processor 100, hereinafter hardware processor 100, that includes an instruction decoder 135 for folding a sequence of multiple instructions into a single folded operation in accordance with the present invention, and that directly executes virtual machine instructions that are processor architecture independent.

- the performance of hardware processor 100 in executing virtual machine instructions is much better than high-end CPUs, such as the Intel PENTIUM microprocessor or the Sun Microsystems ULTRASPARC processor (ULTRASPARC is a trademark of Sun Microsystems of Mountain View, CA, and PENTIUM is a trademark of Intel Corp.).

- Hardware processor 100 provides similar advantages for other virtual machine stack-based architectures as well as for virtual machines utilizing features such as garbage collection, thread synchronization, etc.

- a system based on hardware processor 100 presents attractive price for performance characteristics, if not the best overall performance, as compared with alternative virtual machine execution environments including software interpreters and just-in-time compilers. Nonetheless, the present invention is not limited to virtual machine hardware processor embodiments, and encompasses any suitable stack-based, or non-stack-based, machine implementations, including implementations emulating the JAVA virtual machine as a software interpreter, compiling JAVA virtual machine instructions (either in batch or just-in-time) to machine instructions native to a particular hardware processor, or providing hardware implementing the JAVA virtual machine in microcode, directly in silicon, or in some combination thereof.

- hardware processor 100 has the advantage that the 250 Kilobytes to 500 Kilobytes (Kbytes) of memory storage, e.g., read-only memory or random access memory, typically required by a software interpreter, is eliminated.

- a simulation of hardware processor 100 showed that hardware processor 100 executes virtual machine instructions twenty times faster than a software interpreter running on a variety of applications on a PENTIUM processor clocked at the same clock rate as hardware processor 100, and executing the same virtual machine instructions.

- Another simulation of hardware processor 100 showed that hardware processor 100 executes virtual machine instructions five times faster than a just-in-time compiler running on a PENTIUM processor running at the same clock rate as hardware processor 100, and executing the same virtual machine instructions.

- hardware processor 100 is advantageous. These applications include, for example, an Internet chip for network appliances, a cellular telephone processor, other telecommunications integrated circuits, or other low-power, low-cost applications such as embedded processors, and portable devices.

- Instruction decoder 135, as described herein, allows the folding away of JAVA virtual machine instructions pushing an operand onto the top of a stack merely as a precursor to a second JAVA virtual machine instruction which operates on the top of stack operand.

- Such an instruction decoder identifies foldable instruction sequences and supplies an execution unit with a single equivalent folded operation thereby reducing processing cycles otherwise required for execution of multiple operations corresponding to the multiple instructions of the folded instruction sequence.

- Instruction decoder embodiments described herein provide for folding of two, three, four, or more instructions. For example, in one instruction decoder embodiment described herein, two load instructions and a store instruction can be folded into execution of an operation corresponding to an instruction appearing therebetween in the instruction sequence.

- a virtual machine is an abstract computing machine that, like a real computing machine, has an instruction set and uses various memory areas.

- a virtual machine specification defines a set of processor architecture independent virtual machine instructions that are executed by a virtual machine implementation, e.g., hardware processor 100.

- Each virtual machine instruction defines a specific operation that is to be performed.

- the virtual computing machine need not understand the computer language that is used to generate virtual machine instructions or the underlying implementation of the virtual machine. Only a particular file format for virtual machine instructions needs to be understood.

- the virtual machine instructions are JAVA virtual machine instructions.

- Each JAVA virtual machine instruction includes one or more bytes that encode instruction identifying information, operands, and any other required information.

- Appendix I which is incorporated herein by reference in its entirety, includes an illustrative set of the JAVA virtual machine instructions.

- the particular set of virtual machine instructions utilized is not an essential aspect of this invention.

- those of skill in the art can modify the invention for a particular set of virtual machine instructions, or for changes to the JAVA virtual machine specification.

- a JAVA compiler JAVAC (Fig. 2) that is executing on a computer platform converts an application 201 written in the JAVA computer language to an architecture neutral object file format encoding a compiled instruction sequence 203, according to the JAVA Virtual Machine Specification, that includes a compiled instruction set.

- a source of virtual machine instructions and related information is needed. The method or technique used to generate the source of virtual machine instructions and related information is not essential to this invention.

- Compiled instruction sequence 203 is executable on hardware processor 100 as well as on any computer platform that implements the JAVA virtual machine using, for example, a software interpreter or just-in-time compiler.

- hardware processor 100 provides significant performance advantages over the software implementations.

- hardware processor 100 processes the JAVA virtual machine instructions, which include bytecodes.

- Hardware processor 100 executes directly most of the bytecodes. However, execution of some of the bytecodes is implemented via microcode.

- firmware means microcode stored in ROM that when executed controls the operations of hardware processor 100.

- hardware processor 100 includes an I/O bus and memory interface unit 110, an instruction cache unit 120 including instruction cache 125, an instruction decode unit 130, a unified execution unit 140, a stack management unit 150 including stack cache 155, a data cache unit 160 including a data cache 165, and program counter and trap control logic 170. Each of these units is described more completely below.

- each unit includes several elements.

- the interconnections between elements within a unit are not shown in Figure 1.

- those of skill in the art will understand the interconnections and cooperation between the elements in a unit and between the various units.

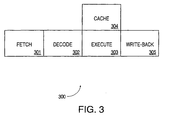

- the pipeline stages implemented using the units illustrated in Figure 1 include fetch, decode, execute, and write-back stages. If desired, extra stages for memory access or exception resolution are provided in hardware processor 100.

- Figure 3 is an illustration of a four-stage pipeline for execution of instructions in the exemplary embodiment of processor 100.

- In fetch stage 301, a virtual machine instruction is fetched and placed in instruction buffer 124 (Fig. 1).

- the virtual machine instruction is fetched from one of (i) a fixed size cache line from instruction cache 125 or (ii) microcode ROM 141 in execution unit 140.

- each virtual machine instruction is between one and five bytes long. Thus, to keep things simple, at least forty bits are required to guarantee that all of a given instruction is contained in the fetch.

- Another alternative is to always fetch a predetermined number of bytes, for example, four bytes, starting with the opcode. This is sufficient for 95% of JAVA virtual machine instructions (See Appendix I). For an instruction requiring more than three bytes of operands, another cycle in the front end must be tolerated if four bytes are fetched. In this case, the instruction execution can be started with the first operands fetched even if the full set of operands is not yet available.
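
The fetch-width arithmetic above can be checked with a minimal sketch. The function names are hypothetical, and the model assumes a fixed number of bytes is delivered per front-end cycle starting at the opcode; it is not a description of the actual fetch logic.

```python
import math

MAX_INSTRUCTION_BYTES = 5  # from the text: one to five bytes per instruction


def bits_for_full_fetch(max_bytes: int = MAX_INSTRUCTION_BYTES) -> int:
    """Bits needed so that any single instruction fits in one fetch."""
    return max_bytes * 8


def front_end_cycles(instruction_bytes: int, fetch_width_bytes: int = 4) -> int:
    """Front-end cycles needed to bring in a whole instruction
    (assumed model: fetch_width_bytes delivered per cycle)."""
    if not 1 <= instruction_bytes <= MAX_INSTRUCTION_BYTES:
        raise ValueError("instruction length must be 1..5 bytes")
    return math.ceil(instruction_bytes / fetch_width_bytes)
```

With a four-byte fetch, only an instruction with more than three operand bytes (five bytes in total) needs the second cycle.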

- In decode stage 302 ( Fig. 3 ), the virtual machine instruction at the front of instruction buffer 124 ( Fig. 1 ) is decoded and instruction folding is performed if possible.

- Stack cache 155 is accessed only if needed by the virtual machine instruction.

- Register OPTOP that contains a pointer OPTOP to a top of a stack 400 ( Figs. 4A and 4B ) is also updated in decode stage 302 ( Fig. 3 ).

- a register to store a pointer is illustrative only of one embodiment.

- the pointer may be implemented using a hardware register, a hardware counter, a software counter, a software pointer, or other equivalent embodiments known to those of skill in the art.

- the particular implementation selected is not essential to the invention, and typically is made based on a price to performance trade-off.

- In execute stage 303, the virtual machine instruction is executed for one or more cycles.

- an ALU in integer unit 142 ( Fig. 1 ) is used either to do an arithmetic computation or to calculate the address of a load or a store from data cache unit (DCU) 160. If necessary, traps are prioritized and taken at the end of execute stage 303 ( Fig. 3 ).

- the branch address is calculated in execute stage 303, as well as the condition upon which the branch is dependent.

- Cache stage 304 is a non-pipelined stage.

- Data cache 165 ( Fig. 1 ) is accessed if needed during execution stage 303 ( Fig. 3 ).

- The reason stage 304 is non-pipelined is that hardware processor 100 is a stack-based machine.

- the instruction following a load is almost always dependent on the value returned by the load. Consequently, in this embodiment, the pipeline is held for one cycle for a data cache access. This reduces the pipeline stages, and the die area taken by the pipeline for the extra registers and bypasses.

- Write-back stage 305 is the last stage in the pipeline. In stage 305, the calculated data is written back to stack cache 155.

- Hardware processor 100 directly implements a stack 400 ( Fig. 4A ) that supports the JAVA virtual machine stack-based architecture (See Appendix I).

- Sixty-four entries on stack 400 are contained on stack cache 155 in stack management unit 150. Some entries in stack 400 may be duplicated on stack cache 155. Operations on data are performed through stack cache 155.

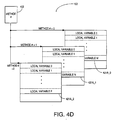

- Stack 400 of hardware processor 100 is primarily used as a repository of information for methods. At any point in time, hardware processor 100 is executing a single method. Each method has memory space, i.e., a method frame on stack 400, allocated for a set of local variables, an operand stack, and an execution environment structure.

- a new method frame e.g., method frame two 410

- Current frame 410 ( Fig. 4A ), as well as the other method frames, may contain a part of or all of the following six entities, depending on various method invoking situations:

- object reference, incoming arguments, and local variables are included in arguments and local variables area 421.

- the invoker's method context is included in execution environment 422, sometimes called frame state, that in turn includes: a return program counter value 431 that is the address of the virtual machine instruction, e.g., JAVA opcode, next to the method invoke instruction; a return frame 432 that is the location of the calling method's frame; a return constant pool pointer 433 that is a pointer to the calling method's constant pool table; a current method vector 434 that is the base address of the current method's vector table; and a current monitor address 435 that is the address of the current method's monitor.

- the object reference is an indirect pointer to an object-storage representing the object being targeted for the method invocation.

- JAVA compiler JAVAC See Fig. 2 .

- This object reference is accessible as local variable zero during the execution of the method.

- This indirect pointer is not available for a static method invocation as there is no target-object defined for a static method invocation.

- the list of incoming arguments transfers information from the calling method to the invoked method. Like the object reference, the incoming arguments are pushed onto stack 400 by JAVA compiler generated instructions and may be accessed as local variables.

- JAVA compiler JAVAC (See Fig. 2 ) statically generates a list of arguments for current method 410 ( Fig. 4A ), and hardware processor 100 determines the number of arguments from the list. When the object reference is present in the frame for a non-static method invocation, the first argument is accessible as local variable one. For a static method invocation, the first argument becomes local variable zero.

- the upper 32 bits, i.e., the 32 most significant bits, of a 64-bit entity are placed on the upper location of stack 400, i.e., pushed on the stack last.

- the upper 32-bit portion of the 64-bit entity is on the top of the stack, and the lower 32-bit portion of the 64-bit entity is in the storage location immediately adjacent to the top of stack 400.
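
The ordering of the two halves can be illustrated with a short sketch. It models the stack as a Python list whose last element is the top of stack; `push_long` and `pop_long` are hypothetical helper names, not instructions of the processor.

```python
MASK32 = 0xFFFFFFFF
MASK64 = 0xFFFFFFFFFFFFFFFF


def push_long(stack: list, value: int) -> None:
    """Push a 64-bit entity as two 32-bit entries."""
    value &= MASK64
    stack.append(value & MASK32)          # lower 32 bits are pushed first
    stack.append((value >> 32) & MASK32)  # upper 32 bits are pushed last (top of stack)


def pop_long(stack: list) -> int:
    """Pop two 32-bit entries and reassemble the 64-bit entity."""
    upper = stack.pop()  # top of stack holds the upper half
    lower = stack.pop()  # adjacent entry holds the lower half
    return (upper << 32) | lower
```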

- the local variable area on stack 400 ( Fig. 4A ) for current method 410 represents temporary variable storage space, which is allocated and remains effective during invocation of method 410.

- JAVA compiler JAVAC ( Fig. 2 ) statically determines the required number of local variables and hardware processor 100 allocates temporary variable storage space accordingly.

- When a method is executing on hardware processor 100, the local variables typically reside in stack cache 155 and are addressed as offsets from the pointer VARS ( Figs. 1 and 4A ), which points to the position of the local variable zero. Instructions are provided to load the values of local variables onto operand stack 423 and store values from operand stack into local variables area 421.
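
VARS-relative addressing can be sketched as follows. The `Frame` class, the flat `memory` list, and the choice that local variable i lives at `VARS + i` are assumptions made for illustration; the direction in which addresses grow is not specified in this passage.

```python
class Frame:
    """Toy model of a method frame with VARS-relative local variables."""

    def __init__(self, memory: list, vars_ptr: int, num_locals: int):
        self.memory = memory          # stands in for stack cache 155
        self.vars = vars_ptr          # pointer VARS: position of local variable zero
        self.num_locals = num_locals
        self.operand_stack = []       # kept as a separate list for simplicity

    def _address(self, index: int) -> int:
        if not 0 <= index < self.num_locals:
            raise IndexError("local variable index out of range")
        return self.vars + index      # assumed layout: increasing offsets from VARS

    def load_local(self, index: int) -> None:
        """Load a local variable onto the operand stack."""
        self.operand_stack.append(self.memory[self._address(index)])

    def store_local(self, index: int) -> None:
        """Store the top of the operand stack into a local variable."""
        self.memory[self._address(index)] = self.operand_stack.pop()
```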

- the information in execution environment 422 includes the invoker's method context.

- hardware processor 100 pushes the invoker's method context onto newly allocated frame 410, and later utilizes the information to restore the invoker's method context before returning.

- Pointer FRAME ( Figs. 1 and 4A ) is a pointer to the execution environment of the current method.

- each register in register set 144 ( Fig. 1 ) is 32-bits wide.

- Operand stack 423 is allocated to support the execution of the virtual machine instructions within the current method.

- Program counter register PC ( Fig. 1 ) contains the address of the next instruction, e.g., opcode, to be executed. Locations on operand stack 423 are used to store the operands of virtual machine instructions, providing both source and target storage locations for instruction execution.

- the size of operand stack 423 is statically determined by JAVA compiler JAVAC ( Fig. 2 ) and hardware processor 100 allocates space for operand stack 423 accordingly.

- Register OPTOP ( Figs. 1 and 4A ) holds a pointer to a top of operand stack 423.

- the invoked method may return its execution result onto the invoker's top of stack, so that the invoker can access the return value with operand stack references.

- the return value is placed on the area where an object reference or an argument is pushed before a method invocation.

- One way to speed up this process is for hardware processor 100 to load the execution environment in the background and indicate what has been loaded so far, e.g., simple one bit scoreboarding. Hardware processor 100 tries to execute the bytecodes of the called method as soon as possible, even though stack 400 is not completely loaded. If accesses are made to variables already loaded, overlapping of execution with loading of stack 400 is achieved, otherwise a hardware interlock occurs and hardware processor 100 just waits for the variable or variables in the execution environment to be loaded.
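
The one-bit scoreboarding idea can be sketched in software. The class below is a hypothetical model: it loads one execution environment entry per background step and counts a stall cycle whenever a read hits an entry whose bit is still clear. The field names follow the five entries listed for execution environment 422; the one-entry-per-step rate is an assumption.

```python
FIELDS = (
    "return_pc",
    "return_frame",
    "return_constant_pool",
    "current_method_vector",
    "current_monitor_address",
)


class ExecutionEnvironmentLoader:
    """Background loader with one 'loaded' bit per entry (simple scoreboard)."""

    def __init__(self, source: dict):
        self.source = source
        self.loaded = {name: False for name in FIELDS}  # the scoreboard bits
        self.values = {}
        self._pending = list(FIELDS)
        self.stall_cycles = 0

    def background_step(self) -> None:
        """Load the next pending entry, if any, and set its bit."""
        if self._pending:
            name = self._pending.pop(0)
            self.values[name] = self.source[name]
            self.loaded[name] = True

    def read(self, name: str):
        """Return an entry, interlocking (stalling) until it has been loaded."""
        while not self.loaded[name]:
            self.stall_cycles += 1
            self.background_step()
        return self.values[name]
```

Reads of entries that are already loaded overlap with the background load at no cost; only reads that run ahead of the loader accumulate stall cycles.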

- Figure 4B illustrates another way to accelerate method invocation.

- the execution environment of each method frame is stored separately from the local variable area and the operand stack of the method frame.

- stack 400B contains modified method frames, e.g., modified method frame 410B having only local variable area 421 and operand stack 423.

- Execution environment 422 of the method frame is stored in an execution environment memory 440. Storing the execution environment in execution environment memory 440 reduces the amount of data in stack cache 155. Therefore, the size of stack cache 155 can be reduced. Furthermore, execution environment memory 440 and stack cache 155 can be accessed simultaneously. Thus, method invocation can be accelerated by loading or storing the execution environment in parallel with loading or storing data onto stack 400B.

- the memory architecture of execution environment memory 440 is also a stack. As modified method frames are pushed onto stack 400B through stack cache 155, corresponding execution environments are pushed onto execution environment memory 440. For example, since modified method frames 0 to 2, as shown in Figure 4B , are in stack 400B, execution environments (EE) 0 to 2, respectively, are stored in execution environment memory circuit 440.

- an execution environment cache can be added to improve the speed of saving and retrieving the execution environment during method invocation.

- the architecture described more completely below for stack cache 155, dribble manager unit 151, and stack control unit 152 for caching stack 400, can also be applied to caching execution environment memory 440.

- Figure 4C illustrates an embodiment of stack management unit 150 modified to support both stack 400B and execution environment memory 440.

- the embodiment of stack management unit 150 in Figure 4C adds an execution environment stack cache 450, an execution environment dribble manager unit 460, and an execution environment stack control unit 470.

- execution environment dribble manager unit 460 transfers an entire execution environment between execution environment cache 450 and execution environment memory 440 during a spill operation or a fill operation.

- I/O bus and memory interface unit 110 implements an interface between hardware processor 100 and a memory hierarchy which in an exemplary embodiment includes external memory and may optionally include memory storage and/or interfaces on the same die as hardware processor 100.

- I/O controller 111 interfaces with external I/O devices and memory controller 112 interfaces with external memory.

- external memory means memory external to hardware processor 100.

- external memory either may be included on the same die as hardware processor 100, may be external to the die containing hardware processor 100, or may include both on- and off-die portions.

- requests to I/O devices go through memory controller 112, which maintains an address map of the entire system including hardware processor 100.

- hardware processor 100 is the only master and does not have to arbitrate to use the memory bus.

- alternatives for the input/output bus that interfaces with I/O bus and memory interface unit 110 include supporting memory-mapped schemes, providing direct support for PCI, PCMCIA, or other standard busses.

- Fast graphics with VIS or other technology may optionally be included on the die with hardware processor 100.

- I/O bus and memory interface unit 110 generates read and write requests to external memory.

- interface unit 110 provides an interface for instruction cache and data cache controllers 121 and 161 to the external memory.

- Interface unit 110 includes arbitration logic for internal requests from instruction cache controller 121 and data cache controller 161 to access external memory and in response to a request initiates either a read or a write request on the memory bus to the external memory.

- a request from data cache controller 161 is always treated as higher priority relative to a request from instruction cache controller 121.

- Interface unit 110 provides an acknowledgment signal to the requesting instruction cache controller 121, or data cache controller 161 on read cycles so that the requesting controller can latch the data. On write cycles, the acknowledgment signal from interface unit 110 is used for flow control so that the requesting instruction cache controller 121 or data cache controller 161 does not generate a new request when there is one pending. Interface unit 110 also handles errors generated on the memory bus to the external memory.
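
The fixed priority between the two cache controllers can be written as a very small sketch. `arbitrate` is a hypothetical function; it captures only the stated rule that a data cache request wins over an instruction cache request, not the handshake or flow-control signals.

```python
from typing import Optional


def arbitrate(icache_request: bool, dcache_request: bool) -> Optional[str]:
    """Pick which controller is granted the external memory bus.

    A data cache controller request always has priority over an
    instruction cache controller request.
    """
    if dcache_request:
        return "dcache"
    if icache_request:
        return "icache"
    return None  # no request pending
```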

- Instruction cache unit (ICU) 120 fetches virtual machine instructions from instruction cache 125 and provides the instructions to instruction decode unit 130.

- Upon an instruction cache hit, instruction cache controller 121, in one cycle, transfers an instruction from instruction cache 125 to instruction buffer 124 where the instruction is held until integer execution unit IEU, that is described more completely below, is ready to process the instruction. This separates the rest of pipeline 300 ( Fig. 3 ) in hardware processor 100 from fetch stage 301. If it is undesirable to incur the complexity of supporting an instruction-buffer type of arrangement, a temporary one instruction register is sufficient for most purposes. However, instruction fetching, caching, and buffering should provide sufficient instruction bandwidth to support instruction folding as described below.

- the front end of hardware processor 100 is largely separate from the rest of hardware processor 100. Ideally, one instruction per cycle is delivered to the execution pipeline.

- the instructions are aligned on an arbitrary eight-bit boundary by byte aligner circuit 122 in response to a signal from instruction decode unit 130.

- the front end of hardware processor 100 efficiently deals with fetching from any byte position.

- hardware processor 100 deals with the problems of instructions that span multiple cache lines of cache 125. In this case, since the opcode is always the first byte, the design is able to tolerate an extra cycle of fetch latency for the operands. Thus, a very simple de-coupling between the fetching and execution of the bytecodes is possible.

- In case of an instruction cache miss, instruction cache controller 121 generates an external memory request for the missed instruction to I/O bus and memory interface unit 110. If instruction buffer 124 is empty, or nearly empty, when there is an instruction cache miss, instruction decode unit 130 is stalled, i.e., pipeline 300 is stalled. Specifically, instruction cache controller 121 generates a stall signal upon a cache miss which is used along with an instruction buffer empty signal to determine whether to stall pipeline 300. Instruction cache 125 can be invalidated to accommodate self-modifying code, e.g., instruction cache controller 121 can invalidate a particular line in instruction cache 125.
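
The stall decision combines two signals, which a minimal sketch can make explicit. The function name and the `nearly_empty_threshold` parameter are hypothetical; the text does not say how many valid bytes count as "nearly empty".

```python
def should_stall_pipeline(
    icache_miss: bool,
    valid_bytes_in_buffer: int,
    nearly_empty_threshold: int = 1,  # assumed value, not given in the text
) -> bool:
    """Stall only when a cache miss coincides with an (almost) empty buffer."""
    buffer_empty = valid_bytes_in_buffer <= nearly_empty_threshold
    return icache_miss and buffer_empty
```

A miss alone does not stall the pipeline as long as the instruction buffer still holds enough bytes to keep the decode stage busy.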

- instruction cache controller 121 determines the next instruction to be fetched, i.e., which instruction in instruction cache 125 needs to be accessed, and generates address, data and control signals for data and tag RAMs in instruction cache 125. On a cache hit, four bytes of data are fetched from instruction cache 125 in a single cycle, and a maximum of four bytes can be written into instruction buffer 124.

- Byte aligner circuit 122 aligns the data out of the instruction cache RAM and feeds the aligned data to instruction buffer 124. As explained more completely below, the first two bytes in instruction buffer 124 are decoded to determine the length of the virtual machine instruction. Instruction buffer 124 tracks the valid instructions in the queue and updates the entries, as explained more completely below.

- Instruction cache controller 121 also provides the data path and control for handling instruction cache misses. On an instruction cache miss, instruction cache controller 121 generates a cache fill request to I/O bus and memory interface unit 110.

- On receiving data from external memory, instruction cache controller 121 writes the data into instruction cache 125 and the data are also bypassed into instruction buffer 124. Data are bypassed to instruction buffer 124 as soon as the data are available from external memory, and before the completion of the cache fill.

- Instruction cache controller 121 continues fetching sequential data until instruction buffer 124 is full or a branch or trap has taken place.

- instruction buffer 124 is considered full if there are more than eight bytes of valid entries in buffer 124.

- eight bytes of data are written into instruction cache 125 from external memory in response to the cache fill request sent to interface unit 110 by instruction cache unit 120. If there is a branch or trap taken while processing an instruction cache miss, only after the completion of the miss processing is the trap or branch executed.

- a fault indication is generated and stored into instruction buffer 124 along with the virtual machine instruction, i.e., a fault bit is set.

- the line is not written into instruction cache 125.

- the erroneous cache fill transaction acts like a non-cacheable transaction except that a fault bit is set.

- Instruction cache controller 121 also services non-cacheable instruction reads.

- An instruction cache enable (ICE) bit in a processor status register in register set 144, is used to define whether a load can be cached. If the instruction cache enable bit is cleared, instruction cache unit 120 treats all loads as non-cacheable loads.

- Instruction cache controller 121 issues a non-cacheable request to interface unit 110 for non-cacheable instructions. When the data are available on a cache fill bus for the non-cacheable instruction, the data are bypassed into instruction buffer 124 and are not written into instruction cache 125.

- instruction cache 125 is a direct-mapped, eight-byte line size cache. Instruction cache 125 has a single cycle latency.

- the cache size is configurable to 0K, 1K, 2K, 4K, 8K and 16K byte sizes where K means kilo.

- the default size is 4K bytes.

- Each line has a cache tag entry associated with the line. Each cache tag contains a twenty bit address tag field and one valid bit for the default 4K byte size.
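
The twenty bit tag can be derived from the cache geometry, as the following sketch shows. It assumes 32-bit addresses (the passage states only that registers are 32 bits wide) and power-of-two sizes; the function names are hypothetical.

```python
def cache_geometry(cache_bytes: int = 4096, line_bytes: int = 8, address_bits: int = 32):
    """Return (lines, offset_bits, index_bits, tag_bits) for a direct-mapped cache."""
    lines = cache_bytes // line_bytes
    offset_bits = line_bytes.bit_length() - 1  # log2 of a power of two
    index_bits = lines.bit_length() - 1
    tag_bits = address_bits - index_bits - offset_bits
    return lines, offset_bits, index_bits, tag_bits


def split_address(addr: int, cache_bytes: int = 4096, line_bytes: int = 8):
    """Split an address into (tag, line index, byte offset within the line)."""
    _, offset_bits, index_bits, _ = cache_geometry(cache_bytes, line_bytes)
    offset = addr & ((1 << offset_bits) - 1)
    index = (addr >> offset_bits) & ((1 << index_bits) - 1)
    tag = addr >> (offset_bits + index_bits)
    return tag, index, offset
```

Under these assumptions the default 4K byte configuration has 512 lines, 3 offset bits, 9 index bits, and 20 tag bits, which agrees with the tag width stated above.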

- Instruction buffer 124 which, in an exemplary embodiment, is a twelve-byte deep first-in, first-out (FIFO) buffer, de-links fetch stage 301 ( Fig. 3 ) from the rest of pipeline 300 for performance reasons.

- Each instruction in buffer 124 ( Fig. 1 ) has an associated valid bit and an error bit. When the valid bit is set, the instruction associated with that valid bit is a valid instruction. When the error bit is set, the fetch of the instruction associated with that error bit was an erroneous transaction.

- Instruction buffer 124 includes an instruction buffer control circuit (not shown) that generates signals to pass data to and from instruction buffer 124 and that keeps track of the valid entries in instruction buffer 124, i.e., those with valid bits set.

- In an exemplary embodiment, four bytes can be received into instruction buffer 124 in a given cycle. Up to five bytes, representing up to two virtual machine instructions, can be read out of instruction buffer 124 in a given cycle. Alternative embodiments, particularly those providing folding of multi-byte virtual machine instructions and/or those providing folding of more than two virtual machine instructions, provide higher input and output bandwidth. Persons of ordinary skill in the art will recognize a variety of suitable instruction buffer designs including, for example, alignment logic, circular buffer design, etc. When a branch or trap is taken, all the entries in instruction buffer 124 are nullified and the branch/trap data moves to the top of instruction buffer 124.
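
The buffer behavior described above can be modeled with a short sketch. The class is a hypothetical software analogue: it uses the twelve-byte depth, the four-byte write limit, the five-byte read limit, and the "more than eight valid bytes" full condition from the text, and omits the per-entry valid and error bits.

```python
from collections import deque


class InstructionBuffer:
    """Toy FIFO model of instruction buffer 124."""

    CAPACITY = 12   # bytes
    MAX_WRITE = 4   # bytes accepted per cycle
    MAX_READ = 5    # bytes delivered per cycle

    def __init__(self):
        self.fifo = deque()

    def write(self, data: bytes) -> int:
        """Accept up to MAX_WRITE bytes; return how many were taken."""
        room = self.CAPACITY - len(self.fifo)
        count = min(len(data), self.MAX_WRITE, room)
        self.fifo.extend(data[:count])
        return count

    def read(self, count: int) -> bytes:
        """Deliver up to MAX_READ bytes from the front of the queue."""
        count = min(count, self.MAX_READ, len(self.fifo))
        return bytes(self.fifo.popleft() for _ in range(count))

    def is_full(self) -> bool:
        """Full means more than eight bytes of valid entries."""
        return len(self.fifo) > 8

    def flush(self) -> None:
        """Nullify all entries, as on a taken branch or trap."""
        self.fifo.clear()
```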

- a unified execution unit 140 is shown.

- instruction decode unit 130, integer unit 142, and stack management unit 150 are considered a single integer execution unit.

- floating point execution unit 143 is a separate optional unit.

- the various elements in the execution unit may be implemented using the execution unit of another processor.

- the various elements included in the various units of Figure 1 are exemplary only of one embodiment. Each unit could be implemented with all or some of the elements shown. Again, the decision is largely dependent upon a price vs. performance trade-off.

- virtual machine instructions are decoded in decode stage 302 ( Fig. 3 ) of pipeline 300.

- two bytes that can correspond to two virtual machine instructions, are fetched from instruction buffer 124 ( Fig. 1 ).

- the two bytes are decoded in parallel to determine if the two bytes correspond to two virtual machine instructions, e.g., a first load top of stack instruction and a second add top two stack entries instruction, that can be folded into a single equivalent operation. Folding refers to supplying a single equivalent operation corresponding to two or more virtual machine instructions.

- a single-byte first instruction can be folded with a second instruction.

- alternative embodiments provide folding of more than two virtual machine instructions, e.g., two to four virtual machine instructions, and of multi-byte virtual machine instructions, though at the cost of instruction decoder complexity and increased instruction bandwidth.

- If the first byte, which corresponds to the first virtual machine instruction, is a multi-byte instruction, the first and second instructions are not folded.

- An optional current object loader folder 132 exploits instruction folding, such as that described above as well as in greater detail below, in virtual machine instruction sequences that simulation results have shown to be particularly frequent and therefore a desirable target for optimization.

- a method invocation typically loads an object reference for the corresponding object onto the operand stack and fetches a field from the object. Instruction folding allows this extremely common virtual machine instruction sequence to be executed using an equivalent folded operation.

- Quick variants are not part of the virtual machine instruction set (See Chapter 3 of Appendix I), and are invisible outside of a JAVA virtual machine implementation. However, inside a virtual machine implementation, quick variants have proven to be an effective optimization. (See Appendix A in Appendix I; which is an integral part of this specification.) Supporting writes for updates of various instructions to quick variants in a non-quick to quick translator cache 131 changes the normal virtual machine instruction to a quick virtual machine instruction to take advantage of the large benefits bought from the quick variants.

- When the information required to initiate execution of an instruction has been assembled for the first time, the information is stored in a cache along with the value of program counter PC as tag in non-quick to quick translator cache 131 and the instruction is identified as a quick-variant. In one embodiment, this is done with self-modifying code.

- Upon a subsequent call of that instruction, instruction decode unit 130 detects that the instruction is identified as a quick-variant and simply retrieves the information needed to initiate execution of the instruction from non-quick to quick translator cache 131.
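
The PC-tagged lookup can be sketched as a small memoizing cache. The class and the `resolve` callback are hypothetical; `resolve` stands in for whatever work assembles the information the first time, and the unbounded dictionary ignores capacity and replacement.

```python
class NonQuickToQuickCache:
    """Toy model of a translator cache tagged by program counter value."""

    def __init__(self):
        self.entries = {}  # PC value -> information needed to start execution

    def execute(self, pc: int, resolve):
        """Return (info, was_quick).

        On the first encounter the information is assembled via resolve()
        and stored with the PC as tag; later encounters at the same PC
        simply retrieve it.
        """
        if pc in self.entries:
            return self.entries[pc], True
        info = resolve()
        self.entries[pc] = info
        return info, False
```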

- Non-quick to quick translator cache is an optional feature of hardware processor 100.

- Implementations for branch predictor circuit 133 include branching based on opcode, branching based on offset, or branching based on a two-bit counter mechanism.
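
Of the listed options, the two-bit counter mechanism is the easiest to illustrate. The sketch below is the conventional saturating-counter scheme, offered as an assumption about what such a mechanism would look like; the initial state and the class name are illustrative.

```python
class TwoBitPredictor:
    """Two-bit saturating counter: 0-1 predict not taken, 2-3 predict taken."""

    def __init__(self, state: int = 1):
        if not 0 <= state <= 3:
            raise ValueError("state must be in 0..3")
        self.state = state

    def predict(self) -> bool:
        """Predict taken when the counter is in one of the two upper states."""
        return self.state >= 2

    def update(self, taken: bool) -> None:
        """Move one step toward the observed outcome, saturating at 0 and 3."""
        if taken:
            self.state = min(3, self.state + 1)
        else:
            self.state = max(0, self.state - 1)
```

Because two consecutive mispredictions are needed to flip the prediction from a saturated state, a single atypical outcome (such as a loop exit) does not disturb an otherwise stable prediction.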

- Operand stack 423 contains a reference to an object and some number of arguments when this instruction is executed.

- Index bytes one and two are used to generate an index into the constant pool of the current class.

- the item in the constant pool at that index points to a complete method signature and class. Signatures are defined in Appendix I and that description is incorporated herein by reference.

- the method signature, a short, unique identifier for each method, is looked up in a method table of the class indicated.

- the result of the lookup is a method block that indicates the type of method and the number of arguments for the method.

- the object reference and arguments are popped off this method's stack and become initial values of the local variables of the new method.

- the execution then resumes with the first instruction of the new method.

- instructions invokevirtual, opcode 182, and invokestatic, opcode 184, invoke processes similar to that just described. In each case, a pointer is used to look up a method block.

- a method argument cache 134 that also is an optional feature of hardware processor 100, is used, in a first embodiment, to store the method block of a method for use, after the first call to the method, along with the pointer to the method block as a tag.

- Instruction decode unit 130 uses index bytes one and two to generate the pointer and then uses the pointer to retrieve the method block for that pointer in cache 134. This permits building the stack frame for the newly invoked method more rapidly in the background in subsequent invocations of the method.

- Alternative embodiments may use a program counter or method identifier as a reference into cache 134. If there is a cache miss, the instruction is executed in the normal fashion and cache 134 is updated accordingly.

- the particular process used to determine which cache entry is overwritten is not an essential aspect of this invention. A least-recently used criterion could be implemented, for example.

- method argument cache 134 is used to store the pointer to the method block, for use after the first call to the method, along with the value of program counter PC of the method as a tag.

- Instruction decode unit 130 uses the value of program counter PC to access cache 134. If the value of program counter PC is equal to one of the tags in cache 134, cache 134 supplies the pointer stored with that tag to instruction decode unit 130. Instruction decode unit 130 uses the supplied pointer to retrieve the method block for the method.
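
The PC-tagged variant of the method argument cache can be sketched with a least-recently used replacement policy, which the text mentions only as one possible criterion. The class name, the capacity, and the use of integers for pointers are assumptions.

```python
from collections import OrderedDict


class MethodArgumentCache:
    """Toy model: PC value as tag, pointer to the method block as data."""

    def __init__(self, capacity: int = 4):
        self.capacity = capacity
        self.entries = OrderedDict()  # ordered from least to most recently used

    def lookup(self, pc: int):
        """Return the stored pointer on a hit, or None on a miss."""
        if pc not in self.entries:
            return None
        self.entries.move_to_end(pc)  # mark as most recently used
        return self.entries[pc]

    def insert(self, pc: int, method_block_pointer: int) -> None:
        """Store a pointer, evicting the least recently used entry if full."""
        self.entries[pc] = method_block_pointer
        self.entries.move_to_end(pc)
        if len(self.entries) > self.capacity:
            self.entries.popitem(last=False)
```

On a miss the caller would execute the invoke instruction in the normal fashion and then call `insert` so that the next invocation from the same PC hits.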

- Wide index forwarder 136, which is an optional element of hardware processor 100, is a specific embodiment of instruction folding for instruction wide. Wide index forwarder 136 handles an opcode encoding an extension of an index operand for an immediately subsequent virtual machine instruction. In this way, wide index forwarder 136 allows instruction decode unit 130 to provide indices into local variable storage 421 when the number of local variables exceeds that addressable with a single byte index without incurring a separate execution cycle for instruction wide.
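
The effect of forwarding a wide extension can be sketched as simple index arithmetic. The function is hypothetical, and the assumption that the extension byte supplies the upper eight bits of a 16-bit index is an illustration only; the exact encoding is defined by the virtual machine specification referenced as Appendix I.

```python
from typing import Optional


def effective_index(index_byte: int, wide_extension_byte: Optional[int] = None) -> int:
    """Compute the local variable index seen by the following instruction.

    Without a preceding wide prefix the index is the single operand byte.
    With a prefix, the extension byte is assumed to form the upper eight
    bits, and the two are combined in the same decode step.
    """
    if wide_extension_byte is None:
        return index_byte & 0xFF
    return ((wide_extension_byte & 0xFF) << 8) | (index_byte & 0xFF)
```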

- instruction decoder 135, particularly instruction folding, non-quick to quick translator cache 131, current object loader folder 132, branch predictor 133, method argument cache 134, and wide index forwarder 136 are also useful in implementations that utilize a software interpreter or just-in-time compiler, since these elements can be used to accelerate the operation of the software interpreter or just-in-time compiler.

- the virtual machine instructions are translated to an instruction for the processor executing the interpreter or compiler, e.g., any one of a Sun processor, a DEC processor, an Intel processor, or a Motorola processor, for example, and the operation of the elements is modified to support execution on that processor.

- the translation from the virtual machine instruction to the other processor instruction can be done either with a translator in a ROM or a simple software translator.

- processor 100 implements instruction folding to enhance the performance of processor 100.

- instruction folding in accordance with the present invention can be used in any stack-based virtual machine implementation, including, e.g., a hardware processor implementation, a software interpreter implementation, a just-in-time compiler implementation, etc.

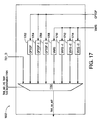

- Figure 7 illustrates folded execution of first and second stack instructions, according to the principles of this invention.

- a first operand for an addition instruction resides in top-of-stack (TOS) entry 711a of stack 710.

- a second operand resides in entry 712 of stack 710. Notice that entry 712 is not physically adjacent to top-of stack entry 711a and in fact, is in the interior of stack 710.

- An instruction stream includes a load top-of-stack instruction for pushing the second operand onto the top of stack (see description of instruction iload in Appendix I) and an addition instruction for operating on the first and second operands residing in the top two entries of stack 710 (see description of instruction iadd in Appendix I).

- the load top-of-stack and addition instructions are folded into a single operation whereby the explicit sequential execution of the load top-of-stack instruction and the associated execution cycle are eliminated. Instead, a folded operation corresponding to the addition instruction operates on the first and second operands, which reside in TOS entry 711a and entry 712 of stack 710. The result of the folded operation is pushed onto stack 710 at TOS entry 711b .

- folding according to the principles of this invention enhances performance compared to an unfolded method for executing the same sequence of instructions.

- a first operand for an addition instruction resides in top-of-stack (TOS) entry 611a of stack 610 (see Figure 6 ).

- a second operand resides in entry 612 of stack 610.

- a load to top-of-stack instruction pushes the second operand onto the top of stack 610 and typically requires an execution cycle. The push results in the second and first operands residing in TOS entry 611b and (TOS-1) entry 613, respectively.

- the addition instruction operates, in another execution cycle, on the first and second operands which properly reside in the top two entries, i.e., TOS entry 611b and (TOS-1) entry 613, of stack 610 in accordance with the semantics of a stack architecture.

- the result of the addition instruction is pushed onto stack 610 at TOS entry 611c and after the addition instruction is completed, it is as if the first and second operand data were never pushed onto stack 610.

- folding reduces the execution cycles required to complete the addition and so enhances the speed of execution of the instruction stream. More complex folding, e.g., folding including store instructions and folding including larger numbers of instructions, is described in greater detail below.

- instruction decoder unit 130 examines instructions in a stream of instructions. Instruction decoder unit 130 folds first and second adjacent instructions together and provides a single equivalent operation for execution by execution unit 140 when instruction decoder unit 130 detects that the first and second instructions have neither structural nor resource dependencies and the second instruction operates on data provided by the first instruction. Execution of the single operation obtains the same result as execution of an operation corresponding to the first instruction followed by execution of an operation corresponding to the second instruction, except that an execution cycle has been eliminated.
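
The cycle saving from folding a load with an addition can be demonstrated with a toy interpreter. Everything here is an illustrative assumption: instructions are tuples, each operation costs one cycle, the folded operation is given the made-up name `iadd_local`, and 32-bit overflow is ignored. It is a sketch of the idea, not the decoder's actual folding logic.

```python
def fold(program: list) -> list:
    """Fold each adjacent (iload n, iadd) pair into one equivalent operation."""
    folded = []
    i = 0
    while i < len(program):
        current = program[i]
        following = program[i + 1] if i + 1 < len(program) else None
        if current[0] == "iload" and following is not None and following[0] == "iadd":
            folded.append(("iadd_local", current[1]))  # hypothetical folded op
            i += 2
        else:
            folded.append(current)
            i += 1
    return folded


def execute(operations: list, local_vars: list, stack: list) -> int:
    """Run the operations and return the number of cycles (one per operation)."""
    cycles = 0
    for op, *args in operations:
        if op == "iload":
            stack.append(local_vars[args[0]])
        elif op == "iadd":
            second = stack.pop()
            first = stack.pop()
            stack.append(first + second)
        elif op == "iadd_local":
            # The operand is read directly from its storage location
            # instead of first being copied to the top of the stack.
            stack.append(stack.pop() + local_vars[args[0]])
        else:
            raise ValueError(f"unknown operation {op!r}")
        cycles += 1
    return cycles
```

For the program `iload 0; iload 1; iadd`, the unfolded run takes three cycles and the folded run takes two, with the same value left on the stack.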

- the JAVA virtual machine is stack-oriented and specifies an instruction set, a register set, an operand stack, and an execution environment.

- although the present invention is described in relation to the JAVA Virtual Machine, those of skill in the art will appreciate that the invention is not limited to embodiments implementing or related to the JAVA virtual machine and, instead, encompasses systems, articles, methods, and apparatus for a wide variety of stack machine environments, both virtual and physical.

- each method has storage allocated for an operand stack and a set of local variables.

- a series of method frames, e.g., method frame 801 and method frame 802 on stack 803, each include an operand stack instance, a local variable storage instance, and a frame state information instance for respective methods invoked along the execution path of a JAVA program.

- a new frame is created and becomes current each time a method is invoked and is destroyed after the method completes execution.

- a frame ceases to be current if its method invokes another method.

- the current frame passes back the result of its method invocation, if any, to the previous frame via stack 803.
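The frame lifecycle described above can be sketched as follows. This is an illustrative model only: the class names `Frame` and `FrameStack` are hypothetical, and returning a result by pushing it onto the previous frame's operand stack is an assumption about how the result is passed back via stack 803.

```python
class Frame:
    """Hypothetical method frame: operand stack, locals, frame state."""

    def __init__(self, method_name, num_locals):
        self.method_name = method_name      # stands in for frame state info
        self.operand_stack = []             # operand stack instance
        self.local_vars = [0] * num_locals  # local variable storage instance


class FrameStack:
    """Hypothetical stand-in for the series of frames on stack 803."""

    def __init__(self):
        self.frames = []

    @property
    def current(self):
        return self.frames[-1] if self.frames else None

    def invoke(self, method_name, num_locals):
        # A new frame is created and becomes current on each invocation;
        # the caller's frame stays on the stack but is no longer current.
        frame = Frame(method_name, num_locals)
        self.frames.append(frame)
        return frame

    def complete(self, result=None):
        # The frame is destroyed when its method completes; the result,
        # if any, is handed to the previous frame (assumed: pushed onto
        # that frame's operand stack).
        self.frames.pop()
        if result is not None and self.frames:
            self.frames[-1].operand_stack.append(result)
```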

- Folding in accordance with the present invention is not dependent upon a particular process used to allocate or define memory space for a method, such as a frame, and can, in general, be used in any stack based architecture.

- This series of method frames may be implemented in any of a variety of suitable memory hierarchies, including, for example, register/cache/memory hierarchies.

- an operand stack instance 812 (Figure 8) is implemented in randomly-accessible storage 810, i.e., at least some of the entries in operand stack instance 812 can be accessed from locations other than the topmost locations of operand stack instance 812, in contrast with a conventional stack implementation in which only the top entry or topmost entries of the stack can be accessed.

- register OPTOP stores a pointer that identifies the top of operand stack instance 812 associated with the current method. The value stored in register OPTOP is maintained to identify the top entry of an operand stack instance corresponding to the current method.

- local variables for the current method are represented in randomly-accessible storage 810.

- a pointer stored in register VARS identifies the starting address of local variable storage instance 813 associated with the current method.

- the value in register VARS is maintained to identify a base address of the local variable storage instance corresponding to the current method.

- Entries in operand stack instance 812 and local variable storage instance 813 are referenced by indexing off of values represented in registers OPTOP and VARS, respectively, that in the embodiment of Figure 1 are included in register set 144, and in the embodiment of Figure 8 are included in pointer registers 822.

- Pointer registers 822 may be represented in physical registers of a processor implementing the JAVA Virtual Machine, or optionally, in randomly-accessible storage 810.

- commonly used offsets OPTOP-1, OPTOP-2, VARS+1, VARS+2, and VARS+3 are derived from the values in registers OPTOP and VARS, respectively.

- the additional offsets could be stored in registers of pointer registers 822.

- Operand stack instance 812 and local variable storage instance 813 associated with the current method are preferably represented in a flat 64-entry cache, e.g., stack cache 155 (see Figure 1), whose contents are kept updated so that a working set of operand stack and local variable storage entries is cached.

- the current frame including operand stack instance 812 and local variable storage instance 813 may be fully or partially represented in the cache.

- Operand stack and local variable storage entries for frames other than the current frame may also be represented in the cache if space allows.

- a cache suitable for use with the folding of this invention may have various representations, including separate and/or uncached operand stack and local variable storage areas.

- a constant area 814 is provided in the address space of a processor implementing the JAVA virtual machine for commonly-used constants, e.g., constants specified by JAVA virtual machine instructions such as instruction iconst.

- an operand source is represented as an index into constant area 814.

- constant area 814 is represented in randomly-accessible storage 810.

- entries of constant area 814 could also be cached, e.g., in stack cache 155.

- operand stack instance 812 and local variable storage instance 813 are referred to simply as operand stack 812 and local variable storage 813.

- operand stack 812 and local variable storage 813 refer to any instances of an operand stack and variable storage associated with the current method, including representations which maintain separate instances for each method and representations which combine instances into a composite representation.

- Operand sources and result targets for JAVA Virtual Machine instructions typically identify entries of operand stack instance 812 or local variable storage instance 813, i.e., identify entries of the operand stack and local variable storage for the current method.

- representative JAVA virtual machine instructions are described in Chapter 3 of The JAVA Virtual Machine Specification which is included at Appendix I.

- JAVA virtual machine instructions rarely explicitly designate both the source of the operand, or operands, and the result destination. Instead, either the source or the destination is implicitly the top of operand stack 812. Some JAVA bytecodes explicitly designate neither a source nor a destination. For example, instruction iconst_0 pushes a constant integer zero onto operand stack 812. The constant zero is implicit in the instruction, although the instruction may actually be implemented by a particular JAVA virtual machine implementation using a representation of the value zero from a pool of constants, such as constant area 814, as the source for the zero operand. An instruction decoder for a JAVA virtual machine implementation that implements instruction iconst_0 in this way could generate, as the source address, the index of the entry in constant area 814 where the constant zero is represented.
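One way to picture the constant-area approach described above is the following minimal sketch. The contents and ordering of `CONSTANT_AREA` are assumptions made for illustration (the patent does not specify the layout of constant area 814), and the helper names are hypothetical.

```python
# Assumed contents of constant area 814: the integer constants that the
# iconst_m1 .. iconst_5 instructions can push.
CONSTANT_AREA = [-1, 0, 1, 2, 3, 4, 5]
CONST_INDEX = {value: index for index, value in enumerate(CONSTANT_AREA)}


def decode_iconst(mnemonic):
    """Return the constant-area index the decoder emits as source address."""
    suffix = mnemonic[len("iconst_"):]
    value = -1 if suffix == "m1" else int(suffix)
    return CONST_INDEX[value]


def execute_push_constant(operand_stack, source_index):
    """Push the constant read from the given constant-area entry."""
    operand_stack.append(CONSTANT_AREA[source_index])
```

Under this layout, the constant is implicit in the instruction, but the decoded operation carries an explicit source index into the constant area.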

- the JAVA virtual machine integer add instruction, iadd, generates the sum of first and second integer operands, referred to as operand1 and operand2, respectively, that are at the top two locations of operand stack 812.

- the top two locations are identified, at the time of instruction iadd execution, by pointer OPTOP in register OPTOP and by pointer OPTOP-1.

- the result of the execution of instruction iadd, i.e., the sum of first and second integer operands, is pushed onto operand stack 812.

- Figure 9A shows the state of operand stack 812 and local variable storage 813 that includes first and second values, referred to as value1 and value2, before execution of a pair of JAVA virtual machine integer load instructions iload.

- pointer OPTOP has the value AAC0h.

- Figure 9B shows operand stack 812 after execution of the pair of instructions iload that load integer values from local variable storage 813 onto operand stack 812, pushing (i.e., copying) values value1 and value2 from locations identified by pointer VARS in register VARS and by pointer VARS+2 onto operand stack 812 as operand1 at location AAC4h and operand2 at location AAC8h, and updating pointer OPTOP in the process to value AAC8h.

- Figure 9C shows operand stack 812 after instruction iadd has been executed.

- Execution of instruction iadd pops operands operand1 and operand2 off operand stack 812, calculates the sum of operands operand1 and operand2, and pushes that sum onto operand stack 812 at location AAC4h.

- pointer OPTOP has the value AAC4h and points to the operand stack 812 entry storing the sum.

- Figure 9D shows operand stack 812 after an instruction istore has been executed. Execution of instruction istore pops the sum off operand stack 812 and stores the sum in the local variable storage 813 entry at the location identified by pointer VARS+2.
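The unfolded sequence of Figures 9A-D can be traced with a small simulation. This is a sketch under stated assumptions: entries are taken to be 4 bytes wide and the stack to grow toward higher addresses (both inferred from the AAC0h, AAC4h, AAC8h addresses in the text), and the class name `Machine` and the base address used for VARS in any example are hypothetical.

```python
ENTRY = 4  # bytes per entry, inferred from the AAC0h/AAC4h/AAC8h addresses


class Machine:
    """Hypothetical model of randomly-accessible storage plus OPTOP/VARS."""

    def __init__(self, optop, vars_base):
        self.mem = {}           # address -> value
        self.optop = optop      # pointer held in register OPTOP
        self.vars = vars_base   # pointer held in register VARS

    def var_addr(self, n):
        return self.vars + n * ENTRY   # address designated VARS+n

    def push(self, value):
        self.optop += ENTRY
        self.mem[self.optop] = value

    def pop(self):
        value = self.mem.pop(self.optop)
        self.optop -= ENTRY
        return value

    def iload(self, n):
        # Copy local variable n onto the top of the operand stack.
        self.push(self.mem[self.var_addr(n)])

    def iadd(self):
        # Pop operand2 and operand1, push their sum.
        operand2 = self.pop()
        operand1 = self.pop()
        self.push(operand1 + operand2)

    def istore(self, n):
        # Pop the top of the operand stack into local variable n.
        self.mem[self.var_addr(n)] = self.pop()
```

Starting from OPTOP = AAC0h with value1 at VARS and value2 at VARS+2, the calls `iload(0)`, `iload(2)`, `iadd()`, `istore(2)` reproduce the four states of the figures, with each instruction modeled as a separate step.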

- The folding example of Figures 10A-C is analogous to that illustrated with reference to Figures 9A-D, though with only load folding illustrated.

- Execution of JAVA virtual machine length of array instruction arraylength determines the length of an array whose object reference pointer objectref is at the top of operand stack 812, and pushes the length onto operand stack 812.

- Figure 10A shows the state of operand stack 812 and local variable storage 813 before execution of JAVA virtual machine reference load instruction aload that is used to load an object reference from local variable storage 813 onto the top of operand stack 812.

- pointer OPTOP has the value AAC0h.

- Figure 10B shows operand stack 812 after execution of instruction aload pushes, i.e., copies, object reference pointer objectref onto the top of operand stack 812 and updates pointer OPTOP to AAC4h in the process.

- Figure 10C shows operand stack 812 after instruction arraylength has been executed.

- Execution of instruction arraylength pops object reference pointer objectref off operand stack 812, calculates the length of the array referenced thereby, and pushes that length onto operand stack 812.

- Suitable implementations of the instruction arraylength may supply object reference pointer objectref to an execution unit, e.g., execution unit 140, which subsequently overwrites the object reference pointer objectref with the value length.

- pointer OPTOP has the value AAC4h and points to the operand stack 812 entry storing the value length.
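The aload and arraylength steps of Figures 10A-C can be sketched in the same style. The sketch assumes 4-byte entries and an upward-growing stack (inferred from the AAC0h and AAC4h addresses), models the array store as a dictionary named `heap` keyed by object reference, and uses the in-place overwrite variant mentioned above; all function and parameter names are hypothetical.

```python
ENTRY = 4  # bytes per entry, inferred from the AAC0h/AAC4h addresses


def aload(mem, optop, var_addr):
    """Copy the object reference at var_addr onto the top of the stack.

    Returns the updated OPTOP value.
    """
    optop += ENTRY
    mem[optop] = mem[var_addr]
    return optop


def arraylength_in_place(mem, optop, heap):
    """Overwrite objectref at the top of the stack with the array length.

    Popping objectref and pushing the length leaves OPTOP unchanged, so
    the entry is simply rewritten. Returns OPTOP.
    """
    objectref = mem[optop]
    mem[optop] = len(heap[objectref])
    return optop
```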

- Figure 11 illustrates a processor 1100 wherein loads, such as those illustrated in Figures 9A and 9B and in Figures 10A and 10B, are folded into execution of subsequent instructions, e.g., into execution of subsequent instruction iadd, or instruction arraylength.

- intermediate execution cycles associated with loading operands operand1 and operand2 for instruction iadd, or with loading pointer objectref for instruction arraylength, onto the top of operand stack 812 can be eliminated.

- single cycle execution of groups of JAVA virtual machine instructions, e.g., the group of instructions iload, iload, iadd, and istore, or the group of instructions aload and arraylength, is provided by processor 1100.

- processor 1100 is presented in Figure 1 as hardware processor 100.

- hardware processor 1100 includes other embodiments that do not include the various optimizations of hardware processor 100.

- the folding processes described below could be implemented in a software interpreter or included within a just-in-time compiler.

- stores such as that illustrated in Figure 9D are folded into execution of prior instructions, e.g., in Figure 9D, into execution of the immediately prior instruction iadd.

- Instruction decoder 1118 retrieves fetched instructions from instruction buffer 1116 and depending upon the nature of instructions in the fetched instruction sequence, supplies execution unit 1120 with decoded operation and operand addressing information implementing the instruction sequence as a single folded operation. Unlike instructions of the JAVA virtual machine instruction set to which the instruction sequence from instruction buffer 1116 conforms, decoded operations supplied to execution unit 1120 by instruction decoder 1118 operate on operand values represented in entries of local variable storage 813, operand stack 812, and constant area 814.

- valid operand sources include local variable storage 813 entries identified by pointers VARS, VARS+1, VARS+2, and VARS+3, as well as operand stack 812 entries identified by pointers OPTOP, OPTOP-1, and OPTOP-2.

- valid result targets include local variable storage 813 entries identified by pointers VARS, VARS+1, VARS+2, and VARS+3.

- Embodiments in accordance with Figure 11 may also provide for constant area 814 entries as valid operand sources as well as other locations in operand stack 812 and local variable storage 813.
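The decoder behavior and the operand-source limits described above can be sketched as follows. This is an illustrative model, not the patent's decoder logic: it recognizes only the iload, iload, iadd (optionally istore) group, represents instructions as `(mnemonic, index)` tuples, and uses the hypothetical names `FoldedOp`, `fold`, and `execute`. A `dest` of `None` is an assumed encoding meaning that the result is pushed onto the operand stack.

```python
from collections import namedtuple

FoldedOp = namedtuple("FoldedOp", "operation src1 src2 dest consumed")

VALID_VARS = range(4)  # entries VARS, VARS+1, VARS+2, VARS+3


def fold(instrs):
    """Fold the head of instrs into one operation, or return None."""
    names = [name for name, _ in instrs[:4]]
    if names[:3] != ["iload", "iload", "iadd"]:
        return None
    i, j = instrs[0][1], instrs[1][1]
    if i not in VALID_VARS or j not in VALID_VARS:
        return None  # source is outside the directly addressable entries
    dest, consumed = None, 3
    if names[3:4] == ["istore"] and instrs[3][1] in VALID_VARS:
        # The trailing store is folded too: the result goes straight to
        # local variable storage.
        dest, consumed = ("VARS", instrs[3][1]), 4
    return FoldedOp("add", ("VARS", i), ("VARS", j), dest, consumed)


def execute(op, local_vars, operand_stack):
    """Run a folded add by reading its operands where they already reside."""
    result = local_vars[op.src1[1]] + local_vars[op.src2[1]]
    if op.dest is None:
        operand_stack.append(result)
    else:
        local_vars[op.dest[1]] = result
```

With the store folded in, the operand stack is never touched: the decoded operation reads two local variable entries and writes a third.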

- instruction buffer 1116 is organized as a shift register for JAVA bytecodes.

- One or more bytecodes are decoded by instruction decoder 1118 during each cycle and operations are supplied to execution unit 1120 in the form of a decoded operation on instruction decode bus instr_dec and associated operand source and result destination addressing information on instruction address bus instr_addr.
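A shift-register style instruction buffer of the kind described above might be modeled as in the sketch below. The class name `InstructionBuffer`, its `capacity` parameter, and the refill policy are assumptions for illustration; the text does not state the size or refill behavior of instruction buffer 1116.

```python
from collections import deque


class InstructionBuffer:
    """Hypothetical shift-register model of a bytecode buffer."""

    def __init__(self, stream, capacity=8):
        self.stream = iter(stream)   # source of fetched bytecodes
        self.slots = deque()
        self.capacity = capacity     # assumed buffer size
        self._refill()

    def _refill(self):
        # Top up the buffer from the fetch stream until it is full or
        # the stream is exhausted.
        while len(self.slots) < self.capacity:
            try:
                self.slots.append(next(self.stream))
            except StopIteration:
                break

    def peek(self, n):
        """Return up to n bytecodes at the head for the decoder to examine."""
        return list(self.slots)[:n]

    def shift(self, n):
        """Shift out the n bytecodes consumed this cycle, then refill."""
        for _ in range(min(n, len(self.slots))):
            self.slots.popleft()
        self._refill()
```

In each modeled cycle the decoder would call `peek`, decide how many bytecodes it can decode or fold, and then call `shift` with that count.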