WO2011129544A2 - Sensory effect processing system and method - Google Patents

Sensory effect processing system and method

- Publication number

- WO2011129544A2 (application PCT/KR2011/002409)

- Authority

- WO

- WIPO (PCT)

- Prior art keywords

- sensory

- metadata

- information

- type

- shows

Classifications

-

- G—PHYSICS

- G06—COMPUTING; CALCULATING OR COUNTING

- G06F—ELECTRIC DIGITAL DATA PROCESSING

- G06F16/00—Information retrieval; Database structures therefor; File system structures therefor

- G06F16/10—File systems; File servers

- G06F16/16—File or folder operations, e.g. details of user interfaces specifically adapted to file systems

- G06F16/164—File meta data generation

-

- H—ELECTRICITY

- H04—ELECTRIC COMMUNICATION TECHNIQUE

- H04N—PICTORIAL COMMUNICATION, e.g. TELEVISION

- H04N13/00—Stereoscopic video systems; Multi-view video systems; Details thereof

- H04N13/10—Processing, recording or transmission of stereoscopic or multi-view image signals

- H04N13/189—Recording image signals; Reproducing recorded image signals

-

- H—ELECTRICITY

- H04—ELECTRIC COMMUNICATION TECHNIQUE

- H04N—PICTORIAL COMMUNICATION, e.g. TELEVISION

- H04N21/00—Selective content distribution, e.g. interactive television or video on demand [VOD]

- H04N21/40—Client devices specifically adapted for the reception of or interaction with content, e.g. set-top-box [STB]; Operations thereof

- H04N21/41—Structure of client; Structure of client peripherals

- H04N21/4104—Peripherals receiving signals from specially adapted client devices

- H04N21/4126—The peripheral being portable, e.g. PDAs or mobile phones

-

- A—HUMAN NECESSITIES

- A63—SPORTS; GAMES; AMUSEMENTS

- A63F—CARD, BOARD, OR ROULETTE GAMES; INDOOR GAMES USING SMALL MOVING PLAYING BODIES; VIDEO GAMES; GAMES NOT OTHERWISE PROVIDED FOR

- A63F13/00—Video games, i.e. games using an electronically generated display having two or more dimensions

- A63F13/25—Output arrangements for video game devices

- A63F13/28—Output arrangements for video game devices responding to control signals received from the game device for affecting ambient conditions, e.g. for vibrating players' seats, activating scent dispensers or affecting temperature or light

-

- A—HUMAN NECESSITIES

- A63—SPORTS; GAMES; AMUSEMENTS

- A63F—CARD, BOARD, OR ROULETTE GAMES; INDOOR GAMES USING SMALL MOVING PLAYING BODIES; VIDEO GAMES; GAMES NOT OTHERWISE PROVIDED FOR

- A63F13/00—Video games, i.e. games using an electronically generated display having two or more dimensions

- A63F13/30—Interconnection arrangements between game servers and game devices; Interconnection arrangements between game devices; Interconnection arrangements between game servers

-

- A—HUMAN NECESSITIES

- A63—SPORTS; GAMES; AMUSEMENTS

- A63F—CARD, BOARD, OR ROULETTE GAMES; INDOOR GAMES USING SMALL MOVING PLAYING BODIES; VIDEO GAMES; GAMES NOT OTHERWISE PROVIDED FOR

- A63F13/00—Video games, i.e. games using an electronically generated display having two or more dimensions

- A63F13/45—Controlling the progress of the video game

-

- H—ELECTRICITY

- H04—ELECTRIC COMMUNICATION TECHNIQUE

- H04N—PICTORIAL COMMUNICATION, e.g. TELEVISION

- H04N13/00—Stereoscopic video systems; Multi-view video systems; Details thereof

-

- H—ELECTRICITY

- H04—ELECTRIC COMMUNICATION TECHNIQUE

- H04N—PICTORIAL COMMUNICATION, e.g. TELEVISION

- H04N13/00—Stereoscopic video systems; Multi-view video systems; Details thereof

- H04N13/10—Processing, recording or transmission of stereoscopic or multi-view image signals

- H04N13/106—Processing image signals

- H04N13/172—Processing image signals image signals comprising non-image signal components, e.g. headers or format information

-

- H—ELECTRICITY

- H04—ELECTRIC COMMUNICATION TECHNIQUE

- H04N—PICTORIAL COMMUNICATION, e.g. TELEVISION

- H04N21/00—Selective content distribution, e.g. interactive television or video on demand [VOD]

- H04N21/40—Client devices specifically adapted for the reception of or interaction with content, e.g. set-top-box [STB]; Operations thereof

- H04N21/41—Structure of client; Structure of client peripherals

- H04N21/4104—Peripherals receiving signals from specially adapted client devices

- H04N21/4131—Peripherals receiving signals from specially adapted client devices home appliance, e.g. lighting, air conditioning system, metering devices

-

- H—ELECTRICITY

- H04—ELECTRIC COMMUNICATION TECHNIQUE

- H04N—PICTORIAL COMMUNICATION, e.g. TELEVISION

- H04N21/00—Selective content distribution, e.g. interactive television or video on demand [VOD]

- H04N21/40—Client devices specifically adapted for the reception of or interaction with content, e.g. set-top-box [STB]; Operations thereof

- H04N21/43—Processing of content or additional data, e.g. demultiplexing additional data from a digital video stream; Elementary client operations, e.g. monitoring of home network or synchronising decoder's clock; Client middleware

- H04N21/434—Disassembling of a multiplex stream, e.g. demultiplexing audio and video streams, extraction of additional data from a video stream; Remultiplexing of multiplex streams; Extraction or processing of SI; Disassembling of packetised elementary stream

- H04N21/4348—Demultiplexing of additional data and video streams

-

- H—ELECTRICITY

- H04—ELECTRIC COMMUNICATION TECHNIQUE

- H04N—PICTORIAL COMMUNICATION, e.g. TELEVISION

- H04N21/00—Selective content distribution, e.g. interactive television or video on demand [VOD]

- H04N21/80—Generation or processing of content or additional data by content creator independently of the distribution process; Content per se

- H04N21/81—Monomedia components thereof

- H04N21/8126—Monomedia components thereof involving additional data, e.g. news, sports, stocks, weather forecasts

- H04N21/8133—Monomedia components thereof involving additional data, e.g. news, sports, stocks, weather forecasts specifically related to the content, e.g. biography of the actors in a movie, detailed information about an article seen in a video program

Definitions

- Embodiments of the present invention relate to a sensory effect processing system and method, and more particularly, to a system and method for processing sensory effects included in content at high speed.

- A content reproducing apparatus not only displays content information to a user but also presents the content with various effects by using a separate actuator.

- For example, 4D theaters, which have recently been in the spotlight, provide not only movie images but also vibration effects, wind effects, and water-splashing effects. The user can therefore enjoy the content more realistically.

- A sensory media playback apparatus for reproducing content including sensory effect information may include an extractor configured to extract the sensory effect information from the content, an encoder configured to encode the extracted sensory effect information into sensory effect metadata, and a transmitter configured to transmit the sensory effect metadata to a sensory effect control device.

- A sensory media playback method of playing content including sensory effect information may include extracting the sensory effect information from the content, encoding the extracted sensory effect information into sensory effect metadata, and transmitting the sensory effect metadata to a sensory effect control device.

- Embodiments may generate the sensory effect included in the content in the real world by generating command information for controlling the sensory device based on the sensory effect information and the characteristic information of the sensory device.

- The embodiments may encode and transmit metadata in binary form, in XML form, or in XML form followed by binary form, thereby increasing data transmission speed and using low bandwidth.

- FIG. 1 is a view showing a sensory effect processing system according to an embodiment of the present invention.

- FIGS. 2 to 4 are views showing sensory effect processing systems according to embodiments of the present invention.

- FIG. 5 is a view showing the configuration of a sensory device according to an embodiment of the present invention.

- FIG. 6 is a view showing the configuration of a sensory effect control apparatus according to an embodiment of the present invention.

- FIG. 7 is a diagram illustrating a configuration of a sensory media playback device according to an embodiment of the present invention.

- FIG. 8 is a diagram illustrating a method of operating a sensory effect processing system according to an embodiment of the present invention.

- a sensory effect processing system 100 includes a sensory media playback device 110, a sensory effect control device 120, and a sensory device 130.

- the sensory media playback device 110 is a device for playing content including at least one sensory effect information.

- The sensory media playback device 110 may be a DVD player, a movie screen, ...

- the sensory effect information is information on a predetermined effect implemented in the real world in response to content played by the sensory media playback device 110.

- the sensory effect information may be information about an effect of causing the controller of the game machine to vibrate when an earthquake occurs in the virtual world played in the game machine. The sensory effect information will be described later in detail.

- The sensory media playback device 110 may extract sensory effect information from the content.

- the sensory media playback apparatus 110 may encode the extracted sensory effect information into sensory effect metadata (SEM). That is, the sensory media playback device 110 may generate sensory effect metadata by encoding sensory effect information.

- the sensory media playback apparatus 110 may transmit the generated sensory effect metadata to the sensory effect control apparatus 120.

- The sensory device 130 is a device that performs an effect event that corresponds to sensory effect information.

- the sensory device 130 may be an actuator that implements an effect event in the real world.

- the sensory device 130 may include a vibrating game controller, a seat in a 4D theater, virtual world playback goggles, and the like.

- the effect event may be an event implemented in the real world through the sensory device 130 in response to the sensory effect information.

- the effect event may be an event for operating the vibration unit of the game machine in response to the sensory effect information causing the controller of the game machine to vibrate.

- the sensory device 130 may encode characteristic information on characteristics of the sensory device 130 into sensory device capability metadata (SDCap Metadata). That is, the sensory device 130 may generate sensory device characteristic metadata by encoding the characteristic information.

- the characteristic information on the characteristic of the sensory device 130 will be described in detail later.

- the sensory device 130 may transmit the generated sensory device characteristic metadata to the sensory effect control device 120.

- The sensory device 130 may encode preference information on the user's sensory effect into user sensory preference metadata (USP Metadata). That is, the sensory device 130 may generate user sensory preference metadata by encoding preference information on the user's sensory effect.

- the preference information on the user's sensory effect may be information indicating the user's preference level for the individual sensory effect.

- the preference information according to an embodiment may be information on the level of the effect event performed by the sensory effect information. For example, in an effect event that causes the controller to vibrate, if the user does not want to vibrate, the preference information may be information that sets the level of the effect event for the vibration effect to zero. Preferred information on the user's sensory effect will be described in detail later.

- the sensory device 130 may receive preference information from a user. Also, the sensory device 130 may transmit the generated user sensory preference metadata to the sensory effect control device 120.

- the sensory effect control apparatus 120 may receive sensory effect metadata from the sensory media playback device 110, and receive sensory device characteristic metadata from the sensory device 130.

- the sensory effect control apparatus 120 may decode sensory effect metadata and sensory device characteristic metadata.

- the sensory effect control apparatus 120 may extract sensory effect information by decoding sensory effect metadata. Also, the sensory effect control apparatus 120 may extract characteristic information on the characteristic of the sensory device 130 by decoding the sensory device characteristic metadata.

- The sensory effect control device 120 may generate command information for controlling the sensory device 130 based on the decoded sensory effect metadata and the decoded sensory device characteristic metadata. Accordingly, the sensory effect control device 120 may generate command information for controlling the sensory device 130 to perform the effect event in accordance with the characteristics of the sensory device 130.

- the command information may be information for controlling the performance of the effect event by the sensory device 130.

- the command information may include sensory effect information.

- the sensory effect control apparatus 120 may receive sensory device characteristic metadata and user sensory preference metadata from the sensory device 130.

- the sensory effect control apparatus 120 may decode the user's sensory preference metadata to extract preference information for the user's sensory effect.

- the sensory effect control apparatus 120 may generate command information based on decoded sensory effect metadata, decoded sensory device characteristic metadata, and decoded user sensory preference metadata.

- the command information may include sensory effect information. Therefore, the sensory effect control apparatus 120 may generate command information for controlling the sensory apparatus 130 to perform the effect event while meeting the characteristics of the sensory apparatus 130 while reflecting the user's preference.
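The command generation described above can be sketched roughly as follows. This is a minimal illustration only, not the method defined by the patent: the class names, the `max_intensity` field, and the 0.0 to 1.0 `preference_level` scale are all assumptions introduced here. The sketch scales the requested effect by the user's preference and then limits it to what the device can render.

```python
from dataclasses import dataclass


@dataclass
class SensoryEffect:
    effect_type: str      # e.g. "vibration" (hypothetical label)
    intensity: float      # intensity requested by the content


@dataclass
class DeviceCapability:
    effect_type: str
    max_intensity: float  # strongest effect the device can render (assumed field)


def generate_command(effect, capability, preference_level=1.0):
    """Return the intensity the device should render, or None if unsupported.

    preference_level is a hypothetical 0.0-1.0 scale; 0.0 disables the effect.
    """
    if effect.effect_type != capability.effect_type:
        return None  # the device cannot perform this kind of effect event
    scaled = effect.intensity * preference_level
    # Never ask the device for more than its declared capability.
    return min(scaled, capability.max_intensity)


quake = SensoryEffect("vibration", intensity=80.0)
pad = DeviceCapability("vibration", max_intensity=50.0)
print(generate_command(quake, pad))       # limited by the device capability
print(generate_command(quake, pad, 0.0))  # user set the vibration level to zero
```

Setting the preference level to zero reproduces the case mentioned earlier in which a user who does not want vibration suppresses the effect event entirely.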

- The sensory effect control apparatus 120 may encode the generated command information into sensory device command metadata. That is, the sensory effect control apparatus 120 may generate sensory device command metadata by encoding the generated command information.

- the sensory effect control device 120 may transmit sensory device command metadata to the sensory device 130.

- The sensory device 130 may receive sensory device command metadata from the sensory effect control device 120 and decode the received sensory device command metadata.

- The sensory device 130 may extract sensory effect information by decoding the sensory device command metadata. In this case, the sensory device 130 may perform an effect event that corresponds to the sensory effect information.

- The sensory device 130 may extract command information by decoding the sensory device command metadata.

- The sensory device 130 may perform an effect event that corresponds to the sensory effect information based on the command information.

- FIGS. 2 to 4 are views showing sensory effect processing systems according to embodiments of the present invention.

- the sensory effect processing system 200 may include a sensory media playback device 210, a sensory effect control device 220, and a sensory device 230.

- The sensory media playback device 210 may include an XML (eXtensible Markup Language) encoder 211.

- the XML encoder 211 may generate sensory effect metadata by encoding sensory effect information in an XML form.

- The sensory media playback device 210 may transmit the sensory effect metadata encoded in the XML format to the sensory effect control device 220.

- the sensory effect control apparatus 220 may include an XML decoder 221.

- the XML decoder 221 may decode sensory effect metadata received from the sensory media playback device 210. According to an embodiment, the XML decoder 221 may extract sensory effect information by decoding sensory effect metadata.

- Sensory device 230 may include an XML encoder 231.

- the XML encoder 231 may generate sensory device characteristic metadata by encoding the characteristic information on the characteristic of the sensory device 230 in an XML form.

- the sensory device 230 may transmit sensory device characteristic metadata encoded in the XML form to the sensory effect control device 220.

- the XML encoder 231 may generate user sensory preference metadata by encoding preference information on the user's sensory effect in an XML form.

- the sensory device 230 may transmit the user sensory preference metadata encoded in the XML form to the sensory effect control device 220.

- the sensory effect control apparatus 220 may include an XML decoder 222.

- The XML decoder 222 may decode sensory device characteristic metadata received from the sensory device 230. According to an embodiment, the XML decoder 222 may decode the sensory device characteristic metadata to extract characteristic information about the characteristics of the sensory device 230. In addition, the XML decoder 222 may decode user sensory preference metadata received from the sensory device 230. According to an embodiment, the XML decoder 222 may extract user preference information about sensory effects by decoding the user sensory preference metadata.

- the sensory effect control apparatus 220 may include an XML encoder 223.

- the XML encoder 223 may generate sensory device command metadata by encoding the command information controlling the execution of the effect event by the sensory device 230 in an XML form.

- the sensory effect control apparatus 220 may transmit sensory apparatus command metadata encoded in the XML form to the sensory apparatus 230.

- Sensory device 230 may include an XML decoder 232.

- The XML decoder 232 may decode sensory device command metadata received from the sensory effect control device 220.

- The XML decoder 232 may extract command information by decoding the sensory device command metadata.
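As a rough illustration of the XML embodiment, the encoder and decoder pair can be sketched with Python's standard `xml.etree.ElementTree` module. The element name `SensoryEffect` and the attributes `type` and `intensity` are placeholders chosen for this sketch; they are not taken from the XML syntax tables of this document.

```python
import xml.etree.ElementTree as ET


def encode_effect_xml(effect_type, intensity):
    """Encode sensory effect information as an XML string (hypothetical schema)."""
    root = ET.Element("SensoryEffect")
    root.set("type", effect_type)
    root.set("intensity", str(intensity))
    return ET.tostring(root, encoding="unicode")


def decode_effect_xml(xml_text):
    """Recover the sensory effect information from the XML string."""
    root = ET.fromstring(xml_text)
    return root.get("type"), float(root.get("intensity"))


sem = encode_effect_xml("vibration", 0.8)
print(sem)
print(decode_effect_xml(sem))
```

The same pattern would apply to the other XML encoder and decoder pairs, with the payload being characteristic information, preference information, or command information instead.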

- the sensory effect processing system 300 may include a sensory media playback device 310, a sensory effect control device 320, and a sensory device 330.

- The sensory media playback device 310 may include a binary encoder 311.

- the binary encoder 311 may generate sensory effect metadata by encoding sensory effect information in a binary form.

- the sensory media playback device 310 may transmit sensory effect metadata encoded in binary form to the sensory effect control device 320.

- the sensory effect control apparatus 320 may include a binary decoder 321.

- the binary decoder 321 may decode sensory effect metadata received from the sensory media playback device 310.

- the binary decoder 321 according to an embodiment may extract sensory effect information by decoding sensory effect metadata.

- the sensory device 330 may include a binary encoder 331.

- the binary encoder 331 may generate sensory device characteristic metadata by encoding the characteristic information on the characteristic of the sensory device 330 in binary form.

- the sensory device 330 may transmit sensory device characteristic metadata encoded in binary form to the sensory effect control device 320.

- the binary encoder 331 may generate the user sensory preference metadata by encoding the preference information on the user's sensory effect in binary form.

- The sensory device 330 may transmit the user sensory preference metadata encoded in binary form to the sensory effect control device 320.

- the sensory effect control apparatus 320 may include a binary decoder 322.

- the binary decoder 322 may decode sensory device characteristic metadata received from the sensory device 330.

- The binary decoder 322 may decode the sensory device characteristic metadata to extract characteristic information about the characteristics of the sensory device 330.

- the binary decoder 322 may decode user sensory preference metadata received from the sensory device 330.

- the binary decoder 322 may extract user preference information on sensory effects by decoding user sensory preference metadata.

- the sensory effect control device 320 may include a binary encoder 323.

- the binary encoder 323 may generate sensory device command metadata by encoding command information for controlling the performance of the effect event by the sensory device 330 in a binary form.

- the sensory effect control device 320 may transmit sensory device command metadata encoded in a binary form to the sensory device 330.

- Sensory device 330 may include a binary decoder 332.

- the binary decoder 332 may decode sensory device command metadata received from the sensory effect control device 320.

- the binary decoder 332 may extract command information by decoding sensory device command metadata.
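The binary embodiment can be illustrated in the same spirit with Python's standard `struct` module. The three-byte layout and the numeric effect codes below are assumptions made for this sketch and do not reproduce the binary representation syntax tables of this document.

```python
import struct

# Hypothetical numeric codes for a few effect types.
EFFECT_CODES = {"light": 1, "wind": 2, "vibration": 3}
CODE_TO_EFFECT = {code: name for name, code in EFFECT_CODES.items()}

# Assumed layout: 1-byte type code, then 2-byte unsigned intensity, big-endian.
_LAYOUT = ">BH"


def encode_effect_binary(effect_type, intensity):
    """Pack sensory effect information into a fixed-size binary payload."""
    return struct.pack(_LAYOUT, EFFECT_CODES[effect_type], intensity)


def decode_effect_binary(payload):
    """Unpack the payload back into (effect_type, intensity)."""
    code, intensity = struct.unpack(_LAYOUT, payload)
    return CODE_TO_EFFECT[code], intensity


packet = encode_effect_binary("vibration", 200)
print(len(packet), decode_effect_binary(packet))
```

A fixed binary layout like this is much smaller than the equivalent XML text, which is consistent with the stated aim of faster transmission over low bandwidth.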

- the sensory effect processing system 400 may include a sensory media playback device 410, a sensory effect control device 420, and a sensory device 430.

- the sensory media playback device 410 may include an XML encoder 411 and a binary encoder 412.

- the XML encoder 411 may generate third metadata by encoding the sensory effect information in an XML format.

- The binary encoder 412 may encode the third metadata in binary form to generate sensory effect metadata.

- the sensory media playback device 410 may transmit sensory effect metadata to the sensory effect control device 420.

- the sensory effect control apparatus 420 may include a binary decoder 421 and an XML decoder 422.

- The binary decoder 421 may decode sensory effect metadata received from the sensory media playback device 410 to extract the third metadata. Also, the XML decoder 422 may extract sensory effect information by decoding the third metadata.

- Sensory device 430 may include an XML encoder 431 and a binary encoder 432.

- The XML encoder 431 may generate second metadata by encoding the characteristic information on the characteristics of the sensory device 430 in the XML format.

- the binary encoder 432 may generate sensory device characteristic metadata by encoding the second metadata in binary form.

- the sensory device 430 may transmit sensory device characteristic metadata to the sensory effect control device 420.

- the XML encoder 431 may generate the fourth metadata by encoding the preference information on the user's sensory effect in an XML form.

- The binary encoder 432 may generate the user sensory preference metadata by encoding the fourth metadata in a binary form.

- the sensory device 430 may transmit the user sensory preference metadata to the sensory effect control device 420.

- the sensory effect control apparatus 420 may include a binary decoder 423 and an XML decoder 424.

- the binary decoder 423 may decode sensory device characteristic metadata received from the sensory device 430 to extract second metadata.

- the XML decoder 424 may decode the second metadata to extract feature information about the feature of the sensory device 430.

- the binary decoder 423 may extract the fourth metadata by decoding the user sensory preference metadata received from the sensory device 430.

- the XML decoder 424 may decode the fourth metadata to extract the user's preference information for the sensory effect.

- the sensory effect control device 420 may include an XML encoder 425 and a binary encoder 426.

- the XML encoder 425 may generate first metadata by encoding command information for controlling the execution of the effect event by the sensory device 430 in an XML form.

- the binary encoder 426 can generate sensory device command metadata by encoding the first metadata in binary form.

- the sensory effect control device 420 may transmit sensory device command metadata to the sensory device 430.

- Sensory device 430 may include a binary decoder 433 and an XML decoder 434.

- The binary decoder 433 may decode sensory device command metadata received from the sensory effect control device 420 to extract the first metadata.

- The XML decoder 434 may decode the first metadata to extract command information.
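The two-stage embodiment, in which information is first encoded into an intermediate XML form and that intermediate form is then encoded into binary, can be sketched as below. The text here does not say which binary codec is applied to the XML, so this sketch substitutes `zlib` compression as a stand-in for the second stage; the element and attribute names are likewise hypothetical.

```python
import xml.etree.ElementTree as ET
import zlib


def encode_two_stage(effect_type, intensity):
    """Stage 1: build intermediate XML. Stage 2: turn it into a binary payload."""
    root = ET.Element(
        "SensoryEffect", {"type": effect_type, "intensity": str(intensity)}
    )
    intermediate_xml = ET.tostring(root)    # bytes holding the XML document
    return zlib.compress(intermediate_xml)  # stand-in for the binary encoder


def decode_two_stage(payload):
    """Reverse both stages: binary decode first, then XML decode."""
    intermediate_xml = zlib.decompress(payload)
    root = ET.fromstring(intermediate_xml)
    return root.get("type"), float(root.get("intensity"))


payload = encode_two_stage("wind", 0.5)
print(decode_two_stage(payload))
```

The decoders run in the opposite order from the encoders, matching the binary decoder followed by XML decoder arrangement described for each receiving side.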

- FIG. 5 is a view showing the configuration of a sensory device according to an embodiment of the present invention.

- the sensory device 530 includes a decoding unit 531 and a driving unit 532.

- The decoding unit 531 decodes sensory device command metadata including at least one sensory effect information. That is, the decoding unit 531 may extract at least one sensory effect information by decoding the sensory device command metadata.

- the sensory device command metadata may be received from the sensory effect control device 520.

- the sensory device command metadata may include command information.

- the decoder 531 may extract command information by decoding sensory device command metadata.

- the driver 532 performs an effect event corresponding to at least one sensory effect information.

- the driver 532 may perform an effect event based on the command information.
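The split between a decoding unit and a driving unit can be pictured with a small class. This is a sketch under assumptions: the class name, the 2-byte command format, and the callback-style driver are all hypothetical and only illustrate the division of responsibilities.

```python
import struct
from typing import Callable, List


class SensoryDevice:
    """Hypothetical device with a decoding step and a driving step."""

    def __init__(self, driver: Callable[[int], None]):
        self._driver = driver  # plays the role of the driving unit

    def decode(self, command_metadata: bytes) -> int:
        # Assumed format: one big-endian unsigned 16-bit effect level.
        (level,) = struct.unpack(">H", command_metadata)
        return level

    def handle(self, command_metadata: bytes) -> None:
        # Decode the command, then perform the corresponding effect event.
        self._driver(self.decode(command_metadata))


rendered: List[int] = []
device = SensoryDevice(driver=rendered.append)
device.handle(struct.pack(">H", 120))
print(rendered)
```

Passing the driver in as a callable keeps the decoding logic independent of the actuator, so the same decoder could serve a vibration motor, a fan, or a lamp.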

- Content played by the sensory media playback device 510 may include at least one sensory effect information.

- the sensory device 530 may further include an encoder 533.

- the encoding unit 533 encodes the characteristic information about the characteristic of the sensory device 530 into sensory device characteristic metadata. That is, the encoding unit 533 may generate sensory device characteristic metadata by encoding the characteristic information.

- the encoding unit 533 may include at least one of an XML encoder and a binary encoder.

- the encoder 533 may generate sensory device characteristic metadata by encoding the characteristic information in an XML format.

- the encoder 533 may generate sensory device characteristic metadata by encoding the characteristic information in a binary form.

- The encoding unit 533 may generate the second metadata by encoding the characteristic information in the XML form, and may generate the sensory device characteristic metadata by encoding the second metadata in the binary form.

- the characteristic information may be information about characteristics of the sensory device 530.

- the sensory device characteristic metadata may be a sensory device characteristic basic type indicating basic characteristic information of the sensory device 530.

- the sensory device characteristic basic type may be metadata for characteristic information that is commonly applied to all sensory devices 530.

- Table 1 shows an XML encoding syntax for the sensory device characteristic basic type according to an embodiment.

- Table 2 shows a binary representation syntax for a sensory device characteristic basic type according to one embodiment.

- Table 3 shows descriptor component semantics for the sensory device characteristic basic type according to one embodiment.

- The sensory device characteristic metadata may include sensory device characteristic basic attributes representing a group of common attributes of the sensory device 530.

- Table 4 shows an XML encoding syntax for a sensory device property basic attribute according to an embodiment.

- Table 5 shows a binary encoding syntax for sensory device property basic attributes, according to an embodiment.

- Table 6 shows binary coding for a location type of a sensory device characteristic basic attribute according to an embodiment.

- Table 7 shows the descriptor component semantics for the sensory device characteristic basic attributes according to an embodiment.

- the sensory device processing system includes MPEG-V information (MPEG-V).

- Table 7-1 shows binary encoding syntax for MPEG-V information, according to an embodiment.

- Table 7-2 shows a descriptor construction semantics for MPEG-V information according to an embodiment.

- The sensory device 530 may be classified into several types according to the type of the driver 532 that performs the effect event.

- The types of the sensory device 530 include a light type, a flash type, a heating type, a cooling type, a wind type, a vibration type, a scent type, a fog type, a sprayer type, a color correction type, a tactile type, a kinesthetic type, and a rigid body motion type.

- Table 7-2 shows the binary coding for the individual types of sensory device 530.
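One way to picture a binary coding of device types is an integer enumeration. The thirteen names below follow the device types listed above, but the numeric values are placeholders: the actual codes of the referenced table are not reproduced in this text, so nothing here should be read as the real assignment.

```python
from enum import IntEnum


class SensoryDeviceType(IntEnum):
    """Device types from the description; numeric codes are hypothetical."""

    LIGHT = 1
    FLASH = 2
    HEATING = 3
    COOLING = 4
    WIND = 5
    VIBRATION = 6
    SCENT = 7
    FOG = 8
    SPRAYER = 9
    COLOR_CORRECTION = 10
    TACTILE = 11
    KINESTHETIC = 12
    RIGID_BODY_MOTION = 13


# A decoder can map a received code back to a named type.
print(SensoryDeviceType(6).name)
```

An `IntEnum` keeps the mapping in one place, so the encoder and decoder cannot drift apart on which number denotes which device type.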

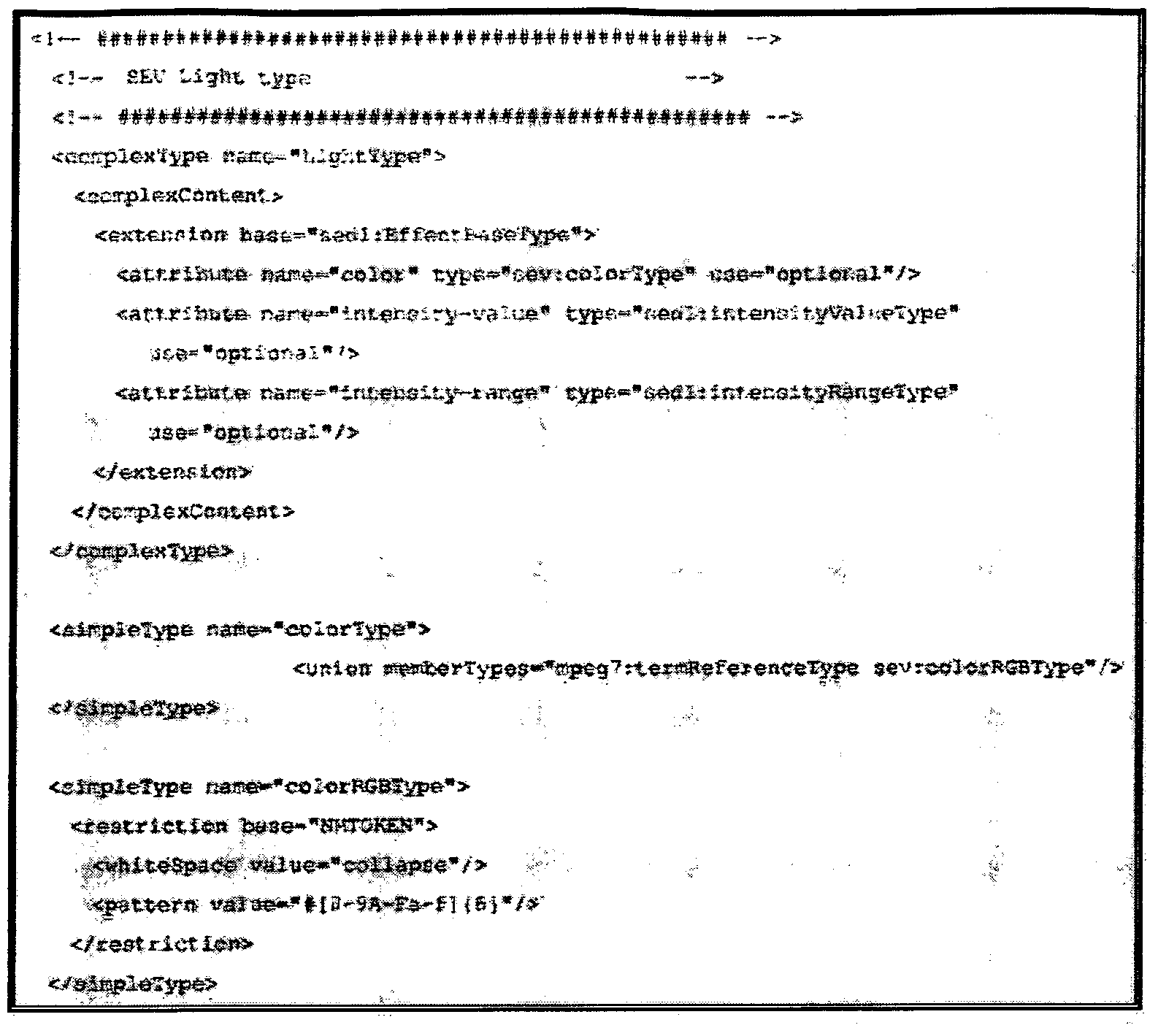

- Table 8 shows an XML representation syntax of characteristic information for a light-type sensory device according to an embodiment.

- Table 9 shows a binary representation syntax of characteristic information for a light-type sensory device according to an embodiment.

- Table 10 shows the descriptor components semantics of characteristic information for a light-type sensory device according to an embodiment.

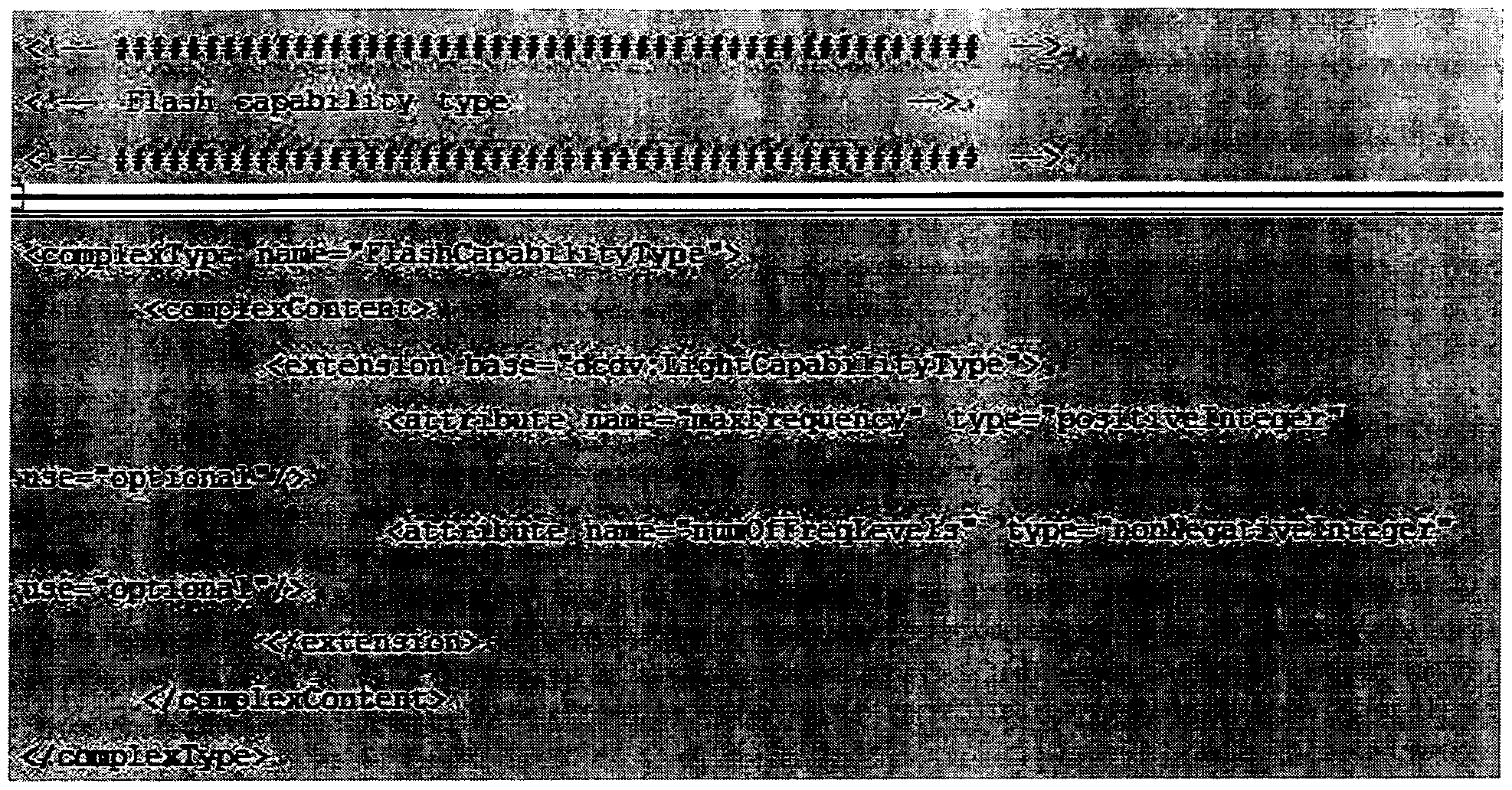

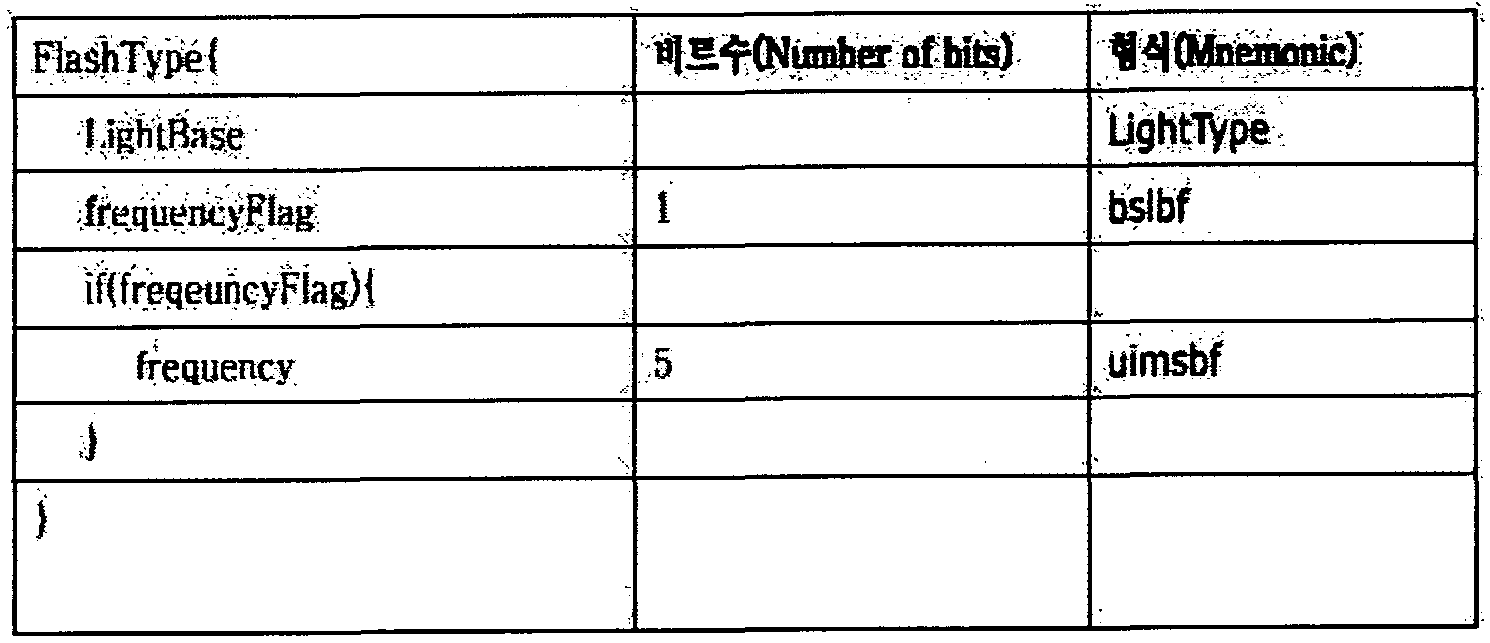

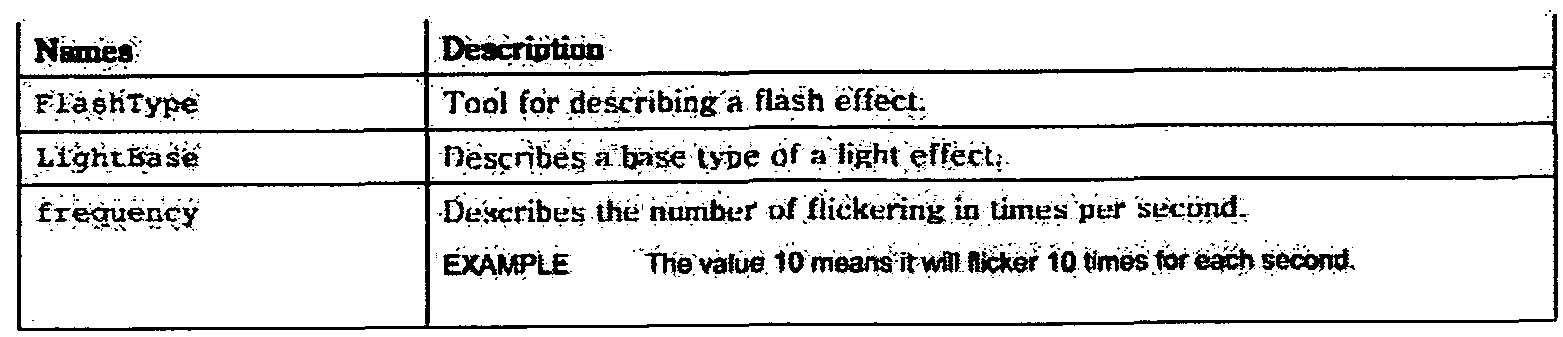

- Table 11 shows an XML representation syntax of characteristic information for a flash-type sensory device, according to an embodiment.

- Table 12 shows a binary representation syntax of characteristic information for a flash-type sensory device, according to an exemplary embodiment.

- Table 13 shows descriptor components semantics of characteristic information for a flash-type sensory device, according to an exemplary embodiment.

- Table 14 shows an XML representation syntax of characteristic information for a heating-type sensory device according to an embodiment.

- Table 15 shows a binary representation syntax of characteristic information for a heating-type sensory device according to an embodiment.

- Table 16 shows descriptor components semantics of characteristic information for a heating-type sensory device according to an embodiment.

- Table 17 shows an XML Representation Syntax of characteristic information for a cooling type sensory device according to an embodiment.

- Table 18 illustrates a binary representation syntax of characteristic information of a cooling type sensory device, according to an exemplary embodiment.

- Table 19 shows descriptor components semantics of characteristic information for a cooling type sensory device according to one embodiment.

- Table 20 shows an XML representation syntax of characteristic information for a wind-type sensory device according to an embodiment.

- Table 21 shows a binary representation syntax of characteristic information for a wind-type sensory device, according to an embodiment.

- Table 22 shows descriptor components semantics of characteristic information for a wind-type sensory device according to an embodiment.

- Table 23 shows an XML representation syntax of characteristic information for a vibration-type sensory device according to an embodiment.

- Table 24 illustrates a binary representation syntax of characteristic information of a vibration-type sensory device, according to an exemplary embodiment.

- Table 25 shows descriptor component semantics of characteristic information for a vibration-type sensory device according to an embodiment.

- Table 26 shows an XML Representation Syntax of characteristic information for an odor type sensory device according to an embodiment.

- Table 27 shows a binary representation syntax of characteristic information of an odor type sensory apparatus, according to an exemplary embodiment.

- Table 28 shows binary encoding for an odor type according to an embodiment.

- Table 29 shows descriptor components semantics of characteristic information for a sensory device of an odor type according to an embodiment.

- Table 30 shows the XML Representation Syntax of characteristic information for a fog-type sensory device, according to an exemplary embodiment.

- Table 31 illustrates a binary representation syntax of characteristic information of a fog-type sensory device, according to an exemplary embodiment.

- Table 32 shows descriptor components semantics of characteristic information about a fog-type sensory apparatus, according to an exemplary embodiment.

- Table 33 illustrates the XML Representation Syntax of characteristic information for a spray-type sensory device according to an embodiment.

- Table 34 illustrates a binary representation syntax of characteristic information for a spray-type sensory device, according to an exemplary embodiment.

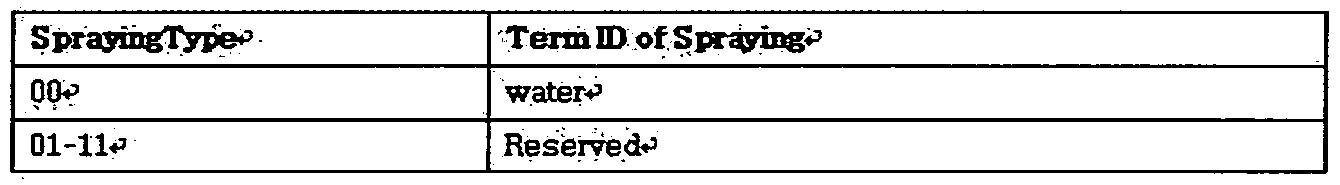

- Table 35 shows binary coding for spray types according to one embodiment.

- Table 36 shows descriptor components semantics of the characteristic information for the spray-type sensory device according to one embodiment.

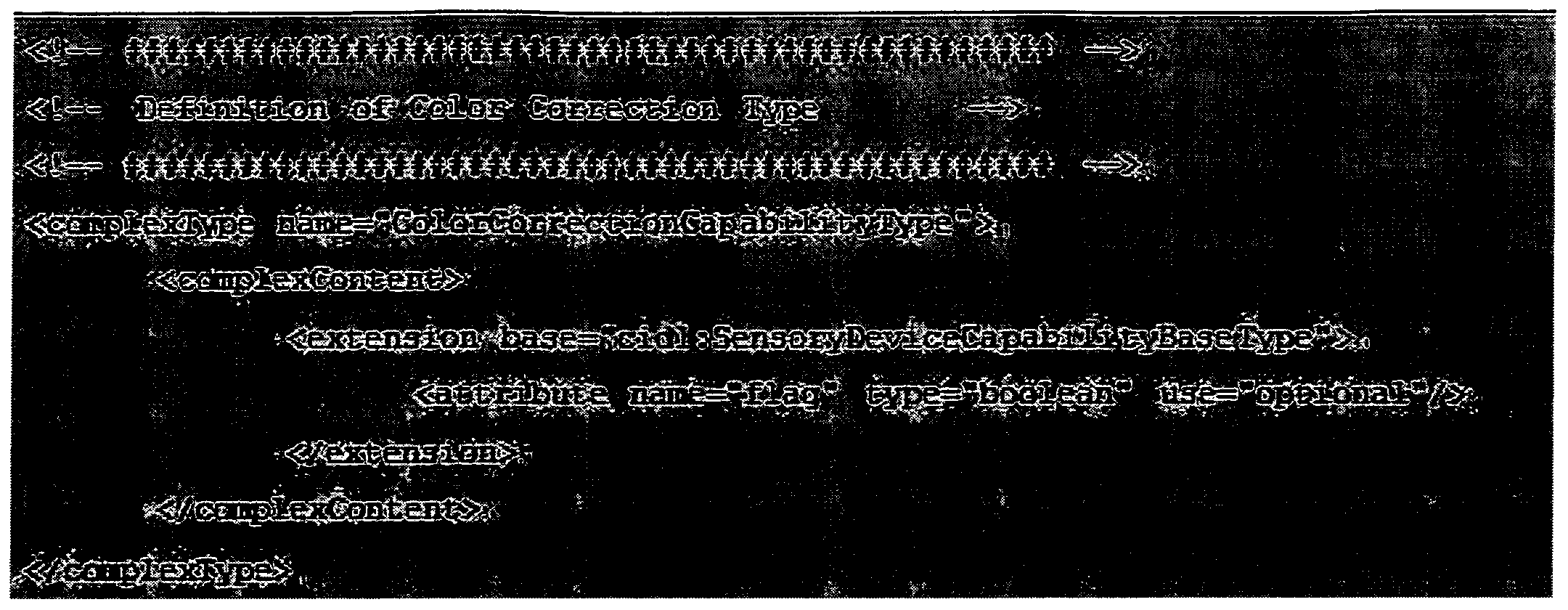

- Table 37 shows an XML Representation Syntax of feature information for a color correction type sensory device, according to an embodiment.

- Table 38 illustrates a binary representation syntax of characteristic information for a color calibration type sensory device.

- Table 39 shows descriptor components semantics of characteristic information for a color calibration type sensory apparatus according to an embodiment.

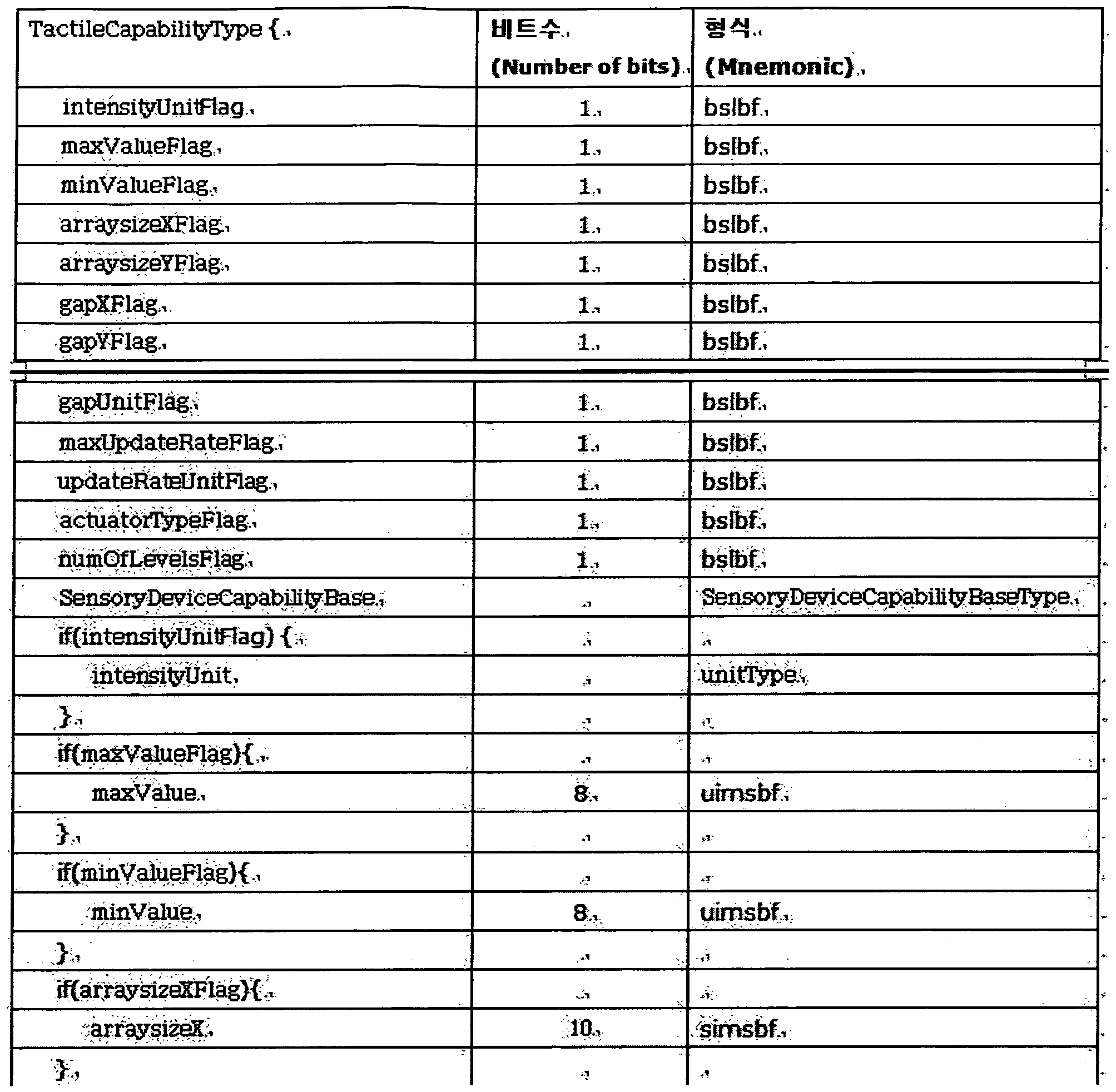

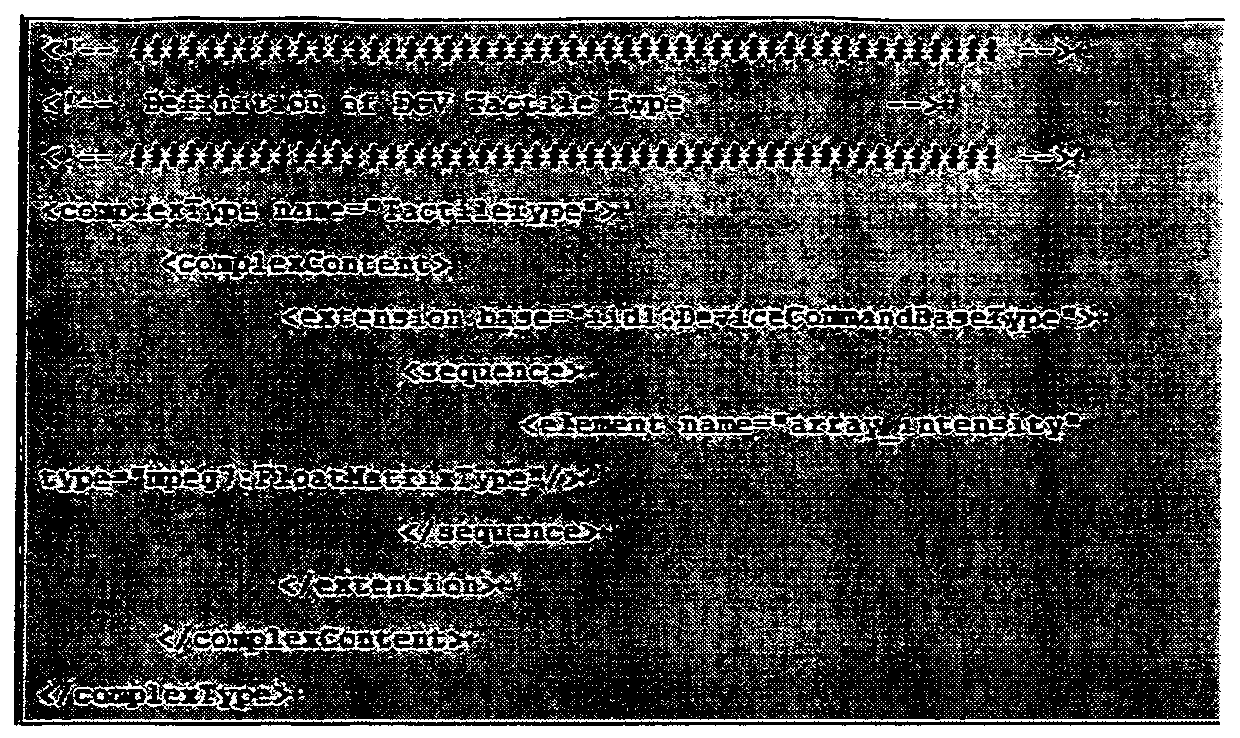

- Table 40 shows an XML Representation Syntax of characteristic information for a tactile type sensory device according to an embodiment.

- Table 41 illustrates a binary representation syntax of characteristic information of a tactile type sensory device, according to an exemplary embodiment.

- Table 42 shows binary encoding for a tactile display type according to one embodiment.

- Table 43 shows descriptor components semantics of characteristic information for a tactile type sensory device according to an embodiment.

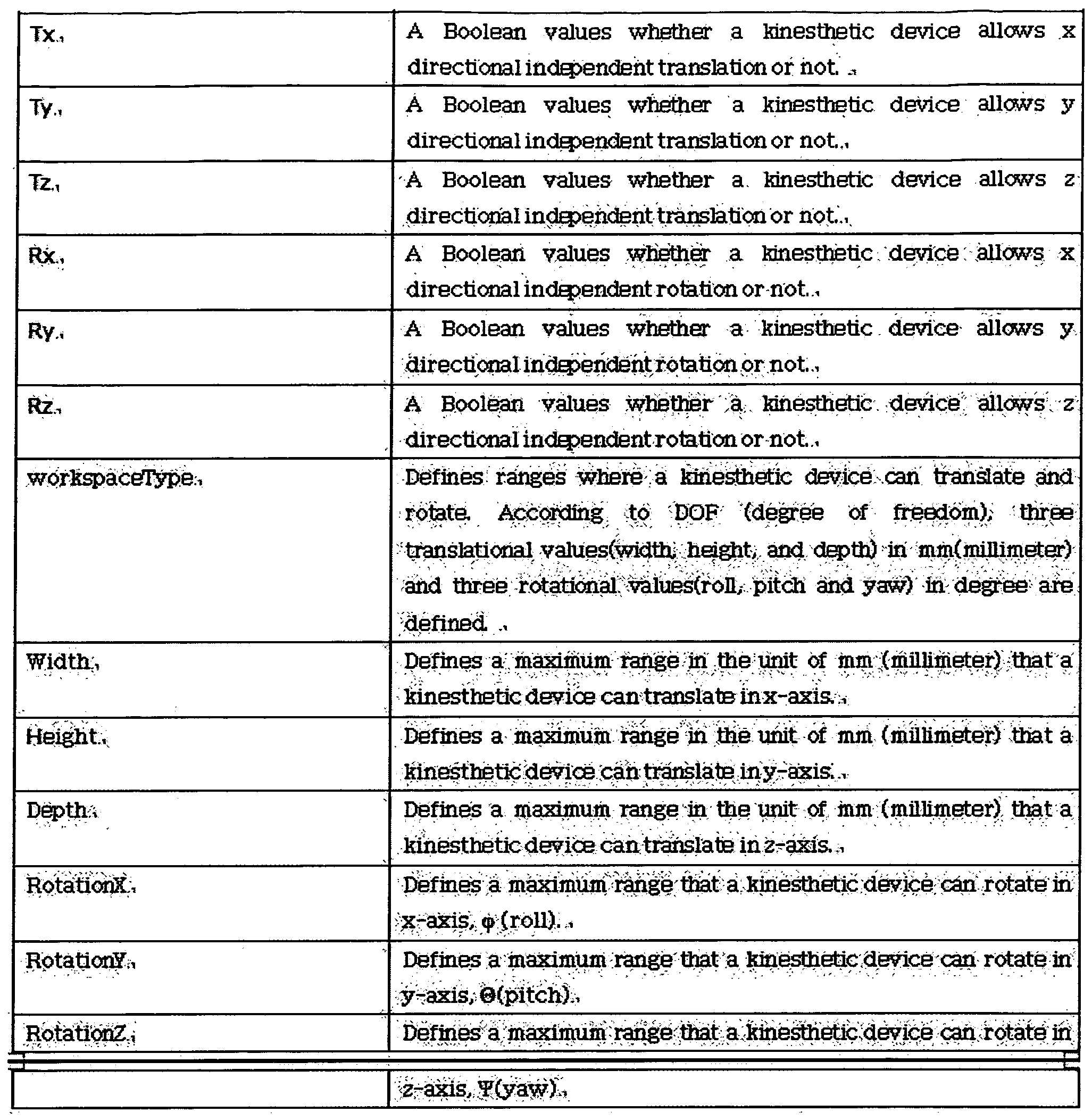

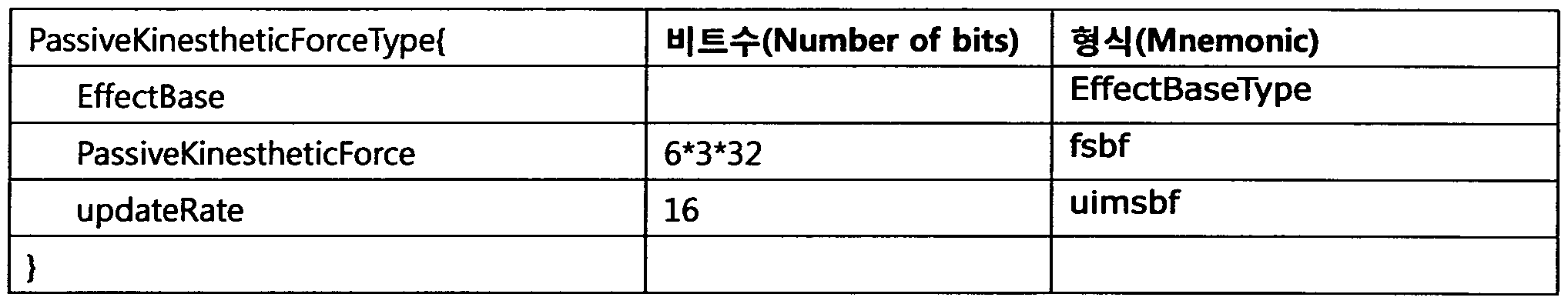

- Table 44 shows the XML Representation Syntax of characteristic information for a sensory device of a near sense type according to an embodiment.

- Table 45 shows a binary representation syntax of characteristic information for a sensory device of a near sense type according to an embodiment.

- Table 46 shows descriptor components semantics of characteristic information for a sensory device of the near-sensory type according to an embodiment.

- Table 47 shows an XML Representation Syntax of characteristic information for a rigid motion type sensory device according to an embodiment.

- Table 48 shows a binary representation syntax of characteristic information for a rigid motion type sensory device.

- Table 49 shows descriptor components semantics of characteristic information of a rigid motion type sensory apparatus according to an embodiment.

- the encoding unit 533 may encode the preference information on the user's sensory effect into the user's sensory preference metadata. That is, the encoding unit 533 may generate user sensation preference metadata by encoding preference information.

- the encoding unit 533 may include at least one of an XML encoder and a binary encoder.

- the encoding unit 533 may generate user sensation preference metadata by encoding the preference information in an XML form.

- the encoder 533 may generate user sensation preference metadata by encoding the preference information in binary form.

- the encoding unit 533 may generate the fourth metadata by encoding the preference information in the XML form, and generate the user feel preference metadata by encoding the fourth metadata in the binary form.
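
The two-stage path described above (XML form first, then binary form) can be sketched as follows. This is only an illustration under stated assumptions: the element name `UserSensoryPreference`, the attribute names, the `EFFECT_IDS` codes, and the 5-byte binary layout are all hypothetical, since the actual syntax is defined in tables that are not reproduced in this text.

```python
import struct
import xml.etree.ElementTree as ET

# Hypothetical effect-type codes; the real values are defined by the
# binary representation tables, which are not reproduced here.
EFFECT_IDS = {"light": 1, "flash": 2, "heating": 3}


def encode_preference_xml(effect_type: str, max_intensity: float) -> bytes:
    """Stage 1: serialize preference information in XML form."""
    root = ET.Element("UserSensoryPreference")  # hypothetical element name
    ET.SubElement(
        root,
        "Preference",
        {"type": effect_type, "maxIntensity": repr(max_intensity)},
    )
    return ET.tostring(root, encoding="utf-8")


def encode_xml_as_binary(xml_bytes: bytes) -> bytes:
    """Stage 2: re-encode the XML metadata in a compact binary form.

    Assumed layout: 1 byte effect code + 4 byte big-endian float.
    """
    pref = ET.fromstring(xml_bytes).find("Preference")
    effect_id = EFFECT_IDS[pref.get("type")]
    max_intensity = float(pref.get("maxIntensity"))
    return struct.pack(">Bf", effect_id, max_intensity)


intermediate = encode_preference_xml("light", 0.5)  # XML-form metadata
preference_metadata = encode_xml_as_binary(intermediate)  # binary-form metadata
```

An encoder that supports only one of the two forms would simply stop after the first stage or produce the binary fields directly from the preference information.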

- the sensory device 530 may further include an input unit 534.

- the input unit 534 may receive preference information from a user of the sensory device 530.

- the user sensory preference metadata may include a User Sensory Preference Base Type indicating basic preference information about the user's sensory effect.

- the user sensation preference type may be metadata about preference information that is common to all users.

- Table 50 shows an XML Representation Syntax for a User Feeling Preference Basic Type according to an embodiment.

- Table 51 shows the Binary Representation Syntax for a User Feeling Preference Basic Type according to an embodiment.

- Table 52 shows descriptor components semantics for a user feel preference base type according to an embodiment.

- the user sensory preference metadata may include user sensory preference base attributes indicating a group of common attributes (Common Attributes) of preference information on a user's sensory effect.

- Table 53 shows an XML Representation Syntax for a User Feeling Preference Basic Attribute according to an embodiment.

- Table 54 shows a binary representation syntax for a user sensory preference basic attribute according to an embodiment.

- Table 55 shows binary encoding of an adaptive mode type among user's sensory preference basic attributes according to an embodiment.

- Table 56 shows the Descriptor Components Semantics for the user sensory preference basic attribute according to an embodiment.

- Table 57 shows the XML Representation Syntax of preference information for a sensory device of a light type according to an embodiment.

- Table 58 shows a binary representation syntax of preference information for a sensor of light type according to an embodiment.

- Table 59 shows binary encoding for the unit classification scheme (Unit CS) according to an embodiment.

- Table 60 shows the Descriptor Components Semantics of the preference information for the sensory device of the light type according to one embodiment.

- In the binary representation, signals the presence of the activation attribute. A value of "1" means the attribute shall be used and "0" means the attribute shall not be used.

- Extends dia:UserCharacteristicBaseType as defined in Part 7 of ISO/IEC 21000 and provides a base abstract type for a subset of

- Table 61 shows an XML Representation Syntax of preference information for a flash-type sensory device, according to an embodiment.

- Table 62 shows a binary representation syntax of preference information for a flash-type sensory device, according to an exemplary embodiment.

- Table 63 shows descriptor components semantics of preference information for a flash-type sensory device, according to an exemplary embodiment.

- Table 64 shows an XML Representation Syntax of preference information for a sensory device of a heating type according to an embodiment.

- Table 65 shows a binary representation syntax of preference information for a sensor of a heating type according to an embodiment.

- Table 66 shows descriptor components semantics of preference information for a sensory device of a heating type according to an embodiment.

- Table 68 shows a binary representation syntax of preference information for a cooling type sensory device, according to an embodiment.

- Table 69 shows descriptor components semantics of preference information for a cooling type sensory device, according to an exemplary embodiment.

- Table 70 shows the XML Representation Syntax of preference information for a wind-type sensory device according to an embodiment.

- Table 71 shows the Binary Representation Syntax of preference information for a wind-type sensory device.

- Table 72 shows descriptor components semantics of preference information for a wind-type sensory device, according to an embodiment.

- Table 73 illustrates the XML Representation Syntax of preference information for a vibration-type sensory device according to an embodiment.

- Table 74 shows a binary representation syntax of preference information for a vibration-type sensory device.

- Table 75 shows the Descriptor Components Semantics of preference information for a vibration-type sensory device according to an embodiment.

- Table 76 shows an XML Representation Syntax of preference information for an odor type sensory device according to an embodiment.

- Table 77 shows the Binary Representation Syntax of preference information for an odor type sensory device according to an embodiment.

- Table 78 shows binary encoding for an odor type according to an embodiment.

- Table 79 shows descriptor components semantics of preference information for a sensory device of an odor type according to an embodiment.

- Table 80 illustrates the XML Representation Syntax of preference information for a fog-type sensory apparatus, according to an exemplary embodiment.

- Table 81 shows a binary representation syntax of preference information for a fog-type sensory device, according to an embodiment.

- Table 82 shows the Descriptor Components Semantics of preference information for a fog-type sensory device according to an embodiment.

- Table 83 shows XML Representation Syntax of preference information for a spray-type sensory apparatus, according to an embodiment.

- Table 84 shows a binary representation syntax of preference information for a spray-type sensory apparatus, according to an embodiment.

- Table 85 shows binary coding for spray types according to one embodiment.

- Table 86 shows descriptor components semantics of the preference information for the spray-type sensory device according to one embodiment.

- Table 87 shows an XML Representation Syntax of preference information for a color correction type sensory device, according to an embodiment.

- Table 88 shows the Binary Representation Syntax of preference information for a color correction type sensory device.

- Table 89 shows descriptor components semantics of preference information for a color correction type sensory device, according to an embodiment.

- Table 90 shows the XML Representation Syntax of preference information for a tactile type sensory device according to an embodiment.

- Table 91 shows the Binary Representation Syntax of preference information for a tactile type sensory device, according to an embodiment.

- Table 92 shows the Descriptor Components Semantics of preference information for a tactile type sensory device according to an embodiment.

- Table 93 shows the XML Representation Syntax of preference information for a sensory device of the near-sensory type, according to an embodiment.

- Table 94 shows a binary representation syntax of preference information for a sensory device of a near sense type according to an embodiment.

- Table 95 shows descriptor components semantics of preference information for a sensory device of the near-sensory type according to an embodiment.

- Table 96 shows the XML Representation Syntax of preference information for a rigid motion type sensory device according to an embodiment.

- Table 97 shows a binary representation syntax of preference information for a rigid motion type sensory device.

- Table 98 shows descriptor components semantics of preference information for a rigid motion type sensory apparatus according to an embodiment.

- FIG. 6 is a view showing the configuration of a sensory effect control apparatus according to an embodiment of the present invention.

- the sensory effect control apparatus 620 includes a decoding unit 621, a generation unit 622, and an encoding unit 623.

- the decoding unit 621 decodes sensory effect metadata and sensory device characteristic metadata.

- the sensory effect control device 620 may receive sensory effect metadata from the sensory media playback device 610, and may receive sensory device characteristic metadata from the sensory device 630.

- the decoding unit 621 may decode the sensory effect metadata to extract sensory effect information. In addition, the decoding unit 621 may decode sensory device characteristic metadata to extract feature information about the sensory device 630.

- the decoding unit 621 may include at least one of an XML decoder and a binary decoder.

- the decoding unit 621 may include at least one of the XML decoder 221 described with reference to FIG. 2, the binary decoder 321 described with reference to FIG. 3, and the binary decoder 421 and the XML decoder 422 described with reference to FIG. 4.

- the generation unit 622 generates command information for controlling the sensory device 630 based on the decoded sensory effect metadata and the decoded sensory device characteristic metadata.

- the command information may be information for controlling execution of an effect event corresponding to sensory effect information by the sensory device 630.

- the sensory effect control device 620 may further include a receiver (not shown).

- the receiver may receive user sensory preference metadata from the sensory device 630.

- the decoding unit 621 may decode the user sensory preference metadata. That is, the decoding unit 621 may decode the user's sensory preference metadata to extract user's preference information on the sensory effect.

- the generator 622 may generate command information for controlling the sensory device 630 based on the decoded sensory effect metadata, the decoded sensory device characteristic metadata, and the decoded user sensory preference metadata.
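
As an illustration of how command information might be derived from the three decoded inputs, the sketch below clamps the requested effect intensity to the device capability and then to the user's preference. The clamping rule, the class names, and the fields (`intensity`, `max_intensity`, `activate`) are assumptions made for this example; the text itself does not specify how the generator 622 combines the inputs.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class EffectInfo:  # decoded sensory effect metadata (hypothetical fields)
    effect_type: str
    intensity: float


@dataclass
class DeviceCapability:  # decoded sensory device characteristic metadata
    effect_type: str
    max_intensity: float


@dataclass
class UserPreference:  # decoded user sensory preference metadata
    effect_type: str
    max_intensity: float
    activate: bool = True


@dataclass
class DeviceCommand:  # command information for the sensory device
    effect_type: str
    intensity: float
    activate: bool


def generate_command(
    effect: EffectInfo,
    capability: DeviceCapability,
    preference: Optional[UserPreference] = None,
) -> DeviceCommand:
    """Combine effect, capability, and optional preference into a command."""
    if effect.effect_type != capability.effect_type:
        raise ValueError("device cannot render this effect type")
    # Never ask the device for more than it can deliver.
    intensity = min(effect.intensity, capability.max_intensity)
    activate = True
    if preference is not None and preference.effect_type == effect.effect_type:
        # Respect the user's upper bound and on/off choice.
        intensity = min(intensity, preference.max_intensity)
        activate = preference.activate
    return DeviceCommand(effect.effect_type, intensity, activate)


command = generate_command(
    EffectInfo("wind", 0.9),
    DeviceCapability("wind", 0.7),
    UserPreference("wind", 0.5),
)
```

When no preference metadata has been received, the same function falls back to the capability limit alone, which mirrors the two variants of the generator described above.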

- the encoder 623 may encode the command information into sensory device command metadata. That is, the encoding unit 623 may generate sensory device command metadata by encoding the command information.

- the encoding unit 623 may include at least one of an XML encoder and a binary encoder.

- the encoder 623 may generate sensory device command metadata by encoding the command information in an XML form.

- the encoding unit 623 may generate sensory device command metadata by encoding the command information in binary form.

- the encoding unit 623 may generate the first metadata by encoding the command information in the XML form, and generate the sensory device command metadata by encoding the first metadata in the binary form.

- the sensory device command metadata may include a sensory device command base type indicating basic command information for controlling the sensory device 630.

- the sensory device command base type may be metadata for command information commonly applied to the control of all sensory devices 630.

- Table 99 shows an XML Representation Syntax for a sensory device instruction base type according to one embodiment.

- Table 100 shows the Binary Representation Syntax for a sensory device command base type according to an embodiment.

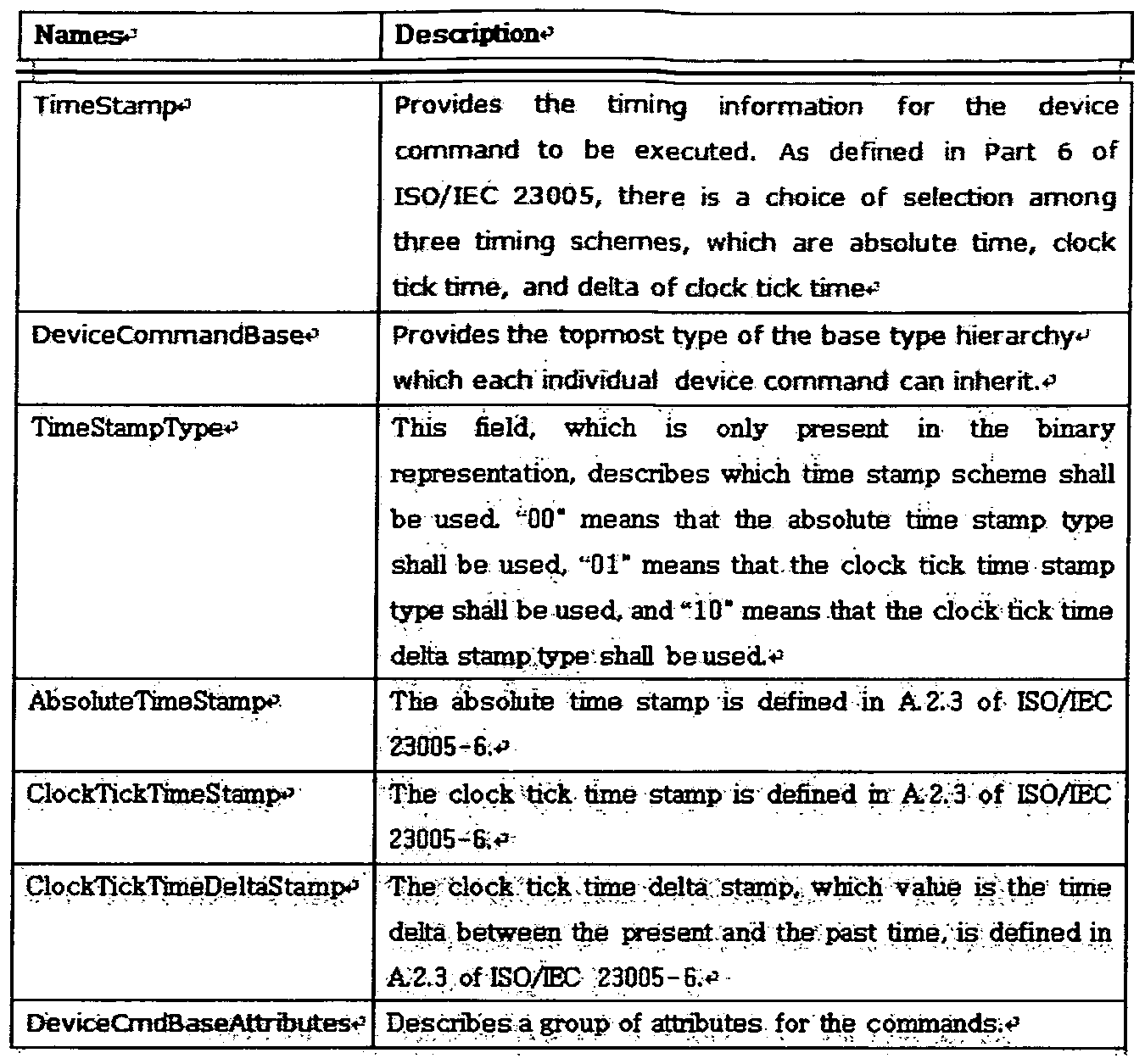

- Table 101 shows descriptor components semantics for a sensory device command base type according to an embodiment.

- the sensory device command metadata may include sensory device command base attributes indicating a group of common attributes of command information.

- Table 102 shows an XML Representation Syntax for a sensory device command basic attribute according to an embodiment.

- Table 103 shows a binary representation syntax for a sensory device command basic attribute according to an embodiment.

- Table 104 shows descriptor components semantics for a sensory device command basic attribute according to one embodiment.

- Table 105 shows an XML Representation Syntax of command information for a sensor of a light type according to an embodiment.

- Table 106 shows the Binary Representation Syntax of command information for a sensory device of a light type, according to an embodiment.

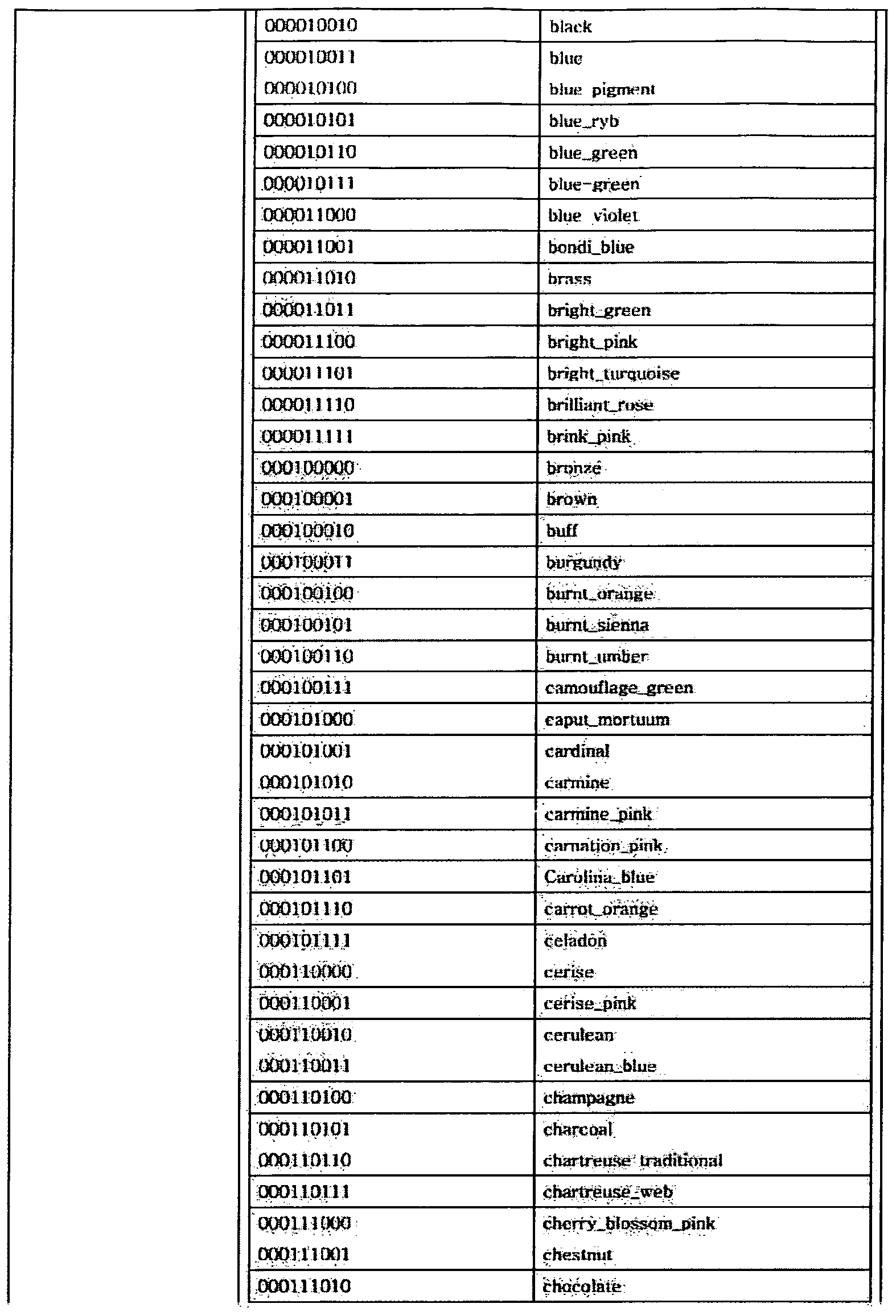

- Table 107 shows a binary representation for Color CS, according to one embodiment.

- Table 108 shows the Descriptor Components Semantics of command information for a sensory device of a light type according to an embodiment.

- Table 109 shows an XML Representation Syntax of command information for a flash-type sensory device, according to an embodiment.

- Table 110 shows a binary representation syntax of command information for a flash-type sensory device, according to an exemplary embodiment.

- Table 111 shows descriptor components semantics of command information for a flash-type sensory device, according to an exemplary embodiment.

- Table 112 shows an XML Representation Syntax of command information for a sensor of a heating type according to an embodiment.

- Table 113 shows a binary representation syntax of command information for a sensor of a heating type according to an embodiment.

- Table 114 shows descriptor component semantics of command information for a sensor of a heating type according to an embodiment.

- Table 115 shows an XML Representation Syntax of command information for a cooling type sensory device according to an embodiment.

- Table 116 shows a binary representation syntax of command information for a cooling type sensory device, according to an exemplary embodiment.

- Table 117 shows descriptor components semantics of command information for a cooling type sensory device, according to an exemplary embodiment.

- CoolingType: Tool for describing a command for a cooling device.

- A value of "1" means the attribute shall be used.

- DeviceCommandBase: Provides the topmost type of the base type hierarchy.

- Table 118 shows the XML Representation Syntax of command information for a wind-type sensory device, according to an embodiment.

- Table 119 shows a binary representation syntax of command information for a wind-type sensory device, according to an embodiment.

- Table 120 shows descriptor components semantics of command information for a wind-type sensory device, according to an exemplary embodiment.

- Table 121 shows an XML Representation Syntax of command information for a vibration-type sensory device, according to an exemplary embodiment.

- Table 122 shows binary encoding of command information for a vibration-type sensory device, according to an exemplary embodiment.

- Table 123 shows descriptor components semantics of command information for a vibration-type sensory device according to an embodiment.

- Table 124 shows the XML Representation Syntax of command information for an odor type sensory apparatus, according to an embodiment.

- Table 125 shows a binary representation syntax of command information for an odor type sensory device, according to an exemplary embodiment.

- Table 126 shows binary encoding for an odor type according to an embodiment.

- Table 127 shows descriptor component semantics of command information for an odor type sensory apparatus, according to an exemplary embodiment.

- Table 128 shows an XML Representation Syntax of command information for a fog type sensory device, according to an embodiment.

- Table 129 shows a binary representation syntax of command information for a fog-type sensory device, according to an embodiment.

- Table 130 shows descriptor components semantics of command information for a fog type sensor device according to an embodiment.

- Table 131 shows the XML Representation Syntax of the command information for the spray-type sensory device, according to an embodiment.

- Table 132 shows a binary representation syntax of command information for a spray-type sensory device, according to an embodiment.

- Table 133 shows binary encoding for a spray type according to one embodiment.

- Table 134 shows Descriptor Components Semantics of command information for a spray type sensory device, according to one embodiment.

- Table 135 shows the XML Representation Syntax of the command information for the color correction type sensory device, according to one embodiment.

- Table 136 shows a binary representation syntax of command information for a color correction type sensory device, according to an embodiment.

- Table 137 illustrates descriptor components semantics of command information for a color correction type sensory apparatus, according to an exemplary embodiment.

- Table 138 shows the XML Representation Syntax of command information for a tactile type sensory device according to an embodiment.

- Table 139 shows a binary representation syntax of command information for a tactile type sensory device, according to an embodiment.

- Table 140 shows descriptor components semantics of command information for a tactile type sensory device according to an embodiment.

- A tactile device is composed of an array of actuators.

- DeviceCommandBase: Provides the topmost type of the base type hierarchy.

- Table 141 illustrates the XML Representation Syntax of command information for a sensory device of the near-sensory type, according to an embodiment.

- Table 142 shows a binary representation syntax of command information for a sensory device of the near sense type according to an embodiment.

- Table 143 shows descriptor components semantics of command information for a sensory device of the near-sensory type according to an embodiment.

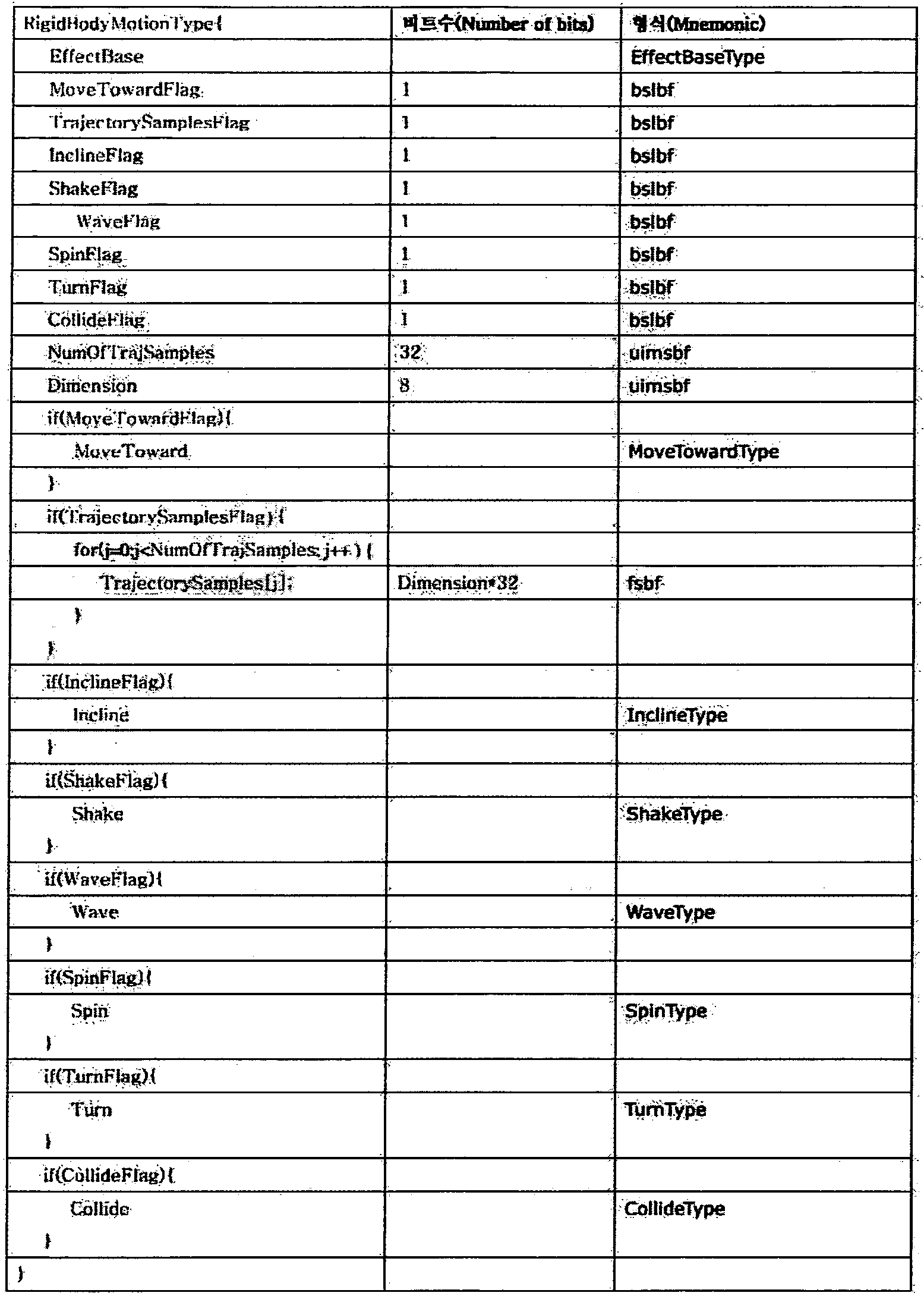

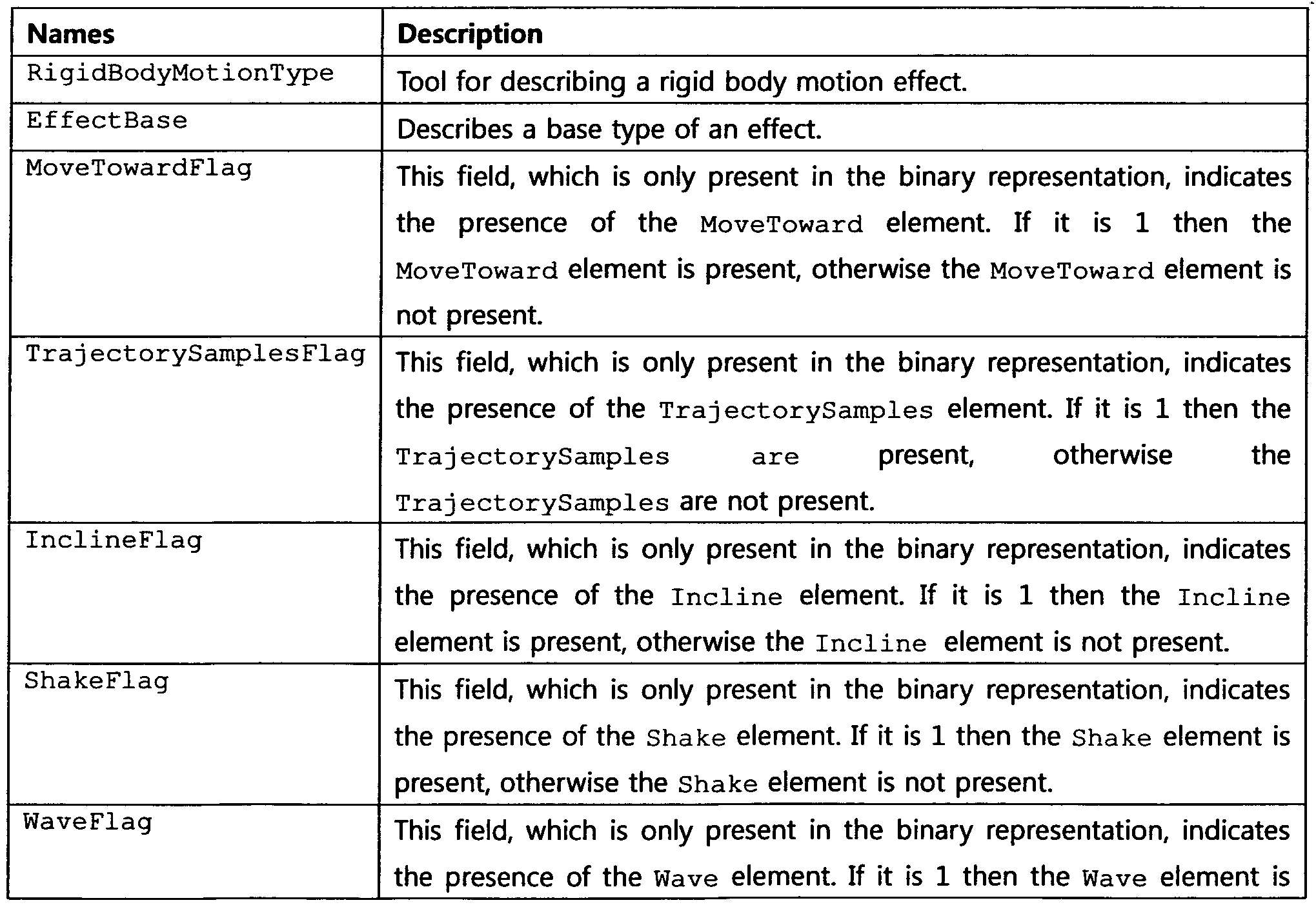

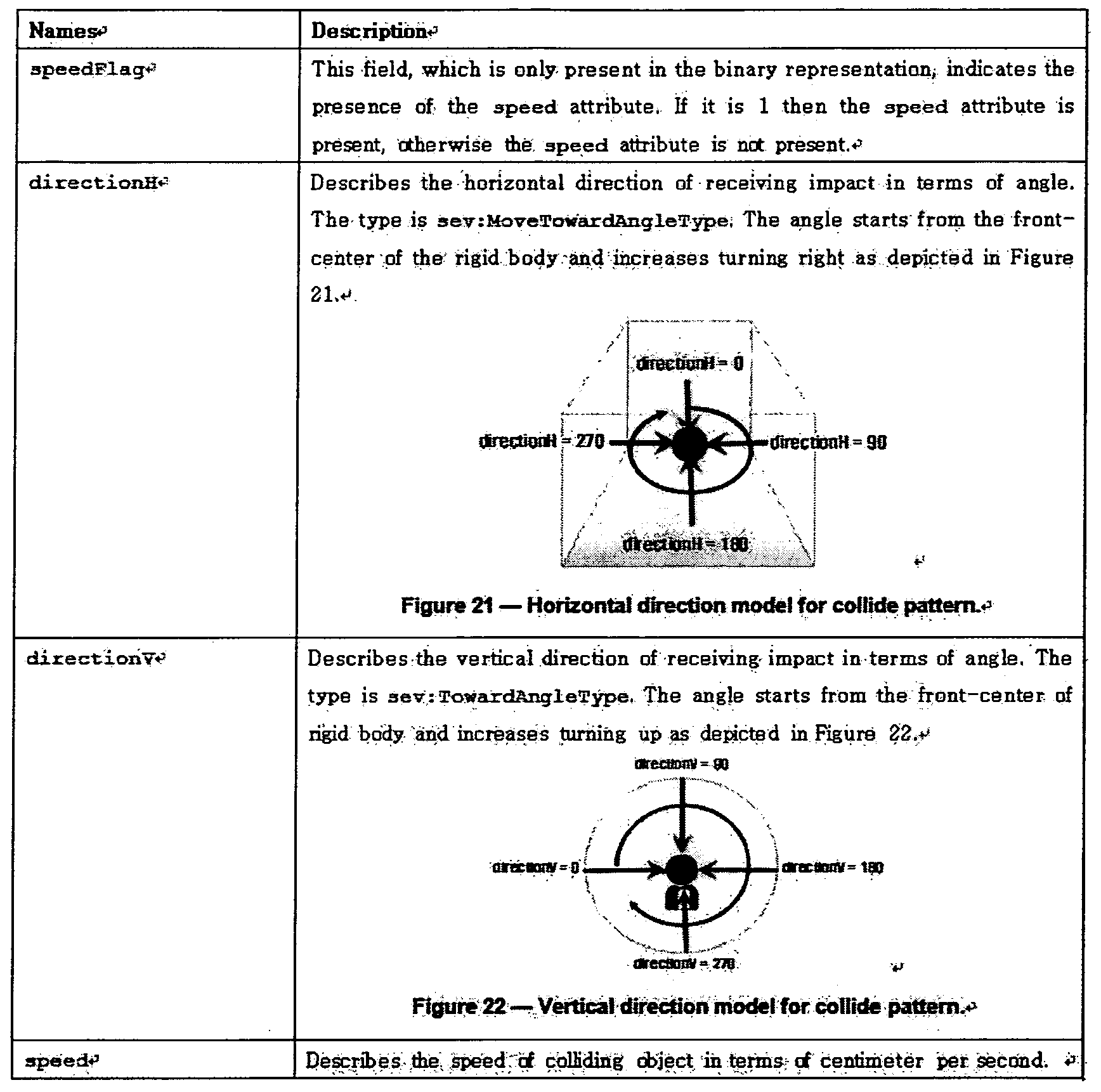

- Table 144 shows an XML Representation Syntax of command information for a rigid motion type sensory device according to an embodiment.

- Table 146 shows a binary representation syntax of command information for a rigid motion type sensory device according to another embodiment.

- Table 147 shows descriptor components semantics of command information for a rigid motion type sensory apparatus according to an embodiment.

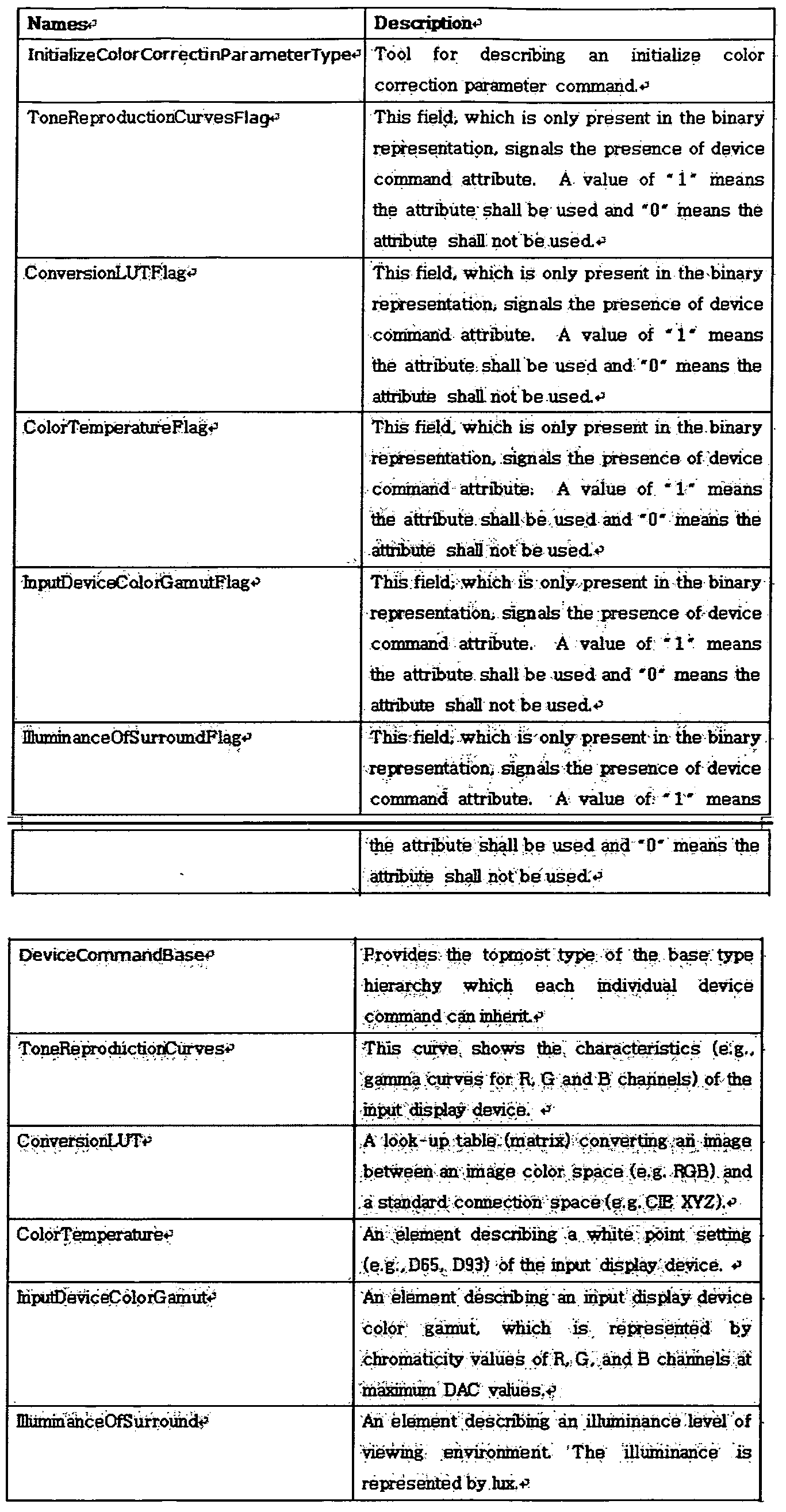

- the color correction type may include an initial color correction parameter type.

- the initial color correction parameter type may include a Tone Reproduction Curves Type, a Conversion LUT Type, an Illuminant Type, and an Input Device Color Gamut Type.

- Table 148 shows an XML encoding syntax of an initial color correction parameter type according to one embodiment.

- Table 149 shows a binary coded syntax of the initial color correction parameter type, according to an embodiment.

- Table 150 shows the Binary Representation Syntax of the Tone Reproduction Curves Type according to an embodiment.

- Table 151 shows a binary representation syntax of the transform LUT type according to an embodiment.

- Table 152 shows the Binary Representation Syntax of the Illuminant Type according to one embodiment.

- Table 153 shows a binary representation syntax of the Input Device Color Gamut Type according to an embodiment.

- Table 154 shows initial color correction parameters, according to one embodiment.

- Table 155 shows Descriptor Components Semantics of the Tone Reproduction Curves Type according to one embodiment.

- Table 158 illustrates Descriptor Components Semantics of the Input Device Color Gamut Type according to an embodiment.

- FIG. 7 is a diagram showing the configuration of a sensory media playback device according to an embodiment of the present invention.

- the sensory media playback device 710 includes an extracting unit 711, an encoding unit 712, and a transmitting unit 713.

- the extractor 711 extracts sensory effect information from the content.

- the sensory device 730 may implement an effect event corresponding to sensory effect information.

- the encoding unit 712 encodes the extracted sensory effect information into sensory effect metadata. That is, the encoder 712 may generate sensory effect metadata by encoding sensory effect information.

- the encoding unit 712 may include at least one of an XML encoder and a binary encoder.

- the transmitter 713 may transmit the encoded sensory effect metadata to the sensory effect control apparatus 720.

- sensory effect metadata may include a SEM base type (SEM Base Type, Sensory Effect Metadata Base Type) that represents basic sensory effect information.


- Table 159 shows an XML Representation Syntax for the SEM Basic Type according to an embodiment.

- binary encoding for sensory effect metadata may include a type of metadata, a type of individual metadata, and a data field type (Data Field Type) of the individual metadata.

- Table 160-2 shows a basic structure of binary encoding according to an embodiment.

- the type of metadata may include metadata about sensory device command information (i.e., sensory device command metadata), sensory effect metadata, and the like.

- Table 160-3 shows binary encoding for the type of metadata.

- the type of metadata includes sensory effect metadata (SEM), interaction information metadata, command information metadata, virtual world object characteristics (Virtual World Object Characteristics), and reserved metadata.

- the type of individual metadata may be a selection for a light effect, a flash effect, and the like.

- Table 160-4 lists the identifiers (IDs) for the Effect Types of the individual metadata types.
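
The basic structure described above (a metadata type, an individual effect type identifier, then the data fields) can be sketched as a one-byte header followed by a payload. The numeric codes in `MetadataType`, the 3-bit/5-bit split, and the function names are assumptions chosen for illustration; the actual codes are those of Tables 160-3 and 160-4, which are not reproduced in this text.

```python
from enum import IntEnum
from typing import Tuple


class MetadataType(IntEnum):
    # Hypothetical code values for the metadata types named in the text.
    SEM = 0
    INTERACTION = 1
    COMMAND = 2
    VIRTUAL_WORLD_OBJECT = 3


def pack_frame(metadata_type: MetadataType, effect_id: int, data: bytes) -> bytes:
    """Prefix the data fields with metadata type (3 bits) and effect ID (5 bits)."""
    if not 0 <= effect_id < 32:
        raise ValueError("effect_id must fit in 5 bits")
    header = (int(metadata_type) << 5) | effect_id
    return bytes([header]) + data


def unpack_frame(frame: bytes) -> Tuple[MetadataType, int, bytes]:
    """Split a frame back into metadata type, effect ID, and data fields."""
    header = frame[0]
    return MetadataType(header >> 5), header & 0x1F, frame[1:]


frame = pack_frame(MetadataType.COMMAND, 3, b"\x01")
```

In this sketch, header values whose upper three bits fall outside the four named types raise `ValueError` on decoding, which stands in for the reserved metadata range.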

- Table 161 shows descriptor components semantics for the SEM basic type according to one embodiment.

- the sensory effect metadata may include SEM Base Attributes indicating a group of common attributes (Common Attributes) of sensory effect information.

- Table 162 shows the XML Representation Syntax for SEM basic attributes according to an embodiment.

- Table 163 shows binary encoding syntax for SEM basic attributes according to an embodiment.

- Table 164 shows the Descriptor Components Semantics for SEM basic attributes according to an embodiment.

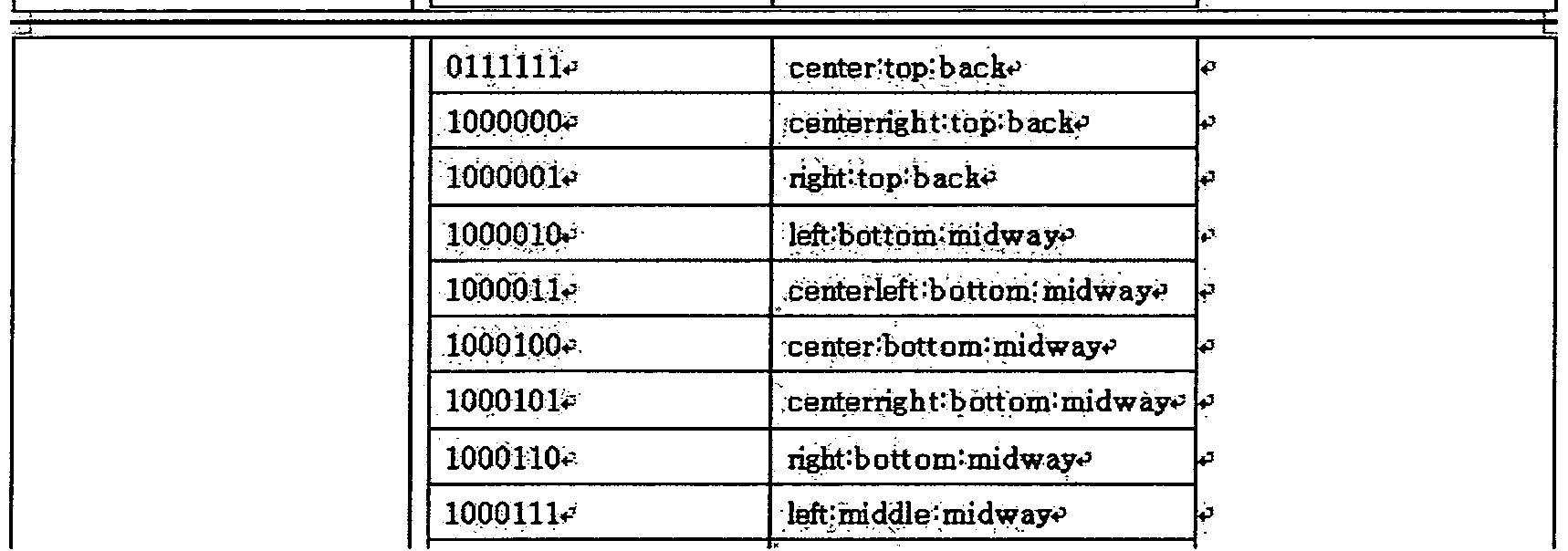

- EXAMPLE 2 The adaptation VR processes the individual effects of a group of effects according to their priority in descending order due to its limited capabilities. That is, effects with low priority might get lost.

- location: Describes the location from where the effect is expected to be received from the user's perspective, according to the x-, y-, and z-axis depicted in

- the terms from the LocationCS shall be concatenated with the ":" sign in order of the x-, y-, and z-axis to uniquely define a location within the three-dimensional space.

- a wild card mechanism may be employed using the "*" sign.

- EXAMPLE 4 urn:mpeg:mpeg-v:01-SI-LocationCS-NS:center:middle:front defines the location as follows: center on the x-axis, middle on the y-axis, and front on the z-axis. That is, it describes all effects at the center, middle, front side of the user.

- axis. That is, it describes all effects at the back of the user.
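
The concatenation rule and the wild card mechanism for locations can be sketched as below. The URN prefix is reconstructed from the garbled example in this text and should be treated as an assumption, as should the function names; the term vocabulary of LocationCS is not checked here.

```python
# Assumed prefix, reconstructed from the example in the text.
PREFIX = "urn:mpeg:mpeg-v:01-SI-LocationCS-NS"


def make_location(x: str = "*", y: str = "*", z: str = "*") -> str:
    """Concatenate the x-, y-, and z-axis terms with ':' after the prefix."""
    return ":".join([PREFIX, x, y, z])


def split_location(urn: str) -> list:
    """Return the three axis terms of a location URN."""
    if not urn.startswith(PREFIX + ":"):
        raise ValueError("not a LocationCS URN")
    terms = urn[len(PREFIX) + 1:].split(":")
    if len(terms) != 3:
        raise ValueError("expected exactly three axis terms")
    return terms


def location_matches(pattern: str, location: str) -> bool:
    """True if every axis term of the pattern is '*' or equals the location's term."""
    return all(
        p == "*" or p == term
        for p, term in zip(split_location(pattern), split_location(location))
    )


front_center = make_location("center", "middle", "front")
anything_at_back = make_location(z="back")
```

A pattern such as `anything_at_back` leaves the x- and y-axis terms as wild cards, so it matches every location whose z-axis term is `back`.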

- Table 165 shows Descriptor Components Semantics for SEM Adaptability Attributes, according to one embodiment.

- Table 166 shows an XML Representation Syntax for an si Attribute List, according to an embodiment.


- Table 167 shows the Binary Representation Syntax for an si attribute list according to an embodiment.

- Table 168 shows Descriptor Components Semantics for Description Metadata Type according to one embodiment.

- anchorElementFlag: This field, which is only present in the binary representation, indicates the presence of the anchorElement attribute. If it is 1 then the anchorElement attribute is present, otherwise the anchorElement attribute is not present.

- the encodeAsRAP attribute is present, otherwise the encodeAsRAP attribute is not present.

- puModeFlag: This field, which is only present in the binary representation, indicates the presence of the puMode attribute. If it is 1 then the puMode attribute is present, otherwise the puMode attribute is not present.

- timeScaleFlag: This field, which is only present in the binary representation, indicates the presence of the timeScale attribute. If it is 1 then the timeScale attribute is present, otherwise the timeScale attribute is not present.
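
The presence flags described above follow a common pattern: a flag in the binary representation says whether an optional attribute follows. The sketch below shows that pattern for timeScale only. Storing the flag in a whole byte and the value as a 32-bit unsigned integer are simplifying assumptions; the real field widths are given by the binary representation syntax tables.

```python
import struct
from typing import Optional


def encode_time_scale(time_scale: Optional[int]) -> bytes:
    """Write the presence flag, then the attribute only if it is present."""
    if time_scale is None:
        return b"\x00"  # timeScaleFlag = 0: attribute not present
    return b"\x01" + struct.pack(">I", time_scale)  # flag = 1, then value


def decode_time_scale(data: bytes) -> Optional[int]:
    """Read the presence flag and return the attribute, or None if absent."""
    if data[0] == 1:
        (time_scale,) = struct.unpack(">I", data[1:5])
        return time_scale
    return None


encoded = encode_time_scale(90000)
```

The same read-flag-then-maybe-read-value step would apply to anchorElementFlag and puModeFlag, each guarding its own attribute.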

- Table 169 shows the XML Representation Syntax for the SEM Root Element according to an embodiment.

- Table 170 shows a binary coded syntax for SEM root elements according to an embodiment.

- Table 171 shows descriptor components semantics for SEM according to an embodiment.

- EXAMPLE si:pts describes the point in time when the associated

- Table 172 shows the XML Representation Syntax for Description Metadata, according to an embodiment.

- Table 173 illustrates a binary representation syntax for description metadata according to an embodiment.

- Table 174 shows the Descriptor Components Semantics for the description metadata type according to another embodiment.

- Table 175 shows an XML Representation Syntax for Declarations Type, according to an embodiment.

- Table 176 shows a binary representation syntax for a declaration type according to an embodiment.

- Table 177 shows descriptor components semantics for the declaration type according to another embodiment.

- Table 178 illustrates the XML Representation Syntax for an Effect Group Type according to an embodiment.

- Table 179 shows a binary representation syntax for an effect group type according to an embodiment.

- Table 180 shows the Descriptor Components Semantics for the effect type according to another embodiment.

- Table 181 shows the XML Representation Syntax for an Effect Base Type according to an embodiment.

- Table 182 shows a binary representation syntax for an effect basic type according to an embodiment.

- Table 183 shows descriptor components semantics for the effect base type according to an embodiment.

- Table 184 illustrates descriptor components semantics for the supplemental information type (Supplemental Information Type) according to an embodiment.

- Table 185 illustrates XML Representation Syntax for a Reference Effect Type according to an embodiment.

- Table 186 shows the Binary Representation Syntax for a reference effect type according to an embodiment.

- Table 187 illustrates descriptor components semantics for a reference effect type according to an embodiment.

- Table 188 shows the XML Representation Syntax for a Parameter Base Type according to an embodiment.

- Table 189 shows a binary representation syntax for a parameter basic type according to an embodiment.

- Table 190 shows descriptor components semantics for a parameter basic type according to an embodiment.

- Table 191 shows the XML Representation Syntax for the Color Correction Parameter Type according to one embodiment.

- Table 192 shows a binary representation syntax for color correction parameter types according to an embodiment.

- Table 193 shows descriptor components semantics for color correction parameter types according to one embodiment.

- the color correction parameter type may include a Tone Reproduction Curves Type, a Conversion LUT Type, an Illuminant Type, and an Input Device Color Gamut Type.

- Table 194 shows the Descriptor Components Semantics for the Tone Reproduction Curves Type according to an embodiment.

- Table 195 shows descriptor components semantics for a transform LUT type according to one embodiment.

- Table 196 shows descriptor components semantics for the Illuminant Type according to one embodiment.

- Table 197 shows descriptor components semantics for the Input Device Color Gamut Type according to one embodiment.

- Table 198 shows an XML Representation Syntax for sensory effect information implemented in a sensor of a light type according to an embodiment.

- Table 199 shows a binary representation syntax for sensory effect information implemented in a sensory device of a light type according to an embodiment.

- Table 200 shows descriptor components semantics for sensory effect information implemented in a sensory device of a light type according to an embodiment.

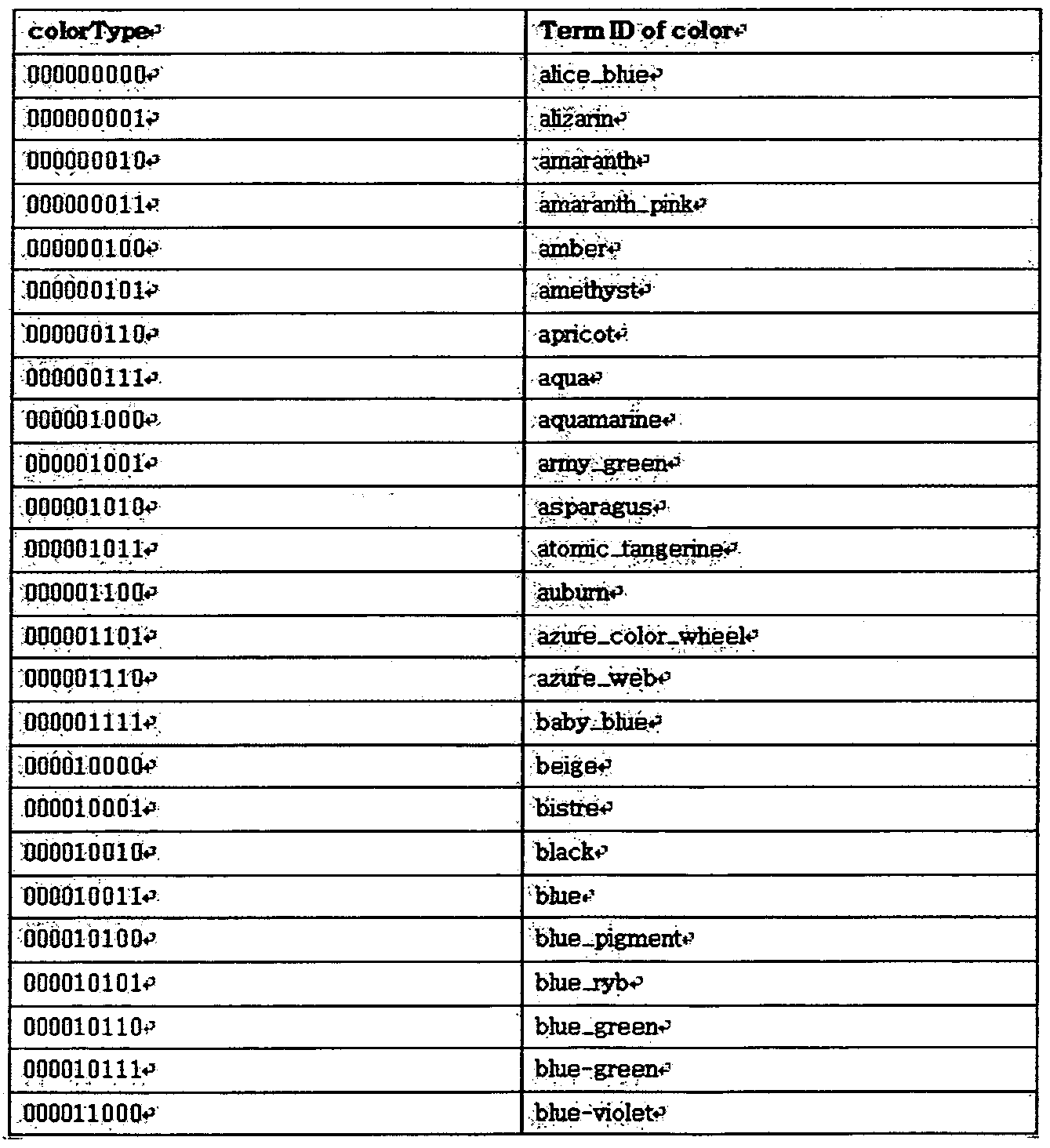

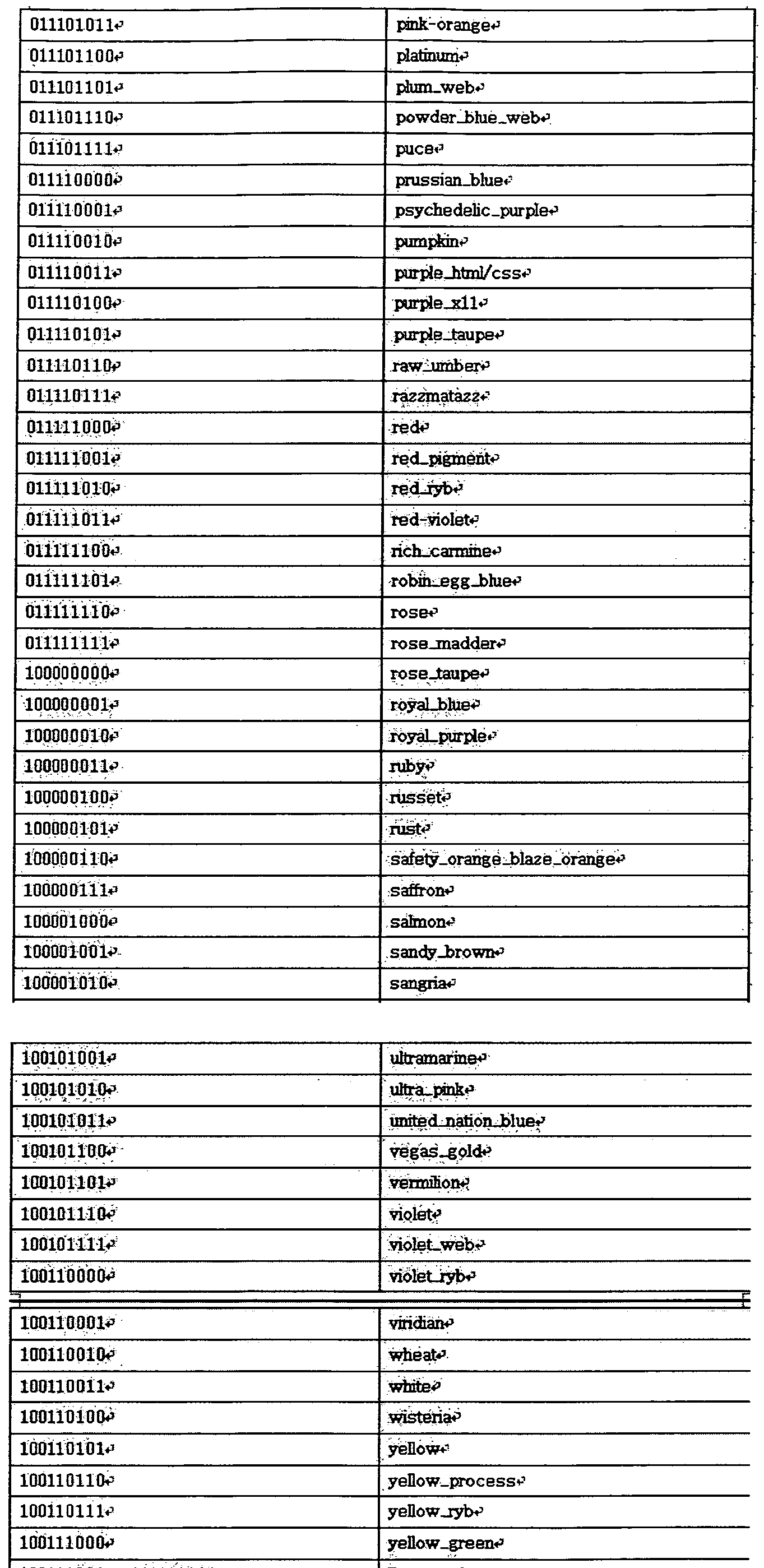

- Table 201 shows descriptor components semantics for a color type according to an embodiment.

- Table 202 shows descriptor components semantics for a color RGB type according to one embodiment.

- Table 203 shows an XML Representation Syntax for sensory effect information implemented in a flash-type sensory device according to an embodiment.

- Table 204 shows a binary representation syntax for sensory effect information implemented in a flash-type sensory device, according to an embodiment.

- Table 204 shows descriptor components semantics for sensory effect information implemented in a flash-type sensory device according to an embodiment.

- The sensory device 730 may further include a temperature type (Temperature Type).

- Table 205 shows an XML Representation Syntax for sensory effect information implemented in a sensory device of a temperature type according to an embodiment.

- Table 206 shows a binary representation syntax for sensory effect information implemented in a sensory device of a temperature type according to an embodiment.

- Table 207 shows descriptor components semantics for sensory effect information implemented in a sensory device of a temperature type according to an embodiment.

- Table 208 shows an XML Representation Syntax for sensory effect information implemented in a wind-type sensory apparatus, according to an embodiment.

- Table 209 shows a binary representation syntax for sensory effect information implemented in a wind-type sensory apparatus, according to an embodiment.

Priority Applications (4)

| Application Number | Priority Date | Filing Date | Title |
|---|---|---|---|
| EP11769010.7A EP2560395A4 (en) | 2010-04-12 | 2011-04-06 | System and method for processing sensory effects |
| US13/641,082 US20130103703A1 (en) | 2010-04-12 | 2011-04-06 | System and method for processing sensory effects |
| JP2013504806A JP2013538469A (ja) | 2010-04-12 | 2011-04-06 | System and method for processing sensory effects |
| CN201180018832.0A CN102893612B (zh) | 2010-04-12 | 2011-04-06 | System and method for processing sensory effects |

Applications Claiming Priority (2)

| Application Number | Priority Date | Filing Date | Title |
|---|---|---|---|
| KR10-2010-0033297 | 2010-04-12 | | |
| KR1020100033297A KR101746453B1 (ko) | 2010-04-12 | 2010-04-12 | System and method for processing sensory effects |

Publications (2)

| Publication Number | Publication Date |
|---|---|
| WO2011129544A2 (ko) | 2011-10-20 |
| WO2011129544A3 (ko) | 2012-01-12 |

Family

ID=44799128

Family Applications (1)

| Application Number | Title | Priority Date | Filing Date |
|---|---|---|---|
| PCT/KR2011/002409 WO2011129544A2 (ko) | System and method for processing sensory effects | 2010-04-12 | 2011-04-06 |

Country Status (6)

| Country | Link |
|---|---|
| US (1) | US20130103703A1 (ko) |
| EP (1) | EP2560395A4 (ko) |
| JP (1) | JP2013538469A (ko) |
| KR (1) | KR101746453B1 (ko) |
| CN (1) | CN102893612B (ko) |
| WO (1) | WO2011129544A2 (ko) |

2010

- 2010-04-12 KR KR1020100033297A patent/KR101746453B1/ko active IP Right Grant

2011

- 2011-04-06 EP EP11769010.7A patent/EP2560395A4/en not active Ceased
- 2011-04-06 WO PCT/KR2011/002409 patent/WO2011129544A2/ko active Application Filing
- 2011-04-06 US US13/641,082 patent/US20130103703A1/en not active Abandoned
- 2011-04-06 JP JP2013504806A patent/JP2013538469A/ja active Pending
- 2011-04-06 CN CN201180018832.0A patent/CN102893612B/zh not active Expired - Fee Related

Also Published As

| Publication number | Publication date |
|---|---|
| JP2013538469A (ja) | 2013-10-10 |
| US20130103703A1 (en) | 2013-04-25 |
| EP2560395A2 (en) | 2013-02-20 |
| KR101746453B1 (ko) | 2017-06-13 |
| KR20110113942A (ko) | 2011-10-19 |
| CN102893612B (zh) | 2015-11-25 |
| EP2560395A4 (en) | 2015-04-15 |
| WO2011129544A3 (ko) | 2012-01-12 |
| CN102893612A (zh) | 2013-01-23 |
