WO2024252647A1 - Image recognition model management device and image recognition model management system - Google Patents

- Publication number

- WO2024252647A1 (PCT/JP2023/021444)

- Authority

- WO

- WIPO (PCT)

- Prior art keywords

- image recognition

- recognition model

- model

- external environment

- management server

- Prior art date

- Legal status (The legal status is an assumption and is not a legal conclusion. Google has not performed a legal analysis and makes no representation as to the accuracy of the status listed.)

- Pending

Classifications

- G06V20/56—Context or environment of the image exterior to a vehicle by using sensors mounted on the vehicle

- G06V10/87—Arrangements for image or video recognition or understanding using pattern recognition or machine learning using selection of the recognition techniques, e.g. of a classifier in a multiple classifier system

- G06V20/58—Recognition of moving objects or obstacles, e.g. vehicles or pedestrians; Recognition of traffic objects, e.g. traffic signs, traffic lights or roads

- G08G1/16—Anti-collision systems

Definitions

- the present invention relates to an image recognition model management device and an image recognition model management system.

- Patent Document 1 describes a technology that addresses this issue.

- Paragraph 0011 of Patent Document 1 states, "Server 200 is a cloud server for providing cloud services to terminals, and in this embodiment, a recognition model suitable for recognizing the surrounding environment of mobile body Ve is selected from multiple recognition models stored in cloud 201 and supplied to mobile body Ve. Mobile body Ve uses the recognition model supplied from server 200 to recognize objects in the surrounding environment and perform ADAS and AD."

- the present invention aims to provide an image recognition model management device and an image recognition model management system that can select an image recognition model from multiple image recognition models according to the external environment, which is the vehicle's surroundings, and transfer it to an external environment recognition device, even when communication with a management server is not possible.

- the image recognition model management device of the present invention is, for example, an image recognition model management device that transfers an image recognition model transmitted from a management server to an external environment recognition device, and includes a storage unit that stores a preset first image recognition model and a second image recognition model transmitted from the management server at a predetermined frequency or more, and a model processing unit that selects the first image recognition model or the second image recognition model and transfers it to the external environment recognition device, and the model processing unit switches the image recognition model to be transferred to the external environment recognition device based on external environment recognition data that represents the results of image recognition by the external environment recognition device.

- the image recognition model management system of the present invention is, for example, an image recognition model management system including a management server that transmits an image recognition model corresponding to the external environment of the vehicle, and an image recognition model management device that transfers the image recognition model transmitted from the management server to an external environment recognition device.

- the management server includes a model database in which an image recognition model for each piece of external environment information of the vehicle is stored, an external environment analysis unit that generates external environment information of the vehicle based on current position information of the vehicle, a model extraction unit that extracts, from the model database, the image recognition model corresponding to the external environment information generated from the current position information, and a transmission unit that transmits the extracted image recognition model to the image recognition model management device.

- the image recognition model management device includes a storage unit that stores a preset first image recognition model and a second image recognition model transmitted from the management server at a predetermined frequency or more, and a model processing unit that selects the first image recognition model or the second image recognition model and transfers it to the external environment recognition device.

- the model processing unit switches the image recognition model to be transferred to the external environment recognition device based on external environment recognition data that represents the result of image recognition by the external environment recognition device.

- the present invention provides an image recognition model management device and an image recognition model management system that can select an image recognition model from a plurality of image recognition models according to the vehicle's external environment and transfer it to an external environment recognition device even when communication with a management server is not possible.

- FIG. 1 is a diagram illustrating an example of the overall configuration of an image recognition model management system according to a first embodiment.

- FIG. 2 shows an example of a flowchart of a process performed by the image recognition model management system.

- FIG. 3 is a diagram illustrating an example of a flowchart of the selection process of an image recognition model.

- FIG. 4 is a diagram for explaining the extraction of a frequent model.

- FIG. 5 is a diagram illustrating an example of a flowchart of the evaluation process for the image recognition model in use.

- FIG. 6 is a diagram illustrating an example of an external environment situation with a good recognition rate.

- FIG. 7 is a diagram illustrating an example of an external environment situation with a poor recognition rate.

- FIG. 8A is a table showing an example of weather classification.

- FIG. 8B is a table showing an example of classification of driving time zones.

- FIG. 8C is a table showing an example of classification of road shapes.

- FIG. 9 shows examples of external environment situation patterns.

- a further figure illustrates an example of the overall configuration of an image recognition model management system according to a second embodiment.

- a further figure is a table showing an example of evaluation data.

- FIG. 1 is a diagram showing an example of the overall configuration of an image recognition model management system according to a first embodiment.

- the image recognition model management system includes a vehicle 1 and a management server 100.

- the management server 100 is a device that determines whether or not it is necessary to switch the image recognition model used by the external environment recognition device 10 that performs image recognition based on the current position information of the vehicle 1, and transmits the image recognition model to the vehicle when it is determined that it is necessary to switch to the image recognition model held by the management server 100. That is, the management server 100 is a device that transmits an image recognition model corresponding to the external environment of the vehicle to the image recognition model management device.

- the management server 100 includes an external environment analysis unit 110, a model extraction unit 120, a model database 121, a management server side receiving unit 130, and a management server side transmitting unit 131.

- the management server side receiving unit 130 acquires the current location information of the vehicle to which the image recognition model is to be transmitted.

- the current location information includes the current location of the vehicle 1 as well as the current time.

- the external environment analysis unit 110 collects weather information using a public communication network such as the Internet, and generates weather information 111 (sunny, cloudy, rain, snow, thunderstorm, etc.) for the current position of the vehicle 1.

- the environment analysis unit 110 may include, as a means for collecting the weather information 111, a wide area network (WAN), a wireless communication network such as a telephone communication network, or other communication networks.

- the external environment analysis unit 110 selects, from among multiple preset driving time zones, the driving time zone that includes the current time, and outputs it as driving time zone information 112.

- the external environment analysis unit 110 generates road shape information 113 around the current location of the vehicle 1 based on map information and the current location notified by the vehicle.

- the map information may be stored in advance in a non-volatile storage area by the management server 100, or may be obtained from a public communication network such as the Internet.

- the external environment analysis unit 110 transmits the generated weather information 111, driving time zone information 112, and road shape information 113 to the model extraction unit 120.

- the weather information 111, driving time zone information 112, and road shape information 113 are examples of external environment information of the vehicle 1, and are not limited to these.
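
The generation of external environment information described above can be sketched as follows. This is a minimal illustration, not the implementation of the external environment analysis unit 110: the function and class names are hypothetical, the label "evening" is an assumed name for the 16:00 to 19:00 zone, and the hour boundaries are taken from the examples given later in the text (daytime 8:00 to 16:00, night 19:00 to 8:00).

```python
from dataclasses import dataclass
from datetime import time


def classify_time_zone(now: time) -> str:
    """Map the current time to one of the preset driving time zones.

    Boundaries follow the examples in the text; the labels are hypothetical.
    """
    if time(8, 0) <= now < time(16, 0):
        return "daytime"
    if time(16, 0) <= now < time(19, 0):
        return "evening"
    return "night"  # 19:00 to 8:00


@dataclass(frozen=True)
class ExternalEnvironment:
    """Bundle of weather information 111, driving time zone information 112,
    and road shape information 113."""
    weather: str
    time_zone: str
    road_shape: str


def analyze(weather: str, now: time, road_shape: str) -> ExternalEnvironment:
    # Weather and road shape are assumed to be resolved elsewhere
    # (weather service, map lookup); only the time zone is derived here.
    return ExternalEnvironment(weather, classify_time_zone(now), road_shape)
```

For example, `analyze("rain", time(20, 30), "residential")` yields an environment whose `time_zone` is `"night"`.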

- the model extraction unit 120 extracts an image recognition model suitable for the external environment of the current position of the vehicle 1 from the model database 121 based on the weather information 111, driving time zone information 112, and road shape information 113 transmitted by the external environment analysis unit 110.

- the model database 121 stores a plurality of image recognition models for recognizing the surrounding environment of the current position.

- the stored image recognition models are a group of image recognition models trained according to the weather, the driving time zone, and the road shape.

- the management server-side transmission unit 131 transmits the image recognition model extracted by the model extraction unit 120 to the vehicle 1.

- the weather information 111, the driving time zone information 112, and the road shape information 113 used in extracting the image recognition model are linked to the image recognition model and transmitted.

- the management server 100 transmits an image recognition model according to external environment information such as weather information 111, driving time zone information 112, and road shape information 113, so that the external environment recognition device 10 can perform image recognition using an image recognition model suitable for the weather, the day and night environment around the vehicle, the driving environment, etc.
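
One way to picture the extraction from the model database 121 is a lookup keyed by the combination of weather, driving time zone, and road shape, with the key returned alongside the model so that the vehicle can store the linked information. The sketch below assumes this keyed-lookup design; the key values, model identifiers, and function names are all hypothetical.

```python
from typing import NamedTuple, Optional


class EnvKey(NamedTuple):
    weather: str      # weather information 111
    time_zone: str    # driving time zone information 112
    road_shape: str   # road shape information 113


class TaggedModel(NamedTuple):
    model_id: str     # stands in for the model payload
    env: EnvKey       # environment information linked to the model


# Hypothetical contents of the model database 121.
MODEL_DB = {
    EnvKey("rain", "night", "residential"): "model_rain_night_residential",
    EnvKey("snow", "daytime", "general"): "model_snow_day_general",
}


def extract_model(env: EnvKey) -> Optional[TaggedModel]:
    """Return the model trained for this environment, tagged with the
    environment used to extract it, or None if no such model exists."""
    model_id = MODEL_DB.get(env)
    if model_id is None:
        return None
    return TaggedModel(model_id, env)
```

Returning `None` for an unknown combination is a design choice of this sketch; the text does not state what the server does when no matching model is stored.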

- the vehicle 1 includes an external environment recognition device 10, a Global Navigation Satellite System (GNSS) receiver 20, a vehicle position receiving unit 21, a vehicle side transmitting unit 22, a vehicle side receiving unit 23, and an image recognition model management device 30.

- the external environment recognition device 10 performs image recognition using an image recognition model on the observation results of the external environment obtained by a camera sensor that uses an image pickup element mounted on the vehicle 1. It detects moving objects existing around the vehicle 1 (pedestrians, bicycles, motorcycles, four-wheeled vehicles, buses, trucks, etc.), road structures (pylons, construction signs, road signs, road markings, traffic lights, etc.), and road conditions (road width, road surface condition, etc.), and generates external environment recognition data.

- the external environment recognition device 10 then transmits the external environment recognition data to the model processing unit 32 of the image recognition model management device 30.

- the GNSS receiver 20 receives navigation signals transmitted from positioning satellites that constitute the GNSS.

- the GNSS receiver 20 generates observation data based on the navigation signals and carrier waves of the navigation signals.

- the GNSS receiver 20 sequentially outputs the observation data to the vehicle position receiving unit 21.

- the vehicle position receiving unit 21 generates current position information including the current position and current time based on the observation data output from the GNSS receiver 20, and sequentially outputs the current position information of the vehicle 1 to the management server 100 via the vehicle-side transmitting unit 22. Since the output of the current position information of the vehicle 1 to the management server 100 is related to the transmission timing of the image recognition model, the cycle of outputting the current position information may be shortened to increase the update frequency of the image recognition model, or the cycle may be lengthened to decrease the update frequency of the image recognition model.

- the image recognition model management device 30 includes a microcomputer.

- the microcomputer is a processor (e.g., a CPU) that executes a program stored in a storage device.

- the microcomputer executes a predetermined program to operate as a functional unit that provides various functions.

- the storage device includes a non-volatile storage area and a volatile storage area.

- the non-volatile storage area includes a program area that stores a program executed by the microcomputer, and a data area that temporarily stores data used by the microcomputer when the program is executed.

- the volatile storage area stores data used by the microcomputer when the program is executed.

- the communication interface connects to other electronic control devices via a network such as CAN or Ethernet.

- the image recognition model management device 30 of this embodiment includes a storage unit 31 (e.g., the storage device described above) and a model processing unit 32 (e.g., the processor described above).

- the storage unit 31 stores a default model m1 (first image recognition model), a frequent model m2 (second image recognition model), and a received model m3 (third image recognition model).

- the default model m1 is an image recognition model that is set in advance in the storage unit 31, and is stored in a non-volatile storage area as the basic image recognition model used by the external environment recognition device 10. This image recognition model is used immediately after the ignition switch is turned on.

- the reception model m3 is an image recognition model received by the vehicle-side receiving unit 23 from the management server 100.

- the vehicle-side receiving unit 23 stores the received image recognition model as the reception model m3 in the storage unit 31.

- the weather information 111, driving time zone information 112, and road shape information 113 linked to the reception model m3 are also stored in the storage unit 31.

- once the external environment changes, the reception model m3 may no longer conform to the current external environment. Taking this into consideration, the reception model m3, as well as the weather information 111, driving time zone information 112, and road shape information 113 linked to the reception model m3, are stored in a volatile storage area.

- the frequent model m2 is a received model m3 for a driving time period or road shape for which an image recognition model is frequently transmitted from the management server 100, and is stored in a non-volatile storage area. Details of determining the frequency will be described later.

- the frequent model m2 stored in the non-volatile storage area can be erased by the user. It may also be saved as a preset memory and selected by the user.

- in this example, one each of the default model m1, the frequent model m2, and the reception model m3 is stored in the storage unit 31, but this is not limiting.

- more than one of any of the default model m1, the frequent model m2, and the reception model m3 may be stored.

- in some cases, the reception model m3 is not stored in the storage unit 31.
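
The contents of the storage unit 31 can be pictured with the small sketch below. It assumes that the volatile storage area loses its contents when power is removed, which is the general behavior of volatile memory rather than something the text spells out; the class and method names are hypothetical, and strings stand in for model payloads.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class StorageUnit:
    """Hypothetical model of storage unit 31."""
    default_model: str                      # m1: preset, non-volatile
    frequent_model: Optional[str] = None    # m2: non-volatile, user-erasable
    reception_model: Optional[str] = None   # m3: volatile

    def power_cycle(self) -> None:
        # Assumption: only the volatile area (m3) is lost on power-off.
        self.reception_model = None

    def erase_frequent(self) -> None:
        # The text states that the user can erase the frequent model m2.
        self.frequent_model = None
```

After `power_cycle()`, the default model m1 and the frequent model m2 remain available, which is what lets the vehicle choose a model without contacting the management server 100.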

- the model processing unit 32 selects an image recognition model to be transferred to the external environment recognition device 10 from the image recognition models stored in the storage unit 31, and transfers it to the external environment recognition device 10.

- the model processing unit 32 stores a received model m3 for a driving time zone or road shape in which an image recognition model is frequently transmitted from the management server 100 as a frequent model m2 in the storage unit 31. Furthermore, the model processing unit 32 evaluates the image recognition model used by the external environment recognition device 10 based on the external environment recognition data from the external environment recognition device 10. Specific processing performed by the model processing unit 32 will be described later with reference to FIGS. 3 to 5.

- <Flowchart> FIG. 2 shows an example of a flowchart of the process executed by the image recognition model management system. When the ignition switch is turned on, the process proceeds to step S100.

- In step S100, the vehicle position receiving unit 21 of the vehicle 1 generates current position information based on the observation data of the GNSS receiver 20.

- In step S101, the vehicle 1 determines whether wireless communication has been established with the management server 100.

- the establishment of wireless communication is determined, for example, by sending a communication request from the vehicle 1 to the management server 100 and checking a response from the management server 100. If wireless communication has been established, the process proceeds to step S102. If wireless communication has not been established, the process proceeds to step S108.

- In step S102, the vehicle 1 transmits the current position information generated by the vehicle position receiving unit 21 to the management server 100.

- In step S103, the external environment analysis unit 110 of the management server 100 generates weather information 111, driving time zone information 112, and road shape information 113 based on the current position information received from the vehicle 1.

- In step S104, the model extraction unit 120 of the management server 100 determines whether or not it is necessary to switch the image recognition model. If it is determined that it is necessary to switch the image recognition model, the process proceeds to step S105, and if it is determined that it is not necessary to switch the image recognition model, the process proceeds to step S108.

- In step S105, the model extraction unit 120 of the management server 100 extracts an image recognition model to be sent to the vehicle 1 from the model database 121 based on the weather information 111, the driving time zone information 112, and the road shape information 113.

- In step S106, the management server side transmission unit 131 of the management server 100 transmits the image recognition model extracted in step S105 to the vehicle 1.

- In step S107, the vehicle 1 receives the image recognition model sent from the management server 100.

- In step S108, the model processing unit 32 of the vehicle 1 selects an image recognition model to be transferred to the external environment recognition device 10 from the image recognition models stored in the storage unit 31.

- the model processing unit 32 also stores in the storage unit 31, as a frequent model m2, a reception model m3 for a driving time zone or road shape for which image recognition models are frequently transmitted from the management server 100.

- the model processing unit 32 also evaluates the image recognition model used by the external environment recognition device 10 based on the external environment recognition data from the external environment recognition device 10.

- In step S109, the model processing unit 32 of the vehicle 1 transfers the image recognition model selected in step S108 to the external environment recognition device 10.

- In step S110, the external environment recognition device 10 of the vehicle 1 applies the image recognition model transferred in step S109.

- Fig. 3 is a diagram showing an example of a flowchart of the image recognition model selection process.

- In step S200, the vehicle 1 determines whether wireless communication has been established with the management server 100. The determination of whether wireless communication has been established is the same as in step S101 in FIG. 2. If wireless communication has been established, the process proceeds to step S201, and if wireless communication has not been established, the process proceeds to step S203.

- In step S201, the model processing unit 32 checks whether an image recognition model has been transmitted from the management server 100 to the vehicle 1. Specifically, the model processing unit 32 checks whether a reception model m3 is stored in the storage unit 31. If the reception model m3 is stored in the storage unit 31, an image recognition model suitable for the external environment is available, and the process proceeds to step S202. If the reception model m3 is not stored in the storage unit 31, no image recognition model suitable for the external environment is available, and the process proceeds to step S203.

- In step S202, the model processing unit 32 selects the reception model m3 as the image recognition model to be transferred to the external environment recognition device 10. If the reception model m3 has already been selected, it continues to be selected. In this case, the image recognition model is not switched.

- In step S203, the model processing unit 32 checks whether the frequent model m2 is stored in the storage unit 31. If the frequent model m2 is stored, the process proceeds to step S204, and if the frequent model m2 is not stored, the process proceeds to step S205.

- In step S204, the model processing unit 32 selects the frequent model m2 as the image recognition model to be transferred to the external environment recognition device 10. If the frequent model m2 has already been selected, it continues to be selected. In this case, the image recognition model is not switched.

- In step S205, the model processing unit 32 selects the default model m1 as the image recognition model to be transferred to the external environment recognition device 10. If the default model m1 has already been selected, it continues to be selected. In this case, the image recognition model is not switched.
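
The selection process of steps S200 to S205 amounts to a fallback chain, which can be sketched as follows. The function name and the use of plain strings to stand for models are assumptions made for illustration; `None` stands for "not stored in the storage unit 31".

```python
from typing import Optional


def select_model(connected: bool,
                 default_m1: str,
                 frequent_m2: Optional[str],
                 reception_m3: Optional[str]) -> str:
    """Pick the model to transfer to the external environment recognition device."""
    # S200: is wireless communication with the management server established?
    # S201: has a reception model m3 been stored?
    if connected and reception_m3 is not None:
        return reception_m3          # S202
    # S203: is a frequent model m2 stored?
    if frequent_m2 is not None:
        return frequent_m2           # S204
    return default_m1                # S205
```

For example, `select_model(False, "m1", "m2", "m3")` returns `"m2"`: without communication the reception model is skipped even if one is stored, and the frequent model is used instead.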

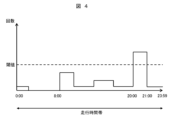

- FIG. 4 shows an example of a case where a frequent model m2 is extracted by focusing on a driving time zone.

- the horizontal axis indicates the driving time zone (from 0:00 to 23:59), and the vertical axis indicates the number of times each driving time zone has been transmitted from the management server 100 in association with an image recognition model.

- the width of each driving time zone is set to 1 hour. In the example of FIG. 4, the number of transmissions of the image recognition model associated with the driving time zone from 20:00 to 21:00 is large, exceeding the threshold value of the number of transmissions (for example, 10 times). Therefore, the reception model m3 associated with the driving time zone from 20:00 to 21:00 is stored in the storage unit 31 as the frequent model m2.

- if the number of transmissions exceeds the threshold for multiple driving time zones, multiple image recognition models may be stored in the storage unit 31 as the frequent model m2.

- alternatively, only the reception model m3 associated with the driving time zone with the highest number of transmissions may be stored as the frequent model m2 in the storage unit 31. The same applies when the road shape is used instead of the driving time zone.

- although the example in FIG. 4 focuses on the number of transmissions, it is also possible to focus on the transmission ratio (the number of transmissions for a driving time zone relative to the total number of transmissions; the threshold in this case is, for example, 50%).
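
The frequency determination of FIG. 4 can be sketched as a simple count per one-hour driving time zone. The function names are hypothetical, each received model is represented only by the starting hour of its linked driving time zone, and treating "at the threshold" as frequent is an assumption (the summary speaks of "a predetermined frequency or more", while the FIG. 4 example speaks of exceeding the threshold).

```python
from collections import Counter
from typing import Iterable, List


def frequent_zones_by_count(sent_hours: Iterable[int],
                            count_threshold: int = 10) -> List[int]:
    """Return the starting hours of time zones whose transmission count
    reaches the threshold (example value from the text: 10 times)."""
    counts = Counter(sent_hours)
    return sorted(h for h, n in counts.items() if n >= count_threshold)


def frequent_zones_by_ratio(sent_hours: Iterable[int],
                            ratio_threshold: float = 0.5) -> List[int]:
    """Variant using the share of all transmissions (example value: 50%)."""
    counts = Counter(sent_hours)
    total = sum(counts.values())
    if total == 0:
        return []
    return sorted(h for h, n in counts.items() if n / total >= ratio_threshold)
```

With twelve transmissions linked to the 20:00 zone and three to the 8:00 zone, both functions return `[20]`, so the reception model linked to 20:00 to 21:00 would be kept as the frequent model m2.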

- FIG. 5 is a diagram showing an example of a flowchart of an evaluation process of an image recognition model used by the external environment recognition device 10.

- the image recognition model used by the external environment recognition device 10 is the one transferred to the external environment recognition device 10 by the model processing unit 32.

- the flowchart shown in FIG. 5 is described as being performed in step S108 in FIG. 2, but is not limited thereto. For example, as a step separate from step S108, a step of evaluating the image recognition model being used and instructing switching of the image recognition model based on the evaluation result may be provided.

- first, the model processing unit 32 acquires external environment recognition data from the external environment recognition device 10.

- the external environment recognition data is the result of image recognition by the external environment recognition device 10, and includes object detection/non-detection information, object type information for detected objects, object recognition rate, etc.

- the model processing unit 32 acquires the external environment recognition data in real time, but may instead acquire external environment recognition data for a predetermined period at a certain cycle. Note that if external environment recognition data cannot be acquired at the time the flowchart shown in FIG. 5 is to be executed, for example because the external environment recognition device 10 is not performing recognition, the flowchart shown in FIG. 5 is not executed.

- In step S301, if the image recognition model used by the external environment recognition device 10 is the default model m1, the model processing unit 32 ends the flowchart in FIG. 5 without evaluating the image recognition model. If the image recognition model used by the external environment recognition device 10 is the frequent model m2 or the reception model m3, the process proceeds to step S302.

- In step S302, the model processing unit 32 evaluates the image recognition model used by the external environment recognition device 10 based on the external environment recognition data, and determines from the evaluation result whether or not to switch the image recognition model in use to the default model m1. Specifically, the process proceeds to step S303 if, according to the external environment recognition data, the number of times an object alternates between being detected and not detected is equal to or greater than a predetermined value (e.g., 5 times), or if the number of times the recognized object type for the same object changes (for example, an object recognized as a regular car changing to a motorcycle, bus, truck, etc.) is equal to or greater than a predetermined value (e.g., 5 times). Otherwise, the process proceeds to step S304.

- In step S303, since the accuracy of the frequent model m2 or the reception model m3 is determined to be poor, the image recognition model being used is switched to the default model m1.

- In step S304, since it is determined that there is no problem with the accuracy of the frequent model m2 or the reception model m3, the image recognition model being used continues to be used.
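
The evaluation in steps S301 to S304 can be sketched as counting how often consecutive recognition results for the same object disagree. This is one possible reading of "repeatedly detected and not detected"; the function names, the per-object sequences passed in, and the string labels are assumptions made for illustration.

```python
from typing import Sequence


def count_changes(seq: Sequence) -> int:
    """Number of positions where a value differs from the previous one."""
    return sum(1 for prev, cur in zip(seq, seq[1:]) if prev != cur)


def should_switch_to_default(model_in_use: str,
                             detected_flags: Sequence[bool],
                             type_labels: Sequence[str],
                             limit: int = 5) -> bool:
    """Return True when the model in use should be replaced by the default model m1."""
    # S301: the default model itself is not evaluated.
    if model_in_use == "m1":
        return False
    # S302: detection flicker or unstable object type for the same object.
    flicker = count_changes(detected_flags)
    type_changes = count_changes(type_labels)
    if flicker >= limit or type_changes >= limit:
        return True    # S303: accuracy judged poor, switch to m1
    return False       # S304: keep using the current model
```

For instance, a detection history of `[True, False, True, False, True, False]` contains five changes, which reaches the example limit of 5 and triggers the switch to the default model m1.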

- <Specific Situation> FIG. 6 shows an example of a situation with a good recognition rate.

- in the example of FIG. 6, the weather is fine, the driving time is daytime (8:00 to 16:00), and the road shape is a general road (urban street) with few obstacles.

- a pedestrian attempting to cross a crosswalk is detected in such an external environment. Since the situation shown in the example is not an external environment that is difficult to recognize, highly accurate recognition can be performed using the basic recognition model, and there is no need to transmit an image recognition model from the management server 100.

- FIG. 7 shows an example of a situation with a poor recognition rate.

- in the example of FIG. 7, the weather is rainy, the driving time is at night (7:00 p.m. to 8:00 a.m.), and the road shape is a general road (residential road) with puddles. A pedestrian is trying to cross the crosswalk in such an external environment.

- the road is narrow and it is raining, and there is a concern that the recognition accuracy of the basic recognition model will decrease for pedestrians holding umbrellas.

- if the basic recognition model is additionally trained for this situation, there is a concern that the training will affect situations that currently have a good recognition rate. Therefore, the deterioration of recognition accuracy is reduced by applying the image recognition model transmitted from the management server 100 instead.

- because the management server 100 analyzes the external environment of the vehicle 1 and transmits an appropriate image recognition model, a uniform effect can be achieved for all vehicles, without variation in the effects of the invention.

- if each vehicle analyzed the external environment on its own, the evaluation could differ for each vehicle, and the recognition accuracy of some vehicles might improve while that of others might deteriorate.

- by having the management server analyze the external environment, a certain effect can be achieved for all vehicles, and the method of analyzing the external environment can also be changed easily.

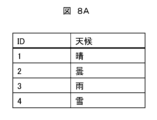

- FIG. 8A is a table showing an example of weather classification.

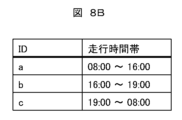

- FIG. 8B is a table showing an example of driving time zone classification.

- FIG. 8C is a table showing an example of road shape classification.

- the model extraction unit 120 of the management server 100 determines the image recognition model to be sent to the vehicle 1 mainly based on a combination of weather, driving time zone, and road shape.

- FIGS. 8A to 8C are just examples, and the number of combination elements may be increased, or the weather and driving time zone may be set in more detail. These classifications change depending on the granularity of the image recognition model to be sent.

- FIG. 9 is a diagram for explaining the determination of whether or not to switch the image recognition model to be transmitted.

- Each row shows a combination of weather information 111, driving time zone information 112, and road shape information 113 output by the external environment analysis unit 110 of the management server 100.

- The fourth column from the left indicates whether to switch the image recognition model to be transmitted to the vehicle 1. Because the weather and driving time zone have a significant effect on the image recognition model, these two elements are often the deciding factors. Changes in the external environment such as puddles after rain, accumulated snow, and mountain roads with many steep gradients also affect the choice of image recognition model.

- The model extraction unit 120 of the management server 100 determines that the image recognition model to be transmitted must be switched when the driving time period is from 7 PM to 8 AM. When the driving time period is from 4 PM to 7 PM, it determines that switching is unnecessary if the weather is sunny and necessary if the weather is cloudy. It also determines that switching is necessary if the weather is rainy or snowy, or if the road is covered with snow. The method of determining whether to switch is not limited to these rules; for example, it may change depending on the season or the area in which the vehicle is traveling.
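The switching rules listed above can be written as a small predicate. This is a sketch only: the function name `needs_switch`, the representation of the time period as an integer hour, the handling of the boundaries at 4 PM, 7 PM, and 8 AM, the order in which the rules are checked, and the default of not switching when no rule applies are all assumptions that the text does not specify.

```python
def needs_switch(hour: int, weather: str, snow_covered_road: bool) -> bool:
    """Decide whether the transmitted image recognition model should be switched.

    hour is the local hour of day (0-23); weather is one of
    "sunny", "cloudy", "rainy", "snowy".
    """
    if not 0 <= hour <= 23:
        raise ValueError("hour must be in the range 0-23")

    # Rainy or snowy weather requires a switch.
    if weather in ("rainy", "snowy"):
        return True

    # A snow-covered road requires a switch.
    if snow_covered_road:
        return True

    # 7 PM to 8 AM: switch.
    if hour >= 19 or hour < 8:
        return True

    # 4 PM to 7 PM: switch when cloudy, keep the current model when sunny.
    if 16 <= hour < 19:
        return weather == "cloudy"

    # Assumption: no switch is needed when none of the rules above applies.
    return False
```

For example, `needs_switch(17, "sunny", False)` returns `False`, while `needs_switch(17, "cloudy", False)` and `needs_switch(20, "sunny", False)` both return `True`.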

- The present invention provides an image recognition model management device and an image recognition model management system that can select an image recognition model from a plurality of image recognition models according to the vehicle's external environment and transfer it to an external environment recognition device even when communication with the management server is not possible.

- FIG. 10 is a diagram showing an example of the overall configuration of an image recognition model management system in Example 2. This system differs from Example 1 in that the image recognition model management device 30 includes a feedback unit 33. The following mainly describes the differences from Example 1.

- The feedback unit 33 generates evaluation data 114 from the evaluation results of the model processing unit 32, in a form that the external environment analysis unit 110 of the management server 100 can analyze.

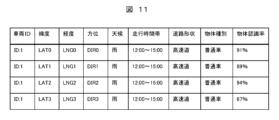

- FIG. 11 is a table showing an example of evaluation data.

- The vehicle ID is unique to each vehicle; no two vehicles share an ID.

- The latitude, longitude, and direction are stored as information acquired from the GNSS receiver 20 of the vehicle 1.

- The weather, driving time period, and road shape are based on information sent from the management server 100.

- The object type and object recognition rate are stored as the evaluation results of the model processing unit 32.
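One record of the evaluation data described above might be modeled as follows. This is an illustrative sketch: the field names follow the columns named in the text, but the class `EvaluationRecord`, the helper `to_payload`, the use of JSON, the field types, the unit of `direction`, and the 0 to 1 range for the recognition rate are assumptions, since FIG. 11 is not reproduced here.

```python
import json
from dataclasses import asdict, dataclass


@dataclass(frozen=True)
class EvaluationRecord:
    vehicle_id: str                 # unique per vehicle
    latitude: float                 # from the GNSS receiver
    longitude: float                # from the GNSS receiver
    direction: float                # assumed: heading in degrees
    weather: str                    # as sent by the management server
    driving_time_period: str        # as sent by the management server
    road_shape: str                 # as sent by the management server
    object_type: str                # evaluation result of the model processing unit
    object_recognition_rate: float  # assumed: fraction in the range 0.0-1.0

    def __post_init__(self) -> None:
        # Reject rates outside the assumed 0.0-1.0 range.
        if not 0.0 <= self.object_recognition_rate <= 1.0:
            raise ValueError("object_recognition_rate must be within 0.0-1.0")


def to_payload(record: EvaluationRecord) -> str:
    """Serialize one record to JSON for transmission (format is an assumption)."""
    return json.dumps(asdict(record), ensure_ascii=False)
```

A frozen dataclass is used so that a record cannot be altered after the feedback step has produced it; that choice is a design preference of this sketch rather than something the source requires.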

- The vehicle 1 transmits the information from the feedback unit 33 to the management server 100 via the vehicle-side transmission unit 22.

- The management server side receiving unit 130 of the management server 100 receives the evaluation data 114 transmitted from the vehicle.

- The external environment analysis unit 110 transmits the evaluation data 114 received by the management server side receiving unit 130 to the model extraction unit 120.

- The model processing unit 32 selects the image recognition model (received model m3) transmitted by the management server 100, and the external environment recognition device 10 uses the received model m3. Therefore, when communication between the management server 100 and the vehicle 1 is established and the management server 100 transmits an image recognition model, the evaluation data 114 represents an evaluation of the image recognition model transmitted by the management server 100.

- Based on the evaluation data 114, the model extraction unit 120 switches the image recognition model to be transmitted to another image recognition model and transmits it. The model extraction unit 120 also links the evaluation data 114 to the corresponding image recognition model and stores it in the model database 121. For example, if image recognition model A and image recognition model B stored in the model database 121 are both suitable for similar weather information 111, driving time zone information 112, and road shape information 113, the evaluation data 114 can be used to select the one with the better object recognition rate, etc., and transmit it to the vehicle 1.

- In this way, the management server 100 can transmit an image recognition model that is more suitable for the external environment of the vehicle, which can improve the accuracy of image recognition by the external environment recognition device 10.
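The choice between two candidate models using accumulated evaluation data can be sketched as below. The source only says that the model with the better object recognition rate is selected; averaging the stored rates per model, the function name `select_best_model`, and the tie-breaking behavior are assumptions made for this illustration.

```python
from collections import defaultdict
from statistics import mean
from typing import Dict, Iterable, List, Optional, Tuple


def select_best_model(
    candidates: Iterable[str],
    evaluations: Iterable[Tuple[str, float]],
) -> Optional[str]:
    """Pick the candidate with the highest mean object recognition rate.

    evaluations holds (model_id, object_recognition_rate) pairs linked to
    each model. Candidates with no evaluations are skipped. Returns None
    if no candidate has been evaluated.
    """
    rates: Dict[str, List[float]] = defaultdict(list)
    for model_id, rate in evaluations:
        rates[model_id].append(rate)

    # Score only candidates that have at least one stored evaluation.
    scored = [(mean(rates[m]), m) for m in candidates if rates.get(m)]
    if not scored:
        return None

    # max() compares the mean first; ties fall back to the model name.
    return max(scored)[1]
```

With evaluations `[("A", 0.8), ("A", 0.9), ("B", 0.95), ("B", 0.7)]`, model A averages 0.85 and model B averages 0.825, so the function returns `"A"` for the candidates `["A", "B"]`.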

- The present invention is not limited to the above-described embodiments, and includes various modified examples.

- The above-described embodiments have been described in detail to explain the present invention clearly, and the invention is not necessarily limited to embodiments having all of the configurations described.

- Some or all of the above-described configurations, functions, processing units, processing means, etc. may be realized in hardware, for example by designing them as an integrated circuit.

- The above-described configurations, functions, etc. may also be realized in software by a processor interpreting and executing a program that realizes each function.

- Information such as programs, tables, and files that realize each function can be stored in a memory, a recording medium such as a hard disk or SSD (Solid State Drive), or a recording medium such as an IC card, SD card, or DVD.

Landscapes

- Engineering & Computer Science (AREA)

- Physics & Mathematics (AREA)

- General Physics & Mathematics (AREA)

- Theoretical Computer Science (AREA)

- Multimedia (AREA)

- Artificial Intelligence (AREA)

- Health & Medical Sciences (AREA)

- Computer Vision & Pattern Recognition (AREA)

- Computing Systems (AREA)

- Databases & Information Systems (AREA)

- Evolutionary Computation (AREA)

- General Health & Medical Sciences (AREA)

- Medical Informatics (AREA)

- Software Systems (AREA)

- Traffic Control Systems (AREA)

Priority Applications (3)

| Application Number | Priority Date | Filing Date | Title |

|---|---|---|---|

| PCT/JP2023/021444 WO2024252647A1 (ja) | 2023-06-09 | 2023-06-09 | 画像認識モデル管理装置、および、画像認識モデル管理システム |

| DE112023005281.6T DE112023005281T5 (de) | 2023-06-09 | 2023-06-09 | Bilderkennungsmodell-Verwaltungsvorrichtung und Bilderkennungsmodell-Verwaltungssystem |

| JP2025525892A JPWO2024252647A1 | 2023-06-09 | 2023-06-09 | |

Applications Claiming Priority (1)

| Application Number | Priority Date | Filing Date | Title |

|---|---|---|---|

| PCT/JP2023/021444 WO2024252647A1 (ja) | 2023-06-09 | 2023-06-09 | 画像認識モデル管理装置、および、画像認識モデル管理システム |

Publications (1)

| Publication Number | Publication Date |

|---|---|

| WO2024252647A1 (ja) | 2024-12-12 |

Family

ID=93795511

Family Applications (1)

| Application Number | Priority Date | Filing Date | Title |

|---|---|---|---|

| PCT/JP2023/021444 Pending WO2024252647A1 (ja) | 2023-06-09 | 2023-06-09 | 画像認識モデル管理装置、および、画像認識モデル管理システム |

Country Status (3)

| Country | Link |

|---|---|

| JP (1) | JPWO2024252647A1 |

| DE (1) | DE112023005281T5 |

| WO (1) | WO2024252647A1 |

Citations (7)

| Publication number | Priority date | Publication date | Assignee | Title |

|---|---|---|---|---|

| JP2008146356A (ja) * | 2006-12-11 | 2008-06-26 | Nissan Motor Co Ltd | 視線方向推定装置及び視線方向推定方法 |

| JP2017168093A (ja) * | 2016-02-29 | 2017-09-21 | 株式会社リコー | 物理的シーンモデルの構築方法及び装置、運転支援方法及び装置 |

| JP2019154027A (ja) * | 2018-03-02 | 2019-09-12 | 富士通株式会社 | ビデオ監視システムのパラメータ設定方法、装置及びビデオ監視システム |

| WO2020090251A1 (ja) * | 2018-10-30 | 2020-05-07 | 日本電気株式会社 | 物体認識装置、物体認識方法および物体認識プログラム |

| JP2020126535A (ja) * | 2019-02-06 | 2020-08-20 | キヤノン株式会社 | 情報処理装置、情報処理装置の制御方法およびプログラム |

| JP2021033048A (ja) * | 2019-08-23 | 2021-03-01 | サウンドハウンド,インコーポレイテッド | 車載装置、発声を処理する方法およびプログラム |

| JP2023043957A (ja) * | 2021-09-17 | 2023-03-30 | 株式会社デンソーテン | 車載装置、画像認識システム、車載装置用の支援装置、画像認識方法、及び、モデルデータ送信方法 |

2023

- 2023-06-09 JP JP2025525892A patent/JPWO2024252647A1/ja active Pending

- 2023-06-09 WO PCT/JP2023/021444 patent/WO2024252647A1/ja active Pending

- 2023-06-09 DE DE112023005281.6T patent/DE112023005281T5/de active Pending

Also Published As

| Publication number | Publication date |

|---|---|

| JPWO2024252647A1 | 2024-12-12 |

| DE112023005281T5 (de) | 2025-11-20 |

Similar Documents

| Publication | Title | Publication Date |

|---|---|---|

| US11257377B1 (en) | System for identifying high risk parking lots | |

| US10691131B2 (en) | Dynamic routing for autonomous vehicles | |

| US11435751B2 (en) | Vehicle-based road obstacle identification system | |

| US10990105B2 (en) | Vehicle-based virtual stop and yield line detection | |

| US11373524B2 (en) | On-board vehicle stop cause determination system | |

| JP6872959B2 (ja) | 通信システム、車両搭載器及びプログラム | |

| US20200211370A1 (en) | Map editing using vehicle-provided data | |

| US9404763B2 (en) | Departure/destination location extraction apparatus and departure/destination location extraction method | |

| JP4680326B1 (ja) | 警告音出力制御装置、警告音出力制御方法、警告音出力制御プログラムおよび記録媒体 | |

| JP2023153776A (ja) | 事故判定装置 | |

| RU2707153C1 (ru) | Серверное устройство и автомобильное устройство | |

| KR20150013775A (ko) | 현재 상황 묘사를 생성하는 방법 및 시스템 | |

| CN106056944B (zh) | 行驶环境评价系统及行驶环境评价方法 | |

| JP7755422B2 (ja) | 車載装置、画像認識システム、車載装置用の支援装置、画像認識方法、及び、モデルデータ送信方法 | |

| JP2017175209A (ja) | 通信制御装置 | |

| US20200208991A1 (en) | Vehicle-provided virtual stop and yield line clustering | |

| CN112887913B (zh) | 一种基于车联网的动态信息发送方法及设备 | |

| US11180090B2 (en) | Apparatus and method for camera view selection/suggestion | |

| WO2024252647A1 (ja) | 画像認識モデル管理装置、および、画像認識モデル管理システム | |

| EP4367478A1 (en) | Electronic horizon for adas function | |

| KR102895483B1 (ko) | 교통정보 제공 시스템 및 방법 | |

| CN119907902A (zh) | 用于自动驾驶的车辆的路线规划的方法和系统 | |

| US20220105866A1 (en) | System and method for adjusting a lead time of external audible signals of a vehicle to road users | |

| WO2019142697A1 (ja) | 情報収集システム、制御装置およびプログラム | |

| EP4671075A1 (en) | METHOD FOR EVALUATING A CANDIDATE AUTOMATED DRIVING FEATURE | |

Legal Events

| Date | Code | Title | Description |
|---|---|---|---|
| | 121 | Ep: the epo has been informed by wipo that ep was designated in this application | Ref document number: 23940745; Country of ref document: EP; Kind code of ref document: A1 |
| | ENP | Entry into the national phase | Ref document number: 2025525892; Country of ref document: JP; Kind code of ref document: A |
| | WWE | Wipo information: entry into national phase | Ref document number: 2025525892; Country of ref document: JP |
| | WWE | Wipo information: entry into national phase | Ref document number: 112023005281; Country of ref document: DE |
| | WWP | Wipo information: published in national office | Ref document number: 112023005281; Country of ref document: DE |