WO2015011752A1 - Moving image encoding device and operation method thereof - Google Patents


- Publication number

- WO2015011752A1 (international application PCT/JP2013/069753)

- Authority

- WO

- WIPO (PCT)

- Prior art keywords

- unit

- moving image

- quantization

- prediction

- frequency conversion


Classifications

- H—ELECTRICITY > H04—ELECTRIC COMMUNICATION TECHNIQUE > H04N—PICTORIAL COMMUNICATION, e.g. TELEVISION > H04N19/00—Methods or arrangements for coding, decoding, compressing or decompressing digital video signals
  - H04N19/563—Motion estimation with padding, i.e. with filling of non-object values in an arbitrarily shaped picture block or region for estimation purposes
  - H04N19/61—Methods or arrangements for coding, decoding, compressing or decompressing digital video signals using transform coding in combination with predictive coding
  - H04N19/11—Selection of coding mode or of prediction mode among a plurality of spatial predictive coding modes
  - H04N19/115—Selection of the code volume for a coding unit prior to coding
  - H04N19/119—Adaptive subdivision aspects, e.g. subdivision of a picture into rectangular or non-rectangular coding blocks
  - H04N19/132—Sampling, masking or truncation of coding units, e.g. adaptive resampling, frame skipping, frame interpolation or high-frequency transform coefficient masking
  - H04N19/159—Prediction type, e.g. intra-frame, inter-frame or bidirectional frame prediction
  - H04N19/167—Position within a video image, e.g. region of interest [ROI]
  - H04N19/176—Methods or arrangements for coding, decoding, compressing or decompressing digital video signals using adaptive coding characterised by the coding unit, i.e. the structural portion or semantic portion of the video signal being the object or the subject of the adaptive coding the unit being an image region, e.g. an object the region being a block, e.g. a macroblock
  - H04N19/42—Methods or arrangements for coding, decoding, compressing or decompressing digital video signals characterised by implementation details or hardware specially adapted for video compression or decompression, e.g. dedicated software implementation
  - H04N19/52—Processing of motion vectors by encoding by predictive encoding
  - H04N19/59—Methods or arrangements for coding, decoding, compressing or decompressing digital video signals using predictive coding involving spatial sub-sampling or interpolation, e.g. alteration of picture size or resolution
  - H04N19/86—Methods or arrangements for coding, decoding, compressing or decompressing digital video signals using pre-processing or post-processing specially adapted for video compression involving reduction of coding artifacts, e.g. of blockiness
  - H04N19/91—Entropy coding, e.g. variable length coding [VLC] or arithmetic coding
  - H04N19/124—Quantisation
  - H04N19/146—Data rate or code amount at the encoder output
  - H04N19/436—Methods or arrangements for coding, decoding, compressing or decompressing digital video signals characterised by implementation details or hardware specially adapted for video compression or decompression, e.g. dedicated software implementation using parallelised computational arrangements

Definitions

- The present invention relates to a moving image encoding device and an operation method thereof, and more particularly to a technique effective for reducing the increase in the code amount of the encoded bitstream that the padding process causes in a moving image encoding device.

- MPEG is an abbreviation for Moving Picture Experts Group.

- Because the MPEG-2 standard prescribes only the bitstream syntax (the rules for the compressed coded data string, i.e. how the coded bitstream is structured) and the decoding process, it is flexible enough to be used in a wide range of settings such as satellite broadcasting services, cable television, interactive television and the Internet.

- In MPEG-2 encoding, the video signal is first sampled and quantized to define the color and luminance components of each pixel of the digital video.

- Values indicating color and luminance components are stored in a structure known as a macroblock.

- The color and luminance values stored in the macroblock are converted into frequency values using the discrete cosine transform (DCT).

- DCT: discrete cosine transform

- The transform coefficients obtained by the DCT represent the brightness and color of the picture as components at different frequencies.

- The quantized DCT transform coefficients are then encoded by variable length coding (VLC), which compresses the video stream further.
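
The transform and quantization steps above can be sketched as follows. This is a minimal illustration, assuming an orthonormal floating-point 2-D DCT-II and a single uniform step size; MPEG-2 itself uses quantization matrices and a quantizer scale, and the function names `dct_2d` and `quantize` are hypothetical.

```python
import math

def dct_2d(block):
    """Orthonormal 2-D DCT-II of an N x N block given as a list of rows."""
    n = len(block)

    def c(k):
        # Normalization factor of the orthonormal DCT-II.
        return math.sqrt(1.0 / n) if k == 0 else math.sqrt(2.0 / n)

    out = [[0.0] * n for _ in range(n)]
    for u in range(n):
        for v in range(n):
            s = 0.0
            for x in range(n):
                for y in range(n):
                    s += (block[x][y]
                          * math.cos((2 * x + 1) * u * math.pi / (2 * n))
                          * math.cos((2 * y + 1) * v * math.pi / (2 * n)))
            out[u][v] = c(u) * c(v) * s
    return out

def quantize(coeffs, step):
    """Uniform scalar quantization: divide each coefficient by `step` and round."""
    return [[int(round(c / step)) for c in row] for row in coeffs]
```

For a flat 8×8 block all the energy ends up in the DC coefficient, so after quantization only one nonzero value is left for the VLC stage to code.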

- VLC: variable length coding

- I frame: intra-coded frame

- P frame: unidirectionally predictive-coded frame

- B frame: bidirectionally predictive-coded frame

- The MPEG-2 moving image encoder consists of a frame memory, a motion vector detection unit, a motion compensation unit, a subtraction unit, a DCT conversion unit, a quantization unit, an inverse quantization unit, an inverse DCT conversion unit, and a variable length encoding unit.

- The video signal to be encoded is stored in the frame memory for B-frame encoding and motion vector detection, and is then read out from the frame memory.

- The subtraction unit subtracts the motion-compensated prediction signal supplied by the motion compensation unit from the signal read out of the frame memory, and the DCT conversion unit and the quantization unit then apply DCT and quantization, respectively.

- The quantized DCT transform coefficients are variable-length coded by the variable length coding unit. They are also locally decoded by the inverse quantization unit and the inverse DCT conversion unit, and the local decoding result is supplied to the subtraction unit via the motion compensation unit.

- An MPEG-2 moving picture decoding apparatus (Decoder) consists of a buffer memory, a variable length decoding unit, an inverse quantization unit, an inverse DCT conversion unit, a motion compensation unit, an addition unit, and a frame memory.

- The variable length decoding unit, the inverse quantization unit, and the inverse DCT conversion unit perform variable length decoding, inverse quantization, and inverse DCT, respectively. The addition unit adds these results to a reference image generated from the variable-length-decoded motion vector, and the reproduced image signal is taken from the output of the addition unit. This reproduced image signal is stored in the frame memory and used for predicting other frames.
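
The reason the encoder contains a local decoding loop is that it must predict from exactly the same reconstructed pictures that the decoder will hold. A minimal sketch, assuming a 1-D block, an identity transform in place of the DCT and a uniform quantizer (all function names are hypothetical):

```python
def quantize(residual, step):
    # Uniform scalar quantization of each residual sample.
    return [int(round(r / step)) for r in residual]

def dequantize(levels, step):
    # Inverse quantization: scale the levels back by the step size.
    return [q * step for q in levels]

def encode_block(cur, pred, step):
    """Encoder side: code the residual, then locally decode the reconstruction."""
    residual = [c - p for c, p in zip(cur, pred)]
    levels = quantize(residual, step)                       # sent in the bitstream
    recon = [p + r for p, r in zip(pred, dequantize(levels, step))]  # local decode
    return levels, recon

def decode_block(levels, pred, step):
    """Decoder side: the same inverse quantization and addition as the local decode."""
    return [p + r for p, r in zip(pred, dequantize(levels, step))]
```

Because quantization is lossy, the reconstruction differs from the input, but encoder and decoder obtain the identical reconstruction, so their reference pictures never drift apart.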

- The MPEG-4 standard (H.263), standardized as international standard ISO/IEC 14496 for low-rate coding in applications such as videophones, defines a general-purpose video compression method.

- Like MPEG-2, the compression method of the MPEG-4 (H.263) standard is a "hybrid" type using inter-frame prediction and the discrete cosine transform, and it additionally introduces motion compensation in half-pixel units.
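
Half-pixel motion compensation needs sample values between integer pixels. A minimal sketch, assuming bilinear averaging with round-half-up in the style of H.263 half-pel interpolation (MPEG-4 rounding-control variants are ignored, and `half_pel_sample` is a hypothetical name); positions are given in half-pixel units:

```python
def half_pel_sample(ref, x2, y2):
    """Sample `ref` (list of rows) at the half-pixel position (x2 / 2, y2 / 2)."""
    x, fx = divmod(x2, 2)
    y, fy = divmod(y2, 2)
    a = ref[y][x]
    if fx == 0 and fy == 0:
        return a                                        # integer position
    if fy == 0:
        return (a + ref[y][x + 1] + 1) // 2             # horizontal half-pel
    if fx == 0:
        return (a + ref[y + 1][x] + 1) // 2             # vertical half-pel
    # Centre position: average of the four surrounding integer pixels.
    return (a + ref[y][x + 1] + ref[y + 1][x] + ref[y + 1][x + 1] + 2) // 4
```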

- This compression method uses Huffman coding for entropy coding, but introduces a new technique called three-dimensional variable length coding (3-D VLC), which encodes run, level and last jointly.

- Here, run and level describe the run-length coded coefficients, and last indicates whether a coefficient is the last nonzero one in the block.
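
The (last, run, level) events that 3-D VLC codes can be derived from a scanned coefficient list as follows. This is a sketch assuming the quantized coefficients are already in zigzag order; the mapping of each event to a codeword through the standard's VLC table is not shown, and `run_level_last` is a hypothetical name.

```python
def run_level_last(scanned):
    """Convert zigzag-scanned quantized coefficients into (last, run, level) events."""
    nonzero = [i for i, c in enumerate(scanned) if c != 0]
    events = []
    prev = -1
    for k, i in enumerate(nonzero):
        run = i - prev - 1                           # zeros skipped before this coefficient
        last = 1 if k == len(nonzero) - 1 else 0     # 1 only for the final nonzero value
        events.append((last, run, scanned[i]))
        prev = i
    return events
```

Coding the three values as one event lets the trailing zeros after the last coefficient be omitted without a separate end-of-block code.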

- The MPEG-4 (H.263) standard consists of a basic part called Baseline and extensions called Annexes.

- To achieve higher coding efficiency, the MPEG-4 AVC (H.264) standard was standardized as international standard ISO/IEC 14496-10.

- AVC is an abbreviation for Advanced Video Coding

- The MPEG-4 AVC (H.264) standard is also called H.264/AVC.

- Video coding according to the H.264/AVC standard consists of a video coding layer (VCL) and a network abstraction layer (NAL). The video coding layer is designed to represent the video content efficiently, while the network abstraction layer formats the VCL representation of the video and adds header information in a manner suited to transfer over various transport layers and storage media.

- Coding methods include intra coding (coding within a frame) and inter coding (coding between frames).

- I frame: uses intra coding, without exploiting correlation between frames.

- P frame: inter-predicted from one previously coded frame.

- B frame: can be inter-predicted from two previously coded frames.

- In inter-frame predictive coding, the motion-compensated reference image (predicted image) is subtracted from the moving image to be encoded, and the prediction residual resulting from this subtraction is encoded.

- The encoding process consists of an orthogonal transform such as the DCT (discrete cosine transform), quantization, and variable length coding.

- Motion compensation (motion correction)

- Motion compensation includes the process of spatially shifting the reference frame for inter-frame prediction, and it is performed in units of blocks of the frame to be encoded. When the image content does not move, no shift is applied and the pixel at the same position as the pixel to be predicted is used. When there is motion, the most similar block is searched for, and its displacement is used as the motion vector.

- The motion compensation block is 16×16 or 16×8 pixels in the MPEG-2 coding method, and 16×16, 16×8 or 8×8 pixels in the MPEG-4 coding method.

- In the H.264/AVC coding method, the motion compensation block is 16×16, 16×8, 8×16, 8×8, 8×4, 4×8 or 4×4 pixels.

- The encoding process described above is performed for each video screen (frame or field), and the processing unit is a block obtained by subdividing the screen (usually 16×16 pixels, called a macroblock (MB) in MPEG). That is, for each block to be encoded, the most similar block (predicted image) is selected from the already encoded reference image, and the difference signal between the block to be encoded and the predicted image is encoded (orthogonal transform, quantization, etc.). The relative displacement within the screen between the block to be encoded and the prediction signal is called the motion vector.
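
Selecting "the most similar block" can be illustrated with exhaustive block matching. This sketch assumes the sum of absolute differences (SAD) as the similarity measure and a small full-search window; practical encoders use faster search patterns and rate-aware costs, and `sad` and `full_search` are hypothetical names.

```python
def sad(cur, ref, bx, by, dx, dy, n):
    """SAD between the n x n block of `cur` at (bx, by) and `ref` displaced by (dx, dy)."""
    return sum(abs(cur[by + j][bx + i] - ref[by + dy + j][bx + dx + i])
               for j in range(n) for i in range(n))

def full_search(cur, ref, bx, by, n, search_range):
    """Return ((dx, dy), cost) of the candidate with minimum SAD inside the window."""
    h, w = len(ref), len(ref[0])
    best = None
    for dy in range(-search_range, search_range + 1):
        for dx in range(-search_range, search_range + 1):
            # Skip candidates that would read outside the reference picture.
            if not (0 <= bx + dx and bx + dx + n <= w and 0 <= by + dy and by + dy + n <= h):
                continue
            cost = sad(cur, ref, bx, by, dx, dy, n)
            if best is None or cost < best[0]:
                best = (cost, (dx, dy))
    return best[1], best[0]
```

The returned displacement is the motion vector, and the pixel-wise difference between the current block and the displaced reference block is the difference signal passed on to the orthogonal transform.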

- The video coding layer (VCL) of H.264/AVC is described as following an approach called block-based hybrid video coding.

- The VCL design is built from macroblocks and slices. Each picture is divided into macroblocks of fixed size, and each macroblock contains a rectangular picture area of 16×16 samples of the luminance component and, for each of the two corresponding color difference components, a square sample area.

- A picture can contain one or more slices. Each slice is self-contained in the sense that, given the active sequence parameter set and picture parameter set, its representation can basically be decoded without using information from other slices.

- That is, the syntax elements can be parsed from the bitstream and the sample values in the picture area can be decoded. However, to apply the deblocking filter across slice boundaries for complete decoding, some information from other slices is required.

- Systems that handle moving image coding include digital HDTV (High Definition Television) broadcast receivers and digital video cameras capable of shooting HDTV signals, and image sizes keep growing.

- Image encoding and image decoding apparatuses that process these signals are therefore required to deliver ever higher processing performance.

- As the successor to the H.264/MPEG-4 AVC standard, a new standard, H.265 (ISO/IEC 23008-2), has been proposed; this new standard is called HEVC (High Efficiency Video Coding).

- The HEVC standard achieves superior compression efficiency by optimizing the block size and the like: its compression performance is approximately four times that of the MPEG-2 standard and about twice that of H.264/AVC.

- Patent Document 1 states that, whereas the various widely adopted coding and compression standards such as MPEG-1/2/4 and H.261/H.263/H.264-AVC use one macroblock of 16×16 pixels as the processing unit for motion compensation and subsequent processing, HEVC adopts a flexible block structure as the processing unit.

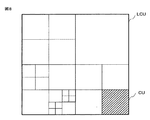

- The unit of this flexible block structure is called a coding unit (CU); it is divided using a quadtree, starting from the maximum coding unit (LCU), to achieve good performance.

- LCU: maximum coding unit

- The size of the maximum coding unit (LCU) is 64×64 pixels, much larger than the macroblock size (16×16 pixels).

- The maximum coding unit (LCU) described in Patent Document 1 corresponds to the coding tree block (CTB) or coding tree unit (CTU) described in the HEVC standard.

- FIG. 1 of Patent Document 1 and the related disclosure show an example of quadtree-based coding unit division; at depth "zero", the first coding unit (CU) consists of 64×64 pixels.

- A split flag of "0" indicates that the current coding unit (CU) is not divided, while a split flag of "1" indicates that the current CU is divided into four smaller coding units by the quadtree.

- Patent Document 1 also describes that each divided coding unit (CU) is further quadtree-divided until it reaches a predetermined minimum coding unit (CU) size.
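
The split-flag recursion can be sketched as a short function. In this illustration the split decision is passed in as a callable that stands in for the decoded split flags, the minimum CU size of 8 in the usage is an assumption, and `split_cus` is a hypothetical name; CUs are returned in z-order as (x, y, size).

```python
def split_cus(x, y, size, min_size, split_flag):
    """Recursively divide a CU; split_flag(x, y, size) returns 0 or 1."""
    # At the minimum size no further split is possible, so no flag is consulted.
    if size > min_size and split_flag(x, y, size) == 1:
        half = size // 2
        cus = []
        for dy in (0, half):          # visit the four sub-CUs in z-order
            for dx in (0, half):
                cus += split_cus(x + dx, y + dy, half, min_size, split_flag)
        return cus
    return [(x, y, size)]             # flag "0": this CU is not divided
```

For example, splitting a 64×64 LCU once and then splitting only its top-left 32×32 quadrant again yields four 16×16 CUs followed by three 32×32 CUs, which together still cover the 4096 pixels of the LCU.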

- Non-Patent Document 2 gives an overview of the HEVC standard.

- In previous standards, the core of the coding layer was the macroblock, containing a 16×16 block of luminance samples and two 8×8 blocks of chromaticity samples.

- The analogous structure in the HEVC standard is the coding tree unit (CTU), whose size is selected by the encoder and can be larger than a traditional macroblock.

- The coding tree unit (CTU) consists of a luminance coding tree block (CTB), the chromaticity coding tree blocks (CTBs), and syntax elements.

- The quadtree syntax of the coding tree unit (CTU) specifies the size and position of its luminance and chromaticity coding blocks (CBs).

- The decision whether to encode a picture region using inter-picture or intra-picture prediction is made at the coding unit (CU) level.

- The partitioning structure of the prediction units (PUs) has its root at the coding unit (CU) level.

- Depending on the prediction-type decision, the luminance and chromaticity coding blocks (CBs) can be further split in size and predicted from luminance and chromaticity prediction blocks (PBs).

- The HEVC standard supports variable prediction block (PB) sizes from 64×64 down to 4×4 samples.

- The prediction residual is coded using block transforms, and the tree structure of the transform units (TUs) has its root at the coding unit (CU) level.

- The residual of a luminance coding block (CB) may be identical to the luminance transform block (TB), or it may be further split into smaller luminance transform blocks (TBs).

- Integer basis functions similar to those of the discrete cosine transform (DCT) are defined for square transform blocks (TBs) of 4×4, 8×8, 16×16 and 32×32 samples.
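
The 4×4 case shows what "integer basis functions similar to the DCT" means in practice. The matrix below is the HEVC 4×4 core transform matrix; the sketch applies it as C·X·Cᵀ and deliberately omits the intermediate right-shifts and clipping that the standard specifies, so outputs are unscaled. Helper names are hypothetical.

```python
# HEVC 4x4 core transform matrix: each row is an integer approximation of a
# DCT basis function, scaled by roughly 128 (= 64 * sqrt(4)).
C4 = [[64, 64, 64, 64],
      [83, 36, -36, -83],
      [64, -64, -64, 64],
      [36, -83, 83, -36]]

def matmul(a, b):
    """Plain integer matrix product of two lists of rows."""
    return [[sum(a[i][k] * b[k][j] for k in range(len(b))) for j in range(len(b[0]))]
            for i in range(len(a))]

def transpose(m):
    return [list(r) for r in zip(*m)]

def forward_4x4(residual):
    """Unscaled 2-D forward transform C4 * X * C4^T (normative shifts omitted)."""
    return matmul(matmul(C4, residual), transpose(C4))
```

The rows are exactly orthogonal in integer arithmetic, and their squared norms (16384 and 16370) are nearly equal, which is what makes the matrix a close, cheap stand-in for a scaled DCT.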

- Non-Patent Document 2 describes the configuration of a hybrid video encoder that can generate a bitstream compliant with the HEVC standard, and states that it includes a deblocking filter similar to the one used in the H.264/MPEG-4 AVC standard.

- Patent Document 2 describes that, in order to efficiently encode an image of arbitrary shape, shape information of an externally input image signal is supplied to padding means that fill the blank areas of a block containing no image data.

- Because the horizontal and vertical sizes of the input image signal must be integer multiples of the block size for compression encoding, the padding means, in order to encode an arbitrarily shaped image, either fills the blank area with the average value of the image area or fills it by copying the pixels at the edge of the image area.
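
The two filling rules can be sketched for a single block with a shape mask. This is a simplified illustration, not the padding procedure of Patent Document 2 or the MPEG-4 standard: "edge" mode copies the nearest object pixel in the same row, rows without object pixels fall back to the mean, the block is assumed to contain at least one object pixel, and `pad_block` is a hypothetical name.

```python
def pad_block(block, mask, mode="mean"):
    """Fill pixels outside the object (mask False) of one block.

    mode="mean": use the average of all object pixels.
    mode="edge": copy the nearest object pixel in the same row.
    Assumes the block contains at least one object pixel."""
    obj = [block[y][x] for y in range(len(block)) for x in range(len(block[0])) if mask[y][x]]
    mean = round(sum(obj) / len(obj))
    out = [row[:] for row in block]
    for y, row in enumerate(block):
        cols = [x for x in range(len(row)) if mask[y][x]]   # object columns in this row
        for x in range(len(row)):
            if mask[y][x]:
                continue                                     # keep real image data
            if mode == "edge" and cols:
                nearest = min(cols, key=lambda c: abs(c - x))
                out[y][x] = row[nearest]
            else:
                out[y][x] = mean
    return out
```

Either rule avoids a sharp jump between the image area and the blank area, which is what keeps the transform from producing large high-frequency coefficients there.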

- Patent Document 3 describes an encoding device that solves the following problem: when an image signal whose pixel values change discontinuously at the edge of the screen is encoded, the discontinuity generates high-frequency components and hence a large code amount.

- The weighting factor determination unit calculates the position of the image signal within the screen from the synchronization signal and outputs a weighting factor w that approaches 0 toward the edge of the screen.

- The first multiplier multiplies the input image signal by the weighting factor w.

- The second multiplier multiplies the output of the constant value output unit by the factor 1-w.

- The adder adds the output signals of the two multipliers, and the output of the adder is then encoded by the encoder. Because the image signal is brought smoothly to a constant value at the edge of the screen, no extra code amount is required.
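
The multiplier and adder arrangement computes out = w·x + (1 − w)·C for each pixel. Patent Document 3 only says that w approaches 0 toward the screen edge, so the linear ramp over `ramp` pixels below, applied horizontally only, is an assumption; `edge_weight` and `blend_row` are hypothetical names.

```python
def edge_weight(x, width, ramp):
    """Assumed weight: 1 in the interior, falling linearly to 0 at the left/right edges."""
    d = min(x, width - 1 - x)          # distance to the nearer edge
    return min(1.0, d / ramp)

def blend_row(row, const, ramp):
    """First multiplier (w * x), second multiplier ((1 - w) * const), then the adder."""
    n = len(row)
    return [edge_weight(x, n, ramp) * v + (1 - edge_weight(x, n, ramp)) * const
            for x, v in enumerate(row)]
```

With a row of value 200, a constant of 128 and a 2-pixel ramp, the output runs 128, 164, 200, ..., 200, 164, 128, so the signal meets the screen edge at the same constant value on every line.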

- Patent Document 4 describes a videophone device in which, when an operator uses an operation unit to set information indicating an important position in the transmitted image, the image encoding unit encodes the image data at that important position with higher image quality than the image data at other positions.

- Patent Document 5 describes a padding process that extrapolates in-screen pixel values to the outside of the screen in order to realize the off-screen motion vector (UMV) adopted in the MPEG-4 standard, that is, to allow the area outside the screen boundary to be used as a reference image. It also describes a method for preventing the noise generated in the decoder when the extrapolation start position of the padding process is inconsistent between the encoder and the decoder, an inconsistency that can arise because in the MPEG-4 standard this start position is not necessarily the end of the effective image area or the end of the encoded macroblock.
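
Extrapolating by repeating edge pixels is equivalent to clamping reference coordinates into the picture. A minimal sketch, assuming simple edge replication (the function names are hypothetical):

```python
def ref_pixel(ref, x, y):
    """Read a reference pixel; off-screen positions take the nearest edge pixel."""
    h, w = len(ref), len(ref[0])
    return ref[min(max(y, 0), h - 1)][min(max(x, 0), w - 1)]

def fetch_block(ref, x0, y0, n):
    """Fetch an n x n prediction block whose top-left corner may lie off-screen."""
    return [[ref_pixel(ref, x0 + i, y0 + j) for i in range(n)] for j in range(n)]
```

The sketch also shows where the mismatch described in Patent Document 5 comes from: if the encoder clamps to one edge (say, the end of the encoded macroblock) while the decoder clamps to another (the end of the effective image area), `ref_pixel` returns different values on the two sides for the same motion vector.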

- UMV: off-screen motion vector

- Prior to the present invention, the inventors were engaged in the development of a moving image encoding device (Video Encoder) capable of encoding a moving image input signal in accordance with the HEVC standard, the successor to the current standard H.264/MPEG-4 AVC.

- This moving image encoding device is required to encode a moving image input signal not only according to the HEVC standard but also according to the current standard H.264/MPEG-4 AVC.

- The HEVC standard achieves superior compression efficiency by optimizing the block size and the like; its compression performance is about four times that of the MPEG-2 standard and about twice that of H.264/AVC.

- Video encoding and video decoding devices are required to deliver higher processing performance, and the HEVC standard is expected to satisfy these requirements.

- 4K TV, whose display device has 4096×2160 or 3840×2160 pixels, about four times the pixel count of high definition (HD, 1920×1080 pixels), has attracted attention.
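
The "about four times" figure follows directly from the pixel counts:

$$
\begin{aligned}
1920 \times 1080 &= 2\,073\,600 \ \text{pixels (HD)} \\
3840 \times 2160 &= 8\,294\,400 = 4 \times 2\,073\,600 \\
4096 \times 2160 &= 8\,847\,360 \approx 4.27 \times 2\,073\,600
\end{aligned}
$$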

- HD: High Definition

- FIG. 10 is a diagram illustrating the configuration of a moving image encoding apparatus 1, studied by the inventors prior to the present invention, that can generate an encoded bitstream by encoding a moving image input signal in accordance with whichever of the current standard H.264 and the HEVC standard is selected.

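- In addition to the padding processing unit 100, the subtractor 101, the frequency conversion unit 102, the quantization unit 103, the variable length coding unit 114 and the video buffer 115 referred to below, the moving image encoding apparatus 1 includes a filter unit 107, a frame memory 108, a motion vector detection unit 109, a motion compensation unit 110, a buffer memory 111, an intra prediction unit 112, and a selector unit 113.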

- The moving image signal VS is supplied to the input terminal of the padding processing unit 100, which performs padding as necessary. That is, as described in Patent Document 2, when the horizontal and vertical sizes of the moving image signal VS supplied to the moving image encoding apparatus 1 are not integer multiples of the coding block size, the padding processing unit 100 executes the padding process so that the horizontal and vertical sizes of the moving image signal become integer multiples of the coding block size.

- FIG. 11 is a diagram for explaining the padding process in the padding processing unit 100 of the moving image encoding apparatus 1 shown in FIG. 10, which the inventors studied prior to the present invention.

- FIG. 11 shows the case where the moving image encoding apparatus 1 shown in FIG. 10 encodes the moving image signal VS in accordance with the HEVC standard. In this case the coding block size is the coding unit CU defined by the HEVC standard, so the coding unit CU may be a maximum coding unit (LCU) of 64×64 pixels or a unit generated by dividing the LCU.


- The padding processing unit 100 executes the padding process so that the horizontal and vertical sizes of the padded moving image signal are integer multiples of the coding block size. That is, as shown in FIG. 11, the padding process of the padding processing unit 100 adds padding data PD to the right of and below the moving image signal VS.

- The padding data PD can be formed, for example, by copying the pixel values themselves, or the average of the pixel values, of the moving image signal VS near the boundary between the moving image signal VS and the padding data PD.
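
The "copy the pixel values themselves" option can be sketched as follows for one component plane. The function name is hypothetical, and the average-value alternative is not shown.

```python
def pad_to_multiple(frame, block):
    """Append padding data PD to the right and bottom of `frame` (list of rows) so
    that width and height become integer multiples of `block`, by copying the
    boundary pixels of the original signal."""
    h, w = len(frame), len(frame[0])
    new_w = -(-w // block) * block     # ceil(w / block) * block
    new_h = -(-h // block) * block
    # Extend every row to the right by repeating its last pixel.
    padded = [row + [row[-1]] * (new_w - w) for row in frame]
    # Extend downwards by repeating the (already widened) last row.
    padded += [padded[-1][:] for _ in range(new_h - h)]
    return padded
```

Only the original region corresponds to the moving image signal VS; the appended columns and rows are the padding data PD.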

- Because the padding data PD is added to the moving image signal VS so that the horizontal and vertical sizes of the padded signal are integer multiples of the coding block size, the high-frequency components caused by discontinuity of the moving image signal can be reduced when the moving image encoding apparatus 1 performs moving image coding. Reducing these high-frequency components in turn reduces the code amount of the compressed video encoded bitstream CVBS generated by the variable length coding unit 114.

- The moving image signal VS contained in the padded signal shown in FIG. 11 must be displayed by the moving image decoding device (Decoder).

- On the other hand, the padding data PD added by the padding processing unit 100, shown in FIG. 11, does not need to be displayed by the moving image decoding device (Decoder).

- When the moving picture encoding apparatus 1 shown in FIG. 10 encodes the moving image signal VS according to H.264, the coding block is a macroblock (MB).

- For the luminance component, a macroblock (MB) is 16 × 16 pixels.

- In this case the padding processing unit 100 performs the padding process so that the horizontal and vertical sizes of the padded moving image signal are integer multiples of the macroblock (MB) size.

- The padded moving image signal VS + PD output by the padding processing unit 100 is supplied to one input terminal of each of the following: the subtracter 101, the motion vector detection unit 109, and the intra prediction unit 112.

- A coding control unit (not shown) supplies a prediction mode to the selector unit 113 and the variable length coding unit 114. The prediction mode indicates whether each picture of the moving image is inter-predicted or intra-predicted.

- The coding unit (CU) of the moving image signal VS to be inter-coded is supplied to one input terminal of the subtracter 101.

- The motion vector detection unit 109 generates a motion vector MV from two inputs: the padded moving image signal from the padding processing unit 100, and the reference image stored in the frame memory 108.

- The motion compensation unit 110 generates a motion-compensated prediction signal from the generated motion vector MV and the reference image stored in the frame memory 108.

- The subtracter 101 subtracts the motion-compensated prediction signal from the moving image signal VS. The prediction signal comes from the motion compensation unit 110 through the selector unit 113.

- The frequency conversion unit 102 and the quantization unit 103 then apply frequency conversion and quantization, respectively, to the resulting prediction residual.

- The variable length coding unit 114 applies variable length coding to two inputs:
  - the frequency transform coefficients quantized by the quantization unit 103;
  - the motion vector MV generated by the motion vector detection unit 109.

  The resulting compressed video encoded bitstream CVBS is output through the video buffer 115.
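The patent does not say how the motion vector detection unit 109 searches for motion vectors. As an illustration, the sketch below uses exhaustive (full-search) block matching with the sum of absolute differences (SAD), a common textbook approach. All names are hypothetical.

```python
def sad(cur, ref, bx, by, dx, dy, n):
    """SAD between the n x n block of cur at (bx, by) and the ref block displaced by (dx, dy)."""
    return sum(abs(cur[by + j][bx + i] - ref[by + dy + j][bx + dx + i])
               for j in range(n) for i in range(n))


def full_search(cur, ref, bx, by, n, search_range):
    """Return the motion vector (dx, dy) with minimum SAD inside +/- search_range."""
    height, width = len(ref), len(ref[0])
    best_cost, best_mv = None, (0, 0)
    for dy in range(-search_range, search_range + 1):
        for dx in range(-search_range, search_range + 1):
            x, y = bx + dx, by + dy
            # Skip candidates whose reference block would leave the reference picture.
            if x < 0 or y < 0 or x + n > width or y + n > height:
                continue
            cost = sad(cur, ref, bx, by, dx, dy, n)
            if best_cost is None or cost < best_cost:
                best_cost, best_mv = cost, (dx, dy)
    return best_mv


# Reference picture with distinct sample values. Each current sample is found in
# the reference 2 samples to the right and 1 row down, so the expected MV is (2, 1).
W = H = 12
ref = [[12 * y + x for x in range(W)] for y in range(H)]
cur = [[ref[y + 1][x + 2] if y + 1 < H and x + 2 < W else 0 for x in range(W)]
       for y in range(H)]
print(full_search(cur, ref, bx=2, by=2, n=4, search_range=2))  # (2, 1)
```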

- The frequency transform coefficients quantized by the quantization unit 103 are also locally decoded by the inverse quantization unit 104, the inverse frequency conversion unit 105, the adder 106, and the filter unit 107. The result of the local decoding is stored in the frame memory 108 as a reference image.
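The local decoding loop makes the encoder reconstruct exactly what a decoder will reconstruct from the same quantized levels. As a result, both use identical reference images. The 1-D sketch below makes two simplifications that differ from the H.264/HEVC definitions:

- an orthonormal DCT replaces the standard integer transforms;
- a plain uniform quantizer with step `qstep` replaces the standard quantizer.

The filter unit 107 is omitted, and all names are hypothetical.

```python
import math


def _scale(k, n):
    return math.sqrt(1 / n) if k == 0 else math.sqrt(2 / n)


def dct(x):
    """Forward orthonormal DCT-II (stands in for frequency conversion unit 102)."""
    n = len(x)
    return [_scale(k, n) * sum(x[i] * math.cos(math.pi * (2 * i + 1) * k / (2 * n))
                               for i in range(n)) for k in range(n)]


def idct(c):
    """Inverse transform (stands in for inverse frequency conversion unit 105)."""
    n = len(c)
    return [sum(_scale(k, n) * c[k] * math.cos(math.pi * (2 * i + 1) * k / (2 * n))
                for k in range(n)) for i in range(n)]


def quantize(coeffs, qstep):
    """Uniform quantizer, round half away from zero (stands in for unit 103)."""
    return [int(math.copysign(math.floor(abs(c) / qstep + 0.5), c)) for c in coeffs]


def reconstruct(levels, pred, qstep):
    """Inverse quantization (104), inverse transform (105) and adder (106).

    The same function serves the encoder's local decoding loop and a decoder.
    """
    residual = idct([lvl * qstep for lvl in levels])
    return [round(p + r) for p, r in zip(pred, residual)]


def encode_block(block, pred, qstep):
    residual = [b - p for b, p in zip(block, pred)]      # subtracter 101
    levels = quantize(dct(residual), qstep)              # units 102 and 103
    return levels, reconstruct(levels, pred, qstep)      # local decoding
```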

- The filter unit 107 acts as a deblocking filter that reduces block distortion in accordance with the MPEG-4 AVC (H.264) standard. To comply with the HEVC standard, the filter unit 107 also applies a filter called sample adaptive offset (SAO) after deblocking. SAO restores the amplitude of the original signal accurately by using a look-up table described by additional parameters. A coding control unit (not shown) of the moving picture coding apparatus 1 determines these parameters by frequency distribution (histogram) analysis.
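The patent only says that SAO uses a look-up table described by additional parameters. For concreteness, the sketch below implements one SAO type defined in HEVC, band offset:

- the sample range is split into 32 equal bands;
- four consecutive bands, starting at a signalled band position and wrapping around after band 31, receive signalled offsets.

Edge offset, the other SAO type, is omitted. The function name is hypothetical.

```python
def sao_band_offset(samples, band_position, offsets, bit_depth=8):
    """Apply HEVC-style SAO band offset to a list of reconstructed samples.

    band_position: first of the four bands that receive an offset (0..31).
    offsets: four integer offsets for bands band_position .. band_position + 3 (mod 32).
    """
    shift = bit_depth - 5                  # 2**5 = 32 equal-width bands
    max_val = (1 << bit_depth) - 1
    out = []
    for s in samples:
        k = ((s >> shift) - band_position) & 31   # band index relative to the first band
        if k < 4:
            s = min(max(s + offsets[k], 0), max_val)   # clip to the valid sample range
        out.append(s)
    return out


print(sao_band_offset([0, 8, 16, 24, 32, 40, 255], band_position=1, offsets=[1, 2, 3, 4]))
# [0, 9, 18, 27, 36, 40, 255]
```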

- The intra prediction path works as follows:
  - The padded moving image signal from the padding processing unit 100 is supplied to one input terminal of the intra prediction unit 112.
  - The buffer memory 111 stores the reference image that was intra-coded and reconstructed by local decoding. This reference image is read from the buffer memory 111 and supplied to the other input terminal of the intra prediction unit 112.
  - To intra-code a coding unit (CU) of the moving image signal VS received at its first input, the intra prediction unit 112 selects the optimal coding unit from the neighboring coding units (CUs) in the reference image already supplied by the buffer memory 111. It then generates spatial information for the selected coding unit.
  - The intra prediction unit 112 supplies the selector unit 113 with intra prediction information. This information consists of the optimal intra-predicted coding unit (CU) and the corresponding spatial prediction mode.
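Selecting the optimal intra prediction can be sketched as a cost comparison between candidate predictions built from already reconstructed neighboring samples. This is a simplified, hypothetical mode decision:

- it considers only three modes common to H.264 and HEVC (vertical, horizontal, DC);
- it uses SAD as the cost.

Real encoders evaluate many more directions and usually use a rate-distortion cost.

```python
def intra_predict(mode, top, left, n):
    """Build an n x n prediction from the reconstructed row above and column to the left."""
    if mode == "vertical":
        return [list(top[:n]) for _ in range(n)]
    if mode == "horizontal":
        return [[left[j]] * n for j in range(n)]
    dc = (sum(top[:n]) + sum(left[:n]) + n) // (2 * n)   # rounded mean of 2n neighbors
    return [[dc] * n for _ in range(n)]


def best_intra_mode(block, top, left):
    """Return the candidate mode whose prediction has the smallest SAD."""
    n = len(block)

    def cost(mode):
        pred = intra_predict(mode, top, left, n)
        return sum(abs(block[j][i] - pred[j][i]) for j in range(n) for i in range(n))

    return min(("vertical", "horizontal", "dc"), key=cost)


top = [10, 20, 30, 40]
left = [0, 0, 0, 0]
block = [[10, 20, 30, 40] for _ in range(4)]   # columns continue the row above
print(best_intra_mode(block, top, left))        # vertical
```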


- As described above, the padding processing unit 100 performs the padding process so that the horizontal and vertical sizes of the padded video signal VS + PD are integer multiples of the coding block size. The horizontal and vertical sizes of the moving image signal VS supplied to the moving image encoding device 1 may not be integer multiples of the coding block size. Even then, adding the padding processing data PD reduces the high-frequency components caused by signal discontinuity, which reduces the code amount of the compressed video encoded bitstream CVBS output from the variable length coding unit 114.

- Thus, in the moving picture encoding apparatus 1 shown in FIG. 10, the padding process of the padding processing unit 100 reduces the high-frequency components caused by discontinuity of the moving picture signal. This in turn reduces the code amount of the encoded bitstream CVBS.

- However, study by the present inventors prior to the present invention revealed a problem. The code amount of the encoded bitstream CVBS increases by the amount spent encoding the padding processing data PD added to the moving image signal VS.

- The padding processing data PD added to the moving image signal VS is formed, for example, by copying pixel values of the moving image signal VS near its boundary with the padding processing data PD. Either the pixel values themselves or their average are copied. The pixel values of the padding processing data PD are therefore non-zero. Because the padding processing data PD is encoded as well, the encoded bitstream CVBS contains data other than the moving image signal VS, which increases its code amount.

- The moving picture coding apparatus (1) forms a coded bitstream (CVBS) by executing moving picture coding of syntax elements related to a moving picture signal (VS) to be coded.

- Prior to the moving picture coding process, the moving picture coding apparatus (1) executes a padding process (100) that adds padding processing data (PD) to the moving picture signal (VS).


- The padding process sets the horizontal and vertical sizes of the additional moving image signal to integer multiples of the coding block size of the moving picture coding process. The additional moving image signal is the moving image signal with the padding processing data (PD) added.

- The moving picture coding apparatus (1) determines whether a coding block of the syntax element belongs to the moving picture signal (VS) or to the padding processing data (PD).

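- First case: the coding block is determined to belong to the moving picture signal (VS). The moving picture coding process is then controlled so that the encoded bitstream is formed with a first code amount.

- Second case: the coding block is determined to belong to the padding processing data (PD). The moving picture coding process is then controlled so that the encoded bitstream is formed with a second code amount smaller than the first code amount (see FIG. 1).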
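Padding data PD is only appended to the right of and below VS, so the VS/PD determination can be made from block coordinates alone. The sketch below assumes the encoder knows the width and height of VS before padding. `classify_block` is a hypothetical helper. A third outcome, MIXED, covers blocks that straddle the boundary; the embodiment avoids such blocks by controlling block partitioning.

```python
def classify_block(x, y, size, width, height):
    """Classify the size x size block whose top-left sample is (x, y).

    width, height: dimensions of the moving image signal VS before padding.
    Returns "VS" (only real samples), "PD" (only padding) or "MIXED".
    """
    vs_w = max(0, min(x + size, width) - x)    # real columns covered by the block
    vs_h = max(0, min(y + size, height) - y)   # real rows covered by the block
    vs_samples = vs_w * vs_h
    if vs_samples == size * size:
        return "VS"
    if vs_samples == 0:
        return "PD"
    return "MIXED"


# 1920 x 1080 picture padded to 1920 x 1088 for 16 x 16 macroblocks
print(classify_block(0, 1056, 16, 1920, 1080))  # VS
print(classify_block(0, 1072, 16, 1920, 1080))  # MIXED
```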

- With this moving image encoding device, the padding process causes a smaller increase in the code amount of the encoded bitstream generated by the device.

- FIG. 1 is a diagram showing a configuration of a moving picture encoding apparatus 1 according to the first embodiment.

- FIG. 2 is a diagram for explaining operations of the quantization output adjustment unit 116 and the quantization output control unit 117 included in the moving picture coding apparatus 1 according to the first embodiment.

- FIG. 3 is a diagram for explaining the configuration and operation of the motion vector detection control unit 118 and the motion vector detection unit 109 included in the video encoding device 1 according to the first embodiment.

- FIG. 4 is a diagram for explaining the configuration and operation of the intra prediction control unit 119 and the intra prediction unit 112 included in the video encoding device 1 of the first embodiment.

- FIG. 5 is a diagram for explaining the configuration and operation of the frequency conversion control unit 120 included in the video encoding device 1 of the first embodiment.

- FIG. 6 is a diagram illustrating the configuration and operation of the quantization parameter control unit 121 included in the video encoding device 1 according to the first embodiment.

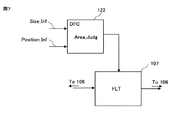

- FIG. 7 is a diagram illustrating the configuration and operation of the filter unit 107 and the filter control unit 122 for realizing low power consumption in the deblocking filter in the video encoding device 1 according to the first embodiment.

- FIG. 8 is a diagram illustrating how a coding unit (CU) is adaptively divided from a maximum coding unit (LCU).

- FIG. 9 is a diagram illustrating a configuration of a moving image encoding apparatus according to Embodiment 2 that executes parallel processing at a slice level or a tile level.

- FIG. 10 is a diagram illustrating the configuration of a moving image encoding apparatus 1 studied by the present inventors prior to the present invention. The apparatus can generate an encoded bitstream by encoding a moving image input signal according to a method selected from the current standard H.264 and the HEVC standard.

- FIG. 11 is a diagram explaining the padding process in the padding processing unit 100 of the moving image encoding apparatus 1 shown in FIG. 10, which the present inventors studied prior to the present invention.

- A moving picture encoding apparatus (1) forms an encoded bitstream (CVBS) by performing moving picture encoding of syntax elements related to a moving picture signal (VS) to be encoded (see FIG. 1).

- Prior to the moving picture coding process, the moving picture coding apparatus (1) executes a padding process (100) that adds padding processing data (PD) to the moving picture signal (VS).


- The padding process sets the horizontal and vertical sizes of the additional moving image signal to integer multiples of the coding block size of the moving picture coding process. The additional moving image signal is the moving image signal with the padding processing data (PD) added.

- The moving picture coding apparatus (1) determines whether the coding block of the syntax element related to the moving picture signal belongs to the moving picture signal (VS) or to the padding processing data (PD).

- First case: the coding block is determined to belong to the moving picture signal (VS). The moving picture encoding process is then controlled so that the encoded bitstream is formed with a first code amount.

- Second case: the coding block is determined to belong to the padding processing data (PD). The moving picture encoding process is then controlled so that the encoded bitstream is formed with a second code amount smaller than the first code amount.

- The moving picture encoding device (1) defined in [1] includes the following units:
  - a padding processing unit (100);
  - a motion vector detection unit (109);
  - a motion compensation unit (110);
  - a subtracter (101);
  - a frequency conversion unit (102);
  - a quantization unit (103);
  - an inverse quantization unit (104);
  - an inverse frequency conversion unit (105);
  - memories (108, 111);
  - an intra prediction unit (112);
  - a selector unit (113);
  - a variable length coding unit (114).

- The padding processing unit (100) generates the additional moving image signal by executing the padding process. It supplies this signal to the subtracter (101), the motion vector detection unit (109), and the intra prediction unit (112).

- The motion vector detection unit (109) generates a motion vector (MV) from the additional moving image signal and the inter reference image stored in the memory (108).

- The motion compensation unit (110) generates a motion-compensated prediction signal from the motion vector (MV) supplied by the motion vector detection unit (109) and the inter reference image stored in the memory (108).

- The intra prediction unit (112) generates an intra prediction signal from the additional moving image signal and the intra reference image stored in the memory (111).

- The selector unit (113) chooses between the motion-compensated prediction signal from the motion compensation unit (110) and the intra prediction signal from the intra prediction unit (112). It outputs the chosen signal as the selected prediction signal.

- The additional moving image signal is supplied to one input terminal of the subtracter (101), and the selected prediction signal from the selector unit (113) is supplied to the other. The output terminal of the subtracter (101) then produces a prediction residual.

- The frequency conversion unit (102) and the quantization unit (103) apply frequency conversion and quantization, respectively, to the prediction residual.

- The frequency conversion result of the frequency conversion unit (102), quantized by the quantization unit (103), is locally decoded by the inverse quantization unit (104) and the inverse frequency conversion unit (105). The result of the local decoding is stored in the memories (108, 111) as the inter reference image and the intra reference image.

- The quantized frequency conversion result is also encoded by the variable length coding unit (114), which outputs the coded bitstream (CVBS).

- The syntax element related to the moving image signal is at least one of the following items of information (A) to (D) (see FIG. 1).

- The video encoding device (1) defined in [2] further includes two units:
  - a quantization output adjustment unit (116), connected between the output terminal of the quantization unit (103) and the input terminals of the variable length coding unit (114) and the inverse quantization unit (104);
  - a quantization output control unit (117), connected to the quantization output adjustment unit (116).

- The quantization output control unit (117) examines the information of the frequency conversion process of the frequency conversion unit (102), as quantized by the quantization unit (103). This information is the syntax element related to the moving image signal. The unit determines whether the information belongs to the moving image signal (VS) or to the padding processing data (PD).

- The quantization output adjustment unit (116) receives three inputs:
  - the quantized output signal (201) generated by the quantization unit (103);
  - an adjustment signal (200) with a smaller data amount than the quantized output signal (201);
  - the determination result from the quantization output control unit (117).

- Suppose the quantization output control unit (117) determines that the frequency conversion information belongs to the video signal (VS). The quantization output adjustment unit (116) then supplies the quantized output signal (201) from the quantization unit (103) to the input terminals of the variable length coding unit (114) and the inverse quantization unit (104).

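- Suppose instead that the quantization output control unit (117) determines that the frequency conversion information belongs to the padding processing data (PD). The quantization output adjustment unit (116) then supplies the adjustment signal (200) to the input terminals of the variable length coding unit (114) and the inverse quantization unit (104) (see FIGS. 1 and 2).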
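The patent only requires the adjustment signal (200) to carry less data than the quantized output signal (201). The sketch below assumes the simplest such signal, an all-zero coefficient block, which entropy coders can signal very cheaply, for example with a coded block flag equal to zero. The function names and region labels are hypothetical. The same selected signal also feeds the inverse quantization unit (104), so the encoder's reference image stays consistent with what a decoder reconstructs.

```python
def adjust_quantized_output(quantized_201, region):
    """Model of units 116/117 for one block of quantized coefficients.

    region is the control unit's determination, "VS" or "PD". Only blocks judged
    to be padding are replaced, so real picture data is never discarded.
    """
    if region == "PD":
        # Assumed adjustment signal 200: same shape, every coefficient zero.
        return [[0] * len(row) for row in quantized_201]
    return quantized_201


def nonzero_count(block):
    """Crude proxy for code amount: number of nonzero coefficients."""
    return sum(1 for row in block for c in row if c != 0)


coeffs = [[12, -3, 0, 1], [4, 0, 0, 0], [-1, 0, 0, 0], [0, 0, 0, 0]]
print(nonzero_count(adjust_quantized_output(coeffs, "VS")))  # 5
print(nonzero_count(adjust_quantized_output(coeffs, "PD")))  # 0
```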

- The moving picture encoding device (1) defined in [2] further includes a motion vector detection control unit (118) connected to the motion vector detection unit (109).

- The motion vector detection unit (109) includes a motion vector search unit (1091), a prediction vector generation unit (1092), and a motion vector selector unit (1093).

- The motion vector search unit (1091) performs a motion vector search on a coding block that is included in the additional moving image signal and encoded by inter prediction. The search produces a search motion vector (MV).


- The prediction vector generation unit (1092) executes the motion vector prediction method defined in the standard H.264 or the standard H.265 on that inter-predicted coding block of the additional moving image signal. This produces a prediction vector (PMV).


- The motion vector detection control unit (118) examines the inter-predicted coding block, which is included in the additional moving image signal and is the syntax element related to the moving image signal. It determines whether the block belongs to the moving image signal (VS) or to the padding processing data (PD).

- The motion vector selector unit (1093) receives three inputs:
  - the search motion vector (MV) from the motion vector search unit (1091);
  - the prediction vector (PMV) from the prediction vector generation unit (1092);
  - the determination result from the motion vector detection control unit (118).

- Suppose the motion vector detection control unit (118) determines that the inter-predicted coding block belongs to the video signal (VS). The motion vector selector unit (1093) then supplies the search motion vector (MV) from the motion vector search unit (1091) to the motion compensation unit (110) as the motion vector (MV).

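- Suppose instead that the inter-predicted coding block is determined to belong to the padding processing data (PD). The motion vector selector unit (1093) then supplies the prediction vector (PMV) from the prediction vector generation unit (1092) to the motion compensation unit (110) as the motion vector (MV) (see FIGS. 1 and 3).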
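Selecting the prediction vector for padding blocks makes the motion vector difference (MVD) that is actually entropy-coded equal to zero. The sketch below forms the prediction as the component-wise median of the left, above and above-right neighbors' vectors. This is H.264's basic rule with its special cases omitted; H.265 instead builds an AMVP candidate list. The function names are hypothetical.

```python
def median_pmv(mv_left, mv_above, mv_above_right):
    """Component-wise median of three neighboring motion vectors (H.264 basic rule)."""
    def med(a, b, c):
        return sorted((a, b, c))[1]

    return (med(mv_left[0], mv_above[0], mv_above_right[0]),
            med(mv_left[1], mv_above[1], mv_above_right[1]))


def select_motion_vector(search_mv, pmv, region):
    """Model of selector 1093: searched MV for VS blocks, PMV for PD blocks.

    Returns the chosen MV and the MVD = MV - PMV that would be coded.
    """
    mv = pmv if region == "PD" else search_mv
    return mv, (mv[0] - pmv[0], mv[1] - pmv[1])


pmv = median_pmv((1, 2), (3, -1), (2, 5))            # (2, 2)
print(select_motion_vector((7, -3), pmv, "VS"))      # ((7, -3), (5, -5))
print(select_motion_vector((7, -3), pmv, "PD"))      # ((2, 2), (0, 0))
```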

- The video encoding device (1) defined in [2] further includes an intra prediction control unit (119) connected to the intra prediction unit (112).

- The intra prediction unit (112) includes an intra prediction direction determination unit (1121), a neighborhood prediction direction generation unit (1122), a prediction direction selector unit (1123), and an intra prediction processing unit (1124).

- The intra prediction direction determination unit (1121) performs an intra prediction operation on a coding block that is included in the additional moving image signal and encoded by intra prediction. The operation produces a prediction direction (PD).

- The neighborhood prediction direction generation unit (1122) executes the neighborhood direction prediction method defined in the standard H.264 or the standard H.265 on that intra-predicted coding block. This produces a neighborhood prediction direction (NPD).

- The intra prediction control unit (119) examines the intra-predicted coding block, which is included in the additional moving image signal and is the syntax element related to the moving image signal. It determines whether the block belongs to the moving image signal (VS) or to the padding processing data (PD).

- The prediction direction selector unit (1123) receives three inputs:
  - the prediction direction (PD) from the intra prediction direction determination unit (1121);
  - the neighborhood prediction direction (NPD) from the neighborhood prediction direction generation unit (1122);
  - the determination result from the intra prediction control unit (119).

- Suppose the intra prediction control unit (119) determines that the intra-predicted coding block belongs to the video signal (VS). The prediction direction selector unit (1123) then supplies the prediction direction (PD) from the intra prediction direction determination unit (1121) to the intra prediction processing unit (1124).

- The intra prediction processing unit (1124) then generates the intra prediction signal supplied to the selector unit (113). It uses the prediction direction (PD) from the intra prediction direction determination unit (1121) and the intra reference image stored in the memory (111).

- Suppose instead that the intra prediction control unit (119) determines that the intra-predicted coding block belongs to the padding processing data (PD). The prediction direction selector unit (1123) then supplies the neighborhood prediction direction (NPD) from the neighborhood prediction direction generation unit (1122) to the intra prediction processing unit (1124).

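- In that case the intra prediction processing unit (1124) generates the intra prediction signal supplied to the selector unit (113) from the neighborhood prediction direction (NPD) and the intra reference image stored in the memory (111) (see FIGS. 1 and 4).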
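In H.264, the neighbor-derived direction corresponds to the most probable mode (MPM) of a 4 × 4 block. The MPM is the smaller of the left and upper neighbors' mode numbers, or DC (mode 2) when a neighbor is unavailable. Signalling "use the MPM" costs a single flag bit, whereas any other mode needs the flag plus a three-bit remainder. The sketch below works under those assumptions, with hypothetical names and simplified availability rules. H.265 instead derives a list of three MPM candidates.

```python
DC_MODE = 2  # Intra_4x4_DC in H.264


def most_probable_mode(left_mode, above_mode):
    """H.264-style MPM for a 4 x 4 block; None marks an unavailable neighbor."""
    if left_mode is None or above_mode is None:
        return DC_MODE
    return min(left_mode, above_mode)


def select_intra_direction(searched_mode, left_mode, above_mode, region):
    """Model of selector 1123: searched direction for VS, neighbor-derived NPD for PD."""
    if region == "PD":
        return most_probable_mode(left_mode, above_mode)
    return searched_mode


print(select_intra_direction(7, 3, 1, "VS"))  # 7
print(select_intra_direction(7, 3, 1, "PD"))  # 1
```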

- The video encoding device (1) defined in [3] further includes a frequency conversion control unit (120) connected to the frequency conversion unit (102).

- The frequency conversion control unit (120) sets the frequency conversion size (TS) for the frequency conversion executed by the frequency conversion unit (102).

- The frequency conversion control unit (120) determines how the frequency conversion unit (102) partitions coding blocks. The goal is that no coding block processed by the frequency conversion contains both the moving image signal and the padding processing data (see FIGS. 1 and 5).

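- The frequency conversion control unit (120) includes four units:
  - a frequency conversion size determination unit (1201);
  - a non-crossover frequency conversion size determination unit (1202);
  - a region determination unit (1203);
  - a frequency conversion size selector unit (1204).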

- The frequency conversion size determination unit (1201) selects one frequency transform size (TS) from the candidate sizes defined in the standard H.264 or the standard H.265. It supplies the selected size to one input terminal of the frequency transform size selector unit (1204).

- The region determination unit (1203) determines whether a coding block of the selected frequency transform size (TS) crosses the boundary between the moving image signal and the padding processing data.

- The non-crossover frequency transform size determination unit (1202) generates a non-crossover frequency transform size (NTS). With this size, the coding block processed by the frequency conversion does not cross the boundary between the moving image signal and the padding processing data. The unit supplies the NTS to the other input terminal of the frequency transform size selector unit (1204).

- Suppose the region determination unit (1203) determines that the coding block of the selected frequency transform size does not cross the boundary. The frequency transform size selector unit (1204) then supplies the selected size to the frequency conversion unit (102) as the frequency transform size (TS).

- Suppose instead that the region determination unit (1203) determines that the coding block crosses the boundary. The frequency transform size selector unit (1204) then supplies the non-crossover frequency transform size (NTS) to the frequency conversion unit (102) as the frequency transform size (see FIGS. 1 and 5).
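The sketch below models units 1201 to 1204 under three assumptions:

- transform blocks are square;
- the candidates are the HEVC transform sizes 32, 16, 8 and 4 (H.264 uses only 8 and 4);
- the boundary is given by the width and height of VS before padding.

Only the transform block at the given position is sized; the remaining area would be covered by further blocks chosen the same way. All names are hypothetical.

```python
TS_CANDIDATES = (32, 16, 8, 4)   # largest first


def crosses_boundary(x, y, size, width, height):
    """Region determination (1203): True if the block holds both VS and PD samples."""
    vs_w = max(0, min(x + size, width) - x)
    vs_h = max(0, min(y + size, height) - y)
    return 0 < vs_w * vs_h < size * size


def non_crossover_size(x, y, size, width, height):
    """Unit 1202: largest candidate not above `size` whose block does not cross."""
    for ts in TS_CANDIDATES:
        if ts <= size and not crosses_boundary(x, y, ts, width, height):
            return ts
    return TS_CANDIDATES[-1]   # fall back to the smallest size


def select_transform_size(x, y, selected_ts, width, height):
    """Selector 1204: keep the selected size unless its block crosses the boundary."""
    if crosses_boundary(x, y, selected_ts, width, height):
        return non_crossover_size(x, y, selected_ts, width, height)
    return selected_ts


# 1920 x 1080 picture: a 32 x 32 block at y = 1056 would cover rows 1056..1087.
print(select_transform_size(0, 1056, 32, 1920, 1080))  # 16
print(select_transform_size(0, 1024, 32, 1920, 1080))  # 32
```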

- The moving picture encoding device (1) defined in [2] further includes a quantization parameter control unit (121) connected to the quantization unit (103).

- The quantization parameter control unit (121) includes a quantization parameter generation unit (1211), a quantization parameter register unit (1212), a region determination unit (1213), and a quantization parameter selector unit (1214).

- The quantization parameter generation unit (1211) generates a quantization parameter (QP) according to the code amount of the coded bitstream (CVBS) output by the variable length coding unit (114). It supplies the QP to one input terminal of the quantization parameter selector unit (1214) and to the input terminal of the quantization parameter register unit (1212).


- The quantization parameter (QP) at the output terminal of the quantization parameter register unit (1212) is supplied to the other input terminal of the quantization parameter selector unit (1214).

- The region determination unit (1213) examines the coding block quantized by the quantization unit (103), which is the syntax element related to the moving image signal. It determines whether the block belongs to the moving image signal (VS) or to the padding processing data (PD).

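- Suppose the coding block quantized by the quantization unit (103) is determined to belong to the moving image signal (VS). The quantization parameter selector unit (1214) then supplies the quantization parameter (QP) from the quantization parameter generation unit (1211), received at its one input terminal, to the quantization unit (103).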

- Suppose instead that the region determination unit (1213) determines that the coding block quantized by the quantization unit (103) belongs to the padding processing data (PD). The quantization parameter selector unit (1214) then supplies the quantization parameter (QP) from the output terminal of the quantization parameter register unit (1212), received at its other input terminal, to the quantization unit (103) (see FIGS. 1 and 6).
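Reusing the held quantization parameter for padding blocks keeps the coded QP difference at zero, its cheapest value. The coded difference is mb_qp_delta in H.264 and cu_qp_delta_abs in HEVC. The sketch below assumes the register (1212) is updated only when a VS block is coded, so it always holds the last QP actually used. The class and method names are hypothetical.

```python
class QuantizationParameterControl:
    """Model of units 1211-1214 for a sequence of coding blocks."""

    def __init__(self, initial_qp):
        self.register_qp = initial_qp   # quantization parameter register unit 1212

    def select(self, generated_qp, region):
        """Return (QP for quantization unit 103, QP difference versus the previous block)."""
        previous = self.register_qp
        if region == "PD":
            qp = previous                # other input of selector 1214: held QP
        else:
            qp = generated_qp            # one input of selector 1214: rate-control QP
            self.register_qp = qp        # assumed: register latches only VS-block QPs
        return qp, qp - previous


ctrl = QuantizationParameterControl(initial_qp=30)
print(ctrl.select(32, "VS"))  # (32, 2)
print(ctrl.select(40, "PD"))  # (32, 0)
print(ctrl.select(28, "VS"))  # (28, -4)
```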

- The video encoding device (1) defined in any one of [2] to [8] further includes a filter unit (107) connected to the memory (108) and a filter control unit (122).

- The filter unit (107) applies deblocking filtering to the result of the local decoding performed by the inverse quantization unit (104) and the inverse frequency conversion unit (105). It stores the deblocked result in the memory (108).

- The filter control unit (122) determines whether the locally decoded result to be deblocked by the filter unit (107) belongs to the video signal (VS) or to the padding processing data (PD).
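The source states that the filter control unit (122) serves to lower the power consumed by deblocking. One plausible policy is to skip every block edge whose two adjacent blocks both lie entirely in PD, since no displayed sample can change there. This policy is an assumption, not the patent's stated rule. The sketch below enumerates the vertical and horizontal edges of an 8 × 8 grid under that policy, with hypothetical names.

```python
def is_padding_block(x, y, width, height):
    """A grid-aligned block whose top-left sample lies outside VS is entirely padding,
    because PD only extends to the right of and below VS."""
    return x >= width or y >= height


def edges_to_deblock(padded_w, padded_h, width, height, grid=8):
    """List (x, y, direction) of grid edges to filter, skipping PD-only edges.

    A "vertical" edge at (x, y) separates the block at (x - grid, y) from the
    block at (x, y); a "horizontal" edge separates (x, y - grid) from (x, y).
    """
    edges = []
    for y in range(0, padded_h, grid):
        for x in range(0, padded_w, grid):
            q_pad = is_padding_block(x, y, width, height)
            if x > 0 and not (q_pad and is_padding_block(x - grid, y, width, height)):
                edges.append((x, y, "vertical"))
            if y > 0 and not (q_pad and is_padding_block(x, y - grid, width, height)):
                edges.append((x, y, "horizontal"))
    return edges


# 16 x 8 picture padded to 16 x 16: the bottom row of blocks is padding.
print(edges_to_deblock(16, 16, 16, 8))
# [(8, 0, 'vertical'), (0, 8, 'horizontal'), (8, 8, 'horizontal')]
```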

- The motion vector detection unit, the motion compensation unit, and the subtracter are integrated on one semiconductor chip of a semiconductor integrated circuit (see FIG. 1).

- The coding block size of the video encoding process is one of the following (see FIG. 1):
  - a macroblock of 16 × 16 pixels;
  - a coding unit formed from a maximum coding unit of 64 × 64 pixels.

- The video encoding device (1) defined in any one of [2] to [8] forms the encoded bitstream (CVBS) by encoding the moving image signal (VS) according to a method selected from the standard H.264 and the standard H.265 (see FIG. 1).

- The moving picture encoding device defined in any one of [2] to [8] includes an image dividing unit (301) and a plurality of moving image encoding processing units (1A, 1B, 1C, 1D).

- The image dividing unit (301) generates a plurality of divided moving image signals by dividing the moving image signal (VS).

- The moving image encoding processing units (1A, 1B, 1C, 1D) process the divided moving image signals generated by the image dividing unit in parallel.

- Each of the moving image encoding processing units (1A, 1B, 1C, 1D) includes the following (see FIG. 9):
  - the motion vector detection unit;
  - the motion compensation unit;
  - the subtracter;
  - the frequency conversion unit;
  - the quantization unit;
  - the inverse quantization unit;
  - the inverse frequency conversion unit;
  - the intra prediction unit;
  - the selector unit;
  - the variable length coding unit.

- The image dividing unit (301) and the moving image encoding processing units (1A, 1B, 1C, 1D) are integrated on one semiconductor chip of a semiconductor integrated circuit (see FIG. 9).
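The sketch below shows the image dividing unit (301) feeding several encoding units in parallel. It makes two assumptions:

- the frame is cut into horizontal, slice-like strips aligned to whole rows of 16-sample blocks;
- a thread pool stands in for the hardware units 1A to 1D.

`encode_strip` is a hypothetical placeholder that only summarizes its input; it is not an encoder.

```python
from concurrent.futures import ThreadPoolExecutor


def split_rows(frame, parts, align=16):
    """Image dividing unit (301): cut the frame into `parts` strips of whole block rows."""
    height = len(frame)
    block_rows = -(-height // align)   # ceiling division
    bounds = [min(height, align * (block_rows * i // parts)) for i in range(parts + 1)]
    return [frame[bounds[i]:bounds[i + 1]] for i in range(parts)]


def encode_strip(strip):
    """Placeholder for one encoding processing unit: returns (rows, sample sum)."""
    return len(strip), sum(sum(row) for row in strip)


def encode_parallel(frame, parts=4):
    strips = split_rows(frame, parts)
    with ThreadPoolExecutor(max_workers=parts) as pool:
        return list(pool.map(encode_strip, strips))   # results keep strip order


frame = [[y] * 4 for y in range(64)]   # 64 rows, 4 samples wide
print(encode_parallel(frame))          # [(16, 480), (16, 1504), (16, 2528), (16, 3552)]
```

In a real encoder each strip would be a slice or tile, so the strips can be coded independently.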

- An embodiment according to another aspect is an operating method of a moving picture coding apparatus (1). The apparatus forms a coded bitstream (CVBS) by executing moving picture coding of syntax elements related to a moving picture signal (VS) to be coded.



- Prior to the moving picture coding process, the moving picture coding apparatus (1) executes a padding process (100) that adds padding processing data (PD) to the moving picture signal (VS).


- The padding process sets the horizontal and vertical sizes of the additional moving image signal to integer multiples of the coding block size of the moving picture coding process. The additional moving image signal is the moving image signal with the padding processing data (PD) added.

- The moving picture coding apparatus (1) determines whether the coding block of the syntax element related to the moving picture signal belongs to the moving picture signal (VS) or to the padding processing data (PD).

- First case: the coding block is determined to belong to the moving picture signal (VS). The moving picture encoding process is then controlled so that the encoded bitstream is formed with a first code amount.

- Second case: the coding block is determined to belong to the padding processing data (PD). The moving picture encoding process is then controlled so that the encoded bitstream is formed with a second code amount smaller than the first code amount.

- FIG. 1 is a diagram showing a configuration of a moving picture encoding apparatus 1 according to the first embodiment.

- Like the moving picture encoding apparatus 1 shown in FIG. 10, the moving picture encoding apparatus 1 of FIG. 1 can generate an encoded bitstream by encoding a moving image input signal. It uses a method selected from the current standard H.264 and the HEVC standard.

- The moving image encoding apparatus 1 includes the following units:
  - a padding processing unit 100;
  - a subtracter 101;
  - a frequency conversion unit 102;
  - a quantization unit 103;
  - an inverse quantization unit 104;
  - an inverse frequency conversion unit 105;
  - an adder 106;
  - a variable length coding unit 114;
  - a video buffer 115;
  - a filter unit 107;
  - a frame memory 108;
  - a motion vector detection unit 109;
  - a motion compensation unit 110;
  - a buffer memory 111;
  - an intra prediction unit 112;
  - a selector unit 113.

- The moving image coding apparatus 1 of FIG. 1 also includes the following units, none of which the moving image coding apparatus 1 of FIG. 10 has:
  - a quantization output adjustment unit 116;
  - a quantization output control unit 117;
  - a motion vector detection control unit 118;
  - an intra prediction control unit 119;
  - a frequency conversion control unit 120;
  - a quantization parameter control unit 121.

- To achieve low power consumption, the moving picture encoding apparatus 1 according to the first embodiment also includes a filter control unit 122 that controls the deblocking filter of the filter unit 107.

- The moving picture coding apparatus 1 forms a coded bitstream CVBS by executing moving picture coding of syntax elements related to the moving picture signal VS to be coded.

- Prior to the moving picture coding, the moving picture encoding apparatus 1 executes a padding process that adds padding processing data PD to the moving picture signal VS.

- The padding process sets the horizontal and vertical sizes of the additional moving image signal, to which the padding processing data PD has been added, to integer multiples of the coding block size of the moving image coding process.

- The moving picture coding apparatus 1 determines whether the coding block of the syntax element related to the moving picture signal VS belongs to the moving picture signal VS or to the padding processing data PD.

- First case: the coding block is determined to belong to the moving picture signal VS. The moving image encoding process is then controlled so that the encoded bitstream CVBS is formed with a first code amount.

- the first example of a syntax element related to the video signal VS to be encoded is the frequency conversion processing information of the frequency conversion unit 102, quantized by the quantization unit 103.

- a second example of a syntax element related to the moving image signal VS to be encoded is information on a coding block encoded by inter prediction using a motion vector and a motion compensated prediction signal.

- a third example of the syntax element related to the moving image signal VS to be encoded is information on a coding block encoded by intra prediction using an intra reference image.

- a fourth example of the syntax element related to the moving image signal VS to be encoded is information on a coding block that is quantized by the quantization unit 103.

- the frequency conversion processing information of the frequency conversion unit 102 quantized by the quantization unit 103 is supplied to the input terminal of the quantization output adjustment unit 116.

- the motion vector detection unit 109 itself controls the partitioning of coding blocks within the motion vector detection unit 109. Each coding block that the motion compensation unit 110 inter-codes, using the motion vector MV generated by the motion vector detection unit 109, therefore includes only one of the moving image signal VS and the padding processing data PD.

- the intra prediction unit 112 itself controls the partitioning of coding blocks within the intra prediction unit 112. Each coding block intra-coded using the intra prediction direction therefore includes only one of the moving image signal VS and the padding processing data PD.

- when the quantization unit 103 quantizes the frequency conversion coefficients of the frequency conversion unit 102, the quantization unit 103 itself controls the partitioning of coding blocks. The frequency conversion coefficients of each coding block therefore include only one of the moving image signal VS and the padding processing data PD.

- By selecting and executing at least one of the first to fourth examples described above, the increase in the code amount of the encoded bitstream CVBS caused by the padding process can be reduced.

- in the moving picture encoding apparatus 1 according to the first embodiment, selecting and executing a plurality of the first to fourth examples described above further reduces the increase in the code amount of the encoded bitstream CVBS caused by the padding process.

- the increase in the code amount of the encoded bitstream CVBS caused by the padding process can thus be greatly reduced.

- the filter unit 107 itself controls the partitioning of coding blocks within the filter unit 107. Each local decoding result (coding block) subjected to deblocking filter processing by the filter unit 107 therefore includes only one of the moving image signal VS and the padding processing data PD.

- FIG. 2 is a diagram for explaining operations of the quantization output adjustment unit 116 and the quantization output control unit 117 included in the moving picture coding apparatus 1 according to the first embodiment.

- the coding unit (CU) of the moving image signal VS, with the padding processing data PD added, is supplied to one input terminal of the subtractor 101. Either the motion compensated prediction signal from the motion compensation unit 110 or the intra prediction information from the intra prediction unit 112 is supplied to the other input terminal via the selector unit 113. The subtractor 101 then outputs a prediction residual from its output terminal.

- the frequency conversion unit 102 and the quantization unit 103 perform frequency conversion processing and quantization processing, respectively.

- the quantized frequency conversion coefficient 201 of the frequency conversion unit 102 is generated from the output terminal of the quantization unit 103.

- the frequency transform unit 102 performs an integer-based discrete cosine transform (DCT) or discrete sine transform (DST). Either transform outputs only integer transform coefficients with no fractional part.
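To illustrate why such a transform yields only integer coefficients, the sketch below applies the 4 × 4 integer DCT and DST basis matrices specified in HEVC (H.265) as a separable product Y = C·X·Cᵀ. The description does not name these particular matrices, so treat their use here as an assumption. The intermediate scaling and right-shift stages of a real HEVC encoder are also omitted. The helper names are illustrative.

```python
from typing import List

Matrix = List[List[int]]

# 4x4 integer basis matrices as specified in HEVC (H.265)
DCT4: Matrix = [[64, 64, 64, 64],
                [83, 36, -36, -83],
                [64, -64, -64, 64],
                [36, -83, 83, -36]]
DST4: Matrix = [[29, 55, 74, 84],
                [74, 74, 0, -74],
                [84, -29, -74, 55],
                [55, -84, 74, -29]]


def matmul(a: Matrix, b: Matrix) -> Matrix:
    return [[sum(a[i][k] * b[k][j] for k in range(len(b)))
             for j in range(len(b[0]))] for i in range(len(a))]


def transpose(m: Matrix) -> Matrix:
    return [list(row) for row in zip(*m)]


def forward_transform(residual: Matrix, basis: Matrix) -> Matrix:
    """2-D separable transform Y = C * X * C^T using integer arithmetic only."""
    return matmul(matmul(basis, residual), transpose(basis))


flat = [[1] * 4 for _ in range(4)]
print(forward_transform(flat, DCT4)[0][0])  # 65536 (DC only; all others 0)
```

Because every product and sum involves integers only, the output never contains fractions. That property is what allows the zero and non-zero coefficients described next to be counted exactly.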

- the frequency conversion coefficient 201 shown in FIG. 2 includes three integer non-zero coefficients (“5”, “-1”, “2”) and thirteen zero coefficients (“0”).

- the frequency conversion coefficient 201 is supplied to one input terminal of the quantization output adjustment unit 116 configured by the selector SEL.

- an adjusted frequency conversion coefficient 200 consisting of sixteen zero coefficients (“0”) is supplied to the other input terminal of the quantization output adjustment unit 116, which is configured by the selector SEL. This lets the variable length encoding unit 114 generate an encoded bitstream CVBS with a small code amount.

- the output terminal of the quantization output control unit 117 is connected to the selection control terminal of the quantization output adjustment unit 116 constituted by the selector SEL.

- Size information Size_Inf, indicating the horizontal and vertical pixel sizes of the coding unit (CU) supplied to one input terminal of the subtractor 101, is supplied to one input terminal of the quantization output control unit 117. Position information Position_Inf of the same coding unit (CU) is supplied to the other input terminal of the quantization output control unit 117.

- This position information Position_Inf is the raster scan start address (X, Y) at the upper left of the coding unit (CU).

- FIG. 8 is a diagram illustrating how coding units (CU) are adaptively divided from the maximum coding unit (LCU). Accordingly, at least one of the following raster scan start addresses is supplied to the quantization output control unit 117 as position information Position_Inf: the upper-left address of the maximum coding unit (LCU), or the upper-left address of a coding unit (CU) inside that LCU.
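As a concrete reading of the two addresses above, the sketch derives the upper-left (X, Y) of an LCU from its raster-scan index, then offsets it by a CU's position inside that LCU. Several details are assumptions, not taken from the description:
- LCUs are numbered in raster order across the padded picture width.
- The CU offset is already known in pixels.
- The helper names `lcu_origin` and `cu_origin` are hypothetical.

```python
def lcu_origin(lcu_index: int, padded_width: int, lcu_size: int) -> tuple:
    """Upper-left (X, Y) of an LCU numbered in raster-scan order."""
    lcus_per_row = padded_width // lcu_size
    return ((lcu_index % lcus_per_row) * lcu_size,
            (lcu_index // lcus_per_row) * lcu_size)


def cu_origin(lcu_index: int, offset_x: int, offset_y: int,
              padded_width: int, lcu_size: int) -> tuple:
    """Upper-left (X, Y) of a CU given its pixel offset inside its LCU."""
    lcu_x, lcu_y = lcu_origin(lcu_index, padded_width, lcu_size)
    return lcu_x + offset_x, lcu_y + offset_y


# 1920-wide padded picture, 64x64 LCUs -> 30 LCUs per row.
print(lcu_origin(31, 1920, 64))         # (64, 64)
print(cu_origin(31, 32, 16, 1920, 64))  # (96, 80)
```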

- using the size information Size_Inf and the position information Position_Inf of the coding unit (CU), the quantization output control unit 117 determines whether the coding unit (CU) belongs to the moving image signal VS or to the padding processing data PD.

- when the quantization output control unit 117 determines that the coding unit (CU) belongs to the video signal VS, it generates a selection output signal at its output terminal, for example at high level “1”.

- in response to the high level “1” selection output signal, the quantization output adjustment unit 116 selects the frequency conversion coefficient 201 supplied to its one input terminal and outputs it to its output terminal. This coefficient includes three non-zero coefficients and thirteen zero coefficients, and it is supplied from the adjustment unit to the input terminals of the variable length coding unit 114 and the inverse quantization unit 104.

- As a result, the variable length coding unit 114 forms a coded bitstream CVBS with a relatively large code amount, the first code amount. In this case, the video decoding device (Video Decoder) that receives the encoded bitstream CVBS can reproduce the video signal VS with high image quality.

- when the quantization output control unit 117 determines that the coding unit (CU) belongs to the padding processing data PD, it generates a selection output signal at its output terminal, for example at low level “0”. In response, the quantization output adjustment unit 116 selects the adjusted frequency conversion coefficient 200 supplied to its other input terminal, which contains sixteen zero coefficients, and outputs it to its output terminal.

- this adjusted frequency conversion coefficient 200, containing sixteen zero coefficients, is supplied to the input terminal of the variable length encoding unit 114 and the input terminal of the inverse quantization unit 104.

- the variable length coding unit 114 forms a coded bitstream CVBS having a second code amount smaller than the first code amount. Therefore, in this case, it is possible to reduce an increase in the code amount of the encoded bitstream CVBS by the padding process of the padding processing unit 100.

- when the encoded bitstream CVBS is stored on a recording medium with a fixed video storage capacity, such as a nonvolatile memory or a DVD, more video can be stored and the recording time can be lengthened.

- the frequency conversion coefficient 201 or the adjusted frequency conversion coefficient 200 output from the quantization output adjustment unit 116 goes to both the variable length coding unit 114 and the inverse quantization unit 104. This prevents a mismatch between two images. The first is the local decoding result produced by the inverse quantization unit 104, the inverse frequency conversion unit 105, the adder 106, the filter unit 107, and the frame memory 108. The second is the image reproduced by the moving picture decoding apparatus (Video Decoder) that receives the encoded bitstream CVBS.
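The control and selection behavior described above can be sketched as two small functions. The control function turns Position_Inf and Size_Inf into the 1/0 selection signal, and the selector SEL passes either the quantized coefficients 201 or the all-zero coefficients 200.

The sketch assumes partitioning has already guaranteed that a CU lies wholly inside VS or wholly inside PD. The coefficient scan order in `coeffs_201` is hypothetical; FIG. 2 gives only the three non-zero values. Function names are illustrative.

```python
from typing import List, Tuple


def quantization_output_control(position_inf: Tuple[int, int],
                                size_inf: Tuple[int, int],
                                vs_width: int, vs_height: int) -> int:
    """Selection signal: 1 if the CU belongs to VS, 0 if it belongs to PD."""
    x, y = position_inf  # raster-scan start address of the CU's upper-left pixel
    w, h = size_inf      # horizontal and vertical pixel size of the CU
    # Assumes the CU lies wholly inside VS or wholly inside PD.
    return 1 if (x + w <= vs_width and y + h <= vs_height) else 0


def quantization_output_adjust(coeffs: List[int], select: int) -> List[int]:
    """Selector SEL: pass coefficients 201 (select=1) or all-zero coefficients 200."""
    return list(coeffs) if select == 1 else [0] * len(coeffs)


# One 4x4 coefficient block of the CU, in a hypothetical scan order,
# holding the three non-zero values of FIG. 2.
coeffs_201 = [5, -1, 0, 2] + [0] * 12

sel = quantization_output_control((0, 1080), (8, 8), 1920, 1080)
out = quantization_output_adjust(coeffs_201, sel)
print(sel, out == [0] * 16)  # 0 True
```

In the apparatus, the same `out` list would feed both the variable length encoder and the inverse quantizer. Using one output for both paths is what keeps the local decoding result consistent with the decoder's reconstruction.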

- in the moving image encoding apparatus 1, the quantization output adjustment unit 116 is connected between the output terminal of the quantization unit 103 and both the input terminal of the variable length encoding unit 114 and the input terminal of the inverse quantization unit 104.

- in another embodiment, two quantization output adjustment units 116 can be used. The first is connected between the output terminal of the quantization unit 103 and the input terminal of the variable length encoding unit 114. The second is connected between the output terminal of the quantization unit 103 and the input terminal of the inverse quantization unit 104.

- a single quantization output control unit 117 then controls the first and second quantization output adjustment units 116 in common by determining whether the coding unit (CU) belongs to the video signal VS or to the padding processing data PD. This other embodiment likewise reduces the increase in the code amount of the encoded bitstream CVBS caused by the padding processing of the padding processing unit 100. It also prevents a mismatch between the local decoding result and the image reproduced by the moving picture decoding apparatus.

- the moving picture encoding apparatus 1 of the first embodiment may instead generate the encoded bitstream CVBS by encoding a moving image input signal in accordance with the current H.264 standard. In that case, it processes a macroblock (MB) with a luminance component size of 16 × 16 pixels instead of the coding unit (CU) described above.

- in that case as well, the frequency conversion coefficient 201, the adjusted frequency conversion coefficient 200, or the like is output from the output terminal of the quantization output adjustment unit 116.

- FIG. 3 is a diagram for explaining the configuration and operation of the motion vector detection control unit 118 and the motion vector detection unit 109 included in the video encoding device 1 according to the first embodiment.

- the motion vector detection unit 109 includes a motion vector search unit 1091, a prediction vector generation unit 1092, and a motion vector selector unit 1093.

- the motion vector search unit 1091 generates a motion vector MV by executing a general motion vector search operation according to the MPEG-2 and MPEG-4 standards. The generated motion vector MV is supplied to one input terminal of the motion vector selector unit 1093.

- in the general motion vector search operation executed by the motion vector search unit 1091, the motion vector MV of a coding unit (CU) to be encoded by inter prediction is searched for and generated. The search refers to the motion vectors MV of coding units (CU) in its vicinity.
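A generic way to carry out such a search is exhaustive block matching: every displacement in a window is evaluated, and the one with the lowest sum of absolute differences (SAD) is kept. The description does not specify the cost function or search pattern, so SAD and full search are substitutions here. The optional `center` argument stands in for a starting point that could be derived from neighboring CUs' motion vectors, which is also an assumption. Function names are illustrative.

```python
from typing import List, Optional, Tuple

Frame = List[List[int]]


def sad(cur: Frame, ref: Frame, bx: int, by: int, dx: int, dy: int, n: int) -> int:
    """Sum of absolute differences between the current block and a displaced reference block."""
    return sum(abs(cur[by + j][bx + i] - ref[by + dy + j][bx + dx + i])
               for j in range(n) for i in range(n))


def full_search(cur: Frame, ref: Frame, bx: int, by: int, n: int,
                search_range: int, center: Tuple[int, int] = (0, 0)):
    """Exhaustive block matching around `center`; returns (motion vector, SAD)."""
    height, width = len(ref), len(ref[0])
    best: Optional[Tuple[int, Tuple[int, int]]] = None
    for dy in range(center[1] - search_range, center[1] + search_range + 1):
        for dx in range(center[0] - search_range, center[0] + search_range + 1):
            # Skip candidates whose reference block leaves the reference picture.
            if not (0 <= bx + dx and bx + dx + n <= width and
                    0 <= by + dy and by + dy + n <= height):
                continue
            cost = sad(cur, ref, bx, by, dx, dy, n)
            if best is None or cost < best[0]:
                best = (cost, (dx, dy))
    assert best is not None, "no candidate inside the reference picture"
    return best[1], best[0]


# A bright 2x2 square that moved by (-1, -1) between the reference and current frames.
ref = [[0] * 8 for _ in range(8)]
cur = [[0] * 8 for _ in range(8)]
for j in (3, 4):
    for i in (3, 4):
        ref[j][i] = 100
for j in (2, 3):
    for i in (2, 3):
        cur[j][i] = 100
print(full_search(cur, ref, 2, 2, 2, 2))  # ((1, 1), 0)
```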

- the prediction vector generation unit 1092 generates a prediction vector PMV by executing the prediction method defined by the current H.264 standard and the HEVC standard. The generated prediction vector PMV is supplied to the other input terminal of the motion vector selector unit 1093.
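For H.264, the general-case prediction vector is the component-wise median of the motion vectors of three neighboring blocks: left (A), above (B), and above-right (C). The sketch below covers only that general case. It omits the standard's special rules for unavailable neighbors, for a single neighbor sharing the reference index, and for 16 × 8 and 8 × 16 partitions. Function names are illustrative.

```python
from typing import Tuple

MV = Tuple[int, int]


def median3(a: int, b: int, c: int) -> int:
    """Median of three integers without sorting."""
    return max(min(a, b), min(max(a, b), c))


def h264_median_pmv(mv_a: MV, mv_b: MV, mv_c: MV) -> MV:
    """Component-wise median of the left (A), above (B), above-right (C) neighbours."""
    return (median3(mv_a[0], mv_b[0], mv_c[0]),
            median3(mv_a[1], mv_b[1], mv_c[1]))


print(h264_median_pmv((4, -2), (1, 3), (2, 0)))  # (2, 0)
```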

- a candidate list of motion vectors that can serve as predicted values is generated for a coding unit (CU) encoded by inter prediction, and the prediction vector is selected from the motion vectors included in the list.
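In HEVC's advanced motion vector prediction (AMVP), the encoder signals an index into a two-entry candidate list plus a motion vector difference (MVD), rather than the full motion vector. The sketch picks the candidate that minimizes a simple proxy for MVD cost, the sum of absolute component differences. A real encoder would estimate the actual bit cost, so the proxy is an assumption. The function name is illustrative.

```python
from typing import List, Tuple

MV = Tuple[int, int]


def choose_predictor(mv: MV, candidates: List[MV]) -> Tuple[int, MV]:
    """Pick the candidate giving the cheapest MVD; return (index, mvd)."""
    def cost(pmv: MV) -> int:
        # Proxy for MVD bit cost: sum of absolute component differences.
        return abs(mv[0] - pmv[0]) + abs(mv[1] - pmv[1])

    best_idx = min(range(len(candidates)), key=lambda i: cost(candidates[i]))
    pmv = candidates[best_idx]
    return best_idx, (mv[0] - pmv[0], mv[1] - pmv[1])


# Two-entry candidate list, as in HEVC AMVP.
print(choose_predictor((5, 3), [(0, 0), (4, 4)]))  # (1, (1, -1))
```

Ties resolve to the lower index because `min` returns the first minimal element.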