WO2013008538A1 - Image processing apparatus and image processing method - Google Patents


- Publication number

- WO2013008538A1 (international application PCT/JP2012/063321)

- Authority

- WO

- WIPO (PCT)

- Prior art keywords

- motion vector

- unit

- prediction unit

- layer

- information


Classifications

All codes below fall under H04N19/00 (Methods or arrangements for coding, decoding, compressing or decompressing digital video signals), within H04N (PICTORIAL COMMUNICATION, e.g. TELEVISION).

- H04N19/51—Motion estimation or motion compensation

- H04N19/103—Selection of coding mode or of prediction mode

- H04N19/139—Analysis of motion vectors, e.g. their magnitude, direction, variance or reliability

- H04N19/176—Methods or arrangements for coding, decoding, compressing or decompressing digital video signals using adaptive coding characterised by the coding unit, i.e. the structural portion or semantic portion of the video signal being the object or the subject of the adaptive coding the unit being an image region, e.g. an object the region being a block, e.g. a macroblock

- H04N19/187—Methods or arrangements for coding, decoding, compressing or decompressing digital video signals using adaptive coding characterised by the coding unit, i.e. the structural portion or semantic portion of the video signal being the object or the subject of the adaptive coding the unit being a scalable video layer

- H04N19/30—Methods or arrangements for coding, decoding, compressing or decompressing digital video signals using hierarchical techniques, e.g. scalability

- H04N19/36—Scalability techniques involving formatting the layers as a function of picture distortion after decoding, e.g. signal-to-noise [SNR] scalability

- H04N19/46—Embedding additional information in the video signal during the compression process

- H04N19/513—Processing of motion vectors

- H04N19/52—Processing of motion vectors by encoding by predictive encoding

Definitions

- The present disclosure relates to an image processing apparatus and an image processing method.

- To transmit or store digital images efficiently, compression techniques that reduce the amount of image information by exploiting redundancy specific to images are used. Standards such as H.26x (ITU-T Q6/16 VCEG) and MPEG-y (Moving Picture Experts Group) are widespread.

- H.264 and MPEG-4 Part 10 (Advanced Video Coding; AVC) is an international standard that builds on the H.26x standards and incorporates new functions to achieve a higher compression rate.

- In inter-frame prediction, the content of the image to be encoded is predicted using a reference image, and only the difference between the predicted image and the actual image is encoded. This reduces the code amount.

- When an object moves significantly between images, however, the difference between the predicted image and the actual image becomes large, and simple inter-frame prediction cannot achieve high compression. Therefore, the motion of the object is recognized as a motion vector, and the pixel values of the region where the motion appears are compensated according to that motion vector, which reduces the prediction error in inter-frame prediction.

- Such a method is called motion compensation.
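A minimal sketch of motion-compensated prediction, assuming integer-pixel motion vectors and a frame stored as a list of rows; the helper names `predict_block` and `residual` are hypothetical, and real codecs also interpolate fractional positions and handle picture edges. It shows that shifting the reference block by the motion vector can shrink the prediction error that remains to be encoded.

```python
from typing import List, Tuple

Frame = List[List[int]]  # frame as rows of luma samples


def predict_block(ref: Frame, x: int, y: int, w: int, h: int,
                  mv: Tuple[int, int]) -> Frame:
    """Copy the w x h block at (x, y) of the reference frame, displaced by mv = (dx, dy)."""
    dx, dy = mv
    return [[ref[y + dy + j][x + dx + i] for i in range(w)] for j in range(h)]


def residual(cur: Frame, pred: Frame) -> Frame:
    """Prediction error: the only part that still has to be encoded."""
    return [[c - p for c, p in zip(cr, pr)] for cr, pr in zip(cur, pred)]


# Reference frame: a bright 2x2 object whose top-left corner is at (1, 1)
ref = [[0] * 4 for _ in range(4)]
for yy in (1, 2):
    for xx in (1, 2):
        ref[yy][xx] = 9

# Current frame: the same object has moved one pixel to the right
cur = [[0] * 4 for _ in range(4)]
for yy in (1, 2):
    for xx in (2, 3):
        cur[yy][xx] = 9

block = [row[2:4] for row in cur[1:3]]                            # 2x2 block at (2, 1)
no_mc = residual(block, predict_block(ref, 2, 1, 2, 2, (0, 0)))   # plain inter-frame difference
with_mc = residual(block, predict_block(ref, 2, 1, 2, 2, (-1, 0)))  # motion-compensated
print(no_mc)    # [[0, 9], [0, 9]]
print(with_mc)  # [[0, 0], [0, 0]]
```

With the motion vector (-1, 0) the residual vanishes, whereas the uncompensated difference still carries the moved edge.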

- In HEVC, each coding unit (CU) in an image is further divided into one or more prediction units (PUs), and a motion vector can be set for each prediction unit.

- HEVC prediction units come in more diverse sizes and shapes than H.264/AVC blocks, so motion compensation can reflect the motion of an object more accurately (see Non-Patent Document 1 below).

- Non-Patent Document 2 below proposes reducing the code amount of motion vectors by predicting each motion vector from the spatial or temporal correlation of motion and encoding only the difference between the predicted motion vector and the actual motion vector.
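The differential coding idea can be sketched as follows, assuming motion vectors are integer (dx, dy) pairs; `encode_mvd` and `decode_mv` are hypothetical names. The encoder sends only the difference from the predicted vector, and the decoder, which can form the same prediction, adds it back.

```python
from typing import Tuple

MV = Tuple[int, int]


def encode_mvd(mv: MV, pmv: MV) -> MV:
    """Encoder side: transmit only the difference from the predicted motion vector."""
    return (mv[0] - pmv[0], mv[1] - pmv[1])


def decode_mv(mvd: MV, pmv: MV) -> MV:
    """Decoder side: rebuild the motion vector from the same prediction."""
    return (mvd[0] + pmv[0], mvd[1] + pmv[1])


mv, pmv = (13, -7), (12, -6)  # actual and predicted motion vectors
mvd = encode_mvd(mv, pmv)
print(mvd)  # (1, -1)
```

When the prediction is good, the difference has small components, which variable-length codes represent with fewer bits than the full vector.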

- Non-Patent Document 3 below proposes reducing the code amount of motion information by merging adjacent blocks in an image that share common motion information.
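A simplified sketch of block merging, assuming that motion information is a motion vector plus a reference picture index and that the encoder checks neighbors in a fixed order; `MotionInfo` and `signal_motion` are hypothetical and do not reproduce the exact signaling of Non-Patent Document 3. When a neighbor already carries identical motion information, only a merge decision and a neighbor index need to be coded.

```python
from dataclasses import dataclass
from typing import Optional, Sequence, Tuple


@dataclass(frozen=True)
class MotionInfo:
    mv: Tuple[int, int]  # motion vector (dx, dy)
    ref_idx: int         # index of the reference picture


def signal_motion(cur: MotionInfo,
                  neighbors: Sequence[Optional[MotionInfo]]) -> Tuple[str, object]:
    """Return ("merge", i) if neighbor i has identical motion info, else ("explicit", cur).

    None marks a neighbor that does not exist (e.g. at the picture edge).
    """
    for i, nb in enumerate(neighbors):
        if nb is not None and nb == cur:
            return ("merge", i)  # only the merge decision and a neighbor index are sent
    return ("explicit", cur)     # motion information must be coded as usual


left = MotionInfo((4, 0), 0)
above = MotionInfo((3, 1), 0)
print(signal_motion(MotionInfo((3, 1), 0), [left, above]))  # ('merge', 1)
```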

- Scalable coding refers to a technology for hierarchically coding a layer transmitting a coarse image signal and a layer transmitting a fine image signal.

- Scalable coding typically layers one of the following three attributes:

  - Spatial scalability: the spatial resolution or image size is layered.

  - Temporal scalability: the frame rate is layered.

  - Signal-to-noise ratio (SNR) scalability: the SN ratio is layered.

- Non-Patent Document 1: JCTVC-B205, "Test Model under Consideration", Joint Collaborative Team on Video Coding meeting: Geneva, CH, 21-28 July 2010

- Non-Patent Document 2: VCEG-AI22, "Motion Vector Coding with Optimal PMV Selection", Jungyoup Yang et al., July 2008

- Non-Patent Document 3: JCTVC-A116, "Video Coding Technology Proposal by Fraunhofer HHI", M. Winken et al., April 2010

- The methods proposed in Non-Patent Documents 2 and 3 described above do not assume scalable coding. Applying these existing techniques to each layer of a scalable-coded image can be expected to reduce the code amount to some extent. However, depending on the type of scalable coding, the correlation of motion between layers is significant, so exploiting this correlation is useful for improving coding efficiency.

- The technology according to the present disclosure aims to enhance coding efficiency by exploiting the correlation of motion between layers of a scalable-coded image.

- According to the present disclosure, there is provided an image processing apparatus including: an information acquisition unit that acquires setting information for setting a motion vector in a second prediction unit in a second layer, the second prediction unit corresponding to a first prediction unit in a first layer of an image to be scalable-decoded that includes the first layer and the second layer higher than the first layer, and the setting information being related to the motion vector set in the first prediction unit; and a motion vector setting unit that sets a motion vector in the second prediction unit using the setting information acquired by the information acquisition unit.

- The image processing apparatus can typically be realized as an image decoding apparatus that decodes images.

- Here, the second prediction unit in the second layer corresponds to the first prediction unit in the first layer of the image to be scalable-decoded, which includes the first layer and a second layer higher than the first layer.


- There is also provided an image processing apparatus including an encoding unit that encodes the setting information generated by an information generation unit.

- This image processing apparatus can typically be realized as an image coding apparatus that codes images.


- According to the technology of the present disclosure, coding efficiency can be further enhanced by exploiting the correlation of motion between layers of a scalable-coded image.

- FIG. 5 is a first explanatory diagram for describing an example of predictor candidates for motion vector prediction.

- FIG. 6 is a second explanatory diagram for describing an example of predictor candidates for motion vector prediction.

- Another figure is a flowchart showing an example of the flow of the motion search process performed by the motion search unit according to the first embodiment.

- FIG. 1 is a block diagram showing an example of the configuration of an image coding apparatus 10 according to an embodiment.

- The image coding apparatus 10 includes an A/D (analogue-to-digital) conversion unit 11, a rearrangement buffer 12, a subtraction unit 13, an orthogonal transform unit 14, a quantization unit 15, a lossless encoding unit 16, an accumulation buffer 17, a rate control unit 18, an inverse quantization unit 21, an inverse orthogonal transform unit 22, an addition unit 23, a deblocking filter 24, a frame memory 25, selectors 26 and 27, an intra prediction unit 30, and a motion search unit 40.

- The A/D conversion unit 11 converts an image signal input in analogue format into image data in digital format, and outputs a series of digital image data to the rearrangement buffer 12.

- The rearrangement buffer 12 rearranges the images included in the series of image data input from the A/D conversion unit 11.

- The rearrangement buffer 12 rearranges the images according to the GOP (Group of Pictures) structure of the encoding process, and then outputs the rearranged image data to the subtraction unit 13, the intra prediction unit 30, and the motion search unit 40.

- The subtraction unit 13 is supplied with the image data input from the rearrangement buffer 12 and the predicted image data input from the intra prediction unit 30 or the motion search unit 40 described later.

- The subtraction unit 13 calculates prediction error data, which is the difference between the image data input from the rearrangement buffer 12 and the predicted image data, and outputs the calculated prediction error data to the orthogonal transform unit 14.

- The orthogonal transform unit 14 performs an orthogonal transform on the prediction error data input from the subtraction unit 13.

- The orthogonal transform performed by the orthogonal transform unit 14 may be, for example, the discrete cosine transform (DCT) or the Karhunen-Loeve transform.
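To show why an orthogonal transform helps, here is a sketch of the orthonormal one-dimensional DCT-II in floating point; `dct2` is a hypothetical name, a 2-D block transform would apply it to rows and then columns, and practical codecs such as H.264/AVC use integer approximations instead. A flat row of prediction error compacts into the single DC coefficient, and the orthonormal scaling preserves energy.

```python
import math
from typing import List, Sequence


def dct2(x: Sequence[float]) -> List[float]:
    """Orthonormal 1-D DCT-II: X[k] = c(k) * sum_n x[n] * cos(pi * (2n + 1) * k / (2N))."""
    n_len = len(x)
    out = []
    for k in range(n_len):
        # c(0) = sqrt(1/N), c(k) = sqrt(2/N) otherwise, so the transform is orthonormal
        c = math.sqrt(1.0 / n_len) if k == 0 else math.sqrt(2.0 / n_len)
        s = sum(v * math.cos(math.pi * (2 * n + 1) * k / (2 * n_len))
                for n, v in enumerate(x))
        out.append(c * s)
    return out


coeffs = dct2([10, 10, 10, 10])  # a flat row of prediction error
# coeffs[0] is 20.0 and the other coefficients are (numerically) zero
```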

- The orthogonal transform unit 14 outputs the transform coefficient data obtained by the orthogonal transform process to the quantization unit 15.

- The quantization unit 15 is supplied with the transform coefficient data input from the orthogonal transform unit 14 and a rate control signal from the rate control unit 18 described later.

- The quantization unit 15 quantizes the transform coefficient data and outputs the quantized transform coefficient data (hereinafter referred to as quantized data) to the lossless encoding unit 16 and the inverse quantization unit 21. The quantization unit 15 also changes the bit rate of the quantized data input to the lossless encoding unit 16 by switching the quantization parameter (quantization scale) based on the rate control signal from the rate control unit 18.

- The lossless encoding unit 16 generates an encoded stream by performing lossless encoding on the quantized data input from the quantization unit 15.

- The lossless encoding performed by the lossless encoding unit 16 may be, for example, variable-length coding or arithmetic coding.

- The lossless encoding unit 16 multiplexes the information on intra prediction or the information on inter prediction input from the selector 27 into the header area of the encoded stream, and then outputs the generated encoded stream to the accumulation buffer 17.

- The accumulation buffer 17 temporarily accumulates the encoded stream input from the lossless encoding unit 16, and outputs the accumulated encoded stream to a transmission unit (not shown; for example, a communication interface or a connection interface to a peripheral device) at a rate according to the bandwidth of the transmission path.

- The rate control unit 18 monitors the free space of the accumulation buffer 17, generates a rate control signal according to that free space, and outputs the generated rate control signal to the quantization unit 15. For example, when the free space of the accumulation buffer 17 is small, the rate control unit 18 generates a rate control signal for lowering the bit rate of the quantized data; when the free space is sufficiently large, it generates a rate control signal for raising the bit rate.
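The feedback between the rate control unit 18 and the quantization unit 15 can be sketched as below. The thresholds (20% and 80% free space), the step of 1, and the scale bounds 0 to 51 are illustrative assumptions, as are the function names `rate_control_signal` and `adjust_qscale`; the text only says that little free space lowers the bit rate and ample free space raises it.

```python
def rate_control_signal(free_bytes: int, capacity: int,
                        low: float = 0.2, high: float = 0.8) -> str:
    """Map the accumulation buffer's free space to a rate control signal (assumed thresholds)."""
    ratio = free_bytes / capacity
    if ratio < low:
        return "decrease"  # buffer nearly full: lower the bit rate
    if ratio > high:
        return "increase"  # buffer nearly empty: a higher bit rate is affordable
    return "keep"


def adjust_qscale(qscale: int, signal: str, step: int = 1,
                  q_min: int = 0, q_max: int = 51) -> int:
    """Quantization side: a coarser scale lowers the bit rate, a finer one raises it."""
    if signal == "decrease":
        qscale += step
    elif signal == "increase":
        qscale -= step
    return max(q_min, min(q_max, qscale))


print(adjust_qscale(25, rate_control_signal(100, 1000)))  # 26
```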

- The inverse quantization unit 21 performs inverse quantization on the quantized data input from the quantization unit 15, and outputs the transform coefficient data obtained by the inverse quantization to the inverse orthogonal transform unit 22.

- The inverse orthogonal transform unit 22 restores the prediction error data by performing an inverse orthogonal transform on the transform coefficient data input from the inverse quantization unit 21, and outputs the restored prediction error data to the addition unit 23.

- The addition unit 23 generates decoded image data by adding the restored prediction error data input from the inverse orthogonal transform unit 22 and the predicted image data input from the intra prediction unit 30 or the motion search unit 40, and outputs the generated decoded image data to the deblocking filter 24 and the frame memory 25.
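The local decoding loop formed by units 13, 15, 21 and 23 can be sketched with uniform scalar quantization. For brevity the orthogonal transform is treated as the identity here, and the quantization step of 4 and the helper names are assumptions. The decoded samples differ from the original because quantization is lossy, but they are exactly what a decoder will reconstruct, which is why the encoder keeps them in the frame memory as reference data.

```python
from typing import List


def quantize(coeffs: List[float], qstep: float) -> List[int]:
    """Quantization (sketch): uniform scalar quantization with rounding."""
    return [round(c / qstep) for c in coeffs]


def dequantize(levels: List[int], qstep: float) -> List[float]:
    """Inverse quantization (sketch)."""
    return [lv * qstep for lv in levels]


def reconstruct(pred: List[float], err: List[float]) -> List[float]:
    """Addition: decoded data = predicted data + restored prediction error."""
    return [p + e for p, e in zip(pred, err)]


orig = [100, 104, 98, 97]
pred = [101, 101, 101, 101]
err = [o - p for o, p in zip(orig, pred)]  # subtraction: [-1, 3, -3, -4]
levels = quantize(err, qstep=4)            # transform omitted (identity) in this sketch
decoded = reconstruct(pred, dequantize(levels, qstep=4))
print(levels)   # [0, 1, -1, -1]
print(decoded)  # [101, 105, 97, 97]
```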

- The deblocking filter 24 performs filtering to reduce the block distortion that occurs during image coding.

- The deblocking filter 24 removes block distortion by filtering the decoded image data input from the addition unit 23, and outputs the filtered decoded image data to the frame memory 25.

- The frame memory 25 uses a storage medium to store the decoded image data input from the addition unit 23 and the filtered decoded image data input from the deblocking filter 24.

- The selector 26 reads the filtered decoded image data used for inter prediction from the frame memory 25 and supplies it to the motion search unit 40 as reference image data. The selector 26 also reads the unfiltered decoded image data used for intra prediction from the frame memory 25 and supplies it to the intra prediction unit 30 as reference image data.

- In the inter prediction mode, the selector 27 outputs the predicted image data resulting from inter prediction by the motion search unit 40 to the subtraction unit 13, and outputs the information on inter prediction to the lossless encoding unit 16.

- In the intra prediction mode, the selector 27 outputs the predicted image data resulting from intra prediction by the intra prediction unit 30 to the subtraction unit 13, and outputs the information on intra prediction to the lossless encoding unit 16.

- The selector 27 switches between the inter prediction mode and the intra prediction mode according to the magnitudes of the cost function values output from the intra prediction unit 30 and the motion search unit 40.
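A minimal sketch of the mode decision made by the selector 27, assuming each prediction unit reports one scalar cost function value and that ties go to intra prediction (the tie rule, `PredictionResult` and `select_mode` are hypothetical). How the cost itself is computed is not specified here.

```python
from dataclasses import dataclass, field
from typing import List


@dataclass
class PredictionResult:
    mode: str    # "intra" or "inter"
    cost: float  # cost function value reported by unit 30 or unit 40
    predicted: List[int] = field(default_factory=list)  # predicted image data


def select_mode(intra: PredictionResult, inter: PredictionResult) -> PredictionResult:
    """Keep the prediction with the smaller cost function value; ties go to intra."""
    return inter if inter.cost < intra.cost else intra


chosen = select_mode(PredictionResult("intra", 1520.0), PredictionResult("inter", 980.5))
print(chosen.mode)  # inter
```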

- The intra prediction unit 30 performs intra prediction processing for each block set in the image, based on the image data to be encoded (original image data) input from the rearrangement buffer 12 and the decoded image data supplied from the frame memory 25 as reference image data. The intra prediction unit 30 then outputs information on intra prediction, including prediction mode information indicating the optimum prediction mode, together with the cost function value and the predicted image data, to the selector 27.

- The motion search unit 40 performs motion search processing for inter prediction (inter-frame prediction) based on the original image data input from the rearrangement buffer 12 and the decoded image data supplied via the selector 26.

- The motion search processing by the motion search unit 40 according to the present embodiment can be realized by extending the method described in Non-Patent Document 2 or the method described in Non-Patent Document 3.

- The motion search unit 40 can generate predictor information indicating the optimum predictor for each prediction unit.

- The motion search unit 40 can generate merge information indicating the optimum merge mode for each prediction unit.

- The motion search unit 40 outputs information on inter prediction, including the predictor information or merge information, motion vector information, and reference image information, together with the cost function value and the predicted image data, to the selector 27.

- The image coding apparatus 10 repeats the series of coding processes described here for each of the plurality of layers of an image to be scalable-coded.

- The first layer to be encoded is a layer called the base layer, which represents the coarsest image.

- The coded stream of the base layer can be decoded independently, without decoding the coded streams of the other layers.

- The layers other than the base layer, called enhancement layers, represent finer images.

- The coded stream of an enhancement layer is coded using information contained in the coded stream of the base layer to increase coding efficiency. Therefore, to reproduce the image of an enhancement layer, the coded streams of both the base layer and the enhancement layer are decoded.

- The number of layers handled in scalable coding may be three or more.

- In that case, the lowest layer is the base layer, and the remaining layers are enhancement layers.

- The coded stream of a higher enhancement layer may be coded and decoded using information contained in the coded stream of a lower enhancement layer or of the base layer.

- The layer that is depended on is referred to as the lower layer, and the layer that depends on it is referred to as the upper layer.

- Here, the correlation of motion between layers is used to code the information on inter prediction efficiently. That is, in inter prediction, a motion vector is set in the upper layer based on the setting information related to the motion vector set in the lower layer.

- To this end, the motion search unit 40 shown in FIG. 1 has a buffer for temporarily storing the information obtained during inter prediction in the lower layer, and uses the information stored in that buffer to set motion vectors in the upper layer.

- The correlation of motion between layers can be particularly noticeable in scalable coding based on spatial scalability or SNR scalability.

- FIG. 2 is an explanatory diagram for describing an example of spatial scalability.

- In FIG. 2, layer L1 is the base layer, and layers L2 and L3 are enhancement layers.

- The ratio of the spatial resolution of layer L2 to layer L1 is 2:1.

- The ratio of the spatial resolution of layer L3 to layer L1 is 4:1.

- The motion appearing in prediction unit B1 in layer L1 is highly likely to appear similarly in the corresponding prediction unit B2 in layer L2 and the corresponding prediction unit B3 in layer L3. This is the correlation of motion between layers in spatial scalability.

- FIG. 3 is an explanatory diagram for describing an example of SNR scalability.

- In FIG. 3, layer L1 is the base layer, and layers L2 and L3 are enhancement layers.

- The spatial resolutions of layers L1, L2 and L3 are equal to one another.

- The minimum quantization scale of layer L1 is 25, and quantization of the orthogonal transform coefficients keeps the bit rate of its coded stream to about 2 Mbps.

- The minimum quantization scale of layer L2 is 12, and the bit rate of its coded stream is about 5 Mbps.

- The minimum quantization scale of layer L3 is 0, and the bit rate of its coded stream is about 10 Mbps.

- The motion appearing in prediction unit B1 in layer L1 is highly likely to appear identically in the corresponding prediction unit B2 in layer L2 and the corresponding prediction unit B3 in layer L3. This is the correlation of motion between layers in SNR scalability.

- The image coding apparatus 10 actively exploits this correlation of motion between layers to code the information on inter prediction efficiently.

- The lower-layer prediction unit corresponding to an upper-layer prediction unit may be, for example, the lower-layer prediction unit that contains the pixel corresponding to the pixel at a predetermined position (for example, the upper-left corner) of the upper-layer prediction unit.
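The corner-based correspondence can be sketched as follows, assuming prediction units are axis-aligned rectangles in their own layer's pixel grid and an integer resolution ratio (2 for layer L2 over L1 in FIG. 2, 1 for SNR scalability). `PU` and `corresponding_pu_by_corner` are hypothetical names.

```python
from typing import List, NamedTuple, Optional


class PU(NamedTuple):
    x: int  # left edge, in the pixel grid of the PU's own layer
    y: int  # top edge
    w: int
    h: int


def contains(pu: PU, px: int, py: int) -> bool:
    return pu.x <= px < pu.x + pu.w and pu.y <= py < pu.y + pu.h


def corresponding_pu_by_corner(upper: PU, lower_pus: List[PU], ratio: int) -> Optional[PU]:
    """Return the lower-layer PU containing the pixel that corresponds to upper's top-left pixel.

    ratio is the upper:lower spatial resolution ratio (1 when resolutions are equal).
    """
    px, py = upper.x // ratio, upper.y // ratio
    for pu in lower_pus:
        if contains(pu, px, py):
            return pu
    return None


# A 16x16 lower-layer area split into four 8x8 PUs
lower = [PU(0, 0, 8, 8), PU(8, 0, 8, 8), PU(0, 8, 8, 8), PU(8, 8, 8, 8)]
print(corresponding_pu_by_corner(PU(16, 0, 16, 16), lower, ratio=2))
# PU(x=8, y=0, w=8, h=8)
```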

- Alternatively, the lower-layer prediction unit corresponding to an upper-layer prediction unit may be the one with the largest overlap (the largest number of shared pixels at the same positions) among the lower-layer prediction units that overlap the upper-layer prediction unit. With this definition, the prediction unit most likely to exhibit correlated motion can be chosen as the "corresponding prediction unit".
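The largest-overlap rule can be sketched under the same assumptions as before: rectangular prediction units and an integer resolution ratio that divides the upper-layer coordinates. The upper-layer unit is scaled down into the lower layer, and the lower-layer unit sharing the most pixels with that footprint wins; the first one found wins a tie. `corresponding_pu_by_overlap` is a hypothetical name. In the example, the upper-left corner maps into the narrow left unit, but the overlap rule picks the right unit, which covers most of the footprint.

```python
from typing import List, NamedTuple, Optional


class PU(NamedTuple):
    x: int
    y: int
    w: int
    h: int


def overlap_area(a: PU, b: PU) -> int:
    """Number of pixels shared by two rectangles (0 if they do not overlap)."""
    dx = min(a.x + a.w, b.x + b.w) - max(a.x, b.x)
    dy = min(a.y + a.h, b.y + b.h) - max(a.y, b.y)
    return max(dx, 0) * max(dy, 0)


def corresponding_pu_by_overlap(upper: PU, lower_pus: List[PU], ratio: int) -> Optional[PU]:
    """Lower-layer PU sharing the most pixels with upper's footprint in the lower layer."""
    footprint = PU(upper.x // ratio, upper.y // ratio, upper.w // ratio, upper.h // ratio)
    best, best_area = None, 0
    for pu in lower_pus:
        area = overlap_area(footprint, pu)
        if area > best_area:  # strict comparison: the first PU found wins a tie
            best, best_area = pu, area
    return best


lower = [PU(0, 0, 4, 16), PU(4, 0, 12, 16)]
print(corresponding_pu_by_overlap(PU(4, 0, 16, 32), lower, ratio=2))
# PU(x=4, y=0, w=12, h=16)
```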

- FIG. 4 is a block diagram showing an example of the detailed configuration of the motion search unit 40 according to the first embodiment.

- The motion search unit 40 includes a search control unit 141, a motion vector calculation unit 142, a motion vector prediction unit 143, a motion vector buffer 144, a mode selection unit 145, an information generation unit 146, and a predictor information buffer 147.

- The search control unit 141 arranges one or more prediction units in a coding unit and causes the motion vector calculation unit 142 to calculate a motion vector for each prediction unit.

- The motion vectors calculated by the motion vector calculation unit 142 are output to the motion vector prediction unit 143 and stored in the motion vector buffer 144.

- The motion vector prediction unit 143 generates a predicted motion vector according to each of a plurality of predictor candidates, using the motion vectors of other blocks stored in the motion vector buffer 144 (referred to as reference motion vectors). The motion vector prediction unit 143 then calculates a differential motion vector, which is the difference between the motion vector calculated by the motion vector calculation unit 142 and the predicted motion vector.

- The mode selection unit 145 generates predicted image data using the motion vectors calculated by the motion vector calculation unit 142, and evaluates a cost function value calculated by comparing the generated predicted image data with the original image data. The mode selection unit 145 then selects the optimum arrangement of prediction units, which minimizes the cost function value, and the optimum predictor for each prediction unit.
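A sketch of choosing the optimum predictor for one prediction unit. For a fixed motion vector the predicted image is the same whichever predictor is used, so only the rate of the differential motion vector differs; this sketch estimates that rate with the length of a signed Exp-Golomb code (as used for se(v) syntax elements in H.264) and ignores the bits for the predictor index itself. The cost model, the candidate labels and the function names are assumptions, not the document's cost function.

```python
from typing import Dict, Tuple

MV = Tuple[int, int]


def se_bits(v: int) -> int:
    """Length in bits of the signed Exp-Golomb code for v."""
    code_num = 2 * v - 1 if v > 0 else -2 * v
    return 2 * (code_num + 1).bit_length() - 1


def choose_predictor(mv: MV, predictors: Dict[str, MV]) -> Tuple[str, MV]:
    """Pick the predictor candidate whose differential motion vector is cheapest to code."""
    def cost(name: str) -> int:
        pmv = predictors[name]
        return se_bits(mv[0] - pmv[0]) + se_bits(mv[1] - pmv[1])

    best = min(predictors, key=cost)  # first candidate wins a tie
    pmv = predictors[best]
    return best, (mv[0] - pmv[0], mv[1] - pmv[1])


# Hypothetical candidates: a spatial predictor and the zero vector
print(choose_predictor((5, 3), {"spatial": (4, 3), "zero": (0, 0)}))
# ('spatial', (1, 0))
```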

- The information generation unit 146 generates information on inter prediction, including predictor information indicating the optimum predictor selected for each prediction unit and differential motion vector information indicating the corresponding differential motion vector.

- The predictor information may include an index specifying a reference motion vector.

- The predictor information may include a parameter specifying a prediction equation.

- The information generation unit 146 outputs the generated information on inter prediction, the cost function value, and the predicted image data to the selector 27. The predictor information generated by the information generation unit 146 is also temporarily stored in the predictor information buffer 147 for processing in the upper layer.
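A minimal sketch of how buffered lower-layer predictor information might be reused in the upper layer. This passage only says the predictor information is stored for upper-layer processing; the assumption here is that the upper-layer prediction unit applies the predictor index chosen for its corresponding lower-layer unit to its own reference motion vectors and then adds the differential motion vector. The predictor table, its index numbering and all names are hypothetical.

```python
from typing import Callable, Dict, List, Tuple

MV = Tuple[int, int]


def median3(refs: List[MV]) -> MV:
    """Component-wise median of three reference motion vectors, as in equation (1)."""
    xs = sorted(mv[0] for mv in refs)
    ys = sorted(mv[1] for mv in refs)
    return (xs[1], ys[1])


# Hypothetical predictor table; the index plays the role of the predictor information.
PREDICTORS: Dict[int, Callable[[List[MV]], MV]] = {
    0: median3,               # spatial median predictor
    1: lambda refs: refs[0],  # MVa (left neighbor)
    2: lambda refs: refs[1],  # MVb (upper neighbor)
    3: lambda refs: refs[2],  # MVc (upper-right neighbor)
}


class PredictorInfoBuffer:
    """Stand-in for predictor information buffer 147: lower-layer PU id -> predictor index."""

    def __init__(self) -> None:
        self._store: Dict[int, int] = {}

    def put(self, pu_id: int, predictor_index: int) -> None:
        self._store[pu_id] = predictor_index

    def get(self, pu_id: int) -> int:
        return self._store[pu_id]


def set_upper_layer_mv(buffer: PredictorInfoBuffer, lower_pu_id: int,
                       refs: List[MV], mvd: MV) -> MV:
    """Reuse the lower-layer predictor for the corresponding upper-layer PU, then add the MVD."""
    pmv = PREDICTORS[buffer.get(lower_pu_id)](refs)
    return (pmv[0] + mvd[0], pmv[1] + mvd[1])


buf = PredictorInfoBuffer()
buf.put(7, 2)  # lower-layer PU 7 chose predictor 2 (MVb)
# MVa, MVb, MVc around the corresponding upper-layer PU
print(set_upper_layer_mv(buf, 7, [(4, 0), (8, 2), (6, 6)], mvd=(1, -1)))  # (9, 1)
```

Under this assumption the upper layer does not need its own predictor information, which is one way the inter-layer correlation could save bits.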

- FIGS. 5 and 6 are explanatory diagrams illustrating examples of predictor candidates for motion vector prediction that can be used in such inter prediction.

- one prediction unit PTe to be predicted and a prediction motion vector PMVe of the prediction unit PTe are shown.

- the predicted motion vector PMVe of the prediction unit PTe can be predicted, for example, using the motion vectors MVa, MVb and MVc of the prediction units adjacent to the prediction unit PTe as reference motion vectors.

- the reference motion vector MVa is a motion vector set to a prediction unit adjacent to the left of the prediction unit PTe.

- the reference motion vector MVb is a motion vector set to a prediction unit adjacent above the prediction unit PTe.

- the reference motion vector MVc is a motion vector set to a prediction unit adjacent to the upper right of the prediction unit PTe.

- the predicted motion vector PMVe can be generated according to the following prediction equation using these reference motion vectors MVa, MVb and MVc:

$$PMV_e = \mathrm{med}(MV_a, MV_b, MV_c) \qquad (1)$$

- Equation (1) is a prediction equation based on spatial correlation of motion.

- Med in equation (1) represents a median operation. That is, according to equation (1), the predicted motion vector PMVe is a vector having the median of the horizontal components and the median of the vertical components of the reference motion vectors MVa, MVb and MVc as components.
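As a concrete illustration of Equation (1), the following minimal Python sketch takes the median separately for the horizontal and vertical components. The `MV` type and the function names are hypothetical, chosen only for this example, and integer components (for example in quarter-pel units) are assumed.

```python
from typing import NamedTuple


class MV(NamedTuple):
    """A motion vector with horizontal (x) and vertical (y) components."""
    x: int
    y: int


def median3(a: int, b: int, c: int) -> int:
    """Median of three integers: the middle value after sorting."""
    return sorted((a, b, c))[1]


def spatial_median_predictor(mva: MV, mvb: MV, mvc: MV) -> MV:
    """Equation (1): PMVe = med(MVa, MVb, MVc), taken per component."""
    return MV(median3(mva.x, mvb.x, mvc.x), median3(mva.y, mvb.y, mvc.y))


# Left (MVa), above (MVb) and upper-right (MVc) neighbours of PTe; illustrative values.
pmve = spatial_median_predictor(MV(4, -2), MV(6, 0), MV(3, 5))
print(pmve)  # MV(x=4, y=0)
```

Because the median is taken per component, PMVe need not equal any one of MVa, MVb or MVc; in the example, MV(4, 0) matches none of the three inputs.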

- the predicted motion vector PMVe generated according to Equation (1) is an example of a predictor candidate.

- a predicted motion vector calculated by a prediction equation based on such spatial correlation of motion is called a spatial predictor.

- Formula (1) is only one example of a prediction formula. For example, if one of the motion vectors MVa, MVb or MVc does not exist because the prediction unit to be predicted is located at the edge of the image, the nonexistent motion vector may be omitted from the arguments of the median operation. Simpler spatial predictors, such as those given by equations (2) to (4), may also be used as predictor candidates.

- a temporal predictor, which is a predicted motion vector calculated by a prediction equation based on the temporal correlation of motion, may also be used as a predictor candidate.

- an image IM01 including a prediction unit PTe to be predicted and a reference image IM02 are shown.

- the block Bcol in the reference image IM02 is a co-located block of the prediction unit PTe.

- the prediction equation using the temporal correlation of motion uses, for example, the motion vector set in the co-located block Bcol or in a block adjacent to the co-located block Bcol as a reference motion vector.

- let MVcol be the motion vector set in the co-located block Bcol.

- let MVt0 to MVt7 be the motion vectors set in the upper, left, lower, right, upper-left, lower-left, lower-right and upper-right blocks of the co-located block Bcol, respectively.

- the predicted motion vector PMVe can be generated from the reference motion vectors MVcol and MVt0 to MVt7, for example, using the following prediction equation (5) or (6).
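Equations (5) and (6) themselves are not reproduced in this text, so the sketch below only shows, under stated assumptions, how their arguments MVcol and MVt0 to MVt7 could be gathered from the reference image's motion field. The dictionary keyed by block position and the function name are hypothetical; rows are assumed to grow downward, so "upper" means row - 1.

```python
from typing import Dict, List, Optional, Tuple

BlockPos = Tuple[int, int]  # (column, row) in block units; rows grow downward
MotionVector = Tuple[int, int]

# Offsets of the blocks holding MVt0..MVt7 relative to Bcol, in the order the text
# lists them: upper, left, lower, right, upper-left, lower-left, lower-right, upper-right.
NEIGHBOUR_OFFSETS: List[BlockPos] = [
    (0, -1), (-1, 0), (0, 1), (1, 0),
    (-1, -1), (-1, 1), (1, 1), (1, -1),
]


def temporal_reference_mvs(
    motion_field: Dict[BlockPos, MotionVector], col: BlockPos
) -> Tuple[Optional[MotionVector], List[Optional[MotionVector]]]:
    """Return (MVcol, [MVt0, ..., MVt7]) from the reference image's motion field.

    Blocks with no stored motion vector (outside the picture, intra-coded, ...)
    are returned as None so that a prediction equation can skip them.
    """
    cx, cy = col
    mv_col = motion_field.get(col)
    mv_t = [motion_field.get((cx + dx, cy + dy)) for dx, dy in NEIGHBOUR_OFFSETS]
    return mv_col, mv_t


field = {(1, 1): (2, 3), (1, 0): (5, 5), (2, 2): (-1, 0)}
mv_col, mv_t = temporal_reference_mvs(field, (1, 1))
print(mv_col, mv_t[0], mv_t[6])  # (2, 3) (5, 5) (-1, 0)
```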

- after generating the predicted motion vector PMVe for each of the plurality of predictor candidates, the motion vector prediction unit 143 calculates a differential motion vector MVDe representing the difference between the motion vector MVe calculated by the motion vector calculation unit 142 and the predicted motion vector PMVe, as in the following equation:

$$MVD_e = MV_e - PMV_e \qquad (7)$$

- the mode selection unit 145 selects the optimal predictor (for example, the predictor with the highest prediction accuracy) for each prediction unit, and the information generation unit 146 generates predictor information indicating the optimal predictor and differential motion vector information indicating the corresponding differential motion vector.
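The per-candidate computation of MVDe = MVe - PMVe followed by selection of the best predictor can be sketched as follows. This is a simplified illustration, not the encoder's actual decision rule: the sum of the absolute MVD components stands in for the cost function value, and the candidate indices and names are hypothetical.

```python
from typing import Dict, NamedTuple, Tuple


class MV(NamedTuple):
    x: int
    y: int


def differential_mv(mve: MV, pmve: MV) -> MV:
    """MVDe = MVe - PMVe, per component."""
    return MV(mve.x - pmve.x, mve.y - pmve.y)


def choose_predictor(mve: MV, candidates: Dict[int, MV]) -> Tuple[int, MV]:
    """Return (predictor index, MVDe) for the candidate with the cheapest MVD.

    |dx| + |dy| is only a stand-in for the real cost function value.
    """
    def mvd_size(index: int) -> int:
        d = differential_mv(mve, candidates[index])
        return abs(d.x) + abs(d.y)

    best = min(candidates, key=mvd_size)
    return best, differential_mv(mve, candidates[best])


# Hypothetical candidates: 0 = spatial predictor, 1 = temporal predictor.
index, mvd = choose_predictor(MV(5, 1), {0: MV(4, 0), 1: MV(8, 3)})
print(index, mvd)  # 0 MV(x=1, y=1)
```

The selected index becomes the predictor information and `mvd` the differential motion vector information for that prediction unit.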

- motion vector information indicating a motion vector calculated by the motion vector calculation unit 142 may be generated instead of differential motion vector information.

- the information generated in this manner may be encoded by the lossless encoding unit 16 as information on inter prediction.

- the predictor information is temporarily stored in the predictor information buffer 147 for processing in the upper layer.

- Enhancement layer: in the motion search process of the enhancement layer, motion vectors are predicted based on the lower-layer predictor information stored in the predictor information buffer 147.

- the search control unit 141 causes the motion vector calculation unit 142 to calculate a motion vector for each prediction unit arranged in the coding unit. Then, the search control unit 141 causes the motion vector prediction unit 143 to generate a predicted motion vector for each prediction unit.

- in the enhancement layer, the motion vector prediction unit 143 generates predicted motion vectors using the predictor information, which is setting information stored in the predictor information buffer 147. More specifically, for example, when the predictor information indicates a spatial predictor such as Equation (1) for the lower-layer prediction unit corresponding to a given upper-layer prediction unit, the motion vector prediction unit 143 obtains the reference motion vectors of the adjacent prediction units in the upper layer from the motion vector buffer 144.

- the motion vector prediction unit 143 substitutes the acquired reference motion vector into Equation (1) to generate a predicted motion vector.

- when the predictor information indicates a temporal predictor such as Formula (5) for the lower-layer prediction unit corresponding to a given upper-layer prediction unit, the motion vector prediction unit 143 obtains, from the motion vector buffer 144, the reference motion vectors of the co-located block in the reference image and of the blocks adjacent to the co-located block.

- the motion vector prediction unit 143 substitutes the acquired reference motion vector into Expression (5) to generate a predicted motion vector.

- the motion vector prediction unit 143 calculates a differential motion vector that represents the difference between the motion vector calculated by the motion vector calculation unit 142 and the predicted motion vector.
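A minimal sketch of this enhancement-layer step, assuming a hypothetical table that maps the buffered predictor index to a prediction formula and a hypothetical integer identifier for the corresponding lower-layer PU. It illustrates that the upper layer reads the predictor choice from the lower layer while taking the reference motion vectors from its own layer, so only the differential motion vector remains to be encoded.

```python
from typing import Callable, Dict, List, NamedTuple


class MV(NamedTuple):
    x: int
    y: int


def med3(a: int, b: int, c: int) -> int:
    """Middle value of three integers."""
    return sorted((a, b, c))[1]


def spatial_median(refs: List[MV]) -> MV:
    """Equation (1) applied to [MVa, MVb, MVc]."""
    a, b, c = refs
    return MV(med3(a.x, b.x, c.x), med3(a.y, b.y, c.y))


def left_only(refs: List[MV]) -> MV:
    """Hypothetical simpler predictor: reuse the left neighbour's MV (MVa)."""
    return refs[0]


# Hypothetical table mapping a buffered predictor index to its prediction formula.
PREDICTORS: Dict[int, Callable[[List[MV]], MV]] = {0: spatial_median, 1: left_only}


def predict_upper_pu(lower_pu: int, predictor_buffer: Dict[int, int],
                     upper_refs: List[MV]) -> MV:
    """Predict an upper-layer PU's MV with the predictor chosen for its lower-layer PU.

    Only the index stored for the corresponding lower-layer PU is consulted; the
    reference motion vectors themselves come from the upper layer.
    """
    index = predictor_buffer[lower_pu]
    return PREDICTORS[index](upper_refs)


# Lower-layer PU 7 chose predictor 0 (median) during the base-layer search.
pmv = predict_upper_pu(7, {7: 0}, [MV(8, -4), MV(12, 0), MV(6, 10)])
mvd = MV(9 - pmv.x, 1 - pmv.y)  # MVD for an upper-layer MV of (9, 1); only this is encoded
print(pmv, mvd)  # MV(x=8, y=0) MV(x=1, y=1)
```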

- the mode selection unit 145 generates predicted image data using the motion vector calculated by the motion vector calculation unit 142, and calculates a cost function value.

- the information generation unit 146 generates differential motion vector information indicating the differential motion vector calculated for each prediction unit. Then, the information generation unit 146 outputs information on inter prediction including the differential motion vector information, together with the cost function value and the predicted image data, to the selector 27.

- FIG. 7 is a flowchart showing an example of the flow of motion search processing by the motion search unit 40 according to the present embodiment.

- the motion search unit 40 performs motion search processing of the base layer (step S110).

- the arrangement of prediction units in each coding unit is determined, and the best predictor for each prediction unit is selected.

- the predictor information buffer 147 buffers predictor information indicating the optimum predictor of each prediction unit as setting information.

- steps S111 to S117 are motion search processes of the enhancement layer. Among these processes, the processes of steps S111 to S116 are repeated for each prediction unit of each enhancement layer (hereinafter, referred to as a target PU).


- here, the “upper layer” is the layer to be predicted, and the “lower layer” is a layer below the layer to be predicted.

- the motion vector calculation unit 142 calculates a motion vector for one target PU of the upper layer based on the pixel values of the original image and the pixel values of the reference image input from the frame memory 25 (step S111). Then, the motion vector calculation unit 142 outputs the calculated motion vector to the motion vector prediction unit 143 and the motion vector buffer 144.

- the motion vector prediction unit 143 generates a predicted motion vector for the target PU using the predictor information for the corresponding PU in the lower layer stored in the predictor information buffer 147 and the reference motion vectors acquired according to that predictor information (step S112).

- the motion vector prediction unit 143 calculates a differential motion vector by subtracting the predicted motion vector from the motion vector (step S113). Then, the motion vector prediction unit 143 outputs the motion vector and the differential motion vector for the target PU to the mode selection unit 145.

- the mode selection unit 145 generates predicted image data and a cost function value for the target PU (step S114). Further, the information generation unit 146 generates differential motion vector information indicating the differential motion vector for the target PU (step S115).

- then, if an unprocessed PU remains in the layer to be predicted, the process returns to step S111 (step S116).

- if no unprocessed PU remains, it is determined whether any layer (upper layer) remains (step S117). If a layer remains, the processing from step S111 onward is repeated with the layer predicted so far as the lower layer and the next layer as the upper layer.

- the predictor information indicating the predictors selected for the lower layer remains buffered by the predictor information buffer 147. If no layer remains, the motion search process of FIG. 7 ends.

- the predicted image data generated here and the information on inter prediction (which may include differential motion vector information) may be output to the subtraction unit 13 and the lossless encoding unit 16 via the selector 27.

- in this way, predictor information is not encoded as information on inter prediction in the upper layer; the predictor information of the lower layer is reused instead, so the code amount of information on inter prediction can be reduced.

- FIG. 8 is a block diagram showing an example of a detailed configuration of the motion search unit 40 according to the second embodiment.

- the motion search unit 40 includes a search control unit 241, a motion vector calculation unit 242, a motion vector prediction unit 243, a motion vector buffer 244, a mode selection unit 245, and an information generation unit 246.

- the motion search process of the base layer according to the present embodiment may be the same as the motion search process of the base layer according to the first embodiment described above. However, in the present embodiment, the predictor information of the base layer need not be buffered; instead, the motion vector information of the base layer may be buffered across layers.

- the search control unit 241 arranges one or more prediction units in the coding unit, and causes the motion vector calculation unit 242 to calculate a motion vector for each prediction unit.

- the motion vector calculated by the motion vector calculation unit 242 is output to the motion vector prediction unit 243 and stored in the motion vector buffer 244.

- the motion vector prediction unit 243 generates a prediction motion vector using the reference motion vector stored in the motion vector buffer 244 according to each of the plurality of predictor candidates. Then, the motion vector prediction unit 243 calculates a differential motion vector that is the difference between the motion vector calculated by the motion vector calculation unit 242 and the predicted motion vector.

- the mode selection unit 245 generates predicted image data using the motion vector calculated by the motion vector calculation unit 242, and evaluates a cost function value calculated by comparing the generated predicted image data with the original image data. Then, the mode selection unit 245 selects the optimal arrangement of prediction units that minimizes the cost function value, together with the optimal predictor for each prediction unit.

- the information generation unit 246 generates information on inter prediction including predictor information indicating the optimal predictor selected for each prediction unit and differential motion vector information indicating the corresponding differential motion vector. Then, the information generation unit 246 outputs the generated information on inter prediction, the cost function value, and the predicted image data to the selector 27.

- the predictor candidate searched in the motion search process of the base layer according to the present embodiment may include one or both of the above-described spatial predictor and temporal predictor. Furthermore, in the motion search process of the enhancement layer according to the present embodiment, additional predictor candidates are introduced. Predictor candidates introduced here are predictor candidates that use a motion vector set in a corresponding prediction unit of a lower layer as a reference motion vector. Such a predictor is referred to herein as an inter-layer predictor.

- FIG. 9 is an explanatory diagram for describing an example of the inter-layer predictor.

- FIG. 9 shows a prediction unit PTe in layer L12, which is an upper layer, and the predicted motion vector PMVe of the prediction unit PTe.

- the prediction unit PTbase in the layer L11 which is the lower layer, is a prediction unit corresponding to the prediction unit PTe.

- the reference motion vector MVbase is a motion vector set in the prediction unit PTbase.

- the inter-layer predictor can be expressed, for example, by the following equation (8):

$$PMV_e = MV_{base} \qquad (8)$$

- alternatively, a motion vector scaled up according to the spatial resolution ratio N between the lower layer and the upper layer, as in the following equation, may be used as the inter-layer predictor:

$$PMV_e = N \cdot MV_{base} \qquad (9)$$

In that case, the vertical and horizontal components of the inter-layer predictor may be rounded to match the precision of the upper layer's motion vectors (for example, 1/4-pixel precision).
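The scaled inter-layer predictor and its rounding step can be sketched with exact rational arithmetic. Assumptions of this sketch: components are held in pixels as `Fraction` values, N is the upper-to-lower resolution ratio, and halves round toward positive infinity, since the text does not specify a rounding rule. With N = 1 the function reduces to PMVe = MVbase.

```python
import math
from fractions import Fraction
from typing import NamedTuple


class MV(NamedTuple):
    x: Fraction  # horizontal component, in pixels
    y: Fraction  # vertical component, in pixels


def round_to_step(v: Fraction, step: Fraction) -> Fraction:
    """Round v to the nearest multiple of step; halves round toward +infinity (assumed rule)."""
    return math.floor(v / step + Fraction(1, 2)) * step


def inter_layer_predictor(mv_base: MV, n: Fraction, step: Fraction = Fraction(1, 4)) -> MV:
    """N * MVbase, with each component rounded to the upper layer's MV precision."""
    return MV(round_to_step(mv_base.x * n, step), round_to_step(mv_base.y * n, step))


# 1.5x spatial scalability: MVbase = (1.25, -0.75) px in the lower layer.
pmv = inter_layer_predictor(MV(Fraction(5, 4), Fraction(-3, 4)), Fraction(3, 2))
print(float(pmv.x), float(pmv.y))  # 2.0 -1.0
```

Here 1.25 x 1.5 = 1.875 px and -0.75 x 1.5 = -1.125 px both fall exactly halfway between quarter-pel positions, which is why the rounding rule has to be fixed explicitly.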

- the selection of an optimal predictor from a plurality of predictor candidates is performed also in the motion search process of the enhancement layer.

- the search control unit 241 causes the motion vector calculation unit 242 to calculate a motion vector for each prediction unit in the coding unit.

- the motion vector calculated by the motion vector calculation unit 242 is output to the motion vector prediction unit 243 and stored in the motion vector buffer 244.

- the motion vector buffer 244 also stores the motion vector (reference motion vector) calculated for each prediction unit of the lower layer.

- the motion vector prediction unit 243 generates a prediction motion vector using the reference motion vector stored in the motion vector buffer 244 according to each of the plurality of predictor candidates.

- the plurality of predictor candidates here include the inter-layer predictors described above.

- the motion vector prediction unit 243 calculates a differential motion vector that is the difference between the motion vector calculated by the motion vector calculation unit 242 and the predicted motion vector.

- the mode selection unit 245 generates predicted image data using the motion vector calculated by the motion vector calculation unit 242, and evaluates a cost function value calculated by comparing the generated predicted image data with the original image data. Then, the mode selection unit 245 selects the optimal predictor for each prediction unit.

- the information generation unit 246 generates information on inter prediction including predictor information indicating the optimal predictor selected for each prediction unit and differential motion vector information indicating the corresponding differential motion vector. If the inter-layer predictor described above is selected as the optimal predictor, the predictor information may include an index specifying the reference motion vector of the lower layer. Then, the information generation unit 246 outputs the generated information on inter prediction, the cost function value, and the predicted image data to the selector 27.

- FIG. 10 is a flowchart showing an example of the flow of motion search processing by the motion search unit 40 according to the present embodiment.

- the motion search unit 40 performs motion search processing of the base layer (step S120).

- the arrangement of prediction units in each coding unit is determined, and the best predictor for each prediction unit is selected.

- the motion vector buffer 244 buffers the motion vector calculated for each prediction unit.

- steps S121 to S127 are the motion search processes of the enhancement layer. Among these, the processes of steps S121 to S126 are repeated for each target PU of each enhancement layer.

- here, the “upper layer” is the layer to be predicted, and the “lower layer” is a layer below the layer to be predicted.

- the motion vector calculation unit 242 calculates a motion vector for one target PU of the upper layer based on the pixel values of the original image and the pixel values of the reference image input from the frame memory 25 (step S121). Then, the motion vector calculation unit 242 outputs the calculated motion vector to the motion vector prediction unit 243 and the motion vector buffer 244.

- the motion vector prediction unit 243 generates a predicted motion vector for the target PU using the reference motion vector stored in the motion vector buffer 244 according to each of the plurality of predictor candidates (step S122).

- the plurality of predictor candidates here include inter-layer predictors.

- the motion vector prediction unit 243 calculates a differential motion vector for each of the plurality of predictor candidates (step S123). Then, the motion vector prediction unit 243 outputs the motion vector and the differential motion vector for each predictor candidate to the mode selection unit 245.

- the mode selection unit 245 generates predicted image data for each predictor candidate, and evaluates the cost function value to select an optimal predictor (step S124). Then, the information generation unit 246 generates predictor information indicating the selected optimum predictor and differential motion vector information indicating the corresponding differential motion vector (step S125).

- then, if an unprocessed PU remains in the layer to be predicted, the process returns to step S121 (step S126).

- if no unprocessed PU remains, it is determined whether any layer (upper layer) remains (step S127). If a layer remains, the processing from step S121 onward is repeated with the layer predicted so far as the lower layer and the next layer as the upper layer.

- the motion vector calculated for each target PU of the lower layer is buffered by the motion vector buffer 244. If there is no remaining layer, the motion search process of FIG. 10 ends.

- the prediction image data generated here and information on inter prediction (which may include predictor information and differential motion vector information) may be output to the subtraction unit 13 and the lossless encoding unit 16 via the selector 27.

- predictor information indicating that an inter-layer predictor based on a motion vector set in a lower layer should be used may be encoded as information on inter prediction in the upper layer.

- the lossless encoding unit 16 that encodes predictor information may assign a minimum code number to an inter-layer predictor among a plurality of predictor candidates in encoding of predictor information of the upper layer.

- in general, the correlation of motion between layers tends to be stronger than the spatial or temporal correlation of motion. Therefore, assigning the smallest code number to the inter-layer predictor means that shorter codewords are used more often in the variable-length-coded stream, which further reduces the code amount.
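To see why the smallest code number matters, the sketch below assumes the predictor index is coded with unsigned Exp-Golomb codes, in which code number k takes 2*floor(log2(k+1)) + 1 bits. The text only says "variable-length coding", so the code choice, the candidate names and the selection counts are all hypothetical.

```python
from typing import Dict, List


def ue_bits(code_number: int) -> int:
    """Bit length of the unsigned Exp-Golomb codeword for code_number."""
    return 2 * (code_number + 1).bit_length() - 1


def total_bits(order: List[str], selections: Dict[str, int]) -> int:
    """Bits spent on predictor indices when order[i] is given code number i."""
    return sum(ue_bits(i) * selections.get(name, 0) for i, name in enumerate(order))


# Hypothetical selection counts for one upper-layer picture.
counts = {"inter_layer": 70, "spatial": 20, "temporal": 10}
print(total_bits(["inter_layer", "spatial", "temporal"], counts))  # 160
print(total_bits(["spatial", "temporal", "inter_layer"], counts))  # 260
```

When the most frequently chosen predictor gets code number 0 (a 1-bit codeword), the index bits drop from 260 to 160 in this made-up example.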

- FIG. 11 is a block diagram showing an example of a detailed configuration of the motion search unit 40 according to the third embodiment.

- the motion search unit 40 includes a search control unit 341, a motion vector calculation unit 342, a motion vector buffer 344, a mode selection unit 345, an information generation unit 346, and a merge information buffer 347.

- the search control unit 341 arranges one or more prediction units in the coding unit, and causes the motion vector calculation unit 342 to calculate a motion vector for each prediction unit.

- the motion vector calculated by the motion vector calculation unit 342 is output to the mode selection unit 345 and stored in the motion vector buffer 344.

- the mode selection unit 345 decides to merge prediction units when the motion vector calculated by the motion vector calculation unit 342 for a certain prediction unit is common to a reference motion vector set in one or more adjacent prediction units. In the approach proposed in Non-Patent Document 3 cited above, a prediction unit can be merged with the prediction unit adjacent above it or with the prediction unit adjacent to its left.

- the mode selection unit 345 may select, for example, as a merge mode, either merging with a prediction unit adjacent above, merging with a prediction unit adjacent to the left, or no merging. Furthermore, the mode selection unit 345 generates predicted image data for each prediction unit, and calculates a cost function value based on the comparison between the generated predicted image data and the original image data.

- the information generation unit 346 generates information on inter prediction including merge information indicating a merge mode for each prediction unit and motion vector information for a prediction unit not merged with another prediction unit. Then, the information generation unit 346 outputs the generated information on inter prediction, the cost function value, and the predicted image data to the selector 27.

- the merge information generated in the present embodiment may include, for example, two flags “MergeFlag” and “MergeLeftFlag”.

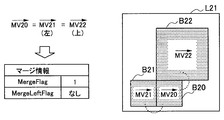

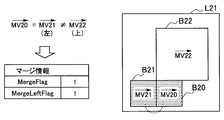

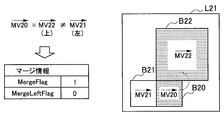

- FIGS. 12A to 12C respectively show examples of merge information that can be generated in this embodiment.

- a prediction unit B20 which is a target PU in the layer L21 is shown.

- the prediction units B21 and B22 are adjacent to the left and above the prediction unit B20, respectively.

- the motion vector MV20 is a motion vector calculated by the motion vector calculation unit 342 for the prediction unit B20.

- the motion vectors MV21 and MV22 are reference motion vectors set in prediction units B21 and B22, respectively.

- the motion vector MV20 is common to both of the reference motion vectors MV21 and MV22.

- MergeLeftFlag is not included in merge information.

- the decoding side that has received such merge information may set, for the prediction unit B20, the same motion vector as that set for the prediction unit B21 or B22, without decoding MergeLeftFlag.

- the motion vector MV20 is common to the reference motion vector MV21 and different from the reference motion vector MV22.

- the decoding side that has received such merge information may set, for the prediction unit B20, the same motion vector as that set for the prediction unit B21.

- the motion vector MV20 is common to the reference motion vector MV22 and different from the reference motion vector MV21.

- the decoding side that has received such merge information may set, for the prediction unit B20, the same motion vector as that set for the prediction unit B22.
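The flag logic of FIGS. 12A to 12C can be summarised in a short sketch. The flag values (MergeFlag = 1 for a merged PU, MergeLeftFlag = 1 for a merge with the left neighbour) are assumptions inferred from the flag names, the function name is hypothetical, and the sketch decides on motion vector equality alone, as described above.

```python
from typing import Dict, Tuple

MotionVector = Tuple[int, int]


def merge_info(mv: MotionVector, mv_left: MotionVector,
               mv_above: MotionVector) -> Dict[str, int]:
    """Build MergeFlag / MergeLeftFlag for a PU (e.g. B20 with neighbours B21, B22)."""
    same_left, same_above = mv == mv_left, mv == mv_above
    if not (same_left or same_above):
        return {"MergeFlag": 0}          # not merged: motion vector info is sent instead
    if same_left and same_above:
        return {"MergeFlag": 1}          # MergeLeftFlag omitted: either neighbour gives the same MV
    return {"MergeFlag": 1, "MergeLeftFlag": 1 if same_left else 0}


print(merge_info((3, -1), (3, -1), (0, 2)))  # {'MergeFlag': 1, 'MergeLeftFlag': 1}
```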

- Enhancement layer: in the motion search process of the enhancement layer, a motion vector is set for each prediction unit using the merge information of the lower layer stored in the merge information buffer 347.

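- for example, for a prediction unit whose corresponding lower-layer prediction unit is merged with its left neighbour, predicted image data is generated using the motion vector of the prediction unit adjacent to the left, and the cost function value is calculated.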

- the mode selection unit 345 generates predicted image data using the motion vector input from the motion vector calculation unit 342, and calculates a cost function value.

- the information generation unit 346 generates information on inter prediction including motion vector information on a prediction unit that is not merged with another prediction unit. Then, the information generation unit 346 outputs the generated information on inter prediction, the cost function value, and the predicted image data to the selector 27.

- FIG. 13 is a flowchart showing an example of the flow of motion search processing by the motion search unit 40 according to the present embodiment.

- the motion search unit 40 performs motion search processing of the base layer (step S130).

- the arrangement of prediction units in each coding unit is determined, and the merge mode of each prediction unit is selected.

- the motion vector buffer 344 buffers the motion vector calculated for each prediction unit.

- the merge information buffer 347 buffers merge information indicating a merge mode selected for each prediction unit as setting information.

- steps S131 to S136 are the motion search processes of the enhancement layer. Among these, the processes of steps S131 to S135 are repeated for each target PU of each enhancement layer.

- here, the “upper layer” is the layer to be predicted, and the “lower layer” is a layer below the layer to be predicted.

- for one target PU of the upper layer, the search control unit 341 refers to the merge information stored in the merge information buffer 347 to determine whether the corresponding PU of the lower layer is merged with another PU (step S131).

- if the corresponding lower-layer PU is merged with another PU, the target PU is also merged with the corresponding other PU, and the subsequent processing of step S132 is therefore skipped.

- for a target PU that is not merged with another PU, the motion vector calculation unit 342 calculates a motion vector based on the pixel values of the original image and the pixel values of the reference image input from the frame memory 25 (step S132). Then, the motion vector calculation unit 342 outputs the calculated motion vector to the mode selection unit 345 and the motion vector buffer 344.

- the mode selection unit 345 generates predicted image data using the motion vector calculated by the motion vector calculation unit 342 or obtained from the motion vector buffer 344 and calculates a cost function value (step S133). Then, the information generation unit 346 generates motion vector information for a target PU that is not merged with another PU (step S134).

- then, if an unprocessed PU remains in the layer to be predicted, the process returns to step S131 (step S135).

- if no unprocessed PU remains, it is determined whether any layer (upper layer) remains (step S136). If a layer remains, the processing from step S131 onward is repeated with the layer predicted so far as the lower layer and the next layer as the upper layer.

- the motion vector calculated for each target PU of the lower layer is buffered by the motion vector buffer 344.

- the merge information is subsequently buffered by the merge information buffer 347. If there are no remaining layers, the motion search process of FIG. 13 ends.
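Steps S131 to S134 amount to copying merge decisions from the lower layer and running the motion search only for PUs that stay unmerged. The sketch below assumes a one-to-one correspondence between upper- and lower-layer PUs on a hypothetical uniform grid, and uses a caller-supplied `search` function as a stand-in for the motion vector calculation unit 342; all names are illustrative.

```python
from typing import Callable, Dict, List, Optional, Tuple

Pos = Tuple[int, int]  # (column, row) of a PU in a hypothetical uniform PU grid
MotionVector = Tuple[int, int]


def enhancement_layer_pass(
    pus: List[Pos],
    lower_merge: Dict[Pos, Optional[str]],
    search: Callable[[Pos], MotionVector],
) -> Tuple[Dict[Pos, MotionVector], List[Pos]]:
    """Reuse lower-layer merge decisions (steps S131 to S134, simplified).

    lower_merge maps each upper-layer PU to the merge direction ("left" or
    "above") of its corresponding lower-layer PU, or None if that PU was not
    merged. Returns the motion vectors and the PUs whose MV info is encoded.
    """
    mvs: Dict[Pos, MotionVector] = {}
    coded: List[Pos] = []
    for pos in pus:  # raster order, so left and upper neighbours are already set
        direction = lower_merge.get(pos)
        if direction is not None:
            col, row = pos
            neighbour = (col - 1, row) if direction == "left" else (col, row - 1)
            mvs[pos] = mvs[neighbour]  # merged: copy the neighbour's MV, no search
        else:
            mvs[pos] = search(pos)     # not merged: run the motion search
            coded.append(pos)
    return mvs, coded


grid = [(0, 0), (1, 0), (0, 1), (1, 1)]
mvs, coded = enhancement_layer_pass(
    grid, {(1, 0): "left", (1, 1): "above"}, lambda p: (10 * p[0], 10 * p[1] + 3)
)
print(coded)  # [(0, 0), (0, 1)]
```

Only the two unmerged PUs trigger a search and carry motion vector information; no merge information is produced for the upper layer.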

- the predicted image data and the information on inter prediction generated here can be output to the subtracting unit 13 and the lossless encoding unit 16 via the selector 27.

- in this way, merge information is not encoded as information on inter prediction in the upper layer; the merge information of the lower layer is reused instead, so the code amount of information on inter prediction can be reduced.

- FIG. 14 is a block diagram showing an example of a detailed configuration of the motion search unit 40 according to the fourth embodiment.

- the motion search unit 40 includes a search control unit 441, a motion vector calculation unit 442, a motion vector buffer 444, a mode selection unit 445, and an information generation unit 446.

- the motion search process of the base layer according to the present embodiment may be the same as the motion search process of the base layer according to the third embodiment described above. However, in the present embodiment, the merge information of the base layer may not be buffered.

- the search control unit 441 arranges one or more prediction units in the coding unit, and causes the motion vector calculation unit 442 to calculate a motion vector for each prediction unit.

- the motion vector calculated by the motion vector calculation unit 442 is output to the mode selection unit 445 and stored in the motion vector buffer 444.

- the mode selection unit 445 decides to merge prediction units when the motion vector calculated by the motion vector calculation unit 442 for a certain prediction unit is common to a reference motion vector set in one or more adjacent prediction units.

- the mode selection unit 445 generates predicted image data for each prediction unit, and calculates a cost function value based on comparison between the generated predicted image data and the original image data.

- the information generation unit 446 generates information on inter prediction including merge information indicating the merge mode of each prediction unit and motion vector information for each prediction unit not merged with another prediction unit. Then, the information generation unit 446 outputs the generated information on inter prediction, the cost function value, and the predicted image data to the selector 27.

- merge information generated in the motion search process of the base layer may include two flags “MergeFlag” and “MergeLeftFlag” similar to those in the third embodiment.

- merge information generated in the motion search process of the enhancement layer may additionally include a new flag "MergeBaseFlag".

- FIGS. 15A to 15C respectively show examples of merge information that can be generated in this embodiment.

- a prediction unit B30 which is a target PU in the upper layer L30 is shown.

- the prediction units B31 and B32 are adjacent to the left and above the prediction unit B30, respectively.

- the motion vector MV30 is a motion vector calculated by the motion vector calculation unit 442 for the prediction unit B30.

- the motion vectors MV31 and MV32 are reference motion vectors set to prediction units B31 and B32, respectively.

- the prediction unit B20 which is a PU corresponding to the target PU in the lower layer L21, is also shown.

- the motion vector MV20 is a reference motion vector buffered for the prediction unit B20.

- the motion vector MV30 is common to all of the reference motion vectors MV31, MV32 and MV20.

- MergeBaseFlag and MergeLeftFlag are not included in merge information.

- the decoding side that has received such merge information may set, for the prediction unit B30, the same motion vector as that set for the prediction unit B20, B31 or B32, without decoding MergeBaseFlag and MergeLeftFlag.

- the motion vector MV30 is common to the reference motion vector MV20 and different from the reference motion vectors MV31 and MV32.

- the decoding side that has received such merge information may set, for the prediction unit B30 in the upper layer L30, the same motion vector as that set for the prediction unit B20 in the lower layer L21.

- the motion vector MV30 is common to the reference motion vector MV31 and different from the reference motion vectors MV20 and MV32.

- the decoding side, having received such merge information, can set to the prediction unit B30 the same motion vector as the one set to the prediction unit B31.
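The three cases of FIGS. 15A to 15C can be sketched as a pair of encoder and decoder functions. This is a minimal Python sketch under stated assumptions: merge information is modelled as a dict instead of a bitstream, the left and upper neighbours play the roles of B31 and B32, and omitting MergeLeftFlag when the two spatial neighbours carry the same vector is an assumption not stated in this section. The function names `encode_merge_info` and `decode_merge_info` are hypothetical.

```python
from typing import Optional, Tuple

MV = Tuple[int, int]  # (horizontal, vertical) motion vector components


def encode_merge_info(mv: MV, base: MV, left: MV, upper: MV) -> dict:
    """Build hypothetical merge flags for a target PU such as B30.

    base  : reference MV of the corresponding lower-layer PU (e.g. MV20)
    left  : reference MV of the left neighbour (e.g. MV31)
    upper : reference MV of the upper neighbour (e.g. MV32)
    """
    candidates = (base, left, upper)
    if mv not in candidates:
        # No merge: motion vector information is encoded separately.
        return {"MergeFlag": 0}
    if all(c == mv for c in candidates):
        # FIG. 15A case: every candidate agrees, so no further flag is needed.
        return {"MergeFlag": 1}
    if mv == base:
        # FIG. 15B case: merge with the corresponding lower-layer PU.
        return {"MergeFlag": 1, "MergeBaseFlag": 1}
    info = {"MergeFlag": 1, "MergeBaseFlag": 0}
    if left != upper:
        # Assumption: MergeLeftFlag is sent only when the spatial neighbours differ.
        info["MergeLeftFlag"] = 1 if mv == left else 0
    return info


def decode_merge_info(info: dict, base: MV, left: MV, upper: MV) -> Optional[MV]:
    """Return the merged motion vector, or None if the PU is not merged.

    A real parser would decide whether a flag is present by comparing the
    buffered candidates; the dict keys stand in for that decision here.
    """
    if not info["MergeFlag"]:
        return None  # the MV must come from motion vector information
    if "MergeBaseFlag" not in info:
        return base  # all candidates are equal, so any of them works
    if info["MergeBaseFlag"]:
        return base
    if "MergeLeftFlag" not in info:
        return left  # left and upper neighbours carry the same vector
    return left if info["MergeLeftFlag"] else upper
```

For the FIG. 15B situation, `encode_merge_info((4, 2), (4, 2), (1, 0), (0, 1))` yields `{"MergeFlag": 1, "MergeBaseFlag": 1}`, and `decode_merge_info` maps that back to the lower-layer vector `(4, 2)`.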

- the search control unit 441 causes the motion vector calculation unit 442 to calculate a motion vector for each prediction unit in the coding unit.

- the motion vector calculated by the motion vector calculation unit 442 is output to the mode selection unit 445 and stored in the motion vector buffer 444.

- the motion vector buffer 444 also stores a motion vector (reference motion vector) calculated for each prediction unit of the lower layer.

- using these motion vectors, the mode selection unit 445 decides whether to merge prediction units.

- the mode selection unit 445 may select, as the merge mode, for example, merging with the lower layer, merging with the upper adjacent prediction unit, merging with the left adjacent prediction unit, or no merge. Furthermore, the mode selection unit 445 generates predicted image data for each prediction unit and calculates a cost function value based on a comparison between the generated predicted image data and the original image data.

- the information generation unit 446 generates information on inter prediction including merge information indicating a merge mode for each prediction unit and motion vector information for a prediction unit not merged with another prediction unit. Then, the information generation unit 446 outputs the generated information on inter prediction, the cost function value, and the predicted image data to the selector 27.
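The cost-based choice made by the mode selection unit 445 can be illustrated with a small sketch. The cost function used here (sum of absolute differences plus a weighted signalling cost), the weight `lam`, and the per-mode bit counts in `MODE_BITS` are hypothetical; the document only states that a cost function value is calculated by comparing predicted image data with original image data.

```python
from typing import Callable, Dict, List, Tuple

Block = List[List[int]]
MV = Tuple[int, int]

# Hypothetical signalling costs (in bits) per merge mode; illustrative only.
# "no_merge" is expensive because its motion vector information must be encoded.
MODE_BITS = {"merge_lower": 2, "merge_upper": 3, "merge_left": 3, "no_merge": 12}


def sad(a: Block, b: Block) -> int:
    """Sum of absolute differences between two equally sized blocks."""
    return sum(abs(p - q) for ra, rb in zip(a, b) for p, q in zip(ra, rb))


def select_merge_mode(original: Block, candidates: Dict[str, MV],
                      predict: Callable[[MV], Block],
                      lam: int = 4) -> Tuple[str, float]:
    """Return the (mode, cost) pair minimising SAD + lam * MODE_BITS[mode].

    candidates maps each merge mode to the motion vector it would use:
    the buffered reference MVs for merge modes, and the newly calculated
    MV for "no_merge". predict(mv) returns the predicted block for mv.
    """
    best_mode, best_cost = "", float("inf")
    for mode, mv in candidates.items():
        cost = sad(original, predict(mv)) + lam * MODE_BITS[mode]
        if cost < best_cost:
            best_mode, best_cost = mode, cost
    return best_mode, best_cost
```

When the calculated vector equals the lower-layer reference vector, "merge_lower" and "no_merge" give the same distortion, so the cheaper signalling of the merge wins; this mirrors the code-amount reduction the embodiment aims for.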

- FIG. 16 is a flowchart showing an example of the flow of motion search processing by the motion search unit 40 according to the present embodiment.

- the motion search unit 40 performs motion search processing of the base layer (step S140).

- the arrangement of prediction units in each coding unit is determined, and the merge mode of each prediction unit is selected.

- the motion vector buffer 444 buffers the motion vector calculated for each prediction unit.

- steps S141 to S146 constitute the motion search process for the enhancement layers. Of these, steps S141 to S145 are repeated for each target PU of each enhancement layer.

- in these steps, the “upper layer” is the layer to be predicted, and the “lower layer” is a layer below the layer to be predicted.

- the motion vector calculation unit 442 calculates a motion vector for one target PU of the upper layer based on the pixel values of the original image and those of the reference image input from the frame memory 25 (step S141). Then, the motion vector calculation unit 442 outputs the calculated motion vector to the mode selection unit 445 and the motion vector buffer 444.

- the mode selection unit 445 selects the merge mode by comparing the motion vector calculated by the motion vector calculation unit 442 with the reference motion vectors stored in the motion vector buffer 444 (step S142). For example, if the motion vector calculated for the target PU is common to the reference motion vector buffered for the corresponding PU in the lower layer, merging with the lower layer may be selected.

- the mode selection unit 445 generates predicted image data using the motion vector for the target PU and calculates a cost function value (step S143). Then, the information generation unit 446 generates setting information including merge information on the target PU (and motion vector information for a target PU not merged with other PUs) (step S144).

- if an unprocessed PU remains in the layer to be predicted, the process returns to step S141 (step S145).

- if no unprocessed PU remains, it is further determined whether any layer (upper layer) remains (step S146). If a layer remains, the processing from step S141 is repeated, with the layer predicted so far treated as the lower layer and the next layer as the upper layer.

- at that point, the motion vectors calculated for the target PUs of the new lower layer are already buffered in the motion vector buffer 444. If no layer remains, the motion search process of FIG. 16 ends.
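The layer loop of FIG. 16 can be sketched as follows, restricted to merging with the lower layer. Assumptions: each layer is given as a dict mapping a PU identifier to the motion vector that the motion vector calculation unit 442 would return (the actual search, spatial merges, and the cost calculation of step S143 are omitted), and the same identifier denotes corresponding PUs in adjacent layers. The function name is hypothetical.

```python
from typing import Dict, List, Tuple

MV = Tuple[int, int]


def motion_search_all_layers(layers: List[Dict[str, MV]]) -> List[Dict[str, dict]]:
    """Sketch of the FIG. 16 flow; layers[0] is the base layer.

    Each layer dict maps a PU id to the MV that motion search would yield
    (a hypothetical stand-in). Returns per-layer setting information.
    """
    settings: List[Dict[str, dict]] = []
    # Step S140: base-layer search; every MV is encoded and buffered.
    buffer: Dict[str, MV] = dict(layers[0])
    settings.append({pu: {"mv": mv} for pu, mv in layers[0].items()})

    for upper in layers[1:]:               # step S146: next remaining layer
        layer_info: Dict[str, dict] = {}
        new_buffer: Dict[str, MV] = {}
        for pu, mv in upper.items():       # steps S141 to S145: each target PU
            new_buffer[pu] = mv            # S141: calculated MV is buffered
            if buffer.get(pu) == mv:       # S142: compare with lower-layer PU
                # S144: merged with the lower layer, so no MV is encoded.
                layer_info[pu] = {"MergeBaseFlag": 1}
            else:
                layer_info[pu] = {"MergeBaseFlag": 0, "mv": mv}
        settings.append(layer_info)
        buffer = new_buffer                # this layer becomes the lower layer
    return settings
```

Only the non-merged PUs carry an `"mv"` entry, which is where the code-amount saving described next comes from.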

- the predicted image data and the information on inter prediction generated here can be output, via the selector 27, to the subtracting unit 13 and the lossless encoding unit 16, respectively.

- in this way, merge information indicating that the target PU is merged with the corresponding PU in the lower layer (that is, that a common motion vector is set) may be encoded as information on inter prediction of the upper layer. This makes it possible to merge a prediction unit with the lower layer where the motion correlation is markedly strong and, because no motion vector is encoded for a merged prediction unit in the upper layer, to effectively reduce the amount of code.

- FIG. 17 is a block diagram showing an example of the configuration of the image decoding apparatus 60 according to an embodiment.

- the image decoding apparatus 60 includes an accumulation buffer 61, a lossless decoding unit 62, an inverse quantization unit 63, an inverse orthogonal transformation unit 64, an addition unit 65, a deblocking filter 66, a rearrangement buffer 67, a D/A (Digital to Analogue) conversion unit 68, a frame memory 69, selectors 70 and 71, an intra prediction unit 80, and a motion compensation unit 90.

- the accumulation buffer 61 temporarily accumulates the coded stream input through the transmission path.

- the lossless decoding unit 62 decodes the encoded stream input from the accumulation buffer 61 according to the encoding scheme used during encoding. Further, the lossless decoding unit 62 decodes the information multiplexed in the header area of the encoded stream.

- the information multiplexed in the header area of the encoded stream may include, for example, the information on inter prediction described above and the information on intra prediction.

- the lossless decoding unit 62 outputs information on inter prediction to the motion compensation unit 90. In addition, the lossless decoding unit 62 outputs information on intra prediction to the intra prediction unit 80.

- the inverse quantization unit 63 inversely quantizes the quantized data decoded by the lossless decoding unit 62.

- the inverse orthogonal transform unit 64 generates prediction error data by performing an inverse orthogonal transform on the transform coefficient data input from the inverse quantization unit 63, according to the orthogonal transform scheme used at the time of encoding. Then, the inverse orthogonal transform unit 64 outputs the generated prediction error data to the addition unit 65.
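The residual path through the inverse quantization unit 63 and the inverse orthogonal transform unit 64 can be sketched as below. The document does not fix the quantizer or the transform; this sketch assumes a single uniform quantization step and an orthonormal two-dimensional DCT-II on square blocks, and includes the forward transform only so that the round trip can be checked. All function names are hypothetical.

```python
import math
from typing import List

Matrix = List[List[float]]


def dequantize(levels: List[List[int]], qstep: float) -> Matrix:
    """Inverse quantization sketch: coefficient = level * step.

    A single uniform step is an assumption; real codecs derive the
    scaling from a quantization parameter and scaling lists.
    """
    return [[level * qstep for level in row] for row in levels]


def dct_matrix(n: int) -> Matrix:
    """Orthonormal DCT-II basis; row k is basis function k at samples 0..n-1."""
    return [[(math.sqrt(1.0 / n) if k == 0 else math.sqrt(2.0 / n))
             * math.cos(math.pi * (2 * i + 1) * k / (2 * n))
             for i in range(n)] for k in range(n)]


def matmul(a: Matrix, b: Matrix) -> Matrix:
    return [[sum(a[i][t] * b[t][j] for t in range(len(b))) for j in range(len(b[0]))]
            for i in range(len(a))]


def transpose(a: Matrix) -> Matrix:
    return [list(row) for row in zip(*a)]


def forward_dct2(block: Matrix) -> Matrix:
    """Encoder-side transform Y = C X C^T (used here only for checking)."""
    c = dct_matrix(len(block))
    return matmul(matmul(c, block), transpose(c))


def inverse_dct2(coeffs: Matrix) -> Matrix:
    """Inverse transform X = C^T Y C, valid because C is orthonormal."""
    c = dct_matrix(len(coeffs))
    return matmul(matmul(transpose(c), coeffs), c)
```

Under these assumptions, the prediction error data for a block would be `inverse_dct2(dequantize(levels, qstep))`.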

- the addition unit 65 adds the prediction error data input from the inverse orthogonal transform unit 64 and the predicted image data input from the selector 71 to generate decoded image data. Then, the addition unit 65 outputs the generated decoded image data to the deblocking filter 66 and the frame memory 69.
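The operation of the addition unit 65 amounts to a per-sample sum of predicted image data and prediction error data. In this minimal sketch the rounding and the clipping to an 8-bit sample range are assumptions for illustration, and the function name is hypothetical.

```python
from typing import List


def add_prediction(pred: List[List[int]], residual: List[List[float]],
                   bit_depth: int = 8) -> List[List[int]]:
    """Decoded sample = clip(round(predicted + residual)).

    Clipping to [0, 2**bit_depth - 1] is an assumed detail; it keeps the
    reconstructed samples inside the valid range for the sample bit depth.
    """
    max_val = (1 << bit_depth) - 1
    return [[min(max(int(round(p + r)), 0), max_val) for p, r in zip(prow, rrow)]
            for prow, rrow in zip(pred, residual)]
```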

- the deblocking filter 66 removes block distortion by filtering the decoded image data input from the addition unit 65, and outputs the filtered decoded image data to the rearrangement buffer 67 and the frame memory 69.

- the rearrangement buffer 67 rearranges the images input from the deblocking filter 66 to generate a series of time-series image data. Then, the rearrangement buffer 67 outputs the generated image data to the D/A conversion unit 68.
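Reordering in the rearrangement buffer 67 can be sketched with a min-heap keyed by display order, one common way to restore time-series order when pictures arrive in decoding order. The `(display_index, frame)` input format and the assumption that display indices run 0, 1, 2, ... without gaps are hypothetical simplifications.

```python
import heapq
from typing import Iterable, Iterator, List, Tuple


def reorder_to_display(decoded: Iterable[Tuple[int, str]]) -> Iterator[str]:
    """Yield frames in display order.

    decoded: (display_index, frame) pairs in decoding order. A frame is
    released as soon as every earlier display index has been output.
    """
    heap: List[Tuple[int, str]] = []
    next_idx = 0
    for idx, frame in decoded:
        heapq.heappush(heap, (idx, frame))
        while heap and heap[0][0] == next_idx:
            yield heapq.heappop(heap)[1]
            next_idx += 1
    while heap:  # flush whatever remains at the end of the stream
        yield heapq.heappop(heap)[1]
```

For a decoding order such as I0, P3, B1, B2, the generator yields I0, B1, B2, P3.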

- the D/A conversion unit 68 converts the digital image data input from the rearrangement buffer 67 into an analog image signal. Then, the D/A conversion unit 68 displays an image, for example, by outputting the analog image signal to a display (not shown) connected to the image decoding device 60.

- the frame memory 69 uses a storage medium to store the unfiltered decoded image data input from the addition unit 65 and the filtered decoded image data input from the deblocking filter 66.

- the selector 70 switches the output destination of the image data from the frame memory 69 between the intra prediction unit 80 and the motion compensation unit 90 for each block in the image according to the mode information acquired by the lossless decoding unit 62.

- for example, when the intra prediction mode is designated, the selector 70 outputs the unfiltered decoded image data supplied from the frame memory 69 to the intra prediction unit 80 as reference image data.

- when the inter prediction mode is designated, the selector 70 outputs the filtered decoded image data supplied from the frame memory 69 to the motion compensation unit 90 as reference image data.

- the selector 71 switches the output source of the predicted image data to be supplied to the addition unit 65 between the intra prediction unit 80 and the motion compensation unit 90 according to the mode information acquired by the lossless decoding unit 62. For example, when the intra prediction mode is designated, the selector 71 supplies predicted image data output from the intra prediction unit 80 to the addition unit 65. Further, when the inter prediction mode is designated, the selector 71 supplies predicted image data output from the motion compensation unit 90 to the addition unit 65.
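The per-block switching performed by the selectors 70 and 71 can be sketched as a single dispatch on the mode information. The `FrameMemory` class, the string stand-ins for image data, and the callable parameters are hypothetical; they only illustrate which reference data each prediction path receives and whose output reaches the addition unit 65.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class FrameMemory:
    unfiltered: str  # decoded image data before deblocking (stand-in)
    filtered: str    # decoded image data after deblocking (stand-in)


def select_prediction(mode: str, memory: FrameMemory,
                      intra_predict: Callable[[str], str],
                      motion_compensate: Callable[[str], str]) -> str:
    """Return the predicted image data supplied to the addition unit."""
    if mode == "intra":
        # Selector 70: unfiltered reference to the intra prediction unit;
        # selector 71: that unit's output is passed on.
        return intra_predict(memory.unfiltered)
    if mode == "inter":
        # Selector 70: filtered reference to the motion compensation unit;
        # selector 71: that unit's output is passed on.
        return motion_compensate(memory.filtered)
    raise ValueError(f"unknown prediction mode: {mode!r}")
```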

- the intra prediction unit 80 performs intra prediction processing based on the information on intra prediction input from the lossless decoding unit 62 and the reference image data from the frame memory 69, and generates predicted image data. Then, the intra prediction unit 80 outputs the generated predicted image data to the selector 71.

- the motion compensation unit 90 performs motion compensation processing based on the information on inter prediction input from the lossless decoding unit 62 and the reference image data from the frame memory 69 to generate predicted image data.

- the motion compensation processing by the motion compensation unit 90 according to the present embodiment can be realized by extending the method described in the above-mentioned Non-Patent Document 2 or the method described in the above-mentioned Non-Patent Document 3. Then, the motion compensation unit 90 outputs, to the selector 71, predicted image data generated as a result of the motion compensation process. In the next section, four embodiments of the detailed configuration of the motion compensation unit 90 will be described.

- the image decoding device 60 repeats the series of decoding processing described here for each of the plurality of layers of the scalable coded image.

- the first layer to be decoded is the base layer. After the base layer is decoded, one or more enhancement layers are decoded.

- when an enhancement layer is decoded, information obtained by decoding a lower layer (the base layer or another enhancement layer) is used.

- specifically, a motion vector is set to a prediction unit in an upper layer using setting information related to the motion vector set to the corresponding prediction unit in the lower layer.

- the setting information may include, for example, the above-described predictor information, merge information, or motion vector information.
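The layer-by-layer decoding order, with each enhancement layer reusing lower-layer motion vectors, can be sketched for the merge-information case. This is a minimal sketch under assumptions: setting information is a dict per PU, only merging with the lower layer (MergeBaseFlag) is modelled, and the same PU identifier denotes corresponding PUs in adjacent layers. The function name is hypothetical.

```python
from typing import Dict, List, Tuple

MV = Tuple[int, int]


def decode_layers(settings: List[Dict[str, dict]]) -> List[Dict[str, MV]]:
    """Set a motion vector for every PU, base layer first.

    settings[0] is the base layer; later entries are enhancement layers in
    decoding order. A PU with MergeBaseFlag = 1 reuses the motion vector of
    the corresponding PU in the layer decoded just before it.
    """
    decoded: List[Dict[str, MV]] = []
    lower: Dict[str, MV] = {}
    for layer_index, layer in enumerate(settings):
        mvs: Dict[str, MV] = {}
        for pu, info in layer.items():
            if layer_index > 0 and info.get("MergeBaseFlag"):
                mvs[pu] = lower[pu]    # copy the lower-layer motion vector
            else:
                mvs[pu] = info["mv"]   # explicitly signalled motion vector
        decoded.append(mvs)
        lower = mvs                    # becomes the lower layer for the next one
    return decoded
```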

- FIG. 18 is a block diagram showing an example of a detailed configuration of the motion compensation unit 90 according to the first embodiment.

- the motion compensation unit 90 includes an information acquisition unit 191, a motion vector setting unit 192, a predictor information buffer 193, a motion vector buffer 194, and a compensation unit 195.

- the information acquisition unit 191 acquires the information on inter prediction decoded from the coded stream by the lossless decoding unit 62.

- the information on inter prediction may include predictor information and differential motion vector information (motion vector information for a prediction unit in which no motion vector is predicted).

- the predictor information acquired here indicates, for example, a predictor selected for each prediction unit at the time of encoding among the various predictor candidates described above.

- the motion vector setting unit 192 sets a motion vector for each prediction unit. Then, the motion vector set to each prediction unit by the motion vector setting unit 192 is output to the compensation unit 195 and stored in the motion vector buffer 194.

- predictor information for each prediction unit is temporarily stored in the predictor information buffer 193 for processing in the upper layer.

- the motion vector setting unit 192 may set the motion vector of each prediction unit using the predictor indicated by the predictor information and the difference motion vector indicated by the difference motion vector information. For example, when the predictor information for a certain prediction unit indicates a spatial predictor as shown in Expression (1), the motion vector setting unit 192 acquires, from the motion vector buffer 194, the reference motion vectors of the prediction units adjacent to that prediction unit. Then, the motion vector setting unit 192 substitutes the acquired reference motion vectors into Expression (1) to generate a predicted motion vector. Furthermore, the motion vector setting unit 192 reconstructs the motion vector by adding the difference motion vector to the generated predicted motion vector.

- a motion vector reconstructed in this way can be set to each prediction unit.
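Expression (1) is not reproduced in this section. The sketch below assumes it is an H.264/AVC-style spatial predictor, namely the component-wise median of the motion vectors of three neighbouring prediction units, and shows the reconstruction step performed by the motion vector setting unit 192 under that assumption. Function names are hypothetical.

```python
from typing import Tuple

MV = Tuple[int, int]


def median3(a: int, b: int, c: int) -> int:
    """Middle value of three integers."""
    return sorted((a, b, c))[1]


def spatial_predictor(mv_a: MV, mv_b: MV, mv_c: MV) -> MV:
    """Assumed form of Expression (1): component-wise median of three neighbours."""
    return (median3(mv_a[0], mv_b[0], mv_c[0]), median3(mv_a[1], mv_b[1], mv_c[1]))


def reconstruct_mv(pmv: MV, dmv: MV) -> MV:
    """Motion vector = predicted motion vector + difference motion vector."""
    return (pmv[0] + dmv[0], pmv[1] + dmv[1])
```

For neighbours (1, 5), (3, -2), and (2, 0), the assumed predictor is (2, 0); adding a difference motion vector of (-1, 3) gives the motion vector (1, 3).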

- the compensation unit 195 generates predicted image data of each prediction unit using the motion vector set in each prediction unit by the motion vector setting unit 192 and the reference image data input from the frame memory 69. Then, the compensation unit 195 outputs the generated predicted image data to the addition unit 65 via the selector 71.
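A minimal sketch of how the compensation unit 195 could form a predicted block from reference image data and a motion vector. It supports integer-pel vectors only and clamps samples that fall outside the reference picture to the nearest edge; both are simplifying assumptions (practical codecs also interpolate fractional positions), and the function name is hypothetical.

```python
from typing import List, Tuple

Block = List[List[int]]
MV = Tuple[int, int]


def compensate(ref: Block, x: int, y: int, w: int, h: int, mv: MV) -> Block:
    """Copy the w x h block displaced by mv from the PU at (x, y).

    Integer-pel only; out-of-picture samples repeat the nearest edge sample.
    """
    rows, cols = len(ref), len(ref[0])

    def sample(r: int, c: int) -> int:
        return ref[min(max(r, 0), rows - 1)][min(max(c, 0), cols - 1)]

    return [[sample(y + j + mv[1], x + i + mv[0]) for i in range(w)]
            for j in range(h)]
```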