WO2011001761A1 - Information processing device, information processing method, program, and electronic device - Google Patents

Information processing device, information processing method, program, and electronic device

- Publication number

- WO2011001761A1 (PCT/JP2010/058773)

- Authority

- WO

- WIPO (PCT)

- Prior art keywords

- skin

- user

- area

- light

- image


Classifications

-

- G—PHYSICS

- G06—COMPUTING; CALCULATING OR COUNTING

- G06T—IMAGE DATA PROCESSING OR GENERATION, IN GENERAL

- G06T7/00—Image analysis

- G06T7/20—Analysis of motion

-

- G—PHYSICS

- G06—COMPUTING; CALCULATING OR COUNTING

- G06V—IMAGE OR VIDEO RECOGNITION OR UNDERSTANDING

- G06V40/00—Recognition of biometric, human-related or animal-related patterns in image or video data

- G06V40/10—Human or animal bodies, e.g. vehicle occupants or pedestrians; Body parts, e.g. hands

- G06V40/107—Static hand or arm

-

- G—PHYSICS

- G06—COMPUTING; CALCULATING OR COUNTING

- G06V—IMAGE OR VIDEO RECOGNITION OR UNDERSTANDING

- G06V10/00—Arrangements for image or video recognition or understanding

- G06V10/10—Image acquisition

- G06V10/12—Details of acquisition arrangements; Constructional details thereof

- G06V10/14—Optical characteristics of the device performing the acquisition or on the illumination arrangements

- G06V10/143—Sensing or illuminating at different wavelengths

-

- G—PHYSICS

- G06—COMPUTING; CALCULATING OR COUNTING

- G06V—IMAGE OR VIDEO RECOGNITION OR UNDERSTANDING

- G06V40/00—Recognition of biometric, human-related or animal-related patterns in image or video data

- G06V40/20—Movements or behaviour, e.g. gesture recognition

- G06V40/28—Recognition of hand or arm movements, e.g. recognition of deaf sign language

-

- H—ELECTRICITY

- H04—ELECTRIC COMMUNICATION TECHNIQUE

- H04N—PICTORIAL COMMUNICATION, e.g. TELEVISION

- H04N23/00—Cameras or camera modules comprising electronic image sensors; Control thereof

Definitions

- the present invention relates to an information processing device, an information processing method, a program, and an electronic device.


- techniques for extracting the shape of a user's hand include a pattern matching method that uses image pattern matching, a skin region extraction method that extracts the user's skin region, and the like.

- in the pattern matching method, a plurality of shape images obtained by imaging hands of various shapes and sizes are learned in advance, and the shape image most similar to the captured image (for example, the one with the smallest sum of differences between the pixel values of corresponding pixels) is identified.

- the shape of the hand displayed in the shape image that minimizes the sum of the differences is extracted as the shape of the user's hand.
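The pattern matching step described above can be sketched as follows. This is a minimal illustration, not the method of any cited reference: it assumes grayscale images stored as equally sized lists of rows, reads "the sum of the differences" as a sum of absolute pixel differences (SAD), and uses hypothetical function names and shape labels. Its cost grows with the number of learned shape images times the number of pixels.

```python
from typing import Dict, List

Image = List[List[int]]  # grayscale image as rows of luminance values


def sad(a: Image, b: Image) -> int:
    """Sum of absolute differences between corresponding pixels of a and b."""
    if len(a) != len(b) or any(len(ra) != len(rb) for ra, rb in zip(a, b)):
        raise ValueError("images must have the same size")
    return sum(abs(pa - pb) for ra, rb in zip(a, b) for pa, pb in zip(ra, rb))


def best_matching_shape(captured: Image, shapes: Dict[str, Image]) -> str:
    """Return the label of the learned shape image with the smallest SAD."""
    return min(shapes, key=lambda label: sad(captured, shapes[label]))


# Hypothetical learned shape images (2x2 for brevity).
shapes = {
    "open_hand": [[9, 9], [9, 9]],
    "fist": [[0, 9], [0, 0]],
}
print(best_matching_shape([[8, 9], [9, 7]], shapes))  # open_hand
```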

- however, with pattern matching it is difficult to extract the hand shape accurately when the shape of the hand in the captured image differs significantly from the shapes of the hands in the shape images, or when the hand in the captured image overlaps the face or the like.

- in the skin region extraction method, a skin region representing the user's skin in the captured image is extracted using skin color information representing the color of human skin.

- however, since both the face and the hand are extracted as the skin region, it is difficult to extract only the shape of the hand as a skin region.

- the present invention has been made in view of this situation, and makes it possible to extract an accurate hand shape of a user at high speed from a captured image of the user while suppressing an increase in the amount of computation required for the series of processes.

- the present invention also makes it possible to easily detect the movement of the user's hand or the like based on, for example, a change in the luminance values of the pixels constituting the region that corresponds to the extracted shape of the user's hand or the like on the display image in which the user is displayed.

- the first information processing apparatus according to the first aspect of the present invention includes: irradiation means for irradiating the user with light of a first wavelength and light of a second wavelength different from the first wavelength; acquisition means for acquiring a first image obtained by receiving the reflected light of the first-wavelength light irradiated onto the user and a second image obtained by receiving the reflected light of the second-wavelength light irradiated onto the user; object region extraction means for extracting, based on the first and second images, an object region in which an object representing a predetermined skin part of the user's body is displayed, from the skin display region of a display image that includes a skin display region in which the user's skin is displayed; and distance change detection means for detecting a change in the relative distance from the irradiation means to the object according to a change in either the luminance values of the pixels constituting the object region on the display image or a value calculated from those luminance values.

- the apparatus can further include partial display region detection means for detecting, as a partial display region in which a part of the object is displayed, the region of the object region formed by the pixels whose luminance values are in the top n percent.

- in that case, the distance change detection means can detect a change in the relative distance to the part of the object displayed in the partial display region according to a change in either the luminance values of the pixels constituting the partial display region of the object region on the display image or a value calculated from those luminance values.
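Selecting the partial display region can be sketched as below. This is a minimal illustration under stated assumptions: the luminance image is a list of rows, the object region is a set of (row, column) coordinates, and "top n percent" is read as the ceil(n% of the region's pixel count) brightest pixels. `top_percent_region` is a hypothetical name, and ties at the cutoff are resolved arbitrarily.

```python
import math
from typing import List, Set, Tuple

Pixel = Tuple[int, int]  # (row, column)


def top_percent_region(lum: List[List[int]], object_region: Set[Pixel], n: float) -> Set[Pixel]:
    """Return the pixels of object_region whose luminance is in the top n percent."""
    if not 0 < n <= 100:
        raise ValueError("n must be in (0, 100]")
    # Sort the object pixels from brightest to darkest.
    ranked = sorted(object_region, key=lambda p: lum[p[0]][p[1]], reverse=True)
    # Keep at least one pixel (when any exist) so a small region is not dropped entirely.
    k = max(1, math.ceil(len(ranked) * n / 100))
    return set(ranked[:k])


lum = [[10, 50, 90],
       [20, 80, 30]]
region = {(r, c) for r in range(2) for c in range(3)}
print(sorted(top_percent_region(lum, region, 50)))  # [(0, 1), (0, 2), (1, 1)]
```

The mean luminance of the returned pixels could then be tracked over frames in the same way as for the whole object region.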

- the object region extraction means can detect, based on the distribution of luminance values of the pixels constituting the skin display region on the display image, a shape region representing the shape of the object on the skin display region, and extract the object region corresponding to that shape region from the skin display region.

- the distance change detection means can detect a change in the relative distance from the irradiation means to the object according to a change in the average of the luminance values of the pixels constituting the object region on the display image.
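Detecting a distance change from the average luminance of the object region can be sketched as follows. This is a minimal illustration, assuming that a brighter reflection means the object has moved closer to the irradiation means (the reflected light from an active source weakens with distance); the function name and the `tolerance` dead band for noise are hypothetical.

```python
from statistics import mean


def distance_change(prev_mean: float, curr_mean: float, tolerance: float = 1.0) -> str:
    """Classify the relative distance change from two mean luminance values.

    Assumes brighter means closer to the irradiation means; `tolerance` is a
    hypothetical dead band that suppresses small, noise-driven changes.
    """
    delta = curr_mean - prev_mean
    if delta > tolerance:
        return "closer"
    if delta < -tolerance:
        return "farther"
    return "unchanged"


prev = mean([100, 110, 120])  # object region luminances in the previous frame
curr = mean([130, 140, 150])  # object region luminances in the current frame
print(distance_change(prev, curr))  # closer
```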

- the first wavelength $\lambda_1$ and the second wavelength $\lambda_2$ can satisfy $\lambda_1 < \lambda_2$, $630\ \text{nm} \le \lambda_1 \le 1000\ \text{nm}$, and $900\ \text{nm} \le \lambda_2 \le 1100\ \text{nm}$.
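The wavelength conditions can be expressed as a simple check. This sketch reads the conditions as $\lambda_1 < \lambda_2$, $630 \le \lambda_1 \le 1000$ nm and $900 \le \lambda_2 \le 1100$ nm (a reconstruction of garbled symbols, so treat it as an assumption); the function name is hypothetical. The pair 870 nm / 950 nm used in the embodiment satisfies it.

```python
def satisfies_wavelength_conditions(l1_nm: float, l2_nm: float) -> bool:
    """Check lambda1 < lambda2, 630 <= lambda1 <= 1000 and 900 <= lambda2 <= 1100 (nm)."""
    return l1_nm < l2_nm and 630 <= l1_nm <= 1000 and 900 <= l2_nm <= 1100


print(satisfies_wavelength_conditions(870, 950))  # True
```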

- a first information processing method according to the first aspect is an information processing method of an information processing apparatus that detects a change in distance to a user and that includes irradiation means, acquisition means, object region extraction means, and distance change detection means. In this method, the irradiation means irradiates the user with light of a first wavelength and light of a second wavelength different from the first wavelength; the acquisition means acquires a first image obtained by receiving the reflected light of the first-wavelength light irradiated onto the user and a second image obtained by receiving the reflected light of the second-wavelength light irradiated onto the user; the object region extraction means extracts, based on the first and second images, an object region in which an object representing a predetermined skin part of the user's body is displayed, from the skin display region of a display image that includes a skin display region in which the user's skin is displayed; and the distance change detection means detects a change in the relative distance from the irradiation means to the object according to a change in either the luminance values of the pixels constituting the object region on the display image or a value calculated from those luminance values.

- the first program according to the first aspect of the present invention causes a computer that controls an information processing apparatus including irradiation means for irradiating the user with light of a first wavelength and light of a second wavelength different from the first wavelength to function as: acquisition means for acquiring a first image obtained by receiving the reflected light of the first-wavelength light irradiated onto the user and a second image obtained by receiving the reflected light of the second-wavelength light irradiated onto the user; object region extraction means for extracting, based on the first and second images, an object region in which an object representing a predetermined skin part of the user's body is displayed, from the skin display region of a display image that includes a skin display region in which the user's skin is displayed; and distance change detection means for detecting a change in the relative distance from the irradiation means to the object according to a change in either the luminance values of the pixels constituting the object region on the display image or a value calculated from those luminance values.

- the first electronic device according to the first aspect includes: irradiation means for irradiating the user with light of a first wavelength and light of a second wavelength different from the first wavelength; acquisition means for acquiring a first image obtained by receiving the reflected light of the first-wavelength light irradiated onto the user and a second image obtained by receiving the reflected light of the second-wavelength light irradiated onto the user; object region extraction means for extracting, based on the first and second images, an object region in which an object representing a predetermined skin part of the user's body is displayed, from the skin display region of a display image that includes a skin display region in which the user's skin is displayed; distance change detection means for detecting a change in the relative distance from the irradiation means to the object according to a change in either the luminance values of the pixels constituting the object region on the display image or a value calculated from those luminance values; and means for performing a predetermined process corresponding to the detection of the change in the relative distance.

- in the first aspect of the present invention, a first image obtained by receiving the reflected light of the first-wavelength light irradiated onto the user and a second image obtained by receiving the reflected light of the second-wavelength light irradiated onto the user are acquired; based on the first and second images, an object region in which an object representing a predetermined skin part of the user's body is displayed is extracted from the skin display region on the display image; and a change in the relative distance from the irradiation means to the object is detected according to a change in either the luminance values of the pixels constituting the object region on the display image or a value calculated from those luminance values.

- a second information processing apparatus is an information processing apparatus that extracts, from a captured image of the user, the shape of an object representing a predetermined skin part of the user's body. It includes: irradiation means for irradiating the user with light of a first wavelength and light of a second wavelength different from the first wavelength; acquisition means for acquiring a first image obtained by receiving the reflected light of the first-wavelength light irradiated onto the user and a second image obtained by receiving the reflected light of the second-wavelength light irradiated onto the user; difference value calculation means for calculating, for each pixel constituting the first or second image, a difference value representing the difference in reflectance between the light of the first wavelength and the light of the second wavelength; skin region extraction means for extracting a skin region representing the user's skin based on whether the difference value is equal to or greater than a predetermined threshold; and shape region extraction means for extracting a shape region representing the shape of the object on the skin region. The shape region extraction means extracts the shape region based on the distribution of luminance values of the pixels constituting the region corresponding to the skin region on a display image in which the object and the parts of the user corresponding to the region other than the shape region on the skin region are displayed.

- the difference value calculation means can calculate, as the difference value, a value obtained by normalizing the difference obtained by subtracting the luminance value of the second image from the luminance value of the first image.

- the difference value calculation means can calculate a ratio between the luminance value of the first image and the luminance value of the second image as the difference value.
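The two forms of difference value can be sketched per pixel as follows. The text does not say what the difference is normalized by; this sketch assumes normalization by the first-image luminance and expresses the result in percent. The function names and the handling of dark (zero-luminance) pixels are hypothetical choices.

```python
def normalized_difference(i1: float, i2: float) -> float:
    """(I1 - I2) as a percentage of I1; normalizing by I1 is an assumption."""
    if i1 <= 0:
        return 0.0  # no usable signal; treated as "no difference"
    return (i1 - i2) * 100.0 / i1


def luminance_ratio(i1: float, i2: float) -> float:
    """I1 / I2; a dark second-image pixel yields infinity (or 1.0 if both are dark)."""
    if i2 <= 0:
        return float("inf") if i1 > 0 else 1.0
    return i1 / i2


print(normalized_difference(100, 80), luminance_ratio(100, 80))  # 20.0 1.25
```

Normalizing makes the value less dependent on overall brightness, so a single threshold can work for both near and far skin.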

- in a second information processing method, the information processing apparatus includes irradiation means, acquisition means, difference value calculation means, skin region extraction means, and shape region extraction means. The irradiation means irradiates the user with light of a first wavelength and light of a second wavelength different from the first wavelength; the acquisition means acquires a first image obtained by receiving the reflected light of the first-wavelength light irradiated onto the user and a second image obtained by receiving the reflected light of the second-wavelength light irradiated onto the user; the difference value calculation means calculates, for each pixel constituting the first or second image, a difference value representing the difference in reflectance between the light of the first wavelength and the light of the second wavelength; the skin region extraction means extracts a skin region representing the user's skin based on whether the difference value calculated for each pixel is equal to or greater than a predetermined threshold; and the shape region extraction means extracts a shape region representing the shape of the object on the skin region, based on the distribution of luminance values of the pixels constituting the region corresponding to the skin region on a display image in which the object and the parts of the user corresponding to the region other than the shape region on the skin region are displayed.

- a second program causes a computer that controls an information processing apparatus, which extracts from a captured image of the user the shape of an object representing a predetermined skin part of the user's body and which includes irradiation means for irradiating the user with light of a first wavelength and light of a second wavelength different from the first wavelength, to function as: acquisition means for acquiring a first image obtained by receiving the reflected light of the first-wavelength light irradiated onto the user and a second image obtained by receiving the reflected light of the second-wavelength light irradiated onto the user; difference value calculation means for calculating, for each pixel constituting the first or second image, a difference value representing the difference in reflectance between the light of the first wavelength and the light of the second wavelength; skin region extraction means for extracting a skin region representing the user's skin based on whether the difference value is equal to or greater than a predetermined threshold; and shape region extraction means for extracting a shape region representing the shape of the object on the skin region. The shape region extraction means extracts the shape region based on the distribution of luminance values of the pixels constituting the region corresponding to the skin region on a display image in which the object and the parts of the user corresponding to the region other than the shape region on the skin region are displayed.

- a second electronic device is an electronic device that extracts, from a captured image of the user, the shape of an object representing a predetermined skin part of the user's body. It includes: irradiation means for irradiating the user with light of a first wavelength and light of a second wavelength different from the first wavelength; acquisition means for acquiring a first image obtained by receiving the reflected light of the first-wavelength light irradiated onto the user and a second image obtained by receiving the reflected light of the second-wavelength light irradiated onto the user; difference value calculation means for calculating, for each pixel constituting the first or second image, a difference value representing the difference in reflectance between the two wavelengths; skin region extraction means for extracting a skin region representing the user's skin; and shape region extraction means for extracting a shape region representing the shape of the object on the skin region. The shape region extraction means extracts the shape region based on the distribution of luminance values of the pixels constituting the region corresponding to the skin region on a display image in which the object and the parts of the user corresponding to the region other than the shape region on the skin region are displayed.

- in the second aspect of the present invention, a first image obtained by receiving the reflected light of the first-wavelength light irradiated onto the user and a second image obtained by receiving the reflected light of the second-wavelength light irradiated onto the user are acquired; for each pixel constituting the acquired first or second image, a difference value representing the difference in reflectance between the light of the first wavelength and the light of the second wavelength is calculated; a skin region representing the user's skin is extracted based on whether the calculated difference value is equal to or greater than a predetermined threshold; and a shape region representing the shape of the object on the skin region is extracted.

- according to the first aspect of the present invention, the movement of the user's hand or the like can be easily detected.

- according to the second aspect of the present invention, it is possible to extract an accurate shape of the user's hand or the like at high speed while suppressing an increase in the amount of computation required for the series of processes.

- FIG. 1 shows a configuration example of an information processing system 1 according to the present embodiment.

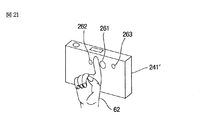

- the information processing system 1 executes a predetermined process according to a gesture (or posture) using a user's hand, and includes an information processing device 21, a camera 22, and a light emitting device 23.

- for example, the user changes the shape of his or her hand in front of the lens surface of the camera 22, or moves the hand.

- the information processing system 1 recognizes the shape and movement of the user's hand and executes a predetermined process corresponding to the recognition result.

- it is assumed that the user moves the hand or changes its shape in front of the lens surface of the camera 22, and performs the gesture (or posture) with the hand at a position closer to the lens surface of the camera 22 than the face or chest.

- the information processing device 21 controls the camera 22 and the light emitting device 23. Further, the information processing apparatus 21 recognizes the shape and movement of the user's hand based on the captured image obtained by the imaging of the camera 22, and executes predetermined processing corresponding to the recognition result.

- the camera 22 has a lens used for imaging a subject such as a user, and the front surface of the lens is covered with a visible light cut filter 22a that blocks visible light.

- the camera 22 therefore receives only the reflected light of the invisible light that the light emitting device 23 irradiates onto the subject, and supplies the resulting captured image to the information processing device 21.

- specifically, the camera 22 receives only the reflected light of the first wavelength (for example, near-infrared light of 870 [nm]), which is invisible light irradiated onto the subject by the light emitting device 23, and supplies the resulting first captured image to the information processing device 21.

- likewise, the camera 22 receives only the reflected light of the second wavelength different from the first wavelength (for example, near-infrared light of 950 [nm]), which is invisible light irradiated onto the subject by the light emitting device 23, and supplies the resulting second captured image to the information processing apparatus 21.

- external light such as sunlight or fluorescent lamps contains infrared components (for example, near-infrared light at 870 [nm] and at 950 [nm]) as well as visible light. Because the light receiving sensitivity is higher in the visible light region than in the infrared region, providing the visible light cut filter 22a reduces the influence of visible light. The camera 22 can thus receive the reflected light of the light irradiated onto the subject by the light emitting device 23 almost without influence from visible light, which improves the robustness of skin detection based on spectral reflectance characteristics.

- although the front surface of the lens of the camera 22 is covered with the visible light cut filter 22a here, the camera 22 may also be configured so that the front surface of its lens is not covered with the visible light cut filter 22a.

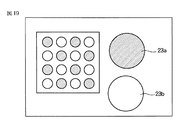

- the light emitting device 23 includes LEDs (light emitting diodes) 23a1 and 23a2 that emit light of the first wavelength, and LEDs 23b1 and 23b2 that emit light of the second wavelength.

- when there is no need to distinguish between the LEDs 23a1 and 23a2, they are simply referred to as the LED 23a.

- when there is no need to distinguish between the LEDs 23b1 and 23b2, they are simply referred to as the LED 23b.

- the LED 23a and the LED 23b emit light alternately according to the control of the information processing device 21.

- the outputs of the LED 23a and the LED 23b are adjusted so that the reflected light of the first wavelength and the reflected light of the second wavelength received by the camera 22 have the same intensity (light quantity).

- specifically, if the relative sensitivity of the camera 22 to light of the first wavelength is the same as its relative sensitivity to light of the second wavelength, the intensities (light quantities) of the reflected light received by the camera 22 are adjusted to be the same, so that for an object having the same reflectance at the first and second wavelengths (for example, a mirror surface), the luminance value obtained when irradiating it with light of the first wavelength equals the luminance value obtained when irradiating it with light of the second wavelength.

- however, the sensitivity of the camera 22 usually decreases with increasing distance from the visible light region; for example, the sensitivity at 950 [nm] is 1/2 or less of the sensitivity at 870 [nm].

- therefore, the outputs of the LEDs 23a and 23b are adjusted, for example according to the relative sensitivity characteristics of the camera 22, so that for an object having the same reflectance at the first and second wavelengths, the luminance value obtained when it is irradiated with light of the first wavelength equals the luminance value obtained when it is irradiated with light of the second wavelength.
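The output adjustment can be illustrated with simple arithmetic. This sketch assumes a linear model in which luminance is proportional to LED output times camera relative sensitivity times reflectance; under that assumption, equal luminance on an equal-reflectance object requires $P_2 S_2 = P_1 S_1$. The function name is hypothetical. With the sensitivity at 950 nm taken as exactly half that at 870 nm, the LED 23b would need twice the output of the LED 23a (more, if the sensitivity is lower still).

```python
def second_led_output(p1: float, s1: float, s2: float) -> float:
    """Output for the second-wavelength LED that matches the first LED's luminance.

    Assumes luminance = k * output * sensitivity * reflectance, so equal
    luminance on an object with equal reflectance needs p2 * s2 == p1 * s1.
    """
    if s2 <= 0:
        raise ValueError("sensitivity must be positive")
    return p1 * s1 / s2


# Relative sensitivity at 950 nm taken as half of that at 870 nm.
print(second_led_output(1.0, s1=1.0, s2=0.5))  # 2.0
```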

- the LEDs 23a and the LEDs 23b are arranged alternately in a grid pattern, and a diffusion plate 23c that uniformly diffuses the light emitted by the LEDs 23a and 23b is provided in front of them. As a result, the subject is evenly irradiated with light of the first or second wavelength.

- the light emitting device 23 is disposed at a position where the light emitted from the LED 23a or the LED 23b is reliably irradiated to at least the user's hand.

- since the user changes the shape of the hand in front of the lens surface of the camera 22, the light emitting device 23 is disposed close to the camera 22, for example.

- in the following, the light emitting device 23 is described as being disposed near the camera 22, but the positional relationship between the light emitting device 23 and the camera 22 is not limited to this. Any positional relationship may be used as long as the user's hand located in front of the lens surface of the camera 22 can be irradiated and the reflected light obtained by the irradiation can be reliably received by the camera 22.

- for example, if the light emitting device 23 is separated from the camera 22 and placed closer to the user's hand than the camera 22, the distance between the light emitting device 23 and the user's hand becomes shorter than when the two are arranged close to each other, so the power consumption of the light emitting device 23 can be reduced.

- FIG. 2 shows a configuration example of the information processing apparatus 21.

- the information processing apparatus 21 includes a control unit 41, a binarization unit 42, a skin extraction unit 43, a threshold value determination unit 44, a mask image generation unit 45, and a shape extraction unit 46.

- the control unit 41 controls the light emitting device 23 to cause the LEDs 23a and the LEDs 23b to emit light alternately.

- the first captured image and the second captured image are supplied from the camera 22 to the binarization unit 42.

- based on the first and second captured images supplied from the camera 22, the binarization unit 42 extracts (detects), from the first captured image, a skin region representing the user's skin and the region other than the skin region.

- the binarization unit 42 employs the first captured image as the target for extracting the skin region and the region other than the skin region.

- alternatively, the second captured image or the like can be adopted as the extraction target.

- the binarization unit 42 generates a binarized skin image by binarizing the pixel values of the pixels constituting the extracted skin region and those of the pixels constituting the region other than the skin region into different values (for example, 0 and 1), and supplies the binarized skin image to the skin extraction unit 43 and the shape extraction unit 46.
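Generating a binarized skin image can be sketched as follows. This is a minimal illustration, not the unit's actual implementation: it assumes the difference value is the first-image luminance minus the second-image luminance, normalized by the first-image luminance and expressed in percent, uses a hypothetical threshold of 10, and maps skin to 1 and non-skin to 0.

```python
from typing import List


def binarize_skin(first: List[List[float]], second: List[List[float]], threshold: float) -> List[List[int]]:
    """Return 1 for skin pixels and 0 otherwise.

    A pixel counts as skin when (I1 - I2) * 100 / I1 >= threshold; the
    normalization and the threshold value are assumptions for illustration.
    """
    result = []
    for row1, row2 in zip(first, second):
        out_row = []
        for i1, i2 in zip(row1, row2):
            diff = (i1 - i2) * 100.0 / i1 if i1 > 0 else 0.0
            out_row.append(1 if diff >= threshold else 0)
        result.append(out_row)
    return result


first = [[126, 40], [60, 0]]   # first captured image (870 nm)
second = [[100, 48], [60, 0]]  # second captured image (950 nm)
print(binarize_skin(first, second, threshold=10.0))  # [[1, 0], [0, 0]]
```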

- the first captured image is supplied from the camera 22 to the skin extraction unit 43 and the mask image generation unit 45.

- based on the binarized skin image supplied from the binarization unit 42, the skin extraction unit 43 extracts, from the first captured image supplied from the camera 22, the region corresponding to the skin region in the binarized skin image (the region in which the user's skin is displayed).

- the skin extraction unit 43 generates a skin image including the extracted region and supplies the skin image to the threshold value determination unit 44.

- the skin extraction unit 43 may supply the extracted region to the threshold value determination unit 44 as a skin image.

- the threshold value determination unit 44 creates a histogram of the skin image (that is, of the luminance values of the pixels constituting the skin image) supplied from the skin extraction unit 43. The threshold value determination unit 44 then determines, based on the created histogram, a mask threshold value used to generate a mask image described later, and supplies it to the mask image generation unit 45.

- the threshold value determination unit 44 uses, for example, a histogram of the skin image as the distribution of the luminance values of the pixels constituting the skin image in order to determine the mask threshold value.


- however, the information used is not limited to the histogram of the skin image; any information representing the distribution of luminance values of the pixels constituting the skin image may be used.

- the threshold value determination unit 44 calculates the maximum or minimum of the luminance values of the pixels constituting the skin image from the skin image histogram and determines the mask threshold value accordingly; however, the method is not limited to this.

- for example, the threshold value determination unit 44 may calculate the average, variance, minimum, maximum, and the like of the luminance values of the pixels constituting the skin image based on the histogram of the skin image, and determine the mask threshold value using the variance, minimum, maximum, or the like.
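One way to derive a mask threshold range from the histogram is sketched below. The rule is hypothetical and not the method described here: it keeps the brighter part of the skin histogram, from min + ratio × (max − min) up to max, with a hypothetical `ratio` of 0.5. The motivation is an assumption: since the hand is held closer to the camera than the face, and nearer skin would reflect more of the emitted light, the hand would tend to occupy the bright end of the histogram.

```python
from collections import Counter
from typing import Iterable, Tuple


def mask_threshold(skin_luminances: Iterable[int], ratio: float = 0.5) -> Tuple[float, float]:
    """Return a (lower, upper) luminance range from the skin-image histogram.

    Hypothetical rule: keep the brightest part of the skin histogram,
    from min + ratio * (max - min) up to max.
    """
    histogram = Counter(skin_luminances)  # luminance value -> pixel count
    if not histogram:
        raise ValueError("skin image has no pixels")
    lowest, highest = min(histogram), max(histogram)
    return lowest + ratio * (highest - lowest), float(highest)


print(mask_threshold([40, 42, 45, 120, 130, 200]))  # (120.0, 200.0)
```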

- the mask image generation unit 45 generates a mask image from the first captured image supplied from the camera 22 based on the mask threshold value from the threshold value determination unit 44 and supplies the mask image to the shape extraction unit 46.

- the mask image is an image obtained by binarizing the first captured image into a mask region, made up of pixels whose luminance values fall within the luminance range specified by the mask threshold value, and a non-mask region made up of the other pixels.
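The mask image generation can be sketched as a range test on each pixel. This minimal illustration assumes the mask threshold is given as an inclusive (lower, upper) luminance range and encodes the mask region as 1 and the non-mask region as 0; the function name is hypothetical.

```python
from typing import List


def make_mask(image: List[List[int]], lower: float, upper: float) -> List[List[int]]:
    """Return 1 (mask region) where lower <= luminance <= upper, else 0."""
    return [[1 if lower <= v <= upper else 0 for v in row] for row in image]


print(make_mask([[50, 130], [210, 150]], 120.0, 200.0))  # [[0, 1], [0, 1]]
```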

- the shape extraction unit 46 extracts, from the binarized skin image supplied from the binarization unit 42, the region corresponding to the mask region in the mask image as a shape region representing the shape of, for example, the user's hand.

- the shape extraction unit 46 recognizes the shape of the hand based on the extracted shape region, performs processing according to the recognition result, and outputs the processing result to the subsequent stage.
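Extracting the shape region can be sketched as a pixel-wise logical AND. This reads "the region of the binarized skin image corresponding to the mask region" as the skin pixels that also fall in the mask region, assuming both images are encoded as 0/1 with 1 meaning skin or mask; the function name is hypothetical.

```python
from typing import List


def extract_shape(binarized_skin: List[List[int]], mask: List[List[int]]) -> List[List[int]]:
    """Keep skin pixels (1) that also lie inside the mask region (1)."""
    return [[s & m for s, m in zip(skin_row, mask_row)]
            for skin_row, mask_row in zip(binarized_skin, mask)]


print(extract_shape([[1, 1], [0, 1]], [[0, 1], [1, 1]]))  # [[0, 1], [0, 1]]
```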

- although the binarization unit 42 extracts the skin region and the region other than the skin region from the first captured image, it may instead extract them from the second captured image. In this case, the second captured image from the camera 22 is supplied to the skin extraction unit 43 and the mask image generation unit 45 instead of the first captured image.

- the skin extraction unit 43 generates a skin image from the second captured image

- the mask image generation unit 45 generates a mask image from the second captured image

- the skin region and the region other than the skin region may also be extracted from a new composite image obtained by combining the first and second captured images at a predetermined ratio (for example, a composite image combined at a 1:1 ratio, whose luminance values are the averages of the luminance values of corresponding pixels in the first and second captured images).
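Combining the two captured images at a predetermined ratio can be sketched as a weighted per-pixel blend. This minimal illustration assumes equally sized images as lists of rows; the weight parameter `w1` and the function name are hypothetical, and `w1 = 0.5` gives the 1:1 average mentioned above.

```python
from typing import List


def composite(first: List[List[float]], second: List[List[float]], w1: float = 0.5) -> List[List[float]]:
    """Blend two captured images; w1 = 0.5 gives the 1:1 average of corresponding pixels."""
    if not 0.0 <= w1 <= 1.0:
        raise ValueError("w1 must be in [0, 1]")
    return [[w1 * a + (1.0 - w1) * b for a, b in zip(r1, r2)]
            for r1, r2 in zip(first, second)]


print(composite([[100, 40]], [[80, 60]]))  # [[90.0, 50.0]]
```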

- in short, any image in which the same subject as that displayed in the first or second captured image is displayed at the same position can be adopted as the image from which the skin extraction unit 43 extracts the regions.

- FIG. 5 illustrates a binarized skin image generated by the binarization unit 42 based on the first captured image and the second captured image.

- FIG. 3 shows the reflection characteristics of human skin with respect to irradiation light having different wavelengths.

- the horizontal axis indicates the wavelength of light applied to the human skin

- the vertical axis indicates the reflectance of the light applied to the human skin.

- the reflectance of the reflected light obtained by irradiating human skin with 870 [nm] light is about 63 [%], and the reflectance of the reflected light obtained by irradiating it with 950 [nm] light is about 50 [%].

- for objects other than human skin, the change in reflectance around 800 to 1000 [nm] is often gradual. Although not illustrated, in the case of hair, for example, the reflectance gradually increases with wavelength in the vicinity of 800 to 1000 [nm]: the reflectance of the reflected light obtained by irradiating hair with 870 [nm] light is about 6 [%], and that obtained with 950 [nm] light is about 8 [%].
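A worked comparison using the approximate reflectances quoted above shows why the two wavelengths separate skin from hair. The sketch assumes the LED outputs have been equalized as described earlier, so that luminance differences track reflectance differences; the function name is hypothetical. Skin gives a positive difference (ratio above 1), hair a negative one (ratio below 1).

```python
from typing import Tuple


def difference_and_ratio(r870: float, r950: float) -> Tuple[float, float]:
    """Reflectance difference (percentage points) and ratio between 870 nm and 950 nm."""
    return r870 - r950, r870 / r950


# Approximate reflectances quoted in the text, in percent (870 nm, 950 nm).
for material, (r870, r950) in {"skin": (63.0, 50.0), "hair": (6.0, 8.0)}.items():
    diff, ratio = difference_and_ratio(r870, r950)
    print(material, diff, round(ratio, 2))
# skin 13.0 1.26
# hair -2.0 0.75
```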

- FIG. 4 shows an example of a first captured image obtained by receiving the reflected light of 870 [nm] light irradiated onto the user, and an example of a second captured image obtained by receiving the reflected light of 950 [nm] light irradiated onto the user.

- in the first captured image, the user's face 61 and hand 62 are displayed as the user's skin area, and the shirt 63 worn by the user and the background 64 are displayed as areas other than the user's skin area.

- in the second captured image, the user's face 81 and hand 82 are displayed as the user's skin area, and the shirt 83 worn by the user and the background 84 are displayed as areas other than the user's skin area.

- in the reflection characteristics of the user's skin, the reflectance of light with a wavelength of 870 [nm] is greater than that of light with a wavelength of 950 [nm].

- therefore, when 870 [nm] light is irradiated, the light reflected from the user's skin that enters the lens of the camera 22 is brighter than the light reflected under 950 [nm] irradiation.

- accordingly, the luminance values of the pixels constituting the user's skin area (face 61 and hand 62) in the first captured image are larger than those of the pixels constituting the user's skin area (face 81 and hand 82) in the second captured image.

- on the other hand, for parts other than the user's skin, the reflectance of light with a wavelength of 870 [nm] is often the same as or smaller than that of light with a wavelength of 950 [nm].

- therefore, when 870 [nm] light is irradiated, the light reflected from parts other than the user's skin that enters the lens of the camera 22 is the same as or darker than the light reflected under 950 [nm] irradiation.

- accordingly, the luminance values of the pixels constituting the areas other than the user's skin area (the shirt 63 and the background 64) in the first captured image are the same as or smaller than those of the pixels constituting the areas other than the user's skin area (the shirt 83 and the background 84) in the second captured image.

- therefore, for parts other than the user's skin, subtracting the luminance value of each corresponding pixel in the second captured image from the luminance value of the pixel in the first captured image gives a difference of 0 or less (a non-positive value). For the skin area, the same difference is positive.

- the binarization unit 42 calculates the difference between the luminance values of corresponding pixels of the first and second captured images and, based on the calculated difference, extracts the user's skin area and the area other than the user's skin area. It then generates a binarized skin image in which the extracted skin area has the value 1 and the extracted area other than the skin area has the value 0.

- specifically, the calculated difference is the luminance value of a pixel of the first captured image minus the luminance value of the corresponding pixel of the second captured image. When this difference is positive, the binarization unit 42 extracts the pixel as a pixel constituting the user's skin area. When the difference is not positive, it extracts the pixel as a pixel constituting the area other than the user's skin area.

- the binarization unit 42 sets the pixel value of pixels extracted as constituting the user's skin area to 1 and that of pixels extracted as constituting the area other than the skin area to 0. This produces a binarized skin image, which it supplies to the skin extraction unit 43 and the shape extraction unit 46.
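The sign test in the bullets above can be sketched as follows. This minimal illustration assumes the two captured images are aligned 2-D lists of luminance values. The name `binarize_by_sign` is hypothetical. The sample values reuse the skin and hair reflectances quoted for FIG. 3 purely as stand-in luminances.

```python
def binarize_by_sign(first, second):
    """Binarized skin image: 1 where L1 - L2 > 0 (skin), else 0 (non-skin).

    `first` and `second` are aligned 2-D lists of luminance values captured
    under the first (870 nm) and second (950 nm) wavelengths.
    """
    return [
        [1 if l1 - l2 > 0 else 0 for l1, l2 in zip(row1, row2)]
        for row1, row2 in zip(first, second)
    ]


# Stand-in luminances: skin-like (63 vs 50), hair-like (6 vs 8), flat background.
binary = binarize_by_sign([[63, 6, 40]], [[50, 8, 40]])  # [[1, 0, 0]]
```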

- in practice, the difference calculated for parts other than the skin may still be positive, although it is smaller than the difference calculated for the skin. Therefore, a pixel whose difference is below a predetermined threshold should preferably be treated as part of the area other than the user's skin and given the pixel value 0, even if its difference is positive.

- that is, the binarization unit 42 preferably checks whether the calculated difference is equal to or greater than a predetermined threshold. If it is, the corresponding pixel is extracted as a pixel constituting the user's skin area. If it is not, the pixel is extracted as a pixel constituting the area other than the skin area. The binarized skin image is generated from these results.
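Adding the predetermined threshold changes only the comparison. The sketch below uses the same assumptions as the sign-based version. The threshold value in the example is arbitrary and not taken from the source.

```python
def binarize_by_threshold(first, second, threshold):
    """1 where L1 - L2 >= threshold, else 0; suppresses small positive differences."""
    return [
        [1 if l1 - l2 >= threshold else 0 for l1, l2 in zip(row1, row2)]
        for row1, row2 in zip(first, second)
    ]


# A skin pixel (difference 30) and a non-skin pixel with a small positive difference (2).
binary = binarize_by_threshold([[130, 52]], [[100, 50]], threshold=10)  # [[1, 0]]
```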

- however, the luminance values L1 and L2 of a skin-region pixel in the first and second captured images can change with the distance from the light emitting device 23 to the subject, the imaging conditions of the camera 22, and the like. As a result, the difference L1-L2 in the skin region does not always take the constant value C1.

- in that case, the binarization unit 42 would have to use a different threshold for each difference L1-L2, which makes the processing very complicated.

- therefore, the binarization unit 42 normalizes the difference L1-L2 so that it can be treated as the difference between the reflectance of light of the first wavelength and the reflectance of light of the second wavelength.

- because the binarization unit 42 normalizes (divides) the difference L1-L2 by one of the luminance values L1 or L2, a single threshold prepared in advance can be applied to the normalized difference (L1-L2)/L1 or (L1-L2)/L2. There is then no need to prepare a different threshold for each difference L1-L2.

- the difference may also be normalized by a value related to the luminance value L1 or L2, for example (L1+L2)/2 or (L1+L2).
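Normalization lets one threshold serve pixels at different distances. The minimal sketch below supports the four denominators listed above: L1, L2, (L1+L2)/2, and L1+L2. The function names, the `mode` strings, and the choice to treat a zero denominator as non-skin are all assumptions made for illustration.

```python
def normalized_difference(l1, l2, mode="l1"):
    """(L1 - L2) divided by L1, L2, (L1 + L2) / 2 or L1 + L2."""
    denominators = {
        "l1": l1,
        "l2": l2,
        "mean": (l1 + l2) / 2,
        "sum": l1 + l2,
    }
    if mode not in denominators:
        raise ValueError(f"unknown mode: {mode}")
    denom = denominators[mode]
    if denom == 0:
        return 0.0  # assumption: a completely dark pixel is treated as non-skin
    return (l1 - l2) / denom


def binarize_normalized(first, second, threshold, mode="l1"):
    """1 where the normalized difference reaches the single shared threshold."""
    return [
        [1 if normalized_difference(l1, l2, mode) >= threshold else 0
         for l1, l2 in zip(row1, row2)]
        for row1, row2 in zip(first, second)
    ]


first = [[200, 50, 100]]   # near skin, distant skin, non-skin
second = [[160, 40, 98]]
binary = binarize_normalized(first, second, threshold=0.1)  # [[1, 1, 0]]
```

The raw differences here are 40, 10, and 2. A fixed raw threshold such as 20 would miss the distant skin pixel, whereas both skin pixels normalize to 0.2.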

- since a single threshold suffices, the capacity of the built-in memory (not shown) in which the binarization unit 42 holds the threshold in advance can be reduced. Using the same threshold regardless of the difference L1-L2 also removes the need to change the threshold for each difference. This reduces the amount of calculation needed to generate the binarized skin image, so the image can be generated more quickly.

- alternatively, the binarization unit 42 may use the ratio L1/L2. A pixel is extracted as part of the skin region when L1/L2 is equal to or greater than a threshold, and as part of the non-skin region when it is less than the threshold.
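The ratio variant can be sketched in the same style. The source does not say what happens when L2 is 0. This sketch assumes such a pixel is skin only if L1 itself is positive. The names and the threshold value are illustrative.

```python
def is_skin_by_ratio(l1, l2, threshold):
    """Skin if L1 / L2 >= threshold."""
    if l2 == 0:
        # L1 / L2 is undefined here; assumption: skin only if L1 itself is positive.
        return l1 > 0
    return l1 / l2 >= threshold


def binarize_by_ratio(first, second, threshold):
    return [
        [1 if is_skin_by_ratio(l1, l2, threshold) else 0 for l1, l2 in zip(row1, row2)]
        for row1, row2 in zip(first, second)
    ]


binary = binarize_by_ratio([[200, 50, 100, 0]], [[160, 40, 98, 0]], threshold=1.1)
# ratios 1.25, 1.25, about 1.02 and undefined -> [[1, 1, 0, 0]]
```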

- alternatively, the binarization unit 42 may calculate the absolute value of the difference between the luminance values of corresponding pixels of the first and second captured images. It then extracts the user's skin part (skin area) and the other parts (area other than the skin area) according to whether that absolute difference is equal to or greater than a predetermined threshold, and generates the binarized skin image from the result.

- in this case too, normalizing by the luminance value L1 or L2 allows the same threshold to be used for any absolute difference.

- however, the absolute difference does not show which of L1 and L2 is larger, so the skin area should preferably be extracted with the luminance value also taken into account. For example, of the skin area extracted using the absolute difference, only the portion with a high luminance value L1 (or luminance value L2) may be kept as the final skin area.
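A minimal sketch of the absolute-difference variant with the additional luminance check follows. It normalizes by L1, which is one of the options named in the text. The `min_luminance` gate is a hypothetical parameter that stands in for "only the portion with a high luminance value L1".

```python
def binarize_abs_diff(first, second, diff_threshold, min_luminance):
    out = []
    for row1, row2 in zip(first, second):
        row = []
        for l1, l2 in zip(row1, row2):
            # |L1 - L2| normalized by L1 so one threshold fits all distances.
            norm = abs(l1 - l2) / l1 if l1 > 0 else 0.0
            # The sign is lost, so also require a bright L1 (hypothetical gate).
            row.append(1 if norm >= diff_threshold and l1 >= min_luminance else 0)
        out.append(row)
    return out


binary = binarize_abs_diff([[200, 10, 100]], [[160, 14, 98]],
                           diff_threshold=0.1, min_luminance=30)
# [[1, 0, 0]]: the dark pixel has |10 - 14| / 10 = 0.4 but fails the luminance check
```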

- FIG. 5 shows an example of a binarized skin image generated by the binarization unit 42.

- a black portion indicates a skin region represented by a pixel value 1.

- This skin area is composed of a face area 101 indicating the skin part of the user's face and a hand area 102 indicating the skin part of the user's hand.

- the face area 101 shown in FIG. 5 actually includes eyebrows, eyes, hair, and the like in addition to the skin part of the face. For simplicity of explanation, it is assumed here to consist of the skin part of the face only.

- a white portion indicates an area other than the skin area represented by a pixel value of 0.

- the binarization unit 42 supplies the generated binarized skin image to the skin extraction unit 43 and the shape extraction unit 46.

- based on the binarized skin image from the binarization unit 42, the skin extraction unit 43 extracts the area of the first captured image (supplied from the camera 22) that corresponds to the face area 101 and the hand area 102 in the binarized skin image, that is, the area where the face 61 and the hand 62 are displayed. The skin extraction unit 43 then generates a skin image including the extracted area.

- FIG. 6 shows an example of the skin image extracted by the skin extraction unit 43.

- in the skin image shown in FIG. 6, the user's face 61 and hand 62 are displayed.

- the user's face 61 in the skin image shown in FIG. 6 actually includes eyebrows, eyes, hair, and the like in addition to the skin portion of the face. For simplicity of explanation, the face 61 is assumed here to represent only the skin portion of the face.

- the skin extraction unit 43 multiplies the luminance values of corresponding pixels of the binarized skin image from the binarization unit 42 and the first captured image from the camera 22, respectively.

- from the pixels constituting the first captured image, the skin extraction unit 43 extracts the area made up of pixels whose multiplication result is not 0 (the area where the face 61 and the hand 62 are displayed) and generates a skin image including that area.

- as a result, two areas of the first captured image are extracted as they are: the face 61 in the area corresponding to the face area 101, and the hand 62 in the area corresponding to the hand area 102 of the binarized skin image. The luminance value of the area corresponding to the non-skin area of the binarized skin image (shown in white in FIG. 6) is set to, for example, 255. In this way, a skin image as shown in FIG. 6 is generated from the first captured image.
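The multiply-and-fill step above can be sketched as follows, assuming 2-D lists for both inputs. The name `extract_skin_image` is hypothetical, and 255 is the example background value from the text. Following the "result is not 0" rule, a skin pixel whose luminance happens to be 0 also becomes background.

```python
def extract_skin_image(binary, first, background=255):
    """Keep first-image luminance where the binarized skin image is 1."""
    skin = []
    for brow, lrow in zip(binary, first):
        row = []
        for b, l in zip(brow, lrow):
            product = b * l  # 0 wherever the binarized skin image is 0
            row.append(product if product != 0 else background)
        skin.append(row)
    return skin


skin = extract_skin_image([[1, 0], [1, 1]], [[80, 40], [30, 0]])
# [[80, 255], [30, 255]]
```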

- the skin extraction unit 43 supplies the generated skin image to the threshold value determination unit 44.

- the threshold determination unit 44 determines a mask threshold used for generating a mask image based on the skin image from the skin extraction unit 43.

- FIG. 7 shows an example of the histogram of the skin image.

- the horizontal axis indicates the luminance value of the pixels constituting the skin image.

- the vertical axis indicates the number of pixels corresponding to the luminance value on the horizontal axis.

- the skin image of FIG. 6 also contains pixels with the luminance value 255 that make up the area shown in white. Their count is omitted from FIG. 7 because it is not used to determine the mask threshold.

- the threshold value determination unit 44 creates a histogram as shown in FIG. 7 for the luminance values of the pixels constituting the skin image from the skin extraction unit 43.

- because the LEDs 23a and 23b of the light emitting device 23 emit light from a position close to the camera 22, a part of the user that is closer to the camera 22 (light emitting device 23), in this case the hand, has a larger luminance value. Conversely, a part of the user that is farther from the camera 22, in this case the face or the like, has a smaller luminance value.

- the luminance value of the pixels constituting the skin portion of the hand existing at a position close to the camera 22 is larger than the luminance value of the pixels constituting the skin portion of the face existing at a position far from the camera 22.

- in the example of FIG. 7, luminance values 0 to 54 belong to the pixels constituting the face 61 (region), and luminance values 55 to 110 belong to the pixels constituting the hand 62.

- the threshold value determination unit 44 determines the luminance value at which the number of pixels is minimum (the luminance value 55 in this example) as the lower limit threshold Th_L, and the maximum luminance value (the luminance value 110 in this example) as the upper limit threshold Th_H.
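The source does not say how "the luminance value at which the number of pixels is minimum" is located. A global minimum would simply land on an empty bin at the edges of the histogram. As an assumption, this sketch searches for the valley between the two tallest peaks that are at least `min_separation` apart (a hypothetical parameter). It takes the largest luminance present as Th_H and ignores the background value 255, as the text does.

```python
def skin_histogram(skin_image, ignore=255):
    """Count pixels per luminance value, skipping the background value."""
    hist = [0] * 256
    for row in skin_image:
        for v in row:
            if v != ignore:
                hist[v] += 1
    return hist


def mask_thresholds(hist, min_separation=10):
    """Return (Th_L, Th_H): valley between the two main peaks, maximum luminance."""
    present = [v for v, c in enumerate(hist) if c > 0]
    if not present:
        raise ValueError("empty histogram")
    th_h = present[-1]  # upper limit: maximum luminance value
    p1 = max(range(len(hist)), key=lambda v: hist[v])  # tallest peak
    # Second peak: tallest bin at least min_separation away from p1 (assumption).
    far = [v for v in present if abs(v - p1) >= min_separation]
    if not far:
        return present[0], th_h  # single cluster: fall back to the minimum (assumption)
    p2 = max(far, key=lambda v: hist[v])
    a, b = sorted((p1, p2))
    th_l = min(range(a, b + 1), key=lambda v: hist[v])  # valley between peaks
    return th_l, th_h


# Synthetic histogram loosely shaped like FIG. 7: face 0-54, valley at 55, hand 56-110.
example_hist = [50 if v == 20 else 60 if v == 90 else 10 if v < 55
                else 2 if v == 55 else 12 if v <= 110 else 0 for v in range(256)]
th_l, th_h = mask_thresholds(example_hist)  # (55, 110)
```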

- although the upper limit threshold Th_H is determined here as the maximum luminance value, the threshold value determination unit 44 can also determine the mask thresholds using the average, variance, minimum, maximum, and the like of the luminance values of the pixels constituting the skin image.

- for example, the threshold value determination unit 44 may determine the lower limit threshold Th_L as one of the following: the average of the luminance values of the pixels constituting the skin image, one half of their maximum value, or the average of their minimum and maximum values.
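Each of these statistics is a one-line computation. The sketch below returns all three candidates so they can be compared. The function name and dictionary keys are illustrative, and the background value 255 is excluded as before.

```python
def lower_threshold_candidates(skin_image, ignore=255):
    """Candidate Th_L values from simple statistics of the skin pixels."""
    values = [v for row in skin_image for v in row if v != ignore]
    if not values:
        raise ValueError("skin image contains no skin pixels")
    lo, hi = min(values), max(values)
    return {
        "mean": sum(values) / len(values),  # average luminance
        "half_of_max": hi / 2,              # one half of the maximum
        "mid_range": (lo + hi) / 2,         # average of minimum and maximum
    }


candidates = lower_threshold_candidates([[10, 20, 255], [90, 100, 255]])
# {'mean': 55.0, 'half_of_max': 50.0, 'mid_range': 55.0}
```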

- alternatively, the threshold value determination unit 44 may prepare in advance a threshold determination function for each variance value, for example. It then determines the lower limit threshold Th_L using the function that corresponds to the variance of the luminance values of the pixels constituting the skin image.

- as the threshold determination function, a function whose variables are, for example, the minimum value, maximum value, or the like of the luminance values of the pixels constituting the skin image can be adopted.

- one alternative would be to generate a function f(x) representing the histogram, derive its first derivative f′(x), and determine the lower limit threshold Th_L from f′(x). Compared with that approach, the value used as Th_L can be calculated more easily here, so Th_L can be determined more quickly.

- the threshold value determination unit 44 determines, for example, the maximum luminance value of the pixels constituting the skin image as the upper limit threshold Th_H. As with the lower limit threshold Th_L, it can also determine Th_H from the average, variance, minimum, maximum, and the like of the luminance values of the pixels constituting the skin image.

- the threshold determination unit 44 supplies the determined lower limit threshold Th_L and upper limit threshold Th_H to the mask image generation unit 45 as mask thresholds.

- the mask image generation unit 45 detects a mask region and a non-mask region from the first captured image from the camera 22 based on the mask threshold values (lower threshold value Th_L and upper threshold value Th_H) from the threshold value determination unit 44, A mask image in which the detected mask area and non-mask area are binarized to different values is generated.

- specifically, the mask image generation unit 45 detects a mask region made up of pixels whose luminance values are equal to or higher than the lower limit threshold Th_L and equal to or lower than the upper limit threshold Th_H. This region corresponds to a part of the user that is close to the light emitting device 23 and therefore has a large luminance value (in this case, the hand).

- the mask image generation unit 45 also detects a non-mask region made up of pixels whose luminance values fall outside the range from the lower limit threshold Th_L to the upper limit threshold Th_H. This region corresponds to a part of the user that is far from the light emitting device 23 and therefore has a small luminance value (in this case, the face).

- the mask image generation unit 45 generates a mask image in which the detected mask area and non-mask area are binarized to different values.

- FIG. 8 shows an example of a mask image.

- in FIG. 8, the mask region 121 shown in black indicates the area of the corresponding first captured image whose luminance values are equal to or higher than the lower limit threshold Th_L and equal to or lower than the upper limit threshold Th_H.

- the non-mask region shown in white indicates the area of the corresponding first captured image whose luminance values are less than the lower limit threshold Th_L or greater than the upper limit threshold Th_H.

- when the luminance value of a pixel constituting the first captured image from the camera 22 is equal to or higher than the lower limit threshold Th_L and equal to or lower than the upper limit threshold Th_H, the mask image generation unit 45 detects that pixel as a pixel included in the mask region and converts its luminance value to the pixel value 1.

- when the luminance value is less than the lower limit threshold Th_L or greater than the upper limit threshold Th_H, the mask image generation unit 45 detects that pixel as a pixel included in the non-mask region and converts its luminance value to the pixel value 0.

- the converted pixel value is not a luminance value but a binary value of either 0 or 1.

- the mask image generation unit 45 thus generates a mask image made up of the mask region 121 (shown in black), consisting of pixels with the value 1, and the non-mask region (shown in white), consisting of pixels with the value 0. It supplies this mask image to the shape extraction unit 46.

- in the above, the threshold value determination unit 44 determines both the lower limit threshold Th_L and the upper limit threshold Th_H, but it may instead determine only one of them as the mask threshold.

- consider the case where the maximum luminance value of the pixels in the first captured image (supplied from the camera 22 to the mask image generation unit 45) corresponds to human skin, for example the luminance value 110 in FIG. 7. In that case, the threshold value determination unit 44 may determine only the lower limit threshold Th_L (for example, the luminance value 55) as the mask threshold.

- in this case, when the luminance value of a pixel constituting the first captured image from the camera 22 is equal to or higher than the lower limit threshold Th_L, the mask image generation unit 45 detects that pixel as a pixel included in the mask region and converts its luminance value to the pixel value 1. When the luminance value is less than the lower limit threshold Th_L, the mask image generation unit 45 detects that pixel as a pixel included in the non-mask region and converts its luminance value to the pixel value 0.

- when the threshold value determination unit 44 determines only the upper limit threshold Th_H as the mask threshold, the shape of the face 61, for example, may be extracted instead of the shape of the hand 62. In this case, the threshold value determination unit 44 determines, for example, the luminance value 55 shown in FIG. 7 as the upper limit threshold Th_H.
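The three mask variants described above (both thresholds, Th_L only, Th_H only) fit in one function if either bound may be omitted. This sketch assumes a 2-D list of luminance values. Passing `None` to mean "no bound" is an illustrative convention, not something the source specifies.

```python
def mask_image(first, th_l=None, th_h=None):
    """1 (mask region) where Th_L <= luminance <= Th_H, else 0 (non-mask region).

    Either bound may be None to skip that comparison (illustrative convention).
    """
    if th_l is None and th_h is None:
        raise ValueError("at least one mask threshold is required")
    return [
        [1 if (th_l is None or l >= th_l) and (th_h is None or l <= th_h) else 0
         for l in row]
        for row in first
    ]


first = [[30, 60, 110, 140]]
both = mask_image(first, 55, 110)       # [[0, 1, 1, 0]]
lower_only = mask_image(first, th_l=55) # [[0, 1, 1, 1]]
upper_only = mask_image(first, th_h=55) # [[1, 0, 0, 0]]: face-like pixels instead
```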

- the shape extraction unit 46 extracts, from the face area 101 and the hand area 102 in the binarized skin image from the binarization unit 42, the region corresponding to the mask region 121 in the mask image. This is, for example, a shape region representing the shape of the user's hand.

- more specifically, the shape extraction unit 46 uses the mask region 121 and the non-mask region of the mask image from the mask image generation unit 45 to tell two parts of the user apart by their relative distance from the light emitting device 23. The first is a part close to the light emitting device 23 that therefore has a large luminance value (in this case, the hand 62), which corresponds to the mask region 121. The second is a part far from the light emitting device 23 that therefore has a small luminance value (in this case, the face 61), which corresponds to the non-mask region.

- based on this difference in relative distance from the light emitting device 23, the shape extraction unit 46 then picks out the part of the user located close to the light emitting device 23 (for example, the hand 62) and extracts the shape region, in this case the region representing the shape of the hand.

- FIG. 9 shows a display example of an extracted image including a shape region extracted by the shape extraction unit 46.

- the shape region 141 represents the shape of the user's hand.

- specifically, the shape extraction unit 46 multiplies the value of each pixel constituting the mask image from the mask image generation unit 45 by the value of the corresponding pixel constituting the binarized skin image from the binarization unit 42.

- the shape extraction unit 46 then extracts, as the shape region 141, the part of the binarized skin image where the multiplication result is not 0. This is the part of the face area 101 and the hand area 102 (FIG. 5) that overlaps the mask region 121 (FIG. 8).
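Because both inputs are 0/1 images, the pixel-wise product above is a logical AND. The sketch below uses one row that contains a hand pixel, a shirt pixel, a face pixel, and a background pixel. The function name and sample data are illustrative.

```python
def extract_shape(mask, binary_skin):
    """Shape region: 1 only where the pixel is both in the mask region and skin."""
    return [
        [m * s for m, s in zip(mrow, srow)]
        for mrow, srow in zip(mask, binary_skin)
    ]


# Columns: hand (mask 1, skin 1), shirt (mask 1, skin 0),
#          face (mask 0, skin 1), background (mask 0, skin 0).
shape = extract_shape([[1, 1, 0, 0]], [[1, 0, 1, 0]])  # [[1, 0, 0, 0]]
```

The shirt pixel drops out because it is not skin, and the face pixel drops out because it is not in the mask region. This matches the behavior described for FIG. 8 and FIG. 9.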

- the shape extraction unit 46 recognizes the shape of the user's hand based on the extracted shape region 141, and performs processing according to the recognition result.

- the mask region 121 in the mask image shown in FIG. 8 includes a shirt worn by the user in addition to the user's hand.

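- even so, because the shirt does not belong to the skin area of the binarized skin image, the shape extraction unit 46 does not extract a region representing the shape of the shirt. It can therefore accurately extract the shape region 141, which represents only the shape of the hand.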

- FIG. 10 is a flowchart for explaining the shape extraction process. This shape extraction process is repeatedly executed from when the information processing system 1 is powered on.

- in step S1, the control unit 41 controls the LED 23a of the light emitting device 23 to start emitting light of the first wavelength. If the LED 23b is emitting light at this point, the control unit 41 first stops the LED 23b and then starts the LED 23a.

- in step S2, the camera 22 captures an image of the user irradiated with light of the first wavelength and supplies the resulting first captured image to the information processing apparatus 21.

- in step S3, the control unit 41 controls the LED 23a of the light emitting device 23 to stop emitting light of the first wavelength, and controls the LED 23b of the light emitting device 23 to start emitting light of the second wavelength.

- in step S4, the camera 22 captures an image of the user irradiated with light of the second wavelength and supplies the resulting second captured image to the information processing apparatus 21.

- in step S5, the binarization unit 42 generates a binarized skin image as shown in FIG. 5 from the differences between the luminance values of corresponding pixels of the first and second captured images supplied from the camera 22. It supplies this image to the skin extraction unit 43 and the shape extraction unit 46.

- in step S6, the skin extraction unit 43 uses the binarized skin image supplied from the binarization unit 42 to extract, from the first captured image from the camera 22, the area corresponding to the skin area in the binarized skin image (the area where the user's skin part is displayed).

- the skin extraction unit 43 generates a skin image including the extracted area and supplies it to the threshold value determination unit 44.

- in step S7, the threshold value determination unit 44 creates a histogram of the skin image, as shown in FIG. 7, from the luminance values of the pixels constituting the skin image from the skin extraction unit 43.

- in step S8, based on the created histogram, the threshold value determination unit 44 determines the luminance value at which the number of pixels is minimum as the lower limit threshold Th_L and the maximum luminance value as the upper limit threshold Th_H.

- the threshold value determination unit 44 supplies the determined lower limit threshold Th_L and upper limit threshold Th_H to the mask image generation unit 45 as mask thresholds.

- in step S9, the mask image generation unit 45 binarizes the first captured image from the camera 22 using the mask thresholds (the lower limit threshold Th_L and the upper limit threshold Th_H) from the threshold value determination unit 44. It generates a mask image as shown in FIG. 8 and supplies it to the shape extraction unit 46.

- in step S10, the shape extraction unit 46 uses the mask image from the mask image generation unit 45 to extract, from the binarized skin image from the binarization unit 42, the region corresponding to the mask region in the mask image. This is, for example, an extraction region representing the shape of the user's hand.

- the shape extraction unit 46 recognizes the shape of the hand from the extracted extraction region, performs processing according to the recognition result, and outputs the processing result to the subsequent stage.
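The alternating-illumination part of this flow (steps S1 to S5) can be sketched with a stand-in for the hardware. `FakeRig` and its `start`, `stop`, and `capture` methods are hypothetical placeholders, not a real LED or camera driver API. Each wavelength simply maps to a prepared image, and only one LED is lit at a time, as in the flowchart.

```python
class FakeRig:
    """Hypothetical stand-in for LEDs 23a/23b plus camera 22 (not a real driver API)."""

    def __init__(self, frames):
        self.frames = frames  # maps wavelength in nm -> image captured under it
        self.lit = None       # wavelength currently emitted, or None

    def start(self, wavelength):
        self.lit = wavelength

    def stop(self):
        self.lit = None

    def capture(self):
        if self.lit is None:
            raise RuntimeError("no LED is emitting")
        return self.frames[self.lit]


def capture_pair(rig, first_nm=870, second_nm=950):
    """Steps S1-S4: capture one image under each wavelength, one LED at a time."""
    rig.stop()                 # S1: stop LED 23b if it is still lit
    rig.start(first_nm)        # S1: start LED 23a (first wavelength)
    first = rig.capture()      # S2: first captured image
    rig.stop()                 # S3: stop the first wavelength
    rig.start(second_nm)       # S3: start LED 23b (second wavelength)
    second = rig.capture()     # S4: second captured image
    return first, second       # LED 23b stays lit until the next S1


def binarize(first, second):
    """S5: 1 where L1 - L2 > 0, else 0."""
    return [[1 if a - b > 0 else 0 for a, b in zip(r1, r2)]
            for r1, r2 in zip(first, second)]


rig = FakeRig({870: [[63, 6]], 950: [[50, 8]]})
first, second = capture_pair(rig)
binary = binarize(first, second)  # [[1, 0]]
```

Since the process repeats from power-on, a real loop would call `capture_pair` continuously and feed each pair through steps S5 to S10.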

- as described above, the shape extraction process generates a mask image from the first captured image captured by the single camera 22, using the mask threshold. It then extracts the shape of the user's hand from the binarized skin image using that mask image.

- an alternative would be to generate a distance image representing the distance between the camera and the user's hand or the like, and use it as the mask image to extract the user's hand. Compared with that approach, this process requires less calculation to generate the mask image, and the shape of the user's hand and the like can be extracted with fewer components.

- in the shape extraction process, the mask image consists of the non-mask region and the mask region 121. The mask region 121 contains only the skin part of the hand and not the skin part of the face. Therefore, only the hand region 102 is extracted from the binarized skin image.

- because the LEDs 23a and 23b emit invisible light, the user does not find the light dazzling or unpleasant.

- a diffusion plate 23c is provided in front of the LED 23a and the LED 23b.

- since the invisible light emitted by the LEDs 23a and 23b is diffused uniformly by the diffusion plate 23c, the subject is irradiated with uniform light without unevenness in light amount.

- the invisible light reflected from the subject is therefore received by the camera 22 as uniform light without unevenness in light amount, so the camera 22 can obtain first and second captured images without such unevenness.

- because the information processing system 1 extracts the hand shape and the like from first and second captured images free of light-amount unevenness, it can extract the shape more accurately than it could from captured images with such unevenness.

- the information processing system 1 should preferably be configured to extract the shape of the hand within, for example, about 80 [ms] from the start of the shape extraction process. This lets it recognize the new hand shape each time the user changes it.

- the reason for the 80 [ms] target is that experiments conducted in advance showed that the user hardly feels stress when the processing time for extracting the hand shape is within 80 [ms].

- in the shape extraction process, the shape of the hand is extracted by very simple processing: the difference L1-L2 is calculated, normalized, and compared with a threshold. A processing time within 80 [ms] can therefore be achieved easily even with an inexpensive, low-speed CPU (Central Processing Unit).

- because a processing time within 80 [ms] is easy to achieve in this way, the manufacturing cost can be kept down while the hand shape is still extracted quickly enough that the user does not feel stress.

- the shape of the hand 62 can be accurately extracted even when, for example, the face 61 and the hand 62 overlap as a skin region.

- for example, when the user wears a short-sleeved shirt or the like, the user's arm may overlap as well as the face 61 and the hand 62.

- in this case, the skin extraction unit 43 extracts a skin image in which the arm 63 is displayed in addition to the face 61 and the hand 62, as shown in FIG. 11. The threshold value determination unit 44 then generates a histogram as shown in FIG. 12.

- FIG. 12 shows an example of a histogram generated based on the skin image shown in FIG. 11.

- in this example, the hand 62 is closest to the light emitting device 23, the arm 63 is second closest, and the face 61 is third closest.

- accordingly, the histogram of the skin image shown in FIG. 11 is shown by the solid line in the uppermost part of FIG. 12. In it, pixels with luminance values 0 to 75 correspond to the user's face 61, pixels with luminance values 76 to 150 correspond to the user's arm 63, and pixels with luminance values 151 to 250 correspond to the user's hand 62.

- the histogram is flat over the luminance values 76 to 150, which correspond to the arm 63. Therefore, unlike the histogram shown in FIG. 7, there is no clear minimum (such as the luminance value 55 in FIG. 7) that separates the face 61 from the hand 62. For this reason, the lower limit threshold Th_L cannot be determined in the same manner as described with reference to FIG. 7.

- therefore, the threshold value determination unit 44 judges the shape of the generated histogram. Depending on that shape, it determines the lower limit threshold Th_L and the like by a different method, for example the method described with reference to FIG. 7 or the method described below with reference to FIG. 12.

- the following describes how the threshold value determination unit 44 determines, for example, the lower limit threshold Th_L based on the histogram shown in FIG. 12.

- when the histogram is represented by a function g(x), two boundary luminance values are known to be inflection points of g(x). The first is the boundary between the luminance values of the face 61 and those of the arm 63 (in this case, a value near 75). The second is the boundary between the luminance values of the arm 63 and those of the hand 62 (in this case, a value near 150). At these inflection points, the first derivative g′(x) reaches a local maximum or minimum.

- the threshold value determination unit 44 differentiates the generated function g (x) once to generate a first derivative g ′ (x).

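- the threshold value determination unit 44 then calculates the values x = x0 at which g′(x) reaches a local maximum or minimum.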

- of the calculated values x0, the threshold value determination unit 44 determines the one closest to x2 as the lower limit threshold Th_L. This is, for example, the x0 for which x2 - x0 is smallest, in this case the luminance value 150.

- the threshold value determination unit 44 determines, for example, the upper limit threshold value Th_H as the maximum value of luminance values in the histogram shown in FIG. 12 (in this case, the luminance value 250).

- the lower limit threshold Th_L and the upper limit threshold Th_H determined in this way are used to generate a mask image used when extracting the shape of the hand 62.

- alternatively, of the calculated values x0, the one closest to x1 may be determined as the lower limit threshold Th_L. This is, for example, the x0 for which x0 - x1 is smallest, in this case the luminance value 75. The maximum luminance value in the histogram shown in FIG. 12 (in this case, the luminance value 250) is then determined as the upper limit threshold Th_H.

- the lower threshold value Th_L and the upper threshold value Th_H determined in this way are used to generate a mask image used when extracting the shape formed by the hand 62 and the arm 63.

- similarly, the threshold determination unit 44 may set the lower limit threshold Th_L to the luminance value 75 and the upper limit threshold Th_H to the luminance value 150, which selects the luminance range between the two boundaries, that is, the range corresponding to the arm 63.
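Once Th_L and Th_H are fixed, the mask image can be sketched as a per-pixel range test. This is a hedged illustration: treating both bounds as inclusive is an assumption (the text does not say how boundary values are handled), and the three threshold pairs simply restate the FIG. 12 example values given above. `make_mask` and the constant names are hypothetical.

```python
import numpy as np

# Threshold pairs (Th_L, Th_H) from the FIG. 12 example in the text.
HAND = (150, 250)
HAND_AND_ARM = (75, 250)
ARM = (75, 150)


def make_mask(luma, th_l, th_h):
    """Boolean mask image: True where Th_L <= luminance <= Th_H (inclusive bounds assumed)."""
    luma = np.asarray(luma)
    return (luma >= th_l) & (luma <= th_h)


img = np.array([[40, 80, 120], [160, 200, 250]])
hand_mask = make_mask(img, *HAND)  # keeps only the bottom row
```

With inclusive bounds a pixel of exactly 150 falls in both the hand and arm masks; a real implementation would have to pick one side.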

- when the lower limit threshold Th_L and the upper limit threshold Th_H are determined as described with reference to FIGS. 11 and 12, the shape of, for example, the hand 62 can be extracted accurately even if parts of the face 61, the hand 62, and the arm 63 overlap.

- the threshold value determination unit 44 differentiates the first derivative g′(x) once more to obtain the second derivative g″(x).

- the shape extraction unit 46 detects, in the skin image from the skin extraction unit 43, the corresponding region that corresponds to the extracted shape region. Based on the histogram of the luminance values of the pixels constituting the detected corresponding region, it can then extract, from among the subjects displayed in the corresponding region, only the region corresponding to the subject located closest to, for example, the light emitting device 23.

- for example, the shape extraction unit 46 can extract only the region corresponding to the fingertip of the index finger from the region of the hand 62, because the fingertip of the index finger of the hand 62 is located closest to the light emitting device 23.

- FIG. 14 shows an example of the histogram of the corresponding region.

- the histogram shown in the uppermost part of FIG. 14 shows a histogram for only the luminance values of the pixels constituting the corresponding area where the hand 62 is displayed, for example.


- for example, the skin extraction unit 43 generates a skin image as shown in FIG. based on the binarized skin image from the binarization unit 42 and the first captured image from the camera 22, and supplies it to the shape extraction unit 46.

- the shape extraction unit 46 detects, in the skin image from the skin extraction unit 43, the corresponding region that corresponds to the extracted shape region, and generates a histogram as shown in FIG. from the luminance values of the pixels constituting the detected corresponding region.

- the shape extraction unit 46 can then extract, from the skin image (corresponding region) from the skin extraction unit 43, the pixels whose luminance values fall in the high end of the generated histogram as a tip region representing the fingertip of the index finger.

- of all the parts of the user's skin, the fingertip of the index finger is closest to the light emitting device 23. For this reason, the luminance values corresponding to the fingertip of the index finger are the highest in the histogram shown in FIG. 14.

- however, the fingertip portion of the index finger occupies a relatively small area, so the corresponding portion of the histogram in FIG. 14 has no extremum and is flat, like the portion corresponding to the arm 63 in FIG.

- the percentage n [%] that defines the tip region (the pixels whose luminance values fall within the upper n [%]) is determined in advance, by experiment or the like, according to the part to be extracted.

- the shape extraction unit 46 then performs processing that corresponds to the extracted tip region (its shape and the like).

- the ratio d2/d1 between the relative distance d1 from the light emitting device 23 to the fingertip of the user's index finger and the relative distance d2 from the light emitting device 23 to the base of the user's index finger becomes larger as the distance between the light emitting device 23 and the user's hand becomes shorter.

- therefore, although the fingertip portion of the index finger consists of few pixels, a histogram is obtained in which the luminance values of those pixels are sufficiently larger than those of the pixels at the base of the index finger (for example, they fall within the upper n [%]), so the fingertip portion of the user's index finger can be extracted relatively accurately.
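The effect of the ratio d2/d1 can be illustrated numerically. The text does not state a light-falloff law; this sketch assumes an inverse-square model (luminance proportional to 1/d²), under which the fingertip-to-base luminance ratio is (d2/d1)². The distances and the function name are hypothetical.

```python
def fingertip_to_base_luminance_ratio(d1, gap):
    """Fingertip/base luminance ratio under an ASSUMED inverse-square falloff.

    d1:  hypothetical distance from the light emitting device to the fingertip
    gap: hypothetical extra distance to the base of the finger, so d2 = d1 + gap
    """
    d2 = d1 + gap
    # If luminance is proportional to 1 / d**2, then Y_tip / Y_base = (d2 / d1) ** 2.
    return (d2 / d1) ** 2


# Same finger length (gap = 5), hand placed progressively farther from the light.
for d1 in (20.0, 50.0, 100.0):
    print(d1, fingertip_to_base_luminance_ratio(d1, 5.0))
```

The printed ratio shrinks toward 1 as d1 grows, which is why the fingertip stands out most clearly in the histogram when the hand is near the light emitting device.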

- in the above description, the shape extraction unit 46 detects the corresponding region that corresponds to the extracted shape region in the skin image from the skin extraction unit 43, but the image in which the corresponding region is detected is not limited to the skin image.

- the shape extraction unit 46 may be supplied with the first captured image from the camera 22, and the corresponding region may be detected for the first captured image.

- alternatively, the corresponding region may be detected in the second captured image.

- in general, the shape extraction unit 46 may use any display image in which the same subject as that displayed on the first or second captured image is displayed at the same position.

- in the above description, the skin extraction unit 43 supplies the extracted skin image to the shape extraction unit 46; alternatively, the shape extraction unit 46 may supply the extracted shape region to the skin extraction unit 43.

- in that case, the skin extraction unit 43 may detect, in the extracted skin image, the corresponding region that corresponds to the shape region from the shape extraction unit 46, and extract, from among the subjects displayed in the corresponding region, only the region corresponding to the subject located closest to the light emitting device 23.

- when the luminance values corresponding to the fingertip of the index finger do not appear clearly in the histogram generated from the skin image from the skin extraction unit 43 (for example, when the part of the histogram corresponding to the fingertip is flat, as shown in FIG. 14), a mask threshold can be determined by using the area corresponding to the luminance values within the upper n [%] as the mask area.

- specifically, the threshold determination unit 44 determines the minimum luminance value included in the upper n [%] as the lower limit threshold Th_L.

- the threshold determination unit 44 determines the maximum of the luminance values constituting the histogram as the upper limit threshold Th_H.
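The upper-n [%] rule can be sketched in a few lines. This is an illustration under an assumption the text leaves open: here "upper n [%]" is read as the brightest n percent of pixels by count (it could instead mean the top n percent of the luminance range). `top_n_percent_thresholds` is a hypothetical name.

```python
import numpy as np


def top_n_percent_thresholds(luma, n):
    """Return (Th_L, Th_H) for the brightest n percent of pixels in a region.

    Th_L: smallest luminance among the top n% of pixels (ASSUMED: counted by pixel number).
    Th_H: largest luminance value in the histogram.
    """
    values = np.sort(np.asarray(luma).ravel())           # ascending order
    k = max(1, int(np.ceil(values.size * n / 100.0)))   # pixels in the top n%
    return int(values[-k]), int(values[-1])


region = np.array([[10, 200], [230, 250]])
th_l, th_h = top_n_percent_thresholds(region, 50)
tip_mask = (region >= th_l) & (region <= th_h)  # pixels kept as the tip region
```

Pixels whose luminance ties with Th_L are all kept by the mask, so the tip region can be slightly larger than exactly n percent.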

- as a result, the shape extraction unit 46 extracts, from the binarized skin image from the binarization unit 42, the shape of the fingertip portion of the index finger, which is the part of the user's skin region located closest to the light emitting device 23.

- the information processing system 1 uses, for example, the fact that the luminance values of the user's skin area increase as the distance from the light emitting device 23 decreases and decrease as that distance increases.

- by recognizing movement toward or away from the light emitting device 23 in this way, the information processing system 1 can perform corresponding processing.

- for example, suppose that a so-called mouse click operation or confirmation operation is performed in response to movement of the user's hand in the front-rear direction, that is, toward the light emitting device 23 (the z direction).

- in that case, when the user moves the hand in the z direction, the hand also moves in the x-y directions, so the desired content may not be selected.

- the following method can solve this problem.

- the shape extraction unit 46 extracts, from the first captured image obtained by the camera 22, the corresponding region (for example, the region in which the hand is displayed) that corresponds to the extracted shape region (for example, the region representing the shape of the hand). The shape extraction unit 46 then detects the position of the hand based on the luminance values of the pixels constituting the extracted corresponding region.

- the shape extraction unit 46 can also use the second captured image, in addition to the first captured image, as the image from which the corresponding region is extracted. That is, the shape extraction unit 46 can extract the corresponding region from any display image (including the first and second captured images) in which the same subject as that displayed on the first or second captured image is displayed at the same position.

- in step S21, the control unit 41 through the shape extraction unit 46, the camera 22, and the light emitting device 23 of the information processing device 21 perform the shape extraction process described with reference to FIG. As a result, the shape extraction unit 46 extracts a shape region from the binarized skin image from the binarization unit 42 based on the mask image from the mask image generation unit 45.

- in step S22, the shape extraction unit 46 detects coordinates (x, y)_t based on the extracted shape region. Specifically, for example, the shape extraction unit 46 calculates the center of gravity (x, y) of the extracted shape region as the coordinates (x, y)_t.
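Step S22's center-of-gravity calculation can be sketched on a binary shape-region mask. This is a minimal illustration, assuming the shape region is available as a 2-D boolean array with x as the column index and y as the row index; returning `None` for an empty region is a hypothetical convention, not something the text specifies.

```python
import numpy as np


def centroid(shape_mask):
    """Center of gravity (x, y) of the True pixels in a binary shape-region mask."""
    ys, xs = np.nonzero(shape_mask)  # row indices are y, column indices are x
    if xs.size == 0:
        return None  # no shape region extracted in this frame
    return float(xs.mean()), float(ys.mean())


mask = np.zeros((4, 4), dtype=bool)
mask[1:3, 2:4] = True  # a 2x2 block covering rows 1-2 and columns 2-3
xy_t = centroid(mask)
```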

- in step S23, the shape extraction unit 46 detects, among all the regions of the first captured image from the camera 22, the corresponding region (for example, the region in which the hand is displayed) that corresponds to the extracted shape region.

- the shape extraction unit 46 is assumed to be supplied with the first captured image from the camera 22.

- the shape extraction unit 46 then calculates the average luminance value Y_t of the pixels constituting the detected corresponding region.
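The average luminance Y_t of step S23 can be sketched as a masked mean. Because the corresponding region shows the same subject at the same position as the shape region, this sketch assumes the shape-region mask can be applied directly to the first captured image (a grayscale array). The function name and the `None` return for an empty region are hypothetical.

```python
import numpy as np


def average_luminance(captured, region_mask):
    """Y_t: mean luminance of the captured-image pixels inside the corresponding region."""
    captured = np.asarray(captured, dtype=float)
    region_mask = np.asarray(region_mask, dtype=bool)
    if not region_mask.any():
        return None  # nothing to average in this frame
    return float(captured[region_mask].mean())


frame = np.array([[10, 20], [30, 40]])
region = np.array([[True, False], [False, True]])
y_t = average_luminance(frame, region)
```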

- in step S24, the shape extraction unit 46 stores the calculated coordinates (x, y)_t and the average luminance value Y_t in its built-in memory, in association with the order t in which they were calculated.

- in step S25, the shape extraction unit 46 reads out, from among the average luminance values Y_1 to Y_{t-1} stored in the built-in memory, the average luminance value Y_{t-1} stored in the previous execution of step S24.

- if no average luminance value Y_{t-1} has been stored yet, the shape extraction unit 46 skips step S25 and proceeds to step S26.

- in step S25, the shape extraction unit 46 determines whether the relative distance from the light emitting device 23 to the subject has changed significantly, based on whether the absolute value of the difference Y_t − Y_{t-1}, obtained by subtracting the average luminance value Y_{t-1} read from the built-in memory from the calculated average luminance value Y_t, is less than a predetermined threshold value.

- based on whether the difference Y_t − Y_{t-1} is positive, the shape extraction unit 46 can also determine whether the relative distance from the light emitting device 23 to the subject has decreased or increased; a positive difference means the subject has become brighter and therefore closer.

- if, in step S25, the shape extraction unit 46 determines that the relative distance from the light emitting device 23 to the subject has not changed significantly, the process proceeds to step S26.

- in step S26, the shape extraction unit 46 controls display on a display device (not shown) based on the calculated coordinates (x, y)_t. Specifically, for example, the shape extraction unit 46 moves the pointer displayed on the screen of the display device to the position corresponding to the calculated coordinates (x, y)_t.

- after step S26 is completed, the process returns to step S21, and the same processing is repeated.

- if, in step S25, the shape extraction unit 46 determines that the relative distance from the light emitting device 23 to the subject has changed significantly, the process proceeds to step S27.

- in step S27, the shape extraction unit 46 assumes that a so-called click operation has been performed at the position on the screen of the display device (not shown) corresponding to earlier coordinates (x, y)_{t-k} stored in the built-in memory, for example (x, y)_{t-5}, and performs processing based on the click operation. The process then returns to step S21, and the same processing is repeated.

- because the shape extraction unit 46 determines, based on the average luminance value Y_t, whether the relative distance from the light emitting device 23 to the subject has changed significantly, it can recognize gestures such as a click operation by the user.
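Steps S24 to S27 can be sketched as a small per-frame state machine. This is a hedged illustration, not the device's implementation: the class and method names are hypothetical, `threshold` stands in for the unspecified "predetermined threshold value" of step S25, `k` defaults to the text's example of 5, and keeping only the previous Y value (rather than all of Y_1 to Y_{t-1}) is a simplification that gives the same comparison.

```python
from collections import deque


class ClickDetector:
    """Sketch of steps S24-S27: move the pointer, or report a click, per frame."""

    def __init__(self, threshold, k=5, history=32):
        self.threshold = threshold              # hypothetical "predetermined threshold value"
        self.k = k                              # click uses (x, y)_{t-k}; text's example is k = 5
        self.coords = deque(maxlen=history)     # stored (x, y)_1 .. (x, y)_t
        self.prev_y = None                      # Y_{t-1}

    def update(self, xy, y_mean):
        """Process frame t; return ("move", (x, y)_t) or ("click", (x, y)_{t-k})."""
        self.coords.append(xy)                  # S24: store (x, y)_t
        prev, self.prev_y = self.prev_y, y_mean  # S24: keep Y_t for the next frame
        # S25 is skipped when no Y_{t-1} exists; otherwise compare |Y_t - Y_{t-1}|.
        if prev is None or abs(y_mean - prev) < self.threshold:
            return ("move", xy)                 # S26: move the pointer to (x, y)_t
        # S27: large luminance change -> click at an earlier, pre-motion position.
        # (y_mean - prev > 0 would indicate the hand moved toward the light.)
        idx = max(0, len(self.coords) - 1 - self.k)
        return ("click", self.coords[idx])


detector = ClickDetector(threshold=20, k=2)
events = [detector.update(xy, y) for xy, y in
          [((10, 10), 100), ((11, 10), 105), ((12, 11), 110), ((15, 14), 140)]]
```

In this usage example the last frame's luminance jumps by 30, which exceeds the threshold of 20, so the click is reported at the coordinates stored two frames earlier rather than at the drifted position (15, 14).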

- using earlier coordinates such as (x, y)_{t-5} in step S27, rather than (x, y)_t, means that the processing based on the click operation is performed at the position on the screen of the display device (not shown) that the hand indicated before it started moving in the z direction, which addresses the problem that the hand also drifts in the x-y directions.

- the above processing may also be performed using, as the shape region, a region representing the shape formed by the hand and the arm instead of the region representing the shape of the hand.

- alternatively, as described with reference to FIGS. 13 and 14, the shape extraction unit 46 may extract only the fingertip (tip) portion of the index finger from the hand shape extracted as the shape region, and determine whether the relative distance between the light emitting device 23 and the fingertip portion has changed significantly based on the average luminance value Y_t of the pixels constituting the area in which the extracted fingertip portion is displayed.