EP1526508B1 - Method for selecting synthesis units - Google Patents

Method for selecting synthesis units

- Publication number

- EP1526508B1 (application EP04105204A)

- Authority

- EP

- European Patent Office

- Prior art keywords

- pitch

- segment

- synthesis

- units

- similarity

- Prior art date

- Legal status (The legal status is an assumption and is not a legal conclusion. Google has not performed a legal analysis and makes no representation as to the accuracy of the status listed.)

- Expired - Lifetime

Classifications

-

- G—PHYSICS

- G10—MUSICAL INSTRUMENTS; ACOUSTICS

- G10L—SPEECH ANALYSIS TECHNIQUES OR SPEECH SYNTHESIS; SPEECH RECOGNITION; SPEECH OR VOICE PROCESSING TECHNIQUES; SPEECH OR AUDIO CODING OR DECODING

- G10L19/00—Speech or audio signals analysis-synthesis techniques for redundancy reduction, e.g. in vocoders; Coding or decoding of speech or audio signals, using source filter models or psychoacoustic analysis

- G10L19/0018—Speech coding using phonetic or linguistical decoding of the source; Reconstruction using text-to-speech synthesis

-

- G—PHYSICS

- G10—MUSICAL INSTRUMENTS; ACOUSTICS

- G10L—SPEECH ANALYSIS TECHNIQUES OR SPEECH SYNTHESIS; SPEECH RECOGNITION; SPEECH OR VOICE PROCESSING TECHNIQUES; SPEECH OR AUDIO CODING OR DECODING

- G10L13/00—Speech synthesis; Text to speech systems

- G10L13/06—Elementary speech units used in speech synthesisers; Concatenation rules

Definitions

- the invention relates to a method for selecting synthesis units.

- It relates, for example, to a method for selecting and coding synthesis units for a very low bit rate speech coder, for example one operating below 600 bits/sec.

- the coding scheme used consists of modeling the acoustic space of the speaker (or speakers) by Hidden Markov Models (HMM). These speaker-dependent or speaker-independent models are obtained during a prior learning phase using algorithms identical to those used in speech recognition systems. The essential difference is that the models are learned on vectors grouped into classes automatically, and not in a supervised way from a phonetic transcription.

- the learning procedure then consists of automatically obtaining the segmentation of the learning signals (for example by using the so-called temporal decomposition method) and grouping the segments obtained into a finite number of classes corresponding to the number of HMM models that one wishes to build. The number of models is directly related to the resolution sought for representing the acoustic space of the speaker or speakers.

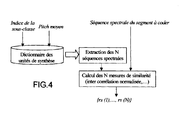

- the realization of the spectral trajectory is selected from among several so-called synthesis units. These units are extracted from the learning base during its segmentation using the HMM models. The context can be taken into account, for example, by using several subclasses that capture the transitions from one class to another. A first index indicates the class to which the segment considered belongs; a second index specifies its subclass, defined as the class index of the preceding segment. The subclass index therefore does not need to be transmitted, and the class index must be memorized for the next segment. The subclasses thus defined make it possible to take into account the different transitions into the class associated with the segment in question. Prosody information is added to the spectral information, that is to say the values of the pitch and energy parameters and their evolution.
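The class/subclass indexing described above can be sketched as follows. This is a minimal illustration, not the patent's implementation: the function name `attach_subclass_indices` and the convention of using class 0 as the "previous class" of the very first segment are assumptions made for the example.

```python
from typing import Iterable, List, Tuple


def attach_subclass_indices(
    class_indices: Iterable[int], initial_class: int = 0
) -> List[Tuple[int, int]]:
    """Pair each transmitted class index with its implied subclass index.

    The subclass index is the class index of the preceding segment, so the
    decoder can rebuild it from the stream of class indices alone.
    `initial_class` is an assumed convention for the first segment.
    """
    previous = initial_class
    pairs = []
    for cls in class_indices:
        pairs.append((cls, previous))  # subclass = class of preceding segment
        previous = cls                 # memorized for the next segment
    return pairs


# Only the class indices are transmitted; subclasses are reconstructed.
pairs = attach_subclass_indices([5, 12, 12, 40])
print(pairs)
```

Both coder and decoder would run the same bookkeeping, which is why the subclass index costs no bits.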

- a conventional method consists first of all in selecting the closest unit from a spectral point of view and then, once the unit has been selected, in coding the prosody information independently of the selected unit.

- the method according to the present invention proposes a new method of selecting the closest synthesis unit together with the modeling and quantization of the additional information required at the decoder for the reproduction of the speech signal.

- the fundamental frequency (pitch), the spectral distortion, and/or the energy profile are used as proximity criteria, and a step of merging the criteria used is performed to determine the representative synthesis unit.

- the method comprises for example a coding step and / or a pitch correction step by modifying the synthesis profile.

- the step of encoding and/or correcting the pitch may be a linear transformation of the original pitch profile.

- the method is for example used for the selection and / or coding of synthesis units for a very low bit rate speech coder.

- the speech signal is analyzed frame by frame in order to extract the characteristic parameters (spectral parameters, pitch, energy).

- This analysis is done conventionally using a sliding window spanning the duration of the frame.

- This frame has a duration of the order of 20 ms, and the analysis window is advanced by an offset of the order of 10 ms to 20 ms at each update.
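As an illustration of the frame-by-frame analysis above, the sketch below slides a 20 ms window with a 10 ms offset over a signal and extracts one of the named parameters (energy, here in dB). The 8 kHz sampling rate, the function name `frame_energies_db` and the small floor added before the logarithm are assumptions for the example; the text does not specify them.

```python
import math
from typing import List, Sequence


def frame_energies_db(
    signal: Sequence[float],
    sample_rate: int = 8000,   # assumed sampling rate
    frame_ms: float = 20.0,    # frame duration from the text
    hop_ms: float = 10.0,      # window offset from the text (10 ms to 20 ms)
) -> List[float]:
    """Return the mean energy (dB) of each complete analysis frame."""
    frame_len = int(round(sample_rate * frame_ms / 1000.0))
    hop = int(round(sample_rate * hop_ms / 1000.0))
    energies = []
    start = 0
    while start + frame_len <= len(signal):
        frame = signal[start:start + frame_len]
        mean_square = sum(x * x for x in frame) / frame_len
        # Small floor avoids log10(0) on silent frames (an assumption).
        energies.append(10.0 * math.log10(mean_square + 1e-12))
        start += hop
    return energies


# 100 ms of a constant unit-amplitude signal at 8 kHz.
energies = frame_energies_db([1.0] * 800)
print(len(energies), energies[0])
```

Pitch and spectral parameters would be extracted over the same frames in a full analyzer; only energy is shown to keep the sketch short.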

- HMM: Hidden Markov Models

- the decoder uses this index to find the synthesis unit in the dictionary built during the learning phase.

- the synthesis units that make up the dictionary are simply the sequences of parameters associated with the segments obtained on the training corpus.

- a dictionary class contains all the units associated with the same HMM model. Each synthesis unit is therefore characterized by a sequence of spectral parameters, a pitch value sequence (pitch profile), a gain sequence (energy profile).

- each class (from 1 to 64) of the dictionary is subdivided into 64 subclasses, where each subclass contains the synthesis units that are preceded temporally by a segment belonging to the same class.

- This approach makes it possible to take the past context into account, and thus to improve the rendering of the transition zones from one unit to the next.

- the present invention relates in particular to a multi-criteria method for selecting a synthesis unit.

- the method makes it possible, for example, to simultaneously take into account the pitch, the spectral distortion, and the pitch and energy evolution profiles.
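A multi-criteria selection of this kind can be sketched as below. The sketch pre-selects the N units whose mean pitch is closest to that of the segment, aligns profiles by linear length adjustment, scores them with a normalized cross-correlation, and merges the pitch and energy scores. The weighted sum used for merging, the weights, and all names (`SynthesisUnit`, `select_unit`, and so on) are assumptions for illustration; the text does not specify the merging rule, and the spectral criterion is omitted for brevity.

```python
import math
from dataclasses import dataclass
from typing import List, Sequence


@dataclass
class SynthesisUnit:
    index: int
    pitch: List[float]    # pitch profile, one value per frame
    energy: List[float]   # energy profile, one value per frame


def mean(values: Sequence[float]) -> float:
    return sum(values) / len(values)


def resample_linear(profile: Sequence[float], length: int) -> List[float]:
    """Time-align a profile to `length` frames by linear interpolation."""
    if length == 1 or len(profile) == 1:
        return [profile[0]] * length
    scale = (len(profile) - 1) / (length - 1)
    out = []
    for i in range(length):
        pos = i * scale
        lo = int(math.floor(pos))
        hi = min(lo + 1, len(profile) - 1)
        frac = pos - lo
        out.append((1.0 - frac) * profile[lo] + frac * profile[hi])
    return out


def normalized_xcorr(a: Sequence[float], b: Sequence[float]) -> float:
    """Normalized cross-correlation at lag zero (mean removed)."""
    ma, mb = mean(a), mean(b)
    num = sum((x - ma) * (y - mb) for x, y in zip(a, b))
    den = math.sqrt(sum((x - ma) ** 2 for x in a) * sum((y - mb) ** 2 for y in b))
    return num / den if den > 0 else 0.0


def select_unit(seg_pitch, seg_energy, units, n_best=3, w_pitch=0.5, w_energy=0.5):
    """Pick a representative unit; the weighted sum is an assumed merging rule."""
    f0 = mean(seg_pitch)
    candidates = sorted(units, key=lambda u: abs(mean(u.pitch) - f0))[:n_best]

    def score(u: SynthesisUnit) -> float:
        rp = normalized_xcorr(resample_linear(u.pitch, len(seg_pitch)), seg_pitch)
        re = normalized_xcorr(resample_linear(u.energy, len(seg_energy)), seg_energy)
        return w_pitch * rp + w_energy * re

    return max(candidates, key=score)


units = [
    SynthesisUnit(0, [100.0, 120.0, 130.0], [1.0, 3.0, 2.0]),  # rising pitch
    SynthesisUnit(1, [130.0, 115.0, 100.0], [2.0, 3.0, 1.0]),  # falling pitch
    SynthesisUnit(2, [200.0, 210.0, 220.0], [1.0, 3.0, 2.0]),  # mean pitch too far
]
best = select_unit([100.0, 110.0, 120.0, 130.0], [1.0, 2.0, 3.0, 2.0], units, n_best=2)
print(best.index)
```

In this toy dictionary, unit 2 is discarded by the mean-pitch pre-selection even though its profile shape matches, and unit 0 wins over unit 1 because its pitch and energy profiles correlate better with the segment.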

- the method may comprise, in a variant embodiment, a pitch coding step by correcting the synthesis pitch profile explained in detail below.

- the criterion relating to the pitch evolution profile makes it possible, in part, to take the voicing information into account. However, it can be disabled when the segment is completely unvoiced or when the selected subclass is unvoiced. Indeed, three main types of subclasses can be observed: those containing mostly voiced units, those containing mostly unvoiced units, and those containing mostly mixed units.

- the method according to the invention is not limited to optimizing the bit rate allocated to the prosody information; it also makes it possible to keep, for the coding phase, all of the synthesis units obtained during the learning phase, with a constant number of bits used to encode the synthesis unit.

- the synthesis unit is characterized by both the pitch value and its index. This approach makes it possible, in a speaker-independent coding scheme, to cover all the possible pitch values and to select the synthesis unit, taking into account, in part, the characteristics of the speaker. In fact, for the same speaker there is a correlation between the range of variation of the pitch and the characteristics of the vocal tract (in particular the length).

- the unit selection principle described can be applied to any system whose operation is based on a selection of units, and therefore also to a text-to-speech synthesis system.

- the similarity measure may be a spectral distance.

- Step A9) comprises, for example, a step where all the spectra of the same segment are averaged and the similarity measure is a cross-correlation measurement.

- the spectral distortion criterion is for example calculated on harmonic structures resampled to constant pitch or resampled to the pitch of the segment to be coded, after interpolation of the initial harmonic structures.

- the similarity criterion will depend on the spectral parameters used (for example the type of parameters used for the representation of the envelope).

- LSP: Line Spectral Pair; LSF: Line Spectral Frequencies

- cepstral parameters are generally used, and they can either be derived from a linear prediction analysis (LPCC, Linear Prediction Cepstrum Coefficients) or estimated from a filter bank, often on a perceptual Mel or Bark scale (MFCC, Mel Frequency Cepstrum Coefficients).

- LPCC: Linear Prediction Cepstrum Coefficients

- MFCC: Mel Frequency Cepstrum Coefficients

- a preprocessing step then consists in estimating a spectral envelope from the harmonic amplitudes (by linear or polynomial spline interpolation) and resampling the envelope thus obtained, either at the fundamental frequency of the segment to be coded or at a constant fundamental frequency (100 Hz for example).

- a constant fundamental frequency makes it possible to pre-calculate all the harmonic structures of the synthesis units during the learning phase.

- the resampling is then done only on the segment to be coded. Furthermore, if time alignment is restricted to linear interpolation, the harmonic structures can be averaged over all the segments considered.

- the similarity measure can then be estimated simply from the average harmonic structure of the segment to be encoded and that of the synthesis unit considered. This similarity measure can also be a normalized cross-correlation measure. It can also be noted that the resampling procedure can be performed on a perceptual frequency scale (Mel or Bark).
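The resampling of harmonic structures to a constant fundamental frequency can be sketched as follows. The envelope is obtained here by linear interpolation between harmonic amplitudes, one of the two interpolation types mentioned in the text. Holding the envelope flat outside the first and last harmonic, the 4 kHz upper limit and the function names are assumptions for the example.

```python
from typing import List, Sequence


def envelope_at(freq: float, harmonic_amps: Sequence[float], f0: float) -> float:
    """Linearly interpolated spectral envelope at `freq`.

    `harmonic_amps[k]` is the amplitude of harmonic (k + 1) * f0.
    Outside the measured harmonics the envelope is held constant
    (an assumed boundary rule).
    """
    pos = freq / f0 - 1.0                        # fractional harmonic index
    pos = min(max(pos, 0.0), len(harmonic_amps) - 1)
    lo = int(pos)
    hi = min(lo + 1, len(harmonic_amps) - 1)
    frac = pos - lo
    return (1.0 - frac) * harmonic_amps[lo] + frac * harmonic_amps[hi]


def resample_harmonics(
    harmonic_amps: Sequence[float],
    f0: float,
    target_f0: float = 100.0,   # constant pitch suggested in the text
    max_freq: float = 4000.0,   # assumed upper band edge
) -> List[float]:
    """Sample the envelope at the harmonics of `target_f0` up to `max_freq`."""
    count = int(max_freq // target_f0)
    return [envelope_at(k * target_f0, harmonic_amps, f0) for k in range(1, count + 1)]


# Two harmonics measured at 200 Hz and 400 Hz, resampled at 100 Hz spacing.
resampled = resample_harmonics([1.0, 3.0], f0=200.0, target_f0=100.0, max_freq=400.0)
print(resampled)
```

Because every unit ends up on the same 100 Hz grid, the resampled structures of the dictionary units could be computed once during learning and compared directly with that of the segment to be coded.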

- the method comprises a pitch coding step by modifying the synthesis profile. This consists of resynthesizing a pitch profile from that of the selected synthesis unit and a linearly variable gain over the duration of the segment to be encoded. It is then sufficient to transmit an additional value to characterize the correction gain over the entire segment.
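A possible reading of this pitch correction is sketched below: the pitch profile of the selected unit is multiplied by a gain that varies linearly over the segment. The parameterization is an assumption, since the text only states that one additional value is transmitted: here the starting gain `gain_start` is taken as already known to the decoder (for example from the previous segment), and `gain_end` plays the role of the transmitted value. The function name is hypothetical.

```python
from typing import List, Sequence


def corrected_pitch_profile(
    unit_pitch: Sequence[float], gain_start: float, gain_end: float
) -> List[float]:
    """Apply a gain that ramps linearly from `gain_start` to `gain_end`.

    Assumption: `gain_start` is known at the decoder and `gain_end` is the
    single additional value transmitted for the segment.
    """
    n = len(unit_pitch)
    if n == 1:
        return [unit_pitch[0] * gain_start]
    return [
        p * (gain_start + (gain_end - gain_start) * i / (n - 1))
        for i, p in enumerate(unit_pitch)
    ]


# A flat 100 Hz unit profile bent upward by 20 % over three frames.
profile = corrected_pitch_profile([100.0, 100.0, 100.0], 1.0, 1.2)
print(profile)
```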

- the rate associated with the prosody is then between 225 and 300 bits / sec, which leads to an overall rate between 450 and 600 bits / sec.

Landscapes

- Engineering & Computer Science (AREA)

- Computational Linguistics (AREA)

- Health & Medical Sciences (AREA)

- Audiology, Speech & Language Pathology (AREA)

- Human Computer Interaction (AREA)

- Physics & Mathematics (AREA)

- Acoustics & Sound (AREA)

- Multimedia (AREA)

- Signal Processing (AREA)

- Compression, Expansion, Code Conversion, And Decoders (AREA)

- Transition And Organic Metals Composition Catalysts For Addition Polymerization (AREA)

- Separation By Low-Temperature Treatments (AREA)

Claims (14)

- Method for selecting synthesis units of an item of information that is in the form of a speech segment to be coded and can be decomposed into synthesis units, characterized in that it comprises at least the following steps, for an information segment considered: • determining the value F0 of the mean fundamental frequency for the information segment considered, • selecting a subset of synthesis units, defined as the one whose mean pitch values are closest to the pitch value F0, • applying one or more proximity criteria to the selected synthesis units in order to determine a synthesis unit that is representative of the information segment.

- Method for selecting synthesis units according to claim 1, characterized in that the fundamental frequency or pitch, the spectral distortion, and/or the energy profile are used as proximity criteria, and a step of merging the criteria is carried out in order to determine the representative synthesis unit.

- Method for selecting synthesis units according to claim 1, characterized in that, for a speech segment to be coded, the reference pitch is obtained from a prosody generator.

- Method according to claim 2, characterized in that the estimation of the similarity criterion for the pitch profile comprises at least the following steps: A1) selecting, in the identified subclass of the dictionary of synthesis units and on the basis of the mean pitch value, the N units that are closest in the sense of the mean pitch criterion, A2) temporally aligning the N profiles with that of the segment to be coded, A3) calculating N similarity measures between the N aligned pitch profiles and the pitch profile of the speech segment to be coded, in order to obtain the N similarity coefficients {rp(1), rp(2), ..., rp(N)}.

- Method according to claim 2, characterized in that the similarity estimation for the energy profile comprises at least the following steps: A4) determining the energy evolution profiles for the N units selected according to a mean pitch proximity criterion, A5) temporally aligning the N profiles with that of the segment to be coded, A6) calculating N similarity measures between the N aligned energy profiles and the energy profile of the speech segment to be coded, in order to obtain the N similarity coefficients {re(1), re(2), ..., re(N)}.

- Method according to claim 2, characterized in that the estimation of the similarity criteria for the spectral envelope comprises at least the following steps: A7) temporally aligning the N profiles with that of the segment to be coded, A8) determining the evolution profiles of the spectral parameters for the N units selected according to a mean pitch proximity criterion, A9) calculating N similarity measures between the spectral sequence of the segment to be coded and the N extracted spectral sequences corresponding to the speech segment to be coded, in order to obtain the N similarity coefficients {rs(1), rs(2), ..., rs(N)}.

- Method according to one of claims 4, 5 and 6, characterized in that the temporal alignment is an alignment obtained by dynamic programming (DTW) or an alignment by linear adjustment of the lengths.

- Method according to one of claims 4, 5 and 6, characterized in that the similarity measure is a normalized cross-correlation measure.

- Method according to claim 6, characterized in that the similarity measure is a spectral distance measure.

- Method according to claim 6, characterized in that step A9) includes a step in which the set of spectra of one and the same segment is averaged, and in that the similarity measure is a cross-correlation measure.

- Method according to claim 6, characterized in that the spectral distortion criterion is calculated on harmonic structures resampled at constant pitch or resampled at the pitch of the segment to be coded, after interpolation of the initial harmonic structures.

- Method according to one of claims 1 to 11, characterized in that it comprises a coding step and/or a pitch correction step performed by modifying the synthesis profile.

- Method according to claim 12, characterized in that the step of coding and/or correcting the pitch is a linear transformation of the original pitch profile.

- Use of the method according to one of claims 1 to 12 for the selection and/or coding of synthesis units for a very low bit rate speech coder.
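The claims name dynamic programming (DTW) as one way to align a unit's profile with that of the segment to be coded. A minimal sketch of a textbook DTW on one-dimensional profiles is given below; the absolute difference as local cost, the three allowed step directions and the function name `dtw_path` are assumptions, since the claims do not fix these details.

```python
from typing import List, Sequence, Tuple


def dtw_path(a: Sequence[float], b: Sequence[float]) -> Tuple[float, List[Tuple[int, int]]]:
    """Return the DTW cost and the alignment path between profiles a and b."""
    n, m = len(a), len(b)
    inf = float("inf")
    # cost[i][j] = best cumulative cost aligning a[:i] with b[:j]
    cost = [[inf] * (m + 1) for _ in range(n + 1)]
    cost[0][0] = 0.0
    for i in range(1, n + 1):
        for j in range(1, m + 1):
            local = abs(a[i - 1] - b[j - 1])   # assumed local distance
            cost[i][j] = local + min(cost[i - 1][j], cost[i][j - 1], cost[i - 1][j - 1])

    # Backtrack from the end to recover which frames were matched.
    path = []
    i, j = n, m
    while i > 0 and j > 0:
        path.append((i - 1, j - 1))
        steps = [
            (cost[i - 1][j - 1], i - 1, j - 1),
            (cost[i - 1][j], i - 1, j),
            (cost[i][j - 1], i, j - 1),
        ]
        _, i, j = min(steps)
    path.reverse()
    return cost[n][m], path


# A 3-frame unit profile aligned with a 4-frame segment profile.
total, path = dtw_path([1.0, 2.0, 3.0], [1.0, 2.0, 2.0, 3.0])
print(total, path)
```

The path tells which unit frame is compared with which segment frame when the similarity coefficients are computed; the simpler alternative named in the claims is a linear adjustment of the lengths.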

Applications Claiming Priority (2)

| Application Number | Priority Date | Filing Date | Title |
|---|---|---|---|
| FR0312494 | 2003-10-24 | | |
| FR0312494A FR2861491B1 (fr) | 2003-10-24 | 2003-10-24 | Method for selecting synthesis units |

Publications (2)

| Publication Number | Publication Date |
|---|---|
| EP1526508A1 (de) | 2005-04-27 |
| EP1526508B1 (de) | 2009-05-27 |

Family

ID=34385390

Family Applications (1)

| Application Number | Priority Date | Filing Date | Title |
|---|---|---|---|
| EP04105204A Expired - Lifetime EP1526508B1 (de) | 2003-10-24 | 2004-10-21 | Method for selecting synthesis units |

Country Status (6)

| Country | Link |
|---|---|
| US (1) | US8195463B2 (de) |
| EP (1) | EP1526508B1 (de) |
| AT (1) | ATE432525T1 (de) |
| DE (1) | DE602004021221D1 (de) |
| ES (1) | ES2326646T3 (de) |
| FR (1) | FR2861491B1 (de) |

Families Citing this family (15)

| Publication number | Priority date | Publication date | Assignee | Title |
|---|---|---|---|---|
| JP4265501B2 (ja) * | 2004-07-15 | 2009-05-20 | ヤマハ株式会社 | Speech synthesis device and program |
| JP4025355B2 (ja) * | 2004-10-13 | 2007-12-19 | 松下電器産業株式会社 | Speech synthesis device and speech synthesis method |
| US7126324B1 (en) * | 2005-11-23 | 2006-10-24 | Innalabs Technologies, Inc. | Precision digital phase meter |
| EP2058803B1 (de) * | 2007-10-29 | 2010-01-20 | Harman/Becker Automotive Systems GmbH | Partial speech reconstruction |
| US8401849B2 (en) * | 2008-12-18 | 2013-03-19 | Lessac Technologies, Inc. | Methods employing phase state analysis for use in speech synthesis and recognition |
| US8731931B2 (en) * | 2010-06-18 | 2014-05-20 | At&T Intellectual Property I, L.P. | System and method for unit selection text-to-speech using a modified Viterbi approach |
| US9664518B2 (en) * | 2010-08-27 | 2017-05-30 | Strava, Inc. | Method and system for comparing performance statistics with respect to location |
| CN102651217A (zh) * | 2011-02-25 | 2012-08-29 | 株式会社东芝 | Method and device for synthesizing speech, and acoustic model training method for speech synthesis |
| US9291713B2 | 2011-03-31 | 2016-03-22 | Strava, Inc. | Providing real-time segment performance information |
| US9116922B2 | 2011-03-31 | 2015-08-25 | Strava, Inc. | Defining and matching segments |
| US8620646B2 (en) * | 2011-08-08 | 2013-12-31 | The Intellisis Corporation | System and method for tracking sound pitch across an audio signal using harmonic envelope |
| US10453479B2 | 2011-09-23 | 2019-10-22 | Lessac Technologies, Inc. | Methods for aligning expressive speech utterances with text and systems therefor |
| US8718927B2 | 2012-03-12 | 2014-05-06 | Strava, Inc. | GPS data repair |
| US8886539B2 (en) * | 2012-12-03 | 2014-11-11 | Chengjun Julian Chen | Prosody generation using syllable-centered polynomial representation of pitch contours |
| CN113412512A (zh) * | 2019-02-20 | 2021-09-17 | 雅马哈株式会社 | Sound signal synthesis method, generative model training method, sound signal synthesis system, and program |

Family Cites Families (10)

| Publication number | Priority date | Publication date | Assignee | Title |
|---|---|---|---|---|
| JPH10260692A (ja) * | 1997-03-18 | 1998-09-29 | Toshiba Corp | Speech recognition-synthesis coding/decoding method and speech coding/decoding system |
| JP2000056789A (ja) * | 1998-06-02 | 2000-02-25 | Sanyo Electric Co Ltd | Speech synthesis device and telephone |
| JP2000075878A (ja) * | 1998-08-31 | 2000-03-14 | Canon Inc | Speech synthesis device, method therefor, and storage medium |
| US6581032B1 (en) * | 1999-09-22 | 2003-06-17 | Conexant Systems, Inc. | Bitstream protocol for transmission of encoded voice signals |
| US6574593B1 (en) * | 1999-09-22 | 2003-06-03 | Conexant Systems, Inc. | Codebook tables for encoding and decoding |
| JP3515039B2 (ja) * | 2000-03-03 | 2004-04-05 | 沖電気工業株式会社 | Pitch pattern control method in a text-to-speech conversion device |
| JP3728172B2 (ja) * | 2000-03-31 | 2005-12-21 | キヤノン株式会社 | Speech synthesis method and device |
| FR2815457B1 (fr) * | 2000-10-18 | 2003-02-14 | Thomson Csf | Prosody coding method for a very low bit rate speech coder |
| SE521600C2 (sv) * | 2001-12-04 | 2003-11-18 | Global Ip Sound Ab | Low bit rate codec |
| CA2388352A1 (en) * | 2002-05-31 | 2003-11-30 | Voiceage Corporation | A method and device for frequency-selective pitch enhancement of synthesized speed |

- 2003-10-24: FR application FR0312494A, patent FR2861491B1 (not active, Expired - Fee Related)

- 2004-10-21: AT application AT04105204T, patent ATE432525T1 (not active, IP Right Cessation)

- 2004-10-21: ES application ES04105204T, patent ES2326646T3 (not active, Expired - Lifetime)

- 2004-10-21: DE application DE602004021221T, patent DE602004021221D1 (not active, Expired - Lifetime)

- 2004-10-21: EP application EP04105204A, patent EP1526508B1 (not active, Expired - Lifetime)

- 2004-10-22: US application US10/970,731, patent US8195463B2 (not active, Expired - Fee Related)

Also Published As

| Publication number | Publication date |
|---|---|
| EP1526508A1 (de) | 2005-04-27 |
| FR2861491A1 (fr) | 2005-04-29 |
| US8195463B2 (en) | 2012-06-05 |
| FR2861491B1 (fr) | 2006-01-06 |
| DE602004021221D1 (de) | 2009-07-09 |
| US20050137871A1 (en) | 2005-06-23 |
| ES2326646T3 (es) | 2009-10-16 |
| ATE432525T1 (de) | 2009-06-15 |

Similar Documents

| Publication | Publication Date | Title |
|---|---|---|
| EP4266306B1 (de) | | Processing a speech signal |
| US7996222B2 (en) | | Prosody conversion |
| Arslan | | Speaker transformation algorithm using segmental codebooks (STASC) |
| EP1526508B1 (de) | | Method for selecting synthesis units |
| Le Cornu et al. | | Generating intelligible audio speech from visual speech |
| JPH075892A (ja) | | Speech recognition method |
| US20020184009A1 (en) | | Method and apparatus for improved voicing determination in speech signals containing high levels of jitter |
| EP1606792B1 (de) | | Method for analysing the fundamental frequency, and method and device for voice conversion using it |
| JPH08123484A (ja) | | Signal synthesis method and signal synthesis device |
| Lee et al. | | A segmental speech coder based on a concatenative TTS |
| Min et al. | | Deep vocoder: Low bit rate compression of speech with deep autoencoder |
| Berisha et al. | | Bandwidth extension of speech using perceptual criteria |
| Unnibhavi et al. | | A survey of speech recognition on south Indian Languages |
| JPH1097274A (ja) | | Speaker recognition method and device |
| Nose et al. | | Speaker-independent HMM-based voice conversion using adaptive quantization of the fundamental frequency |
| Salor et al. | | Dynamic programming approach to voice transformation |
| Sharma et al. | | Non-intrusive bit-rate detection of coded speech |
| CN119360823B (zh) | | Speech synthesis system, method, medium and device based on a multi-subband generation strategy |
| Do et al. | | Objective evaluation of HMM-based speech synthesis system using kullback-leibler divergence. |
| EP1846918A1 (de) | | Method for estimating a voice conversion function |
| En-Najjary et al. | | Fast GMM-based voice conversion for text-to-speech synthesis systems. |
| Lee et al. | | Ultra low bit rate speech coding using an ergodic hidden Markov model |
| Kura | | Novel pitch detection algorithm with application to speech coding |
| Černocký et al. | | Very low bit rate speech coding: comparison of data-driven units with syllable segments |
| López | | Methods for speaking style conversion from normal speech to high vocal effort speech |

Legal Events

| Date | Code | Title | Description |
|---|---|---|---|
| | PUAI | Public reference made under article 153(3) epc to a published international application that has entered the european phase | Free format text: ORIGINAL CODE: 0009012 |
| | AK | Designated contracting states | Kind code of ref document: A1; Designated state(s): AT BE BG CH CY CZ DE DK EE ES FI FR GB GR HU IE IT LI LU MC NL PL PT RO SE SI SK TR |
| | AX | Request for extension of the european patent | Extension state: AL HR LT LV MK |
| | 17P | Request for examination filed | Effective date: 20051007 |
| | AKX | Designation fees paid | Designated state(s): AT BE BG CH CY CZ DE DK EE ES FI FR GB GR HU IE IT LI LU MC NL PL PT RO SE SI SK TR |
| | 17Q | First examination report despatched | Effective date: 20070612 |
| | GRAP | Despatch of communication of intention to grant a patent | Free format text: ORIGINAL CODE: EPIDOSNIGR1 |
| | GRAS | Grant fee paid | Free format text: ORIGINAL CODE: EPIDOSNIGR3 |
| | GRAA | (expected) grant | Free format text: ORIGINAL CODE: 0009210 |
| | AK | Designated contracting states | Kind code of ref document: B1; Designated state(s): AT BE BG CH CY CZ DE DK EE ES FI FR GB GR HU IE IT LI LU MC NL PL PT RO SE SI SK TR |
| | REG | Reference to a national code | Ref country code: GB; Ref legal event code: FG4D; Free format text: NOT ENGLISH |
| | REG | Reference to a national code | Ref country code: CH; Ref legal event code: EP |
| | REG | Reference to a national code | Ref country code: IE; Ref legal event code: FG4D; Free format text: LANGUAGE OF EP DOCUMENT: FRENCH |
| | REF | Corresponds to: | Ref document number: 602004021221; Country of ref document: DE; Date of ref document: 20090709; Kind code of ref document: P |
| | REG | Reference to a national code | Ref country code: DE; Ref legal event code: R096; Ref document number: 602004021221; Country of ref document: DE; Effective date: 20090709 |
| | REG | Reference to a national code | Ref country code: ES; Ref legal event code: FG2A; Ref document number: 2326646; Country of ref document: ES; Kind code of ref document: T3 |
| | PG25 | Lapsed in a contracting state [announced via postgrant information from national office to epo] | Free format text: LAPSE BECAUSE OF FAILURE TO SUBMIT A TRANSLATION OF THE DESCRIPTION OR TO PAY THE FEE WITHIN THE PRESCRIBED TIME-LIMIT; Ref country code: PT, Effective date: 20090927; Ref country code: FI, Effective date: 20090527; Ref country code: AT, Effective date: 20090527 |
| | NLV1 | Nl: lapsed or annulled due to failure to fulfill the requirements of art. 29p and 29m of the patents act | |
| | PG25 | Lapsed in a contracting state [announced via postgrant information from national office to epo] | Free format text: LAPSE BECAUSE OF FAILURE TO SUBMIT A TRANSLATION OF THE DESCRIPTION OR TO PAY THE FEE WITHIN THE PRESCRIBED TIME-LIMIT; Ref country code: SI, Effective date: 20090527; Ref country code: NL, Effective date: 20090527; Ref country code: PL, Effective date: 20090527; Ref country code: SE, Effective date: 20090827 |
| | REG | Reference to a national code | Ref country code: IE; Ref legal event code: FD4D |
| | PG25 | Lapsed in a contracting state [announced via postgrant information from national office to epo] | Free format text: LAPSE BECAUSE OF FAILURE TO SUBMIT A TRANSLATION OF THE DESCRIPTION OR TO PAY THE FEE WITHIN THE PRESCRIBED TIME-LIMIT; Ref country code: EE, Effective date: 20090527; Ref country code: DK, Effective date: 20090527; Ref country code: RO, Effective date: 20090527; Ref country code: CZ, Effective date: 20090527; Ref country code: IE, Effective date: 20090527 |
| | PG25 | Lapsed in a contracting state [announced via postgrant information from national office to epo] | Ref country code: SK; Free format text: LAPSE BECAUSE OF FAILURE TO SUBMIT A TRANSLATION OF THE DESCRIPTION OR TO PAY THE FEE WITHIN THE PRESCRIBED TIME-LIMIT; Effective date: 20090527 |
| | PG25 | Lapsed in a contracting state [announced via postgrant information from national office to epo] | Ref country code: BG; Free format text: LAPSE BECAUSE OF FAILURE TO SUBMIT A TRANSLATION OF THE DESCRIPTION OR TO PAY THE FEE WITHIN THE PRESCRIBED TIME-LIMIT; Effective date: 20090827 |
| | PLBE | No opposition filed within time limit | Free format text: ORIGINAL CODE: 0009261 |
| | STAA | Information on the status of an ep patent application or granted ep patent | Free format text: STATUS: NO OPPOSITION FILED WITHIN TIME LIMIT |
| | BERE | Be: lapsed | Owner name: THALES; Effective date: 20091031 |
| | 26N | No opposition filed | Effective date: 20100302 |
| | PG25 | Lapsed in a contracting state [announced via postgrant information from national office to epo] | Ref country code: MC; Free format text: LAPSE BECAUSE OF NON-PAYMENT OF DUE FEES; Effective date: 20091031 |
| | REG | Reference to a national code | Ref country code: DE; Ref legal event code: R097; Ref document number: 602004021221; Country of ref document: DE; Effective date: 20100302 |
| | PG25 | Lapsed in a contracting state [announced via postgrant information from national office to epo] | Ref country code: GR, Free format text: LAPSE BECAUSE OF FAILURE TO SUBMIT A TRANSLATION OF THE DESCRIPTION OR TO PAY THE FEE WITHIN THE PRESCRIBED TIME-LIMIT, Effective date: 20090828; Ref country code: BE, Free format text: LAPSE BECAUSE OF NON-PAYMENT OF DUE FEES, Effective date: 20091031 |
| | PG25 | Lapsed in a contracting state [announced via postgrant information from national office to epo] | Ref country code: LU; Free format text: LAPSE BECAUSE OF NON-PAYMENT OF DUE FEES; Effective date: 20091021 |
| | PG25 | Lapsed in a contracting state [announced via postgrant information from national office to epo] | Ref country code: HU; Free format text: LAPSE BECAUSE OF FAILURE TO SUBMIT A TRANSLATION OF THE DESCRIPTION OR TO PAY THE FEE WITHIN THE PRESCRIBED TIME-LIMIT; Effective date: 20091128 |
| | PG25 | Lapsed in a contracting state [announced via postgrant information from national office to epo] | Ref country code: TR; Free format text: LAPSE BECAUSE OF FAILURE TO SUBMIT A TRANSLATION OF THE DESCRIPTION OR TO PAY THE FEE WITHIN THE PRESCRIBED TIME-LIMIT; Effective date: 20090527 |
| | PG25 | Lapsed in a contracting state [announced via postgrant information from national office to epo] | Ref country code: CY; Free format text: LAPSE BECAUSE OF FAILURE TO SUBMIT A TRANSLATION OF THE DESCRIPTION OR TO PAY THE FEE WITHIN THE PRESCRIBED TIME-LIMIT; Effective date: 20090527 |
| | REG | Reference to a national code | Ref country code: FR; Ref legal event code: PLFP; Year of fee payment: 13 |
| | REG | Reference to a national code | Ref country code: FR; Ref legal event code: PLFP; Year of fee payment: 14 |
| | PGFP | Annual fee paid to national office [announced via postgrant information from national office to epo] | Ref country code: DE; Payment date: 20171018; Year of fee payment: 14 |
| | PGFP | Annual fee paid to national office [announced via postgrant information from national office to epo] | Year of fee payment: 14; Ref country code: CH, Payment date: 20171013; Ref country code: GB, Payment date: 20171013; Ref country code: ES, Payment date: 20171102; Ref country code: IT, Payment date: 20171024 |
| | REG | Reference to a national code | Ref country code: FR; Ref legal event code: PLFP; Year of fee payment: 15 |
| | REG | Reference to a national code | Ref country code: DE; Ref legal event code: R119; Ref document number: 602004021221; Country of ref document: DE |
| | REG | Reference to a national code | Ref country code: CH; Ref legal event code: PL |
| | GBPC | Gb: european patent ceased through non-payment of renewal fee | Effective date: 20181021 |
| | PG25 | Lapsed in a contracting state [announced via postgrant information from national office to epo] | Ref country code: DE; Free format text: LAPSE BECAUSE OF NON-PAYMENT OF DUE FEES; Effective date: 20190501 |
| | PG25 | Lapsed in a contracting state [announced via postgrant information from national office to epo] | Free format text: LAPSE BECAUSE OF NON-PAYMENT OF DUE FEES; Ref country code: CH, Effective date: 20181031; Ref country code: LI, Effective date: 20181031 |
| | PG25 | Lapsed in a contracting state [announced via postgrant information from national office to epo] | Free format text: LAPSE BECAUSE OF NON-PAYMENT OF DUE FEES; Ref country code: GB, Effective date: 20181021; Ref country code: IT, Effective date: 20181021 |
| | REG | Reference to a national code | Ref country code: ES; Ref legal event code: FD2A; Effective date: 20191203 |
| | PG25 | Lapsed in a contracting state [announced via postgrant information from national office to epo] | Ref country code: ES; Free format text: LAPSE BECAUSE OF NON-PAYMENT OF DUE FEES; Effective date: 20181022 |
| | P01 | Opt-out of the competence of the unified patent court (upc) registered | Effective date: 20230517 |
| | PGFP | Annual fee paid to national office [announced via postgrant information from national office to epo] | Ref country code: FR; Payment date: 20230921; Year of fee payment: 20 |