WO2019100712A1 - Video Encoding Method, Computer Device and Storage Medium - Google Patents

Video Encoding Method, Computer Device and Storage Medium

- Publication number

- WO2019100712A1 (PCT/CN2018/092688)

- Authority

- WO

- WIPO (PCT)

- Prior art keywords

- image frame

- current

- quantization parameter

- frame

- obtaining

Classifications

- H—ELECTRICITY > H04—ELECTRIC COMMUNICATION TECHNIQUE > H04N—PICTORIAL COMMUNICATION, e.g. TELEVISION > H04N19/00—Methods or arrangements for coding, decoding, compressing or decompressing digital video signals; leaf classifications:

- H04N19/126—Details of normalisation or weighting functions, e.g. normalisation matrices or variable uniform quantisers

- H04N19/14—Coding unit complexity, e.g. amount of activity or edge presence estimation

- H04N19/114—Adapting the group of pictures [GOP] structure, e.g. number of B-frames between two anchor frames

- H04N19/124—Quantisation

- H04N19/146—Data rate or code amount at the encoder output

- H04N19/159—Prediction type, e.g. intra-frame, inter-frame or bidirectional frame prediction

- H04N19/172—Adaptive coding characterised by the coding unit, the unit being an image region, the region being a picture, frame or field

- H04N19/187—Adaptive coding characterised by the coding unit, the unit being a scalable video layer

- H04N19/463—Embedding additional information in the video signal during the compression process by compressing encoding parameters before transmission

- H04N19/573—Motion compensation with multiple frame prediction using two or more reference frames in a given prediction direction

- H04N19/577—Motion compensation with bidirectional frame interpolation, i.e. using B-pictures

- H04N19/30—Methods or arrangements for coding, decoding, compressing or decompressing digital video signals using hierarchical techniques, e.g. scalability

- H04N19/31—Methods or arrangements for coding, decoding, compressing or decompressing digital video signals using hierarchical techniques, e.g. scalability in the temporal domain

Definitions

- The present application relates to the field of video coding, and in particular to a video coding method, apparatus, computer device, and storage medium.

- By adjusting the quantization parameter (QP) of a video encoder, the encoding bit rate of the images can be controlled, so that a stable bit rate and low video delay can be guaranteed within the limits of the video transmission bandwidth.

- Conventionally, the quantization parameter of each image frame, including the current image frame, must be calculated according to a configured rate control model; this calculation is computationally complex, so video encoding efficiency is low.

- A video encoding method, computer device, and storage medium are provided.

- A video encoding method, comprising:

- the computer device obtains a current image frame to be encoded;

- the computer device encodes the current image frame based on the current quantization parameter.

- A computer device, comprising a memory and a processor, the memory storing computer-readable instructions that, when executed by the processor, cause the processor to perform the following steps:

- the current image frame is encoded according to the current quantization parameter.

- One or more non-volatile storage media storing computer-readable instructions that, when executed by one or more processors, cause the one or more processors to perform the following steps:

- the current image frame is encoded according to the current quantization parameter.

- FIG. 1A is an application environment diagram of a video encoding method provided in an embodiment

- FIG. 1B is an application environment diagram of a video encoding method provided in an embodiment

- FIG. 2A is a flowchart of a video encoding method in an embodiment

- FIG. 2B is a schematic diagram of a temporal hierarchical B-frame coding structure in an embodiment

- FIG. 3 is a flowchart, in an embodiment, of obtaining the current quantization parameter offset corresponding to the current image frame according to the current layer

- FIG. 4 is a flowchart, in an embodiment, of obtaining the reference quantization parameter corresponding to the reference image frame of the current image frame

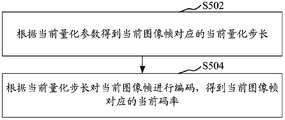

- FIG. 5 is a flowchart, in an embodiment, of encoding the current image frame according to the current quantization parameter

- FIG. 6 is a flowchart of a video encoding method in an embodiment

- FIG. 7 is a flowchart of a video encoding method in an embodiment

- FIG. 8 is a flowchart, in an embodiment, of obtaining the current quantization parameter corresponding to the current image frame according to the reference quantization parameter and the current quantization parameter offset

- FIG. 9 is a structural block diagram of a video encoding apparatus in an embodiment

- FIG. 10 is a structural block diagram of a current quantization parameter obtaining module in an embodiment

- FIG. 11 is a structural block diagram of a reference quantization parameter obtaining module in an embodiment

- FIG. 12 is a structural block diagram of an encoding module in an embodiment

- FIG. 13 is a structural block diagram of a video encoding apparatus in an embodiment

- FIG. 14 is a structural block diagram of a video encoding apparatus in an embodiment

- FIG. 15 is a structural block diagram of a current offset obtaining module in an embodiment

- FIG. 16 is a block diagram of the internal structure of a computer device in an embodiment

- FIG. 17 is a block diagram of the internal structure of a computer device in an embodiment

- FIG. 1A is an application environment diagram of a video encoding method provided in an embodiment.

- In video encoding, because the content and complexity of each frame in the video differ, the number of bits produced by encoding each frame changes constantly, and the transmission channel also changes constantly; an encoder buffer is therefore needed to balance the encoding bit rate against the bandwidth.

- The video image sequence is input to the video encoder 1.

- The coded stream produced by the video encoder 1 is input to the encoder buffer 2 for buffering and is then transmitted over the network.

- The rate controller 3 adjusts the quantization parameter of the video encoder according to the configured rate control model, so as to control the bit rate produced by the video encoder and prevent the encoder buffer 2 from overflowing or underflowing.
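The buffer-balancing loop described above can be illustrated with a deliberately simple controller. This is a minimal sketch, not RM8, VM8, or the method of this application: the class name `BufferRateController`, the 20%/80% fullness thresholds, the constant channel drain per frame, the starting QP of 30, and the ±1 QP step are all hypothetical assumptions chosen only to show the direction of the adjustment (a higher QP when the buffer fills, a lower QP when it drains).

```python
from dataclasses import dataclass


@dataclass
class BufferRateController:
    """Toy buffer-based rate controller (hypothetical, for illustration only)."""

    buffer_size: float              # encoder buffer capacity, in bits
    channel_bits_per_frame: float   # bits the channel drains per frame interval (assumed constant)
    qp: int = 30                    # starting QP (assumed)
    fullness: float = 0.0           # current buffer occupancy, in bits
    qp_min: int = 0
    qp_max: int = 51

    def update(self, frame_bits: float) -> int:
        """Add one encoded frame to the buffer and return the QP for the next frame."""
        # Encoded bits enter the buffer while the channel drains a fixed amount;
        # occupancy cannot go below zero.
        self.fullness = max(0.0, self.fullness + frame_bits - self.channel_bits_per_frame)
        ratio = self.fullness / self.buffer_size
        if ratio > 0.8:
            # Risk of overflow: coarser quantization produces fewer bits.
            self.qp = min(self.qp_max, self.qp + 1)
        elif ratio < 0.2:
            # Risk of underflow: finer quantization produces more bits.
            self.qp = max(self.qp_min, self.qp - 1)
        return self.qp
```

For example, with a 1000-bit buffer drained by 100 bits per frame, a single 1000-bit frame pushes occupancy to 900 bits, so the QP for the next frame rises from 30 to 31.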

- The video coding method in the embodiments of the present application may be applied to a computer device.

- The computer device may be an independent physical server or a terminal, a server cluster composed of multiple physical servers, or a cloud server providing basic cloud computing services such as cloud databases, cloud storage, and CDN.

- The terminal may be a smartphone, a tablet, a laptop, a desktop computer, a smart speaker, a smartwatch, or the like, but is not limited thereto.

- The terminal 110 or the server 120 may perform video encoding with an encoder, or video decoding with a decoder.

- The terminal 110 or the server 120 may also perform video encoding by having its processor run a video encoding program, or video decoding by having its processor run a video decoding program.

- The server 120 may pass the encoded data directly to the processor for decoding, or store it in a database for subsequent decoding.

- The encoded data may be sent directly to the terminal 110 through an output interface, or stored in a database for subsequent transmission.

- Encoded data refers to the data obtained by encoding an image frame.

- In an embodiment, a video encoding method is proposed.

- This embodiment is described mainly using the example of applying the method to the foregoing computer device, and the method may specifically include the following steps:

- Step S202: Acquire a current image frame to be encoded.

- A video is composed of a sequence of images, and each image can be regarded as one frame.

- The current image frame is the image frame currently being encoded.

- The video image sequence is input to the video encoder, which acquires the image frames to be encoded in a preset order and encodes them.

- Step S204: Acquire the current layer in which the current image frame is located within its group of pictures, the group of pictures including a plurality of image frames.

- A group of pictures (GOP) is a group of consecutive images; dividing the video image sequence into such groups facilitates random access and editing.

- The number of image frames in a GOP may be set according to actual needs, for example 8.

- A GOP may include three types of frames: I frames, P frames, and B frames. An I frame is an intra-predicted frame, and a P frame is a unidirectionally predicted frame, which can be predicted by inter-frame prediction.

- A B frame is a bidirectionally predicted frame, which can be predicted with reference to a preceding image frame or a subsequent image frame.

- The current layer is the layer in which the current image frame is located within the GOP.

- A layered coding structure may be preset, and the layer of each image frame in the GOP is obtained from that structure.

- A layered coding structure divides the image frames in a GOP into multiple layers for encoding, and different layers may correspond to different coding qualities. The specific layering can be set according to actual needs or application scenarios; for example, frames may be layered according to their temporal order in the GOP, giving a temporal hierarchical B-frame coding structure (hierarchical B structure).

- The layered coding structure specifies the number of layers, the image frames belonging to each layer, and the reference relationships between image frames.

- Higher-layer image frames can be encoded with reference to lower-layer image frames.

- The number of layers in the hierarchical coding structure can be set according to actual needs.

- For example, a GOP can be divided into 2, 3, or 4 layers.

- The encoding order of the image frames in the GOP is obtained according to the layered coding structure, and the image frames in the GOP are encoded in that order; after the current image frame is obtained, its current layer can be determined from the layered coding structure.

- For example, one GOP includes 8 image frames.

- The B frames of the GOP are divided into 3 layers: B0, B1, and B2. The B0 layer includes the 4th B frame, the B1 layer includes the 2nd and 6th B frames, and the remaining B frames belong to the B2 layer. The start of an arrow indicates the reference frame, and the arrow points to the frame encoded with reference to it.

- With the image frames arranged in display order, the temporal hierarchical B-frame coding structure is as shown in FIG. 2B.

- A higher-layer image frame may be encoded with reference to a lower-layer image frame.

- For example, the reference frames of the B0-layer image frame are the P frames at both ends.

- B2-layer image frames can be encoded with reference to B1-layer image frames.

- The temporal hierarchical B-frame coding structure is not limited to three layers; it may, for example, have two layers, four layers, or another number of layers.
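The layer assignment in the 8-frame example can be computed rather than tabulated. The sketch below assumes a dyadic GOP whose size is a power of two, with display positions numbered 1 to N and the anchor P frame at position N; the function names and this numbering convention are hypothetical. A frame's layer follows from how many times its position is divisible by 2: position 4 gives B0, positions 2 and 6 give B1, and the odd positions give B2, matching the example above. The encoding order returned (anchor first, then lower layers before higher layers) is one valid order in which every frame's references are already encoded; real encoders may use other valid orders.

```python
from typing import List, Optional


def b_layer(pos: int, gop_size: int = 8) -> Optional[int]:
    """Return the B-layer index (0 for B0) of display position pos, or None for the anchor frame."""
    if gop_size < 2 or gop_size & (gop_size - 1):
        raise ValueError("gop_size must be a power of two >= 2")
    if not 1 <= pos <= gop_size:
        raise ValueError("pos must be in 1..gop_size")
    depth = gop_size.bit_length() - 1               # log2(gop_size): 3 when gop_size is 8
    trailing_zeros = (pos & -pos).bit_length() - 1  # how many times pos is divisible by 2
    if trailing_zeros == depth:
        return None                                 # position gop_size holds the anchor P frame
    return depth - 1 - trailing_zeros


def coding_order(gop_size: int = 8) -> List[int]:
    """Anchor first, then B frames layer by layer, each layer in display order."""
    b_positions = range(1, gop_size)
    return [gop_size] + sorted(b_positions, key=lambda p: (b_layer(p, gop_size), p))
```

For instance, `coding_order(8)` yields `[8, 4, 2, 6, 1, 3, 5, 7]`: the P frame, then B0, then the two B1 frames, then the four B2 frames.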

- Step S206: Obtain the current quantization parameter offset corresponding to the current image frame according to the current layer, where image frames in different layers of the GOP correspond to different quantization parameter offsets.

- Quantization is needed to reduce the amount of data: the coefficients obtained by applying the discrete cosine transform to the prediction residual are mapped to smaller values, for example by dividing them by the quantization step size.

- The quantization parameter is the index of the quantization step size, so the corresponding quantization step size can be looked up from the quantization parameter.

- If the quantization parameter is small, most details of the image frame are preserved and the bit rate is high. If the quantization parameter is large, the bit rate is low, but image distortion is large and quality is poor. That is, the quantization parameter and the bit rate are negatively correlated.

- The basic method of rate control during encoding is to control the bit rate of an image by adjusting the quantization parameter.

- The quantization parameter offset is the offset of the quantization parameter of the image frame being encoded relative to that of its reference frame.

- Image frames in different layers of the GOP correspond to different quantization parameter offsets, which may be preset according to actual needs.

- For example, the quantization parameter offsets of the first to third layers may be 0.42, 0.8, and 1.2, respectively.

- Because higher-layer image frames are encoded with reference to lower-layer image frames, higher-layer frames are referenced less often and lower-layer frames more often, so the quantization parameter offset of a higher layer can be set larger than that of a lower layer. That is, the layer of an image frame and its quantization parameter offset are positively correlated, which improves the compression efficiency of video encoding.

- Step S208: Acquire the reference quantization parameter corresponding to the reference image frame of the current image frame.

- A reference frame is used to predict the image frame being encoded; the method of selecting the reference frame may be set according to actual needs.

- The reference image frame is a reference frame selected from the candidate reference frame list of the current image frame.

- The reference frame may be selected, for example, based on spatial similarity.

- For example, when the current image frame is a B frame, that is, a bidirectionally predicted frame, each B frame has two candidate reference frame lists, and the first image frame in each of the two lists can be taken as a reference image frame.

- The reference quantization parameter may be the coding quantization parameter used when the reference image frame was encoded.

- The reference quantization parameter may also be obtained by combining the coding quantization parameter of the reference image frame with its quantization parameter offset.

- For example, the reference quantization parameter is the coding quantization parameter of the reference image frame minus the quantization parameter offset of the reference image frame.

- The reference quantization parameter may also be the average of the quantization parameters within the reference image frame.
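The reference-frame choice and the three ways of forming the reference quantization parameter listed above can be sketched as follows. The `RefFrame` record, its field names, and the string modes are hypothetical; the sketch simply takes the first entry of each of a B frame's two candidate reference lists and then applies one of the listed rules (coding QP as-is, coding QP minus the frame's own offset, or the average of its block QPs).

```python
from dataclasses import dataclass, field
from statistics import mean
from typing import List, Tuple


@dataclass
class RefFrame:
    """Hypothetical record of an already-encoded frame."""

    coded_qp: float                 # QP used when the frame was encoded
    qp_offset: float = 0.0          # the frame's own layer QP offset (0 for a P frame)
    block_qps: List[float] = field(default_factory=list)  # per-block QPs, if recorded


def pick_b_frame_refs(list0: List[RefFrame], list1: List[RefFrame]) -> Tuple[RefFrame, RefFrame]:
    """Take the first frame of each of the two candidate reference lists."""
    return list0[0], list1[0]


def reference_qp(ref: RefFrame, mode: str = "minus_offset") -> float:
    if mode == "coded":              # coding QP used as-is
        return ref.coded_qp
    if mode == "minus_offset":       # coding QP minus the reference frame's own offset
        return ref.coded_qp - ref.qp_offset
    if mode == "block_average":      # mean of the QPs used inside the reference frame
        return mean(ref.block_qps)
    raise ValueError(f"unknown mode: {mode}")
```

Subtracting the reference frame's own offset (for example 30.42 - 0.42 = 30 for a B0-layer frame) recovers a base level to which the current frame's offset can then be added.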

- Step S210: Obtain the current quantization parameter corresponding to the current image frame according to the reference quantization parameter and the current quantization parameter offset.

- The current quantization parameter is obtained by combining the reference quantization parameter and the current quantization parameter offset.

- For example, the current quantization parameter may be the sum of the reference quantization parameter and the current quantization parameter offset.

- The frame distance between the reference image frame and the current image frame may also be taken into account. For example, a weight is set for each frame distance; an influence value for the reference image frame is obtained from its weight and its coding quantization parameter; and the current quantization parameter is obtained from the influence value and the current quantization parameter offset.
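Both ways of forming the current quantization parameter in step S210 fit in a few lines. The additive rule follows the text directly. For the frame-distance variant the text does not fix the weights, so the inverse-distance weighting normalised to sum to 1 below is a hypothetical choice, as are the function names.

```python
from typing import Sequence


def current_qp_additive(ref_qp: float, current_offset: float) -> float:
    """Current QP = reference QP + current layer offset."""
    return ref_qp + current_offset


def current_qp_distance_weighted(
    coded_qps: Sequence[float], distances: Sequence[int], current_offset: float
) -> float:
    """Weight each reference frame's coding QP by its frame distance, then add the offset.

    The inverse-distance weights (closer frames count more) are an assumption.
    """
    if len(coded_qps) != len(distances) or not distances:
        raise ValueError("need one positive distance per reference QP")
    if any(d <= 0 for d in distances):
        raise ValueError("frame distances must be positive")
    raw = [1.0 / d for d in distances]
    total = sum(raw)
    # Influence value: weighted combination of the reference frames' coding QPs.
    influence = sum((w / total) * qp for w, qp in zip(raw, coded_qps))
    return influence + current_offset
```

With two references at distances 1 and 3 the weights become 0.75 and 0.25, so the nearer frame dominates the influence value.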

- Step S212: Encode the current image frame according to the current quantization parameter.

- A correspondence between the quantization parameter and the quantization step size is preset, so the quantization step size corresponding to the current quantization parameter can be obtained and the current image frame quantized with that step size.

- The Round(x) function rounds the quantized value to the nearest integer.

- The correspondence between the quantization parameter and the quantization step size can be set as needed.

- For example, for luminance encoding, there are 52 quantization step sizes in total, indexed by quantization parameters that are integers from 0 to 51.

- For chrominance encoding, the quantization parameter may be an integer from 0 to 39. The quantization step size increases as the quantization parameter increases, doubling each time the quantization parameter increases by 6. It can be understood that when the quantization parameters in the correspondence are integers, the current quantization parameter may be rounded, for example to the nearest integer.
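As one concrete instance of a QP-to-step-size correspondence, the sketch below uses the H.264/AVC luma step sizes for QP 0 to 5 (0.625, 0.6875, 0.8125, 0.875, 1.0, 1.125), which double for every increase of 6 in QP, and applies a plain Round(y / Qstep) scalar quantizer. It rounds a fractional QP such as 30.42 to the nearest integer before the table lookup, as the text suggests. Real H.264 encoders implement quantization with integer scaling and rounding offsets, so this is a simplified floating-point illustration; the function names are hypothetical.

```python
import math

# H.264/AVC luma quantization step sizes for QP 0..5; every +6 in QP doubles the step.
_BASE_QSTEP = [0.625, 0.6875, 0.8125, 0.875, 1.0, 1.125]


def round_half_away(x: float) -> int:
    """Round to the nearest integer, with halves rounded away from zero."""
    return int(math.copysign(math.floor(abs(x) + 0.5), x))


def qstep(qp: float) -> float:
    """Map a (possibly fractional) QP to a quantization step size."""
    q = round_half_away(qp)  # the table is indexed by integer QP
    if not 0 <= q <= 51:
        raise ValueError("QP must round to an integer in 0..51")
    return _BASE_QSTEP[q % 6] * 2 ** (q // 6)


def quantize(coeff: float, qp: float) -> int:
    """Quantized level = Round(coeff / Qstep)."""
    return round_half_away(coeff / qstep(qp))
```

For example, QP 30.42 rounds to 30, whose step size is 0.625 × 32 = 20, so a coefficient of 50 quantizes to Round(2.5) = 3.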

- The above video encoding method can be used to compress video files, for example WeChat short videos.

- In the above video encoding method, the current layer in which the current image frame is located is obtained; the quantization parameter offset corresponding to the current image frame is obtained according to the current layer; the reference quantization parameter corresponding to the reference image frame of the current image frame is obtained; the current quantization parameter of the current image frame is obtained according to the reference quantization parameter and the quantization parameter offset; and the current image frame is encoded according to the current quantization parameter.

- Because the current quantization parameter is obtained from the quantization parameter offset corresponding to the current layer of the current image frame and the coding quantization parameter of the reference image frame, the computational complexity is low, the quantization parameter offset can be varied flexibly according to the needs of image frames in different layers, and the coding efficiency is high.

- In an embodiment, the current image frame is a bidirectionally predicted frame, and the step of obtaining the current quantization parameter offset corresponding to the current image frame according to the current layer includes:

- Step S302: Acquire the preset quantization offset parameter between image frames of the current layer and the unidirectionally predicted frame.

- For each hierarchical coding structure, a quantization offset parameter relative to the unidirectionally predicted frame may be set for each layer.

- The quantization parameter offset is calculated from the quantization offset parameter, and the two are positively correlated.

- The formula for obtaining the quantization parameter offset from the quantization offset parameter may be derived from the correspondence between the quantization step size and the quantization parameter. For example, for current luminance encoding, there are 52 quantization step sizes, indexed by quantization parameters that are integers from 0 to 51, and the quantization step size increases as the quantization parameter increases.

- Each time the quantization parameter increases by 6, the quantization step size doubles, so the quantization parameter offset is 6 times the base-2 logarithm of the quantization offset parameter: ΔQP = 6 × log2(q), where q is the quantization offset parameter and ΔQP is the quantization parameter offset.

- The specific value of the quantization offset parameter can be preset according to needs or experience.

- The quantization parameter of the unidirectionally predicted frame is smaller than that of a higher-layer bidirectionally predicted frame, so the quantization offset parameter is greater than 1.

- For example, the quantization offset parameters corresponding to the B0, B1, and B2 layers may be 1.05, 1.10, and 1.15, respectively.
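Since the step size doubles every 6 QP, scaling the step by a factor q corresponds to a QP offset of 6 × log2(q). Applying that to the example ratios 1.05, 1.10, and 1.15 gives roughly 0.42, 0.83, and 1.21, which appear to line up with the per-layer offsets 0.42, 0.8, and 1.2 quoted earlier for Step S206. The function and dictionary names below are hypothetical.

```python
import math
from typing import Dict


def qp_offset_from_step_ratio(q: float) -> float:
    """QP offset equivalent to multiplying the quantization step by q (step doubles every 6 QP)."""
    if q <= 0:
        raise ValueError("q must be positive")
    return 6.0 * math.log2(q)


# Example quantization offset parameters per B layer, taken from the text.
LAYER_STEP_RATIO: Dict[str, float] = {"B0": 1.05, "B1": 1.10, "B2": 1.15}

LAYER_QP_OFFSET = {layer: round(qp_offset_from_step_ratio(q), 2) for layer, q in LAYER_STEP_RATIO.items()}
```

`LAYER_QP_OFFSET` evaluates to `{'B0': 0.42, 'B1': 0.83, 'B2': 1.21}`. Because q > 1 for every B layer, every offset is positive, and a larger q gives a larger offset, which is the positive correlation described above.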

- Step S304: Obtain the current quantization parameter offset corresponding to the current image frame according to the quantization offset parameter.

- The current quantization parameter offset may be calculated from the acquired quantization offset parameter using the corresponding formula.

- The specific formula can be derived from the correspondence between the quantization step size and the quantization parameter.

- In an embodiment, acquiring the reference quantization parameter corresponding to the reference image frame of the current image frame in step S208 includes:

- Step S402: Acquire the reference frame type of the reference image frame and the coding quantization parameter of the reference image frame.

- The reference frame type may be an I frame, a B frame, or a P frame.

- Which frame types may serve as reference frames can be set as needed or is defined by the video coding standard. For example, in some video coding standards such as H.263, B frames cannot be used as reference frames, while in other video coding standards B frames can be used as reference frames.

- The coding quantization parameter of the reference image frame is the quantization parameter used when the reference image frame was encoded.

- Step S404: Obtain the reference quantization parameter corresponding to the reference image frame according to the reference frame type and the coding quantization parameter.

- The coding quantization parameter of the reference image frame may be adjusted according to the reference frame type to obtain the reference quantization parameter.

- For example, the coding quantization parameter of the reference image frame may be used directly as the reference quantization parameter.

- Alternatively, the reference quantization parameter can be obtained by combining the quantization parameter offset with the coding quantization parameter.

- For example, the reference quantization parameter is obtained by subtracting the quantization parameter offset corresponding to the reference frame from the coding quantization parameter of the reference image frame.

- the method for obtaining the current quantization parameter according to the reference quantization parameter of the reference image frame and the current quantization parameter offset provided by the embodiment of the present application may be used.

- the current quantization parameter of the current image frame may be calculated without using the method provided in the embodiment of the present application, for example, the current image frame is a P frame, and the current image is calculated according to the code rate control model.

- the current quantization parameter corresponding to the frame controls the code rate to avoid large error in the code rate.

- the rate control model can adopt RM8 (reference mode 8) and VM8 (verification mode 8), etc., and is not limited.

- the current quantization parameter corresponding to the current image frame may be calculated with a rate control model. Alternatively, a quantization parameter offset of the P frame relative to the I frame may be set, and the current quantization parameter of the current image frame is then obtained from the quantization parameter of the P frame and this offset.

- the quantization parameter corresponding to the I frame is the coding quantization parameter of the P frame minus the quantization parameter offset of the P frame relative to the I frame. That is, the quantization parameter of the I frame is smaller than that of the P frame, so the I frame is coded with higher precision and less image distortion.

- the quantization parameter corresponding to the P frame closest to the I frame may be selected minus the quantization parameter offset of the P frame relative to the I frame.
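The I-frame rule above, which takes the QP of the nearest P frame and subtracts the P-versus-I offset, can be sketched in a few lines. This sketch assumes integer QPs and that P-frame QPs are keyed by display position. The names `i_frame_qp`, `p_qps`, and `p_to_i_offset` are hypothetical.

```python
from typing import Dict


def i_frame_qp(i_pos: int, p_qps: Dict[int, int], p_to_i_offset: int) -> int:
    """QP of an I frame = QP of the nearest P frame minus the P-vs-I offset.

    i_pos:         display position of the I frame (hypothetical indexing)
    p_qps:         display position -> coding QP of P frames already known
    p_to_i_offset: preset QP offset of a P frame relative to an I frame
    """
    if not p_qps:
        raise ValueError("at least one P-frame QP is required")
    # Choose the P frame closest to the I frame in display order.
    nearest = min(p_qps, key=lambda pos: abs(pos - i_pos))
    # Subtracting the offset gives the I frame a smaller QP, i.e. finer quantization.
    return p_qps[nearest] - p_to_i_offset


print(i_frame_qp(0, {8: 32, 16: 34}, 3))  # 29
```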

- when the current image frame is a bidirectional prediction frame, the step of obtaining the reference quantization parameter corresponding to the reference image frame according to the reference frame type and the coding quantization parameter includes: when the reference frame type is a bidirectional prediction frame, acquiring the layer at which the reference image frame is located in its image group.

- the reference quantization parameter offset corresponding to the reference image frame is obtained according to the layer at which the reference image frame is located in the image group. The reference quantization parameter of the reference image frame is then determined from its coding quantization parameter and this reference quantization parameter offset.

- the reference quantization parameter offset refers to a quantization parameter offset corresponding to a layer where the reference image frame is located.

- for a bidirectional prediction frame, the quantization parameter offset is obtained according to the layer at which the image frame is located. The frame's current quantization parameter is then obtained from this offset and the reference quantization parameter of its reference image frame. Therefore, if the reference image frame is itself a bidirectionally predicted frame, its coding quantization parameter was likewise obtained from the quantization parameter offset of its own layer and the reference quantization parameter of its own reference image frame.

- accordingly, when the reference image frame is a bidirectionally predicted frame, its reference quantization parameter is obtained from its coding quantization parameter and the reference quantization parameter offset corresponding to it.

- for example, the reference quantization parameter may be the difference between the coding quantization parameter and the reference quantization parameter offset. The specific rule can be set as needed.
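Below is a minimal sketch of deriving a reference QP from an already-encoded reference frame. It assumes the variant described above: a B-frame reference has its layer offset subtracted from its coding QP, while I and P references use the coding QP as-is (one of the listed alternatives). `FrameType`, `reference_qp`, and the offset values are hypothetical.

```python
from enum import Enum
from typing import Dict, Optional


class FrameType(Enum):
    I = "I"
    P = "P"
    B = "B"


def reference_qp(frame_type: FrameType, coding_qp: float,
                 layer: Optional[int] = None,
                 layer_offsets: Optional[Dict[int, float]] = None) -> float:
    """Reference QP of an already-encoded reference frame (sketch).

    A B-frame reference had its own layer offset added when it was encoded, so
    that offset is subtracted again; I and P references use the coding QP as-is.
    """
    if frame_type is FrameType.B:
        if layer is None or layer_offsets is None:
            raise ValueError("a B-frame reference needs its layer and the offset table")
        return coding_qp - layer_offsets[layer]
    return coding_qp


offsets = {0: 1.0, 1: 2.0, 2: 3.0}  # hypothetical per-layer QP offsets
print(reference_qp(FrameType.B, 35.0, layer=1, layer_offsets=offsets))  # 33.0
print(reference_qp(FrameType.P, 32.0))                                  # 32.0
```

Undoing the layer offset keeps every reference QP on the same footing, so B-frame references at different layers do not compound their offsets.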

- the step of encoding the current image frame according to the current quantization parameter includes:

- Step S502 obtaining a current quantization step size corresponding to the current image frame according to the current quantization parameter.

- the quantization step corresponding to the current quantization parameter is obtained as the current quantization step according to the correspondence between the quantization parameter and the quantization step.
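The text does not specify the QP-to-step correspondence. This sketch assumes the H.264/HEVC-style approximation, in which the quantization step doubles every 6 QP and equals 1.0 at QP 4 (Qstep ≈ 2^((QP−4)/6)). The standards themselves use a lookup table, so treat this as an approximation.

```python
import math


def qp_to_qstep(qp: float) -> float:
    """Approximate quantization step: doubles every 6 QP, 1.0 at QP 4 (assumed)."""
    return 2.0 ** ((qp - 4.0) / 6.0)


def qstep_to_qp(qstep: float) -> float:
    """Inverse of qp_to_qstep."""
    if qstep <= 0:
        raise ValueError("quantization step must be positive")
    return 4.0 + 6.0 * math.log2(qstep)


print(qp_to_qstep(28))  # 16.0
```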

- Step S504 encoding the current image frame according to the current quantization step size, to obtain a current code rate corresponding to the current image frame.

- the code rate refers to the number of data bits transmitted per unit time.

- the current code rate refers to the code rate obtained when the current image frame is encoded with the current quantization step size.

- the unit of the code rate may be bits per second; the higher the code rate, the more data is transmitted per unit time. The current image frame is encoded with the current quantization step size, and its current code rate is obtained after encoding.

- when the current image frame is a bidirectional predicted frame, the video encoding method further includes the following steps:

- Step S602: acquiring a preset quantization offset parameter between image frames of the current layer and the unidirectional prediction frame.

- the quantization parameter offset is calculated from the quantization offset parameter. The current quantization parameter of the current image frame is obtained from its current quantization parameter offset. Therefore, when the image complexity parameter is calculated, the code rate of the current image frame can first be corrected with the quantization offset parameter. The image complexity parameter is then calculated from the corrected code rate, which improves its accuracy.
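The text does not state how the quantization parameter offset is calculated from the quantization offset parameter. Below is a minimal sketch under two assumptions:

- the quantization offset parameter is a ratio between the quantization step sizes of a layer's B frames and the P frame, similar in spirit to x264's `--pbratio` option;
- the step doubles every 6 QP, so a step ratio r corresponds to an offset of 6·log2(r).

The per-layer ratios are hypothetical.

```python
import math


def qp_offset_from_ratio(step_ratio: float) -> float:
    """QP offset equivalent to multiplying the quantization step by step_ratio.

    Assumes the step doubles every 6 QP, so ratio r maps to 6 * log2(r) QP.
    """
    if step_ratio <= 0:
        raise ValueError("step ratio must be positive")
    return 6.0 * math.log2(step_ratio)


# Hypothetical ratios of B-frame step to P-frame step per layer; deeper layers
# get larger ratios and therefore larger QP offsets (positive correlation).
layer_ratios = {0: 1.2, 1: 1.4, 2: 1.6}
layer_offsets = {layer: round(qp_offset_from_ratio(r), 2) for layer, r in layer_ratios.items()}
print(layer_offsets)
```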

- Step S604 obtaining a corresponding complexity update value according to the current code rate corresponding to the current image frame and the quantization offset parameter.

- the image complexity parameter is used to indicate the complexity of the image frame.

- the image complexity parameter is a parameter in the rate control model that is used to calculate the quantization step size of the image frame.

- the larger the image complexity parameter, the more complex the image content (such as texture) and the higher the bit rate required to transmit it.

- the complexity update value is used to update the image complexity parameter, so that the image complexity parameter can be updated dynamically.

- Step S606 obtaining an updated image complexity parameter according to the image complexity parameter and the complexity update value corresponding to the forward image frame of the current image frame.

- the image complexity parameter corresponding to the forward image frame refers to an image complexity parameter updated after the forward image frame is encoded.

- a forward image frame refers to an image frame that is encoded before the current image frame. It may be the immediately preceding image frame, so that the image complexity parameter is updated promptly, or another forward image frame, as needed.

- the image complexity parameter corresponding to the forward image frame is updated by using the complexity update value, and the updated image complexity parameter is obtained, thereby updating the image complexity parameter in the rate control model.

- the complexity update value is derived from the corrected code rate corresponding to the current image frame and the current quantization step size.

- the updated image complexity parameter may be the sum of the image complexity parameter corresponding to the forward image frame of the current image frame and the complexity update value, using the following notation:

- the current image frame is the j-th frame, and the preceding forward frame is the (j−1)-th frame;

- cplxsum_{j−1} is the image complexity parameter after the (j−1)-th image frame is encoded;

- bit_j is the code rate of the j-th frame, i.e., the current image frame, and Scale_j is the current quantization step size of the j-th frame. If the current image frame is divided into multiple coding units, each with its own quantization step, Scale_j may be the average quantization step size of the current image frame;

- pbFactor_i is the quantization offset parameter between bidirectional prediction frames of layer i and the unidirectional prediction frame;

- pre_param_j is a pre-analysis parameter of the j-th frame (the current image frame), for example its SAD (Sum of Absolute Differences), i.e., the sum of the absolute differences between the predicted values and the actual values of the current image frame.

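The definitions above name the quantities used in the complexity update but not its exact form. This is a minimal sketch assuming the update value is (bit_j / pbFactor_i) · Scale_j / pre_param_j. Under that assumption, the B-frame bit count is corrected by the quantization offset parameter, weighted by the step size, and normalised by the pre-analysis value, loosely following the complexity accumulation used in x264's rate control. That form and all function names are assumptions, not the formula from the original.

```python
from dataclasses import dataclass
from typing import Sequence


@dataclass
class ComplexityState:
    cplxsum: float = 0.0  # image complexity parameter after the last encoded frame


def average_scale(unit_scales: Sequence[float]) -> float:
    """Scale_j as the mean quantization step over the frame's coding units."""
    if not unit_scales:
        raise ValueError("no coding units")
    return sum(unit_scales) / len(unit_scales)


def complexity_update(bits: float, scale: float, pb_factor: float,
                      pre_param: float) -> float:
    """ASSUMED form of the update value: (bit_j / pbFactor_i) * Scale_j / pre_param_j."""
    if pb_factor <= 0 or pre_param <= 0:
        raise ValueError("pb_factor and pre_param must be positive")
    corrected_bits = bits / pb_factor  # assumed correction of the B-frame code rate
    return corrected_bits * scale / pre_param


def update_complexity(state: ComplexityState, bits: float, scale: float,
                      pb_factor: float, pre_param: float) -> float:
    # Sum form from the text: cplxsum_j = cplxsum_{j-1} + update value.
    state.cplxsum += complexity_update(bits, scale, pb_factor, pre_param)
    return state.cplxsum


state = ComplexityState(cplxsum=10.0)
scale = average_scale([1.5, 2.0, 2.5])
print(update_complexity(state, 8000.0, scale, 2.0, 1000.0))  # 18.0
```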

- the video encoding method further includes the following steps:

- Step S702: acquiring the frame type of the backward image frame, i.e., the image frame encoded after the current image frame.

- the backward image frame refers to an image frame that is encoded after the current image frame. It may be the next image frame or another backward image frame, as needed.

- the types of frames may include I frames, B frames, and P frames.

- Step S704 when the backward image frame is a non-bidirectional prediction frame, obtain a quantization parameter corresponding to the backward image frame according to the preset code rate control model and the updated image complexity parameter.

- the non-bidirectional prediction frame includes an I frame and a P frame.

- for example, when the backward image frame is a P frame, its quantization parameter may be obtained according to the preset code rate control model and the updated image complexity parameter.

- the image complexity parameter is a parameter of the rate control model. Therefore, the rate control model is updated with the updated image complexity parameter, and the quantization parameter of the backward image frame is obtained from the updated model.

- the rate control model can be set as needed; for example, it may be the TM5 (Test Model 5) algorithm. The quantization parameter of the backward image frame is calculated with the rate control model whenever that frame is a non-bidirectionally predicted frame. As a result, the bit-rate error of the video does not grow excessively as the number of image frames increases.
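The patent names TM5 only as an example and gives no model details. This sketch keeps just the TM5-style complexity relation X = S·Q (bits times quantization step): given an estimated complexity X and a target bit budget T for the backward frame, the step is roughly X/T. It then converts the step to a QP with the assumed 2^((QP−4)/6) mapping, clamped to 0–51. This is a simplification, not full TM5 (no virtual buffer, no macroblock-level adaptation). The function name and parameters are hypothetical.

```python
import math


def backward_frame_qp(complexity: float, target_bits: float,
                      qp_min: int = 0, qp_max: int = 51) -> int:
    """TM5-style sketch: complexity X = bits * step, so step = X / target bits."""
    if complexity <= 0 or target_bits <= 0:
        raise ValueError("complexity and target_bits must be positive")
    qstep = complexity / target_bits
    qp = 4.0 + 6.0 * math.log2(qstep)  # assumed: step doubles every 6 QP
    return int(min(max(round(qp), qp_min), qp_max))


print(backward_frame_qp(2000.0, 1000.0))  # 10
```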

- when there are multiple reference image frames, step S210, i.e., obtaining the current quantization parameter corresponding to the current image frame according to the reference quantization parameter and the current quantization parameter offset, includes the following steps:

- Step S802 acquiring a frame distance between each reference image frame and a current image frame.

- the frame distance may be represented by the number of frames separating two image frames, or by the difference in display time between them.

- the number of frames in which an image frame is spaced from an image frame can be represented by a difference in display order between image frames. For example, if the reference image frame is the second frame of the image group and the current image frame is the sixth frame of the same image group, the frame distance is 4.

- there may be two reference image frames, for example the two reference frames of a B frame.

- Step S804 calculating a first quantization parameter corresponding to the current image frame according to a frame distance between each reference image frame and the current image frame and a corresponding reference quantization parameter.

- a scaling factor corresponding to the frame distance may be set, and then the first quantization parameter corresponding to the image frame is obtained according to the scaling factor and the corresponding reference quantization parameter.

- the weight corresponding to the current reference image frame may be obtained according to the frame distance between the current reference image frame and the current image frame. The first quantization parameter of the current image frame is then obtained from the weight of each reference image frame and the corresponding reference quantization parameter.

- the frame distance and the weight may be negatively correlated, such that the quantization parameter corresponding to the reference image frame that is closer to the current image frame has a greater influence on the quantization parameter of the current image frame.

- the weight may be the reciprocal of the frame distance.

- when the current image frame is a bidirectional prediction frame, suppose the first reference image frame is n_1 frames away from the current image frame and has reference quantization parameter qp_1. Suppose also that the second reference image frame is n_2 frames away from the current image frame and has reference quantization parameter qp_2.

- the first quantization parameter is then the weighted average of the reference quantization parameters of the two reference image frames. The weight of qp_1 is the ratio of the frame distance between the second reference image frame and the current image frame to the total frame distance n_1 + n_2. The weight of qp_2 is the ratio of the frame distance between the first reference image frame and the current image frame to the total frame distance. The nearer reference image frame therefore receives the larger weight.

- the first quantization parameter QP_first of the current image frame, calculated from the frame distance between each reference image frame and the current image frame and the corresponding reference quantization parameter, may be as shown in formula (1):

$$QP_{\text{first}} = \frac{n_2}{n_1 + n_2}\,qp_1 + \frac{n_1}{n_1 + n_2}\,qp_2 \qquad (1)$$

- Step S806 obtaining a current quantization parameter corresponding to the current image frame according to the first quantization parameter corresponding to the current image frame and the current quantization parameter offset.

- the current quantization parameter corresponding to the current image frame is obtained by combining the first quantization parameter and the current quantization parameter offset.

- the current quantization parameter may be the sum of the first quantization parameter and the current quantization parameter offset.
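Steps S802 to S806 can be sketched as follows, under three assumptions:

- frame distance is the difference in display order;
- weights are the reciprocal of the frame distance (one option named above);
- "combining" in step S806 is the sum form.

For two references, the normalised reciprocal weights reduce to n_2/(n_1+n_2) and n_1/(n_1+n_2), which matches the two-reference rule described above. Function names are hypothetical.

```python
from typing import Sequence


def first_qp(distances: Sequence[int], ref_qps: Sequence[float]) -> float:
    """Step S804: weighted average of reference QPs, weight = 1 / frame distance."""
    if not distances or len(distances) != len(ref_qps):
        raise ValueError("need one frame distance per reference QP")
    if any(d <= 0 for d in distances):
        raise ValueError("frame distances must be positive")
    weights = [1.0 / d for d in distances]  # nearer reference -> larger weight
    return sum(w * qp for w, qp in zip(weights, ref_qps)) / sum(weights)


def current_qp(cur_pos: int, ref_positions: Sequence[int],
               ref_qps: Sequence[float], layer_offset: float) -> float:
    # Step S802: frame distance as the difference in display order.
    distances = [abs(cur_pos - p) for p in ref_positions]
    # Step S806 (sum form): first QP plus the current layer's QP offset.
    return first_qp(distances, ref_qps) + layer_offset


# Frame 1 references frame 0 (n1 = 1, qp1 = 30) and frame 4 (n2 = 3, qp2 = 34):
# the distance-ratio weights give 3/4 * 30 + 1/4 * 34 = 31, plus a layer offset of 3.
print(current_qp(1, [0, 4], [30.0, 34.0], 3.0))
```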

- when the current image frame is a bi-predictive frame with two reference image frames, step S210 (obtaining the current quantization parameter corresponding to the current image frame according to the reference quantization parameter and the current quantization parameter offset) further includes determining whether a reference image frame is an I frame. In one embodiment, if one reference image frame is an I frame and the other is a P frame, steps S802 to S806 are not performed. Instead, the current quantization parameter of the current image frame is obtained from the reference quantization parameter of the P-frame reference and the current quantization parameter offset.

- for example, the current quantization parameter of the current image frame may be the sum of that reference quantization parameter and the current quantization parameter offset.

- if both reference image frames are I frames, a second quantization parameter may be obtained from the coding quantization parameters of the two reference image frames, and the current quantization parameter is then obtained from the second quantization parameter and a preset value.

- the second quantization parameter may be the mean of the coded quantization parameters of the two reference image frames.

- the preset value can be set according to actual needs. For example, the preset value may be a quantization parameter offset of the P frame relative to the I frame.
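The I-frame special cases above can be combined with the weighted rule in one dispatch, mirroring the worked example for the fourth image frame. The sketch assumes:

- the preset value is the P-versus-I offset;
- the current layer's offset is added in every case, as in that example;
- I-frame reference QPs equal their coding QPs.

`b_frame_qp` and the tuple layout are hypothetical.

```python
from typing import List, Tuple

Ref = Tuple[str, int, float]  # (frame type "I"/"P"/"B", display position, reference QP)


def b_frame_qp(cur_pos: int, refs: List[Ref], layer_offset: float,
               p_to_i_offset: float) -> float:
    """QP of a B frame with two references, handling I-frame references specially."""
    if len(refs) != 2:
        raise ValueError("this sketch handles exactly two reference frames")
    i_refs = [r for r in refs if r[0] == "I"]
    if len(i_refs) == 2:
        # Both references are I frames: mean QP (second QP) plus preset P-vs-I offset.
        second_qp = (refs[0][2] + refs[1][2]) / 2.0
        return second_qp + p_to_i_offset + layer_offset
    if len(i_refs) == 1:
        # One I reference: skip steps S802-S806 and use the non-I reference's QP.
        other = next(r for r in refs if r[0] != "I")
        return other[2] + layer_offset
    # No I reference: inverse-distance weighted average (steps S802-S806).
    w = [1.0 / abs(cur_pos - r[1]) for r in refs]
    first = (w[0] * refs[0][2] + w[1] * refs[1][2]) / (w[0] + w[1])
    return first + layer_offset


print(b_frame_qp(4, [("I", 0, 28.0), ("P", 8, 32.0)], 1.0, 3.0))  # 33.0
```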

- the video coding method provided by the embodiments is explained below with an example in which an image group includes 8 image frames: the first 7 are B frames and the last one is a P frame, with the reference relationship shown in FIG. 2B.

- according to the reference relationship of FIG. 2B, the reference image frames of the current image frame are encoded before the current image frame.

- the first image frame encoded in the image group is its last image frame, so the last image frame of the image group is acquired first. Because this current image frame is a P frame, its quantization parameter is calculated from the configured rate control model and the target code rate, and the frame is encoded with the calculated quantization parameter.

- next, the fourth image frame of the image group is acquired. It is a B frame in layer B0, so the quantization parameter offset corresponding to layer B0 is obtained.

- if one of the two reference image frames of the fourth image frame is an I frame, the reference quantization parameter of the non-I reference image frame is added to the quantization parameter offset of layer B0. The result is the coding quantization parameter of the fourth image frame, i.e., its current quantization parameter. If both reference image frames of the fourth image frame are I frames, the mean of their reference quantization parameters is calculated. This mean is added to the preset quantization parameter offset of the P frame relative to the I frame and to the quantization parameter offset of layer B0, giving the coding quantization parameter of the fourth image frame.

- otherwise, the frame distances between each of the two reference image frames and the fourth image frame are obtained, and the weight of each reference image frame is calculated from its frame distance. A weighted average is then obtained from these weights and the corresponding reference quantization parameters. Finally, the weighted average is added to the quantization parameter offset of layer B0 to obtain the coding quantization parameter of the fourth image frame.

- the second, sixth, first, third, fifth, and seventh image frames are then acquired and encoded in sequence. Their encoding process follows that of the fourth image frame and is not described again here.

- the quantization parameter corresponding to the last image frame of the next image group is calculated according to the code rate control model and the image complexity parameter corresponding to the seventh image frame.
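The encoding order in this example (8, 4, 2, 6, 1, 3, 5, 7) and the layer of each B frame can be derived by splitting the image group breadth-first. This sketch makes three assumptions:

- the reference structure of FIG. 2B (not reproduced here) is a dyadic hierarchy;
- frame 0 is the last frame of the previous image group;
- frames are numbered 1 to 8 in display order.

`hierarchical_order` is a hypothetical helper.

```python
from collections import deque
from typing import Dict, List, Tuple


def hierarchical_order(gop_size: int) -> Tuple[List[int], Dict[int, int]]:
    """Encoding order and B layer for a dyadic image group ending in a P frame.

    Frames are numbered 1..gop_size in display order; frame 0 is the last frame
    of the previous image group. Returns (encoding order, {frame: B layer}).
    """
    order = [gop_size]  # the P frame at the end is encoded first
    layers: Dict[int, int] = {}
    queue = deque([(0, gop_size, 0)])  # (left reference, right reference, layer)
    while queue:
        left, right, layer = queue.popleft()
        if right - left < 2:
            continue  # no frame lies between the two references
        mid = (left + right) // 2
        order.append(mid)
        layers[mid] = layer
        queue.append((left, mid, layer + 1))
        queue.append((mid, right, layer + 1))
    return order, layers


order, layers = hierarchical_order(8)
print(order)  # [8, 4, 2, 6, 1, 3, 5, 7]
```

Breadth-first traversal is what produces the order used in the example. A depth-first split would instead yield 8, 4, 2, 1, 3, 6, 5, 7.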

- the steps in the embodiments of the present application are not necessarily performed in the order indicated by their labels. Unless explicitly stated herein, there is no strict restriction on the order in which these steps are performed, and they may be performed in other orders. Moreover, at least some of the steps in the embodiments may include multiple sub-steps or stages. These are not necessarily completed at the same time and may be performed at different times. Nor are they necessarily performed sequentially: they may be performed in turn or alternately with other steps, or with at least some of the sub-steps or stages of other steps.

- a video encoding apparatus which may specifically include:

- the current frame obtaining module 902 is configured to acquire a current image frame to be encoded.

- the current layer obtaining module 904 is configured to acquire a current layer where the current image frame is located in the image group in which the image frame is located, and the image group includes a plurality of image frames.

- the current offset obtaining module 906 is configured to obtain the current quantization parameter offset corresponding to the current image frame according to the current layer, and the image frames of different layers in the image group correspond to different quantization parameter offsets.

- the reference quantization parameter obtaining module 908 is configured to acquire a reference quantization parameter corresponding to the reference image frame of the current image frame.

- the current quantization parameter obtaining module 910 is configured to obtain a current quantization parameter corresponding to the current image frame according to the reference quantization parameter and the current quantization parameter offset.

- the encoding module 912 is configured to encode the current image frame according to the current quantization parameter.

- when there are multiple reference image frames, the current quantization parameter obtaining module 910 includes:

- the frame distance obtaining unit 910A is configured to acquire a frame distance between each reference image frame and the current image frame.

- the parameter calculation unit 910B is configured to calculate, according to a frame distance between each reference image frame and the current image frame, and a corresponding reference quantization parameter, a first quantization parameter corresponding to the current image frame.

- the current quantization parameter obtaining unit 910C is configured to obtain a current quantization parameter corresponding to the current image frame according to the first quantization parameter corresponding to the current image frame and the current quantization parameter offset.

- the parameter calculation unit 910B is configured to: obtain a weight corresponding to the current reference image frame according to the frame distance between the current reference image frame and the current image frame, the frame distance being negatively correlated with the weight; and obtain the first quantization parameter corresponding to the current image frame according to the weight corresponding to each reference image frame and the corresponding reference quantization parameter.

- the reference quantization parameter acquisition module 908 includes:

- the reference frame information obtaining unit 908A is configured to acquire a reference frame type of the reference image frame and an encoding quantization parameter of the reference image frame.

- the reference quantization parameter obtaining unit 908B is configured to obtain a reference quantization parameter corresponding to the reference image frame according to the reference frame type and the encoding quantization parameter.

- when the current image frame is a bidirectionally predicted frame, the reference quantization parameter obtaining unit 908B is configured to: when the reference frame type is a bidirectionally predicted frame, acquire the layer at which the reference image frame is located in its image group.

- the reference quantization parameter offset corresponding to the reference image frame is obtained according to the layer at which the reference image frame is located in the image group. The reference quantization parameter of the reference image frame is then determined from its coding quantization parameter and this reference quantization parameter offset.

- the encoding module 912 includes:

- the step size obtaining unit 912A is configured to obtain a current quantization step size corresponding to the current image frame according to the current quantization parameter.

- the encoding unit 912B is configured to encode the current image frame according to the current quantization step size to obtain a current code rate corresponding to the current image frame.

- when the current image frame is a bidirectional predicted frame, the apparatus further includes:

- the offset parameter obtaining module 1302 is configured to acquire a preset quantization offset parameter between image frames of the current layer and the unidirectional prediction frame.

- the update value obtaining module 1304 is configured to obtain a corresponding complexity update value according to the current code rate corresponding to the current image frame and the quantization offset parameter.

- the updating module 1306 is configured to obtain the updated image complexity parameter according to the image complexity parameter corresponding to the forward image frame of the current image frame and the complexity update value.

- the apparatus further includes:

- the frame type obtaining module 1402 is configured to acquire the frame type of the backward image frame encoded after the current image frame.

- the backward quantization parameter obtaining module 1404 is configured to obtain, when the backward image frame is a non-bidirectional prediction frame, the quantization parameter corresponding to the backward image frame according to the preset code rate control model and the updated image complexity parameter.

- the current offset obtaining module 906 includes:

- the offset parameter obtaining unit 906A is configured to acquire a preset quantization offset parameter between image frames of the current layer and the unidirectional prediction frame.

- the current offset obtaining unit 906B is configured to obtain a current quantization parameter offset corresponding to the current image frame according to the quantization offset parameter.

- Figure 16 is a diagram showing the internal structure of a computer device in one embodiment.

- the computer device may specifically be the terminal 110 in FIG. 1B.

- the computer device includes a processor, a memory, a network interface, an input device, and a display screen connected by a system bus.

- the memory comprises a non-volatile storage medium and an internal memory.

- the non-volatile storage medium of the computer device stores an operating system and can also store computer readable instructions that, when executed by the processor, cause the processor to implement a video encoding method.

- the internal memory can also store computer readable instructions that, when executed by the processor, cause the processor to perform a video encoding method.

- the display screen of the computer device may be a liquid crystal display or an electronic ink display screen

- the input device of the computer device may be a touch layer covering the display screen, a button, trackball, or touchpad on the housing of the computer device, or an external keyboard, trackpad, or mouse.

- Figure 17 is a diagram showing the internal structure of a computer device in one embodiment.

- the computer device may specifically be the server 120 of Figure 1B.

- the computer device includes a processor, a memory, and a network interface connected by a system bus.

- the memory includes a nonvolatile storage medium and an internal memory.

- the non-volatile storage medium of the computer device stores an operating system and can also store computer readable instructions that, when executed by the processor, cause the processor to implement a video encoding method.

- the internal memory can also store computer readable instructions that, when executed by the processor, cause the processor to perform a video encoding method.

- FIGS. 16 and 17 are merely block diagrams of the partial structures related to the solution of the present application and do not limit the computer device to which the solution is applied. A specific computer device may include more or fewer components than shown in the figures, combine some components, or have a different arrangement of components.

- the video encoding device can be implemented in the form of a computer readable instruction that can be executed on a computer device as shown in FIGS. 16 and 17.

- the program modules constituting the video encoding device may be stored in a memory of the computer device. Examples are the current frame obtaining module 902, the current layer obtaining module 904, the current offset obtaining module 906, the reference quantization parameter obtaining module 908, the current quantization parameter obtaining module 910, and the encoding module 912 shown in FIG.

- the computer readable instructions formed by the various program modules cause the processor to perform the steps in the video encoding method of various embodiments of the present application described in this specification.

- the computer device shown in FIGS. 16 and 17 can acquire the current image frame to be encoded by the current frame acquisition module 902 in the video encoding device as shown in FIG.

- the current layer in the image group in which the current image frame is located is acquired by the current layer acquisition module 904, and the image group includes a plurality of image frames.

- the current offset obtaining module 906 obtains the current quantization parameter offset corresponding to the current image frame according to the current layer.

- the reference quantization parameter corresponding to the reference image frame of the current image frame is obtained by the reference quantization parameter obtaining module 908.

- the current quantization parameter obtaining module 910 obtains the current quantization parameter corresponding to the current image frame according to the reference quantization parameter and the current quantization parameter offset.

- the current image frame is encoded by the encoding module 912 based on the current quantization parameter.

- Non-volatile memory can include read only memory (ROM), programmable ROM (PROM), electrically programmable ROM (EPROM), electrically erasable programmable ROM (EEPROM), or flash memory.

- Volatile memory can include random access memory (RAM) or external cache memory.

- RAM is available in a variety of forms, such as static RAM (SRAM), dynamic RAM (DRAM), synchronous DRAM (SDRAM), double data rate SDRAM (DDRSDRAM), enhanced SDRAM (ESDRAM), Synchlink DRAM (SLDRAM), Rambus direct RAM (RDRAM), direct Rambus dynamic RAM (DRDRAM), and Rambus dynamic RAM (RDRAM).



Claims (30)

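- A video encoding method, comprising: obtaining, by a computer device, a current image frame to be encoded; obtaining, by the computer device, a current layer at which the current image frame is located in the image group to which it belongs, the image group comprising a plurality of image frames; obtaining, by the computer device, a current quantization parameter offset corresponding to the current image frame according to the current layer, wherein image frames of different layers in the image group correspond to different quantization parameter offsets; obtaining, by the computer device, a reference quantization parameter corresponding to a reference image frame of the current image frame; obtaining, by the computer device, a current quantization parameter corresponding to the current image frame according to the reference quantization parameter and the current quantization parameter offset; and encoding, by the computer device, the current image frame according to the current quantization parameter.

- The method according to claim 1, wherein there are a plurality of reference image frames, and the step of obtaining, by the computer device, the current quantization parameter corresponding to the current image frame according to the reference quantization parameter and the current quantization parameter offset comprises: obtaining, by the computer device, a frame distance between each reference image frame and the current image frame; calculating, by the computer device, a first quantization parameter corresponding to the current image frame according to the frame distance between each reference image frame and the current image frame and the corresponding reference quantization parameter; and obtaining, by the computer device, the current quantization parameter corresponding to the current image frame according to the first quantization parameter corresponding to the current image frame and the current quantization parameter offset.

- The method according to claim 2, wherein the step of calculating, by the computer device, the first quantization parameter corresponding to the current image frame according to the frame distance between each reference image frame and the current image frame and the corresponding reference quantization parameter comprises: obtaining, by the computer device, a weight corresponding to a current reference image frame according to the frame distance between the current reference image frame and the current image frame, wherein the frame distance is negatively correlated with the weight; and obtaining, by the computer device, the first quantization parameter corresponding to the current image frame according to the weight corresponding to each reference image frame and the corresponding reference quantization parameter.

- The method according to claim 1, wherein the step of obtaining, by the computer device, the reference quantization parameter corresponding to the reference image frame of the current image frame comprises: obtaining, by the computer device, a reference frame type of the reference image frame and a coding quantization parameter of the reference image frame; and obtaining, by the computer device, the reference quantization parameter corresponding to the reference image frame according to the reference frame type and the coding quantization parameter.

- The method according to claim 4, wherein the current image frame is a bidirectional prediction frame, and the step of obtaining, by the computer device, the reference quantization parameter corresponding to the reference image frame according to the reference frame type and the coding quantization parameter comprises: when the reference frame type is a bidirectional prediction frame, obtaining, by the computer device, the layer at which the reference image frame is located in the image group to which it belongs; obtaining, by the computer device, a reference quantization parameter offset corresponding to the reference image frame according to the layer at which the reference image frame is located in the image group; and obtaining, by the computer device, the reference quantization parameter corresponding to the reference image frame according to the coding quantization parameter and the reference quantization parameter offset corresponding to the reference image frame.

- The method according to claim 1, wherein the step of encoding, by the computer device, the current image frame according to the current quantization parameter comprises: obtaining, by the computer device, a current quantization step size corresponding to the current image frame according to the current quantization parameter; and encoding, by the computer device, the current image frame according to the current quantization step size to obtain a current code rate corresponding to the current image frame.

- The method according to claim 6, wherein the current image frame is a bidirectional prediction frame, and the method further comprises: obtaining, by the computer device, a preset quantization offset parameter between image frames of the current layer and a unidirectional prediction frame; obtaining, by the computer device, a corresponding complexity update value according to the current code rate corresponding to the current image frame and the quantization offset parameter; and obtaining, by the computer device, an updated image complexity parameter according to the image complexity parameter corresponding to a forward image frame of the current image frame and the complexity update value.

- The method according to claim 7, further comprising: obtaining, by the computer device, the frame type of a backward image frame that is encoded after the current image frame; and when the backward image frame is a non-bidirectional prediction frame, obtaining, by the computer device, a quantization parameter corresponding to the backward image frame according to a preset rate control model and the updated image complexity parameter.

- The method according to claim 1, wherein the step of obtaining, by the computer device, the current quantization parameter offset corresponding to the current image frame according to the current layer comprises: obtaining, by the computer device, a preset quantization offset parameter between image frames of the current layer and a unidirectional prediction frame; and obtaining, by the computer device, the current quantization parameter offset corresponding to the current image frame according to the quantization offset parameter.

- The method according to claim 1, wherein the layer of an image frame is positively correlated with its quantization parameter offset.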

- 一种计算机设备,包括存储器和处理器,所述存储器中存储有计算机可读指令,所述计算机可读指令被所述处理器执行时,使得所述处理器执行如下步骤:获取待编码的当前图像帧;获取所述当前图像帧在所在的图像组中所处的当前层,所述图像组包括多个图像帧;根据所述当前层得到所述当前图像帧对应的当前量化参数偏移量,所述图像组中不同层的图像帧对应不同的量化参数偏移量;获取所述当前图像帧的参考图像帧对应的参考量化参数;根据所述参考量化参数以及所述当前量化参数偏移量得到所述当前图像帧对应的当前量化参数;根据所述当前量化参数对所述当前图像帧进行编码。

- 根据权利要求11所述的计算机设备,其特征在于,所述参考图像帧为 多个,所述根据所述参考量化参数以及所述当前量化参数偏移量得到所述当前图像帧对应的当前量化参数包括:获取所述各个参考图像帧与所述当前图像帧之间的帧距离;根据所述各个参考图像帧与所述当前图像帧之间的帧距离以及对应的参考量化参数计算得到所述当前图像帧对应的第一量化参数;根据所述当前图像帧对应的第一量化参数以及所述当前量化参数偏移量得到所述当前图像帧对应的当前量化参数。

- 根据权利要求12所述的计算机设备,其特征在于,所述根据所述各个参考图像帧与所述当前图像帧之间的帧距离以及对应的参考量化参数计算得到所述当前图像帧对应的第一量化参数包括:根据当前参考图像帧与所述当前图像帧之间的帧距离得到所述当前参考图像帧对应的权重,其中帧距离与权重为负相关关系;根据所述各个参考图像帧对应的权重以及对应的参考量化参数得到所述当前图像帧对应的第一量化参数。

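- The computer device according to claim 11, wherein the obtaining, by the computer device, of the reference quantization parameter corresponding to the reference image frame of the current image frame comprises: obtaining a reference frame type of the reference image frame and an encoding quantization parameter of the reference image frame; and obtaining the reference quantization parameter corresponding to the reference image frame according to the reference frame type and the encoding quantization parameter.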

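- The computer device according to claim 14, wherein the current image frame is a bidirectionally predicted frame, and the obtaining of the reference quantization parameter corresponding to the reference image frame according to the reference frame type and the encoding quantization parameter comprises: when the reference frame type is a bidirectionally predicted frame, obtaining the layer at which the reference image frame is located in its group of pictures; obtaining a reference quantization parameter offset corresponding to the reference image frame according to the layer at which the reference image frame is located in the group of pictures; and obtaining the reference quantization parameter corresponding to the reference image frame according to the encoding quantization parameter and the reference quantization parameter offset corresponding to the reference image frame.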

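- The computer device according to claim 11, wherein the encoding of the current image frame according to the current quantization parameter comprises: obtaining a current quantization step size corresponding to the current image frame according to the current quantization parameter; and encoding the current image frame according to the current quantization step size to obtain a current bit rate corresponding to the current image frame.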

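- The computer device according to claim 16, wherein the current image frame is a bidirectionally predicted frame, and the computer-readable instructions further cause the processor to perform the following steps: obtaining a preset quantization offset parameter between image frames of the current layer and unidirectionally predicted frames; obtaining a corresponding complexity update value according to the current bit rate corresponding to the current image frame and the quantization offset parameter; and obtaining an updated image complexity parameter according to the image complexity parameter corresponding to the forward image frame of the current image frame and the complexity update value.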

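- The computer device according to claim 17, wherein the computer-readable instructions further cause the processor to perform the following steps: obtaining the frame type of the backward image frame that is encoded after the current image frame; and when the backward image frame is a non-bidirectionally predicted frame, obtaining the quantization parameter corresponding to the backward image frame according to a preset rate control model and the updated image complexity parameter.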

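- The computer device according to claim 11, wherein the obtaining of the current quantization parameter offset corresponding to the current image frame according to the current layer comprises: obtaining a preset quantization offset parameter between image frames of the current layer and unidirectionally predicted frames; and obtaining the current quantization parameter offset corresponding to the current image frame according to the quantization offset parameter.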

- The computer device according to claim 11, wherein the layer of an image frame is positively correlated with its quantization parameter offset.

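- One or more non-volatile storage media storing computer-readable instructions that, when executed by one or more processors, cause the one or more processors to perform the following steps: obtaining a current image frame to be encoded; obtaining a current layer at which the current image frame is located in its group of pictures, the group of pictures comprising a plurality of image frames; obtaining a current quantization parameter offset corresponding to the current image frame according to the current layer, wherein image frames at different layers of the group of pictures correspond to different quantization parameter offsets; obtaining a reference quantization parameter corresponding to a reference image frame of the current image frame; obtaining a current quantization parameter corresponding to the current image frame according to the reference quantization parameter and the current quantization parameter offset; and encoding the current image frame according to the current quantization parameter.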

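- The computer storage medium according to claim 21, wherein there are a plurality of reference image frames, and the obtaining of the current quantization parameter corresponding to the current image frame according to the reference quantization parameter and the current quantization parameter offset comprises: obtaining a frame distance between each reference image frame and the current image frame; calculating a first quantization parameter corresponding to the current image frame according to the frame distance between each reference image frame and the current image frame and the corresponding reference quantization parameter; and obtaining the current quantization parameter corresponding to the current image frame according to the first quantization parameter corresponding to the current image frame and the current quantization parameter offset.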

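- The computer storage medium according to claim 22, wherein the calculating of the first quantization parameter corresponding to the current image frame according to the frame distance between each reference image frame and the current image frame and the corresponding reference quantization parameter comprises: obtaining a weight corresponding to a current reference image frame according to the frame distance between the current reference image frame and the current image frame, wherein the frame distance is negatively correlated with the weight; and obtaining the first quantization parameter corresponding to the current image frame according to the weight and the reference quantization parameter corresponding to each reference image frame.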

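- The computer storage medium according to claim 21, wherein the obtaining, by the computer storage medium, of the reference quantization parameter corresponding to the reference image frame of the current image frame comprises: obtaining a reference frame type of the reference image frame and an encoding quantization parameter of the reference image frame; and obtaining the reference quantization parameter corresponding to the reference image frame according to the reference frame type and the encoding quantization parameter.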

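- The computer storage medium according to claim 24, wherein the current image frame is a bidirectionally predicted frame, and the obtaining of the reference quantization parameter corresponding to the reference image frame according to the reference frame type and the encoding quantization parameter comprises: when the reference frame type is a bidirectionally predicted frame, obtaining the layer at which the reference image frame is located in its group of pictures; obtaining a reference quantization parameter offset corresponding to the reference image frame according to the layer at which the reference image frame is located in the group of pictures; and obtaining the reference quantization parameter corresponding to the reference image frame according to the encoding quantization parameter and the reference quantization parameter offset corresponding to the reference image frame.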

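- The computer storage medium according to claim 21, wherein the encoding of the current image frame according to the current quantization parameter comprises: obtaining a current quantization step size corresponding to the current image frame according to the current quantization parameter; and encoding the current image frame according to the current quantization step size to obtain a current bit rate corresponding to the current image frame.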

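- The computer storage medium according to claim 26, wherein the current image frame is a bidirectionally predicted frame, and the computer-readable instructions further cause the processor to perform the following steps: obtaining a preset quantization offset parameter between image frames of the current layer and unidirectionally predicted frames; obtaining a corresponding complexity update value according to the current bit rate corresponding to the current image frame and the quantization offset parameter; and obtaining an updated image complexity parameter according to the image complexity parameter corresponding to the forward image frame of the current image frame and the complexity update value.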

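- The computer storage medium according to claim 27, wherein the computer-readable instructions further cause the processor to perform the following steps: obtaining the frame type of the backward image frame that is encoded after the current image frame; and when the backward image frame is a non-bidirectionally predicted frame, obtaining the quantization parameter corresponding to the backward image frame according to a preset rate control model and the updated image complexity parameter.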

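- The computer storage medium according to claim 21, wherein the obtaining of the current quantization parameter offset corresponding to the current image frame according to the current layer comprises: obtaining a preset quantization offset parameter between image frames of the current layer and unidirectionally predicted frames; and obtaining the current quantization parameter offset corresponding to the current image frame according to the quantization offset parameter.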

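- The computer storage medium according to claim 21, wherein the layer of an image frame is positively correlated with its quantization parameter offset.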
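
Claims 11 and 21 (the device and storage-medium counterparts of claim 1), together with dependent claims 2 to 5 and 9 to 10 and their mirrors (12 to 15 and 19 to 20, 22 to 25 and 29 to 30), describe how a frame's quantization parameter follows from its layer in the group of pictures. The Python sketch below illustrates one possible reading. Every concrete choice in it is an assumption, not something the claims specify: a dyadic hierarchical-B GOP whose last position is the P-frame anchor (layer 0), per-layer offsets derived from a preset quantization-step ratio as 6·log2(ratio), normalising a B-frame reference by subtracting its own layer offset, and weights of 1/distance as one weighting that falls as frame distance grows. All function names are hypothetical. With offsets [0, 1, 2, 3], for example, the frame at position 4 of an 8-frame GOP that references two anchors coded at QP 30 gets QP 31.

```python
import math
from typing import List, Sequence, Tuple


def gop_layer(pos: int, gop_size: int) -> int:
    """Layer of display position `pos` (1..gop_size) in a dyadic GOP (assumed).

    The anchor at pos == gop_size is the P-frame (layer 0); each halving of
    the interval between anchors adds one layer of B-frames.
    """
    if gop_size <= 0 or gop_size & (gop_size - 1):
        raise ValueError("gop_size must be a power of two")
    if not 1 <= pos <= gop_size:
        raise ValueError("pos must be in 1..gop_size")
    trailing_zeros = (pos & -pos).bit_length() - 1
    return gop_size.bit_length() - 1 - trailing_zeros


def layer_offsets(step_ratios: Sequence[float]) -> List[float]:
    """QP offset per layer from a preset Qstep ratio (layer frame : P-frame).

    Qstep doubles every 6 QP, so a ratio r maps to an offset of 6*log2(r).
    Offsets must strictly increase with layer depth (claims 10 and 11).
    """
    if any(r <= 0 for r in step_ratios):
        raise ValueError("step ratios must be positive")
    offsets = [6.0 * math.log2(r) for r in step_ratios]
    if any(b <= a for a, b in zip(offsets, offsets[1:])):
        raise ValueError("offsets must increase with layer depth")
    return offsets


def reference_qp(encoding_qp: float, is_b_frame: bool, ref_layer: int,
                 offsets: Sequence[float]) -> float:
    """Put a reference frame's coded QP on the P-frame scale (claims 4 and 5).

    Assumption: a B-frame reference has its own layer offset removed;
    I- and P-frame references are used as coded.
    """
    return encoding_qp - offsets[ref_layer] if is_b_frame else encoding_qp


def current_qp(pos: int, gop_size: int, refs: Sequence[Tuple[int, float]],
               offsets: Sequence[float]) -> float:
    """Distance-weighted first QP plus the current layer's offset (claims 2 and 3).

    `refs` holds (display position, reference QP on the P-frame scale).
    """
    if not refs:
        raise ValueError("at least one reference frame is required")
    if any(ref_pos == pos for ref_pos, _ in refs):
        raise ValueError("a frame cannot reference itself")
    # Weight = 1 / frame distance: larger distance, smaller weight.
    weights = [1.0 / abs(pos - ref_pos) for ref_pos, _ in refs]
    first_qp = sum(w * qp for w, (_, qp) in zip(weights, refs)) / sum(weights)
    return first_qp + offsets[gop_layer(pos, gop_size)]
```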

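Claims 6 to 8 (mirrored in claims 16 to 18 and 26 to 28) convert the quantization parameter into a quantization step size, feed the bits spent on a B-frame back into the complexity estimate, and use that estimate to set the QP of the next non-B frame. The claims do not fix any formula, so the following Python sketch rests on stated assumptions: the H.264/HEVC-style approximation in which the step size is 1.0 at QP 4 and doubles every 6 QP, bits roughly inversely proportional to step size, exponential smoothing with a hypothetical weight `alpha`, and a hypothetical first-order rate model for the next frame. Under those assumptions, a B-frame that spent 1000 bits at an offset of 6 QP counts as about 2000 P-frame-equivalent bits.

```python
import math
from typing import Optional


def qp_to_qstep(qp: float) -> float:
    """Approximate H.264/HEVC step size: 1.0 at QP 4, doubling every 6 QP."""
    return 2.0 ** ((qp - 4.0) / 6.0)


def complexity_update(b_frame_bits: float, layer_qp_offset: float) -> float:
    """Claim 7: rescale a B-frame's bits to a P-frame-equivalent cost (assumed).

    The B-frame was quantised `layer_qp_offset` QP coarser than a P-frame,
    i.e. with a step size larger by 2**(offset / 6); if bits ~ 1/Qstep,
    multiplying by that factor undoes the offset.
    """
    return b_frame_bits * 2.0 ** (layer_qp_offset / 6.0)


def updated_complexity(forward_complexity: float, update: float,
                       alpha: float = 0.5) -> float:
    """Blend the forward frame's complexity with the new sample (assumed EMA)."""
    if not 0.0 <= alpha <= 1.0:
        raise ValueError("alpha must be in [0, 1]")
    return (1.0 - alpha) * forward_complexity + alpha * update


def next_frame_qp(next_is_b: bool, complexity: float, target_bits: float,
                  p_level_qp: float) -> Optional[float]:
    """Claim 8: only a non-B backward frame gets its QP from the rate model.

    Hypothetical model: bits(qp) = complexity * 2**(-(qp - p_level_qp) / 6),
    with `complexity` in bits at `p_level_qp`; solve bits(qp) == target_bits.
    """
    if next_is_b:
        return None  # B-frames take their QP from the layer offsets instead
    if complexity <= 0 or target_bits <= 0:
        raise ValueError("complexity and target_bits must be positive")
    return p_level_qp + 6.0 * math.log2(complexity / target_bits)
```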
Priority Applications (4)

| Application Number | Priority Date | Filing Date | Title |

|---|---|---|---|

| JP2019563158A JP6906637B2 (ja) | 2017-11-21 | 2018-06-25 | Video coding method, computer device, and computer program |

| EP18880220.1A EP3716624A4 (en) | 2017-11-21 | 2018-06-25 | VIDEO CODING PROCESS, COMPUTER DEVICE, AND STORAGE MEDIA |

| KR1020197035254A KR102263979B1 (ko) | 2017-11-21 | 2018-06-25 | Video encoding method, computer device, and storage medium |

| US16/450,705 US10944970B2 (en) | 2017-11-21 | 2019-06-24 | Video coding method, computer device, and storage medium |

Applications Claiming Priority (2)

| Application Number | Priority Date | Filing Date | Title |

|---|---|---|---|

| CN201711166195.7 | 2017-11-21 | | |

| CN201711166195.7A CN109819253B (zh) | 2017-11-21 | 2017-11-21 | Video encoding method and apparatus, computer device, and storage medium |

Related Child Applications (1)

| Application Number | Title | Priority Date | Filing Date |

|---|---|---|---|

| US16/450,705 Continuation US10944970B2 (en) | 2017-11-21 | 2019-06-24 | Video coding method, computer device, and storage medium |

Publications (1)

| Publication Number | Publication Date |

|---|---|

| WO2019100712A1 true WO2019100712A1 (zh) | 2019-05-31 |

Family

ID=66600324

Family Applications (1)

| Application Number | Title | Priority Date | Filing Date |

|---|---|---|---|

| PCT/CN2018/092688 WO2019100712A1 (zh) | 2017-11-21 | 2018-06-25 | Video encoding method, computer device, and storage medium |

Country Status (7)

| Country | Link |

|---|---|

| US (1) | US10944970B2 (zh) |

| EP (1) | EP3716624A4 (zh) |

| JP (1) | JP6906637B2 (zh) |

| KR (1) | KR102263979B1 (zh) |

| CN (1) | CN109819253B (zh) |

| MA (1) | MA50876A (zh) |

| WO (1) | WO2019100712A1 (zh) |

Cited By (1)

| Publication number | Priority date | Publication date | Assignee | Title |

|---|---|---|---|---|

| JP2019193182A (ja) * | 2018-04-27 | 2019-10-31 | 富士通株式会社 | Encoding device, encoding method, and encoding program |

Families Citing this family (7)

| Publication number | Priority date | Publication date | Assignee | Title |

|---|---|---|---|---|

| CN110536134B (zh) * | 2019-09-27 | 2022-11-04 | 腾讯科技(深圳)有限公司 | Video encoding and decoding methods and apparatuses, storage medium, and electronic apparatus |

| CN111988611B (zh) * | 2020-07-24 | 2024-03-05 | 北京达佳互联信息技术有限公司 | Method for determining quantization offset information, image encoding method, apparatus, and electronic device |

| CN112165620A (zh) * | 2020-09-24 | 2021-01-01 | 北京金山云网络技术有限公司 | Video encoding method and apparatus, storage medium, and electronic device |

| CN112165618B (zh) * | 2020-09-27 | 2022-08-16 | 北京金山云网络技术有限公司 | Video encoding method, apparatus, device, and computer-readable storage medium |

| CN112203096A (zh) * | 2020-09-30 | 2021-01-08 | 北京金山云网络技术有限公司 | Video encoding method and apparatus, computer device, and storage medium |

| WO2023050072A1 (en) * | 2021-09-28 | 2023-04-06 | Guangdong Oppo Mobile Telecommunications Corp., Ltd. | Methods and systems for video compression |

| CN113938689B (zh) * | 2021-12-03 | 2023-09-26 | 北京达佳互联信息技术有限公司 | Quantization parameter determination method and apparatus |

Citations (5)

| Publication number | Priority date | Publication date | Assignee | Title |

|---|---|---|---|---|

| CN102577379A (zh) * | 2009-10-05 | 2012-07-11 | 汤姆逊许可证公司 | Methods and apparatus for embedded quantization parameter adjustment in video encoding and decoding |

| CN102714725A (zh) * | 2010-01-06 | 2012-10-03 | 杜比实验室特许公司 | High-performance rate control for multi-layer video coding applications |

| GB2499874A (en) * | 2012-03-02 | 2013-09-04 | Canon Kk | Scalable video coding methods |

| CN104871539A (zh) * | 2012-12-18 | 2015-08-26 | 索尼公司 | Image processing device and image processing method |

| CN104954793A (zh) * | 2015-06-18 | 2015-09-30 | 电子科技大学 | GOP-level QP-Offset setting method |

Family Cites Families (17)

| Publication number | Priority date | Publication date | Assignee | Title |

|---|---|---|---|---|

| US8135063B2 (en) * | 2006-09-08 | 2012-03-13 | Mediatek Inc. | Rate control method with frame-layer bit allocation and video encoder |

| US8331438B2 (en) * | 2007-06-05 | 2012-12-11 | Microsoft Corporation | Adaptive selection of picture-level quantization parameters for predicted video pictures |

| CN100562118C (zh) * | 2007-07-03 | 2009-11-18 | 上海富瀚微电子有限公司 | Rate control method for video encoding |

| CN101917614B (zh) * | 2010-06-03 | 2012-07-04 | 北京邮电大学 | Rate control method based on the H.264 hierarchical B-frame coding structure |

| KR20120096863A (ko) * | 2011-02-23 | 2012-08-31 | 한국전자통신연구원 | Bit rate control technique for the hierarchical coding structure of high efficiency video coding |

| US10298939B2 (en) * | 2011-06-22 | 2019-05-21 | Qualcomm Incorporated | Quantization in video coding |

| CN102420987A (zh) * | 2011-12-01 | 2012-04-18 | 上海大学 | Adaptive bit allocation method for rate control based on a hierarchical B-frame structure |

| CN105519108B (zh) * | 2012-01-09 | 2019-11-29 | 华为技术有限公司 | Weighted prediction method and apparatus for quantization matrix coding |

| GB2506594B (en) * | 2012-09-28 | 2016-08-17 | Canon Kk | Method and devices for encoding an image of pixels into a video bitstream and decoding the corresponding video bitstream |

| US9978156B2 (en) * | 2012-10-03 | 2018-05-22 | Avago Technologies General Ip (Singapore) Pte. Ltd. | High-throughput image and video compression |

| US9510002B2 (en) * | 2013-09-09 | 2016-11-29 | Apple Inc. | Chroma quantization in video coding |

| KR101789954B1 (ko) * | 2013-12-27 | 2017-10-25 | 인텔 코포레이션 | Content adaptive gain compensated prediction for next generation video coding |

| US9661329B2 (en) * | 2014-04-30 | 2017-05-23 | Intel Corporation | Constant quality video coding |

| US10397574B2 (en) * | 2014-05-12 | 2019-08-27 | Intel Corporation | Video coding quantization parameter determination suitable for video conferencing |

| CN105872545B (zh) * | 2016-04-19 | 2019-03-29 | 电子科技大学 | Hierarchical temporal rate-distortion optimization method for random-access video coding |

| CN106961603B (zh) * | 2017-03-07 | 2018-06-15 | 腾讯科技(深圳)有限公司 | Bit rate allocation method and apparatus for intra-coded frames |

| CN107197251B (zh) * | 2017-06-27 | 2019-08-13 | 中南大学 | Fast inter-mode selection method and apparatus based on hierarchical B-frames for a new video coding standard |

2017

- 2017-11-21 CN CN201711166195.7A patent/CN109819253B/zh active Active

2018

- 2018-06-25 EP EP18880220.1A patent/EP3716624A4/en active Pending

- 2018-06-25 KR KR1020197035254A patent/KR102263979B1/ko active IP Right Grant

- 2018-06-25 MA MA050876A patent/MA50876A/fr unknown

- 2018-06-25 WO PCT/CN2018/092688 patent/WO2019100712A1/zh unknown

- 2018-06-25 JP JP2019563158A patent/JP6906637B2/ja active Active

2019

- 2019-06-24 US US16/450,705 patent/US10944970B2/en active Active

Non-Patent Citations (1)

| Title |

|---|

| See also references of EP3716624A4 |

Cited By (3)

| Publication number | Priority date | Publication date | Assignee | Title |

|---|---|---|---|---|

| JP2019193182A (ja) * | 2018-04-27 | 2019-10-31 | 富士通株式会社 | Encoding device, encoding method, and encoding program |

| JP7125594B2 (ja) | 2018-04-27 | 2022-08-25 | 富士通株式会社 | Encoding device, encoding method, and encoding program |

| JP7343817B2 (ja) | 2018-04-27 | 2023-09-13 | 富士通株式会社 | Encoding device, encoding method, and encoding program |

Also Published As

| Publication number | Publication date |

|---|---|

| JP6906637B2 (ja) | 2021-07-21 |

| KR102263979B1 (ko) | 2021-06-10 |

| US10944970B2 (en) | 2021-03-09 |

| CN109819253A (zh) | 2019-05-28 |

| KR20200002032A (ko) | 2020-01-07 |

| JP2020520197A (ja) | 2020-07-02 |

| EP3716624A1 (en) | 2020-09-30 |

| US20190320175A1 (en) | 2019-10-17 |

| EP3716624A4 (en) | 2021-06-09 |

| MA50876A (fr) | 2020-09-30 |

| CN109819253B (zh) | 2022-04-22 |

Similar Documents

| Publication | Publication Date | Title |

|---|---|---|

| WO2019100712A1 (zh) | | Video encoding method, computer device, and storage medium |

| US10827182B2 (en) | | Video encoding processing method, computer device and storage medium |

| US11563974B2 (en) | | Method and apparatus for video decoding |

| US11412229B2 (en) | | Method and apparatus for video encoding and decoding |

| WO2018161868A1 (zh) | | Bit rate allocation method for intra-coded frames, computer device, and storage medium |

| US11206405B2 (en) | | Video encoding method and apparatus, video decoding method and apparatus, computer device, and storage medium |

| US11172220B2 (en) | | Video encoding method, and storage medium thereof |

| US11070817B2 (en) | | Video encoding method, computer device, and storage medium for determining skip status |

| CN110166771B (zh) | | Video encoding method and apparatus, computer device, and storage medium |

| CN102113328B (zh) | | Method and system for determining a metric for comparing image blocks in motion-compensated video coding |

| WO2018161845A1 (zh) | | Bit rate allocation for video encoding, coding-unit bit rate allocation method, and computer device |

| US11949879B2 (en) | | Video coding method and apparatus, computer device, and storage medium |

| US20150172680A1 (en) | | Producing an Output Need Parameter for an Encoder |

| JPWO2008111454A1 (ja) | | Quantization control method and apparatus, program therefor, and recording medium storing the program |

| JP7431752B2 (ja) | | Video encoding and video decoding methods, apparatus, computer device, and computer program |

| US11303916B2 (en) | | Motion compensation techniques for video |

| WO2022021422A1 (zh) | | Video encoding method, encoder, system, and computer storage medium |

| TWI510050B (zh) | | Quantization control device and method, and quantization control program |

| KR101035746B1 (ko) | | Distributed motion prediction method in a video encoder and a video decoder |

| Mys et al. | | Decoder-driven mode decision in a block-based distributed video codec |

| TWI543587B (zh) | | Rate control method and system |

| KR20130032807A (ko) | | Video encoding apparatus and method |

| US20130243084A1 (en) | | Bit rate regulation module and method for regulating bit rate |

| CN115190309B (zh) | | Video frame processing method, training method, apparatus, device, and storage medium |

| US20240129473A1 (en) | | Probability estimation in multi-symbol entropy coding |

Legal Events

| Date | Code | Title | Description |

|---|---|---|---|

| | 121 | EP: the EPO has been informed by WIPO that EP was designated in this application | Ref document number: 18880220; Country of ref document: EP; Kind code of ref document: A1 |

| | ENP | Entry into the national phase | Ref document number: 2019563158; Country of ref document: JP; Kind code of ref document: A |

| | ENP | Entry into the national phase | Ref document number: 20197035254; Country of ref document: KR; Kind code of ref document: A |

| | NENP | Non-entry into the national phase | Ref country code: DE |

| | ENP | Entry into the national phase | Ref document number: 2018880220; Country of ref document: EP; Effective date: 20200622 |