WO2016035283A1 - Information processing apparatus, control method, and program - Google Patents

Information processing apparatus, control method, and program

- Publication number

- WO2016035283A1 (application PCT/JP2015/004276)

- Authority

- WO

- WIPO (PCT)

- Prior art keywords

- virtual object

- information processing

- processing apparatus

- display

- content item


Classifications

-

- G—PHYSICS

- G06—COMPUTING; CALCULATING OR COUNTING

- G06F—ELECTRIC DIGITAL DATA PROCESSING

- G06F3/00—Input arrangements for transferring data to be processed into a form capable of being handled by the computer; Output arrangements for transferring data from processing unit to output unit, e.g. interface arrangements

- G06F3/01—Input arrangements or combined input and output arrangements for interaction between user and computer

- G06F3/03—Arrangements for converting the position or the displacement of a member into a coded form

- G06F3/041—Digitisers, e.g. for touch screens or touch pads, characterised by the transducing means

- G06F3/042—Digitisers, e.g. for touch screens or touch pads, characterised by the transducing means by opto-electronic means

- G06F3/0425—Digitisers, e.g. for touch screens or touch pads, characterised by the transducing means by opto-electronic means using a single imaging device like a video camera for tracking the absolute position of a single or a plurality of objects with respect to an imaged reference surface, e.g. video camera imaging a display or a projection screen, a table or a wall surface, on which a computer generated image is displayed or projected

-

- G—PHYSICS

- G06—COMPUTING; CALCULATING OR COUNTING

- G06F—ELECTRIC DIGITAL DATA PROCESSING

- G06F3/00—Input arrangements for transferring data to be processed into a form capable of being handled by the computer; Output arrangements for transferring data from processing unit to output unit, e.g. interface arrangements

- G06F3/01—Input arrangements or combined input and output arrangements for interaction between user and computer

- G06F3/011—Arrangements for interaction with the human body, e.g. for user immersion in virtual reality

-

- G—PHYSICS

- G06—COMPUTING; CALCULATING OR COUNTING

- G06F—ELECTRIC DIGITAL DATA PROCESSING

- G06F3/00—Input arrangements for transferring data to be processed into a form capable of being handled by the computer; Output arrangements for transferring data from processing unit to output unit, e.g. interface arrangements

- G06F3/01—Input arrangements or combined input and output arrangements for interaction between user and computer

- G06F3/03—Arrangements for converting the position or the displacement of a member into a coded form

-

- G—PHYSICS

- G06—COMPUTING; CALCULATING OR COUNTING

- G06F—ELECTRIC DIGITAL DATA PROCESSING

- G06F3/00—Input arrangements for transferring data to be processed into a form capable of being handled by the computer; Output arrangements for transferring data from processing unit to output unit, e.g. interface arrangements

- G06F3/01—Input arrangements or combined input and output arrangements for interaction between user and computer

- G06F3/03—Arrangements for converting the position or the displacement of a member into a coded form

- G06F3/0304—Detection arrangements using opto-electronic means

-

- G—PHYSICS

- G06—COMPUTING; CALCULATING OR COUNTING

- G06F—ELECTRIC DIGITAL DATA PROCESSING

- G06F3/00—Input arrangements for transferring data to be processed into a form capable of being handled by the computer; Output arrangements for transferring data from processing unit to output unit, e.g. interface arrangements

- G06F3/01—Input arrangements or combined input and output arrangements for interaction between user and computer

- G06F3/048—Interaction techniques based on graphical user interfaces [GUI]

- G06F3/0481—Interaction techniques based on graphical user interfaces [GUI] based on specific properties of the displayed interaction object or a metaphor-based environment, e.g. interaction with desktop elements like windows or icons, or assisted by a cursor's changing behaviour or appearance

- G06F3/04812—Interaction techniques based on cursor appearance or behaviour, e.g. being affected by the presence of displayed objects

-

- G—PHYSICS

- G06—COMPUTING; CALCULATING OR COUNTING

- G06F—ELECTRIC DIGITAL DATA PROCESSING

- G06F3/00—Input arrangements for transferring data to be processed into a form capable of being handled by the computer; Output arrangements for transferring data from processing unit to output unit, e.g. interface arrangements

- G06F3/01—Input arrangements or combined input and output arrangements for interaction between user and computer

- G06F3/048—Interaction techniques based on graphical user interfaces [GUI]

- G06F3/0481—Interaction techniques based on graphical user interfaces [GUI] based on specific properties of the displayed interaction object or a metaphor-based environment, e.g. interaction with desktop elements like windows or icons, or assisted by a cursor's changing behaviour or appearance

- G06F3/04815—Interaction with a metaphor-based environment or interaction object displayed as three-dimensional, e.g. changing the user viewpoint with respect to the environment or object

-

- G—PHYSICS

- G06—COMPUTING; CALCULATING OR COUNTING

- G06F—ELECTRIC DIGITAL DATA PROCESSING

- G06F3/00—Input arrangements for transferring data to be processed into a form capable of being handled by the computer; Output arrangements for transferring data from processing unit to output unit, e.g. interface arrangements

- G06F3/01—Input arrangements or combined input and output arrangements for interaction between user and computer

- G06F3/048—Interaction techniques based on graphical user interfaces [GUI]

- G06F3/0487—Interaction techniques based on graphical user interfaces [GUI] using specific features provided by the input device, e.g. functions controlled by the rotation of a mouse with dual sensing arrangements, or of the nature of the input device, e.g. tap gestures based on pressure sensed by a digitiser

- G06F3/0488—Interaction techniques based on graphical user interfaces [GUI] using specific features provided by the input device, e.g. functions controlled by the rotation of a mouse with dual sensing arrangements, or of the nature of the input device, e.g. tap gestures based on pressure sensed by a digitiser using a touch-screen or digitiser, e.g. input of commands through traced gestures

- G06F3/04883—Interaction techniques based on graphical user interfaces [GUI] using specific features provided by the input device, e.g. functions controlled by the rotation of a mouse with dual sensing arrangements, or of the nature of the input device, e.g. tap gestures based on pressure sensed by a digitiser using a touch-screen or digitiser, e.g. input of commands through traced gestures for inputting data by handwriting, e.g. gesture or text

-

- G—PHYSICS

- G06—COMPUTING; CALCULATING OR COUNTING

- G06F—ELECTRIC DIGITAL DATA PROCESSING

- G06F1/00—Details not covered by groups G06F3/00 - G06F13/00 and G06F21/00

- G06F1/16—Constructional details or arrangements

- G06F1/1613—Constructional details or arrangements for portable computers

- G06F1/1633—Constructional details or arrangements of portable computers not specific to the type of enclosures covered by groups G06F1/1615 - G06F1/1626

- G06F1/1637—Details related to the display arrangement, including those related to the mounting of the display in the housing

- G06F1/1639—Details related to the display arrangement, including those related to the mounting of the display in the housing the display being based on projection

-

- G—PHYSICS

- G06—COMPUTING; CALCULATING OR COUNTING

- G06F—ELECTRIC DIGITAL DATA PROCESSING

- G06F2203/00—Indexing scheme relating to G06F3/00 - G06F3/048

- G06F2203/048—Indexing scheme relating to G06F3/048

- G06F2203/04803—Split screen, i.e. subdividing the display area or the window area into separate subareas

-

- G—PHYSICS

- G06—COMPUTING; CALCULATING OR COUNTING

- G06F—ELECTRIC DIGITAL DATA PROCESSING

- G06F2203/00—Indexing scheme relating to G06F3/00 - G06F3/048

- G06F2203/048—Indexing scheme relating to G06F3/048

- G06F2203/04804—Transparency, e.g. transparent or translucent windows

Definitions

- the present disclosure relates to an information processing apparatus, a control method, and a program.

- Devices for displaying various types of information by an operation on a touch panel provided in a smartphone, tablet terminal, and other devices are widely used.

- A tablet terminal with a large screen makes it feasible to consider simultaneous operation by multiple users.

- PTL 1 discloses a technique for detecting the moving direction and speed of an icon operated by a touch pen or the like, and for shifting the icon further by a predetermined distance in the moving direction when a moving speed at the time of removing the touch pen or the like from the icon is greater than or equal to a predetermined speed.
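
The behavior attributed to PTL 1 above can be sketched as follows. This is only an illustration under stated assumptions: the `Release` type, the function name, and the default values for `speed_threshold` and `extra_distance` are hypothetical and do not come from PTL 1 or from this disclosure.

```python
from dataclasses import dataclass


@dataclass
class Release:
    """State of an icon at the moment the touch pen leaves it (hypothetical type)."""
    x: float
    y: float
    dir_x: float  # unit vector of the moving direction
    dir_y: float
    speed: float  # moving speed at release, in pixels per second


def position_after_release(rel, speed_threshold=200.0, extra_distance=50.0):
    """Shift the icon further by a fixed distance if it was released fast enough.

    Both default values are assumptions chosen for illustration only.
    """
    if rel.speed >= speed_threshold:
        return (rel.x + rel.dir_x * extra_distance,
                rel.y + rel.dir_y * extra_distance)
    # Released too slowly: the icon stays where it was dropped.
    return (rel.x, rel.y)
```

With these assumed defaults, an icon released at 250 pixels per second while moving along the x axis is shifted a further 50 pixels, whereas one released at 100 pixels per second stays in place.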

- Examples of a large-screen display device designed to allow multiple users to operate or view it simultaneously include a projector and a wall-mounted display.

- A display system that makes it possible for multiple users to perform simultaneous operations or viewing by projecting an image onto a projection surface other than a screen, such as a tabletop, a ceiling, or a wall, has been widely used.

- Such a display system may detect an operation on displayed information (hereinafter referred to as "virtual object") using an imaging device or a touch panel, and may control display thereof depending on an operation input.

- The present disclosure provides an information processing apparatus, control method, and program capable of displaying information in a more appropriate and efficient manner by controlling movement of a displayed virtual object depending on a positional relationship between the virtual object and a real object.

- an information processing apparatus including circuitry configured to initiate display of a virtual object, based on a gesture operation, starting from a point of origin and moving towards a target point; and continue to display the virtual object in display motion after the gesture operation, wherein a path of travel of the virtual object or a display characteristic of the virtual object is determined based on a positional relationship between the virtual object and another object that is a real object located in proximity to the path of travel of the virtual object.

- an information processing method including displaying a virtual object, based on a gesture operation, starting from a point of origin and moving towards a target point; and continuing to display the virtual object in display motion after the gesture operation, wherein a path of travel of the virtual object or a display characteristic of the virtual object is determined based on a positional relationship between the virtual object and another object that is a real object located in proximity to the path of travel of the virtual object.

- a non-transitory computer-readable medium having embodied thereon a program, which when executed by a computer causes the computer to execute an information processing method, the method including displaying a virtual object, based on a gesture operation, starting from a point of origin and moving towards a target point; and continuing to display the virtual object in display motion after the gesture operation, wherein a path of travel of the virtual object or a display characteristic of the virtual object is determined based on a positional relationship between the virtual object and another object that is a real object located in proximity to the path of travel of the virtual object.

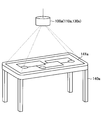

- FIG. 1 is a diagram illustrated to describe an exemplary configuration of an information processing system according to an embodiment of the present disclosure.

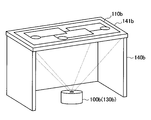

- FIG. 2 is a diagram illustrated to describe another exemplary configuration of the information processing system according to an embodiment of the present disclosure.

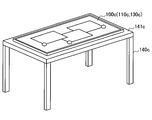

- FIG. 3 is a diagram illustrated to describe another exemplary configuration of the information processing system according to an embodiment of the present disclosure.

- FIG. 4 is a diagram illustrating an example of content to be displayed.

- FIG. 5 is a diagram illustrating an example of transferring and displaying a content item from a smartphone.

- FIG. 6 is a diagram illustrating an example of transferring and displaying a content item from a smartphone.

- FIG. 7 is a diagram illustrating an example of transferring and displaying a content item from a smartphone.

- FIG. 8 is a diagram illustrating an example of operating a transferred content item.

- FIG. 9 is a diagram illustrating an example of operating the movement of a transferred content item.

- FIG. 10 is a diagram illustrating an example of operating the movement of a transferred content item.

- FIG. 11 is a diagram illustrating an example of operating the movement of a transferred content item.

- FIG. 12 is a diagram illustrating an example of operating a transferred content item.

- FIG. 13 is a diagram illustrated to describe an exemplary configuration of an information processing apparatus according to an embodiment of the present disclosure.

- FIG. 14 is a flowchart illustrating a display control process performed by the information processing system according to an embodiment of the present disclosure.

- FIG. 15 is a diagram illustrated to describe a first start condition of the information processing system according to an embodiment of the present disclosure.

- FIG. 16 is a diagram illustrated to describe a second start condition of the information processing system according to an embodiment of the present disclosure.

- FIG. 17 is a diagram illustrated to describe how to acquire a first slide route according to an embodiment.

- FIG. 18 is a diagram illustrated to describe how to acquire a second slide route according to an embodiment.

- FIG. 19 is a diagram illustrated to describe how to search a route that circumvents an object in acquiring a second slide route according to an embodiment.

- FIG. 20 is a diagram illustrated to describe how to search a route that circumvents an object in acquiring a second slide route according to an embodiment.

- FIG. 21 is a diagram illustrated to describe how to search a route that circumvents an object in acquiring a second slide route according to an embodiment.

- FIG. 22 is a diagram illustrated to describe how to search a route that circumvents an object in acquiring a second slide route according to an embodiment.

- FIG. 23 is a diagram illustrated to describe how to acquire a first slide speed according to an embodiment.

- FIG. 24 is a diagram illustrated to describe how to acquire a first slide speed according to an embodiment.

- FIG. 25 is a diagram illustrated to describe advantageous effects obtained by the slide speed control depending on the capacity of content according to an embodiment.

- FIG. 26 is a diagram illustrated to describe a first object proximity operation according to an embodiment.

- FIG. 27 is a diagram illustrated to describe a second object proximity operation according to an embodiment.

- FIG. 28 is a diagram illustrated to describe a third object proximity operation according to an embodiment.

- FIG. 29 is a diagram illustrated to describe a fourth object proximity operation according to an embodiment.

- FIG. 30 is a diagram illustrated to describe a first termination condition according to an embodiment.

- FIG. 31 is a diagram illustrated to describe a second termination condition according to an embodiment.

- FIG. 32 is a diagram illustrated to describe a first termination process according to an embodiment.

- FIG. 33 is a diagram illustrated to describe another example of the first termination process according to an embodiment.

- FIG. 34 is a diagram illustrated to describe a second termination process according to an embodiment.

- FIG. 35 is a diagram illustrated to describe another example of the second termination process according to an embodiment.

- FIG. 36 is a diagram illustrated to describe a third termination process according to an embodiment.

- FIG. 37 is a diagram illustrated to describe another example of the third termination process according to an embodiment.

- FIG. 38 is a diagram illustrated to describe how to disable the event for a content item during sliding.

- FIG. 39 is a diagram illustrated to describe how to disable the event for a content item during sliding.

- FIG. 40 is a diagram illustrated to describe a supplement to data transfer of the information processing system according

- An exemplary configuration of an information processing system according to an embodiment of the present disclosure will be described with reference to FIG. 1.

- the information processing system is configured to include an information processing apparatus 100a having an input function and an output function.

- the information processing apparatus 100a displays information on the top surface 141a (tabletop) of a table 140a and allows the user to operate the information displayed on the top surface 141a.

- a system of displaying information on the top surface 141a of the table 140a as shown in FIG. 1 is also referred to as "projection type" hereinafter.

- the information processing apparatus 100a is provided over the table 140a, for example, in a suspended state from a ceiling. In other words, the information processing apparatus 100a is spaced apart from the table 140a on which information is displayed.

- the information processing apparatus 100a has an input function (input unit 110a) for inputting particulars of an operation by the user on information displayed on the top surface 141a (tabletop), the shape of an object being placed on the table 140a, or other details.

- Examples of the input unit 110a of the information processing apparatus 100a include a camera for capturing the table 140a with one lens, a stereo camera capable of capturing the table 140a with two lenses and recording depth information, and a microphone for picking up sound from the user's mouth and the surrounding environmental sound (simply referred to as "microphone").

- the information processing apparatus 100a is allowed to analyze an image captured using the camera to detect an object placed on the table 140a.

- the stereo camera may include a visible light camera and an infrared camera.

- the information processing apparatus 100a is allowed to acquire depth information. The acquired depth information allows the information processing apparatus 100a to detect a person's finger or an object placed on the table 140a by distinguishing it from a pattern of the top surface or a tablecloth.

- the acquired depth information also allows the information processing apparatus 100a to detect a user's hand being in contact with or in proximity to the top surface 141a of the table 140a or to detect the removal of the hand from the top surface 141a.

- Contact of an operating body of the user, such as a finger, with a surface on which information is displayed (here, the top surface 141a) and proximity of the operating body, such as a hand, to that surface are collectively referred to as "contact".
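
As a rough illustration of how depth information could separate touch, proximity, and removal of the hand, the following sketch classifies a fingertip by its height above the top surface. The function names and the two thresholds (`touch_max_mm`, `proximity_max_mm`) are assumptions made for this example; the disclosure does not specify them.

```python
def classify_hand_state(height_mm, touch_max_mm=10.0, proximity_max_mm=40.0):
    """Classify a fingertip by its height above the display surface.

    The thresholds are illustrative assumptions, not values from the disclosure.
    """
    if height_mm <= touch_max_mm:
        return "touch"
    if height_mm <= proximity_max_mm:
        return "proximity"
    return "away"


def is_contact(surface_depth_mm, fingertip_depth_mm):
    """Return True for both touch and proximity, which the text calls "contact".

    Depths are distances from an overhead camera, so the height of the
    fingertip above the surface is the difference between the two depths.
    """
    height_mm = surface_depth_mm - fingertip_depth_mm
    return classify_hand_state(height_mm) in ("touch", "proximity")
```

Treating touch and proximity as a single "contact" state mirrors the terminology used above, while the separate "away" state corresponds to the removal of the hand from the surface.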

- a microphone array may be used to pick up sound in a particular direction.

- The information processing apparatus 100a may adjust the sound pickup direction of the microphone array to any direction.

- the operation performed by the user may be detected using a touch panel that is provided on the top surface 141a of the table 140a and is used to detect a contact with the user's finger or the like.

- the user's operation may include an operation using a stylus on a surface on which information is displayed and a gesture operation to the camera, in addition to that described above.

- the information processing apparatus 100a has an output function (output unit 130a) for displaying information on the top surface 141a (tabletop) of the table 140a or outputting sound.

- Examples of the output unit 130a include a projector and a loudspeaker.

- the output unit 130a projects information onto the top surface 141a of the table 140a.

- the output unit 130a outputs sound based on a sound signal.

- One or more loudspeakers may be provided.

- the information processing apparatus 100a is allowed to define which loudspeaker is used to output sound or adjust the sound output direction.

- the output unit 130a may be provided with lighting equipment.

- The information processing apparatus 100a is allowed to control the on/off state of the lighting equipment based on the contents of information inputted by the input unit 110a.

- the user who uses the information processing system as shown in FIG. 1 is allowed to perform an operation on the information displayed on the top surface 141a of the table 140a by means of the output function (output unit 130a) of the information processing apparatus 100a, with the user's finger placed on the top surface 141a.

- The information processing apparatus 100a is allowed to be connected to other devices, which are not shown in FIG. 1.

- the information processing apparatus 100a is allowed to be connected to a mobile device carried by the user, such as a smartphone and a tablet terminal, directly by a wireless connection, or through a server or the like over a network.

- FIGS. 2 and 3 are diagrams illustrated to describe another example of the information processing system according to an embodiment of the present disclosure.

- FIG. 2 is a diagram illustrated to describe another exemplary configuration of the information processing system according to an embodiment of the present disclosure.

- an information processing apparatus 100b may project and display information from under a table 140b onto a top surface 141b of the table 140b.

- the information processing apparatus 100b includes an output unit 130b that is implemented as a projector.

- The top surface 141b of the table 140b, which is used as the surface on which information is displayed, is formed of a transparent material such as a glass plate or a transparent plastic plate.

- a system of projecting information from under the table 140b and displaying information on the top surface 141b as shown in FIG. 2 is also referred to as "rear projection type" hereinafter.

- the table 140b may include an input unit 110b provided on the top surface 141b.

- the input unit 110b is implemented by a touch panel and detects the user's operation on information displayed on the top surface 141b.

- the input unit 110b is not limited to the configuration in which it is provided on the top surface 141b.

- When the input unit 110b is implemented as a camera, the input unit 110b may be placed under the table 140b and spaced apart from it, and may be incorporated into the information processing apparatus 100b.

- FIG. 3 is a diagram illustrated to describe another exemplary configuration of the information processing system according to an embodiment of the present disclosure.

- FIG. 3 illustrates an information processing apparatus 100c that is implemented by a touch panel display and is placed on a top surface 141c of a table 140c.

- an input unit 110c and an output unit 130c may be incorporated into the touch panel display.

- a surface on which information is displayed is the touch panel display.

- a system of displaying information using the touch panel display placed on the top surface 141c as shown in FIG. 3 is also referred to as "flat arrangement display type".

- the information processing system shown in FIG. 3 includes a camera provided over the touch panel display for detecting the user's position.

- The following description takes as an example the information processing apparatus 100a provided with the input unit 110a and the output unit 130a over the table 140a, that is, the configuration in which the input unit 110a and the output unit 130a are spaced apart from the surface on which information is displayed, as shown in FIG. 1.

- The information processing apparatus 100a, the input unit 110a, the output unit 130a, the table 140a, and the top surface 141a are referred to simply as the information processing apparatus 100, the input unit 110, the output unit 130, the table 140, and the top surface 141, respectively.

- the information processing apparatus 100 displays various types of information on the top surface 141 of the table 140 depending on the user's operation.

- Information to be displayed is acquired from a local storage of the information processing apparatus 100 or an external storage of an external device (smartphones, tablet terminals, servers, etc.).

- FIG. 4 is a diagram illustrating an example of content to be displayed. As shown in FIG. 4, various types of information capable of being operated by multiple users are displayed on the top surface 141. In the example shown in FIG. 4, a music video application window 20, a launcher view 22, and a music application window 23 are displayed in proximity to the corresponding individual users. The launcher view 22 is displayed in response to a contact operation on an icon 21.

- Information outputted and displayed by the information processing apparatus 100 is not limited to the example shown in FIG. 4.

- Information including, but not limited to, a web browser, timer application, note application, calculator application, calendar application, media files (e.g., moving image file, music file, photograph file), and image data may be displayed.

- FIG. 4 describes the case in which an icon to be used is selected from the launcher view 22 and then the selected icon is expanded and displayed on the table.

- Content transferred from an information processing apparatus placed on the table, such as a smartphone, may also be expanded and displayed on the table.

- FIGS. 5 to 7 are diagrams illustrating an example of transferring and displaying content from a smartphone.

- FIG. 8 is a diagram illustrating an example of operating the transferred content.

- FIG. 9 is a diagram illustrating an example of operating the movement of the transferred content.

- FIGS. 10 and 11 are diagrams illustrating an example of controlling the movement of the transferred content.

- FIG. 12 is a diagram illustrating an example of operating the transferred content.

- the user places a smartphone 1 in a predetermined region S1 on the top surface 141 of the table 140.

- Because the region S1 is predetermined, the information processing apparatus 100 placed over the top surface 141 does not need to recognize the smartphone 1 itself.

- The information processing apparatus 100 fixes the position of the region S1 corresponding to each seat at the table 140 without displaying the region S1 on the top surface 141, so the users only need to place their smartphones 1 on the top surface 141 in front of their respective seats.
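
One way to picture fixed per-seat regions is a lookup table of rectangles on the top surface. The sketch below is illustrative only: the `Region` type, the seat names, and every coordinate are hypothetical values, not taken from the disclosure.

```python
from typing import NamedTuple, Optional


class Region(NamedTuple):
    """Axis-aligned rectangle on the top surface, in display coordinates."""
    x: float
    y: float
    width: float
    height: float

    def contains(self, px, py):
        return (self.x <= px <= self.x + self.width
                and self.y <= py <= self.y + self.height)


# Hypothetical fixed placement regions (S1), one per seat at the table.
SEAT_REGIONS = {
    "seat_1": Region(100.0, 50.0, 200.0, 120.0),
    "seat_2": Region(700.0, 50.0, 200.0, 120.0),
}


def seat_for_position(px, py, regions=SEAT_REGIONS) -> Optional[str]:
    """Return the seat whose fixed region contains the point, if any."""
    for seat, region in regions.items():
        if region.contains(px, py):
            return seat
    return None
```

Because the regions are fixed in advance, the apparatus only has to test a position against this table and does not have to identify the device that was placed there.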

- the information processing apparatus 100 may display the region S1 on the top surface 141 to prompt the user to place the information processing apparatus such as the smartphone 1 in the region S1 by means of displaying or sound output.

- the information processing apparatus 100 may allow the user to tap a region near the smartphone 1 without displaying the region S2 on the top surface 141.

- The information processing apparatus 100 may display the region S2 on the top surface 141 to prompt the user to tap the region S2 by means of displaying or sound output.

- When it recognizes that the region S2 has been tapped, the information processing apparatus 100 connects to the smartphone 1 placed in the region S1.

- the information processing apparatus 100 then extracts (receives) content from a local storage of the smartphone 1 and projects the content on the top surface 141 for display.

- the information processing apparatus 100 controls display of content in such a way that content is popped out from the smartphone 1 into the top surface 141 as shown in FIG. 7.

- a range to be projected (display region) onto the top surface 141 may be set as a range shown by a region S3, as an example.

- Examples of the content to be extracted include a plurality of photograph content items captured/browsed in close proximity and a plurality of music content items acquired/viewed in close proximity.

- the information processing apparatus 100 controls a plurality of photograph content items of the extracted content items to be displayed on the left side of the smartphone 1 when viewed from the user and controls a plurality of music content items (jacket photograph image) to be displayed on the left side.

- The information processing apparatus 100 displays the plurality of content items overlapping one another in such a way that at least a portion of each image remains visible, as shown in FIG. 7.

- the user is allowed to operate each of the content items displayed on the top surface 141 with the user's finger or the like in contact with the top surface 141.

- the selected music content item 25 is reproduced.

- The information processing apparatus 100 recognizes a tap operation on the music content item 25 with the finger or the like and controls the selected music content item 25 to be outputted from a loudspeaker (including a loudspeaker provided in the information processing apparatus 100, a loudspeaker provided on the table 140, and a loudspeaker provided in its vicinity). This makes it possible for the user to play the selected music while eating at the table 140.

- The user can also pass a photograph content item on to another user. For example, when there is a photograph content item 26 that a user wants to show to an opposite user seated across the table 140, the user drags the photograph content item 26 toward the opposite user with a finger in contact with it, as shown in FIG. 9. The user then performs a flick operation that gives the photograph content item 26 momentum so that it slides toward the opposite user.

- the information processing apparatus 100 detects a moving direction and a moving speed of the photograph content item 26 and controls display of the photograph content item 26 in such a way that the photograph content item 26 further slides in the moving direction by a predetermined distance depending on the moving speed (to allow an object to be moved without another user operation after the previous user operation, like an inertial movement).
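
The inertia-like slide described above can be sketched as follows. The disclosure only states that the slide distance depends on the moving speed; the constant-deceleration model used here (distance = speed² / (2 × deceleration)), the function name, and the default `deceleration` value are assumptions made for illustration.

```python
import math


def slide_end_point(start, direction, speed, deceleration=500.0):
    """Estimate where a flicked content item comes to rest.

    start        -- (x, y) position at which the flick ends
    direction    -- (dx, dy) moving direction; it need not be normalized
    speed        -- moving speed at release, in pixels per second
    deceleration -- assumed constant deceleration, in pixels per second squared
    """
    dx, dy = direction
    norm = math.hypot(dx, dy)
    if norm == 0.0 or speed <= 0.0:
        # No direction or no momentum: the item stays where it is.
        return start
    # Under constant deceleration, the stopping distance is v^2 / (2a).
    distance = speed * speed / (2.0 * deceleration)
    return (start[0] + dx / norm * distance,
            start[1] + dy / norm * distance)
```

Under this assumed model a faster flick carries the item further, which matches the stated dependence of the slide distance on the moving speed; any other monotonic mapping from speed to distance would serve equally well.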

- the information processing apparatus 100 basically moves the photograph content item 26 in a straight line from the position at which the flick operation is performed.

- When an obstacle lies on that straight line, the information processing apparatus 100 moves the photograph content item 26 along a slide route that circumvents the obstacle.

- the information processing apparatus 100 performs display control by sliding the photograph content item 26 to the opposite user along a route that circumvents dishes 5a and 5b, as indicated by solid arrows in FIG. 10.
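
A route that circumvents real objects can be illustrated with a breadth-first search over an occupancy grid of the tabletop. This is a generic technique swapped in for illustration, not the route search described in the disclosure; the grid representation and the function name `find_slide_route` are assumptions.

```python
from collections import deque


def find_slide_route(grid, start, goal):
    """Return the shortest 4-connected route from start to goal, or None.

    grid  -- list of rows; a truthy cell is occupied by a real object
    start -- (row, col) cell where the flick ends
    goal  -- (row, col) cell in front of the receiving user
    """
    rows, cols = len(grid), len(grid[0])
    previous = {start: None}  # maps each visited cell to its predecessor
    queue = deque([start])
    while queue:
        cell = queue.popleft()
        if cell == goal:
            # Walk the predecessor links back to the start.
            route = []
            while cell is not None:
                route.append(cell)
                cell = previous[cell]
            return route[::-1]
        r, c = cell
        for nr, nc in ((r + 1, c), (r - 1, c), (r, c + 1), (r, c - 1)):
            inside = 0 <= nr < rows and 0 <= nc < cols
            if inside and not grid[nr][nc] and (nr, nc) not in previous:
                previous[(nr, nc)] = cell
                queue.append((nr, nc))
    return None  # every route to the goal is blocked


# A dish occupies the middle of the table, so the straight line is blocked.
table = [
    [0, 0, 0, 0, 0],
    [0, 1, 1, 1, 0],
    [0, 0, 0, 0, 0],
]
route = find_slide_route(table, (2, 2), (0, 2))
```

In this example the returned route passes around one end of the occupied cells instead of crossing them. A real system would presumably smooth such a cell-by-cell route into a curve before animating the content item.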

- the information processing apparatus 100 may change the direction of the photograph content item 26 into the direction that is easily viewed by the opposite user.

- the opposite user can expand and browse the photograph content item 26 by tapping on it as shown in FIG. 12.

- the information processing apparatus 100 recognizes the operation by the opposite user on the photograph content item 26. Then, when a tap operation is performed, the information processing apparatus 100 controls the photograph content item 26 to be displayed in an enlarged form.

- the information processing apparatus 100 switches scenes by fading out the content on the display surface (top surface 141).

- FIG. 13 is a diagram illustrated to describe an exemplary configuration of the information processing apparatus 100 according to an embodiment of the present disclosure.

- the information processing apparatus 100 is configured to include an input unit 110, a controller 120, and an output unit 130.

- the input unit 110 allows particulars of an operation on the information processing apparatus 100 by the user who uses the information processing apparatus 100 or the shape or the like of an object placed on a surface to which the output unit 130 outputs information (e.g., the top surface 141a of the table 140a shown in FIG. 1) to be inputted.

- An example of particulars of an operation on the information processing apparatus 100 by the user who uses the information processing apparatus 100 includes particulars of the operation on a GUI outputted on a display surface by the information processing apparatus 100.

- the particulars of an operation on the information processing apparatus 100 or information on the shape or the like of an object, which are inputted to the input unit 110, are sent to the controller 120.

- the input unit 110 may be configured as a camera including one lens, a stereo camera including two lenses, or a microphone.

- the controller 120 controls each component of the information processing apparatus 100.

- the controller 120 generates information that is to be outputted from the output unit 130 using information inputted to the input unit 110.

- the controller 120 is configured to include a detection unit 121 and an output control unit 122.

- the detection unit 121 performs a process for detecting particulars of an operation on the information processing apparatus 100 by the user who uses the information processing apparatus 100, contents of information being outputted from the output unit 130, and the shape or the like of an object placed on a surface to which the output unit 130 outputs information (e.g., the top surface 141a of the table 140a shown in FIG. 1).

- the particulars detected by the detection unit 121 are sent to the output control unit 122.

- the output control unit 122 performs control for generating information to be outputted from the output unit 130 based on the particulars detected by the detection unit 121. More specifically, the output control unit 122 controls the moving speed and/or moving route of a virtual object, in accordance with the moving speed and/or moving route of the virtual object obtained based on the moving direction and/or moving speed of the user operation on information (virtual object) outputted from the output unit 130. The information generated by the output control unit 122 is sent to the output unit 130.

- When the information processing apparatus 100 is configured as the projection type shown in FIG. 1, a calibration may be performed in advance to match the coordinates of the surface on which information is displayed with the coordinates at which an operating body such as the user's hand is in contact with the display surface.

- the detection unit 121 can detect which portion of the GUI is in contact with the operating body such as the user's hand.

- the output control unit 122 controls display of display information (virtual object) depending on the user's operation on a virtual object displayed on the display surface. Specifically, when the display information is moved by the flick operation, the output control unit 122 performs display control of information to be displayed depending on a positional relationship with a real object located near the moving route of the display information.

- the display control will be described in detail under the heading <<3. Operation Process>> described later.

- the controller 120 may be configured to include a central processing unit (CPU), a read only memory (ROM), and a random access memory (RAM).

- When the controller 120 includes a CPU device or the like, such a device may be composed of electronic circuits.

- the controller 120 may have a communication function for performing a wireless communication with other devices and a function for controlling the operation of other devices connected to the information processing apparatus 100, such as lighting equipment.

- the controller 120 is configured to include a storage unit for storing content control information (so-called content control information database).

- the content control information may be particulars of inputted operation and information indicating what type of content control is performed on an object or the like placed on the table 140a.

- the controller 120 performs display control depending on information inputted from the input unit 110 by referring to the content control information stored in the storage unit.

- the output unit 130 outputs information depending on information about particulars of the operation by the user who uses the information processing apparatus 100, contents of information being outputted from the output unit 130, or the shape or the like of an object placed on a surface to which the output unit 130 outputs information (e.g., the top surface 141a of the table 140a shown in FIG. 1), which are inputted by the input unit 110.

- the output unit 130 outputs information based on information generated by the output control unit 122.

- An example of the information outputted from the output unit 130 includes information displayed on the display surface, sound outputted from a loudspeaker (not shown), or the like.

- the information processing apparatus 100 shown in FIG. 13 may be configured as a stand-alone device, or may be configured partially or entirely in a separate device.

- the controller 120 may be included in a device such as a server that is connected to the input unit 110 and the output unit 130 over a network or the like.

- When the controller 120 is included in the device such as a server, information from the input unit 110 is transmitted to the device such as a server through the network or the like.

- the controller 120 performs a process on the information from the input unit 110.

- Information to be outputted from the output unit 130 is sent from the device such as a server to the output unit 130 through the network or the like.

- the information processing system is configured in such a way that multiple users may execute respective independent applications on the same screen, for example, displayed on the table 140a, as shown in FIGS. 4, 5 to 12.

- a graphical user interface (GUI) of the applications shown in FIG. 4 is generated by the output control unit 122 and is outputted from the output unit 130.

- the information processing system acquires operation particulars by the user on the GUI of the application outputted on an information display surface by the output unit 130 through the input unit 110.

- the information processing system allows the user to be in contact with the display surface with an operating body such as the user's hand or allows the operating body being in contact with the display surface to move on the display surface.

- the information processing system receives an operation on the GUI of the application outputted on the information display surface by the output unit 130.

- An embodiment of the present disclosure particularly relates to an operation for moving a position at which the GUI is displayed among operations on the GUI of applications.

- a real object such as cups or dishes is placed on the top surface 141a of the table 140a.

- the GUI may be moved along a straight line in the moving direction of the flick operation by the user, by a predetermined distance that depends on the moving speed.

- the projected GUI is difficult to view because of being overlapped with a real object such as cups or dishes, or the GUI projected onto some food causes its appearance to be worse.

- When the GUI remains at a position overlapping a real object, it is necessary to move the real object to operate the GUI, resulting in inconvenience.

- When the information processing apparatus 100 is used as a rear projection type or a flat arrangement display type, if the GUI remains at a position hidden under a real object, the user will lose sight of the GUI.

- When the position at which the GUI (i.e. virtual object) is displayed is changed depending on the user's operation, the display control may be performed in consideration of a real object placed on the display surface, thereby displaying information in a more suitable and efficient manner.

- FIG. 14 is a flowchart illustrating a display control process performed by the information processing system according to an embodiment of the present disclosure.

- In step S103, the controller 120 of the information processing apparatus 100 acquires "content control information: start condition".

- the controller 120 acquires the operation particulars on the content (specifically, GUI of application) performed by the user inputted from the input unit 110, refers to the content control information stored in a storage unit, and determines whether a condition of starting the movement display control (specifically, slide operation) is satisfied.

- FIG. 15 is a diagram illustrated to describe a first start condition according to an embodiment. As shown in the left part of FIG. 15, when a drag operation is performed to move a content item 30 displayed on the top surface 141 that is the display surface in a state where the content item is in contact with an operating body such as a finger, the movement display control according to an embodiment is not started. On the other hand, as shown in the right part of FIG.

- the controller 120 determines that the start condition is satisfied, and then the movement display control according to an embodiment is started.

- FIG. 16 is a diagram illustrated to describe a second start condition according to an embodiment.

- the movement display control according to an embodiment is not started.

- the controller 120 determines that the start condition is satisfied, and then the movement display control according to an embodiment is started.

- the controller 120 determines that the start condition of movement display control is satisfied.

- the start condition described above is merely an example, and the present disclosure is not limited thereto.

- In step S106, if the controller 120 determines that the start condition is satisfied (YES in step S106), then in subsequent step S109, the controller 120 acquires "content control information: slide route and slide speed". In other words, the controller 120 acquires (sets) the slide route and slide speed depending on the moving direction of the flick operation by the user or the size of the content.

- FIG. 17 is a diagram illustrated to describe how to acquire a first slide route according to an embodiment.

- the controller 120 sets a point where the user's finger is removed from the content item 30 by the flick operation as a start point S, and sets a point of a predetermined distance D on the straight line in the moving direction of the flick operation from the start point S as a termination point T. Then, the controller 120 acquires the shortest route (i.e. the straight line) from the start point S to the termination point T as a slide route.

- the predetermined distance D is calculated based on the moving speed of the flick operation and the virtual coefficient of friction with the top surface 141.
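The relationship between the flick speed, the virtual coefficient of friction, and the distance D can be sketched as follows. The source does not give a formula, so this is a minimal illustration that assumes a constant deceleration of friction times a virtual gravity constant; the function names, the units (millimetres and seconds), and the `gravity` value are hypothetical.

```python
import math


def slide_distance(speed, friction, gravity=9800.0):
    """Distance covered before stopping under constant friction.

    Assumption (not from the source): deceleration = friction * gravity,
    which gives D = v^2 / (2 * friction * gravity).
    """
    if friction <= 0:
        raise ValueError("friction must be positive")
    return speed ** 2 / (2.0 * friction * gravity)


def termination_point(start, direction, speed, friction):
    """Termination point T at distance D from start point S along the flick direction."""
    dx, dy = direction
    norm = math.hypot(dx, dy)
    if norm == 0:
        return start  # no direction: the item stays where the finger left it
    d = slide_distance(speed, friction)
    return (start[0] + d * dx / norm, start[1] + d * dy / norm)
```

With this model, a faster flick or a lower coefficient of friction moves the termination point T farther from the start point S on the same straight line.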

- FIG. 18 is a diagram illustrated to describe how to acquire a second slide route according to an embodiment.

- the controller 120 acquires a route that circumvents the obstacle as a slide route.

- the controller 120 recognizes in advance the position of an object depending on the conditions of the object (size and position of the object) placed on the top surface 141, which is inputted from the input unit 110, and thus acquires a route that circumvents the object.

- the description of how to search for a route that circumvents an object will be made later with reference to FIGS. 19 to 25.

- the controller 120 may acquire a route in which the moving direction changes as a slide route, as shown in the right part of FIG. 18.

- the content item 30 is moved along the shortest route to the termination point T (i.e. the straight line) and, at the time when the content item 30 reaches the dish 5a as an obstacle, its route is reflected toward the dish 5b.

- Even when the controller 120 does not recognize in advance the position of a real object, or when a real object is placed on the route after the movement control of the content item 30 by the flick operation is started, it is possible to prevent the real object and the content item 30 from overlapping each other.

- FIGS. 19 to 25 are diagrams illustrated to describe how to search for a route that circumvents an object to acquire the second slide route according to an embodiment.

- the controller 120 recognizes an object placed on the top surface 141 and determines object regions 50a and 50b on which an object is placed, as shown in FIG. 19.

- an object may be recognized based on a captured image and depth information that are inputted from the input unit 110.

- the controller 120 recognizes that the flick operation is performed by the user's finger in contact with the content item 30 based on the operation particulars inputted from the input unit 110.

- the controller 120 estimates the moving direction and the termination point T from the vector at the time of the flick operation on the content item 30 as shown in the left part of FIG. 19.

- the controller 120 sets control points C1 and C2 of a cubic (third-order) Bezier curve on the shortest route between the start point S and the termination point T (straight line) as shown in the right part of FIG. 19.

- the controller 120 determines whether the route collides with the object region 50a or 50b.

- the determination as to whether there is a collision uses an ordinary algorithm and thus the method for determination is not limited.

- the controller 120 performs intersection determination by calculating the cross product (outer product) of each of the four sides of the object region 50a or 50b and the straight line or curved line.

- the controller 120 shifts the control points C1 and C2 from the initial position as shown in the left part of FIG. 20 and again determines whether there is a collision with an object.

- the route shown in the left part of FIG. 20 also collides with the object region 50a, and thus the controller 120 shifts the control points C1 and C2 to other points and again determines whether there is a collision with an object as shown in the right part of FIG. 20.

- the controller 120 repeatedly performs the collision determination and the adjustment of the control points C1 and C2, and thereby searches for a route that does not collide with the object regions 50a and 50b.

- the controller 120 acquires the found route as a slide route and slides the content item 30 along a curve as shown in the right part of FIG. 21.
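The search described above (place C1 and C2 on the straight line, test for collision, shift the control points, and repeat) can be sketched as follows. This is an illustration under stated assumptions rather than the method of the embodiment: object regions are taken to be axis-aligned rectangles `(x0, y0, x1, y1)`, the curve is approximated by sampled line segments, the control points are shifted perpendicular to the straight line in fixed steps, and all function names and parameters are hypothetical.

```python
import math


def cross(o, a, b):
    """z component of the cross product (a - o) x (b - o)."""
    return (a[0] - o[0]) * (b[1] - o[1]) - (a[1] - o[1]) * (b[0] - o[0])


def segments_cross(p1, p2, q1, q2):
    """True when segment p1-p2 properly crosses segment q1-q2."""
    return (cross(q1, q2, p1) * cross(q1, q2, p2) < 0
            and cross(p1, p2, q1) * cross(p1, p2, q2) < 0)


def cubic_bezier(s, c1, c2, t, samples=32):
    """Sample a cubic Bezier curve from s to t with control points c1 and c2."""
    points = []
    for i in range(samples + 1):
        a = i / samples
        b = 1.0 - a
        w = (b ** 3, 3 * b * b * a, 3 * b * a * a, a ** 3)
        points.append(tuple(
            w[0] * s[k] + w[1] * c1[k] + w[2] * c2[k] + w[3] * t[k]
            for k in (0, 1)))
    return points


def collides(route, regions):
    """True when the sampled route enters or crosses any object region."""
    for x0, y0, x1, y1 in regions:
        corners = [(x0, y0), (x1, y0), (x1, y1), (x0, y1)]
        sides = list(zip(corners, corners[1:] + corners[:1]))
        if any(x0 <= x <= x1 and y0 <= y <= y1 for x, y in route):
            return True
        if any(segments_cross(p, q, a, b)
               for p, q in zip(route, route[1:]) for a, b in sides):
            return True
    return False


def find_route(s, t, regions, step=10.0, max_tries=20):
    """Shift C1 and C2 sideways until the curve avoids every region.

    Returns the sampled route, or None when no shift within max_tries works
    (the caller may then fall back to the straight line).
    """
    dx, dy = t[0] - s[0], t[1] - s[1]
    length = math.hypot(dx, dy)
    if length == 0:
        return [s]
    nx, ny = -dy / length, dx / length  # unit normal to the straight line
    base1 = (s[0] + dx / 3, s[1] + dy / 3)
    base2 = (s[0] + 2 * dx / 3, s[1] + 2 * dy / 3)
    for k in range(max_tries + 1):
        for sign in ((1,) if k == 0 else (1, -1)):
            off = sign * k * step
            c1 = (base1[0] + nx * off, base1[1] + ny * off)
            c2 = (base2[0] + nx * off, base2[1] + ny * off)
            route = cubic_bezier(s, c1, c2, t)
            if not collides(route, regions):
                return route
    return None
```

For example, `find_route((0.0, 0.0), (100.0, 0.0), [(40.0, -10.0, 60.0, 10.0)])` returns a curve that bows around the rectangle, while an empty region list yields the straight line itself.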

- the controller 120 may exceptionally acquire the shortest route to the termination point T (straight line) as a slide route, as shown in the left part of FIG. 22. In this case, the controller 120 slides the content item 30 from the start point S toward the termination point T along the straight line as shown in the right part of FIG. 22.

- When the projection type information processing apparatus 100 is used as shown in FIG. 1, the content item 30 passing through the object region 50a is projected onto an object. Thus, the content item 30 is difficult to view and, when the object is a dish, the appearance of the food on it becomes worse.

- the controller 120 may adjust transmittance of the content item 30 in such a way that the content item 30 may be translucent when passing through the object region 50a. Furthermore, an appearance of an object (such as the content item 30), or a displaying of the object, may be made based on virtual physical amounts of items or contents associated with or represented by the object.

- the description of how to acquire a slide route has been made.

- the description of how to acquire a slide speed will be made with reference to FIGS. 23 and 24.

- the speed of the content item 30 when the content item 30 is moved depending on the flick operation is calculated by using the moving speed upon the flick operation and the virtual coefficient of friction with the top surface 141 (or based on a coefficient of restitution associated with a collision or contact with the content item 30).

- By setting the coefficient of friction to be proportional to the size (capacity) of the content to be moved, the user can intuitively recognize the magnitude of the capacity of the content.

- the coefficient of friction when the content item 30 of a single music file slides is set to be smaller than the coefficient of friction when content item 32 of three music files together slides.

- When the number of files is small, the coefficient of friction is set to be lower.

- When the number of files is large, the coefficient of friction is set to be higher. Accordingly, even when the user removes the finger from a content item at the same moving speed, the slide speed and the slide distance vary depending on the number of content items.

- the content item 30, which has a smaller number of files and a lower coefficient of friction, is moved at a higher speed and over a longer distance than the content item 32, which has a larger number of files and a higher coefficient of friction.
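The effect of a coefficient of friction that grows with the number of files can be sketched with a simple frame-by-frame simulation. This is only an illustration: the proportionality constant `friction_per_file`, the virtual gravity, the frame interval, and both function names are assumptions that do not appear in the source.

```python
def friction_for_files(file_count, friction_per_file=0.1):
    """Coefficient of friction proportional to the number of files (assumed constant)."""
    return friction_per_file * file_count


def simulate_slide(initial_speed, friction, gravity=9800.0, dt=1 / 60):
    """Advance the slide one display frame at a time until the item stalls.

    Returns (distance travelled, number of frames). Units are assumed to be
    millimetres and seconds.
    """
    speed = initial_speed
    distance = 0.0
    frames = 0
    decel = friction * gravity
    while speed > 0:
        next_speed = max(0.0, speed - decel * dt)
        distance += 0.5 * (speed + next_speed) * dt  # average speed over the frame
        speed = next_speed
        frames += 1
    return distance, frames


# The same flick speed applied to one music file and to three music files.
single = simulate_slide(1000.0, friction_for_files(1))
triple = simulate_slide(1000.0, friction_for_files(3))
```

In this sketch the single-file item travels farther and keeps moving for more frames than the three-file item, which is the difference a viewer is meant to perceive.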

- the magnitude can also be represented by a number of rotations the content item 32 is displayed to make, when the content item 32 is displayed to rotate while moving.

- the magnitude can also be represented by a sound, and the sound can further be generated to be a higher or a lower volume, based on the magnitude.

- an appearance of an object (such as the content item 32), or a displaying of the object, may be made based on the number of content items.

- Such coefficient of friction is not limited to the number of moving files, but it may be varied depending on the capacity of a file.

- the coefficient of friction when a content item 34 of a moving image file having a time length of five minutes is slid is set to be smaller than the coefficient of friction when a content item 35 of a moving image file having a time length of 30 minutes is slid.

- When the size is small, the coefficient of friction is set to be lower.

- When the size is large, the coefficient of friction is set to be higher. Accordingly, even when the user removes the finger from a content item at the same moving speed, the slide speed and the slide distance vary depending on the size.

- an appearance of an object (such as the content item 32), or a displaying of the object, may be made based on the size of the file, and the size of the file may be a time length or an image size.

- the appearance of the object, or the displaying of the object may be made based on a weight of the object, an importance of the object or of contents associated with the object, or a length of time contents associated with the object have been placed.

- the user can intuitively recognize the capacity of content. This allows a usage pattern as shown in FIG. 25 to be implemented.

- FIG. 25 is a diagram illustrated to describe advantageous effects obtained by the slide speed control depending on the capacity of content according to an embodiment.

- a user A slides a content item 32, which is formed by selecting and collecting some recommended songs (content of music file), toward a user B by performing the flick operation on the content item.

- the information processing apparatus 100 calculates a coefficient of friction that increases in proportion to the capacity of the content item 32 (e.g., sum of sizes), applies it to the moving speed of the flick operation by the user A, and controls the movement of the content item 32 accordingly. This allows the user B to view the flick operation performed by the user A or the content item 32 slid from the user A, and to intuitively recognize the capacity of the content item 32.

- When the user B determines, depending on the recognized capacity, that the capacity is insufficient if all of the music files included in the content item 32 are transferred to a portable music player 6, the user B divides the music files included in the content item 32 into content items 32a, 32b, and 32c as shown in the middle part of FIG. 25. Then, the user B can transfer only a content item (e.g., content item 32a) selected from the content items 32a, 32b, and 32c to the portable music player 6 as shown in the lower part of FIG. 25.

- the information processing apparatus 100 establishes a wireless communication with the portable music player 6 and controls the content item 32a to be transferred.

- In step S112, the controller 120 updates display of content, that is, performs the slide movement control, based on the content control information including the slide route and slide speed obtained in step S109.

- In step S115, the controller 120 determines whether a termination condition is satisfied.

- the description of the termination condition according to an embodiment will be made in detail with reference to "3-4. Termination Condition" described later.

- the controller 120 determines in step S121 whether the sliding content is close to a real object placed on the top surface 141. Specifically, the controller 120 determines whether the content is in proximity to a real object by referring to a captured image, depth information or the like inputted from the input unit 110.

- the controller 120 acquires "content control information: object proximity operation" in step S124.

- the controller 120 can also acquire a route that passes through the object by moving the content on the straight line from a start point to a termination point, regardless of the presence or absence of a route that circumvents an object.

- the controller 120 controls display of the content to perform a predetermined object proximity operation depending on the property of the object when the content is in proximity to the object (step S124).

- the first to fourth object proximity operations according to an embodiment will be described with reference to FIGS. 26 to 29.

- FIG. 26 is a diagram illustrated to describe the first object proximity operation according to an embodiment.

- the controller 120 controls the content item 30 to pass through a dish 5c depending on the color of the object, with the transmittance of the content item 30 maintained.

- the content item 30 projected by the projection type information processing apparatus 100 is controlled to be translucent. In this case, the user may lose sight of the content item 30 when the content item passes through (overlaps with) the object.

- the controller 120 controls display of the content item 30 to pass through the object, with the transmittance of the content item 30 maintained.

- FIG. 27 is a diagram illustrated to describe the second object proximity operation according to an embodiment.

- the controller 120 controls the content item 30 to pass through a dish 5d depending on the color of an object by setting the content item 30 to be translucent.

- the controller 120 controls the content item 30 to pass through the object by changing the state of the content item 30 to a translucent state. This prevents the user from losing sight of the content item 30 when the content item 30 passes through the object, and this prevents appearance of the food on the dish 5d overlapped with the content item 30 from being worse.

- FIG. 28 is a diagram illustrated to describe the third object proximity operation according to an embodiment.

- the controller 120 controls the content item 30 to pass through a dish 5e by setting the content item 30 to be a transparent state (i.e. non-display) depending on a material of an object.

- When the content item 30 is projected onto the object by the projection type information processing apparatus 100, the projection light reflected by the object may enter the eyes of the user, or the projection light may be irregularly reflected so that the image of the content item 30 becomes unclear.

- the controller 120 controls the content item 30 to pass through the object by changing the state of the content item 30 to be a transparent state (i.e. non-display). This makes it possible to prevent the projection light passed through the object from being reflected.

- FIG. 29 is a diagram illustrated to describe the fourth object proximity operation according to an embodiment.

- the controller 120 controls the content item 30 to pass through a dish 5f, with the content item 30 maintained in a transparent state (i.e. non-display) or with the transmittance of the content item 30 maintained, depending on the state of the object.

- the display control on the content item 30 is performed as follows. When there is food in the dish 5f as shown in the left part of FIG. 29, the content item 30 is controlled to pass through the object by changing its state to a transparent state (i.e. non-display). When no food is left in the dish 5f as shown in the right part of FIG.

- the content item 30 is controlled to pass through the object, with the transmittance of the content item 30 maintained.

- the presence or absence of food is estimated based on a captured image or depth information. If no food is left on the dish 5f after eating, it is not necessary to consider the appearance of food.

- the content item 30 is passed without any change, with the transmittance maintained, thereby preventing the user from losing sight of the content item 30.

- the display control at the time of passage of content depending on the properties (color, material, state, etc.) of an object in proximity has been described in detail.

- the display control information (content control information) depending on the properties of an object is stored, for example, in a storage unit (not shown) of the controller 120.
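The four object proximity operations can be read as a lookup from object properties to a display mode. The sketch below is one hypothetical way to store such rules; the property names, the specific colour condition, and the order in which the rules are checked are assumptions, since the text here only states that the choice depends on colour, material, and state.

```python
from dataclasses import dataclass
from enum import Enum


class Display(Enum):
    KEEP = "transmittance maintained"
    TRANSLUCENT = "translucent"
    HIDDEN = "transparent (non-display)"


@dataclass
class RealObject:
    # All three fields are hypothetical stand-ins for recognised properties.
    reflective: bool = False   # material, e.g. a mirror-like surface
    has_food: bool = False     # state estimated from image or depth data
    dark: bool = False         # colour of the object


def proximity_operation(obj: RealObject) -> Display:
    """Pick how the content is drawn while it passes over the object."""
    if obj.reflective:
        return Display.HIDDEN       # avoid reflected projection light
    if obj.has_food:
        return Display.HIDDEN       # do not project onto food
    if obj.dark:
        return Display.KEEP         # assumed: a faded item would be lost here
    return Display.TRANSLUCENT      # assumed default for other objects
```

Keeping the rules in one function (or one table in the storage unit) makes it straightforward to change the priority between material, state, and colour without touching the movement control.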

- Although the details of how to acquire, in step S109, a route circumventing an object, a route reflected from an object, or a route passing through an object as a slide route have been described, an embodiment is not limited thereto.

- the controller 120 controls the content to circumvent the object with a height higher than a predetermined value when the content is close to the object.

- the controller 120 may control the content to circumvent the object made of such material when the content is in proximity to the object.

- the controller 120 may control the content to circumvent the object filled with food when the content is in proximity to the object.

- the controller 120 may control the content to circumvent an object whose position is recognized in advance (an object placed for a long time) when the content is in proximity to it, and to pass through an object that is temporarily placed.

- the controller 120 may control the content to circumvent an object that is placed for a long time and to pass through an object that is temporarily placed.

- FIG. 30 is a diagram illustrated to describe a first termination condition according to an embodiment.

- the controller 120 may stop the slide movement of the content item 30 by causing the content item to go into a stall by virtual friction with the top surface 141.

- FIG. 31 is a diagram illustrated to describe a second termination condition according to an embodiment.

- the controller 120 controls the slide movement of the content item 30 to be stopped. More specifically, as shown in FIG. 31, for example, a state in which the palm of the hand faces the direction in which the content item 30 travels and the hand stands perpendicular to the top surface 141 is recognized as a particular posture.

- the termination condition according to the present embodiment has been described.

- the termination condition according to an embodiment is merely an example, and in addition to this, for example, the movement may be controlled to be stopped when the edge of the top surface 141 is reached.
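The termination conditions mentioned above (stall by virtual friction, a stopping hand posture, and reaching the edge of the top surface) can be checked once per display update. The following is a minimal sketch; the function name, its arguments, and the returned labels are hypothetical, and recognition of the hand posture is assumed to happen elsewhere.

```python
from typing import Optional, Tuple


def termination_reason(speed: float,
                       position: Tuple[float, float],
                       surface_size: Tuple[float, float],
                       stop_posture_detected: bool = False) -> Optional[str]:
    """Return why the slide should end, or None to keep sliding."""
    width, height = surface_size
    x, y = position
    if speed <= 0:
        return "stall"            # virtual friction has used up the speed
    if stop_posture_detected:
        return "stop posture"     # a hand stands in the path of the content
    if not (0 <= x <= width and 0 <= y <= height):
        return "edge"             # the content reached the edge of the surface
    return None
```

A caller would evaluate this after each position update and run the termination process as soon as a reason is returned.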

- Referring back to FIG. 14, if it is determined that the termination condition is satisfied (YES in step S115), then the controller 120 controls a predetermined termination process to be performed.

- the termination process according to an embodiment will be described in detail with reference to FIGS. 32 to 37.

- FIG. 32 is a diagram illustrated to describe a first termination process according to an embodiment.

- the controller 120 controls the content item 30 to approach the real object and controls display of the content item 30 to be aligned around the real object. This makes it possible to prevent each content item that is slid around the real object from being displayed irregularly.

- the content item 30 may be moved to a position not to be overlapped with the real object by shifting the stop position.

- the controller 120 performs a termination process depending on a real object placed in proximity to the location where the content item 30 goes into a stall.

- the controller 120 can perform a termination process for transferring content to the real object. The description will be made with reference to FIG. 33.

- FIG. 33 is a diagram illustrated to describe another example of the first termination process according to an embodiment.

- the controller 120 establishes a wireless communication with a digital camera 7 and controls the content item 37 to be transferred to the digital camera 7.

- the controller 120 does not transfer the content item to the digital camera 7.

- the termination process depending on a real object placed in proximity to the location where content goes into a stall has been described.

- the controller 120 can also perform the termination process depending on a GUI of an application displayed in proximity to the location where content goes into a stall. The description will be made with reference to FIGS. 34 and 35.

- FIG. 34 is a diagram illustrated to describe a second termination process according to an embodiment.

- the controller 120 controls the content item 37 to be displayed on the photograph application's GUI 45 by incorporating the content item 37 into the photograph application's GUI 45.

- FIG. 35 is a diagram illustrated to describe another example of the second termination process according to an embodiment. As shown in FIG. 35, when a sliding content item 38 is a photograph file and the GUI displayed in proximity to the stop position of the content item 38 is a mail screen 46, the controller 120 controls the content item 38 to be pasted to the mail screen 46.

- the controller 120 can also control to perform a termination process for changing display of content to be optimized for the user. The description will be made with reference to FIGS. 36 and 37.

- FIG. 36 is a diagram illustrated to describe a third termination process according to an embodiment.

- when the content item 37 is slid to the user B, the controller 120 performs display control by rotating the content item 37 to an orientation that is easy for the user B to view.
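
One way to compute such a rotation is to point the content item's top edge away from the viewer. The following is a minimal sketch under stated assumptions: table coordinates with x to the right and y upward, an unrotated item whose top points along +y, and hypothetical function and parameter names.

```python
import math


def facing_rotation_deg(item_pos, user_pos):
    """Counter-clockwise rotation in degrees, in [0, 360), that turns the
    item's bottom edge toward the user."""
    # The item's "up" vector should point from the user toward the item.
    up_x = item_pos[0] - user_pos[0]
    up_y = item_pos[1] - user_pos[1]
    if up_x == 0 and up_y == 0:
        return 0.0  # user exactly at the item: keep the default orientation
    # An unrotated item has "up" at 90 degrees from the +x axis.
    return (math.degrees(math.atan2(up_y, up_x)) - 90.0) % 360.0
```

With the item at the origin, a user seated on the far side of the table at `(0, 1)` yields a rotation of 180 degrees, so the item appears upright from that user's seat.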

- the controller 120 may align the plurality of content items at the edge of the top surface 141 on the user B side so that they do not overlap each other, or may align them so that they overlap each other.

- the controller 120 adjusts the overlapped position using a random number in such a way that at least a portion of each of the content items is visible.
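
The random adjustment could be sketched as follows. This is an illustration only: it assumes equally sized items stacked along one axis at the same height, with each new item drawn on top of the previous one, and the names `stack_positions`, `min_visible`, and `max_step` are hypothetical. Because each item is shifted by at least `min_visible`, a strip of every lower item stays uncovered.

```python
import random


def stack_positions(count, start_x, min_visible, max_step, rng=None):
    """Return x positions for `count` overlapping items.

    Each item is offset from the previous one by a random amount between
    `min_visible` and `max_step`, so at least `min_visible` units of every
    lower item remain visible.
    """
    rng = rng or random.Random()
    positions = []
    x = start_x
    for _ in range(count):
        positions.append(x)
        x += rng.uniform(min_visible, max_step)
    return positions
```

Passing a seeded `random.Random` instance makes the layout reproducible, which may be convenient when testing.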

- FIG. 37 is a diagram illustrated to describe another example of the third termination process according to an embodiment.

- the controller 120 optimizes display of content depending on the attribute of a person in proximity to the location where content is stopped. Specifically, as shown in the left part of FIG. 37, when a user C in proximity to the location where a content item 39 is stopped is an aged person, the controller 120 may control the font size or image size of the content item 39 to be displayed in an enlarged form. As shown in the right part of FIG. 37, when a user D in proximity to the location where a content item 40 is stopped is a foreigner, the controller 120 may control the text of the content item 40 to be displayed in a translated version, or may control a locale to be changed.

- the attribute of a person is obtained by the analysis of a captured image or the like acquired by the input unit 110.

- information indicating what change is necessary may be associated with each region of a display surface, and the contents associated with a region including a movement destination may be further changed. For example, an English region, a German region, and a region where text is displayed in an enlarged form are set in advance, and a change including English translation, German translation, and enlargement of text may be added to each region including a movement destination.
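
The region-based variant amounts to a lookup from the movement destination to a preset change. A minimal sketch, assuming axis-aligned rectangular regions and hypothetical change labels such as `"translate_en"`; the actual region shapes and change encoding are not specified in the text.

```python
from dataclasses import dataclass
from typing import Iterable, Optional, Tuple


@dataclass(frozen=True)
class Region:
    """Hypothetical rectangular region of the display surface."""
    x0: float
    y0: float
    x1: float
    y1: float
    change: str  # e.g. "translate_en", "translate_de", "enlarge_text"

    def contains(self, point: Tuple[float, float]) -> bool:
        x, y = point
        return self.x0 <= x <= self.x1 and self.y0 <= y <= self.y1


def change_for_destination(point: Tuple[float, float],
                           regions: Iterable[Region]) -> Optional[str]:
    """Return the change associated with the first region containing `point`."""
    for region in regions:
        if region.contains(point):
            return region.change
    return None  # destination lies outside every preset region
```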

- an operation on a content item during sliding may not be accepted.

- the controller 120 allows display control of only the content item itself (e.g., movement in x-y axis, transmittance, and rotation) to be performed during sliding, and prevents an event (e.g., linking in a content item or clicking buttons) within the content item (GUI) from being accepted.

- FIGS. 38 and 39 are diagrams illustrated to describe how to disable the event for a content item during sliding. This example is based on the assumption that a GUI of a game application (content item 42) is displayed on the top surface 141, and a user A plays a game with a user B. As shown in the left part of FIG. 38, when the user A makes the first move on the content item 42 displayed on the game board screen, the content item 42 is slid in the direction of the opponent user B as shown in the right part of FIG. 38.

- the controller 120 prevents the operation from being accepted. This makes it possible to prevent an erroneous operation (e.g., a move made out of turn) on the content item 42 during sliding. As shown in the right part of FIG. 39, when the content item 42 reaches the user B and then is stopped, the controller 120 accepts an input of the second move by the user B.
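
The distinction between display control of the item itself and events inside the item can be sketched as a simple gate. The class name, event labels, and `sliding` flag below are hypothetical and serve only to illustrate the behavior described above.

```python
# Display control applied to the content item as a whole (always allowed).
DISPLAY_CONTROLS = {"move", "transmittance", "rotate"}
# Events inside the content item's GUI (blocked while the item is sliding).
CONTENT_EVENTS = {"link", "button_click"}


class SlidingItem:
    """Illustrative content item that gates input while it slides."""

    def __init__(self):
        self.sliding = False

    def accepts(self, event_kind):
        if event_kind in DISPLAY_CONTROLS:
            return True
        if event_kind in CONTENT_EVENTS:
            return not self.sliding
        return False  # unknown event kinds are rejected in this sketch
```

In the game example, a `"button_click"` on the board would be rejected while `sliding` is true and accepted once the item has stopped in front of the user B.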

- the transfer of a content item according to an embodiment is not limited to the transfer to a device placed on the top surface 141.

- a content item may be transferred to a device placed around the table 140. An example thereof will be described with reference to FIG. 40.

- FIG. 40 is a diagram illustrated to describe a supplement to data transfer of the information processing system according to an embodiment.

- the projection type information processing apparatus 100a displays a content item 35 by projecting the content item 35 on the top surface 141a of the table 140a using an output unit 130a that includes a projector.

- the user's operation on the content item 35 is recognized based on information inputted by an input unit 110a that includes a camera and depth sensor.

- the controller 120 controls the content item 35 to be displayed depending on the particulars of the operation inputted from the input unit 110a. Specifically, the controller 120 allows the content item 35 to be slid in the moving direction at the time of the flick operation depending on the flick operation of the content item 35 by the user.
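
The moving direction and speed at the time of the flick could be estimated from the last two touch samples. This is a sketch under assumptions: samples are hypothetical `(time, x, y)` tuples, and real input pipelines typically smooth over more than two samples.

```python
import math


def flick_velocity(samples):
    """Return (speed, (dir_x, dir_y)) from the last two (t, x, y) samples."""
    if len(samples) < 2:
        raise ValueError("need at least two samples")
    (t0, x0, y0), (t1, x1, y1) = samples[-2], samples[-1]
    dt = t1 - t0
    if dt <= 0:
        raise ValueError("timestamps must be strictly increasing")
    vx, vy = (x1 - x0) / dt, (y1 - y0) / dt
    speed = math.hypot(vx, vy)
    # Unit direction vector; zero vector if the finger did not move.
    direction = (vx / speed, vy / speed) if speed > 0 else (0.0, 0.0)
    return speed, direction
```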

- the controller 120 controls the content item 35 that has reached a predetermined region S12 at the left end of the top surface 141a in FIG. 40 to be transferred to the television set 8.

- the transferred content item 35 is reproduced in the television set 8.

- a content item can be transferred to a device (e.g., television set and audio device) in a location remote from the table 140a depending on the sliding direction of a content item.
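
A sliding item that either stops on the table or reaches a transfer region at the left end can be simulated in one dimension. The sketch below is illustrative: the per-step friction factor, the stop threshold, and all names are assumptions, not values from the disclosure.

```python
def slide_until_event(x, vx, left_region_width,
                      friction=0.9, dt=1.0, min_speed=0.01):
    """Advance an item along x until it stops or enters the left-end region.

    Returns ("transfer", x) if the item reaches x <= left_region_width,
    otherwise ("stopped", x) once its speed decays below min_speed.
    """
    while abs(vx) >= min_speed:
        x += vx * dt
        if x <= left_region_width:
            return ("transfer", x)  # hand the item over to the remote device
        vx *= friction              # assumed decay of speed per step
    return ("stopped", x)
```

A strong flick toward the left edge ends in a transfer, while a weak one decays and leaves the item on the table.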

- the positional relationship between the table 140a and the surrounding devices may be determined, for example, based on an image captured around the table 140a and obtained by the input unit 110a including a camera or by a communication between the information processing apparatus 100a and the surrounding devices.

- the controller 120 may display the trajectory of the moving content item for a predetermined period of time (e.g., approximately three seconds) after the start of movement.

- the trajectory may be displayed in a string shape that is extended from the content item.
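
Showing the trajectory only for a fixed period after movement starts can be sketched as a time check on recorded points. The class and method names are hypothetical, and the three-second default follows the approximate example given above.

```python
class Trajectory:
    """Illustrative record of the points a moving content item has passed."""

    def __init__(self, start_time, display_period=3.0):
        self.start_time = start_time          # time at which movement began
        self.display_period = display_period  # seconds the trail stays visible
        self.points = []

    def add_point(self, point):
        self.points.append(point)

    def visible_points(self, now):
        """Return the points to draw, or an empty list once the period ends."""
        if now - self.start_time <= self.display_period:
            return list(self.points)
        return []
```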

- the controller 120 recognizes the opponent user by audio analysis.

- the controller 120 may modify the discrepancy, and may control the content item to be displayed so that the content item is delivered to the opponent user.

- the information processing system is capable of displaying information in a more appropriate and efficient manner by controlling movement of a displayed virtual object depending on a positional relationship with a real object.

- a computer program that allows hardware including CPU, ROM, and RAM incorporated in the above-described information processing apparatus 100 to perform functions of the information processing apparatus 100 may also be created.

- a storage medium on which the computer program is stored may be provided.

- the information processing apparatus 100 may be configured to include a head mounted display (HMD).

- the information processing apparatus 100 controls information to be displayed on the top surface 141 in such a way that the information is visible to the user through a display unit (output unit 130) of the HMD.

- the information processing apparatus 100 acquires the user's operation on the information displayed on the top surface 141 (e.g., a drag operation or flick operation in which a finger in contact with the top surface 141 moves the information) by the input unit 110 provided in the HMD, such as a camera or depth sensor.

- the information processing apparatus 100 may be configured to include the output unit 130 that is constituted by the HMD.

- the storage unit includes the storage unit (local memory) included in the controller 120.

- the content control information is stored in the storage unit.

- An embodiment of the present disclosure is not limited to this configuration.

- the content control information may be stored in a server (external memory) on a network, which is connected by a communication function of the controller 120.

- a mode of controlling the movement (e.g., passing through or circumventing) of a virtual object is determined depending on the property information (e.g., size, dish, state) of a real object

- the mode of controlling the movement of a virtual object may be determined depending on the property information of a real object and the property information of a virtual object, or may be determined only depending on the property information of a virtual object.
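
Selecting a movement mode from property information can be sketched as a small rule function. The two circumvention rules (reflective material, food) follow conditions stated elsewhere in this document; the dictionary keys and the `always_pass_through` property of the virtual object are hypothetical and used only for illustration.

```python
def movement_mode(real_props, virtual_props=None):
    """Return "circumvent" or "pass_through" for a virtual object's path."""
    virtual_props = virtual_props or {}
    # Hypothetical virtual-object property that overrides the real object.
    if virtual_props.get("always_pass_through"):
        return "pass_through"
    if real_props.get("material") == "reflective":
        return "circumvent"
    if real_props.get("category") == "food":
        return "circumvent"
    return "pass_through"
```

Passing an empty dictionary for `real_props` exercises the case in which the mode depends only on the virtual object's own properties.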

- An information processing apparatus including: circuitry configured to initiate display of a virtual object, based on a gesture operation, starting from a point of origin and moving towards a target point; and continue to display the virtual object in display motion after the gesture operation, wherein a path of travel of the virtual object or a display characteristic of the virtual object is determined based on a positional relationship between the virtual object and another object that is a real object located in proximity to the path of travel of the virtual object, and when the virtual object passes or enters into a predetermined area of the another object, the path of travel is diverted from a direct path or the display characteristic is changed.

- the information processing apparatus according to any of (1) to (5), wherein the information includes material information of the real object.

- the virtual object is displayed to travel a route of display motion that circumvents a predetermined area of the real object, when the real object is made of a reflective material.

- the information includes information indicating a categorical type of the real object.

- the information processing apparatus according to any of (1) to (8), wherein the virtual object is displayed to travel a route of display motion that circumvents a predetermined area of the real object, when the real object includes food.

- the information processing apparatus according to any of (1) to (12), wherein the virtual object, when displayed to be passing or entering the predetermined area of the another object, is displayed to have a transmittance determined based on a characteristic of the another object.

- the information processing apparatus according to any of (1) to (13), wherein the characteristic of the another object is related to a color or a shape.

- a mode of display moving the virtual object is changed based on information associated with the another object.

- the circuitry is further configured to stop display motion of the virtual object when the virtual object visually collides with a hand of the user having a particular posture.

- An information processing method including: displaying a virtual object, based on a gesture operation, starting from a point of origin and moving towards a target point; and continuing to display the virtual object in display motion after the gesture operation, wherein a path of travel of the virtual object or a display characteristic of the virtual object is determined based on a positional relationship between the virtual object and another object that is a real object located in proximity to the path of travel of the virtual object.

- An information processing apparatus including: a controller configured to control display of a virtual object depending on a user's operation on the displayed virtual object, wherein the controller controls display of the virtual object depending on positional relationship between the virtual object and a real object located in proximity to a moving path of the virtual object when the virtual object is moved.

- the controller moves an object to be displayed without another user's operation after the previous user's operation.

- the controller acquires a moving speed and a moving path of the virtual object based on a moving direction and a moving speed of a predetermined user's operation on the virtual object.

- the information processing apparatus according to any one of (25) to (35), wherein the virtual object is a GUI of application, a media file, or image data.

- the controller prevents an operation input to the virtual object from being accepted during control of a movement of the virtual object.

- the controller performs control in a manner that a movement of the virtual object is stopped by colliding with a hand of a user when the hand of the user in proximity to the virtual object has a particular posture during control of a movement of the virtual object.

- a control method including: controlling display of a virtual object depending on a user's operation on the displayed virtual object, wherein display of the virtual object is controlled depending on positional relationship between the virtual object and a real object located in proximity to a moving path of the virtual object when the virtual object is moved.

Landscapes

- Engineering & Computer Science (AREA)

- General Engineering & Computer Science (AREA)

- Theoretical Computer Science (AREA)

- Human Computer Interaction (AREA)

- Physics & Mathematics (AREA)

- General Physics & Mathematics (AREA)

- Multimedia (AREA)

- User Interface Of Digital Computer (AREA)

- Processing Or Creating Images (AREA)

- Controls And Circuits For Display Device (AREA)

Abstract