WO2025041215A1 - Information processing device, information processing method, and program

Information processing device, information processing method, and program

- Publication number

- WO2025041215A1 (application PCT/JP2023/029921)

- Authority

- WO

- WIPO (PCT)

- Prior art keywords

- point cloud

- cloud data

- dimensional

- data

- estimation model

- Prior art date

- Legal status (The legal status is an assumption and is not a legal conclusion. Google has not performed a legal analysis and makes no representation as to the accuracy of the status listed.)

- Pending

Classifications

- G—PHYSICS

- G06—COMPUTING OR CALCULATING; COUNTING

- G06T—IMAGE DATA PROCESSING OR GENERATION, IN GENERAL

- G06T7/00—Image analysis

- G06T7/10—Segmentation; Edge detection

Definitions

- the present invention relates to an information processing device, an information processing method, and a program.

- Non-Patent Document 1 aims to improve classification accuracy by using synthetic data with missing regions to learn both the completion of missing or occluded areas and semantic segmentation.

- Non-Patent Document 1 requires the preparation of synthetic data in advance, which increases the time and effort required to create and pre-process training data (i.e., the cost of training).

- This disclosure has been made in consideration of the above problems, and its purpose is to provide a highly accurate segmentation technique while suppressing increases in costs.

- An information processing device includes an acquisition means for acquiring input data, a point cloud data generation means for generating three-dimensional point cloud data from the input data, a thinned point cloud data generation means for generating three-dimensional thinned point cloud data by applying a thinning process to at least one of the input data and the three-dimensional point cloud data, and a learning means for training an estimation model that receives point cloud data as input and outputs complemented point cloud data and segmentation labels related to the complemented point cloud data by referring to the three-dimensional point cloud data and the three-dimensional thinned point cloud data.

- An information processing device includes an acquisition means for acquiring input data, a point cloud data generation means for generating three-dimensional point cloud data from the input data, and an estimation means for estimating the complemented point cloud data corresponding to the three-dimensional point cloud data generated by the point cloud data generation means and the segmentation label for the complemented point cloud data using an estimation model that receives point cloud data as an input and outputs complemented point cloud data and a segmentation label for the complemented point cloud data, the estimation model being machine-learned by referring to the three-dimensional point cloud data and three-dimensional thinned point cloud data obtained by applying a thinning process to the three-dimensional point cloud data.

- An information processing method includes acquiring input data, generating three-dimensional point cloud data from the input data, generating three-dimensional thinned point cloud data by applying a thinning process to at least one of the input data and the three-dimensional point cloud data, and training an estimation model that receives point cloud data as input and outputs complemented point cloud data and segmentation labels related to the complemented point cloud data, by referring to the three-dimensional point cloud data and the three-dimensional thinned point cloud data.

- An information processing method includes acquiring input data, generating three-dimensional point cloud data from the input data, and estimating the complemented point cloud data corresponding to the three-dimensional point cloud data generated in the generating step and the segmentation label related to the complemented point cloud data using an estimation model that takes the point cloud data as input and outputs complemented point cloud data and a segmentation label related to the complemented point cloud data, the estimation model being machine-learned by referring to the three-dimensional point cloud data and three-dimensional thinned point cloud data obtained by applying a thinning process to the three-dimensional point cloud data.

- FIG. 1 is a block diagram showing a configuration of an information processing device according to the present disclosure.

- A diagram for explaining a data flow in an information processing device according to the present disclosure.

- A block diagram showing a configuration of an information processing device according to the present disclosure.

- FIG. 2 is a diagram for explaining processing executed by an information processing device according to the present disclosure.

- A block diagram showing a configuration of an information processing device according to the present disclosure.

- A block diagram showing a hardware configuration of an information processing device according to the present disclosure.

- A first exemplary embodiment, which is an example of an embodiment of the present invention, will be described in detail with reference to the drawings.

- This exemplary embodiment is the basic form of each exemplary embodiment described later.

- the scope of application of each technical means adopted in this exemplary embodiment is not limited to this exemplary embodiment. That is, each technical means adopted in this exemplary embodiment can be adopted in other exemplary embodiments included in this disclosure to the extent that no particular technical obstacle occurs.

- each technical means shown in the drawings referred to for explaining this exemplary embodiment can also be adopted in other exemplary embodiments included in this disclosure to the extent that no particular technical obstacle occurs.

- Fig. 1 is a block diagram showing the configuration of the information processing device 1. As shown in Fig. 1, the information processing device 1 includes an acquisition unit 11, a point cloud data generation unit 12, a thinned point cloud data generation unit 13, and a learning unit 14.

- the acquisition unit 11 acquires input data.

- the input data is, for example, input data for the learning phase.

- As an example, the input data may be image data, and the image data may be configured to include at least one of: RGB data in which each pixel (data point) represents an RGB value; depth data in which each pixel (data point) represents a depth value; and three-dimensional point cloud data in which each data point represents a three-dimensional coordinate.

- the three-dimensional point cloud data may be point cloud data acquired by a LiDAR (Light Detection and Ranging, or Laser Imaging Detection and Ranging) device, as an example, but this example does not limit the present exemplary embodiment.

- the three-dimensional point cloud data may be configured to include a feature value of each data point in addition to the three-dimensional coordinates assigned to each data point.

- each feature value may be configured to include at least one of the RGB value and the normal value (normal vector) of each data point.

- the point cloud data generating unit 12 may be configured to generate attribute data including the feature value of each data point (for example, at least one of the RGB value and the normal value (normal vector)) in association with the three-dimensional point cloud data including the three-dimensional coordinates of each data point.
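One way to picture attribute data held "in association with" the three-dimensional coordinates is a small container that keeps one attribute row per data point. The sketch below is only an illustration: the class name `PointCloud`, the `attributes` dictionary, and the `select` helper are hypothetical names that do not appear in the disclosure.

```python
from dataclasses import dataclass, field

import numpy as np


@dataclass
class PointCloud:
    """Three-dimensional coordinates plus optional per-point attribute data.

    Hypothetical container for illustration; not defined in the disclosure.
    """
    xyz: np.ndarray                                  # shape (N, 3)
    attributes: dict = field(default_factory=dict)   # name -> array with N rows

    def __post_init__(self):
        self.xyz = np.asarray(self.xyz, dtype=np.float64)
        if self.xyz.ndim != 2 or self.xyz.shape[1] != 3:
            raise ValueError("xyz must have shape (N, 3)")
        checked = {}
        for name, values in self.attributes.items():
            values = np.asarray(values)
            # Every attribute (e.g. RGB value, normal vector) needs one row per point.
            if values.shape[0] != self.xyz.shape[0]:
                raise ValueError(f"attribute {name!r} must have one row per point")
            checked[name] = values
        self.attributes = checked

    def select(self, index):
        """Return a new cloud keeping only the indexed points and their attributes."""
        return PointCloud(self.xyz[index],
                          {k: v[index] for k, v in self.attributes.items()})
```

Keeping the attributes keyed by name means that a later step which removes data points can drop the matching RGB values and normal vectors in the same operation.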

- the point cloud data generation unit 12 may also generate the above-mentioned three-dimensional point cloud data using algorithms such as Structure from Motion (SfM) and Simultaneous Localization and Mapping (SLAM).

- With the above-mentioned configuration, the point cloud data generation unit 12 can generate the above-mentioned three-dimensional point cloud data from one or more frames (one or more data sets) included in the input data acquired by the acquisition unit 11.

- When the input data already includes three-dimensional point cloud data, the point cloud data generation unit 12 may be configured to output that three-dimensional point cloud data as is.

- Alternatively, the point cloud data generation unit 12 may add the feature values of each of the above-mentioned data points (for example, at least one of RGB values and normal values (normal vectors)) to the three-dimensional point cloud data before outputting it.

- The attribute data including the feature values of each of the above-mentioned data points may also be output in association with the three-dimensional point cloud data including the three-dimensional coordinates of each data point.

- the information processing device 1 may be configured not to include the point cloud data generation unit 12. Such a configuration is also included in this exemplary embodiment.

- the thinned point cloud data generating unit 13 generates three-dimensional thinned point cloud data by applying a thinning process to at least one of the input data acquired by the acquiring unit 11 and the three-dimensional point cloud data generated by the point cloud data generating unit 12.

- The thinning process may include, for example, a process of generating the three-dimensional thinned point cloud data by using only some of the plurality of frames included in the input data.

- The thinning process may also include a process of generating the three-dimensional thinned point cloud data by using only some of the plurality of data points included in at least one of the input data and the three-dimensional point cloud data.

- the learning unit 14 trains an estimation model that receives point cloud data as input and outputs complemented point cloud data and segmentation labels related to the complemented point cloud data.

- the learning unit 14 trains the estimation model by referring to the three-dimensional point cloud data and the three-dimensional thinned point cloud data. Note that the expression “completion” in this exemplary embodiment is derived from “point cloud completion” as an example, but this wording does not limit this exemplary embodiment.

- As an example, the learning unit 14 refers to training data (also called teacher data) that includes the three-dimensional point cloud data and the three-dimensional thinned point cloud data, and:

- inputs the three-dimensional thinned point cloud data included in the training data to the estimation model, thereby causing the estimation model to generate three-dimensional point cloud data; and

- trains the estimation model by machine learning so that the difference between the three-dimensional point cloud data generated by the estimation model and the three-dimensional point cloud data included in the training data is reduced.

- the three-dimensional point cloud data included in the training data may include a correct answer label (also called a true value label) for segmentation, or may be associated with a correct answer label for segmentation.

- the learning unit 14 may be configured to train the estimation model so that the difference between the segmentation label output (estimated) by the estimation model and the correct answer label for segmentation is small.
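The two "differences" described above can be combined into a single training objective. The sketch below is an assumption, not the loss used in the disclosure, which does not name one: it measures the point cloud difference with a symmetric Chamfer distance and the label difference with cross-entropy, taking the target of each generated point from its nearest labelled reference point. The function names and the `seg_weight` parameter are hypothetical.

```python
import numpy as np


def _pairwise_sq_dist(a, b):
    """Squared Euclidean distance between every point of a (P, 3) and b (R, 3)."""
    return np.sum((a[:, None, :] - b[None, :, :]) ** 2, axis=-1)


def chamfer_distance(pred_xyz, ref_xyz):
    """Symmetric mean squared nearest-neighbour distance between two clouds."""
    d2 = _pairwise_sq_dist(pred_xyz, ref_xyz)
    return d2.min(axis=1).mean() + d2.min(axis=0).mean()


def segmentation_cross_entropy(pred_xyz, pred_logits, ref_xyz, ref_labels):
    """Cross-entropy against the label of the nearest reference point (assumed matching)."""
    target = ref_labels[_pairwise_sq_dist(pred_xyz, ref_xyz).argmin(axis=1)]
    shifted = pred_logits - pred_logits.max(axis=1, keepdims=True)  # numerical stability
    log_prob = shifted - np.log(np.exp(shifted).sum(axis=1, keepdims=True))
    return -log_prob[np.arange(len(target)), target].mean()


def total_loss(pred_xyz, pred_logits, ref_xyz, ref_labels, seg_weight=1.0):
    """Completion term plus weighted segmentation term."""
    return (chamfer_distance(pred_xyz, ref_xyz)
            + seg_weight * segmentation_cross_entropy(pred_xyz, pred_logits,
                                                      ref_xyz, ref_labels))
```

The dense pairwise distance matrix keeps the sketch short but grows with the product of the two cloud sizes, so a spatial index would be a more practical choice for large scans.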

- the correct answer label may be, as one example, included in the input data or associated with the input data, and the acquisition unit 11 may acquire the correct answer label.

- the learning unit 14 configured as described above can effectively train the estimation model that receives point cloud data as input and outputs complemented point cloud data and segmentation labels related to the complemented point cloud data.

- the term "complement" in the above description includes the complementation of so-called missing areas and the complementation of occlusion areas, but this term does not limit this exemplary embodiment.

- a thinning process is applied to at least one of the input data and the three-dimensional point cloud data to generate three-dimensional thinned point cloud data, and the estimation model is trained by referring to the three-dimensional thinned point cloud data, so that a highly accurate estimation model can be generated while suppressing an increase in cost. Therefore, according to the above configuration, a highly accurate segmentation technology can be provided while suppressing an increase in cost.

- the information processing device 1 may be configured not to include the point cloud data generation unit 12.

- the information processing device 1 may be configured to include a learning unit 14 that learns an estimation model that receives point cloud data as an input, and outputs complemented point cloud data and segmentation labels related to the complemented point cloud data, by referring to the three-dimensional point cloud data and the three-dimensional thinned point cloud data.

- the information processing device 1 configured in this manner can also achieve the above-mentioned effects.

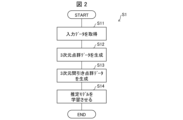

- Fig. 2 is a flow diagram showing the flow of the information processing method S1.

- the information processing method S1 includes a step (process) S11 of acquiring input data, a step (process) S12 of generating three-dimensional point cloud data, a step (process) S13 of generating three-dimensional thinned point cloud data, and a step (process) S14 of training an estimation model.

- In step S11, the acquisition unit 11 acquires input data.

- The specific process performed by the acquisition unit 11 has been described above, and therefore will not be described here.

- In step S12, the point cloud data generation unit 12 generates three-dimensional point cloud data from the input data acquired by the acquisition unit 11.

- The specific processing by the point cloud data generation unit 12 has been described above, and therefore will not be described here.

- In step S13, the thinned point cloud data generation unit 13 generates three-dimensional thinned point cloud data by applying a thinning process to at least one of the input data acquired by the acquisition unit 11 and the three-dimensional point cloud data generated by the point cloud data generation unit 12.

- The specific process by the thinned point cloud data generation unit 13 has been described above, and therefore will not be described here.

- In step S14, the learning unit 14 trains an estimation model that receives point cloud data as input and outputs complemented point cloud data and a segmentation label related to the complemented point cloud data.

- The learning unit 14 trains the estimation model by referring to the three-dimensional point cloud data and the three-dimensional thinned point cloud data. The specific processing by the learning unit 14 has been described above, and therefore will not be described here.

- a thinning process is applied to at least one of the input data and the three-dimensional point cloud data to generate three-dimensional thinned point cloud data, and the estimation model is trained by referring to the three-dimensional thinned point cloud data, so that a highly accurate estimation model can be generated while suppressing an increase in cost. Therefore, according to the above configuration, a highly accurate segmentation technology can be provided while suppressing an increase in cost.

- the information processing method S1 may be configured not to include step S12.

- the information processing method S1 may include a learning step S14 in which an estimation model having point cloud data as input and having complemented point cloud data and segmentation labels related to the complemented point cloud data as output is learned by referring to the three-dimensional point cloud data and the three-dimensional thinned point cloud data.

- the information processing method S1 configured in this manner can also achieve the above-mentioned effects.

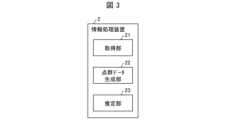

- Fig. 3 is a block diagram showing the configuration of the information processing device 2.

- the information processing device 2 includes an acquisition unit 21, a point cloud data generation unit 22, and an estimation unit 23.

- the acquisition unit 21 acquires input data.

- the input data is, for example, input data for an inference phase (estimation phase, test phase).

- A specific example of the input data acquired by the acquisition unit 21 does not limit the present exemplary embodiment, but the input data may be, for example, image data.

- The image data may be configured to include at least one of: RGB data in which each pixel (data point) represents an RGB value; depth data in which each pixel (data point) represents a depth value; and three-dimensional point cloud data in which each data point represents a three-dimensional coordinate.

- the three-dimensional point cloud data may be point cloud data acquired by a LiDAR (Light Detection and Ranging, or Laser Imaging Detection and Ranging) device, as an example, but this example does not limit the present exemplary embodiment.

- the acquisition unit 21 can be configured to acquire data in the same format as the input data acquired by the acquisition unit 11 provided in the information processing device 1 described above, but the acquisition unit 21 does not need to acquire the correct answer label regarding the segmentation described in the information processing device 1.

- The point cloud data generation unit 22 generates three-dimensional point cloud data from the input data acquired by the acquisition unit 21.

- As an example, when the input data acquired by the acquisition unit 21 includes at least one of RGB data and depth data, the point cloud data generation unit 22 may be configured to identify the three-dimensional coordinates of each pixel included in the RGB data by referring to the depth value of the corresponding pixel in the depth data, and to generate three-dimensional point cloud data in which the identified three-dimensional coordinates are assigned to each pixel (each data point).
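The step of identifying three-dimensional coordinates from per-pixel depth values can be sketched as a back-projection. The disclosure does not specify a camera model, so the pinhole model and the intrinsic parameters `fx`, `fy`, `cx`, `cy` below are assumptions, as is the function name `depth_to_point_cloud`.

```python
import numpy as np


def depth_to_point_cloud(depth, rgb, fx, fy, cx, cy):
    """Back-project an aligned depth/RGB image pair into a coloured point cloud.

    Assumes a pinhole camera (focal lengths fx, fy; principal point cx, cy)
    and a depth image of shape (H, W) pixel-aligned with rgb of shape (H, W, 3).
    """
    depth = np.asarray(depth, dtype=np.float64)
    h, w = depth.shape
    # v is the row index and u the column index of every pixel.
    v, u = np.meshgrid(np.arange(h), np.arange(w), indexing="ij")
    x = (u - cx) * depth / fx
    y = (v - cy) * depth / fy
    xyz = np.stack([x, y, depth], axis=-1).reshape(-1, 3)
    colors = np.asarray(rgb).reshape(-1, 3)
    valid = xyz[:, 2] > 0  # drop pixels that carry no depth measurement
    return xyz[valid], colors[valid]
```

Each surviving pixel becomes one data point whose RGB value travels with its coordinates, which matches the option of attaching RGB values as per-point features.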

- the three-dimensional point cloud data may be configured to include at least one of the RGB values and normal values (normal vectors) of each data point in addition to the three-dimensional coordinates assigned to each data point.

- a configuration may be adopted in which attribute data including at least one of the RGB values and normal values (normal vectors) of each data point is generated in association with the three-dimensional point cloud data including the three-dimensional coordinates of each data point.

- When the input data already includes three-dimensional point cloud data, the point cloud data generation unit 22 may be configured to output that three-dimensional point cloud data as is.

- Alternatively, the point cloud data generation unit 22 may add at least one of the RGB values and normal values (normal vectors) of each of the above-mentioned data points to the three-dimensional point cloud data before outputting it.

- The point cloud data generation unit 22 may also be configured to output attribute data including at least one of the RGB values and normal values (normal vectors) of each of the above-mentioned data points, in association with the three-dimensional point cloud data including the three-dimensional coordinates of each data point.

- the information processing device 2 may be configured not to include the point cloud data generation unit 22. Such a configuration is also included in this exemplary embodiment.

- the point cloud data generator 22 can be configured to perform processing similar to that of the point cloud data generator 12 included in the information processing device 1, as an example, but this is not a limitation of this exemplary embodiment.

- the estimation unit 23 uses a machine-learned estimation model to estimate the complemented point cloud data corresponding to the three-dimensional point cloud data generated by the point cloud data generation unit 22 and the segmentation label related to the complemented point cloud data.

- the machine-learned estimation model may be an estimation model trained by the learning unit 14 included in the information processing device 1 described above.

- the machine-learned estimation model is an estimation model that receives point cloud data as an input and outputs complemented point cloud data and a segmentation label related to the complemented point cloud data, and is machine-learned with reference to the three-dimensional point cloud data and the three-dimensional thinned point cloud data obtained by applying a thinning process to the three-dimensional point cloud data.

- the estimation model that has been machine-learned with reference to three-dimensional thinned point cloud data generated by applying a thinning process to the three-dimensional point cloud data is used to estimate the complemented point cloud data corresponding to the three-dimensional point cloud data generated in the point cloud data generation process and the segmentation label related to the complemented point cloud data, so that it is possible to perform an estimation process using a highly accurate estimation model while suppressing an increase in cost. Therefore, according to the above configuration, it is possible to provide a highly accurate segmentation technology while suppressing an increase in cost.

- the information processing device 2 may be configured not to include the point cloud data generation unit 22.

- the information processing device 2 may be configured to include an estimation unit 23 that uses an estimation model that is machine-learned by referring to three-dimensional point cloud data and three-dimensional thinned point cloud data obtained by applying a thinning process to the three-dimensional point cloud data, to estimate the complemented point cloud data corresponding to the three-dimensional point cloud data acquired by the acquisition unit 21 and the segmentation label related to the complemented point cloud data, using an estimation model that receives point cloud data as an input and outputs complemented point cloud data and a segmentation label related to the complemented point cloud data.

- the information processing device 2 configured in this manner can also achieve the above-mentioned effects.

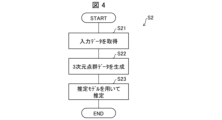

- Fig. 4 is a flow diagram showing the flow of the information processing method S2.

- the information processing method S2 includes a step (process) S21 of acquiring input data, a step (process) S22 of generating three-dimensional point cloud data, and a step (process) S23 of performing estimation using an estimation model.

- In step S21, the acquisition unit 21 acquires input data.

- The specific process performed by the acquisition unit 21 has been described above, and therefore will not be described here.

- In step S22, the point cloud data generation unit 22 generates three-dimensional point cloud data from the input data acquired by the acquisition unit 21.

- The specific processing by the point cloud data generation unit 22 has been described above, and therefore will not be described here.

- In step S23, the estimation unit 23 uses the machine-learned estimation model to estimate the complemented point cloud data corresponding to the three-dimensional point cloud data generated in step S22 and the segmentation label related to the complemented point cloud data.

- the machine-learned estimation model can be an estimation model trained by the above-mentioned information processing method S1.

- the machine-learned estimation model is an estimation model that inputs point cloud data and outputs complemented point cloud data and a segmentation label related to the complemented point cloud data, and is machine-learned with reference to the three-dimensional point cloud data and the three-dimensional thinned point cloud data obtained by applying a thinning process to the three-dimensional point cloud data.

- the estimation model machine-learned with reference to three-dimensional thinned point cloud data generated by applying a thinning process to the three-dimensional point cloud data is used to estimate the complemented point cloud data corresponding to the three-dimensional point cloud data generated in the point cloud data generation process and the segmentation label related to the complemented point cloud data, so that it is possible to perform an estimation process using a highly accurate estimation model while suppressing an increase in cost. Therefore, according to the above configuration, it is possible to provide a highly accurate segmentation technology while suppressing an increase in cost.
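The estimation step can be read as a simple contract: point cloud data goes in, and complemented point cloud data comes out together with one segmentation label per complemented point. The sketch below only illustrates that contract. `run_estimation` and `placeholder_model` are hypothetical names, and the placeholder is not a trained model; it mirrors the input points and assigns arbitrary labels purely so that the example runs.

```python
from typing import Callable, Tuple

import numpy as np

# Assumed interface: points (N, 3) -> (complemented points (M, 3), labels (M,)).
EstimationModel = Callable[[np.ndarray], Tuple[np.ndarray, np.ndarray]]


def run_estimation(point_cloud, model: EstimationModel):
    """Apply an estimation model and check that labels match the complemented points."""
    completed_xyz, labels = model(np.asarray(point_cloud, dtype=np.float64))
    if completed_xyz.ndim != 2 or completed_xyz.shape[1] != 3:
        raise ValueError("complemented point cloud must have shape (M, 3)")
    if labels.shape != (completed_xyz.shape[0],):
        raise ValueError("expected exactly one label per complemented point")
    return completed_xyz, labels


def placeholder_model(xyz):
    """Stand-in for a trained model: appends x-mirrored points, labels them arbitrarily."""
    mirrored = xyz * np.array([-1.0, 1.0, 1.0])
    completed = np.concatenate([xyz, mirrored], axis=0)
    labels = (completed[:, 0] >= 0).astype(np.int64)
    return completed, labels
```

The point of the shape check is that the labels refer to the complemented point cloud data, which may contain more points than the input, rather than to the input points.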

- the input/output unit 40 is configured to include at least one of input/output devices such as a keyboard, a mouse, a display, a printer, and a touch panel. Alternatively, the input/output unit 40 may be configured to be connected to input/output devices such as a keyboard, a mouse, a display, a printer, and a touch panel. In this configuration, the input/output unit 40 accepts input of various information from the connected input device to the information processing device 1A. Moreover, the input/output unit 40 outputs various information to the connected output device under the control of the control unit 10A.

- An example of the input/output unit 40 is an interface such as a Universal Serial Bus (USB).

- the storage unit 20A stores various data referenced by the control unit 10A and various data generated by the control unit 10A.

- Input data IND; three-dimensional point cloud data PCD; three-dimensional thinned point cloud data TPCD; first feature amount F1; second feature amount F2; estimation model PM; segmentation data SG.

- the input data IND is data acquired by an acquisition unit 11 (21) described later. Specific examples of the input data IND will be described later.

- the three-dimensional point cloud data PCD is data generated by a point cloud data generation unit 12 (22) described later. Specific examples of the three-dimensional point cloud data PCD will be described later.

- the three-dimensional thinned point cloud data TPCD is data generated by a thinned point cloud data generation unit 13 described later. Specific examples of the three-dimensional thinned point cloud data TPCD will be described later.

- the control unit 10A also includes a feature quantity selection unit 15, as shown in FIG. 5.

- the feature quantity selection unit 15 includes a first feature quantity selection unit 151 and a second feature quantity selection unit 152, as shown in FIG. 5.

- the acquisition unit 11 (21) acquires input data IND.

- the acquisition unit 11 (21) acquires input data for learning in the learning phase, and acquires input data for inference in the inference phase (estimation phase, test phase).

- the image data may be configured to include at least one of: RGB data in which each pixel (data point) represents an RGB value; depth data in which each pixel (data point) represents a depth value; and three-dimensional point cloud data in which each data point represents a three-dimensional coordinate.

- the three-dimensional point cloud data may be point cloud data acquired by a LiDAR (Light Detection and Ranging, or Laser Imaging Detection and Ranging) device, as an example, but this example does not limit the present exemplary embodiment.

- the acquisition unit 11 (21) can be configured to acquire input data including correct answer labels for segmentation (input data in which correct answer labels for segmentation are attached to each data point) in the learning phase, and to acquire input data that does not include the correct answer labels in the inference phase.

- the input data IND acquired by the acquisition unit 11 (21) is stored in the storage unit 20A, for example, and is referenced by the point cloud data generation unit 12 (22), the thinned point cloud data generation unit 13, the learning unit 14, the estimation unit 23, etc.

- the point cloud data generating unit 12 (22) generates three-dimensional point cloud data PCD from the input data IND acquired by the acquiring unit 11 (21).

- As an example, when the input data IND acquired by the acquisition unit 11 (21) includes at least one of RGB data and depth data, the point cloud data generation unit 12 (22) may be configured to identify the three-dimensional coordinates of each pixel included in the RGB data by referring to the depth value of the corresponding pixel in the depth data, and to generate three-dimensional point cloud data PCD in which the identified three-dimensional coordinates are assigned to each pixel (each data point).

- the three-dimensional point cloud data PCD may be configured to include at least one of the RGB values and normal values (normal vectors) of each data point in addition to the three-dimensional coordinates assigned to each data point.

- a configuration may be adopted in which attribute data including at least one of the RGB values and normal values (normal vectors) of each data point is generated in association with the three-dimensional point cloud data including the three-dimensional coordinates of each data point.

- When the input data IND already includes three-dimensional point cloud data, the point cloud data generation unit 12 (22) may be configured to output that three-dimensional point cloud data as is, as the three-dimensional point cloud data PCD.

- Alternatively, the point cloud data generation unit 12 (22) may add at least one of the RGB values and normal values (normal vectors) of each of the above-mentioned data points to the three-dimensional point cloud data acquired by the acquisition unit 11 (21), and output the result as the three-dimensional point cloud data PCD.

- The attribute data including at least one of the RGB values and normal values (normal vectors) of each of the above-mentioned data points may also be output in association with the three-dimensional point cloud data PCD including the three-dimensional coordinates of each data point.

- the point cloud data generation unit 12 (22) can be configured to, as an example, generate three-dimensional point cloud data PCD by referring to all frames included in the input data IND.

- the information processing device 1A may be configured not to include the point cloud data generation unit 12 (22). Such a configuration is also included in this exemplary embodiment.

- the thinned point cloud data generating unit 13 applies a thinning process to at least one of the input data IND acquired by the acquiring unit 11 (21) and the three-dimensional point cloud data PCD generated by the point cloud data generating unit 12 (22) to generate three-dimensional thinned point cloud data TPCD.

- The thinning process may include, for example, a process of generating the three-dimensional thinned point cloud data TPCD using only some of the frames included in the input data IND.

- the thinning process may include, as an example, a process of generating the three-dimensional thinned point cloud data TPCD using only N % (N is a real number less than 100) of the frames included in the input data IND.

- The thinned point cloud data generation unit 13 may select the N % of frames in either of the following ways, as examples: by randomly selecting the frames to be removed; or by removing a group of consecutive frames in such a way that a specific area is missing from the image or object represented by the input data IND.
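The two frame-selection strategies described above (random removal, and removal of one run of consecutive frames) can be sketched as index selection over the frame sequence. This is a minimal sketch under assumptions: the function names are hypothetical, `keep_percent` plays the role of N, and rounding to a whole number of frames with at least one frame kept is a choice made here, not something the disclosure specifies.

```python
import numpy as np


def select_frames_random(num_frames, keep_percent, rng):
    """Keep keep_percent % of the frames, removing the rest at random."""
    keep = max(1, int(round(num_frames * keep_percent / 100.0)))
    kept = rng.choice(num_frames, size=keep, replace=False)
    return np.sort(kept)  # preserve temporal order of the kept frames


def select_frames_drop_consecutive(num_frames, keep_percent, rng):
    """Keep keep_percent % of the frames by removing one contiguous run.

    Removing a contiguous run tends to leave one region of the scene unobserved.
    """
    keep = max(1, int(round(num_frames * keep_percent / 100.0)))
    drop = num_frames - keep
    start = int(rng.integers(0, num_frames - drop + 1))  # first removed frame
    return np.concatenate([np.arange(0, start),
                           np.arange(start + drop, num_frames)])
```

For example, `select_frames_random(20, 50, np.random.default_rng(0))` returns ten sorted frame indices, which could then be passed to the same point cloud generation step that is used for the full frame set.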

- The thinning process may also include a process of generating the three-dimensional thinned point cloud data TPCD by using only some of the data points included in at least one of the input data IND and the three-dimensional point cloud data PCD.

- the thinning process may include at least one of the following: a process of reducing the resolution of a depth image contained in the input data IND; and a process of applying, to the three-dimensional point cloud data contained in the input data IND, a thinning method corresponding to a reduction in the density of the light receiving sensors of the LiDAR device.
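
As a rough sketch of the point-level and depth-image thinning described above, the following shows random subsampling of data points and a stride-based reduction of depth-image resolution. The function names, the `keep_ratio` and `stride` parameters, and the list-based data layout are assumptions for illustration; the source does not specify how the resolution is reduced.

```python
import random


def subsample_points(points, keep_ratio, seed=0):
    """Keep a random subset of the data points, preserving their order."""
    if not points:
        return []
    rng = random.Random(seed)  # fixed seed (assumption) for reproducibility
    num_keep = max(1, int(len(points) * keep_ratio))
    keep = sorted(rng.sample(range(len(points)), num_keep))
    return [points[i] for i in keep]


def reduce_depth_resolution(depth, stride):
    """Keep every stride-th row and column of a depth image.

    The depth image is given as a list of rows. Plain striding is one
    simple (assumed) way to lower the resolution.
    """
    return [row[::stride] for row in depth[::stride]]
```

A similar striding over scan lines could stand in for a LiDAR device with fewer light receiving sensors, although that variant is not shown here.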

- the learning unit 14 trains an estimation model PM that receives point cloud data as input and outputs complemented point cloud data and a segmentation label related to the complemented point cloud data.

- the learning unit 14 trains the estimation model PM by referring to the three-dimensional point cloud data PCD and the three-dimensional thinned point cloud data TPCD.

- the learning unit 14 refers to teacher data TD including the three-dimensional point cloud data PCD1 and three-dimensional thinned point cloud data TPCD1 obtained by thinning the three-dimensional point cloud data PCD1.

- the three-dimensional thinned point cloud data TPCD1 included in the teacher data TD is input to the estimation model PM, thereby causing the estimation model PM to generate three-dimensional point cloud data PCD2 (which may also be referred to as complemented three-dimensional point cloud data IPCD1).

- the estimation model PM is subjected to machine learning so that the difference between the three-dimensional point cloud data PCD2 generated by the estimation model PM and the three-dimensional point cloud data PCD1 included in the teacher data TD is reduced.

- the three-dimensional point cloud data PCD1 included in the teacher data TD may include a correct answer label GTL1 for segmentation, or the three-dimensional point cloud data PCD1 included in the teacher data TD may be configured to accompany (associate) the correct answer label GTL1 for segmentation.

- the learning unit 14 may be configured to train the estimation model PM so that the difference between the segmentation label PL1 output (estimated) by the estimation model PM and the correct answer label GTL1 for segmentation is small.

- the correct answer label GTL1 may be, as an example, included in the input data IND or may be configured to accompany (associate) with the input data IND, and the acquisition unit 11 may be configured to acquire the correct answer label GTL1.

- the learning unit 14 may also be configured to learn the estimation model PM by further referring to a first feature value F1 associated with at least one of the input data IND, the three-dimensional point cloud data PCD, and the three-dimensional thinned point cloud data TPCD.

- the first feature amount F1 may be at least one of the RGB values and normal values (normal vectors) of each data point included in (or associated with) the input data IND or the three-dimensional point cloud data PCD. A specific method of training the estimation model PM with reference to the first feature amount F1 will be described later.

- the estimation model PM includes, for example, a first estimation model PM1 that receives point cloud data and outputs complemented point cloud data, and a second estimation model PM2 that receives at least the complemented point cloud data and outputs a segmentation label related to the complemented point cloud data.

- a more specific configuration example of the estimation model PM will be described later with reference to the drawings.

- the learning unit 14 configured as described above can effectively train the estimation model PM, which receives point cloud data as input and outputs complemented point cloud data and segmentation labels related to the complemented point cloud data.

- the estimation model PM is learned by referring to the three-dimensional thinned point cloud data TPCD generated by applying a thinning process to at least one of the input data IND and the three-dimensional point cloud data PCD, so that it is possible to generate a highly accurate estimation model while suppressing increases in cost. Therefore, with the above configuration, it is possible to provide a highly accurate segmentation technique while suppressing increases in cost.

- (Estimation unit 23) The estimation unit 23, as in exemplary embodiment 2, uses a machine-learned estimation model to estimate the complemented point cloud data corresponding to the three-dimensional point cloud data PCD generated by the point cloud data generation unit 12 (22) and the segmentation label related to the complemented point cloud data.

- the machine-learned estimation model can use the estimation model PM trained by the learning unit 14 described above.

- the machine-learned estimation model is an estimation model that inputs point cloud data and outputs complemented point cloud data and a segmentation label related to the complemented point cloud data, and is the estimation model PM trained by machine learning with reference to the three-dimensional point cloud data and the three-dimensional thinned point cloud data obtained by applying a thinning process to the three-dimensional point cloud data.

- the estimation unit 23 may also be configured to further refer to a second feature F2 associated with at least one of the input data IND and the three-dimensional point cloud data PCD generated by the point cloud data generation unit 12 (22) to estimate the complemented point cloud data corresponding to the three-dimensional point cloud data PCD generated by the point cloud data generation unit 12 (22) and the segmentation label related to the complemented point cloud data.

- the second feature amount F2 may be at least one of the RGB values and normal values (normal vectors) of each data point included in (or associated with) the input data IND or the three-dimensional point cloud data PCD.

- the type of the second feature F2 e.g., RGB value or normal value

- data including the "complemented point cloud data" and "segmentation labels related to the complemented point cloud data" estimated (generated) by the estimation unit 23 is stored in the storage unit 20A as the segmentation data SD shown in FIG. 5, for example, and is provided to the outside of the information processing device 100A via the communication unit 30, the input/output unit 40, and the like.

- the segmentation data SD may also be expressed as a segmentation image, a segmentation result, etc.

- the estimation unit 23 also functions as a presentation means that presents a segmentation image including objects having a display color and a display texture corresponding to the segmentation label estimated by the estimation unit 23 to the user via a display provided in the input/output unit 40.

- the estimation unit 23 configured as described above performs estimation processing using the estimation model PM that has been trained with reference to the three-dimensional thinned point cloud data TPCD that has been generated by applying a thinning process to at least one of the input data IND and the three-dimensional point cloud data PCD, making it possible to perform highly accurate estimation while suppressing increases in cost. Therefore, with the above configuration, it is possible to provide a highly accurate segmentation technique while suppressing increases in cost.

- the feature quantity selection unit 15 includes a first feature quantity selection unit 151 and a second feature quantity selection unit 152.

- the first feature quantity selection unit 151 selects a first feature quantity F1 to be referred to when the learning unit 14 learns the estimation model PM.

- the second feature quantity selection unit 152 selects a second feature quantity F2 to be referred to when the estimation unit 23 performs estimation processing using the estimation model PM.

- FIG. 6 is a diagram for explaining an example of the flow of data in the learning phase of the information processing device 1A.

- the acquisition unit 11 acquires input data IND.

- the input data IND is, as an example, input data for learning, and each data point included in the input data is assigned, as an example, a correct answer label GTL1 for segmentation.

- the point cloud data generation unit 12 generates three-dimensional point cloud data PCD by referring to the input data IND.

- the generated three-dimensional point cloud data PCD is supplied to the thinned point cloud data generation unit 13 and the first estimation model learning unit 141 provided in the learning unit 14.

- the thinned point cloud data generation unit 13 generates three-dimensional thinned point cloud data TPCD from the three-dimensional point cloud data PCD, and supplies the generated three-dimensional thinned point cloud data TPCD to the first estimation model learning unit 141.

- the first estimation model learning unit 141 is configured to learn the first estimation model PM1 included in the estimation model PM.

- the first estimation model learning unit 141 also calculates a first loss value (first loss function) (also denoted as BCE_Loss) indicating the difference between the above-mentioned complemented three-dimensional point cloud data IPCD1 and the three-dimensional point cloud data PCD generated by the point cloud data generation unit 12.

- the first estimation model learning unit 141 supplies the coordinates of each data point included in the complemented three-dimensional point cloud data IPCD1 to the first feature quantity selection unit 151. The first feature quantity selection unit 151 then compares the coordinates associated with the first feature amounts F1 stored in the storage unit 20A with the coordinates of each data point included in the complemented three-dimensional point cloud data IPCD1, identifies, from among the first feature amounts F1 stored in the storage unit 20A, those corresponding to each data point included in the complemented three-dimensional point cloud data IPCD1, and supplies the identified first feature amounts F1 to the second estimation model learning unit 142.
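
The coordinate comparison performed by the first feature quantity selection unit 151 can be pictured as a lookup keyed on coordinates. The sketch below is only one possible reading: the source says that coordinates are compared but not how, so matching after rounding to a fixed number of decimals, the function name `select_features`, and the dictionary layout are all assumptions.

```python
def select_features(stored, query_points, decimals=3):
    """Look up a stored feature for each query point by comparing coordinates.

    stored:       dict mapping (x, y, z) -> feature (e.g. an RGB tuple)
    query_points: coordinates of the complemented point cloud
    Returns one entry per query point: the matching feature, or None when
    no stored feature exists at that coordinate (for example, for points
    newly generated by the complementation step).

    Matching after rounding to `decimals` places is an assumption made
    for this sketch.
    """
    def key(point):
        return tuple(round(c, decimals) for c in point)

    index = {key(p): feature for p, feature in stored.items()}
    return [index.get(key(p)) for p in query_points]
```

Points that receive `None` here are the ones for which no feature was observed, which is the case the mask feature described later is meant to handle.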

- the first feature F1 supplied from the first feature selection unit 151 is input to the second estimation model PM2, and an estimated label PL1 relating to the segmentation output by the second estimation model PM2 is obtained.

- the second estimation model learning unit 142 also calculates a second loss value (second loss function) (also indicated as CE_Loss) indicating the difference between the estimated label PL1 and, as an example, the correct label GTL1 for the segmentation acquired by the acquisition unit 11.

- the learning unit 14 trains a first estimation model PM1 that receives point cloud data as input and outputs complemented point cloud data, and a second estimation model PM2 that receives at least the complemented point cloud data as input and outputs segmentation labels related to the complemented point cloud data.

- FIG. 7 is a diagram for explaining an example of the flow of data regarding the network in the learning phase.

- at least one of the acquisition unit 11 and the point cloud data generation unit 12 acquires teacher data TD including three-dimensional point cloud data PCD1 and ground truth labels GTL1 (also indicated as true value labels GTL1 in FIG. 7) assigned to each data point of the three-dimensional point cloud data PCD1.

- the three-dimensional point cloud data BPCD1 from which attribute data such as RGB values and normal values have been removed is input to the thinned point cloud data generator 13, and the thinned point cloud data generator 13 generates three-dimensional thinned point cloud data BTPCD1.

- the three-dimensional thinned point cloud data BTPCD1 is then input to the first estimation model PM1 (also referred to as the complementation network in FIG. 7).

- the first estimation model PM1 outputs the complemented three-dimensional point cloud data IPCD1.

- the complemented three-dimensional point cloud data IPCD1 is input to the second estimation model PM2 (also referred to as semantic segmentation network in FIG. 7) together with the first feature F1 (referred to as first feature data F1 in FIG. 7).

- the first feature F1 is the first feature F1 selected by the first feature selection unit 151 described above.

- the first feature F1 is input to the second estimation model PM2 in the form of a mask feature, for example.

- the mask feature refers to a data group in which the data points at which the first feature F1 selected by the first feature selection unit 151 exists have the value of the feature, and the other data points are assigned a null feature (a predetermined value such as 0, 255, -1, etc.).
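
A mask feature of the kind described above can be built by filling in a null value wherever no feature is available. In this minimal sketch, the function name, the dictionary of known features, and the choice of `(0, 0, 0)` as the null feature are assumptions; the source only says that a predetermined value such as 0, 255, or -1 is used.

```python
def build_mask_features(points, known_features, null_feature=(0, 0, 0)):
    """Return one feature per point, using null_feature where none is known.

    points:         coordinates of the complemented point cloud
    known_features: dict mapping (x, y, z) -> feature for the points at
                    which a selected feature exists
    null_feature:   predetermined placeholder value (assumed to be (0, 0, 0))
    """
    return [known_features.get(tuple(p), null_feature) for p in points]
```

The result has the same length and order as `points`, so it can be passed to the segmentation model alongside the coordinates.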

- the difference between the complemented three-dimensional point cloud data IPCD1 and the above-mentioned three-dimensional point cloud data BPCD1 is calculated as a first loss value (BCE_Loss).

- the second estimation model PM2 outputs an estimated label PL1 for the segmentation of each data point of the complemented 3D point cloud data IPCD1.

- the difference between the estimated label PL1 and the ground truth label GTL1 is calculated as a second loss value (CE_Loss).

- the learning unit 14 updates the parameters of the first estimation model PM1 and the second estimation model PM2 so that the total loss value represented by the first loss value and the second loss value becomes smaller.
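
To make the two loss values concrete, the sketch below computes a binary cross-entropy and a cross-entropy and adds them. Several points are assumptions rather than statements from the source: that BCE_Loss is a binary cross-entropy over per-cell occupancy probabilities, that CE_Loss is a cross-entropy over per-point class probabilities, and that the total is a plain unweighted sum.

```python
import math


def bce_loss(pred_occupancy, true_occupancy, eps=1e-7):
    """Mean binary cross-entropy between predicted and true occupancy.

    Assumes the completion output is a probability per cell (e.g. voxel)
    and the target is 0 or 1 per cell.
    """
    total = 0.0
    for p, t in zip(pred_occupancy, true_occupancy):
        p = min(max(p, eps), 1 - eps)  # clamp to avoid log(0)
        total += -(t * math.log(p) + (1 - t) * math.log(1 - p))
    return total / len(pred_occupancy)


def ce_loss(pred_probs, true_labels, eps=1e-7):
    """Mean cross-entropy between per-point class probabilities and labels."""
    total = 0.0
    for probs, label in zip(pred_probs, true_labels):
        total += -math.log(max(probs[label], eps))
    return total / len(true_labels)


def total_loss(first_loss, second_loss):
    """Combine the two loss values; a plain sum is assumed here."""
    return first_loss + second_loss
```

Because both models contribute to a single total, lowering it updates the completion and segmentation models jointly rather than one after the other.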

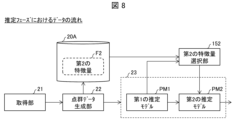

- FIG. 8 is a diagram for explaining an example of the flow of data in the estimation phase of the information processing device 1A.

- the acquisition unit 21 acquires input data IND.

- the input data IND is, as an example, input data for estimation, and unlike the input data for learning described above, each data point included in the input data is not assigned a correct answer label GTL1 for segmentation.

- the point cloud data generation unit 22 generates three-dimensional point cloud data PCD by referring to the input data IND.

- the generated three-dimensional point cloud data PCD is supplied to a first estimation model PM1 provided in the estimation unit 23.

- the point cloud data generator 22 also associates at least one of the RGB values and normal values (normal vectors) of each data point included in (or associated with) the input data IND or the three-dimensional point cloud data PCD with the coordinates of the data point, and stores them in the memory unit 20A as a second feature F2.

- the estimation unit 23 inputs the three-dimensional point cloud data PCD generated by the point cloud data generation unit 22 into the first estimation model PM1.

- the first estimation model PM1 is, as an example, the first estimation model PM1 that has been machine-learned by the first estimation model learning unit 141 described above in the learning phase.

- the first estimation model PM1 to which the above-mentioned three-dimensional point cloud data PCD is input outputs the complemented three-dimensional point cloud data IPCD2.

- the estimation unit 23 also supplies the coordinates of each data point included in the complemented three-dimensional point cloud data IPCD2 to the second feature quantity selection unit 152.

- the second feature quantity selection unit 152 then compares the coordinates associated with the second feature amounts F2 stored in the storage unit 20A with the coordinates of each data point included in the complemented three-dimensional point cloud data IPCD2, identifies, from among the second feature amounts F2 stored in the storage unit 20A, those corresponding to each data point included in the complemented three-dimensional point cloud data IPCD2, and supplies the identified second feature amounts F2 to the second estimation model PM2.

- the estimation unit 23 inputs the complemented three-dimensional point cloud data IPCD2 calculated using the first estimation model PM1 and the second feature F2 supplied from the second feature selection unit 152 into the second estimation model PM2, and obtains an estimated label PL2 regarding the segmentation output by the second estimation model PM2 (in other words, a segmentation label regarding the complemented three-dimensional point cloud data IPCD2).

- data including the "complemented three-dimensional point cloud data IPCD2" and "segmentation labels related to the complemented three-dimensional point cloud data IPCD2" estimated (generated) by the estimation unit 23 is stored in the storage unit 20A as the segmentation data SD shown in FIG. 5, for example, and is provided to the outside of the information processing device 100A via the communication unit 30, the input/output unit 40, and the like.
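
The estimation-phase data flow described above (completion, feature selection, then segmentation) can be summarized as a three-step pipeline. The sketch below only illustrates the order of the steps: `estimate_segmentation` and the three toy stand-in functions are hypothetical and do not represent the trained models PM1 and PM2.

```python
def estimate_segmentation(points, complete, select_features, segment):
    """Run the three estimation-phase steps in order.

    complete:        stands in for the first estimation model (completion)
    select_features: stands in for the second feature selection step
    segment:         stands in for the second estimation model (segmentation)
    """
    completed = complete(points)
    features = select_features(completed)
    labels = segment(completed, features)
    return {"points": completed, "labels": labels}


# Toy stand-ins (hypothetical), used only to show the data flow.
def toy_complete(points):
    """'Fill in' a missing half by mirroring points across the plane x = 0."""
    mirrored = [(-x, y, z) for (x, y, z) in points if x != 0]
    return list(points) + mirrored


def toy_select(points):
    """Pretend that no features are available for any point."""
    return [None for _ in points]


def toy_segment(points, features):
    """Label points by height: class 0 below z = 1.0, class 1 otherwise."""
    return [0 if z < 1.0 else 1 for (_, _, z) in points]
```

The returned dictionary pairs each completed point with its label, which corresponds loosely to the segmentation data that is stored and provided externally.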

- FIG. 9 is a diagram for explaining an example of the flow of data related to the network in the estimation phase.

- three-dimensional point cloud data PCD2 is acquired by at least one of the acquisition unit 21 and the point cloud data generation unit 22.

- the three-dimensional point cloud data BPCD2 from which attribute data such as RGB values and normal values have been removed is input to the first estimation model PM1 (also referred to as the complementation network in FIG. 9).

- the first estimation model PM1 outputs the complemented three-dimensional point cloud data IPCD2.

- the complemented three-dimensional point cloud data IPCD2 is input to the second estimation model PM2 (also referred to as the semantic segmentation network in FIG. 9) together with the second feature F2 (referred to as the second feature data F2 in FIG. 9).

- the second feature F2 is the second feature F2 selected by the second feature selection unit 152 described above.

- the second feature F2 is input to the second estimation model PM2 in the form of a mask feature, for example.

- the mask feature refers to a data group in which the data points at which the second feature F2 selected by the second feature selection unit 152 exists have the value of the feature, and the other data points are assigned a null feature (a predetermined value such as 0, 255, -1, etc.).

- the second estimation model PM2 outputs estimated labels PL2 for the segmentation of each data point of the interpolated 3D point cloud data IPCD2.

- in the estimation phase, input data is acquired, three-dimensional point cloud data is generated from the input data, and complemented point cloud data corresponding to the generated three-dimensional point cloud data and segmentation labels related to the complemented point cloud data are estimated using an estimation model that takes point cloud data as input and outputs complemented point cloud data and segmentation labels related to the complemented point cloud data, the estimation model having been machine-learned by referring to three-dimensional point cloud data and three-dimensional thinned point cloud data obtained by applying a thinning process to the three-dimensional point cloud data.

- in the learning phase, the estimation model is trained by referring to three-dimensional thinned point cloud data generated by applying a thinning process to three-dimensional point cloud data. Then, in the estimation phase, the machine-learned estimation model is used to estimate complemented point cloud data corresponding to the three-dimensional point cloud data generated in the point cloud data generation process and segmentation labels related to the complemented point cloud data. Therefore, with the above configuration, it is possible to perform estimation processing using a highly accurate estimation model while suppressing increases in costs.

- the estimation model PM is trained by further referring to a first feature associated with at least one of the input data, the three-dimensional point cloud data, and the three-dimensional thinned point cloud data, and in the estimation phase, estimation processing is performed using the estimation model PM by further referring to a second feature associated with at least one of the input data and the three-dimensional point cloud data. Therefore, with the above configuration, it is possible to perform estimation processing using a more accurate estimation model while suppressing increases in costs.

- the estimation model PM may include, for example, a first network and a second network.

- a configuration may be adopted in which the first network is branched off from a second network, the above-mentioned loss value CE_Loss (or BCE_Loss) is calculated by referring to the output of the first network, the above-mentioned loss value BCE_Loss (or CE_Loss) is calculated by referring to the output of the second network, and the parameters of the first network and the second network are updated by referring to a total loss value including these loss values.

- Fig. 10 is a block diagram showing the configuration of the information processing device 1B. As shown in Fig. 10, the information processing device 1B includes a projection unit 16 in addition to the units included in the information processing device 1A according to the second exemplary embodiment.

- the storage unit 20B of the information processing device 1B stores projected point cloud data PPCD (also referred to as post-projection point cloud data PPCD) in addition to the data stored in the storage unit 20A of the information processing device 1A according to the second exemplary embodiment.

- the following explanation will focus on the differences from the information processing device 1A.

- the projection unit 16 generates projected point cloud data PPCD by projecting the complemented point cloud data generated by the estimation model PM onto a two-dimensional plane.

- the two-dimensional plane is, for example, a two-dimensional plane corresponding to a projection onto a camera position (viewpoint) that was not used when the thinned point cloud data generation unit 13 generated the three-dimensional thinned point cloud data TPCD in the learning phase.

- the projection unit 16 may be expressed as generating the projected point cloud data PPCD by projecting the complemented point cloud data generated by the estimation model PM from a camera position (viewpoint) that was not used when the thinned point cloud data generation unit 13 generated the three-dimensional thinned point cloud data TPCD in the learning phase.

- the projection unit 16 may project the complemented point cloud data generated by the estimation model PM to a position (viewpoint) different from the position (viewpoint) of an imaging device (including LiDAR) at the time the imaging device acquired at least one of the RGB values, depth values, and three-dimensional point cloud data included in the input data IND.

- this may also be expressed as a configuration in which the projection unit 16 generates the projected point cloud data PPCD by projecting the complemented point cloud data generated by the estimation model PM to a viewpoint different from the viewpoint for at least any of the RGB values, depth values, and three-dimensional point cloud data contained in the input data IND that the point cloud data generation unit 12 (22) refers to when generating the three-dimensional point cloud data PCD.
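
Projecting the complemented point cloud from a chosen viewpoint onto a two-dimensional plane can be illustrated with a simple pinhole model. The source does not state which camera model the projection unit 16 uses, so the pinhole model, the camera looking along the +z axis with no rotation, and the names `project_points` and `focal_length` are all assumptions.

```python
def project_points(points, camera_position, focal_length=1.0):
    """Project 3D points onto the image plane of a pinhole camera.

    The camera sits at camera_position and looks along +z without rotation
    (an assumption made to keep the sketch short). Points at or behind the
    camera are skipped.
    """
    cx, cy, cz = camera_position
    projected = []
    for x, y, z in points:
        depth = z - cz
        if depth <= 0:
            continue  # not visible from this viewpoint
        u = focal_length * (x - cx) / depth
        v = focal_length * (y - cy) / depth
        projected.append((u, v))
    return projected
```

Changing `camera_position` to a viewpoint that was not used during thinning yields a different two-dimensional view of the same complemented points, which is the situation the text describes.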

- the learning unit 14 learns the estimation model by further referring to the projected point cloud data PPCD and the segmentation ground truth label GTL corresponding to the projected point cloud data.

- the learning unit 14 calculates a third loss value (third loss function) CE_2D_Loss indicating the difference between the estimated label estimated by the estimation model PM for the projected point cloud data PPCD and the segmentation correct label GTL acquired by the acquisition unit 11.

- the two weighting coefficients in the total loss value can be set appropriately.

- the third loss value CE_2D_Loss may be calculated by the projection unit 16.

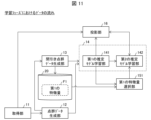

- FIG. 11 is a diagram for explaining an example of the data flow in the learning phase of the information processing device 1B.

- the segmentation correct label GTL is supplied from the acquisition unit 11 to the projection unit 16.

- the first estimation model learning unit 141 supplies, to the projection unit 16, the complemented three-dimensional point cloud data IPCD generated by the first estimation model PM1.

- the second estimation model learning unit 142 supplies, to the projection unit 16, the estimated labels PL, which are estimated by the second estimation model PM2 and relate to each data point of the three-dimensional point cloud data PPCD after projection by the projection unit 16.

- the projection unit 16 calculates a third loss value CE_2D_Loss indicating the difference between the segmentation ground truth label GTL and the estimated label PL, and supplies it to the learning unit 14.

- the learning unit 14 calculates a total loss value (Loss_total) using the linear sum of the first loss value BCE_Loss, the second loss value CE_Loss, and the third loss value CE_2D_Loss, as described above.

- the learning unit 14 updates the parameters of the first estimation model PM1 and the second estimation model PM2 so that the value of the total loss value (Loss_total) becomes smaller.
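
The linear sum of the three loss values can be written as a small function. Which terms carry weighting coefficients, and their default values, are assumptions made for this sketch; the names `w_ce` and `w_2d` are hypothetical.

```python
def total_loss(bce_loss, ce_loss, ce_2d_loss, w_ce=1.0, w_2d=1.0):
    """Linear sum of the three loss values.

    w_ce and w_2d are hypothetical weighting coefficients; placing them on
    the second and third terms is an assumption, not taken from the source.
    """
    return bce_loss + w_ce * ce_loss + w_2d * ce_2d_loss
```

Raising `w_2d` makes the training pay more attention to label agreement in the projected two-dimensional view relative to the other two terms.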

- in the information processing device 1B, the complemented point cloud data IPCD generated by the estimation model PM is projected onto a two-dimensional plane to generate projected point cloud data PPCD, and the learning unit 14 is configured to learn the estimation model PM by further referring to the projected point cloud data PPCD and the segmentation ground truth label GTL corresponding to the projected point cloud data PPCD.

- a more suitable loss function can be configured by referring to the projected point cloud data PPCD, so that an estimation model PM with higher estimation accuracy can be generated in the learning phase.

- highly accurate estimation can be performed using such an estimation model PM.

- a fourth exemplary embodiment which is an example of an embodiment of the present invention, will be described in detail with reference to the drawings. Components having the same functions as those described in the above exemplary embodiment will be given the same reference numerals, and their description will be omitted as appropriate.

- the scope of application of each technical means adopted in this exemplary embodiment is not limited to this exemplary embodiment. That is, each technical means adopted in this exemplary embodiment can be adopted in other exemplary embodiments included in this disclosure to the extent that no particular technical hindrance occurs. In addition, each technical means shown in each drawing referred to for explaining this exemplary embodiment can be adopted in other exemplary embodiments included in this disclosure to the extent that no particular technical hindrance occurs.

- Fig. 12 is a block diagram showing the configuration of the information processing device 1C.

- a control unit 10C included in the information processing device 1C has the same configuration as the control unit 10A included in the information processing device 1A.

- a storage unit 20C included in the information processing device 1C stores thinning information TI in addition to the data stored in the storage unit 20A included in the information processing device 1A according to the second exemplary embodiment.

- the thinning information TI here is information that indicates what type of thinning process was performed when the thinned point cloud data generation unit 13 executed the thinning process. The following explanation will focus on the points that are different from the information processing device 1A.

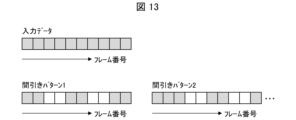

- Fig. 13 is a diagram for explaining an example of the thinning information TI.

- the upper part of FIG. 13 shows a schematic representation of multiple frames included in the input data IND acquired by the acquisition unit 11 during the learning phase.

- the frame numbers increase as you move to the right, for example.

- the lower left side of Fig. 13 shows thinning pattern 1 in the thinning process by the thinned point cloud data generation unit 13.

- the shaded frames are frames that are referenced by the thinned point cloud data generation unit 13 when generating the three-dimensional thinned point cloud data TPCD, and the non-shaded frames are frames that are not referenced (that is, frames that are thinned out) when generating the three-dimensional thinned point cloud data TPCD.

- in thinning pattern 1, within a certain run of 10 consecutive frames, two consecutive frames are referenced, the next two consecutive frames are thinned out, the next two consecutive frames are referenced, the next two consecutive frames are thinned out, and the next two consecutive frames are referenced.

- the lower right side of Fig. 13 shows thinning pattern 2 in the thinning process by the thinned point cloud data generating unit 13.

- in thinning pattern 2, within a certain run of 10 consecutive frames, three consecutive frames are referenced, the next two consecutive frames are thinned out, the next two consecutive frames are referenced, the next two consecutive frames are thinned out, and the next frame is referenced.

- the thinned point cloud data generation unit 13 stores, as an example, a frame index indicating the thinned frame, as thinning information TI in the storage unit 20C. By referencing such thinning information TI, the thinned point cloud data generation unit 13 generates multiple mutually different three-dimensional thinned point cloud data TPCD using multiple thinning patterns for the same input data IND or the same three-dimensional point cloud data PCD.

- by storing the indices of the thinned frames when the thinned point cloud data generating unit 13 generates the three-dimensional thinned point cloud data TPCD, the information processing device 1C can create multiple three-dimensional thinned point cloud data TPCD with different thinning methods from a single data input, which augments the data used for training in the learning phase.
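
The two thinning patterns and the stored frame indices can be sketched as follows. Encoding each pattern as a list of ten booleans, repeating the pattern cyclically for longer sequences, and the function name `thin_frames` are assumptions for illustration; the returned list of thinned indices plays the role of the thinning information TI.

```python
# True = frame is referenced, False = frame is thinned out.
# Pattern 1: 2 referenced, 2 thinned, 2 referenced, 2 thinned, 2 referenced.
PATTERN_1 = [True, True, False, False, True, True, False, False, True, True]
# Pattern 2: 3 referenced, 2 thinned, 2 referenced, 2 thinned, 1 referenced.
PATTERN_2 = [True, True, True, False, False, True, True, False, False, True]


def thin_frames(num_frames, pattern):
    """Split frame indices into referenced and thinned-out frames.

    The pattern is repeated cyclically when num_frames exceeds its length
    (an assumption). Returns (kept, thinned); the thinned list corresponds
    to the frame indices stored as thinning information.
    """
    kept, thinned = [], []
    for i in range(num_frames):
        (kept if pattern[i % len(pattern)] else thinned).append(i)
    return kept, thinned
```

Applying both patterns to the same ten frames gives two different thinned data sets from a single input, for example frames `[0, 1, 4, 5, 8, 9]` for pattern 1 and `[0, 1, 2, 5, 6, 9]` for pattern 2.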

- a fifth exemplary embodiment which is an example of an embodiment of the present invention, will be described in detail with reference to the drawings. Components having the same functions as those described in the above exemplary embodiment will be given the same reference numerals, and their description will be omitted as appropriate.

- the scope of application of each technical means adopted in this exemplary embodiment is not limited to this exemplary embodiment. That is, each technical means adopted in this exemplary embodiment can be adopted in other exemplary embodiments included in this disclosure, as long as no particular technical hindrance occurs. In addition, each technical means shown in each drawing referred to for explaining this exemplary embodiment can be adopted in other exemplary embodiments included in this disclosure, as long as no particular technical hindrance occurs.

- Fig. 14 is a block diagram showing the configuration of the information processing device 1D.

- the information processing device 1D has the following configurations (or data) among the units included in the information processing devices 1A, 1B, and 1C according to exemplary embodiments 2 to 4, and other configurations are not essential.

- In the control unit 10D: an acquisition unit 21, a point cloud data generation unit 22, an estimation unit 23, and a second feature selection unit 152.

- As data: input data IND, three-dimensional point cloud data PCD, a second feature F2, an estimation model PM, and segmentation data SG.

- the information processing device 1D has a configuration for performing processing of the estimation phase using the estimation model PM, but does not have a configuration for learning the estimation model PM.

- the estimation model PM used by the information processing device 1D for the estimation processing may be the estimation model PM learned by the information processing devices 1A, 1B, and 1C according to exemplary embodiments 2 to 4.

- the information processing device 1D configured as described above uses the estimation model PM, which has been machine-learned with reference to three-dimensional thinned point cloud data generated by applying a thinning process to three-dimensional point cloud data, to estimate complemented point cloud data corresponding to the three-dimensional point cloud data generated in the point cloud data generation process and segmentation labels related to the complemented point cloud data.

- This makes it possible to perform estimation processing using a highly accurate estimation model while suppressing increases in costs. Therefore, with the above configuration, it is possible to provide a highly accurate segmentation technology while suppressing increases in costs.
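
- The estimation-only flow of the information processing device 1D can be pictured with the following Python sketch. The class name, the callable signatures, the one-label-per-point check, and the toy stand-ins are all assumptions; the text does not specify the point cloud generation or the trained estimation model PM at this level of detail.

```python
from dataclasses import dataclass
from typing import Callable, List, Tuple

Point = Tuple[float, float, float]
# Assumed model interface: point cloud in, (complemented cloud, labels) out.
Model = Callable[[List[Point]], Tuple[List[Point], List[int]]]


@dataclass
class EstimationOnlyDevice:
    """Hypothetical stand-in for device 1D: it holds no training components."""

    generate_point_cloud: Callable[[object], List[Point]]  # role of unit 22
    estimation_model: Model  # role of the already-trained estimation model PM

    def estimate(self, input_data: object) -> Tuple[List[Point], List[int]]:
        pcd = self.generate_point_cloud(input_data)
        complemented, labels = self.estimation_model(pcd)
        # Assumption: the model returns one segmentation label per point.
        if len(complemented) != len(labels):
            raise ValueError("expected one segmentation label per point")
        return complemented, labels


def toy_point_cloud(input_data) -> List[Point]:
    """Toy placeholder: place each input value on the x axis."""
    return [(float(x), 0.0, 0.0) for x in input_data]


def toy_model(pcd: List[Point]) -> Tuple[List[Point], List[int]]:
    """Toy placeholder for PM: insert midpoints between neighbours, label all 0."""
    out: List[Point] = []
    for a, b in zip(pcd, pcd[1:]):
        out.append(a)
        out.append(tuple((p + q) / 2 for p, q in zip(a, b)))
    out.extend(pcd[-1:])
    return out, [0] * len(out)
```

- In practice the two toy functions would be replaced by the actual point cloud generation and by an estimation model trained in the learning phase of another device.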

- the information processing devices 1, 1A, 1B, 1C, and 1D (hereinafter simply referred to as information processing device 1, etc.) according to the exemplary embodiments described above can also be applied to the medical and healthcare fields, for example.

- the technology of the present application can be used for medical applications by performing segmentation based on medical images of patients.

- processing may be performed according to the processing flow shown below.

- Step S101 Scan step

- a medical professional such as a doctor or medical staff uses an imaging device (endoscope, fMRI, etc.) to capture images of a patient's organs (stomach, intestines, etc.) and generate a medical image. Then, the medical image is input to an information processing device 1, etc. as input data IND.

- Step S102 3D modeling step

- the point cloud data generating unit 12 (22) included in the information processing device 1 or the like refers to the input data IND and generates three-dimensional point cloud data PCD corresponding to the input data IND.

- the three-dimensional point cloud data PCD generated by the point cloud data generating unit 12 (22) may be configured to be presented to medical professionals, medical staff, patients, etc. via a display or the like included in the input/output unit 40, as an example. Note that this step can be applied to both the learning phase and the estimation phase.

- Step S103A decision making step

- the estimation unit 23 included in the information processing device 1 or the like performs semantic segmentation on the medical image using the machine-learned estimation model PM, and outputs the segmentation result.

- the semantic segmentation performs segmentation (area classification) into, for example, lesion areas (inflammation, ulcer, polyp), normal areas, sites, and the like. This allows medical personnel to, for example, make a treatment plan. Therefore, the present device can support medical personnel in making diagnostic decisions.

- the acquisition unit 11 (21) of the information processing device 1 or the like acquires medical images as the input data IND.

- the estimation unit 23 functions as a presentation means for presenting the segmentation results to assist medical professionals in making decisions.
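
- One simple way to present a segmentation result is as per-class proportions, as in the following Python sketch. The label ids in `CLASS_NAMES` and the function name are assumptions; the class names follow the examples given above (lesion areas such as inflammation, ulcer, and polyp, and normal areas).

```python
from collections import Counter
from typing import Dict, Sequence

# Assumed label ids; the disclosure does not define a numeric encoding.
CLASS_NAMES = {0: "normal", 1: "inflammation", 2: "ulcer", 3: "polyp"}


def summarize_segmentation(labels: Sequence[int]) -> Dict[str, float]:
    """Return the fraction of points assigned to each class name."""
    if not labels:
        return {}
    counts = Counter(labels)
    total = len(labels)
    return {
        CLASS_NAMES.get(label, f"class_{label}"): counts[label] / total
        for label in sorted(counts)
    }
```

- A summary of this kind could be shown alongside the segmented point cloud on the display of the input/output unit 40.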

- each of the above devices is realized, for example, by a computer that executes instructions of a program, which is software that realizes each function.

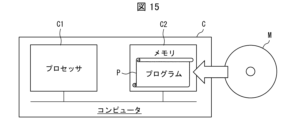

- An example of such a computer (hereinafter referred to as computer C) is shown in FIG. 15.

- FIG. 15 is a block diagram showing the hardware configuration of computer C that functions as each of the above devices.

- Computer C has at least one processor C1 and at least one memory C2.

- Memory C2 stores a program P for operating computer C as each of the above devices.

- processor C1 reads and executes program P from memory C2, thereby realizing each function of each of the above devices.

- the processor C1 may be, for example, a CPU (Central Processing Unit), GPU (Graphics Processing Unit), DSP (Digital Signal Processor), MPU (Micro Processing Unit), FPU (Floating-Point Unit), PPU (Physics Processing Unit), TPU (Tensor Processing Unit), quantum processor, microcontroller, or a combination of these.

- the memory C2 may be, for example, a flash memory, HDD (Hard Disk Drive), SSD (Solid State Drive), or a combination of these.

- the program P can also be recorded on a non-transitory, tangible recording medium M that can be read by the computer C.

- a recording medium M can be, for example, a tape, a disk, a card, a semiconductor memory, or a programmable logic circuit.

- the computer C can obtain the program P via such a recording medium M.

- the program P can also be transmitted via a transmission medium.

- a transmission medium can be, for example, a communications network or broadcast waves.

- the computer C can also obtain the program P via such a transmission medium.

- the information processing device according to Appendix A1, wherein the thinning process by the thinned point cloud data generating means includes a process of generating the three-dimensional thinned point cloud data using only a portion of a plurality of frames included in the input data.

- the estimation model includes: a first estimation model that receives point cloud data as input and outputs complemented point cloud data; and a second estimation model that receives at least the complemented point cloud data and outputs a segmentation label related to the complemented point cloud data.

- Appendix A6 The information processing device according to any one of Appendices A1 to A5, further comprising a projection unit for projecting the complemented point cloud data generated by the estimation model onto a two-dimensional surface to generate projected point cloud data, wherein the learning means further refers to the projected point cloud data and a segmentation answer label corresponding to the projected point cloud data to train the estimation model.

- Appendix A7 The information processing device according to any one of appendices A1 to A6, wherein the thinned point cloud data generation means generates a plurality of mutually different three-dimensional thinned point cloud data for the same input data or the same three-dimensional point cloud data.

- An information processing device comprising: an estimation means for estimating, using an estimation model trained by machine learning with reference to three-dimensional point cloud data and three-dimensional thinned point cloud data obtained by applying a thinning process to the three-dimensional point cloud data, the complemented point cloud data corresponding to the three-dimensional point cloud data generated by the point cloud data generation means and the segmentation label related to the complemented point cloud data, the estimation model having point cloud data as input and complemented point cloud data and segmentation labels related to the complemented point cloud data as output.

- the information processing device according to claim 9, wherein the acquisition means acquires a medical image as the input data, and the presentation means presents the segmentation result to assist a medical professional in making a decision.

- Appendix A11 The information processing device according to any one of Appendices A8 to A10, wherein the estimation means further refers to features accompanying the three-dimensional point cloud data generated by the point cloud data generation means, and estimates complemented point cloud data corresponding to the three-dimensional point cloud data generated by the point cloud data generation means and a segmentation label related to the complemented point cloud data.

- the estimation model includes: a first estimation model that receives point cloud data as input and outputs complemented point cloud data; and a second estimation model that receives at least the complemented point cloud data and outputs a segmentation label related to the complemented point cloud data.

- Appendix B1 An information processing method including: an acquisition step of acquiring input data; a point cloud data generation step of generating three-dimensional point cloud data from the input data; a thinned point cloud data generation step of generating three-dimensional thinned point cloud data by applying a thinning process to at least one of the input data and the three-dimensional point cloud data; and a learning step of training an estimation model that receives point cloud data as input and outputs complemented point cloud data and segmentation labels related to the complemented point cloud data, by referring to the three-dimensional point cloud data and the three-dimensional thinned point cloud data.

- the information processing method according to Appendix B1, wherein the thinning process in the thinned point cloud data generation step includes a process of generating the three-dimensional thinned point cloud data using only a portion of a plurality of frames included in the input data.

- the information processing method according to Appendix B1 or B2, wherein the thinning process in the thinned point cloud data generation step includes a process of generating the three-dimensional thinned point cloud data using only a portion of the multiple data points included in at least one of the input data and the three-dimensional point cloud data.

- the information processing method according to claim 1 or 2, wherein the estimation model includes: a first estimation model that receives point cloud data as input and outputs complemented point cloud data; and a second estimation model that receives at least the complemented point cloud data and outputs a segmentation label related to the complemented point cloud data.

- the information processing method according to any one of Appendices B1 to B5, further including a projection step of generating projected point cloud data by projecting the complemented point cloud data generated by the estimation model onto a two-dimensional surface, wherein the learning step further refers to the projected point cloud data and a segmentation answer label corresponding to the projected point cloud data to train the estimation model.

- Appendix B7 The information processing method according to any one of appendices B1 to B6, wherein the thinned point cloud data generation step generates a plurality of mutually different three-dimensional thinned point cloud data for the same input data or the same three-dimensional point cloud data.

- An information processing method comprising: an estimation step of estimating, using an estimation model that has been machine-learned by referring to three-dimensional point cloud data and three-dimensional thinned point cloud data obtained by applying a thinning process to the three-dimensional point cloud data, the complemented point cloud data corresponding to the three-dimensional point cloud data generated in the point cloud data generation step and the segmentation label related to the complemented point cloud data, the estimation model having point cloud data as input and complemented point cloud data and segmentation labels related to the complemented point cloud data as output.

- the acquiring step acquires a medical image as the input data;

- Appendix B11 The information processing method according to any one of Appendices B8 to B10, wherein the estimation step further refers to features associated with the three-dimensional point cloud data generated by the point cloud data generation step, and estimates complemented point cloud data corresponding to the three-dimensional point cloud data generated by the point cloud data generation step and a segmentation label related to the complemented point cloud data.

- the estimation model includes: a first estimation model that receives point cloud data as input and outputs complemented point cloud data; and a second estimation model that receives at least the complemented point cloud data and outputs a segmentation label related to the complemented point cloud data.

- (Appendix C1) An information processing program for causing a computer to function as an information processing device, the program causing the computer to function as: an acquisition means for acquiring input data; a point cloud data generating means for generating three-dimensional point cloud data from the input data; a thinned point cloud data generating means for generating three-dimensional thinned point cloud data by applying a thinning process to at least one of the input data and the three-dimensional point cloud data; and a learning means for training an estimation model that receives point cloud data as input and outputs complemented point cloud data and segmentation labels related to the complemented point cloud data, by referring to the three-dimensional point cloud data and the three-dimensional thinned point cloud data.