WO2023279862A1 - 图像处理方法、装置和电子设备 - Google Patents

图像处理方法、装置和电子设备 Download PDFInfo

- Publication number

- WO2023279862A1 WO2023279862A1 PCT/CN2022/093914 CN2022093914W WO2023279862A1 WO 2023279862 A1 WO2023279862 A1 WO 2023279862A1 CN 2022093914 W CN2022093914 W CN 2022093914W WO 2023279862 A1 WO2023279862 A1 WO 2023279862A1

- Authority

- WO

- WIPO (PCT)

- Prior art keywords

- image

- field

- view

- images

- frame

- Prior art date

Links

- 238000003672 processing method Methods 0.000 title claims abstract description 55

- 238000012545 processing Methods 0.000 claims abstract description 275

- 238000000034 method Methods 0.000 claims abstract description 119

- 230000004927 fusion Effects 0.000 claims abstract description 56

- 238000013507 mapping Methods 0.000 claims abstract description 54

- 238000013135 deep learning Methods 0.000 claims abstract description 44

- 230000008569 process Effects 0.000 claims description 54

- 230000035945 sensitivity Effects 0.000 claims description 50

- 238000012937 correction Methods 0.000 claims description 28

- 230000009467 reduction Effects 0.000 claims description 25

- 238000003860 storage Methods 0.000 claims description 21

- 238000004590 computer program Methods 0.000 claims description 18

- 230000009466 transformation Effects 0.000 claims description 16

- 230000011218 segmentation Effects 0.000 claims description 15

- 238000006243 chemical reaction Methods 0.000 claims description 13

- 238000007499 fusion processing Methods 0.000 claims description 12

- 238000010586 diagram Methods 0.000 description 26

- 230000006870 function Effects 0.000 description 25

- 238000004891 communication Methods 0.000 description 21

- 230000000694 effects Effects 0.000 description 11

- 206010034972 Photosensitivity reaction Diseases 0.000 description 10

- 210000000988 bone and bone Anatomy 0.000 description 10

- 238000010295 mobile communication Methods 0.000 description 10

- 230000036211 photosensitivity Effects 0.000 description 10

- 230000009977 dual effect Effects 0.000 description 8

- 238000005516 engineering process Methods 0.000 description 8

- 238000003384 imaging method Methods 0.000 description 7

- 238000007726 management method Methods 0.000 description 7

- 239000004065 semiconductor Substances 0.000 description 7

- 230000000007 visual effect Effects 0.000 description 7

- 238000004364 calculation method Methods 0.000 description 6

- 238000009825 accumulation Methods 0.000 description 5

- 238000001514 detection method Methods 0.000 description 5

- 238000011282 treatment Methods 0.000 description 5

- 229920001621 AMOLED Polymers 0.000 description 4

- 238000013528 artificial neural network Methods 0.000 description 4

- 239000003086 colorant Substances 0.000 description 4

- 230000000295 complement effect Effects 0.000 description 4

- 230000001133 acceleration Effects 0.000 description 3

- 230000008859 change Effects 0.000 description 3

- 238000001914 filtration Methods 0.000 description 3

- 229910044991 metal oxide Inorganic materials 0.000 description 3

- 150000004706 metal oxides Chemical class 0.000 description 3

- 230000003190 augmentative effect Effects 0.000 description 2

- 238000010009 beating Methods 0.000 description 2

- 230000006399 behavior Effects 0.000 description 2

- 230000009286 beneficial effect Effects 0.000 description 2

- 230000005540 biological transmission Effects 0.000 description 2

- 230000036772 blood pressure Effects 0.000 description 2

- 238000005282 brightening Methods 0.000 description 2

- 238000013500 data storage Methods 0.000 description 2

- 239000010985 leather Substances 0.000 description 2

- 230000007774 longterm Effects 0.000 description 2

- 238000012986 modification Methods 0.000 description 2

- 230000004048 modification Effects 0.000 description 2

- 230000003287 optical effect Effects 0.000 description 2

- 230000002093 peripheral effect Effects 0.000 description 2

- 239000002096 quantum dot Substances 0.000 description 2

- 230000005855 radiation Effects 0.000 description 2

- 239000007787 solid Substances 0.000 description 2

- 239000000758 substrate Substances 0.000 description 2

- 241001270131 Agaricus moelleri Species 0.000 description 1

- 206010034960 Photophobia Diseases 0.000 description 1

- 230000002159 abnormal effect Effects 0.000 description 1

- 230000009471 action Effects 0.000 description 1

- 238000003491 array Methods 0.000 description 1

- 230000000712 assembly Effects 0.000 description 1

- 238000000429 assembly Methods 0.000 description 1

- 230000003416 augmentation Effects 0.000 description 1

- 230000002146 bilateral effect Effects 0.000 description 1

- 238000004422 calculation algorithm Methods 0.000 description 1

- 239000003990 capacitor Substances 0.000 description 1

- 230000008094 contradictory effect Effects 0.000 description 1

- 230000003247 decreasing effect Effects 0.000 description 1

- 238000013461 design Methods 0.000 description 1

- 238000005538 encapsulation Methods 0.000 description 1

- 230000005484 gravity Effects 0.000 description 1

- 238000005286 illumination Methods 0.000 description 1

- 230000003993 interaction Effects 0.000 description 1

- 208000013469 light sensitivity Diseases 0.000 description 1

- 239000004973 liquid crystal related substance Substances 0.000 description 1

- 238000004519 manufacturing process Methods 0.000 description 1

- 239000000463 material Substances 0.000 description 1

- 239000011159 matrix material Substances 0.000 description 1

- 238000005259 measurement Methods 0.000 description 1

- 239000013307 optical fiber Substances 0.000 description 1

- 230000008447 perception Effects 0.000 description 1

- 238000011946 reduction process Methods 0.000 description 1

- 238000009877 rendering Methods 0.000 description 1

- 230000008439 repair process Effects 0.000 description 1

- 230000004044 response Effects 0.000 description 1

- 230000006641 stabilisation Effects 0.000 description 1

- 238000011105 stabilization Methods 0.000 description 1

- 238000011269 treatment regimen Methods 0.000 description 1

- 230000001960 triggered effect Effects 0.000 description 1

- 230000001755 vocal effect Effects 0.000 description 1

Images

Classifications

-

- G—PHYSICS

- G06—COMPUTING; CALCULATING OR COUNTING

- G06T—IMAGE DATA PROCESSING OR GENERATION, IN GENERAL

- G06T5/00—Image enhancement or restoration

- G06T5/50—Image enhancement or restoration using two or more images, e.g. averaging or subtraction

-

- G—PHYSICS

- G06—COMPUTING; CALCULATING OR COUNTING

- G06T—IMAGE DATA PROCESSING OR GENERATION, IN GENERAL

- G06T3/00—Geometric image transformations in the plane of the image

- G06T3/40—Scaling of whole images or parts thereof, e.g. expanding or contracting

- G06T3/4015—Image demosaicing, e.g. colour filter arrays [CFA] or Bayer patterns

-

- G—PHYSICS

- G06—COMPUTING; CALCULATING OR COUNTING

- G06T—IMAGE DATA PROCESSING OR GENERATION, IN GENERAL

- G06T3/00—Geometric image transformations in the plane of the image

- G06T3/40—Scaling of whole images or parts thereof, e.g. expanding or contracting

- G06T3/4046—Scaling of whole images or parts thereof, e.g. expanding or contracting using neural networks

-

- G—PHYSICS

- G06—COMPUTING; CALCULATING OR COUNTING

- G06T—IMAGE DATA PROCESSING OR GENERATION, IN GENERAL

- G06T5/00—Image enhancement or restoration

- G06T5/60—Image enhancement or restoration using machine learning, e.g. neural networks

-

- G—PHYSICS

- G06—COMPUTING; CALCULATING OR COUNTING

- G06T—IMAGE DATA PROCESSING OR GENERATION, IN GENERAL

- G06T5/00—Image enhancement or restoration

- G06T5/70—Denoising; Smoothing

-

- G—PHYSICS

- G06—COMPUTING; CALCULATING OR COUNTING

- G06T—IMAGE DATA PROCESSING OR GENERATION, IN GENERAL

- G06T5/00—Image enhancement or restoration

- G06T5/90—Dynamic range modification of images or parts thereof

-

- G—PHYSICS

- G06—COMPUTING; CALCULATING OR COUNTING

- G06T—IMAGE DATA PROCESSING OR GENERATION, IN GENERAL

- G06T7/00—Image analysis

- G06T7/30—Determination of transform parameters for the alignment of images, i.e. image registration

-

- G—PHYSICS

- G06—COMPUTING; CALCULATING OR COUNTING

- G06V—IMAGE OR VIDEO RECOGNITION OR UNDERSTANDING

- G06V10/00—Arrangements for image or video recognition or understanding

- G06V10/40—Extraction of image or video features

- G06V10/56—Extraction of image or video features relating to colour

-

- G—PHYSICS

- G06—COMPUTING; CALCULATING OR COUNTING

- G06V—IMAGE OR VIDEO RECOGNITION OR UNDERSTANDING

- G06V10/00—Arrangements for image or video recognition or understanding

- G06V10/40—Extraction of image or video features

- G06V10/60—Extraction of image or video features relating to illumination properties, e.g. using a reflectance or lighting model

-

- H—ELECTRICITY

- H04—ELECTRIC COMMUNICATION TECHNIQUE

- H04N—PICTORIAL COMMUNICATION, e.g. TELEVISION

- H04N9/00—Details of colour television systems

- H04N9/64—Circuits for processing colour signals

- H04N9/73—Colour balance circuits, e.g. white balance circuits or colour temperature control

-

- G—PHYSICS

- G06—COMPUTING; CALCULATING OR COUNTING

- G06T—IMAGE DATA PROCESSING OR GENERATION, IN GENERAL

- G06T2207/00—Indexing scheme for image analysis or image enhancement

- G06T2207/10—Image acquisition modality

- G06T2207/10016—Video; Image sequence

-

- G—PHYSICS

- G06—COMPUTING; CALCULATING OR COUNTING

- G06T—IMAGE DATA PROCESSING OR GENERATION, IN GENERAL

- G06T2207/00—Indexing scheme for image analysis or image enhancement

- G06T2207/10—Image acquisition modality

- G06T2207/10024—Color image

-

- G—PHYSICS

- G06—COMPUTING; CALCULATING OR COUNTING

- G06T—IMAGE DATA PROCESSING OR GENERATION, IN GENERAL

- G06T2207/00—Indexing scheme for image analysis or image enhancement

- G06T2207/10—Image acquisition modality

- G06T2207/10141—Special mode during image acquisition

- G06T2207/10144—Varying exposure

-

- G—PHYSICS

- G06—COMPUTING; CALCULATING OR COUNTING

- G06T—IMAGE DATA PROCESSING OR GENERATION, IN GENERAL

- G06T2207/00—Indexing scheme for image analysis or image enhancement

- G06T2207/20—Special algorithmic details

- G06T2207/20081—Training; Learning

-

- G—PHYSICS

- G06—COMPUTING; CALCULATING OR COUNTING

- G06T—IMAGE DATA PROCESSING OR GENERATION, IN GENERAL

- G06T2207/00—Indexing scheme for image analysis or image enhancement

- G06T2207/20—Special algorithmic details

- G06T2207/20084—Artificial neural networks [ANN]

-

- G—PHYSICS

- G06—COMPUTING; CALCULATING OR COUNTING

- G06T—IMAGE DATA PROCESSING OR GENERATION, IN GENERAL

- G06T2207/00—Indexing scheme for image analysis or image enhancement

- G06T2207/20—Special algorithmic details

- G06T2207/20212—Image combination

- G06T2207/20221—Image fusion; Image merging

Definitions

- the present application relates to the field of image processing, in particular to an image processing method, device and electronic equipment.

- the mobile phone is configured with two cameras, one is a main camera, and the other is a wide-angle camera or a telephoto camera.

- the field of view of the wide-angle camera is larger than that of the main camera, which is suitable for close-up shooting

- the field of view of the telephoto camera is smaller than that of the main camera, which is suitable for long-range shooting.

- the fused image will have poor stereoscopic effect and poor quality because the field of view angles of the two cameras do not match. poor.

- the two images obtained by a mobile phone using such a dual camera there are parts where the field of view overlaps, and there are also parts where the field of view does not overlap. If the two images are directly fused, then the part of the final captured image where the field of view angles overlap has high definition, and the part that does not overlap has low definition, so that the captured image will have inconsistencies in the definition of the central part and surrounding parts.

- the problem is that there will be a fusion boundary on the image, which will affect the imaging effect.

- the application provides an image processing method, device and electronic equipment, by adding a reference coordinate layer to one of the two images with different viewing angles, and then inputting it into the deep learning network model for processing to obtain the corresponding image to improve shooting image quality.

- an image processing method comprising:

- the multiple frames of original images are images taken for the same scene to be shot.

- the multiple frames of original images include: a first field of view image and a second field of view image, and the field of view corresponding to the first field of view image

- the field angle is different from the field angle corresponding to the second field of view image;

- a reference coordinate layer is added to the second field of view image, and the reference coordinate layer is used to reflect the field angle corresponding to the second field of view image and the first

- the mapping relationship between the viewing angles corresponding to the viewing angle images; according to the first viewing angle image, the second viewing angle image and the reference coordinate layer, the layer set is obtained; the deep learning network model is used to process the layer set,

- a first enhanced image is obtained; the first enhanced image is located in RGB color space; and a second enhanced image is obtained according to the first enhanced image.

- the embodiment of the present application provides an image processing method, by obtaining the first field of view image and the second field of view image corresponding to different field of view, and adding a reference coordinate layer to the second field of view image to form a map

- the layer set and then use the deep learning network model to process the layer set to obtain the first enhanced image, and then obtain the second enhanced image according to the first enhanced image.

- the reference coordinate layer reflects the mapping relationship between the field angle corresponding to the second field of view image and the field angle corresponding to the first field of view image, thus, by adding a reference coordinate layer, different viewing angles can be added.

- the mapping relationship information between field angles enables subsequent adjustments to be made according to the mapping relationship between different field angles, so that more details can be preserved, and the fusion is more natural, thereby achieving the purpose of improving image quality.

- the deep learning network model can perform multiple processes on the layer set at the same time, such as noise reduction, demosaicing, color fusion and field of view fusion, etc., avoiding the accumulation of errors caused by serial processing, thus, also The sharpness of the image can be improved.

- the method before adding a reference coordinate layer to the second FOV image, the method further includes: performing the first FOV image and/or the second FOV image A first process is performed, and the first process includes: registration.

- the accuracy in subsequent image processing can be improved.

- the first field of view image includes one or more of the following: multiple frames of the first image, multiple frames of the second image, and at least one frame of the third image; wherein, the multiple frames

- the first image includes at least one frame of long-exposure image and at least one frame of short-exposure image

- the second image is a Bayer pattern image with normal exposure

- the third image is a grayscale image.

- the first image is a Bayer format image or a grayscale image.

- the second field-of-view image is a Bayer pattern image or a grayscale image.

- registering the second field of view images includes: taking the first frame of the second image as a reference frame , to perform registration on the second field of view image.

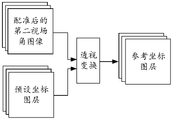

- the method further includes: according to the second image in the first frame and the registered second FOV image, The preset coordinate layer performs perspective transformation to obtain a reference coordinate layer, and the preset coordinate layer is used to reflect the difference between the field angle corresponding to the preset second field of view image and the field angle corresponding to the first field of view image mapping relationship between them.

- the second field of view image is registered, so the preset coordinate layer can be adjusted according to the registered second field of view image , to obtain a reference coordinate layer that can more accurately reflect the mapping relationship between the field angle corresponding to the first field of view image and the field angle corresponding to the second field of view image.

- the preset coordinate layer includes an overlapping area; the overlapping area is used to represent: the image sticker with a smaller viewing angle in the first frame of the second image and the second viewing angle image The area corresponding to an image with a larger field of view.

- the first processing further includes: black level correction.

- the first processing further includes : Automatic white balance.

- the first processing further includes: channel splitting; wherein, channel splitting refers to splitting a Bayer format image into multiple single-channel sublayers to be enhanced, each The single-channel sublayer to be enhanced contains only one color channel signal.

- channel splitting refers to splitting a Bayer format image into multiple single-channel sublayers to be enhanced, each The single-channel sublayer to be enhanced contains only one color channel signal.

- the first processing further includes: adding a variance layer; wherein, the variance layer includes a plurality of pixels, and the variance value corresponding to each pixel is determined by the sensitivity corresponding to the original image.

- the variance layer includes a plurality of pixels, and the variance value corresponding to each pixel is determined by the sensitivity corresponding to the original image.

- the prior information can be increased, so that the follow-up can be based on different noises Different levels of noise reduction can be performed to preserve more details and achieve the purpose of improving the clarity of the image.

- the deep learning network model is used to process the layer set to obtain the first enhanced image, including: using the deep learning network model to perform noise reduction, demosaicing, color fusion and visual The field angles are fused to obtain the first enhanced image.

- the deep learning network model can perform multiple processes at the same time, the accumulation of errors caused by serial processing is avoided, thereby improving the clarity of the image.

- obtaining the second enhanced image according to the first enhanced image includes: performing enhancement processing on the first enhanced image to obtain the second enhanced image, and the enhancement processing includes color enhancement processing and / or brightness enhancement processing.

- performing color enhancement and/or brightness enhancement on the first enhanced image can enhance the visual effect of the image, so that the enhanced image content and image color can better meet the user's visual needs.

- performing enhancement processing on the first enhanced image to obtain a second enhanced image includes: segmenting the first enhanced image by using a segmentation model to obtain a mask map; according to the first enhanced image and a mask map, using a tone mapping model to obtain a gain coefficient map; the gain coefficient map includes a plurality of pixels and a corresponding gain value for each pixel; multiplying the first enhanced image by the gain coefficient map to obtain a second enhanced image.

- non-linear enhancement can be performed on the first enhanced image, thus, the first enhanced image can be processed more delicately.

- the gain coefficient map includes 3 frames of color gain coefficient map and/or 1 frame of brightness gain coefficient map, and each frame of color gain coefficient map only enhances one color, and the brightness gain The coefficient map is used to enhance the brightness.

- the first processing when the first processing is not performed on multiple frames of first images in the first field of view image, but the first processing is performed on the second field of view image, according to the first The field of view image, the second field of view image and the reference coordinate layer to obtain the layer set, including: according to the first field of view image except for the images of multiple frames of the first image, the second field of view image and Refer to the coordinate layer to get the layer set.

- the method before using the segmentation model to obtain the mask map corresponding to the first enhanced image, the method further includes: using the long-exposure image and the short-exposure image in the multiple frames of the first image, Perform long and short exposure fusion processing on the first enhanced image to obtain an intermediate enhanced image; use the intermediate enhanced image as the first enhanced image.

- the long and short exposure fusion processing is performed on the first enhanced image, so that the details of the dark area and the overexposed area in the first enhanced image can be improved, and an intermediate enhanced image with higher definition can be obtained.

- the long-exposure image and the short-exposure image are used to perform long-short-exposure fusion processing on the first enhanced image to obtain an intermediate enhanced image, including: combining the first enhanced image with the first image to be fused Perform fusion to obtain a first intermediate fusion image; fuse the first intermediate fusion image and the second image to be fused to obtain the intermediate enhanced image; wherein, the first image to be fused and the second image to be fused are respectively long exposure images and short exposure images.

- the method further includes: performing color space conversion on the second enhanced image to obtain the first target image in the YUV color space.

- performing color space conversion can reduce the amount of subsequent calculations and save storage space.

- the deep learning network model and the segmentation model are respectively any one of the Unet model, the Resnet model and the PSPnet model.

- the tone mapping model is any one of Unet model, Resnet model and Hdrnet model.

- an image processing apparatus in a second aspect, includes a unit for performing each step in the above first aspect or any possible implementation manner of the first aspect.

- an image processing device including: a receiving interface and a processor; the receiving interface is used to obtain multiple frames of original images from an electronic device, the multiple frames of original images are images taken for the same scene to be shot, and multiple The frame original image includes: a first field of view image and a second field of view image, and the field of view corresponding to the first field of view image is different from the field of view corresponding to the second field of view image; the processor is used to call

- the computer program stored in the memory is used to execute the processing steps in the image processing method provided in the first aspect or any possible implementation manner of the first aspect.

- an electronic device including a camera module, a processor, and a memory; the camera module is used to obtain multiple frames of original images, and the multiple frames of original images are images taken for the same scene to be shot, and the multiple frames

- the original image includes: a first field of view image and a second field of view image, and the field of view corresponding to the first field of view image is different from the field of view corresponding to the second field of view image; the memory is used for storing A computer program running on the processor; the processor is configured to execute the processing steps in the image processing method provided in the first aspect or any possible implementation manner of the first aspect.

- the camera module includes a color camera, a black-and-white camera, and a third camera

- the color camera and the black-and-white camera are used to take pictures of the same scene to be shot at a first field of view

- the third The camera is used to take pictures of the scene to be shot with a second field of view; the first field of view is different from the second field of view; the color camera is used to obtain multiple frames of the first image and multiple frames after the processor obtains the photographing instruction

- the second image, the multi-frame first image includes at least one frame of long exposure image and one frame of short exposure image; the second image is a Bayer format image with normal exposure; the black and white camera is used to obtain at least one frame after the processor obtains the camera instruction

- the camera module includes a color camera, a black-and-white camera, and a third camera

- the color camera and the black-and-white camera are used to take pictures of the same scene to be shot at a first field of view

- the third The camera is used to take pictures of the scene to be shot with a second field of view; the first field of view is different from the second field of view; the color camera is used to obtain multiple frames of second images after the processor obtains the photographing instruction,

- the second image is a Bayer format image with normal exposure;

- the black and white camera is used to obtain multiple frames of the first image and at least one frame of the third image after the processor obtains the camera instruction, and the multiple frames of the first image include at least one frame of long-exposure image and a short-exposure image;

- the third image is a grayscale image; and the third camera is used to acquire at least one frame of a second field-of-view image after the processor acquires the photographing instruction.

- a chip including: a processor, configured to call and run a computer program from a memory, so that a device installed with the chip executes the chip as provided in the first aspect or any possible implementation manner of the first aspect. The steps of processing in the image processing method.

- a computer-readable storage medium stores a computer program, and the computer program includes program instructions.

- the program instructions When executed by a processor, the processor executes the first aspect or the first method. Steps of performing processing in the image processing method provided in any possible implementation manner of the aspect.

- a computer program product includes a computer-readable storage medium storing a computer program, and the computer program enables the computer to execute the image provided in the first aspect or any possible implementation manner of the first aspect The step in the processing method that performs the processing.

- the image processing method, device and electronic equipment provided by this application form The layer set, and then use the deep learning network model to process the layer set to obtain a first enhanced image, and then obtain a second enhanced image based on the first enhanced image.

- the reference coordinate layer reflects the mapping relationship between the field angle corresponding to the second field of view image and the field angle corresponding to the first field of view image, thus, by adding a reference coordinate layer, different viewing angles can be added.

- the mapping relationship information between field angles enables subsequent adjustments to be made according to the mapping relationship between different field angles, so that more details can be preserved, and the fusion is more natural, thereby achieving the purpose of improving image quality.

- the deep learning network model can perform multiple processes on the layer set at the same time, such as noise reduction, demosaicing, color fusion and field of view fusion, etc., avoiding the accumulation of errors caused by serial processing, thus, also The sharpness of the image can be improved.

- FIG. 1 is a schematic diagram of processing images captured by dual cameras provided by the related art

- FIG. 2 is a schematic structural diagram of an electronic device provided in an embodiment of the present application.

- FIG. 3 is a hardware architecture diagram of an image processing device provided in an embodiment of the present application.

- FIG. 4 is a schematic flow diagram of an image processing method provided in an embodiment of the present application.

- FIG. 5 is a schematic flow chart of registering multiple frames of second images provided by an embodiment of the present application.

- FIG. 6 is a schematic diagram of obtaining a reference coordinate layer provided by an embodiment of the present application.

- FIG. 7 is a schematic diagram of performing perspective transformation processing on a preset coordinate layer according to an embodiment of the present application.

- FIG. 8 is a schematic diagram of performing black level correction on a second image according to an embodiment of the present application.

- FIG. 9 is a schematic diagram of channel splitting of a second image provided by an embodiment of the present application.

- FIG. 10 is a schematic diagram of performing channel splitting on a second image and adding a variance layer according to an embodiment of the present application

- Fig. 11 is a schematic diagram of the variance layer provided by the embodiment of the present application.

- FIG. 12 is a schematic flow diagram of obtaining the first enhanced image corresponding to the layer set by using the deep learning network model provided by the embodiment of the present application;

- FIG. 13 is a schematic flowchart of another image processing method provided by the embodiment of the present application.

- FIG. 14 is a schematic flow diagram for obtaining a second enhanced image by performing enhancement processing on the first enhanced image according to an embodiment of the present application

- FIG. 15 is a schematic flowchart of another image processing method provided by the embodiment of the present application.

- FIG. 16 is another schematic flow diagram of performing enhancement processing on the first enhanced image to obtain the second enhanced image provided by the embodiment of the present application;

- FIG. 17 is a schematic flowchart of another image processing method provided by the embodiment of the present application.

- FIG. 18 is a schematic flowchart of another image processing method provided by the embodiment of the present application.

- FIG. 19 is a schematic flowchart of another image processing method provided by the embodiment of the present application.

- FIG. 20 is a schematic flowchart of another image processing method provided in the embodiment of the present application.

- FIG. 21 is a schematic flowchart of another image processing method provided by the embodiment of the present application.

- FIG. 22 is a schematic flowchart of another image processing method provided by the embodiment of the present application.

- FIG. 23 is a schematic flowchart of another image processing method provided by the embodiment of the present application.

- FIG. 24 is a schematic structural diagram of an image processing device provided by an embodiment of the present application.

- Fig. 25 is a schematic structural diagram of a chip provided by the embodiment of the application.

- a relationship means that there may be three kinds of relationships, for example, A and/or B means: A exists alone, A and B exist simultaneously, and B exists alone.

- plural refers to two or more than two.

- first and second are used for descriptive purposes only, and cannot be understood as indicating or implying relative importance or implicitly specifying the quantity of indicated technical features. Thus, a feature defined as “first” and “second” may explicitly or implicitly include one or more of these features. In the description of this embodiment, unless otherwise specified, “plurality” means two or more.

- RGB (red, green, blue) color space refers to a color model related to the structure of the human visual system. According to the structure of the human eye, all colors are seen as different combinations of red, green and blue.

- YUV color space refers to a color coding method, Y represents brightness, U and V represent chroma.

- RGB color space focuses on the human eye's perception of color, while the YUV color space focuses on the sensitivity of vision to brightness.

- RGB color space and YUV color space can be converted to each other.

- the pixel value refers to a group of color components corresponding to each pixel in the color image located in the RGB color space.

- each pixel corresponds to a group of three primary color components, wherein the three primary color components are red component R, green component G and blue component B respectively.

- Bayer pattern color filter array when the image is converted from the actual scene to image data, usually the image sensor receives the red channel signal, the green channel signal and the blue channel signal respectively, three information of three channel signals, and then synthesize the information of three channel signals into a color image.

- CFA color filter array

- the surface is covered with a color filter array to obtain the information of the three channel signals.

- the Bayer format color filter array means that the filters are arranged in a checkerboard format. For example, the smallest repeating unit in the Bayer format color filter array is: one filter for obtaining the red channel signal, two filters for obtaining the green channel signal , a filter for obtaining the blue channel signal is arranged in a 2 ⁇ 2 manner.

- a Bayer image that is, an image output by an image sensor based on a Bayer format color filter array.

- the pixels of multiple colors in this image are arranged in a Bayer pattern.

- each pixel in the Bayer format image only corresponds to a channel signal of one color.

- green pixels pixels corresponding to the green channel signal

- blue pixels pixels corresponding to the blue channel signal

- red pixels pixels corresponding to the blue channel signal

- Pixels corresponding to the red channel signal each account for 25% of all pixels.

- the minimum repeating unit of the Bayer format image is: one red pixel, two green pixels and one blue pixel are arranged in a 2 ⁇ 2 manner.

- Grayscale image a grayscale image is a single-channel image, used to represent different brightness levels, the brightest is all white, and the darkest is all black. That is, each pixel in a grayscale image corresponds to a different degree of brightness between black and white.

- 256 gray scales (0th grayscale to grayscale 255 grayscale).

- Binary image means that each pixel on the image has only two possible values or grayscale states.

- the gray value corresponding to the pixel included in the image can only be 0 or 255, 0 and 255 represent white and black respectively; or in other words, the corresponding value of the pixel in the image can only be 0 or 1, 0 and 1 respectively Represents white and black.

- Registration refers to the matching of geographic coordinates of different images obtained by different imaging methods in the same area. Among them, it includes the processing of three aspects: geometric correction, projection transformation and unified scale.

- Black level correction due to the presence of dark current in the image sensor, when there is no light, the pixels also have a certain output voltage, and the pixels at different positions may correspond to different output voltages, therefore, it is necessary to correct The output voltage corresponding to the bright (ie, black) pixel is corrected.

- dead point is the white point in the output image in a completely black environment, and the black point in the output image in a bright environment.

- the three primary color channel signals should have a linear response relationship with the ambient brightness, but due to poor signal output by the image sensor, white or black spots may appear, for this, it can be automatically detected and repaired automatically, or, establish The bad pixel linked list repairs bad pixels at fixed positions.

- a point refers to a pixel.

- Noise reduction refers to the process of reducing noise in an image. Common methods include mean filtering, Gaussian filtering, and bilateral filtering.

- Field of view which is used to indicate the maximum angle range that the camera can capture. If the object to be photographed is within the angle range, the object to be photographed will be captured by the camera. If the object to be photographed is outside the angle range, the object to be photographed will not be captured by the camera.

- FOV Field of view

- the camera can be divided into a main camera, a wide-angle camera, and a telephoto camera due to different field of view angles.

- the field of view of the wide-angle camera is larger than that of the main camera, and the focal length is smaller, which is suitable for close-up shooting; while the field of view of the telephoto camera is smaller than that of the main camera, and the focal length is longer. Suitable for remote shooting.

- the mobile phone is configured with two cameras, one is a main camera, and the other is a wide-angle camera or a telephoto camera, or the two cameras are respectively a wide-angle camera and a telephoto camera.

- the angle of view of the wide-angle camera is larger than that of the main camera, and the angle of view of the telephoto camera is smaller than that of the main camera. Then, the image taken by the main camera and the image taken by the wide-angle camera, or; the image taken by the main camera and the image taken by the telephoto camera are simply fused; Simple fusion.

- FIG. 1 shows a schematic diagram of processing images captured by dual cameras in a related technology.

- the first field of view image taken by the main camera is usually filled in the second field of view image taken by the wide-angle camera according to the size of the field of view, or the telephoto camera

- the captured image of the first field of view is filled in the image of the second field of view captured by the main camera or the wide-angle camera.

- the fused image will have poor stereoscopic effect and poor quality.

- the two images obtained by a mobile phone using such a dual camera there are parts where the field of view overlaps, and there are also parts where the field of view does not overlap. If the two images are fused directly, the overlapping parts and non-overlapping parts of the final captured image may not be in alignment, and part of the content may be broken or deformed.

- the overlapping part of the field of view may have high definition, and the non-overlapping part may have low definition, so that the captured image will have the problem of inconsistency in the definition of the central part and the surrounding part, that is, there will be a fusion boundary on the image, which will affect the imaging. Effect.

- the embodiment of the present application provides an image processing method, by acquiring the first field of view image and the second field of view image corresponding to different field of view, and adding a reference coordinate map to the second field of view image Layers to form a layer set, and then use the deep learning network model to process the layer set to obtain the first enhanced image, and then obtain the second enhanced image based on the first enhanced image.

- the reference coordinate layer reflects the mapping relationship between the field angle corresponding to the second field of view image and the field angle corresponding to the first field of view image, thus, by adding a reference coordinate layer, different viewing angles can be added.

- the mapping relationship information between field angles enables subsequent adjustments to be made according to the mapping relationship between different field angles, so that more details can be preserved, and the fusion is more natural, thereby achieving the purpose of improving image quality.

- the image processing method provided in the embodiment of the present application may be applicable to various electronic devices, and correspondingly, the image processing apparatus provided in the embodiment of the present application may be electronic devices in various forms.

- the electronic device may be various camera devices such as SLR cameras and card players, mobile phones, tablet computers, wearable devices, vehicle-mounted devices, augmented reality (augmented reality, AR)/virtual reality (virtual reality) reality, VR) equipment, notebook computer, ultra-mobile personal computer (ultra-mobile personal computer, UMPC), netbook, personal digital assistant (personal digital assistant, PDA), etc., or other equipment or devices capable of image processing,

- camera devices such as SLR cameras and card players, mobile phones, tablet computers, wearable devices, vehicle-mounted devices, augmented reality (augmented reality, AR)/virtual reality (virtual reality) reality, VR) equipment, notebook computer, ultra-mobile personal computer (ultra-mobile personal computer, UMPC), netbook, personal digital assistant (personal digital assistant, PDA), etc., or other equipment or devices capable of image processing

- the embodiment of the present application does not set any limitation on the specific type of the electronic device.

- FIG. 2 shows a schematic structural diagram of an electronic device 100 provided in an embodiment of the present application.

- the electronic device 100 may include a processor 110, an external memory interface 120, an internal memory 121, a universal serial bus (universal serial bus, USB) interface 130, a charging management module 140, a power management module 141, a battery 142, an antenna 1, and an antenna 2 , mobile communication module 150, wireless communication module 160, audio module 170, speaker 170A, receiver 170B, microphone 170C, earphone jack 170D, sensor module 180, button 190, motor 191, indicator 192, camera 193, display screen 194, and A subscriber identification module (subscriber identification module, SIM) card interface 195 and the like.

- SIM subscriber identification module

- the sensor module 180 may include a pressure sensor 180A, a gyroscope sensor 180B, an air pressure sensor 180C, a magnetic sensor 180D, an acceleration sensor 180E, a distance sensor 180F, a proximity light sensor 180G, a fingerprint sensor 180H, a temperature sensor 180J, a touch sensor 180K, an ambient light sensor 180L, bone conduction sensor 180M, etc.

- the processor 110 may include one or more processing units, for example: the processor 110 may include an application processor (application processor, AP), a modem processor, a graphics processing unit (graphics processing unit, GPU), an image signal processor (image signal processor, ISP), controller, video codec, digital signal processor (digital signal processor, DSP), baseband processor, and/or neural network processor (neural-network processing unit, NPU), etc. Wherein, different processing units may be independent devices, or may be integrated in one or more processors.

- application processor application processor, AP

- modem processor graphics processing unit

- GPU graphics processing unit

- image signal processor image signal processor

- ISP image signal processor

- controller video codec

- digital signal processor digital signal processor

- baseband processor baseband processor

- neural network processor neural-network processing unit

- the controller may be the nerve center and command center of the electronic device 100 .

- the controller can generate an operation control signal according to the instruction opcode and timing signal, and complete the control of fetching and executing the instruction.

- a memory may also be provided in the processor 110 for storing instructions and data.

- the memory in processor 110 is a cache memory.

- the memory may hold instructions or data that the processor 110 has just used or recycled. If the processor 110 needs to use the instruction or data again, it can be called directly from the memory. Repeated access is avoided, and the waiting time of the processor 110 is reduced, thereby improving the efficiency of the system.

- the processor 110 may run the software code of the image processing method provided in the embodiment of the present application to capture an image with higher definition.

- processor 110 may include one or more interfaces.

- the interface may include an integrated circuit (inter-integrated circuit, I2C) interface, an integrated circuit built-in audio (inter-integrated circuit sound, I2S) interface, a pulse code modulation (pulse code modulation, PCM) interface, a universal asynchronous transmitter (universal asynchronous receiver/transmitter, UART) interface, mobile industry processor interface (mobile industry processor interface, MIPI), general-purpose input and output (general-purpose input/output, GPIO) interface, subscriber identity module (subscriber identity module, SIM) interface, and /or universal serial bus (universal serial bus, USB) interface, etc.

- I2C integrated circuit

- I2S integrated circuit built-in audio

- PCM pulse code modulation

- PCM pulse code modulation

- UART universal asynchronous transmitter

- MIPI mobile industry processor interface

- GPIO general-purpose input and output

- subscriber identity module subscriber identity module

- SIM subscriber identity module

- USB universal serial bus

- the MIPI interface can be used to connect the processor 110 with peripheral devices such as the display screen 194 and the camera 193 .

- MIPI interface includes camera serial interface (camera serial interface, CSI), display serial interface (display serial interface, DSI), etc.

- the processor 110 communicates with the camera 193 through the CSI interface to realize the shooting function of the electronic device 100 .

- the processor 110 communicates with the display screen 194 through the DSI interface to realize the display function of the electronic device 100 .

- the GPIO interface can be configured by software.

- the GPIO interface can be configured as a control signal or as a data signal.

- the GPIO interface can be used to connect the processor 110 with the camera 193 , the display screen 194 , the wireless communication module 160 , the audio module 170 , the sensor module 180 and so on.

- the GPIO interface can also be configured as an I2C interface, I2S interface, UART interface, MIPI interface, etc.

- the USB interface 130 is an interface conforming to the USB standard specification, specifically, it can be a Mini USB interface, a Micro USB interface, a USB Type C interface, and the like.

- the USB interface 130 can be used to connect a charger to charge the electronic device 100 , and can also be used to transmit data between the electronic device 100 and peripheral devices. It can also be used to connect headphones and play audio through them. This interface can also be used to connect other electronic devices, such as AR devices.

- the interface connection relationship between the modules shown in the embodiment of the present application is only a schematic illustration, and does not constitute a structural limitation of the electronic device 100 .

- the electronic device 100 may also adopt different interface connection manners in the foregoing embodiments, or a combination of multiple interface connection manners.

- the charging management module 140 is configured to receive a charging input from a charger.

- the power management module 141 is used for connecting the battery 142 , the charging management module 140 and the processor 110 .

- the power management module 141 receives the input from the battery 142 and/or the charging management module 140 to provide power for the processor 110 , the internal memory 121 , the display screen 194 , the camera 193 , and the wireless communication module 160 .

- the wireless communication function of the electronic device 100 can be realized by the antenna 1 , the antenna 2 , the mobile communication module 150 , the wireless communication module 160 , a modem processor, a baseband processor, and the like.

- Antenna 1 and Antenna 2 are used to transmit and receive electromagnetic wave signals.

- Each antenna in electronic device 100 may be used to cover single or multiple communication frequency bands. Different antennas can also be multiplexed to improve the utilization of the antennas.

- Antenna 1 can be multiplexed as a diversity antenna of a wireless local area network.

- the antenna may be used in conjunction with a tuning switch.

- the mobile communication module 150 can provide wireless communication solutions including 2G/3G/4G/5G applied on the electronic device 100 .

- the mobile communication module 150 may include at least one filter, switch, power amplifier, low noise amplifier (low noise amplifier, LNA) and the like.

- the mobile communication module 150 can receive electromagnetic waves through the antenna 1, filter and amplify the received electromagnetic waves, and send them to the modem processor for demodulation.

- the mobile communication module 150 can also amplify the signals modulated by the modem processor, and convert them into electromagnetic waves through the antenna 1 for radiation.

- at least part of the functional modules of the mobile communication module 150 may be set in the processor 110 .

- at least part of the functional modules of the mobile communication module 150 and at least part of the modules of the processor 110 may be set in the same device.

- the wireless communication module 160 can provide wireless local area networks (wireless local area networks, WLAN) (such as wireless fidelity (Wireless Fidelity, Wi-Fi) network), bluetooth (bluetooth, BT), global navigation satellite, etc. applied on the electronic device 100.

- System global navigation satellite system, GNSS

- frequency modulation frequency modulation, FM

- near field communication technology near field communication, NFC

- infrared technology infrared, IR

- the wireless communication module 160 may be one or more devices integrating at least one communication processing module.

- the wireless communication module 160 receives electromagnetic waves via the antenna 2, frequency-modulates and filters the electromagnetic wave signals, and sends the processed signals to the processor 110.

- the wireless communication module 160 can also receive the signal to be sent from the processor 110 , frequency-modulate it, amplify it, and convert it into electromagnetic waves through the antenna 2 for radiation.

- the antenna 1 of the electronic device 100 is coupled to the mobile communication module 150, and the antenna 2 is coupled to the wireless communication module 160, so that the electronic device 100 can communicate with the network and other devices through wireless communication technology.

- the wireless communication technology may include global system for mobile communications (GSM), general packet radio service (general packet radio service, GPRS), code division multiple access (code division multiple access, CDMA), broadband Code division multiple access (wideband code division multiple access, WCDMA), time division code division multiple access (time-division code division multiple access, TD-SCDMA), long term evolution (long term evolution, LTE), BT, GNSS, WLAN, NFC , FM, and/or IR techniques, etc.

- GSM global system for mobile communications

- GPRS general packet radio service

- code division multiple access code division multiple access

- CDMA broadband Code division multiple access

- WCDMA wideband code division multiple access

- time division code division multiple access time-division code division multiple access

- TD-SCDMA time-division code division multiple access

- the GNSS may include a global positioning system (global positioning system, GPS), a global navigation satellite system (global navigation satellite system, GLONASS), a Beidou navigation satellite system (beidou navigation satellite system, BDS), a quasi-zenith satellite system (quasi -zenith satellite system (QZSS) and/or satellite based augmentation systems (SBAS).

- GPS global positioning system

- GLONASS global navigation satellite system

- Beidou navigation satellite system beidou navigation satellite system

- BDS Beidou navigation satellite system

- QZSS quasi-zenith satellite system

- SBAS satellite based augmentation systems

- the electronic device 100 realizes the display function through the GPU, the display screen 194 , and the application processor.

- the GPU is a microprocessor for image processing, and is connected to the display screen 194 and the application processor. GPUs are used to perform mathematical and geometric calculations for graphics rendering.

- Processor 110 may include one or more GPUs that execute program instructions to generate or change display information.

- the display screen 194 is used to display images, videos and the like.

- the display screen 194 includes a display panel.

- the display panel can be a liquid crystal display (LCD), an organic light-emitting diode (OLED), an active matrix organic light emitting diode or an active matrix organic light emitting diode (active-matrix organic light emitting diode, AMOLED), flexible light-emitting diode (flex light-emitting diode, FLED), Miniled, MicroLed, Micro-oLed, quantum dot light emitting diodes (quantum dot light emitting diodes, QLED), etc.

- the electronic device 100 may include 1 or N display screens 194 , where N is a positive integer greater than 1.

- Camera 193 is used to capture images. It can be triggered by an application command to realize the camera function, such as capturing images of any scene.

- a camera may include components such as an imaging lens, an optical filter, and an image sensor. The light emitted or reflected by the object enters the imaging lens, passes through the filter, and finally converges on the image sensor.

- the image sensor is mainly used for converging and imaging the light emitted or reflected by all objects in the camera perspective (also called the scene to be shot, the target scene, or the scene image that the user expects to shoot); the filter is mainly used to It is used to filter out redundant light waves (such as light waves other than visible light, such as infrared) in the light; the image sensor is mainly used to perform photoelectric conversion on the received light signal, convert it into an electrical signal, and input it into the processor 130 for subsequent processing .

- the camera 193 may be located at the front of the electronic device 100, or at the back of the electronic device 100, and the specific number and arrangement of the cameras may be set according to requirements, which are not limited in this application.

- the electronic device 100 includes a front camera and a rear camera.

- a front camera or a rear camera may include one or more cameras.

- the image processing method provided in the embodiment of the present application may be used.

- the camera is arranged on an external accessory of the electronic device 100, the external accessory is rotatably connected to the frame of the mobile phone, and the angle formed between the external accessory and the display screen 194 of the electronic device 100 is 0-360 degrees any angle between.

- the electronic device 100 takes a selfie

- the external accessory drives the camera to rotate to a position facing the user.

- the mobile phone has multiple cameras, only some of the cameras may be set on the external accessories, and the rest of the cameras may be set on the electronic device 100 body, which is not limited in this embodiment of the present application.

- the internal memory 121 may be used to store computer-executable program codes including instructions.

- the internal memory 121 may include an area for storing programs and an area for storing data.

- the stored program area can store an operating system, at least one application program required by a function (such as a sound playing function, an image playing function, etc.) and the like.

- the storage data area can store data created during the use of the electronic device 100 (such as audio data, phonebook, etc.) and the like.

- the internal memory 121 may include a high-speed random access memory, and may also include a non-volatile memory, such as at least one magnetic disk storage device, flash memory device, universal flash storage (universal flash storage, UFS) and the like.

- the processor 110 executes various functional applications and data processing of the electronic device 100 by executing instructions stored in the internal memory 121 and/or instructions stored in a memory provided in the processor.

- the internal memory 121 can also store the software code of the image processing method provided by the embodiment of the present application.

- the processor 110 runs the software code, it executes the process steps of the image processing method to obtain an image with higher definition.

- the internal memory 121 can also store captured images.

- the external memory interface 120 can be used to connect an external memory card, such as a Micro SD card, so as to expand the storage capacity of the electronic device 100.

- the external memory card communicates with the processor 110 through the external memory interface 120 to implement a data storage function. Such as saving files such as music in an external memory card.

- the software code of the image processing method provided in the embodiment of the present application can also be stored in an external memory, and the processor 110 can run the software code through the external memory interface 120 to execute the process steps of the image processing method to obtain a high-definition image.

- Image Images captured by the electronic device 100 may also be stored in an external memory.

- the user can designate whether to store the image in the internal memory 121 or the external memory.

- the electronic device 100 when the electronic device 100 is currently connected to the external memory, if the electronic device 100 captures one frame of image, a prompt message may pop up to remind the user whether to store the image in the external memory or the internal memory; of course, there may be other specified ways , the embodiment of the present application does not impose any limitation on this; or, when the electronic device 100 detects that the amount of memory in the internal memory 121 is less than a preset amount, it may automatically store the image in the external memory.

- the electronic device 100 can implement audio functions through the audio module 170 , the speaker 170A, the receiver 170B, the microphone 170C, the earphone interface 170D, and the application processor. Such as music playback, recording, etc.

- the pressure sensor 180A is used to sense the pressure signal and convert the pressure signal into an electrical signal.

- pressure sensor 180A may be disposed on display screen 194 .

- the gyro sensor 180B can be used to determine the motion posture of the electronic device 100 .

- the angular velocity of the electronic device 100 around three axes ie, x, y and z axes

- the gyro sensor 180B can be used for image stabilization.

- the air pressure sensor 180C is used to measure air pressure.

- the electronic device 100 calculates the altitude based on the air pressure value measured by the air pressure sensor 180C to assist positioning and navigation.

- the magnetic sensor 180D includes a Hall sensor.

- the electronic device 100 may use the magnetic sensor 180D to detect the opening and closing of the flip leather case.

- the electronic device 100 when the electronic device 100 is a clamshell machine, the electronic device 100 can detect opening and closing of the clamshell according to the magnetic sensor 180D. Then according to the detected opening and closing state of the holster or the opening and closing state of the flip cover, features such as automatic unlocking of the flip cover are set.

- the acceleration sensor 180E can detect the acceleration of the electronic device 100 in various directions (generally three axes). When the electronic device 100 is stationary, the magnitude and direction of gravity can be detected. It can also be used to identify the posture of electronic devices, and can be used in applications such as horizontal and vertical screen switching, pedometers, etc.

- the distance sensor 180F is used to measure the distance.

- the electronic device 100 may measure the distance by infrared or laser. In some embodiments, when shooting a scene, the electronic device 100 may use the distance sensor 180F for distance measurement to achieve fast focusing.

- Proximity light sensor 180G may include, for example, light emitting diodes (LEDs) and light detectors, such as photodiodes.

- the light emitting diodes may be infrared light emitting diodes.

- the electronic device 100 emits infrared light through the light emitting diode.

- Electronic device 100 uses photodiodes to detect infrared reflected light from nearby objects. When sufficient reflected light is detected, it may be determined that there is an object near the electronic device 100 . When insufficient reflected light is detected, the electronic device 100 may determine that there is no object near the electronic device 100 .

- the electronic device 100 can use the proximity light sensor 180G to detect that the user is holding the electronic device 100 close to the ear to make a call, so as to automatically turn off the screen to save power.

- the proximity light sensor 180G can also be used in leather case mode, automatic unlock and lock screen in pocket mode.

- the ambient light sensor 180L is used for sensing ambient light brightness.

- the electronic device 100 can adaptively adjust the brightness of the display screen 194 according to the perceived ambient light brightness.

- the ambient light sensor 180L can also be used to automatically adjust the white balance when taking pictures.

- the ambient light sensor 180L can also cooperate with the proximity light sensor 180G to detect whether the electronic device 100 is in the pocket, so as to prevent accidental touch.

- the fingerprint sensor 180H is used to collect fingerprints.

- the electronic device 100 can use the collected fingerprint characteristics to implement fingerprint unlocking, access to application locks, take pictures with fingerprints, answer incoming calls with fingerprints, and the like.

- the temperature sensor 180J is used to detect temperature.

- the electronic device 100 uses the temperature detected by the temperature sensor 180J to implement a temperature treatment strategy. For example, when the temperature reported by the temperature sensor 180J exceeds the threshold, the electronic device 100 may reduce the performance of the processor located near the temperature sensor 180J, so as to reduce power consumption and implement thermal protection.

- the electronic device 100 when the temperature is lower than another threshold, the electronic device 100 heats the battery 142 to prevent the electronic device 100 from being shut down abnormally due to the low temperature.

- the electronic device 100 boosts the output voltage of the battery 142 to avoid abnormal shutdown caused by low temperature.

- the touch sensor 180K is also called “touch device”.

- the touch sensor 180K can be disposed on the display screen 194, and the touch sensor 180K and the display screen 194 form a touch screen, also called a “touch screen”.

- the touch sensor 180K is used to detect a touch operation on or near it.

- the touch sensor can pass the detected touch operation to the application processor to determine the type of touch event.

- Visual output related to the touch operation can be provided through the display screen 194 .

- the touch sensor 180K may also be disposed on the surface of the electronic device 100 , which is different from the position of the display screen 194 .

- the bone conduction sensor 180M can acquire vibration signals. In some embodiments, the bone conduction sensor 180M can acquire the vibration signal of the vibrating bone mass of the human voice. The bone conduction sensor 180M can also contact the human pulse and receive the blood pressure beating signal. In some embodiments, the bone conduction sensor 180M can also be disposed in the earphone, combined into a bone conduction earphone.

- the audio module 170 can analyze the voice signal based on the vibration signal of the vibrating bone mass of the vocal part acquired by the bone conduction sensor 180M, so as to realize the voice function.

- the application processor can analyze the heart rate information based on the blood pressure beating signal acquired by the bone conduction sensor 180M, so as to realize the heart rate detection function.

- the keys 190 include a power key, a volume key and the like.

- the key 190 may be a mechanical key. It can also be a touch button.

- the electronic device 100 can receive key input and generate key signal input related to user settings and function control of the electronic device 100 .

- the motor 191 can generate a vibrating reminder.

- the motor 191 can be used for incoming call vibration prompts, and can also be used for touch vibration feedback.

- touch operations applied to different applications may correspond to different vibration feedback effects.

- the indicator 192 can be an indicator light, and can be used to indicate charging status, power change, and can also be used to indicate messages, missed calls, notifications, and the like.

- the SIM card interface 195 is used for connecting a SIM card.

- the SIM card can be connected and separated from the electronic device 100 by inserting it into the SIM card interface 195 or pulling it out from the SIM card interface 195 .

- the structure illustrated in the embodiment of the present application does not constitute a specific limitation on the electronic device 100 .

- the electronic device 100 may include more or fewer components than shown in the figure, or combine certain components, or separate certain components, or arrange different components.

- the illustrated components can be realized in hardware, software or a combination of software and hardware.

- FIG. 3 shows a hardware architecture diagram of an image processing apparatus 200 provided by an embodiment of the present application.

- the image processing device 200 may be, for example, a processor chip.

- the hardware architecture diagram shown in FIG. 3 may be the processor 110 in FIG. 2 , and the image processing method provided in the embodiment of the present application may be applied on the processor chip.

- the image processing apparatus 200 includes: at least one CPU, a memory, a microcontroller (microcontroller unit, MCU), a GPU, an NPU, a memory bus, a receiving interface, a sending interface, and the like.

- the image processing device 200 may also include an AP, a decoder, a dedicated graphics processor, and the like.

- the connectors include various interfaces, transmission lines or buses, etc. These interfaces are usually electrical communication interfaces, but they may also be mechanical interfaces or other forms The interface of this application does not impose any restrictions on it.

- the CPU may be a single-core (single-CPU) processor or a multi-core (multi-CPU) processor.

- the CPU may be a processor group composed of multiple processors, and the multiple processors are coupled to each other through one or more buses.

- the connection interface can be the data input interface of the processor chip.

- the receiving interface and the sending interface can be high definition multimedia interface (high definition multimedia interface, HDMI), V-By-One Interface, embedded display port (embedded display port, eDP), mobile industry processor interface (mobile industry processor interface, MIPI) display port (DP), etc.

- the memory can refer to the above description of the internal memory 121.

- the above-mentioned parts are integrated on the same chip.

- the CPU, GPU, decoder, receiving interface, and sending interface are integrated on one chip, and each part inside the chip accesses an external memory through a bus.

- a dedicated graphics processor can be a dedicated ISP.

- the NPU can also be used as an independent processor chip.

- the NPU is used to implement related operations of various neural networks or deep learning.

- the image processing method provided in the embodiment of the present application may be implemented by a GPU or an NPU, or may be implemented by a dedicated graphics processor.

- the chip involved in the embodiment of the present application is a system manufactured on the same semiconductor substrate by an integrated circuit process, also called a semiconductor chip, which may be an integrated circuit formed on the substrate by using an integrated circuit process. Assemblies whose outer layers are usually encapsulated by semiconductor encapsulation materials.

- the integrated circuit may include various functional devices, and each type of functional device includes transistors such as logic gate circuits, metal oxide semiconductor (MOS) transistors, and diodes, and may also include other components such as capacitors, resistors, or inductors.

- MOS metal oxide semiconductor

- Each functional device can work independently or under the action of necessary driver software, and can realize various functions such as communication, calculation or storage.

- FIG. 4 is a schematic flowchart of an image processing method shown in an embodiment of the present application. As shown in FIG. 4, the image processing method 10 includes: S10 to S50.

- the multiple frames of original images include: a first viewing angle image and a second viewing angle image, and the viewing angle corresponding to the first viewing angle image is different from the viewing angle corresponding to the second viewing angle image.

- the execution subject of the image processing method may be the electronic device 100 provided with the camera module as shown in FIG. 2 , or the image processing apparatus 200 shown in FIG. 3 .

- the execution subject is the electronic device 100

- multiple frames of original images are obtained through the cameras in the camera module, specifically through several cameras or which camera is used to obtain them, which can be set and changed as required, and this embodiment of the application does not make any limit.

- the execution subject is an image processing device

- multiple frames of original images can be obtained through the receiving interface, and the multiple frames of original images are captured by a camera module of an electronic device connected to the image processing device.

- the aforementioned original image may also be called a RAW image.

- the multi-frame original image can be a Bayer format image, or a grayscale image, or part of it can be a Bayer format image, and part of it can be a grayscale image. Specifically, it can be acquired as needed, and this embodiment of the present application does not impose any restrictions on this .

- the first field-of-view image and the second field-of-view image may each include one frame, or may include multiple frames, but at least the acquired multi-frame original images include one frame of the first field of view image and 1 frame of the second field of view image. It should be understood that the multiple frames of the first field of view image and the multiple frames of the second field of view image may not be shot at the same time, but they should be images shot for the same scene to be shot in the same time period.

- the difference between the viewing angle corresponding to the first viewing angle image and the viewing angle corresponding to the second viewing angle image can be expressed as: the viewing angle corresponding to the first viewing angle image is larger than the viewing angle corresponding to the second viewing angle image The viewing angle, or, the viewing angle corresponding to the first viewing angle image is smaller than the viewing angle corresponding to the second viewing angle image.

- the first field-of-view image includes one or more of the following: multiple frames of the first image, multiple frames of the second image, and at least one frame of the third image.

- the multiple frames of the first image include at least one frame of long-exposure image and at least one frame of short-exposure image

- the second image is a Bayer pattern image with normal exposure

- the third image is a grayscale image

- Multiple frames of first images, multiple frames of second images, and at least one frame of third images are acquired, and a second field of view image is acquired.

- first image, the second image, and the third image all belong to the first field of view image, so the field of view corresponding to the first image, the field of view corresponding to the second image, and the field of view corresponding to the third image

- the angles are all the same, and are different from the angle of view corresponding to the second angle of view image.

- the long-exposure image refers to an image obtained after a long time exposure during shooting

- the short-exposure image refers to an image obtained after a short time exposure during shooting, wherein both long-exposure and short-exposure are relatively normal in terms of exposure time.

- the exposure time is the time used for photoelectric conversion when the image sensor captures an image.

- the 2 frames of first images are respectively 1 frame of long-exposure image and 1 frame of short-exposure image; when 3 or more frames of first images are acquired, the multi-frame Except that one frame of the first image is a long-exposure image and one frame is a short-exposure image, the other images may be long-exposure images or short-exposure images, which can be acquired according to needs, which is not limited in this embodiment of the present application.

- the first image is a Bayer image or a grayscale image.

- the long-exposure image may be a long-exposure Bayer pattern image or a long-exposure grayscale image

- the short-exposure image may be a short-exposure Bayer pattern image or a short-exposure grayscale image.

- both the long-exposure image and the short-exposure image are Bayer format images

- the first image and the second image can be captured by the same camera.

- both the long-exposure image and the short-exposure image are grayscale images

- the first image and the third image can be captured by the same camera.

- it may also be obtained separately by multiple different cameras, which is not limited in this embodiment of the present application.

- the second field-of-view image is a Bayer pattern image or a grayscale image.

- the dimensions of multiple frames of original images may all be the same.

- the sizes of the multiple frames of original images may also be partly the same, partly different; or completely different.

- the embodiment of the present application does not impose any limitation on this.

- the acquired multiple frames of images of the first field of view may be enlarged or reduced so that all the images of the first field of view are of the same size, so as to facilitate subsequent processing and calculation.

- zooming in and out may be performed, so that all the second field of view images have the same size, so as to facilitate subsequent processing and calculation.

- multiple frames of original images may be acquired continuously, and the acquisition intervals may be the same or different.

- multiple frames of original images may not be acquired continuously.

- the multiple frames of first images may be acquired continuously.

- the multiple frames of second images may be acquired continuously.

- the multiple frames of third images may also be acquired continuously.