WO2023183366A1 - Planar mode improvement for intra prediction - Google Patents


- Publication number

- WO2023183366A1 (PCT/US2023/015869)

- Authority

- WO

- WIPO (PCT)

- Prior art keywords

- video

- block

- samples

- reference sample

- video block


Classifications

-

- H—ELECTRICITY

- H04—ELECTRIC COMMUNICATION TECHNIQUE

- H04N—PICTORIAL COMMUNICATION, e.g. TELEVISION

- H04N19/00—Methods or arrangements for coding, decoding, compressing or decompressing digital video signals

- H04N19/10—Methods or arrangements for coding, decoding, compressing or decompressing digital video signals using adaptive coding

- H04N19/102—Methods or arrangements for coding, decoding, compressing or decompressing digital video signals using adaptive coding characterised by the element, parameter or selection affected or controlled by the adaptive coding

- H04N19/117—Filters, e.g. for pre-processing or post-processing

-

- H—ELECTRICITY

- H04—ELECTRIC COMMUNICATION TECHNIQUE

- H04N—PICTORIAL COMMUNICATION, e.g. TELEVISION

- H04N19/00—Methods or arrangements for coding, decoding, compressing or decompressing digital video signals

- H04N19/10—Methods or arrangements for coding, decoding, compressing or decompressing digital video signals using adaptive coding

- H04N19/102—Methods or arrangements for coding, decoding, compressing or decompressing digital video signals using adaptive coding characterised by the element, parameter or selection affected or controlled by the adaptive coding

- H04N19/103—Selection of coding mode or of prediction mode

- H04N19/11—Selection of coding mode or of prediction mode among a plurality of spatial predictive coding modes

-

- H—ELECTRICITY

- H04—ELECTRIC COMMUNICATION TECHNIQUE

- H04N—PICTORIAL COMMUNICATION, e.g. TELEVISION

- H04N19/00—Methods or arrangements for coding, decoding, compressing or decompressing digital video signals

- H04N19/10—Methods or arrangements for coding, decoding, compressing or decompressing digital video signals using adaptive coding

- H04N19/169—Methods or arrangements for coding, decoding, compressing or decompressing digital video signals using adaptive coding characterised by the coding unit, i.e. the structural portion or semantic portion of the video signal being the object or the subject of the adaptive coding

- H04N19/17—Methods or arrangements for coding, decoding, compressing or decompressing digital video signals using adaptive coding characterised by the coding unit, i.e. the structural portion or semantic portion of the video signal being the object or the subject of the adaptive coding the unit being an image region, e.g. an object

- H04N19/176—Methods or arrangements for coding, decoding, compressing or decompressing digital video signals using adaptive coding characterised by the coding unit, i.e. the structural portion or semantic portion of the video signal being the object or the subject of the adaptive coding the unit being an image region, e.g. an object the region being a block, e.g. a macroblock

-

- H—ELECTRICITY

- H04—ELECTRIC COMMUNICATION TECHNIQUE

- H04N—PICTORIAL COMMUNICATION, e.g. TELEVISION

- H04N19/00—Methods or arrangements for coding, decoding, compressing or decompressing digital video signals

- H04N19/50—Methods or arrangements for coding, decoding, compressing or decompressing digital video signals using predictive coding

- H04N19/593—Methods or arrangements for coding, decoding, compressing or decompressing digital video signals using predictive coding involving spatial prediction techniques

Definitions

- This application is related to video coding and compression. More specifically, this application relates to video processing apparatuses and methods for intra prediction with reference sample filters.

- Digital video is supported by a variety of electronic devices, such as digital televisions, laptop or desktop computers, tablet computers, digital cameras, digital recording devices, digital media players, video gaming consoles, smart phones, video teleconferencing devices, video streaming devices, etc.

- The electronic devices transmit and receive or otherwise communicate digital video data across a communication network, and/or store the digital video data on a storage device. Due to the limited bandwidth capacity of the communication network and the limited memory resources of the storage device, video coding may be used to compress the video data according to one or more video coding standards before it is communicated or stored.

- Video coding standards include Versatile Video Coding (VVC), Joint Exploration Test Model (JEM), High-Efficiency Video Coding (HEVC/H.265), Advanced Video Coding (AVC/H.264), Moving Picture Experts Group (MPEG) coding, or the like.

- Video coding generally utilizes prediction methods (e.g., inter-prediction, intra-prediction, or the like) that take advantage of redundancy inherent in the video data.

- Video coding aims to compress video data into a form that uses a lower bit rate, while avoiding or minimizing degradations to video quality.

- SUMMARY

- [0004] Implementations of the disclosure provide a video processing apparatus and method for performing intra prediction on a video block.

- the video processing method may include receiving, by a processor, reference samples from a video frame of a video comprising the video block.

- the video processing method may further include determining, by the processor, a reference sample filter based on a size of each video block.

- the reference sample filter is among a plurality of reference sample filters each derived for a different video block size.

- the video processing method may also include applying, by the processor, the determined reference sample filter to the received reference samples.

- the video processing method may additionally include performing, by the processor, the intra prediction on the video block using the filtered reference samples.
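The four steps above (receive reference samples, determine a filter from the block size, apply it, then predict) can be sketched as follows. This is a minimal illustration under stated assumptions, not the disclosed implementation: the table `FILTERS_BY_SIZE`, its 3-tap coefficients, and all function names are hypothetical, and the prediction step uses the HEVC-style planar formula for square blocks as a stand-in for the intra prediction.

```python
from typing import Dict, List, Tuple

# Hypothetical filter table keyed by (width, height). The taps are
# illustrative placeholders, not coefficients from the disclosure.
FILTERS_BY_SIZE: Dict[Tuple[int, int], List[int]] = {
    (4, 4): [0, 1, 0],    # pass-through for small blocks
    (8, 8): [1, 2, 1],
    (16, 16): [1, 2, 1],
}
DEFAULT_TAPS: List[int] = [1, 2, 1]


def select_filter(width: int, height: int) -> List[int]:
    """Pick the reference sample filter associated with the block size."""
    return FILTERS_BY_SIZE.get((width, height), DEFAULT_TAPS)


def apply_filter(samples: List[int], taps: List[int]) -> List[int]:
    """Apply a 3-tap filter with edge replication and integer rounding."""
    norm = sum(taps)
    last = len(samples) - 1
    out = []
    for i, cur in enumerate(samples):
        left = samples[max(i - 1, 0)]
        right = samples[min(i + 1, last)]
        acc = taps[0] * left + taps[1] * cur + taps[2] * right
        out.append((acc + norm // 2) // norm)
    return out


def planar_predict(top: List[int], left: List[int],
                   top_right: int, bottom_left: int) -> List[List[int]]:
    """HEVC-style planar prediction for a square, power-of-two block."""
    n = len(top)
    shift = n.bit_length()  # equals log2(n) + 1 when n is a power of two
    return [
        [((n - 1 - x) * left[y] + (x + 1) * top_right
          + (n - 1 - y) * top[x] + (y + 1) * bottom_left + n) >> shift
         for x in range(n)]
        for y in range(n)
    ]


def intra_predict_block(top: List[int], left: List[int],
                        top_right: int, bottom_left: int) -> List[List[int]]:
    """Filter the reference samples by block size, then predict."""
    taps = select_filter(len(top), len(left))
    return planar_predict(apply_filter(top, taps), apply_filter(left, taps),
                          top_right, bottom_left)
```

For example, `intra_predict_block([100] * 4, [100] * 4, 100, 100)` yields a flat 4x4 block, since both the filter and the planar interpolation preserve constant input.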

- Implementations of the present disclosure also provide a video processing apparatus for performing intra prediction on a video block.

- the video processing apparatus may include a memory and one or more processors coupled to the memory.

- the one or more processors may be configured to receive reference samples from a video frame of a video comprising the video block.

- the one or more processors may be further configured to determine a reference sample filter based on a size of each video block.

- the reference sample filter is among a plurality of reference sample filters each derived for a different video block size.

- the one or more processors may also be configured to apply the determined reference sample filter to the received reference samples.

- the one or more processors may also be configured to perform the intra prediction on the video block using the filtered reference samples.

- Implementations of the present disclosure further provide a non-transitory computer-readable storage medium having stored therein instructions which, when executed by one or more processors, cause the one or more processors to perform a video processing method for performing intra prediction on a video block.

- the video processing method may include receiving reference samples from a video frame of a video comprising the video block.

- the video processing method may further include determining a reference sample filter based on a size of each video block.

- the reference sample filter is among a plurality of reference sample filters each derived for a different video block size.

- the video processing method may also include applying the determined reference sample filter to the received reference samples.

- the video processing method may additionally include performing the intra prediction on the video block using the filtered reference samples.

- FIG.1 is a block diagram illustrating an exemplary system for encoding and decoding video blocks in accordance with some implementations of the present disclosure.

- FIG.2 is a block diagram illustrating an exemplary video encoder in accordance with some implementations of the present disclosure.

- FIG.3 is a block diagram illustrating an exemplary video decoder in accordance with some implementations of the present disclosure.

- FIGS. 4A through 4E are graphical representations illustrating how a frame is recursively partitioned into multiple video blocks of different sizes and shapes in accordance with some implementations of the present disclosure.

- FIG.5 illustrates a diagram of intra modes in accordance with some implementations of the present disclosure.

- FIGS. 6A and 6B illustrate exemplary reference samples for wide-angular intra prediction in accordance with some implementations of the present disclosure.

- FIG. 7 illustrates an exemplary discontinuity in case of wide-angle intra prediction direction in accordance with some implementations of the present disclosure.

- FIG. 1 illustrates an exemplary discontinuity in case of wide-angle intra prediction direction in accordance with some implementations of the present disclosure.

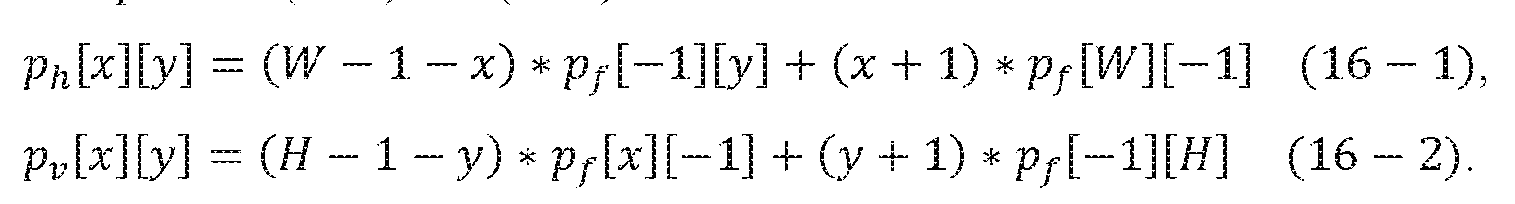

- FIG. 8 illustrates an interpolation of a sample in a current video block for intra prediction in planar mode in accordance with some implementations of the present disclosure.

- FIG. 9 is a block diagram illustrating an exemplary sample prediction process in accordance with some implementations of the present disclosure.

- FIG.10 illustrates a diagram of intra prediction along an interpolation direction and the perpendicular interpolation direction through a sample of the video block in accordance with some implementations of the present disclosure.

- FIG. 11 illustrates a diagram of intra prediction using a block-level interpolation direction to interpolate samples in a video block in accordance with some implementations of the present disclosure.

- FIG.12 illustrates a diagram of intra prediction using fractional reference samples to interpolate a sample in a video block in accordance with some implementations of the present disclosure.

- FIG. 13 illustrates a diagram of intra prediction using second order gradients of reconstructed samples in neighboring video blocks to interpolate a sample in a video block in accordance with some implementations of the present disclosure.

- FIG. 14 illustrates a diagram of intra prediction using second order gradients of fractional reference samples generated based on the reconstructed samples in neighboring video blocks to interpolate a sample in a video block in accordance with some implementations of the present disclosure.


- FIG. 15 illustrates a diagram of intra prediction using different sample-specific interpolation directions to interpolate samples in a video block in accordance with some implementations of the present disclosure.

- FIG.16 is a flow chart of an exemplary method for performing intra prediction on a video block in accordance with some implementations of the present disclosure.

- FIG. 17 is an illustration of exemplary adaptive reference sample filtering in accordance with some implementations of the present disclosure.

- FIG.18 is an illustration of exemplary derivation of an adaptive reference sample filter using reconstructed samples in accordance with some implementations of the present disclosure.

- FIG.19 is a flow chart of an exemplary method for performing planar prediction with an adaptive reference sample filter in accordance with some implementations of the present disclosure.

- FIG.20 is a block diagram illustrating an exemplary block size-based reference sample filtering process in accordance with some implementations of the present disclosure.

- FIG. 21 is a flow chart of an exemplary method for performing reference sample filtering with block size-based reference sample filters in accordance with some implementations of the present disclosure.

- FIG. 22 is a flow chart of an exemplary method for training fixed block size-based reference sample filters offline in accordance with some implementations of the present disclosure.

- FIG.23 is a flow chart of an exemplary method for determining adaptive block size-based reference sample filters in accordance with some implementations of the present disclosure.

- FIG.25 is a block diagram illustrating a computing environment coupled with a user interface in accordance with some implementations of the present disclosure.

- DETAILED DESCRIPTION [0034]

- the system 10 includes a source device 12 that generates and encodes video data to be decoded at a later time by a destination device 14.

- The source device 12 and the destination device 14 may include any of a wide variety of electronic devices, including desktop or laptop computers, tablet computers, smart phones, set-top boxes, digital televisions, cameras, display devices, digital media players, video gaming consoles, video streaming devices, or the like.

- the source device 12 and the destination device 14 are equipped with wireless communication capabilities.

- the destination device 14 may receive the encoded video data to be decoded via a link 16.

- the link 16 may include any type of communication medium or device capable of forwarding the encoded video data from the source device 12 to the destination device 14.

- the link 16 may include a communication medium to enable the source device 12 to transmit the encoded video data directly to the destination device 14 in real time.

- the encoded video data may be modulated according to a communication standard, such as a wireless communication protocol, and transmitted to the destination device 14.

- the communication medium may include any wireless or wired communication medium, such as a Radio Frequency (RF) spectrum or one or more physical transmission lines.


- the communication medium may form part of a packet-based network, such as a local area network, a wide-area network, or a global network such as the Internet.

- the communication medium may include routers, switches, base stations, or any other equipment that may be useful to facilitate communication from the source device 12 to the destination device 14.

- the encoded video data may be transmitted from an output interface 22 to a storage device 32. Subsequently, the encoded video data in the storage device 32 may be accessed by the destination device 14 via an input interface 28.

- the storage device 32 may include any of a variety of distributed or locally accessed data storage media such as a hard drive, Blu-ray discs, Digital Versatile Disks (DVDs), Compact Disc Read-Only Memories (CD-ROMs), flash memory, volatile or non-volatile memory, or any other suitable digital storage media for storing the encoded video data.

- the storage device 32 may correspond to a file server or another intermediate storage device that may store the encoded video data generated by the source device 12.

- the destination device 14 may access the stored video data from the storage device 32 via streaming or downloading.

- the file server may be any type of computer capable of storing the encoded video data and transmitting the encoded video data to the destination device 14.

- Exemplary file servers include a web server (e.g., for a website), a File Transfer Protocol (FTP) server, Network Attached Storage (NAS) devices, or a local disk drive.

- the destination device 14 may access the encoded video data through any standard data connection, including a wireless channel (e.g., a Wireless Fidelity (Wi-Fi) connection), a wired connection (e.g., Digital Subscriber Line (DSL), cable modem, etc.), or any combination thereof that is suitable for accessing encoded video data stored on a file server.


- the transmission of the encoded video data from the storage device 32 may be a streaming transmission, a download transmission, or a combination of both.

- the source device 12 includes a video source 18, a video encoder 20, and the output interface 22.

- the video source 18 may include a source such as a video capturing device, e.g., a video camera, a video archive containing previously captured video, a video feeding interface to receive video data from a video content provider, and/or a computer graphics system for generating computer graphics data as the source video, or a combination of such sources.


- the source device 12 and the destination device 14 may include camera phones or video phones.

- the implementations described in the present disclosure may be applicable to video coding in general, and may be applied to wireless and/or wired applications.

- the captured, pre-captured, or computer-generated video may be encoded by the video encoder 20.

- the encoded video data may be transmitted directly to the destination device 14 via the output interface 22 of the source device 12.

- the encoded video data may also (or alternatively) be stored onto the storage device 32 for later access by the destination device 14 or other devices, for decoding and/or playback.

- the output interface 22 may further include a modem and/or a transmitter.

- the destination device 14 includes the input interface 28, a video decoder 30, and a display device 34.

- the input interface 28 may include a receiver and/or a modem and receive the encoded video data over the link 16.

- The encoded video data communicated over the link 16, or provided on the storage device 32, may include a variety of syntax elements generated by the video encoder 20 for use by the video decoder 30 in decoding the video data.

- The destination device 14 may include the display device 34, which can be an integrated display device or an external display device that is configured to communicate with the destination device 14.

- the display device 34 displays the decoded video data for a user, and may include any of a variety of display devices such as a Liquid Crystal Display (LCD), a plasma display, an Organic Light Emitting Diode (OLED) display, or another type of display device.


- The video encoder 20 and the video decoder 30 may operate according to proprietary or industry standards, such as VVC, HEVC, MPEG-4 Part 10 (AVC), or extensions of such standards.

- the present disclosure is not limited to a specific video encoding/decoding standard and may be applicable to other video encoding/decoding standards. It is generally contemplated that the video encoder 20 of the source device 12 may be configured to encode video data according to any of these current or future standards. Similarly, it is also generally contemplated that the video decoder 30 of the destination device 14 may be configured to decode video data according to any of these current or future standards.

- the video encoder 20 and the video decoder 30 each may be implemented as any of a variety of suitable encoder and/or decoder circuitry, such as one or more microprocessors, Digital Signal Processors (DSPs), Application Specific Integrated Circuits (ASICs), Field Programmable Gate Arrays (FPGAs), discrete logic, software, hardware, firmware or any combinations thereof.


- an electronic device may store instructions for the software in a suitable, non-transitory computer-readable medium and execute the instructions in hardware using one or more processors to perform the video encoding/decoding operations disclosed in the present disclosure.

- FIG. 2 is a block diagram illustrating an exemplary video encoder 20 in accordance with some implementations described in the present application.

- the video encoder 20 may perform intra and inter predictive coding of video blocks within video frames. Intra predictive coding relies on spatial prediction to reduce or remove spatial redundancy in video data within a given video frame or picture. Inter predictive coding relies on temporal prediction to reduce or remove temporal redundancy in video data within adjacent video frames or pictures of a video sequence.

- the video encoder 20 includes a video data memory 40, a prediction processing unit 41, a Decoded Picture Buffer (DPB) 64, a summer 50, a transform processing unit 52, a quantization unit 54, and an entropy encoding unit 56.

- the prediction processing unit 41 further includes a motion estimation unit 42, a motion compensation unit 44, a partition unit 45, an intra prediction processing unit 46, and an intra Block Copy (BC) unit 48.

- the video encoder 20 also includes an inverse quantization unit 58, an inverse transform processing unit 60, and a summer 62 for video block reconstruction.

- An in-loop filter 63, such as a deblocking filter, may be positioned between the summer 62 and the DPB 64 to filter block boundaries to remove block artifacts from reconstructed video data.

- Another in-loop filter, such as a Sample Adaptive Offset (SAO) filter, a Cross Component Sample Adaptive Offset (CCSAO) filter, and/or an Adaptive in-Loop Filter (ALF), may also be used in addition to the deblocking filter to filter an output of the summer 62.


- the present application is not limited to the embodiments described herein, and instead, the application may be applied to a situation where an offset is selected for any of a luma component, a Cb chroma component and a Cr chroma component according to any other of the luma component, the Cb chroma component and the Cr chroma component to modify said any component based on the selected offset.

- a first component mentioned herein may be any of the luma component, the Cb chroma component and the Cr chroma component

- a second component mentioned herein may be any other of the luma component, the Cb chroma component and the Cr chroma component

- a third component mentioned herein may be a remaining one of the luma component, the Cb chroma component and the Cr chroma component.

- The in-loop filters may be omitted, and the decoded video block may be directly provided by the summer 62 to the DPB 64.

- the video encoder 20 may take the form of a fixed or programmable hardware unit or may be divided among one or more of the illustrated fixed or programmable hardware units.

- the video data memory 40 may store video data to be encoded by the components of the video encoder 20.

- the video data in the video data memory 40 may be obtained, for example, from the video source 18 as shown in FIG.1.

- the DPB 64 is a buffer that stores reference video data (for example, reference frames or pictures) for use in encoding video data by the video encoder 20 (e.g., in intra or inter predictive coding modes).

- the video data memory 40 and the DPB 64 may be formed by any of a variety of memory devices.

- the video data memory 40 may be on-chip with other components of the video encoder 20, or off-chip relative to those components.

- the partition unit 45 within the prediction processing unit 41 partitions the video data into video blocks.

- This partitioning may also include partitioning a video frame into slices, tiles (for example, sets of video blocks), or other larger Coding Units (CUs) according to predefined splitting structures such as a Quad-Tree (QT) structure associated with the video data.

- The video frame is or may be regarded as a two-dimensional array or matrix of samples with sample values.

- a sample in the array may also be referred to as a pixel or a pel.

- a number of samples in horizontal and vertical directions (or axes) of the array or picture define a size and/or a resolution of the video frame.

- the video frame may be divided into multiple video blocks by, for example, using QT partitioning.

- the video block again is or may be regarded as a two-dimensional array or matrix of samples with sample values, although of smaller dimension than the video frame.

- a number of samples in horizontal and vertical directions (or axes) of the video block define a size of the video block.

- The video block may further be partitioned into one or more block partitions or sub-blocks (which may again form blocks) by, for example, iteratively using QT partitioning, Binary-Tree (BT) partitioning, Triple-Tree (TT) partitioning or any combination thereof.
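The recursive partitioning described above can be sketched for the quad-tree case only. This is a minimal illustration, assuming square blocks with power-of-two sizes; the function name `quadtree_partition`, the `should_split` callback, and the `min_size` parameter are hypothetical stand-ins for the encoder's actual split decision, and BT and TT splits are omitted.

```python
from typing import Callable, List, Tuple

Block = Tuple[int, int, int, int]  # (x, y, width, height) in samples


def quadtree_partition(x: int, y: int, size: int, min_size: int,
                       should_split: Callable[[int, int, int], bool]) -> List[Block]:
    """Recursively split a square block into four equal quadrants.

    Recursion stops when the block reaches min_size or when the
    (hypothetical) should_split callback declines to split further.
    """
    if size <= min_size or not should_split(x, y, size):
        return [(x, y, size, size)]
    half = size // 2
    leaves: List[Block] = []
    for dy in (0, half):          # top row of quadrants, then bottom row
        for dx in (0, half):      # left quadrant, then right quadrant
            leaves.extend(
                quadtree_partition(x + dx, y + dy, half, min_size, should_split))
    return leaves
```

As a usage example, splitting a 64x64 block only at its top-left corner, `quadtree_partition(0, 0, 64, 16, lambda x, y, s: x == 0 and y == 0)`, produces four 16x16 leaves followed by three 32x32 leaves, which together tile the original block.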


- The term “block” or “video block” as used herein may be a portion, in particular a rectangular (square or non-square) portion, of a frame or a picture.

- the block or video block may be or correspond to a Coding Tree Unit (CTU), a CU, a Prediction Unit (PU), or a Transform Unit (TU), and/or may be or correspond to a corresponding block, e.g., a Coding Tree Block (CTB), a Coding Block (CB), a Prediction Block (PB), or a Transform Block (TB).


- the block or video block may be or correspond to a sub-block of a CTB, a CB, a PB, a TB, etc.

- the prediction processing unit 41 may select one of a plurality of possible predictive coding modes, such as one of a plurality of intra predictive coding modes or one of a plurality of inter predictive coding modes, for the current video block based on error results (e.g., coding rate and the level of distortion).
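One common way to combine coding rate and distortion into a single selection criterion is a Lagrangian cost J = D + λ·R. The sketch below assumes that formulation, which the text above does not spell out; the function name `select_mode`, the candidate tuples, and the example numbers are hypothetical.

```python
from typing import List, Tuple

Candidate = Tuple[str, float, float]  # (mode name, distortion, rate in bits)


def select_mode(candidates: List[Candidate], lam: float) -> Candidate:
    """Return the candidate with the lowest cost J = distortion + lam * rate.

    This assumes a Lagrangian rate-distortion cost; an actual encoder
    may weigh its error results differently.
    """
    return min(candidates, key=lambda c: c[1] + lam * c[2])


# Illustrative numbers only: a larger lam favors cheaper-to-signal modes.
modes = [("planar", 120.0, 10.0), ("dc", 100.0, 30.0), ("angular_18", 90.0, 50.0)]
best_at_high_lambda = select_mode(modes, lam=2.0)
best_at_low_lambda = select_mode(modes, lam=0.1)
```

With these numbers the high-λ choice is the low-rate planar candidate and the low-λ choice is the low-distortion angular candidate, which shows how λ trades bit rate against quality.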

- the prediction processing unit 41 may provide the resulting intra or inter prediction coded block (e.g., a predictive block) to the summer 50 to generate a residual block and to the summer 62 to reconstruct the encoded block for use as part of a reference frame subsequently.
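The roles of the two summers can be sketched as simple per-sample arithmetic: the summer 50 subtracts the predictive block from the current block to form the residual, and the summer 62 adds a residual back to the predictive block. The function names and the clipping to an 8-bit sample range are assumptions made for illustration; transform and quantization of the residual are omitted.

```python
from typing import List

Block2D = List[List[int]]


def residual_block(current: Block2D, predictive: Block2D) -> Block2D:
    """Summer 50 (sketch): residual = current block minus predictive block."""
    return [[c - p for c, p in zip(cur_row, pred_row)]
            for cur_row, pred_row in zip(current, predictive)]


def reconstruct_block(predictive: Block2D, residual: Block2D,
                      bit_depth: int = 8) -> Block2D:
    """Summer 62 (sketch): predictive block plus residual, clipped to range.

    The clip to [0, 2**bit_depth - 1] is an assumption of this sketch.
    """
    max_val = (1 << bit_depth) - 1
    return [[min(max(p + r, 0), max_val) for p, r in zip(pred_row, res_row)]
            for pred_row, res_row in zip(predictive, residual)]
```

When the residual passes through unchanged, `reconstruct_block(pred, residual_block(cur, pred))` returns `cur` exactly; in a lossy encoder the residual would first be transformed, quantized, and inverse-processed, so the reconstruction would only approximate the original.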

- the prediction processing unit 41 also provides syntax elements, such as motion vectors, intra-mode indicators, partition information, and other such syntax information to the entropy encoding unit 56.

- the intra prediction processing unit 46 within the prediction processing unit 41 may perform intra predictive coding of the current video block relative to one or more neighbor blocks in the same frame as the current block to be coded to provide spatial prediction.

- the motion estimation unit 42 and the motion compensation unit 44 within the prediction processing unit 41 perform inter predictive coding of the current video block relative to one or more predictive blocks in one or more reference frames to provide temporal prediction.

- the video encoder 20 may perform multiple coding passes, e.g., to select an appropriate coding mode for each block of video data.

- the motion estimation unit 42 determines the inter prediction mode for a current video frame by generating a motion vector, which indicates the displacement of a video block within the current video frame relative to a predictive block within a reference frame, according to a predetermined pattern within a sequence of video frames.

- Motion estimation, performed by the motion estimation unit 42, may be a process of generating motion vectors, which may estimate motion for video blocks.

- a motion vector, for example, may indicate the displacement of a video block within a current video frame or picture relative to a predictive block within a reference frame.

- the predetermined pattern may designate video frames in the sequence as P frames or B frames.

- the intra BC unit 48 may determine vectors, e.g., block vectors, for intra BC coding in a manner similar to the determination of motion vectors by the motion estimation unit 42 for inter prediction, or may utilize the motion estimation unit 42 to determine the block vectors.

- a predictive block for the video block may be or may correspond to a block or a reference block of a reference frame that is deemed as closely matching the video block to be coded in terms of pixel difference, which may be determined by Sum of Absolute Difference (SAD), Sum of Square Difference (SSD), or other difference metrics.
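
The two difference metrics named above can be sketched as follows. This is a minimal illustration, assuming blocks are plain nested lists of integer sample values; the function names `sad` and `ssd` are hypothetical and not taken from the application.

```python
def sad(block_a, block_b):
    """Sum of Absolute Differences between two equally sized 2-D blocks."""
    return sum(abs(a - b)
               for row_a, row_b in zip(block_a, block_b)
               for a, b in zip(row_a, row_b))


def ssd(block_a, block_b):
    """Sum of Square Differences between two equally sized 2-D blocks."""
    return sum((a - b) ** 2
               for row_a, row_b in zip(block_a, block_b)
               for a, b in zip(row_a, row_b))


# Illustrative sample values only.
current = [[10, 12], [14, 16]]
candidate = [[11, 12], [12, 19]]
print(sad(current, candidate))  # 1 + 0 + 2 + 3 = 6
print(ssd(current, candidate))  # 1 + 0 + 4 + 9 = 14
```

SSD penalizes large individual errors more heavily than SAD, which is one reason an encoder might prefer one metric over the other.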

- the video encoder 20 may calculate values for sub-integer pixel positions of reference frames stored in the DPB 64.

- the video encoder 20 may interpolate values of one-quarter pixel positions, one-eighth pixel positions, or other fractional pixel positions of the reference frame. Therefore, the motion estimation unit 42 may perform a motion search relative to the full pixel positions and fractional pixel positions and output a motion vector with fractional pixel precision.

- the motion estimation unit 42 calculates a motion vector for a video block in an inter prediction coded frame by comparing the position of the video block to the position of a predictive block of a reference frame selected from a first reference frame list (List 0) or a second reference frame list (List 1), each of which identifies one or more reference frames stored in the DPB 64.
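
A full-pel block-matching search is one simple way to picture how such a motion vector could be found. The sketch below assumes an exhaustive search over a small window with SAD as the matching cost; `full_search`, `extract`, and the `search_range` parameter are illustrative names, and a practical encoder would typically use faster search patterns and fractional-pel refinement.

```python
def sad(a, b):
    """Sum of Absolute Differences between two equally sized 2-D blocks."""
    return sum(abs(p - q) for ra, rb in zip(a, b) for p, q in zip(ra, rb))


def extract(frame, top, left, h, w):
    """Copy an h x w block whose top-left sample is at (top, left)."""
    return [row[left:left + w] for row in frame[top:top + h]]


def full_search(cur_frame, ref_frame, top, left, h, w, search_range):
    """Return ((dx, dy), cost) of the best integer-pel match in ref_frame."""
    cur = extract(cur_frame, top, left, h, w)
    rows, cols = len(ref_frame), len(ref_frame[0])
    best_mv, best_cost = None, None
    for dy in range(-search_range, search_range + 1):
        for dx in range(-search_range, search_range + 1):
            y, x = top + dy, left + dx
            # Skip candidates that fall outside the reference frame.
            if y < 0 or x < 0 or y + h > rows or x + w > cols:
                continue
            cost = sad(cur, extract(ref_frame, y, x, h, w))
            if best_cost is None or cost < best_cost:
                best_mv, best_cost = (dx, dy), cost
    return best_mv, best_cost


# Toy frames: the 2x2 block at (row 2, col 1) of the current frame is a
# copy of the block at (row 3, col 3) of the reference frame.
ref = [[10 * y + x for x in range(6)] for y in range(6)]
cur = [[0] * 6 for _ in range(6)]
for j in range(2):
    for i in range(2):
        cur[2 + j][1 + i] = ref[3 + j][3 + i]

mv, cost = full_search(cur, ref, top=2, left=1, h=2, w=2, search_range=2)
print(mv, cost)  # (2, 1) 0
```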

- Motion compensation, performed by the motion compensation unit 44, may involve fetching or generating the predictive block based on the motion vector determined by the motion estimation unit 42.

- the motion compensation unit 44 may locate a predictive block to which the motion vector points in one of the reference frame lists, retrieve the predictive block from the DPB 64, and forward the predictive block to the summer 50.

- the summer 50 then forms a residual block of pixel difference values by subtracting pixel values of the predictive block provided by the motion compensation unit 44 from the pixel values of the current video block being coded.
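
The subtraction performed by the summer can be sketched sample by sample. The function name `residual_block` and the nested-list block layout are assumptions made for illustration.

```python
def residual_block(current, predictive):
    """Per-sample difference: current block minus predictive block."""
    return [[c - p for c, p in zip(cur_row, pred_row)]
            for cur_row, pred_row in zip(current, predictive)]


# Illustrative sample values only.
cur_block = [[100, 102], [98, 97]]
pred_block = [[101, 100], [98, 99]]
print(residual_block(cur_block, pred_block))  # [[-1, 2], [0, -2]]
```

A good prediction leaves a residual whose values cluster around zero, which is what makes the later transform and quantization stages effective.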

- the pixel difference values forming the residual block may include luma or chroma component differences or both.

- the motion compensation unit 44 may also generate syntax elements associated with the video blocks of a video frame for use by the video decoder 30 in decoding the video blocks of the video frame.

- the syntax elements may include, for example, syntax elements defining the motion vector used to identify the predictive block, any flags indicating the prediction mode, or any other syntax information described herein. It is noted that the motion estimation unit 42 and the motion compensation unit 44 may be integrated together; they are illustrated separately in FIG. 2 for conceptual purposes.

- the intra BC unit 48 may generate vectors and fetch predictive blocks in a manner similar to that described above in connection with the motion estimation unit 42 and the motion compensation unit 44, but with the predictive blocks being in the same frame as the current block being coded and with the vectors being referred to as block vectors as opposed to motion vectors.

- the intra BC unit 48 may determine an intra-prediction mode to use to encode a current block.

- the intra BC unit 48 may encode a current block using various intra-prediction modes, e.g., during separate encoding passes, and test their performance through rate-distortion analysis.

- the intra BC unit 48 may select, among the various tested intra-prediction modes, an appropriate intra-prediction mode to use and generate an intra-mode indicator accordingly.

- the intra BC unit 48 may calculate rate-distortion values using a rate-distortion analysis for the various tested intra-prediction modes, and select the intra-prediction mode having the best rate-distortion characteristics among the tested modes as the appropriate intra-prediction mode to use.

- Rate-distortion analysis generally determines an amount of distortion (or error) between an encoded block and an original, unencoded block that was encoded to produce the encoded block, as well as a bitrate (i.e., a number of bits) used to produce the encoded block.

- Intra BC unit 48 may calculate ratios from the distortions and rates for the various encoded blocks to determine which intra-prediction mode exhibits the best rate-distortion value for the block.
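
One common way to combine distortion and rate into a single figure is a Lagrangian cost, J = D + λ·R. The text above does not specify the exact formula, so the sketch below is an assumption for illustration; `rd_cost`, `select_mode`, the mode names, and the numeric values are all hypothetical.

```python
def rd_cost(distortion, bits, lam):
    """Lagrangian rate-distortion cost J = D + lambda * R (assumed form)."""
    return distortion + lam * bits


def select_mode(candidates, lam):
    """Pick the mode with the lowest cost.

    candidates maps a mode name to a (distortion, bits) pair.
    """
    return min(candidates, key=lambda mode: rd_cost(*candidates[mode], lam))


# Hypothetical (distortion, bits) measurements for three tested modes.
tested = {
    "planar": (120, 10),
    "dc": (150, 6),
    "angular_34": (90, 30),
}
print(select_mode(tested, lam=4))   # planar  (160 vs 174 vs 210)
print(select_mode(tested, lam=10))  # dc      (220 vs 210 vs 390)
```

A larger λ weights bits more heavily, so the selection shifts toward cheaper modes even when they distort more.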

- the intra BC unit 48 may use the motion estimation unit 42 and the motion compensation unit 44, in whole or in part, to perform such functions for Intra BC prediction according to the implementations described herein.

- a predictive block may be a block that is deemed as closely matching the block to be coded, in terms of pixel difference, which may be determined by SAD, SSD, or other difference metrics, and identification of the predictive block may include calculation of values for sub-integer pixel positions.

- the video encoder 20 may form a residual block by subtracting pixel values of the predictive block from the pixel values of the current video block being coded, forming pixel difference values.

- the pixel difference values forming the residual block may include both luma and chroma component differences.

- the intra prediction processing unit 46 may intra-predict a current video block, as an alternative to the inter-prediction performed by the motion estimation unit 42 and the motion compensation unit 44, or the intra block copy prediction performed by the intra BC unit 48, as described above. In particular, the intra prediction processing unit 46 may determine an intra prediction mode to use to encode a current block.

- the intra prediction processing unit 46 may encode a current block using various intra prediction modes, e.g., during separate encoding passes, and the intra prediction processing unit 46 (or a mode selection unit, in some examples) may select an appropriate intra prediction mode to use from the tested intra prediction modes.

- the intra prediction processing unit 46 may provide information indicative of the selected intra-prediction mode for the block to the entropy encoding unit 56.

- the entropy encoding unit 56 may encode the information indicating the selected intra-prediction mode in a bitstream.

- the summer 50 forms a residual block by subtracting the predictive block from the current video block.

- the residual video data in the residual block may be included in one or more TUs and is provided to the transform processing unit 52.

- the transform processing unit 52 transforms the residual video data into transform coefficients using a transform, such as a Discrete Cosine Transform (DCT) or a conceptually similar transform.
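
To make the transform step concrete, the sketch below applies a floating-point, orthonormal 2-D DCT-II to a square residual block. It is only an illustration: practical codecs use integer approximations of the transform, and the helper names (`dct_matrix`, `dct2d`) are hypothetical.

```python
import math


def dct_matrix(n):
    """Orthonormal n x n DCT-II basis matrix."""
    rows = []
    for k in range(n):
        scale = math.sqrt(1.0 / n) if k == 0 else math.sqrt(2.0 / n)
        rows.append([scale * math.cos(math.pi * (2 * i + 1) * k / (2 * n))
                     for i in range(n)])
    return rows


def matmul(a, b):
    """Plain matrix product of two nested-list matrices."""
    return [[sum(x * y for x, y in zip(row, col)) for col in zip(*b)]
            for row in a]


def transpose(a):
    return [list(r) for r in zip(*a)]


def dct2d(block):
    """Separable 2-D DCT of a square block: C * block * C^T."""
    c = dct_matrix(len(block))
    return matmul(matmul(c, block), transpose(c))


# A flat 4x4 residual: all energy ends up in the DC coefficient.
flat = [[4] * 4 for _ in range(4)]
coeffs = dct2d(flat)
print(round(coeffs[0][0], 6))  # 16.0
```

The flat block shows the energy-compaction property: sixteen identical samples collapse into a single non-zero coefficient.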

- DCT Discrete Cosine Transform

- the transform processing unit 52 may send the resulting transform coefficients to the quantization unit 54.

- the quantization unit 54 quantizes the transform coefficients to further reduce the bit rate.

- the quantization process may also reduce the bit depth associated with some or all of the coefficients.

- the degree of quantization may be modified by adjusting a quantization parameter.
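
The effect of the quantization parameter can be sketched with a uniform scalar quantizer. The step-size relation used here, in which the step roughly doubles for every increase of 6 in QP, reflects common HEVC/VVC practice and is an assumption not stated in the text above; the function names are hypothetical, and real codecs use integer scaling tables rather than floating point.

```python
import math


def qstep(qp):
    """Approximate quantization step size (assumed HEVC/VVC-style relation)."""
    return 2.0 ** ((qp - 4) / 6.0)


def quantize(coeffs, qp):
    """Round each coefficient to the nearest multiple of the step size."""
    step = qstep(qp)

    def level(c):
        magnitude = math.floor(abs(c) / step + 0.5)
        return magnitude if c >= 0 else -magnitude

    return [[level(c) for c in row] for row in coeffs]


def dequantize(levels, qp):
    """Scale quantized levels back to approximate coefficient values."""
    step = qstep(qp)
    return [[lv * step for lv in row] for row in levels]


coeffs = [[64, -20], [7, 3]]
levels = quantize(coeffs, qp=22)        # step size is 8 at QP 22
print(levels)                           # [[8, -3], [1, 0]]
print(dequantize(levels, qp=22))        # [[64.0, -24.0], [8.0, 0.0]]
```

The round trip is lossy: -20 comes back as -24 and 3 comes back as 0, which is the distortion traded for a lower bit rate.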

- the quantization unit 54 may then perform a scan of a matrix including the quantized transform coefficients.

- the entropy encoding unit 56 may perform the scan. Following quantization, the entropy encoding unit 56 may use an entropy encoding technique to encode the quantized transform coefficients into a video bitstream, e.g., using Context Adaptive Variable Length Coding (CAVLC), Context Adaptive Binary Arithmetic Coding (CABAC), Syntax-based context-adaptive Binary Arithmetic Coding (SBAC), Probability Interval Partitioning Entropy (PIPE) coding, or another entropy encoding methodology or technique.

- CAVLC Context Adaptive Variable Length Coding

- CABAC Context Adaptive Binary Arithmetic Coding

- SBAC Syntax-based context-adaptive Binary Arithmetic Coding

- PIPE Probability Interval Partitioning Entropy

- the encoded bitstream may then be transmitted to the video decoder 30 as shown in FIG.1 or archived in the storage device 32 as shown in FIG.

- the entropy encoding unit 56 may also use an entropy encoding technique to encode the motion vectors and the other syntax elements for the current video frame being coded.

- the inverse quantization unit 58 and the inverse transform processing unit 60 apply inverse quantization and inverse transformation, respectively, to reconstruct the residual block in the pixel domain for generating a reference block for prediction of other video blocks. A reconstructed residual block may thereby be generated.

- the motion compensation unit 44 may generate a motion compensated predictive block from one or more reference blocks of the frames stored in the DPB 64.

- the motion compensation unit 44 may also apply one or more interpolation filters to the predictive block to calculate sub-integer pixel values for use in motion estimation.

- the summer 62 adds the reconstructed residual block to the motion compensated predictive block produced by the motion compensation unit 44 to produce a reference block for storage in the DPB 64.

- the reference block may then be used by the intra BC unit 48, the motion estimation unit 42, and the motion compensation unit 44 as a predictive block to inter predict another video block in a subsequent video frame.

- FIG. 3 is a block diagram illustrating an exemplary video decoder 30 in accordance with some implementations of the present application.

- the video decoder 30 includes a video data memory 79, an entropy decoding unit 80, a prediction processing unit 81, an inverse quantization unit 86, an inverse transform processing unit 88, a summer 90, and a DPB 92.

- the prediction processing unit 81 further includes a motion compensation unit 82, an intra prediction unit 84, and an intra BC unit 85.

- the video decoder 30 may perform a decoding process generally reciprocal to the encoding process described above with respect to the video encoder 20 in connection with FIG. 2.

- the motion compensation unit 82 may generate prediction data based on motion vectors received from the entropy decoding unit 80, while the intra prediction unit 84 may generate prediction data based on intra-prediction mode indicators received from the entropy decoding unit 80.

- a unit of the video decoder 30 may be tasked to perform the implementations of the present application.

- the implementations of the present disclosure may be divided among one or more of the units of the video decoder 30.

- the intra BC unit 85 may perform the implementations of the present application, alone, or in combination with other units of the video decoder 30, such as the motion compensation unit 82, the intra prediction unit 84, and the entropy decoding unit 80.

- the video decoder 30 may not include the intra BC unit 85 and the functionality of intra BC unit 85 may be performed by other components of the prediction processing unit 81, such as the motion compensation unit 82.

- the video data memory 79 may store video data, such as an encoded video bitstream, to be decoded by the other components of the video decoder 30.

- the video data stored in the video data memory 79 may be obtained, for example, from the storage device 32, from a local video source, such as a camera, via wired or wireless network communication of video data, or by accessing physical data storage media (e.g., a flash drive or hard disk).

- the video data memory 79 may include a Coded Picture Buffer (CPB) that stores encoded video data from an encoded video bitstream.

- the DPB 92 of the video decoder 30 stores reference video data for use in decoding video data by the video decoder 30 (e.g., in intra or inter predictive coding modes).

- the video data memory 79 and the DPB 92 may be formed by any of a variety of memory devices, such as dynamic random access memory (DRAM), including Synchronous DRAM (SDRAM), Magneto-resistive RAM (MRAM), Resistive RAM (RRAM), or other types of memory devices.

- DRAM dynamic random access memory

- SDRAM Synchronous DRAM

- MRAM Magneto-resistive RAM

- RRAM Resistive RAM

- the video data memory 79 and the DPB 92 are depicted as two distinct components of the video decoder 30 in FIG.3.

- the video data memory 79 and the DPB 92 may be provided by the same memory device or separate memory devices.

- the video data memory 79 may be on-chip with other components of the video decoder 30, or off-chip relative to those components.

- the video decoder 30 receives an encoded video bitstream that represents video blocks of an encoded video frame and associated syntax elements.

- the video decoder 30 may receive the syntax elements at the video frame level and/or the video block level.

- the entropy decoding unit 80 of the video decoder 30 may use an entropy decoding technique to decode the bitstream to obtain quantized coefficients, motion vectors or intra-prediction mode indicators, and other syntax elements.

- the entropy decoding unit 80 then forwards the motion vectors or intra-prediction mode indicators and other syntax elements to the prediction processing unit 81.

- the intra prediction unit 84 of the prediction processing unit 81 may generate prediction data for a video block of the current video frame based on a signaled intra-prediction mode and reference data from previously decoded blocks of the current frame.

- the motion compensation unit 82 of the prediction processing unit 81 produces one or more predictive blocks for a video block of the current video frame based on the motion vectors and other syntax elements received from the entropy decoding unit 80.

- Each of the predictive blocks may be produced from a reference frame within one of the reference frame lists.

- the video decoder 30 may construct the reference frame lists, e.g., List 0 and List 1, using default construction techniques based on reference frames stored in the DPB 92.

- the intra BC unit 85 of the prediction processing unit 81 produces predictive blocks for the current video block based on block vectors and other syntax elements received from the entropy decoding unit 80.

- the predictive blocks may be within a reconstructed region of the same picture as the current video block processed by the video encoder 20.

- the motion compensation unit 82 and/or the intra BC unit 85 determines prediction information for a video block of the current video frame by parsing the motion vectors and other syntax elements, and then uses the prediction information to produce the predictive blocks for the current video block being decoded.

- the motion compensation unit 82 uses some of the received syntax elements to determine a prediction mode (e.g., intra or inter prediction) used to code video blocks of the video frame, an inter prediction frame type (e.g., B or P), construction information for one or more of the reference frame lists for the frame, motion vectors for each inter predictive encoded video block of the frame, inter prediction status for each inter predictive coded video block of the frame, and other information to decode the video blocks in the current video frame.

- the intra BC unit 85 may use some of the received syntax elements, e.g., a flag, to determine that the current video block was predicted using the intra BC mode, construction information of which video blocks of the frame are within the reconstructed region and should be stored in the DPB 92, block vectors for each intra BC predicted video block of the frame, intra BC prediction status for each intra BC predicted video block of the frame, and other information to decode the video blocks in the current video frame.

- the motion compensation unit 82 may also perform interpolation using the interpolation filters as used by the video encoder 20 during encoding of the video blocks to calculate interpolated values for sub-integer pixels of reference blocks.

- the motion compensation unit 82 may determine the interpolation filters used by the video encoder 20 from the received syntax elements and use the interpolation filters to produce predictive blocks.

- the inverse quantization unit 86 inversely quantizes the quantized transform coefficients provided in the bitstream and decoded by the entropy decoding unit 80 using the same quantization parameter calculated by the video encoder 20 for each video block in the video frame to determine a degree of quantization.

- the inverse transform processing unit 88 applies an inverse transform, e.g., an inverse DCT, an inverse integer transform, or a conceptually similar inverse transform process, to the transform coefficients in order to reconstruct the residual blocks in the pixel domain.

- the summer 90 reconstructs a decoded video block for the current video block by summing the residual block from the inverse transform processing unit 88 and a corresponding predictive block generated by the motion compensation unit 82 and the intra BC unit 85.

- the decoded video block may also be referred to as a reconstructed block for the current video block.

- An in-loop filter 91 such as a deblocking filter, SAO filter, CCSAO filter, and/or ALF may be positioned between the summer 90 and the DPB 92 to further process the decoded video block.

- the in-loop filter 91 may be omitted, and the decoded video block may be directly provided by the summer 90 to the DPB 92.

- the decoded video blocks in a given frame are then stored in the DPB 92, which stores reference frames used for subsequent motion compensation of next video blocks.

- the DPB 92, or a memory device separate from the DPB 92 may also store decoded video for later presentation on a display device, such as the display device 34 of FIG.1.

- a video sequence typically includes an ordered set of frames or pictures. Each frame may include three sample arrays, denoted SL, SCb, and SCr.

- SL is a two-dimensional array of luma samples.

- SCb is a two-dimensional array of Cb chroma samples.

- SCr is a two-dimensional array of Cr chroma samples.

- a frame may be monochrome and therefore includes only one two-dimensional array of luma samples.

- the video encoder 20 (or more specifically the partition unit 45) generates an encoded representation of a frame by first partitioning the frame into a set of CTUs.

- a video frame may include an integer number of CTUs arranged consecutively in a raster scan order from left to right and from top to bottom.
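
The raster-scan arrangement can be illustrated by counting CTUs and locating one from its index. This sketch assumes that a frame whose dimensions are not multiples of the CTU size is covered by rounding the CTU count up; `ctu_grid` and `ctu_origin` are hypothetical helper names.

```python
def ctu_grid(frame_w, frame_h, ctu_size):
    """Number of CTU columns and rows needed to cover the frame."""
    cols = -(-frame_w // ctu_size)  # ceiling division
    rows = -(-frame_h // ctu_size)
    return cols, rows


def ctu_origin(ctu_addr, cols, ctu_size):
    """Top-left luma sample (x, y) of the CTU at a raster-scan address."""
    row, col = divmod(ctu_addr, cols)
    return col * ctu_size, row * ctu_size


cols, rows = ctu_grid(1920, 1080, 128)
print(cols, rows, cols * rows)      # 15 9 135
print(ctu_origin(16, cols, 128))    # (128, 128)
```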

- Each CTU is a largest logical coding unit and the width and height of the CTU are signaled by the video encoder 20 in a sequence parameter set, such that all the CTUs in a video sequence have the same size, being one of 128×128, 64×64, 32×32, and 16×16. But it should be noted that a CTU in the present disclosure is not necessarily limited to a particular size. As shown in FIG. 4B, each CTU may include one CTB of luma samples, two corresponding coding tree blocks of chroma samples, and syntax elements used to code the samples of the coding tree blocks.

- a CTU may include a single coding tree block and syntax elements used to code the samples of the coding tree block.

- a coding tree block may be an N×N block of samples.

- the video encoder 20 may recursively perform tree partitioning such as binary-tree partitioning, ternary-tree partitioning, quad-tree partitioning or a combination thereof on the coding tree blocks of the CTU and divide the CTU into smaller CUs.

- the 64×64 CTU 400 is first divided into four smaller CUs, each having a block size of 32×32.

- CU 410 and CU 420 are each divided into four CUs of 16×16 by block size.

- the two 16×16 CUs 430 and 440 are each further divided into four CUs of 8×8 by block size.

- FIG. 4D depicts a quad-tree data structure illustrating the end result of the partition process of the CTU 400 as depicted in FIG. 4C, each leaf node of the quad-tree corresponding to one CU of a respective size ranging from 32×32 to 8×8.
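
A recursive quad-tree split of this kind can be sketched as follows. The positions chosen for the split nodes are hypothetical (the figures are not reproduced here), but the counts follow the description: a 64×64 CTU split into four 32×32 CUs, two of which are split into 16×16 CUs, two of which are split again into 8×8 CUs. The name `quadtree_leaves` and the `should_split` callback are assumptions for illustration.

```python
def quadtree_leaves(x, y, size, should_split, min_size=8):
    """Return the leaf CUs as (x, y, size) tuples in z-scan order."""
    if size > min_size and should_split(x, y, size):
        half = size // 2
        leaves = []
        for dy in (0, half):
            for dx in (0, half):
                leaves += quadtree_leaves(x + dx, y + dy, half,
                                          should_split, min_size)
        return leaves
    return [(x, y, size)]


# Hypothetical split decisions, keyed by (x, y, size) of the node to split.
split_nodes = {(0, 0, 64), (0, 0, 32), (32, 0, 32), (0, 0, 16), (16, 0, 16)}
leaves = quadtree_leaves(0, 0, 64, lambda x, y, s: (x, y, s) in split_nodes)

sizes = [s for _, _, s in leaves]
print(sizes.count(32), sizes.count(16), sizes.count(8))  # 2 6 8
```

The leaves always tile the CTU exactly, so their areas sum to 64 × 64 regardless of which nodes are split.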

- each CU may include a CB of luma samples and two corresponding coding blocks of chroma samples of a frame of the same size, and syntax elements used to code the samples of the coding blocks.

- a CU may include a single coding block and syntax structures used to code the samples of the coding block.

- quad-tree partitioning depicted in FIGS. 4C and 4D is only for illustrative purposes and one CTU can be split into CUs to adapt to varying local characteristics based on quad/ternary/binary-tree partitions.

- each quad-tree leaf CU can be further partitioned by a binary and ternary tree structure.

- As shown in FIG. 4E, there are multiple possible partitioning types of a coding block having a width W and a height H, i.e., quaternary partitioning, vertical binary partitioning, horizontal binary partitioning, vertical ternary partitioning, vertical extended ternary partitioning, horizontal ternary partitioning, and horizontal extended ternary partitioning.

- the video encoder 20 may further partition a coding block of a CU into one or more M×N PBs.

- a PB may include a rectangular (square or non-square) block of samples on which the same prediction, inter or intra, is applied.

- a PU of a CU may include a PB of luma samples, two corresponding PBs of chroma samples, and syntax elements used to predict the PBs.

- a PU may include a single PB and syntax structures used to predict the PB.

- the video encoder 20 may generate predictive luma, Cb, and Cr blocks for luma, Cb, and Cr PBs of each PU of the CU.

- the video encoder 20 may use intra prediction or inter prediction to generate the predictive blocks for a PU.

- the video encoder 20 may generate the predictive blocks of the PU based on decoded samples of the frame associated with the PU. If the video encoder 20 uses inter prediction to generate the predictive blocks of a PU, the video encoder 20 may generate the predictive blocks of the PU based on decoded samples of one or more frames other than the frame associated with the PU.

- the video encoder 20 may generate a luma residual block for the CU by subtracting the CU’s predictive luma blocks from its original luma coding block such that each sample in the CU’s luma residual block indicates a difference between a luma sample in one of the CU’s predictive luma blocks and a corresponding sample in the CU’s original luma coding block.

- the video encoder 20 may generate a Cb residual block and a Cr residual block for the CU, respectively, such that each sample in the CU’s Cb residual block indicates a difference between a Cb sample in one of the CU’s predictive Cb blocks and a corresponding sample in the CU’s original Cb coding block, and each sample in the CU’s Cr residual block may indicate a difference between a Cr sample in one of the CU’s predictive Cr blocks and a corresponding sample in the CU’s original Cr coding block.

- video encoder 20 may use quad-tree partitioning to decompose the luma, Cb, and Cr residual blocks of a CU into one or more luma, Cb, and Cr transform blocks respectively.

- a transform block may include a rectangular (square or non-square) block of samples on which the same transform is applied.

- a TU of a CU may include a transform block of luma samples, two corresponding transform blocks of chroma samples, and syntax elements used to transform the transform block samples.

- each TU of a CU may be associated with a luma transform block, a Cb transform block, and a Cr transform block.

- the luma transform block associated with the TU may be a sub-block of the CU’s luma residual block.

- the Cb transform block may be a sub-block of the CU’s Cb residual block.

- the Cr transform block may be a sub-block of the CU’s Cr residual block.

- a TU may include a single transform block and syntax structures used to transform the samples of the transform block.

- Video encoder 20 may apply one or more transforms to a luma transform block of a TU to generate a luma coefficient block for the TU.

- a coefficient block may be a two-dimensional array of transform coefficients.

- a transform coefficient may be a scalar quantity.

- the video encoder 20 may apply one or more transforms to a Cb transform block of a TU to generate a Cb coefficient block for the TU.

- the video encoder 20 may apply one or more transforms to a Cr transform block of a TU to generate a Cr coefficient block for the TU.

- the video encoder 20 may quantize the coefficient block. Quantization generally refers to a process in which transform coefficients are quantized to possibly reduce the amount of data used to represent the transform coefficients, providing further compression.

- the video encoder 20 may apply an entropy encoding technique to encode syntax elements indicating the quantized transform coefficients. For example, the video encoder 20 may perform CABAC on the syntax elements indicating the quantized transform coefficients. Finally, the video encoder 20 may output a bitstream that includes a sequence of bits that form a representation of coded frames and associated data, which is either saved in the storage device 32 or transmitted to the destination device 14. After receiving a bitstream generated by the video encoder 20, the video decoder 30 may parse the bitstream to obtain syntax elements from the bitstream. The video decoder 30 may reconstruct the frames of the video data based at least in part on the syntax elements obtained from the bitstream.

- the process of reconstructing the video data is generally reciprocal to the encoding process performed by the video encoder 20.

- the video decoder 30 may perform inverse transforms on the coefficient blocks associated with TUs of a current CU to reconstruct residual blocks associated with the TUs of the current CU.

- the video decoder 30 also reconstructs the coding blocks of the current CU by adding the samples of the predictive blocks for PUs of the current CU to corresponding samples of the transform blocks of the TUs of the current CU. After reconstructing the coding blocks for each CU of a frame, video decoder 30 may reconstruct the frame.
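
The decoder-side addition can be sketched per sample. Clipping the sum to the valid sample range is an assumption added here (it is common practice but is not stated above), as are the function name `reconstruct_block` and the 8-bit default.

```python
def reconstruct_block(predictive, residual, bit_depth=8):
    """Add residual to prediction and clip to [0, 2**bit_depth - 1]."""
    max_val = (1 << bit_depth) - 1
    return [[min(max(p + r, 0), max_val) for p, r in zip(pred_row, res_row)]
            for pred_row, res_row in zip(predictive, residual)]


# Illustrative sample values only.
pred = [[250, 3], [128, 64]]
resid = [[10, -5], [2, -4]]
print(reconstruct_block(pred, resid))  # [[255, 0], [130, 60]]
```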

- video coding achieves video compression using primarily two modes, i.e., intra-frame prediction (or intra-prediction) and inter-frame prediction (or inter-prediction).

- Intra Block Copy IBC

- inter-frame prediction contributes more to the coding efficiency than intra-frame prediction because of the use of motion vectors for predicting a current video block from a reference video block.

- the motion vector predictor of the current CU is subtracted from the actual motion vector of the current CU to produce a Motion Vector Difference (MVD) for the current CU.
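
The MVD relation is a per-component subtraction, and the decoder can invert it by adding the predictor back. The tuple layout `(x, y)` and the function names below are assumptions for illustration.

```python
def motion_vector_difference(mv, mvp):
    """MVD = actual motion vector minus motion vector predictor."""
    return (mv[0] - mvp[0], mv[1] - mvp[1])


def reconstruct_motion_vector(mvp, mvd):
    """Decoder side: predictor plus signaled difference."""
    return (mvp[0] + mvd[0], mvp[1] + mvd[1])


# Illustrative values only.
actual_mv = (14, -3)
predictor = (12, -2)
mvd = motion_vector_difference(actual_mv, predictor)
print(mvd)                                        # (2, -1)
print(reconstruct_motion_vector(predictor, mvd))  # (14, -3)
```

When the predictor is accurate, the difference is small and therefore cheaper to entropy-code than the full vector.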

- MVD Motion Vector Difference

- a set of rules can be adopted by both the video encoder 20 and the video decoder 30 for constructing a motion vector candidate list (also known as a “merge list”) for a current CU using those potential candidate motion vectors associated with spatially neighboring CUs and/or temporally co-located CUs of the current CU and then selecting one member from the motion vector candidate list as a motion vector predictor for the current CU.

- CMV partitioning in Enhanced Compression Model ECM

- a CTU can be split into CUs by using a quaternary-tree structure (denoted as a coding tree) to adapt to various local characteristics.

- a decision whether to code a picture area using inter-picture (temporal) or intra-picture (spatial) prediction can be made at the leaf CU level.

- Each leaf CU can be further split into one, two, or four PUs according to a PU splitting type. Within each PU, the same prediction process can be applied, and the relevant information can be transmitted to the video decoder 30 on a PU basis.

- After obtaining a residual block by applying the prediction process based on the PU splitting type, the leaf CU can be partitioned into transform units (TUs) according to another quaternary-tree structure similar to the coding tree for the CU.

- TUs transform units

- a feature of the HEVC structure includes that it has multiple partition unit concepts such as CU, PU, and TU.

- a quadtree with a nested multi-type tree using a binary-split or ternary-split structure replaces the concepts of the multiple partition unit types (e.g., CU, PU, TU) in HEVC.

- the separation of the CU, PU, and TU concepts is removed except as needed for CUs that have a size too large for the maximum transform length, thereby supporting a higher flexibility for CU partition shapes.

- a CU can have either a square or rectangular shape.

- a CTU is first partitioned by a quaternary tree (a.k.a. quadtree) structure.

- the quaternary tree leaf nodes can be further partitioned by a multi-type tree structure.

- As shown in FIG. 4E, there are various splitting types in the multi-type tree structure, including vertical binary splitting (SPLIT_BT_VER), horizontal binary splitting (SPLIT_BT_HOR), vertical ternary splitting (SPLIT_TT_VER), and horizontal ternary splitting (SPLIT_TT_HOR).
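
The four splitting types can be illustrated by the sub-block sizes they produce. The sketch assumes binary splits halve the block and ternary splits use a 1:2:1 ratio, which is how these splits are generally defined in VVC but is not spelled out in the text above; `split_block` is a hypothetical helper.

```python
def split_block(w, h, mode):
    """Return the (width, height) of each sub-block for a split type."""
    if mode == "SPLIT_BT_VER":
        return [(w // 2, h), (w // 2, h)]
    if mode == "SPLIT_BT_HOR":
        return [(w, h // 2), (w, h // 2)]
    if mode == "SPLIT_TT_VER":  # assumed 1:2:1 ratio across the width
        return [(w // 4, h), (w // 2, h), (w // 4, h)]
    if mode == "SPLIT_TT_HOR":  # assumed 1:2:1 ratio across the height
        return [(w, h // 4), (w, h // 2), (w, h // 4)]
    raise ValueError("unknown split type: " + mode)


print(split_block(32, 16, "SPLIT_BT_VER"))  # [(16, 16), (16, 16)]
print(split_block(32, 16, "SPLIT_TT_VER"))  # [(8, 16), (16, 16), (8, 16)]
print(split_block(32, 16, "SPLIT_TT_HOR"))  # [(32, 4), (32, 8), (32, 4)]
```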

- the multi-type tree leaf nodes are referred to as CUs. Unless a CU is too large for the maximum transform length, this segmentation with the multi-type tree structure is used for prediction and transform processing without any further partitioning.

- FIG. 5 illustrates a diagram of intra modes in accordance with some examples.

- a basic intra prediction scheme applied in VVC is almost the same as that of HEVC, except that several prediction tools are further extended, added and/or improved, e.g., extended intra prediction with wide-angle intra modes, Multiple Reference Line (MRL) intra prediction, Position-Dependent intra Prediction Combination (PDPC), ISP prediction, Cross-Component Linear Model (CCLM) prediction, and Matrix weighted Intra Prediction (MIP).

- MIP Matrix weighted Intra Prediction

- the number of angular intra modes is extended from 33 in HEVC to 93 in VVC.

- modes 2 to 66 are conventional angular intra modes

- modes -1 to -14 and modes 67 to 80 are wide-angle intra modes.

- the planar mode (mode 0 in FIG.5) and Direct Current (DC) mode (mode 1 in FIG.5) of HEVC are also applied in VVC.
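
For orientation, the conventional planar predictor can be sketched as the average of a vertical and a horizontal linear interpolation. The formula below follows the planar mode as it is commonly described for VVC and is an assumption here: it is not quoted from this application and does not represent the improved method the application proposes. The function name and argument layout are hypothetical, and block dimensions are assumed to be powers of two.

```python
def planar_predict(top, left, w, h):
    """Conventional planar intra prediction for a w x h block.

    top  : w + 1 reference samples above the block; top[w] is the top-right.
    left : h + 1 reference samples left of the block; left[h] is the
           bottom-left.
    """
    log2w = w.bit_length() - 1
    log2h = h.bit_length() - 1
    pred = [[0] * w for _ in range(h)]
    for y in range(h):
        for x in range(w):
            # Vertical interpolation between top[x] and the bottom-left sample.
            pred_v = ((h - 1 - y) * top[x] + (y + 1) * left[h]) << log2w
            # Horizontal interpolation between left[y] and the top-right sample.
            pred_h = ((w - 1 - x) * left[y] + (x + 1) * top[w]) << log2h
            pred[y][x] = (pred_v + pred_h + w * h) >> (log2w + log2h + 1)
    return pred


flat = planar_predict([100] * 5, [100] * 5, 4, 4)
print(flat[0])  # [100, 100, 100, 100]

ramp = planar_predict([10, 20, 30, 40, 50], [10, 20, 30, 40, 50], 4, 4)
print(ramp[0][0])  # 20
```

Constant reference samples yield a constant block, while sloped references yield a smooth gradient, which is why planar mode suits slowly varying regions.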

- angular intra modes may be selected from the 93 angular intra modes for different block shapes. For example, for both square and rectangular video blocks, besides planar and DC modes, 65 angular intra modes among the 93 angular intra modes are also supported for each block shape.

- an index of a wide-angle intra mode of the video block may be adaptively determined by video decoder 30 according to an index of a conventional angular intra mode received from video encoder 20 using a mapping relationship as shown in Table 1 below.

- the wide-angle intra modes are signaled by video encoder 20 using the indexes of the conventional angular intra modes, which are mapped to indexes of the wide-angle intra modes by video decoder 30 after being parsed, thus ensuring that a total number (i.e., 67) of intra modes (i.e., the planar mode, the DC mode and 65 angular intra modes among the 93 angular intra modes) is unchanged, and the intra mode coding method is unchanged. As a result, a good efficiency of signaling intra modes is achieved while providing a consistent design across different block sizes.

- Table 1 shows a mapping relationship between indexes of conventional angular intra modes and indexes of wide-angle intra modes for the intra prediction of different block shapes in VVC, wherein W represents a width of a video block, and H represents a height of the video block.
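
Table 1 itself is not reproduced in this text. As a rough illustration of the kind of mapping it describes, the following Python sketch follows the remapping rule as it is commonly stated for VVC; the function name `map_to_wide_angle` and the thresholds are assumptions of this sketch and should be checked against Table 1 and the VVC specification.

```python
import math


def map_to_wide_angle(mode: int, width: int, height: int) -> int:
    """Map a signaled conventional mode index (0..66) to the mode actually used.

    Assumed rule (to be checked against Table 1): for non-square blocks, a few
    conventional angular modes near one diagonal are replaced by wide-angle modes.
    """
    # Planar (0), DC (1) and square blocks are never remapped.
    if mode < 2 or mode > 66 or width == height:
        return mode
    ratio = abs(int(math.log2(width / height)))  # |log2(W/H)|, W and H powers of two
    if width > height:
        limit = 8 + 2 * ratio if ratio > 1 else 8
        if mode < limit:
            return mode + 65  # e.g. mode 2 becomes wide-angle mode 67
    else:
        limit = 60 - 2 * ratio if ratio > 1 else 60
        if mode > limit:
            return mode - 67  # e.g. mode 66 becomes wide-angle mode -1
    return mode
```

Under this assumed rule the remapped indexes stay within the ranges -1 to -14 and 67 to 80 given above, and the signaled index range (0 to 66) is unchanged.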

- top reference samples (illustrated in gray color) with length 2W+1 and the left reference samples (illustrated in gray color) with length 2H+1 are used for wide-angle intra prediction to support the angular intra modes.

- a current video block (e.g., a current CU)

- two vertically-adjacent predicted samples (e.g., 702 and 704)

- two non-adjacent reference samples (e.g., 706 and 708)

- Low-pass reference samples filter and side smoothing can be applied to the wide-angle prediction to reduce the negative effect of the increased gap Δpα in FIG. 7.

- a wide-angle mode represents a non-fractional offset

- 8 modes in the wide-angle modes satisfy this condition, which are [-14, -12, -10, -6, 72, 76, 78, 80]. When a video block is predicted by these modes, samples in the reference buffer are directly copied without applying any interpolation. With such a modification, the number of samples needed to be smoothed is reduced.

- the modification aligns the design of non-fractional modes in the conventional prediction modes and wide-angle modes.

- 4:2:2 and 4:4:4 chroma formats are supported as well as 4:2:0.

- Chroma derived mode (DM) derivation table for 4:2:2 chroma format was initially ported from HEVC, extending the number of entries from 35 to 67 to align with the extension of intra prediction modes. Since the HEVC specification does not support interpolation angles below -135 degrees and above 45 degrees, luma intra prediction modes ranging from 2 to 5 are mapped to 2.

- chroma DM derivation table for 4:2:2 chroma format is updated by replacing some values of the entries of the mapping table to convert the interpolation angle more precisely for chroma blocks.

- the intra prediction samples are generated from a set of neighboring reference samples, which may introduce discontinuities along block boundaries between a current video block and neighboring video blocks thereof.

- the PDPC tool can be used in VVC to solve such problems by employing a weighted combination of intra prediction samples with boundary reference samples.

- PDPC may be enabled for the following intra modes without signaling: planar mode, DC mode, angular intra modes with indexes less than or equal to that of a horizontal intra mode (i.e., mode 18), and angular intra modes with indexes greater than or equal to that of a vertical intra mode (i.e., mode 50) and less than or equal to 80.

- when a Block Differential Pulse Coded Modulation (BDPCM) mode is applied for the current block, or an index of a selected row/column of reference samples for MRL intra prediction is greater than 0, PDPC is not applied.
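
The PDPC enabling conditions listed above can be summarized as a small predicate. The following Python sketch is illustrative only; the function name `pdpc_applies` and its parameters are hypothetical, while the mode indexes (0, 1, 18, 50, 80) are those given in the text.

```python
PLANAR, DC = 0, 1          # non-angular modes
HORIZONTAL, VERTICAL = 18, 50
MAX_WIDE_ANGLE = 80


def pdpc_applies(mode: int, bdpcm: bool = False, mrl_index: int = 0) -> bool:
    """Return True if PDPC is applied (without signaling) for this block."""
    # PDPC is switched off for BDPCM blocks and for MRL reference lines other than line 0.
    if bdpcm or mrl_index > 0:
        return False
    if mode in (PLANAR, DC):
        return True
    # Angular modes at or below horizontal, or from vertical up to mode 80.
    return mode <= HORIZONTAL or VERTICAL <= mode <= MAX_WIDE_ANGLE
```

For example, `pdpc_applies(34)` is false because mode 34 lies strictly between the horizontal and vertical modes, while `pdpc_applies(50, mrl_index=1)` is false because a non-zero reference line is selected.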

- BDPCM Block Differential Pulse Coded Modulation

- sample 802 is one of samples in the current video block that is to be predicted/interpolated.

- Reference samples of the current video block, located above and to the left of the current video block and used for interpolating samples (e.g., 802) of the current video block, are illustrated as gray squares.

- Reference sample 808 has the same horizontal coordinate as sample 802 and reference sample 810 has the same vertical coordinate as sample 802. If sample 802 is located at coordinate (x, y), reference samples 808 and 810 are located at (x, -1) and (-1, y), respectively.
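
For orientation, the conventional planar prediction that these reference positions feed into can be sketched as follows. This Python sketch uses the planar formula as it is commonly stated for VVC, not a formula taken from this text; the function name `planar_predict` and the array layout (`top[x]` for the sample at (x, -1), `left[y]` for the sample at (-1, y), with one extra top-right and bottom-left entry) are assumptions of the sketch.

```python
def planar_predict(top, left, width, height):
    """Conventional planar prediction for a width x height block.

    top  : width + 1 samples, top[x] = p(x, -1), top[width] is the top-right sample
    left : height + 1 samples, left[y] = p(-1, y), left[height] is the bottom-left sample
    width and height are assumed to be powers of two.
    """
    log2w = width.bit_length() - 1
    log2h = height.bit_length() - 1
    pred = [[0] * width for _ in range(height)]
    for y in range(height):
        for x in range(width):
            # Vertical interpolation between the sample above and the bottom-left sample.
            pred_v = ((height - 1 - y) * top[x] + (y + 1) * left[height]) << log2w
            # Horizontal interpolation between the sample to the left and the top-right sample.
            pred_h = ((width - 1 - x) * left[y] + (x + 1) * top[width]) << log2h
            # Average the two interpolations with rounding.
            pred[y][x] = (pred_v + pred_h + width * height) >> (log2w + log2h + 1)
    return pred
```

With flat reference samples the sketch returns a flat block, which is the expected behavior of a planar predictor.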

- FIG.9 is a block diagram illustrating an exemplary sample prediction process 900 in accordance with some implementations of the present disclosure.

- sample prediction process 900 can be performed by prediction processing unit 41 of video encoder 20, or prediction processing unit 81 of video decoder 30.

- sample prediction process 900 may be performed by a processor (e.g., a processor 2520 as shown in FIG. 25) on an encoder side or a decoder side.

- a processor e.g., a processor 2520 as shown in FIG. 25

- sample prediction process 900 may include a reference sample determination 902, a block-level interpolation direction determination 904 or a sample-specific interpolation direction determination 906, and a sample interpolation 908.

- FIG.9 is described below together with FIGS.10-15.

- the processor may receive a plurality of reconstructed samples in neighboring block regions (e.g., previously decoded blocks) in a video frame of a video.

- the neighboring video blocks are located in a left neighboring region of the current video block, a top neighboring region of the current video block, or both, illustrated as gray squares in FIG. 8.

- the reconstructed samples may be located on any neighboring regions of the current video block illustrated in gray squares as shown in FIG. 10.

- the processor may determine reference samples in the neighboring video blocks according to two slant lines through a sample of the current video block. One of the two slant lines is along an interpolation direction and the other slant line is perpendicular to the first slant line.

- slant line 1012 is perpendicular to slant line 1014 on sample 1002.

- four reconstructed samples (e.g., sample 1004, sample 1006, sample 1008, and sample 1010) are determined by the processor according to slant lines 1012 and 1014 as reference samples for interpolating sample 1002 in the current video block.

- the processor may generate a prediction of sample 1002 based on sample 1004, sample 1006, sample 1008, and sample 1010 according to bilinear interpolation.

- the processor interpolates each of samples in the current video block along a single interpolation direction.

- the interpolation direction is a block-level interpolation direction.

- a block-level interpolation direction with interpolation angles of ⁇ 1 and ⁇ 2 is used to interpolate all samples in the current video block (e.g., samples 1102 and 1104).

- block-level interpolation direction determination 904 may be implemented by a processor in an encoder (e.g., video encoder 20). The processor determines the block-level interpolation direction of the current video block according to a predetermined set of candidate interpolation directions.

- the predetermined set of candidate interpolation directions may include, but is not limited to, {0°, 30°, 45°, 60°, 135°}.

- the processor calculates the rate-distortion cost of the current video block when the video block is interpolated according to each of the candidate interpolation directions.

- the processor first determines reference samples along the candidate interpolation direction for each sample and interpolates the sample based on the determined reference samples. The distances between the sample and each of the reference samples are used as weights in the interpolation.

- the processor then calculates a rate-distortion cost of the sample, where the rate-distortion cost of the sample is determined based on an amount of distortion between the interpolated sample and the original, uncoded sample.

- the processor further sums up the rate-distortion costs of all the samples in the current video block as the rate-distortion cost of the current video block.

- the processor determines the candidate interpolation direction associated with the rate-distortion cost having a minimum value as the block-level interpolation direction.
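
The encoder-side selection described in the preceding steps can be sketched as a loop over the candidate directions. This Python sketch is a simplification under stated assumptions: the function name `select_block_direction` and the `predict` callback are hypothetical, the per-sample cost is taken to be the squared error between the interpolated and original samples, and any rate term is omitted.

```python
from typing import Callable, List, Sequence, Tuple

Block = List[List[float]]


def select_block_direction(
    original: Block,
    candidates: Sequence[float],
    predict: Callable[[float], Block],
) -> Tuple[float, float]:
    """Pick the candidate direction whose predicted block has the lowest summed cost.

    predict(angle) is assumed to return the block interpolated along `angle`.
    """
    best_angle, best_cost = None, float("inf")
    for angle in candidates:
        predicted = predict(angle)
        # Sum the per-sample costs over the whole block (squared error, an assumption).
        cost = sum(
            (o - p) ** 2
            for row_o, row_p in zip(original, predicted)
            for o, p in zip(row_o, row_p)
        )
        if cost < best_cost:
            best_angle, best_cost = angle, cost
    return best_angle, best_cost
```

A call such as `select_block_direction(block, [0, 30, 45, 60, 135], predict)` returns the chosen direction together with its cost; the chosen direction is what would then be signaled to the decoder.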

- the processor may generate a syntax element (e.g., planar_pred_dir) and signal the determined block-level interpolation direction into a bitstream to a decoder (e.g., decoder 30).

- a processor in the decoder e.g., video decoder 30

- the processor in the decoder may decode the coded current video block based on the received syntax element.

- all reference samples used for interpolating samples in the current video block (e.g., samples 1102 and 1104) lie on the interpolation direction. In some implementations, reference samples do not lie on the interpolation direction.

- FIG. 12 illustrates a diagram of intra prediction using fractional reference samples to interpolate a sample in a video block in accordance with some implementations of the present disclosure.

- when the interpolation angle in FIG. 12 is not equal to 45° or 135°, some of the reconstructed samples (illustrated in gray squares) in the neighboring block regions may not lie on the interpolation direction (illustrated in dashed arrow lines).

- the processor generates fractional reference samples (e.g., samples 1204, 1206, 1208, and 1210) by interpolating two adjacent reconstructed samples nearest to the interpolation direction (e.g., dash dot lines 1212 and 1214). As shown in FIG.12, fractional reference sample 1204 (illustrated in dashed square) is generated by interpolating reconstructed samples 1204-1 and 1204-2.

- fractional reference sample 1206 is generated by interpolating reconstructed samples 1206-1 and 1206-2.

- fractional reference sample 1208 is generated by interpolating reconstructed samples 1208-1 and 1208-2.

- fractional reference sample 1210 is generated by interpolating reconstructed samples 1210-1 and 1210-2.

- the processor can calculate coordinates of the fractional reference samples based on the width and the height of the current video block and the interpolation direction of the current video block according to Equations (3-1) – (6-2):

- W and H denote a width and a height of the current video block

- ⁇ denotes an interpolation angle of the current video block and denotes the coordinate of the sample to be interpolated (e.g., sample 1202 in FIG. 12)

- (x1, y1) denotes the coordinate of the fractional reference sample (e.g., sample 1204 in FIG. 12) in the neighboring block region that is to the right of the current video block

- (x2, y2) denotes the coordinate of the fractional reference sample (e.g., sample 1206 in FIG. 12) in the neighboring block region that is to the left of the current video block

- (x3, y3) denotes the coordinate of the fractional reference sample (e.g., sample 1208 in FIG.

- (x4, y4) denotes the coordinate of the fractional reference sample (e.g., sample 1210 in FIG.12) in the neighboring block region that is below the current video block.

- the processor may determine weights used for interpolating each of the fractional reference samples based on the calculated coordinate of the fractional reference sample and the two adjacent reconstructed samples.

- the processor further interpolates the sample (e.g., sample 1202 in FIG. 12) in the current video block based on the four fractional reference samples generated by the processor.
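
The two interpolation stages described above can be sketched in Python as follows. Both helper names are hypothetical. The first helper linearly interpolates the two reconstructed samples adjacent to a fractional position; the second combines reference samples with weights that are the inverse of their distances to the sample being predicted. The inverse-distance form is an assumption of this sketch; the text states only that the distances are used as weights.

```python
import math


def fractional_sample(line, pos):
    """Linearly interpolate a reference line at fractional position `pos`.

    line : reconstructed samples along one neighboring row or column
    pos  : fractional index into `line`
    """
    i = math.floor(pos)
    frac = pos - i
    if frac == 0:
        return float(line[i])  # position falls exactly on a reconstructed sample
    # Weight each neighbor by its closeness to the fractional position.
    return (1 - frac) * line[i] + frac * line[i + 1]


def interpolate_from_refs(refs):
    """Combine (value, distance) pairs so that closer references weigh more."""
    weights = [1.0 / dist for _, dist in refs]
    total = sum(w * value for w, (value, _) in zip(weights, refs))
    return total / sum(weights)
```

For two references, `interpolate_from_refs([(v1, d1), (v2, d2)])` reduces to (d2·v1 + d1·v2) / (d1 + d2), the usual linear interpolation between two points.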

- the processor may use finite-precision interpolation for generating the fractional reference samples and interpolating the sample in the current video block.

- typical interpolation precision applied by the processor includes integer, 1/2, 1/4, 1/8, 1/16, etc.

- the processor calculates the coordinate of the fractional reference sample using any of Equations (3-1) – (6-2)

- the calculated coordinate of the fractional reference samples is rounded to the nearest allowed fractional position.
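
Rounding a calculated coordinate to the nearest allowed fractional position can be sketched as below. The function name `round_to_precision` and the convention of expressing the precision as the base-2 logarithm of its denominator (0 for integer, 1 for 1/2, 4 for 1/16) are assumptions of this Python sketch.

```python
import math


def round_to_precision(coord: float, log2_den: int) -> float:
    """Round `coord` to the nearest multiple of 1 / 2**log2_den."""
    scale = 1 << log2_den
    # Scale up, round half up to an integer grid position, then scale back.
    return math.floor(coord * scale + 0.5) / scale
```

For instance, a calculated coordinate of 3.3 becomes 3.3125 at 1/16 precision and 3.5 at 1/2 precision.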

- the reference samples below and to the right of the current video block are not used for interpolating the current video block. For example, if the video is coded via the VVC or ECM standards, the processor does not interpolate sample 1202 in FIG. 12 based on samples 1204 and 1210. Instead, the processor interpolates sample 1202 based on samples 1206 and 1208. If the reference samples below and to the right of the current video block can be generated for interpolating the current video block using other methods or in other standards, four reference samples are used for interpolating the current video block.

- In some implementations, to implement block-level interpolation direction determination 904, the interpolation direction of the current video block is inferred from the neighboring reconstructed samples in the encoder (e.g., video encoder 20) or the decoder (e.g., video decoder 30).

- FIG. 13 illustrates a diagram of intra prediction using second order gradients of reconstructed samples in neighboring video blocks to interpolate a sample in a video block in accordance with some implementations of the present disclosure.

- Three neighboring rows/columns of reconstructed samples (e.g., a zeroth line 1301, a first line 1303, and a second line 1305) in FIG. 13 are used to infer the interpolation direction of the current video block.

- second order gradients of the neighboring reconstructed samples are used to decide the block-level interpolation direction. For each sample within the current video block (e.g., sample 1302 in FIG.