WO2022172732A1 - 情報処理システム、電子楽器、情報処理方法および機械学習システム - Google Patents

情報処理システム、電子楽器、情報処理方法および機械学習システム Download PDFInfo

- Publication number

- WO2022172732A1 WO2022172732A1 PCT/JP2022/002233 JP2022002233W WO2022172732A1 WO 2022172732 A1 WO2022172732 A1 WO 2022172732A1 JP 2022002233 W JP2022002233 W JP 2022002233W WO 2022172732 A1 WO2022172732 A1 WO 2022172732A1

- Authority

- WO

- WIPO (PCT)

- Prior art keywords

- data

- performance

- learning

- tendency

- practice

- Prior art date

Links

- 230000010365 information processing Effects 0.000 title claims abstract description 53

- 238000010801 machine learning Methods 0.000 title claims description 67

- 238000003672 processing method Methods 0.000 title claims description 7

- 238000012545 processing Methods 0.000 claims description 65

- 238000000034 method Methods 0.000 claims description 52

- 230000007786 learning performance Effects 0.000 claims description 38

- 230000008859 change Effects 0.000 claims description 5

- 239000000203 mixture Substances 0.000 claims description 2

- 238000003860 storage Methods 0.000 description 69

- 238000004891 communication Methods 0.000 description 64

- 238000010586 diagram Methods 0.000 description 26

- 230000008569 process Effects 0.000 description 20

- 230000006870 function Effects 0.000 description 17

- 239000011295 pitch Substances 0.000 description 13

- 238000012706 support-vector machine Methods 0.000 description 12

- 238000013528 artificial neural network Methods 0.000 description 11

- 230000009191 jumping Effects 0.000 description 11

- 210000003811 finger Anatomy 0.000 description 6

- 239000000470 constituent Substances 0.000 description 5

- 230000015654 memory Effects 0.000 description 5

- 238000002360 preparation method Methods 0.000 description 5

- 238000003825 pressing Methods 0.000 description 5

- 239000004065 semiconductor Substances 0.000 description 5

- 230000000694 effects Effects 0.000 description 4

- 238000013527 convolutional neural network Methods 0.000 description 3

- 239000000284 extract Substances 0.000 description 3

- 210000004932 little finger Anatomy 0.000 description 3

- 238000009826 distribution Methods 0.000 description 2

- 238000005516 engineering process Methods 0.000 description 2

- 238000012986 modification Methods 0.000 description 2

- 230000004048 modification Effects 0.000 description 2

- 230000003287 optical effect Effects 0.000 description 2

- 230000000306 recurrent effect Effects 0.000 description 2

- 230000002787 reinforcement Effects 0.000 description 2

- 230000006403 short-term memory Effects 0.000 description 2

- 230000004931 aggregating effect Effects 0.000 description 1

- 238000005520 cutting process Methods 0.000 description 1

- 230000000881 depressing effect Effects 0.000 description 1

- 238000005401 electroluminescence Methods 0.000 description 1

- 238000011156 evaluation Methods 0.000 description 1

- 210000004247 hand Anatomy 0.000 description 1

- 238000003384 imaging method Methods 0.000 description 1

- 239000004973 liquid crystal related substance Substances 0.000 description 1

- 238000009527 percussion Methods 0.000 description 1

- 230000001902 propagating effect Effects 0.000 description 1

- 230000004044 response Effects 0.000 description 1

Images

Classifications

-

- G—PHYSICS

- G09—EDUCATION; CRYPTOGRAPHY; DISPLAY; ADVERTISING; SEALS

- G09B—EDUCATIONAL OR DEMONSTRATION APPLIANCES; APPLIANCES FOR TEACHING, OR COMMUNICATING WITH, THE BLIND, DEAF OR MUTE; MODELS; PLANETARIA; GLOBES; MAPS; DIAGRAMS

- G09B15/00—Teaching music

- G09B15/001—Boards or like means for providing an indication of chords

- G09B15/002—Electrically operated systems

-

- G—PHYSICS

- G06—COMPUTING; CALCULATING OR COUNTING

- G06N—COMPUTING ARRANGEMENTS BASED ON SPECIFIC COMPUTATIONAL MODELS

- G06N20/00—Machine learning

-

- G—PHYSICS

- G06—COMPUTING; CALCULATING OR COUNTING

- G06N—COMPUTING ARRANGEMENTS BASED ON SPECIFIC COMPUTATIONAL MODELS

- G06N3/00—Computing arrangements based on biological models

- G06N3/02—Neural networks

- G06N3/04—Architecture, e.g. interconnection topology

- G06N3/045—Combinations of networks

-

- G—PHYSICS

- G06—COMPUTING; CALCULATING OR COUNTING

- G06N—COMPUTING ARRANGEMENTS BASED ON SPECIFIC COMPUTATIONAL MODELS

- G06N3/00—Computing arrangements based on biological models

- G06N3/02—Neural networks

- G06N3/08—Learning methods

- G06N3/084—Backpropagation, e.g. using gradient descent

-

- G—PHYSICS

- G06—COMPUTING; CALCULATING OR COUNTING

- G06N—COMPUTING ARRANGEMENTS BASED ON SPECIFIC COMPUTATIONAL MODELS

- G06N3/00—Computing arrangements based on biological models

- G06N3/02—Neural networks

- G06N3/08—Learning methods

- G06N3/09—Supervised learning

-

- G—PHYSICS

- G09—EDUCATION; CRYPTOGRAPHY; DISPLAY; ADVERTISING; SEALS

- G09B—EDUCATIONAL OR DEMONSTRATION APPLIANCES; APPLIANCES FOR TEACHING, OR COMMUNICATING WITH, THE BLIND, DEAF OR MUTE; MODELS; PLANETARIA; GLOBES; MAPS; DIAGRAMS

- G09B15/00—Teaching music

-

- G—PHYSICS

- G10—MUSICAL INSTRUMENTS; ACOUSTICS

- G10G—REPRESENTATION OF MUSIC; RECORDING MUSIC IN NOTATION FORM; ACCESSORIES FOR MUSIC OR MUSICAL INSTRUMENTS NOT OTHERWISE PROVIDED FOR, e.g. SUPPORTS

- G10G1/00—Means for the representation of music

-

- G—PHYSICS

- G10—MUSICAL INSTRUMENTS; ACOUSTICS

- G10H—ELECTROPHONIC MUSICAL INSTRUMENTS; INSTRUMENTS IN WHICH THE TONES ARE GENERATED BY ELECTROMECHANICAL MEANS OR ELECTRONIC GENERATORS, OR IN WHICH THE TONES ARE SYNTHESISED FROM A DATA STORE

- G10H1/00—Details of electrophonic musical instruments

- G10H1/0008—Associated control or indicating means

-

- G—PHYSICS

- G10—MUSICAL INSTRUMENTS; ACOUSTICS

- G10H—ELECTROPHONIC MUSICAL INSTRUMENTS; INSTRUMENTS IN WHICH THE TONES ARE GENERATED BY ELECTROMECHANICAL MEANS OR ELECTRONIC GENERATORS, OR IN WHICH THE TONES ARE SYNTHESISED FROM A DATA STORE

- G10H1/00—Details of electrophonic musical instruments

- G10H1/0033—Recording/reproducing or transmission of music for electrophonic musical instruments

- G10H1/0041—Recording/reproducing or transmission of music for electrophonic musical instruments in coded form

- G10H1/0058—Transmission between separate instruments or between individual components of a musical system

-

- G—PHYSICS

- G10—MUSICAL INSTRUMENTS; ACOUSTICS

- G10H—ELECTROPHONIC MUSICAL INSTRUMENTS; INSTRUMENTS IN WHICH THE TONES ARE GENERATED BY ELECTROMECHANICAL MEANS OR ELECTRONIC GENERATORS, OR IN WHICH THE TONES ARE SYNTHESISED FROM A DATA STORE

- G10H2210/00—Aspects or methods of musical processing having intrinsic musical character, i.e. involving musical theory or musical parameters or relying on musical knowledge, as applied in electrophonic musical tools or instruments

- G10H2210/031—Musical analysis, i.e. isolation, extraction or identification of musical elements or musical parameters from a raw acoustic signal or from an encoded audio signal

- G10H2210/061—Musical analysis, i.e. isolation, extraction or identification of musical elements or musical parameters from a raw acoustic signal or from an encoded audio signal for extraction of musical phrases, isolation of musically relevant segments, e.g. musical thumbnail generation, or for temporal structure analysis of a musical piece, e.g. determination of the movement sequence of a musical work

-

- G—PHYSICS

- G10—MUSICAL INSTRUMENTS; ACOUSTICS

- G10H—ELECTROPHONIC MUSICAL INSTRUMENTS; INSTRUMENTS IN WHICH THE TONES ARE GENERATED BY ELECTROMECHANICAL MEANS OR ELECTRONIC GENERATORS, OR IN WHICH THE TONES ARE SYNTHESISED FROM A DATA STORE

- G10H2210/00—Aspects or methods of musical processing having intrinsic musical character, i.e. involving musical theory or musical parameters or relying on musical knowledge, as applied in electrophonic musical tools or instruments

- G10H2210/031—Musical analysis, i.e. isolation, extraction or identification of musical elements or musical parameters from a raw acoustic signal or from an encoded audio signal

- G10H2210/066—Musical analysis, i.e. isolation, extraction or identification of musical elements or musical parameters from a raw acoustic signal or from an encoded audio signal for pitch analysis as part of wider processing for musical purposes, e.g. transcription, musical performance evaluation; Pitch recognition, e.g. in polyphonic sounds; Estimation or use of missing fundamental

-

- G—PHYSICS

- G10—MUSICAL INSTRUMENTS; ACOUSTICS

- G10H—ELECTROPHONIC MUSICAL INSTRUMENTS; INSTRUMENTS IN WHICH THE TONES ARE GENERATED BY ELECTROMECHANICAL MEANS OR ELECTRONIC GENERATORS, OR IN WHICH THE TONES ARE SYNTHESISED FROM A DATA STORE

- G10H2210/00—Aspects or methods of musical processing having intrinsic musical character, i.e. involving musical theory or musical parameters or relying on musical knowledge, as applied in electrophonic musical tools or instruments

- G10H2210/031—Musical analysis, i.e. isolation, extraction or identification of musical elements or musical parameters from a raw acoustic signal or from an encoded audio signal

- G10H2210/091—Musical analysis, i.e. isolation, extraction or identification of musical elements or musical parameters from a raw acoustic signal or from an encoded audio signal for performance evaluation, i.e. judging, grading or scoring the musical qualities or faithfulness of a performance, e.g. with respect to pitch, tempo or other timings of a reference performance

-

- G—PHYSICS

- G10—MUSICAL INSTRUMENTS; ACOUSTICS

- G10H—ELECTROPHONIC MUSICAL INSTRUMENTS; INSTRUMENTS IN WHICH THE TONES ARE GENERATED BY ELECTROMECHANICAL MEANS OR ELECTRONIC GENERATORS, OR IN WHICH THE TONES ARE SYNTHESISED FROM A DATA STORE

- G10H2210/00—Aspects or methods of musical processing having intrinsic musical character, i.e. involving musical theory or musical parameters or relying on musical knowledge, as applied in electrophonic musical tools or instruments

- G10H2210/571—Chords; Chord sequences

-

- G—PHYSICS

- G10—MUSICAL INSTRUMENTS; ACOUSTICS

- G10H—ELECTROPHONIC MUSICAL INSTRUMENTS; INSTRUMENTS IN WHICH THE TONES ARE GENERATED BY ELECTROMECHANICAL MEANS OR ELECTRONIC GENERATORS, OR IN WHICH THE TONES ARE SYNTHESISED FROM A DATA STORE

- G10H2250/00—Aspects of algorithms or signal processing methods without intrinsic musical character, yet specifically adapted for or used in electrophonic musical processing

- G10H2250/311—Neural networks for electrophonic musical instruments or musical processing, e.g. for musical recognition or control, automatic composition or improvisation

Definitions

- the present disclosure relates to technology for supporting performance of musical instruments such as electronic musical instruments.

- Patent Literature 1 discloses a method in which statistical values such as standard deviation are calculated from differences between parameters of music data prepared in advance and parameters of performance data representing a performance performed by a user, and a method according to the type of the parameter.

- a technique for aggregating statistical values is disclosed.

- an object of one aspect of the present disclosure is to realize effective performance practice according to the user's performance tendencies.

- an information processing system includes a performance data acquisition unit that acquires performance data representing a performance of a piece of music by a user; and the learning tendency data representing the tendency of the performance represented by the learning performance data.

- a tendency identification section for generating tendency data representing a tendency of a player's performance, and a practice phrase identification section for identifying practice phrases according to the tendency data generated by the tendency identification section.

- An electronic musical instrument includes a performance accepting unit that accepts a performance of a song by a user, a performance data acquisition unit that acquires performance data representing the performance accepted by the performance accepting unit, and a performance of the song.

- the performance data obtained by the performance data obtaining unit is input to a first trained model that has learned the relationship between the learning performance data represented by the learning performance data and the learning tendency data representing the performance tendency represented by the learning performance data.

- a tendency identifying unit that outputs, from the first trained model, tendency data representing a tendency of the performance by the user; It comprises a practice phrase identification unit that identifies practice phrases according to a tendency, and a presentation processing unit that presents the practice phrases to the user.

- An information processing method acquires performance data representing a performance of a song by a user, learning performance data representing the performance of the song, and a tendency of the performance represented by the learning performance data.

- the user By inputting the acquired performance data into the first trained model that has learned the relationship with the learning trend data, the user generates the trend data representing the performance trend of the user, and generates the trend data according to the trend data. Identify practice phrases.

- a machine learning system acquires performance data representing a performance of a song by a user, and indication data representing a point in the song and a tendency of the performance at that point.

- an acquisition unit ; and a first learning performance data representing a combination of learning performance data representing a performance within a section of the performance data including the time point represented by the indication data, and learning tendency data representing a performance tendency represented by the indication data.

- a first learning processing unit that establishes a first trained model in which the relationship between the learning performance data and the learning tendency data is learned by machine learning using learning data.

- FIG. 1 is a block diagram illustrating the configuration of a performance system according to a first embodiment

- FIG. 1 is a block diagram illustrating the configuration of an electronic musical instrument

- FIG. 1 is a block diagram illustrating the configuration of an information processing system

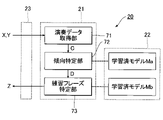

- FIG. 1 is a block diagram illustrating a functional configuration of an information processing system

- FIG. 8 is a flowchart illustrating a specific procedure of specific processing

- 1 is a block diagram illustrating the configuration of a machine learning system

- FIG. 1 is a block diagram illustrating a functional configuration of a machine learning system

- FIG. It is a block diagram which illustrates the structure of the information device which a leader uses.

- FIG. 1 is a block diagram illustrating the configuration of an electronic musical instrument

- FIG. 1 is a block diagram illustrating the configuration of an information processing system

- FIG. 1 is a block diagram illustrating a functional configuration of an information processing system

- FIG. 8 is a flowchart illustrating a specific procedure of specific processing

- 1 is a block diagram

- FIG. 7 is a block diagram illustrating the functional configuration of an information processing system according to a second embodiment;

- FIG. FIG. 11 is a flow chart illustrating a procedure of specific processing in the second embodiment;

- FIG. FIG. 11 is a block diagram illustrating a functional configuration of an information processing system according to a third embodiment;

- FIG. FIG. 11 is a flowchart illustrating a procedure of identification processing in the third embodiment;

- FIG. FIG. 11 is a block diagram illustrating a functional configuration of a machine learning system according to a third embodiment;

- FIG. 11 is a flowchart illustrating a procedure of learning processing in the third embodiment

- FIG. 11 is a block diagram illustrating the functional configuration of an electronic musical instrument according to a fourth embodiment

- FIG. 12 is a block diagram illustrating a functional configuration of an information device in a fifth embodiment

- FIG. 1 is a block diagram illustrating the configuration of a performance system 100 according to the first embodiment.

- the performance system 100 is a computer system for the user U of the electronic musical instrument 10 to practice playing the electronic musical instrument 10 , and includes the electronic musical instrument 10 , an information processing system 20 and a machine learning system 30 .

- Each element constituting the performance system 100 communicates with each other via a communication network 200 such as the Internet.

- the performance system 100 actually includes a plurality of electronic musical instruments 10, the following description will focus on any one electronic musical instrument 10 for the sake of convenience.

- FIG. 2 is a block diagram illustrating the configuration of the electronic musical instrument 10.

- the electronic musical instrument 10 is a performance device used by the user U to play music.

- the electronic musical instrument 10 of the first embodiment is an electronic keyboard instrument having a plurality of keys operated by a user U.

- FIG. The electronic musical instrument 10 is implemented by a computer system comprising a control device 11 , a storage device 12 , a communication device 13 , a performance device 14 , a display device 15 , a sound source device 16 and a sound emitting device 17 .

- the electronic musical instrument 10 can be realized as a single device, or as a plurality of devices configured separately from each other.

- the control device 11 is composed of one or more processors that control each element of the electronic musical instrument 10 .

- the control device 11 includes one or more types of CPU (Central Processing Unit), SPU (Sound Processing Unit), DSP (Digital Signal Processor), FPGA (Field Programmable Gate Array), or ASIC (Application Specific Integrated Circuit). It consists of a processor.

- the storage device 12 is a single or multiple memories that store programs executed by the control device 11 and various data used by the control device 11 .

- the storage device 12 is composed of a known recording medium such as a magnetic recording medium or a semiconductor recording medium, or a combination of a plurality of types of recording media.

- the storage device 12 is a portable recording medium that can be attached to and detached from the electronic musical instrument 10, or a recording medium that can be written to or read by the control device 11 via the communication network 200 (for example, a cloud storage). may be used.

- the storage device 12 of the first embodiment stores a plurality of song data X representing different songs.

- the music data X of each piece of music specifies the time series of a plurality of notes forming part or all of the piece of music. Specifically, the music data X specifies the pitch and sounding period for each note in the music.

- the music data X is, for example, data in a format conforming to the MIDI (Musical Instrument Digital Interface) standard.

- the communication device 13 communicates with the information processing system 20 via the communication network 200 .

- Communication between the communication device 13 and the communication network 200 may be either wired communication or wireless communication.

- a communication device 13 separate from the electronic musical instrument 10 may be connected to the electronic musical instrument 10 by wire or wirelessly.

- an information terminal such as a smart phone or a tablet terminal is exemplified.

- the display device 15 displays images under the control of the control device 11 .

- various display panels such as a liquid crystal display panel or an organic EL (Electroluminescence) panel are used as the display device 15 .

- the display device 15 uses, for example, the music data X of the music played by the user U to display the score of the music.

- the performance device 14 is an input device that accepts a performance by the user U. Specifically, the performance device 14 has a keyboard on which a plurality of keys corresponding to different pitches are arranged. The user U plays music by sequentially operating desired keys of the performance device 14 .

- the performance device 14 is an example of a "play reception section".

- the control device 11 generates performance data Y representing the performance of music by the user U.

- the performance data Y designates the pitch and sounding period for each of a plurality of notes designated by the user U by operating the performance device 14 .

- the performance data Y like the music data X, is time-series data in a format conforming to the MIDI standard, for example.

- the communication device 13 transmits to the information processing system 20 the performance data Y representing the performance of the music by the user U and the music data X of the music.

- the music data X is data representing an exemplary or standard performance of the music

- the performance data Y is data representing an actual performance of the music by the user U.

- each note specified by the music data X and each note specified by the performance data Y correlate with each other, they do not completely match.

- the difference between the music data X and the performance data Y is particularly noticeable at a portion of the music at which the user U is likely to make a mistake in performance or a portion at which the user U is not good at playing.

- the sound source device 16 generates an acoustic signal A corresponding to the performance on the performance device 14.

- the acoustic signal A is a signal representing the waveform of a musical tone instructed to be played by the performance device 14 .

- the tone generator device 16 is a MIDI tone generator that generates an acoustic signal A representing musical tones of each note designated by the performance data Y in chronological order. That is, the tone generator device 16 generates an acoustic signal A representing a tone of a pitch corresponding to a key pressed by the user U among the plurality of keys of the performance device 14 .

- the control device 11 may implement the functions of the tone generator device 16 by executing a program stored in the storage device 12 . That is, the sound source device 16 dedicated to generating the acoustic signal A is omitted.

- the sound emitting device 17 emits the performance sound represented by the acoustic signal A.

- a speaker or headphones are used as the sound emitting device 17 .

- the sound source device 16 and the sound emitting device 17 in the first embodiment function as a reproduction system 18 that reproduces musical tones according to the user U's performance.

- FIG. 3 is a block diagram illustrating the configuration of the information processing system 20.

- the information processing system 20 provides the user U with musical phrases (hereinafter referred to as “practice phrases”) Z that are suitable for the user U to practice playing.

- the information processing system 20 is implemented by a computer system comprising a control device 21 , a storage device 22 and a communication device 23 .

- the information processing system 20 may be implemented as a single device, or may be implemented as a plurality of devices configured separately from each other.

- the control device 21 is composed of one or more processors that control each element of the information processing system 20 .

- the control device 21 is composed of one or more processors such as CPU, SPU, DSP, FPGA, or ASIC.

- Communication device 23 communicates with each of electronic musical instrument 10 and machine learning system 30 via communication network 200 . Communication between the communication device 23 and the communication network 200 may be either wired communication or wireless communication.

- the storage device 22 is a single or multiple memories that store programs executed by the control device 21 and various data used by the control device 21 .

- the storage device 22 is composed of a known recording medium such as a magnetic recording medium or a semiconductor recording medium, or a combination of a plurality of types of recording media.

- a portable recording medium that can be attached to and detached from the information processing system 20, or a recording medium (for example, cloud storage) that can be written or read by the control device 21 via the communication network 200, for example, is stored in the storage device 22. may be used as

- FIG. 4 is a block diagram illustrating the functional configuration of the information processing system 20.

- the storage device 22 stores a plurality of practice phrases Z corresponding to different trend data D.

- FIG. In other words, the storage device 22 stores a table in which each of the plurality of trend data D and each of the plurality of practice phrases Z are associated with each other.

- the tendency data D is data in an arbitrary format that represents the performance tendency of the performer (hereinafter referred to as "performance tendency").

- the performance tendency is, for example, the tendency of the performer to make mistakes in performance or the tendency of the performer to perform poorly. For example, “the timing of key pressing is shifted", “pressing other keys adjacent to the target key”, “wrong pitch”, “not good at jumping”, “not good at playing chords", and “fingering”. Any one of a plurality of types of performance tendencies such as “I'm not good at playing” is designated by the trend data D.

- the jump progress is a portion where two notes whose pitch difference exceeds a predetermined value (for example, 3 degrees) are played in succession.

- finger-passing is a playing method in which a note on the upper pitch side is played by moving another finger so as to pass under the finger pressing the key corresponding to one note.

- the practice phrase Z is time-series data representing a piece of music composed of a plurality of notes, and is specifically a melody suitable for practicing the electronic musical instrument 10 (for example, part or all of a practice piece).

- the practice phrase Z is composed of a time series of single notes or chords.

- the practice phrase Z corresponding to each trend data D represents a piece of music suitable for improving the performance trend specified by the trend data D.

- FIG. For example, for the performance tendency data D indicating that the player is "bad at jumping", practice phrases Z rich in jumping are registered. For the performance tendency data D indicating that the player is not good at playing chords, a practice phrase Z containing many chords is registered.

- the practice phrase Z is, for example, data in MIDI format that specifies pitches and sounding periods for each of a plurality of notes.

- the control device 21 of the information processing system 20 acquires a plurality of elements (the performance data acquiring section 71, It implements the trend identifying section 72 and the practice phrase identifying section 73).

- the performance data acquisition unit 71 acquires performance data Y representing the performance of music by the user U. Specifically, the performance data acquisition unit 71 receives the music data X and the performance data Y transmitted from the electronic musical instrument 10 through the communication device 23 . Control data C including music data X and performance data Y is generated by the performance data acquisition unit 71 .

- the tendency identification unit 72 generates tendency data D representing the performance tendency of the user U according to the control data C.

- the learned model Ma is used for generating the trend data D by the trend identifying unit 72 .

- the trained model Ma is an example of the "first trained model”.

- the learned model Ma is a statistical estimation model that has learned the above tendencies. That is, the learned model Ma is a statistical estimation model that has learned the relationship between the combination of the music data X and the performance data Y (that is, the control data C) and the tendency data D representing the performance tendency of the performer.

- the tendency identification unit 72 outputs the tendency data D representing the performance tendency of the user U from the learned model Ma. .

- the learned model Ma is composed of, for example, a deep neural network (DNN: Deep Neural Network).

- DNN Deep Neural Network

- any form of neural network such as a recurrent neural network (RNN) or a convolutional neural network (CNN) is used as the trained model Ma.

- RNN recurrent neural network

- CNN convolutional neural network

- the trained model Ma may be configured by combining multiple types of deep neural networks. Further, additional elements such as long short-term memory (LSTM) may be installed in the learned model Ma.

- LSTM long short-term memory

- the learned model Ma is a combination of a program that causes the control device 21 to execute an operation for generating the trend data D from the control data C, and a plurality of variables (specifically, weights and biases) applied to the operation. Realized.

- a program for realizing the trained model Ma and a plurality of variables are stored in the storage device 22 .

- Numerical values for each of the plurality of variables that define the trained model Ma are set in advance by machine learning.

- the practice phrase identification unit 73 uses the tendency data D identified by the tendency identification unit 72 to identify the practice phrase Z according to the performance tendency of the user U. Specifically, the practice phrase identification unit 73 searches the storage device 22 for the practice phrase Z corresponding to the trend data D identified by the trend identification unit 72, among the plurality of practice phrases Z stored in the storage device 22. do. That is, a practice phrase Z suitable for improving the performance tendency of the user U represented by the tendency data D is specified.

- the practice phrase Z specified by the practice phrase specifying section 73 is transmitted from the communication device 23 to the electronic musical instrument 10 .

- the communication device 13 of the electronic musical instrument 10 receives the practice phrase Z transmitted from the information processing system 20 .

- the control device 11 causes the display device 15 to display the musical score of the practice phrase Z.

- FIG. The user U plays the practice phrase Z while checking the score displayed on the display device 15. - ⁇

- FIG. 5 is a flowchart illustrating a specific procedure of processing (hereinafter referred to as "specific processing") Sa executed by the control device 21 of the information processing system 20. As shown in FIG.

- the performance data acquisition unit 71 waits until the communication device 23 receives the music data X and the performance data Y transmitted from the electronic musical instrument 10 (Sa1: NO).

- the tendency identification unit 72 inputs the control data C including the music data X and the performance data Y to the learned model Ma.

- the trend data D is output from the learned model Ma (Sa2).

- the practice phrase identification unit 73 identifies the practice phrase Z corresponding to the tendency data D among the plurality of practice phrases Z stored in the storage device 22 (Sa3).

- the practice phrase identification unit 73 transmits the practice phrase Z from the communication device 23 to the electronic musical instrument 10 (Sa4).

- the performance data Y representing the performance of a piece of music by the user U is input to the trained model Ma, thereby generating the tendency data D representing the performance tendency of the user U.

- a practice phrase Z corresponding to the trend data D is specified. Therefore, when the user U plays the practice phrase Z, effective practice corresponding to the performance tendency of the user U is realized.

- the practice phrase Z corresponding to the performance tendency of the user U is specified among the plurality of practice phrases Z corresponding to different performance tendencies (tendency data D). Therefore, the load of the process of specifying the practice phrase Z according to the performance tendency of the user U is reduced.

- FIG. 6 is a block diagram illustrating the configuration of the machine learning system 30.

- the machine learning system 30 comprises a control device 31 , a storage device 32 and a communication device 33 .

- the machine learning system 30 is realized as a single device, and also as a plurality of devices configured separately from each other.

- the control device 31 is composed of one or more processors that control each element of the machine learning system 30.

- the control device 31 is composed of one or more processors such as CPU, SPU, DSP, FPGA, or ASIC.

- the communication device 33 communicates with the information processing system 20 via the communication network 200 . Communication between the communication device 33 and the communication network 200 may be either wired communication or wireless communication.

- the storage device 32 is a single or multiple memories that store programs executed by the control device 31 and various data used by the control device 31 .

- the storage device 32 is composed of a known recording medium such as a magnetic recording medium or a semiconductor recording medium, or a combination of a plurality of types of recording media.

- a portable recording medium that can be attached to and detached from the machine learning system 30, or a recording medium that can be written or read by the control device 31 via the communication network 200 (for example, cloud storage) is used as the storage device 32. may be used.

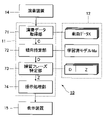

- FIG. 7 is a block diagram illustrating the functional configuration of the machine learning system 30.

- the control device 31 functions as a plurality of elements (learning data acquisition unit 81a and learning processing unit 82a) for establishing the trained model Ma by machine learning by executing the programs stored in the storage device 32 .

- the learning processing unit 82a establishes a learned model Ma by supervised machine learning (learning processing Sc described later) using a plurality of learning data Ta.

- the learning data acquisition unit 81a acquires a plurality of learning data Ta.

- a plurality of pieces of learning data Ta acquired by the learning data acquiring section 81 a are stored in the storage device 32 .

- Each of the plurality of learning data Ta is composed of a combination of learning control data Ct and learning tendency data Dt.

- the control data Ct includes learning music data Xt and learning performance data Yt.

- the music data Xt is an example of "learning music data”

- the performance data Yt is an example of "learning performance data”

- the tendency data Dt is an example of "learning tendency data”.

- the music represented by the music data Xt is an example of the "reference music”.

- the learning data acquisition unit 81a is an example of a "first learning data acquisition unit”

- the learning processing unit 82a is an example of a “first learning processing unit”.

- the learning data Ta is an example of "first learning data”.

- the learning data Ta is generated using the result of the performance of the music by the trainee U1 and the guidance of the performance by the instructor U2.

- a trainee U1 uses the electronic musical instrument 10 to play a piece of music.

- the instructor U2 uses the information device 40 to evaluate and instruct the performance by the trainee U1.

- the information device 40 is, for example, an information terminal such as a smart phone or a tablet terminal.

- the trainee U1 and the instructor U2 are, for example, located at remote locations. However, the trainee U1 and the instructor U2 may be located at the same place.

- the electronic musical instrument 10 transmits to the information device 40 and the machine learning system 30 the music data X0 representing the music and the performance data Y0 representing the performance of the music by the trainee U1.

- the music data X0 like the music data X described above, designates the time series of a plurality of notes forming the music.

- the performance data Y0 designates the time series of a plurality of notes designated by the trainee U1 by manipulating the performance device 14.

- FIG. 8 is a block diagram illustrating the configuration of the information device 40.

- the information device 40 is a computer system for the instructor U2 to evaluate and guide the performance of the electronic musical instrument 10 by the trainee U1, and includes a control device 41, a storage device 42, a communication device 43, an operation device 44, and a display device 45. a playback system 46; Note that the information device 40 may be implemented as a single device, or may be implemented as a plurality of devices configured separately from each other.

- the control device 41 is composed of one or more processors that control each element of the information device 40 .

- the control device 41 is composed of one or more processors such as CPU, SPU, DSP, FPGA, or ASIC.

- the storage device 42 is a single or multiple memories that store programs executed by the control device 41 and various data used by the control device 41 .

- the storage device 42 is composed of a known recording medium such as a magnetic recording medium or a semiconductor recording medium, or a combination of a plurality of types of recording media.

- a portable recording medium that can be attached to and detached from the information device 40, or a recording medium that can be written or read by the control device 41 via the communication network 200 (for example, cloud storage) is used as the storage device 42. may be used.

- the communication device 43 communicates with each of the electronic musical instrument 10 and the machine learning system 30 via the communication network 200 . Communication between the communication device 43 and the communication network 200 may be either wired communication or wireless communication.

- the communication device 43 receives, for example, music data X0 and performance data Y0 transmitted from the electronic musical instrument 10 .

- the operation device 44 is an input device that receives instructions from the instructor U2.

- the operation device 44 is, for example, a plurality of operators operated by the instructor U2, or a touch panel that detects contact by the instructor U2.

- the display device 45 displays images under the control of the control device 41 . Specifically, the display device 45 displays the time series of notes specified by the performance data Y received by the communication device 43 . That is, the display device 45 displays an image representing the performance by the trainee U1. Note that the time series of notes specified by the music data X may be displayed in parallel with the notes of the performance data Y.

- the reproduction system 46 reproduces the musical tones of each note specified by the performance data Y, similar to the reproduction system 18 of the electronic musical instrument 10 . That is, the musical tone played by the trainee U1 is reproduced by the reproduction system 46.

- the instructor U2 can confirm the performance of the music by the trainee U1.

- the instructor U2 operates the operation device 44 to input performance tendencies to be pointed out regarding the performance of the music by the trainee U1.

- Instructor U2 designates performance tendencies regarding performance of a piece of music by trainee U1, and points in time at which performance tendencies are observed in the piece of music. The performance tendency is selected from a plurality of options by the instructor U2 by operating the operating device 44, for example.

- FIG. 9 is a schematic diagram of indication data P.

- Pointed-out data P includes trend data Dt and time data ⁇ for each point pointed out by instructor U2.

- the tendency data Dt is data representing the performance tendency pointed out by the instructor U2.

- the time data ⁇ is data representing the time at which the performance tendency is observed in the piece of music.

- the pointing data P is data representing a time point within a piece of music and a performance tendency at that time point.

- the communication device 43 transmits the pointing data P generated by the control device 41 to the electronic musical instrument 10 and the machine learning system 30 .

- the communication device 13 of the electronic musical instrument 10 receives indication data P transmitted from the information device 40 .

- the control device 11 displays the performance tendency indicated by the indication data P on the display device 15 . By visually recognizing the image on the display device 15, the practicer U1 can confirm the instruction (playing tendency) by the instructor U2.

- the learning data acquisition unit 81a in the machine learning system 30 communicates the music data X0 and the performance data Y0 transmitted from the electronic musical instrument 10 and the indication data P transmitted from the information device 40. Received by device 33 .

- the learning data acquisition unit 81a uses the music data X0, the performance data Y0, and the indication data P to generate learning data Ta.

- the electronic musical instrument 10 is an example of the "first device”

- the information device 40 is an example of the "second device”.

- FIG. 10 is a flowchart illustrating a specific procedure of a process (hereinafter referred to as "preparation process") Sb in which the learning data acquisition unit 81a generates the learning data Ta.

- the preparation process Sb is started.

- the learning data acquisition unit 81a acquires the music data X0, the performance data Y0, and the indication data P from the communication device 33 (Sb1).

- the learning data acquisition unit 81a extracts, as music data Xt, a section (hereinafter referred to as "specific section") of the music data X0 that includes the point in time specified by the time data ⁇ of the indication data P (Sb2).

- the specific section is, for example, a section of a predetermined length with a point in time designated by the time data ⁇ as the midpoint.

- the learning data acquiring unit 81a extracts, as performance data Yt, a portion of the performance data Y0 within a specific section including the point in time specified by the time data ⁇ of the indication data P (Sb3). That is, for each of the music data X0 and the performance data Y0, a specific section including the point in time when the instructor U2 pointed out the performance tendency is extracted.

- the learning data acquisition unit 81a generates learning control data Ct including the music data Xt and the performance data Yt generated by the above procedure (Sb4). Then, the learning data acquisition unit 81a generates learning data Ta by correlating the learning control data Ct and the trend data Dt included in the indication data P (Sb5).

- music data Xt and performance data Yt corresponding to a specific section and instructor U2 pointing out the specific section are obtained for performances of various pieces of music by a large number of trainees U1.

- a large amount of learning data Ta including the tendency data Dt of the performance tendency is generated.

- FIG. 11 is a flowchart illustrating a specific procedure of the learning process Sc in which the controller 31 of the machine learning system 30 establishes the learned model Ma.

- the learning process Sc is also expressed as a method of generating a learned model Ma by machine learning (a method of generating a learned model).

- the learning processing unit 82a selects one of the plurality of learning data Ta (hereinafter referred to as "selected learning data Ta") stored in the storage device 32 (Sc1). As illustrated in FIG. 7, the learning processing unit 82a inputs the control data Ct of the selected learning data Ta into an initial or provisional model (hereinafter referred to as “provisional model Ma0") (Sc2), and to obtain the trend data D output by the provisional model Ma0 (Sc3).

- provisional model Ma0 initial or provisional model

- the learning processing unit 82a calculates a loss function representing the error between the trend data D generated by the provisional model Ma0 and the trend data Dt of the selected learning data Ta (Sc4).

- the learning processing unit 82a updates a plurality of variables of the temporary model Ma0 so that the loss function is reduced (ideally minimized) (Sc5). Error backpropagation, for example, is used to update multiple variables according to the loss function.

- the learning processing unit 82a determines whether or not a predetermined end condition is satisfied (Sc6).

- the termination condition is, for example, that the loss function falls below a predetermined threshold, or that the amount of change in the loss function falls below a predetermined threshold. If the termination condition is not satisfied (Sc6: NO), the learning processing unit 82a selects the unselected learning data Ta as new selected learning data Ta (Sc1). That is, the processing (Sc2-Sc5) for updating a plurality of variables of the provisional model Ma0 is repeated until the termination condition is met (Sc6: YES).

- the learning processing unit 82a terminates updating (Sc2-Sc5) of a plurality of variables defining the provisional model Ma0.

- the provisional model Ma0 at the time when the termination condition is met is determined as the learned model Ma. That is, the variables of the learned model Ma are fixed to the values at the end of the learning process Sc.

- the trained model Ma statistically to output appropriate trend data D. That is, the learned model Ma is a statistical learning model that has learned the relationship between the performance of a piece of music by a performer (control data C) and the performance tendency of the performer (tendency data D), as described above.

- the learning processing unit 82a transmits the learned model Ma established by the above procedure from the communication device 33 to the information processing system 20 (Sc7). Specifically, the learning processing unit 82 a transmits a plurality of variables of the trained model Ma from the communication device 33 to the information processing system 20 .

- the control device 21 of the information processing system 20 stores the learned model Ma received from the machine learning system 30 in the storage device 22 . Specifically, a plurality of variables that define the learned model Ma are stored in the storage device 22 .

- FIG. 12 is a block diagram illustrating the functional configuration of the information processing system 20 according to the second embodiment.

- a plurality of practice phrases Z are stored in storage device 22 .

- one reference phrase Zref is stored in the storage device 22 instead of the plurality of practice phrases Z in the first embodiment.

- the reference phrase Zref is time-series data representing a piece of music composed of a plurality of notes, similar to the practice phrase Z of the first embodiment. Specifically, the reference phrase Zref is a melody suitable for practicing the electronic musical instrument 10 (for example, part or all of an etude).

- the practice phrase identification unit 73 of the second embodiment generates the practice phrase Z by editing the reference phrase Zref according to the tendency data D generated by the tendency identification unit 72 . Specifically, the practice phrase specifying unit 73 edits the reference phrase Zref so that the difficulty level of playing the part of the reference phrase Zref that is related to the performance tendency specified by the tendency data D is lowered.

- FIG. 13 is a flowchart illustrating specific procedures of the specific process Sa in the second embodiment.

- the specific process Sa of the second embodiment is a process in which step Sa3 in the specific process Sa of the first embodiment is replaced with step Sa13.

- the practice phrase identification unit 73 of the second embodiment generates the practice phrase Z by editing the reference phrase Zref stored in the storage device 22 according to the tendency data D (Sa13).

- the processing (Sa4) in which the practice phrase specifying unit 73 transmits the practice phrase Z to the electronic musical instrument 10 is the same as in the first embodiment.

- a specific example of editing the reference phrase Zref (Sa13) will be described below.

- the practice phrase identification unit 73 generates a practice phrase Z by changing one or more chords included in the reference phrase Zref. For example, the practice phrase identification unit 73 omits, for example, one or more constituent tones other than the root note among the plurality of constituent tones for a chord that includes constituent tones exceeding a predetermined number. Also, for a chord whose pitch difference between the lowest note and the highest note exceeds a predetermined value, a predetermined number of constituent notes including the highest note are omitted. Omitting the constituent notes reduces the difficulty of playing the chord. As illustrated above, the editing of the reference phrase Zref by the practice phrase identification unit 73 includes code changes.

- the practice phrase identification unit 73 generates the practice phrase Z by omitting or changing the jumping progression included in the reference phrase Zref. For example, the practice phrase identification unit 73 omits the last note of the two notes related to the jumping progression. In addition, the practice phrase specifying unit 73 changes the last note of the two notes related to the jumping progression to another note on the bass side. As illustrated above, the editing of the reference phrase Zref by the practice phrase identification unit 73 includes omission or change of the jump progress.

- the reference phrase Zref includes specification of performance methods such as fingering. Specifically, the practice phrase Z includes designation of the number of the fingers on which each of the notes should be played. If the tendency data D represents the performance tendency of "not good at passing through fingers", the practice phrase identification unit 73 generates the practice phrase Z by changing the fingering for the reference phrase Zref. For example, assuming that it is difficult for a novice player to press a key with the little finger, the practice phrase identification unit 73 assigns the number of a note for which the little finger number is designated in the reference phrase Zref to another note other than the little finger. Change to finger number.

- the changed fingerings (fingering numbers for each note) by the practice phrase identification unit 73 are displayed on the display device 15 together with the musical score of the practice phrase Z.

- the editing of the reference phrase Zref by the practice phrase identification unit 73 includes changing the playing method of the musical instrument.

- FIG. 14 is a block diagram illustrating a functional configuration of an information processing system 20 according to a third embodiment.

- the configuration in which the practice phrase identification unit 73 identifies the practice phrase Z corresponding to the user U's trend data D from among the plurality of practice phrases Z stored in the storage device 22 was exemplified.

- the practice phrase identification unit 73 of the third embodiment identifies the practice phrase Z according to the trend data D using the learned model Mb.

- the trained model Mb is an example of a "second trained model".

- the practice phrase Z corresponding to each trend data D is a piece of music suitable for improving the performance trend specified by the trend data D.

- the learned model Mb is a statistical estimation model that has learned the relationship between the trend data D and the practice phrase Z.

- FIG. The practice phrase identification unit 73 of the third embodiment inputs the tendency data D generated by the tendency identification unit 72 to the learned model Mb, thereby identifying the practice phrase Z corresponding to the performance tendency represented by the tendency data D. .

- the trained model Mb outputs an index of validity for the trend data D for each of a plurality of different practice phrases Z (that is, the degree of validity of each practice phrase Z with respect to the performance tendencies of the user U). do.

- the practice phrase identification unit 73 identifies the practice phrase Z having the largest index among the plurality of practice phrases Z stored in the storage device 22 .

- the trained model Mb is composed of, for example, a deep neural network.

- a deep neural network For example, any type of neural network, such as a recurrent neural network or a convolutional neural network, is used as the trained model Mb.

- the trained model Mb may be configured by combining multiple types of deep neural networks. Further, additional elements such as long short-term memory (LSTM) may be installed in the trained model Mb.

- LSTM long short-term memory

- the learned model Mb is a combination of a program that causes the control device 21 to execute an operation for estimating the practice phrase Z from the tendency data D, and a plurality of variables (specifically, weights and biases) applied to the operation. Realized.

- a program for realizing the trained model Mb and a plurality of variables are stored in the storage device 22 .

- Numerical values for each of the plurality of variables that define the trained model Mb are set in advance by machine learning.

- FIG. 15 is a flowchart illustrating specific procedures of the specific process Sa in the third embodiment.

- the specific process Sa of the third embodiment is a process in which step Sa23 replaces Sa3 in the specific process Sa of the first embodiment.

- the practice phrase identification unit 73 of the third embodiment identifies the practice phrase Z by inputting the tendency data D to the learned model Mb (Sa23).

- the processing (Sa4) in which the practice phrase specifying unit 73 transmits the practice phrase Z to the electronic musical instrument 10 is the same as in the first embodiment.

- FIG. 16 is a block diagram illustrating a functional configuration of the machine learning system 30 regarding generation of a trained model Mb.

- the control device 31 executes a program stored in the storage device 32 to function as a plurality of elements (learning data acquiring section 81b and learning processing section 82b) for establishing the learned model Mb by machine learning.

- the learning processing unit 82b establishes a learned model Mb by supervised machine learning (learning processing Sd described later) using a plurality of learning data Tb.

- the learning data acquisition unit 81b acquires a plurality of learning data Tb. Specifically, the learning data acquisition unit 81 b acquires from the storage device 32 a plurality of learning data Tb stored in the storage device 32 .

- the learning data acquisition unit 81b is an example of a "second learning data acquisition unit”

- the learning processing unit 82b is an example of a "second learning processing unit”.

- the learning data Tb is an example of "second learning data”.

- Each of the plurality of learning data Tb is composed of a combination of learning tendency data Dt and learning practice phrase Zt.

- the practice phrase Zt of each learning data Tb is a piece of music suitable for the performance tendency indicated by the tendency data Dt of the learning data Tb.

- the combination of the tendency data Dt and the practice phrase Zt is selected by the creator of the learning data T, for example.

- the tendency data Dt is an example of "learning tendency data”

- the practice phrase Zt is an example of "learning practice phrase”.

- FIG. 17 is a flowchart illustrating a specific procedure of the learning process Sd in which the control device 31 establishes the learned model Mb.

- the learning process Sd is also expressed as a method of generating a learned model Mb by machine learning (a method of generating a learned model).

- the learning data acquisition unit 81b selects one of the plurality of learning data Tb (hereinafter referred to as "selected learning data Tb") stored in the storage device 32 (Sd1). As illustrated in FIG. 16, the learning processing unit 82b inputs the tendency data Dt of the selected learning data Tb to an initial or provisional model (hereinafter referred to as “provisional model Mb0") (Sd2), to acquire the practice phrase Z estimated by the provisional model Mb0 (Sd3).

- provisional model Mb0 initial or provisional model

- the learning processing unit 82b calculates a loss function representing the error between the practice phrase Z estimated by the provisional model Mb0 and the practice phrase Zt of the selected learning data Tb (Sd4).

- the learning processing unit 82b updates multiple variables of the provisional model Mb0 so that the loss function is reduced (ideally minimized) (Sd5). Error backpropagation, for example, is used to update multiple variables according to the loss function.

- the learning processing unit 82b determines whether or not a predetermined end condition is satisfied (Sd6). If the termination condition is not satisfied (Sd6: NO), the learning processing unit 82b selects the unselected learning data Tb as new selected learning data Tb (Sd1). That is, the processing (Sd2-Sd5) for updating a plurality of variables of the provisional model Mb0 is repeated until the termination condition is satisfied (Sd6: YES). The provisional model Mb0 at the time when the end condition is met (Sd6: YES) is determined as the learned model Mb.

- the trained model Mb statistically analyzes the unknown trend data D under the latent relationship between the trend data Dt and the practice phrases Zt in the plurality of learning data Tb. Estimate a practice phrase Z that is appropriate for . That is, the learned model Mb is a statistical estimation model that has learned the relationship between the trend data D and the practice phrase Z.

- FIG. The practice phrase identification unit 73 of the third embodiment identifies the practice phrase Z by inputting the tendency data D into the trained model Mb that has learned the relationship between the tendency data Dt and the practice phrase Zt.

- the learning processing unit 82b transmits the learned model Mb established by the above procedure from the communication device 33 to the information processing system 20 (Sd7).

- the control device 21 of the information processing system 20 stores the learned model Mb received from the machine learning system 30 in the storage device 22 .

- the practice phrase Z is identified by inputting the tendency data D output by the tendency identification unit 72 to the learned model Mb. Therefore, it is possible to specify a statistically valid practice phrase Z based on the latent relationship between the learning tendency data Dt and the learning practice phrase Zt.

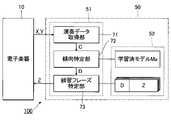

- FIG. 18 is a block diagram illustrating the functional configuration of an electronic musical instrument 10 according to a fourth embodiment.

- the information processing system 20 includes the performance data acquisition section 71, the tendency identification section 72, and the practice phrase identification section 73 as examples.

- the electronic musical instrument 10 includes a performance data acquisition section 71 , a tendency identification section 72 and a practice phrase identification section 73 .

- the above elements are realized by the controller 11 executing a program stored in the storage device 12 .

- the control device 11 also functions as a presentation processing unit 74 .

- the storage device 12 of the electronic musical instrument 10 stores a plurality of pieces of music data X similar to those in the first embodiment, as well as a learned model Ma and a plurality of practice phrases Z.

- the learned model Ma established by the machine learning system 30 is transferred to the electronic musical instrument 10 and stored in the storage device 12 .

- each of the plurality of practice phrases Z corresponds to different trend data D.

- the performance data acquisition unit 71 acquires the performance data Y representing the performance of the music by the user U and the music data X of the music. Specifically, the performance data acquisition unit 71 generates performance data Y according to the user U's operation on the performance device 14 . The performance data acquisition unit 71 also acquires the music data X of the music played by the user U from the storage device 12 . The performance data acquisition unit 71 generates control data C including music data X and performance data Y.

- the tendency identification unit 72 generates the tendency data D representing the performance tendency of the user U according to the control data C, as in the first embodiment. Specifically, the tendency identification unit 72 identifies the tendency data D by inputting the control data C including the music data X and the performance data Y into the learned model Ma.

- the practice phrase identification unit 73 uses the trend data D identified by the tendency identification unit 72 to identify the practice phrase Z according to the performance tendency of the user U, as in the first embodiment. Specifically, the practice phrase identification unit 73 searches the storage device 12 for the practice phrase Z corresponding to the trend data D identified by the trend identification unit 72, among the plurality of practice phrases Z stored in the storage device 12. do.

- the presentation processing unit 74 presents the practice phrase Z identified by the practice phrase identification unit 73 to the user U. Specifically, the presentation processing unit 74 causes the display device 15 to display the musical score of the practice phrase Z. FIG. Also, the presentation processing unit 74 may cause the playback system 18 to play back the performance sound of the practice phrase Z. FIG.

- the fourth embodiment also achieves the same effects as the first embodiment.

- the configuration of the second embodiment in which the practice phrase identifying unit 73 generates the practice phrase Z by editing the reference phrase Zref, and the configuration in which the practice phrase identifying unit 73 identifies the practice phrase Z using the learned model Mb. is similarly applied to the fourth embodiment in which the practice phrase specifying section 73 is installed in the electronic musical instrument 10.

- FIG. 19 is a block diagram illustrating the configuration of a performance system 100 according to a fifth embodiment.

- a performance system 100 includes an electronic musical instrument 10 and an information device 50 .

- the information device 50 is, for example, a device such as a smart phone or a tablet terminal.

- the information device 50 is connected to the electronic musical instrument 10 by wire or wirelessly, for example.

- the information device 50 is realized by a computer system comprising a control device 51 and a storage device 52.

- the control device 51 is composed of one or more processors that control each element of the information device 50 .

- the control device 51 is composed of one or more processors such as CPU, SPU, DSP, FPGA, or ASIC.

- the storage device 52 is one or more memories that store programs executed by the control device 51 and various data used by the control device 51 .

- the storage device 52 is composed of a known recording medium such as a magnetic recording medium or a semiconductor recording medium, or a combination of multiple types of recording media.

- a storage device 52 is a portable recording medium that can be attached to and detached from the information device 50, or a recording medium that can be written or read by the control device 51 via the communication network 200 (for example, cloud storage). may be used.

- the control device 51 By executing the programs stored in the storage device 52, the control device 51 implements a performance data acquisition section 71, a tendency identification section 72, and a practice phrase identification section 73.

- the configurations and operations of the performance data acquisition unit 71, the trend identification unit 72, and the practice phrase identification unit 73 are the same as those of the first to fourth embodiments.

- the practice phrase Z specified by the practice phrase specifying section 73 is transmitted to the electronic musical instrument 10 .

- the control device 11 of the electronic musical instrument 10 causes the display device 15 to display the musical score of the practice phrase Z.

- the fifth embodiment also achieves the same effects as those of the first to fourth embodiments.

- the information processing system 20 of the first to third embodiments, the electronic musical instrument 10 of the fourth embodiment, and the information device 50 of the fifth embodiment are examples of the "information processing system 20".

- one trained model Ma was used to generate the trend data D.

- a plurality of trained models Ma may be selectively used to generate the trend data D. good.

- the tendency identifying unit 72 selects a trained model Ma corresponding to the musical instrument played by the user U from among a plurality of trained models Ma, and inputs the control data C to the trained model Ma to obtain the tendency data D. Generate.

- the relationship between the content of the performance by the user U (performance data Y) and the performance tendency of the user U (tendency data D) differs for each musical instrument. According to the configuration that selectively uses a plurality of trained models Ma corresponding to different musical instruments, it is possible to generate the tendency data D that appropriately represents the playing tendency of the musical instrument that the user U actually plays.

- one trained model Mb is used to generate practice phrase Z, but a plurality of trained models Mb may be selectively used to generate practice phrase Z. good.

- a plurality of trained models Mb corresponding to different musical instruments are prepared.

- the practice phrase identification unit 73 selects a learned model Mb corresponding to the musical instrument played by the user U from among a plurality of learned models Mb, and inputs the tendency data D to the learned model Mb to determine the practice phrase Z to generate

- Any one of the plurality of learned models Ma established by the machine learning system 30 may be selectively transferred to the electronic musical instrument 10 of the fourth embodiment. For example, among a plurality of trained models Ma corresponding to different musical instruments, the trained model Ma corresponding to the musical instrument specified by the user U of the electronic musical instrument 10 is transferred from the machine learning system 30 to the electronic musical instrument 10 . Similarly, any one of the plurality of learned models Ma established by the machine learning system 30 may be selectively transferred to the information device 50 of the fifth embodiment. In the third embodiment, any one of a plurality of learned models Mb established by the machine learning system 30 may be selectively transferred to the information processing system 20 .

- the instruction data P is generated in response to instructions from the instructor U2.

- the trainee U1 designates a performance tendency (for example, a weak performance style) and the point in time when the performance tendency is observed.

- the control device 11 generates indication data P according to an instruction from the user U, and transmits the indication data P from the communication device 13 to the machine learning system 30 .

- control data C includes the music data X and the performance data Y, but the content of the control data C is not limited to the above examples.

- the control data C may include image data of an image of the user U playing the electronic musical instrument 10 .

- the control data C includes image data of both hands of the user U during performance.

- the control data Ct for learning includes image data of an image of the performer.

- a form in which the control data C does not include the music data X is also assumed.

- control data C including at least performance data Y is input to the learned model Ma. That is, the tendency identification unit 72 generates the tendency data D by inputting the performance data Y to the learned model Ma.

- the practice phrase identification unit 73 may identify the practice phrase Z for which the difficulty level of playing is low.

- the practice phrase identification unit 73 selects one practice phrase Z corresponding to the tendency data D from among the plurality of practice phrases Z stored in the storage device 22 as the reference phrase Zref (Sa3), and selects the reference phrase Zref as A practice phrase Z is generated by editing according to the tendency data D (Sa13). That is, the trend data D is shared for selection of the practice phrase Z (Sa3) and editing of the reference phrase Zref (Sa13).

- the practice phrase identification unit 73 generates the practice phrase Z by editing one reference phrase Zref stored in the storage device 22.

- a reference phrase Zref may be selectively used to generate a practice phrase Z.

- the practice phrase generator may generate the practice phrase Z using the reference phrase Zref of the song selected by the user U of the electronic musical instrument 10 from among the plurality of reference phrases Zref stored in the storage device 22. .

- any type of musical instrument may be played by the user U.

- the user U may play an electric stringed instrument such as an electric guitar.

- Acoustic signals (audio data) representing vibrations of the strings of the electric stringed instrument, or data in MIDI format generated by analyzing musical tones produced by the electric stringed instrument are used as the performance data Y.

- Performance tendencies related to electric stringed instruments include, for example, tendencies such as "insufficient muting at locations that should be muted" and "strings other than strings corresponding to target notes are sounding".

- the deep neural network was exemplified as the trained model Ma, but the trained model Ma is not limited to the deep neural network.

- a statistical estimation model such as HMM (Hidden Markov Model) or SVM (Support Vector Machine) may be used as the learned model Ma.

- HMM Hidden Markov Model

- SVM Small Vector Machine

- the trained model Ma using SVM will be described in detail below.

- an SVM is prepared for each of all possible combinations for selecting two types of performance tendencies from a plurality of types of performance tendencies.

- a hyperplane in multidimensional space is established by machine learning (learning process Sc).

- the hyperplane is a boundary plane that separates the space in which the control data C corresponding to one of the two performance tendencies is distributed and the space in which the control data C corresponding to the other performance tendency is distributed.

- the learned model Ma is composed of a plurality of SVMs corresponding to different combinations of performance tendencies (multi-class SVM).

- the trend identification unit 72 inputs control data C to each of the plurality of SVMs of the learned model Ma.

- the SVM corresponding to each combination selects one of the two types of performance tendencies associated with the combination according to which of the two spaces separated by the hyperplane the control data C exists. Selection of performance tendencies is similarly executed in each of a plurality of SVMs corresponding to different combinations.

- the tendency identification unit 72 generates tendency data D representing a performance tendency that maximizes the number of selections by a plurality of SVMs among a plurality of types of performance tendencies.

- the tendency identification unit 72 inputs the control data C to the learned model Ma to obtain the tendency data D representing the performance tendency of the user U. acts as an element that generates In the above description, attention is paid to the trained model Ma, but similarly, a statistical estimation model such as HMM or SVM is also used for the trained model Mb of the third embodiment.

- supervised machine learning using a plurality of learning data T was exemplified as learning processing Sc, but unsupervised machine learning that does not require learning data T or reinforcement that maximizes reward Learning may establish a trained model Ma.

- Machine learning using known clustering is exemplified as unsupervised machine learning.

- the learned model Mb of the third embodiment may be established by unsupervised machine learning or reinforcement learning.

- the machine learning system 30 has established the learned model Ma.

- the function of the machine learning system 30 to establish the trained model Ma is different from the information processing system 20 of the first to third embodiments and the electronic model of the fourth embodiment. It may be installed in the musical instrument 10 or the information device 50 of the fifth embodiment.

- the learned model Ma is used to generate the trend data D according to the control data C, but the use of the learned model Ma may be omitted.

- a table in which each of the plurality of control data C and each of the plurality of trend data D are associated with each other may be used to generate the trend data D.

- FIG. The table in which the correspondence between the control data C and the trend data D is registered is stored, for example, in the storage device 22 of the first embodiment, the storage device 12 of the fourth embodiment, or the storage device 52 of the fifth embodiment.

- the tendency identification unit 72 searches the table for the tendency data D corresponding to the control data C generated by the performance data acquisition unit 71 .

- control data C including the music data X and the performance data Y and the learned model Ma that learned the relationship between the trend data D were used.

- the configuration and method for generation are not limited to the above examples.

- a reference table in which trend data D is associated with each of a plurality of different control data C may be used for generation of trend data D by trend identifying unit 72 .

- the reference table is a data table in which the correspondence between control data C and trend data D is registered, and is stored in, for example, storage device 22 (storage device 12 in the fourth embodiment).

- the trend identification unit 72 searches the reference table for the control data C corresponding to the combination of the music data X and the performance data Y, and selects the trend data D associated with the control data C among the plurality of trend data D. Taken from a reference table.

- the learned model Mb that learned the relationship between the trend data D and the practice phrase Z was used. is not limited to the examples of

- a reference table in which practice phrases Z are associated with each of a plurality of different trend data D may be used for generating practice phrases Z by the practice phrase identification unit 73 .

- the reference table is a data table in which correspondence between trend data D and practice phrases Z is registered, and is stored in, for example, storage device 22 (storage device 12 in the fourth embodiment).

- the practice phrase identification unit 73 searches the reference table for the practice phrase Z corresponding to the trend data D, and acquires the practice phrase Z associated with the trend data D from among the plurality of practice phrases Z from the reference table.

- the performance data acquisition unit 71 acquires the performance data Y representing the performance of the user U from the electronic musical instrument 10.

- the performance data acquisition unit 71 may acquire from the electronic musical instrument 10 the performance data Y in which the past performance of the user U is recorded. That is, it is irrelevant in the present disclosure whether or not the performance data acquisition unit 71 acquires the performance data Y in real time with respect to the performance by the user U.