WO2019069968A1 - Encoding device, decoding device, encoding method, and decoding method

- Publication number: WO2019069968A1

- Application number: PCT/JP2018/036979

- Authority: WO, WIPO (PCT)

- Prior art keywords

- picture

- decoding

- pictures

- temporal

- encoding

Classifications

-

- H—ELECTRICITY

- H04—ELECTRIC COMMUNICATION TECHNIQUE

- H04N—PICTORIAL COMMUNICATION, e.g. TELEVISION

- H04N19/00—Methods or arrangements for coding, decoding, compressing or decompressing digital video signals

- H04N19/70—Methods or arrangements for coding, decoding, compressing or decompressing digital video signals characterised by syntax aspects related to video coding, e.g. related to compression standards

-

- H—ELECTRICITY

- H04—ELECTRIC COMMUNICATION TECHNIQUE

- H04N—PICTORIAL COMMUNICATION, e.g. TELEVISION

- H04N19/00—Methods or arrangements for coding, decoding, compressing or decompressing digital video signals

- H04N19/10—Methods or arrangements for coding, decoding, compressing or decompressing digital video signals using adaptive coding

- H04N19/102—Methods or arrangements for coding, decoding, compressing or decompressing digital video signals using adaptive coding characterised by the element, parameter or selection affected or controlled by the adaptive coding

- H04N19/117—Filters, e.g. for pre-processing or post-processing

-

- H—ELECTRICITY

- H04—ELECTRIC COMMUNICATION TECHNIQUE

- H04N—PICTORIAL COMMUNICATION, e.g. TELEVISION

- H04N19/00—Methods or arrangements for coding, decoding, compressing or decompressing digital video signals

- H04N19/10—Methods or arrangements for coding, decoding, compressing or decompressing digital video signals using adaptive coding

- H04N19/169—Methods or arrangements for coding, decoding, compressing or decompressing digital video signals using adaptive coding characterised by the coding unit, i.e. the structural portion or semantic portion of the video signal being the object or the subject of the adaptive coding

- H04N19/17—Methods or arrangements for coding, decoding, compressing or decompressing digital video signals using adaptive coding characterised by the coding unit, i.e. the structural portion or semantic portion of the video signal being the object or the subject of the adaptive coding the unit being an image region, e.g. an object

- H04N19/172—Methods or arrangements for coding, decoding, compressing or decompressing digital video signals using adaptive coding characterised by the coding unit, i.e. the structural portion or semantic portion of the video signal being the object or the subject of the adaptive coding the unit being an image region, e.g. an object the region being a picture, frame or field

-

- H—ELECTRICITY

- H04—ELECTRIC COMMUNICATION TECHNIQUE

- H04N—PICTORIAL COMMUNICATION, e.g. TELEVISION

- H04N19/00—Methods or arrangements for coding, decoding, compressing or decompressing digital video signals

- H04N19/30—Methods or arrangements for coding, decoding, compressing or decompressing digital video signals using hierarchical techniques, e.g. scalability

- H04N19/31—Methods or arrangements for coding, decoding, compressing or decompressing digital video signals using hierarchical techniques, e.g. scalability in the temporal domain

-

- H—ELECTRICITY

- H04—ELECTRIC COMMUNICATION TECHNIQUE

- H04N—PICTORIAL COMMUNICATION, e.g. TELEVISION

- H04N19/00—Methods or arrangements for coding, decoding, compressing or decompressing digital video signals

- H04N19/46—Embedding additional information in the video signal during the compression process

Definitions

- the present disclosure relates to an encoding apparatus and the like that encode a moving image including a plurality of pictures.

- H.265, also called High Efficiency Video Coding (HEVC)

- if information related to encoding of a moving image is not set appropriately, the encoding of the moving image cannot be properly performed.

- the present disclosure provides an encoding apparatus and the like that can appropriately set information related to encoding of a moving image.

- An encoding apparatus according to one aspect of the present disclosure encodes a moving image including a plurality of pictures, and includes a circuit and a memory. Using the memory, the circuit encodes a first picture of the plurality of pictures and then performs either (i) a first operation of encoding a parameter set for a second picture that follows the first picture in encoding order, and encoding the second picture after encoding the parameter set, or (ii) a second operation of encoding the second picture after encoding the first picture, without encoding the parameter set. In performing the first operation or the second operation, the circuit performs the first operation when the second picture is a predetermined picture.

- An encoding apparatus and the like can appropriately set information related to encoding of a moving image.
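The choice between the first and second operation can be illustrated with a minimal sketch. The names `encode_after_first_picture`, `is_predetermined`, and `encoder_wants_parameter_set`, and the `"PARAMETER_SET"`/`"PICTURE"` labels, are hypothetical and are not taken from the disclosure; the sketch only models the order in which units would be emitted.

```python
from typing import List, Tuple

Unit = Tuple[str, int]  # (unit kind, picture index); a hypothetical representation


def encode_after_first_picture(
    second_picture: int,
    is_predetermined: bool,
    encoder_wants_parameter_set: bool = False,
) -> List[Unit]:
    """Return the units emitted for the second picture.

    First operation: parameter set, then picture.
    Second operation: picture only.
    When the second picture is a predetermined picture, the first
    operation is always chosen; otherwise the choice is left to the
    encoder (modelled here by a flag, which is an assumption).
    """
    units: List[Unit] = []
    if is_predetermined or encoder_wants_parameter_set:
        units.append(("PARAMETER_SET", second_picture))  # first operation
    units.append(("PICTURE", second_picture))
    return units


forced = encode_after_first_picture(1, is_predetermined=True)
optional = encode_after_first_picture(1, is_predetermined=False)
```

Here `forced` contains a parameter set followed by the picture, while `optional` contains the picture alone.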

- FIG. 1 is a block diagram showing a functional configuration of the coding apparatus according to the first embodiment.

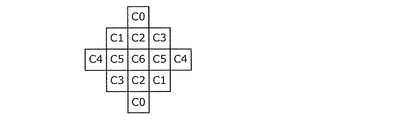

- FIG. 2 is a diagram showing an example of block division in the first embodiment.

- FIG. 3 is a table showing transform basis functions corresponding to each transform type.

- FIG. 4A is a view showing an example of the shape of a filter used in ALF.

- FIG. 4B is a view showing another example of the shape of a filter used in ALF.

- FIG. 4C is a view showing another example of the shape of a filter used in ALF.

- FIG. 5A is a diagram illustrating 67 intra prediction modes in intra prediction.

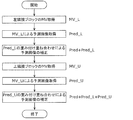

- FIG. 5B is a flowchart for describing an outline of predicted image correction processing by OBMC processing.

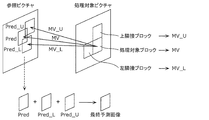

- FIG. 5C is a conceptual diagram for describing an outline of predicted image correction processing by OBMC processing.

- FIG. 5D is a diagram illustrating an example of FRUC.

- FIG. 6 is a diagram for describing pattern matching (bilateral matching) between two blocks along a motion trajectory.

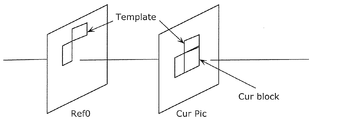

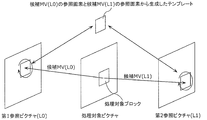

- FIG. 7 is a diagram for describing pattern matching (template matching) between a template in a current picture and a block in a reference picture.

- FIG. 8 is a diagram for explaining a model assuming uniform linear motion.

- FIG. 9A is a diagram for describing derivation of a motion vector in units of sub blocks based on motion vectors of a plurality of adjacent blocks.

- FIG. 9B is a diagram for describing an overview of motion vector derivation processing in the merge mode.

- FIG. 9C is a conceptual diagram for describing an overview of DMVR processing.

- FIG. 9D is a diagram for describing an outline of a predicted image generation method using luminance correction processing by LIC processing.

- FIG. 10 is a block diagram showing a functional configuration of the decoding apparatus according to the first embodiment.

- FIG. 11 is a block diagram showing a configuration of a loop filter unit of the coding apparatus in the first embodiment.

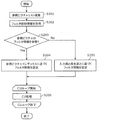

- FIG. 12A is a flow chart showing a first specific example of a management procedure of filter information in the first embodiment.

- FIG. 12B is a flowchart showing a first specific example of a setting procedure of filter information in the first embodiment.

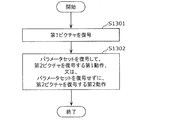

- FIG. 13A is a flowchart illustrating a second specific example of the management procedure of filter information in the first embodiment.

- FIG. 13B is a flowchart illustrating a second specific example of the setting procedure of the filter information in the first embodiment.

- FIG. 14A is a conceptual diagram showing a first specific example of reference restriction of filter information in the first embodiment.

- FIG. 14B is a conceptual diagram showing a second specific example of the reference restriction of the filter information in the first embodiment.

- FIG. 15 is a block diagram showing a configuration of a loop filter unit of the decoding apparatus in the first embodiment.

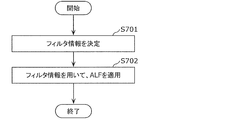

- FIG. 16 is a flowchart showing a first specific example of the processing procedure of the filter information in the modification.

- FIG. 17 is a flowchart illustrating a second specific example of the processing procedure of the filter information in the modification.

- FIG. 18 is a conceptual diagram showing a first specific example of the PPS notification in the modification.

- FIG. 19 is a conceptual diagram showing a second specific example of the PPS notification in the modified embodiment.

- FIG. 20A is a conceptual diagram showing a third specific example of the PPS notification in the modified embodiment.

- FIG. 20B is a conceptual diagram showing a fourth specific example of the PPS notification in the modified embodiment.

- FIG. 21A is a conceptual diagram showing a fifth example of the PPS notification in the modified embodiment.

- FIG. 21B is a conceptual diagram showing a sixth specific example of the PPS notification in the modified embodiment.

- FIG. 22 is a block diagram showing an implementation example of a coding apparatus.

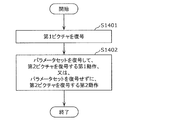

- FIG. 23 is a flow chart showing a first operation example of the coding apparatus.

- FIG. 24 is a flowchart showing a second operation example of the coding apparatus.

- FIG. 25 is a flowchart showing a third operation example of the coding apparatus.

- FIG. 26 is a flow chart showing a fourth operation example of the coding apparatus.

- FIG. 27 is a block diagram showing an implementation example of a decoding device.

- FIG. 28 is a flow chart showing a first operation example of the decoding device.

- FIG. 29 is a flow chart showing a second operation example of the decoding device.

- FIG. 30 is a flowchart showing a third operation example of the decoding device.

- FIG. 31 is a flowchart showing a fourth operation example of the decoding device.

- FIG. 32 is an overall configuration diagram of a content supply system for realizing content distribution service.

- FIG. 33 is a diagram illustrating an example of a coding structure at the time of scalable coding.

- FIG. 34 is a diagram illustrating an example of a coding structure at the time of scalable coding.

- FIG. 35 is a view showing an example of a display screen of a web page.

- FIG. 36 is a diagram showing an example of a display screen of a web page.

- FIG. 37 is a diagram illustrating an example of a smartphone.

- FIG. 38 is a block diagram illustrating a configuration example of a smartphone.

- the encoding apparatus that encodes a moving image including a plurality of pictures may encode the coding target picture of the plurality of pictures with reference to the encoded picture of the plurality of pictures.

- a decoding apparatus that decodes a moving image including a plurality of pictures may decode a decoding target picture among the plurality of pictures with reference to a decoded picture among the plurality of pictures.

- Each of the plurality of pictures may be assigned a temporal ID indicating a hierarchy related to temporal scalability.

- the temporal ID corresponds to an integer value of 0 or more. For example, it is prohibited to refer to a coded picture having a temporal ID larger than that of the current picture in coding of the current picture. As a result, the encoded picture referred to in the encoding of the encoding target picture is appropriately limited, and the processing complexity in encoding of the encoding target picture is suppressed.

- the decoding apparatus can thin out pictures when decoding a moving image by decoding only those pictures whose temporal IDs are equal to or less than a predetermined value, thereby reducing the amount of processing.
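The temporal ID rules described above can be sketched as follows. This is an illustrative sketch only: pictures are modelled as hypothetical `(name, temporal_id)` tuples, and the function names `may_reference` and `thin_out` are assumptions, not part of the disclosure.

```python
from typing import List, Tuple

Picture = Tuple[str, int]  # (name, temporal ID); hypothetical representation


def may_reference(current_tid: int, candidate_tid: int) -> bool:
    """A picture must not refer to a picture with a larger temporal ID."""
    return candidate_tid <= current_tid


def thin_out(pictures: List[Picture], max_tid: int) -> List[Picture]:
    """Keep only pictures whose temporal ID does not exceed max_tid."""
    return [p for p in pictures if p[1] <= max_tid]


sequence = [("p0", 0), ("p1", 2), ("p2", 1), ("p3", 2), ("p4", 0)]
base_and_mid = thin_out(sequence, max_tid=1)
```

Because no kept picture refers to a picture with a larger temporal ID, the thinned-out sequence `base_and_mid` remains decodable on its own.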

- a picture included in a plurality of pictures may be used as a temporal sub-layer access (TSA) picture.

- TSA temporal sub-layer access

- at a TSA picture, a transition can be made from a state in which each picture with a temporal ID smaller than that of the TSA picture is decoded to a state in which each picture with a temporal ID equal to or larger than that of the TSA picture is decoded.

- the transition to a state in which a picture with a larger temporal ID is decoded is called an up switch.

- a picture with a large temporal ID may be left undecoded because of thinning-out. If an up switch is then performed without a restriction such as a TSA picture, a picture that has not been decoded may be referred to. Therefore, it is difficult to perform an appropriate up switch unless a restriction such as a TSA picture is used.

- a picture included in a plurality of pictures may be used as a step-wise temporal sub-layer access (STSA) picture.

- STSA step-wise temporal sub-layer access

- with a TSA picture, an up switch is guaranteed from the state in which each picture with a temporal ID smaller than that of the TSA picture is decoded to the state in which each picture with a temporal ID equal to or larger than that of the TSA picture is decoded.

- with an STSA picture, the up switch transitions to the state in which each picture with the same temporal ID as the STSA picture is decoded.
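The difference between the two kinds of up switch can be sketched as a small function. It assumes that the decoder state is summarized by the largest temporal ID currently being decoded; the function name `up_switch_target` and the string labels `"TSA"`, `"STSA"`, and `"OTHER"` are hypothetical.

```python
def up_switch_target(
    nal_type: str,
    picture_tid: int,
    decoded_max_tid: int,
    highest_tid: int,
) -> int:
    """Return the largest temporal ID that may be decoded from this picture on.

    Assumption: an up switch is only considered when every layer below
    the picture's layer is already being decoded.
    """
    if decoded_max_tid < picture_tid - 1:
        return decoded_max_tid  # lower layers are missing; no up switch
    if nal_type == "TSA":
        # Layers equal to or above the TSA picture's layer become decodable.
        return max(decoded_max_tid, highest_tid)
    if nal_type == "STSA":
        # Only the STSA picture's own layer becomes decodable.
        return max(decoded_max_tid, picture_tid)
    return decoded_max_tid  # no access point, so the state is unchanged


tsa_result = up_switch_target("TSA", picture_tid=1, decoded_max_tid=0, highest_tid=3)
stsa_result = up_switch_target("STSA", picture_tid=1, decoded_max_tid=0, highest_tid=3)
```

In this sketch a TSA picture in layer 1 opens all layers up to the highest one, whereas an STSA picture in layer 1 opens layer 1 only.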

- the filter information of the adaptive loop filter is an example of information used in coding and decoding of a moving image.

- the adaptive loop filter is a filter for bringing a reconstructed image generated in coding or decoding of a moving image closer to the original image, and performs image processing such as smoothing or sharpening on the reconstructed image.

- the adaptive loop filter is appropriately applied to the reconstructed image using the filter information, and moving image encoding and decoding are appropriately performed.

- if filter information is not set appropriately, encoding and decoding of a moving image are not properly performed. That is, if the information used in encoding and decoding of a moving image is not appropriately set, encoding and decoding of the moving image cannot be appropriately performed.

- an encoding apparatus is an encoding apparatus that applies an adaptive loop filter in encoding of a moving image including a plurality of pictures to which temporal IDs indicating layers related to temporal scalability are respectively assigned.

- the encoding apparatus includes a circuit and a memory. Using the memory, the circuit performs: a step of determining first filter information for applying an adaptive loop filter to a first picture of the plurality of pictures by referring to second filter information associated with a second picture that precedes the first picture in coding order; and a step of applying the adaptive loop filter to the first picture using the determined first filter information.

- in the step of determining the first filter information, when the NAL (Network Abstraction Layer) unit type of the first picture is a predetermined NAL unit type, the circuit may prohibit referring, as the second filter information, to third filter information associated with a third picture that precedes the first picture in coding order and has the same temporal ID as the first picture.

- the encoding apparatus can determine the first filter information of the first picture with reference to the second filter information of the second picture. At this time, the encoding apparatus can prohibit reference to the third filter information of the third picture in the same layer as the first picture of the predetermined NAL unit type as the second filter information.

- that is, the encoding apparatus can impose on the filter information of a picture in the same layer as the first picture of the predetermined NAL unit type a reference restriction similar to the one that can be imposed on that picture itself. Therefore, the encoding apparatus can associate filter information with a picture and appropriately manage it, and can appropriately limit and set referenced filter information. Thus, the encoding apparatus can appropriately set information related to encoding of a moving image.

- in the step of determining the first filter information, the circuit may prohibit referring, as the second filter information, to fourth filter information associated with a fourth picture that precedes the first picture in coding order and has a temporal ID larger than that of the first picture.

- the encoding apparatus can perform reference restriction on filter information associated with the picture in temporal scalability, as with the reference restriction imposed on the picture. Therefore, the encoding apparatus can appropriately limit and set the filter information to be referred to.
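The reference restrictions on the third and fourth filter information can be sketched as a candidate filter. This is a minimal sketch under stated assumptions: `FilterInfo`, its fields, and `allowed_references` are hypothetical names, and the stored filter information is modelled as a plain list rather than any particular buffer structure.

```python
from dataclasses import dataclass
from typing import List


@dataclass(frozen=True)
class FilterInfo:
    coding_order: int  # position of the associated picture in coding order
    temporal_id: int   # temporal ID of the associated picture


def allowed_references(
    stored: List[FilterInfo],
    current_order: int,
    current_tid: int,
    is_predetermined_type: bool,
) -> List[FilterInfo]:
    """Return stored filter information the current picture may refer to."""
    allowed = []
    for info in stored:
        if info.coding_order >= current_order:
            continue  # only pictures earlier in coding order qualify
        if info.temporal_id > current_tid:
            continue  # "fourth filter information": larger temporal ID
        if is_predetermined_type and info.temporal_id == current_tid:
            continue  # "third filter information": same temporal ID
        allowed.append(info)
    return allowed


stored = [FilterInfo(0, 0), FilterInfo(1, 1), FilterInfo(2, 2)]
for_access_picture = allowed_references(stored, 3, 1, is_predetermined_type=True)
for_ordinary_picture = allowed_references(stored, 3, 1, is_predetermined_type=False)
```

For a picture of the predetermined NAL unit type in layer 1, only the layer 0 entry survives; for an ordinary picture in layer 1, the layer 0 and layer 1 entries both survive.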

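- the circuit may further perform: a step of determining fifth filter information for applying an adaptive loop filter to a fifth picture that follows the first picture in coding order by referring to sixth filter information associated with a sixth picture that precedes the fifth picture in coding order; and a step of applying the adaptive loop filter to the fifth picture using the determined fifth filter information.

- in the step of determining the fifth filter information, when the NAL unit type of the first picture is the predetermined NAL unit type, the circuit may prohibit referring to the third filter information associated with the third picture as the sixth filter information.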

- the encoding apparatus can determine the fifth filter information of the fifth picture after the first picture in the encoding order with reference to the sixth filter information of the sixth picture. At this time, the encoding apparatus can prohibit reference to the third filter information of the third picture of the same layer as the first picture of the predetermined NAL unit type as the sixth filter information.

- that is, after the first picture of the predetermined NAL unit type, the encoding apparatus can impose on the filter information of a picture in the same layer as the first picture a reference restriction similar to the one that can be imposed on that picture. Therefore, the encoding apparatus can associate filter information with a picture and appropriately manage it, and can appropriately limit and set referenced filter information. Thus, the encoding apparatus can appropriately set information related to encoding of a moving image.

- in the step of determining the fifth filter information, the circuit may prohibit referring, as the sixth filter information, to the fourth filter information associated with the fourth picture, which precedes the first picture in coding order and has a temporal ID larger than that of the first picture.

- accordingly, the fourth filter information of the fourth picture, whose temporal ID is larger than that of the first picture, is not referred to in determining the fifth filter information.

- that is, after the first picture of the predetermined NAL unit type, the encoding apparatus can impose on the filter information a reference restriction similar to the one that can be imposed on a picture whose temporal ID is larger than that of the first picture. Therefore, the encoding apparatus can associate filter information with a picture and appropriately manage it, and can appropriately limit and set referenced filter information. Thus, the encoding apparatus can appropriately set information related to encoding of a moving image.

- in the step of determining the first filter information, when a seventh picture precedes the first picture in coding order and has a temporal ID larger than 0, and the NAL unit type of an eighth picture is the predetermined NAL unit type, the circuit may prohibit referring to seventh filter information associated with the seventh picture as the second filter information.

- the encoding apparatus can prohibit referring, as the second filter information, to the seventh filter information of the seventh picture, whose temporal ID is equal to or larger than that of the eighth picture of the predetermined NAL unit type.

- that is, after the eighth picture of the predetermined NAL unit type, the encoding apparatus can impose on the seventh filter information of the seventh picture a reference restriction similar to the one that can be imposed on the seventh picture. Therefore, the encoding apparatus can associate filter information with a picture and appropriately manage it, and can appropriately limit and set referenced filter information. Thus, the encoding apparatus can appropriately set information related to encoding of a moving image.

- the predetermined NAL unit type may be a NAL unit type of a TSA (Temporal Sub-layer Access) picture.

- the encoding apparatus can perform reference restriction on the filter information of the picture in the same layer as that of the TSA picture, similarly to the reference restriction that can be performed on the picture in the same hierarchy as that of the TSA picture. Therefore, the encoding apparatus can associate filter information with a picture and appropriately manage it, and can appropriately limit and set referenced filter information. Thus, the encoding apparatus can appropriately set information related to encoding of a moving image.

- the circuit may further perform: a step of determining fifth filter information for applying an adaptive loop filter to a fifth picture that follows the first picture in coding order by referring to sixth filter information associated with a sixth picture that precedes the fifth picture in coding order; and a step of applying the adaptive loop filter to the fifth picture using the determined fifth filter information.

- in the step of determining the fifth filter information, when the NAL unit type of the first picture is the predetermined NAL unit type and the temporal ID of the fifth picture is the same as the temporal ID of the first picture, the circuit may prohibit referring to the third filter information associated with the third picture as the sixth filter information.

- the encoding apparatus determines fifth filter information of the fifth picture of the same layer as the first picture after the first picture in coding order with reference to the sixth filter information of the sixth picture. be able to. At this time, the encoding apparatus can prohibit reference to the third filter information of the third picture of the same layer as the first picture of the predetermined NAL unit type as the sixth filter information.

- that is, after the first picture of the predetermined NAL unit type, the encoding apparatus can impose on the filter information a reference restriction similar to the one that can be imposed, within the same layer as the first picture, on a picture preceding the first picture. Therefore, the encoding apparatus can associate filter information with a picture and appropriately manage it, and can appropriately limit and set referenced filter information. Thus, the encoding apparatus can appropriately set information related to encoding of a moving image.

- the predetermined NAL unit type may be a NAL unit type of an STSA (Step-wise Temporal Sub-layer Access) picture.

- the encoding apparatus can perform reference restriction on the filter information of the picture in the same hierarchy as that of the STSA picture, as in the case of the reference restriction that can be performed on a picture in the same hierarchy as that of the STSA picture. Therefore, the encoding apparatus can associate filter information with a picture and appropriately manage it, and can appropriately limit and set referenced filter information. Thus, the encoding apparatus can appropriately set information related to encoding of a moving image.
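The restrictions that apply to a later picture (the "fifth picture") after a TSA or STSA picture can be sketched together. This is an interpretation of the text above, not a definitive rule set: pictures and stored filter information are modelled as hypothetical `(coding_order, temporal_id)` tuples, and `allowed_after_access_picture` is an assumed name.

```python
from typing import List, Tuple

Entry = Tuple[int, int]  # (coding order, temporal ID); hypothetical representation


def allowed_after_access_picture(
    stored: List[Entry],
    fifth: Entry,
    first: Entry,
    first_type: str,
) -> List[Entry]:
    """Filter information that the fifth picture may refer to.

    `first` is the earlier picture of the predetermined NAL unit type
    ("TSA" or "STSA"); `fifth` follows it in coding order.
    """
    allowed = []
    for order, tid in stored:
        if order >= fifth[0] or tid > fifth[1]:
            continue  # must be earlier and must not have a larger temporal ID
        before_first = order < first[0]
        if first_type == "TSA" and before_first and tid >= first[1]:
            continue  # same or higher layer than the TSA picture, from before it
        if (
            first_type == "STSA"
            and before_first
            and fifth[1] == first[1]
            and tid == first[1]
        ):
            continue  # same layer as the STSA picture, from before it
        allowed.append((order, tid))
    return allowed


stored = [(0, 0), (1, 1), (2, 2), (4, 1)]
after_tsa = allowed_after_access_picture(stored, fifth=(5, 2), first=(3, 1), first_type="TSA")
after_stsa = allowed_after_access_picture(stored, fifth=(5, 1), first=(3, 1), first_type="STSA")
```

In both calls, the entries from before the access picture in its own layer are excluded, while the layer 0 entry and the entry stored after the access picture remain available.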

- a decoding device is a decoding device that applies an adaptive loop filter in decoding of a moving image including a plurality of pictures to which temporal IDs indicating layers related to temporal scalability are assigned.

- the decoding device includes a circuit and a memory. Using the memory, the circuit performs: a step of determining first filter information for applying an adaptive loop filter to a first picture of the plurality of pictures by referring to second filter information associated with a second picture that precedes the first picture in decoding order; and a step of applying the adaptive loop filter to the first picture using the determined first filter information. In the step of determining the first filter information, when the NAL (Network Abstraction Layer) unit type of the first picture is a predetermined NAL unit type, the circuit may prohibit referring, as the second filter information, to third filter information associated with a third picture that precedes the first picture in decoding order and has the same temporal ID as the first picture.

- NAL Network Abstraction Layer

- the decoding apparatus can determine the first filter information of the first picture with reference to the second filter information of the second picture. At this time, the decoding apparatus can prohibit reference to the third filter information of the third picture of the same layer as the first picture of the predetermined NAL unit type as the second filter information.

- that is, the decoding apparatus can impose on the filter information of a picture in the same layer as the first picture of the predetermined NAL unit type a reference restriction similar to the one that can be imposed on that picture itself. Therefore, the decoding apparatus can associate filter information with a picture and appropriately manage it, and can appropriately limit and set referenced filter information. Therefore, the decoding apparatus can appropriately set the information related to the decoding of the moving image.

- The circuit may prohibit referring, as the second filter information, to fourth filter information associated with a fourth picture, which is a picture that precedes the first picture in decoding order among the plurality of pictures and has a temporal ID larger than that of the first picture.

- The decoding device can apply, to the filter information associated with a picture, the same reference restriction that temporal scalability imposes on the picture itself. Therefore, the decoding apparatus can appropriately limit and set the filter information to be referenced.
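
- As a minimal sketch of the two reference restrictions described above, the following Python function decides whether a picture may use the filter information associated with an earlier picture. The `Picture` class, the string labels such as `"TSA"`, and the function name are illustrative assumptions that do not appear in the disclosure. The sketch also treats each restriction as always enforced, whereas the text only states that the reference may be prohibited.

```python
from dataclasses import dataclass

# Hypothetical labels standing in for the "predetermined NAL unit type".
PREDETERMINED_TYPES = {"TSA", "STSA"}


@dataclass(frozen=True)
class Picture:
    order: int               # position in decoding order
    temporal_id: int         # layer for temporal scalability
    nal_type: str = "TRAIL"  # illustrative label, not a real syntax value


def may_reference_filter_info(first: Picture, candidate: Picture) -> bool:
    """Return True if `first` may use filter information associated with `candidate`."""
    if candidate.order >= first.order:
        return False  # only pictures preceding in decoding order are candidates
    if candidate.temporal_id > first.temporal_id:
        return False  # "fourth picture" case: higher layer than the first picture
    if (first.nal_type in PREDETERMINED_TYPES
            and candidate.temporal_id == first.temporal_id):
        return False  # "third picture" case: same layer, first picture is of the predetermined type
    return True
```

- For an ordinary picture, a same-layer predecessor remains a valid source of filter information; only when the current picture carries the predetermined NAL unit type does the same-layer predecessor become unavailable.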

- The circuit may further perform: a step of determining fifth filter information, which is used to apply an adaptive loop filter to a fifth picture that follows the first picture in decoding order among the plurality of pictures, with reference to sixth filter information associated with a sixth picture preceding the fifth picture in decoding order among the plurality of pictures; and a step of applying the adaptive loop filter to the fifth picture using the determined fifth filter information. In the step of determining the fifth filter information, when the NAL unit type of the first picture is the predetermined NAL unit type, the circuit may prohibit referring to the third filter information associated with the third picture as the sixth filter information.

- the decoding apparatus can determine the fifth filter information of the fifth picture after the first picture in decoding order with reference to the sixth filter information of the sixth picture. At this time, the decoding apparatus can prohibit reference to the third filter information of the third picture of the same layer as the first picture of the predetermined NAL unit type as the sixth filter information.

- That is, after the first picture of the predetermined NAL unit type, the decoding apparatus can restrict references to the filter information of pictures in the same layer as the first picture, in the same manner as the reference restriction that may be applied to pictures in that layer. Therefore, the decoding apparatus can associate filter information with a picture and appropriately manage it, and can appropriately limit and set the filter information to be referenced. Therefore, the decoding device can appropriately set the information related to the decoding of the moving image.

- In the step of determining the fifth filter information, the circuit may prohibit referring, as the sixth filter information, to the fourth filter information associated with the fourth picture, which is a picture that precedes the first picture in decoding order among the plurality of pictures and has a temporal ID larger than that of the first picture.

- In this case, referring to the fourth filter information of the fourth picture, which has a temporal ID larger than that of the first picture, is prohibited when the fifth filter information is determined.

- That is, after the first picture of the predetermined NAL unit type, the decoding device can restrict references to filter information in the same manner as the reference restriction that may be applied to pictures having a temporal ID larger than that of the first picture. Therefore, the decoding apparatus can associate filter information with a picture and appropriately manage it, and can appropriately limit and set the filter information to be referenced. Therefore, the decoding device can appropriately set the information related to the decoding of the moving image.
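
- One way to picture the restriction on pictures that follow the first picture is to discard, at the moment the first picture of the predetermined NAL unit type is reached, all stored filter information whose temporal ID is equal to or larger than that of the first picture. The Python sketch below assumes a hypothetical store that maps decoding order to a `(temporal_id, filter_info)` pair; this pruning strategy and the function name are assumptions made for illustration and are not described in the disclosure.

```python
def prune_filter_store(store, first_temporal_id):
    """Return a copy of `store` without entries that later pictures must not reference.

    `store` maps decoding order -> (temporal_id, filter_info). Entries from the
    same layer as the first picture ("third picture") and from higher layers
    ("fourth picture") are dropped; lower-layer entries are kept.
    """
    return {
        order: (tid, info)
        for order, (tid, info) in store.items()
        if tid < first_temporal_id
    }
```

- After pruning, any later picture that looks up the store can only find filter information from layers below that of the first picture, so neither the third nor the fourth filter information can be selected as the sixth filter information.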

- When an eighth picture of the predetermined NAL unit type exists, in decoding order, between the first picture and a seventh picture, which is a picture that precedes the first picture in decoding order among the plurality of pictures and has a temporal ID equal to or larger than that of the eighth picture, the circuit may prohibit referring to seventh filter information associated with the seventh picture as the second filter information.

- In this case, for pictures after the eighth picture of the predetermined NAL unit type, the decoding device can prohibit referring, as the second filter information, to the seventh filter information of the seventh picture, whose temporal ID is equal to or larger than that of the eighth picture.

- That is, after the eighth picture of the predetermined NAL unit type, the decoding device can restrict references to the seventh filter information of the seventh picture in the same manner as the reference restriction that may be applied to the seventh picture itself. Therefore, the decoding apparatus can associate filter information with a picture and appropriately manage it, and can appropriately limit and set the filter information to be referenced. Therefore, the decoding device can appropriately set the information related to the decoding of the moving image.

- the predetermined NAL unit type may be a NAL unit type of a TSA (Temporal Sub-layer Access) picture.

- the decoding apparatus can perform reference restriction on filter information of pictures in the same hierarchy as that of the TSA picture, as in the case of reference restrictions that can be performed on pictures in the same hierarchy as that of the TSA picture. Therefore, the decoding apparatus can associate filter information with a picture and appropriately manage it, and can appropriately limit and set referenced filter information. Therefore, the decoding device can appropriately set the information related to the decoding of the moving image.

- The circuit may further perform: a step of determining fifth filter information, which is used to apply an adaptive loop filter to a fifth picture that follows the first picture in decoding order among the plurality of pictures, with reference to sixth filter information associated with a sixth picture preceding the fifth picture in decoding order among the plurality of pictures; and a step of applying the adaptive loop filter to the fifth picture using the determined fifth filter information. In the step of determining the fifth filter information, when the NAL unit type of the first picture is the predetermined NAL unit type and the temporal ID of the fifth picture is the same as the temporal ID of the first picture, the circuit may prohibit referring to the third filter information associated with the third picture as the sixth filter information.

- The decoding device can determine the fifth filter information of the fifth picture, which is in the same layer as the first picture and follows the first picture in decoding order, with reference to the sixth filter information of the sixth picture. At this time, the decoding apparatus can prohibit reference to the third filter information of the third picture of the same layer as the first picture of the predetermined NAL unit type as the sixth filter information.

- That is, the decoding apparatus can restrict references to filter information in the same manner as the reference restriction that can be applied to pictures preceding the first picture. Therefore, the decoding apparatus can associate filter information with a picture and appropriately manage it, and can appropriately limit and set the filter information to be referenced. Therefore, the decoding device can appropriately set the information related to the decoding of the moving image.

- the predetermined NAL unit type may be a NAL unit type of a STSA (Step-wise Temporal Sub-layer Access) picture.

- the decoding apparatus can perform reference restriction on filter information of pictures in the same hierarchy as that of the STSA picture, as in the case of reference restrictions that can be performed on pictures in the same hierarchy as that of the STSA picture. Therefore, the decoding apparatus can associate filter information with a picture and appropriately manage it, and can appropriately limit and set referenced filter information. Therefore, the decoding device can appropriately set the information related to the decoding of the moving image.

- an encoding method is an encoding method that applies an adaptive loop filter in encoding of a moving image including a plurality of pictures to which temporal IDs indicating layers related to temporal scalability are assigned.

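- The encoding method includes: a step of determining first filter information, which is used to apply an adaptive loop filter to a first picture of the plurality of pictures, with reference to second filter information associated with a second picture preceding the first picture in coding order among the plurality of pictures; and a step of applying the adaptive loop filter to the first picture using the determined first filter information. In the determining step, when the NAL (Network Abstraction Layer) unit type of the first picture is a predetermined NAL unit type, referring, as the second filter information, to third filter information associated with a third picture, which is a picture that precedes the first picture in coding order among the plurality of pictures and has the same temporal ID as the first picture, may be prohibited.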

- That is, references to the filter information of pictures in the same layer as the first picture of the predetermined NAL unit type can be restricted in the same manner as the reference restriction that can be applied to those pictures themselves. Therefore, it is possible to appropriately manage filter information in association with a picture, and to appropriately limit and set the filter information to be referenced. Therefore, it is possible to appropriately set information related to the coding of a moving image.

- a decoding method is a decoding method that applies an adaptive loop filter in decoding of a moving image including a plurality of pictures to which temporal IDs indicating layers related to temporal scalability are assigned.

- The decoding method includes: a step of determining first filter information, which is used to apply an adaptive loop filter to a first picture of the plurality of pictures, with reference to second filter information associated with a second picture preceding the first picture in decoding order among the plurality of pictures; and a step of applying the adaptive loop filter to the first picture using the determined first filter information. In the determining step, when the NAL (Network Abstraction Layer) unit type of the first picture is a predetermined NAL unit type, referring, as the second filter information, to third filter information associated with a third picture, which is a picture that precedes the first picture in decoding order among the plurality of pictures and has the same temporal ID as the first picture, may be prohibited.

- That is, references to the filter information of pictures in the same layer as the first picture of the predetermined NAL unit type can be restricted in the same manner as the reference restriction that can be applied to those pictures themselves. Therefore, it is possible to appropriately manage filter information in association with a picture, and to appropriately limit and set the filter information to be referenced. Therefore, it is possible to appropriately set information related to decoding of a moving image.

- the encoding apparatus is an encoding apparatus that encodes a moving image including a plurality of pictures to which temporal IDs indicating layers related to temporal scalability are assigned, respectively;

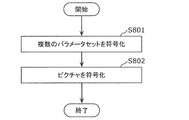

- a memory the circuit using the memory to encode a plurality of parameter sets, each of which is assigned 0 as a temporal ID indicating a hierarchy related to the time scalability; and encoding the plurality of parameter sets

- the step of encoding the first picture in the coding order among the plurality of pictures, and the plurality of parameter sets are respectively assigned to the plurality of layers indicated by the plurality of temporal IDs assigned to the plurality of pictures.

- each of the plurality of parameter sets It may be a parameter set for one or more pictures to which the parameter set of the number of pictures are assigned a temporal ID indicating the corresponding hierarchy.

- the encoding apparatus can collectively encode a plurality of parameter sets corresponding to each of the plurality of layers first. Further, 0 is assigned as a temporal ID to each of the plurality of parameter sets. Thus, multiple parameter sets can be properly processed without being discarded. Thus, the encoding apparatus can appropriately set information related to encoding of a moving image.
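
- The ordering described above, and the reason for assigning temporal ID 0 to every parameter set, can be illustrated with a short Python sketch. The dictionary layout, `build_stream`, and `extract_sublayers` are hypothetical names introduced only for this illustration; `extract_sublayers` assumes a simple extractor that discards every unit whose temporal ID exceeds a target value.

```python
def build_stream(pictures):
    """Emit one parameter set per layer, all with temporal ID 0, before any picture.

    `pictures` is a list of (name, temporal_id) tuples in coding order.
    """
    layers = sorted({tid for _, tid in pictures})
    units = [
        {"kind": "parameter_set", "temporal_id": 0, "for_layer": layer}
        for layer in layers
    ]
    units += [
        {"kind": "picture", "temporal_id": tid, "name": name}
        for name, tid in pictures
    ]
    return units


def extract_sublayers(units, max_tid):
    """Keep only units whose temporal ID does not exceed `max_tid` (assumed extractor)."""
    return [u for u in units if u["temporal_id"] <= max_tid]
```

- Because every parameter set carries temporal ID 0, extracting only the lowest layer still retains the parameter sets for the higher layers, which is what allows them to be processed later without having been discarded.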

- The plurality of pictures may constitute a first picture group, the plurality of parameter sets may constitute a first parameter set group, and the moving image may further include a plurality of pictures constituting a second picture group. The circuit may further perform: a step of encoding, after encoding the pictures constituting the first picture group, a plurality of parameter sets that constitute a second parameter set group and to each of which 0 is assigned as a temporal ID indicating a layer related to temporal scalability; and a step of encoding, after encoding the plurality of parameter sets constituting the second parameter set group, the first picture in coding order among the plurality of pictures constituting the second picture group.

- The plurality of parameter sets constituting the second parameter set group respectively correspond to a plurality of layers indicated by a plurality of temporal IDs assigned to the plurality of pictures constituting the second picture group, and each of the plurality of parameter sets constituting the second parameter set group may be a parameter set for one or more pictures, among the plurality of pictures constituting the second picture group, to which the temporal ID indicating the layer corresponding to that parameter set is assigned.

- the encoding apparatus can collectively encode a plurality of parameter sets corresponding to each of the plurality of layers for each picture group. Therefore, the encoding apparatus can appropriately set information related to encoding of a moving image for each picture group.
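
- The per-group ordering can be sketched in Python as follows. The tuple layouts and the name `build_stream_by_group` are assumptions made for illustration: each parameter set is written as `("parameter_set", temporal_id, layer)` and each picture as `("picture", temporal_id, name)`.

```python
def build_stream_by_group(groups):
    """Emit, for each picture group, its parameter set group followed by its pictures.

    `groups` is a list of picture groups; each group is a list of
    (name, temporal_id) tuples in coding order.
    """
    units = []
    for group in groups:
        layers = sorted({tid for _, tid in group})
        # Parameter set group: one set per layer in this group, all with temporal ID 0.
        units += [("parameter_set", 0, layer) for layer in layers]
        # Pictures of the group follow their parameter set group.
        units += [("picture", tid, name) for name, tid in group]
    return units
```

- In this sketch the second parameter set group is emitted only after every picture of the first picture group, and before the first picture of the second picture group, matching the order stated in the text.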

- A decoding device is a decoding device that decodes a moving image including a plurality of pictures to each of which a temporal ID indicating a layer related to temporal scalability is assigned, and includes a circuit and a memory. Using the memory, the circuit performs: a step of decoding a plurality of parameter sets, to each of which 0 is assigned as a temporal ID indicating a layer related to temporal scalability; and a step of decoding, after decoding the plurality of parameter sets, the first picture in decoding order among the plurality of pictures.

- The plurality of parameter sets respectively correspond to a plurality of layers indicated by the plurality of temporal IDs assigned to the plurality of pictures, and each of the plurality of parameter sets may be a parameter set for one or more pictures, among the plurality of pictures, to which the temporal ID indicating the layer corresponding to that parameter set is assigned.

- the decoding apparatus can collectively decode a plurality of parameter sets corresponding to each of the plurality of layers at first. Further, 0 is assigned as a temporal ID to each of the plurality of parameter sets. Thus, multiple parameter sets can be properly processed without being discarded. Therefore, the decoding device can appropriately set the information related to the decoding of the moving image.

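- The plurality of pictures may constitute a first picture group, the plurality of parameter sets may constitute a first parameter set group, and the moving image may further include a plurality of pictures constituting a second picture group. The circuit may further perform: a step of decoding, after decoding the pictures constituting the first picture group, a plurality of parameter sets that constitute a second parameter set group and to each of which 0 is assigned as a temporal ID indicating a layer related to temporal scalability; and a step of decoding, after decoding the plurality of parameter sets constituting the second parameter set group, the first picture in decoding order among the plurality of pictures constituting the second picture group.

- The plurality of parameter sets constituting the second parameter set group respectively correspond to a plurality of layers indicated by a plurality of temporal IDs assigned to the plurality of pictures constituting the second picture group, and each of the plurality of parameter sets constituting the second parameter set group may be a parameter set for one or more pictures, among the plurality of pictures constituting the second picture group, to which the temporal ID indicating the layer corresponding to that parameter set is assigned.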

- the decoding device can collectively decode a plurality of parameter sets corresponding to each of the plurality of layers for each picture group. Therefore, the decoding device can appropriately set information related to decoding of a moving image for each picture group.

- An encoding method is an encoding method for encoding a moving image including a plurality of pictures to each of which a temporal ID indicating a layer related to temporal scalability is assigned. The encoding method includes: a step of encoding a plurality of parameter sets, to each of which 0 is assigned as a temporal ID indicating a layer related to temporal scalability; and a step of encoding, after encoding the plurality of parameter sets, the first picture in encoding order among the plurality of pictures. The plurality of parameter sets respectively correspond to a plurality of layers indicated by the plurality of temporal IDs assigned to the plurality of pictures, and each of the plurality of parameter sets may be a parameter set for one or more pictures, among the plurality of pictures, to which the temporal ID indicating the layer corresponding to that parameter set is assigned.

- A decoding method is a decoding method for decoding a moving image including a plurality of pictures to each of which a temporal ID indicating a layer related to temporal scalability is assigned. The decoding method includes: a step of decoding a plurality of parameter sets, to each of which 0 is assigned as a temporal ID indicating a layer related to temporal scalability; and a step of decoding, after decoding the plurality of parameter sets, the first picture in decoding order among the plurality of pictures. The plurality of parameter sets respectively correspond to a plurality of layers indicated by the plurality of temporal IDs assigned to the plurality of pictures, and each of the plurality of parameter sets may be a parameter set for one or more pictures, among the plurality of pictures, to which the temporal ID indicating the layer corresponding to that parameter set is assigned.

- An encoding apparatus is an encoding apparatus that encodes a moving image including a plurality of pictures, and includes a circuit and a memory. Using the memory, the circuit performs: a step of encoding a first picture of the plurality of pictures; and a step of performing (i) a first operation of encoding, after encoding the first picture, a parameter set for a second picture that follows the first picture in coding order among the plurality of pictures, and encoding the second picture after encoding the parameter set, or (ii) a second operation of encoding the second picture without encoding the parameter set after encoding the first picture. In the step of performing the first operation or the second operation, the circuit may perform the first operation if the second picture is a predetermined picture.

- the coding apparatus can code the parameter set for a predetermined picture before the predetermined picture. Therefore, the parameter set for the predetermined picture can be properly processed at the up switch or the like for the predetermined picture. Thus, the encoding apparatus can appropriately set information related to encoding of a moving image.

- the predetermined picture may be a TSA (Temporal Sub-layer Access) picture.

- the coding apparatus can code the parameter set for the TSA picture before the TSA picture. Therefore, the parameter set for the TSA picture can be properly processed, such as at the up switch for the TSA picture.

- the encoding apparatus can appropriately set information related to encoding of a moving image.

- Each of the plurality of pictures may be a picture to which a temporal ID indicating a layer related to temporal scalability is assigned. In the first operation, the circuit may encode, after encoding the first picture, a plurality of related parameter sets, which are a plurality of parameter sets including the parameter set for the second picture, and encode the second picture after encoding the plurality of related parameter sets. The plurality of related parameter sets respectively correspond to a plurality of layers indicated by a plurality of temporal IDs equal to or greater than the temporal ID assigned to the second picture, and each of the plurality of related parameter sets may be a parameter set for one or more pictures, among the plurality of pictures, to which the temporal ID indicating the layer corresponding to that related parameter set is assigned.

- the encoding apparatus can encode a plurality of parameter sets for a plurality of pictures having the same temporal ID as the predetermined picture or a temporal ID larger than the predetermined picture before the predetermined picture. Therefore, the parameter set can be properly processed at an up switch or the like for a picture having a temporal ID larger than that of the predetermined picture. Thus, the encoding apparatus can appropriately set information related to encoding of a moving image.
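
- The choice between the first operation and the second operation, together with the set of related parameter sets, can be sketched as a small Python helper. The function name and the string labels are hypothetical. Sending all layers from the picture's own temporal ID upward for a TSA picture follows the related-parameter-set description above, while sending only the picture's own layer for an STSA picture is an assumption made for this sketch.

```python
from typing import List


def layers_to_resend(second_type: str, second_tid: int, max_tid: int) -> List[int]:
    """Return the layers whose parameter sets are encoded just before the second picture.

    An empty list corresponds to the second operation (no parameter set is
    encoded between the first picture and the second picture).
    """
    if second_type == "TSA":
        # Related parameter sets: every layer with temporal ID >= that of the second picture.
        return list(range(second_tid, max_tid + 1))
    if second_type == "STSA":
        # Assumption: only the parameter set for the STSA picture's own layer.
        return [second_tid]
    return []  # not a predetermined picture: second operation
```

- For example, with layers 0 to 3 and a TSA picture in layer 1, the helper returns layers 1, 2 and 3, so a receiver that switches up at that picture finds the parameter sets it needs immediately before it.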

- the predetermined picture may be a step-wise temporal sub-layer access (STSA) picture.

- the coding apparatus can code the parameter set for the STSA picture before the STSA picture. Therefore, the parameter set for the STSA picture can be properly processed, such as in the up switch for the STSA picture.

- the encoding apparatus can appropriately set information related to encoding of a moving image.

- the second picture may be a picture to be encoded next to the first picture among the plurality of pictures.

- the encoding apparatus can appropriately encode the parameter set for the predetermined picture immediately before encoding the predetermined picture.

- parameter sets for a given picture may be properly processed.

- the encoding apparatus can appropriately set information related to encoding of a moving image.

- Each of the plurality of pictures may be a picture to which a temporal ID indicating a layer related to temporal scalability is assigned. The circuit may further encode, before encoding the picture that comes first in coding order among the plurality of pictures, a plurality of inclusive parameter sets, which are a plurality of parameter sets including the parameter set for the second picture. The plurality of inclusive parameter sets respectively correspond to a plurality of layers indicated by the plurality of temporal IDs assigned to the plurality of pictures, and each of the plurality of inclusive parameter sets may be a parameter set for one or more pictures to which the temporal ID indicating the layer corresponding to that inclusive parameter set is assigned.

- Even when a plurality of parameter sets including the parameter set for the predetermined picture are encoded first, the encoding apparatus can encode the parameter set for the predetermined picture again before the predetermined picture.

- parameter sets for a given picture may be properly processed.

- the encoding apparatus can appropriately set information related to encoding of a moving image.

- a decoding device that decodes a moving image including a plurality of pictures, and includes a circuit and a memory, and the circuit uses the memory to execute the process.

- the decoding apparatus can decode the parameter set for the predetermined picture before the predetermined picture. Therefore, the parameter set for the predetermined picture can be properly processed at the up switch or the like for the predetermined picture. Therefore, the decoding device can appropriately set the information related to the decoding of the moving image.

- the predetermined picture may be a TSA (Temporal Sub-layer Access) picture.

- the decoding apparatus can decode the parameter set for the TSA picture before the TSA picture. Therefore, the parameter set for the TSA picture can be properly processed, such as at the up switch for the TSA picture. Therefore, the decoding device can appropriately set the information related to the decoding of the moving image.

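- Each of the plurality of pictures may be a picture to which a temporal ID indicating a layer related to temporal scalability is assigned. In the first operation, the circuit may decode, after decoding the first picture, a plurality of related parameter sets, which are a plurality of parameter sets including the parameter set for the second picture, and decode the second picture after decoding the plurality of related parameter sets. The plurality of related parameter sets respectively correspond to a plurality of layers indicated by a plurality of temporal IDs equal to or greater than the temporal ID assigned to the second picture, and each of the plurality of related parameter sets may be a parameter set for one or more pictures, among the plurality of pictures, to which the temporal ID indicating the layer corresponding to that related parameter set is assigned.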

- the decoding apparatus can decode a plurality of parameter sets for a plurality of pictures having the same temporal ID as the predetermined picture or a larger temporal ID than the predetermined picture, before the predetermined picture. Therefore, the parameter set can be properly processed at an up switch or the like for a picture having a temporal ID larger than that of the predetermined picture. Therefore, the decoding device can appropriately set the information related to the decoding of the moving image.

- the predetermined picture may be a step-wise temporal sub-layer access (STSA) picture.

- the decoding apparatus can decode the parameter set for the STSA picture before the STSA picture. Therefore, the parameter set for the STSA picture can be properly processed, such as in the up switch for the STSA picture. Therefore, the decoding device can appropriately set the information related to the decoding of the moving image.

- the second picture may be a picture decoded next to the first picture among the plurality of pictures.

- the decoding apparatus can appropriately decode the parameter set for the predetermined picture immediately before decoding the predetermined picture.

- parameter sets for a given picture may be properly processed. Therefore, the decoding device can appropriately set the information related to the decoding of the moving image.

- Each of the plurality of pictures may be a picture to which a temporal ID indicating a layer related to temporal scalability is assigned. The circuit may further decode, before decoding the picture that comes first in decoding order among the plurality of pictures, a plurality of inclusive parameter sets, which are a plurality of parameter sets including the parameter set for the second picture. The plurality of inclusive parameter sets respectively correspond to a plurality of layers indicated by the plurality of temporal IDs assigned to the plurality of pictures, and each of the plurality of inclusive parameter sets may be a parameter set for one or more pictures to which the temporal ID indicating the layer corresponding to that inclusive parameter set is assigned.

- the decoding apparatus can decode the parameter set for the predetermined picture again before the predetermined picture even when the plurality of parameter sets including the parameter set for the predetermined picture are decoded first.

- parameter sets for a given picture may be properly processed. Therefore, the decoding device can appropriately set the information related to the decoding of the moving image.

- An encoding method is an encoding method for encoding a moving image including a plurality of pictures, and includes: a step of encoding a first picture of the plurality of pictures; and a step of performing (i) a first operation of encoding, after encoding the first picture, a parameter set for a second picture that follows the first picture in encoding order among the plurality of pictures, and encoding the second picture after encoding the parameter set, or (ii) a second operation of encoding the second picture without encoding the parameter set after encoding the first picture. In the step of performing the first operation or the second operation, the first operation may be performed if the second picture is a predetermined picture.

- Thus, the parameter set for the predetermined picture can be encoded before the predetermined picture. Therefore, the parameter set for the predetermined picture can be properly processed at the up switch or the like for the predetermined picture. Therefore, it is possible to appropriately set information related to the coding of a moving image.

- A decoding method is a decoding method for decoding a moving image including a plurality of pictures, and includes: a step of decoding a first picture of the plurality of pictures; and a step of performing (i) a first operation of decoding, after decoding the first picture, a parameter set for a second picture that follows the first picture in decoding order among the plurality of pictures, and decoding the second picture after decoding the parameter set, or (ii) a second operation of decoding the second picture without decoding the parameter set after decoding the first picture. In the step of performing the first operation or the second operation, the first operation may be performed if the second picture is a predetermined picture.

- An encoding apparatus is an encoding apparatus that encodes a moving image including a plurality of pictures to each of which a temporal ID indicating a layer related to temporal scalability is assigned, and includes a circuit and a memory. Using the memory, the circuit performs: a step of encoding a first picture of the plurality of pictures; and a step of performing (i) a first operation of encoding, after encoding the first picture, a parameter set for a second picture that follows the first picture in encoding order among the plurality of pictures, and encoding the second picture after encoding the parameter set, or (ii) a second operation of encoding the second picture without encoding the parameter set after encoding the first picture.

- In the step of performing the first operation or the second operation, the circuit may perform the first operation when the temporal ID assigned to the second picture is larger than the smallest temporal ID and smaller than the largest temporal ID among the plurality of temporal IDs assigned to the plurality of pictures.

- the encoding apparatus can appropriately set information related to encoding of a moving image.

- The circuit may perform the first operation even when the temporal ID assigned to the second picture is the maximum temporal ID.

- the encoding apparatus can appropriately set information related to encoding of a moving image.

- The circuit may encode, after encoding the first picture, the parameter set and a top parameter set, and encode the second picture after encoding the parameter set and the top parameter set. The top parameter set may be a parameter set for one or more pictures to which the largest temporal ID is assigned.

- The circuit may perform the second operation when the temporal ID assigned to the second picture is the maximum temporal ID.

- Under a condition that a predetermined flag included in a sequence parameter set for the plurality of pictures has a predetermined value, the circuit may perform the first operation when the temporal ID assigned to the second picture is larger than the minimum temporal ID and smaller than the maximum temporal ID.

- Each of the plurality of pictures may be a TSA (Temporal Sub-layer Access) picture when the temporal ID assigned to the picture is not the minimum temporal ID.

- the encoding apparatus can appropriately encode the parameter set for the picture in the middle layer before the picture in the middle layer in the predetermined sequence composed of the TSA pictures excluding the lowest layer.
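
- The temporal-ID-based choice between the two operations can be summarised in a short Python sketch. The function name and the `include_top` switch are hypothetical: `include_top=True` models the variant in which the first operation is also performed for the maximum temporal ID, and `include_top=False` models the variant in which the second operation is performed for it. Returning the second operation for the lowest layer is an assumption of this sketch, since the text does not state that case explicitly.

```python
def choose_operation(second_tid, all_tids, include_top=False):
    """Return "first" or "second" for a second picture with temporal ID `second_tid`.

    "first": encode the parameter set for the second picture before the picture.
    "second": encode the second picture without encoding that parameter set.
    """
    lowest, highest = min(all_tids), max(all_tids)
    if lowest < second_tid < highest:
        return "first"   # middle layer
    if include_top and second_tid == highest:
        return "first"   # top layer, in the variant that also covers it
    return "second"
```

- With temporal IDs 0, 1 and 2, a picture in layer 1 triggers the first operation in both variants, while a picture in layer 2 triggers it only when `include_top` is set.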

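- A decoding device is a decoding device that decodes a moving image including a plurality of pictures to each of which a temporal ID indicating a layer related to temporal scalability is assigned, and includes a circuit and a memory. Using the memory, the circuit performs: a step of decoding a first picture of the plurality of pictures; and a step of performing (i) a first operation of decoding, after decoding the first picture, a parameter set for a second picture that follows the first picture in decoding order among the plurality of pictures, and decoding the second picture after decoding the parameter set, or (ii) a second operation of decoding the second picture without decoding the parameter set after decoding the first picture.

- In the step of performing the first operation or the second operation, the circuit may perform the first operation when the temporal ID assigned to the second picture is larger than the smallest temporal ID and smaller than the largest temporal ID among the plurality of temporal IDs assigned to the plurality of pictures.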

- the decoding device can decode the parameter set for the picture in the middle layer before the picture in the middle layer. Therefore, the parameter set for the picture in the middle layer can be properly processed, such as in the up switch for the picture in the middle layer. Therefore, the decoding device can appropriately set the information related to the decoding of the moving image.

- The circuit may perform the first operation even when the temporal ID assigned to the second picture is the maximum temporal ID.

- the decoding device can decode the parameter set for the top layer picture before the top layer picture. Therefore, the parameter set for the top layer picture can be properly processed, such as in the up switch for the top layer picture. Therefore, the decoding device can appropriately set the information related to the decoding of the moving image.

- the circuit may decode, after decoding the first picture, the parameter set and a top parameter set, and may decode the second picture after decoding the parameter set and the top parameter set, wherein the top parameter set may be a parameter set for one or more pictures to which the largest temporal ID is assigned.

- the circuit may perform the second operation when the temporal ID assigned to the second picture is the maximum temporal ID.

- the decoding apparatus can omit decoding the parameter set for the picture of the top layer before the picture of the top layer.
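
As a non-limiting illustration, the selection between the first operation and the second operation described above can be sketched as a single predicate. The function name `needs_parameter_set` and the `include_top_layer` switch are hypothetical and are introduced here only to cover both variants for the top layer (first operation or second operation when the temporal ID is the maximum temporal ID).

```python
def needs_parameter_set(tid: int, min_tid: int, max_tid: int,
                        include_top_layer: bool = False) -> bool:
    """Return True for the first operation (decode the parameter set, then
    the second picture) and False for the second operation (decode the
    second picture without decoding the parameter set).

    include_top_layer is a hypothetical switch for the variant in which the
    first operation is also performed for the maximum temporal ID.
    """
    # Middle layer: strictly between the smallest and largest temporal IDs.
    if min_tid < tid < max_tid:
        return True
    # Optional variant: treat the top layer like a middle layer.
    if include_top_layer and tid == max_tid:
        return True
    return False


# Three layers (temporal IDs 0, 1, 2): only the middle layer triggers the
# first operation unless the top-layer variant is enabled.
decisions = [needs_parameter_set(t, 0, 2) for t in (0, 1, 2)]
```

With temporal IDs 0 to 2, `decisions` is `[False, True, False]`; enabling `include_top_layer` changes only the result for temporal ID 2.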

- under a condition that a predetermined flag included in a sequence parameter set for the plurality of pictures has a predetermined value, the circuit may perform the first operation if the temporal ID assigned to the second picture is larger than the minimum temporal ID and smaller than the maximum temporal ID.

- the decoding device can appropriately decode the parameter set for the picture in the middle layer before the picture in the middle layer in the predetermined sequence.

- each of the plurality of pictures may be a TSA (Temporal Sub-layer Access) picture when the temporal ID assigned to the picture is not the minimum temporal ID.

- the decoding apparatus can appropriately decode the parameter set for the picture in the middle layer before the picture in the middle layer in the predetermined sequence configured by the TSA pictures except for the lowest layer.

- an encoding method for encoding a moving image including a plurality of pictures to which temporal IDs indicating layers related to temporal scalability are respectively assigned.

- the first operation may be performed.

- a decoding method for decoding a moving image including a plurality of pictures to which temporal IDs indicating layers related to temporal scalability are respectively assigned. The method includes: decoding a first picture of the plurality of pictures; and performing either (i) a first operation of decoding, after decoding the first picture, a parameter set for a second picture that follows the first picture in decoding order among the plurality of pictures, and decoding the second picture after decoding the parameter set, or (ii) a second operation of decoding the second picture after decoding the first picture without decoding the parameter set. In the performing of the first operation or the second operation, the first operation may be performed if the temporal ID assigned to the second picture is larger than the smallest temporal ID of the plurality of temporal IDs assigned to the plurality of pictures and smaller than the largest temporal ID of the plurality of temporal IDs.

- the encoding apparatus may include a division unit, an intra prediction unit, an inter prediction unit, a conversion unit, a quantization unit, an entropy coding unit, and a filter unit.

- the division unit may divide a picture into a plurality of blocks.

- the intra prediction unit may perform intra prediction on blocks included in the plurality of blocks.

- the inter prediction unit may perform inter prediction on the block.

- the conversion unit may generate a transform coefficient by transforming a prediction error between an original image and a predicted image obtained by the intra prediction or the inter prediction.

- the quantization unit may quantize the transform coefficient to generate a quantization coefficient.

- the entropy coding unit may code the quantization coefficient to generate a coded bit stream.

- the filter unit may apply a filter to a reconstructed image generated using the predicted image.
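
As a non-limiting illustration, the division, prediction-error, transform, and quantization steps listed above can be sketched on a one-dimensional toy signal. Everything in this sketch is an assumption made for brevity: the 4-point Hadamard matrix `H4` stands in for whatever transform the conversion unit actually uses, the predicted samples are supplied by the caller rather than produced by intra or inter prediction, and entropy coding and the filter unit are omitted.

```python
# 4-point Hadamard matrix, used here only as a stand-in transform.
H4 = [
    [1, 1, 1, 1],
    [1, 1, -1, -1],
    [1, -1, -1, 1],
    [1, -1, 1, -1],
]


def split_into_blocks(samples, size=4):
    """Division unit (toy): cut a 1-D run of samples into fixed-size blocks."""
    return [samples[i:i + size] for i in range(0, len(samples), size)]


def encode_block(block, predicted, step):
    """Prediction error -> transform -> quantization for one 4-sample block."""
    # Prediction error between the original and the predicted samples.
    residual = [o - p for o, p in zip(block, predicted)]
    # Transform: multiply the residual by the stand-in matrix.
    coeffs = [sum(h * r for h, r in zip(row, residual)) for row in H4]
    # Quantization: uniform quantizer with the given step size.
    return [round(c / step) for c in coeffs]


blocks = split_into_blocks([10, 12, 14, 16, 20, 20, 20, 20])
levels = encode_block(blocks[0], predicted=[10, 10, 10, 10], step=4)
```

The resulting `levels` are what an entropy coding unit would then write to the coded bit stream.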

- the encoding apparatus may be an encoding apparatus that applies an adaptive loop filter in encoding of a moving image including a plurality of pictures to which temporal IDs indicating layers related to temporal scalability are assigned.

- the filter unit may determine first filter information for applying an adaptive loop filter to a first picture of the plurality of pictures, with reference to second filter information associated with a second picture that precedes the first picture in coding order among the plurality of pictures, and may apply the adaptive loop filter to the first picture using the determined first filter information.

- the filter unit may be prohibited from referring, as the second filter information, to third filter information associated with a third picture that precedes the first picture in coding order among the plurality of pictures and has the same temporal ID as the first picture.
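
As a non-limiting illustration, the prohibition described above can be sketched as a filter over the previously coded pictures. The `Picture` record and the function `allowed_filter_references` are hypothetical names. The sketch models only the stated prohibition (a preceding picture with the same temporal ID is excluded); any further restriction on which pictures may be referenced is outside its scope.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Picture:
    order: int        # position in coding order (hypothetical field)
    temporal_id: int  # temporal ID assigned to the picture


def allowed_filter_references(current, coded):
    """Return the preceding pictures whose filter information may be
    referenced when determining the filter information of `current`."""
    return [
        p for p in coded
        # Must precede the current picture in coding order ...
        if p.order < current.order
        # ... and must not share its temporal ID (the stated prohibition).
        and p.temporal_id != current.temporal_id
    ]


coded = [Picture(0, 0), Picture(1, 2), Picture(2, 1), Picture(3, 2)]
candidates = allowed_filter_references(Picture(4, 2), coded)
```

Here the two earlier pictures with temporal ID 2 are excluded, leaving the pictures at coding-order positions 0 and 2 as candidates.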

- the encoding apparatus may be an encoding apparatus that encodes a moving image including a plurality of pictures to which temporal IDs indicating layers related to temporal scalability are respectively assigned.

- the entropy coding unit may encode a plurality of parameter sets, each of which is assigned 0 as a temporal ID indicating a layer related to the temporal scalability, and, after encoding the plurality of parameter sets, may encode the picture that is first in coding order among the plurality of pictures.

- the plurality of parameter sets respectively correspond to a plurality of layers indicated by the plurality of temporal IDs assigned to the plurality of pictures, and each of the plurality of parameter sets may be a parameter set for one or more pictures, among the plurality of pictures, to which a temporal ID indicating the layer corresponding to the parameter set is assigned.
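
As a non-limiting illustration, the ordering described above (one parameter set per layer, each carrying temporal ID 0, all placed before the first picture) can be sketched as follows. The `Unit` record, its field names, and `build_stream` are hypothetical and do not correspond to any actual bit stream syntax.

```python
from typing import NamedTuple


class Unit(NamedTuple):
    kind: str          # "PS" for a parameter set, "PIC" for a picture
    temporal_id: int   # temporal ID carried by the unit itself
    target_layer: int  # layer (temporal ID) of the pictures the unit is for


def build_stream(picture_tids):
    """Place one parameter set per layer, each with temporal ID 0, ahead of
    the first picture in coding order."""
    layers = sorted(set(picture_tids))
    # Every parameter set is assigned temporal ID 0, whatever layer it serves.
    stream = [Unit("PS", 0, layer) for layer in layers]
    # Pictures follow in coding order with their own temporal IDs.
    stream += [Unit("PIC", tid, tid) for tid in picture_tids]
    return stream


stream = build_stream([0, 2, 1, 2])
```

For the picture temporal IDs 0, 2, 1, 2, the stream begins with three parameter sets (for layers 0, 1, and 2), all with temporal ID 0, followed by the four pictures.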

- the encoding apparatus may be an encoding apparatus that encodes a moving image including a plurality of pictures.

- the entropy coding unit may encode a first picture of the plurality of pictures, and may perform either (i) a first operation of encoding, after encoding the first picture, a parameter set for a second picture that follows the first picture in coding order among the plurality of pictures, and encoding the second picture after encoding the parameter set, or (ii) a second operation of encoding the second picture after encoding the first picture without encoding the parameter set.

- the entropy coding unit may perform the first operation when the second picture is a predetermined picture.

- the encoding apparatus may be an encoding apparatus that encodes a moving image including a plurality of pictures to which temporal IDs indicating layers related to temporal scalability are respectively assigned.

- the entropy coding unit may encode a first picture of the plurality of pictures, and may perform either (i) a first operation of encoding, after encoding the first picture, a parameter set for a second picture that follows the first picture in coding order among the plurality of pictures, and encoding the second picture after encoding the parameter set, or (ii) a second operation of encoding the second picture after encoding the first picture without encoding the parameter set.

- the entropy coding unit may perform the first operation when the temporal ID assigned to the second picture is larger than the smallest temporal ID of the plurality of temporal IDs assigned to the plurality of pictures and smaller than the largest temporal ID of the plurality of temporal IDs.
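
As a non-limiting illustration, the encoder-side behavior described above can be sketched as the construction of an output order in which a parameter set is emitted immediately before each middle-layer picture. The function `encode_order` and the tuple layout are hypothetical; each picture after the first one is treated in turn as the "second picture".

```python
def encode_order(picture_tids):
    """Return the order of coded units for pictures given by temporal ID.

    Each entry is (kind, tid): kind is "PIC" for a picture or "PS" for a
    parameter set, and tid is the temporal ID of the picture concerned.
    """
    lo, hi = min(picture_tids), max(picture_tids)
    out = [("PIC", picture_tids[0])]  # the first picture is encoded as-is
    for tid in picture_tids[1:]:
        if lo < tid < hi:
            # First operation: parameter set, then the picture.
            out.append(("PS", tid))
        # Second operation (or the tail of the first): the picture itself.
        out.append(("PIC", tid))
    return out


order = encode_order([0, 2, 1, 2])
```

For the temporal IDs 0, 2, 1, 2, a parameter set appears only before the picture with temporal ID 1, which is the only middle-layer picture.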

- the decoding device may include an entropy decoding unit, an inverse quantization unit, an inverse transform unit, an intra prediction unit, an inter prediction unit, and a filter unit.

- the entropy decoding unit may decode quantization coefficients of blocks in a picture from a coded bit stream.

- the inverse quantization unit may inverse-quantize the quantization coefficient to obtain a transform coefficient.

- the inverse transform unit may inverse transform the transform coefficient to obtain a prediction error.

- the intra prediction unit may perform intra prediction on the block.

- the inter prediction unit may perform inter prediction on the block.

- the filter unit may apply a filter to a reconstructed image generated using the prediction image obtained by the intra prediction or the inter prediction and the prediction error.
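
As a non-limiting illustration, the inverse quantization, inverse transform, and reconstruction steps listed above can be sketched for one 4-sample block. The sketch rests on assumptions made for brevity: the 4-point Hadamard matrix `H4` stands in for the actual transform, the quantized levels and predicted samples are passed in directly (entropy decoding and intra/inter prediction are not modeled), clipping to `max_value` is a hypothetical choice, and the filter unit is omitted.

```python
# 4-point Hadamard matrix, used here only as a stand-in transform.
# It is symmetric and H4 * H4 = 4 * I, so its inverse is H4 / 4.
H4 = [
    [1, 1, 1, 1],
    [1, 1, -1, -1],
    [1, -1, -1, 1],
    [1, -1, 1, -1],
]


def decode_block(levels, predicted, step, max_value=255):
    """Inverse quantization -> inverse transform -> reconstruction."""
    # Inverse quantization: scale the levels back by the step size.
    coeffs = [lv * step for lv in levels]
    # Inverse transform: recover the prediction error.
    residual = [sum(h * c for h, c in zip(row, coeffs)) / 4 for row in H4]
    # Reconstruction: prediction plus prediction error, clipped to range.
    return [min(max(round(p + r), 0), max_value)
            for p, r in zip(predicted, residual)]


reconstructed = decode_block([3, -2, 0, -1], predicted=[10, 10, 10, 10], step=4)
```

In an actual decoding device, a filter such as a loop filter would then be applied to the reconstructed samples.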

- the decoding device may be a decoding device that applies an adaptive loop filter in the decoding of a moving image including a plurality of pictures to which temporal IDs indicating layers related to temporal scalability are respectively assigned.

- the filter unit may determine first filter information for applying an adaptive loop filter to a first picture of the plurality of pictures, with reference to second filter information associated with a second picture that precedes the first picture in decoding order among the plurality of pictures, and may apply the adaptive loop filter to the first picture using the determined first filter information.

- the filter unit may be prohibited from referring, as the second filter information, to third filter information associated with a third picture that precedes the first picture in decoding order among the plurality of pictures and has the same temporal ID as the first picture.

- the decoding device may be a decoding device that decodes a moving image including a plurality of pictures to which temporal IDs indicating layers related to temporal scalability are respectively assigned.

- the entropy decoding unit may decode a plurality of parameter sets, each of which is assigned 0 as a temporal ID indicating a layer related to the temporal scalability, and, after decoding the plurality of parameter sets, may decode the picture that is first in decoding order among the plurality of pictures.

- the plurality of parameter sets respectively correspond to a plurality of layers indicated by the plurality of temporal IDs assigned to the plurality of pictures, and each of the plurality of parameter sets may be a parameter set for one or more pictures, among the plurality of pictures, to which a temporal ID indicating the layer corresponding to the parameter set is assigned.

- the decoding device may be a decoding device that decodes a moving image including a plurality of pictures.

- the entropy decoding unit may decode a first picture of the plurality of pictures, and may perform either (i) a first operation of decoding, after decoding the first picture, a parameter set for a second picture that follows the first picture in decoding order among the plurality of pictures, and decoding the second picture after decoding the parameter set, or (ii) a second operation of decoding the second picture after decoding the first picture without decoding the parameter set.

- the entropy decoding unit may perform the first operation if the second picture is a predetermined picture.

- the decoding device may be a decoding device that decodes a moving image including a plurality of pictures to which temporal IDs indicating layers related to temporal scalability are respectively assigned.

- the entropy decoding unit may decode a first picture of the plurality of pictures, and may perform either (i) a first operation of decoding, after decoding the first picture, a parameter set for a second picture that follows the first picture in decoding order among the plurality of pictures, and decoding the second picture after decoding the parameter set, or (ii) a second operation of decoding the second picture after decoding the first picture without decoding the parameter set.

- in performing the first operation or the second operation, the entropy decoding unit may perform the first operation if the temporal ID assigned to the second picture is larger than the smallest temporal ID of the plurality of temporal IDs assigned to the plurality of pictures and smaller than the largest temporal ID of the plurality of temporal IDs.

- these general or specific aspects may be realized by a system, an apparatus, a method, an integrated circuit, a computer program, or a non-transitory recording medium such as a computer-readable CD-ROM, or by any combination of a system, an apparatus, a method, an integrated circuit, a computer program, and a recording medium.

- (Embodiment 1) First, an outline of Embodiment 1 is described as an example of an encoding apparatus and a decoding apparatus to which the processes and/or configurations described in each aspect of the present disclosure, described later, can be applied.

- Embodiment 1 is merely an example of an encoding apparatus and a decoding apparatus to which the processes and/or configurations described in each aspect of the present disclosure can be applied; the processes and/or configurations described in each aspect of the present disclosure can also be implemented in an encoding apparatus and a decoding apparatus that differ from those of Embodiment 1.

- the encoding apparatus or the decoding apparatus according to the first embodiment corresponds to the constituent elements described in each aspect of the present disclosure among a plurality of constituent elements that configure the encoding apparatus or the decoding apparatus.