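WO2012164924A1 - Moving picture encoding device, moving picture encoding method, and moving picture encoding program, and moving picture decoding device, moving picture decoding method, and moving picture decoding program - Google Patents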

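Moving picture encoding device, moving picture encoding method, and moving picture encoding program, and moving picture decoding device, moving picture decoding method, and moving picture decoding program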

- Publication number

- WO2012164924A1 (PCT/JP2012/003540)

- Authority

- WO

- WIPO (PCT)

- Prior art keywords

- motion vector

- prediction

- prediction block

- block

- picture

Classifications

- H04N19/139—Analysis of motion vectors, e.g. their magnitude, direction, variance or reliability

- H04N19/513—Processing of motion vectors

- H04N19/50—Methods or arrangements for coding, decoding, compressing or decompressing digital video signals using predictive coding

- H04N19/70—Methods or arrangements for coding, decoding, compressing or decompressing digital video signals characterised by syntax aspects related to video coding, e.g. related to compression standards

- H04N19/105—Selection of the reference unit for prediction within a chosen coding or prediction mode, e.g. adaptive choice of position and number of pixels used for prediction

- H04N19/124—Quantisation

- H04N19/159—Prediction type, e.g. intra-frame, inter-frame or bidirectional frame prediction

- H04N19/172—Methods or arrangements for coding, decoding, compressing or decompressing digital video signals using adaptive coding characterised by the coding unit, i.e. the structural portion or semantic portion of the video signal being the object or the subject of the adaptive coding the unit being an image region, e.g. an object the region being a picture, frame or field

- H04N19/176—Methods or arrangements for coding, decoding, compressing or decompressing digital video signals using adaptive coding characterised by the coding unit, i.e. the structural portion or semantic portion of the video signal being the object or the subject of the adaptive coding the unit being an image region, e.g. an object the region being a block, e.g. a macroblock

- H04N19/184—Methods or arrangements for coding, decoding, compressing or decompressing digital video signals using adaptive coding characterised by the coding unit, i.e. the structural portion or semantic portion of the video signal being the object or the subject of the adaptive coding the unit being bits, e.g. of the compressed video stream

- H04N19/52—Processing of motion vectors by encoding by predictive encoding

Definitions

- The present invention relates to a moving image encoding and decoding technique, and more particularly to a moving image encoding and decoding technique using motion compensated prediction.

- MPEG-4 AVC / H.264 is a typical video compression encoding system.

- In MPEG-4 AVC / H.264, motion compensation is used in which a picture is divided into a plurality of rectangular blocks, a picture that has already been encoded / decoded is used as a reference picture, and motion from the reference picture is predicted.

- A technique for predicting motion by this motion compensation is called inter prediction.

- In the inter prediction of MPEG-4 AVC / H.264, a plurality of pictures can be used as reference pictures, and the most suitable reference picture is selected for each block from the plurality of reference pictures to perform motion compensation. Therefore, a reference index is assigned to each reference picture, and the reference picture is specified by this reference index.

- Two kinds of inter prediction are defined per reference list: L0 prediction (list 0 prediction), mainly used as forward prediction, and L1 prediction (list 1 prediction), mainly used as backward prediction.

- Bi-prediction, which uses the two inter predictions of L0 prediction and L1 prediction at the same time, is also defined.

- When bi-prediction is performed, the inter-predicted signals of L0 prediction and L1 prediction are each multiplied by a weighting coefficient, an offset value is added, and the results are superimposed to generate the final inter prediction signal.

- For the weighting coefficients and offset values used for weighted prediction, representative values are set for each reference picture in each list and encoded.
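The weighted superposition described above can be sketched as follows. This is a minimal illustration, not the arithmetic of any particular standard: the function name `weighted_bi_prediction`, the use of floating-point weights, the rounding, and the clipping to an assumed 8-bit sample range are all assumptions made for the example.

```python
def weighted_bi_prediction(pred_l0, pred_l1, w0, w1, offset, bit_depth=8):
    """Combine two inter-predicted sample lists into one prediction signal.

    pred_l0 / pred_l1: samples predicted from list 0 and list 1 (same length).
    w0 / w1: weighting coefficients; offset: value added after weighting.
    Floating-point weights and clipping are assumptions of this sketch.
    """
    max_val = (1 << bit_depth) - 1
    out = []
    for p0, p1 in zip(pred_l0, pred_l1):
        value = w0 * p0 + w1 * p1 + offset
        # Round to an integer sample and clip to the valid sample range.
        out.append(min(max(int(round(value)), 0), max_val))
    return out


if __name__ == "__main__":
    print(weighted_bi_prediction([100, 200], [120, 100], 0.5, 0.5, 0))
```

With equal weights of 0.5 and a zero offset, the sketch reduces to a plain average of the two predictions.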

- The encoding information related to inter prediction includes, for each block, a prediction mode that distinguishes among L0 prediction, L1 prediction, and bi-prediction; a reference index that specifies a reference picture for each reference list; and a motion vector representing the moving direction and moving amount of the block.

- A prediction process is performed on the motion vector in order to reduce the code amount of the motion vector generated in each block.

- In MPEG-4 AVC / H.264, using the fact that the motion vector to be encoded has a strong correlation with the motion vectors of neighboring blocks, a predicted motion vector is calculated by prediction from the neighboring blocks, a difference motion vector that is the difference between the motion vector to be encoded and the predicted motion vector is calculated, and the difference motion vector is encoded to reduce the code amount.

- Specifically, a median value is calculated from the motion vectors of the neighboring blocks A, B, and C to obtain the predicted motion vector, and the code amount of the motion vector is reduced by taking the difference between the motion vector and the predicted motion vector (Non-Patent Document 1).
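The median prediction and the difference motion vector can be illustrated with a short sketch. Motion vectors are modelled here as `(x, y)` integer tuples and the median is taken per component; the function names `median_mv` and `difference_mv` are hypothetical and chosen only for this example.

```python
def median_mv(mv_a, mv_b, mv_c):
    """Component-wise median of the motion vectors of neighbours A, B and C."""
    xs = sorted([mv_a[0], mv_b[0], mv_c[0]])
    ys = sorted([mv_a[1], mv_b[1], mv_c[1]])
    # The middle element of three sorted values is the median.
    return (xs[1], ys[1])


def difference_mv(mv, mvp):
    """Difference motion vector: motion vector minus predicted motion vector."""
    return (mv[0] - mvp[0], mv[1] - mvp[1])


if __name__ == "__main__":
    mvp = median_mv((4, -2), (6, 0), (5, 3))
    print(mvp, difference_mv((7, 1), mvp))
```

When the neighbouring vectors resemble the vector being encoded, the difference has small components, which is why it costs fewer bits than the vector itself.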

- When the shapes of the encoding target block and the adjacent blocks differ, as shown in FIG. 48(b), and a plurality of adjacent blocks exist, a single block among them (the leftmost block) is used as the prediction block. When the encoding target block is divided into 16×8 pixels or 8×16 pixels, as shown in FIGS. 48(c) and 48(d), a reference-destination prediction block is determined, and prediction is performed from the motion vector of the determined prediction block.

- With the method described in Non-Patent Document 1, only one predicted motion vector is obtained, so the prediction accuracy of the predicted motion vector may be lowered depending on the image, and the encoding efficiency may not be improved.

- The present inventors have come to recognize the necessity of further compressing the encoded information and reducing the overall code amount in an image encoding method using motion compensated prediction.

- The present invention has been made in view of such a situation, and an object of the present invention is to provide a moving image encoding and decoding technique that improves encoding efficiency by calculating predicted motion vector candidates and thereby reducing the code amount of the difference motion vector. Another object of the present invention is to provide a moving image encoding and decoding technique that improves encoding efficiency by calculating encoding information candidates and thereby reducing the code amount of the encoded information.

- A moving image encoding device according to one aspect of the present invention encodes a moving image using motion compensation in units of blocks obtained by dividing each picture of the moving image.

- The device comprises: a motion vector predictor candidate generation unit (120, 121) that performs prediction from the motion vector of any of a first encoded prediction block adjacent to the encoding target prediction block in the same picture as the encoding target prediction block and a second encoded prediction block located at the same position as or around the encoding target prediction block in a picture different from the encoding target prediction block, derives a plurality of motion vector predictor candidates, and registers the derived candidates in a motion vector predictor candidate list; a motion vector predictor selection unit (124) that selects a motion vector predictor from the motion vector predictor candidate list; and an encoding unit (109) that encodes information indicating the position of the selected motion vector predictor in the motion vector predictor candidate list.

- In order to obtain a set number of motion vector predictor candidates, the motion vector predictor candidate generation unit (120, 121) determines, with a priority order, which prediction block of the first encoded prediction blocks supplies the motion vector from which a candidate is derived. For each of the left adjacent block group and the upper adjacent block group, each prediction block is tested in a predetermined order against the following conditions.

- Condition 1: a motion vector of the same reference picture exists in the same reference list as the encoding mode selected in the encoding target prediction block.

- Condition 2: a motion vector of the same reference picture exists in a reference list different from the encoding mode selected in the encoding target prediction block.

- Condition 3: a motion vector of a different reference picture exists in the same reference list as the encoding mode selected in the encoding target prediction block.

- Condition 4: a motion vector of a different reference picture exists in a reference list different from the encoding mode selected in the encoding target prediction block.

- First, conditions 1 and 2 are tested for each prediction block in the priority order of conditions 1 and 2; then conditions 3 and 4 are tested for each prediction block in the priority order of conditions 3 and 4.
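The two-pass scan over one adjacent block group can be sketched as below. The data model is an assumption made for illustration: each neighbouring prediction block is a dict mapping a reference list name (`"L0"` or `"L1"`) to a `(motion_vector, reference_picture_id)` pair, and `find_candidate` is a hypothetical name. Any scaling of motion vectors that point to a different reference picture is outside this sketch.

```python
def find_candidate(group, target_list, target_ref):
    """Scan one adjacent block group and return (motion_vector, condition).

    group: prediction blocks in their predetermined scan order.
    target_list: reference list used by the target block ("L0" or "L1").
    target_ref: identifier of the target block's reference picture.
    Returns None when no block in the group has a usable motion vector.
    """
    other_list = "L1" if target_list == "L0" else "L0"
    lists_in_priority = (target_list, other_list)

    # First pass: conditions 1 and 2 (same reference picture),
    # tested block by block before conditions 3 and 4 are considered.
    for block in group:
        for rank, lst in enumerate(lists_in_priority):
            entry = block.get(lst)
            if entry is not None and entry[1] == target_ref:
                return entry[0], 1 + rank

    # Second pass: conditions 3 and 4 (different reference picture).
    for block in group:
        for rank, lst in enumerate(lists_in_priority):
            entry = block.get(lst)
            if entry is not None and entry[1] != target_ref:
                return entry[0], 3 + rank

    return None


if __name__ == "__main__":
    left_group = [{"L0": ((3, 1), 8)}, {"L1": ((5, 5), 4)}]
    print(find_candidate(left_group, "L0", 4))
```

In the usage example the second block satisfies condition 2, so it is chosen even though the first block, which comes earlier in scan order, satisfies condition 3. That is the effect of completing the condition 1 and 2 pass over all blocks before starting the condition 3 and 4 pass.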

- Another aspect of the present invention is a moving image encoding method.

- This method encodes a moving image using motion compensation in units of blocks obtained by dividing each picture of the moving image, and comprises: a predicted motion vector candidate generation step of performing prediction from the motion vector of any of a first encoded prediction block adjacent to the encoding target prediction block in the same picture as the encoding target prediction block and a second encoded prediction block located at the same position as or around the encoding target prediction block in a picture different from the encoding target prediction block, deriving a plurality of predicted motion vector candidates, and registering the derived candidates in a predicted motion vector candidate list; a predicted motion vector selection step of selecting a predicted motion vector from the predicted motion vector candidate list; and an encoding step of encoding information indicating the position of the selected predicted motion vector in the predicted motion vector candidate list.

- In order to obtain a set number of predicted motion vector candidates, the predicted motion vector candidate generation step determines, with a priority order, which prediction block of the first encoded prediction blocks supplies the motion vector from which a candidate is derived. For each of the left adjacent block group and the upper adjacent block group, each prediction block is tested in a predetermined order against the following conditions.

- Condition 1: a motion vector of the same reference picture exists in the same reference list as the encoding mode selected in the encoding target prediction block.

- Condition 2: a motion vector of the same reference picture exists in a reference list different from the encoding mode selected in the encoding target prediction block.

- Condition 3: a motion vector of a different reference picture exists in the same reference list as the encoding mode selected in the encoding target prediction block.

- Condition 4: a motion vector of a different reference picture exists in a reference list different from the encoding mode selected in the encoding target prediction block.

- First, conditions 1 and 2 are tested for each prediction block in the priority order of conditions 1 and 2; then conditions 3 and 4 are tested for each prediction block in the priority order of conditions 3 and 4.

- A moving picture decoding apparatus according to yet another aspect of the present invention decodes a coded bit string in which a moving picture has been coded using motion compensation in units of blocks obtained by dividing each picture of the moving picture.

- The apparatus includes a predicted motion vector candidate generation unit (220, 221) that performs prediction from the motion vector of any of a first decoded prediction block adjacent to the decoding target prediction block in the same picture as the decoding target prediction block and a second decoded prediction block located at the same position as or around the decoding target prediction block in a picture different from the decoding target prediction block, derives a plurality of predicted motion vector candidates, and registers the derived candidates in a predicted motion vector candidate list.

- In order to obtain a set number of predicted motion vector candidates, the predicted motion vector candidate generation unit (220, 221) determines, with a priority order, which prediction block of the first decoded prediction blocks supplies the motion vector from which a candidate is derived. For each of the left adjacent block group and the upper adjacent block group, each prediction block is tested in a predetermined order against the following conditions.

- Condition 1: a motion vector of the same reference picture exists in the same reference list as that of the encoding mode selected in the decoding target prediction block.

- Condition 2: a motion vector of the same reference picture exists in a reference list different from the encoding mode selected in the decoding target prediction block.

- Condition 3: a motion vector of a different reference picture exists in the same reference list as the encoding mode selected in the decoding target prediction block.

- Condition 4: a motion vector of a different reference picture exists in a reference list different from the encoding mode selected in the decoding target prediction block.

- First, conditions 1 and 2 are tested for each prediction block in the priority order of conditions 1 and 2; then conditions 3 and 4 are tested for each prediction block in the priority order of conditions 3 and 4.

- Still another aspect of the present invention is a moving picture decoding method.

- This method decodes a coded bit string in which a moving picture has been coded using motion compensation in units of blocks obtained by dividing each picture of the moving picture, and comprises: a predicted motion vector candidate generation step of performing prediction from the motion vector of any of a first decoded prediction block adjacent to the decoding target prediction block in the same picture as the decoding target prediction block and a second decoded prediction block located at the same position as or around the decoding target prediction block in a picture different from the decoding target prediction block, deriving a plurality of predicted motion vector candidates, and registering the derived candidates in a predicted motion vector candidate list; a decoding step of decoding information indicating the position, in the predicted motion vector candidate list, of the predicted motion vector to be selected; and a predicted motion vector selection step of selecting a predicted motion vector from the predicted motion vector candidate list based on the decoded information indicating that position.

- In order to obtain a set number of predicted motion vector candidates, the predicted motion vector candidate generation step determines, with a priority order, which prediction block of the first decoded prediction blocks supplies the motion vector from which a candidate is derived. For each of the left adjacent block group and the upper adjacent block group, each prediction block is tested in a predetermined order against the following conditions.

- Condition 1: a motion vector of the same reference picture exists in the same reference list as that of the encoding mode selected in the decoding target prediction block.

- Condition 2: a motion vector of the same reference picture exists in a reference list different from the encoding mode selected in the decoding target prediction block.

- Condition 3: a motion vector of a different reference picture exists in the same reference list as the encoding mode selected in the decoding target prediction block.

- Condition 4: a motion vector of a different reference picture exists in a reference list different from the encoding mode selected in the decoding target prediction block.

- First, conditions 1 and 2 are tested for each prediction block in the priority order of conditions 1 and 2; then conditions 3 and 4 are tested for each prediction block in the priority order of conditions 3 and 4.

- A plurality of predicted motion vectors are calculated, an optimal predicted motion vector is selected from among them, and the generated code amount of the difference motion vector is reduced, thereby improving the encoding efficiency.

- Likewise, a plurality of encoding information candidates are calculated, optimal motion information is selected from among them, and the generated code amount of the encoded information to be transmitted is reduced, so that encoding efficiency can be improved.

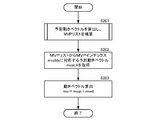

- The drawings include a block diagram illustrating the detailed configuration of the motion vector calculation unit in FIG. 2, a flowchart explaining the operation of the difference motion vector calculation unit, a flowchart illustrating the operation of the motion vector calculation unit in FIG. 2, a flowchart explaining the motion vector prediction method, and flowcharts explaining the predicted motion vector candidate calculation method.

- In encoding, a plurality of predicted motion vectors are calculated from the motion vectors of surrounding blocks, and a difference vector between the motion vector of the block to be encoded and the selected predicted motion vector is calculated and encoded, thereby reducing the code amount.

- Alternatively, the code amount is reduced by estimating the encoding information of the encoding target block from the encoding information of surrounding blocks that have already been encoded.

- In decoding, a plurality of predicted motion vectors are calculated from the motion vectors of peripheral blocks that have already been decoded, and the motion vector of the decoding target block is calculated from the difference vector decoded from the encoded stream and the selected predicted motion vector.
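On the decoding side, recovering the motion vector amounts to adding the decoded difference vector back to the selected predicted motion vector. The sketch below assumes `(x, y)` integer tuples and a candidate list that the decoder has built in the same way as the encoder; `reconstruct_mv` and its parameter names are hypothetical.

```python
def reconstruct_mv(mvp_candidates, mvp_index, mvd):
    """Rebuild a motion vector from a candidate list, an index and a difference.

    mvp_candidates: predicted motion vector candidates derived by the decoder.
    mvp_index: decoded position of the selected candidate in the list.
    mvd: difference vector decoded from the encoded stream.
    """
    mvp = mvp_candidates[mvp_index]
    return (mvp[0] + mvd[0], mvp[1] + mvd[1])


if __name__ == "__main__":
    print(reconstruct_mv([(4, 0), (5, 0)], 1, (2, 1)))
```

The result matches the encoder's motion vector only if both sides derive identical candidate lists, which is why the candidate derivation uses only blocks that are already decoded.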

- The encoding information of the decoding target block is estimated from the encoding information of peripheral blocks that have already been decoded.

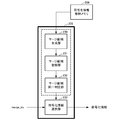

- FIG. 1 is a block diagram showing a configuration of a video encoding apparatus according to the embodiment.

- The moving image encoding apparatus according to the embodiment includes an image memory 101, a motion vector detection unit 102, a difference motion vector calculation unit 103, an inter prediction information estimation unit 104, a motion compensation prediction unit 105, a prediction method determination unit 106, a residual signal generation unit 107, an orthogonal transform / quantization unit 108, a first encoded bit string generation unit 109, a second encoded bit string generation unit 110, a multiplexing unit 111, an inverse quantization / inverse orthogonal transform unit 112, a decoded image signal superimposing unit 113, an encoded information storage memory 114, and a decoded image memory 115.

- The image memory 101 temporarily stores the image signals to be encoded, which are supplied in order of shooting / display time.

- The image memory 101 supplies the stored image signal to be encoded to the motion vector detection unit 102, the prediction method determination unit 106, and the residual signal generation unit 107 in units of predetermined pixel blocks.

- At that time, the images stored in order of shooting / display time are rearranged into encoding order and output from the image memory 101 in units of pixel blocks.

- The motion vector detection unit 102 detects, in units of prediction blocks, a motion vector for each prediction block size and each prediction mode by block matching or the like between the image signal supplied from the image memory 101 and the decoded image (reference picture) supplied from the decoded image memory 115, and supplies the detected motion vectors to the motion compensation prediction unit 105, the difference motion vector calculation unit 103, and the prediction method determination unit 106.

- The prediction block is the unit in which motion compensation is performed; details will be described later.

- The difference motion vector calculation unit 103 calculates a plurality of motion vector predictor candidates using the encoded information of the already encoded image signal stored in the encoded information storage memory 114 and registers them in an MVP list described later. It selects an optimal motion vector predictor from the candidates registered in the MVP list, calculates a difference motion vector from the motion vector detected by the motion vector detection unit 102 and the selected motion vector predictor, and supplies the calculated difference motion vector to the prediction method determination unit 106.

- The weighting parameters for weighted prediction of the selected prediction block (the weighting coefficient value by which the motion compensated image signal is multiplied and the weighting offset value to be added) are also supplied to the prediction method determination unit 106.

- In addition, an MVP index that identifies the motion vector predictor selected from the candidates registered in the MVP list is supplied to the prediction method determination unit 106. The detailed configuration and operation of the difference motion vector calculation unit 103 will be described later.
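The selection of an MVP index and the resulting difference motion vector can be sketched as follows. The text does not state here how "optimal" is measured, so this example assumes a simple proxy for the code amount, the sum of the absolute components of the difference vector. The name `select_mvp` and the cost measure are assumptions of this sketch, not the criterion of the described unit.

```python
def select_mvp(mv, mvp_list):
    """Return (mvp_index, mvd) for the candidate with the cheapest difference.

    mv: motion vector detected for the prediction block, as (x, y).
    mvp_list: motion vector predictor candidates registered in the MVP list.
    Cost is |dx| + |dy|, an assumed stand-in for the real code amount.
    """
    if not mvp_list:
        raise ValueError("the MVP list must contain at least one candidate")
    best_index, best_mvd, best_cost = None, None, None
    for index, mvp in enumerate(mvp_list):
        mvd = (mv[0] - mvp[0], mv[1] - mvp[1])
        cost = abs(mvd[0]) + abs(mvd[1])
        # Strict comparison keeps the lowest index when costs tie.
        if best_cost is None or cost < best_cost:
            best_index, best_mvd, best_cost = index, mvd, cost
    return best_index, best_mvd


if __name__ == "__main__":
    print(select_mvp((7, 1), [(0, 0), (5, 0), (8, 4)]))
```

Only the index and the difference vector need to reach the decoder, since the decoder can rebuild the same candidate list from already decoded blocks.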

- The inter prediction information estimation unit 104 estimates inter prediction information in merge mode.

- Merge mode is a mode in which, rather than encoding inter prediction information such as the prediction mode of the prediction block, the reference index (information for specifying the reference image used for motion compensated prediction from among the plurality of reference images registered in the reference list), and the motion vector, the inter prediction information of an adjacent inter-predicted prediction block that has already been encoded, or of an inter-predicted prediction block of a different image, is used.

- A plurality of merge candidates are calculated and registered in a merge candidate list described later.

- An optimal merge candidate is selected from the merge candidates registered in the merge candidate list, and inter prediction information such as the prediction mode, reference index, and motion vector of the selected merge candidate is supplied to the motion compensation prediction unit 105.

- A merge index that identifies the selected merge candidate is supplied to the prediction method determination unit 106.

- The weighting parameters for weighted prediction of the selected merge candidate are also supplied to the motion compensation prediction unit 105.

- Coding information such as the quantization parameter of the selected encoded prediction block can also be used as a predicted value; when it is, that coding information is supplied to the prediction method determination unit 106. The detailed configuration and operation of the inter prediction information estimation unit 104 will be described later.
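As a rough illustration of merge mode, the sketch below builds a candidate list from neighbouring inter prediction information and then reuses one entry by index. How the list is actually constructed is described later in the document, so everything here is an assumption: the `InterPredInfo` record, the removal of duplicates, the `max_candidates` limit of 5, and the function names are all hypothetical.

```python
from typing import NamedTuple, Tuple


class InterPredInfo(NamedTuple):
    """Inter prediction information that merge mode reuses as a whole."""
    pred_mode: str          # e.g. "L0", "L1" or "BI"
    ref_idx: int            # reference index
    mv: Tuple[int, int]     # motion vector (x, y)


def build_merge_list(neighbours, max_candidates=5):
    """Collect distinct inter prediction info from neighbours, in scan order.

    neighbours: InterPredInfo items, or None for blocks without inter
    prediction information (assumed to be skipped in this sketch).
    """
    merge_list = []
    for info in neighbours:
        if info is None or info in merge_list:
            continue
        merge_list.append(info)
        if len(merge_list) == max_candidates:
            break
    return merge_list


def apply_merge(merge_list, merge_index):
    """Reuse the inter prediction information selected by the merge index."""
    return merge_list[merge_index]


if __name__ == "__main__":
    a = InterPredInfo("L0", 0, (3, 1))
    b = InterPredInfo("L1", 1, (-2, 0))
    candidates = build_merge_list([a, None, a, b])
    print(apply_merge(candidates, 1))
```

Because the whole record is inherited, only the merge index has to be signalled for the block.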

- the motion compensation prediction unit 105 generates a prediction image signal by motion compensation prediction from a reference picture using the motion vectors detected by the motion vector detection unit 102 and the inter prediction information estimation unit 104, and uses the prediction image signal as a prediction method determination unit. 106.

- the unidirectional prediction is performed in the L0 prediction mainly used as the forward prediction and the L1 prediction mainly used as the backward prediction.

- bi-prediction bi-directional prediction is performed, and a weighting factor is adaptively applied to each inter-predicted signal of L0 prediction mainly used as forward prediction and L1 prediction mainly used as backward prediction. Are multiplied, offset values are added and superimposed, and a final predicted image signal is generated.

- weighting parameters including weighting coefficients and offset values used for weighted prediction may be switched in units of pictures, may be switched in units of slices, or may be switched in units of prediction blocks.

- weighting parameter When the weighting parameter is switched in units of pictures or slices, a representative value is set and encoded for each reference picture in each list in units of pictures or slices.

- weighting parameters When switching in units of prediction blocks, weighting parameters are set in units of prediction blocks and encoded.

- The prediction method determination unit 106 evaluates the code amount of the difference motion vector, the amount of distortion between the motion compensated prediction signal and the image signal, and the like, and thereby determines, from among a plurality of prediction methods, the optimum prediction block size (the prediction block size will be described later with reference to FIG. 4) and a prediction method such as the prediction mode and whether or not merge mode is used. Information indicating the determined prediction method, a difference motion vector corresponding to the determined prediction method, and the like are supplied to the first encoded bit string generation unit 109. The weighting parameter used when performing weighted prediction and the predicted value of the encoded information of the quantization parameter used when performing quantization / inverse quantization are also supplied to the first encoded bit string generation unit 109 as necessary.

- the prediction method determination unit 106 stores information indicating the determined prediction method and encoded information including a motion vector corresponding to the determined prediction method in the encoded information storage memory 114. Note that the weighting parameter for weighted prediction supplied from the prediction method determination unit 106 is stored in the encoded information storage memory 114 as necessary.

- the prediction method determination unit 106 provides a motion compensated prediction image signal corresponding to the determined prediction mode to the residual signal generation unit 107 and the decoded image signal superimposition unit 113.

- The residual signal generation unit 107 generates a residual signal by subtracting the prediction signal from the image signal to be encoded, and supplies the residual signal to the orthogonal transform / quantization unit 108.

- The orthogonal transform / quantization unit 108 performs orthogonal transform and quantization on the residual signal in accordance with the quantization parameter to generate an orthogonally transformed and quantized residual signal, and supplies it to the second encoded bit string generation unit 110 and the inverse quantization / inverse orthogonal transform unit 112. Further, the orthogonal transform / quantization unit 108 stores the quantization parameter in the encoded information storage memory 114.

- the first encoded bit string generation unit 109 encodes encoded information according to the prediction method determined by the prediction method determination unit 106 for each prediction block, in addition to information on a sequence, a picture, a slice, and an encoded block unit.

- Specifically, encoding information such as a parameter for determining whether or not inter prediction is used, a parameter for determining whether or not the mode is merge mode in the case of inter prediction, a merge index in the case of merge mode, and a prediction mode, an MVP index, and difference motion vector information when not in merge mode is encoded in accordance with a prescribed syntax rule described later to generate a first encoded bit string, which is supplied to the multiplexing unit 111.

- In merge mode, if there is one merge candidate registered in the merge candidate list, the merge index mergeIdx can be specified as 0, so it is not encoded.

- Similarly, when not in merge mode, if there is one predicted motion vector candidate registered in the MVP list, the MVP index mvpIdx can be specified as 0, so it is not encoded.

- variable length encoding is performed by assigning a code having a shorter code length to an MVP index having a higher priority in the MVP list (that is, having a lower index number).

- Likewise, variable length encoding is performed by assigning a code having a shorter code length to a merge index having a higher priority in the merge list (that is, a smaller index number).
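
One way to give smaller index numbers shorter codes is a truncated unary code. The sketch below is only an illustration under that assumption; this section does not name the actual variable length code, and the function names are hypothetical. It also shows that nothing is transmitted when the list holds a single candidate.

```python
def encode_index_truncated_unary(index, num_candidates):
    """Return the bit string for an MVP or merge index (assumed code: truncated unary)."""
    if not 0 <= index < num_candidates:
        raise ValueError("index out of range")
    if num_candidates == 1:
        return ""  # the index can only be 0, so it is not encoded
    max_index = num_candidates - 1
    if index < max_index:
        return "1" * index + "0"  # smaller index -> shorter code
    return "1" * max_index  # the last index needs no terminating 0


def decode_index_truncated_unary(bits, num_candidates):
    """Return (index, number of bits consumed) from the start of a bit string."""
    if num_candidates == 1:
        return 0, 0  # index inferred as 0 without reading any bit
    max_index = num_candidates - 1
    index = 0
    while index < max_index and bits[index] == "1":
        index += 1
    consumed = index if index == max_index else index + 1
    return index, consumed


for i in range(3):
    print(i, repr(encode_index_truncated_unary(i, 3)))
```

Note that the decoder needs `num_candidates` before it can parse the index, which is why the number of registered candidates has to be known on the decoding side.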

- When not in merge mode, the weighting parameter for weighted prediction supplied from the prediction method determination unit 106 is also encoded.

- the difference between the predicted value of the quantization parameter encoding information for quantization and the value actually used is encoded.

- the second encoded bit string generation unit 110 entropy-encodes the residual signal that has been orthogonally transformed and quantized according to a specified syntax rule to generate a second encoded bit string, and supplies the second encoded bit string to the multiplexing unit 111.

- the multiplexing unit 111 multiplexes the first encoded bit string and the second encoded bit string in accordance with a prescribed syntax rule, and outputs a bit stream.

- The inverse quantization / inverse orthogonal transform unit 112 calculates a residual signal by performing inverse quantization and inverse orthogonal transform on the orthogonally transformed and quantized residual signal supplied from the orthogonal transform / quantization unit 108, and supplies it to the decoded image signal superimposing unit 113.

- The decoded image signal superimposing unit 113 superimposes the prediction signal according to the determination by the prediction method determination unit 106 and the residual signal that has been inversely quantized and inverse orthogonally transformed by the inverse quantization / inverse orthogonal transform unit 112 to generate a decoded image, and stores it in the decoded image memory 115.

- the decoded image may be stored in the decoded image memory 115 after filtering processing for reducing distortion such as block distortion due to encoding.

- predicted encoded information such as a flag for identifying information of a post filter such as ALF or a deblocking filter is stored in the encoded information storage memory 114 as necessary.

- FIG. 2 is a block diagram showing a configuration of a moving picture decoding apparatus according to an embodiment corresponding to the moving picture encoding apparatus of FIG. 1.

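- The moving picture decoding apparatus includes a separation unit 201, a first encoded bit string decoding unit 202, a second encoded bit string decoding unit 203, a motion vector calculation unit 204, an inter prediction information estimation unit 205, a motion compensation prediction unit 206, an inverse quantization / inverse orthogonal transform unit 207, a decoded image signal superimposing unit 208, an encoded information storage memory 209, and a decoded image memory 210.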

- The decoding process of the moving picture decoding apparatus in FIG. 2 corresponds to the decoding process provided inside the moving picture encoding apparatus in FIG. 1. The motion compensation prediction unit 206, the inverse quantization / inverse orthogonal transform unit 207, the decoded image signal superimposing unit 208, the encoded information storage memory 209, and the decoded image memory 210 in FIG. 2 therefore have functions corresponding to the motion compensation prediction unit 105, the inverse quantization / inverse orthogonal transform unit 112, the decoded image signal superimposing unit 113, the encoded information storage memory 114, and the decoded image memory 115 of the moving picture encoding apparatus in FIG. 1, respectively.

- the bit stream supplied to the separation unit 201 is separated according to a rule of a prescribed syntax, and the separated encoded bit string is supplied to the first encoded bit string decoding unit 202 and the second encoded bit string decoding unit 203.

- The first encoded bit string decoding unit 202 decodes the supplied encoded bit string to obtain information in units of sequences, pictures, slices, and coding blocks, and encoded information in units of prediction blocks. Specifically, encoded information such as a parameter for determining whether or not inter prediction is used, a parameter for determining whether or not the mode is merge mode, a merge index in the case of merge mode, and a prediction mode, an MVP index, and a difference motion vector when not in merge mode is decoded according to a prescribed syntax rule to be described later, supplied to the motion vector calculation unit 204 or the inter prediction information estimation unit 205 and to the motion compensation prediction unit 206, and stored in the encoded information storage memory 209.

- In merge mode, if there is one merge candidate registered in the merge candidate list, the merge index mergeIdx can be specified as 0; it is therefore not encoded in the encoded bit string, and mergeIdx is set to 0. For this reason, in merge mode, the first encoded bit string decoding unit 202 is supplied with the number of merge candidates registered in the merge candidate list calculated by the inter prediction information estimation unit 205. Similarly, when the mode is not merge mode, if there is one predicted motion vector candidate registered in the MVP list, which will be described later, the MVP index mvpIdx can be specified as 0, so it is not encoded, and mvpIdx is set to 0. For this reason, when not in merge mode, the first encoded bit string decoding unit 202 is supplied with the number of motion vector predictor candidates registered in the MVP list calculated by the motion vector calculation unit 204.

- The second encoded bit string decoding unit 203 decodes the supplied encoded bit string to calculate the orthogonally transformed and quantized residual signal, and supplies it to the inverse quantization / inverse orthogonal transform unit 207.

- The motion vector calculation unit 204 uses the encoded information of the already decoded image signal stored in the encoded information storage memory 209 to calculate a plurality of prediction motion vector candidates and registers them in an MVP list, which will be described later. From among the motion vector predictor candidates registered in the MVP list, it selects a predicted motion vector according to the encoded information decoded and supplied by the first encoded bit string decoding unit 202, calculates a motion vector from the difference vector decoded by the first encoded bit string decoding unit 202 and the selected predicted motion vector, and supplies the motion vector to the motion compensated prediction unit 206 and to the encoded information storage memory 209. Furthermore, the number of predicted motion vector candidates registered in the MVP list calculated by the motion vector calculation unit 204 is supplied to the first encoded bit string decoding unit 202. The detailed configuration and operation of the motion vector calculation unit 204 will be described later.
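
The relationship between the motion vector, the selected predicted motion vector, and the difference vector can be sketched as below. The function names and the tuple representation of vectors are assumptions for the example; how the MVP list itself is built is described later in the document and is not modeled here.

```python
def difference_motion_vector(mv, mvp_list, mvp_idx):
    """Encoder side: subtract the selected predictor from the motion vector."""
    mvp = mvp_list[mvp_idx]
    return (mv[0] - mvp[0], mv[1] - mvp[1])


def reconstruct_motion_vector(mvd, mvp_list, mvp_idx):
    """Decoder side: add the decoded difference vector to the selected predictor."""
    mvp = mvp_list[mvp_idx]
    return (mvp[0] + mvd[0], mvp[1] + mvd[1])


# Hypothetical MVP list with two candidates, each an (x, y) vector.
mvp_list = [(4, -2), (6, 0)]
mv = (7, 1)
mvd = difference_motion_vector(mv, mvp_list, 1)
print(mvd, reconstruct_motion_vector(mvd, mvp_list, 1))
```

Because both sides must pick the same entry, the encoder and decoder have to build identical MVP lists from information that is already encoded or decoded.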

- The inter prediction information estimation unit 205 estimates the inter prediction information in merge mode when the prediction block to be decoded is in merge mode. Using the encoded information of the already decoded prediction blocks stored in the encoded information storage memory 209, it calculates a plurality of merge candidates and registers them in a merge candidate list, which will be described later. From among the merge candidates registered in the merge candidate list, it selects the merge candidate corresponding to the merge index decoded and supplied by the first encoded bit string decoding unit 202, supplies inter prediction information such as the prediction mode, reference index, and prediction motion vector of the selected merge candidate to the motion compensation prediction unit 206, and stores it in the encoded information storage memory 209.

- the number of merge candidates registered in the merge candidate list calculated by the inter prediction information estimation unit 205 is supplied to the first encoded bit string decoding unit 202.

- the weighting parameter for the weighted prediction of the selected merge candidate is also supplied to the motion compensated prediction unit 206.

- Encoded information other than inter prediction information, such as the quantization parameter used for quantization, can also be used as a prediction value. Coding information to be predicted can also be supplied to the prediction method determination unit 106. The detailed configuration and operation of the inter prediction information estimation unit 205 will be described later.

- the motion compensated prediction unit 206 generates a predicted image signal by motion compensation prediction from the reference picture using the motion vector calculated by the motion vector calculation unit 204, and supplies the predicted image signal to the decoded image signal superimposing unit 208.

- In bi-prediction, the two motion compensated prediction image signals of L0 prediction and L1 prediction are each adaptively multiplied by a weighting coefficient and superimposed to generate a final prediction image signal.

- The inverse quantization / inverse orthogonal transform unit 207 performs inverse quantization and inverse orthogonal transform on the orthogonally transformed and quantized residual signal decoded by the second encoded bit string decoding unit 203 to obtain an inversely quantized and inverse orthogonally transformed residual signal.

- The decoded image signal superimposing unit 208 superimposes the predicted image signal generated by motion compensation prediction in the motion compensation prediction unit 206 and the residual signal that has been inversely quantized and inverse orthogonally transformed by the inverse quantization / inverse orthogonal transform unit 207, thereby decoding a decoded image signal, and stores it in the decoded image memory 210.

- the decoded image may be stored in the decoded image memory 210 after filtering processing for reducing block distortion or the like due to encoding is performed on the decoded image.

- The motion vector prediction method according to the embodiment is implemented in the differential motion vector calculation unit 103 of the video encoding device in FIG. 1 and the motion vector calculation unit 204 of the video decoding device in FIG. 2.

- The inside of the screen is divided evenly into square units of an arbitrary, identical size.

- This unit is defined as a tree block, and is used as a basic unit of address management for specifying an encoding / decoding target block (an encoding target block in encoding and a decoding target block in decoding) in an image.

- Depending on the texture in the screen, a tree block can be hierarchically divided into four within the tree block as necessary, yielding blocks of smaller block size.

- This block is defined as a coding block and is used as a basic unit of processing when performing coding and decoding.

- the tree block is also the maximum size coding block.

- An encoded block having a minimum size that cannot be further divided into four is referred to as a minimum encoded block.
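
The hierarchical four-way division of a tree block into coding blocks can be sketched as a recursive quadtree. This is a minimal illustration: the function name, the `(x, y, size)` tuple layout, and the `needs_split` callback (standing in for the texture-dependent decision, which this section does not specify) are all assumptions.

```python
def split_tree_block(x, y, size, min_size, needs_split):
    """Return the coding blocks of one tree block as (x, y, size) tuples.

    needs_split(x, y, size) is a hypothetical callback that decides whether
    a block should be divided into four; min_size is the minimum coding
    block size, below which no further division is possible.
    """
    if size > min_size and needs_split(x, y, size):
        half = size // 2
        blocks = []
        for dy in (0, half):
            for dx in (0, half):
                blocks.extend(
                    split_tree_block(x + dx, y + dy, half, min_size, needs_split)
                )
        return blocks
    return [(x, y, size)]


# Example: keep dividing only the block that contains the top-left corner.
blocks = split_tree_block(0, 0, 64, 8, lambda x, y, size: x == 0 and y == 0)
print(len(blocks), blocks[:4])
```

When `needs_split` never fires, the tree block itself is returned as a single coding block, which matches the statement that the tree block is also the maximum size coding block.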

- A number starting from 0 is assigned to each prediction block existing inside the coding block. This number is defined as the prediction block index puPartIdx.

- the number described in each prediction block of the encoded block in FIG. 4 represents the prediction block index puPartIdx of the prediction block.

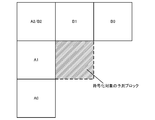

- FIG. 5 is a diagram illustrating a prediction block group adjacent to a prediction block to be encoded / decoded within the same picture as a prediction block to be encoded / decoded.

- FIG. 9 is a diagram illustrating an already encoded / decoded prediction block group that exists at the same position as, or near, the prediction block to be encoded / decoded in a picture temporally different from the prediction block to be encoded / decoded.

- the prediction block group of the present invention will be described with reference to FIGS. 5, 6, 7, 8, and 9.

- A first prediction block group composed of the prediction block A1 adjacent to the left side, the prediction block A0 adjacent to the lower left, and the prediction block A2 adjacent to the upper left of the prediction block to be encoded / decoded is defined as the prediction block group adjacent to the left side.

- A prediction block A adjacent to the left side is designated the prediction block A1 if it is adjacent to the left side of the prediction block to be encoded / decoded, the prediction block A0 if it is adjacent to the lower left of the prediction block to be encoded / decoded, and the prediction block A2 if it is adjacent to the upper left of the prediction block to be encoded / decoded.

- Among them, only the lowest prediction block A10 is included in the prediction block group adjacent to the left side as the prediction block A1 adjacent to the left side. However, only the top prediction block A12 may instead be included in the prediction block group adjacent to the left side as the prediction block A1 adjacent to the left side, both the lowest prediction block A10 and the top prediction block A12 may be included in the prediction block group adjacent to the left side, or all prediction blocks A10, A11, A12 adjacent on the left side may be included in the prediction block group adjacent to the left side.

- A second prediction block group composed of the prediction block B1 adjacent to the upper side, the prediction block B0 adjacent to the upper right, and the prediction block B2 (the same block as the prediction block A2) adjacent to the upper left of the prediction block to be encoded / decoded is defined as the prediction block group adjacent to the upper side.

- Under the above condition, a prediction block B adjacent to the upper side is designated the prediction block B1 if it is adjacent to the upper side of the prediction block to be encoded / decoded, the prediction block B0 if it is adjacent to the upper right of the prediction block to be encoded / decoded, and the prediction block B2 if it is adjacent to the upper left of the prediction block to be encoded / decoded.

- Only the rightmost prediction block B10 is included in the prediction block group adjacent to the upper side as the prediction block B1 adjacent to the upper side. However, only the leftmost prediction block B12 may instead be included in the prediction block group adjacent to the upper side as the prediction block B1 adjacent to the upper side, both the rightmost prediction block B10 and the leftmost prediction block B12 may be included in the prediction block group adjacent to the upper side, or all prediction blocks adjacent on the upper side may be included in the prediction block group adjacent to the upper side.

- The prediction block A2 / B2 adjacent to the upper left is included in both the prediction block group adjacent to the left side and the prediction block group adjacent to the upper side. It is referred to as the prediction block A2 when the prediction block group adjacent to the left side is described, and as the prediction block B2 when the prediction block group adjacent to the upper side is described.

- the prediction block adjacent to the upper left belongs to both the prediction block group adjacent to the left side and the prediction block group adjacent to the upper side, thereby increasing the chance of searching for motion vector predictor candidates.

- The maximum processing amount does not increase; however, when importance is placed on reducing the processing amount in serial processing, the prediction block adjacent to the upper left may belong to only one of the groups.

- a third prediction block group composed of block groups T0, T1, T2, T3, and T4 is defined as a prediction block group at different times.

- a reference picture is designated and referred from a reference index for each reference list LX.

- L0 and L1 are prepared, and X is 0 or 1.

- Inter prediction that refers to a reference picture registered in the reference list L0 is referred to as L0 prediction (Pred_L0)

- motion compensation prediction that refers to a reference picture registered in the reference list L1 is referred to as L1 prediction (Pred_L1).

- L0 prediction is mainly used for forward prediction

- L1 prediction is used for backward prediction.

- (About POC) POC is a variable associated with an image to be encoded, and is set to a value that increases by 1 in output order. From the POC values it is possible to determine whether two images are the same, to determine which image comes earlier or later in output order, and to determine the distance between images. For example, when the POCs of two images have the same value, it can be determined that they are the same image. When the two images have different POC values, it can be determined that the image with the smaller POC value is output first, and the difference between the POC values of the two images indicates the inter-frame distance.
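
The three uses of POC described above can be written down directly. The helper names below are hypothetical and exist only for this sketch.

```python
def same_picture(poc_a, poc_b):
    """Two images with the same POC value are the same image."""
    return poc_a == poc_b


def output_first(poc_a, poc_b):
    """Return the POC of the image that is output first (the smaller value)."""
    return min(poc_a, poc_b)


def inter_frame_distance(poc_a, poc_b):
    """The difference between two POC values gives the inter-frame distance."""
    return abs(poc_a - poc_b)


print(same_picture(4, 4), output_first(3, 7), inter_frame_distance(3, 7))
```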

- The motion vector prediction method is performed in both the encoding and the decoding process, for each prediction block constituting the coding block.

- inter-picture coding by motion compensation inter prediction

- In the case of encoding, the prediction motion vector is calculated using an already encoded motion vector, and is used when calculating the differential motion vector to be coded from the motion vector to be coded. In the case of decoding, the prediction motion vector is calculated using an already decoded motion vector, and is used when calculating the motion vector to be decoded.

- FIG. 10 shows a first syntax pattern described in the slice header in units of slices of the bitstream generated according to the present invention.

- A second flag mv_temporal_high_priority_flag is set. For a prediction block that is not in inter prediction merge mode, it indicates whether the motion vector candidate of a prediction block at the same position as, or in the vicinity of, the prediction block to be processed in a picture different in the time direction is registered with raised priority in the MVP list described later. For a prediction block in inter prediction merge mode, it indicates whether the merge candidate at the same position as, or in the vicinity of, the processing target prediction block in a picture different in the time direction is registered with raised priority in the merge candidate list described later. This value may be fixed to true (1) or false (0) in order to simplify the determination process described later, but changing it adaptively for each frame improves coding efficiency and further reduces the code amount.

- the code amount of an MVP index or a merge index described later can be reduced by setting false (0).

- the inter prediction reliability is higher than when the distance is large, and it is determined that the distance is suitable.

- the code amount can be further reduced.

- encoding efficiency can be improved by lowering the priority of the MVP candidates and merge candidates in the time direction by reducing the threshold value X.

- the priority order can be controlled based on statistics in the course of the encoding process.

- mv_temporal_high_priority_flag of the subsequent encoding target image is set to true (1); when it is small, and when the distance between the encoding / decoding target image and the reference picture is far, setting the flag to false (0) makes it possible to reduce the code amount of an MVP index or a merge index, which will be described later.

- A third flag collocated_from_l0_flag is set. It indicates whether the picture colPic, which is different in the temporal direction and is used in calculating a temporal motion vector predictor candidate or a merge candidate, is a reference image registered in the L0 reference list or in the L1 reference list of the picture including the prediction block to be processed.

- A fourth flag mv_list_adaptive_idx_flag is set. It indicates whether to change the registration order in the MVP list or merge candidate list described later for each prediction block.

- The above syntax elements may instead be placed in a picture parameter set, which describes syntax elements set in units of pictures.

- the first flag mv_competition_temporal_flag, the second flag mv_temporal_high_priority_flag, the third flag collocated_from_l0_flag, the fourth flag mv_list_adaptive_idx_flag, and the fourth flag mv_list_adaptive_idx_mode are used separately. It can also be controlled independently.

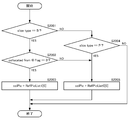

- FIG. 11 shows a syntax pattern described in units of prediction blocks.

- When PredMode of the prediction block is MODE_INTER, indicating inter-picture prediction (inter prediction), merge_flag [x0] [y0], which indicates whether the mode is merge mode, is set.

- Here, x0 and y0 are indices indicating the position of the upper left pixel of the prediction block in the screen of the luminance signal, and merge_flag [x0] [y0] is a flag indicating whether or not the prediction block located at (x0, y0) in the screen is in merge mode.

- When merge_flag [x0] [y0] is 1, it indicates merge mode, and if NumMergeCand exceeds 1, a syntax element merge_idx [x0] [y0], the index of the merge list that is the list of predicted motion vector candidates to be referred to, is set.

- Here, x0 and y0 are indices indicating the position of the upper left pixel of the prediction block in the screen, and merge_idx [x0] [y0] is the merge index of the prediction block located at (x0, y0) in the screen.

- the function NumMergeCand represents the number of merge candidates and will be described later.

- merge_idx [x0] [y0], the index of this merge list, is encoded only when the number of merge candidates NumMergeCand is greater than 1. This is because, if the total number of candidates is one, that one becomes the merge candidate, so the merge candidate to be referred to is determined without transmitting merge_idx [x0] [y0].

- When merge_flag [x0] [y0] is 0, it indicates that the mode is not merge mode.

- a syntax element inter_pred_flag [x0] [y0] for identifying the inter prediction mode is installed.

- A reference picture index syntax element ref_idx_lX [x0] [y0] for specifying a reference picture, and a syntax element mvd_lX [x0] [y0] [j] of the difference motion vector between the motion vector of the prediction block obtained by motion vector detection and the predicted motion vector, are set.

- Here, X is 0 or 1 and indicates the prediction direction, the array index x0 is the x coordinate of the prediction block, y0 is the y coordinate of the prediction block, and j represents the component of the differential motion vector, with j = 0 being the x component.

- Here, x0 and y0 are indices indicating the position of the upper left pixel of the prediction block in the screen, and mvp_idx_lX [x0] [y0] is the MVP index of the list LX of the prediction block located at (x0, y0) in the screen.

- the subscript LX represents a reference list, and two L0 and L1 are prepared, and 0 or 1 is entered in X.

- the function NumMVPCand (LX) represents a function for calculating the total number of motion vector predictor candidates for the prediction block in the prediction direction LX (X is 0 or 1), and will be described later.

- In the motion vector prediction method, the index mvp_idx_lX [x0] [y0] of the MVP list is encoded only when the total number of motion vector predictor candidates NumMVPCand (LX) is larger than 1. This is because, if the total number of prediction motion vector candidates is one, that one becomes the prediction motion vector, so the prediction motion vector candidate to be referred to is determined without transmitting mvp_idx_lX [x0] [y0].
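
The conditional presence of merge_idx and mvp_idx_lX can be illustrated with a small parser over already entropy-decoded symbol values. This is only a sketch: the element order within a prediction block, the string values used for inter_pred_flag (`"Pred_BI"` in particular is assumed here), and the function and parameter names are assumptions, since FIG. 11 itself is not reproduced in the text.

```python
def parse_prediction_block(symbols, num_merge_cand, num_mvp_cand):
    """Read the syntax elements of one inter prediction block.

    symbols: iterable of already decoded syntax element values, in order.
    num_merge_cand: value of NumMergeCand.
    num_mvp_cand: dict mapping X (0 or 1) to NumMVPCand(LX).
    """
    it = iter(symbols)
    pu = {"merge_flag": next(it)}
    if pu["merge_flag"] == 1:
        # merge_idx is present only when there is more than one merge candidate;
        # otherwise it is inferred to be 0.
        pu["merge_idx"] = next(it) if num_merge_cand > 1 else 0
        return pu
    pu["inter_pred_flag"] = next(it)
    lists_used = {"Pred_L0": (0,), "Pred_L1": (1,), "Pred_BI": (0, 1)}[
        pu["inter_pred_flag"]
    ]
    for x in lists_used:
        pu[f"ref_idx_l{x}"] = next(it)
        pu[f"mvd_l{x}"] = (next(it), next(it))  # j = 0 is the x component
        # mvp_idx_lX is present only when NumMVPCand(LX) is larger than 1.
        pu[f"mvp_idx_l{x}"] = next(it) if num_mvp_cand[x] > 1 else 0
    return pu


print(parse_prediction_block([1], 1, {0: 1, 1: 1}))
print(parse_prediction_block([0, "Pred_L0", 1, 3, -2], 1, {0: 1, 1: 2}))
```

In both printed cases no index symbol is consumed, because only one candidate exists and the index is inferred as 0.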

- The motion vector prediction method is applied when inter-picture prediction by motion compensation is performed in units of slices, that is, when the slice type is a P slice (unidirectional prediction slice) or a B slice (bidirectional prediction slice), and further to prediction blocks whose prediction mode is inter-picture prediction (MODE_INTER).

- FIG. 12 is a diagram showing a detailed configuration of the differential motion vector calculation unit 103 of the moving picture encoding device of FIG. 1. The portion surrounded by a thick frame line in FIG. 12 indicates the differential motion vector calculation unit 103.

- The part surrounded by a thick dotted line inside it shows the operation unit of the motion vector prediction method described later. It is installed in the same way in the video decoding device corresponding to the video encoding device of the embodiment, so that the same determination result, consistent between encoding and decoding, is obtained.

- a motion vector prediction method in encoding will be described with reference to FIG.

- The difference motion vector calculation unit 103 includes a prediction motion vector candidate generation unit 120, a prediction motion vector registration unit 121, a prediction motion vector candidate identity determination unit 122, a prediction motion vector candidate code amount calculation unit 123, a prediction motion vector selection unit 124, and a motion vector subtraction unit 125.

- a difference motion vector of the motion vector used in the inter prediction method selected in the encoding target block is calculated. Specifically, when the encoding target block is L0 prediction, a differential motion vector of the L0 motion vector is calculated, and when the encoding target block is L1 prediction, a differential motion vector of the L1 motion vector is calculated.

- When the encoding target block is bi-prediction, both L0 prediction and L1 prediction are performed, and a differential motion vector of the L0 motion vector and a differential motion vector of the L1 motion vector are each calculated.

- For each reference list (L0, L1), the motion vector predictor candidate generation unit 120 derives one motion vector for each prediction block group, mvLXA, mvLXB, and mvLXCol respectively, as prediction motion vector candidates from three prediction block groups: the prediction block group adjacent to the left side (the prediction block group adjacent to the left side of the prediction block in the same picture as the prediction block to be encoded: A0, A1, A2 in FIG. 5), the prediction block group adjacent to the upper side (the prediction block group adjacent to the upper side of the prediction block in the same picture as the prediction block to be encoded: B0, B1, B2 in FIG. 5), and the prediction block group at a different time (the already encoded prediction block group that exists at the same position as, or near, the prediction block in a picture temporally different from the prediction block to be encoded: T0, T1, T2, and T3 in FIG. 9).

- mvLXA and mvLXB are called spatial motion vectors

- mvLXCol is called a temporal motion vector.

- These prediction motion vector candidates mvLXA, mvLXB, and mvLXCol may be calculated by scaling according to the relationship between the POC of the encoding target image and the POC of the reference picture.
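
Scaling a candidate according to POC relationships can be sketched as a linear rescaling by the ratio of two inter-frame distances. The formula below is an assumption made for illustration (the exact scaling expression is not given in this passage), as are the function and parameter names; the sketch covers a spatial neighbor in the same picture.

```python
def scale_motion_vector(mv, poc_cur, poc_target_ref, poc_neighbor_ref):
    """Rescale a neighboring block's motion vector to the target reference picture.

    poc_cur: POC of the encoding / decoding target image.
    poc_target_ref: POC of the reference picture the target block refers to.
    poc_neighbor_ref: POC of the reference picture the neighbor's vector refers to.
    """
    tb = poc_cur - poc_target_ref    # distance spanned by the wanted vector
    td = poc_cur - poc_neighbor_ref  # distance spanned by the neighbor's vector
    if td == 0 or tb == td:
        return mv  # nothing to scale (or degenerate distance): use as is
    return (round(mv[0] * tb / td), round(mv[1] * tb / td))


# The neighbor points 4 pictures back; the target reference is 2 pictures back.
print(scale_motion_vector((8, -4), poc_cur=8, poc_target_ref=6, poc_neighbor_ref=4))
```

A real codec would use fixed-point arithmetic with defined rounding so that encoder and decoder agree bit-exactly; floating-point `round` is used here only to keep the sketch short.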

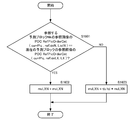

- For each prediction block group, the motion vector predictor candidate generation unit 120 performs the condition determination described later on the prediction blocks in that group in a predetermined order, selects the motion vector of a prediction block that satisfies the condition, and sets the selected motion vectors as the prediction motion vector candidates mvLXA, mvLXB, and mvLXCol, respectively.

- The condition determination is performed on each prediction block in the following order: for the prediction block group adjacent to the left side, from the bottom to the top (A0, A1, A2 in FIG. 5); for the prediction block group adjacent to the upper side, from the right to the left (B0, B1, B2 in FIG. 5); and for the prediction block group at a different time, in the order T0, T1, T2, T3 in FIG. 9.

- In the prediction block group adjacent to the left side, the lowest prediction block has the highest priority, and priority is assigned from the bottom to the top.

- In the prediction block group adjacent to the upper side, the rightmost prediction block has the highest priority, and priority is assigned from right to left.

- In the prediction block group at a different time, the prediction block T0 has the highest priority, and priority is assigned in the order T0, T1, T2, T3.

- the priority order based on the position of this prediction block is defined as priority order A.

- Condition determination 1: The adjacent prediction block performs prediction using the same reference list and the same reference index, that is, the same reference frame, as the motion vector for which the difference motion vector of the prediction block to be encoded/decoded is calculated.

- Condition determination 2: The adjacent prediction block performs prediction using a reference list different from that of the motion vector for which the difference motion vector of the prediction block to be encoded/decoded is calculated, but using the same reference frame.

- Condition determination 3: The adjacent prediction block performs prediction using the same reference list as the motion vector for which the difference motion vector of the prediction block to be encoded/decoded is calculated, but using a different reference frame.

- Condition determination 4: The adjacent prediction block performs prediction using a reference list and a reference frame that both differ from those of the motion vector for which the difference motion vector of the prediction block to be encoded/decoded is calculated.

- This priority order is defined as priority order B. If any of these conditions is met, it is determined that the prediction block has a motion vector matching the condition, and the subsequent condition determinations are not performed. When condition determination 1 or condition determination 2 is met, the motion vector of the corresponding adjacent prediction block refers to the same reference frame and is therefore used as a motion vector predictor candidate as it is. When condition determination 3 or condition determination 4 is met, the motion vector of the corresponding adjacent prediction block refers to a different reference frame, so the motion vector predictor candidate is calculated by scaling that motion vector.
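The four condition determinations can be pictured as a two-by-two classification of one neighbor motion vector. The sketch below is a minimal illustration under stated assumptions: the function name `match_condition` is hypothetical, reference lists are represented by plain strings, and reference frames are identified by their POC. It returns the matching condition number together with a flag saying whether the vector refers to a different reference frame and would therefore need scaling.

```python
def match_condition(target_list, target_ref_poc, nb_list, nb_ref_poc):
    """Classify one neighbor motion vector into condition 1..4 (illustrative).

    Returns (condition_number, needs_scaling).
    """
    same_list = nb_list == target_list          # same reference list?
    same_frame = nb_ref_poc == target_ref_poc   # same reference frame?
    if same_frame:
        cond = 1 if same_list else 2
    else:
        cond = 3 if same_list else 4
    # A vector that points to a different reference frame must be scaled.
    return cond, not same_frame
```

A neighbor block that holds two motion vectors (one per reference list) would be classified once per vector under this sketch.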

- When the condition determinations of the adjacent prediction block groups are processed serially rather than in parallel, the following may be done in the condition determination of the prediction block group processed second (the upper adjacent prediction block group, if the left adjacent prediction block group is processed first): if the motion vector predictor candidate of that group has the same value as the candidate determined for the previous prediction block group, the candidate need not be adopted, and the process may proceed to the next condition determination.
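The optional duplicate check in serial processing could look like the following sketch. It assumes, purely for illustration, that the vectors matched in the second group are already available as a list in priority order; the function name `pick_second_group_candidate` is hypothetical.

```python
def pick_second_group_candidate(matched_mvs, first_candidate):
    """Return the first matched vector that differs from the first group's
    candidate, or None if every match duplicates it (illustrative only)."""
    for mv in matched_mvs:
        if mv != first_candidate:
            return mv      # adopt the first non-duplicate candidate
        # duplicate of the earlier group's candidate: keep scanning
    return None
```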

- Depending on how the above four condition determinations are combined, the following four methods can be set for the loop that scans the spatial prediction blocks.

- The suitability of the prediction vector and the maximum processing amount differ from method to method, and a method is selected and set with these in mind. Only method 1 is described in detail later with reference to the flowcharts of FIGS. 17 to 21. For methods 2 to 4, a person skilled in the art can design the procedure as appropriate by following the procedure for method 1, so a detailed description is omitted.

- Although the loop processing for scanning the spatial prediction blocks is described here for a moving image encoder, similar processing is of course also possible in a moving image decoder.

- Method 1: Of the four condition determinations, one is performed per prediction block. If the condition is not satisfied, the process moves on to the condition determination of the adjacent prediction block. The process ends once the condition determination has gone around the prediction blocks four times. Specifically, the condition determination is performed in the following priority order (N is A or B):

  1. Condition determination 1 of prediction block N0 (same reference list, same reference frame)
  2. Condition determination 1 of prediction block N1 (same reference list, same reference frame)
  3. Condition determination 1 of prediction block N2 (same reference list, same reference frame)
  4. Condition determination 2 of prediction block N0 (different reference list, same reference frame)
  5. Condition determination 2 of prediction block N1 (different reference list, same reference frame)
  6. Condition determination 2 of prediction block N2 (different reference list, same reference frame)
  7. Condition determination 3 of prediction block N0 (same reference list, different reference frame)
  8. Condition determination 3 of prediction block N1 (same reference list, different reference frame)
  9. Condition determination 3 of prediction block N2 (same reference list, different reference frame)
  10. Condition determination 4 of prediction block N0 (different reference list, different reference frame)
  11. Condition determination 4 of prediction block N1 (different reference list, different reference frame)
  12. Condition determination 4 of prediction block N2 (different reference list, different reference frame)

- According to method 1, an unscaled motion vector predictor using the same reference frame is more likely to be selected, which increases the likelihood that the code amount of the difference motion vector will be small.
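The twelve-step order of method 1 amounts to a condition-major loop: one condition is tried on every block before the next condition is considered. A minimal sketch, in which the function name `method1_order` and the string labels for the blocks are illustrative assumptions:

```python
def method1_order(blocks=("N0", "N1", "N2")):
    """List (block, condition) pairs in the method 1 scan order (illustrative)."""
    # Outer loop over conditions 1..4, inner loop over the blocks:
    # four rounds over the prediction blocks, one condition per round.
    return [(block, cond) for cond in (1, 2, 3, 4) for block in blocks]
```

Because the outer loop runs over the conditions, each block's encoded information is visited up to four times in the worst case.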

- Method 2: Giving priority to finding an unscaled motion vector predictor that uses the same reference frame, two of the four condition determinations are performed per prediction block. If the conditions are not satisfied, the process moves on to the condition determination of the next prediction block. Condition determination 1 and condition determination 2 are performed in the first round over the prediction blocks, and condition determination 3 and condition determination 4 in the next round. Specifically, the condition determination is performed in the following priority order (N is A or B):

  1. Condition determination 1 of prediction block N0 (same reference list, same reference frame)
  2. Condition determination 2 of prediction block N0 (different reference list, same reference frame)
  3. Condition determination 1 of prediction block N1 (same reference list, same reference frame)
  4. Condition determination 2 of prediction block N1 (different reference list, same reference frame)
  5. Condition determination 1 of prediction block N2 (same reference list, same reference frame)
  6. Condition determination 2 of prediction block N2 (different reference list, same reference frame)
  7. Condition determination 3 of prediction block N0 (same reference list, different reference frame)
  8. Condition determination 4 of prediction block N0 (different reference list, different reference frame)
  9. Condition determination 3 of prediction block N1 (same reference list, different reference frame)
  10. Condition determination 4 of prediction block N1 (different reference list, different reference frame)
  11. Condition determination 3 of prediction block N2 (same reference list, different reference frame)
  12. Condition determination 4 of prediction block N2 (different reference list, different reference frame)

- According to method 2, as with method 1, an unscaled motion vector predictor using the same reference frame is more likely to be selected, which increases the likelihood that the code amount of the difference motion vector will be small.

- In addition, since the maximum number of rounds of condition determination is two, the number of memory accesses to the encoded information of the prediction blocks is smaller than in method 1 when hardware implementation is considered, and complexity is reduced.
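The scan order of method 2 can be expressed as two rounds over the blocks, each round testing a pair of conditions per block. The sketch below is illustrative only; the function name `method2_order` and the block labels are assumptions.

```python
def method2_order(blocks=("N0", "N1", "N2")):
    """List (block, condition) pairs in the method 2 scan order (illustrative)."""
    rounds = [(1, 2), (3, 4)]  # round 1: same-frame conditions, round 2: the rest
    return [(block, cond)
            for conds in rounds      # at most two rounds over the blocks
            for block in blocks
            for cond in conds]
```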

- Method 3: In the first round, condition determination 1 is performed for each prediction block, and if the condition is not satisfied, the process moves on to the condition determination of the adjacent prediction block. In the next round, condition determination 2, condition determination 3, and condition determination 4 are performed in that order for each prediction block before moving on to the next prediction block. Specifically, the condition determination is performed in the following priority order (N is A or B):

  1. Condition determination 1 of prediction block N0 (same reference list, same reference frame)
  2. Condition determination 1 of prediction block N1 (same reference list, same reference frame)
  3. Condition determination 1 of prediction block N2 (same reference list, same reference frame)
  4. Condition determination 2 of prediction block N0 (different reference list, same reference frame)
  5. Condition determination 3 of prediction block N0 (same reference list, different reference frame)
  6. Condition determination 4 of prediction block N0 (different reference list, different reference frame)
  7. Condition determination 2 of prediction block N1 (different reference list, same reference frame)
  8. Condition determination 3 of prediction block N1 (same reference list, different reference frame)
  9. Condition determination 4 of prediction block N1 (different reference list, different reference frame)
  10. Condition determination 2 of prediction block N2 (different reference list, same reference frame)
  11. Condition determination 3 of prediction block N2 (same reference list, different reference frame)
  12. Condition determination 4 of prediction block N2 (different reference list, different reference frame)

- According to method 3, an unscaled motion vector predictor using the same reference frame in the same reference list is more likely to be selected, which increases the likelihood that the code amount of the difference motion vector will be small. In addition, since the maximum number of rounds of condition determination is two, the number of memory accesses to the encoded information of the prediction blocks is smaller than in method 1 when hardware implementation is considered, and complexity is reduced.
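To show how the method 3 order interacts with early termination, the sketch below runs the scan over a small table of which conditions each block satisfies and stops at the first hit. The function name `method3_search` and the `matches` dictionary format are assumptions introduced for this example.

```python
def method3_search(matches, blocks=("N0", "N1", "N2")):
    """Return the first (block, condition) hit in method 3 order, or None.

    matches: dict mapping a block label to the set of condition numbers
             (1..4) that the block satisfies. Illustrative only.
    """
    for conds in [(1,), (2, 3, 4)]:       # round 1, then round 2
        for block in blocks:
            for cond in conds:
                if cond in matches.get(block, ()):
                    return block, cond    # first hit ends the scan
    return None
```

With `{"N0": {3}, "N2": {1}}`, the first round already finds condition 1 in N2, so the scaled candidate from N0 is never selected.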

- Method 4: Priority is given to the condition determinations within the same prediction block, and all four condition determinations are performed within one prediction block. If none of the conditions is met, it is determined that the prediction block has no motion vector matching the conditions, and the condition determination of the next prediction block is performed. Specifically, the condition determination is performed in the following priority order (N is A or B):

  1. Condition determination 1 of prediction block N0 (same reference list, same reference frame)
  2. Condition determination 2 of prediction block N0 (different reference list, same reference frame)
  3. Condition determination 3 of prediction block N0 (same reference list, different reference frame)
  4. Condition determination 4 of prediction block N0 (different reference list, different reference frame)
  5. Condition determination 1 of prediction block N1 (same reference list, same reference frame)
  6. Condition determination 2 of prediction block N1 (different reference list, same reference frame)
  7. Condition determination 3 of prediction block N1 (same reference list, different reference frame)
  8. Condition determination 4 of prediction block N1 (different reference list, different reference frame)
  9. Condition determination 1 of prediction block N2 (same reference list, same reference frame)
  10. Condition determination 2 of prediction block N2 (different reference list, same reference frame)
  11. Condition determination 3 of prediction block N2 (same reference list, different reference frame)
  12. Condition determination 4 of prediction block N2 (different reference list, different reference frame)

- According to method 4, the number of rounds of condition determination is at most one, so the number of memory accesses to the encoded information of the prediction blocks is smaller than in method 1, method 2, and method 3 when hardware implementation is considered, and complexity is reduced.
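The single-round behavior of method 4 can be sketched as a block-major search that also counts how many blocks were visited. The function name `method4_search`, the `matches` dictionary format, and the visit counter are assumptions added for illustration.

```python
def method4_search(matches, blocks=("N0", "N1", "N2")):
    """Return ((block, condition) or None, number_of_blocks_visited).

    matches: dict mapping a block label to the set of condition numbers
             (1..4) that the block satisfies. Illustrative only.
    """
    visited = 0
    for block in blocks:                  # each block is visited at most once
        visited += 1
        for cond in (1, 2, 3, 4):         # all four checks inside one block
            if cond in matches.get(block, ()):
                return (block, cond), visited
    return None, visited
```

With `{"N0": {4}, "N1": {1}}` the search stops at N0 with condition 4, so a scaled candidate is chosen even though N1 holds an unscaled one. This is the kind of trade-off between predictor suitability and processing amount that distinguishes the methods.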

- Method 5: As in method 4, priority is given to the condition determinations within the same prediction block, and all four condition determinations are performed within one prediction block. If none of the conditions is met, it is determined that the prediction block has no motion vector matching the conditions, and the condition determination of the next prediction block is performed. However, within a prediction block, method 4 gives priority to the same reference frame, whereas method 5 gives priority to the same reference list. Specifically, the condition determination is performed in the following priority order (N is A or B):

  1. Condition determination 1 of prediction block N0 (same reference list, same reference frame)
  2. Condition determination 3 of prediction block N0 (same reference list, different reference frame)
  3. Condition determination 2 of prediction block N0 (different reference list, same reference frame)
  4. Condition determination 4 of prediction block N0 (different reference list, different reference frame)
  5. Condition determination 1 of prediction block N1 (same reference list, same reference frame)
  6. Condition determination 3 of prediction block N1 (same reference list, different reference frame)
  7. Condition determination 2 of prediction block N1 (different reference list, same reference frame)
  8. Condition determination 4 of prediction block N1 (different reference list, different reference frame)
  9. Condition determination 1 of prediction block N2 (same reference list, same reference frame)
  10. Condition determination 3 of prediction block N2 (same reference list, different reference frame)
  11. Condition determination 2 of prediction block N2 (different reference list, same reference frame)
  12. Condition determination 4 of prediction block N2 (different reference list, different reference frame)

- According to method 5, the reference count of the reference block can be reduced compared with method 4, and complexity can be reduced by reducing the number of memory accesses and the amount of processing such as condition determination.
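Methods 4 and 5 differ only in the order of the four checks inside each block. A minimal sketch of that difference, in which the function name `block_major_order` and the block labels are illustrative assumptions:

```python
def block_major_order(cond_order, blocks=("N0", "N1", "N2")):
    """List (block, condition) pairs, finishing each block before the next."""
    return [(block, cond) for block in blocks for cond in cond_order]

# Method 4 favors the same reference frame: conditions 1, 2, 3, 4.
method4_order = block_major_order((1, 2, 3, 4))
# Method 5 favors the same reference list: conditions 1, 3, 2, 4.
method5_order = block_major_order((1, 3, 2, 4))
```

Under method 5 both same-list checks (conditions 1 and 3) are finished before the other reference list is examined.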