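WO2012043352A1 - Stereoscopic image data transmission device, stereoscopic image data transmission method, stereoscopic image data reception device, and stereoscopic image data reception method - Google Patents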


- Publication number

- WO2012043352A1 (PCT/JP2011/071564)

- Authority

- WO

- WIPO (PCT)

- Prior art keywords

- data

- information

- image data

- display

- subtitle


Classifications

All classifications below fall under H—ELECTRICITY > H04—ELECTRIC COMMUNICATION TECHNIQUE > H04N—PICTORIAL COMMUNICATION, e.g. TELEVISION:

- H04N13/00—Stereoscopic video systems; Multi-view video systems; Details thereof

- H04N7/00—Television systems > H04N7/025—Systems for the transmission of digital non-picture data, e.g. of text during the active part of a television frame > H04N7/03—Subscription systems therefor

- H04N13/00 > H04N13/10—Processing, recording or transmission of stereoscopic or multi-view image signals > H04N13/106—Processing image signals

- H04N13/00 > H04N13/10 > H04N13/106 > H04N13/128—Adjusting depth or disparity

- H04N13/00 > H04N13/10 > H04N13/106 > H04N13/139—Format conversion, e.g. of frame-rate or size

- H04N13/00 > H04N13/10 > H04N13/106 > H04N13/161—Encoding, multiplexing or demultiplexing different image signal components

- H04N13/00 > H04N13/10 > H04N13/106 > H04N13/172—Image signals comprising non-image signal components, e.g. headers or format information > H04N13/183—On-screen display [OSD] information, e.g. subtitles or menus

- H04N13/00 > H04N13/10 > H04N13/194—Transmission of image signals

- H04N21/00—Selective content distribution, e.g. interactive television or video on demand [VOD] > H04N21/20—Servers specifically adapted for the distribution of content, e.g. VOD servers; Operations thereof > H04N21/23—Processing of content or additional data; Elementary server operations; Server middleware > H04N21/236—Assembling of a multiplex stream, e.g. transport stream, by combining a video stream with other content or additional data, e.g. inserting a URL [Uniform Resource Locator] into a video stream, multiplexing software data into a video stream; Remultiplexing of multiplex streams; Insertion of stuffing bits into the multiplex stream, e.g. to obtain a constant bit-rate; Assembling of a packetised elementary stream

- H04N21/00 > H04N21/40—Client devices specifically adapted for the reception of or interaction with content, e.g. set-top-box [STB]; Operations thereof > H04N21/43—Processing of content or additional data, e.g. demultiplexing additional data from a digital video stream; Elementary client operations, e.g. monitoring of home network or synchronising decoder's clock; Client middleware > H04N21/434—Disassembling of a multiplex stream, e.g. demultiplexing audio and video streams, extraction of additional data from a video stream; Remultiplexing of multiplex streams; Extraction or processing of SI; Disassembling of packetised elementary stream

- H04N7/00 > H04N7/025—Systems for the transmission of digital non-picture data, e.g. of text during the active part of a television frame

- H04N2213/00—Details of stereoscopic systems > H04N2213/003—Aspects relating to the "2D+depth" image format

- H04N2213/00 > H04N2213/005—Aspects relating to the "3D+depth" image format

Definitions

- The present invention relates to a stereoscopic image data transmission device, a stereoscopic image data transmission method, a stereoscopic image data reception device, and a stereoscopic image data reception method, and in particular to a stereoscopic image data transmission device and the like that transmit superimposition information data, such as captions, together with stereoscopic image data.

- Patent Document 1 proposes a method of transmitting stereoscopic image data over television broadcast radio waves.

- In this method, stereoscopic image data having left-eye image data and right-eye image data is transmitted, and stereoscopic image display using binocular parallax is performed.

- FIG. 41 shows, for stereoscopic image display using binocular parallax, the relationship between the display positions of the left and right images of objects on the screen and the playback positions of their stereoscopic images.

- For object A, the right and left lines of sight intersect in front of the screen surface, so its stereoscopic image is reproduced in front of the screen surface.

- DPa represents the horizontal disparity vector for object A.

- For object B, the right and left lines of sight intersect on the screen surface, so its stereoscopic image is reproduced on the screen surface.

- For object C, whose left image Lc is shifted to the left and right image Rc to the right on the screen, the right and left lines of sight intersect behind the screen surface, so its stereoscopic image is reproduced behind the screen.

- DPc represents the horizontal disparity vector for object C.
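Why objects A, B, and C appear in front of, on, or behind the screen follows from similar triangles. The sketch below is illustrative only: it assumes eyes separated by `eye_sep` and a screen at distance `view_dist` (hypothetical defaults of 65 mm and 2000 mm), and defines on-screen disparity as d = x_R - x_L. That sign convention is an assumption and may not match how DPa and DPc are signed in the figure.

```python
def perceived_depth(d: float, eye_sep: float = 65.0, view_dist: float = 2000.0) -> float:
    """Distance from the viewer at which the two lines of sight intersect.

    Eyes sit at x = -eye_sep/2 and x = +eye_sep/2; the screen is at view_dist.
    d = x_R - x_L is the on-screen disparity in the same units (e.g. mm).
    Similar triangles give Z = eye_sep * view_dist / (eye_sep - d).
    """
    if d >= eye_sep:
        # Lines of sight are parallel or diverge: no finite intersection.
        raise ValueError("disparity must be smaller than the eye separation")
    return eye_sep * view_dist / (eye_sep - d)


def classify(d: float, eye_sep: float = 65.0, view_dist: float = 2000.0) -> str:
    """Where the stereo image appears relative to the screen surface."""
    z = perceived_depth(d, eye_sep, view_dist)
    if z < view_dist:
        return "in front of screen"   # crossed disparity (d < 0), like object A
    if z > view_dist:
        return "behind screen"        # uncrossed disparity (d > 0), like object C
    return "on screen"                # zero disparity, like object B


for name, d in (("A", -20.0), ("B", 0.0), ("C", 20.0)):
    print(name, classify(d), round(perceived_depth(d), 1))
```

Halving the denominator doubles the depth, which is why small disparity changes near d = eye_sep move an object a long way back.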

- Non-Patent Document 1 describes details of the HDMI standard.

- When two-dimensional image data is transmitted, the transmission side transmits superimposition information data such as captions together with it.

- The reception side processes the superimposition information data to generate display data for displaying the superimposition information, and superimposes that display data on the two-dimensional image data to obtain a two-dimensional image on which the superimposition information is superimposed.

- Superimposition information data such as captions can likewise be transmitted together with stereoscopic image data.

- However, if that superimposition information data is intended for a two-dimensional image, the reception side must process it in accordance with the transmission format of the stereoscopic image data to generate display data to be superimposed on the stereoscopic image data. A set-top box that receives stereoscopic image data therefore needs such advanced processing capability, which makes it expensive.

- An object of the present invention is to facilitate processing on the receiving side when transmitting superimposition information data such as captions together with stereoscopic image data.

- The concept of this invention is a stereoscopic image data transmission apparatus comprising:
  - an image data output unit that outputs stereoscopic image data of a predetermined transmission format having left-eye image data and right-eye image data;
  - a superimposition information data output unit that outputs data of superimposition information to be superimposed on images based on the left-eye image data and the right-eye image data;
  - a superimposition information data processing unit that converts the superimposition information data output from the superimposition information data output unit into transmission superimposition information data having left-eye superimposition information data corresponding to the left-eye image data included in the stereoscopic image data of the predetermined transmission format and right-eye superimposition information data corresponding to the right-eye image data included in that stereoscopic image data;
  - a display control information generation unit that sets, within the display area of the transmission superimposition information data output from the superimposition information data processing unit, a first display area corresponding to the display position of the left-eye superimposition information and a second display area corresponding to the display position of the right-eye superimposition information, and generates display control information including the area information of each of the first and second display areas, information on the target frames on which the superimposition information included in the first and second display areas is to be displayed, and disparity information for shift-adjusting the display positions of the superimposition information included in the first and second display areas; and
  - a data transmission unit that transmits a multiplexed data stream having a first data stream including the stereoscopic image data output from the image data output unit and a second data stream including the transmission superimposition information data output from the superimposition information data processing unit and the display control information generated by the display control information generation unit.

- The image data output unit outputs stereoscopic image data of a predetermined transmission format having left-eye image data and right-eye image data.

- Transmission formats for stereoscopic image data include the side-by-side method, the top-and-bottom method, and the like.
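How the two views share one frame in the two formats named above can be sketched as follows. This is an illustration only: frames are modelled as nested lists of grayscale values, and halving is done by simply dropping every other column or row, whereas a real encoder would normally low-pass filter before subsampling. The function names are hypothetical.

```python
from typing import List

Frame = List[List[int]]  # rows of grayscale pixel values


def pack_side_by_side(left: Frame, right: Frame) -> Frame:
    """Keep every other column of each view and place them side by side (L | R)."""
    return [l_row[::2] + r_row[::2] for l_row, r_row in zip(left, right)]


def pack_top_and_bottom(left: Frame, right: Frame) -> Frame:
    """Keep every other row of each view and stack them (L above R)."""
    return [row[:] for row in left[::2]] + [row[:] for row in right[::2]]


left = [[1, 2, 3, 4], [5, 6, 7, 8]]
right = [[10, 20, 30, 40], [50, 60, 70, 80]]
print(pack_side_by_side(left, right))    # [[1, 3, 10, 30], [5, 7, 50, 70]]
print(pack_top_and_bottom(left, right))  # [[1, 2, 3, 4], [10, 20, 30, 40]]
```

Either way the packed frame keeps the original frame size, so each view loses half its horizontal (side-by-side) or vertical (top-and-bottom) resolution.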

- The superimposition information data output unit outputs data of superimposition information to be superimposed on images based on the left-eye image data and the right-eye image data.

- The superimposition information is information such as subtitles, graphics, and text superimposed on the image.

- The superimposition information data processing unit converts the superimposition information data into transmission superimposition information data having left-eye superimposition information data and right-eye superimposition information data.

- The left-eye superimposition information data corresponds to the left-eye image data included in the stereoscopic image data of the predetermined transmission format described above; on the receiving side it is used to generate display data of the left-eye superimposition information to be superimposed on that left-eye image data.

- The right-eye superimposition information data corresponds to the right-eye image data included in the stereoscopic image data of the predetermined transmission format described above; on the receiving side it is used to generate display data of the right-eye superimposition information to be superimposed on that right-eye image data.

- The superimposition information data is, for example, subtitle data (DVB subtitle data).

- The left-eye and right-eye superimposition information data are generated as follows. When the transmission format of the stereoscopic image data is side-by-side, for example, the superimposition information data processing unit generates them as data of different objects in the same region. When the transmission format is top-and-bottom, it generates them as data of objects in different regions.
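The two generation rules above differ only in which coordinate separates the left-eye and right-eye subtitle data. A minimal sketch, assuming a 2D subtitle position `(x, y)` in full-resolution coordinates and packed views of half width (side-by-side) or half height (top-and-bottom); `Placement` and `place_stereo_subtitle` are hypothetical names, not DVB subtitle syntax, and no disparity is applied here.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class Placement:
    region_id: int   # which subtitle region the object belongs to
    object_id: int   # which object inside that region
    x: int           # horizontal position in the packed frame (pixels)
    y: int           # vertical position in the packed frame (pixels)


def place_stereo_subtitle(fmt: str, x: int, y: int, width: int, height: int) -> List[Placement]:
    """Map a 2D subtitle position (x, y) to left/right placements in a packed frame.

    Side-by-side: both eyes share one region; the two objects differ only in x.
    Top-and-bottom: each eye gets its own region; the regions differ only in y.
    """
    if fmt == "side_by_side":
        half_x = x // 2                                    # horizontal scale 1/2
        return [Placement(0, 0, half_x, y),                # left-eye object
                Placement(0, 1, half_x + width // 2, y)]   # right-eye object, same region
    if fmt == "top_and_bottom":
        half_y = y // 2                                    # vertical scale 1/2
        return [Placement(0, 0, x, half_y),                # left-eye region (top half)
                Placement(1, 0, x, half_y + height // 2)]  # right-eye region (bottom half)
    raise ValueError(f"unsupported format: {fmt}")


print(place_stereo_subtitle("side_by_side", 400, 900, 1920, 1080))
print(place_stereo_subtitle("top_and_bottom", 400, 900, 1920, 1080))
```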

- The apparatus may further include a disparity information output unit that outputs disparity information between the left-eye image based on the left-eye image data and the right-eye image based on the right-eye image data, and the superimposition information data processing unit gives parallax between the left-eye and right-eye superimposition information based on the disparity information output from the disparity information output unit.

- In this case, the reception side need not perform processing to give parallax between the left-eye and right-eye superimposition information, and when superimposition information such as subtitles is displayed, perspective consistency with each object in the image can be maintained in an optimum state.

- The display control information generation unit sets, within the display area of the transmission superimposition information data, a first display area corresponding to the display position of the left-eye superimposition information and a second display area corresponding to the display position of the right-eye superimposition information, and generates display control information relating to these two areas. The first and second display areas are set, for example, in response to a user operation or automatically.

- The display control information includes area information for the first display area and area information for the second display area.

- The display control information includes information on the target frame on which the superimposition information in the first display area is displayed and information on the target frame on which the superimposition information in the second display area is displayed.

- The display control information also includes disparity information for shift-adjusting the display position of the superimposition information in the first display area and disparity information for shift-adjusting the display position of the superimposition information in the second display area. These pieces of disparity information give parallax between the superimposition information in the first display area and that in the second display area.

- The apparatus may further include a disparity information output unit that outputs disparity information between a left-eye image based on the left-eye image data and a right-eye image based on the right-eye image data. In this case, the display control information generation unit may obtain, based on the disparity information output from the disparity information output unit, the disparity information for shift-adjusting the display positions of the superimposition information in the first and second display areas.
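One way to picture the display control information described above is as one record per display area. The field names below (`target_frame`, `disparity`, and so on) are hypothetical, and the even split of a single disparity value between the two areas is an assumption chosen for illustration; the text only says that the per-area disparity information is obtained from the disparity information output unit. The convention d = x_R - x_L is also assumed, so a negative value places the subtitle in front of the screen.

```python
from dataclasses import dataclass
from typing import Tuple

Rect = Tuple[int, int, int, int]  # (x, y, width, height) in pixels


@dataclass
class SubregionControl:
    x: int              # area information: top-left corner
    y: int
    width: int
    height: int
    target_frame: str   # view the area is shown on: "left" or "right" (hypothetical encoding)
    disparity: float    # horizontal shift applied to this area's display position (pixels)


def make_display_control(left_area: Rect, right_area: Rect,
                         disparity: float) -> Tuple[SubregionControl, SubregionControl]:
    """Build control entries for the first (left-eye) and second (right-eye) areas.

    Assumed split: the left-eye area moves by -d/2 and the right-eye area by +d/2,
    so the relative shift between the two areas equals d = x_R - x_L.
    """
    first = SubregionControl(*left_area, target_frame="left", disparity=-disparity / 2)
    second = SubregionControl(*right_area, target_frame="right", disparity=disparity / 2)
    return first, second


first, second = make_display_control((100, 900, 760, 120), (1060, 900, 760, 120), -16.0)
print(first)
print(second)
```

Splitting the shift symmetrically keeps the subtitle centred between the two eyes' positions; a transmitter could equally put the whole shift on one area.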

- The data transmission unit transmits a multiplexed data stream including the first data stream and the second data stream.

- The first data stream includes the stereoscopic image data of the predetermined transmission format output from the image data output unit.

- The second data stream includes the transmission superimposition information data output from the superimposition information data processing unit and the display control information generated by the display control information generation unit.

- In this way, transmission superimposition information data having left-eye and right-eye superimposition information data corresponding to the transmission format is transmitted together with the stereoscopic image data. The receiving side can therefore easily generate, based on the transmission superimposition information data, display data of the left-eye superimposition information to be superimposed on the left-eye image data of the stereoscopic image data and display data of the right-eye superimposition information to be superimposed on its right-eye image data, which simplifies processing.

- Display control information (area information, target frame information, and disparity information) relating to the first display area, corresponding to the display position of the left-eye superimposition information, and the second display area, corresponding to the display position of the right-eye superimposition information, is also transmitted.

- Parallax can thus be given to the display positions of the superimposition information in the first and second display areas, so that when superimposition information such as captions is displayed, perspective consistency with each object in the image can be maintained in an optimum state.

- The disparity information included in the display control information generated by the display control information generation unit may have sub-pixel precision.

- In this case, when the receiving side shift-adjusts the display positions, the shift operation can be performed smoothly, which can contribute to improved image quality.
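Sub-pixel disparity means the receiver must resample, not just copy, pixels when it shifts a display area. A minimal sketch using linear interpolation on a single row; the patent text does not say which interpolation a receiver uses, so linear interpolation is a stand-in, and treating out-of-range samples as 0 (transparent) is an assumption.

```python
import math
from typing import List


def shift_row_subpixel(row: List[float], shift: float) -> List[float]:
    """Shift a row of pixel values right by `shift` pixels (may be fractional).

    Output sample i takes the input value at position i - shift, linearly
    interpolated between the two nearest input samples; positions outside
    the input are treated as 0 (transparent).
    """
    n = len(row)
    out = []
    for i in range(n):
        src = i - shift
        i0 = math.floor(src)          # nearest input sample to the left
        frac = src - i0               # weight of the sample to the right
        a = row[i0] if 0 <= i0 < n else 0.0
        b = row[i0 + 1] if 0 <= i0 + 1 < n else 0.0
        out.append((1.0 - frac) * a + frac * b)
    return out


print(shift_row_subpixel([0, 0, 100, 0, 0], 1.0))  # [0.0, 0.0, 0.0, 100.0, 0.0]
print(shift_row_subpixel([0, 0, 100, 0, 0], 0.5))  # [0.0, 0.0, 50.0, 50.0, 0.0]
```

With a half-pixel step the edge spreads over two pixels instead of jumping, which is what lets a gradually changing disparity move the subtitle smoothly.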

- The display control information generated by the display control information generation unit may further include command information for turning on or off the display of the superimposition information included in the first and second display areas.

- In this case, the receiving side can use the command information to turn on or off the display of the superimposition information in the first and second display areas that is based on the area information and disparity information included in the display control information together with that command information.

- The data transmission unit may insert, into the multiplexed data stream, identification information indicating that the second data stream includes transmission superimposition information data corresponding to the transmission format of the stereoscopic image data. In this case, the receiving side can use this identification information to determine whether the second data stream includes transmission superimposition information data (stereoscopic image superimposition information data) corresponding to the transmission format of the stereoscopic image data.

- When the superimposition information data is subtitle data, the display area of the superimposition information data is a region, and the first and second display areas may be subregions set so as to be included in that region.

- The subregion is a newly defined type of area.
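A transmitter could sanity-check the two subregions before emitting the display control information. This sketch assumes rectangles given as `(x, y, w, h)` in one common pixel coordinate system; `Rect`, `contains`, `overlaps`, and `check_subregions` are hypothetical helpers, and the rule that the two subregions must not overlap is an extra assumption, not something the text states.

```python
from typing import NamedTuple


class Rect(NamedTuple):
    x: int  # left edge (pixels)
    y: int  # top edge (pixels)
    w: int  # width
    h: int  # height


def contains(outer: Rect, inner: Rect) -> bool:
    """True if `inner` lies entirely inside `outer` (edges may touch)."""
    return (outer.x <= inner.x and outer.y <= inner.y
            and inner.x + inner.w <= outer.x + outer.w
            and inner.y + inner.h <= outer.y + outer.h)


def overlaps(a: Rect, b: Rect) -> bool:
    """True if the two rectangles share at least one pixel."""
    return a.x < b.x + b.w and b.x < a.x + a.w and a.y < b.y + b.h and b.y < a.y + a.h


def check_subregions(region: Rect, first: Rect, second: Rect) -> None:
    """Raise ValueError unless both subregions sit inside the region without overlapping."""
    for name, sub in (("first", first), ("second", second)):
        if not contains(region, sub):
            raise ValueError(f"{name} subregion lies outside the region")
    if overlaps(first, second):
        raise ValueError("first and second subregions overlap")


region = Rect(0, 900, 1920, 120)
check_subregions(region, Rect(100, 900, 760, 120), Rect(1060, 900, 760, 120))  # passes
```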

- Another concept of this invention is a stereoscopic image data reception apparatus comprising:
  - a data reception unit that receives a multiplexed data stream having a first data stream and a second data stream, wherein
    - the first data stream includes stereoscopic image data of a predetermined transmission format having left-eye image data and right-eye image data;
    - the second data stream includes transmission superimposition information data and display control information;
    - the transmission superimposition information data includes left-eye superimposition information data corresponding to the left-eye image data and right-eye superimposition information data corresponding to the right-eye image data included in the stereoscopic image data of the predetermined transmission format; and
    - the display control information includes area information of each of a first display area corresponding to the display position of the left-eye superimposition information and a second display area corresponding to the display position of the right-eye superimposition information, both set within the display area of the transmission superimposition information data, together with target frame information and disparity information for these areas;
  - an image data acquisition unit that acquires the stereoscopic image data from the first data stream included in the multiplexed data stream received by the data reception unit;
  - a superimposition information data acquisition unit that acquires the transmission superimposition information data from the second data stream included in the multiplexed data stream received by the data reception unit;
  - a display control information acquisition unit that acquires the display control information from the second data stream of the multiplexed data stream received by the data reception unit;
  - a display data generation unit that generates, based on the transmission superimposition information data acquired by the superimposition information data acquisition unit, display data for displaying the left-eye superimposition information and the right-eye superimposition information superimposed on the left-eye image and the right-eye image, respectively;
  - a display data extraction unit that extracts, from the display data generated by the display data generation unit, the display data of the first and second display areas based on their area information in the display control information acquired by the display control information acquisition unit;
  - a shift adjustment unit that shift-adjusts the positions of the display data of the first and second display areas extracted by the display data extraction unit, based on the disparity information included in the display control information acquired by the display control information acquisition unit; and
  - a data synthesis unit that obtains output stereoscopic image data by superimposing the shift-adjusted display data of the first and second display areas on the target frames, indicated by the target frame information included in the display control information acquired by the display control information acquisition unit, of the stereoscopic image data acquired by the image data acquisition unit.
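The receiver-side chain above (extract each display area, shift it by its disparity, superimpose it on its target frame) can be sketched as follows. Everything here is a simplified, hypothetical model rather than the apparatus itself: the views are assumed to be already unpacked into separate left and right frames held as nested lists, 0 marks a transparent subtitle pixel, disparities are whole pixels, and each extracted area is assumed to be placed at a common base position `(dest_x, dest_y)` in its target view before the shift.

```python
from dataclasses import dataclass
from typing import Dict, List

Bitmap = List[List[int]]  # 0 = transparent pixel


@dataclass
class AreaControl:
    x: int              # area information: position and size inside the subtitle bitmap
    y: int
    w: int
    h: int
    target_frame: str   # "left" or "right": the view this area is superimposed on
    disparity: int      # horizontal shift in whole pixels (positive = right)


def extract(bitmap: Bitmap, a: AreaControl) -> Bitmap:
    """Display data extraction: cut the area out of the rendered subtitle bitmap."""
    return [row[a.x:a.x + a.w] for row in bitmap[a.y:a.y + a.h]]


def superimpose(frame: Bitmap, patch: Bitmap, x0: int, y0: int) -> None:
    """Data synthesis: copy non-transparent patch pixels onto frame, clipping at edges."""
    for dy, row in enumerate(patch):
        for dx, value in enumerate(row):
            y, x = y0 + dy, x0 + dx
            if value != 0 and 0 <= y < len(frame) and 0 <= x < len(frame[y]):
                frame[y][x] = value


def compose(views: Dict[str, Bitmap], bitmap: Bitmap, areas: List[AreaControl],
            dest_x: int, dest_y: int) -> Dict[str, Bitmap]:
    """Return copies of the views with each area shifted and superimposed on its target view."""
    out = {name: [row[:] for row in frame] for name, frame in views.items()}
    for a in areas:
        patch = extract(bitmap, a)
        # Shift adjustment: move the area horizontally by its disparity.
        superimpose(out[a.target_frame], patch, dest_x + a.disparity, dest_y)
    return out


views = {"left": [[0] * 8 for _ in range(2)], "right": [[0] * 8 for _ in range(2)]}
bitmap = [[7, 7, 0, 0, 9, 9, 0, 0]]  # left-eye data in columns 0-1, right-eye in 4-5
areas = [AreaControl(0, 0, 2, 1, "left", 1), AreaControl(4, 0, 2, 1, "right", -1)]
result = compose(views, bitmap, areas, dest_x=3, dest_y=0)
print(result["left"][0])   # [0, 0, 0, 0, 7, 7, 0, 0]
print(result["right"][0])  # [0, 0, 9, 9, 0, 0, 0, 0]
```

Because only the areas named in the control information are extracted, anything else in the rendered bitmap never reaches the output frames.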

- The data reception unit receives a multiplexed data stream having the first data stream and the second data stream.

- The first data stream includes stereoscopic image data of a predetermined transmission format having left-eye image data and right-eye image data.

- The second data stream includes transmission superimposition information data (stereoscopic image superimposition information data) having left-eye superimposition information data and right-eye superimposition information data.

- The left-eye superimposition information data corresponds to the left-eye image data included in the stereoscopic image data of the predetermined transmission format described above, and is data for generating display data of the left-eye superimposition information to be superimposed on that left-eye image data. The right-eye superimposition information data corresponds to the right-eye image data included in that stereoscopic image data, and is data for generating display data of the right-eye superimposition information to be superimposed on that right-eye image data.

- The second data stream also includes display control information.

- The display control information includes area information for each of the first display area, corresponding to the display position of the left-eye superimposition information, and the second display area, corresponding to the display position of the right-eye superimposition information, both set within the display area of the transmission superimposition information data.

- The display control information also includes information on the target frames on which the superimposition information in the first and second display areas is displayed, and disparity information for shift-adjusting the display positions of that superimposition information.

- The image data acquisition unit acquires the stereoscopic image data of the predetermined transmission format from the first data stream included in the multiplexed data stream received by the data reception unit. The superimposition information data acquisition unit acquires the transmission superimposition information data from the second data stream of that multiplexed data stream, and the display control information acquisition unit acquires the display control information from the same second data stream.

- Based on the transmission superimposition information data acquired by the superimposition information data acquisition unit, the display data generation unit generates display data for displaying the superimposition information superimposed on the left-eye image and the right-eye image. The display data extraction unit then extracts, from the display data generated by the display data generation unit, the display data of the first and second display areas, based on their area information included in the display control information.

- The display data extracted in this way becomes the display target.

- The shift adjustment unit shift-adjusts the positions of the display data of the first and second display areas extracted by the display data extraction unit, based on the disparity information included in the display control information acquired by the display control information acquisition unit.

- The data synthesis unit superimposes the shift-adjusted display data of the first and second display areas on the target frames of the stereoscopic image data acquired by the image data acquisition unit, as indicated by the target frame information acquired by the display control information acquisition unit, to obtain output stereoscopic image data.

- The output stereoscopic image data is transmitted to an external device through a digital interface unit such as HDMI, for example.

- The output stereoscopic image data may also be used to display, on a display panel, a left-eye image and a right-eye image that let the user perceive a stereoscopic image.

- In this way, transmission superimposition information data having left-eye and right-eye superimposition information data corresponding to the transmission format is received together with the stereoscopic image data. Therefore, based on the transmission superimposition information data, display data of the left-eye superimposition information to be superimposed on the left-eye image data of the stereoscopic image data and display data of the right-eye superimposition information to be superimposed on its right-eye image data can be generated easily, which simplifies processing.

- Display control information (area information, target frame information, and disparity information) relating to the first display area, corresponding to the display position of the left-eye superimposition information, and the second display area, corresponding to the display position of the right-eye superimposition information, is also received. Therefore, only the superimposition information in the first and second display areas can be superimposed and displayed on the target frames.

- Parallax can also be given to the display positions of the superimposition information in the first and second display areas, so that when superimposition information such as captions is displayed, perspective consistency with each object in the image can be maintained in an optimum state.

- The multiplexed data stream received by the data reception unit may include identification information indicating that the second data stream includes transmission superimposition information data corresponding to the transmission format of the stereoscopic image data, and the apparatus may further include a superimposition information data identification unit that, based on this identification information, identifies that the second data stream includes such transmission superimposition information data. In this case, the identification information makes it possible to determine whether the second data stream includes transmission superimposition information data (stereoscopic image superimposition information data) corresponding to the transmission format of the stereoscopic image data.

- Transmission superimposition information data having left-eye and right-eye superimposition information data corresponding to the transmission format is transmitted together with the stereoscopic image data from the transmission side to the reception side. The receiving side can therefore easily generate, based on the transmission superimposition information data, display data of the left-eye superimposition information to be superimposed on the left-eye image data of the stereoscopic image data and display data of the right-eye superimposition information to be superimposed on its right-eye image data, which simplifies processing.

- Display control information (area information, target frame information, and disparity information) relating to the first display area, corresponding to the display position of the left-eye superimposition information, and the second display area, corresponding to the display position of the right-eye superimposition information, is also transmitted.

- The superimposition information in the first and second display areas can therefore be superimposed and displayed on the target frames.

- Parallax can also be given to the display positions of the superimposition information in the first and second display areas, so that when superimposition information such as captions is displayed, perspective consistency with each object in the image can be maintained in an optimum state.

- FIG. 6 is a diagram for explaining a configuration example (cases A to E) of data (including a parallax information group). It is a figure which shows notionally the production method of the subtitle data for stereoscopic images in case the transmission format of stereoscopic image data is a side-by-side system. It is a figure which shows an example of the region (region) and object (object) by the subtitle data for stereo images, and also a subregion (Subregion). It is a figure which shows the creation example (example 1) of each segment of the subtitle data for stereo images in case the transmission format of stereo image data is a side by side system.

- FIG. 1 It is a figure which shows the creation example (example 2) of each segment of the subtitle data for stereoscopic images in case the transmission format of stereoscopic image data is a side by side system. It is a figure which shows notionally the production method of the subtitle data for stereoscopic images in case the transmission format of stereoscopic image data is a top and bottom system. It is a figure which shows an example of the region (region) and object (object) by the subtitle data for stereo images, and also a subregion (Subregion). It is a figure which shows the creation example (example 1) of each segment of the subtitle data for stereo images in case the transmission format of stereo image data is a top and bottom system.

- A figure for explaining, in stereoscopic image display using binocular parallax, the relationship between the display positions of the left and right images of an object on the screen and the reproduction position of its stereoscopic image.

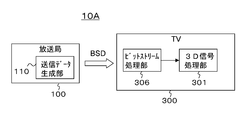

- FIG. 1 shows a configuration example of an image transmission / reception system 10 as an embodiment.

- the image transmission / reception system 10 includes a broadcasting station 100, a set top box (STB) 200, and a television receiver (TV) 300.

- the set top box 200 and the television receiver 300 are connected by a digital interface of HDMI (High-Definition Multimedia Interface).

- the set top box 200 and the television receiver 300 are connected using an HDMI cable 400.

- the set top box 200 is provided with an HDMI terminal 202.

- the television receiver 300 is provided with an HDMI terminal 302.

- One end of the HDMI cable 400 is connected to the HDMI terminal 202 of the set top box 200, and the other end of the HDMI cable 400 is connected to the HDMI terminal 302 of the television receiver 300.

- the broadcasting station 100 transmits the bit stream data BSD on a broadcast wave.

- the broadcast station 100 includes a transmission data generation unit 110 that generates bit stream data BSD.

- the bit stream data BSD includes stereoscopic image data, audio data, superimposition information data, and the like.

- the stereoscopic image data has a predetermined transmission format, and has left-eye image data and right-eye image data for displaying a stereoscopic image.

- the superimposition information is generally subtitles, graphics information, text information, etc., but in this embodiment, it is a subtitle (caption).

- FIG. 2 shows a configuration example of the transmission data generation unit 110 in the broadcast station 100.

- the transmission data generation unit 110 includes cameras 111L and 111R, a video framing unit 112, a parallax vector detection unit 113, a microphone 114, a data extraction unit 115, and changeover switches 116 to 118.

- the transmission data generation unit 110 also includes a video encoder 119, an audio encoder 120, a subtitle generation unit 121, a disparity information creation unit 122, a subtitle processing unit 123, a subtitle encoder 125, and a multiplexer 126.

- the camera 111L captures a left eye image and obtains left eye image data for stereoscopic image display.

- the camera 111R captures the right eye image and obtains right eye image data for stereoscopic image display.

- the video framing unit 112 processes the left eye image data obtained by the camera 111L and the right eye image data obtained by the camera 111R into stereoscopic image data (3D image data) corresponding to the transmission format.

- the video framing unit 112 constitutes an image data output unit.

- the first transmission method is the top-and-bottom method. As shown in FIG. 4A, the data of each line of the left eye image data is transmitted in the first half in the vertical direction, and the data of each line of the right eye image data is transmitted in the second half in the vertical direction. In this case, because the lines of the left eye image data and the right eye image data are thinned out to 1/2, the vertical resolution is halved with respect to the original signal.

- the second transmission method is the side-by-side method. The pixel data of the left eye image data is transmitted in the first half in the horizontal direction, and the pixel data of the right eye image data is transmitted in the second half in the horizontal direction. In this case, the pixel data of each image in the horizontal direction is thinned out to 1/2, so the horizontal resolution is halved with respect to the original signal.

- the third transmission method is a frame sequential method, in which left-eye image data and right-eye image data are sequentially switched for each frame and transmitted as shown in FIG. 4 (c).

- This frame sequential method may be referred to as a full frame method or a backward compatible method.
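The three transmission formats can be illustrated with a short Python sketch. This is a minimal illustration only. It assumes each view is a grayscale image stored as a list of rows of equal size, and it thins lines or pixels by simply dropping every other one. Practical encoders usually low-pass filter before decimation. The function names are hypothetical.

```python
from typing import List

Image = List[List[int]]  # hypothetical representation: rows of pixel values


def top_and_bottom(left: Image, right: Image) -> Image:
    """Keep every other line of each view: left view on top, right view below."""
    return left[0::2] + right[0::2]


def side_by_side(left: Image, right: Image) -> Image:
    """Keep every other pixel of each line: left view in the left half, right view in the right half."""
    return [l_row[0::2] + r_row[0::2] for l_row, r_row in zip(left, right)]


def frame_sequential(left_frames: List[Image], right_frames: List[Image]) -> List[Image]:
    """Alternate full-resolution left and right frames: L0, R0, L1, R1, ..."""
    out: List[Image] = []
    for l_frame, r_frame in zip(left_frames, right_frames):
        out.extend([l_frame, r_frame])
    return out
```

The two half-resolution formats return a frame with the same dimensions as one input view, which is why each loses half of its vertical or horizontal resolution. Frame sequential keeps full resolution but doubles the number of frames.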

- the parallax vector detection unit 113 detects, for example, a parallax vector for each pixel constituting the image based on the left eye image data and the right eye image data.

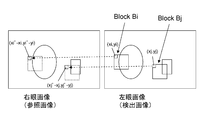

- a detection example of a disparity vector will be described.

- the parallax vector of the right eye image with respect to the left eye image will be described.

- the left eye image is a detected image

- the right eye image is a reference image.

- the disparity vectors at the positions (xi, yi) and (xj, yj) are detected.

- a case where a disparity vector at the position of (xi, yi) is detected will be described as an example.

- for example, a 4×4, 8×8, or 16×16 pixel block (parallax detection block) Bi is set in the left eye image with the pixel at the position (xi, yi) at its upper left. Then, a pixel block matching the pixel block Bi is searched for in the right eye image.

- in this case, a search range centered on the position (xi, yi) is set in the right eye image. Each pixel in the search range is taken in turn as the pixel of interest, and comparison blocks of, for example, 4×4, 8×8, or 16×16 pixels are sequentially set, matching the size of the pixel block Bi described above.

- between the pixel block Bi and each sequentially set comparison block, the sum of the absolute differences of corresponding pixels is obtained.

- when the pixel value of the pixel block Bi is L(x, y) and the pixel value of the comparison block is R(x, y), the sum of absolute differences between the pixel block Bi and a given comparison block is represented by $\sum \lvert L(x,y) - R(x,y) \rvert$.

- when the search range set in the right eye image contains n pixels, n sums S1 to Sn are finally obtained, and the minimum sum Smin is selected. The position (xi′, yi′) of the upper left pixel is then obtained from the comparison block that gave Smin. Thus, the disparity vector at the position (xi, yi) is detected as (xi′ − xi, yi′ − yi).

- for the position (xj, yj), a 4×4, 8×8, or 16×16 pixel block Bj with the pixel at (xj, yj) at its upper left is set in the left eye image, and the disparity vector is detected by the same process.
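The sum-of-absolute-differences block matching described above can be sketched in Python as follows. This is a minimal illustration, not the patent's implementation. It assumes both images are grayscale lists of rows with the same dimensions, an exhaustive search of ±`search` pixels around (xi, yi), and that ties go to the first candidate in scan order. The names `sad` and `detect_disparity` are hypothetical.

```python
from typing import List, Tuple

Image = List[List[int]]  # hypothetical representation: rows of pixel values


def sad(left: Image, right: Image, lx: int, ly: int, rx: int, ry: int, size: int) -> int:
    """Sum of |L(x, y) - R(x, y)| over a size x size block at (lx, ly) and (rx, ry)."""
    return sum(
        abs(left[ly + dy][lx + dx] - right[ry + dy][rx + dx])
        for dy in range(size)
        for dx in range(size)
    )


def detect_disparity(left: Image, right: Image, x: int, y: int,
                     size: int = 4, search: int = 8) -> Tuple[int, int]:
    """Return (x' - x, y' - y) for the comparison block with the minimum sum Smin.

    Block Bi has its upper-left pixel at (x, y) in the left eye image. Candidate
    upper-left pixels (x', y') lie within +/- search of (x, y) and keep the
    comparison block inside the right eye image.
    """
    h, w = len(right), len(right[0])
    best = None  # (sum, x', y')
    for ry in range(max(0, y - search), min(h - size, y + search) + 1):
        for rx in range(max(0, x - search), min(w - size, x + search) + 1):
            s = sad(left, right, x, y, rx, ry, size)
            if best is None or s < best[0]:
                best = (s, rx, ry)
    if best is None:
        raise ValueError("block does not fit inside the image")
    _, bx, by = best
    return bx - x, by - y
```

For example, if a textured 4×4 patch sits at (4, 4) in the left eye image and at (7, 4) in the right eye image, `detect_disparity(left, right, 4, 4)` returns `(3, 0)`. The exhaustive search is simple but costs O(search² · size²) per block.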

- the microphone 114 detects sound corresponding to the images photographed by the cameras 111L and 111R, and obtains sound data.

- the data extraction unit 115 is used in a state where the data recording medium 115a is detachably mounted.

- the data recording medium 115a is a disk-shaped recording medium, a semiconductor memory, or the like.

- audio data, superimposition information data, and parallax vectors are recorded in association with stereoscopic image data including left-eye image data and right-eye image data.

- the data extraction unit 115 extracts and outputs stereoscopic image data, audio data, and disparity vectors from the data recording medium 115a.

- the data extraction unit 115 constitutes an image data output unit.

- the stereoscopic image data recorded on the data recording medium 115a corresponds to the stereoscopic image data obtained by the video framing unit 112.

- the audio data recorded on the data recording medium 115a corresponds to the audio data obtained by the microphone 114.

- the disparity vector recorded on the data recording medium 115a corresponds to the disparity vector detected by the disparity vector detection unit 113.

- the changeover switch 116 selectively extracts the stereoscopic image data obtained by the video framing unit 112 or the stereoscopic image data output from the data extraction unit 115.

- in the live mode, the changeover switch 116 is connected to the a side and takes out the stereoscopic image data obtained by the video framing unit 112. In the playback mode, it is connected to the b side and takes out the stereoscopic image data output from the data extraction unit 115.

- the changeover switch 117 selectively extracts the disparity vector detected by the disparity vector detection unit 113 or the disparity vector output from the data extraction unit 115.

- in the live mode, the changeover switch 117 is connected to the a side and takes out the disparity vector detected by the disparity vector detection unit 113. In the playback mode, it is connected to the b side and takes out the disparity vector output from the data extraction unit 115.

- the changeover switch 118 selectively takes out the audio data obtained by the microphone 114 or the audio data output from the data extraction unit 115.

- in the live mode, the changeover switch 118 is connected to the a side and takes out the audio data obtained by the microphone 114. In the playback mode, it is connected to the b side and takes out the audio data output from the data extraction unit 115.

- the video encoder 119 performs encoding such as MPEG4-AVC, MPEG2, or VC-1 on the stereoscopic image data extracted by the changeover switch 116 to generate a video data stream (video elementary stream).

- the audio encoder 120 performs encoding such as AC3 or AAC on the audio data extracted by the changeover switch 118 to generate an audio data stream (audio elementary stream).

- the subtitle generation unit 121 generates subtitle data that is DVB (Digital Video Broadcasting) subtitle data. This subtitle data is subtitle data for a two-dimensional image.

- the subtitle generation unit 121 constitutes a superimposition information data output unit.

- the disparity information creating unit 122 performs a downsizing process on the disparity vector (horizontal disparity vector) for each pixel extracted by the changeover switch 117. From this it creates the disparity information (horizontal disparity vector) to be applied to the subtitle.

- the parallax information creation unit 122 constitutes a parallax information output unit. Note that the disparity information applied to the subtitle can be attached in units of pages, regions, or objects. The disparity information does not necessarily have to be generated by the disparity information creating unit 122, and a configuration in which the disparity information is separately supplied from the outside is also possible.

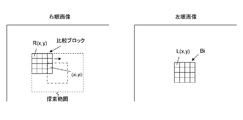

- FIG. 7 shows an example of the downsizing process performed by the parallax information creating unit 122.

- the disparity information creating unit 122 obtains a disparity vector for each block using a disparity vector for each pixel (pixel) as illustrated in FIG.

- a block corresponds to an upper layer of pixels located at the lowest layer, and is configured by dividing an image (picture) region into a predetermined size in the horizontal direction and the vertical direction.

- the disparity vector of each block is obtained, for example, by selecting the disparity vector having the largest value from the disparity vectors of all the pixels (pixels) existing in the block.

- the disparity information creating unit 122 obtains a disparity vector for each group (Group Of Block) using the disparity vector for each block as illustrated in FIG.

- a group is an upper layer of a block, and is obtained by grouping a plurality of adjacent blocks together.

- each group is composed of four blocks bounded by a broken line frame.

- the disparity vector of each group is obtained, for example, by selecting the disparity vector having the largest value from the disparity vectors of all blocks in the group.

- the disparity information creating unit 122 obtains a disparity vector for each partition (Partition) using the disparity vector for each group as illustrated in FIG.

- the partition is an upper layer of the group and is obtained by grouping a plurality of adjacent groups together.

- each partition is configured by two groups bounded by a broken line frame.

- the disparity vector of each partition is obtained, for example, by selecting the disparity vector having the largest value from the disparity vectors of all groups in the partition.

- the disparity information creating unit 122 obtains a disparity vector of the entire picture (entire image) located in the highest layer using the disparity vector for each partition.

- the entire picture includes four partitions that are bounded by a broken line frame. Then, the disparity vector for the entire picture is obtained, for example, by selecting the disparity vector having the largest value from the disparity vectors for all partitions included in the entire picture.

- in this way, the disparity information creating unit 122 performs the downsizing process on the disparity vector for each pixel located in the lowest layer. This yields the disparity vector of each area in each layer: block, group, partition, and entire picture.

- disparity vectors of four layers of blocks, groups, partitions, and entire pictures are obtained in addition to the pixel (pixel) layer.

- the number of layers, the way areas are divided in each layer, and the number of areas are not limited to this example.
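The downsizing process amounts to repeatedly merging tiles of a grid and keeping the largest disparity in each tile. The following Python sketch is a minimal illustration under stated assumptions. The tile shapes are hypothetical choices that reproduce the counts in the text: 2×2 pixels per block, 2×2 blocks per group, two side-by-side groups per partition, and 2×2 partitions per picture. The function name `downsize` is also hypothetical.

```python
from typing import List

Grid = List[List[int]]  # disparity values, one per cell


def downsize(grid: Grid, bh: int, bw: int) -> Grid:
    """Merge each bh x bw tile into one cell holding the tile's largest disparity.

    Assumes the grid height and width are multiples of bh and bw.
    """
    rows, cols = len(grid), len(grid[0])
    return [
        [max(grid[r + i][c + j] for i in range(bh) for j in range(bw))
         for c in range(0, cols, bw)]
        for r in range(0, rows, bh)
    ]


# Hypothetical 8 x 16 per-pixel disparity map, walked up the hierarchy.
pixels = [[r * 16 + c for c in range(16)] for r in range(8)]
blocks = downsize(pixels, 2, 2)      # 4 x 8 blocks
groups = downsize(blocks, 2, 2)      # 2 x 4 groups (four blocks each)
partitions = downsize(groups, 1, 2)  # 2 x 2 partitions (two groups each)
picture = downsize(partitions, 2, 2) # 1 x 1: the entire picture
```

Because the maximum is taken at every step, the value for the entire picture equals the largest per-pixel disparity, here 127. Choosing the largest value follows the "for example" wording in the text; other reductions could be substituted.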

- the subtitle processing unit 123 converts the subtitle data generated by the subtitle generation unit 121 into stereoscopic image (three-dimensional image) subtitle data corresponding to the transmission format of the stereoscopic image data extracted by the changeover switch 116.

- the subtitle processing unit 123 forms a superimposition information data processing unit, and the converted subtitle data for stereoscopic image data forms superimposition information data for transmission.

- the stereoscopic image subtitle data includes left eye subtitle data and right eye subtitle data.

- the left-eye subtitle data corresponds to the left-eye image data included in the stereoscopic image data described above. On the receiving side, it is used to generate display data of the left-eye subtitle to be superimposed on the left-eye image data included in the stereoscopic image data.

- the right-eye subtitle data corresponds to the right-eye image data included in the stereoscopic image data described above. On the receiving side, it is used to generate display data of the right-eye subtitle to be superimposed on the right-eye image data included in the stereoscopic image data.

- the subtitle processing unit 123 can give parallax between the left eye subtitle and the right eye subtitle by shifting at least one of them. The shift is based on the disparity information (horizontal disparity vector) to be applied to the subtitle, supplied from the disparity information creating unit 122.

- in this case, when the subtitle (caption) is displayed, the consistency of perspective between the subtitle and each object in the image can be maintained in an optimum state without performing parallax processing on the receiving side.

- the subtitle processing unit 123 includes a display control information generation unit 124.

- the display control information generation unit 124 generates display control information related to the subregion.

- the sub-region is an area defined only within the region.

- This subregion includes a left eye subregion (left eye SR) and a right eye subregion (right eye SR).

- hereinafter, the left eye subregion is referred to as the left eye SR, and the right eye subregion is referred to as the right eye SR.

- the left-eye subregion is an area set corresponding to the display position of the left-eye subtitle in the region that is the display area of the transmission superimposition information data.

- the right-eye subregion is an area set corresponding to the display position of the right-eye subtitle in the region that is the display area of the transmission superimposition information data.

- the left eye subregion constitutes a first display area

- the right eye subregion constitutes a second display area.

- the regions of the left eye SR and the right eye SR are set for each subtitle data generated by the subtitle generation unit 121, for example, based on a user operation or automatically. In this case, the regions of the left eye SR and the right eye SR are set so that the left eye subtitle in the left eye SR and the right eye subtitle in the right eye SR correspond to each other.

- the display control information includes area information for the left eye SR and area information for the right eye SR.

- the display control information includes information on a target frame that displays a left-eye subtitle included in the left eye SR and information on a target frame that displays a right-eye subtitle included in the right eye SR.

- the information of the target frame displaying the left eye subtitle included in the left eye SR indicates the frame of the left eye image

- the information of the target frame displaying the right eye subtitle included in the right eye SR indicates the frame of the right eye image.

- the display control information also includes disparity information (disparity) for shifting the display position of the left eye subtitle included in the left eye SR. It likewise includes disparity information for shifting the display position of the right eye subtitle included in the right eye SR. Together, these pieces of disparity information give parallax between the left eye subtitle in the left eye SR and the right eye subtitle in the right eye SR.

- the display control information generation unit 124 obtains the shift-adjustment disparity information included in the display control information described above. It does so based on, for example, the disparity information (horizontal disparity vector) to be applied to the subtitle created by the disparity information creating unit 122.

- the disparity information “Disparity1” of the left eye SR and the disparity information “Disparity2” of the right eye SR are determined so that they have the same absolute value. Their difference equals the value corresponding to the disparity information (Disparity) to be applied to the subtitle.

- for example, when the transmission format of the stereoscopic image data is the side-by-side format, the value corresponding to the disparity information (Disparity) is “Disparity / 2”. When the transmission format is the top-and-bottom format, the value corresponding to the disparity information (Disparity) is “Disparity”.
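The relationship between the subtitle disparity and the per-subregion values can be sketched in Python. This is a hypothetical sketch, not the SCS syntax. The `SubregionControl` fields only mirror the kinds of display control information listed in the text: area, target frame, and disparity. The sign convention, with the left eye SR shifted by −value/2 and the right eye SR by +value/2, is an assumption; the text only states that the magnitudes are equal and the difference is the format-dependent value.

```python
from dataclasses import dataclass
from typing import Tuple


@dataclass
class SubregionControl:
    """Hypothetical container for one subregion's display control information."""
    x: int              # area information: left edge of the SR inside the region
    y: int              # area information: top edge of the SR inside the region
    width: int
    height: int
    target_frame: str   # "left" or "right": the eye image the SR is displayed on
    disparity: float    # horizontal shift applied to the subtitle in this SR


def split_disparity(disparity: float, transmission_format: str) -> Tuple[float, float]:
    """Return (Disparity1, Disparity2) for the left eye SR and the right eye SR."""
    if transmission_format == "side_by_side":
        value = disparity / 2   # horizontal resolution is halved in side-by-side
    elif transmission_format == "top_and_bottom":
        value = disparity       # horizontal resolution is unchanged
    else:
        raise ValueError(f"unsupported format: {transmission_format}")
    # Assumed sign convention: equal magnitudes, opposite signs, difference == value.
    return -value / 2, value / 2


def build_controls(left_area, right_area, disparity, transmission_format):
    """Build control info for the left eye SR and right eye SR from (x, y, w, h) tuples."""
    d1, d2 = split_disparity(disparity, transmission_format)
    return (SubregionControl(*left_area, target_frame="left", disparity=d1),
            SubregionControl(*right_area, target_frame="right", disparity=d2))
```

For instance, a subtitle disparity of 16 pixels gives (−4.0, 4.0) in side-by-side and (−8.0, 8.0) in top-and-bottom.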

- the display control information generation unit 124 generates parallax information included in the display control information so as to have sub-pixel accuracy.

- the parallax information includes an integer part and a decimal part as shown in FIG.

- a subpixel is a subdivision of a pixel (integer pixel) constituting a digital image. Because the disparity information has sub-pixel accuracy, the receiving side can shift the display positions of the left-eye subtitle in the left eye SR and the right-eye subtitle in the right eye SR with sub-pixel accuracy.

- FIG. 8B schematically shows an example of shift adjustment with sub-pixel accuracy. In it, the display position of the subtitle in the region partition is shifted from the solid-line frame position to the broken-line frame position.
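A disparity value with an integer part and a fractional part, and a sub-pixel shift of a line of subtitle pixels, can be sketched in Python. This is an illustration under stated assumptions. The 4-bit fractional precision (1/16 pixel) in `FRACTION_BITS` is hypothetical, and the text does not specify how the receiver resamples. Linear interpolation is used here only as one plausible choice. All names are hypothetical.

```python
import math
from typing import List, Tuple

FRACTION_BITS = 4  # hypothetical precision: 1/16 pixel


def to_fixed(disparity: float) -> Tuple[int, int]:
    """Split a disparity into an integer part and a FRACTION_BITS-bit fractional part."""
    scaled = round(disparity * (1 << FRACTION_BITS))
    integer_part = scaled >> FRACTION_BITS             # arithmetic shift floors negatives
    fraction_part = scaled & ((1 << FRACTION_BITS) - 1)
    return integer_part, fraction_part


def from_fixed(integer_part: int, fraction_part: int) -> float:
    """Recombine the integer and fractional parts into a disparity in pixels."""
    return integer_part + fraction_part / (1 << FRACTION_BITS)


def shift_row(row: List[float], shift: float) -> List[float]:
    """Shift a row right by a possibly fractional amount using linear interpolation.

    Samples that fall outside the row are treated as 0 (transparent).
    """
    n = len(row)

    def sample(i: int) -> float:
        return row[i] if 0 <= i < n else 0.0

    out = []
    for x in range(n):
        src = x - shift           # source position that lands on output pixel x
        i0 = math.floor(src)
        t = src - i0              # weight of the right-hand neighbour
        out.append((1 - t) * sample(i0) + t * sample(i0 + 1))
    return out
```

For example, `to_fixed(2.25)` gives `(2, 4)`. Shifting `[0, 0, 16, 0, 0]` by half a pixel spreads the bright pixel evenly over two positions, giving `[0, 0, 8, 8, 0]`.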

- the subtitle data consists of segments such as DDS (display definition segment), PCS (page composition segment), RCS (region composition segment), CDS (CLUT definition segment), and ODS (object data segment).

- a segment of SCS (Subregion composition segment) is newly defined. Then, the display control information generated by the display control information generation unit 124 as described above is inserted into this SCS segment. Details of the processing of the subtitle processing unit 123 will be described later.

- the subtitle encoder 125 generates a subtitle data stream (subtitle elementary stream) including the subtitle data for stereoscopic images output from the subtitle processing unit 123 and display control information.

- the multiplexer 126 multiplexes the data streams from the video encoder 119, the audio encoder 120, and the subtitle encoder 125, and obtains a multiplexed data stream as bit stream data (transport stream) BSD.

- the operation of the transmission data generation unit 110 shown in FIG. 2 will be briefly described.

- the camera 111L captures a left eye image.

- the left eye image data for stereoscopic image display obtained by the camera 111L is supplied to the video framing unit 112.

- the camera 111R captures a right eye image.

- Right-eye image data for stereoscopic image display obtained by the camera 111R is supplied to the video framing unit 112.

- in the video framing unit 112, the left-eye image data and the right-eye image data are processed into a state corresponding to the transmission format, and stereoscopic image data is obtained (see FIGS. 4A to 4C).

- the stereoscopic image data obtained by the video framing unit 112 is supplied to the fixed terminal on the a side of the changeover switch 116.

- the stereoscopic image data obtained by the data extraction unit 115 is supplied to the fixed terminal on the b side of the changeover switch 116.

- in the live mode, the changeover switch 116 is connected to the a side, and the stereoscopic image data obtained by the video framing unit 112 is taken out from the changeover switch 116.

- in the playback mode, the changeover switch 116 is connected to the b side, and the stereoscopic image data output from the data extraction unit 115 is taken out from the changeover switch 116.

- the stereoscopic image data extracted by the changeover switch 116 is supplied to the video encoder 119.

- in the video encoder 119, the stereoscopic image data is encoded by MPEG4-AVC, MPEG2, VC-1, or the like, and a video data stream including the encoded video data is generated. This video data stream is supplied to the multiplexer 126.

- the audio data obtained by the microphone 114 is supplied to the fixed terminal on the a side of the changeover switch 118. Also, the audio data obtained by the data extraction unit 115 is supplied to the fixed terminal on the b side of the changeover switch 118.

- in the live mode, the changeover switch 118 is connected to the a side, and the audio data obtained by the microphone 114 is taken out from the changeover switch 118.

- in the playback mode, the changeover switch 118 is connected to the b side, and the audio data output from the data extraction unit 115 is taken out from the changeover switch 118.

- the audio data extracted by the changeover switch 118 is supplied to the audio encoder 120.

- the audio encoder 120 performs encoding such as MPEG-2 Audio AAC or MPEG-4 AAC on the audio data, and generates an audio data stream including the encoded audio data. This audio data stream is supplied to the multiplexer 126.

- Left eye image data and right eye image data obtained by the cameras 111L and 111R are supplied to the parallax vector detection unit 113 through the video framing unit 112.

- the disparity vector detection unit 113 detects disparity vectors for each pixel (pixel) based on the left eye image data and the right eye image data. This disparity vector is supplied to the fixed terminal on the a side of the changeover switch 117. Further, the disparity vector for each pixel (pixel) output from the data extraction unit 115 is supplied to a fixed terminal on the b side of the changeover switch 117.

- in the live mode, the changeover switch 117 is connected to the a side, and the disparity vector for each pixel obtained by the disparity vector detection unit 113 is taken out from the changeover switch 117.

- in the playback mode, the changeover switch 117 is connected to the b side, and the disparity vector for each pixel output from the data extraction unit 115 is taken out from the changeover switch 117.

- the subtitle generation unit 121 generates subtitle data (for two-dimensional images) that is DVB subtitle data. This subtitle data is supplied to the parallax information creation unit 122 and the subtitle processing unit 123.

- the disparity vector for each pixel (pixel) extracted by the changeover switch 117 is supplied to the disparity information creating unit 122.

- in the subtitle processing unit 123, the subtitle data for two-dimensional images generated by the subtitle generation unit 121 is converted into stereoscopic image subtitle data. The conversion follows the transmission format of the stereoscopic image data extracted by the changeover switch 116 described above.

- the stereoscopic image subtitle data includes left-eye subtitle data and right-eye subtitle data.

- the subtitle processing unit 123 may give parallax between the left-eye subtitle and the right-eye subtitle by shifting at least one of them. The shift is based on the disparity information to be applied to the subtitle, supplied from the disparity information creating unit 122.

- the display control information generation unit 124 of the subtitle processing unit 123 generates display control information (region information, target frame information, parallax information) related to the subregion (Subregion).

- the subregion includes the left eye subregion (left eye SR) and the right eye subregion (right eye SR). Therefore, area information, target frame information, and parallax information of the left eye SR and right eye SR are generated as display control information.

- the left eye SR is set, for example based on a user operation or automatically, in the region that is the display area of the superimposition information data for transmission, at a position corresponding to the display position of the left eye subtitle.

- the right eye SR is set, for example based on a user operation or automatically, in the region that is the display area of the superimposition information data for transmission, at a position corresponding to the display position of the right eye subtitle.

- the stereoscopic image subtitle data and display control information obtained by the subtitle processing unit 123 are supplied to the subtitle encoder 125.

- the subtitle encoder 125 generates a subtitle data stream including stereoscopic image subtitle data and display control information.

- the subtitle data stream includes a newly defined SCS segment including display control information, as well as segments such as DDS, PCS, RCS, CDS, and ODS into which stereoscopic image subtitle data is inserted.

- each data stream from the video encoder 119, the audio encoder 120, and the subtitle encoder 125 is supplied to the multiplexer 126.

- each data stream is packetized and multiplexed to obtain a multiplexed data stream as bit stream data (transport stream) BSD.

- FIG. 9 shows a configuration example of a transport stream (bit stream data).

- This transport stream includes PES packets obtained by packetizing each elementary stream.

- in this configuration example, the transport stream includes a PES packet “Video PES” of the video elementary stream, a PES packet “Audio PES” of the audio elementary stream, and a PES packet “Subtitle PES” of the subtitle elementary stream.

- the subtitle elementary stream includes stereoscopic image subtitle data and display control information.

- This stream includes SCS segments including newly defined display control information, as well as conventionally known segments such as DDS, PCS, RCS, CDS, and ODS.

- FIG. 10 shows the structure of PCS (page_composition_segment).

- the segment type of this PCS is “0x10” as shown in FIG. “Region_horizontal_address” and “region_vertical_address” indicate the start position of the region.

- the structure of the other segments, such as DDS, RCS, and ODS, is not shown.

- the DDS segment type is “0x14”

- the RCS segment type is “0x11”

- the CDS segment type is “0x12”

- the ODS segment type is “0x13”.

- the SCS segment type is “0x49”. The detailed structure of the SCS segment will be described later.
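To show how a receiver might tell these segments apart, here is a minimal Python parsing sketch. The segment types 0x10 to 0x14 and 0x49 come from the text. The EDS value 0x80 (end of display set segment) and the 6-byte segment header layout come from the DVB subtitling specification, ETSI EN 300 743. That header is sync_byte 0x0F, segment_type, a 16-bit page_id, and a 16-bit segment_length. The sketch assumes it is handed only the run of segments. It does not handle the PES-level data_identifier, subtitle_stream_id, or end marker. The function name is hypothetical.

```python
import struct
from typing import Dict, List, Tuple

# Segment types from the text (0x49 = newly defined SCS); 0x80 (EDS) per DVB.
SEGMENT_NAMES: Dict[int, str] = {
    0x10: "PCS", 0x11: "RCS", 0x12: "CDS", 0x13: "ODS",
    0x14: "DDS", 0x49: "SCS", 0x80: "EDS",
}
SYNC_BYTE = 0x0F


def parse_segments(data: bytes) -> List[Tuple[str, int, bytes]]:
    """Split a run of subtitling segments into (name, page_id, payload) tuples.

    Parsing stops at the first byte that is not a sync byte (for example 0xFF).
    """
    segments = []
    pos = 0
    while pos + 6 <= len(data) and data[pos] == SYNC_BYTE:
        _, seg_type, page_id, length = struct.unpack_from(">BBHH", data, pos)
        payload = data[pos + 6 : pos + 6 + length]
        if len(payload) < length:
            raise ValueError("truncated segment")
        name = SEGMENT_NAMES.get(seg_type, f"unknown(0x{seg_type:02X})")
        segments.append((name, page_id, payload))
        pos += 6 + length
    return segments
```

A decoder that does not know the SCS type can still skip it, because the segment_length field tells it how many bytes to jump over.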

- the transport stream also includes a PMT (Program Map Table) as PSI (Program Specific Information).

- This PSI is information describing to which program each elementary stream included in the transport stream belongs.

- the transport stream also includes an EIT (Event Information Table) as SI (Service Information) for managing each event.

- the PMT has a program descriptor (ProgramDescriptor) that describes information related to the entire program.

- the PMT includes an elementary loop having information related to each elementary stream. In this configuration example, there are a video elementary loop, an audio elementary loop, and a subtitle elementary loop.

- information such as a packet identifier (PID) is arranged for each stream, and a descriptor (descriptor) describing information related to the elementary stream is also arranged, although not shown.

- the component descriptor (Component_Descriptor) is inserted under the EIT.

- when the subtitle data stream includes the subtitle data for stereoscopic images, the “stream_content” field of the component descriptor (“component_descriptor”), which indicates the delivery content, indicates a subtitle.

- the subtitle processing unit 123 converts the subtitle data for two-dimensional images into subtitle data for stereoscopic images.

- the subtitle processing unit 123 generates display control information (including region information of the left eye SR and right eye SR, target frame information, and parallax information) in the display control information generation unit 124.

- “Case A” or “Case B” can be considered as shown in FIG.

- in “Case A”, a series of segments related to subtitle display (DDS, PCS, RCS, CDS, ODS, SCS, and EDS) is created before the start of the predetermined number of frame periods in which the subtitles are displayed. The segments are given time information (PTS) and transmitted together.

- PTS: Presentation Time Stamp

- in “Case B”, a series of segments related to subtitle display (DDS, PCS, RCS, CDS, ODS, and SCS) is created before the start of the predetermined number of frame periods in which the subtitles are displayed (the subtitle display period). The segments are given time information (PTS) and transmitted together.

- after that, SCS segments with updated disparity information are sequentially created, given time information (PTS), and transmitted.

- finally, an EDS segment is also created, given time information (PTS), and transmitted.
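The two transmission schedules can be compared with a small Python sketch. It reads the first schedule as Case A and the second as Case B. Assigning PTS values from a start time and a fixed frame duration is a simplifying assumption; 3000 ticks of the 90 kHz clock corresponds to 30 frames per second. The function names are hypothetical.

```python
from typing import List, Tuple

Schedule = List[Tuple[int, str]]  # (PTS, segment name)


def case_a(start_pts: int) -> Schedule:
    """Case A: the whole display set, including SCS and EDS, is sent together."""
    return [(start_pts, s) for s in ("DDS", "PCS", "RCS", "CDS", "ODS", "SCS", "EDS")]


def case_b(start_pts: int, frame_duration: int, num_updates: int, end_pts: int) -> Schedule:
    """Case B: initial set without EDS, then updated SCS segments, then a closing EDS."""
    schedule = [(start_pts, s) for s in ("DDS", "PCS", "RCS", "CDS", "ODS", "SCS")]
    for k in range(1, num_updates + 1):
        # Each update carries new disparity information for a later frame.
        schedule.append((start_pts + k * frame_duration, "SCS"))
    schedule.append((end_pts, "EDS"))
    return schedule
```

In Case A the disparity stays fixed for the whole display period. Case B lets the disparity follow the video frame by frame, at the cost of sending additional SCS segments.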

- this display on/off control works as follows. When display is performed based on the disparity information in the SCS segment of a given frame, that display is turned on (validated). At the same time, the display based on the disparity information in the SCS segment of the previous frame is turned off (invalidated).

- the SCS segment includes command information for controlling on / off of display (details will be described later).
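The on/off control can be modelled as a small state update applied once per frame. The following Python sketch is a hypothetical illustration; the actual command syntax inside the SCS is not shown here. Each SCS is represented as a list of (command, display position, disparity) entries using the names from the example with FIGS. 14 and 15. The result is the set of display positions that are currently on (valid).

```python
from typing import Dict, List, Optional, Tuple

# Hypothetical SCS entry: ("ON" or "OFF", display position, disparity name or None).
ScsEntry = Tuple[str, str, Optional[str]]


def apply_scs(active: Dict[str, str], entries: List[ScsEntry]) -> Dict[str, str]:
    """Return the display positions that are on after applying one frame's SCS.

    The input mapping is not modified; a new mapping is returned.
    """
    result = dict(active)
    for command, position, disparity in entries:
        if command == "OFF":
            result.pop(position, None)   # Display_OFF: invalidate the previous display
        elif command == "ON":
            result[position] = disparity # Display_ON: validate the new display
        else:
            raise ValueError(f"unknown command: {command}")
    return result


t0 = apply_scs({}, [("ON", "SR0", "Disparity_0"), ("ON", "SR1", "Disparity_1")])
t1 = apply_scs(t0, [("OFF", "SR0", None), ("OFF", "SR1", None),
                    ("ON", "SR2", "Disparity_2"), ("ON", "SR3", "Disparity_3")])
```

After the T0 frame, positions SR0 and SR1 are shown. After the T1 frame, only SR2 and SR3 remain, so the subtitle never appears at both the old and the new disparity at once.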

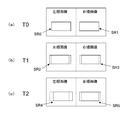

- An example of display on / off control on the receiving side will be described with reference to FIGS. 14 and 15.

- FIG. 14 shows an example of SCS segments sequentially transmitted to the receiving side.

- SCSs corresponding to the T0 frame, T1 frame, and T2 frame are sequentially sent.

- FIG. 15 shows a shift example of the display position of the left eye subtitle in the left eye SR and the right eye subtitle in the right eye SR by the SCS corresponding to each of the T0 frame, the T1 frame, and the T2 frame.

- the SCS of the T0 frame includes disparity information (Disparity_0) for obtaining the display position SR0 of the left eye subtitle in the left eye SR. It also includes command information (Display_ON) for turning on (validating) the display at the display position SR0.

- it further includes disparity information (Disparity_1) for obtaining the display position SR1 of the right eye subtitle in the right eye SR, and command information (Display_ON) for turning on (validating) the display at the display position SR1.

- the left eye subtitle in the left eye SR is displayed (superposed) at the display position SR0 on the left eye image.

- the right eye subtitle in the right eye SR is displayed (superposed) at the display position SR1 on the right eye image.

- The SCS of the T1 frame includes command information (Display_OFF) for turning off (invalidating) the display at display positions SR0 and SR1. It also includes disparity information (Disparity_2) for obtaining the display position SR2 of the subtitle in the left eye SR, and command information (Display_ON) for turning on (validating) the display at display position SR2. Further, it includes disparity information (Disparity_3) for obtaining the display position SR3 of the subtitle in the right eye SR, and command information (Display_ON) for turning on (validating) the display at display position SR3.

- Accordingly, the display at display position SR0 on the left eye image is turned off (invalidated), and the display at display position SR1 on the right eye image is turned off (invalidated).

- The left eye subtitle in the left eye SR is displayed (superimposed) at display position SR2 on the left eye image.

- The right eye subtitle in the right eye SR is displayed (superimposed) at display position SR3 on the right eye image.

- The SCS of the T2 frame includes command information (Display_OFF) for turning off (invalidating) the display at display positions SR2 and SR3.

- It also includes disparity information (Disparity_4) for obtaining the display position SR4 of the subtitle in the left eye SR, and command information (Display_ON) for turning on (validating) the display at display position SR4.

- It further includes disparity information (Disparity_5) for obtaining the display position SR5 of the subtitle in the right eye SR, and command information (Display_ON) for turning on (validating) the display at display position SR5.

- Accordingly, the display at display position SR2 on the left eye image is turned off (invalidated), and the display at display position SR3 on the right eye image is turned off (invalidated).

- The left eye subtitle in the left eye SR is displayed (superimposed) at display position SR4 on the left eye image.

- The right eye subtitle in the right eye SR is displayed (superimposed) at display position SR5 on the right eye image.

- FIG. 16 shows an example of how the left eye subtitle and right eye subtitle are displayed on the receiving side when the SCS segment does not include command information for controlling display on/off.

- In this case, the subtitle in the left eye SR remains displayed (superimposed) at all of display positions SR0, SR2, and SR4.

- Likewise, the subtitle in the right eye SR remains displayed (superimposed) at all of display positions SR1, SR3, and SR5, overlapping one another.

- Consequently, the display positions of the left eye subtitle in the left eye SR and the right eye subtitle in the right eye SR are not changed dynamically as intended.
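The contrast between FIGS. 14 and 15 and FIG. 16 can be modeled as a set of active display positions on the receiver. The following is a minimal sketch and not the receiver's actual implementation. The function name `displayed_positions` and the tuple representation of SCS entries are hypothetical. The labels "SR0" to "SR5" stand in for the positions computed from Disparity_0 to Disparity_5.

```python
from typing import List, Optional, Tuple

# One SCS entry: (display position, command). The command is "Display_ON",
# "Display_OFF", or None when the SCS carries no on/off command information.
ScsEntry = Tuple[str, Optional[str]]


def displayed_positions(frames: List[List[ScsEntry]]) -> List[List[str]]:
    """Return, for each frame, the display positions where a subtitle is shown."""
    active = set()
    history = []
    for entries in frames:
        for position, command in entries:
            if command == "Display_OFF":
                active.discard(position)  # invalidate the previous frame's display
            else:
                # "Display_ON", or no command at all: the subtitle is superimposed
                # at this position and stays there until explicitly turned off.
                active.add(position)
        history.append(sorted(active))
    return history


with_commands = [
    [("SR0", "Display_ON"), ("SR1", "Display_ON")],                  # T0
    [("SR0", "Display_OFF"), ("SR1", "Display_OFF"),
     ("SR2", "Display_ON"), ("SR3", "Display_ON")],                   # T1
    [("SR2", "Display_OFF"), ("SR3", "Display_OFF"),
     ("SR4", "Display_ON"), ("SR5", "Display_ON")],                   # T2
]
without_commands = [
    [("SR0", None), ("SR1", None)],                                   # T0
    [("SR2", None), ("SR3", None)],                                   # T1
    [("SR4", None), ("SR5", None)],                                   # T2
]

print(displayed_positions(with_commands)[-1])     # ['SR4', 'SR5']
print(displayed_positions(without_commands)[-1])  # ['SR0', 'SR1', 'SR2', 'SR3', 'SR4', 'SR5']
```

Without Display_OFF commands, the earlier positions are never removed. This reproduces the overlapping display of FIG. 16.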

- FIG. 17 conceptually shows a method for creating stereoscopic image subtitle data when the transmission format of stereoscopic image data is the side-by-side format.

- FIG. 17A shows a region based on subtitle data for a two-dimensional image. In this example, the region includes three objects.

- The subtitle processing unit 123 converts the size of the region based on the above-described subtitle data for a two-dimensional image into a size suitable for the side-by-side method, as illustrated in FIG., and generates bitmap data of that size.

- The subtitle processing unit 123 uses the size-converted bitmap data as a component of the region in the stereoscopic image subtitle data. That is, the size-converted bitmap data becomes both the object corresponding to the left eye subtitle in the region and the object corresponding to the right eye subtitle in the region.

- In this way, the subtitle processing unit 123 converts the subtitle data for the two-dimensional image into subtitle data for the stereoscopic image. It then creates segments such as DDS, PCS, RCS, CDS, and ODS corresponding to the stereoscopic image subtitle data.
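The side-by-side size conversion can be sketched under these assumptions. Each view occupies half the frame width, and the 2D bitmap is halved horizontally by keeping every other column. That is simple decimation, not necessarily the filter used by the subtitle processing unit 123. The same scaled bitmap is then placed once in each half. The function names and the derivation of "object_position1" and "object_position2" from the 2D position are hypothetical.

```python
from typing import List, Tuple

Bitmap = List[List[int]]  # rows of pixel codes (hypothetical representation)


def halve_width(bitmap: Bitmap) -> Bitmap:
    """Scale horizontally by 1/2 by keeping every other column (simple decimation)."""
    return [row[::2] for row in bitmap]


def side_by_side_objects(bitmap: Bitmap, x: int, y: int,
                         frame_width: int) -> Tuple[Bitmap, Tuple[int, int], Tuple[int, int]]:
    """Return the scaled object plus hypothetical object_position1/object_position2."""
    scaled = halve_width(bitmap)
    half = frame_width // 2
    object_position1 = (x // 2, y)         # left eye image occupies the left half
    object_position2 = (x // 2 + half, y)  # right eye image occupies the right half
    return scaled, object_position1, object_position2


obj, pos1, pos2 = side_by_side_objects([[1, 2, 3, 4], [5, 6, 7, 8]], x=100, y=900, frame_width=1920)
print(obj, pos1, pos2)  # [[1, 3], [5, 7]] (50, 900) (1010, 900)
```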

- Based on a user operation or automatically, the subtitle processing unit 123 sets the left eye SR and the right eye SR on the area of the region in the stereoscopic image subtitle data.

- The left eye SR is set in an area including the object corresponding to the left eye subtitle.

- The right eye SR is set in an area including the object corresponding to the right eye subtitle.

- The subtitle processing unit 123 creates SCS segments including the region information, target frame information, and disparity information of the left eye SR and right eye SR set as described above. For example, it either creates one SCS that contains the region information, target frame information, and disparity information of the left eye SR and right eye SR in common, or creates separate SCS segments, each containing the region information, target frame information, and disparity information of one SR.
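The two SCS layouts (one common SCS versus one SCS per SR) can be illustrated with plain data classes. This is a sketch of the grouping only, not the SCS bitstream syntax. The class and field names, and the example coordinates and disparity values, are hypothetical.

```python
from dataclasses import dataclass, field
from typing import List


@dataclass
class SubregionControl:
    """Display control information for one subregion (field names are hypothetical)."""
    name: str            # e.g. "left eye SR" or "right eye SR"
    left: int            # region information: left edge of the subregion
    width: int           # region information: width of the subregion
    target_frame: int    # target frame information
    disparity: float     # disparity information, in pixels
    command: str = "Display_ON"  # display on/off command information


@dataclass
class SubregionCompositionSegment:
    subregions: List[SubregionControl] = field(default_factory=list)


def build_scs(left_sr: SubregionControl, right_sr: SubregionControl,
              common: bool) -> List[SubregionCompositionSegment]:
    """Create one common SCS for both SRs, or one SCS per SR."""
    if common:
        return [SubregionCompositionSegment([left_sr, right_sr])]
    return [SubregionCompositionSegment([left_sr]), SubregionCompositionSegment([right_sr])]


left = SubregionControl("left eye SR", left=50, width=400, target_frame=0, disparity=4.0)
right = SubregionControl("right eye SR", left=1010, width=400, target_frame=0, disparity=-4.0)
print(len(build_scs(left, right, common=True)))   # 1
print(len(build_scs(left, right, common=False)))  # 2
```

A common SCS sends both SRs' control information in one segment. Separate SCSs let each SR be updated independently.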

- FIG. 18 shows an example of a region and an object based on stereoscopic image subtitle data created as described above.

- The start position of the region is “Region_address”.

- FIG. 18 shows an example of the left eye SR and the right eye SR set as described above.

- FIG. 19 shows a creation example (example 1) of each segment of stereoscopic image subtitle data when the transmission format of stereoscopic image data is the side-by-side format.

- The start position “object_position1” of the object on the left eye image side and the start position “object_position2” of the object on the right eye image side are designated.

- Separate SCSs (subregion composition segments) are created for the left eye SR and the right eye SR.

- The start position (Subregion_Position1) of the left eye SR is specified.

- Target_Frame1: target frame information of the right eye SR

- Disparity2: disparity information of the right eye SR

- Command2: display on/off command information

- FIG. 21 conceptually shows a method of creating stereoscopic image subtitle data when the transmission format of stereoscopic image data is the top-and-bottom method.

- FIG. 21A shows a region based on subtitle data for a two-dimensional image. In this example, the region includes three objects.

- The subtitle processing unit 123 uses the size-converted bitmap data as a component of the region of the stereoscopic image subtitle data. That is, the size-converted bitmap data is used as the object of the region on the left eye image (left view) side and as the object of the region on the right eye image (right view) side.

- In this way, the subtitle processing unit 123 converts the subtitle data for the two-dimensional image into subtitle data for the stereoscopic image. It then creates segments such as PCS, RCS, CDS, and ODS corresponding to the stereoscopic image subtitle data.

- Based on a user operation or automatically, the subtitle processing unit 123 sets the left eye SR and the right eye SR on the area of the regions in the stereoscopic image subtitle data.

- The left eye SR is set to an area including the object in the region on the left eye image side.

- The right eye SR is set to an area including the object in the region on the right eye image side.
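For the top-and-bottom method, the analogous step halves the bitmap vertically. The left eye image occupies the top half of the frame and the right eye image occupies the bottom half. The sketch below assumes simple row decimation and derives hypothetical values for “Region_address1” and “Region_address2” from the 2D region position. These names and formulas are illustrative assumptions, not the patent's definitions.

```python
from typing import List, Tuple

Bitmap = List[List[int]]  # rows of pixel codes (hypothetical representation)


def halve_height(bitmap: Bitmap) -> Bitmap:
    """Scale vertically by 1/2 by keeping every other row (simple decimation)."""
    return bitmap[::2]


def top_and_bottom_regions(bitmap: Bitmap, x: int, y: int,
                           frame_height: int) -> Tuple[Bitmap, Tuple[int, int], Tuple[int, int]]:
    """Return the scaled object plus hypothetical Region_address1/Region_address2."""
    scaled = halve_height(bitmap)
    half = frame_height // 2
    region_address1 = (x, y // 2)         # left view region in the top half
    region_address2 = (x, y // 2 + half)  # right view region in the bottom half
    return scaled, region_address1, region_address2


obj, addr1, addr2 = top_and_bottom_regions([[1, 2], [3, 4], [5, 6], [7, 8]], x=200, y=900, frame_height=1080)
print(obj, addr1, addr2)  # [[1, 2], [5, 6]] (200, 450) (200, 990)
```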

- FIG. 22 shows an example of the regions and objects based on the stereoscopic image subtitle data created as described above.

- The start position of the region on the left eye image (left view) side is “Region_address1”.

- The start position of the region on the right eye image (right view) side is “Region_address2”.

- FIG. 22 shows an example of the left eye SR and the right eye SR set as described above.

- FIG. 23 shows a creation example (Example 1) of each segment of stereoscopic image subtitle data when the transmission format of stereoscopic image data is the top-and-bottom method.

- PCS: page composition segment

- Separate SCSs are created for the left eye SR and the right eye SR.

- The start position (Subregion_Position1) of the left eye SR is specified.

- Target_Frame1: target frame information of the right eye SR

- Disparity2: disparity information of the right eye SR

- Command2: display on/off command information

- FIG. 24 shows another example of creation of each segment of stereoscopic image subtitle data when the transmission format of stereoscopic image data is the top-and-bottom method (example 2).

- PCS, RCS, CDS, and ODS segments are created as in the example shown in FIG. 23 (example 1).

- A single SCS is created in common for the left eye SR and the right eye SR. That is, the information on both the left eye SR and the right eye SR is contained in the common SCS.

- FIG. 25 conceptually shows a method of creating stereoscopic image subtitle data when the transmission format of stereoscopic image data is a frame sequential method.

- FIG. 25A shows a region based on subtitle data for a two-dimensional image. In this example, the region includes one object.

- The 2D image subtitle data is used as-is as the stereoscopic image subtitle data.

- Likewise, the DDS, PCS, RCS, ODS, and other segments corresponding to the 2D image subtitle data are used unchanged as the segments corresponding to the stereoscopic image subtitle data.

- Automatically or based on a user operation, the subtitle processing unit 123 sets the left eye SR and the right eye SR on the area of the region in the stereoscopic image subtitle data.

- The left eye SR is set in an area including the object corresponding to the left eye subtitle.

- The right eye SR is set in an area including the object corresponding to the right eye subtitle.

- The subtitle processing unit 123 creates SCS segments including the region information, target frame information, and disparity information of the left eye SR and right eye SR set as described above. For example, it either creates one SCS that contains the region information, target frame information, and disparity information of the left eye SR and right eye SR in common, or creates separate SCS segments, each containing the region information, target frame information, and disparity information of one SR.

- FIG. 26 shows an example of the left eye SR and the right eye SR set as described above.

- FIG. 27 shows a creation example (example 1) of each segment of stereoscopic image subtitle data when the transmission format of stereoscopic image data is the frame sequential method.

- The start position “object_position1” of the object is designated.

- Separate SCSs are created for the left eye SR and the right eye SR.

- The start position (Subregion_Position1) of the left eye SR is specified.

- Target_Frame1: target frame information of the right eye SR

- Disparity2: disparity information of the right eye SR

- Command2: display on/off command information

- FIG. 28 shows another example of creation of each segment of stereoscopic image subtitle data (example 2) when the transmission format of stereoscopic image data is the frame sequential method.

- PCS, RCS, and ODS segments are created as in the creation example (example 1) shown in FIG.

- A single SCS is created in common for the left eye SR and the right eye SR. That is, the information on both the left eye SR and the right eye SR is contained in the common SCS.

- FIGS. 29 and 30 show an example structure (syntax) of the SCS (subregion composition segment).

- FIG. 31 shows the main data definition contents (semantics) of the SCS.

- This structure includes the fields “Sync_byte”, “segment_type”, “page_id”, and “segment_length”.

- “Segment_type” is 8-bit data indicating the segment type; here it is “0x49”, which indicates the SCS (see FIG. 11).

- Segment_length is 8-bit data indicating the length (size) of the segment.

- FIG. 30 shows a part including substantial information of the SCS.

- This part can carry display control information for the left eye SR and the right eye SR, that is, their region information, target frame information, disparity information, and display on/off command information.

- The SCS can hold display control information for an arbitrary number of subregions.

- “Subregion_disparity_integer_part” is 8-bit information indicating the integer-pixel-precision part (integer part) of the disparity information (disparity) used to shift the display position of the corresponding subregion horizontally.

- “Subregion_disparity_fractional_part” is 4-bit information indicating the sub-pixel-precision part (fractional part) of the disparity information (disparity) used to shift the corresponding subregion horizontally.

- As described above, the disparity information (disparity) shifts the display position of the corresponding subregion. This gives parallax to the display positions of the left eye subtitle in the left eye SR and the right eye subtitle in the right eye SR.

- “Subregion_horizontal_position” is 16-bit information indicating the position of the left edge of the subregion, which is a rectangular area.
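The two disparity fields can be combined into one sub-pixel value and applied to “Subregion_horizontal_position”. The sketch below makes several assumptions that the text above does not state. It treats the 8-bit integer part as two's-complement signed and the 4-bit fractional part as a count of 1/16-pixel steps added to the integer part. It also assumes a positive disparity moves the subregion to the right. The function names are hypothetical.

```python
def decode_disparity(integer_part: int, fractional_part: int) -> float:
    """Combine Subregion_disparity_integer_part (8 bits) and
    Subregion_disparity_fractional_part (4 bits) into one disparity in pixels.

    Assumptions: the integer part is two's-complement signed, and the
    fractional part counts 1/16-pixel steps added to the integer part.
    """
    if not 0 <= integer_part <= 0xFF:
        raise ValueError("integer part must fit in 8 bits")
    if not 0 <= fractional_part <= 0xF:
        raise ValueError("fractional part must fit in 4 bits")
    signed = integer_part - 0x100 if integer_part & 0x80 else integer_part
    return signed + fractional_part / 16


def shifted_left_edge(subregion_horizontal_position: int, disparity: float) -> float:
    """Shift the subregion's left edge horizontally (assumed sign convention)."""
    return subregion_horizontal_position + disparity


print(decode_disparity(0x05, 0x8))                          # 5.5
print(decode_disparity(0xFD, 0x8))                          # -2.5
print(shifted_left_edge(100, decode_disparity(0x05, 0x8)))  # 105.5
```

With 4 fractional bits, the disparity resolution is 1/16 pixel. A receiver that can only position subtitles at whole pixels would round the shifted edge.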