WO2004034183A2 - Image acquisition and processing methods for automatic vehicular exterior lighting control

- Publication number

- WO2004034183A2 (PCT/US2003/026407)

- Authority

- WO

- WIPO (PCT)

- Prior art keywords

- light control

- exterior light

- vehicle

- light source

- vehicular exterior


Classifications

-

- B—PERFORMING OPERATIONS; TRANSPORTING

- B60—VEHICLES IN GENERAL

- B60Q—ARRANGEMENT OF SIGNALLING OR LIGHTING DEVICES, THE MOUNTING OR SUPPORTING THEREOF OR CIRCUITS THEREFOR, FOR VEHICLES IN GENERAL

- B60Q1/00—Arrangement of optical signalling or lighting devices, the mounting or supporting thereof or circuits therefor

- B60Q1/02—Arrangement of optical signalling or lighting devices, the mounting or supporting thereof or circuits therefor the devices being primarily intended to illuminate the way ahead or to illuminate other areas of way or environments

- B60Q1/04—Arrangement of optical signalling or lighting devices, the mounting or supporting thereof or circuits therefor the devices being primarily intended to illuminate the way ahead or to illuminate other areas of way or environments the devices being headlights

- B60Q1/18—Arrangement of optical signalling or lighting devices, the mounting or supporting thereof or circuits therefor the devices being primarily intended to illuminate the way ahead or to illuminate other areas of way or environments the devices being headlights being additional front lights

-

- G—PHYSICS

- G06—COMPUTING; CALCULATING OR COUNTING

- G06F—ELECTRIC DIGITAL DATA PROCESSING

- G06F7/00—Methods or arrangements for processing data by operating upon the order or content of the data handled

-

- B—PERFORMING OPERATIONS; TRANSPORTING

- B60—VEHICLES IN GENERAL

- B60Q—ARRANGEMENT OF SIGNALLING OR LIGHTING DEVICES, THE MOUNTING OR SUPPORTING THEREOF OR CIRCUITS THEREFOR, FOR VEHICLES IN GENERAL

- B60Q1/00—Arrangement of optical signalling or lighting devices, the mounting or supporting thereof or circuits therefor

- B60Q1/02—Arrangement of optical signalling or lighting devices, the mounting or supporting thereof or circuits therefor the devices being primarily intended to illuminate the way ahead or to illuminate other areas of way or environments

- B60Q1/04—Arrangement of optical signalling or lighting devices, the mounting or supporting thereof or circuits therefor the devices being primarily intended to illuminate the way ahead or to illuminate other areas of way or environments the devices being headlights

- B60Q1/06—Arrangement of optical signalling or lighting devices, the mounting or supporting thereof or circuits therefor the devices being primarily intended to illuminate the way ahead or to illuminate other areas of way or environments the devices being headlights adjustable, e.g. remotely-controlled from inside vehicle

- B60Q1/08—Arrangement of optical signalling or lighting devices, the mounting or supporting thereof or circuits therefor the devices being primarily intended to illuminate the way ahead or to illuminate other areas of way or environments the devices being headlights adjustable, e.g. remotely-controlled from inside vehicle automatically

- B60Q1/085—Arrangement of optical signalling or lighting devices, the mounting or supporting thereof or circuits therefor the devices being primarily intended to illuminate the way ahead or to illuminate other areas of way or environments the devices being headlights adjustable, e.g. remotely-controlled from inside vehicle automatically due to special conditions, e.g. adverse weather, type of road, badly illuminated road signs or potential dangers

-

- B—PERFORMING OPERATIONS; TRANSPORTING

- B60—VEHICLES IN GENERAL

- B60Q—ARRANGEMENT OF SIGNALLING OR LIGHTING DEVICES, THE MOUNTING OR SUPPORTING THEREOF OR CIRCUITS THEREFOR, FOR VEHICLES IN GENERAL

- B60Q1/00—Arrangement of optical signalling or lighting devices, the mounting or supporting thereof or circuits therefor

- B60Q1/02—Arrangement of optical signalling or lighting devices, the mounting or supporting thereof or circuits therefor the devices being primarily intended to illuminate the way ahead or to illuminate other areas of way or environments

- B60Q1/04—Arrangement of optical signalling or lighting devices, the mounting or supporting thereof or circuits therefor the devices being primarily intended to illuminate the way ahead or to illuminate other areas of way or environments the devices being headlights

- B60Q1/14—Arrangement of optical signalling or lighting devices, the mounting or supporting thereof or circuits therefor the devices being primarily intended to illuminate the way ahead or to illuminate other areas of way or environments the devices being headlights having dimming means

- B60Q1/1415—Dimming circuits

- B60Q1/1423—Automatic dimming circuits, i.e. switching between high beam and low beam due to change of ambient light or light level in road traffic

- B60Q1/143—Automatic dimming circuits, i.e. switching between high beam and low beam due to change of ambient light or light level in road traffic combined with another condition, e.g. using vehicle recognition from camera images or activation of wipers

-

- G—PHYSICS

- G06—COMPUTING; CALCULATING OR COUNTING

- G06V—IMAGE OR VIDEO RECOGNITION OR UNDERSTANDING

- G06V20/00—Scenes; Scene-specific elements

- G06V20/50—Context or environment of the image

- G06V20/56—Context or environment of the image exterior to a vehicle by using sensors mounted on the vehicle

- G06V20/58—Recognition of moving objects or obstacles, e.g. vehicles or pedestrians; Recognition of traffic objects, e.g. traffic signs, traffic lights or roads

- G06V20/584—Recognition of moving objects or obstacles, e.g. vehicles or pedestrians; Recognition of traffic objects, e.g. traffic signs, traffic lights or roads of vehicle lights or traffic lights

-

- H—ELECTRICITY

- H05—ELECTRIC TECHNIQUES NOT OTHERWISE PROVIDED FOR

- H05B—ELECTRIC HEATING; ELECTRIC LIGHT SOURCES NOT OTHERWISE PROVIDED FOR; CIRCUIT ARRANGEMENTS FOR ELECTRIC LIGHT SOURCES, IN GENERAL

- H05B47/00—Circuit arrangements for operating light sources in general, i.e. where the type of light source is not relevant

- H05B47/10—Controlling the light source

- H05B47/165—Controlling the light source following a pre-assigned programmed sequence; Logic control [LC]

-

- H—ELECTRICITY

- H05—ELECTRIC TECHNIQUES NOT OTHERWISE PROVIDED FOR

- H05B—ELECTRIC HEATING; ELECTRIC LIGHT SOURCES NOT OTHERWISE PROVIDED FOR; CIRCUIT ARRANGEMENTS FOR ELECTRIC LIGHT SOURCES, IN GENERAL

- H05B47/00—Circuit arrangements for operating light sources in general, i.e. where the type of light source is not relevant

- H05B47/10—Controlling the light source

- H05B47/17—Operational modes, e.g. switching from manual to automatic mode or prohibiting specific operations

-

- B—PERFORMING OPERATIONS; TRANSPORTING

- B60—VEHICLES IN GENERAL

- B60Q—ARRANGEMENT OF SIGNALLING OR LIGHTING DEVICES, THE MOUNTING OR SUPPORTING THEREOF OR CIRCUITS THEREFOR, FOR VEHICLES IN GENERAL

- B60Q2300/00—Indexing codes for automatically adjustable headlamps or automatically dimmable headlamps

- B60Q2300/05—Special features for controlling or switching of the light beam

- B60Q2300/052—Switching delay, i.e. the beam is not switched or changed instantaneously upon occurrence of a condition change

-

- B—PERFORMING OPERATIONS; TRANSPORTING

- B60—VEHICLES IN GENERAL

- B60Q—ARRANGEMENT OF SIGNALLING OR LIGHTING DEVICES, THE MOUNTING OR SUPPORTING THEREOF OR CIRCUITS THEREFOR, FOR VEHICLES IN GENERAL

- B60Q2300/00—Indexing codes for automatically adjustable headlamps or automatically dimmable headlamps

- B60Q2300/05—Special features for controlling or switching of the light beam

- B60Q2300/054—Variable non-standard intensity, i.e. emission of various beam intensities different from standard intensities, e.g. continuous or stepped transitions of intensity

-

- B—PERFORMING OPERATIONS; TRANSPORTING

- B60—VEHICLES IN GENERAL

- B60Q—ARRANGEMENT OF SIGNALLING OR LIGHTING DEVICES, THE MOUNTING OR SUPPORTING THEREOF OR CIRCUITS THEREFOR, FOR VEHICLES IN GENERAL

- B60Q2300/00—Indexing codes for automatically adjustable headlamps or automatically dimmable headlamps

- B60Q2300/05—Special features for controlling or switching of the light beam

- B60Q2300/056—Special anti-blinding beams, e.g. a standard beam is chopped or moved in order not to blind

-

- B—PERFORMING OPERATIONS; TRANSPORTING

- B60—VEHICLES IN GENERAL

- B60Q—ARRANGEMENT OF SIGNALLING OR LIGHTING DEVICES, THE MOUNTING OR SUPPORTING THEREOF OR CIRCUITS THEREFOR, FOR VEHICLES IN GENERAL

- B60Q2300/00—Indexing codes for automatically adjustable headlamps or automatically dimmable headlamps

- B60Q2300/10—Indexing codes relating to particular vehicle conditions

- B60Q2300/11—Linear movements of the vehicle

- B60Q2300/112—Vehicle speed

-

- B—PERFORMING OPERATIONS; TRANSPORTING

- B60—VEHICLES IN GENERAL

- B60Q—ARRANGEMENT OF SIGNALLING OR LIGHTING DEVICES, THE MOUNTING OR SUPPORTING THEREOF OR CIRCUITS THEREFOR, FOR VEHICLES IN GENERAL

- B60Q2300/00—Indexing codes for automatically adjustable headlamps or automatically dimmable headlamps

- B60Q2300/10—Indexing codes relating to particular vehicle conditions

- B60Q2300/12—Steering parameters

- B60Q2300/122—Steering angle

-

- B—PERFORMING OPERATIONS; TRANSPORTING

- B60—VEHICLES IN GENERAL

- B60Q—ARRANGEMENT OF SIGNALLING OR LIGHTING DEVICES, THE MOUNTING OR SUPPORTING THEREOF OR CIRCUITS THEREFOR, FOR VEHICLES IN GENERAL

- B60Q2300/00—Indexing codes for automatically adjustable headlamps or automatically dimmable headlamps

- B60Q2300/10—Indexing codes relating to particular vehicle conditions

- B60Q2300/13—Attitude of the vehicle body

- B60Q2300/132—Pitch

-

- B—PERFORMING OPERATIONS; TRANSPORTING

- B60—VEHICLES IN GENERAL

- B60Q—ARRANGEMENT OF SIGNALLING OR LIGHTING DEVICES, THE MOUNTING OR SUPPORTING THEREOF OR CIRCUITS THEREFOR, FOR VEHICLES IN GENERAL

- B60Q2300/00—Indexing codes for automatically adjustable headlamps or automatically dimmable headlamps

- B60Q2300/10—Indexing codes relating to particular vehicle conditions

- B60Q2300/13—Attitude of the vehicle body

- B60Q2300/134—Yaw

-

- B—PERFORMING OPERATIONS; TRANSPORTING

- B60—VEHICLES IN GENERAL

- B60Q—ARRANGEMENT OF SIGNALLING OR LIGHTING DEVICES, THE MOUNTING OR SUPPORTING THEREOF OR CIRCUITS THEREFOR, FOR VEHICLES IN GENERAL

- B60Q2300/00—Indexing codes for automatically adjustable headlamps or automatically dimmable headlamps

- B60Q2300/20—Indexing codes relating to the driver or the passengers

- B60Q2300/21—Manual control

-

- B—PERFORMING OPERATIONS; TRANSPORTING

- B60—VEHICLES IN GENERAL

- B60Q—ARRANGEMENT OF SIGNALLING OR LIGHTING DEVICES, THE MOUNTING OR SUPPORTING THEREOF OR CIRCUITS THEREFOR, FOR VEHICLES IN GENERAL

- B60Q2300/00—Indexing codes for automatically adjustable headlamps or automatically dimmable headlamps

- B60Q2300/30—Indexing codes relating to the vehicle environment

- B60Q2300/31—Atmospheric conditions

- B60Q2300/312—Adverse weather

-

- B—PERFORMING OPERATIONS; TRANSPORTING

- B60—VEHICLES IN GENERAL

- B60Q—ARRANGEMENT OF SIGNALLING OR LIGHTING DEVICES, THE MOUNTING OR SUPPORTING THEREOF OR CIRCUITS THEREFOR, FOR VEHICLES IN GENERAL

- B60Q2300/00—Indexing codes for automatically adjustable headlamps or automatically dimmable headlamps

- B60Q2300/30—Indexing codes relating to the vehicle environment

- B60Q2300/31—Atmospheric conditions

- B60Q2300/314—Ambient light

-

- B—PERFORMING OPERATIONS; TRANSPORTING

- B60—VEHICLES IN GENERAL

- B60Q—ARRANGEMENT OF SIGNALLING OR LIGHTING DEVICES, THE MOUNTING OR SUPPORTING THEREOF OR CIRCUITS THEREFOR, FOR VEHICLES IN GENERAL

- B60Q2300/00—Indexing codes for automatically adjustable headlamps or automatically dimmable headlamps

- B60Q2300/30—Indexing codes relating to the vehicle environment

- B60Q2300/32—Road surface or travel path

- B60Q2300/322—Road curvature

-

- B—PERFORMING OPERATIONS; TRANSPORTING

- B60—VEHICLES IN GENERAL

- B60Q—ARRANGEMENT OF SIGNALLING OR LIGHTING DEVICES, THE MOUNTING OR SUPPORTING THEREOF OR CIRCUITS THEREFOR, FOR VEHICLES IN GENERAL

- B60Q2300/00—Indexing codes for automatically adjustable headlamps or automatically dimmable headlamps

- B60Q2300/30—Indexing codes relating to the vehicle environment

- B60Q2300/33—Driving situation

- B60Q2300/331—Driving situation characterised by the driving side, e.g. on the left or right hand side

-

- B—PERFORMING OPERATIONS; TRANSPORTING

- B60—VEHICLES IN GENERAL

- B60Q—ARRANGEMENT OF SIGNALLING OR LIGHTING DEVICES, THE MOUNTING OR SUPPORTING THEREOF OR CIRCUITS THEREFOR, FOR VEHICLES IN GENERAL

- B60Q2300/00—Indexing codes for automatically adjustable headlamps or automatically dimmable headlamps

- B60Q2300/30—Indexing codes relating to the vehicle environment

- B60Q2300/33—Driving situation

- B60Q2300/332—Driving situation on city roads

-

- B—PERFORMING OPERATIONS; TRANSPORTING

- B60—VEHICLES IN GENERAL

- B60Q—ARRANGEMENT OF SIGNALLING OR LIGHTING DEVICES, THE MOUNTING OR SUPPORTING THEREOF OR CIRCUITS THEREFOR, FOR VEHICLES IN GENERAL

- B60Q2300/00—Indexing codes for automatically adjustable headlamps or automatically dimmable headlamps

- B60Q2300/30—Indexing codes relating to the vehicle environment

- B60Q2300/33—Driving situation

- B60Q2300/332—Driving situation on city roads

- B60Q2300/3321—Detection of streetlights

-

- B—PERFORMING OPERATIONS; TRANSPORTING

- B60—VEHICLES IN GENERAL

- B60Q—ARRANGEMENT OF SIGNALLING OR LIGHTING DEVICES, THE MOUNTING OR SUPPORTING THEREOF OR CIRCUITS THEREFOR, FOR VEHICLES IN GENERAL

- B60Q2300/00—Indexing codes for automatically adjustable headlamps or automatically dimmable headlamps

- B60Q2300/40—Indexing codes relating to other road users or special conditions

- B60Q2300/41—Indexing codes relating to other road users or special conditions preceding vehicle

-

- B—PERFORMING OPERATIONS; TRANSPORTING

- B60—VEHICLES IN GENERAL

- B60Q—ARRANGEMENT OF SIGNALLING OR LIGHTING DEVICES, THE MOUNTING OR SUPPORTING THEREOF OR CIRCUITS THEREFOR, FOR VEHICLES IN GENERAL

- B60Q2300/00—Indexing codes for automatically adjustable headlamps or automatically dimmable headlamps

- B60Q2300/40—Indexing codes relating to other road users or special conditions

- B60Q2300/42—Indexing codes relating to other road users or special conditions oncoming vehicle

-

- Y—GENERAL TAGGING OF NEW TECHNOLOGICAL DEVELOPMENTS; GENERAL TAGGING OF CROSS-SECTIONAL TECHNOLOGIES SPANNING OVER SEVERAL SECTIONS OF THE IPC; TECHNICAL SUBJECTS COVERED BY FORMER USPC CROSS-REFERENCE ART COLLECTIONS [XRACs] AND DIGESTS

- Y02—TECHNOLOGIES OR APPLICATIONS FOR MITIGATION OR ADAPTATION AGAINST CLIMATE CHANGE

- Y02B—CLIMATE CHANGE MITIGATION TECHNOLOGIES RELATED TO BUILDINGS, e.g. HOUSING, HOUSE APPLIANCES OR RELATED END-USER APPLICATIONS

- Y02B20/00—Energy efficient lighting technologies, e.g. halogen lamps or gas discharge lamps

- Y02B20/40—Control techniques providing energy savings, e.g. smart controller or presence detection

Definitions

- Patent Application serial number 60/404,879, entitled “IMAGE ACQUISITION AND PROCESSING METHOD FOR VEHICULAR LIGHTING CONTROL,” was filed on August 21, 2002, by Joseph S. Stam et al. Its disclosure is hereby incorporated in its entirety by reference.

- Such automatic lighting control may include automatic activation and deactivation of a controlled vehicle's high beam headlights as a function of driving conditions. This function has been widely attempted using various types of optical sensors to detect the ambient lighting conditions, the head lamps of oncoming vehicles, and the tail lamps of leading vehicles. Most recently, sensors utilizing an electronic image sensor have been proposed. Such systems are disclosed in commonly assigned U.S. patent numbers 5,837,994, entitled Control system to automatically dim vehicle head lamps, and 6,049,171, entitled Continuously variable headlamp control, and commonly assigned U.S.

- The present invention seeks to overcome the limitations of the prior art by providing improved methods of acquiring and analyzing images from an image sensor for the purpose of detecting the head lamps of oncoming vehicles and tail lamps of leading vehicles and for discriminating these light sources from other sources of light within an image.

- The information obtained by the apparatus and methods disclosed herein may be used to automatically control vehicle equipment, such as a controlled vehicle's exterior lights, windshield wipers, or defroster, or for other purposes.

- An apparatus for acquiring images of a scene is provided.

- An apparatus for processing and storing the related information is provided.

- A low-voltage differential signal device with a memory buffer is provided for interface of an imager to a microprocessor.

- A high dynamic range image is synthesized to accommodate the diverse brightness levels associated with the various light sources anticipated to be present in the associated field of view of the imager.
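One way to picture high dynamic range synthesis is sketched below. This is only an illustrative assumption, not the method of Fig. 6: it merges several frames taken at different exposure times by using, for each pixel, the longest exposure that is not saturated and dividing by that exposure time. The function name `synthesize_hdr`, the frame representation (lists of rows), and the saturation value of 255 are all hypothetical.

```python
def synthesize_hdr(frames, saturation=255):
    """Merge frames of one scene taken at different exposure times.

    frames: list of (exposure_time, image) pairs, where image is a list of
    rows of integer pixel values. All images must have the same size.
    Hypothetical scheme: per pixel, take the longest unsaturated exposure
    and normalise by its exposure time.
    """
    # Longest exposure first, so dim sources get the best signal.
    ordered = sorted(frames, key=lambda f: f[0], reverse=True)
    rows = len(ordered[0][1])
    cols = len(ordered[0][1][0])
    hdr = [[0.0] * cols for _ in range(rows)]
    for r in range(rows):
        for c in range(cols):
            for exposure, image in ordered:
                value = image[r][c]
                if value < saturation:
                    break
            # If every frame is saturated, the shortest exposure is used.
            hdr[r][c] = value / exposure
    return hdr


# Usage: a bright pixel saturates the long exposure, a dim pixel does not.
long_frame = (8, [[255, 80]])
short_frame = (1, [[50, 10]])
result = synthesize_hdr([long_frame, short_frame])
```

The bright pixel is taken from the short exposure and the dim pixel from the long one, so both end up on a common brightness scale.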

- A peak detect algorithm is employed to detect individual light sources.

- The peak detect algorithms disclosed provide a means to separately detect light sources that are very close together and/or partially overlapping.
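The idea of separating nearby light sources by their peaks can be illustrated with a minimal sketch. It is not the algorithm of Fig. 10; it assumes a simple rule in which a pixel is a peak if it meets a brightness threshold and is strictly brighter than all of its up to eight neighbours. The name `find_peaks` and the threshold parameter are hypothetical.

```python
def find_peaks(image, threshold):
    """Return (row, col, value) for every strict local maximum.

    image: list of rows of pixel values. A pixel counts as a peak when it
    is at least `threshold` and strictly greater than each neighbour in
    its 8-neighbourhood. Flat plateaus therefore yield no peak in this
    simplified sketch.
    """
    rows, cols = len(image), len(image[0])
    peaks = []
    for r in range(rows):
        for c in range(cols):
            value = image[r][c]
            if value < threshold:
                continue
            neighbours = [
                image[rr][cc]
                for rr in range(max(0, r - 1), min(rows, r + 2))
                for cc in range(max(0, c - 1), min(cols, c + 2))
                if (rr, cc) != (r, c)
            ]
            if all(value > n for n in neighbours):
                peaks.append((r, c, value))
    return peaks


# Usage: two sources whose blobs touch still produce two separate peaks.
scene = [
    [0, 0, 0, 0, 0],
    [0, 9, 5, 8, 0],
    [0, 0, 0, 0, 0],
]
peaks = find_peaks(scene, threshold=4)
```

Because the test looks for maxima rather than connected bright regions, the two sources in the usage example are reported separately even though the pixel between them is also above the threshold.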

- Light source classification algorithms are employed to identify light sources that induce system responses.

- A host of classification algorithms incorporating probability functions and/or neural networks are disclosed.

- Switching methods are employed for automatically varying the operation of exterior vehicle lights.

- Various techniques for controlling bi-modal, substantially continuously variable, and continuously variable lights are disclosed.

- Training routines are provided in at least one embodiment for calibration of the classification algorithms.

- Empirical, experimental, real time and statistical data may be used individually, or in various combinations, to facilitate training.

- Fig. 1 depicts a controlled vehicle in relation to other oncoming and leading vehicles;

- Fig. 2 depicts an embodiment of an imager;

- Fig. 3 depicts an embodiment of an image sensor with related components;

- Fig. 4 depicts a low-voltage differential signal device with a memory buffer connected between an imager and a microprocessor;

- Fig. 5 depicts a flow chart for an algorithm to set the state of an exterior light based upon various light sources in an image;

- Fig. 6 depicts a flow chart for an algorithm to synthesize a high dynamic range image;

- Fig. 7 depicts a graph of the results of a data compression algorithm;

- Fig. 8 depicts a flow chart for a data compression algorithm;

- Figs. 9a and 9b depict a stepwise representation of a data compression algorithm;

- Fig. 10 depicts a flow chart for a peak detect algorithm;

- Fig. 11 depicts a flow chart for an algorithm to determine inter-frame light source characteristics;

- Fig. 12 depicts a flow chart for an algorithm to set the state of an exterior light based upon various light sources in an image;

- Fig. 13 depicts an example flow chart of a neural network;

- Fig. 14 depicts a state transition flow chart for exterior light control;

- Fig. 15 depicts a first state transition chart for exterior light control;

- Fig. 16 depicts a second state transition chart for exterior light control;

- Fig. 17 depicts a graph of duty cycle vs. transition level for exterior light control;

- Fig. 18 depicts an exploded view of an exterior rearview mirror assembly;

- Fig. 19 depicts an interior rearview mirror assembly;

- Fig. 20 depicts a sectional view of the mirror assembly of Fig. 19 taken along section line 20-20; and

- Fig. 21 depicts an exploded view of an interior rearview mirror assembly.

DETAILED DESCRIPTION OF THE INVENTION

- The functionality of the current invention is best described with initial reference to Fig. 1.

- A controlled vehicle 101 contains an imager and an image processing system that is capable of acquiring and analyzing images of the region generally forward of the controlled vehicle.

- The imager and image processing system are preferably contained in the controlled vehicle's rear view mirror assembly 102, thus providing a clear forward view 103 from a similar perspective as the driver through the windshield in the region cleaned by the windshield wipers.

- The imager may alternatively be placed in any suitable position in the vehicle, and the processing system may be contained with the imager or positioned elsewhere. A host of alternate configurations are described herein, as well as within various incorporated references.

- The image analysis methods described herein may be implemented by a single processor, such as a microcontroller or DSP, by multiple distributed processors, or in a hardware ASIC or FPGA.

- The imager acquires images such that the head lamps 104 of oncoming vehicle 105 and the tail lamps 106 of preceding vehicle 107 may be detected whenever they are within an area where the drivers of vehicles 105 or 107 would perceive glare from the head lamps of controlled vehicle 101.

- The high beams of controlled vehicle 101 may be switched off, or the beam pattern may be otherwise modified, in such a way as to reduce glare to the occupants of other vehicles.

- An imager 200 for use with the present invention is shown in Fig. 2.

- A lens 201 containing two separate lens elements 202 and 203 forms two images of the associated scene onto an image sensor 204.

- One image of the scene is filtered by a red filter 205 placed on the surface of the image sensor 204 and covering one half of the pixels.

- Other methods of color discrimination such as the use of checkerboard red/clear filters, striped red/clear filters, or mosaic or striped red/green/blue filters may also be used.

- Detailed descriptions of optical systems for use with the present invention are contained in copending U.S. patent number 6,130,421 entitled Imaging system for vehicle headlamp control and U.S.

- the imager comprises a pixel array 305, a voltage/current reference 310, digital-to-analog converters (DACs) 315, voltage regulators 320, low-voltage differential signal I/O 325, a digital block 330, row decoders 335, reset boost 340, temperature sensor 345, pipeline analog-to-digital converter (ADC) 350, gain stage 355, crystal oscillator interface 360, analog column 365 and column decoders 370.

- these devices are integrated on a common circuit board or silicon substrate. However, any or all of the individually identified devices may be mounted to a separate structure. Details of a preferred imager in accordance with that shown in Fig. 3 are described in commonly assigned U.S.

- the imager is a CMOS design configured to meet the requirements of automotive applications.

- the imager provides 144 columns and 176 rows of photodiode based pixels.

- the imager also has provisions for sensing temperature, controlling at least one output signal, providing voltage regulation to internal components, and incorporated device testing facilities.

- Imager commands preferably provide control of a variety of exposure, mode and analog settings.

- the imager is preferably capable of taking two image subwindows simultaneously from different starting rows; this feature permits highly synchronized images in a dual lens system as described with reference to Fig. 2.

- a single command instruction is sent to the imager and the imager then responds with two sequential images having unique exposure times.

- Another preferred option allows the analog gains to be applied in a checkerboard image for applications where a filter is applied to the imager in a checkerboard pattern.

- data can be transmitted in ten bit mode, a compressed eight bit mode where a ten bit value is represented in eight bits (as described in more detail elsewhere herein), or a truncated eight bit mode where only the most significant eight bits of each ten bit pixel value are transmitted.

- the LVDS SPI provides a communication interface between image sensor 410 and microcontroller 415.

- the preferred LVDS SPI comprises a LVDS transceiver 420, an incoming data logic block 425, a dual port memory 430, and a microcontroller interface logic block 435. It should be understood that a host of known LVDS devices are commercially available and it is envisioned that LVDSs other than that shown in Fig. 4 may be utilized with the present invention.

- the dual port memory may be omitted and the control and data signals will be transmitted directly over the LVDS link.

- a more detailed description of the LVDS SPI interface in accordance with that shown in Fig. 4 is contained in commonly assigned U.S. provisional patent application Attorney Docket AUTO 318V1, the disclosure of which is incorporated in its entirety herein by reference.

- the dual port memory is provided to enable the microcontroller to perform other functions while image data is being sent from the imager.

- the microcontroller then reads the image data from the dual port memory once free to do so.

- the dual port memory allows sequential access to individual memory registers one-by-one.

- two read pointers are provided to allow alternate access to two different regions of memory. This feature is particularly beneficial when used along with the dual integration time feature of the image sensors.

- the image sensor will send two images sequentially having different integration times.

- the first pointer is set to the start of the first image and the second pointer to the start of the second. Thus, for each pixel location the first integration time pixel value is read out first followed by the pixel value from the second integration.
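
The two-pointer readout described above can be sketched as follows. This is a minimal illustration, assuming the two images are stored back to back in one buffer; the function name `interleaved_read` and the buffer layout are hypothetical and not taken from the disclosure.

```python
def interleaved_read(memory, first_start, second_start, pixel_count):
    """Yield (first_integration, second_integration) values per pixel location.

    Two independent read pointers walk the two stored images in step,
    so both integration-time readings of a pixel arrive together.
    """
    p1, p2 = first_start, second_start
    for _ in range(pixel_count):
        yield memory[p1], memory[p2]
        p1 += 1
        p2 += 1


# Hypothetical buffer: a 4-pixel short-integration image followed by
# the 4-pixel long-integration image of the same scene.
memory = [1, 2, 3, 4, 10, 20, 30, 40]
pairs = list(interleaved_read(memory, 0, 4, 4))
```

Reading the pairs in this order lets the processor decide per pixel which integration time to keep without buffering a whole image first.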

- An image acquisition and analysis method of the present invention is described with reference first to Fig. 5.

- the control proceeds as a sequence of acquisition and processing cycles 500, repeated indefinitely whenever control is active. Cyclic operation may occur at a regular rate, for example once every 200 ms. Alternatively, the cyclic rate may be adjusted depending on the level of activity or the current state of the vehicle lamps. Cycles may be interrupted for other functions.

- the processing system may also control an automatic dimming rear view mirror, a compass, a rain sensor, lighting, user interface buttons, microphones, displays, vehicle interfaces, telemetry functions, multiplexed bus communication, as well as other features. If one of these features requires processor attention, cycle 500 may be suspended, interrupted or postponed.

- Cycle 500 begins with the acquisition of one or more images 501 that are, at least in part, stored to memory for processing.

- the corresponding images may be synthetic high dynamic range images as described further herein.

- various objects and properties of these objects are extracted from the acquired images. These objects usually are light sources that must be detected and classified.

- the term "light source" as used herein includes sources that emit light rays, as well as objects that reflect light rays.

- In step 503, the motion of light sources and other historical behavior is determined by finding and identifying light sources from prior cycles and associating them with light sources in the current cycle.

- Light sources are classified in step 504 to determine if they are vehicular head lamps, vehicle tail lamps, or other types of light sources.

- In step 505, the state of the controlled vehicle lamps is modified, if necessary, based upon the output of step 504 and other vehicle inputs.

- step 502 its historical information may be immediately determined in step 503 and it may be immediately classified in step 504. Then the next light source, if any, may be identified in step 502. It should also be understood that any of the steps of Fig. 5 may be beneficially applied to vehicle imaging systems independently of other steps, in various combinations with other steps or with prior art embodiments.

- the process for acquiring and synthesizing a HDR image includes the acquisition of two or more images at different exposures to cover different brightness ranges. While any number of images may be taken at different exposure intervals, three images will be used, as an example, with exposure times of 1, 6, and 36 ms.

- an HDR image is synthesized utilizing five images, each with a unique integration period, for example, with exposures of 0.25, 0.5, 2, 8 and 30 ms.

- a preferred imager provides the ability to acquire two images with unique integration periods with a single command; it may be desirable to create a HDR utilizing two images having unique integration periods, for example using integration times between 0.5 and 50ms.

- In step 601, the image memory is zeroed.

- In step 602, the first image with the shortest exposure (1 ms) is acquired.

- Step 603 is irrelevant for the first image since the memory is all zeros.

- Step 604 represents an optional step used to correct for fixed pattern imager noise.

- Most image sensors exhibit some type of fixed pattern noise due to manufacturing variances from pixel to pixel.

- Fixed pattern noise may be exhibited as a variance in an offset, a gain or slope or combination thereof. Correction of fixed pattern noise may improve overall performance by assuring that the sensed light level of an imaged light source is the same regardless of the pixel onto which it is imaged. Improvements in imager fabrication process may render this correction unnecessary.

- correction in offset (step 604), slope (step 606) or both may be accomplished by the following method.

- each sensor is measured during manufacturing and a pixel-by-pixel lookup table is generated that stores the offset and/or slope error for each pixel.

- the offset is corrected by adding or subtracting the error value stored in the table for the current (i th ) pixel.

- Slope correction may also be applied at this point by multiplying the pixel value by the slope error factor.

- the slope correction may be applied by a less computationally expensive addition or subtraction to the logarithmic value in step 606.
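
A per-pixel correction of this kind might look like the sketch below. It is illustrative only: the sign convention (the stored offset error is subtracted), the 10-bit clamp, and the name `correct_pixel` are assumptions, not details given in the text.

```python
def correct_pixel(raw, offset_error, slope_factor=1.0, max_value=1023):
    """Apply fixed pattern noise correction to one pixel.

    offset_error and slope_factor stand in for the values that would be
    read from the per-pixel lookup table measured during manufacturing.
    Assumption: the stored offset error is subtracted from the raw value.
    """
    corrected = (raw - offset_error) * slope_factor
    # Clamp to the valid range of an assumed 10-bit pixel.
    return min(max(int(round(corrected)), 0), max_value)


offset_only = correct_pixel(100, 4)          # offset correction (step 604)
slope_only = correct_pixel(100, 0, 1.05)     # slope correction in the linear domain
```

When the pixel value is held as a logarithm, the multiplication by `slope_factor` becomes the addition of its logarithm, which is the cheaper alternative mentioned for step 606.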

- step 605 the pixel value (plus the optional offset correction from step 604) is converted for creation of the HDR image.

- This conversion first may include an optional step of linearization.

- Many pixel architectures may respond non-linearly to incident light levels. This non-linearity may be manifested as an S-shaped curve that begins responding slowly to increasing light levels, then more linearly, and then tapers off until saturation. Such a response may induce error when attempting brightness or color computations. Fortunately, the non-linearity is usually repeatable and usually consistent for a given imager design. This correction is most efficiently achieved through a lookup table that maps the non-linear pixel response to a linear value.

- the lookup table may be hard-coded into the processor. Otherwise it may be measured and stored on a chip-by-chip basis, as is the case for fixed pattern noise correction. Sensors that exhibit a substantially linear response will not require linearity correction.

- each pixel output must also be scaled by the ratio between the maximum exposure and the current exposure. In the case of this example, the data from the 1 ms image must be multiplied by 36. Finally, to accommodate the wide dynamic range, it is beneficial to take the logarithm of this value and store it to memory. This allows for the pixel value to be maintained as an 8-bit number thus reducing the memory requirement. If sufficient memory is available, logarithmic compression may be omitted. While the natural log is commonly used, log base 2 may alternatively be used. Highly computationally efficient algorithms may be used to compute the log and anti-log in base 2. The entire computation of step 605, linearization, scaling, and taking the logarithm is preferably performed in a single lookup table.

- a lookup table with these factors pre-computed is created for each exposure setting and used to convert the value from step 604 to the value to be stored to memory.

- a 10-bit to 8-bit compression algorithm may be employed.

- the slope error correction may be applied in step 606 to the logarithmic value from step 605.

- the final value is stored to memory in step 607. This entire process proceeds for each pixel in the image as indicated by step 608. Once the first image is stored, the next higher exposure image may be acquired. Processing for this and all subsequent images proceeds similarly except for step 603.

- values are only stored to memory if no value from a lesser sensitivity image was detected. If a value is currently in memory there is no need for the value, that is likely saturated or nearer saturation, from a higher sensitivity image. Essentially, the higher sensitivity images simply serve to "fill in the blanks" left by those pixels that did not sense any light in prior images. Finally, when the highest exposure (36ms in this example) image is acquired, no scaling will be necessary.
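
The acquisition loop of steps 601 through 608 can be sketched as follows, using the 1, 6 and 36 ms example. This is a simplified illustration under stated assumptions: raw pixels are 10-bit, a stored value of zero means "no light sensed yet", the logarithm is scaled so that the largest possible scaled value maps to 255, and the names `build_lut` and `synthesize_hdr` are hypothetical. Offset, slope and linearity corrections are omitted.

```python
import math

EXPOSURES_MS = (1, 6, 36)
MAX_EXPOSURE_MS = max(EXPOSURES_MS)
FULL_SCALE = 1023 * MAX_EXPOSURE_MS  # largest linear value after scaling


def build_lut(exposure_ms, levels=1024):
    """Per-exposure table combining exposure scaling and log compression to 8 bits."""
    scale = MAX_EXPOSURE_MS / exposure_ms  # e.g. 36 for the 1 ms image
    lut = [0] * levels                     # raw 0 stays 0 ("no light sensed")
    for v in range(1, levels):
        code = round(255 * math.log(v * scale) / math.log(FULL_SCALE))
        lut[v] = max(1, code)              # keep any sensed light non-zero
    return lut


def synthesize_hdr(images):
    """images maps exposure in ms to a list of raw pixel values of equal length."""
    n = len(next(iter(images.values())))
    memory = [0] * n                        # step 601: zero the image memory
    for exposure in sorted(images):         # shortest exposure first
        lut = build_lut(exposure)
        for i, raw in enumerate(images[exposure]):
            if memory[i] == 0:              # step 603: keep lesser-sensitivity value
                memory[i] = lut[raw]        # steps 605 and 607
    return memory


images = {1: [0, 0, 500], 6: [0, 300, 1023], 36: [40, 1023, 1023]}
hdr = synthesize_hdr(images)
```

In this example the brightest pixel is taken from the 1 ms image, the middle pixel from the 6 ms image and the dimmest from the 36 ms image, for which the scale factor is 1.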

- the process may occur backwards, beginning with the highest sensitivity image.

- pixels that are saturated from the higher sensitivity images may be replaced by non-saturated pixels from lower sensitivity images.

- Multiple images may be taken at each sensitivity and averaged to reduce noise.

- Functions other than the log function may be used to compress the range of the image such as deriving a unity, normalized, factor.

- Bit depths other than 8-bits may be used to acquire and store the image such as 9-bits, 10-bits, 16-bits, 32-bits and 64-bits.

- methods other than varying the exposure time such as varying gain or A/D conversion parameters, may be used to alter the sensitivity of the acquired images.

- Dynamic range compression of image grayscale values may also occur in hardware, either as a feature provided on chip with the image sensor or through associated circuitry. This is especially beneficial when 10 bit or higher resolution A/D converters are provided, since many bus communication protocols, such as the SPI bus, typically transmit data in 8-bit words or multiples of 8 bits. Thus a 10-bit value would usually be transmitted as a 16-bit word and actually take twice the bandwidth and memory of an 8-bit value.

- the requirements for reading resolution are usually more closely aligned with constant percent of reading than with constant percent of full scale.

- the percentage change of a linearly encoded variable is a constant percent of full scale for each incremental step in the reading whereas the percentage change in the linear value corresponding to its logarithmically encoded counterpart is a constant percent of the linear reading for each incremental step in its associated log encoded value.

- linear encoding the incremental change for a small value which is close to zero is a very large percent of the reading or value and the incremental change for a large value which is close to full scale is a very small percent of the reading or value.

- the conversion is normally linear and must be converted or mapped to another form when such a conversion is needed. Unless it is stated otherwise, it will generally be assumed that incremental accuracy refers to values already in or converted back to their linear range.

- the incremental step is a large percentage of the reading and mapping these into readings where the incremental change in the associated linear value is smaller will result in single input values being mapped into multiple output values.

- An object of encoding values from a larger to a smaller set is to preserve necessary information with a smaller number of available bits or data points to encode the values. For example, in converting a 10 bit value to a compressed 8 bit value, the available number of data points drops by a factor of four from 1024 in the input set to 256 in the converted output set. To make effective use of the smaller number of available points, a given number of input codes in the larger input space should not in general map into a larger number of codes in the output space.

- the logarithm also has the desirable property that the effect of the application of a constant multiplier in the linear domain may be offset by the subtraction of the log of this multiplier in the log domain.
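
The multiplier property can be checked numerically. The sketch below assumes a base 2 logarithm scaled to 48 steps per octave; the helper name `log_code` and the sample values are illustrative.

```python
import math

STEPS_PER_OCTAVE = 48  # assumed scaling of the log code


def log_code(value):
    """Unrounded log-domain code for a positive linear value."""
    return STEPS_PER_OCTAVE * math.log2(value)


gain = 4      # constant multiplier applied in the linear domain
pixel = 100   # arbitrary linear pixel value

# Removing the gain is a subtraction in the log domain.
recovered = log_code(gain * pixel) - log_code(gain)
```

Here `recovered` equals `log_code(pixel)` up to floating point error, so an exposure or gain ratio can be compensated with a single subtraction instead of a division.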

- a variant of scientific notation may be used applying a multiplier and expressing the number as a value in a specified range times an integral power of this range.

- binary numbers it is normally most convenient to choose a range of two to one, an octave, and to express the number as a normalized value which spans one octave times a power of two. Then for the log range, depending on the output codes available, the number of output values per octave may be chosen.

- Rlrb(63) is approximately equal to 1.59% per increment which is close to 1.45% per increment for the logarithmic encoding making it a good place for the transition from linear one to one mapping to logarithmic mapping.
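
The two percentages quoted above follow from simple arithmetic, assuming 48 equal-ratio steps per octave for the logarithmic portion:

```python
# One count at a linear reading of 63, as a fraction of that reading.
linear_step_at_63 = 1 / 63

# Ratio between adjacent codes when one octave is split into 48 equal-ratio steps.
log_step = 2 ** (1 / 48) - 1

linear_percent = round(100 * linear_step_at_63, 2)
log_percent = round(100 * log_step, 2)
```

Below 64 the linear step is coarser than the logarithmic step as a percent of reading, so switching to logarithmic mapping earlier would map single input codes onto several output codes.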

- the log conversion for the octave from 64 through 127 maintains the one to one mapping of input to output through value 77.

- the same one octave linear to log conversion may be used for each of the four octaves.

- a variable which is greater than another in the output range assures that the same relation held for the related pair of values in the input range.

- Cameras which incorporate stepwise linear compression are known to the inventor as are cameras with sensing arrangements which have a nonlinear and perhaps logarithmic light sensing characteristic to achieve an extended range. Cameras which combine ranges so that part of the output range is linear and part is logarithmic are not known. No cameras for the headlamp dimmer application which incorporate any form of compression in the camera module are known to the inventor.

- The implementation described with reference to Figs. 9a and 9b is a combinatorial circuit, but sequential or asynchronous implementations are within the scope of the invention.

- Ten bit digital input signal in10[9:0] (901 ) is input to the circuit and the combinatorial output is eight bit signal out8[7:0] (902).

- one high range indication signal bd[4:0] is generated with one of the 5 lines of bd[4:0] high and the others zero for each of the input ranges as indicated.

- the input value ranges for in10[9:0] are shown in the first column in decimal as numbers without underscore separators or a 0x prefix.

- the output numbers prefixed by 0x are in hexadecimal format.

- Binary numbers in block 308 are indicated by an underscore separating each group of four binary 0 and 1 digits. These conventions will be used for each of the blocks in Figs. 9a and 9b.

- a range designation from 0 to 4 is shown in the middle column of block 903 and is for convenience since the range is referenced so often in the logic and in this description.

- Input values which are in range 0 are passed directly to output out8[7:0] without alteration.

- Each of the other four ranges spans one octave.

- the octave is taken to include the lowest number and the number two times this number is included with the next octave so that each of the octave related input values is, by this definition, included in exactly one octave.

- the value is scaled and, or, offset according to which range it is in and mapped into a 48 output value range using a common decoder block in the logic.

- the one octave 48 step logarithmically related output value is then scaled and, or, offset according to the range that the input value is in and directed to the output.

- the input value is scaled and, or, offset according to the range that it is in as indicated by the value of bd[4:0] and output as signal in9s[8:0] to the first block 908 of the logarithmic decoder.

- the logarithmic conversions are used for ranges 1 through 4 and due to the range classification criteria, the next higher bit which would be in10[6] to in10[9] for ranges 1 through 4, respectively, is always 1.

- this bit is always one and adds no variable information, it is omitted from the comparison and is also excluded as a leading tenth bit in the inverse log columns 3 and 6 of block 908.

- all nine of the variable bits are included in the comparison for the logarithmic conversion.

- the value is shifted left 1 as indicated by the multiply by 2 and a 1 is placed on the lsb, bit in9s[0].

- the 1 in bit zero by subjective comparison yielded the smoothest conversion result.

- the value is shifted left 2 places and binary 10 is placed in the two least significant bits to provide a smooth conversion result.

- Blocks 908, 909, and 910 are used to perform the 10 bit binary to 48 step per octave logarithmic conversion with 0 to 47 as the output log[5:0].

- Block 908 is a group of 48 compare functions used in the ensuing blocks in the conversion. The ge[x, in9s[8:0]] terms are true if and only if the 9 bit input in9s[8:0] is a value whose output log[5:0] is greater than or equal to x. These functions are useful because to test that an output log[5:0] for an input in9s[8:0] is in a range which is greater than or equal to a but less than b the following expression may be used:

- ge[x] will be used to mean the same thing as ge[x, in9s[8:0]].

- the value x in columns 1 and 4 is the index for the x th value of the

- ge[x, in9s[8:0]] is the function which represents the combinatorial logic function whose value is 1 if and only if in9s[8:0] is greater than or equal to the associated Inverse log(x) value shown in the third or sixth column of block 908. As indicated before, the msb which is 1 is not shown.

- the inverse log values may be generated by the equation

- exp(y) is the exponential function with the natural number e raised to the y th power and log(z) is the natural log of z.

- the most significant 1 bit is omitted in columns 3 and 6 of block 908.
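
The comparator bank can be modeled in software as below. The threshold formula is an assumption made for illustration: the x th inverse log value is taken as 512 times 2 raised to x/48, rounded, with the always-set most significant bit removed. The actual table of block 908 may differ, and the names `THRESHOLDS`, `ge`, `in_range` and `log_code` are hypothetical.

```python
STEPS = 48

# Assumed inverse log table for the top octave (512..1023), msb removed,
# so each entry fits in the nine variable bits of in9s[8:0].
THRESHOLDS = [round(512 * 2 ** (x / STEPS)) - 512 for x in range(STEPS)]


def ge(x, in9s):
    """True if and only if the log code for in9s is at least x."""
    return in9s >= THRESHOLDS[x]


def in_range(a, b, in9s):
    """True if the log code for in9s is at least a but less than b."""
    return ge(a, in9s) and not ge(b, in9s)


def log_code(in9s):
    """The ge terms form a thermometer code; the log code is its length minus one."""
    return sum(ge(x, in9s) for x in range(STEPS)) - 1
```

Because the thresholds are strictly increasing, the ge terms are true for every index up to the log code and false above it, which is what makes the two-term range test sufficient.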

- an optional gray code encoding stage is used and optionally, the encoding could be done directly in binary but would require a few more logic terms.

- the encoding for each of the six bits glog[0] through glog[5] of an intermediate gray code is performed with each of the glog bits being expressed as a function of ge[x] terms.

- the gray code was chosen because only one of the six bits in glog[5:0] changes for each successive step in the glog output value. This generates a minimal number of groups of consecutive ones to decode for consecutive output codes for each of the output bits glog[0] through glog[5].

- a minimal number of ge[x] terms are required in the logic expressions in column 2 of block 909.

- the gray code glog[5:0] input is converted to a binary log[5:0] output.
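
The single-bit-change property and the gray to binary conversion can be illustrated with the standard reflected binary gray code. Whether the circuit uses exactly this code is an assumption; the function names are hypothetical.

```python
def binary_to_gray(n):
    """Reflected binary gray code of a non-negative integer."""
    return n ^ (n >> 1)


def gray_to_binary(g):
    """Invert binary_to_gray by folding in every right shift of g."""
    n = 0
    while g:
        n ^= g
        g >>= 1
    return n


# Gray codes for the 48 log steps 0..47; each fits in six bits.
glog_codes = [binary_to_gray(step) for step in range(48)]
```

Adjacent entries of `glog_codes` differ in exactly one bit, so each output bit toggles at only a few thresholds and needs few ge[x] terms to express.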

- the number to add to log[5:0] to generate the appropriate log based output value for inputs in ranges 1 through 4 is generated.

- the hexadecimal range of the in10[9:0] value is listed in the first column and the number to add to bits 4 through 7 of olog[7:0] is indicated in hexadecimal format in the second column.

- the third column indicates the actual offset added for each of the ranges when the bit positions to which the value is added are accounted for.

- the direct linear encoding in10[5:0] zero padded in bits 6 and 7 is selected for inputs in range 0 and the logarithmically encoded value olog[7:0] is selected for the other ranges 1 through 4 to generate 8 bit output out8[7:0].

- Fig. 7 depicts the output 700a as a function of the input 700 of a data compression circuit such as the one detailed in the block diagram of Fig. 8.

- the input ranges extend in a first range from 0 to (not including) 701 and similarly in four one octave ranges from 701 to 702, from 702 to 703, from 703 to 704, and finally from 704 to 705.

- the first range maps directly into range 0 to (not including 701a) and the four one octave ranges map respectively into 48 output value ranges from 701a to 702a, from 702a to 703a, from 703a to 704a, and finally from 704a to 705a.

- the output for each of the four one octave output ranges is processed by a common input to log converter by first determining which range and thus which octave, if any, the input is in and then scaling the input to fit into the top octave from 704 to 705, then converting the input value to a 48 count 0-47 log based output.

- the offset at 701a, 702a, 703a, or 704a is then selectively added if the input is in the first, second, third or fourth octave, respectively.

- the direct linear output is selected and otherwise, the log based value calculated as just described is selected to create the output mapping depicted by curve 710.
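
The overall mapping of Fig. 7 can be approximated in software as follows. This is a sketch rather than the disclosed logic: it normalizes the input to its own octave instead of scaling into the top octave, and it takes the floor of a floating point logarithm instead of using the comparator table, so individual codes may differ slightly from those of the circuit. The name `compress_10_to_8` is hypothetical.

```python
import math

STEPS = 48  # output codes per octave


def compress_10_to_8(value):
    """Map a 10-bit value to 8 bits: linear below 64, 48 log steps per octave above."""
    if not 0 <= value <= 1023:
        raise ValueError("expected a 10-bit value")
    if value < 64:
        return value                          # range 0: passed through unchanged
    octave = value.bit_length() - 6           # 1..4 for 64..1023
    base = 64 << (octave - 1)                 # lowest input value of this octave
    step = int(STEPS * math.log2(value / base))
    step = min(step, STEPS - 1)               # guard against rounding at the top
    return 64 + STEPS * (octave - 1) + step   # add the per-octave offset


codes = [compress_10_to_8(v) for v in range(1024)]
```

The 64 linear codes plus four octaves of 48 codes account for all 256 output values, and the mapping is non-decreasing, so ordering comparisons made on compressed values agree with the uncompressed inputs.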

- Fig. 8 is a procedural form of the conversion detailed in the block diagram of Figs. 9a and 9b. In block 801 the range that the input is in is determined. In block 802 the value is pre-scaled and, or, translated to condition the value from the range that the input is in to use the common conversion algorithm. In block 803 the conversion algorithm is applied in one or in two or possibly more than two stages. In block 804, the compressed value is scaled and, or, translated so that the output value is appropriate for the range that the input is in. In block 806, the compression algorithm of blocks 801 through 804 is used if the range that the input is in is appropriate to the data and the value is output in block 807. Otherwise, an alternate conversion appropriate to the special range is output in block 806.

- Extraction of the light sources (also referred to as objects) from the image generated in step 501 is performed in step 502.

- the goal of the extraction operation is to identify the presence and location of light sources within the image and determine various properties of the light sources that can be used to characterize the objects as head lamps of oncoming vehicles, tail lamps of leading vehicles or other light sources.

- Prior-art methods for object extraction utilized a "seed-fill" algorithm that identified groups of connected lit pixels. While this method is largely successful for identifying many light sources, it occasionally fails to distinguish between multiple light sources in close proximity in the image that blur together into a single object.

- the present invention overcomes this limitation by providing a peak-detect algorithm that identifies the location of peak brightness of the light source. Thereby, two light sources that may substantially blur together but still have distinct peaks may be distinguished from one another.

- the steps shown proceed in a loop fashion scanning through the image. Each step is usually performed for each lit pixel.

- the first test 1001 simply determines if the currently examined pixel is greater than each of its neighbors. If not, the pixel is not a peak and processing proceeds to examine the next pixel 1008. Either orthogonal neighbors alone or diagonal and orthogonal neighbors are tested. Also, it is useful to use a greater-than-or-equal operation in one direction and a greater-than operation in the other. This way, if two neighboring pixels of equal value form the peak, only one of them will be identified as the peak pixel.
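
The neighbor test with asymmetric comparisons might be written as below. The sketch checks orthogonal neighbors only and assumes the pixel is not on the image border; which directions use greater-than-or-equal is an arbitrary choice made here for illustration.

```python
def is_peak(img, r, c):
    """Test 1001 for an interior pixel, orthogonal neighbors only.

    Uses >= against the upper and left neighbors and > against the lower
    and right neighbors, so a two-pixel plateau yields a single peak.
    """
    v = img[r][c]
    return (v >= img[r - 1][c] and v >= img[r][c - 1]
            and v > img[r + 1][c] and v > img[r][c + 1])


# Two equal pixels side by side form the peak; only one should be reported.
plateau = [
    [0, 0, 0, 0],
    [0, 5, 5, 0],
    [0, 0, 0, 0],
]
peaks = [(r, c) for r in (1,) for c in (1, 2) if is_peak(plateau, r, c)]
```

With this convention the right-hand pixel of a horizontal pair, or the lower pixel of a vertical pair, is the one reported.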

- If a pixel is greater than its neighbors, the sharpness of the peak is determined in step 1002. Only peaks with a gradient greater than a threshold are selected to prevent identification of reflections off of large objects such as the road and snow banks. The inventors have observed that light sources of interest tend to have very distinct peaks, provided the image is not saturated at the peak (saturated objects are handled in a different fashion discussed in more detail below). Many numerical methods exist for computing the gradient of a discrete sample set such as an image and are considered to be within the scope of the present invention. A very simple method benefits from the logarithmic image representation generated in step 501.

- the slope between the current pixel and the four neighbors in orthogonal directions two pixels away is computed by subtracting the log value of the current pixel under consideration from the log value of the neighbors. These four slopes are then averaged and this average used as the gradient value. Slopes from more neighbors, or neighbors at different distances away may also be used. With higher resolution images, use of neighbors at a greater distance may be advantageous.

- Once the gradient is computed, it is compared to a threshold in step 1003. Only pixels with a gradient larger than the threshold are considered peaks.
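
The simple gradient measure and threshold test can be sketched as follows. The sign convention is an assumption: the neighbor value is subtracted from the peak value so that a sharper peak gives a larger positive gradient. The function names and the sample image are illustrative.

```python
def peak_gradient(log_img, r, c, distance=2):
    """Average drop in log value from the peak to four orthogonal neighbors.

    Assumes the neighbors at the given distance lie inside the image.
    """
    center = log_img[r][c]
    neighbors = (
        log_img[r - distance][c],
        log_img[r + distance][c],
        log_img[r][c - distance],
        log_img[r][c + distance],
    )
    return sum(center - n for n in neighbors) / 4


def passes_gradient_test(log_img, r, c, threshold):
    """Step 1003: keep only peaks whose gradient exceeds the threshold."""
    return peak_gradient(log_img, r, c) > threshold


# 5x5 log image with a peak of 200 at the center.
log_img = [[0] * 5 for _ in range(5)]
log_img[2][2] = 200
log_img[0][2], log_img[4][2] = 100, 120
log_img[2][0], log_img[2][4] = 80, 100
gradient = peak_gradient(log_img, 2, 2)
```

Because the image is logarithmic, each difference corresponds to a brightness ratio, so the same threshold applies to dim and bright sources alike.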

- the centroid of a light source and, or, the brightness may be computed using a paraboloid curve fitting technique.

- the peak value is stored to a light list (step

- While the peak value alone may be used as an indicator of the light source brightness, it is preferred to use the sum of the pixel values in the local neighborhood of the peak pixel. This is beneficial because the actual peak of the light source may be imaged between two or more pixels, spreading the energy over these pixels, potentially resulting in significant error if only the peak is used. Therefore, the sum of the peak pixel plus the orthogonal and diagonal nearest neighbors is preferably computed. If logarithmic image representation is used, the pixel values must first be converted to a linear value before summing, preferably by using a lookup table to convert the logarithmic value to a linear value with a higher bit depth. Preferably this sum is then stored to a light list in step 1005 and used as the brightness of the light source.
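
A neighborhood brightness sum over a logarithmic image might be computed as below. The log scale is an assumption chosen for illustration (16 codes per octave, so that 8-bit codes expand to roughly 16-bit linear values); the names `ANTILOG_LUT` and `neighborhood_brightness` are hypothetical.

```python
STEPS_PER_OCTAVE = 16  # assumed log scale: code = 16 * log2(linear value)

# Lookup table converting an 8-bit log code to a linear value of higher bit depth.
ANTILOG_LUT = [round(2 ** (code / STEPS_PER_OCTAVE)) for code in range(256)]


def neighborhood_brightness(log_img, r, c):
    """Sum of linearized values over the 3X3 neighborhood of an interior peak pixel."""
    total = 0
    for dr in (-1, 0, 1):
        for dc in (-1, 0, 1):
            total += ANTILOG_LUT[log_img[r + dr][c + dc]]
    return total


log_patch = [
    [16, 16, 16],
    [16, 32, 16],
    [16, 16, 16],
]
brightness = neighborhood_brightness(log_patch, 1, 1)
```

Summing the log codes directly would multiply the underlying intensities rather than add them, which is why the conversion to linear values comes first.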

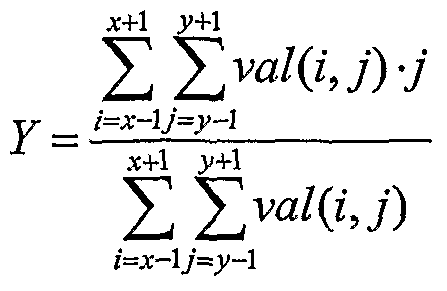

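- centroid location may be computed by the following formula, where val(i, j) is the pixel value at column i and row j and SUM is the 3X3 neighborhood sum:

$$X = \frac{\sum_{i=x-1}^{x+1} \sum_{j=y-1}^{y+1} val(i,j)\, i}{SUM}, \qquad Y = \frac{\sum_{i=x-1}^{x+1} \sum_{j=y-1}^{y+1} val(i,j)\, j}{SUM}$$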

- x is the x-coordinate of the peak pixel

- y is the y-coordinate of the peak pixel, and X and Y are the resulting centroid.

- neighborhoods other than the 3X3 local neighborhood surrounding the peak pixel may be used with the appropriate modification to the formula.
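
An intensity-weighted centroid over the 3X3 neighborhood can be sketched as follows. The weighted-average form is an assumption consistent with the neighborhood sum used for brightness; `centroid_3x3` is a hypothetical name and the pixel values are taken to be linear.

```python
def centroid_3x3(img, x, y):
    """Intensity-weighted centroid (X, Y) around interior peak pixel (x, y).

    img is indexed as img[row][column], i.e. img[y][x].
    """
    total = 0
    sum_x = 0
    sum_y = 0
    for j in range(y - 1, y + 2):
        for i in range(x - 1, x + 2):
            value = img[j][i]
            total += value
            sum_x += value * i
            sum_y += value * j
    return sum_x / total, sum_y / total


# Energy split evenly between the peak pixel and its right neighbor.
patch = [
    [0, 0, 0],
    [0, 4, 4],
    [0, 0, 0],
]
cx, cy = centroid_3x3(patch, 1, 1)
```

In this example the centroid lands halfway between the two lit pixels, giving sub-pixel position information that the peak pixel alone cannot.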

- the color of the light source is determined in step 1007.

- the red-to-white color ratio may be computed by computing the corresponding 3X3 neighborhood sum in the clear image and then dividing the red image brightness value by this number.

- only the pixel peak value in the red image may be divided by the corresponding peak pixel value in the clear image.

- each pixel in the 3X3 neighborhood may have an associated scale factor by which it is multiplied prior to summing. For example, the center pixel may have a higher scale factor than the neighboring pixels and the orthogonal neighbors may have a higher scale factor than the diagonal neighbors. The same scale factors may be applied to the corresponding 3X3 neighborhood in the clear image.
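
A weighted red-to-white ratio might be computed as in the sketch below. The particular weights are hypothetical and only follow the ordering suggested above (center highest, orthogonal next, diagonal lowest); the function names are illustrative and the pixel values are assumed to be linear.

```python
# Hypothetical scale factors: center > orthogonal neighbors > diagonal neighbors.
WEIGHTS = (
    (1, 2, 1),
    (2, 4, 2),
    (1, 2, 1),
)


def weighted_sum(img, r, c, weights=WEIGHTS):
    """Weighted 3X3 neighborhood sum around interior pixel (r, c)."""
    return sum(
        weights[dr + 1][dc + 1] * img[r + dr][c + dc]
        for dr in (-1, 0, 1)
        for dc in (-1, 0, 1)
    )


def red_to_white_ratio(red_img, clear_img, r, c):
    """Red image brightness divided by clear image brightness at the same location."""
    clear = weighted_sum(clear_img, r, c)
    if clear == 0:
        return float("inf")  # no clear-image signal to compare against
    return weighted_sum(red_img, r, c) / clear


red_patch = [[10] * 3 for _ in range(3)]
clear_patch = [[20] * 3 for _ in range(3)]
ratio = red_to_white_ratio(red_patch, clear_patch, 1, 1)
```

Passing a different `weights` table for the clear image is one way the lens misalignment calibration could be accommodated.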

- Misalignment in the placement of lens 201 over image array 204 may be measured during production test of devices and stored as a calibration factor for each system. This misalignment may be factored when computing the color ratio. This misalignment may be corrected by having different weighting factors for each pixel in the 3X3 neighborhood of the clear image as compared to that of the red image. For example, if there is a small amount of misalignment such that the peak in the clear image is ¼ pixel left of the peak in the red image, the left neighboring pixel in the clear image may have an increased scale factor and the right neighboring pixel may have a reduced scale factor. As before, neighborhoods of sizes other than 3X3 may also be used.

- color may be computed using conventional color interpolation techniques known in the art and "redness" or full color information may be utilized. Color processing may be performed on the entire image immediately following acquisition or may be performed only for those groups of pixels determined to be light sources. For example, consider an imaging system having a red/clear checkerboard filter pattern. The process depicted in Fig. 10 may be performed by considering only the red filtered pixels and skipping all the clear pixels. When a peak is detected, the color in step 1006 is determined by dividing the peak pixel value (that is a red filtered pixel) by the average of its four neighboring clear pixels. More pixels may also be considered, for example four-fifths of the average of the peak pixel plus its four diagonal neighbors (also red filtered) may be divided by the four clear orthogonal neighbors.

- Several other useful features may be extracted in step 502 and used to further aid the classification of the light source in step 504.

- the height of the light source may be computed by examining pixels in increasing positive and negative vertical directions from the peak until the pixel value falls below a threshold that may be a multiple of the peak, ½ of the peak value for example.

- the width of an object may be determined similarly.
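
The height measurement can be sketched as below, using half of the peak value as the threshold. The function name `light_height` and the choice to count the peak pixel itself are assumptions; width would be measured the same way along a row.

```python
def light_height(img, r, c, fraction=0.5):
    """Count vertically contiguous pixels around the peak that stay at or above
    the given fraction of the peak value, including the peak pixel itself."""
    threshold = img[r][c] * fraction
    top = r
    while top - 1 >= 0 and img[top - 1][c] >= threshold:
        top -= 1
    bottom = r
    while bottom + 1 < len(img) and img[bottom + 1][c] >= threshold:
        bottom += 1
    return bottom - top + 1


# Single-column image: peak of 100 at row 2.
column = [[0], [60], [100], [55], [10]]
height = light_height(column, 2, 0)
```

Here rows 1 through 3 remain at or above half of the peak, so the measured height is three pixels.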

- a "seed-fill" algorithm may also be implemented to determine the total extents and number of pixels in the object.
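
The height, width, and seed-fill measurements described above can be sketched as follows. This is a minimal illustration, assuming a list-of-rows image, a threshold of half the peak value, and 4-connected filling; the function names are hypothetical.

```python
def run_length(image, row, col, d_row, d_col, fraction=0.5):
    """Count pixels in one direction from the peak that stay at or above
    fraction * peak; stop at the first pixel that falls below it."""
    threshold = image[row][col] * fraction
    count = 0
    r, c = row + d_row, col + d_col
    while (0 <= r < len(image) and 0 <= c < len(image[0])
           and image[r][c] >= threshold):
        count += 1
        r, c = r + d_row, c + d_col
    return count


def height_and_width(image, row, col, fraction=0.5):
    """Height and width of the light source in pixels, including the peak."""
    height = 1 + run_length(image, row, col, -1, 0, fraction) \
               + run_length(image, row, col, 1, 0, fraction)
    width = 1 + run_length(image, row, col, 0, -1, fraction) \
              + run_length(image, row, col, 0, 1, fraction)
    return height, width


def seed_fill(image, row, col, fraction=0.5):
    """Set of 4-connected pixels, grown from the peak, at or above threshold."""
    threshold = image[row][col] * fraction
    stack, filled = [(row, col)], set()
    while stack:
        r, c = stack.pop()
        if (r, c) in filled:
            continue
        if not (0 <= r < len(image) and 0 <= c < len(image[0])):
            continue
        if image[r][c] < threshold:
            continue
        filled.add((r, c))
        stack.extend([(r - 1, c), (r + 1, c), (r, c - 1), (r, c + 1)])
    return filled
```

The number of pixels in the object is `len(seed_fill(...))`, and the total extents follow from the minimum and maximum row and column in the returned set.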

- The above-described algorithm has many advantages, including being fairly computationally efficient. In the case where only immediate neighbors and two row or column distant neighbors are examined, only four rows plus one pixel of the image are required. Therefore, analysis may be performed as the image is being acquired or, if sufficient dynamic range is present from a single image, only enough image memory for this limited amount of data is needed. Other algorithms for locating peaks of light sources in the image may also be utilized. For example, the seed fill algorithm used in the prior art may be modified to only include pixels that are within a certain brightness range of the peak, thus allowing discrimination of nearby light sources with at least a reasonable valley between them. A neural-network peak detection method is also discussed in more detail herein.

- Any single pixel that is either saturated or exceeds a maximum brightness threshold may be identified as a light source, regardless of whether it is a peak or not. In fact, for very bright lights, the entire process of Fig. 5 may be aborted and high beam headlights may be switched off. In another alternative, the sum of a given number of pixels neighboring the currently examined pixel is computed.

- If this sum exceeds a high-brightness threshold, the pixel is immediately identified as a light source, or control is aborted and the high beam headlights are dimmed.

- Normally, two conditions are used to qualify pixels as peaks: the pixel must be greater than (or greater than or equal to) its neighbors and, or, the gradient must be above a threshold. For saturated pixels, the gradient condition may be skipped since the gradient may not be accurately computed when pixels are saturated.
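
A minimal Python sketch of the peak qualification logic follows. It assumes 8-bit pixels (saturation at 255), uses the "greater than or equal to" form of the neighbor test, and defines the gradient as the peak value minus the brightest pixel two rows or columns away. That gradient definition and the function name are assumptions for illustration only.

```python
SATURATION = 255  # assumed 8-bit pixel range


def is_peak(image, row, col, gradient_threshold, saturation=SATURATION):
    """Qualify a pixel as a peak. The pixel must lie at least two pixels
    inside the image border."""
    value = image[row][col]

    # Condition 1: no immediate neighbor is brighter than the pixel.
    for d_row in (-1, 0, 1):
        for d_col in (-1, 0, 1):
            if (d_row, d_col) != (0, 0) and image[row + d_row][col + d_col] > value:
                return False

    # Saturated pixels skip the gradient test, since a clipped value
    # does not give a reliable gradient.
    if value >= saturation:
        return True

    # Condition 2 (assumed definition): drop from the peak to the brightest
    # pixel two rows or columns away must exceed the threshold.
    distant = [image[row - 2][col], image[row + 2][col],
               image[row][col - 2], image[row][col + 2]]
    return value - max(distant) > gradient_threshold
```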

- Significant clues useful for the discrimination of vehicular light sources from other light sources may be gained by monitoring the behavior of light sources over several cycles.

- In step 503, light sources from prior cycles are compared to light sources from a current cycle to determine the motion of light sources, the change in brightness of light sources, and, or, the total number of cycles for which a light source has been detected. While such analysis is possible by storing several images over time and then comparing the light sources within these images, current memory limitations of low-cost processors make it more appealing to create and store light lists. Nevertheless, storing the entire image, or portions thereof, is within the scope of the present invention and should be considered an alternate approach.

- The process in Fig. 11 occurs for all light sources from the current cycle. Each light from the current cycle is compared to all lights from the prior cycle to find the most likely parent, if any.

- In step 1101, the distance between the light source in the current cycle and the light source from the prior cycle (hereafter called current light and prior light) is computed by subtracting their peak coordinates and is then compared to a threshold in step 1102. If the prior light is farther away than the threshold, control proceeds to step 1105 and the next prior light is examined.

- The threshold in step 1102 may be determined in a variety of ways, including being a constant threshold or a speed and/or position dependent threshold, and may take into account vehicle turning information if available.

- In step 1103, the distance between the prior light and current light is checked to see if it is the minimum distance to all prior lights checked so far. If so, this prior light is the current best candidate for identification as the parent.

- Another factor in the determination of a parent light source is a comparison of a color ratio characteristic of the light sources in the two images and, or, a comparison to a color ratio threshold. It is also within the scope of the present invention to utilize a brightness value in the determination of a parent light source. As indicated in step 1105, this process continues until all lights from the prior cycle are checked. Once all prior lights are checked, step 1106 determines if a parent light was found from the prior cycle light list. If a parent is identified, various useful parameters may be computed.

- The motion vector is computed as the X and Y peak coordinate differences between the current light and the parent.

- The brightness change in the light source is computed in step 1108 as the difference between the brightness of the current light and that of the parent light.

- The age of the current light, defined as the number of consecutive cycles for which the light has been present, is set to the age of the parent light plus one.
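
The parent search of Fig. 11 and the derived parameters can be sketched in Python as below. This is a simplified illustration that matches on distance alone, with a constant threshold and Euclidean distance; both are assumptions, as are the `Light` record and the function names. Color ratio and brightness comparisons are omitted.

```python
import math
from dataclasses import dataclass
from typing import List, Optional, Tuple


@dataclass
class Light:
    x: int                      # peak column
    y: int                      # peak row
    brightness: float
    age: int = 1                # consecutive cycles the light has been seen
    motion: Tuple[int, int] = (0, 0)
    brightness_change: float = 0.0


def find_parent(current: Light, prior_lights: List[Light],
                max_distance: float) -> Optional[Light]:
    """Return the closest prior light within max_distance, or None."""
    best, best_distance = None, None
    for prior in prior_lights:                      # loop closed by step 1105
        distance = math.hypot(current.x - prior.x,  # step 1101
                              current.y - prior.y)
        if distance > max_distance:                 # step 1102
            continue
        if best is None or distance < best_distance:  # step 1103
            best, best_distance = prior, distance
    return best


def inherit_from_parent(current: Light, parent: Optional[Light]) -> None:
    """Fill in motion vector, brightness change and age (step 1106 onward)."""
    if parent is None:
        return  # a new light keeps age 1 and zero motion
    current.motion = (current.x - parent.x, current.y - parent.y)
    current.brightness_change = current.brightness - parent.brightness
    current.age = parent.age + 1
```

Because each current light searches the prior list independently, two current lights may select the same parent.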

- Averages of the motion vector and the brightness change may prove more useful than the instantaneous change between two cycles, due to noise and jittering in the image. Averages can be computed by storing information from more than one prior cycle and determining grandparent and great-grandparent, etc. light sources. Alternatively, a running average may be computed, alleviating the need for storage of multiple generations.

- The running average may, for example, take a fraction (e.g. 1/3) of the current motion vector or brightness change plus another fraction (e.g. 2/3) of the previous average and form a new running average.
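
As a worked illustration of the running average, the sketch below uses the example fractions from the text (1/3 of the current value, 2/3 of the previous average). The function names are hypothetical.

```python
def running_average(previous_average, current_value, weight=1.0 / 3.0):
    """Blend the current value into the previous average."""
    return weight * current_value + (1.0 - weight) * previous_average


def running_average_vector(previous_average, current_vector, weight=1.0 / 3.0):
    """Apply the same blend to each component of a motion vector."""
    return tuple(running_average(p, c, weight)
                 for p, c in zip(previous_average, current_vector))
```

For example, a previous average brightness change of 3 and a current change of 6 give a new average of 6/3 + 2·3/3 = 4, and only the single averaged value needs to be stored with each light.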

- Light lists containing the position information and possibly other properties, such as the brightness and color of detected light sources, may be stored for multiple cycles. This information may then be used for the classification of the objects from the current cycle in step 504.

- Determination of the most likely prior light source as the parent may also consider properties such as the brightness difference between the current light source and the prior light source, the prior light source's motion vector, and the color difference between the light sources.

- Two light sources from the current cycle may have the same parent. This is common when a pair of head lamps is originally imaged as one light source but, upon coming closer to the controlled vehicle, splits into two distinct objects.

- Classification step 504 utilizes the properties of light sources extracted in step 502 and the historical behavior of light sources determined in step 503 to distinguish head lamps and tail lamps from other light sources. In summary, the following properties have been identified thus far: peak brightness, total brightness, centroid location, gradient, width, height and color. The following historical information may also be used: motion vector (x & y), brightness change, motion jitter, age, average motion vector and average brightness change.

- Vehicle state parameters may be utilized to improve classification. These may include: vehicle speed, light source brightness that corresponds to the controlled vehicle's exterior light brightness (indicative of reflections), ambient light level, vehicle turn rate (from image information, steering wheel angle, compass, wheel speed, GPS, etc.), lane tracking system, vehicle pitch or yaw, and geographic location or road type (from GPS). Although specific uses for individual parameters may be discussed, the present invention should not be construed as limited to these specific implementations.

- The goal of the present invention is to provide a generalized method of light source classification that can be applied to any, or all, of the above listed parameters or additional parameters for use in identifying objects in the images.

- The classification of light sources may be supplemented by information from sources other than the image processing system, such as radar detection of objects, for example.

- An example classification scheme proceeds in accordance with Fig. 12.

- The control sequence of Fig. 12 repeats for each light source identified in the current cycle as indicated in 1212.

- The brightness of the light source is compared to an immediate dim threshold. If the brightness exceeds this threshold, indicating that a very bright light has been detected, the processing of Fig. 12 concludes and the high beams are reduced in brightness, or the beam pattern otherwise modified, if not already off. This feature prevents any possible misclassification of very bright light sources and ensures a rapid response to those that are detected.

- Step 1202 provides for the discrimination of street lights by detecting a fast flickering in intensity of the light sources, which is not visible to humans, resulting from their AC power source. Vehicular lights, which are powered from a DC source, do not exhibit this flicker. Flicker may be detected by acquiring several images of the region surrounding the light source at a frame rate that is greater than the flicker rate, preferably at 240 Hz and most preferably at 480 Hz. These frames are then analyzed to detect an AC component and those lights exhibiting flicker are ignored (step 1203). Additionally, a count, or average density, of streetlights may be derived to determine if the vehicle is likely traveling in a town or otherwise well lit area.
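
One way to detect the AC component is to correlate the brightness samples of a light source against a sinusoid at the expected flicker frequency. The sketch below assumes a 480 Hz frame rate, a 120 Hz flicker (intensity ripple at twice a 60 Hz mains frequency), and a 5% relative-amplitude threshold. The flicker frequency, the threshold, and the function names are illustrative assumptions, not values taken from the text.

```python
import math


def ac_fraction(samples, sample_rate_hz, flicker_hz):
    """Amplitude of the component at flicker_hz, relative to mean brightness."""
    n = len(samples)
    mean = sum(samples) / n
    if mean == 0:
        return 0.0
    step = 2.0 * math.pi * flicker_hz / sample_rate_hz
    # Single-frequency discrete Fourier sum on the mean-removed signal.
    real = sum((s - mean) * math.cos(step * i) for i, s in enumerate(samples))
    imag = sum((s - mean) * math.sin(step * i) for i, s in enumerate(samples))
    amplitude = 2.0 * math.hypot(real, imag) / n
    return amplitude / mean


def is_flickering(samples, sample_rate_hz=480.0, flicker_hz=120.0,
                  threshold=0.05):
    """True when the light shows an AC component above the threshold."""
    return ac_fraction(samples, sample_rate_hz, flicker_hz) > threshold
```

The estimate is cleanest when the sample window spans a whole number of flicker periods, for example 16 frames at 480 Hz for a 120 Hz component.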

- A minimum redness threshold criterion is determined with which the color is compared in step 1204. It is assumed that all tail lamps will have a redness that is at least as high as this threshold. Light sources that exhibit redness greater than this threshold are classified through a tail lamp classification network in step 1205.

- The classification network may take several forms. Most simply, the classification network may contain a set of rules and thresholds to which the properties of the light source are compared.

- Thresholds for brightness, color, motion and other parameters may be experimentally measured for images of known tail lamps to create these rules. These rules may be determined by examination of the probability distribution function of each of the parameters, or combinations of parameters, for each classification type. Frequently, however, the number of variables and the combined effect of multiple variables make generating the appropriate rules complex.

- The motion vector of a light source may, in itself, not be a useful discriminator of a tail lamp from another light source.

- A moving vehicle may exhibit the same vertical and horizontal motion as a street sign. However, the motion vector viewed in combination with the position of the light source, the color of the light source, the brightness of the light source, and the speed of the controlled vehicle, for example, may provide an excellent discriminator.

- Probability functions are employed to classify the individual light sources.

- The individual probability functions may be first, second, third or fourth order equations.

- The individual probability functions may contain a combination of terms that are derived from either first, second, third or fourth order equations intermixed with one another.

- The given probability functions may have unique multiplication weighting factors associated with each term within the given function.

- The multiplication weighting factors may be statistically derived by analyzing images containing known light sources and, or, obtained during known driving conditions. Alternatively, the multiplication weighting factors may be derived experimentally by analyzing various images and, or, erroneous classifications from empirical data.

- The output of the classification network may be either a Boolean, true-false, value indicative of a tail lamp or not a tail lamp or may be a substantially continuous function indicative of the probability of the object being a tail lamp.

- The same is applicable with regard to headlamps.
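
A weighted polynomial probability function of the kind described above might look like the following sketch. The feature names, weights, bias term, and the clamping of the result to the range 0 to 1 are all hypothetical choices made for illustration; in practice the weights would come from statistical or experimental analysis of known light sources.

```python
def probability(features, terms, bias=0.0):
    """Sum of weighted polynomial terms, clamped to [0, 1].

    features: dict mapping a property name to its value.
    terms: list of (property name, order 1..4, weighting factor) tuples,
           so terms of different orders may be intermixed.
    """
    total = bias
    for name, order, weight in terms:
        total += weight * features[name] ** order
    return min(1.0, max(0.0, total))


def is_tail_lamp(features, terms, bias=0.0, threshold=0.5):
    """Boolean form of the output: compare the probability to a threshold."""
    return probability(features, terms, bias) >= threshold
```

With the illustrative terms `[("redness", 1, 0.2), ("brightness", 2, 0.4)]`, a bias of 0.1, a redness of 2.0 and a brightness of 0.5, the function gives 0.1 + 0.2·2.0 + 0.4·0.25 = 0.6.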

- Substantially continuous output functions are advantageous because they give a measure of confidence that the detected object fits the pattern associated with the properties and behavior of a head lamp or tail lamp.

- This probability, or confidence measure, may be used to variably control the rate of change of the controlled vehicle's exterior lights, with a higher confidence causing a more rapid change.

- A probability, or confidence, measure threshold other than 0% and 100% may be used to initiate automatic control activity.
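
The use of a confidence measure to set the rate of change can be sketched as below. The linear relationship, the 30% activation threshold, and the maximum step of 25% of full brightness per cycle are assumed values chosen only to illustrate the idea.

```python
def dimming_step(confidence, start_threshold=0.3, max_step=0.25):
    """Fraction of full brightness to remove from the high beams this cycle.

    Below start_threshold no control activity is initiated; above it, a
    higher confidence produces a larger step and therefore a faster change.
    """
    if confidence < start_threshold:
        return 0.0
    return max_step * confidence


def next_high_beam_level(level, confidence, start_threshold=0.3, max_step=0.25):
    """New high beam level in [0, 1] after one control cycle."""
    return max(0.0, level - dimming_step(confidence, start_threshold, max_step))
```

Calling `next_high_beam_level` once per cycle fades the high beams gradually for a marginal detection and quickly for a confident one.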

- An excellent classification scheme that considers these complex variable relationships is implemented as a neural network.