Description

METHOD AND APPARATUS FOR MACHINE PROCESSING USING WAVELET/WAVELET-PACKET BASES

FIELD OF THE INVENTION

This invention relates to apparatus and techniques for machine processing of values representative of physical quantities. The invention was made with Government support under grant numbers N00014-88-K0020, N00014-89-J1527 and N00014-86-K0310 awarded by the Office of Naval Research of the Department of Defense. The U.S. Government has certain rights in the invention.

BACKGROUND OF THE INVENTION

There are many practical situations where it is necessary or desirable to apply an operator, representable by a matrix of two or more dimensions, to a set of values, representable by a vector (or by another matrix, which can be visualized as a group of vectors). As one example, consider the situation in weather prediction when one has input readings of wind velocities and needs to compute a map of pressure distributions from which subsequent weather patterns can be forecast. The wind velocities can be represented as a vector, and an operator, which may be a matrix in the form of a generalized Hilbert transform, can be applied to the vector to obtain the desired pressure distributions. For a typical weather prediction problem, the matrix will be large, and multiplying the vector by the matrix will require an enormous number of computations. For example, for a vector with N elements and an NxN matrix, the multiplication will require on the order of $O(N^2)$ computations. If the input is a group of vectors viewed as another NxN matrix, the multiplication of the two matrices will require on the order of $O(N^3)$ operations. When it is considered that the input vector may be of a dynamic nature (e.g., the input data concerning wind velocities constantly changing), the computational task can be overwhelming.

It is among the objects of the present invention to provide a technique and apparatus to ease the computational burden in the machine processing of values representative of physical quantities. It is among the further objects of the invention to provide a technique and apparatus which achieves a saving of storage space and/or bandwidth when information is stored and/or transmitted.

SUMMARY OF THE INVENTION

The present invention is based, in part, on the fact that many vectors and matrices encountered in practical numerical computation of physical problems are in some sense smooth; i.e., their elements are smooth functions of their indices. As will become understood, an aspect of the invention involves, inter alia, transforming vectors and matrices and converting to a sparse form.

The invention is applicable for use in a machine processing system such as a digital computer. A received first set of values is representative of any physical quantities and a second received set of values is representative of an operator. The first set of values may represent, for example, a measured or generated signal as a function of time, position, temperature, pressure, intensity, or any other index or parameter. The values may also represent, for example, coordinate positions, size, shape, or any other numerically representable physical quantities. These examples are not intended to be limiting, and others could be set forth. The first set of values can be representable as a one dimensional vector or, for example, any number or combination of vectors that can be visualized or treated (e.g. sequentially or otherwise) as a matrix of two or more dimensions. The second set of values may represent any operator to be applied to the first set of values. Examples, again not limiting, would be a function or, like the first set of values, an arrangement representative of physical quantities. For most advantageous use of the present invention, the operator values should preferably be substantially smoothly varying; that is, with no more than a small fraction of sharp discontinuities in the variation between adjacent values.

An embodiment of the invention is a method for applying said operator to said first set of values, and comprises the following steps: transforming the first set of values, on a wavelet/wavelet-packet basis, into a transformed vector; transforming the second set of values, on a wavelet/wavelet-packet basis, into a transformed matrix; performing a thresholding operation on the transformed matrix to obtain a processed transformed matrix; multiplying the transformed vector by the processed transformed matrix to obtain a product vector; and back-transforming, on a wavelet/wavelet-packet basis, the product vector. The thresholding operation may also be performed on the transformed vector.

As used herein, wavelets are zero mean value orthogonal basis functions which are non-zero over a limited extent and are used to transform an operator by their application to the operator in a finite number of scales (dilations) and positions (translations) to obtain transform coefficients. [In the computational context, very small non-zero values may be treated as zero if they are known not to affect the desired accuracy of the solution to a problem.] Reference can be made, for example, to: A. Haar, Zur Theorie der orthogonalen Funktionensysteme, Math. Annalen 69 (1910); K.G. Beauchamp, Walsh Functions And Their Applications, Academic Press (1975); I. Daubechies, Orthonormal Bases of Compactly Supported Wavelets, Comm. Pure Appl. Math. XLI (1988). Wavelet-packets, which are described in U.S. Patent Application Serial No. 525,973, are obtained from combinations of wavelets. Back-transforming, or reverse transforming, is an operation of a type opposite to that of obtaining transform coefficients; namely, one wherein transform coefficients are utilized to reconstruct a set of values. As used herein, a wavelet/wavelet-packet basis means that either wavelets or wavelet-packets or both are used to obtain the transform or back-transform, as the case may be.
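The sequence of steps recited above (transform the vector, transform the matrix, threshold, multiply, back-transform) can be illustrated with a minimal sketch. It is not the routine of the disclosure: it assumes orthonormal Haar wavelets, a row-then-column matrix transform, and a vector length that is a power of two, and the names `haar`, `inverse_haar`, `transform_matrix` and `apply_operator` are hypothetical.

```python
import math

ROOT2 = math.sqrt(2.0)

def haar(values):
    """Orthonormal Haar transform: differences (finest level first), then one sum."""
    out, cur = [], list(values)
    while len(cur) > 1:
        out += [(cur[i] - cur[i + 1]) / ROOT2 for i in range(0, len(cur), 2)]
        cur = [(cur[i] + cur[i + 1]) / ROOT2 for i in range(0, len(cur), 2)]
    return out + cur

def inverse_haar(coeffs):
    """Back-transform: rebuild the values from the layout produced by haar()."""
    cur, pos = [coeffs[-1]], len(coeffs) - 1
    while pos > 0:
        diffs = coeffs[pos - len(cur):pos]   # differences of the current level
        pos -= len(cur)
        cur = [x for s, d in zip(cur, diffs)
               for x in ((s + d) / ROOT2, (s - d) / ROOT2)]
    return cur

def transform_matrix(K):
    """Transform every row, then every column of the result."""
    rows = [haar(r) for r in K]
    cols = [haar(c) for c in zip(*rows)]
    return [list(r) for r in zip(*cols)]

def apply_operator(K, f, eps):
    """Approximate K applied to f using a thresholded transformed matrix."""
    Kw = transform_matrix(K)
    # Thresholding: discard entries whose magnitude is below eps.
    Kwp = [[v if abs(v) >= eps else 0.0 for v in row] for row in Kw]
    fw = haar(f)
    # Multiply, skipping the entries removed by thresholding.
    product = [sum(v * x for v, x in zip(row, fw) if v != 0.0) for row in Kwp]
    return inverse_haar(product)
```

With `eps` set to zero the result agrees with the conventional product up to rounding; raising `eps` removes more entries and trades accuracy for fewer multiplications. In repeated use, the transformed and thresholded matrix would be computed once and reused rather than rebuilt on every call as in this sketch.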

In a described embodiment of the invention, the step of transforming the first set of values, on a wavelet/wavelet-packet basis, comprises generating difference values and at least one sum value, the difference values being obtained by taking successively coarser differences among the first set of values. In this embodiment, the step of transforming the second set of values, on a wavelet/wavelet-packet basis, also comprises generating difference values and at least one sum value, the difference values being obtained by taking successively coarser differences among the second set of values.

In a first form of the described embodiment (also referred to as the "non-standard" form), the transformed vector has a number of sum values that is substantially equal to the number of difference values generated from the first set of values, the sum values being generated by taking successively coarser sums among said first set of values. In this first form, said at least one sum value of the transformed matrix consists of a single sum value for the second set of values, the single sum value being an average of substantially all the second set of values.

In a second form of the described embodiment (also referred to as the "standard form"), said at least one sum value of the transformed vector consists of a single sum value, the single sum value being an average of substantially all the first set of values. In this second form, the at least one sum value of the transformed matrix consists of a single row of sum values.

In the described embodiments, the difference and sum values may be weighted sum and difference values.

A typical output as obtained using the techniques hereof is close to the vector that would have been obtained by conventionally multiplying the original vector by the operator matrix. The closeness of the approximation will depend on the thresholding performed on the transformed matrix. As the threshold is raised (which tends to remove more non-zero values from the transformed matrix) the approximation becomes less accurate. However, as will be demonstrated, for reasonable threshold values that strikingly sparsify the transformed matrix and permit commensurate reduction in computational requirements, the approximation is quite good. Indeed, as will also be demonstrated, the reduction in overall error which results from the reduced number of computations (and, therefore, reduced accumulation of rounding error from individual computations) tends to offset somewhat the approximation errors that result from thresholding. As will become better understood, for applications where the operator is to be utilized repetitively, so that computation of the transformed matrix need only be performed once, there is a striking saving of computational cost when processing each input vector.

The practical applications of the invention are many and varied, and the following summarizes a few exemplary fields wherein the techniques and apparatus of the invention can be employed: processing and enhancement of audio and video information; processing of seismic and well logging information to obtain measurements and models of earth formations; medical imaging processing; optical processing; calculation of potentials and fluid flow; and computation of optimum design of structural parts. Substantial saving of storage space and/or bandwidth can also be achieved when the thresholded matrix and/or vector is stored and/or transmitted.

Further features and advantages of the invention will become more readily apparent from the following detailed description when taken in conjunction with the accompanying drawings.

BRIEF DESCRIPTION OF THE DRAWINGS

Fig. 1 is a block diagram of a system which can be used to implement an embodiment of the invention and to practice an embodiment of the method of the invention.

Fig. 2 is a diagram of positions in a concert hall which is useful in gaining initial understanding of an application of the invention.

Fig. 3 illustrates a vector transformed on a wavelet basis and a matrix transformed on a wavelet basis in accordance with a first form of an embodiment of the invention.

Fig. 4 shows groupings of matrix component values utilized in an illustration of the first form of an embodiment of the invention.

Fig. 5 shows the polarities of the group members used to obtain the different types of matrix component values for the first form of matrix transformation in the disclosed embodiment.

Figs. 6A, 6B and 6C illustrate the matrix and vector transformations, on a wavelet basis, for a second form of an embodiment of the invention.

Fig. 7 is a flow diagram of a routine for programming a processor to implement an embodiment of the invention.

Fig. 8 is a flow diagram of the routine for transforming a vector on a wavelet basis.

Fig. 9 is a flow diagram of the routine for transforming a matrix on a wavelet basis using the first form of an embodiment of the invention.

Fig. 10 is a flow diagram of the routine for transforming a matrix on a wavelet basis using the second form of an embodiment of the invention.

Fig. 11 is a flow diagram of the routine for threshold processing a transformed matrix or vector.

Fig. 12 is a flow diagram of a routine for applying a matrix operator to a vector.

Fig. 13 is a flow diagram of a routine for reconstructing a vector, on a wavelet basis, for a first form of an embodiment of the invention.

Fig. 14 is a flow diagram of a routine for reconstructing a vector, on a wavelet basis, for a second form of an embodiment of the invention.

Fig. 15 is an illustration of a type of wavelet that can be utilized in an embodiment of the invention.

Fig. 16 is an illustration of a transformed threshold-processed matrix, of the first form, for the operator of Example 1.

Fig. 17 is an illustration of a transformed threshold-processed matrix, of the second form, for the operator of Example 1.

Fig. 18 is an illustration of a transformed threshold-processed matrix, of the first form, for the operator of Example 2.

Fig. 19 is an illustration of a transformed threshold-processed matrix, of the second form, for the operator of Example 2.

Fig. 20 is a diagram of positions in a concert hall which is useful in understanding another application of the invention.

Figs. 20-24 are diagrams illustrating further applications of the invention.

Figs. 25A and 25B are diagrams illustrating conversion from the first form to the second form.

DESCRIPTION OF THE PREFERRED EMBODIMENTS

Referring to Fig. 1, there is shown a block diagram of a system 100 which can be used to implement an embodiment of the invention and to practice an embodiment of the method of the invention when a processor 110 of the system is programmed in the manner to be described. The processor 110 may be any suitable processor, for example an electronic digital or analog processor or microprocessor. It will be understood that any general purpose or special purpose processor, or other machine that can perform the computations described herein, electronically, optically, or by other means, can be utilized. The processor 110, which for purposes of the particular described embodiments hereof can be considered as the processor or CPU of a general purpose electronic digital computer, such as a SUN-3/50 Computer, sold by Sun Microsystems, will typically include memories 125, clock and timing circuitry 130, input/output functions 135 and display functions 140, which may all be of conventional types. Data 150, which is either already in digital form, or is converted to digital form by suitable sampling and analog-to-digital converter 145, is input to the processor 110. The data 150 is illustrated as a first set of values f1, f2, f3..., which can be considered as a vector f. The set of values can represent any physical quantity. For example, the values may represent a measured or generated signal, as a function of time, position, temperature, pressure, intensity, or any other index or parameter. The values may also represent coordinate positions, size, shape, or any other numerically representable physical quantities. Specific examples will be treated later. The values 150 can be representable as a one dimensional vector or, for example, any number or combination of vectors that can be visualized or treated (e.g. sequentially) as a matrix of two or more dimensions.

Also input to the processor 110 is data 160 representative of a second set of values, depicted in Fig. 1 by a matrix K, that represents an operator to be applied to the vector f. For most advantageous use of the present invention, the operator values should preferably be substantially smoothly varying; that is, with no more than a small fraction of sharp discontinuities in the variation between adjacent values. The data 160 may comprise the actual operator to be applied to the data 150 or, for example, data from which an appropriate operator can be computed by processor 110.

After processing in accordance with the invention, an output set of values F1, F2, F3..., illustrated in Fig. 1 as a vector F, is produced, the vector F being a useful approximation of the operator K applied to the input vector f. In accordance with a feature of the invention, and as will be described further, the number of computations needed for effective application of the operator to the input vector is reduced, thereby saving time, memory requirements, bandwidth, cost, or a combination of these.

Various examples of applications of the present invention will be described hereinbelow, but for purposes of initially understanding an application of the invention a simplified example will be set forth. Consider the arrangement of Fig. 2 which shows a sound source 210 that may be, for example, a musical instrument. An acoustic transducer, such as a microphone 220, is located at a first position (1) within a concert hall 200. The acoustic signal received by the transducer 220 is a signal A1(t), represented by the sketch in Fig. 2. The signal A1(t) can be sampled over a particular time interval, and at a suitable rate, to obtain digital samples representable as a vector f, having values f1, f2, f3... . Assume that one wants to generate, from the signal A1(t), an acoustic signal that represents the sound as heard at another position (2) in the hall. The task is fairly complex in that the sound waves have many reflective paths (in addition to the direct paths) of travel in the hall, with different characteristic arrival times and phases. The relationship of the signal at location 2 with respect to the signal at location 1 will be determined by the positions of 1 and 2 with respect to the source 210, and by the shape of the hall 200. It is known that the relationship is approximated by an operator which can be represented by a matrix in two or more dimensions. The vector can be multiplied by the operator matrix to obtain an output vector which, after digital-to-analog conversion, will approximately represent the sound segment heard at location 2. For a matrix of substantial size, multiplying each input vector (representative of a segment of sampled acoustic signal) by the operator matrix will require a relatively large number of computations. For example, assume that the acoustic signal vector has N elements, and that the operator matrix has NxN elements. In such case, each time the vector is multiplied by the matrix, $N^2$ individual multiplications and $N^2$ additions are required. The desirability of substantially reducing this computational burden, without unduly compromising the accuracy of the result, is evident. The advantage can be thought of in terms of increased speed for a given computational capability, or reduction in required computing power for a given allotted time, or both.

The foregoing is a simplified example of the type of machine processing task for which the system and technique of the present invention will be advantageous. The invention substantially reduces the computational burden without undue compromise in the accuracy of the result. There are various types of machine processing applications wherein operators to be applied are represented by very large matrices in two or more dimensions, and, as will become understood, the benefits of the invention become more pronounced as the magnitude of the original computational task is increased. The example set forth above, for the purpose of initial explanation, is but one of many situations in which the system and technique of the invention can be employed, and a number of examples and applications are described hereinbelow after the following description of different forms of the invented technique. Reference can also be made to Appendices I and II, appended hereto.

In accordance with an embodiment of the invention, the vector f is transformed, on a wavelet basis (as previously defined), to obtain a transformed vector designated fw. The present illustrative embodiment uses the well known Haar functions, as defined by the relationship (2.1) of Appendix I. Haar coefficients $d_k^j$ and averages $s_k^j$ are computed in accordance with relationships (2.7) through (2.12) of Appendix I. Omitting the normalizing weights (which are $1/\sqrt{2}$ for Haar wavelets), the first level coefficients (differences) $d_k^1$ are obtained as

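$$
\begin{aligned}
d_1^1 &= f_1 - f_2 \\
d_2^1 &= f_3 - f_4 \\
d_3^1 &= f_5 - f_6 \\
&\;\;\vdots
\end{aligned}
$$

The first level averages (sums) $s_k^1$ are obtained as

$$
\begin{aligned}
s_1^1 &= f_1 + f_2 \\
s_2^1 &= f_3 + f_4 \\
s_3^1 &= f_5 + f_6 \\
&\;\;\vdots
\end{aligned}
$$

The second level coefficients $d_k^2$ are obtained as

$$
\begin{aligned}
d_1^2 &= s_1^1 - s_2^1 \\
d_2^2 &= s_3^1 - s_4^1 \\
d_3^2 &= s_5^1 - s_6^1 \\
&\;\;\vdots
\end{aligned}
$$

The second level averages $s_k^2$ are obtained as

$$
\begin{aligned}
s_1^2 &= s_1^1 + s_2^1 \\
s_2^2 &= s_3^1 + s_4^1 \\
s_3^2 &= s_5^1 + s_6^1 \\
&\;\;\vdots
\end{aligned}
$$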

Thus, the pyramid scheme illustrated in (2.12) of Appendix I can be used to develop all $d_k^j$ and $s_k^j$ in accordance with the relationships (2.10) and (2.11). For N samples, evaluating a complete set of coefficients and averages will require 2(N-1) additions and 2N multiplications. There will be (N-1) coefficients and (N-1) averages. As an example, if there are 256 samples, there will be 255 coefficients and 255 averages obtained. In such case, it is known that the original samples could be completely recovered from the 255 [i.e., (N-1)] coefficients $d_k^j$ and the single highest level average, s. [The recovery formulas are given in (3.15) of Appendix I.] The other 254 averages, $s_k^j$, are not needed for this purpose, but are utilized to advantage in the present embodiment, as described further hereinbelow. The component values for the transformed vector fw are illustrated in the strip on the right side of Fig. 3.
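The pyramid of differences and sums described above can be sketched as follows. This is an illustrative sketch only, with the normalizing weights omitted as in the text and the number of samples assumed to be a power of two; the name `haar_pyramid` is hypothetical and does not appear in the disclosure.

```python
def haar_pyramid(f):
    """Return (differences, sums) as lists of per-level lists, finest level first.

    Normalizing weights are omitted; len(f) must be a power of two (at least 2).
    """
    n = len(f)
    if n < 2 or n & (n - 1):
        raise ValueError("length must be a power of two, at least 2")
    differences, sums = [], []
    current = list(f)
    while len(current) > 1:
        # Pair up neighbours: one difference and one sum per pair.
        d = [current[i] - current[i + 1] for i in range(0, len(current), 2)]
        s = [current[i] + current[i + 1] for i in range(0, len(current), 2)]
        differences.append(d)
        sums.append(s)
        current = s  # the next, coarser level is built from the sums
    return differences, sums
```

For N samples the sketch yields N-1 differences and N-1 sums in total, matching the counts given above; the last entry of `sums` holds the single highest level sum.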

A matrix K(x,y) represents an operator that is to be applied to the vector f. The matrix has a first row of values designated K11, K12, K13, ..., a second row of values designated K21, K22, K23, ..., and so on. The present illustration uses Haar functions to obtain transformed sets of values designated $\alpha^j$, $\beta^j$, and $\gamma^j$, in accordance with the relationships (2.13) through (2.26) of Appendix I. The values $\alpha^1$, $\beta^1$ and $\gamma^1$ (the first level values) contain the most detailed difference information, with successively higher level values of $\alpha^j$, $\beta^j$ and $\gamma^j$ successively containing less detailed (coarser) difference information. Sums are developed at each level, and used only for obtaining the difference information at the next level. Only the last sum is saved. In the present embodiment, the values can be visualized as being stored in a transformed matrix arrangement as illustrated in Fig. 3; i.e., in regions starting (for first level designations) in the upper left-hand corner of the transformed matrix and descending, for successively higher level designations, toward the lower right-hand corner. The procedure is as follows. Again, omitting normalizing weights, the first level coefficients and sums are:

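First row of $\alpha^1$, $\beta^1$, $\gamma^1$ and $S^1$:

$$
\begin{aligned}
\alpha^1_{1,1} &= K_{11} - K_{12} - K_{21} + K_{22} \\
\beta^1_{1,1} &= K_{11} + K_{12} - K_{21} - K_{22} \\
\gamma^1_{1,1} &= K_{11} - K_{12} + K_{21} - K_{22} \\
S^1_{1,1} &= K_{11} + K_{12} + K_{21} + K_{22} \\[4pt]
\alpha^1_{1,2} &= K_{13} - K_{14} - K_{23} + K_{24} \\
\beta^1_{1,2} &= K_{13} + K_{14} - K_{23} - K_{24} \\
\gamma^1_{1,2} &= K_{13} - K_{14} + K_{23} - K_{24} \\
S^1_{1,2} &= K_{13} + K_{14} + K_{23} + K_{24} \\[4pt]
\alpha^1_{1,3} &= K_{15} - K_{16} - K_{25} + K_{26} \\
\beta^1_{1,3} &= K_{15} + K_{16} - K_{25} - K_{26} \\
\gamma^1_{1,3} &= K_{15} - K_{16} + K_{25} - K_{26} \\
S^1_{1,3} &= K_{15} + K_{16} + K_{25} + K_{26}
\end{aligned}
$$

and so on, for the first row of $\alpha^1$, the first row of $\beta^1$, the first row of $\gamma^1$, and the first row of $S^1$.

Second row of $\alpha^1$, $\beta^1$, $\gamma^1$ and $S^1$:

$$
\begin{aligned}
\alpha^1_{2,1} &= K_{31} - K_{32} - K_{41} + K_{42} \\
\beta^1_{2,1} &= K_{31} + K_{32} - K_{41} - K_{42} \\
\gamma^1_{2,1} &= K_{31} - K_{32} + K_{41} - K_{42} \\
S^1_{2,1} &= K_{31} + K_{32} + K_{41} + K_{42} \\[4pt]
\alpha^1_{2,2} &= K_{33} - K_{34} - K_{43} + K_{44} \\
\beta^1_{2,2} &= K_{33} + K_{34} - K_{43} - K_{44} \\
\gamma^1_{2,2} &= K_{33} - K_{34} + K_{43} - K_{44} \\
S^1_{2,2} &= K_{33} + K_{34} + K_{43} + K_{44} \\[4pt]
\alpha^1_{2,3} &= K_{35} - K_{36} - K_{45} + K_{46} \\
\beta^1_{2,3} &= K_{35} + K_{36} - K_{45} - K_{46} \\
\gamma^1_{2,3} &= K_{35} - K_{36} + K_{45} - K_{46} \\
S^1_{2,3} &= K_{35} + K_{36} + K_{45} + K_{46}
\end{aligned}
$$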

and so on, for the second row of $\alpha^1$, the second row of $\beta^1$, the second row of $\gamma^1$, and the second row of $S^1$. The subsequent rows of $\alpha^1$, $\beta^1$, $\gamma^1$ and $S^1$ are developed in similar manner. The procedure is illustrated in Figs. 4 and 5, with Fig. 4 showing the groups of four values (circled) used for the first and second rows of the first level computations, and Fig. 5 showing the polarities for the differences α, β and γ, and the sum S. The second level coefficients and sums can then be developed from the first level sums, as follows:

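First row of $\alpha^2$, $\beta^2$, $\gamma^2$ and $S^2$:

$$
\begin{aligned}
\alpha^2_{1,1} &= S^1_{1,1} - S^1_{1,2} - S^1_{2,1} + S^1_{2,2} \\
\beta^2_{1,1} &= S^1_{1,1} + S^1_{1,2} - S^1_{2,1} - S^1_{2,2} \\
\gamma^2_{1,1} &= S^1_{1,1} - S^1_{1,2} + S^1_{2,1} - S^1_{2,2} \\
S^2_{1,1} &= S^1_{1,1} + S^1_{1,2} + S^1_{2,1} + S^1_{2,2} \\[4pt]
\alpha^2_{1,2} &= S^1_{1,3} - S^1_{1,4} - S^1_{2,3} + S^1_{2,4} \\
\beta^2_{1,2} &= S^1_{1,3} + S^1_{1,4} - S^1_{2,3} - S^1_{2,4} \\
\gamma^2_{1,2} &= S^1_{1,3} - S^1_{1,4} + S^1_{2,3} - S^1_{2,4} \\
S^2_{1,2} &= S^1_{1,3} + S^1_{1,4} + S^1_{2,3} + S^1_{2,4} \\[4pt]
\alpha^2_{1,3} &= S^1_{1,5} - S^1_{1,6} - S^1_{2,5} + S^1_{2,6} \\
\beta^2_{1,3} &= S^1_{1,5} + S^1_{1,6} - S^1_{2,5} - S^1_{2,6} \\
\gamma^2_{1,3} &= S^1_{1,5} - S^1_{1,6} + S^1_{2,5} - S^1_{2,6} \\
S^2_{1,3} &= S^1_{1,5} + S^1_{1,6} + S^1_{2,5} + S^1_{2,6}
\end{aligned}
$$

and so on, for the first row of each.

Second row of $\alpha^2$, $\beta^2$, $\gamma^2$ and $S^2$:

$$
\begin{aligned}
\alpha^2_{2,1} &= S^1_{3,1} - S^1_{3,2} - S^1_{4,1} + S^1_{4,2} \\
\beta^2_{2,1} &= S^1_{3,1} + S^1_{3,2} - S^1_{4,1} - S^1_{4,2} \\
\gamma^2_{2,1} &= S^1_{3,1} - S^1_{3,2} + S^1_{4,1} - S^1_{4,2} \\
S^2_{2,1} &= S^1_{3,1} + S^1_{3,2} + S^1_{4,1} + S^1_{4,2} \\[4pt]
\alpha^2_{2,2} &= S^1_{3,3} - S^1_{3,4} - S^1_{4,3} + S^1_{4,4} \\
\beta^2_{2,2} &= S^1_{3,3} + S^1_{3,4} - S^1_{4,3} - S^1_{4,4} \\
\gamma^2_{2,2} &= S^1_{3,3} - S^1_{3,4} + S^1_{4,3} - S^1_{4,4} \\
S^2_{2,2} &= S^1_{3,3} + S^1_{3,4} + S^1_{4,3} + S^1_{4,4} \\[4pt]
\alpha^2_{2,3} &= S^1_{3,5} - S^1_{3,6} - S^1_{4,5} + S^1_{4,6} \\
\beta^2_{2,3} &= S^1_{3,5} + S^1_{3,6} - S^1_{4,5} - S^1_{4,6} \\
\gamma^2_{2,3} &= S^1_{3,5} - S^1_{3,6} + S^1_{4,5} - S^1_{4,6} \\
S^2_{2,3} &= S^1_{3,5} + S^1_{3,6} + S^1_{4,5} + S^1_{4,6}
\end{aligned}
$$

and so on, for the second row of each.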

Subsequent levels of $\alpha^k$, $\beta^k$, $\gamma^k$ and $S^k$ can be computed, in similar manner, and positioned in diagonally descending order toward the lower right-hand corner of the transformed matrix of Fig. 3. The final sum is stored in the lower rightmost corner of the transformed matrix. From the above, and the illustration of Fig. 3, it can be seen that for an original matrix size NxN, the transformed matrix of Fig. 3 will have dimensions 2(N-1)x2(N-1), and there will be up to $\log_2 N$ levels or "groups" of $\alpha^k$, $\beta^k$ and $\gamma^k$, with the final sum being associated with the highest level group. To facilitate understanding, it is assumed in this example that there are $\log_2 N$ levels. Thus, for example, a 256x256 matrix will transform, in this format, to 510x510, with eight "groups" of α, β and γ. Of course, only $256^2$ members of the transformed matrix will have values (even before the thresholding, to be described), the rest of the matrix in this format being empty. The final sum will be placed in the lower right-hand quadrant of the final "group" (the corresponding quadrants of all other groups being empty), as illustrated in the Figure. The transformed matrix is designated Kw.
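The level-by-level construction of the first form can be sketched as below. This is a minimal illustration under stated assumptions: normalizing weights are omitted, the matrix is square with a side that is a power of two, and the values are returned as per-level lists rather than laid out in the 2(N-1)x2(N-1) arrangement of Fig. 3. The names `nonstandard_level` and `nonstandard_form` are hypothetical.

```python
def nonstandard_level(block):
    """One level: alpha, beta, gamma and S from each 2x2 group of `block`."""
    half = len(block) // 2
    alpha = [[0] * half for _ in range(half)]
    beta = [[0] * half for _ in range(half)]
    gamma = [[0] * half for _ in range(half)]
    S = [[0] * half for _ in range(half)]
    for i in range(half):
        for l in range(half):
            a, b = block[2 * i][2 * l], block[2 * i][2 * l + 1]
            c, d = block[2 * i + 1][2 * l], block[2 * i + 1][2 * l + 1]
            alpha[i][l] = a - b - c + d   # difference in both directions
            beta[i][l] = a + b - c - d    # sum along the row pair, difference between rows
            gamma[i][l] = a - b + c - d   # difference along the row pair, sum between rows
            S[i][l] = a + b + c + d       # sum used to build the next level
    return alpha, beta, gamma, S

def nonstandard_form(K):
    """Return ([(alpha, beta, gamma) per level, finest first], final_sum)."""
    levels, current = [], K
    while len(current) > 1:
        alpha, beta, gamma, current = nonstandard_level(current)
        levels.append((alpha, beta, gamma))
    return levels, current[0][0]
```

For an NxN input the stored values (three arrays per level plus the final sum) total $N^2$, which agrees with the statement above that only $256^2$ members have values for a 256x256 matrix.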

The next step in the present embodiment is to set to zero (i.e., effectively remove from the transformed matrix) the matrix members that have values less than a predetermined magnitude; i.e., remove all matrix members (other than the final S) whose absolute values are less than a predetermined threshold. This can be routinely done by scanning the completed matrix, or by performing the thresholding operation as each level of the matrix is constructed (all values of S being retained, of course, for computation of the next level).
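The scan-and-zero form of the thresholding step can be sketched as follows. The helper names and the `keep` parameter (used here to protect a position such as that of the final sum) are assumptions made for illustration and are not part of the disclosure.

```python
def threshold_matrix(matrix, eps, keep=()):
    """Zero every entry with |value| < eps; positions listed in `keep` are never removed."""
    protected = set(keep)
    return [
        [v if (abs(v) >= eps or (i, j) in protected) else 0
         for j, v in enumerate(row)]
        for i, row in enumerate(matrix)
    ]

def count_nonzero(matrix):
    """Number of entries that survive thresholding."""
    return sum(1 for row in matrix for v in row if v != 0)
```

Comparing `count_nonzero` before and after thresholding gives a direct measure of how sparse the transformed matrix has become for a given threshold.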

The next step in the present embodiment involves multiplying the vector which has been transformed on a wavelet basis (and is designated fw), by the matrix which has been transformed on a wavelet basis and threshold-modified (and is then designated Kwp). This can be achieved, in known fashion, by multiplying the vector fw, a row at a time, by the rows of Kwp to obtain the individual members of a product vector fwp. Techniques for multiplying a vector by a matrix are well known in the art, as are techniques for efficiently achieving this end when the matrix is sparse and has a large percentage of zero (or empty) values. These zero values, or strings of them, can be flagged beforehand, so that only those matrix positions, or strings of positions, which have non-zero values will be considered in the multiplication process. It will be understood that for this form of the invention the transformed matrix, Kw, and/or the threshold-modified version thereof, Kwp, can be stored in other formats than that used for illustration in Fig. 3 (such as a continuous string of values), so long as the transformed values are in known positions to achieve the appropriate multiplication of values of vector and matrix rows, in accordance with the scheme of the Fig. 3 illustration.
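One common way of considering only the non-zero positions is to store, for each matrix row, the column index and value of each surviving entry. The following sketch assumes that storage scheme; it is one of several well known sparse formats and is not asserted to be the format used in the disclosed embodiment. The names `to_sparse_rows` and `sparse_matvec` are hypothetical.

```python
def to_sparse_rows(matrix):
    """Keep only (column, value) pairs for the non-zero entries of each row."""
    return [[(j, v) for j, v in enumerate(row) if v != 0] for row in matrix]

def sparse_matvec(sparse_rows, vector):
    """Multiply using only the stored non-zero entries."""
    return [sum(v * vector[j] for j, v in row) for row in sparse_rows]
```

The conversion is done once per operator; each subsequent multiplication then costs one multiply-add per stored entry rather than one per matrix position.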

The product vector fwp that results from the described multiplication will have individual component values in the same arrangement as illustrated in the transformed vector of Fig. 3; namely, differences now designated $d_1^{1p}, d_2^{1p}, \ldots, d_1^{2p}, d_2^{2p}, \ldots$, and so on, and sums now designated $s_1^{1p}, s_2^{1p}, \ldots, s_1^{2p}, s_2^{2p}, \ldots$, and so on, but where the p in the superscript indicates that these transformed vector values have been processed, in this case as a result of having been multiplied by the transformed matrix Kw.

The transformed and processed vector fwp can then be back-transformed, on a wavelet basis, to obtain an output vector, F, that is close to the vector that would have been obtained by conventionally multiplying the original vector f by the matrix K. This can be done, for example, in accordance with the relationships (2.27) through (2.30) and (3.15) of Appendix I. In particular, the highest level sp and the highest level dp are combined to obtain the next lower level difference and sum information. [The sum information is accumulated at each step.] The sum and difference information can then be used to obtain the next lower level information, and so on, until the lowest level information values, which are the components of F, are obtained.
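The coarse-to-fine reconstruction, with sum information accumulated at each step, can be sketched as follows. The sketch assumes unnormalized Haar differences d = a - b and sums s = a + b (so that a = (s + d)/2 and b = (s - d)/2), and per-level lists ordered finest first; it does not reproduce relationships (2.27) through (2.30) or (3.15) of Appendix I. The name `reconstruct_first_form` is hypothetical.

```python
def reconstruct_first_form(differences, sums):
    """Rebuild the output values from per-level differences and sums.

    differences[j] and sums[j] hold the level j+1 values, finest level first.
    """
    carried = [0.0] * len(sums[-1])          # nothing carried into the top level
    for d, s in zip(reversed(differences), reversed(sums)):
        # Accumulate: sums stored at this level plus sums carried down from above.
        total = [c + s_k for c, s_k in zip(carried, s)]
        carried = []
        for t, d_k in zip(total, d):
            carried.append((t + d_k) / 2)    # first member of the pair
            carried.append((t - d_k) / 2)    # second member of the pair
    return carried
```

When every stored sum other than the highest level one is zero, the routine reduces to the ordinary recovery of a vector from its differences and single final sum.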

As stated, the output vector F is close to the vector that would have been obtained by conventionally multiplying the original vector f by the matrix K. The closeness of the approximation will depend on the thresholding performed on the transformed matrix Kw. As the threshold is raised (which tends to remove more non-zero values from the transformed matrix Kw) the approximation becomes less accurate. However, as will be demonstrated, for reasonable threshold values that strikingly sparsify the transformed matrix and permit commensurate reduction in computational requirements, the approximation is quite good. Indeed, as will also be demonstrated, the reduction in overall error which results from the reduced number of computations (and, therefore, reduced accumulation of rounding error from individual computations) tends to offset somewhat the approximation errors that result from thresholding. As will become better understood, for applications where the operator [matrix K(x,y), for the above example] is to be utilized repetitively, so that computation of the transformed matrix need only be performed once, there is a striking saving of computational cost when processing each input vector.

A feature of the described first form of the invention is the "decoupling" among the different levels (or coarseness scales) which are a measure of difference between adjacent elements (at the lowest processing level) or adjacent groups of elements (at successively higher levels, as the groups get larger) when the transformed matrix and vector are multiplied. [See, for example, (4.32) - (4.58), and accompanying text, in Appendix I]. In other words, and as can be seen by visualizing the vector fw in a horizontal configuration for successive multiplication by the matrix rows, the lowest level α, β and γ of the transformed matrix Kw are combined only with the lowest level difference and sum information of the transformed vector fw. Similarly, the second lowest levels of Kw and fw are combined, and so on. There are no "cross-terms" between levels. It is seen that the difference terms of fw interact with the α and γ terms of Kw at each level, and the sum terms of fw interact with the β terms.

A further embodiment hereof is referred to as a second form or "standard form". In this embodiment, the Haar (or other wavelet or wave packet) coefficients and sum that are stored are those that could be used to recover the original vector or matrix (as the case may be), and they are generated and arranged in a symmetrical fashion that permits inversion. Assume, again, that one has a vector f with values f1, f2, f3,..., and that Haar functions are then used as representative wavelet or wave packet transform vehicles. In this embodiment, however, the transformed vector, fw, contains only the difference information (i.e., coefficients designated $d_1^1, d_2^1, d_3^1, \ldots, d_1^2, d_2^2, d_3^2, \ldots$, and so on), and the final sum (s), as illustrated in the right-hand strip of Fig. 6C. Assume, again, that a matrix K(x,y) represents an operator that is to be applied to the vector f (see Fig. 6A). The matrix again has a first row of values designated K11, K12, K13..., a second row of values designated K21, K22, K23..., and so on. This illustration again uses Haar functions to obtain transformed values; in this case, in accordance with the relationships (2.10) through (2.12) of Appendix I. First, each row of the matrix is processed to obtain coefficients (differences) and a final sum, in the same manner as used to transform the vector f to fw as just described and as shown in the strip at the right-hand side of Fig. 6C. In particular, the first matrix row K11, K12, K13... K1N is converted to $D^1_{1,1}, D^1_{1,2}, D^1_{1,3}, \ldots, D^1_{1,N-1}, S^1_{1,N}$, where

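$$
\begin{aligned}
D^1_{1,1} &= K_{11} - K_{12} \\
D^1_{1,2} &= K_{13} - K_{14} \\
&\ \ \vdots \\
D^1_{1,N/2} &= K_{1,N-1} - K_{1,N} \\
D^1_{1,N/2+1} &= S^1_{1,1} - S^1_{1,2} \\
&\ \ \vdots \\
D^1_{1,3N/4} &= S^1_{1,N/2-1} - S^1_{1,N/2} \\
D^1_{1,3N/4+1} &= S^1_{1,N/2+1} - S^1_{1,N/2+2} \\
&\ \ \vdots \\
S^1_{1,N} &= K_{11} + K_{12} + \cdots + K_{1,N}
\end{aligned}
$$

where

$$
\begin{aligned}
S^1_{1,1} &= K_{11} + K_{12} \\
S^1_{1,2} &= K_{13} + K_{14} \\
&\ \ \vdots \\
S^1_{1,N/2} &= K_{1,N-1} + K_{1,N} \\
S^1_{1,N/2+1} &= S^1_{1,1} + S^1_{1,2} \\
&\ \ \vdots \\
S^1_{1,3N/4} &= S^1_{1,N/2-1} + S^1_{1,N/2} \\
S^1_{1,3N/4+1} &= S^1_{1,N/2+1} + S^1_{1,N/2+2} \\
&\ \ \vdots
\end{aligned}
$$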
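The row conversion just described can be sketched in a few lines of Python. This is only an illustration under stated assumptions: the function name `haar_row_second_form` is hypothetical, the row length is assumed to be a power of 2, and the plain (unnormalized) pairwise differences and sums written above are used.

```python
def haar_row_second_form(row):
    """Return [level-1 differences, level-2 differences, ..., final sum].

    Hypothetical helper: uses unnormalized pairwise differences and sums,
    and assumes len(row) is a power of 2.
    """
    n = len(row)
    if n == 0 or n & (n - 1):
        raise ValueError("length must be a power of 2")
    sums = list(row)
    out = []
    while len(sums) > 1:
        pairs = list(zip(sums[0::2], sums[1::2]))
        out.extend(a - b for a, b in pairs)   # differences at this level
        sums = [a + b for a, b in pairs]      # sums feed the next level
    out.append(sums[0])                       # final sum of the whole row
    return out


print(haar_row_second_form([4, 2, 5, 7]))  # prints [2, -2, -6, 18]
```

For the four-element row in the usage line, the first-level differences are 2 and -2, the second-level difference is 6 - 12 = -6, and the final sum is 18.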

Similarly, the second row K21, K22, K23...K2N is converted to $D^1_{2,1}, D^1_{2,2}, D^1_{2,3}, \ldots, D^1_{2,N-1}, S^1_{2,N}$, and each subsequent row is converted in similar manner. The result is shown in Fig. 6B. Next, the same procedure is applied, by column, to the individual columns of the Fig. 6B arrangement. In particular, the column $D^1_{1,1}, D^1_{2,1}, D^1_{3,1}, \ldots$ is converted to $D^2_{1,1}, D^2_{2,1}, D^2_{3,1}, \ldots$, where

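$$
\begin{aligned}
D^2_{1,1} &= S^1_{1,1} - S^1_{2,1} \\
D^2_{2,1} &= S^1_{3,1} - S^1_{4,1} \\
&\ \ \vdots \\
D^2_{N/2,1} &= S^1_{N-1,1} - S^1_{N,1} \\
D^2_{N/2+1,1} &= S^2_{1,1} - S^2_{2,1} \\
&\ \ \vdots \\
D^2_{3N/4,1} &= S^2_{N/2+1,1} - S^2_{N/2+2,1} \\
&\ \ \vdots \\
S^2_{N,1} &= S^1_{1,1} + S^1_{2,1} + \cdots + S^1_{N,1}
\end{aligned}
$$

where

$$
\begin{aligned}
S^2_{1,1} &= S^1_{1,1} + S^1_{2,1} \\
S^2_{2,1} &= S^1_{3,1} + S^1_{4,1} \\
&\ \ \vdots \\
S^2_{N/2,1} &= S^1_{N-1,1} + S^1_{N,1} \\
S^2_{N/2+1,1} &= S^2_{1,1} + S^2_{2,1} \\
&\ \ \vdots \\
S^2_{3N/4,1} &= S^2_{N/2+1,1} + S^2_{N/2+2,1} \\
&\ \ \vdots
\end{aligned}
$$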

Similarly, the column $D^1_{1,2}, D^1_{2,2}, D^1_{3,2}, \ldots, D^1_{N,2}$ is converted to $D^2_{1,2}, D^2_{2,2}, D^2_{3,2}, \ldots, S^2_{N,2}$, and each subsequent column is converted in similar manner with the resultant transformed matrix, Kw, being illustrated in Fig. 6C.
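The two-pass (rows, then columns) arrangement can be illustrated with the following sketch. The names `haar_1d` and `haar_2d_second_form` are hypothetical, the matrix is assumed square with a power-of-2 size, and unnormalized Haar sums and differences are assumed for simplicity.

```python
def haar_1d(values):
    """Unnormalized Haar pass: all differences, level by level, then the final sum."""
    sums, out = list(values), []
    while len(sums) > 1:
        pairs = list(zip(sums[0::2], sums[1::2]))
        out.extend(a - b for a, b in pairs)
        sums = [a + b for a, b in pairs]
    return out + sums


def haar_2d_second_form(matrix):
    """Transform every row, then every column of the row-transformed result."""
    rows = [haar_1d(r) for r in matrix]        # row pass (Fig. 6B stage)
    cols = [haar_1d(c) for c in zip(*rows)]    # column pass on that result
    return [list(r) for r in zip(*cols)]       # transpose back to row order


K = [[1, 1, 1, 1] for _ in range(4)]
Kw = haar_2d_second_form(K)
print(Kw[3][3])  # prints 16
```

For a constant matrix every difference vanishes, so the only surviving entry is the final sum in the last row and column, which is the kind of sparsity the thresholding step exploits for smooth operators.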

A thresholding procedure can then be applied to the transformed matrix, as previously described. An advantage of the resultant sparse matrix (which is obtained when a matrix is transformed using the just-described second form, and then processed with a thresholding operation) is that its product, after multiplication by another similarly transformed matrix, will remain in said second form.

After multiplication of Kw by fw, and other suitable manipulation, the resultant vector fwp can be back-transformed, using the reverse of the procedure described to obtain fw, to obtain the output vector F. This can be done, for example, in accordance with relationships (3.15) of Appendix I. [Also see observation 3.1 of Appendix I.] In particular, the sum sp and the highest level dp difference are combined to obtain the next lower level sum information, which is then combined with the difference information at that level to obtain the next lower level sum information, and so on, until the components of output vector F are obtained.
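A minimal sketch of this back-transformation follows, assuming the unnormalized Haar convention (sum s = a + b, difference d = a - b), so that each pair is recovered as a = (s + d)/2 and b = (s - d)/2. The function name and the storage layout (level-1 differences first, final sum last) are assumptions made for illustration.

```python
def haar_inverse_second_form(coeffs):
    """Rebuild a vector from [level-1 diffs, level-2 diffs, ..., final sum]."""
    n = len(coeffs)
    sums = [coeffs[-1]]            # start from the single final sum
    end = n - 1                    # differences occupy coeffs[:n-1]
    while len(sums) < n:
        m = len(sums)
        diffs = coeffs[end - m:end]    # the highest remaining level has m differences
        end -= m
        lower = []
        for s, d in zip(sums, diffs):
            lower.extend(((s + d) / 2, (s - d) / 2))   # next lower level sums
        sums = lower
    return sums


print(haar_inverse_second_form([2, -2, -6, 18]))  # prints [4.0, 2.0, 5.0, 7.0]
```

With an orthonormal wavelet the factor 1/2 would be replaced by the corresponding normalization, but the level-by-level structure is the same.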

Referring to Fig. 7, there is shown an overview flow diagram of the routine for programming the processor 110 (Fig. 1) to implement the technique of the first or second forms of the disclosed embodiment of the invention. The block 710 represents the routine, described further in conjunction with Fig. 8, for transforming the vector f on a wavelet basis to obtain a transformed vector fw, in either the first or second form. The block 720 represents the routine for transforming the matrix K, on a wavelet basis, to obtain a transformed matrix, Kw. The first form matrix transformation is further described in conjunction with Fig. 9, and the second form matrix transformation is further described in conjunction with Fig. 10. The block 730 represents the routine, described further in conjunction with Fig. 11, for threshold processing of the transformed matrix Kw to obtain the transformed processed matrix Kwp. The block 740 represents the routine, described further in conjunction with Fig. 12, for applying the transformed and processed operator matrix Kwp to the transformed vector fw to obtain a product vector fwp. The block 750 represents the routine for back-transforming from fwp, on a wavelet basis, to obtain the output vector F.
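The five blocks of the overview can be traced end to end in a short sketch of the second form. The code below is an assumption-laden illustration rather than the routine of the figures: it uses an orthonormal Haar normalization (division by the square root of 2, instead of the plain sums and differences used earlier for explanation) so that the product in the transformed domain back-transforms to K applied to f, and all function names are hypothetical.

```python
import math

R2 = math.sqrt(2.0)


def fwd(v):
    """Orthonormal Haar transform: differences level by level, then the final sum."""
    s, out = list(v), []
    while len(s) > 1:
        out += [(a - b) / R2 for a, b in zip(s[0::2], s[1::2])]
        s = [(a + b) / R2 for a, b in zip(s[0::2], s[1::2])]
    return out + s


def inv(c):
    """Inverse of fwd: rebuild sums from the top level down."""
    s, end = [c[-1]], len(c) - 1
    while end > 0:
        d = c[end - len(s):end]
        end -= len(s)
        s = [x for a, b in zip(s, d) for x in ((a + b) / R2, (a - b) / R2)]
    return s


def apply_operator(K, f, threshold):
    fw = fwd(f)                                                   # block 710
    rows = [fwd(r) for r in K]                                    # block 720: rows...
    Kw = [list(r) for r in zip(*[fwd(c) for c in zip(*rows)])]    # ...then columns
    Kwp = [[x if abs(x) >= threshold else 0.0 for x in r] for r in Kw]  # block 730
    fwp = [sum(k * x for k, x in zip(r, fw)) for r in Kwp]        # block 740
    return inv(fwp)                                               # block 750


K = [[1, 2, 3, 4], [0, 1, 0, 1], [2, 0, 2, 0], [1, 1, 1, 1]]
f = [1, 0, 2, 1]
print(apply_operator(K, f, 0.0))   # close to the direct product [11, 1, 6, 4]
```

With a threshold of zero nothing is discarded and the result agrees with the direct product up to rounding; a positive threshold trades accuracy for sparsity.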

Fig. 8 is a flow diagram of a routine for transforming the vector f, on a wavelet basis, into a transformed vector fw; that is, for the first form, a vector having coefficients (differences) and sums as indicated for the transformed vector components illustrated in the strip on the right-hand side of Fig. 3 and, for the second form, the coefficients and the single final sum illustrated in the strip on the right-hand side of Fig. 6C. The block 810 represents the inputting of the vector component values f1, f2, ... fN of the vector to be transformed. The first level coefficients (differences) can then be computed and stored, as represented by the block 820. These differences are in accordance with the relationships set forth above for the d values, each being computed as a difference of adjacent component values of the vector being transformed. The first level sums are then also computed and stored from the vector component values, as described hereinabove, to obtain the s1 values, and as represented by the block 830. [For the first form (Fig. 3), all the sums will be retained for fw, whereas for the second form (Fig. 6), only the final sum is part of fw.] The block 840 is then entered, this block representing the computing and storing of the next higher level differences (for example, the d2 values) from the previous level sums. Also computed and stored, from the previous level sums, are the next higher level sums, as represented by the block 850. Inquiry is then made (diamond 860) as to whether the last level has been processed. [There will be at most $\log_2 N$ levels for a vector having N components.] If not, the level index is incremented (block 870) and the block 840 is re-entered. The loop 875 continues until all differences and sums of the transformed vector have been computed and stored, whereupon the inquiry of diamond 860 is answered in the affirmative and the vector transformation is complete.

Fig. 9 shows the routine for transforming the matrix K (which, in the present example, is an NxN matrix, where N is a power of 2), on a wavelet basis, to obtain a transformed matrix Kw of the first form and as illustrated in Fig. 3. In the routine of Fig. 9, the block 910 represents the reading in of the matrix values for the matrix K. The block 920 represents the computation and storage of the first level α, β, γ, and S values in accordance with relationships first set forth above. In the present embodiment, the values of α, β and γ are stored at positions (or, if desired, with index numbers, or in a sequence) that correspond to the indicated positions of Fig. 3, and which will result in suitable multiplication of each transformed vector element by the appropriate transformed matrix value in accordance with the routine of Fig. 12. The sums, S, need only be stored until used for obtaining the next level of transform values, with only the final sum being retained in the transformed matrix of the first form of the present embodiment. Next, the block 930 represents computation and storage, from the previous level values of S, of the next higher level values of α, β, γ and S, in accordance with the above relationships. Again each computed value is stored at a matrix position (or, as previously indicated, with an appropriate index or sequence) in accordance with the diagram of Fig. 3. Inquiry is then made (diamond 940) as to whether the highest level has been processed. [For an NxN matrix, there will be at most $\log_2 N$ levels, as previously noted.] If not, the level index is incremented (block 950), and the block 930 is re-entered. The loop 955 then continues until the transform values have been computed and stored for all levels.

Fig. 10 shows the routine for transforming the matrix K, on a wavelet basis, to obtain a transformed matrix Kw of the second form and as illustrated in Fig. 6C. The block 1010 represents the reading in of the matrix values for the matrix K. The block 1020 represents the computation and storage of the row transformation coefficient values D1 and sums S1 in accordance with the relationships described in conjunction with Fig. 6B. Next, the block 1030 represents the computation and storage of the column transformation coefficient values D2 and S2 in accordance with the relationships described in conjunction with Fig. 6C.

Fig. 11 is a flow diagram illustrating a routine for processing the transformed matrix to remove members thereof that have an absolute magnitude smaller than a predetermined threshold. It will be understood that, if desired, at least some of the thresholding can be performed as the transformed matrix is being computed and stored, but the thresholding of the present embodiment is shown as a subsequent operation for ease of illustration. The block 1110 represents initiation of the scanning process, by initializing an index used to sequence through the transformed matrix members. Inquiry is made (diamond 1115) as to whether the absolute value of the currently indexed matrix element is greater than the predetermined threshold. If the answer is no, the diamond 1120 is entered, and inquiry is made as to whether a flag is set. The flag is used to mark strings of below-threshold element values, so that they can effectively be considered as zeros during subsequent processing. If a flag is not currently set, the block 1125 is entered, this block representing the setting of a flag for the address of the current matrix component. Diamond 1160 is then entered, and is also directly entered from the "yes" output of diamond 1120. If the threshold is exceeded, the diamond 1130 is entered, and inquiry is made as to whether the flag is currently set. If it is, the flag is turned off (block 1135) and diamond 1160 is entered. If not, diamond 1160 is entered directly. Diamond 1160 represents the inquiry as to whether the last element of the matrix has been reached. If not, the index is incremented to the next element (block 1150), and the loop 1155 is continued until the entire transformed matrix has been processed so that flags are set to cover those elements, or sequences of elements, that are to be considered as zero during subsequent processing.
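One way to picture the flagging of below-threshold strings is as a list of (start, length) runs per row. The sketch below is a simplified stand-in for the flag mechanism, not the routine of Fig. 11 itself; the function name and the run representation are assumptions.

```python
def flag_runs(row, threshold):
    """Return (start, length) runs of entries whose magnitude is below threshold."""
    runs, start = [], None
    for i, x in enumerate(row):
        if abs(x) < threshold:
            if start is None:
                start = i                      # flag set at the first small element
        elif start is not None:
            runs.append((start, i - start))    # threshold exceeded: flag turned off
            start = None
    if start is not None:                      # a run that reaches the end of the row
        runs.append((start, len(row) - start))
    return runs


print(flag_runs([5, 0.01, 0.02, 3, 0.0, 7, 0.001], 0.1))
# prints [(1, 2), (4, 1), (6, 1)]
```

Each run marks a string of elements that can be treated as zero and skipped during the subsequent multiplication.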

Referring to Fig. 12, there is shown a flow diagram of a routine for multiplying the transformed vector fw by the transformed and threshold-processed matrix Kwp. In the present illustrative embodiment, the previously described flags are used to keep track of strings of zeros in the matrix, and each element of the vector and matrix is considered for ease of illustration. It will be understood, however, that various techniques are known in the art for multiplying matrices, including sparse matrices, in efficient fashion [see, for example, S. Pissanetsky, Sparse Matrix Technology, Academic Press, 1984]. Such techniques can also be used for multiplying matrices having bands of information in approximately known positions, as will occur in certain cases hereof. In the illustrative routine of Fig. 12, indices used to keep track of the matrix row (block 1210) and the vector element and corresponding matrix column (block 1220) are initialized. Inquiry is made (diamond 1225) as to whether the flag is set for the current matrix element. If so, the element index can skip to the end of the string of elements on the row at which the flag is still set, this function being represented by the block 1230. If the flag is not set at this element of the matrix, the block 1235 is entered, this block representing the multiplying of the current vector and matrix elements. The product is then added to the current row accumulator, as represented by the block 1240. Inquiry is then made (diamond 1245, which is also entered from the output of block 1230) as to whether the last element of the row has been reached. If not, the matrix and vector element index is incremented (block 1250), and the loop 1255 is continued until the row is complete. When this occurs, the block 1260 is entered, this block representing the storing of the accumulator contents (which is the total for the current matrix row times the vector) at the component of the output vector that corresponds to the current row. Inquiry is then made (diamond 1265) as to whether the last row has been reached. If not, the row index is incremented (block 1280) and the block 1220 is re-entered. The loop 1285 then continues as the vector fw is multiplied by each row of the matrix Kwp, and the individual components of the output vector fwp are computed and stored.
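The row-by-row multiplication with skipping of flagged strings might be sketched as follows. The representation of the flags as (start, length) runs per row and the name `multiply_with_flags` are assumptions made for this illustration.

```python
def multiply_with_flags(matrix, runs_per_row, vec):
    """Multiply each row by vec, jumping over flagged (start, length) runs."""
    out = []
    for row, runs in zip(matrix, runs_per_row):
        skip = dict(runs)            # run start -> run length
        acc, j = 0.0, 0
        while j < len(row):
            if j in skip:
                j += skip[j]         # block 1230: jump past the flagged string
                continue
            acc += row[j] * vec[j]   # blocks 1235 and 1240: multiply and accumulate
            j += 1
        out.append(acc)              # block 1260: store the row total
    return out


matrix = [[5, 0.01, 0.02, 3], [0.0, 0.0, 2, 0.0]]
runs = [[(1, 2)], [(0, 2), (3, 1)]]
print(multiply_with_flags(matrix, runs, [1, 1, 1, 2]))  # prints [11.0, 2.0]
```

The work per row is proportional to the number of unflagged elements plus the number of runs, rather than to the full row length.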

It will be understood that alternative techniques can be utilized for multiplying a sparse matrix by a vector. For example, a simplified sparse matrix format, which provides fast processing, can employ one-dimensional arrays (vectors) designated a, ia, and ja. Vector a would contain only the non-zero elements of the matrix, and integer vectors ia and ja would respectively contain the row and column locations of each element. The total number of elements in each of the vectors a, ia, ja is equal to the total number of non-zero elements in the original matrix. The vector a, with its associated integer vectors used as indices, can then be applied to a desired vector or matrix (which may also be in a similar sparse format) to obtain a product.
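The a, ia, ja arrangement corresponds to what is commonly called a coordinate sparse format. A minimal sketch, with hypothetical function names and zero-based indices assumed, is:

```python
def to_coordinate_format(matrix):
    """Collect non-zero values (a) with their row (ia) and column (ja) indices."""
    a, ia, ja = [], [], []
    for i, row in enumerate(matrix):
        for j, x in enumerate(row):
            if x != 0:
                a.append(x)
                ia.append(i)
                ja.append(j)
    return a, ia, ja


def coordinate_matvec(a, ia, ja, vec, n_rows):
    """Apply the sparse matrix to vec using only the stored non-zero elements."""
    out = [0.0] * n_rows
    for x, i, j in zip(a, ia, ja):
        out[i] += x * vec[j]
    return out


a, ia, ja = to_coordinate_format([[2, 0, 0], [0, 0, 3], [0, 4, 0]])
print(a, ia, ja)                                   # prints [2, 3, 4] [0, 1, 2] [0, 2, 1]
print(coordinate_matvec(a, ia, ja, [1, 2, 3], 3))  # prints [2.0, 9.0, 8.0]
```

The multiplication touches each stored element exactly once, so its cost is proportional to the number of non-zero elements.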

Referring to Fig. 13, there is shown a flow diagram of the routine for back-transforming to obtain an output vector F from the transformed and processed vector fwp of the first form. The block 1310 represents initializing to first consider the highest level sum and difference components of fwp. The next lower level sum information is then computed from the previous level sum and difference information, this operation being represented by the block 1320. For example, and as previously described at the last level using Haar wavelets, the sum and difference would be added and subtracted to respectively obtain the two next lower level sums. The block 1330 is then entered, this block representing the adding of the sum information to the existing sum information at the next lower level. Inquiry is then made (diamond 1340) as to whether the lowest level has been reached. If not, the level index is decremented (block 1350), and the block 1320 is re-entered. The next lower level sum information is then computed, as before, and the loop 1355 continues until the lowest level has been reached. The lowest level sum and difference information is then combined (block 1370) to obtain the components of an output vector F, and the result is read out (block 1380).

Fig. 14 is a flow diagram of the routine for back-transforming to obtain the output vector F from the transformed and processed vector fwp of the second form. The routine is similar to that of the Fig. 13 routine (for the first form), except that there is no extra sum information to be accumulated at each succeeding lower level. Accordingly, all blocks of like reference numerals to those of Fig. 13 represent similar functions, but there is no counterpart of block 1330.

The Haar wavelet system, used for ease of explanation herein, often does not lead to transformed and thresholded matrices that decay away from the diagonals (i.e., for example, the diagonals between the upper left corner and the lower right corner of the square regions of Fig. 3) as fast as can be

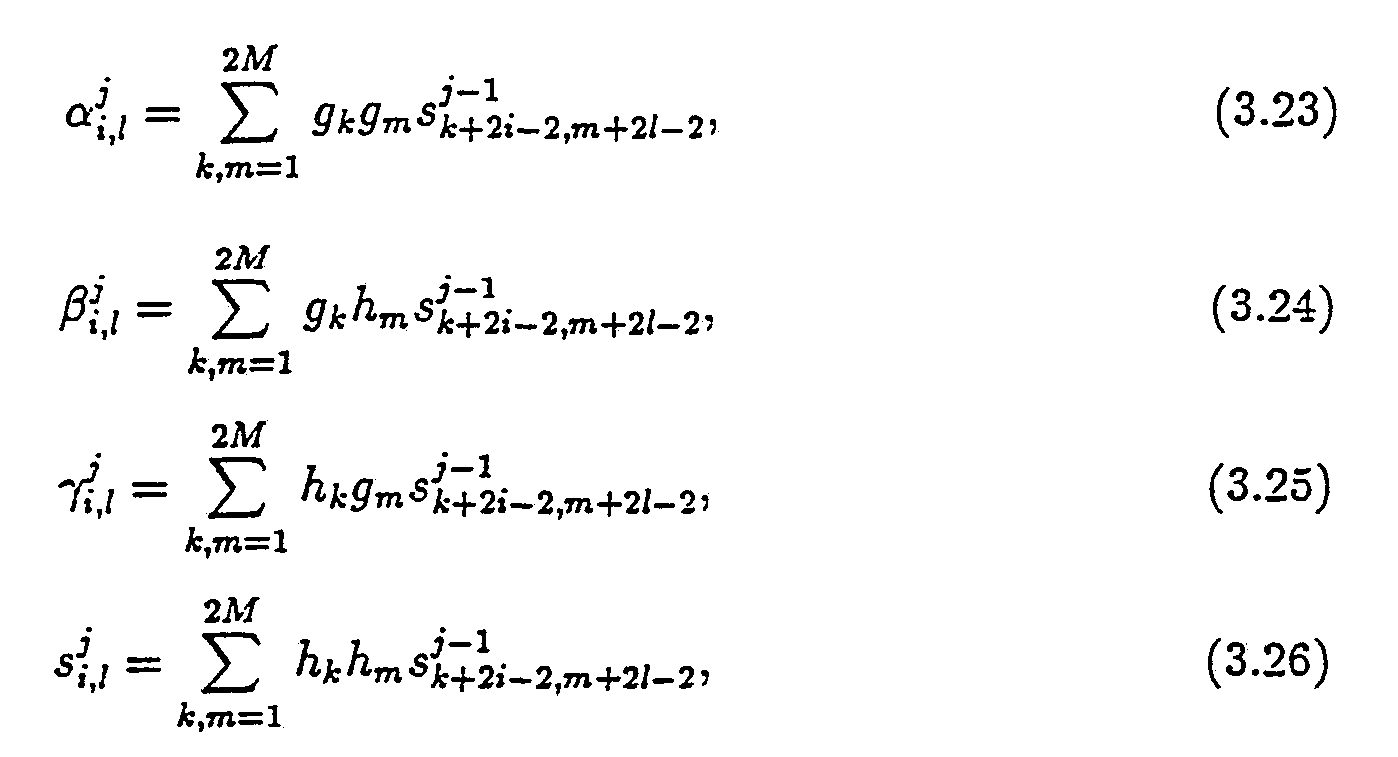

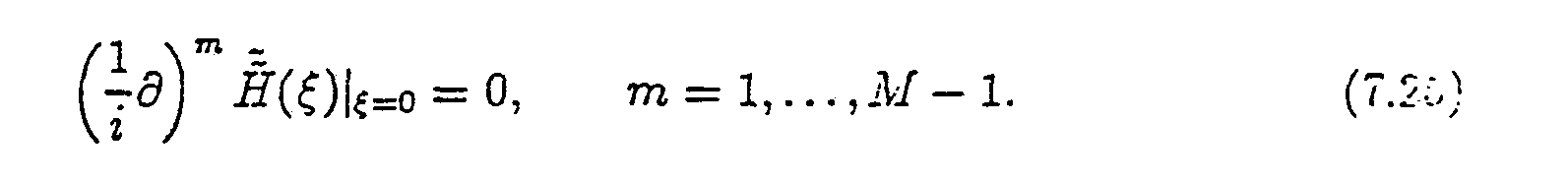

achieved with other wavelet bases. To obtain faster decay in these cases, one can employ a wavelet/wavelet-packet basis in which the wavelet elements (or wavelet-packets) have several vanishing moments. These are described for example in (3.1) - (3.26) of Appendix I. Reference can also be made to I.

Daubechies, Orthonormal Bases of Compactly Supported Wavelets, Comm. Pure, Applied Math, XL1, 1988; Y. Meyer Principe

d' Incertitude, Bases Hilbertiennes et Algebres d'Operateurs, Seminaire Bourbaki, 1985-86, 662, Astέrisque (Societe'

Mathematique de France); S. Mallat, Review of Multifrequency

Channel Decomposition of Images and Wavelet Models, Technical Report 412, Robotics Report 178, NYU (1988), and thee

aboverefrenced U.S. Patent Application Serial No. 525,973.

The wavelet illustrated in Fig. 15 has two vanishing moments. As used herein, the number of vanishing moments for a wavelet ψ(x) is determined by the highest integer n for which

$$\int \psi(x)\, x^k \, dx = 0$$

where $0 \le k \le n-1$, and this is known as the vanishing moments condition. Using this convention, the Haar wavelet has 1 vanishing moment, and the wavelet of Fig. 15 has 2 vanishing moments. To obtain faster decay away from the diagonal, it is preferable that the wavelet (and/or wavelet-packet obtained from combining wavelets) has a plurality of vanishing moments, with several vanishing moments being most preferred. The wavelet of Fig. 15 has defining coefficients as follows (see Daubechies, supra):

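$$
h_1 = \frac{1+\sqrt{3}}{4\sqrt{2}}, \quad
h_2 = \frac{3+\sqrt{3}}{4\sqrt{2}}, \quad
h_3 = \frac{3-\sqrt{3}}{4\sqrt{2}}, \quad
h_4 = \frac{1-\sqrt{3}}{4\sqrt{2}}
$$

$$
g_1 = h_4, \quad g_2 = -h_3, \quad g_3 = h_2, \quad g_4 = -h_1
$$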

The vanishing moments condition, written in terms of defining coefficients, would be the following:

and L is the number of coefficients.
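One common way of writing such a condition on the coefficients, offered here as an assumption rather than as the expression of the original text, is $\sum_{k=1}^{L} g_k\, k^m = 0$ for $m = 0, 1, \ldots, n-1$, where n is the number of vanishing moments. The short check below evaluates these discrete moments for the standard four-coefficient Daubechies filter; the coefficient values and the function name are likewise stated as assumptions.

```python
import math

# Standard four-coefficient Daubechies filter (assumed values).
R3, DEN = math.sqrt(3.0), 4.0 * math.sqrt(2.0)
h = [(1 + R3) / DEN, (3 + R3) / DEN, (3 - R3) / DEN, (1 - R3) / DEN]
g = [h[3], -h[2], h[1], -h[0]]   # g1 = h4, g2 = -h3, g3 = h2, g4 = -h1


def discrete_moment(coeffs, m):
    """Sum of coeffs[k] * k**m with k counted from 1."""
    return sum(c * (k ** m) for k, c in enumerate(coeffs, start=1))


moments = [discrete_moment(g, m) for m in range(3)]
print(moments)   # the first two are zero up to rounding; the third is not
```

The moments of order 0 and 1 vanish while the moment of order 2 does not, which is consistent with a wavelet having two vanishing moments.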

Referring again to the wavelet of Fig. 15, the procedure for applying this wavelet would be similar to that described above for the Haar wavelet, but groups of 4 elements would be utilized, and would be multiplied by the h coefficients and the g coefficients to obtain the respective averages and differences.
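A single level of such a four-element procedure could look like the sketch below. It assumes the standard four-coefficient Daubechies values, a stride of two elements between groups, and periodic wrap-around at the end of the vector; the wrap-around and the name `four_tap_step` are assumptions for illustration only.

```python
import math

R3, DEN = math.sqrt(3.0), 4.0 * math.sqrt(2.0)
H = [(1 + R3) / DEN, (3 + R3) / DEN, (3 - R3) / DEN, (1 - R3) / DEN]
G = [H[3], -H[2], H[1], -H[0]]


def four_tap_step(values):
    """One level: weight groups of 4 elements by H (averages) and G (differences)."""
    n = len(values)
    averages, differences = [], []
    for start in range(0, n, 2):
        group = [values[(start + k) % n] for k in range(4)]   # periodic wrap (assumed)
        averages.append(sum(c * x for c, x in zip(H, group)))
        differences.append(sum(c * x for c, x in zip(G, group)))
    return averages, differences


avg, diff = four_tap_step([0, 1, 2, 3, 4, 5, 6, 7])
print(diff[:3])   # all three are zero up to rounding
```

On a linear ramp, every difference computed from a group that does not wrap around is zero to rounding error, whereas Haar differences of the same ramp would all be non-zero. This is the faster decay that additional vanishing moments provide.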

It is generally advantageous to utilize wavelets (and/or wavelet-packets) having as many vanishing moments as is practical, it being understood that the computational burden of computing the transformed vectors and/or matrices increases as the number of vanishing moments (and coefficients) increases. Accordingly, a trade-off exists which will generally lead to use of a moderate number of vanishing moments, as in the illustrative examples below.

EXAMPLES

Properties of various types of matrices, including the extent to which they are rendered sparse using the techniques hereof, are illustrated in conjunction with the following examples. The numerical experiments of these examples were performed on a SUN-3/50 computer equipped with an MC68881 floating-point accelerator. The algorithms were applied to a number of different operators, using a FORTRAN program, as described in the examples to follow. Column 1 of the Table accompanying each example shows the number of nodes N in the discretization of the operator. An N-component random vector is multiplied by each NxN matrix for each example, for N = 64, 128, 256, 512, and 1024. Calculations were performed in both single precision (7 digit precision) and double precision (15 digit precision). Ts is the CPU time, in seconds, that is required for performing the multiplication using the conventional technique; that is, an order $O(N^2)$ operation. Tw is the CPU time that is required for performing the multiplication on a wavelet basis. The wavelet used for these examples was of the type described by Daubechies [see I. Daubechies, Orthonormal Bases of Compactly Supported Wavelets, Comm. Pure Appl. Math., XLI, 1988], with the number of vanishing moments and defining coefficients as indicated for each example. [It will be understood that the higher the number of vanishing moments, the more defining coefficients a wavelet will have, and accuracy can, to some degree, be traded off against processing time.] Td is the CPU time used to obtain the first form of the operator (e.g. Fig. 3).

The L2 (normalized) and L∞ (normalized) errors are taken with respect to double precision calculations for the conventional matrix multiplication technique [order $O(N^2)$]. L2 is the square root of the sum of the squares of the difference at each matrix point. L∞ is the largest single difference. The last column of each Table indicates the compression coefficient, Ccomp, which is the ratio of the number of elements in the matrix, $N^2$, to the number of non-zero elements in the threshold-processed matrix, using the first form of the invention. In all Figures 16 to 23, the matrices are depicted for N = 256.
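These quantities can be computed along the following lines. The sketch assumes one plausible normalization (dividing each error by the corresponding norm of the double precision reference result); the exact normalization used for the Tables is not spelled out here, and the function names are hypothetical.

```python
import math


def normalized_errors(approx, reference):
    """Return (L2, Linf) errors of approx, each divided by the reference norm."""
    diffs = [a - r for a, r in zip(approx, reference)]
    l2 = math.sqrt(sum(d * d for d in diffs)) / math.sqrt(sum(r * r for r in reference))
    linf = max(abs(d) for d in diffs) / max(abs(r) for r in reference)
    return l2, linf


def compression_coefficient(matrix):
    """Ratio of the total element count N*N to the number of non-zero elements."""
    n = len(matrix)
    nonzero = sum(1 for row in matrix for x in row if x != 0)
    return n * n / nonzero


print(normalized_errors([3.0, 4.5], [3.0, 4.0]))
print(compression_coefficient([[1, 0], [0, 0]]))   # prints 4.0
```

A larger compression coefficient means fewer stored elements, and hence fewer multiplications, for the same operator.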

Example 1

were processed using wavelets with six first moments set equal to zero, and setting to zero all resultant matrix entries whose absolute values were smaller than $10^{-7}$, with results shown in Table 1 and Fig. 16. The second form (so-called "standard form") threshold-processed matrix is shown in Fig. 17.

The operator represented by the matrix of this example has the familiar Hilbert transform characteristic which can be used in various practical applications. For example, consider the problem in weather prediction, first noted in the Background section hereof, when one has input readings of wind velocities and wishes to compute a map of pressure distributions from which subsequent weather patterns can be forecasted. The operator used in computing the pressure distributions may be of the type set forth in this example.

Table 1 shows the striking advantage of the processing hereof as the matrix size increases. For example, for N = 1024, Ts is 30.72 seconds, whereas Tw is only a small fraction as much (3.72 seconds). Although Td is quite high (605.74 seconds, without optimized software) in this example, it will be understood that for most applications Td will only have to be computed once, or infrequently. Another advantage of the sparse matrix is illustrated by comparing the normalized errors L2 and L∞ for single precision multiplication with the normalized errors L2 and L∞ for the processing hereof (designated "FWT" for "fast wavelet transform"). [As indicated above, for all such measurements, the normalizing factor is the double precision measurements using conventional matrix multiplication technique.] It is seen that FWT error values are less than the single precision error values in all cases, with the contrast greatest for largest N. It is thus seen that computational rounding error tends to accumulate less when there are many fewer non-zero members in the matrix.

Example 2

In this example the matrices considered are of the form -l

where i, j = 1, . . . , N and N = $2^n$.

This matrix is not a function of i-j and is therefore not a convolution type matrix, and its singularities are more complicated, and not known a priori. Wavelets of six vanishing moments and a threshold of $10^{-7}$ were used. The results are shown in Fig. 18 and are tabulated in Table 2. The second form matrix is shown in Fig. 19. The cost of constructing the second form of the operator is proportional to $N^2$.

Fig. 20 illustrates a simplified example of how an embodiment of the invention can be used to advantage for efficient inverting of a matrix. Consider, again, the concert hall 200, sound source 210, and acoustic transducer 220, as in the Fig. 2 example. In the present illustration, however, it is assumed that one wishes to use the received acoustic signal (which results from a direct signal and many reflected signals of different characteristic path lengths - and accordingly different arrival times and phases) in order to compute the original signal at the source 210. Again, assume that a segment of the signal A1(t) is sampled and digitized to obtain the vector f. A matrix, designated K, can be determined, in known fashion, from the shape of the hall and the respective positions of the sound source 210 and the receiving transducer 220. If a segment of the original sound (sampled and digitized) is representable in this example by the vector F, and F were known, one could obtain the vector f from the vector F and the matrix K. In the present example, however, it is assumed that one has the vector f from the measurements at the receiving transducer, and that F is the unknown to be determined. In such instance, it is necessary to invert K to obtain an inverted matrix $K^{-1}$, and then use this inverted matrix in conjunction with the vector f in order to obtain the vector F. The procedure, in conventional fashion, is illustrated in Fig. 20. Techniques for inversion of a matrix are well known in the art. For a matrix of substantial size, however, the inversion process involves a large number of computations (for example, of the order of $N^3$ computations for an NxN matrix). However, if the matrix being inverted is sparse, the computational task is drastically reduced, as illustrated for similar situations above.

The improved procedure, in accordance with an embodiment of the invention, is illustrated in Fig. 21. The vector f is transformed, on a wavelet/wavelet-packet basis, using the above second form (or "standard form") to obtain fw. This can be done in accordance with the procedure described in conjunction with Fig. 6 (flow diagram of Fig. 8). Also, as before, the matrix K is transformed, using the second form, as also illustrated in conjunction with Fig. 6 (and the flow diagram of Fig. 10), to obtain Kw. The matrix is then threshold processed (e.g. flow diagram of Fig. 11) to obtain Kwp, and the matrix is inverted to obtain $[Kwp]^{-1}$ (see Appendix II). The inverted matrix can then be multiplied by the vector fw (e.g. flow diagram of Fig. 12) to obtain the product vector fwp, and the output vector, F, can be recovered by back-transforming (e.g. flow diagram of Fig. 14).

As first noted above, substantially smooth vectors can, if desired, also be compressed by threshold processing prior to multiplication by a transformed and threshold processed matrix. The procedure is similar to that set forth in the flow diagram of Fig. 11, except that a step of threshold processing the transformed vector fw is performed before the vector is multiplied by the matrix. This is illustrated in Fig. 22, which shows the vector fw processed to obtain fwp. In this case, the product vector is designated fwpp.

Fig. 23 illustrates the procedure for matrix multiplication (see also Appendix II), for example, where one matrix L represents a first set of values representative of physical quantities, and a second matrix K represents an operator to be applied. [It will be understood that the matrix L, in any number of dimensions, can be visualized as a set of vectors f.] In the Fig. 23 illustration, only one of the matrices (K) is compressed by threshold processing. In the similar example illustrated in Fig. 24, both matrices are threshold processed before multiplication of the matrices. In both Fig. 23 and Fig. 24, the product matrix is designated Mw, and the back-transformed output matrix is designated M. In both cases, the second form (or so-called standard form) is utilized. The back-transform technique is as described in conjunction with the flow diagram of Fig. 14, but with the columns and then rows being processed (in the manner of individual vectors processed as illustrated in Fig. 6), in the reverse of the order described in conjunction with Fig. 6.

As described in Appendix I, e.g. at relationships (4.27) through (4.29), one can convert between the above-described first and second forms, or vice versa, as desired. For example, if the first (non-standard) form has been utilized and a sparse matrix is obtained, the conversion to the second (standard) form will not require undue computation, and the second form may be advantageous for an operation such as inverting the matrix. A simplified illustration of the procedure is shown in Figs. 25A and 25B. Fig. 25A illustrates the first form (as in Fig. 3 above), with three levels. Fig. 25B shows how the information in Fig. 25A can be converted to the second form using the above-referenced relationships of Appendix I. In particular, the α1, α2 and α3 and S3 information is placed, without change, in the matrix positions illustrated in Fig. 25B. The β information is placed as shown in Fig. 25B, but after conversion by considering each row of β1, β2 and β3 as a vector and converting the vectors, in the manner of conversion of the vector of Fig. 6A - 6C above, to obtain converted values of β1, β2 and β3. Similarly, the γ information is placed as shown in Fig. 25B, but after conversion by considering each column of γ1, γ2 and γ3 as a vector and converting the vectors, in the manner of conversion of the vector of Fig. 6A - 6C above, to obtain converted values of γ1, γ2 and γ3. Conversion can also be effected from the second form to the first form, if desired.

The invention has been described with reference to particular preferred embodiments, but variations within the spirit and scope of the invention will occur to those skilled in the art. For example, it will be understood that after sparsifying a matrix as taught herein, the matrix information could be incorporated in a special purpose chip or integrated circuit, e.g. for repetitive use, or could be the basis for a very fast special purpose computational logic circuit.

Appendix I

I Introduction

The purpose of this paper is to introduce a class of numerical algorithms designed for rapid application of dense matrices (or integral operators) to vectors. As is well-known, applying directly a dense NxN matrix to a vector requires roughly $N^2$ operations, and this simple fact is a cause of serious difficulties encountered in large-scale computations. For example, the main reason for the limited use of integral equations as a numerical tool in large-scale computations is that they normally lead to dense systems of linear algebraic equations, and the latter have to be solved, either directly or iteratively. Most iterative methods for the solution of systems of linear equations involve the application of the matrix of the system to a sequence of recursively generated vectors, which tends to be prohibitively expensive for large-scale problems. The situation is even worse if a direct solver for the linear system is used, since such solvers normally require $O(N^3)$ operations. As a result, in most areas of computational mathematics dense matrices are simply avoided whenever possible. For example, finite difference and finite element methods can be viewed as devices for reducing a partial differential equation to a sparse linear system. In this case, the cost of sparsity is the inherently high condition number of the resulting matrices.

For translation invariant operators, the problem of excessive cost of applying (or inverting) the dense matrices has been met by the Fast Fourier Transform (FFT) and related algorithms (fast convolution schemes, etc.). These methods use algebraic properties of a matrix to apply it to a vector in order N log(N) operations. Such schemes are exact in exact arithmetic, and are fragile in the sense that they depend on the exact algebraic properties of the operator for their applicability. A more recent group of fast algorithms [1,2,5,9] uses explicit analytical properties of specific operators to rapidly apply them to arbitrary vectors. The algorithms in this group are approximate in exact arithmetic (though they are capable of producing any prescribed accuracy), do not require that the operators in question be translation invariant, and are considerably more adaptable than the algorithms based on the FFT and its variants.

In this paper, we introduce a radical generalization of the algorithms of [1,2,5,9]. We describe a method for the fast numerical application to arbitrary vectors of a wide variety of operators. The method normally requires order O(N) operations, and is directly applicable to all Calderon-Zygmund and pseudo-differential operators. While each of the algorithms of [1,2,5,9] addresses a particular operator and uses an analytical technique specifically tailored to it, we introduce several numerical tools applicable in all of these (and many other) situations. The algorithms presented here are meant to be a general tool similar to FFT. However, they do not require that the operator be translation invariant, and are approximate in exact arithmetic, though they achieve any prescribed finite accuracy. In addition, the techniques of this paper generalize to certain classes of multi-linear transformations (see Section 4.6 below).

We use a class of orthonormal "wavelet" bases generalizing the Haar functions and originally introduced by Stromberg [10] and Meyer [7]. The specific wavelet basis functions used in this paper were constructed by I. Daubechies [4], and are remarkably well adapted to numerical calculations. In these bases (for a given accuracy) integral operators satisfying certain analytical estimates have a band-diagonal form, and can be applied to arbitrary functions in a 'fast' manner. In particular, Dirichlet and Neumann boundary value problems for certain elliptic partial differential equations can be solved in order N calculations, where N is the number of nodes in the discretization of the boundary of the region. Other applications include an O(N log(N)) algorithm for the evaluation of Legendre series, and similar schemes (comparable in speed to FFT in the same dimensions) for other special function expansions. In general, the scheme of this paper can be viewed as a method for the conversion (whenever regularity permits) of dense matrices to a sparse form.

Once the sparse form of the matrix is obtained, applying it to an arbitrary vector is an order O(N) procedure, while the construction of the sparse form in general requires $O(N^2)$ operations. On the other hand, if the structure of the singularities of the matrix is known a priori (as for Green's functions of elliptic operators or for Calderón-Zygmund operators) the compression of the operator to a banded form is an order O(N) procedure. The non-zero entries of the resulting compressed matrix mimic the structure of the singularities of the original kernel.

Effectively, this paper provides two schemes for the numerical evaluation of integral operators. The first is a straightforward realization ("standard form") of the matrix of the operator in the wavelet basis. This scheme is an order N log(N) procedure (even for such simple operators as multiplication by a function). While this straightforward realization of the matrix is a useful numerical tool in itself, its range of applicability is significantly extended by the second scheme, which we describe in this paper in more detail. This realization ("non-standard form") leads to an order N scheme. The estimates for the latter follow from the more subtle analysis of the proof of the "T(1) theorem" of David and Journé (see [3]). We also present two numerical examples showing that our algorithms can be useful for certain operators which are outside the class for which we provide proofs.

The paper is organized as follows. In Section II we use the well-known Haar basis to describe a simplified version of the algorithm. In Section III we summarize the relevant facts from the theory of wavelets. Section IV contains an analysis of a class of integral operators for which we obtain an order N algorithm, and a description of a version of the algorithm for bilinear operators. Section V contains a detailed description and a complexity analysis of the scheme. Finally, in Section VI we present several numerical applications.

Generalizations to higher dimensions and numerical operator calculus containing O(Nlog(N)) implementations of pseudodifferential operators and their inverses will appear in a sequel to this paper.

II The algorithm in the Haar system

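The Haar functions $h_{j,k}$ with integer indices $j$ and $k$ are defined by¹

$$h_{j,k}(x) = \begin{cases} 2^{-j/2} & \text{for } 2^j(k-1) < x < 2^j(k - 1/2), \\ -2^{-j/2} & \text{for } 2^j(k - 1/2) \le x < 2^j k, \\ 0 & \text{elsewhere.} \end{cases} \qquad (2.1)$$

Clearly, the Haar function $h_{j,k}(x)$ is supported in the dyadic interval $I_{j,k}$,

$$I_{j,k} = [2^j(k-1),\ 2^j k]. \qquad (2.2)$$

¹ We define the basis so that the dyadic scale with the index $j$ is finer than the scale with index $j + 1$. This choice of indexing is convenient for numerical applications.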

We will use the notation $h_{j,k}(x) = h_{I_{j,k}}(x) = h_I(x) = 2^{-j/2} h(2^{-j}x - k + 1)$, where $h(x) = h_{0,1}(x)$. We index the Haar functions by dyadic intervals $I_{j,k}$ and observe that the system $h_{I_{j,k}}(x)$ forms an orthonormal basis of $L^2(\mathbf{R})$ (see, for example, [8]).

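We also introduce the normalized characteristic function $\chi_{I_{j,k}}(x)$,

$$\chi_{I_{j,k}}(x) = \begin{cases} |I_{j,k}|^{-1/2} & \text{for } x \in I_{j,k}, \\ 0 & \text{elsewhere,} \end{cases} \qquad (2.3)$$

where $|I_{j,k}|$ denotes the length of $I_{j,k}$, and will use the notation $\chi_{j,k} = \chi_{I_{j,k}}$.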

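Given a function $f \in L^2(\mathbf{R})$ and an interval $I \subset \mathbf{R}$, we define the Haar coefficient $d_I$ of $f$

$$d_I = \int f(x)\, h_I(x)\, dx \qquad (2.4)$$

and the "average" $s_I$ of $f$ on $I$ as

$$s_I = \int f(x)\, \chi_I(x)\, dx, \qquad (2.5)$$

and observe that, since $h_I = (\chi_{I'} - \chi_{I''})/\sqrt{2}$,

$$d_I = \frac{1}{\sqrt{2}} \left( s_{I'} - s_{I''} \right), \qquad (2.6)$$

where $I'$ and $I''$ are the left and the right halves of $I$.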

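To obtain a numerical method for calculating the Haar coefficients of a function we proceed as follows. Suppose we are given $N = 2^n$ "samples" of a function, which can for simplicity be thought of as values of scaled averages

$$s^0_k = 2^{n/2} \int_{2^{-n}(k-1)}^{2^{-n}k} f(x)\, dx \qquad (2.7)$$

of $f$ on intervals of length $2^{-n}$. We then get the Haar coefficients for the intervals of length $2^{-n+1}$ via (2.6), and obtain the coefficients

$$d^1_k = \frac{1}{\sqrt{2}} \left( s^0_{2k-1} - s^0_{2k} \right). \qquad (2.8)$$

We also compute the 'averages'

$$s^1_k = \frac{1}{\sqrt{2}} \left( s^0_{2k-1} + s^0_{2k} \right) \qquad (2.9)$$

on the intervals of length $2^{-n+1}$. Repeating this procedure, we obtain the Haar coefficients and averages

$$d^{j+1}_k = \frac{1}{\sqrt{2}} \left( s^j_{2k-1} - s^j_{2k} \right), \qquad (2.10)$$

$$s^{j+1}_k = \frac{1}{\sqrt{2}} \left( s^j_{2k-1} + s^j_{2k} \right) \qquad (2.11)$$

for $j = 0, \ldots, n - 1$ and $k = 1, \ldots, 2^{n-j-1}$. This is illustrated by the pyramid scheme

$$\begin{array}{cccccccc} \{s^0_k\} & \rightarrow & \{s^1_k\} & \rightarrow & \{s^2_k\} & \rightarrow & \{s^3_k\} & \cdots \\ & \searrow & & \searrow & & \searrow & & \\ & & \{d^1_k\} & & \{d^2_k\} & & \{d^3_k\} & \cdots \end{array} \qquad (2.12)$$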

It is easy to see that evaluating the whole set of coefficients $d^j_k$, $s^j_k$ in (2.12) requires $2(N - 1)$ additions and $2N$ multiplications.
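The pyramid scheme can be sketched in a few lines of Python. This is only an illustration under stated assumptions: the function name `haar_pyramid` and the sample values are hypothetical, and the lists are 0-based, so `s[2*k]` and `s[2*k + 1]` stand for $s_{2k-1}$ and $s_{2k}$ of the 1-based formulas (2.8) - (2.11).

```python
import math

def haar_pyramid(s0):
    """Return (details, averages) for the Haar pyramid scheme.

    details[j-1] holds the coefficients d^j_k and averages[j-1] holds s^j_k
    for j = 1..n, where len(s0) = N = 2**n.
    """
    n_samples = len(s0)
    if n_samples < 1 or n_samples & (n_samples - 1):
        raise ValueError("number of samples must be a power of two")
    c = 1.0 / math.sqrt(2.0)
    details, averages = [], []
    s = list(s0)
    while len(s) > 1:
        half = len(s) // 2
        # Differences and sums of neighbouring averages, as in (2.10), (2.11).
        d_next = [c * (s[2 * k] - s[2 * k + 1]) for k in range(half)]
        s_next = [c * (s[2 * k] + s[2 * k + 1]) for k in range(half)]
        details.append(d_next)
        averages.append(s_next)
        s = s_next
    return details, averages

# Hypothetical sample values s^0_k with N = 8.
samples = [4.0, 2.0, 5.0, 5.0, 1.0, 3.0, 0.0, 2.0]
details, averages = haar_pyramid(samples)
```

Each level halves the number of averages, so the total work is proportional to N. Because every step is an orthonormal change of basis, the sum of squares of all $d^j_k$ plus the square of the single coarsest average equals the sum of squares of the samples.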

In two dimensions, there are two natural ways to construct Haar systems. The first is simply the tensor product $h_{I \times J} = h_I \otimes h_J$, so that each basis function $h_{I \times J}$ is supported on the rectangle $I \times J$. The second basis is defined by associating three basis functions, $h_I(x)h_{I'}(y)$, $h_I(x)\chi_{I'}(y)$, and $\chi_I(x)h_{I'}(y)$, to each square $I \times I'$, where $I$ and $I'$ are two dyadic intervals of the same length.

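We consider an integral operator

$$T(f)(x) = \int K(x,y)\, f(y)\, dy, \qquad (2.13)$$

and expand its kernel (formally) as a function of two variables in the two-dimensional Haar series

$$K(x,y) = \sum_{I,I'} \alpha_{II'}\, h_I(x) h_{I'}(y) + \sum_{I,I'} \beta_{II'}\, h_I(x) \chi_{I'}(y) + \sum_{I,I'} \gamma_{II'}\, \chi_I(x) h_{I'}(y), \qquad (2.14)$$

where the sum extends over all dyadic squares $I \times I'$ with $|I| = |I'|$, and where

$$\alpha_{II'} = \int\!\!\int K(x,y)\, h_I(x)\, h_{I'}(y)\, dx\, dy, \qquad (2.15)$$

$$\beta_{II'} = \int\!\!\int K(x,y)\, h_I(x)\, \chi_{I'}(y)\, dx\, dy, \qquad (2.16)$$

and

$$\gamma_{II'} = \int\!\!\int K(x,y)\, \chi_I(x)\, h_{I'}(y)\, dx\, dy. \qquad (2.17)$$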

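When $I = I_{j,k}$, $I' = I_{j,k'}$ (see (2.2)), we will also use the notation

$$\alpha^j_{k,k'} = \alpha_{I_{j,k},\, I_{j,k'}}, \qquad (2.18)$$

$$\beta^j_{k,k'} = \beta_{I_{j,k},\, I_{j,k'}}, \qquad (2.19)$$

$$\gamma^j_{k,k'} = \gamma_{I_{j,k},\, I_{j,k'}}, \qquad (2.20)$$

defining the matrices $\alpha^j = \{\alpha^j_{k,k'}\}$, $\beta^j = \{\beta^j_{k,k'}\}$, $\gamma^j = \{\gamma^j_{k,k'}\}$, with $k, k' = 1, 2, \ldots, 2^{n-j}$.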

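Substituting (2.14) into (2.13), we obtain

$$T(f)(x) = \sum_{I} h_I(x) \sum_{I'} \alpha_{II'}\, d_{I'} + \sum_{I} h_I(x) \sum_{I'} \beta_{II'}\, s_{I'} + \sum_{I} \chi_I(x) \sum_{I'} \gamma_{II'}\, d_{I'} \qquad (2.21)$$

(recall that in each of the sums in (2.21) $I$ and $I'$ always have the same length, and that $d_{I'}$ and $s_{I'}$ are the Haar coefficient and the average of $f$ on $I'$).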

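To discretize (2.21), we define projection operators

$$P_j f = \sum_{k \in \mathbf{Z}} \langle f, \chi_{j,k} \rangle\, \chi_{j,k}, \quad j = 0, \ldots, n, \qquad (2.22)$$

and approximate $T$ by

$$T \sim T_0 = P_0 T P_0, \qquad (2.23)$$

where $P_0$ is the projection operator on the finest scale. An alternative derivation of (2.21) consists of expanding $T_0$ in a 'telescopic' series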

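$$T_0 = P_0 T P_0 = \sum_{j=1}^{n} \left( P_{j-1} T P_{j-1} - P_j T P_j \right) + P_n T P_n$$

$$= \sum_{j=1}^{n} \left[ (P_{j-1} - P_j)\, T\, (P_{j-1} - P_j) + (P_{j-1} - P_j)\, T P_j + P_j T\, (P_{j-1} - P_j) \right] + P_n T P_n. \qquad (2.24)$$

Defining the operators $Q_j$ with $j = 1, 2, \ldots, n$, by the formula

$$Q_j = P_{j-1} - P_j, \qquad (2.25)$$

we can rewrite (2.24) in the form

$$T_0 = \sum_{j=1}^{n} \left( Q_j T Q_j + Q_j T P_j + P_j T Q_j \right) + P_n T P_n. \qquad (2.26)$$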

The latter can be viewed as a decomposition of the operator $T$ into a sum of contributions from different scales. Comparing (2.14) and (2.26), we observe that while the term $P_n T P_n$ (or its equivalent) is absent in (2.14), it appears in (2.26) to compensate for the finite number of scales.

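Observation 2.1. Clearly, expression (2.21) can be viewed as a scheme for the numerical application of the operator $T$ to arbitrary functions. To be more specific, given a function $f$, we start with discretizing it into samples $s^0_k$, $k = 1, 2, \ldots, N$, which are then converted into a vector $\tilde{f} \in \mathbf{R}^{2N-2}$ consisting of all coefficients $s^j_k$, $d^j_k$ and ordered as

$$\tilde{f} = \left( d^1_1, \ldots, d^1_{N/2},\ s^1_1, \ldots, s^1_{N/2},\ d^2_1, \ldots, d^2_{N/4},\ s^2_1, \ldots, s^2_{N/4},\ \ldots,\ d^n_1,\ s^n_1 \right). \qquad (2.27)$$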

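Then, we construct the matrices $\alpha^j$, $\beta^j$, $\gamma^j$ for $j = 1, 2, \ldots, n$ (see (2.15) - (2.20) and Observation 3.2) corresponding to the operator $T$, and evaluate the vectors $\hat{s}^j = \{\hat{s}^j_k\}$, $\hat{d}^j = \{\hat{d}^j_k\}$ via the formulae

$$\hat{d}^j = \alpha^j(d^j) + \beta^j(s^j), \qquad (2.28)$$

$$\hat{s}^j = \gamma^j(d^j), \qquad (2.29)$$

where $d^j = \{d^j_k\}$, $s^j = \{s^j_k\}$, $k = 1, 2, \ldots, 2^{n-j}$, with $j = 1, \ldots, n$. Finally, we define an approximation $T_0^N$ to $T_0$ by the formula

$$T_0^N(f)(x) = \sum_{j=1}^{n} \sum_{k=1}^{2^{n-j}} \left( \hat{d}^j_k\, h_{j,k}(x) + \hat{s}^j_k\, \chi_{j,k}(x) \right). \qquad (2.30)$$

Clearly, $T_0^N(f)$ is a restriction of the operator $T_0$ in (2.23) on a finite-dimensional subspace of $L^2(\mathbf{R})$. A rapid procedure for the numerical evaluation of the operator $T_0^N$ is described (in a more general situation) in Section III below.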

It is convenient to organize the matrices $\alpha^j$, $\beta^j$, $\gamma^j$ with $j = 1, 2, \ldots, n$ into a single matrix, depicted in Figure 1. For reasons that will become clear in Section IV, the matrix in Figure 1 will be referred to as the non-standard form of the operator $T$, while (2.14) will be referred to as the "non-standard" representation of $T$ (note that (2.14) is not the matrix realization of the operator $T_0$ in the Haar basis).
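The decomposition (2.26) can be checked numerically on a small matrix. The sketch below is an illustration only, not the procedure of the later sections: the function names, the test kernel $1/(1 + |i - l|)$ and the input vector are hypothetical, the matrix `t0` is assumed to hold the coefficients of $T_0$ with respect to the finest-scale functions $\chi_{0,k}$, and the coarsest-scale term $P_n T P_n$ is applied explicitly. No entries are discarded, so the result agrees with the dense product up to rounding.

```python
import math

def haar_step(s):
    """One pyramid level: return (d, s_coarse) from the finer averages s."""
    c = 1.0 / math.sqrt(2.0)
    half = len(s) // 2
    d = [c * (s[2 * k] - s[2 * k + 1]) for k in range(half)]
    a = [c * (s[2 * k] + s[2 * k + 1]) for k in range(half)]
    return d, a

def nonstandard_form(t0):
    """Split a 2**n x 2**n matrix into per-scale blocks.

    Returns (levels, t_coarse): levels[j-1] = (alpha^j, beta^j, gamma^j),
    and t_coarse is the 1 x 1 matrix representing P_n T P_n.
    """
    levels, t = [], t0
    while len(t) > 1:
        half = len(t) // 2
        alpha = [[0.0] * half for _ in range(half)]
        beta = [[0.0] * half for _ in range(half)]
        gamma = [[0.0] * half for _ in range(half)]
        coarse = [[0.0] * half for _ in range(half)]
        for i in range(half):
            for l in range(half):
                a, b = t[2 * i][2 * l], t[2 * i][2 * l + 1]
                c, d = t[2 * i + 1][2 * l], t[2 * i + 1][2 * l + 1]
                alpha[i][l] = 0.5 * (a - b - c + d)   # h(x) h(y)
                beta[i][l] = 0.5 * (a + b - c - d)    # h(x) chi(y)
                gamma[i][l] = 0.5 * (a - b + c - d)   # chi(x) h(y)
                coarse[i][l] = 0.5 * (a + b + c + d)  # chi(x) chi(y)
        levels.append((alpha, beta, gamma))
        t = coarse
    return levels, t

def matvec(m, v):
    return [sum(mij * vj for mij, vj in zip(row, v)) for row in m]

def apply_nonstandard(levels, t_coarse, f):
    """Apply T_0 to the samples f using only the per-scale blocks."""
    d_hat, s_hat, s = [], [], list(f)
    for alpha, beta, gamma in levels:
        d, s = haar_step(s)
        d_hat.append([x + y for x, y in zip(matvec(alpha, d), matvec(beta, s))])
        s_hat.append(matvec(gamma, d))
    out = matvec(t_coarse, s)  # coarsest-scale term P_n T P_n of (2.26)
    c = 1.0 / math.sqrt(2.0)
    # Walk back from the coarsest scale to the finest, undoing the pyramid.
    for dh, sh in zip(reversed(d_hat), reversed(s_hat)):
        total = [x + y for x, y in zip(out, sh)]
        out = []
        for sk, dk in zip(total, dh):
            out.extend((c * (sk + dk), c * (sk - dk)))
    return out

t0 = [[1.0 / (1.0 + abs(i - l)) for l in range(8)] for i in range(8)]
f = [float(k % 3) for k in range(8)]
levels, t_coarse = nonstandard_form(t0)
result = apply_nonstandard(levels, t_coarse, f)
```

In this exact form nothing is gained in speed, since the blocks are still dense. The savings described in the text come from dropping the entries of the blocks that fall below a prescribed accuracy, which this sketch does not attempt.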

III Wavelets with vanishing moments and associated quadratures

3.1. Wavelets with vanishing moments. Though the Haar system leads to simple algorithms, it is not very useful in actual calculations, since the decay of $\alpha_{II'}$, $\beta_{II'}$, $\gamma_{II'}$ away from the diagonal is not sufficiently fast (see below). To have a faster decay, it is necessary to use a basis in which the elements have several vanishing moments. In our algorithms, we use the orthonormal bases of compactly supported wavelets constructed by I. Daubechies [4] following the work of Y. Meyer [7] and S. Mallat [6]. We now describe these orthonormal bases.

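Consider functions $\psi$ and $\varphi$ (corresponding to $h$ and $\chi$ in Section II), which satisfy the following relations:

$$\varphi(x) = \sqrt{2} \sum_{k=0}^{2M-1} h_{k+1}\, \varphi(2x - k), \qquad (3.1)$$

$$\psi(x) = \sqrt{2} \sum_{k=0}^{2M-1} g_{k+1}\, \varphi(2x - k), \qquad (3.2)$$

where

$$g_k = (-1)^{k-1} h_{2M-k+1}, \quad k = 1, \ldots, 2M, \qquad (3.3)$$

and

$$\int \varphi(x)\, dx = 1. \qquad (3.4)$$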

The coefficients $h_k$, $k = 1, \ldots, 2M$, are chosen so that the functions

$$\psi_{j,k}(x) = 2^{-j/2}\, \psi(2^{-j}x - k + 1), \qquad (3.5)$$

where $j$ and $k$ are integers, form an orthonormal basis and, in addition, the function $\psi$ has $M$ vanishing moments

$$\int \psi(x)\, x^m\, dx = 0, \quad m = 0, \ldots, M - 1. \qquad (3.6)$$

We will also need the notation

$$\varphi_{j,k}(x) = 2^{-j/2}\, \varphi(2^{-j}x - k + 1). \qquad (3.7)$$

Note that the Haar system is a particular case of (3.1)-(3.6) with $M = 1$ and $h_1 = h_2 = 2^{-1/2}$, $\varphi = \chi$ and $\psi = h$, and that the expansion (2.14)-(2.17) and the non-standard form in (2.26) in Section II can be rewritten in any wavelet basis by simply replacing functions $\chi$ and $h$ by $\varphi$ and $\psi$ respectively.
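The relation (3.3) between the two filters is easy to exercise numerically. In the sketch below the helper names are hypothetical, and the four-tap coefficients are the standard Daubechies filter for $M = 2$ quoted from general knowledge rather than from this text (they are not the modified filters satisfying (3.8)). At the filter level, the vanishing moments (3.6) correspond to $\sum_k g_k k^m = 0$ for $m = 0, \ldots, M - 1$.

```python
import math

def wavelet_filter(h):
    """Build g from h via (3.3): g_k = (-1)**(k-1) * h_{2M-k+1}, k = 1..2M."""
    length = len(h)
    # h is stored 0-based, so h_{2M-k+1} is h[length - k].
    return [(-1) ** (k - 1) * h[length - k] for k in range(1, length + 1)]

def discrete_moment(g, m):
    """Sum of g_k * k**m over k = 1..2M."""
    return sum(gk * k ** m for k, gk in enumerate(g, start=1))

# Haar case: M = 1, h_1 = h_2 = 2**(-1/2).
haar_h = [1.0 / math.sqrt(2.0)] * 2
haar_g = wavelet_filter(haar_h)

# Standard Daubechies filter with M = 2 (assumed values, see lead-in).
r3 = math.sqrt(3.0)
scale = 4.0 * math.sqrt(2.0)
d4_h = [(1 + r3) / scale, (3 + r3) / scale, (3 - r3) / scale, (1 - r3) / scale]
d4_g = wavelet_filter(d4_h)

d4_moments = [discrete_moment(d4_g, m) for m in range(3)]
```

For the Haar filter this yields $g_1 = 2^{-1/2}$, $g_2 = -2^{-1/2}$, which reproduces $\psi = h$. For the four-tap filter the moments of order 0 and 1 vanish up to rounding while the moment of order 2 does not, in line with $M = 2$.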

Remark 3.1. Several classes of functions φ, ψ have been constructed in recent years, and we refer the reader to [4] for a detailed description of some of those.

Remark 3.2. Unlike the Haar basis, the functions $\varphi_I$, $\varphi_J$ can have overlapping supports for $I \neq J$. As a result, the pyramid structure (2.12) 'spills out' of the interval [1, N] on which the structure is originally defined. Therefore, it is technically convenient to replace the original structure with a periodic one with period N. This is equivalent to replacing the original wavelet basis with its periodized version (see [8]).

3.2. Wavelet-based quadratures. In the preceding subsection, we introduce a procedure for calculating the coefficients $s^j_k$, $d^j_k$ for all $j \geq 1$, $k = 1, 2, \ldots, N$, given the coefficients $s^0_k$ for $k = 1, 2, \ldots, N$. In this subsection, we introduce a set of quadrature formulae for the efficient evaluation of the coefficients $s^0_k$ corresponding to smooth functions $f$. The simplest class of procedures of this kind is obtained under the assumption that there exists a real constant $\tau_M$ such that the function $\varphi$ satisfies the condition

$$\int \varphi(x + \tau_M)\, x^m\, dx = 0, \quad \text{for } m = 1, 2, \ldots, M - 1, \qquad (3.8)$$

$$\int \varphi(x)\, dx = 1, \qquad (3.9)$$

i.e. that the first $M - 1$ 'shifted' moments of $\varphi$ are equal to zero, while its integral is equal to 1. Recalling the definition of $s^0_k$,

$$s^0_k = 2^{n/2} \int f(x)\, \varphi(2^n x - k + 1)\, dx = 2^{n/2} \int f(x + 2^{-n}(k - 1))\, \varphi(2^n x)\, dx, \qquad (3.10)$$

expanding $f$ into a Taylor series around $2^{-n}(k - 1 + \tau_M)$, and using (3.8), we obtain

$$s^0_k = 2^{n/2} \int f(x + 2^{-n}(k - 1))\, \varphi(2^n x)\, dx = 2^{-n/2} f(2^{-n}(k - 1 + \tau_M)) + O(2^{-n(M + 1/2)}). \qquad (3.11)$$

In effect, (3.11) is a one-point quadrature formula for the evaluation of $s^0_k$. Applying the same calculation to $s^j_k$ with $j \geq 1$, we easily obtain

$$s^j_k = 2^{(-n+j)/2} f(2^{-n+j}(k - 1 + \tau_M)) + O(2^{-(n-j)(M + 1/2)}), \qquad (3.12)$$

which turns out to be extremely useful for the rapid evaluation of the coefficients of compressed forms of matrices (see Section IV below).
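The error term of the one-point quadrature can be observed in the simplest setting. The sketch below makes several assumptions of its own: it uses the Haar scaling function (the characteristic function of [0, 1]) with shift 1/2, whose shifted first moment is zero, so that it behaves as $M = 2$ in (3.8); it takes $f = \exp$ because the exact integral is then available in closed form; and the function names are hypothetical. Under these assumptions the error should shrink by about $2^{-5/2} \approx 0.177$ each time $n$ increases by one.

```python
import math

def exact_s0(n, k):
    """Exact s^0_k for f = exp: 2**(n/2) times the integral of exp over
    [2**-n (k-1), 2**-n k]."""
    h = 2.0 ** (-n)
    a = h * (k - 1)
    # Integral of exp over [a, a + h] is exp(a) * (exp(h) - 1).
    return 2.0 ** (n / 2) * math.exp(a) * math.expm1(h)

def one_point_s0(n, k, tau=0.5):
    """One-point approximation 2**(-n/2) * f(2**-n (k - 1 + tau)), f = exp."""
    return 2.0 ** (-n / 2) * math.exp(2.0 ** (-n) * (k - 1 + tau))

def quadrature_error(n, k=1):
    return abs(exact_s0(n, k) - one_point_s0(n, k))

# Ratio of errors at two consecutive resolutions, compared with 2**(-5/2).
ratio = quadrature_error(7) / quadrature_error(6)
predicted = 2.0 ** (-2.5)
```

The same experiment with a filter of higher order $M$ would require the scaling function itself, which is not available in closed form, so it is not attempted here.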

Though the compactly supported wavelets found in [4] do not satisfy the condition (3.8), a slight variation of the procedure described there produces a basis satisfying (3.8), in addition to (3.1) - (3.6).

It turns out that the filters in Table 1 are 50% longer than those in the original wavelets found in [4], given the same order M. Therefore, it might be desirable to adapt the numerical scheme so that the 'shorter' wavelets could be used. Such an adaptation (by means of appropriately designed quadrature formulae for the evaluation of the integrals (3.10)) is presented at pages 1-24 to 1-28.

Remark 3.3. We do not discuss in this paper wavelet-based quadrature formulae for the evaluation of singular integrals, since such schemes tend to be problem-specific. Note, however, that for all integrable kernels quadrature formulae of the type developed in this paper are adequate with minor modifications.

3.3. Fast wavelet transform. For the rest of this section, we treat the procedures being discussed as linear transformations in $\mathbf{R}^N$, viewed as the Euclidean space of all periodic sequences with the period N.

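Replacing the Haar basis with a basis of wavelets with vanishing moments, and assuming that the coefficients $s^0_k$, $k = 1, 2, \ldots, N$ are given, we replace the expressions (2.8) - (2.11) with the formulae

$$s^j_k = \sum_{l=1}^{2M} h_l\, s^{j-1}_{l+2k-2} \qquad (3.13)$$

and

$$d^j_k = \sum_{l=1}^{2M} g_l\, s^{j-1}_{l+2k-2}, \qquad (3.14)$$

where $s^j_k$ and $d^j_k$ are viewed as periodic sequences with the period $2^{n-j}$ (see also Remark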