US20020038211A1 - Speech processing system - Google Patents

Speech processing system

- Publication number

- US20020038211A1 (application US09/866,595)

- Authority

- US

- United States

- Prior art keywords

- speech

- values

- audio signal

- parameters

- model

- Prior art date

- Legal status (The legal status is an assumption and is not a legal conclusion. Google has not performed a legal analysis and makes no representation as to the accuracy of the status listed.)

- Granted

Concepts (machine-extracted)

- 238000012545 processing Methods 0.000 title claims description 101

- 230000006870 function Effects 0.000 claims abstract description 89

- 230000005236 sound signal Effects 0.000 claims abstract description 54

- 230000015654 memory Effects 0.000 claims abstract description 12

- 238000000034 method Methods 0.000 claims description 81

- 238000004088 simulation Methods 0.000 claims description 25

- 230000005540 biological transmission Effects 0.000 claims description 7

- 238000003672 processing method Methods 0.000 claims 1

- 238000004458 analytical method Methods 0.000 abstract description 16

- 238000007619 statistical method Methods 0.000 description 53

- 239000013598 vector Substances 0.000 description 28

- 238000009826 distribution Methods 0.000 description 27

- 238000001514 detection method Methods 0.000 description 23

- 238000007405 data analysis Methods 0.000 description 18

- 239000011159 matrix material Substances 0.000 description 16

- 238000005259 measurement Methods 0.000 description 13

- 238000009499 grossing Methods 0.000 description 9

- 238000010586 diagram Methods 0.000 description 6

- 230000009466 transformation Effects 0.000 description 5

- 230000003044 adaptive effect Effects 0.000 description 3

- 230000000694 effects Effects 0.000 description 3

- 238000004519 manufacturing process Methods 0.000 description 3

- 230000001373 regressive effect Effects 0.000 description 3

- 230000002441 reversible effect Effects 0.000 description 3

- 238000005070 sampling Methods 0.000 description 3

- 238000012795 verification Methods 0.000 description 3

- 238000007476 Maximum Likelihood Methods 0.000 description 2

- 238000010420 art technique Methods 0.000 description 2

- 238000004422 calculation algorithm Methods 0.000 description 2

- 238000004364 calculation method Methods 0.000 description 2

- 238000009827 uniform distribution Methods 0.000 description 2

- 230000003936 working memory Effects 0.000 description 2

- 238000000342 Monte Carlo simulation Methods 0.000 description 1

- 239000000654 additive Substances 0.000 description 1

- 230000000996 additive effect Effects 0.000 description 1

- 238000013459 approach Methods 0.000 description 1

- 230000001419 dependent effect Effects 0.000 description 1

- 238000005315 distribution function Methods 0.000 description 1

- 230000000977 initiatory effect Effects 0.000 description 1

- 239000000203 mixture Substances 0.000 description 1

- 238000012544 monitoring process Methods 0.000 description 1

- 230000003595 spectral effect Effects 0.000 description 1

- 238000003860 storage Methods 0.000 description 1

- 230000001052 transient effect Effects 0.000 description 1

- 238000010977 unit operation Methods 0.000 description 1


Classifications


- G—PHYSICS

- G10—MUSICAL INSTRUMENTS; ACOUSTICS

- G10L—SPEECH ANALYSIS TECHNIQUES OR SPEECH SYNTHESIS; SPEECH RECOGNITION; SPEECH OR VOICE PROCESSING TECHNIQUES; SPEECH OR AUDIO CODING OR DECODING

- G10L25/00—Speech or voice analysis techniques not restricted to a single one of groups G10L15/00 - G10L21/00

- G10L25/78—Detection of presence or absence of voice signals

Definitions

- The present invention relates to an apparatus for, and a method of, speech processing.

- The invention has particular, although not exclusive, relevance to the detection of speech within an input speech signal.

- In many speech processing systems, the microphone used to convert the user's speech into a corresponding electrical signal is continuously switched on. Therefore, even when the user is not speaking, there will constantly be an output signal from the microphone corresponding to silence or background noise.

- Many such systems therefore employ speech detection circuits which continuously monitor the signal from the microphone and only activate the main speech processing system when speech is identified in the incoming signal.

- Detecting the presence of speech within an input speech signal is also necessary for adaptive speech processing systems which dynamically adjust the weights of a filter either during speech or during silence portions.

- For example, in some adaptive noise-filtering systems, the filter coefficients of the noise filter are only adapted when both speech and noise are present.

- Conversely, in adaptive beamforming systems, the beam is only adapted when the signal of interest is not present within the input signal (i.e. during silence periods). In these systems, it is therefore important to know when the desired speech to be processed is present within the input signal.

- One aim of the present invention is to provide an alternative speech detection system for detecting speech within an input signal.

- The present invention provides an apparatus for detecting the presence of speech within an input audio signal, comprising: a memory for storing a probability density function for parameters of a predetermined speech model which is assumed to have generated a set of received audio signal values; means for applying the received set of audio signal values to the stored probability density function; means for processing the probability density function with those values applied to obtain values of the parameters that are representative of the input audio signal; and means for detecting the presence of speech using the obtained parameter values.

- FIG. 1 is a schematic view of a computer which may be programmed to operate in accordance with an embodiment of the present invention;

- FIG. 2 is a block diagram illustrating the principal components of a speech recognition system which includes a speech detection system embodying the present invention;

- FIG. 3 is a block diagram representing a model employed by a statistical analysis unit which forms part of the speech recognition system shown in FIG. 2;

- FIG. 4 is a flow chart illustrating the processing steps performed by a model order selection unit forming part of the statistical analysis unit shown in FIG. 2;

- FIG. 5 is a flow chart illustrating the main processing steps employed by a Simulation Smoother which forms part of the statistical analysis unit shown in FIG. 2;

- FIG. 6 is a block diagram illustrating the main processing components of the statistical analysis unit shown in FIG. 2;

- FIG. 7 is a memory map illustrating the data that is stored in a memory which forms part of the statistical analysis unit shown in FIG. 2;

- FIG. 8 is a flow chart illustrating the main processing steps performed by the statistical analysis unit shown in FIG. 6;

- FIG. 9a is a histogram for the model order of the auto regressive (AR) filter model which forms part of the model shown in FIG. 3;

- FIG. 9b is a histogram for the variance of the process noise modelled by the model shown in FIG. 3; and

- FIG. 9c is a histogram for the third coefficient of the AR filter model.

- Embodiments of the present invention can be implemented on computer hardware, but the embodiment to be described is implemented in software which is run in conjunction with processing hardware such as a personal computer, workstation, photocopier, facsimile machine or the like.

- FIG. 1 shows a personal computer (PC) 1 which may be programmed to operate an embodiment of the present invention.

- A keyboard 3, a pointing device 5, a microphone 7 and a telephone line 9 are connected to the PC 1 via an interface 11.

- The keyboard 3 and pointing device 5 allow the system to be controlled by a user.

- The microphone 7 converts the acoustic speech signal of the user into an equivalent electrical signal and supplies this to the PC 1 for processing.

- An internal modem and speech receiving circuit may be connected to the telephone line 9 so that the PC 1 can communicate with, for example, a remote computer or a remote user.

- The program instructions which make the PC 1 operate in accordance with the present invention may be supplied for use with an existing PC 1 on, for example, a storage device such as a magnetic disc 13, or by downloading the software from the Internet (not shown) via the internal modem and telephone line 9.

- Sequential blocks (or frames) of speech samples are then passed from the buffer 19 to a statistical analysis unit 21 which performs a statistical analysis of each frame of speech samples in sequence to determine, amongst other things, a set of auto regressive (AR) coefficients representative of the speech within the frame.

- The AR coefficients output by the statistical analysis unit 21 are then input to a speech recognition unit 25, which compares the AR coefficients for successive frames of speech with a set of stored speech models 27 (which may be template based or Hidden Markov Model based) to generate a recognition result.

- The speech recognition unit 25 only performs this speech recognition processing when it is enabled to do so by a speech detection unit 61, which detects when speech is present within the input signal. In this way, the speech recognition unit 25 only processes the AR coefficients when there is speech within the signal to be recognised.

- The speech detection unit 61 also receives the AR coefficients output by the statistical analysis unit 21, together with the AR filter model order (which, as described below, is also generated by the statistical analysis unit 21), and determines from these when speech is present within the signal received from the microphone 7. This is possible because the AR filter model order and the AR coefficient values are larger during speech than when no speech is present. Therefore, by comparing the AR filter model order and/or the AR coefficient values with appropriate threshold values, the speech detection unit 61 can determine whether or not speech is present within the input signal.
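The threshold test performed by the speech detection unit 61 can be sketched as follows. This is a minimal illustration, not the patented detector: the function name `is_speech`, the threshold values, the use of the Euclidean norm as the "size" of the AR coefficient vector, and the reading of "and/or" as a logical OR are all hypothetical choices.

```python
import numpy as np

def is_speech(model_order: int, ar_coeffs, order_threshold: int = 4,
              coeff_threshold: float = 0.5) -> bool:
    """Flag a frame as speech if its AR model order or coefficient size is large.

    Both thresholds are hypothetical placeholders; the text only says that
    'appropriate threshold values' are used.
    """
    # Summarise the coefficient vector by its Euclidean norm (an assumption).
    coeff_size = float(np.linalg.norm(np.asarray(ar_coeffs, dtype=float)))
    # Either cue on its own is treated as sufficient evidence of speech.
    return model_order > order_threshold or coeff_size > coeff_threshold

# Low order, small coefficients: looks like silence or background noise.
print(is_speech(2, [0.1, -0.05]))             # False
# Higher order, larger coefficients: looks like speech.
print(is_speech(10, [1.2, -0.6, 0.3, -0.1]))  # True
```

In practice the thresholds would have to be tuned on data; the sketch only shows where the model order and coefficients enter the decision.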

- The statistical analysis unit 21 analyses the speech within successive frames of the input speech signal.

- In some embodiments, the frames may overlap.

- In this embodiment, however, the frames of speech are non-overlapping and have a duration of 20 ms which, with the 16 kHz sampling rate of the analogue to digital converter 17, results in a frame size of 320 samples.
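The frame-size arithmetic (16,000 samples/s × 0.020 s = 320 samples) and the non-overlapping segmentation can be sketched as below. The function name `frame_signal` is hypothetical, and dropping a trailing partial frame is an assumption; the text does not say how leftover samples are handled.

```python
import numpy as np

SAMPLE_RATE_HZ = 16_000   # sampling rate of the analogue to digital converter 17
FRAME_MS = 20             # frame duration used in this embodiment

def frame_signal(samples, sample_rate=SAMPLE_RATE_HZ, frame_ms=FRAME_MS):
    """Split a 1-D signal into consecutive, non-overlapping frames.

    Trailing samples that do not fill a whole frame are dropped (an assumption).
    """
    frame_len = sample_rate * frame_ms // 1000          # 16000 * 20 / 1000 = 320
    n_frames = len(samples) // frame_len
    return np.asarray(samples[: n_frames * frame_len]).reshape(n_frames, frame_len)

frames = frame_signal(np.zeros(16_000))   # one second of audio
print(frames.shape)                       # (50, 320)
```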

- The analysis unit 21 assumes that there is an underlying process which generated each sample within the frame.

- The model of this process used in this embodiment is shown in FIG. 3.

- AR: auto regressive

- The statistical analysis unit 21 assumes that the current raw speech sample (s(n)) can be determined from a linear weighted combination of the most recent previous raw speech samples, i.e.:

- $$s(n) = a_1 s(n-1) + a_2 s(n-2) + \dots + a_k s(n-k) + e(n) \qquad (1)$$

- where $a_1, a_2, \dots, a_k$ are the AR filter coefficients representing the amount of correlation between the speech samples, k is the AR filter model order, and e(n) represents random process noise which is involved in the generation of the raw speech samples.
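Equation (1) can be run forwards to synthesise raw speech samples from a given set of AR coefficients. The sketch below is illustrative only: it assumes zero-mean Gaussian process noise (as the analysis unit assumes later in the text) and takes samples before n = 0 to be zero; the function name `simulate_ar` is hypothetical.

```python
import numpy as np

def simulate_ar(a, n_samples, noise_var=1.0, seed=0):
    """Generate s(n) = a_1 s(n-1) + ... + a_k s(n-k) + e(n).

    e(n) is zero-mean Gaussian with variance noise_var; samples before n = 0
    are treated as zero. Returns the raw samples s and the noise e.
    """
    rng = np.random.default_rng(seed)
    a = np.asarray(a, dtype=float)
    k = len(a)
    e = rng.normal(0.0, np.sqrt(noise_var), n_samples)
    s = np.zeros(n_samples)
    for n in range(n_samples):
        # Weighted sum of the k most recent samples that actually exist.
        past = sum(a[i - 1] * s[n - i] for i in range(1, k + 1) if n - i >= 0)
        s[n] = past + e[n]
    return s, e

s, e = simulate_ar([0.5, -0.2], 100)
```

Subtracting the weighted past samples from s(n) recovers e(n) exactly, which is the relationship used in equation (5) below.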

- These AR filter coefficients are the same coefficients that linear prediction (LP) analysis estimates, albeit using a different processing technique.

- The raw speech samples s(n) generated by the speech source 31 are input to a channel 33, which models the acoustic environment between the speech source 31 and the output of the analogue to digital converter 17.

- Ideally, the channel 33 would simply attenuate the speech as it travels from the source 31 to the microphone 7.

- In practice, however, the signal (y(n)) output by the analogue to digital converter 17 depends not only on the current raw speech sample (s(n)) but also on previous raw speech samples. Therefore, in this embodiment, the statistical analysis unit 21 models the channel 33 by a moving average (MA) filter, i.e.:

  $$y(n) = h_0 s(n) + h_1 s(n-1) + h_2 s(n-2) + \dots + h_r s(n-r) + \varepsilon(n) \qquad (2)$$

- MA: moving average

- where $h_0, h_1, h_2, \dots, h_r$ are the channel filter coefficients representing the amount of distortion within the channel 33,

- r is the channel filter model order, and

- $\varepsilon(n)$ represents a random additive measurement noise component.
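The MA channel model is a causal FIR filter applied to the raw samples, plus additive measurement noise. A minimal sketch, assuming raw samples before the start of the signal are zero and the measurement noise is zero-mean Gaussian; `apply_channel` is a hypothetical name.

```python
import numpy as np

def apply_channel(s, h, meas_noise_var=0.0, seed=0):
    """y(n) = h_0 s(n) + h_1 s(n-1) + ... + h_r s(n-r) + eps(n).

    Raw samples before the start of s are treated as zero.
    """
    s = np.asarray(s, dtype=float)
    h = np.asarray(h, dtype=float)
    # Full convolution truncated to len(s) gives the causal MA filter output.
    y = np.convolve(s, h)[: len(s)]
    if meas_noise_var > 0:
        rng = np.random.default_rng(seed)
        y = y + rng.normal(0.0, np.sqrt(meas_noise_var), len(s))
    return y

print(apply_channel([1.0, 2.0, 3.0], [1.0, 0.5]).tolist())   # [1.0, 2.5, 4.0]
```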

- $$
  \begin{aligned}
  s(n) &= a_1 s(n-1) + a_2 s(n-2) + \dots + a_k s(n-k) + e(n)\\
  s(n-1) &= a_1 s(n-2) + a_2 s(n-3) + \dots + a_k s(n-k-1) + e(n-1)\\
  &\;\;\vdots\\
  s(n-N+1) &= a_1 s(n-N) + a_2 s(n-N-1) + \dots + a_k s(n-k-N+1) + e(n-N+1)
  \end{aligned}
  \qquad (3)
  $$

- $$
  \begin{aligned}
  e(n) &= s(n) - a_1 s(n-1) - a_2 s(n-2) - \dots - a_k s(n-k)\\
  e(n-1) &= s(n-1) - a_1 s(n-2) - a_2 s(n-3) - \dots - a_k s(n-k-1)\\
  &\;\;\vdots\\
  e(n-N+1) &= s(n-N+1) - a_1 s(n-N) - a_2 s(n-N-1) - \dots - a_k s(n-k-N+1)
  \end{aligned}
  \qquad (5)
  $$

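- $$
  \ddot{A} = \begin{bmatrix}
  1 & -a_1 & -a_2 & -a_3 & \cdots & -a_k & 0 & 0 & 0 & \cdots & 0\\
  0 & 1 & -a_1 & -a_2 & \cdots & -a_{k-1} & -a_k & 0 & 0 & \cdots & 0\\
  0 & 0 & 1 & -a_1 & \cdots & -a_{k-2} & -a_{k-1} & -a_k & 0 & \cdots & 0\\
  \vdots & & & \ddots & & & & & & \ddots & \vdots\\
  0 & \cdots & & & & & & & & 0 & 1
  \end{bmatrix}_{N\times N}
  $$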
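The banded, upper-triangular structure of this matrix can be checked numerically against equation (5). A minimal sketch, assuming the frame vector is ordered newest sample first, [s(n), s(n−1), …, s(n−N+1)], and that samples older than the frame are zero (the first-frame case); under those assumptions the matrix product reproduces equation (5) row by row. The function names are hypothetical.

```python
import numpy as np

def residual_matrix(a, N):
    """N x N upper-triangular matrix mapping the frame to its process noise.

    Row i gives e(n-i) = s(n-i) - a_1 s(n-i-1) - ... - a_k s(n-i-k),
    with any sample older than s(n-N+1) taken to be zero.
    """
    A = np.eye(N)
    for j, a_j in enumerate(a, start=1):
        for i in range(N - j):
            A[i, i + j] = -a_j
    return A

def residuals_direct(a, s_newest_first):
    """Equation (5) evaluated term by term under the same zero-history assumption."""
    s = np.asarray(s_newest_first, dtype=float)
    N = len(s)
    return np.array([s[i] - sum(a_j * s[i + j]
                                for j, a_j in enumerate(a, start=1) if i + j < N)
                     for i in range(N)])

a = [0.5, -0.2]
s = np.array([1.0, 0.3, -0.4, 0.8, 0.1])   # s(n), s(n-1), ..., s(n-4)
print(np.allclose(residual_matrix(a, len(s)) @ s, residuals_direct(a, s)))   # True
```

Because the diagonal is all ones, the matrix is always invertible, so the raw samples can be recovered from the process noise and vice versa.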

- The analysis unit 21 aims to determine, amongst other things, values for the AR filter coefficients (a) which best represent the observed signal samples (y(n)) in the current frame. It does this by determining the AR filter coefficients (a) that maximise the joint probability density function of the speech model, channel model, raw speech samples and noise statistics given the observed signal samples output from the analogue to digital converter 17, i.e. by determining:

  $$\max_{\underline{a}} \; p\left(\underline{a}, k, \underline{h}, r, \sigma_e^2, \sigma_\varepsilon^2, \underline{s}(n) \mid \underline{y}(n)\right) \qquad (9)$$

- where $\sigma_e^2$ and $\sigma_\varepsilon^2$ represent the process and measurement noise statistics, respectively.

- This function defines the probability that a particular speech model, channel model, set of raw speech samples and noise statistics generated the observed frame of speech samples (y(n)) from the analogue to digital converter 17. To maximise it, the statistical analysis unit 21 must first determine what this function looks like.

- The term $p(\underline{s}(n) \mid \underline{a}, k, \sigma_e^2)$ represents the joint probability density function for generating the vector of raw speech samples (s(n)) during a frame, given the AR filter coefficients (a), the AR filter model order (k) and the process noise statistics ($\sigma_e^2$). From equation (6) above, this joint probability density function for the raw speech samples can be determined from the joint probability density function for the process noise.

- The statistical analysis unit 21 assumes that the process noise associated with the speech source 31 is Gaussian with zero mean and some unknown variance $\sigma_e^2$.

- The statistical analysis unit 21 also assumes that the process noise at one time point is independent of the process noise at any other time point.
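Under these two assumptions, the joint density of the N process-noise values in a frame factorises into N univariate Gaussians:

$$p(\underline{e}(n) \mid \sigma_e^2) = (2\pi\sigma_e^2)^{-N/2} \exp\!\left(-\frac{\underline{e}(n)^T \underline{e}(n)}{2\sigma_e^2}\right)$$

The sketch below evaluates the log of this density (working in logs avoids underflow for a 320-sample frame). It is a sketch of the standard result, not a transcription of the patent's own equation; the function name is hypothetical.

```python
import numpy as np

def process_noise_log_density(e, sigma_e2):
    """log p(e | sigma_e^2) for N independent zero-mean Gaussian samples.

    Independence turns the joint density into a product of N identical-form
    Gaussians, i.e. a sum of N terms in the log domain.
    """
    e = np.asarray(e, dtype=float)
    N = e.size
    return -0.5 * N * np.log(2.0 * np.pi * sigma_e2) - (e @ e) / (2.0 * sigma_e2)

print(process_noise_log_density(np.zeros(4), 1.0))   # equals -2 * log(2 * pi)
```

If the process noise is related to the raw samples through a unit-diagonal triangular matrix, as in the first-frame case, the determinant of that transformation is one, so this expression evaluated at the residuals also gives the log-density of the raw samples.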

- The term $p(\underline{y}(n) \mid \underline{s}(n), \underline{h}, r, \sigma_\varepsilon^2)$ represents the joint probability density function for generating the vector of speech samples (y(n)) output from the analogue to digital converter 17, given the vector of raw speech samples (s(n)), the channel filter coefficients (h), the channel filter model order (r) and the measurement noise statistics ($\sigma_\varepsilon^2$). From equation (8), this joint probability density function can be determined from the joint probability density function for the measurement noise.

- The statistical analysis unit 21 assumes that the measurement noise is Gaussian with zero mean and some unknown variance $\sigma_\varepsilon^2$. It also assumes that the measurement noise at one time point is independent of the measurement noise at any other time point. Therefore, the joint probability density function for the measurement noise in a frame of the input speech has the same form as that for the process noise defined in equation (12).

- This term defines the prior probability density function for the AR filter coefficients (a), and it allows the statistical analysis unit 21 to introduce knowledge about what values it expects these coefficients to take.

- Since this prior is characterised by a mean vector ($\underline{\mu}_a$) and a variance ($\sigma_a^2$) whose values are themselves unknown, the prior density functions ($p(\sigma_a^2)$ and $p(\underline{\mu}_a)$) for these variables must be added to the numerator of equation (10) above.

- For the first frame of speech being processed, the mean vector ($\underline{\mu}_a$) can be set to zero; for the second and subsequent frames, it can be set to the mean vector obtained during the processing of the previous frame.

- Therefore, $p(\underline{\mu}_a)$ is just a Dirac delta function located at the current value of $\underline{\mu}_a$ and can be ignored.

- For $p(\sigma_a^2)$, the statistical analysis unit 21 could set this equal to some constant to imply that all variances are equally probable. However, this term can instead be used to introduce knowledge about what the variance of the AR filter coefficients is expected to be.

- At the beginning of the speech being processed, the statistical analysis unit 21 will not have much knowledge about the variance of the AR filter coefficients. Therefore, initially, it sets the variance $\sigma_a^2$ and the $\alpha$ and $\beta$ parameters of the Inverse Gamma function so that this probability density function is fairly flat and therefore non-informative. After the first frame of speech has been processed, however, these parameters can be set more accurately for the next frame by using the parameter values calculated during the processing of the previous frame.

- This term represents the prior probability density function for the channel model coefficients (h), and it allows the statistical analysis unit 21 to introduce knowledge about what values it expects these coefficients to take.

- As with the AR filter coefficients, the prior density functions ($p(\sigma_h^2)$ and $p(\underline{\mu}_h)$) for the variance and mean vector of the channel coefficients must be added to the numerator of equation (10).

- The mean vector $\underline{\mu}_h$ can initially be set to zero and, after the first frame of speech has been processed and for all subsequent frames, set equal to the mean vector obtained during the processing of the previous frame. Therefore, $p(\underline{\mu}_h)$ is also just a Dirac delta function located at the current value of $\underline{\mu}_h$ and can be ignored.

- For the process and measurement noise variances ($\sigma_e^2$ and $\sigma_\varepsilon^2$), the statistical analysis unit 21 models the prior density functions by Inverse Gamma functions having parameters $\alpha_e, \beta_e$ and $\alpha_\varepsilon, \beta_\varepsilon$, respectively. Again, these variances and the Inverse Gamma function parameters can be set initially so that they are non-informative and do not appreciably affect the subsequent calculations for the initial frame.

- To determine the form of the joint probability density function of equation (19), the statistical analysis unit 21 “draws samples” from it.

- The joint probability density function to be sampled is a complex multivariate function.

- Therefore, a Gibbs sampler is used, which breaks the problem down into one of drawing samples from probability density functions of lower dimensionality.

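- The Gibbs sampler proceeds by drawing random variates from conditional densities as follows (first iteration):

  $$
  \begin{aligned}
  &p\big(\underline{a}, k \mid \underline{h}^0, r^0, (\sigma_e^2)^0, (\sigma_\varepsilon^2)^0, (\sigma_a^2)^0, (\sigma_h^2)^0, \underline{s}(n)^0, \underline{y}(n)\big) \rightarrow \underline{a}^1, k^1\\
  &p\big(\underline{h}, r \mid \underline{a}^1, k^1, (\sigma_e^2)^0, (\sigma_\varepsilon^2)^0, (\sigma_a^2)^0, (\sigma_h^2)^0, \underline{s}(n)^0, \underline{y}(n)\big) \rightarrow \underline{h}^1, r^1\\
  &p\big(\sigma_e^2 \mid \underline{a}^1, \ldots
  \end{aligned}
  $$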

- The conditional densities are obtained by inserting the current values for the given (or known) variables into the terms of the density function of equation (19).

- A sample can then be drawn from this standard Gaussian distribution to give $\underline{a}^g$ (where g denotes the g-th iteration of the Gibbs sampler), with the model order ($k^g$) being determined by a model order selection routine, which is described later.

- Drawing a sample from this Gaussian distribution may be done by using a random number generator which generates a vector of uniformly distributed random values, and then applying a transformation of random variables, using the covariance matrix and the mean value given in equations (22) and (23), to generate the sample.

- A random number generator is used which generates random numbers from a Gaussian distribution having zero mean and a variance of one.
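One standard way to turn zero-mean, unit-variance Gaussian variates into a draw with a given mean and covariance is the Cholesky transformation: if z ~ N(0, I) and Σ = L Lᵀ, then μ + L z ~ N(μ, Σ). The sketch below assumes this choice of transformation, which the text does not specify. The mean and covariance would come from equations (22) and (23), which are not reproduced here, so they are simply passed in; `sample_gaussian` is a hypothetical name.

```python
import numpy as np

def sample_gaussian(mean, cov, rng):
    """Draw one sample from N(mean, cov) by transforming standard normal variates."""
    L = np.linalg.cholesky(np.asarray(cov, dtype=float))   # cov = L @ L.T
    z = rng.standard_normal(len(mean))                      # zero mean, unit variance
    return np.asarray(mean, dtype=float) + L @ z

rng = np.random.default_rng(0)
mu = np.array([0.5, -0.2])
C = np.array([[1.0, 0.6], [0.6, 2.0]])
draws = np.array([sample_gaussian(mu, C, rng) for _ in range(20_000)])
```

With many draws, the sample mean and sample covariance approach `mu` and `C`, which is a quick sanity check on the transformation.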

- A sample is then drawn from this Inverse Gamma distribution by first generating a random number from a uniform distribution and then performing a transformation of random variables using the alpha and beta parameters given in equation (27), to give $(\sigma_e^2)^g$.
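A convenient way to sample an Inverse Gamma density is through its relationship to the Gamma density: if Y ~ Gamma(α, 1), then β / Y ~ InvGamma(α, β). The sketch below uses NumPy's Gamma generator in place of the uniform-variate transformation described in the text; the α and β values would come from equation (27), which is not reproduced here. `sample_inverse_gamma` is a hypothetical name.

```python
import numpy as np

def sample_inverse_gamma(alpha, beta, rng, size=None):
    """Draw from InvGamma(alpha, beta) via beta / Gamma(alpha, 1).

    This swaps in NumPy's Gamma generator for the uniform-variate
    transformation described in the text.
    """
    return beta / rng.gamma(alpha, 1.0, size)

rng = np.random.default_rng(0)
draws = sample_inverse_gamma(5.0, 8.0, rng, size=200_000)
print(draws.mean())   # close to beta / (alpha - 1) = 2.0
```

Very small α and β (for example 1e-3) give a very flat density, which is one common way to obtain the non-informative initial priors described earlier.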

- The Gibbs sampler requires an initial transient period, known as burn-in, to converge to equilibrium.

- After burn-in, the sample $(\underline{a}^L, k^L, \underline{h}^L, r^L, (\sigma_e^2)^L, (\sigma_\varepsilon^2)^L, (\sigma_a^2)^L, (\sigma_h^2)^L, \underline{s}(n)^L)$ is considered to be a sample from the joint probability density function defined in equation (19).

- In this embodiment, the Gibbs sampler performs approximately one hundred and fifty (150) iterations on each frame of input speech, discards the samples from the first fifty iterations and uses the rest to give a picture (a set of histograms) of what the joint probability density function defined in equation (19) looks like. From these histograms, the set of AR coefficients (a) which best represents the observed speech samples (y(n)) from the analogue to digital converter 17 is determined. The histograms are also used to determine appropriate values for the variances and channel model coefficients (h), which can be used as the initial values for the Gibbs sampler when it processes the next frame of speech.
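To make the burn-in and histogram steps concrete, here is a deliberately small Gibbs sampler. It is a sketch under strong simplifying assumptions, not the sampler for equation (19): the channel is omitted (the observed samples are treated as the raw samples), the model order k is fixed, the AR coefficients get a zero-mean Gaussian prior with fixed variance `sigma_a2`, and σe² gets an Inverse Gamma prior, so that both conditionals are standard distributions. The names `gibbs_ar` and `lag_matrix` and all default values are hypothetical. It does mirror the schedule described above: 150 iterations, with the first 50 discarded as burn-in.

```python
import numpy as np

def lag_matrix(s, k):
    """Rows [s(t-1), ..., s(t-k)] for t = k, ..., len(s)-1 (the AR regressors)."""
    n = len(s)
    return np.column_stack([s[k - i:n - i] for i in range(1, k + 1)])

def gibbs_ar(s, k, n_iters=150, burn_in=50, sigma_a2=10.0,
             alpha_e=1e-3, beta_e=1e-3, seed=0):
    """Toy two-block Gibbs sampler for AR coefficients and process noise variance."""
    rng = np.random.default_rng(seed)
    s = np.asarray(s, dtype=float)
    S = lag_matrix(s, k)
    target = s[k:]
    N = len(target)
    sigma_e2 = float(np.var(target))          # crude starting value
    a_draws, var_draws = [], []
    for g in range(n_iters):
        # a | sigma_e^2, s is Gaussian (conjugate to the Gaussian prior).
        cov = np.linalg.inv(S.T @ S / sigma_e2 + np.eye(k) / sigma_a2)
        mean = cov @ (S.T @ target) / sigma_e2
        a = rng.multivariate_normal(mean, cov)
        # sigma_e^2 | a, s is InvGamma(alpha_e + N/2, beta_e + e'e/2).
        e = target - S @ a
        sigma_e2 = (beta_e + 0.5 * (e @ e)) / rng.gamma(alpha_e + 0.5 * N)
        if g >= burn_in:                      # keep only post-burn-in draws
            a_draws.append(a)
            var_draws.append(sigma_e2)
    return np.array(a_draws), np.array(var_draws)
```

The returned arrays hold the post-burn-in draws; histogramming each column (for example with `numpy.histogram`) gives the kind of per-parameter picture shown in FIGS. 9b and 9c.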

- The model order (k) of the AR filter and the model order (r) of the channel filter are updated using a model order selection routine.

- In this embodiment, this is performed using a technique derived from “Reversible jump Markov chain Monte Carlo computation”, which is described in the paper entitled “Reversible jump Markov chain Monte Carlo computation and Bayesian model determination” by Peter Green, Biometrika, vol. 82, pp. 711 to 732, 1995.

- FIG. 4 is a flow chart which illustrates the processing steps performed during this model order selection routine for the AR filter model order (k).

- First, a new model order ($k_2$) is proposed.

- To do this, a sample is drawn from a discretised Laplacian density function centred on the current model order ($k_1$), with the variance of this Laplacian density function being chosen a priori in accordance with the degree of sampling of the model order space that is required.

- The ratio term is the ratio of the conditional probability given in equation (21), evaluated for the current AR filter coefficients (a) drawn by the Gibbs sampler, for the current model order ($k_1$) and for the proposed new model order ($k_2$). If $k_2 > k_1$, the matrix S must first be resized and a new sample must then be drawn from the Gaussian distribution having the mean vector and covariance matrix defined by equations (22) and (23) (determined for the resized matrix S), to provide the AR filter coefficients ($\underline{a}_{1:k_2}$) for the new model order ($k_2$). If $k_2 < k_1$, all that is required is to delete the last ($k_1 - k_2$) samples of the a vector.

- If the ratio in equation (31) is greater than one, this implies that the proposed model order ($k_2$) is better than the current model order, whereas if it is less than one, the current model order is better than the proposed model order.

- The model order variable (MO) is compared, in step s5, with a random number lying between zero and one. If the model order variable (MO) is greater than this random number, the processing proceeds to step s7, where the model order is set to the proposed model order ($k_2$) and a count associated with the value of $k_2$ is incremented.

- Otherwise, the processing proceeds to step s9, where the current model order is maintained and a count associated with the value of the current model order ($k_1$) is incremented. The processing then ends.
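The propose, compare and count steps can be sketched as follows. This is a simplified Metropolis-style step over the model order only: it takes a caller-supplied log-posterior `log_post(k)` (hypothetical) in place of the ratio of equation (21), and it omits the coefficient resizing and the dimension-matching terms of a full reversible-jump move. The Laplacian `scale` (variance 2·scale²), the upper bound `k_max` and the function names are assumptions.

```python
import math
from collections import Counter
import numpy as np

def propose_order(k1, scale, rng):
    """Discretised Laplacian proposal centred on the current model order k1."""
    return k1 + int(round(rng.laplace(0.0, scale)))

def model_order_step(k1, log_post, counts, rng, scale=1.0, k_max=30):
    """One accept/reject step; log_post(k) is a hypothetical log-posterior."""
    k2 = propose_order(k1, scale, rng)
    if 1 <= k2 <= k_max:
        # MO = min(1, p(k2) / p(k1)); the min only guards exp() against overflow.
        mo = math.exp(min(0.0, log_post(k2) - log_post(k1)))
    else:
        mo = 0.0                         # out-of-range orders are always rejected
    if mo > rng.uniform():               # step s5: compare MO with a random number
        counts[k2] += 1                  # step s7: accept and count k2
        return k2
    counts[k1] += 1                      # step s9: keep and count k1
    return k1

rng = np.random.default_rng(0)
counts = Counter()
k = 1
for _ in range(3000):
    k = model_order_step(k, lambda m: -0.5 * (m - 5) ** 2, counts, rng)
print(counts.most_common(1)[0][0])   # most frequently visited order
```

Because the Laplacian proposal is symmetric, the proposal densities cancel in the acceptance ratio, which is why only the posterior ratio appears.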

- This model order selection routine is carried out for both the model order of the AR filter model and for the model order of the channel filter model. This routine may be carried out at each Gibbs iteration. However, this is not essential. Therefore, in this embodiment, this model order updating routine is only carried out every third Gibbs iteration.

- The Simulation Smoother is run before the Gibbs sampler. It is also run again during the Gibbs iterations in order to update the estimates of the raw speech samples. In this embodiment, the Simulation Smoother is run every fourth Gibbs iteration.

- The raw speech vectors ($\hat{\underline{s}}(n)$) and the process noise vectors ($\hat{\underline{e}}(n)$) used by the Simulation Smoother do not need to have dimension N×1; they only need to be as large as the greater of the two model orders, k and r.

- Typically, the channel model order (r) will be larger than the AR filter model order (k).

- Hence, the vector of raw speech samples ($\hat{\underline{s}}(n)$) and the vector of process noise ($\hat{\underline{e}}(n)$) only need to be r×1, and the matrix $\tilde{A}$ only needs to be r×r.

- The Simulation Smoother involves two stages: a first stage in which a Kalman filter is run on the speech samples in the current frame, and a second stage in which a “smoothing” filter is run on the speech samples in the current frame using data obtained from the Kalman filter stage.

- FIG. 5 is a flow chart illustrating the processing steps performed by the Simulation Smoother.

- In step s21, the system initialises a time variable t to one.

- The processing then proceeds to step s23, where the following Kalman filter equations are computed for the current speech sample (y(t)) being processed:

- $$\hat{\underline{s}}(t+1) = \tilde{A}\,\hat{\underline{s}}(t) + \underline{k}_f(t)\, w(t)$$

- where the initial vector of raw speech samples ($\hat{\underline{s}}(1)$) includes raw speech samples obtained from the processing of the previous frame (or, if there are no previous frames, s(i) is set equal to zero for i < 1);

- P(1) is the variance of $\hat{\underline{s}}(1)$ (which can be obtained from the previous frame or can initially be set to $\sigma_e^2$);

- h is the current set of channel model coefficients, which can be obtained from the processing of the previous frame (or, if there are no previous frames, the elements of h can be set to their expected values, i.e. zero); and

- y(t) is the current speech sample of the current frame being processed, and I is the identity matrix.

- In step s25, the scalar values w(t) and d(t) are stored together with the r×r matrix L(t) (alternatively, the Kalman filter gain vector $\underline{k}_f(t)$ could be stored, from which L(t) can be generated).

- In step s27, the system determines whether or not all the speech samples in the current frame have been processed. If they have not, the processing proceeds to step s29, where the time variable t is incremented by one so that the next sample in the current frame is processed in the same way. Once all N samples in the current frame have been processed in this way and the corresponding values stored, the first stage of the Simulation Smoother is complete.
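The first (forward) stage can be sketched with a standard innovations-form Kalman filter. Only the state update survives in the text above, so the remaining equations here are the textbook ones, stated as assumptions: the state is [s(t), s(t−1), …, s(t−m+1)], the transition matrix is the AR companion matrix, the observation is y(t) = hᵀ state + ε(t), and the process noise drives only the newest sample (a single-entry noise covariance rather than σe² I). The function names are hypothetical.

```python
import numpy as np

def transition_matrix(a, m):
    """m x m companion matrix: first row holds a_1..a_k, the rest shifts history down."""
    A = np.zeros((m, m))
    A[0, :len(a)] = a
    A[1:, :-1] = np.eye(m - 1)
    return A

def kalman_forward(y, a, h, sigma_e2, sigma_eps2):
    """Forward pass storing w(t), d(t) and L(t) for each sample (cf. step s25)."""
    m = max(len(a), len(h))
    A = transition_matrix(a, m)
    hv = np.zeros(m); hv[:len(h)] = h
    Q = np.zeros((m, m)); Q[0, 0] = sigma_e2    # e(t) only drives the newest sample
    s_hat = np.zeros(m)                          # samples before the frame taken as zero
    P = sigma_e2 * np.eye(m)
    ws, ds, Ls = [], [], []
    for yt in y:
        w = yt - hv @ s_hat                      # innovation
        d = hv @ P @ hv + sigma_eps2             # innovation variance
        kf = A @ P @ hv / d                      # Kalman gain vector k_f(t)
        L = A - np.outer(kf, hv)
        s_hat = A @ s_hat + kf * w               # s_hat(t+1) = A s_hat(t) + k_f(t) w(t)
        P = A @ P @ L.T + Q
        ws.append(w); ds.append(d); Ls.append(L)
    return np.array(ws), np.array(ds), Ls
```

When the model matches the data, the standardised innovations w(t)/√d(t) behave like unit-variance white noise, which is a useful check on an implementation.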

- In step s31, the second stage of the Simulation Smoother starts, in which the smoothing filter processes the speech samples in the current frame in reverse sequential order.

- In step s31, the system runs the following set of smoothing filter equations on the current speech sample being processed, together with the stored Kalman filter variables computed for that sample:

- The processing then proceeds to step s33, where the estimate of the process noise ($\tilde{e}(t)$) and the estimate of the raw speech sample ($\hat{s}(t)$) for the current speech sample being processed are stored.

- In step s35, the system determines whether or not all the speech samples in the current frame have been processed.

- If they have not, then in step s37 the time variable t is decremented by one so that the previous sample in the current frame is processed in the same way.

- The matrix S and the matrix Y require raw speech samples s(n−N−1) to s(n−N−k+1) and s(n−N−1) to s(n−N−r+1), respectively, in addition to those in s(n).

- These additional raw speech samples can be obtained either from the processing of the previous frame of speech or, if there are no previous frames, they can be set to zero.

- Once the raw speech samples have been estimated, the Gibbs sampler can be run to draw samples from the probability density functions described above.

- FIG. 6 is a block diagram illustrating the principal components of the statistical analysis unit 21 of this embodiment. As shown, it comprises the above described Gibbs sampler 41, Simulation Smoother 43 (including the Kalman filter 43-1 and smoothing filter 43-2) and model order selector 45. It also comprises a memory 47 which receives the speech samples of the current frame to be processed, a data analysis unit 49 which processes the data generated by the Gibbs sampler 41 and the model order selector 45, and a controller 50 which controls the operation of the statistical analysis unit 21.

- The memory 47 includes a non-volatile memory area 47-1 and a working memory area 47-2.

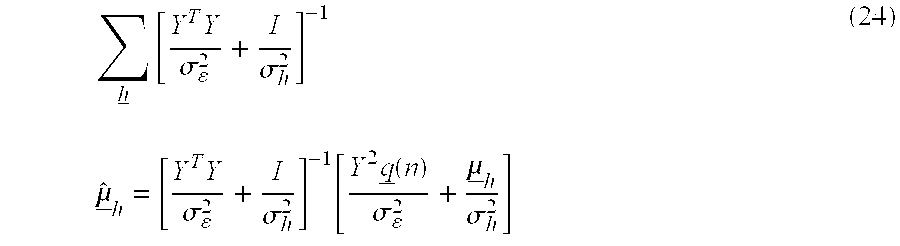

- The non-volatile memory 47-1 is used to store the joint probability density function given in equation (19) above, the equations for the variances and mean values, and the equations for the Inverse Gamma parameters given above in equations (22) to (24) and (27) to (30) for the above mentioned conditional probability density functions, for use by the Gibbs sampler 41.

- The non-volatile memory 47-1 also stores the Kalman filter equations given above in equation (33) and the smoothing filter equations given above in equation (34) for use by the Simulation Smoother 43.

- FIG. 7 is a schematic diagram illustrating the parameter values that are stored in the working memory area (RAM) 47-2.

- The RAM includes a store 51 for storing the speech samples $y_f(1)$ to $y_f(N)$ output by the analogue to digital converter 17 for the current frame (f) being processed. As mentioned above, these speech samples are used in both the Gibbs sampler 41 and the Simulation Smoother 43.

- The RAM 47-2 also includes a store 57 for storing the estimates of the raw speech samples ($\hat{s}_f(t)$) and the estimates of the process noise ($\tilde{e}_f(t)$) generated by the smoothing filter 43-2, as discussed above.

- The RAM 47-2 also includes a store 59 for storing the model order counts which are generated by the model order selector 45 when the model orders for the AR filter model and the channel model are updated.

- FIG. 8 is a flow diagram illustrating the control program used by the controller 50 , in this embodiment, to control the processing operations of the statistical analysis unit 21 .

- the controller 50 retrieves the next frame of speech samples to be processed from the buffer 19 and stores them in the memory store 51 .

- the processing then proceeds to step s 43 where initial estimates for the channel model, raw speech samples and the process noise and measurement noise statistics are set and stored in the store 53 . These initial estimates are either set to be the values obtained during the processing of the previous frame of speech or, where there are no previous frames of speech, are set to their expected values (which may be zero).

- step s 45 the Simulation Smoother 43 is activated so as to provide an estimate of the raw speech samples in the manner described above.

- the processing then proceeds to step s 47 where one iteration of the Gibbs sampler 41 is run in order to update the channel model, speech model and the process and measurement noise statistics using the raw speech samples obtained in step s 45 .

- These updated parameter values are then stored in the memory store 53 .

- the processing then proceeds to step s 49 where the controller 50 determines whether or not to update the model orders of the AR filter model and the channel model. As mentioned above, in this embodiment, these model orders are updated every third Gibbs iteration.

- step s 51 the model order selector 45 is used to update the model orders of the AR filter model and the channel model in the manner described above. If at step s 49 the controller 50 determines that the model orders are not to be updated, then the processing skips step s 51 and the processing proceeds to step s 53 .

- step s 53 the controller 50 determines whether or not to perform another Gibbs iteration. If another iteration is to be performed, then the processing proceeds to decision block s 55 where the controller 50 decides whether or not to update the estimates of the raw speech samples (s(t)). If the raw speech samples are not to be updated, then the processing returns to step s 47 where the next Gibbs iteration is run.

- In this embodiment, the Simulation Smoother 43 is run every fourth Gibbs iteration in order to update the raw speech samples. Therefore, if the controller 50 determines in step s55 that there have been four Gibbs iterations since the speech samples were last updated, then the processing returns to step s45, where the Simulation Smoother is run again to provide new estimates of the raw speech samples (s(t)). Once the controller 50 has determined that the required 150 Gibbs iterations have been performed, it causes the processing to proceed to step s57, where the data analysis unit 49 analyses the model order counts generated by the model order selector 45 to determine the model orders for the AR filter model and the channel model which best represent the current frame of speech being processed.

- In step s59, the data analysis unit 49 analyses the samples drawn from the conditional densities by the Gibbs sampler 41 to determine the AR filter coefficients (a), the channel model coefficients (h), the variances of these coefficients and the process and measurement noise variances which best represent the current frame of speech being processed.

- In step s61, the controller 50 determines whether or not there is any further speech to be processed. If there is, then the processing returns to step s41 and the above process is repeated for the next frame of speech. Once all the speech has been processed in this way, the processing ends.
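The per-frame control flow of steps s45 to s55 can be sketched as a simple loop. This is a minimal Python sketch under stated assumptions, not the embodiment's implementation: the callables `run_smoother`, `gibbs_iteration` and `update_model_orders` are hypothetical stand-ins for the Simulation Smoother 43, the Gibbs sampler 41 and the model order selector 45, and the function only records how often each stage runs under the schedule described above (150 Gibbs iterations, model orders updated every third iteration, raw speech samples refreshed every fourth).

```python
from typing import Callable, Dict


def run_frame_schedule(
    run_smoother: Callable[[], None],
    gibbs_iteration: Callable[[], None],
    update_model_orders: Callable[[], None],
    n_iterations: int = 150,
    order_every: int = 3,
    smoother_every: int = 4,
) -> Dict[str, int]:
    """Run the hypothetical stages in the order of steps s45 to s55 and count calls."""
    calls = {"smoother": 0, "gibbs": 0, "orders": 0}

    run_smoother()  # step s45: first estimate of the raw speech samples s(t)
    calls["smoother"] += 1

    for it in range(1, n_iterations + 1):
        gibbs_iteration()  # step s47: update the model and noise parameters
        calls["gibbs"] += 1

        if it % order_every == 0:  # steps s49/s51: model order update
            update_model_orders()
            calls["orders"] += 1

        another = it < n_iterations  # step s53: perform another Gibbs iteration?
        if another and it % smoother_every == 0:  # step s55: refresh s(t)
            run_smoother()
            calls["smoother"] += 1

    return calls


print(run_frame_schedule(lambda: None, lambda: None, lambda: None))
# {'smoother': 38, 'gibbs': 150, 'orders': 50}
```

With the default schedule the smoother runs once at step s45 and then 37 more times; no refresh is made after the final iteration because the loop proceeds to step s57 instead.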

- The data analysis unit 49 first determines, in step s57, the model orders for both the AR filter model and the channel model which best represent the current frame of speech being processed. It does this using the counts generated by the model order selector 45 when it was run in step s51. These counts are stored in the store 59 of the RAM 47-2. In this embodiment, in determining the best model orders, the data analysis unit 49 identifies the model order having the highest count.

- FIG. 9a is an exemplary histogram illustrating the distribution of counts generated for the model order (k) of the AR filter model. In this example, the highest count occurs at k=5, so the data analysis unit 49 would set the best model order of the AR filter model to five.

- The data analysis unit 49 performs a similar analysis of the counts generated for the model order (r) of the channel model to determine the best model order for the channel model.

- In step s59, the data analysis unit 49 analyses the samples generated by the Gibbs sampler 41, which are stored in the store 53 of the RAM 47-2, in order to determine the parameter values that are most representative of those samples. It does this by generating a histogram for each of the parameters, from which it determines the most representative parameter value. To generate the histogram, the data analysis unit 49 determines the maximum and minimum sample values drawn by the Gibbs sampler and then divides the range of parameter values between this minimum and maximum into a predetermined number of sub-ranges or bins. It then assigns each sample value to the appropriate bin and counts how many samples are allocated to each bin.

- FIG. 9b illustrates an example histogram generated for the variance (σe²) of the process noise, from which the data analysis unit 49 determines that the variance representative of the samples is 0.3149.

- For the AR filter coefficients (a) and the channel model coefficients (h), the data analysis unit 49 determines and analyses a separate histogram of the samples for each coefficient independently.

- FIG. 9c shows an exemplary histogram obtained for the third AR filter coefficient (a3), from which the data analysis unit 49 determines that the coefficient representative of the samples is −0.4977.
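The analyses of steps s57 and s59 can be sketched as follows. This is a hypothetical Python illustration, not the embodiment's code: `best_model_order` picks the order with the highest count, and `histogram` divides the range between the smallest and largest sample into equal bins. Two ways of reading a value off the histogram are shown: the centre of the fullest bin, and an average of the samples weighted by the count of the bin each sample falls in (the weighted form is the one the description attributes to the data analysis unit elsewhere). The number of bins and the sample values are assumptions for illustration.

```python
from typing import Dict, List, Sequence, Tuple


def best_model_order(order_counts: Dict[int, int]) -> int:
    """Step s57: the model order with the highest count."""
    return max(order_counts, key=lambda k: order_counts[k])


def bin_index(x: float, lo: float, width: float, n_bins: int) -> int:
    # The maximum sample sits on the upper edge, so clamp it into the last bin.
    return min(int((x - lo) / width), n_bins - 1)


def histogram(samples: Sequence[float], n_bins: int) -> Tuple[List[int], float, float]:
    """Divide [min, max] of the samples into n_bins equal bins and count samples per bin."""
    lo, hi = min(samples), max(samples)
    width = (hi - lo) / n_bins or 1.0  # avoid a zero width if all samples are equal
    counts = [0] * n_bins
    for x in samples:
        counts[bin_index(x, lo, width, n_bins)] += 1
    return counts, lo, width


def mode_estimate(samples: Sequence[float], n_bins: int) -> float:
    """Centre of the bin with the highest count."""
    counts, lo, width = histogram(samples, n_bins)
    i = counts.index(max(counts))
    return lo + (i + 0.5) * width


def weighted_estimate(samples: Sequence[float], n_bins: int) -> float:
    """Average of the samples, each weighted by the count of the bin it falls in."""
    counts, lo, width = histogram(samples, n_bins)
    weights = [counts[bin_index(x, lo, width, n_bins)] for x in samples]
    return sum(w * x for w, x in zip(weights, samples)) / sum(weights)


print(best_model_order({4: 20, 5: 61, 6: 19}))  # 5
```

For vector parameters such as (a) and (h), the same functions would simply be applied to the samples of each coefficient separately.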

- The data analysis unit 49 then outputs the AR coefficients (a) and the AR filter model order (k).

- The AR filter coefficients (a) are output to both the speech recognition unit 25 and the speech detection unit 61, whereas the AR filter model order (k) is output only to the speech detection unit 61.

- These parameter values (and the remaining parameter values determined by the data analysis unit 49 ) are also stored in the RAM 47 - 2 for use during the processing of the next frame of speech.

- The speech detection unit 61 compares the AR filter model order (k) and the AR filter coefficient values with appropriate threshold values, and determines that speech is present within the input signal when the AR filter model order and the AR filter coefficient values exceed these threshold values.

- When the speech detection unit 61 detects the presence of speech, it outputs an appropriate control signal to the speech recognition unit 25, which causes it to start processing the AR coefficients it receives from the statistical analysis unit 21. Similarly, when the speech detection unit 61 detects the end of speech, it outputs an appropriate control signal to the speech recognition unit 25, which causes it to stop processing the AR coefficients it receives from the statistical analysis unit 21.
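The threshold test and the start/stop control signals can be sketched per frame. This is a minimal Python sketch under stated assumptions: the threshold values are hypothetical, coefficients are compared by magnitude, and a frame counts as speech when either the model order or any coefficient exceeds its threshold. That OR rule is an assumption, chosen because the later discussion of fricative sounds relies on the model order alone being able to signal speech.

```python
from typing import List, Sequence, Tuple


def frame_has_speech(order: int, coeffs: Sequence[float],
                     order_threshold: int, coeff_threshold: float) -> bool:
    # Assumption: speech if the AR model order OR any |a_i| exceeds its threshold.
    return order > order_threshold or any(abs(a) > coeff_threshold for a in coeffs)


def control_signals(frames: Sequence[Tuple[int, Sequence[float]]],
                    order_threshold: int,
                    coeff_threshold: float) -> List[Tuple[int, str]]:
    """Emit (frame index, 'start'/'stop') whenever the speech decision changes."""
    events: List[Tuple[int, str]] = []
    in_speech = False
    for i, (k, a) in enumerate(frames):
        speech = frame_has_speech(k, a, order_threshold, coeff_threshold)
        if speech and not in_speech:
            events.append((i, "start"))  # enable the speech recognition unit
        elif in_speech and not speech:
            events.append((i, "stop"))  # disable the speech recognition unit
        in_speech = speech
    return events


# Each frame is (AR filter model order k, AR filter coefficients a).
frames = [(2, [0.1]), (8, [0.05]), (6, [0.9, -0.6]), (3, [0.1])]
print(control_signals(frames, order_threshold=4, coeff_threshold=0.5))
# [(1, 'start'), (3, 'stop')]
```

Frame 1 behaves like the fricative case: its coefficients are small, but its model order alone triggers the start signal.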

- As a result, the AR filter coefficients output by the statistical analysis unit 21 will more accurately represent the corresponding input speech. Further, since the underlying process model that is used separates the speech source from the channel, the AR filter coefficients that are determined will be more representative of the actual speech and will be less likely to include distortive effects of the channel. Moreover, since variance information is available for each of the parameters, this provides an indication of the confidence of each of the parameter estimates. This is in contrast to maximum likelihood and least squares approaches, such as linear prediction analysis, where only point estimates of the parameter values are determined.

- In the above embodiment, the statistical analysis unit was used as a pre-processor for a speech recognition system in order to generate AR coefficients representative of the input speech.

- The statistical analysis unit was also used to determine the AR filter model order, which was used together with the AR coefficients by a speech detection unit to detect the presence of speech within the input signal.

- As those skilled in the art will appreciate, the speech detection unit can detect the presence of speech using only the AR filter model order or only the AR coefficient values.

- However, both the model order and the AR coefficient values are preferably used, since this allows more accurate speech detection to be performed.

- For example, for speech sounds where there is only a weak correlation between adjacent speech samples (such as fricative sounds), if only the AR coefficient values are used, then such sounds may be missed, since all the AR filter coefficients may have small values below the corresponding threshold values. Nonetheless, for such fricative sounds the model order is likely to exceed its threshold value, in which case the speech detection unit can still reliably detect the speech.

- In the above embodiment, a speech detection system was described in use together with a speech recognition system.

- As those skilled in the art will appreciate, the speech detection system described above may be used in any speech processing system to control the initiation and termination of the speech processing operation.

- For example, it can be used in a speaker verification system or in a speech transmission system in order to control the verification process and the transmission process respectively.

- In the above embodiment, the statistical analysis unit was used effectively as a “preprocessor” for both the speech recognition unit and the speech detection unit.

- In an alternative embodiment, a separate preprocessor may be provided as the front end to the speech recognition unit.

- In this case, the statistical analysis unit would only be used to provide information to the speech detection unit.

- However, such separate parameterisation of the input speech for the speech recognition unit is not preferred because of the additional processing overhead involved.

- In the above embodiment, the speech recognition system used the AR filter coefficients output by the statistical analysis unit.

- In embodiments where the speech recognition unit does not use AR coefficients, an appropriate coefficient converter may be used to convert the AR coefficients into the coefficients required by the speech recognition unit.

- In the above embodiment, Gaussian and Inverse Gamma distributions were used to model the various prior probability density functions of equation (19).

- These distributions were chosen because they are conjugate to the Gaussian noise models: Gaussian priors for the coefficients and Inverse Gamma priors for the variances.

- As a result, each of the conditional probability density functions used in the Gibbs sampler is also either Gaussian or Inverse Gamma, which simplifies the task of drawing samples from the conditional probability densities.
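To illustrate why conjugacy simplifies the sampling, the sketch below draws a noise variance from its full conditional when the variance has an Inverse Gamma prior and the noise is zero-mean Gaussian. It uses the standard conjugate update IG(α + N/2, β + Σe²/2); it is a generic textbook result under these assumptions, not a reproduction of equations (20) to (30), which are not shown in this section. The prior parameters and residuals in the usage lines are arbitrary.

```python
import random
from typing import Sequence


def sample_noise_variance(residuals: Sequence[float], alpha: float, beta: float,
                          rng: random.Random) -> float:
    """Draw sigma^2 from its Inverse Gamma full conditional.

    Prior: sigma^2 ~ IG(alpha, beta). Likelihood: residuals i.i.d. N(0, sigma^2).
    Conjugacy gives sigma^2 | residuals ~ IG(alpha + N/2, beta + sum(e^2)/2).
    """
    shape = alpha + 0.5 * len(residuals)
    rate = beta + 0.5 * sum(e * e for e in residuals)
    # If X ~ Gamma(shape, scale=1/rate), then 1/X ~ IG(shape, rate).
    return 1.0 / rng.gammavariate(shape, 1.0 / rate)


rng = random.Random(0)
draws = [sample_noise_variance([1.0] * 100, 1.0, 1.0, rng) for _ in range(20000)]
print(sum(draws) / len(draws))  # close to 51 / 50 = 1.02, the IG(51, 51) mean
```

Because the full conditional stays in the Inverse Gamma family, each Gibbs update is a single library draw; a non-conjugate prior would instead need, for example, a rejection or Metropolis step.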

- As those skilled in the art will appreciate, the noise probability density functions could instead be modelled by Laplacian or Student-t distributions rather than Gaussian distributions.

- Similarly, the probability density functions for the variances may be modelled by a distribution other than the Inverse Gamma distribution. For example, they can be modelled by a Rayleigh distribution or some other distribution defined only for positive values.

- However, the use of probability density functions that are not conjugate will increase the complexity of drawing samples from the conditional densities in the Gibbs sampler.

- In the above embodiment, a Simulation Smoother was used to generate estimates of the raw speech samples.

- This Simulation Smoother included a Kalman filter stage and a smoothing filter stage in order to generate the estimates of the raw speech samples.

- In an alternative embodiment, the smoothing filter stage may be omitted, since the Kalman filter stage also generates estimates of the raw speech (see equation (33)).

- However, in the above embodiment, these raw speech samples were ignored, since the speech samples generated by the smoothing filter are considered to be more accurate and robust. This is because the Kalman filter essentially generates a point estimate of the speech samples from the joint probability density function p(s(n)

- In the above embodiment, a Simulation Smoother was used in order to generate estimates of the raw speech samples. It is possible to avoid having to estimate the raw speech samples by treating them as “nuisance parameters” and integrating them out of equation (19). However, this is not preferred, since the resulting integral will have a much more complex form than the Gaussian and Inverse Gamma mixture defined in equation (19). This in turn will result in more complex conditional probabilities corresponding to equations (20) to (30). In a similar way, the other nuisance parameters (such as the coefficient variances or any of the Inverse Gamma alpha and beta parameters) may be integrated out as well. However, again this is not preferred, since it increases the complexity of the density function to be sampled using the Gibbs sampler. The technique of integrating out nuisance parameters is well known in the field of statistical analysis and will not be described further here.

- In the above embodiment, the data analysis unit analysed the samples drawn by the Gibbs sampler by determining a histogram for each of the model parameters and then determining the value of the model parameter using a weighted average of the samples drawn by the Gibbs sampler, with the weighting dependent upon the number of samples in the corresponding bin.

- In an alternative embodiment, the value of the model parameter may be determined from the histogram as the value having the highest count.

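- Alternatively still, a predetermined curve (such as a bell curve) may be fitted to the histogram in order to determine the representative parameter value.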

- In the above embodiment, the statistical analysis unit modelled the underlying speech production process with a separate speech source model (AR filter) and a channel model. Whilst this is the preferred model structure, the underlying speech production process may be modelled without the channel model. In this case, there is no need to estimate the values of the raw speech samples using a Kalman filter or the like, although this can still be done. However, such a model of the underlying speech production process is not preferred, since the speech model will inevitably represent aspects of the channel as well as the speech. Further, although the statistical analysis unit described above ran a model order selection routine in order to allow the model orders of the AR filter model and the channel model to vary, this is not essential.

- In the above embodiment, the speech that was processed was received from a user via a microphone.

- As those skilled in the art will appreciate, the speech may instead be received from a telephone line or may have been stored on a recording medium.

- In this case, the channel model will compensate for this, so that the AR filter coefficients representative of the actual speech that has been spoken should not be significantly affected.

- In the above embodiment, the speech generation process was modelled as an auto-regressive (AR) process and the channel was modelled as a moving average (MA) process.


- As those skilled in the art will appreciate, other signal models may be used. However, these models are preferred because they have been found to represent suitably the speech source and the channel they are intended to model.

- In the above embodiment, a new model order was proposed by drawing a random variable from a predetermined Laplacian distribution function.

- As those skilled in the art will appreciate, other techniques may be used. For example, the new model order may be proposed deterministically (i.e. under predetermined rules), provided that the model order space is sufficiently sampled.
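The Laplacian proposal can be sketched as follows. This is a minimal Python sketch under stated assumptions: the proposal is centred on the current model order, the scale and the allowed order range are hypothetical, and the continuous Laplacian variate is rounded to an integer. Only the proposal is shown; the step that decides whether the proposed order is accepted is not part of this sketch.

```python
import random


def propose_model_order(current: int, scale: float, rng: random.Random,
                        min_order: int = 1, max_order: int = 30) -> int:
    """Propose a new model order from a Laplacian centred on the current order.

    The difference of two i.i.d. exponential variates with mean `scale` is
    Laplacian with location 0 and scale `scale`; the result is rounded to an
    integer and clamped to the hypothetical range [min_order, max_order].
    """
    step = rng.expovariate(1.0 / scale) - rng.expovariate(1.0 / scale)
    return min(max(current + round(step), min_order), max_order)


rng = random.Random(1)
proposals = [propose_model_order(5, 2.0, rng) for _ in range(5000)]
```

Small changes of order are proposed most often, while the heavier tails of the Laplacian still let the sampler occasionally jump to distant orders.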

Landscapes

- Engineering & Computer Science (AREA)

- Computational Linguistics (AREA)

- Signal Processing (AREA)

- Health & Medical Sciences (AREA)

- Audiology, Speech & Language Pathology (AREA)

- Human Computer Interaction (AREA)

- Physics & Mathematics (AREA)

- Acoustics & Sound (AREA)

- Multimedia (AREA)

- Complex Calculations (AREA)

- Telephonic Communication Services (AREA)

Abstract

A system is provided for detecting the presence of speech within an input audio signal. The system includes a memory for storing a predetermined function which gives, for a given set of audio signal values, a probability density for parameters of a predetermined speech model which is assumed to have generated the set of audio signal values, the probability density defining, for a given set of model parameter values, the probability that the predetermined speech model has those parameter values given that the speech model is assumed to have generated the set of audio signal values. The system applies a current set of received signal values to the stored probability density function and then draws samples from it using a Gibbs sampler. The system then analyses the samples to determine a set of parameter values representative of the audio signal. The system then uses these parameter values to determine whether or not speech is present within the audio signals.

Description

- The present invention relates to an apparatus for and method of speech processing. The invention has particular, although not exclusive, relevance to the detection of speech within an input speech signal.

- In some applications, such as speech recognition, speaker verification and voice transmission systems, the microphone used to convert the user's speech into a corresponding electrical signal is continuously switched on. Therefore, even when the user is not speaking, there will constantly be an output signal from the microphone corresponding to silence or background noise. In order (i) to prevent unnecessary processing of this background noise signal; (ii) to prevent misrecognitions caused by the noise; and (iii) to increase overall performance, such systems employ speech detection circuits which continuously monitor the signal from the microphone and which only activate the main speech processing system when speech is identified in the incoming signal.

- Detecting the presence of speech within an input speech signal is also necessary for adaptive speech processing systems which dynamically adjust weights of a filter either during speech or during silence portions. For example, in adaptive noise cancellation systems, the filter coefficients of the noise filter are only adapted when both speech and noise are present. Alternatively still, in systems which employ adaptive beam forming to suppress noise from one or more sources, the beam is only adapted when the signal of interest is not present within the input signal (i.e. during silence periods). In these systems, it is therefore important to know when the desired speech to be processed is present within the input signal.

- Most prior art speech detection circuits detect the beginning and end of speech by monitoring the energy within the input signal, since during silence the signal energy is small but during speech it is large. In particular, in conventional systems, speech is detected by comparing the average energy with a threshold and indicating that speech has started when the average energy exceeds this threshold. In order for this technique to be able to determine accurately the points at which speech starts and ends (the so-called end points), the threshold has to be set near the noise floor. This type of system works well in environments with a low, constant level of noise. It is not, however, suitable in many situations where there is a high level of noise which can change significantly with time. Examples of such situations include the inside of a car, the vicinity of a road and any crowded public place. The noise in these environments can mask quieter portions of speech, and changes in the noise level can cause noise to be incorrectly detected as speech.

- One aim of the present invention is to provide an alternative speech detection system for detecting speech within an input signal.

- According to one aspect, the present invention provides an apparatus for detecting the presence of speech within an input audio signal, comprising: a memory for storing a probability density function for parameters of a predetermined speech model which is assumed to have generated a set of received audio signal values; means for applying the received set of audio signal values to the stored probability density function; means for processing the probability density function with those values applied to obtain values of the parameters that are representative of the input audio signal; and means for detecting the presence of speech using the obtained parameter values.

- Exemplary embodiments of the present invention will now be described with reference to the accompanying drawings in which:

- FIG. 1 is a schematic view of a computer which may be programmed to operate in accordance with an embodiment of the present invention;

- FIG. 2 is a block diagram illustrating the principal components of a speech recognition system which includes a speech detection system embodying the present invention;

- FIG. 3 is a block diagram representing a model employed by a statistical analysis unit which forms part of the speech recognition system shown in FIG. 2;

- FIG. 4 is a flow chart illustrating the processing steps performed by a model order selection unit forming part of the statistical analysis unit shown in FIG. 2;

- FIG. 5 is a flow chart illustrating the main processing steps employed by a Simulation Smoother which forms part of the statistical analysis unit shown in FIG. 2;

- FIG. 6 is a block diagram illustrating the main processing components of the statistical analysis unit shown in FIG. 2;

- FIG. 7 is a memory map illustrating the data that is stored in a memory which forms part of the statistical analysis unit shown in FIG. 2;

- FIG. 8 is a flow chart illustrating the main processing steps performed by the statistical analysis unit shown in FIG. 6;

- FIG. 9a is a histogram for a model order of an auto-regressive filter model which forms part of the model shown in FIG. 3;

- FIG. 9b is a histogram for the variance of process noise modelled by the model shown in FIG. 3; and

- FIG. 9c is a histogram for a third coefficient of the AR filter model.

- Embodiments of the present invention can be implemented on computer hardware, but the embodiment to be described is implemented in software which is run in conjunction with processing hardware such as a personal computer, workstation, photocopier, facsimile machine or the like.

- FIG. 1 shows a personal computer (PC) 1 which may be programmed to operate an embodiment of the present invention. A keyboard 3, a pointing device 5, a microphone 7 and a telephone line 9 are connected to the PC 1 via an interface 11. The keyboard 3 and pointing device 5 allow the system to be controlled by a user. The microphone 7 converts the acoustic speech signal of the user into an equivalent electrical signal and supplies this to the PC 1 for processing. An internal modem and speech receiving circuit (not shown) may be connected to the telephone line 9 so that the PC 1 can communicate with, for example, a remote computer or with a remote user.

- The program instructions which make the PC 1 operate in accordance with the present invention may be supplied for use with an existing PC 1 on, for example, a storage device such as a magnetic disc 13, or by downloading the software from the Internet (not shown) via the internal modem and telephone line 9.

- The operation of a speech recognition system which employs a speech detection system embodying the present invention will now be described with reference to FIG. 2. Electrical signals representative of the input speech from the microphone 7 are input to a filter 15 which removes unwanted frequencies (in this embodiment, frequencies above 8 kHz) from the input signal. The filtered signal is then sampled (at a rate of 16 kHz) and digitised by the analogue to digital converter 17, and the digitised speech samples are then stored in a buffer 19. Sequential blocks (or frames) of speech samples are then passed from the buffer 19 to a statistical analysis unit 21, which performs a statistical analysis of each frame of speech samples in sequence to determine, amongst other things, a set of auto-regressive (AR) coefficients representative of the speech within the frame. In this embodiment, the AR coefficients output by the statistical analysis unit 21 are then input to a speech recognition unit 25, which compares the AR coefficients for successive frames of speech with a set of stored speech models 27, which may be template based or Hidden Markov Model based, to generate a recognition result. In this embodiment, the speech recognition unit 25 only performs this speech recognition processing when it is enabled to do so by a speech detection unit 61, which detects when speech is present within the input signal. In this way, the speech recognition unit 25 only processes the AR coefficients when there is speech within the signal to be recognised.

- In this embodiment, the speech detection unit 61 also receives the AR coefficients output by the statistical analysis unit 21, together with the AR filter model order, which, as will be described below, is also generated by the statistical analysis unit 21, and determines from these when speech is present within the signal received from the microphone 7. It can do this because the AR filter model order and the AR coefficient values will be larger during speech than when there is no speech present. Therefore, by comparing the AR filter model order and/or the AR coefficient values with appropriate threshold values, the speech detection unit 61 can determine whether or not speech is present within the input signal.

- Statistical Analysis Unit—Theory and Overview

- As mentioned above, the statistical analysis unit 21 analyses the speech within successive frames of the input speech signal. In most speech processing systems, the frames are overlapping. However, in this embodiment, the frames of speech are non-overlapping and have a duration of 20 ms which, with the 16 kHz sampling rate of the analogue to digital converter 17, results in a frame size of 320 samples.

- In order to perform the statistical analysis on each of the frames, the analysis unit 21 assumes that there is an underlying process which generated each sample within the frame. The model of this process used in this embodiment is shown in FIG. 3. As shown, the process is modelled by a speech source 31 which generates, at time t=n, a raw speech sample s(n). Since there are physical constraints on the movement of the speech articulators, there is some correlation between neighbouring speech samples. Therefore, in this embodiment, the speech source 31 is modelled by an auto-regressive (AR) process. In other words, the statistical analysis unit 21 assumes that a current raw speech sample (s(n)) can be determined from a linear weighted combination of the most recent previous raw speech samples, i.e.:

- $$s(n) = a_1 s(n-1) + a_2 s(n-2) + \cdots + a_k s(n-k) + e(n) \tag{1}$$

- where a1, a2, ..., ak are the AR filter coefficients representing the amount of correlation between the speech samples; k is the AR filter model order; and e(n) represents random process noise which is involved in the generation of the raw speech samples. As those skilled in the art of speech processing will appreciate, these AR filter coefficients are the same coefficients that linear prediction (LP) analysis estimates, albeit using a different processing technique.

- As shown in FIG. 3, the raw speech samples s(n) generated by the speech source are input to a channel 33 which models the acoustic environment between the speech source 31 and the output of the analogue to digital converter 17. Ideally, the channel 33 should simply attenuate the speech as it travels from the source 31 to the microphone. However, due to reverberation and other distortive effects, the signal (y(n)) output by the analogue to digital converter 17 will depend not only on the current raw speech sample (s(n)) but also on previous raw speech samples. Therefore, in this embodiment, the statistical analysis unit 21 models the channel 33 by a moving average (MA) filter, i.e.:

- $$y(n) = h_0 s(n) + h_1 s(n-1) + h_2 s(n-2) + \cdots + h_r s(n-r) + \varepsilon(n) \tag{2}$$

- where y(n) represents the signal sample output by the analogue to digital converter 17 at time t=n; h0, h1, h2, ..., hr are the channel filter coefficients representing the amount of distortion within the channel 33; r is the channel filter model order; and ε(n) represents a random additive measurement noise component.

- For the current frame of speech being processed, the filter coefficients for both the speech source and the channel are assumed to be constant but unknown. Therefore, considering all N samples (where N=320) in the current frame being processed gives:

- $$\begin{aligned} s(n) &= a_1 s(n-1) + a_2 s(n-2) + \cdots + a_k s(n-k) + e(n) \\ s(n-1) &= a_1 s(n-2) + a_2 s(n-3) + \cdots + a_k s(n-k-1) + e(n-1) \\ &\;\;\vdots \\ s(n-N+1) &= a_1 s(n-N) + a_2 s(n-N-1) + \cdots + a_k s(n-k-N+1) + e(n-N+1) \end{aligned} \tag{3}$$

- which can be written in vector form as:

- $$s(n) = S \cdot a + e(n) \tag{4}$$

- As will be apparent from the following discussion, it is also convenient to rewrite equation (3) in terms of the random error component (often referred to as the residual) e(n). This gives:

- $$\begin{aligned} e(n) &= s(n) - a_1 s(n-1) - a_2 s(n-2) - \cdots - a_k s(n-k) \\ e(n-1) &= s(n-1) - a_1 s(n-2) - a_2 s(n-3) - \cdots - a_k s(n-k-1) \\ &\;\;\vdots \\ e(n-N+1) &= s(n-N+1) - a_1 s(n-N) - a_2 s(n-N-1) - \cdots - a_k s(n-k-N+1) \end{aligned} \tag{5}$$

- which can be written in vector notation as:

- $$e(n) = \ddot{A}\, s(n) \tag{6}$$

- Similarly, considering the channel model defined by equation (2), with h0=1 (since this provides a more stable solution), gives:

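- $$\begin{aligned} q(n) &= h_1 s(n-1) + h_2 s(n-2) + \cdots + h_r s(n-r) + \varepsilon(n) \\ q(n-1) &= h_1 s(n-2) + h_2 s(n-3) + \cdots + h_r s(n-r-1) + \varepsilon(n-1) \\ &\;\;\vdots \\ q(n-N+1) &= h_1 s(n-N) + h_2 s(n-N-1) + \cdots + h_r s(n-r-N+1) + \varepsilon(n-N+1) \end{aligned} \tag{7}$$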

- (where q(n) = y(n) − s(n)), which can be written in vector form as:

- $$q(n) = Y \cdot h + \varepsilon(n) \tag{8}$$

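The generative model of FIG. 3 can be simulated directly from equations (1) and (2) with h0=1. This is a minimal Python sketch for illustration only: samples before the start of the frame are assumed to be zero, and the coefficient and noise values in the usage lines are arbitrary choices, not values from the embodiment. It also shows that applying the residual form of equation (5) to the simulated s(n) recovers the process noise e(n).

```python
import random
from typing import List, Sequence, Tuple


def simulate_frame(a: Sequence[float], h: Sequence[float], n_samples: int,
                   sigma_e: float, sigma_eps: float,
                   rng: random.Random) -> Tuple[List[float], List[float], List[float]]:
    """Draw one frame from the AR source (1) and the MA channel (2) with h0 = 1.

    a = [a1, ..., ak], h = [h1, ..., hr]; samples before the frame are taken as zero.
    Returns the raw speech s, the observed signal y and the process noise e.
    """
    s: List[float] = []
    e: List[float] = []
    for t in range(n_samples):
        e_t = rng.gauss(0.0, sigma_e)  # process noise e(n)
        past = sum(a_i * s[t - i] for i, a_i in enumerate(a, start=1) if t - i >= 0)
        s.append(past + e_t)  # equation (1)
        e.append(e_t)
    y: List[float] = []
    for t in range(n_samples):
        echo = sum(h_j * s[t - j] for j, h_j in enumerate(h, start=1) if t - j >= 0)
        y.append(s[t] + echo + rng.gauss(0.0, sigma_eps))  # equation (2), h0 = 1
    return s, y, e


def ar_residuals(s: Sequence[float], a: Sequence[float]) -> List[float]:
    """Equation (5): e(n) = s(n) - a1 s(n-1) - ... - ak s(n-k), zero initial conditions."""
    return [s[t] - sum(a_i * s[t - i] for i, a_i in enumerate(a, start=1) if t - i >= 0)
            for t in range(len(s))]


rng = random.Random(0)
s, y, e = simulate_frame([0.5, -0.2], [0.3], 320, 1.0, 0.1, rng)  # N = 320 samples
```

In the statistical analysis the direction is reversed: only y(n) is observed, and a, h, s(n) and the noise variances are inferred from it.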

- In this embodiment, the analysis unit 21 aims to determine, amongst other things, values for the AR filter coefficients (a) which best represent the observed signal samples (y(n)) in the current frame. It does this by determining the AR filter coefficients (a) that maximise the joint probability density function of the speech model, channel model, speech samples and the noise statistics given the observed signal samples output from the analogue to digital converter 17, i.e. by determining:

- $$p\!\left(a, k, h, r, \sigma_e^2, \sigma_\varepsilon^2, s(n) \mid y(n)\right) \tag{9}$$

- where σe² and σε² represent the process and measurement noise statistics respectively. As those skilled in the art will appreciate, this function defines the probability that a particular speech model, channel model, raw speech samples and noise statistics generated the observed frame of speech samples (y(n)) from the analogue to digital converter. To perform this maximisation, the statistical analysis unit 21 must determine what this function looks like. This problem can be simplified by rearranging this probability density function using Bayes' law to give:

- $$p\!\left(a, k, h, r, \sigma_e^2, \sigma_\varepsilon^2, s(n) \mid y(n)\right) = \frac{p\!\left(y(n) \mid s(n), h, r, \sigma_\varepsilon^2\right) p\!\left(s(n) \mid a, k, \sigma_e^2\right) p(a \mid k)\, p(h \mid r)\, p(\sigma_e^2)\, p(\sigma_\varepsilon^2)\, p(k)\, p(r)}{p\!\left(y(n)\right)} \tag{10}$$

- As those skilled in the art will appreciate, the denominator of equation (10) can be ignored, since the probability of the signals from the analogue to digital converter is constant for all choices of model. Therefore, the AR filter coefficients that maximise the function defined by equation (9) will also maximise the numerator of equation (10).

- Each of the terms on the numerator of equation (10) will now be considered in turn.

- p(s(n) | a, k, σe²)

- This term represents the joint probability density function for generating the vector of raw speech samples (s(n)) during a frame, given the AR filter coefficients (a), the AR filter model order (k) and the process noise statistics (σe²). From equation (6) above, this joint probability density function for the raw speech samples can be determined from the joint probability density function for the process noise. In particular, p(s(n) | a, k, σe²) is given by:

- $$p\!\left(s(n) \mid a, k, \sigma_e^2\right) = p\!\left(e(n)\right) \left| \frac{\partial e(n)}{\partial s(n)} \right| \tag{11}$$

- where p(e(n)) is the joint probability density function for the process noise during a frame of the input speech, and the second term on the right-hand side is known as the Jacobian of the transformation. In this case, the Jacobian is unity because the matrix Ä in equation (6) is triangular with ones on its diagonal.

- In this embodiment, the statistical analysis unit 21 assumes that the process noise associated with the speech source 31 is Gaussian with zero mean and some unknown variance σe². The statistical analysis unit 21 also assumes that the process noise at one time point is independent of the process noise at another time point. Therefore, the joint probability density function for the process noise during a frame of the input speech (which defines the probability of any given vector of process noise e(n) occurring) is given by:

- $$p\!\left(e(n)\right) = \left(2\pi\sigma_e^2\right)^{-N/2} \exp\!\left[-\frac{e(n)^{\mathsf T} e(n)}{2\sigma_e^2}\right] \tag{12}$$

- p(y(n) | s(n), h, r, σε²)

- This term represents the joint probability density function for generating the vector of speech samples (y(n)) output from the analogue to digital converter 17, given the vector of raw speech samples (s(n)), the channel filter coefficients (h), the channel filter model order (r) and the measurement noise statistics (σε²). From equation (8), this joint probability density function can be determined from the joint probability density function for the measurement noise. In particular, p(y(n) | s(n), h, r, σε²) is given by:

- $$p\!\left(y(n) \mid s(n), h, r, \sigma_\varepsilon^2\right) = p\!\left(\varepsilon(n)\right) \left| \frac{\partial \varepsilon(n)}{\partial y(n)} \right| \tag{13}$$

- where p(ε(n)) is the joint probability density function for the measurement noise during a frame of the input speech, and the second term on the right-hand side is the Jacobian of the transformation, which again has a value of one.

- In this embodiment, the statistical analysis unit 21 assumes that the measurement noise is Gaussian with zero mean and some unknown variance σε². It also assumes that the measurement noise at one time point is independent of the measurement noise at another time point. Therefore, the joint probability density function for the measurement noise in a frame of the input speech will have the same form as that of the process noise defined in equation (12). Consequently, the joint probability density function for a vector of speech samples (y(n)) output from the analogue to digital converter 17, given the channel filter coefficients (h), the channel filter model order (r), the measurement noise statistics (σε²) and the raw speech samples (s(n)), will have the following form:

- $$p\!\left(y(n) \mid s(n), h, r, \sigma_\varepsilon^2\right) = \left(2\pi\sigma_\varepsilon^2\right)^{-N/2} \exp\!\left[-\frac{\left(q(n) - Y h\right)^{\mathsf T}\left(q(n) - Y h\right)}{2\sigma_\varepsilon^2}\right] \tag{14}$$

- As those skilled in the art will appreciate, although this joint probability density function for the vector of speech samples (y(n)) is expressed in terms of the variable q(n), this does not matter, since q(n) is a function of y(n) and s(n), and s(n) is a given (i.e. known) variable for this probability density function.
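Both likelihood terms reduce to the log of an i.i.d. zero-mean Gaussian density evaluated on a residual vector: the process noise e(n) for the speech source and the measurement noise q(n) − Y·h for the channel. This is a minimal Python sketch under stated assumptions, not the embodiment's code: the function names are hypothetical, and the first k (or r) samples of the frame are treated as known initial conditions so that every residual uses only samples inside the frame.

```python
import math
from typing import Sequence


def gaussian_log_density(v: Sequence[float], sigma2: float) -> float:
    """log of (2*pi*sigma2)^(-N/2) * exp(-v.v / (2*sigma2))."""
    n = len(v)
    return -0.5 * n * math.log(2.0 * math.pi * sigma2) - sum(x * x for x in v) / (2.0 * sigma2)


def log_p_speech(s: Sequence[float], a: Sequence[float], sigma_e2: float) -> float:
    """log p(s(n) | a, k, sigma_e^2): unit Jacobian, so this is log p(e(n))."""
    k = len(a)
    # Assumption: condition on the first k samples of the frame.
    e = [s[t] - sum(a[i] * s[t - 1 - i] for i in range(k)) for t in range(k, len(s))]
    return gaussian_log_density(e, sigma_e2)


def log_p_observed(y: Sequence[float], s: Sequence[float], h: Sequence[float],
                   sigma_eps2: float) -> float:
    """log p(y(n) | s(n), h, r, sigma_eps^2) with q(n) = y(n) - s(n) and h0 = 1."""
    r = len(h)
    # Measurement-noise residual: q(t) - (h1 s(t-1) + ... + hr s(t-r)).
    eps = [(y[t] - s[t]) - sum(h[j] * s[t - 1 - j] for j in range(r))
           for t in range(r, len(s))]
    return gaussian_log_density(eps, sigma_eps2)
```

Working with log densities keeps the product of many small per-sample probabilities numerically stable over a 320-sample frame.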

- p(a|k)

- This term defines the prior probability density function for the AR filter coefficients (a). It allows the statistical analysis unit 21 to introduce knowledge about the values it expects these coefficients to take. In this embodiment, the statistical analysis unit 21 models this prior probability density function by a Gaussian having an unknown variance (σa²) and mean vector (μa), i.e.:

$$p(a \mid k, \sigma_a^2, \mu_a) = (2\pi\sigma_a^2)^{-k/2} \exp\left(-\frac{(a-\mu_a)^T (a-\mu_a)}{2\sigma_a^2}\right)$$

- By introducing the new variables σa² and μa, the prior density functions for these variables (p(σa²) and p(μa)) must be added to the numerator of equation (10) above. For the first frame of speech being processed, the mean vector (μa) can initially be set to zero. For the second and subsequent frames, it can be set to the mean vector obtained during the processing of the previous frame. In this case, p(μa) is just a Dirac delta function located at the current value of μa and can therefore be ignored.

- With regard to the prior probability density function for the variance of the AR filter coefficients, the statistical analysis unit 21 could set this equal to some constant to imply that all variances are equally probable. However, this term can be used to introduce knowledge about what the variance of the AR filter coefficients is expected to be. In this embodiment, since variances are always positive, the statistical analysis unit 21 models this variance prior probability density function by an Inverse Gamma function having parameters αa and βa, i.e.:

- At the beginning of the speech being processed, the statistical analysis unit 21 will not have much knowledge about the variance of the AR filter coefficients. Therefore, the statistical analysis unit 21 initially sets the variance σa² and the αa and βa parameters of the Inverse Gamma function so that this probability density function is fairly flat and therefore non-informative. After the first frame of speech has been processed, however, these parameters can be set more accurately for the next frame by using the parameter values calculated during the processing of the previous frame.

- p(h|r)

- This term represents the prior probability density function for the channel model coefficients (h). It allows the statistical analysis unit 21 to introduce knowledge about the values it expects these coefficients to take. As with the prior probability density function for the AR filter coefficients, in this embodiment this probability density function is modelled by a Gaussian having an unknown variance (σh²) and mean vector (μh), i.e.:

- Again, by introducing these new variables, the prior density functions (p(σh²) and p(μh)) must be added to the numerator of equation (10). Again, the mean vector can initially be set to zero. After the first frame of speech has been processed, the mean vector for each subsequent frame can be set equal to the mean vector obtained during the processing of the previous frame. Therefore, p(μh) is also just a Dirac delta function located at the current value of μh and can be ignored.

- With regard to the prior probability density function for the variance of the channel filter coefficients, in this embodiment this is again modelled by an Inverse Gamma function having parameters αh and βh. As before, the variance (σh²) and the αh and βh parameters of the Inverse Gamma function can be chosen initially so that these densities are non-informative. They therefore have little effect on the subsequent processing of the initial frame.
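The effect of choosing non-informative Inverse Gamma parameters can be seen in a standard conjugate update. This update is an assumption here, because the patent's own update equations are not reproduced in this section. With a Gaussian likelihood, an Inverse Gamma(α, β) prior on a variance becomes an Inverse Gamma(α + N/2, β + E/2) posterior, where E is the sum of squared residuals. The sketch below uses hypothetical parameter values and compares the posterior mode β/(α + 1) under a vague prior with the mode under a confident prior.

```python
from typing import Sequence, Tuple


def inverse_gamma_posterior(alpha: float, beta: float,
                            residuals: Sequence[float]) -> Tuple[float, float]:
    """Conjugate update of an Inverse Gamma(alpha, beta) prior on a Gaussian variance."""
    n = len(residuals)
    energy = sum(r * r for r in residuals)  # sum of squared residuals
    return alpha + n / 2.0, beta + energy / 2.0


def inverse_gamma_mode(alpha: float, beta: float) -> float:
    """Mode of the density proportional to x^(-alpha-1) * exp(-beta/x)."""
    return beta / (alpha + 1.0)


# Hypothetical residuals whose sample variance (sum r^2 / N) is exactly 0.25.
residuals = [0.5 if i % 2 == 0 else -0.5 for i in range(100)]

vague = inverse_gamma_posterior(1e-3, 1e-3, residuals)      # nearly non-informative prior
confident = inverse_gamma_posterior(50.0, 50.0, residuals)  # prior mode near 1

print(round(inverse_gamma_mode(*vague), 3))      # 0.245: the data dominate
print(round(inverse_gamma_mode(*confident), 3))  # 0.619: pulled towards the prior
```

With the vague prior, the posterior is governed almost entirely by the data. This is the behaviour the text relies on for the initial frame.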

- p(σe²) and p(σε²)

- These terms are the prior probability density functions for the process and measurement noise variances. Again, they allow the statistical analysis unit 21 to introduce knowledge about the values it expects these noise variances to take. As with the other variances, in this embodiment the statistical analysis unit 21 models these by Inverse Gamma functions having parameters αe, βe and αε, βε respectively. Again, these variances and the Inverse Gamma function parameters can be set initially so that they are non-informative and do not appreciably affect the subsequent calculations for the initial frame.

- p(k) and p(r)

- These terms are the prior probability density functions for the AR filter model order (k) and the channel model order (r) respectively. In this embodiment, these are modelled by a uniform distribution up to some maximum order. In this way, there is no prior bias on the number of coefficients in the models, except that they cannot exceed these predefined maxima. In this embodiment, the maximum AR filter model order (k) is thirty and the maximum channel model order (r) is one hundred and fifty.


- Gibbs Sampler

- In order to determine the form of the joint probability density function defined in equation (19), the statistical analysis unit 21 “draws samples” from it. In this embodiment, the joint probability density function to be sampled is a complex multivariate function. A Gibbs sampler is therefore used, which breaks the problem down into one of drawing samples from probability density functions of smaller dimensionality. In particular, the Gibbs sampler draws random variates from conditional densities. Each variable, or group of variables, is drawn in turn from its conditional density given the most recent values of all the others.

- The initial values (h^0, r^0, (σe²)^0, (σε²)^0, (σa²)^0, (σh²)^0, s(n)^0) may be obtained from the results of the statistical analysis of the previous frame of speech. Where there is no previous frame, they can be set to appropriate values that will be known to those skilled in the art of speech processing.


- A sample can then be drawn from this Gaussian distribution to give a^g, where g denotes the g-th iteration of the Gibbs sampler. The model order (k^g) is determined by a model order selection routine, which will be described later. A sample from this Gaussian distribution may be drawn by using a random number generator that produces a vector of uniformly distributed random values. A transformation of random variables, using the covariance matrix and the mean value given in equations (22) and (23), is then applied to generate the sample. In this embodiment, however, the random number generator produces random numbers from a Gaussian distribution having zero mean and a variance of one. This reduces the transformation to a simple scaling using (a square root of) the covariance matrix given in equation (22), followed by a shift using the mean value given in equation (23). Since the techniques for drawing samples from Gaussian distributions are well known in the art of statistical analysis, they will not be described further here. A more detailed description and explanation can be found in the book entitled “Numerical Recipes in C”, by W. Press et al., Cambridge University Press, 1992, and in particular in chapter 7.

- As those skilled in the art will appreciate, however, estimates of the raw speech samples must be available before a sample can be drawn from this Gaussian distribution, so that the matrix S and the vector s(n) are known. The way in which these estimates are obtained in this embodiment will be described later.


- A sample for h^g can then be drawn from the corresponding Gaussian conditional density in the manner described above. The channel model order (r^g) is determined using the model order selection routine, which will be described later.


- where:

- $E = s(n)^T s(n) - 2\,a^T S^T s(n) + a^T S^T S\,a$
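When the middle term is written as 2aᵀSᵀs(n), which is the dimensionally consistent form, E is simply the energy of the residual s(n) − S a, i.e. E = (s(n) − S a)ᵀ(s(n) − S a). The short NumPy check below confirms this identity on random, hypothetical values of S, s(n) and a. The matrix sizes are arbitrary assumptions.

```python
import numpy as np

rng = np.random.default_rng(0)
N, k = 8, 3                      # hypothetical frame length and AR order
S = rng.standard_normal((N, k))  # hypothetical N x k matrix S
s = rng.standard_normal(N)       # hypothetical vector s(n)
a = rng.standard_normal(k)       # hypothetical AR coefficients a

# E expanded term by term.
E = s @ s - 2.0 * (a @ S.T @ s) + a @ S.T @ S @ a
# The same quantity computed as the energy of the residual s(n) - S a.
residual_energy = float(np.sum((s - S @ a) ** 2))
print(np.isclose(E, residual_energy))  # True
```

The same identity holds for E*, with Y, h and q(n) in place of S, a and s(n).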


- A sample is then drawn from the Inverse Gamma distribution for σe² to give (σe²)^g. This is done by first generating a random number from a uniform distribution and then performing a transformation of random variables using the alpha and beta parameters given in equation (27).
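Below is a Python sketch of the draw of (σe²)^g. Instead of transforming a uniform variate directly, it uses a swapped-in technique: the standard-library Gamma generator combined with the fact that, if X follows a Gamma distribution with shape α and rate β, then 1/X follows an Inverse Gamma distribution with parameters α and β. The update α = αe + N/2, β = βe + E/2 is the standard conjugate form. It is an assumption here, because equation (27) is not reproduced. The numerical values are hypothetical.

```python
import random


def sample_inverse_gamma(alpha: float, beta: float, rng: random.Random) -> float:
    """Draw from InvGamma(alpha, beta) as the reciprocal of a Gamma(shape=alpha, rate=beta) draw."""
    return 1.0 / rng.gammavariate(alpha, 1.0 / beta)  # gammavariate takes (shape, scale)


def draw_process_noise_variance(alpha_e: float, beta_e: float, n: int, energy: float,
                                rng: random.Random) -> float:
    """Assumed conjugate update: alpha = alpha_e + n/2, beta = beta_e + energy/2."""
    return sample_inverse_gamma(alpha_e + n / 2.0, beta_e + energy / 2.0, rng)


rng = random.Random(42)
draws = [draw_process_noise_variance(1e-3, 1e-3, 320, 32.0, rng) for _ in range(5000)]
mean_draw = sum(draws) / len(draws)
# The Inverse Gamma mean is beta / (alpha - 1) = 16.001 / 159.001, roughly 0.1006.
print(round(mean_draw, 3))
```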


- where:

- $E^* = q(n)^T q(n) - 2\,h^T Y^T q(n) + h^T Y^T Y\,h$

- A sample is then drawn from this Inverse Gamma distribution in the manner described above to give (σε²)^g.


- A sample is then drawn from this Inverse Gamma distribution in the manner described above to give (σa²)^g.


- A sample is then drawn from this Inverse Gamma distribution in the manner described above to give (σh²)^g.

- As those skilled in the art will appreciate, the Gibbs sampler requires an initial transient period, known as burn-in, to converge to equilibrium. Eventually, after L iterations, the sample (a^L, k^L, h^L, r^L, (σe²)^L, (σε²)^L, (σa²)^L, (σh²)^L, s(n)^L) is considered to be a sample from the joint probability density function defined in equation (19). In this embodiment, the Gibbs sampler performs approximately one hundred and fifty (150) iterations on each frame of input speech. It discards the samples from the first fifty iterations and uses the rest to give a picture (a set of histograms) of the joint probability density function defined in equation (19). From these histograms, the set of AR coefficients (a) that best represents the observed speech samples (y(n)) from the analogue to digital converter 17 is determined. The histograms are also used to determine appropriate values for the variances and channel model coefficients (h), which can serve as the initial values for the Gibbs sampler when it processes the next frame of speech.

- Model Order Selection

- As mentioned above, during the Gibbs iterations, the model order (k) of the AR filter and the model order (r) of the channel filter are updated using a model order selection routine. In this embodiment, this is performed using a technique derived from “Reversible jump Markov chain Monte Carlo computation”, which is described in the paper entitled “Reversible jump Markov chain Monte Carlo Computation and Bayesian model determination” by Peter Green, Biometrika, vol 82, pp 711 to 732, 1995.

- FIG. 4 is a flow chart which illustrates the processing steps performed during this model order selection routine for the AR filter model order (k). As shown, in step s1, a new model order (k2) is proposed. In this embodiment, the new model order will normally be proposed as k2 = k1 ± 1, occasionally as k2 = k1 ± 2, very occasionally as k2 = k1 ± 3, and so on. To achieve this, a sample is drawn from a discretised Laplacian density function centred on the current model order (k1). The variance of this Laplacian density function is chosen a priori according to the degree of sampling of the model order space that is required.
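Below is a minimal Python sketch of the proposal step. It assumes that the discretised Laplacian gives each candidate order j a weight proportional to exp(−|j − k1|/b). It also assumes that the current order itself is excluded and that proposals are restricted to 1..k_max. The scale b (which sets the spread; a continuous Laplacian has variance 2b²) and the function name are hypothetical choices.

```python
import math
import random
from typing import List


def propose_model_order(k_current: int, k_max: int, scale: float, rng: random.Random) -> int:
    """Propose a new order from a discretised Laplacian centred on k_current.

    Offsets of +-1 are most likely, and larger jumps become exponentially rarer.
    The current order is excluded, and proposals stay within [1, k_max].
    """
    candidates: List[int] = [k for k in range(1, k_max + 1) if k != k_current]
    weights = [math.exp(-abs(k - k_current) / scale) for k in candidates]
    return rng.choices(candidates, weights=weights, k=1)[0]


rng = random.Random(0)
proposals = [propose_model_order(10, 30, 0.7, rng) for _ in range(10000)]
jumps = [abs(p - 10) for p in proposals]
```

A larger scale explores the model order space more widely, but it also proposes more orders that are likely to be rejected.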


- In the model order variable (MO) defined by equation (31), the ratio term is the ratio of the conditional probability given in equation (21), evaluated for the current AR filter coefficients (a) drawn by the Gibbs sampler, for the current model order (k1) and for the proposed new model order (k2). If k2 > k1, the matrix S must first be resized. A new sample must then be drawn from the Gaussian distribution having the mean vector and covariance matrix defined by equations (22) and (23) (determined for the resized matrix S), to provide the AR filter coefficients (a<1:k2>) for the new model order (k2). If k2 < k1, all that is required is to delete the last (k1 − k2) samples of the a vector. If the ratio in equation (31) is greater than one, this implies that the proposed model order (k2) is better than the current model order. If it is less than one, this implies that the current model order is better than the proposed model order. However, because this will occasionally not be the case, the model order variable (MO) is not compared with a fixed threshold of one to decide whether to accept the proposed model order. Instead, in this embodiment, MO is compared, in step s5, with a random number lying between zero and one. If MO is greater than this random number, the processing proceeds to step s7, where the model order is set to the proposed model order (k2) and a count associated with the value of k2 is incremented. If, on the other hand, MO is smaller than the random number, the processing proceeds to step s9, where the current model order is maintained and a count associated with the value of the current model order (k1) is incremented. The processing then ends.
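Steps s5 to s9 can be sketched in Python as follows. The value of MO is taken as an input, because equation (31) is not reproduced in this section. The function name and the dictionary used for the counts are illustrative assumptions. Because the random number lies in [0, 1), any MO of one or more is always accepted, while a smaller MO is accepted with probability equal to MO.

```python
import random
from typing import Dict


def accept_model_order(k_current: int, k_proposed: int, mo: float,
                       counts: Dict[int, int], rng: random.Random) -> int:
    """Accept k_proposed if MO exceeds a uniform random number in [0, 1); update the counts."""
    if mo > rng.random():
        new_k = k_proposed  # step s7: adopt the proposed order
    else:
        new_k = k_current   # step s9: keep the current order
    counts[new_k] = counts.get(new_k, 0) + 1  # histogram of visited model orders
    return new_k


rng = random.Random(0)
counts: Dict[int, int] = {}
results = [accept_model_order(5, 6, 0.25, counts, rng) for _ in range(10000)]
acceptance_rate = results.count(6) / len(results)  # close to MO = 0.25
```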

- This model order selection routine is carried out both for the model order of the AR filter model and for the model order of the channel filter model. The routine could be carried out at every Gibbs iteration, but this is not essential. In this embodiment, it is carried out only every third Gibbs iteration.

- Simulation Smoother

- As mentioned above, estimates of the raw speech samples are required before the Gibbs sampler can draw samples, because they are used to generate s(n), S and Y for the Gibbs calculations. These estimates could be obtained from the conditional probability density function p(s(n)| . . . ). This is not done in this embodiment, however, because of the high dimensionality of s(n). Instead, a different technique, called a “Simulation Smoother”, is used to provide the necessary estimates of the raw speech samples. This Simulation Smoother was proposed by Piet de Jong in the paper entitled “The Simulation Smoother for Time Series Models”, Biometrika (1995),