TWI762128B - Device and method of energy management using multi-object reinforcement learning - Google Patents

Device and method of energy management using multi-object reinforcement learning

- Publication number: TWI762128B
- Application number: TW109146801A
- Authority: TW (Taiwan)

- Prior art keywords

- energy

- reinforcement learning

- consuming

- storage device

- time interval

Description

The present invention relates to the technical field of intelligent energy management, and more particularly to an energy management device and method using multi-objective reinforcement learning.

Advances in science and technology make life more convenient and improve the quality of life, but they also drive heavy energy use, so that energy consumption has become a significant part of the expenses of users such as households and small businesses. Keeping energy expenses under control has therefore become a matter of great concern to users, and energy management systems (EMS) have been widely studied in recent years as a way to manage electricity use properly, with the aim of striking a balance between electricity demand and energy consumption.

The electricity bill that a power company charges a user generally has two components: an energy charge and a demand charge. The energy charge is the cost of the total electricity the user consumes over a period (e.g., a monthly billing period) and is priced per kilowatt-hour (kWh). Power companies usually apply time-of-use pricing (i.e., different rates in different time intervals) to steer users toward reducing consumption during peak intervals. The demand charge, on the other hand, is based on the user's maximum demand within a period and is priced per kilowatt (kW). More specifically, the power company charges a fixed demand fee according to the contracted capacity agreed with the user in advance; if the user's actual maximum demand exceeds the contracted capacity, an additional over-contract amount is charged.
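To make the two bill components concrete, the following sketch computes them from hourly load data. All rates, the contracted capacity, and the over-contract multiplier are hypothetical values chosen for illustration; they are not taken from any real tariff or from this patent.

```python
from typing import Sequence


def energy_charge(load_kw: Sequence[float], price_per_kwh: Sequence[float]) -> float:
    """Energy charge: energy used in each hour times the rate of that hour.

    With one-hour intervals, average power in kW equals energy in kWh.
    """
    if len(load_kw) != len(price_per_kwh):
        raise ValueError("load and price series must have the same length")
    return sum(p * c for p, c in zip(load_kw, price_per_kwh))


def demand_charge(
    load_kw: Sequence[float],
    contracted_kw: float,
    rate_per_kw: float,
    over_multiplier: float = 2.0,  # hypothetical penalty factor
) -> float:
    """Demand charge: fixed fee on contracted capacity plus an over-contract amount."""
    peak_kw = max(load_kw)
    base_fee = contracted_kw * rate_per_kw
    excess_kw = max(0.0, peak_kw - contracted_kw)
    return base_fee + excess_kw * rate_per_kw * over_multiplier
```

For example, a user who draws 6 kW for a few hours against a 5 kW contract pays the fixed fee for 5 kW plus a penalty on the 1 kW excess, regardless of how little energy is used in the rest of the period.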

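As the above description shows, demand response (DR) plays an important role in energy management systems. In view of this, Document 1 proposes an optimized power scheduling method based on demand response that can be applied in an energy management system. Document 1 refers to Zhao et al., "An optimal power scheduling method for demand response in home energy management system," IEEE Trans. Smart Grid, vol. 4, no. 3, pp. 1391–1400, Sep. 2013. The method combines real-time pricing with an inclining block rate (IBR) to design a power scheduling algorithm for a main controller of the energy management system. Experimental results confirm that, with this power scheduling algorithm, the energy management system can reduce the user's energy expenses. Unfortunately, although the algorithm lowers the user's energy expenses, it also lowers the user's satisfaction with electricity use.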

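Document 2 therefore proposes an improved scheme that takes both the user's satisfaction with electricity use and the energy expenses into account. Document 2 refers to Althaher et al., "Automated demand response from home energy management system under dynamic pricing and power and comfort constraints," IEEE Trans. Smart Grid, vol. 6, no. 4, pp. 1874–1883, Jul. 2015. The improved scheme derives an optimal electricity-use schedule from the full-day electricity prices, the output of the renewable energy device, and the user's demand. In some cases, however, the full-day prices for a given day and the energy that the renewable energy device can generate on that day cannot be known in advance. In other words, the data required by the scheme of Document 2 are uncertain, which limits its practical applicability.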

To handle such uncertain environments, machine learning techniques have been applied to energy management systems. Reinforcement learning (RL) is a decision-making algorithm that uses limited information to solve problems in an unknown environment. For example, Document 3 proposes a demand response method for electrical devices that uses reinforcement learning. Document 3 refers to Wen et al., "Optimal demand response using device-based reinforcement learning," IEEE Trans. Smart Grid, vol. 6, no. 5, pp. 2312–2324, Sep. 2015. Further, Document 4 proposes a demand response method for home energy management that uses reinforcement learning and an artificial neural network. Document 4 refers to Lu et al., "Demand response for home energy management using reinforcement learning and artificial neural network," IEEE Trans. Smart Grid, vol. 10, no. 6, pp. 6629–6639, Nov. 2019.

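In the demand response method of Document 4, a weighted-sum transformation is used to formulate demand response as a single-objective reinforcement learning (SORL) algorithm. Because the weights reflect user preferences, they change over time. After the weights change with the user's preferences, how quickly reinforcement learning produces an optimal electricity-use schedule depends on the environment (e.g., the data), and a period of retraining is needed before the optimal schedule is obtained. More importantly, the method of Document 4 can perform reinforcement learning on the demand response of only a single objective (i.e., an electrical device) and then produce the corresponding optimal schedule.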

As the above description shows, the conventional demand response methods applied in energy management systems, whether based on electrical devices or on user consumption, still leave room for improvement. In view of this, the inventors of the present case have devoted themselves to research and have developed an energy management device and method using multi-objective reinforcement learning.

The main object of the present invention is to provide an energy management device using multi-objective reinforcement learning, which is coupled between at least one renewable energy device, an energy storage device, and a plurality of energy-consuming devices, and which has a first reinforcement learning unit and a second reinforcement learning unit. The first reinforcement learning unit performs a reinforcement learning operation based on the device operation requirements given by the user (such as device operating time and power) and the power company's real-time electricity price, to obtain an energy-consuming device operation schedule that includes a plurality of device control parameters. The second reinforcement learning unit performs a reinforcement learning operation based on the power company's real-time electricity price and the state parameters of the energy storage device, to obtain a charge/discharge operation schedule for the energy storage device. Finally, the energy management device controls the plurality of energy-consuming devices to operate on a time-shared basis according to the energy-consuming device operation schedule, and controls the charging and discharging of the energy storage device according to the charge/discharge operation schedule, so that the user's satisfaction with electricity use is maximized while the electricity cost is minimized.

To achieve the above object, the present invention proposes an embodiment of the energy management device using multi-objective reinforcement learning, which is coupled between at least one renewable energy device, an energy storage device, and a plurality of energy-consuming devices, and which comprises:

- a control unit;
- a user interface, coupled to the control unit, which the user operates to input a plurality of device operation requirements;
- an information providing unit, coupled to the control unit, for providing at least one piece of energy storage device state information and electricity price information to the control unit;
- a first reinforcement learning unit which, under the control of the control unit, performs a first reinforcement learning operation based on the plurality of device operation requirements and the electricity price information, thereby obtaining an energy-consuming device operation schedule containing a plurality of device control parameters, so that the control unit controls the plurality of energy-consuming devices to perform time-shared operation according to the energy-consuming device operation schedule; and
- a second reinforcement learning unit which, under the control of the control unit, performs a second reinforcement learning operation based on the energy storage device information and the electricity price information, thereby obtaining a charge/discharge operation schedule containing a charge control parameter and a discharge control parameter, so that the control unit controls the energy storage device to perform time-shared operation according to the charge/discharge operation schedule.

The present invention also proposes an energy management method using multi-objective reinforcement learning, which is implemented by an energy management device coupled between at least one renewable energy device, an energy storage device, and a plurality of energy-consuming devices, and which comprises the following steps:

- (1) receiving a plurality of device operation requirements input by the user, and obtaining at least one piece of energy storage device state information and electricity price information;
- (2) performing a first reinforcement learning operation based on the plurality of device operation requirements and the electricity price information to obtain an energy-consuming device operation schedule containing a plurality of device control parameters, and performing a second reinforcement learning operation based on the energy storage device information and the electricity price information to obtain a charge/discharge operation schedule containing a charge control parameter and a discharge control parameter; and
- (3) controlling the plurality of energy-consuming devices to perform time-shared operation according to the energy-consuming device operation schedule, and controlling the energy storage device to perform time-shared operation according to the charge/discharge operation schedule.

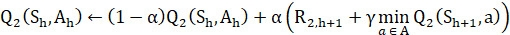

In one embodiment, the first reinforcement learning operation is performed using equations (1), (2) and (3). Every Q term in these equations is a Q-value, that is, a value generated from the state and action of the energy-consuming device. In these equations, α is the learning rate; there are two reward values, one corresponding to the electricity cost and one corresponding to user satisfaction; a discount rate is applied; the states are those of the energy-consuming device in the current time interval and in the next time interval; the actions are the device's optimal action in the current time interval, its action in the current time interval, and its action in the next time interval; a is an action performed by the energy-consuming device and A is the device's action set; and there are two weight values, one corresponding to the electricity cost and one corresponding to user satisfaction.

In one embodiment, the second reinforcement learning operation is performed using equations (4) and (5). The Q terms in these equations are Q-values, that is, values generated from the state and action of the energy storage device. In these equations, α is the learning rate; a reward value is applied; the states are those of the energy storage device in the current time interval and in the next time interval; the actions are the energy storage device's optimal action in the current time interval and its action in the current time interval; a is an action performed by the energy storage device; and the action set is that of the energy storage device.

In one embodiment, the plurality of energy-consuming devices include: at least one first energy-consuming device whose operating mode cannot be flexibly adjusted, at least one second energy-consuming device whose operating time can be flexibly adjusted, and at least one third energy-consuming device whose operating power can be flexibly adjusted.

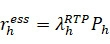

In one embodiment, for the second energy-consuming device, the reward values used in equations (1) and (2) are obtained from equations (1-1) and (1-2), which use the real-time pricing (RTP) tariff of the current time interval, the energy consumption of the second energy-consuming device in the current time interval, and the user dissatisfaction corresponding to the operation schedule of the second energy-consuming device.

In one embodiment, for the third energy-consuming device, the reward values used in equations (1) and (2) are obtained from equations (1-1) and (1-2), which use the real-time pricing (RTP) tariff of the current time interval, the energy consumption of the third energy-consuming device in the current time interval, and the user dissatisfaction corresponding to the operation schedule of the third energy-consuming device.

In one embodiment, the energy storage device is a battery, and the renewable energy device is any one of the following: a photovoltaic power generation device, a solar thermal power generation device, a wind power generation device, a thermal power generation device, or a hydroelectric power generation device.

In one embodiment, the energy management device obtains the energy storage device state information through a battery management device, and the state information includes battery capacity, battery charging efficiency, battery discharging efficiency, and a leakage parameter.

In one embodiment, the energy management device controls the energy storage device to perform time-shared operation through a battery management device.

In one embodiment, the plurality of device operation requirements include: an operating time range, an operation duration, an operating power, a maximum operating power, and a minimum operating power.
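The operation requirements listed above map naturally onto a small record type. The following is a minimal sketch; the class name `OperationRequirement`, its field names, the 24-slot day, and the validation rules are illustrative assumptions rather than structures defined in this document.

```python
from dataclasses import dataclass
from typing import List

SLOTS_PER_DAY = 24  # assumption: one-hour time slots numbered 1..24


@dataclass(frozen=True)
class OperationRequirement:
    start_slot: int       # first slot of the requested operating time range
    end_slot: int         # last slot of the requested operating time range
    duration_slots: int   # how many consecutive slots the device must run
    power_kw: float       # nominal operating power
    max_power_kw: float   # maximum operating power
    min_power_kw: float   # minimum operating power

    def __post_init__(self) -> None:
        if not 1 <= self.start_slot <= self.end_slot <= SLOTS_PER_DAY:
            raise ValueError("time range must lie within the day")
        window = self.end_slot - self.start_slot + 1
        if not 1 <= self.duration_slots <= window:
            raise ValueError("duration does not fit in the requested range")
        if not self.min_power_kw <= self.power_kw <= self.max_power_kw:
            raise ValueError("power must lie between min and max power")

    def feasible_starts(self) -> List[int]:
        """Start slots for which the whole run stays inside the range."""
        last_start = self.end_slot - self.duration_slots + 1
        return list(range(self.start_slot, last_start + 1))
```

A scheduler for a time-flexible device could treat the values returned by `feasible_starts()` as its candidate actions.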

So that the examiners may further understand the structure, features, objects, and advantages of the present invention, the drawings and a detailed description of preferred embodiments are provided below.

Please refer to FIG. 1, which shows a perspective view of an energy management system using multi-objective reinforcement learning according to the present invention, and to FIG. 2, which shows a block diagram of that system. As shown in FIG. 1 and FIG. 2, the energy management device 1 using multi-objective reinforcement learning (hereinafter "energy management device 1") is coupled between at least one renewable energy device 2, an energy storage device 3, and a plurality of energy-consuming devices (5a, 5b, 5c). Although FIG. 1 depicts the renewable energy device 2 as a photovoltaic (PV) device, in feasible embodiments the renewable energy device 2 may also be a solar thermal power generation device, a wind power generation device, a thermal power generation device, or a hydroelectric power generation device. FIG. 1 depicts the energy storage device 3 as a battery.

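As shown in FIG. 1 and FIG. 2, the energy management device 1 comprises a control unit 10, a user interface 11, an information providing unit 12, a first reinforcement learning unit 13, and a second reinforcement learning unit 14. In one embodiment, the control unit 10 may be a processor, and the first reinforcement learning unit 13 and the second reinforcement learning unit 14 are built into the processor in the form of firmware, function libraries, variables, or operands. In another embodiment, the energy management device 1 has an operating system, and the first reinforcement learning unit 13 and the second reinforcement learning unit 14 are installed in the operating system as application software and are activated under the control of the control unit 10. The user interface 11, for example a touch screen, is coupled to the control unit 10 and is operated by the user to input a plurality of device operation requirements. Under the user's operation, the user interface 11 can also display current information on the renewable energy device 2, the energy storage device 3, and/or the plurality of energy-consuming devices (5a, 5b, 5c).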

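More specifically, the information providing unit 12 is coupled to the control unit 10 and provides at least one piece of energy storage device state information and electricity price information to the control unit 10. The information providing unit 12 is a data transmission/reception interface. Through a battery management device 4, it obtains the state information of the energy storage device 3 (e.g., a battery), including battery capacity, battery charging efficiency, battery discharging efficiency, and a leakage parameter, and passes this state information to the control unit 10. The information providing unit 12 can also obtain a real-time pricing (RTP) table from the power company and thereby provide electricity price information to the control unit 10.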

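More specifically, under the control of the control unit 10, the first reinforcement learning unit 13 uses the Q-learning method to perform a first reinforcement learning operation based on the plurality of device operation requirements and the electricity price information, thereby obtaining an energy-consuming device operation schedule containing a plurality of device control parameters, so that the control unit 10 controls the plurality of energy-consuming devices (5a, 5b, 5c) to perform time-shared operation according to that schedule. The first reinforcement learning unit 13 completes the first reinforcement learning operation using equations (1), (2) and (3).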

In equations (1), (2) and (3), every Q term is a Q-value, that is, a value generated from the state and action of the energy-consuming device. As algorithm engineers familiar with Q-learning will know, reinforcement learning begins by building a so-called Q-table from state-action pairs. The Q-table is in effect a matrix of M×N Q-values, and reinforcement learning uses reward values and a learning rule to train these Q-values repeatedly until the optimal action of the energy-consuming device is found. In addition, α is the learning rate; there are two reward values, one corresponding to the electricity cost (i.e., the real-time electricity price) and one corresponding to user satisfaction; a discount rate is applied; the states are those of the energy-consuming device in the current time interval and in the next time interval; the actions are the device's optimal action in the current time interval, its action in the current time interval, and its action in the next time interval; a is an action performed by the energy-consuming device and A is the device's action set; and there are two weight values, one corresponding to the electricity cost and one corresponding to user satisfaction.

Here h denotes the current time slot: if one day is divided into 24 time slots, then h ∈ {1, 2, ..., 23, 24}, and h+1 is the next time slot. In addition, α ∈ [0, 1], and the discount rate likewise lies in [0, 1].
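The expressions for equations (1) to (3) are not reproduced in this text. For orientation only, the standard tabular Q-learning update and a weighted-sum action choice that are consistent with the definitions above can be written as follows. The symbols $Q_1$, $Q_2$, $r_1$, $r_2$, $\gamma$, $s_h$, $a_h$, $w_1$, $w_2$ and the constraint $w_1 + w_2 = 1$ are assumed notation, and the patent's own equations may differ in form.

```latex
\begin{align}
Q_1(s_h, a_h) &\leftarrow Q_1(s_h, a_h)
  + \alpha \Big[ r_1 + \gamma \max_{a_{h+1} \in A} Q_1(s_{h+1}, a_{h+1}) - Q_1(s_h, a_h) \Big] \\
Q_2(s_h, a_h) &\leftarrow Q_2(s_h, a_h)
  + \alpha \Big[ r_2 + \gamma \max_{a_{h+1} \in A} Q_2(s_{h+1}, a_{h+1}) - Q_2(s_h, a_h) \Big] \\
a^{*} &= \arg\max_{a \in A} \Big[ w_1\, Q_1(s_h, a) + w_2\, Q_2(s_h, a) \Big],
  \qquad w_1 + w_2 = 1
\end{align}
```

Under this reading, the first line trains the cost table with the cost reward $r_1$, the second trains the satisfaction table with the satisfaction reward $r_2$, and the third combines the two tables only at the moment an action is chosen.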

The object of the present invention is to maximize the user's satisfaction with electricity use while minimizing the electricity cost. Two Q-tables must therefore be established. In equation (1), the reward value corresponds to the electricity cost (i.e., the real-time electricity price); that is, equation (1) relies on a reward scheme designed around the electricity cost and uses the epsilon-greedy (ε-greedy) algorithm to select the action of the energy-consuming device. The available actions naturally differ according to the device operation requirements entered by the user and the device category. In equation (2), the reward value corresponds to user satisfaction; that is, equation (2) relies on a reward scheme designed around user satisfaction and likewise uses the ε-greedy algorithm to select the action of the energy-consuming device. Finally, once the two Q-tables have been built with the Q-learning method, equation (3) is used to select the optimal action of the energy-consuming devices (5a, 5b, 5c) in the current time interval.
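The two-table procedure described above can be sketched in a few lines. This is an illustration under stated assumptions, not the patented implementation: the class name `DualQAgent`, the dictionary-backed Q-tables, the default hyperparameters, and the use of the weighted sum of both tables during ε-greedy exploitation are all choices made here for the example.

```python
import random
from collections import defaultdict


class DualQAgent:
    """Tabular Q-learning with one table per objective (cost, satisfaction)."""

    def __init__(self, actions, alpha=0.1, gamma=0.9, epsilon=0.1,
                 w_cost=0.5, w_sat=0.5, seed=None):
        self.actions = list(actions)
        self.alpha, self.gamma, self.epsilon = alpha, gamma, epsilon
        self.w_cost, self.w_sat = w_cost, w_sat
        self.q_cost = defaultdict(float)  # keyed by (state, action)
        self.q_sat = defaultdict(float)   # keyed by (state, action)
        self.rng = random.Random(seed)

    def best_action(self, state):
        """Weighted-sum choice over both Q-tables."""
        def score(a):
            return (self.w_cost * self.q_cost[(state, a)]
                    + self.w_sat * self.q_sat[(state, a)])
        return max(self.actions, key=score)

    def select_action(self, state):
        """Epsilon-greedy: explore with probability epsilon, else exploit."""
        if self.rng.random() < self.epsilon:
            return self.rng.choice(self.actions)
        return self.best_action(state)

    def update(self, state, action, r_cost, r_sat, next_state):
        """Apply the Q-learning update to each table with its own reward."""
        for table, reward in ((self.q_cost, r_cost), (self.q_sat, r_sat)):
            best_next = max(table[(next_state, a)] for a in self.actions)
            td_error = reward + self.gamma * best_next - table[(state, action)]
            table[(state, action)] += self.alpha * td_error
```

In this sketch the weights enter only in `best_action`, so changing `w_cost` and `w_sat` changes the chosen action without touching either learned table.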

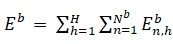

According to the design of the present invention, the plurality of energy-consuming devices (5a, 5b, 5c) include: at least one first energy-consuming device (inflexible appliance) 5a whose operating mode cannot be flexibly adjusted, at least one second energy-consuming device (time-flexible appliance) 5b whose operating time can be flexibly adjusted, and at least one third energy-consuming device (power-flexible appliance) 5c whose operating power can be flexibly adjusted. For a typical user, for example, a desktop computer and a refrigerator are first energy-consuming devices 5a, a washing machine is a second energy-consuming device 5b, and an air conditioner is a third energy-consuming device 5c. Further, equations (a), (b) and (c) express the energy consumption over a whole day of the three kinds of energy-consuming devices, respectively.

As in equations (1), (2) and (3), n denotes the index of an energy-consuming device and N the maximum number of devices; h denotes the time interval and H its maximum value (e.g., 24). Further, mathematical expressions for the user's dissatisfaction with the energy-consuming devices can be designed on the basis of device energy consumption, as given by equations (b1) and (c1).

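Depending on the kind of energy-consuming device (5a, 5b, 5c), when the user enters the device operation requirements, the user interface 11 guides the user to input the requested time range, the operation duration, the operation power, the maximum operating power, and the minimum operating power. Equation (b1) gives the user's dissatisfaction with the second energy-consuming device 5b, and equation (c1) gives the user's dissatisfaction with the third energy-consuming device 5c. Because the first energy-consuming device 5a has no adjustable operation, the energy management device 1 does not need to apply time-shared control to its operating mode, and no user dissatisfaction arises for it. More specifically, one dissatisfaction parameter is associated with the second energy-consuming device 5b and another with the third energy-consuming device 5c, and both parameters take values between 0 and 1. The equations also use the time at which the energy management device 1 starts the second energy-consuming device 5b and the time at which the device operation requirement entered by the user was received. Because the third energy-consuming device 5c has adjustable operating power, the equations also use the maximum operating power (i.e., energy consumption) entered by the user.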

Once the user dissatisfaction has been calculated with equations (b1) and (c1), the reward values used in equations (1) and (2) can be calculated. For the second energy-consuming device 5b, the reward values used in equations (1) and (2) are obtained from equations (1-1) and (1-2).

Equations (1-1) and (1-2) use the real-time pricing (RTP) tariff of the current time interval, the energy consumption of the second energy-consuming device in the current time interval, and the user dissatisfaction corresponding to the operation schedule of the second energy-consuming device.
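Equations (b1), (1-1) and (1-2) are not reproduced in this text. The sketch below shows one plausible shape for them, assuming that dissatisfaction grows linearly with the delay between the time the request was received and the actual start, that the cost reward is the negative of price times energy, and that the satisfaction reward is the negative of the dissatisfaction. These forms, and the names `delay_dissatisfaction`, `cost_reward`, and `satisfaction_reward`, are assumptions made for illustration only.

```python
def delay_dissatisfaction(k: float, start_slot: int, requested_slot: int) -> float:
    """Assumed dissatisfaction for a time-flexible device: k times the delay.

    k is the dissatisfaction parameter, which the text places between 0 and 1.
    """
    if not 0.0 <= k <= 1.0:
        raise ValueError("dissatisfaction parameter must lie in [0, 1]")
    if start_slot < requested_slot:
        raise ValueError("device cannot start before the request is received")
    return k * (start_slot - requested_slot)


def cost_reward(price_per_kwh: float, energy_kwh: float) -> float:
    """Assumed cost reward: paying more in a slot yields a lower reward."""
    return -price_per_kwh * energy_kwh


def satisfaction_reward(dissatisfaction: float) -> float:
    """Assumed satisfaction reward: more dissatisfaction yields a lower reward."""
    return -dissatisfaction
```

With these forms, delaying a washing machine to a cheaper slot raises the cost reward and lowers the satisfaction reward, which is the trade-off the two Q-tables are meant to capture.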

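Referring again to FIG. 1 and FIG. 2, the second reinforcement learning unit 14, under the control of the control unit 10, performs a second reinforcement learning operation based on the energy storage device information and the electricity price information, thereby obtaining a charge/discharge operation schedule containing a charge control parameter and a discharge control parameter, so that the control unit 10 controls the energy storage device 3 to perform time-shared operation according to the charge/discharge operation schedule. The second reinforcement learning unit 14 completes the second reinforcement learning operation using equations (4) and (5).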

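In equations (4) and (5), the Q terms are Q-values, that is, values generated from the state and action of the energy storage device 3. The reward value relates to the action taken by the energy storage device 3; the states are those of the energy storage device 3 in the current time interval and in the next time interval; the actions are the optimal action of the energy storage device 3 in the current time interval and its action in the current time interval; a is an action executed by the energy storage device 3; and the action set is that of the energy storage device 3.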

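For the energy storage device 3, the reward value used in equation (4) is obtained from equation (4-1), which uses the charge/discharge power of the battery. A battery management device 4 is usually provided between the renewable energy device 2 (e.g., a PV device) and the energy storage device 3 (e.g., a battery) to manage the charging of the battery by the renewable energy device 2 and the supply of power from the energy storage device 3 to the various devices. After the charge/discharge operation schedule has been generated, the energy management device 1 therefore controls the energy storage device 3, through the battery management device 4, to perform time-shared operation according to the schedule, that is, to charge and/or discharge within the requested time range. In general, to minimize the electricity cost, the energy management device 1 has the battery charge during intervals when the real-time price is low and supply power to the various devices during intervals when the real-time price is relatively high.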
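Equations (4), (5) and (4-1) are not reproduced in this text. As a rough illustration of how a charge/discharge schedule could be learned, the sketch below runs tabular Q-learning on a toy battery. The state (time slot, state of charge), the three actions, the reward of negative price times charged energy, the ideal lossless battery, and all function names and hyperparameters are assumptions made for this example.

```python
import random

ACTIONS = (-1, 0, 1)  # discharge, idle, charge: one energy unit per slot


def step(soc, action, price, capacity):
    """Apply an action; infeasible moves fall back to idle.

    Returns (next_soc, reward). Charging costs money, discharging offsets it.
    """
    next_soc = soc + action
    if not 0 <= next_soc <= capacity:
        next_soc, action = soc, 0
    return next_soc, -price * action


def train_battery_schedule(prices, capacity=2, episodes=2000,
                           alpha=0.1, gamma=0.95, epsilon=0.2, seed=0):
    """Learn a greedy charge/discharge schedule for one day of slot prices."""
    rng = random.Random(seed)
    horizon = len(prices)
    # Q-table over (slot, state of charge, action); slot == horizon is terminal.
    q = {(h, s, a): 0.0
         for h in range(horizon + 1)
         for s in range(capacity + 1)
         for a in ACTIONS}
    for _ in range(episodes):
        soc = 0
        for h in range(horizon):
            if rng.random() < epsilon:
                a = rng.choice(ACTIONS)
            else:
                a = max(ACTIONS, key=lambda x: q[(h, soc, x)])
            nxt, r = step(soc, a, prices[h], capacity)
            best_next = max(q[(h + 1, nxt, x)] for x in ACTIONS)
            q[(h, soc, a)] += alpha * (r + gamma * best_next - q[(h, soc, a)])
            soc = nxt
    # Roll out the greedy policy and record the actions actually carried out.
    schedule, soc = [], 0
    for h in range(horizon):
        a = max(ACTIONS, key=lambda x: q[(h, soc, x)])
        nxt, _ = step(soc, a, prices[h], capacity)
        schedule.append(nxt - soc)
        soc = nxt
    return schedule
```

Calling `train_battery_schedule([1.0, 5.0])` trains on a two-slot day with a cheap slot followed by an expensive one; the learned schedule should charge in the first slot and discharge in the second, matching the behavior described above.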

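The present invention also proposes an energy management method using multi-objective reinforcement learning. As shown in FIG. 1 and FIG. 2, the method is implemented by an energy management device 1 coupled between at least one renewable energy device 2, an energy storage device 3, and a plurality of energy-consuming devices. FIG. 3 shows a flowchart of the method. As shown in FIG. 1, FIG. 2 and FIG. 3, the method first executes step S1: receiving a plurality of device operation requirements input by the user, and obtaining at least one piece of energy storage device state information and electricity price information. As described above, the information providing unit 12 is, for example, a data transmission/reception interface that obtains, through the battery management device 4, the state information of the energy storage device 3 (e.g., a battery), including battery capacity, battery charging efficiency, battery discharging efficiency, and a leakage parameter, and passes this state information to the control unit 10. The information providing unit 12 can also obtain a real-time pricing (RTP) table from the power company and thereby provide electricity price information to the control unit 10.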

As shown in FIG. 1, FIG. 2 and FIG. 3, the method then executes step S2: performing a first reinforcement learning operation based on the plurality of device operation requirements and the electricity price information to obtain an energy-consuming device operation schedule containing a plurality of device control parameters, and performing a second reinforcement learning operation based on the energy storage device information and the electricity price information to obtain a charge/discharge operation schedule containing a charge control parameter and a discharge control parameter. As described above, the first reinforcement learning unit 13 completes the first reinforcement learning operation using equations (1), (2) and (3), and the second reinforcement learning unit 14 completes the second reinforcement learning operation using equations (4) and (5).

Finally, the method executes step S3: controlling the plurality of energy-consuming devices (5a, 5b, 5c) to perform time-shared operation according to the energy-consuming device operation schedule, and controlling the energy storage device 3 to perform time-shared operation according to the charge/discharge operation schedule, so that the user's satisfaction with electricity use is maximized while the electricity cost is minimized.

The above is a complete and clear description of an energy management device using multi-objective reinforcement learning according to the present invention. It must be emphasized, however, that what is disclosed above is a preferred embodiment; any partial change or modification that derives from the technical idea of the present case and can readily be inferred by those skilled in the art falls within the scope of the patent rights of the present case.

- 1: Energy management device
- 10: Control unit
- 11: User interface
- 12: Information providing unit
- 13: First reinforcement learning unit
- 14: Second reinforcement learning unit
- 2: Renewable energy device
- 3: Energy storage device
- 4: Battery management device
- 5a: First energy-consuming device
- 5b: Second energy-consuming device
- 5c: Third energy-consuming device
- S1-S3: Steps

FIG. 1 is a perspective view of an energy management system using multi-objective reinforcement learning according to the present invention; FIG. 2 is a block diagram of the energy management system using multi-objective reinforcement learning according to the present invention; and FIG. 3 is a flowchart of an energy management method using multi-objective reinforcement learning according to the present invention.

- 1: Energy management device
- 2: Renewable energy device
- 3: Energy storage device
- 5a: First energy-consuming device
- 5b: Second energy-consuming device
- 5c: Third energy-consuming device

Claims (18)

Priority Applications (1)

| Application Number | Priority Date | Filing Date | Title |
|---|---|---|---|
| TW109146801A TWI762128B (en) | 2020-12-30 | 2020-12-30 | Device and method of energy management using multi-object reinforcement learning |

Publications (2)

| Publication Number | Publication Date |
|---|---|
| TWI762128B (en) | 2022-04-21 |
| TW202226700A | 2022-07-01 |

Family

ID=82198904

Country Status (1)

| Country | Link |
|---|---|
| TW (1) | TWI762128B (en) |

Citations (5)

| Publication number | Priority date | Publication date | Assignee | Title |
|---|---|---|---|---|
| US20090063228A1 (en) * | 2007-08-28 | 2009-03-05 | Forbes Jr Joseph W | Method and apparatus for providing a virtual electric utility |
| TW201102945A (en) * | 2009-07-01 | 2011-01-16 | Chunghwa Telecom Co Ltd | Expense-saving type energy-saving management system and method |
| CN103117568A (en) * | 2011-11-16 | 2013-05-22 | 财团法人工业技术研究院 | Charging system and electric vehicle management method |
| TW201411978A (en) * | 2012-09-14 | 2014-03-16 | Atomic Energy Council | Apparatus of controlling loads in high-performance micro-grid |
| TW201615454A (en) * | 2014-10-21 | 2016-05-01 | 行政院原子能委員會核能研究所 | Device of optimizing schedule of charging electric vehicle |

- 2020-12-30: TW application TW109146801A, patent TWI762128B (en), status active

Also Published As

| Publication number | Publication date |
|---|---|
| TW202226700A (en) | 2022-07-01 |
