EP4564347A1 - Audiosystem und -verfahren - Google Patents

Audiosystem und -verfahren Download PDFInfo

- Publication number

- EP4564347A1 EP4564347A1 EP23212578.1A EP23212578A EP4564347A1 EP 4564347 A1 EP4564347 A1 EP 4564347A1 EP 23212578 A EP23212578 A EP 23212578A EP 4564347 A1 EP4564347 A1 EP 4564347A1

- Authority

- EP

- European Patent Office

- Prior art keywords

- reverberation

- audio

- input signals

- audio input

- frames

- Prior art date

- Legal status (The legal status is an assumption and is not a legal conclusion. Google has not performed a legal analysis and makes no representation as to the accuracy of the status listed.)

- Pending

Links

Images

Classifications

-

- H—ELECTRICITY

- H04—ELECTRIC COMMUNICATION TECHNIQUE

- H04S—STEREOPHONIC SYSTEMS

- H04S7/00—Indicating arrangements; Control arrangements, e.g. balance control

- H04S7/30—Control circuits for electronic adaptation of the sound field

-

- H—ELECTRICITY

- H04—ELECTRIC COMMUNICATION TECHNIQUE

- H04R—LOUDSPEAKERS, MICROPHONES, GRAMOPHONE PICK-UPS OR LIKE ACOUSTIC ELECTROMECHANICAL TRANSDUCERS; ELECTRIC HEARING AIDS; PUBLIC ADDRESS SYSTEMS

- H04R3/00—Circuits for transducers

-

- G—PHYSICS

- G10—MUSICAL INSTRUMENTS; ACOUSTICS

- G10K—SOUND-PRODUCING DEVICES; METHODS OR DEVICES FOR PROTECTING AGAINST, OR FOR DAMPING, NOISE OR OTHER ACOUSTIC WAVES IN GENERAL; ACOUSTICS NOT OTHERWISE PROVIDED FOR

- G10K15/00—Acoustics not otherwise provided for

- G10K15/08—Arrangements for producing a reverberation or echo sound

-

- H—ELECTRICITY

- H04—ELECTRIC COMMUNICATION TECHNIQUE

- H04R—LOUDSPEAKERS, MICROPHONES, GRAMOPHONE PICK-UPS OR LIKE ACOUSTIC ELECTROMECHANICAL TRANSDUCERS; ELECTRIC HEARING AIDS; PUBLIC ADDRESS SYSTEMS

- H04R2430/00—Signal processing covered by H04R, not provided for in its groups

-

- H—ELECTRICITY

- H04—ELECTRIC COMMUNICATION TECHNIQUE

- H04S—STEREOPHONIC SYSTEMS

- H04S5/00—Pseudo-stereo systems, e.g. in which additional channel signals are derived from monophonic signals by means of phase shifting, time delay or reverberation

- H04S5/005—Pseudo-stereo systems, e.g. in which additional channel signals are derived from monophonic signals by means of phase shifting, time delay or reverberation of the pseudo five- or more-channel type, e.g. virtual surround

Definitions

- the disclosure relates to an audio system and related method, in particular an audio system and method for adding reverberation to an audio signal.

- an audio signal with surround or 3D information By extending an audio signal with surround or 3D information, e.g. adding a reverberation effect to an audio signal that matches a reverberation already existent in the audio signal, thereby simulating a certain listening environment, the listening experience of a user to whom the audio signal is presented can be significantly increased.

- An audio signal may be expanded, e.g., in the context of an upmixing process, by adding a reverberation which matches the original audio signal, or by creating additional reverberation channels or signals which match the existing audio signal.

- Acoustically simulating a concert hall, or any other kind of listening space by suitably adding and reproducing a matching reverberation, however, can be challenging.

- the resulting audio signal may comprise unwanted artifacts, may not be satisfying to listen to, and high computational load may be required to generate an extended audio signal.

- An audio system includes a processing unit, and a reverb classification unit, wherein the reverb classification unit is configured to receive a first plurality of audio input signals, estimate a class of reverberation suitable for the first plurality of audio input signals by means of a deep learning, DL, classification algorithm, and output a prediction to the processing unit, the prediction including information concerning the estimated class of reverberation, and the processing unit is configured to receive the first plurality of audio input signals, generate a second plurality of audio output signals based on the first plurality of audio input signals, and output the second plurality of audio output signals, wherein generating the second plurality of audio output signals includes adding reverberation to at least one of the second plurality of audio output signals based on the prediction received from the reverb classification unit.

- a method includes estimating a class of reverberation suitable for a first plurality of audio input signals by means of a deep learning, DL, classification algorithm, and make a prediction including information concerning the estimated class of reverberation, generating a second plurality of audio output signals based on the first plurality of audio input signals, wherein generating the second plurality of audio output signals includes adding reverberation to at least one of the second plurality of audio output signals based on the prediction, and outputting the second plurality of audio output signals.

- the audio system and related method according to the various embodiments described herein allow to simulate different listening environments by adding reverberation to an audio signal. Only comparably little computational load is required, and the resulting audio signal is highly satisfying to listen to, as it comprises only few or even no artifacts. Instead of "blindly" processing an audio signal as is done in conventional audio systems, the audio system and method disclosed herein preform an "informed" processing on an audio signal.

- the listening experience for a user listening to the audio signal can be significantly increased.

- multi-channel playback provides the possibility of providing the impression to the user that they are located at a certain location or event, while listening to a musical piece.

- Additional ambience playback can create an envelopment that can be compared to the experience of being at a live event. For example, if a center signal of a multi-channel audio signal is extracted and added to a centrally positioned speaker, an optimum listening area (so-called sweet spot) can be enlarged and the stability of the front image can be significantly improved.

- Using 3D speakers the feeling of a realistic immersion into an audio event can be improved further. There is even the possibility of lifting the stage and playing back overhead effects.

- the perception of the sound scene is often modeled as a combination of the foreground sound and the background sound, which are often also referred to as primary (or direct) and ambient (or diffuse) components, respectively.

- the primary components consist of point-like directional sound sources, whereas the ambient components are generally made up of diffuse environmental sound (reverberation). Due to perceptual differences between the primary components and the ambient components, different rendering schemes are generally applied to the primary components and the ambient components for optimal spatial audio reproduction of sound scenes.

- Channel-based audio only provides mixed signals. Some approaches, therefore, focus on extracting the primary components and the ambient components from the mixed signals.

- Known methods which may include, e.g., ambience estimation in the frequency domain, often require a large computational load, and the resulting audio signal often is not satisfying to listen to, as it comprises a significant amount of artifacts.

- the audio system and related method disclosed herein in contrast to conventional methods, use artificial intelligence (deep learning, DL) algorithms in order to classify the spatial component of an existing audio signal, and further use this information in order to create artificial reverberation that matches the original reverberation. That is, instead of extracting the ambient components from the mixed signal, an environment in which a musical piece might have been recorded is estimated by means of artificial intelligence.

- a musical piece generally includes a specific class of reverberation.

- the reverberation included in the musical piece generally has certain characteristics that are typical for the specific class of reverberation, e.g., long reverb tail, specific room modes, early reflections, etc.

- reverberation may be added to the audio signal that matches the reverberation included in the musical piece. That is, based on an estimated class of reverberation, for example, a matching artificial reverberation is added to the audio signal.

- the audio system comprises a processing unit 100, and a reverb classification unit 200.

- the reverb classification unit 200 is configured to receive a first plurality of audio input signals IN 1 , ..., IN N , estimate a class of reverberation suitable for the first plurality of audio input signals IN 1 , ..., IN N by means of a deep learning, DL, classification algorithm, and output a prediction P1 to the processing unit 100, the prediction P1 including information concerning the estimated class of reverberation.

- the processing unit 100 is configured to receive the first plurality of audio input signals IN 1 , ..., IN N , generate a second plurality of audio output signals OUT 1 , ..., OUT M based on the first plurality of audio input signals IN 1 , ..., IN N , and output the second plurality of audio output signals OUT 1 , ..., OUT M , wherein generating the second plurality of audio output signals OUT 1 , ..., OUT M comprises adding reverberation to at least one of the second plurality of audio output signals OUT 1 , ..., OUT M based on the prediction P1 received from the reverb classification unit 200.

- the first plurality of audio input signals IN 1 , ..., IN N may be two channels (e.g., left (L) and right (R) channel) of a stereo audio signal.

- the second plurality of audio output signals OUT 1 , ..., OUT M may be the different channels (e.g., front left (FL) channel, front right (FR) channel, center (C) channel, surround left (LS) channel, surround right (RS) channel) of an upmixed 5.1 surround signal.

- Reverberation may be added to each of a plurality of audio output signals OUT 1 , ..., OUT M , or only to some of a plurality of audio output signals OUT 1 , ..., OUT M .

- reverberation may only be added to the surround left (LS) and surround right (RS) channels of an upmixed 5.1 surround signal, to name just one example.

- the reverb classification unit 200 is configured to estimate the class of reverberation suitable for the first plurality of audio input signals IN 1 , ..., IN N (a class of reverberation that matches the first plurality of audio input signals IN 1 , ..., IN N ).

- estimating a class of reverberation suitable for the first plurality of audio input signals IN 1 , ..., IN N comprises separating the first plurality of audio input signals IN 1 , ..., IN N into a plurality of successive separate frames, extracting one or more features from each of the separate frames, each of the one or more features being characteristic for one of a plurality of types of listening environments, identifying a specific pattern in each of the separate frames by means of the extracted features, and estimating a class of reverberation suitable for each of the separate frames based on the identified specific pattern.

- the length of each frame may influence the accuracy of the deep learning, DL, classification algorithm and may be chosen in any suitable way.

- the reverb classification unit 200 may comprise a receiving unit 202 configured to receive the first plurality of audio input signals IN 1 , ..., IN N .

- the reverb classification unit 200 may further comprise a pre-processing unit 204.

- the pre-processing unit 204 may be configured to, in a first step, pre-process the input audio signal according to the requirements of the deep learning, DL, classification algorithm.

- the pre-processing unit 204 may re-sample the audio input signals to a target sampling rate and slice the audio input signals into frames of a defined length. The length of each frame may correspond to a desired temporal window for the output predictions.

- the reverb classification unit 200 may further comprise a feature extraction unit 206 that is configured to transform the separate signal frames into a signal representation that allows the classification algorithm to more easily identify patterns in the frames, the patterns being related to an amount of reverberation present in the respective frame of the audio input signal.

- a feature extraction unit 206 that is configured to transform the separate signal frames into a signal representation that allows the classification algorithm to more easily identify patterns in the frames, the patterns being related to an amount of reverberation present in the respective frame of the audio input signal.

- Suitable signal representations may include time-frequency representations such as, e.g., log-frequency spectrograms, for example. This is schematically illustrated in Figure 3. Figure 3 schematically illustrates that a log-frequency spectrogram may be generated for each of the different frames the audio input signal has been sliced into.

- a (log-frequency) spectrogram is a standard sound visualization tool, which allows to visualize the distribution of energy in both time and frequency.

- a log-frequency spectrogram is simply an image formed by the magnitude of the short-time Fourier transform, on a log-intensity axis (e.g., dB).

- the transformed audio input signal frames may then be processed in batches in a classification unit 208, by means of the classification algorithm. This results in a prediction of a suitable reverberation class for each of the audio input signal segments (frames).

- the reverb classification unit 200 may further comprise a prediction unit 210 that is configured to make and output the prediction P1 to the processing unit 100.

- the reverb classification unit 200 may be configured to make a global prediction P1 based on the estimated classes of reverberation in a defined plurality of separate successive frames.

- the defined plurality of separate successive frames may constitute a musical piece, and the global prediction P1 may include information concerning the estimated class of reverberation suitable for the entire musical piece. That is, a musical piece may be separated into a plurality of separate frames.

- a suitable reverberation may be determined for each of the plurality of separate frames. For example, a suitable reverberation may either be "high reverberation" or "low reverberation".

- high reverberation may be added to the entire musical piece, or vice versa.

- High reverberation and “low reverberation”, however, are merely examples.

- Other classes of reverberation may include, but are not limited to, e.g., "large/medium/small jazz hall”, “large/medium/small living room”, “wooden large/medium/small concert hall”, etc.

- Other even more generic classes of reverberation may include "Hall 1", “Hall 2", “Hall 3", etc.

- a sub-prediction P1 is made based on the estimated classes of reverberation in a sub-set of a defined plurality of separate successive frames.

- the defined plurality of separate successive frames may constitute a musical piece, and the sub-prediction P1 may include information concerning the estimated class of reverberation suitable for a fraction of the musical piece. That is, a musical piece may be separated into a plurality of separate frames.

- a suitable reverberation may be determined for each of the plurality of separate frames. For example, a suitable reverberation may either be "high reverberation" or "low reverberation".

- a different reverberation may be added to each of the different frames of the audio input signal based on the respective predictions. It is, however, also possible to make a combined prediction for several of the separate frames, but not to all.

- a suitable reverberation may be determined for each of the plurality of frames, resulting in the predictions illustrated on the right side of Figure 3 .

- Several frames e.g., frames 1 to 5 (or any other number of frames) may then be combined to a group of frames and a sub-prediction P1 may be determined and output for this group of frames. If, in the example illustrated in Figure 3 , frames 1 to 5 are combined, the result "high reverberation" is predominant. That is, the sub-prediction P1 "high reverberation" may be output to the processing unit 100, which may then add "high reverberation" to respective frames 1 to 5.

- a musical piece may comprise more than frames 1 to 5. That is, different reverberation may be added to different segments of a musical piece, each segment comprising more than one frame. This allows to add reverberation to a musical piece in a very accurate way, resulting in a very satisfying listening experience.

- the deep learning, DL, classification algorithm may be based on a deep learning, DL, model, wherein the DL model is trained using annotated data consisting of audio signals with different known grades of reverberation.

- the DL model may learn hierarchical representations from input samples, for example. In order to be able to predict reverberation classes with a high accuracy, it may be trained with annotated data consisting of audio signals with different known grades of reverberation. The grades of reverberation may be perceptually measured, for example.

- One or more different databases may generally be used for this purpose. The one or more databases may be obtained in any suitable way.

- the audio systems described herein are able to directly classify an amount of reverberation present in an audio input signal, which is directly aligned with the perceptual measure for reverberation.

- the audio signal is highly flexible concerning the amount of reverberation classes that can be estimated.

- two reverberation classes e.g., "high reverberation” and "low reverberation” may be estimated.

- three reverberation classes e.g., "high reverberation”, “mid reverberation”, and “low reverberation” may be estimated, wherein “mid reverberation” is a reverberation that is less than "high reverberation” and greater than "low reverberation”. Any other intermediate reverberation classes between "high reverberation” and “low reverberation” may generally be estimated as well.

- reverberation may include, but are not limited to, e.g., "large/medium/small jazz hall”, “large/medium/small living room”, “wooden large/medium/small concert hall”, “Hall 1", “Hall 2”, “Hall 3”, etc.

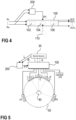

- the audio systems described above may be surround sound systems, or any kind of 3D audio systems (e.g., VR/AR applications), for example. That is, the number of audio input signals included in the first plurality of audio input signals IN 1 , ..., IN N may equal the number of audio signals included in the second plurality of audio output signals OUT 1 , ..., OUT M , as is illustrated in Figure 1 . It is, however, also possible that the number of audio input signals included in the first plurality of audio input signals IN 1 , ..., IN N is less than the number of audio signals included in the second plurality of audio output signals OUT 1 , ..., OUT M , as is schematically illustrated in Figures 4 and 5 (N ⁇ M). That is, the processing unit 100 may be or may comprise an upmixing processor 102, as is exemplarily illustrated in Figure 4 .

- a surround sound system is schematically illustrated in Figure 5 .

- the surround sound system comprises a stereo source 30.

- the processing unit 100 may receive a first plurality of audio input signals IN 1 , ..., IN N from the stereo source 30, e.g., two channels (left (L) and right (R) channel) of a stereo audio signal.

- the second plurality of audio output signals OUT 1 , ..., OUT M may be the different channels (e.g., front left (L') channel, front right (R') channel, center (C) channel, surround left (LS) channel, surround righ (RS) channel) of an upmixed 5.1 surround signal.

- a plurality of loudspeakers may be arranged in a listening environment 50.

- the loudspeakers may be arranged at suitable positions with respect to a listener 40 present in the listening environment 50.

- the different channels of the audio output signal OUT may be fed to the respective loudspeakers.

- a surround sound system as exemplarily illustrated in Figures 4 and 5 , may generate artificial spatiality (ambience) in order to achieve an acoustic envelopment of the listener 40 in the listening environment 50.

- the output signals generated by the surround sound system may be routed to the surround and height channels of a multi-channel speaker system.

- the reverberation added to the one or more audio output signals OUT 1 , ..., OUT M may be generated based on the prediction P1, e.g., by means of a reverberation engine 104, as exemplarily illustrated in Figure 4 .

- the spatial information (prediction P1) obtained and provided by the reverb classification unit 200 may be used to adjust parameters of an artificial reverb generator algorithm (reverb engine 104). That is, for different predictions P1 received from the reverb classification unit 200, a reverb engine 104 may apply different parameters when generating artificial reverberation.

- the artificially generated reverberation may be then fed to a distribution block 106 of the processing unit 100.

- the distribution block 106 may route the reverberation ("ambience" signal component) to the desired speakers (e.g., the surround and/or height speakers of a surround sound system) according to a desired upmixing setting.

- the processing unit 100 comprises or is coupled to a memory 110, wherein different types of reverberation are stored in the memory 110.

- the processing unit 100 i.e. a reverberation engine 104 of the processing unit 100, based on the prediction P1, can retrieve a suitable reverberation from the memory 110 and add it to the one or more audio output signals OUT 1 , ..., OUT M accordingly.

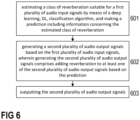

- a method comprises estimating a class of reverberation suitable for a first plurality of audio input signals IN 1 , ..., IN N by means of a deep learning, DL, classification algorithm, and make a prediction P1 including information concerning the estimated class of reverberation (step 601), and generating a second plurality of audio output signals OUT 1 , ..., OUT M based on the first plurality of audio input signals IN 1 , ..., IN N , wherein generating the second plurality of audio output signals OUT 1 , ..., OUT M comprises adding reverberation to at least one of the second plurality of audio output signals OUT 1 , ..., OUT M based on the prediction P1 (step 602).

- the method further comprises outputting the second plurality of audio output signals OUT 1 , ..., OUT M (step 603).

- estimating a class of reverberation suitable for the first plurality of audio input signals IN 1 , ..., IN N may comprise separating the first plurality of audio input signals IN 1 , ..., IN N into a plurality of successive separate frames, extracting one or more features from each of the separate frames, each of the one or more features being characteristic for one of a plurality of types of listening environments, identifying a specific pattern in each of the separate frames by means of the extracted features, and estimating a class of reverberation suitable for each of the separate frames based on the identified specific pattern.

- the method may further comprise, after separating the first plurality of audio input signals IN 1 , ..., IN N into a plurality of successive separate frames and before extracting one or more features from each of the separate frames, transforming the separate signal frames into a log-frequency spectrogram.

- the artificially generated spatiality matches the (possibly) existing spatiality present in the original audio signal (first plurality of audio input signals IN 1 , ..., IN N ). Ideally, it has the same room acoustic properties.

- Classic ambience extraction methods that may be used to extract the original ambience signal from an input signal are algorithmically complex and cause perceptually relevant artefacts.

- a multiplication and distribution of an extracted ambience signal to the speaker channels of a multi-channel system further increases the perceived artefacts.

- the audio system described above overcomes these drawbacks.

- the reverberation class information of the ambience portion of the original signal is used to control or configure an algorithm that artificially generates the output ambience signals.

- the manner in which the artificial ambience component is created is generally not relevant.

- the artificial ambience component may generally be created in any suitable way. Any type of reverb method can generally be used, e.g., Feedback Delay Networks (FDNs), convolution reverb, etc.

- FDNs Feedback Delay Networks

- the number and semantic properties of the reverb classes are adjusted, and the deep learning, DL, classification network may be trained accordingly.

- the classes may be defined based on perceptually relevant characteristics (e.g., reverberation length, density of reflections, early reflection patterns, dry/wet ratio, decay rate, spectral behavior, etc.), and the artificial reverberation algorithm may be configured accordingly. For example, if the DL network was trained to estimate reverberation lengths in the input signal, the output predictions can be used to set the same parameter in an artificial reverb engine.

Landscapes

- Physics & Mathematics (AREA)

- Engineering & Computer Science (AREA)

- Acoustics & Sound (AREA)

- Multimedia (AREA)

- Signal Processing (AREA)

- Stereophonic System (AREA)

Priority Applications (3)

| Application Number | Priority Date | Filing Date | Title |

|---|---|---|---|

| EP23212578.1A EP4564347A1 (de) | 2023-11-28 | 2023-11-28 | Audiosystem und -verfahren |

| CN202411626001.7A CN120075696A (zh) | 2023-11-28 | 2024-11-14 | 音频系统和方法 |

| US18/957,031 US20250174221A1 (en) | 2023-11-28 | 2024-11-22 | Audio system and method |

Applications Claiming Priority (1)

| Application Number | Priority Date | Filing Date | Title |

|---|---|---|---|

| EP23212578.1A EP4564347A1 (de) | 2023-11-28 | 2023-11-28 | Audiosystem und -verfahren |

Publications (1)

| Publication Number | Publication Date |

|---|---|

| EP4564347A1 true EP4564347A1 (de) | 2025-06-04 |

Family

ID=88978278

Family Applications (1)

| Application Number | Title | Priority Date | Filing Date |

|---|---|---|---|

| EP23212578.1A Pending EP4564347A1 (de) | 2023-11-28 | 2023-11-28 | Audiosystem und -verfahren |

Country Status (3)

| Country | Link |

|---|---|

| US (1) | US20250174221A1 (de) |

| EP (1) | EP4564347A1 (de) |

| CN (1) | CN120075696A (de) |

Citations (2)

| Publication number | Priority date | Publication date | Assignee | Title |

|---|---|---|---|---|

| WO2019229199A1 (en) * | 2018-06-01 | 2019-12-05 | Sony Corporation | Adaptive remixing of audio content |

| US20210160617A1 (en) * | 2019-11-27 | 2021-05-27 | Roku, Inc. | Sound generation with adaptive directivity |

-

2023

- 2023-11-28 EP EP23212578.1A patent/EP4564347A1/de active Pending

-

2024

- 2024-11-14 CN CN202411626001.7A patent/CN120075696A/zh active Pending

- 2024-11-22 US US18/957,031 patent/US20250174221A1/en active Pending

Patent Citations (2)

| Publication number | Priority date | Publication date | Assignee | Title |

|---|---|---|---|---|

| WO2019229199A1 (en) * | 2018-06-01 | 2019-12-05 | Sony Corporation | Adaptive remixing of audio content |

| US20210160617A1 (en) * | 2019-11-27 | 2021-05-27 | Roku, Inc. | Sound generation with adaptive directivity |

Non-Patent Citations (2)

| Title |

|---|

| ANONYMOUS: "Get started with smart:reverb - sonible", 10 August 2020 (2020-08-10), pages 1 - 6, XP093155376, Retrieved from the Internet <URL:https://www.sonible.com/blog/smartreverb-intro-tutorial/> [retrieved on 20240424] * |

| SAFAVI SAEID ET AL: "Predicting the perceived level of reverberation using machine learning", 2018 52ND ASILOMAR CONFERENCE ON SIGNALS, SYSTEMS, AND COMPUTERS, IEEE, 28 October 2018 (2018-10-28), pages 27 - 30, XP033520980, DOI: 10.1109/ACSSC.2018.8645201 * |

Also Published As

| Publication number | Publication date |

|---|---|

| US20250174221A1 (en) | 2025-05-29 |

| CN120075696A (zh) | 2025-05-30 |

Similar Documents

| Publication | Publication Date | Title |

|---|---|---|

| JP5149968B2 (ja) | スピーチ信号処理を含むマルチチャンネル信号を生成するための装置および方法 | |

| CN112205006B (zh) | 音频内容的自适应再混合 | |

| US10645518B2 (en) | Distributed audio capture and mixing | |

| CN103402169B (zh) | 用于提取和改变音频输入信号的混响内容的方法和装置 | |

| RU2650026C2 (ru) | Устройство и способ для многоканального прямого-окружающего разложения для обработки звукового сигнала | |

| JP5957446B2 (ja) | 音響処理システム及び方法 | |

| KR101764175B1 (ko) | 입체 음향 재생 방법 및 장치 | |

| JP2023517720A (ja) | 残響のレンダリング | |

| KR101646540B1 (ko) | 오디오 신호를 변환하는 컨버터 및 방법 | |

| EP2649814A1 (de) | Vorrichtung und verfahren zur dekomposition eines eingabesignals mit einem abwärtsmischer | |

| US12363494B2 (en) | Signal processing apparatus and method | |

| CN114631142B (zh) | 电子设备、方法和计算机程序 | |

| CN115226022B (zh) | 基于内容的空间再混合 | |

| Manocha et al. | SAQAM: Spatial audio quality assessment metric | |

| EP2946573B1 (de) | Audiosignalverarbeitungsvorrichtung | |

| RU2768974C2 (ru) | Процессор аудиосигнала, система и способы распределения окружающего сигнала по множеству каналов окружающего сигнала | |

| Ibrahim et al. | Primary-ambient source separation for upmixing to surround sound systems | |

| US12014710B2 (en) | Device, method and computer program for blind source separation and remixing | |

| Hsu et al. | Model-matching principle applied to the design of an array-based all-neural binaural rendering system for audio telepresence | |

| EP4564347A1 (de) | Audiosystem und -verfahren | |

| Pawlak et al. | Spatial segmentation of an early part of the room impulse response into prominent sound events | |

| Negru et al. | Automatic audio upmixing based on source separation and ambient extraction algorithms | |

| JP2001236084A (ja) | 音響信号処理装置及びそれに用いられる信号分離装置 | |

| Erruz | Binaural Source Separation with Convolutional Neural Networks | |

| WO2025186171A1 (en) | Method for processing audio and audio processing system |

Legal Events

| Date | Code | Title | Description |

|---|---|---|---|

| PUAI | Public reference made under article 153(3) epc to a published international application that has entered the european phase |

Free format text: ORIGINAL CODE: 0009012 |

|

| STAA | Information on the status of an ep patent application or granted ep patent |

Free format text: STATUS: THE APPLICATION HAS BEEN PUBLISHED |

|

| AK | Designated contracting states |

Kind code of ref document: A1 Designated state(s): AL AT BE BG CH CY CZ DE DK EE ES FI FR GB GR HR HU IE IS IT LI LT LU LV MC ME MK MT NL NO PL PT RO RS SE SI SK SM TR |

|

| STAA | Information on the status of an ep patent application or granted ep patent |

Free format text: STATUS: REQUEST FOR EXAMINATION WAS MADE |

|

| 17P | Request for examination filed |

Effective date: 20251118 |