EP3034005A1 - Method, apparatus and system for generating body marker indicating object - Google Patents

- Publication number

- EP3034005A1 (application EP15163220.5A)

- Authority

- EP

- European Patent Office

- Prior art keywords

- body marker

- marker

- controller

- image

- user input

- Prior art date

- Legal status (The legal status is an assumption and is not a legal conclusion. Google has not performed a legal analysis and makes no representation as to the accuracy of the status listed.)

- Granted

Classifications

-

- G—PHYSICS

- G06—COMPUTING; CALCULATING OR COUNTING

- G06F—ELECTRIC DIGITAL DATA PROCESSING

- G06F3/00—Input arrangements for transferring data to be processed into a form capable of being handled by the computer; Output arrangements for transferring data from processing unit to output unit, e.g. interface arrangements

- G06F3/01—Input arrangements or combined input and output arrangements for interaction between user and computer

- G06F3/048—Interaction techniques based on graphical user interfaces [GUI]

- G06F3/0484—Interaction techniques based on graphical user interfaces [GUI] for the control of specific functions or operations, e.g. selecting or manipulating an object, an image or a displayed text element, setting a parameter value or selecting a range

- G06F3/04845—Interaction techniques based on graphical user interfaces [GUI] for the control of specific functions or operations, e.g. selecting or manipulating an object, an image or a displayed text element, setting a parameter value or selecting a range for image manipulation, e.g. dragging, rotation, expansion or change of colour

-

- A—HUMAN NECESSITIES

- A61—MEDICAL OR VETERINARY SCIENCE; HYGIENE

- A61B—DIAGNOSIS; SURGERY; IDENTIFICATION

- A61B8/00—Diagnosis using ultrasonic, sonic or infrasonic waves

-

- A—HUMAN NECESSITIES

- A61—MEDICAL OR VETERINARY SCIENCE; HYGIENE

- A61B—DIAGNOSIS; SURGERY; IDENTIFICATION

- A61B8/00—Diagnosis using ultrasonic, sonic or infrasonic waves

- A61B8/13—Tomography

- A61B8/14—Echo-tomography

-

- A—HUMAN NECESSITIES

- A61—MEDICAL OR VETERINARY SCIENCE; HYGIENE

- A61B—DIAGNOSIS; SURGERY; IDENTIFICATION

- A61B8/00—Diagnosis using ultrasonic, sonic or infrasonic waves

- A61B8/46—Ultrasonic, sonic or infrasonic diagnostic devices with special arrangements for interfacing with the operator or the patient

- A61B8/461—Displaying means of special interest

-

- A—HUMAN NECESSITIES

- A61—MEDICAL OR VETERINARY SCIENCE; HYGIENE

- A61B—DIAGNOSIS; SURGERY; IDENTIFICATION

- A61B8/00—Diagnosis using ultrasonic, sonic or infrasonic waves

- A61B8/46—Ultrasonic, sonic or infrasonic diagnostic devices with special arrangements for interfacing with the operator or the patient

- A61B8/461—Displaying means of special interest

- A61B8/463—Displaying means of special interest characterised by displaying multiple images or images and diagnostic data on one display

-

- A—HUMAN NECESSITIES

- A61—MEDICAL OR VETERINARY SCIENCE; HYGIENE

- A61B—DIAGNOSIS; SURGERY; IDENTIFICATION

- A61B8/00—Diagnosis using ultrasonic, sonic or infrasonic waves

- A61B8/46—Ultrasonic, sonic or infrasonic diagnostic devices with special arrangements for interfacing with the operator or the patient

- A61B8/461—Displaying means of special interest

- A61B8/465—Displaying means of special interest adapted to display user selection data, e.g. icons or menus

-

- A—HUMAN NECESSITIES

- A61—MEDICAL OR VETERINARY SCIENCE; HYGIENE

- A61B—DIAGNOSIS; SURGERY; IDENTIFICATION

- A61B8/00—Diagnosis using ultrasonic, sonic or infrasonic waves

- A61B8/46—Ultrasonic, sonic or infrasonic diagnostic devices with special arrangements for interfacing with the operator or the patient

- A61B8/467—Ultrasonic, sonic or infrasonic diagnostic devices with special arrangements for interfacing with the operator or the patient characterised by special input means

-

- A—HUMAN NECESSITIES

- A61—MEDICAL OR VETERINARY SCIENCE; HYGIENE

- A61B—DIAGNOSIS; SURGERY; IDENTIFICATION

- A61B8/00—Diagnosis using ultrasonic, sonic or infrasonic waves

- A61B8/46—Ultrasonic, sonic or infrasonic diagnostic devices with special arrangements for interfacing with the operator or the patient

- A61B8/467—Ultrasonic, sonic or infrasonic diagnostic devices with special arrangements for interfacing with the operator or the patient characterised by special input means

- A61B8/468—Ultrasonic, sonic or infrasonic diagnostic devices with special arrangements for interfacing with the operator or the patient characterised by special input means allowing annotation or message recording

-

- G—PHYSICS

- G06—COMPUTING; CALCULATING OR COUNTING

- G06F—ELECTRIC DIGITAL DATA PROCESSING

- G06F3/00—Input arrangements for transferring data to be processed into a form capable of being handled by the computer; Output arrangements for transferring data from processing unit to output unit, e.g. interface arrangements

- G06F3/01—Input arrangements or combined input and output arrangements for interaction between user and computer

-

- G—PHYSICS

- G06—COMPUTING; CALCULATING OR COUNTING

- G06F—ELECTRIC DIGITAL DATA PROCESSING

- G06F3/00—Input arrangements for transferring data to be processed into a form capable of being handled by the computer; Output arrangements for transferring data from processing unit to output unit, e.g. interface arrangements

- G06F3/01—Input arrangements or combined input and output arrangements for interaction between user and computer

- G06F3/048—Interaction techniques based on graphical user interfaces [GUI]

- G06F3/0481—Interaction techniques based on graphical user interfaces [GUI] based on specific properties of the displayed interaction object or a metaphor-based environment, e.g. interaction with desktop elements like windows or icons, or assisted by a cursor's changing behaviour or appearance

- G06F3/0482—Interaction with lists of selectable items, e.g. menus

-

- G—PHYSICS

- G06—COMPUTING; CALCULATING OR COUNTING

- G06F—ELECTRIC DIGITAL DATA PROCESSING

- G06F3/00—Input arrangements for transferring data to be processed into a form capable of being handled by the computer; Output arrangements for transferring data from processing unit to output unit, e.g. interface arrangements

- G06F3/01—Input arrangements or combined input and output arrangements for interaction between user and computer

- G06F3/048—Interaction techniques based on graphical user interfaces [GUI]

- G06F3/0484—Interaction techniques based on graphical user interfaces [GUI] for the control of specific functions or operations, e.g. selecting or manipulating an object, an image or a displayed text element, setting a parameter value or selecting a range

- G06F3/04842—Selection of displayed objects or displayed text elements

-

- G—PHYSICS

- G06—COMPUTING; CALCULATING OR COUNTING

- G06F—ELECTRIC DIGITAL DATA PROCESSING

- G06F3/00—Input arrangements for transferring data to be processed into a form capable of being handled by the computer; Output arrangements for transferring data from processing unit to output unit, e.g. interface arrangements

- G06F3/01—Input arrangements or combined input and output arrangements for interaction between user and computer

- G06F3/048—Interaction techniques based on graphical user interfaces [GUI]

- G06F3/0487—Interaction techniques based on graphical user interfaces [GUI] using specific features provided by the input device, e.g. functions controlled by the rotation of a mouse with dual sensing arrangements, or of the nature of the input device, e.g. tap gestures based on pressure sensed by a digitiser

- G06F3/0488—Interaction techniques based on graphical user interfaces [GUI] using specific features provided by the input device, e.g. functions controlled by the rotation of a mouse with dual sensing arrangements, or of the nature of the input device, e.g. tap gestures based on pressure sensed by a digitiser using a touch-screen or digitiser, e.g. input of commands through traced gestures

- G06F3/04883—Interaction techniques based on graphical user interfaces [GUI] using specific features provided by the input device, e.g. functions controlled by the rotation of a mouse with dual sensing arrangements, or of the nature of the input device, e.g. tap gestures based on pressure sensed by a digitiser using a touch-screen or digitiser, e.g. input of commands through traced gestures for inputting data by handwriting, e.g. gesture or text

-

- G—PHYSICS

- G06—COMPUTING; CALCULATING OR COUNTING

- G06T—IMAGE DATA PROCESSING OR GENERATION, IN GENERAL

- G06T11/00—2D [Two Dimensional] image generation

- G06T11/60—Editing figures and text; Combining figures or text

-

- G—PHYSICS

- G06—COMPUTING; CALCULATING OR COUNTING

- G06T—IMAGE DATA PROCESSING OR GENERATION, IN GENERAL

- G06T7/00—Image analysis

-

- G—PHYSICS

- G16—INFORMATION AND COMMUNICATION TECHNOLOGY [ICT] SPECIALLY ADAPTED FOR SPECIFIC APPLICATION FIELDS

- G16H—HEALTHCARE INFORMATICS, i.e. INFORMATION AND COMMUNICATION TECHNOLOGY [ICT] SPECIALLY ADAPTED FOR THE HANDLING OR PROCESSING OF MEDICAL OR HEALTHCARE DATA

- G16H30/00—ICT specially adapted for the handling or processing of medical images

- G16H30/20—ICT specially adapted for the handling or processing of medical images for handling medical images, e.g. DICOM, HL7 or PACS

-

- G—PHYSICS

- G16—INFORMATION AND COMMUNICATION TECHNOLOGY [ICT] SPECIALLY ADAPTED FOR SPECIFIC APPLICATION FIELDS

- G16H—HEALTHCARE INFORMATICS, i.e. INFORMATION AND COMMUNICATION TECHNOLOGY [ICT] SPECIALLY ADAPTED FOR THE HANDLING OR PROCESSING OF MEDICAL OR HEALTHCARE DATA

- G16H40/00—ICT specially adapted for the management or administration of healthcare resources or facilities; ICT specially adapted for the management or operation of medical equipment or devices

- G16H40/60—ICT specially adapted for the management or administration of healthcare resources or facilities; ICT specially adapted for the management or operation of medical equipment or devices for the operation of medical equipment or devices

- G16H40/63—ICT specially adapted for the management or administration of healthcare resources or facilities; ICT specially adapted for the management or operation of medical equipment or devices for the operation of medical equipment or devices for local operation

-

- A—HUMAN NECESSITIES

- A61—MEDICAL OR VETERINARY SCIENCE; HYGIENE

- A61B—DIAGNOSIS; SURGERY; IDENTIFICATION

- A61B8/00—Diagnosis using ultrasonic, sonic or infrasonic waves

- A61B8/08—Detecting organic movements or changes, e.g. tumours, cysts, swellings

- A61B8/0866—Detecting organic movements or changes, e.g. tumours, cysts, swellings involving foetal diagnosis; pre-natal or peri-natal diagnosis of the baby

-

- A—HUMAN NECESSITIES

- A61—MEDICAL OR VETERINARY SCIENCE; HYGIENE

- A61B—DIAGNOSIS; SURGERY; IDENTIFICATION

- A61B8/00—Diagnosis using ultrasonic, sonic or infrasonic waves

- A61B8/08—Detecting organic movements or changes, e.g. tumours, cysts, swellings

- A61B8/0891—Detecting organic movements or changes, e.g. tumours, cysts, swellings for diagnosis of blood vessels

-

- A—HUMAN NECESSITIES

- A61—MEDICAL OR VETERINARY SCIENCE; HYGIENE

- A61B—DIAGNOSIS; SURGERY; IDENTIFICATION

- A61B8/00—Diagnosis using ultrasonic, sonic or infrasonic waves

- A61B8/44—Constructional features of the ultrasonic, sonic or infrasonic diagnostic device

- A61B8/4405—Device being mounted on a trolley

-

- A—HUMAN NECESSITIES

- A61—MEDICAL OR VETERINARY SCIENCE; HYGIENE

- A61B—DIAGNOSIS; SURGERY; IDENTIFICATION

- A61B8/00—Diagnosis using ultrasonic, sonic or infrasonic waves

- A61B8/44—Constructional features of the ultrasonic, sonic or infrasonic diagnostic device

- A61B8/4427—Device being portable or laptop-like

-

- A—HUMAN NECESSITIES

- A61—MEDICAL OR VETERINARY SCIENCE; HYGIENE

- A61B—DIAGNOSIS; SURGERY; IDENTIFICATION

- A61B8/00—Diagnosis using ultrasonic, sonic or infrasonic waves

- A61B8/44—Constructional features of the ultrasonic, sonic or infrasonic diagnostic device

- A61B8/4444—Constructional features of the ultrasonic, sonic or infrasonic diagnostic device related to the probe

- A61B8/4472—Wireless probes

-

- A—HUMAN NECESSITIES

- A61—MEDICAL OR VETERINARY SCIENCE; HYGIENE

- A61B—DIAGNOSIS; SURGERY; IDENTIFICATION

- A61B8/00—Diagnosis using ultrasonic, sonic or infrasonic waves

- A61B8/56—Details of data transmission or power supply

- A61B8/565—Details of data transmission or power supply involving data transmission via a network

-

- G—PHYSICS

- G06—COMPUTING; CALCULATING OR COUNTING

- G06T—IMAGE DATA PROCESSING OR GENERATION, IN GENERAL

- G06T2200/00—Indexing scheme for image data processing or generation, in general

- G06T2200/24—Indexing scheme for image data processing or generation, in general involving graphical user interfaces [GUIs]

-

- G—PHYSICS

- G06—COMPUTING; CALCULATING OR COUNTING

- G06T—IMAGE DATA PROCESSING OR GENERATION, IN GENERAL

- G06T2210/00—Indexing scheme for image generation or computer graphics

- G06T2210/41—Medical

-

- Y—GENERAL TAGGING OF NEW TECHNOLOGICAL DEVELOPMENTS; GENERAL TAGGING OF CROSS-SECTIONAL TECHNOLOGIES SPANNING OVER SEVERAL SECTIONS OF THE IPC; TECHNICAL SUBJECTS COVERED BY FORMER USPC CROSS-REFERENCE ART COLLECTIONS [XRACs] AND DIGESTS

- Y10—TECHNICAL SUBJECTS COVERED BY FORMER USPC

- Y10S—TECHNICAL SUBJECTS COVERED BY FORMER USPC CROSS-REFERENCE ART COLLECTIONS [XRACs] AND DIGESTS

- Y10S128/00—Surgery

- Y10S128/916—Ultrasound 3-D imaging

Definitions

- One or more exemplary embodiments relate to a method, an apparatus, and a system for generating a body marker indicating an object.

- Ultrasound diagnosis apparatuses transmit ultrasound signals generated by transducers of a probe to an object and receive echo signals reflected from the object, thereby obtaining at least one image of an internal part of the object (e.g., soft tissues or blood flow).

- ultrasound diagnosis apparatuses are used for medical purposes including observation of the interior of an object, detection of foreign substances, and diagnosis of damage to the object.

- Such ultrasound diagnosis apparatuses provide high stability, display images in real time, and are safe due to the lack of radioactive exposure, compared to X-ray apparatuses. Therefore, ultrasound diagnosis apparatuses are widely used together with other image diagnosis apparatuses including a computed tomography (CT) apparatus, a magnetic resonance imaging (MRI) apparatus, and the like.

- a body marker is added to an ultrasound image when a user selects one of pre-generated body markers.

- it may be difficult to accurately determine a shape or an orientation of an object shown in the ultrasound image based on the body marker shown in the ultrasound image.

- it may require a large amount of time for the user to select one of the pre-generated body markers.

- One or more exemplary embodiments include a method, an apparatus, and a system for generating a body marker indicating an object. They also include a non-transitory computer-readable recording medium having recorded thereon a program that, when executed by a computer, performs the method.

- a method of generating a body marker includes selecting a first body marker from among a plurality of prestored body markers based on an object shown in a medical image, generating a second body marker by modifying the first body marker according to a user input, and displaying the second body marker.

- the second body marker may include a body marker generated by flipping the first body marker about a vertical axis.

- the second body marker may include a body marker generated by rotating the first body marker about a central axis of the first body marker.

- the second body marker may include a body marker generated by rotating the first body marker in a clockwise direction.

- the second body marker may include a body marker generated by rotating the first body marker in a counterclockwise direction.

- the selecting may include receiving a user input for selecting the first body marker that corresponds to the object from among a plurality of prestored body markers, and selecting the first body marker based on the received user input.

- the selecting may include selecting a portion of the object shown in the medical image, and selecting the first body marker based on the selected portion of the object.

- the plurality of prestored body markers may be sorted into application groups.
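
The selection step described above can be sketched as follows. This is a minimal illustration only: the marker names, the application group names, and the functions `group_by_application` and `select_first_marker` are hypothetical and do not appear in the source, which does not specify how the prestored body markers are organized in memory.

```python
from typing import Dict, List, Optional, Sequence, Tuple

# Hypothetical prestored markers as (marker name, application group) pairs.
PRESTORED_MARKERS: List[Tuple[str, str]] = [
    ("fetus_side", "obstetrics"),
    ("uterus", "obstetrics"),
    ("carotid_artery", "vascular"),
    ("liver", "abdomen"),
]


def group_by_application(markers: Sequence[Tuple[str, str]]) -> Dict[str, List[str]]:
    """Sort prestored markers into application groups, keeping insertion order."""
    groups: Dict[str, List[str]] = {}
    for name, application in markers:
        groups.setdefault(application, []).append(name)
    return groups


def select_first_marker(
    groups: Dict[str, List[str]],
    application: str,
    user_choice: Optional[str] = None,
) -> str:
    """Return the user's choice if given, else the first marker of the group."""
    candidates = groups[application]
    if user_choice is not None:
        if user_choice not in candidates:
            raise ValueError(f"{user_choice!r} is not in group {application!r}")
        return user_choice
    return candidates[0]


groups = group_by_application(PRESTORED_MARKERS)
first_marker = select_first_marker(groups, "obstetrics", user_choice="uterus")
```

Falling back to the first marker of a group is an assumption made here for brevity; the source instead describes selecting based on a user input or on a selected portion of the object.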

- the first body marker and the second body marker may be 2-dimensional or 3-dimensional.

- the user input may include a user gesture input on a touch screen.

- the displaying may include displaying the first and second body markers on a single screen.

- a non-transitory computer-readable recording medium having recorded thereon a program, which, when executed by a computer, performs the method above.

- an apparatus for generating a body marker includes a display displaying a medical image showing an object, and a controller selecting a first body marker from among a plurality of prestored body markers based on the object, and generating a second body marker by modifying a shape of the first body marker according to a user input.

- the display displays the second body marker.

- the second body marker may include a body marker generated by flipping the first body marker about a vertical axis.

- the second body marker may include a body marker generated by flipping the first body marker about a horizontal axis.

- the second body marker may include a body marker generated by rotating the first body marker in a clockwise direction.

- the second body marker may include a body marker generated by rotating the first body marker in a counterclockwise direction.
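
The flip and rotation operations listed above can be illustrated with a small sketch. It assumes, purely for illustration, that a 2-dimensional body marker is stored as a grid of pixel values and that each rotation is by 90 degrees; the source fixes neither the representation nor the rotation angle, and the function names are hypothetical.

```python
from typing import List

Marker = List[List[int]]  # hypothetical 2D body marker stored as a pixel grid


def flip_about_vertical_axis(marker: Marker) -> Marker:
    """Mirror left to right by reversing every row."""
    return [row[::-1] for row in marker]


def flip_about_horizontal_axis(marker: Marker) -> Marker:
    """Mirror top to bottom by reversing the order of the rows."""
    return [row[:] for row in marker[::-1]]


def rotate_clockwise(marker: Marker) -> Marker:
    """Rotate by 90 degrees clockwise (assumed step size)."""
    return [list(row) for row in zip(*marker[::-1])]


def rotate_counterclockwise(marker: Marker) -> Marker:
    """Rotate by 90 degrees counterclockwise (assumed step size)."""
    return [list(row) for row in zip(*marker)][::-1]


first_marker: Marker = [
    [1, 0],
    [1, 1],
    [0, 0],
]
# The "second body marker" is the first marker after a user-requested change.
second_marker = rotate_clockwise(first_marker)
```

Each function returns a new grid and leaves the first body marker untouched, which makes it straightforward to display the first and second body markers side by side.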

- the apparatus may further include an input unit receiving a user input for selecting the first body marker that corresponds to the object from among a plurality of prestored body markers, and the controller may select the first body marker based on the received user input.

- the apparatus may further include an image processor selecting a portion of the object from the medical image, and the controller may select the first body marker based on the selected portion of the object.

- the plurality of prestored body markers may be sorted into application groups.

- the first body marker and the second body marker may be 2-dimensional or 3-dimensional.

- the user input may include a user gesture input on a touch screen.

- the display may display the first and second body markers on a single screen.

- an "ultrasound image" refers to an image of an object or an image of a region of interest included in the object, which is obtained using ultrasound waves.

- the region of interest is a region in the object which a user wants to focus on, for example, a lesion.

- an "object" may be a human, an animal, or a part of a human or animal.

- the object may be an organ (e.g., the liver, heart, womb, brain, breast, or abdomen), a blood vessel, or a combination thereof.

- the object may be a phantom.

- the phantom means a material having a density, an effective atomic number, and a volume that are approximately the same as those of an organism.

- the phantom may be a spherical phantom having properties similar to a human body.

- a "user" may be, but is not limited to, a medical expert, for example, a medical doctor, a nurse, a medical laboratory technologist, or a medical imaging expert, or a technician who repairs medical apparatuses.

- FIGS. 1A and 1B are diagrams illustrating an ultrasound diagnosis system 1000 according to an exemplary embodiment.

- a probe 20 may be wired to an ultrasound imaging device 100 in the ultrasound diagnosis system 1000.

- the probe 20, which transmits and receives ultrasound waves, may be connected to a main body of the ultrasound diagnosis system 1000, i.e., the ultrasound imaging device 100, via a cable 110.

- the probe 20 may be wirelessly connected to the ultrasound imaging device 100 in an ultrasound diagnosis system 1001.

- the probe 20 and the ultrasound imaging device 100 may be connected via a wireless network.

- the probe 20 may be connected to the ultrasound imaging device 100 via a millimeter wave (mmWave) wireless network, receive an echo signal via a transducer, and transmit the echo signal in a 60 GHz frequency range to the ultrasound imaging device 100.

- the ultrasound imaging device 100 may generate an ultrasound image of various modes by using the echo signal received in the 60 GHz frequency range, and display the generated ultrasound image.

- the millimeter wave wireless network may use, but is not limited to, a wireless communication method according to the Wireless Gigabit Alliance (WiGig) standard.

- FIG. 2 is a block diagram illustrating an ultrasound diagnosis system 1002 according to an exemplary embodiment.

- the ultrasound diagnosis system 1002 may include a probe 20 and an ultrasound imaging device 100.

- the ultrasound imaging device 100 may include an ultrasound transceiver 1100, an image processor 1200, a communication module 1300, a display 1400, a memory 1500, an input unit 1600, and a controller 1700, which may be connected to one another via buses 1800.

- the ultrasound diagnosis system 1002 may be a cart type apparatus or a portable type apparatus.

- portable ultrasound diagnosis apparatuses may include, but are not limited to, a picture archiving and communication system (PACS) viewer, a smartphone, a laptop computer, a personal digital assistant (PDA), and a tablet PC.

- the probe 20 may transmit ultrasound waves to an object 10 (or a region of interest in the object 10) in response to a driving signal applied by the ultrasound transceiver 1100 and receive echo signals reflected by the object 10 (or the region of interest in the object 10).

- the probe 20 includes a plurality of transducers, and the plurality of transducers oscillate in response to electric signals and generate acoustic energy, that is, ultrasound waves.

- the probe 20 may be wired or wirelessly connected to a main body of the ultrasound diagnosis system 1002, and the ultrasound diagnosis system 1002 may include a plurality of probes 20.

- a transmitter 1110 supplies a driving signal to the probe 20.

- the transmitter 1110 includes a pulse generator 1112, a transmission delaying unit 1114, and a pulser 1116.

- the pulse generator 1112 generates pulses for forming transmission ultrasound waves based on a predetermined pulse repetition frequency (PRF), and the transmission delaying unit 1114 delays the pulses by delay times necessary for determining transmission directionality.

- the pulses which have been delayed correspond to a plurality of piezoelectric vibrators included in the probe 20, respectively.

- the pulser 1116 applies a driving signal (or a driving pulse) to the probe 20 based on timing corresponding to each of the pulses which have been delayed.
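
The transmit timing described above can be sketched as follows. The source does not give formulas, so this example assumes a linear array with uniform element pitch, a plane-wave steering model, and a sound speed of 1540 m/s; the function names and parameters are hypothetical.

```python
import math
from typing import List


def pulse_start_times(prf_hz: float, count: int) -> List[float]:
    """Start time in seconds of each pulse for a given pulse repetition frequency."""
    return [n / prf_hz for n in range(count)]


def steering_delays(
    num_elements: int,
    pitch_m: float,
    angle_deg: float,
    sound_speed_m_s: float = 1540.0,  # assumed soft-tissue sound speed
) -> List[float]:
    """Per-element transmit delays in seconds that steer the beam by angle_deg.

    Assumes a linear array with uniform pitch; delays are shifted so that the
    earliest-firing element has zero delay.
    """
    sin_angle = math.sin(math.radians(angle_deg))
    raw = [i * pitch_m * sin_angle / sound_speed_m_s for i in range(num_elements)]
    earliest = min(raw)
    return [t - earliest for t in raw]


# Example: 4 elements, 0.3 mm pitch, beam steered by 30 degrees.
delays = steering_delays(4, 0.0003, 30.0)
times = pulse_start_times(1000.0, 3)
```

In this sketch, each entry of `delays` corresponds to one piezoelectric vibrator, and a pulser would fire that element at the pulse start time plus its delay.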

- a receiver 1120 generates ultrasound data by processing echo signals received from the probe 20.

- the receiver 1120 may include an amplifier 1122, an analog-to-digital converter (ADC) 1124, a reception delaying unit 1126, and a summing unit 1128.

- the amplifier 1122 amplifies echo signals in each channel, and the ADC 1124 performs analog-to-digital conversion with respect to the amplified echo signals.

- the reception delaying unit 1126 delays digital echo signals output by the ADC 1124 by delay times necessary for determining reception directionality, and the summing unit 1128 generates ultrasound data by summing the echo signals processed by the reception delaying unit 1126.

- the receiver 1120 may not include the amplifier 1122. In other words, if the sensitivity of the probe 20 or the capability of the ADC 1124 to process bits is enhanced, the amplifier 1122 may be omitted.
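
The delay-then-sum behaviour of the reception delaying unit and the summing unit can be sketched as below. This is a simplified illustration that assumes integer sample delays and equal-length channels; the function name `delay_and_sum` and the example data are hypothetical and not taken from the source.

```python
from typing import List, Sequence


def delay_and_sum(
    channels: Sequence[Sequence[float]],
    delays: Sequence[int],
) -> List[float]:
    """Delay each digitized channel by its sample delay, then sum across channels."""
    if len(channels) != len(delays):
        raise ValueError("one delay is required per channel")
    length = len(channels[0])
    summed = [0.0] * length
    for samples, delay in zip(channels, delays):
        for n in range(length):
            src = n - delay  # a delayed channel outputs an earlier input sample
            if 0 <= src < length:
                summed[n] += samples[src]
    return summed


# The same echo reaches three channels one sample apart.
channels = [
    [0.0, 1.0, 0.0, 0.0, 0.0],
    [0.0, 0.0, 1.0, 0.0, 0.0],
    [0.0, 0.0, 0.0, 1.0, 0.0],
]
# Delaying the earlier channels aligns the echoes so they add coherently.
ultrasound_data = delay_and_sum(channels, delays=[2, 1, 0])
```

With these delays the three echoes line up at sample index 3, so the summed output peaks there, which is how the choice of delays determines reception directionality.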

- the image processor 1200 generates an ultrasound image by scan-converting ultrasound data generated by the ultrasound transceiver 1100.

- the ultrasound image may be not only a grayscale ultrasound image obtained by scanning the object 10 in an amplitude (A) mode, a brightness (B) mode, and a motion (M) mode, but also a Doppler image showing a movement of the object 10 via a Doppler effect.

- the Doppler image may be a blood flow Doppler image showing flow of blood (also referred to as a color Doppler image), a tissue Doppler image showing a movement of tissue, or a spectral Doppler image showing a moving speed of the object 10 as a waveform.

- a B mode processor 1212 extracts B mode components from ultrasound data and processes the B mode components.

- An image generator 1220 may generate an ultrasound image indicating signal intensities as brightness based on the B mode components extracted by the B mode processor 1212.
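
One common way to show signal intensities as brightness is log compression of the echo envelope into 8-bit gray levels. The sketch below assumes that approach, a 60 dB dynamic range, and a hypothetical function name; the source does not state how the image generator 1220 performs this mapping.

```python
import math
from typing import List, Sequence


def to_brightness(envelope: Sequence[float], dynamic_range_db: float = 60.0) -> List[int]:
    """Map envelope amplitudes to gray levels 0..255 using log compression.

    The peak amplitude maps to 255; anything at or below the bottom of the
    assumed dynamic range maps to 0.
    """
    peak = max(envelope)
    if peak <= 0:
        return [0] * len(envelope)
    pixels = []
    for amplitude in envelope:
        if amplitude <= 0:
            pixels.append(0)
            continue
        level_db = 20.0 * math.log10(amplitude / peak)  # 0 dB at the peak
        level = (level_db + dynamic_range_db) / dynamic_range_db
        level = min(1.0, max(0.0, level))  # clip to the displayable range
        pixels.append(round(255 * level))
    return pixels


gray = to_brightness([1.0, 0.1, 0.001, 0.0])
```

Log compression is used here because echo amplitudes span several orders of magnitude, so a linear mapping would leave weak echoes invisible.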

- a Doppler processor 1214 may extract Doppler components from ultrasound data, and the image generator 1220 may generate a Doppler image indicating a movement of the object 10 as colors or waveforms based on the extracted Doppler components.
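
As an illustration of how a Doppler component relates to movement, the standard Doppler equation converts a measured frequency shift into a velocity along the beam. The function below is a sketch under that textbook relation, with an assumed sound speed of 1540 m/s and hypothetical parameter names; the source does not describe the computation performed by the Doppler processor 1214.

```python
import math


def doppler_velocity(
    shift_hz: float,
    transmit_hz: float,
    angle_deg: float = 0.0,
    sound_speed_m_s: float = 1540.0,  # assumed soft-tissue sound speed
) -> float:
    """Velocity in m/s from a Doppler shift: v = c * fd / (2 * f0 * cos(angle))."""
    cos_angle = math.cos(math.radians(angle_deg))
    if abs(cos_angle) < 1e-9:
        raise ValueError("no Doppler shift is measurable at 90 degrees to the flow")
    return sound_speed_m_s * shift_hz / (2.0 * transmit_hz * cos_angle)


# A 1 kHz shift at a 5 MHz transmit frequency, beam aligned with the flow.
velocity = doppler_velocity(1000.0, 5e6)
```

The sign of the result follows the sign of the shift, which is what allows a color Doppler image to use different colors for movement toward and away from the probe.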

- the image generator 1220 may generate a three-dimensional (3D) ultrasound image via volume-rendering with respect to volume data and may also generate an elasticity image by imaging deformation of the object 10 due to pressure. Furthermore, the image generator 1220 may display various pieces of additional information in an ultrasound image by using text and graphics. In addition, the generated ultrasound image may be stored in the memory 1500.

- the image processor 1200 may select a portion of the object 10 shown in the ultrasound image.

- the display 1400 displays the generated ultrasound image.

- the display 1400 may display not only an ultrasound image, but also various pieces of information processed by the ultrasound imaging device 100 on a screen image via a graphical user interface (GUI).

- the ultrasound imaging device 100 may include two or more displays 1400 according to embodiments.

- the display 1400 may display at least one body marker from among the body markers stored in the memory 1500, and the display 1400 may display a second body marker that is generated according to a user input.

- the communication module 1300 is connected to a network 30 by wire or wirelessly to communicate with an external device or a server. Also, when the probe 20 is connected to the ultrasound imaging device 100 via a wireless network, the communication module 1300 may communicate with the probe 20.

- the communication module 1300 may exchange data with a hospital server or another medical apparatus in a hospital, which is connected thereto via a PACS. Furthermore, the communication module 1300 may perform data communication according to the digital imaging and communications in medicine (DICOM) standard.

- the communication module 1300 may transmit or receive data related to diagnosis of the object 10, e.g., an ultrasound image, ultrasound data, and Doppler data of the object 10, via the network 30 and may also transmit or receive medical images captured by another medical apparatus, e.g., a computed tomography (CT) apparatus, a magnetic resonance imaging (MRI) apparatus, or an X-ray apparatus. Furthermore, the communication module 1300 may receive information about a diagnosis history or medical treatment schedule of a patient from a server and utilize the received information to diagnose the patient. Furthermore, the communication module 1300 may perform data communication not only with a server or a medical apparatus in a hospital, but also with a portable terminal of a medical doctor or patient.

- the communication module 1300 is connected to the network 30 by wire or wirelessly to exchange data with a server 32, a medical apparatus 34, or a portable terminal 36.

- the communication module 1300 may include one or more components for communication with external devices.

- the communication module 1300 may include a local area communication module 1310, a wired communication module 1320, and a mobile communication module 1330.

- the local area communication module 1310 refers to a module for local area communication within a predetermined distance.

- Examples of local area communication techniques according to an embodiment may include, but are not limited to, wireless LAN, Wi-Fi, Bluetooth, ZigBee, Wi-Fi Direct (WFD), ultra wideband (UWB), infrared data association (IrDA), Bluetooth low energy (BLE), and near field communication (NFC).

- the wired communication module 1320 refers to a module for communication using electric signals or optical signals. Examples of wired communication techniques according to an embodiment may include communication via a twisted pair cable, a coaxial cable, an optical fiber cable, and an Ethernet cable.

- the mobile communication module 1330 transmits or receives wireless signals to or from at least one selected from a base station, an external terminal, and a server on a mobile communication network.

- the wireless signals may be voice call signals, video call signals, or various types of data for transmission and reception of text/multimedia messages.

- the memory 1500 stores various data processed by the ultrasound imaging device 1000.

- the memory 1500 may store medical data related to diagnosis of the object 10, such as ultrasound data and an ultrasound image that are input or output, and may also store algorithms or programs which are to be executed in the ultrasound imaging device 1002.

- the memory 1500 may store pre-generated body markers and a body marker generated by the controller 1700.

- the memory 1500 may be any of various storage media, e.g., a flash memory, a hard disk drive, EEPROM, etc. Furthermore, the ultrasound imaging device 1002 may utilize web storage or a cloud server that performs the storage function of the memory 1500 online.

- the input unit 1600 refers to a unit via which a user may input data for controlling the ultrasound imaging device 1002.

- the input unit 1600 may include hardware components, such as a keyboard, a mouse, a touch pad, a touch screen, and a jog switch, and a software module for driving the hardware components.

- the exemplary embodiments are not limited thereto, and the input unit 1600 may further include any of various input units including an electrocardiogram (ECG) measuring module, a respiration measuring module, a voice recognition sensor, a gesture recognition sensor, a fingerprint recognition sensor, an iris recognition sensor, a depth sensor, a distance sensor, etc.

- the input unit 1600 may receive a user input for selecting a first body marker from among the body markers stored in the memory 1500.

- the controller 1700 may control all operations of the ultrasound imaging device 1000. In other words, the controller 1700 may control operations among the probe 20, the ultrasound transceiver 1100, the image processor 1200, the communication module 1300, the display 1400, the memory 1500, and the input unit 1600 shown in FIG. 1 .

- the controller 1700 may select a first body marker from among prestored body markers based on the object 10.

- the prestored body markers are the body markers that are preset and stored in the memory 1500, regardless of a shape or a location of the object 10 shown on the ultrasound image.

- the controller 1700 may generate a second body marker by reshaping or modifying an orientation of the first body marker according to a user input.

- the user input is input via the input unit 1600, and includes a gesture performed by the user, for example, tapping, touch and hold, double tapping, dragging, panning, flicking, drag and drop, pinching, and stretching.

- a first body marker may be selected from the body markers stored in the memory 1500 according to a user input received by the input unit 1600. An example of the first body marker being selected according to the user input will be described in detail with reference to FIGS. 15 , 16A, and 16B . As another example, a first body marker may be selected from the body markers stored in the memory 1500 based on a portion of the object 10 selected from the ultrasound image by the image processor 1200. An example of the first body marker being selected based on the portion of the object 10 selected from the ultrasound image will be described in detail with reference to FIGS. 17 , 18A, and 18B .

- All or some of the probe 20, the ultrasound transceiver 1100, the image processor 1200, the communication module 1300, the display 1400, the memory 1500, the input unit 1600, and the controller 1700 may be implemented as software modules. Furthermore, at least one selected from the ultrasound transceiver 1100, the image processor 1200, and the communication module 1300 may be included in the controller 1700. However, the exemplary embodiments are not limited thereto.

- FIG. 3 is a block diagram illustrating a wireless probe 2000 according to an exemplary embodiment.

- the wireless probe 2000 may include a plurality of transducers, and, according to embodiments, may include some or all of the components of the ultrasound transceiver 1100 shown in FIG. 2 .

- the wireless probe 2000 includes a transmitter 2100, a transducer 2200, and a receiver 2300. Since descriptions thereof are given above with reference to FIG. 2 , detailed descriptions thereof will be omitted here.

- the wireless probe 2000 may selectively include a reception delaying unit 2330 and a summing unit 2340.

- the wireless probe 2000 may transmit ultrasound signals to the object 10, receive echo signals from the object 10, generate ultrasound data, and wirelessly transmit the ultrasound data to the ultrasound diagnosis system 1002 shown in FIG. 2 .

- FIG. 4 is a block diagram illustrating an apparatus 101 for generating a body marker, according to an exemplary embodiment.

- the apparatus 101 may include a controller 1701 and a display 1401.

- One or both of the controller 1701 and the display 1401 may be implemented as software modules, but are not limited thereto.

- One of the controller 1701 and the display 1401 may be implemented as hardware.

- the display 1401 may include an independent control module.

- the controller 1701 may be the same as the controller 1700 of FIG. 2 .

- the display 1401 may be the same as the display 1400 of FIG. 2 .

- the apparatus 101 may further include the ultrasound transceiver 1100, the image processor 1200, the communication module 1300, the memory 1500, and the input unit 1600 shown in FIG. 2 .

- the controller 1701 may select a first body marker from prestored body markers based on an object shown in a medical image.

- the body markers may be stored in a memory (not shown) of the apparatus 101.

- a body marker refers to a figure added to a medical image (e.g., an ultrasound image) so that a viewer of the medical image (e.g., a user) may easily recognize an object shown in the medical image.

- the first body marker refers to a body marker that is pre-generated and stored in the apparatus 101. That is, the first body marker refers to a body marker generated in advance by a manufacturer or the user, regardless of information about a current shape or location of the object shown in the medical image.

- Since the first body marker does not reflect the current shape or location of the object, when the first body marker is added to the medical image, the viewer of the medical image may be unable to accurately recognize the shape, position, or orientation of the object. Also, since the first body marker is selected from the prestored body markers, a large amount of time may be required for a user to select an appropriate body marker for the object. Hereinafter, the first body marker will be described in detail with reference to FIGS. 5A to 5C .

- FIGS. 5A to 5C are diagrams illustrating a first body marker according to an exemplary embodiment.

- a screen 3110 displays an example of prestored body markers 3120.

- the controller 1701 may select a first body marker from any one of the body markers 3120 based on an object shown in a medical image.

- the body markers 3120 may be sorted into application groups 3130.

- An application refers to a diagnosis type determined based on a body part or an organ of a human or an animal.

- the application groups 3130 may include an abdomen group, a small part group including the chest, mouth, sexual organs, and the like, a vascular group, a musculoskeletal group, an obstetrics group, a gynecology group, a cardiac group, a brain group, a urology group, and a veterinary group.

- the application groups 3130 are not limited thereto.

- Body parts and organs of a human or an animal may be sorted into a plurality of application groups based on a predetermined standard.

- the display 1401 may display the body markers 3120, which are sorted into the application groups 3130, on the screen 3110. For example, the user may select (e.g., click or tap) a 'Vascular' icon from icons representing the application groups 3130 displayed on the screen 3110. Then, from among the body markers 3120, the display 1401 may display body markers included in the vascular group on the screen 3110.
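
The grouping and filtering described above can be sketched as follows. This is a minimal illustration, not the patented implementation: the `BodyMarker` class, the `group_by_application` function, and the marker names are all hypothetical and are introduced only for the example.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass(frozen=True)
class BodyMarker:
    """Hypothetical record for one prestored body marker."""
    name: str          # illustrative label, e.g. "carotid"
    application: str   # application group, e.g. "Vascular"


def group_by_application(markers):
    """Sort prestored body markers into application groups."""
    groups = defaultdict(list)
    for marker in markers:
        groups[marker.application].append(marker)
    return dict(groups)


# Hypothetical prestored markers; the names are assumptions for the example.
PRESTORED = [
    BodyMarker("liver", "Abdomen"),
    BodyMarker("carotid", "Vascular"),
    BodyMarker("leg veins", "Vascular"),
    BodyMarker("fetus", "Obstetrics"),
]

groups = group_by_application(PRESTORED)
# Selecting the 'Vascular' icon would show only the markers of that group.
vascular_names = [m.name for m in groups["Vascular"]]
```

Grouping once and looking up by group name keeps the per-selection work small, which matters because the user may switch between application groups repeatedly while searching for a suitable marker.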

- the body markers 3120 are generated and stored in advance. Accordingly, the user has to examine the body markers 3120 and select a first body marker that is the most appropriate for an object in a medical image. Therefore, it may require a large amount of time for the user to select the first body marker.

- the first body marker selected from the body markers 3120 may not include accurate information about the object. This will be described in detail with reference to FIG. 5B .

- FIG. 5B illustrates an example of a medical image 3220 displayed on a screen 3210 and a first body marker 3230 added to the medical image 3220.

- FIG. 5B is described assuming that the medical image 3220 is an ultrasound image showing a portion of blood vessels.

- the first body marker 3230 may be added to the medical image 3220.

- the first body marker 3230 is selected from pre-generated body markers. Therefore, by viewing only the first body marker 3230, the viewer may only be able to recognize that the medical image 3220 obtained is a captured image of blood vessels, without knowing which portion of the blood vessels is shown in the medical image 3220.

- the first body marker 3230 cannot be rotated or modified in any other manner. Depending on a direction of blood vessels 3250 shown in the first body marker 3230, the first body marker 3230 may be unable to accurately indicate a direction of blood vessels 3240 shown in the medical image 3220. For example, referring to FIG. 5B , the blood vessels 3240 in the medical image 3220 are oriented with respect to an x-axis, whereas the blood vessels 3250 in the first body marker 3230 are oriented with respect to a y-axis. Therefore, the first body marker 3230 does not provide accurate information about the object.

- FIG. 5C illustrates an example of a medical image 3320 displayed on a screen 3310 and a first body marker 3330 added to the medical image 3320.

- FIG. 5C is described assuming that the medical image 3320 is an ultrasound image showing a fetus.

- a fetus 3340 of the ultrasound image 3320 is shown lying parallel to the x-axis and facing downward.

- a fetus 3350 of the first body marker 3330 is shown lying parallel to the x-axis and facing upward. Therefore, the first body marker 3330 does not provide accurate information about the fetus 3340 shown in the ultrasound image 3320.

- the first body marker, which is a pre-generated body marker, may not accurately represent an object included in a medical image.

- the controller 1701 may generate a second body marker by modifying a shape, orientation, or position of the first body marker. Therefore, the controller 1701 may generate a body marker that accurately shows information about an object.

- the second body marker will be described in detail with reference to FIG. 6 .

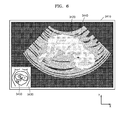

- FIG. 6 is a diagram illustrating a second body marker 3430 according to an exemplary embodiment.

- FIG. 6 illustrates an example of a medical image 3420 displayed on a screen 3410 and a second body marker 3430 added to the medical image 3420.

- FIG. 6 is described assuming that the medical image 3420 is an ultrasound image showing a fetus.

- a fetus 3440 of the ultrasound image 3420 is shown lying parallel to the x-axis and facing downward.

- a fetus 3450 of the second body marker 3430 is also shown lying parallel to the x-axis and facing downward.

- the second body marker 3430 accurately shows an orientation and a facing direction of the fetus 3440 of the ultrasound image 3420.

- the first body marker 3330 does not accurately show a facing direction of the fetus 3340 of the ultrasound image 3320. Therefore, the viewer may be unable to obtain accurate information about the fetus 3340 shown in the ultrasound image 3320 based on only the first body marker 3330.

- the second body marker 3430 accurately shows the orientation and location of the fetus 3440 of the ultrasound image 3420, and thus, the viewer may obtain accurate information about the fetus 3440 shown in the ultrasound image 3420 based on only the second body marker 3430.

- the controller 1701 may generate a second body marker by changing a shape of a first body marker according to a user input.

- the controller 1701 may modify the shape, orientation, or position of the first body marker according to a user input received via an input unit (not shown) in the apparatus 101.

- the input unit in the apparatus 101 may be the same as the input unit 1600 described with reference to FIG. 2 .

- the user input may include clicking a predetermined point on a screen or inputting a drag gesture from a point on the screen to another point on the screen.

- the user input may include a user gesture input on the touch screen.

- the gesture may include, for example, tapping, touch and hold, double tapping, dragging, scrolling, flicking, drag and drop, pinching, and stretching.

- the controller 1701 may generate a second body marker by flipping the first body marker about a vertical axis according to the user input.

- the controller 1701 may generate a second body marker by rotating the first body marker about a central axis of the first body marker according to the user input.

- the controller 1701 may generate a second body marker by rotating the first body marker in a clockwise or counterclockwise direction according to the user input.

- the display 1401 may display a medical image showing an object on a screen. Also, the display 1401 may display the second body marker generated by the controller 1701 on the screen. The display 1401 may display the first body marker and the second body marker on a single screen.

- the controller 1701 may generate the second body marker by changing the shape of the first body marker according to the user input.

- the display 1401 may display the first body marker and a predetermined guide image on the screen.

- the first body marker and the predetermined guide image displayed by the display 1401 will be described in detail with reference to FIGS. 7A and 7B .

- FIGS. 7A and 7B are diagrams illustrating images used for generating a second body marker, according to an exemplary embodiment.

- FIG. 7A illustrates an example of a first body marker 4120 and first to third guide images 4130, 4140, and 4150 displayed on a screen 4110.

- the user may provide a user input to the apparatus 101 based on the first body marker 4120 and the first to third guide images 4130, 4140, and 4150 displayed on the screen 4110.

- the user may select a point on the guide images 4130, 4140, and 4150 according to a predetermined rule, or input a drag gesture beginning from a point on the guide images 4130, 4140, and 4150 to another point thereon.

- the controller 1701 may modify a shape, orientation, or position of the first body marker 4120 according to the user input.

- the user input is assumed to be a gesture performed by the user in FIGS. 7A to 14B .

- the user input is not limited thereto.

- the user may provide the user input to the apparatus 101 by using various hardware components, such as a keyboard or a mouse.

- the controller 1701 may rotate a portion of the first body marker 4120 about the central axis thereof.

- the controller 1701 may rotate a portion of the first body marker 4120 toward the second guide image 4140.

- the controller 1701 may rotate a portion of the first body marker 4120 in a clockwise or counterclockwise direction.

- the first body marker 4120 may be displayed on a screen 4160.

- the first to third guide images 4130, 4140, and 4150 of FIG. 7A may be omitted from the screen 4160.

- the user may also provide the user input, as described above with reference to FIG. 7A , to the apparatus 101, and the controller 1701 may modify a shape, orientation, or position of the first body marker 4120 according to the user input.

- In FIGS. 8A to 14B , it is assumed that the guide images 4130, 4140, and 4150 are not displayed on a screen.

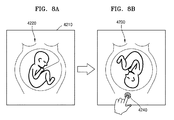

- FIGS. 8A and 8B are diagrams illustrating an example of the controller 1701 generating a second body marker, according to an exemplary embodiment.

- the controller 1701 may generate a second body marker 4230 by rotating a portion of a first body marker 4220 according to a user input.

- FIG. 8A illustrates an example of a screen 4210 displaying the first body marker 4220.

- the user may select (e.g., tap) an outer point of the first body marker 4220, and the controller 1701 may rotate the portion of the first body marker 4220 to the point selected by the user.

- FIG. 8B illustrates an example of the second body marker 4230 generated by rotating the portion of the first body marker 4220.

- the controller 1701 may rotate the portion of the first body marker 4220 such that the head of the fetus is located toward a point 4240 selected by the user. In this case, the controller 1701 may rotate the portion of the first body marker 4220 in a clockwise or counterclockwise direction.

- the second body marker 4230 may be identical to the result of flipping the first body marker 4220 about a horizontal axis.

- the controller 1701 may generate the second body marker 4230 by rotating the portion of the first body marker 4220 toward the point 4240 selected by the user. Also, the controller 1701 may store the second body marker 4230 in the memory of the apparatus 101.
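
One way to compute the rotation toward a tapped point is sketched below. The function names and the coordinate convention (mathematical axes with y pointing up and angles measured counterclockwise from the +x axis) are assumptions for illustration; the source does not specify how the controller 1701 computes the rotation.

```python
import math


def heading_to_point(center, point):
    """Angle in degrees, counterclockwise from the +x axis, from center to point."""
    dx = point[0] - center[0]
    dy = point[1] - center[1]
    return math.degrees(math.atan2(dy, dx)) % 360.0


def rotation_to_face(current_heading_deg, center, tapped_point):
    """Signed rotation in (-180, 180] that turns the head toward the tapped point.

    Positive values are counterclockwise and negative values are clockwise,
    so the shorter of the two possible directions is always chosen.
    """
    target = heading_to_point(center, tapped_point)
    delta = (target - current_heading_deg) % 360.0
    if delta > 180.0:
        delta -= 360.0
    return delta


# Head currently points along +x; the user taps a point directly above the center.
delta_up = rotation_to_face(0.0, (0.0, 0.0), (0.0, 1.0))
```

Choosing the shorter direction is only one possible policy. The text allows either a clockwise or a counterclockwise rotation, so a real device might instead fix the direction in advance.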

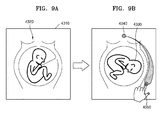

- FIGS. 9A and 9B are diagrams illustrating another example of the controller 1701 generating a second body marker, according to an exemplary embodiment.

- the controller 1701 may generate a second body marker 4330 by rotating a portion of a first body marker 4320 in a clockwise or counterclockwise direction according to a user input.

- FIG. 9A illustrates an example of a screen 4310 displaying the first body marker 4320.

- the user may input a drag gesture from an outer point on the first body marker 4320 to another outer point thereon in a clockwise or counterclockwise direction, and the controller 1701 may rotate the portion of the first body marker 4320 in a clockwise or counterclockwise direction according to the drag gesture input by the user.

- FIG. 9B illustrates the second body marker 4330 generated by rotating the portion of the first body marker 4320 in a clockwise direction.

- For example, assume that the first body marker 4320 represents a fetus and that the user inputs a drag gesture in a clockwise direction from a point 4340, toward which the head of the fetus is directed, to another point 4350 on the first body marker 4320. In this case, the controller 1701 may rotate the portion of the first body marker 4320 in a clockwise direction such that the head of the fetus is pointed toward the point 4350 where the drag gesture stops.

- the controller 1701 may generate the second body marker 4330 by rotating the portion of the first body marker 4320 in a clockwise direction toward the point 4350 selected by the user. Also, the controller 1701 may store the second body marker 4330 in the memory of the apparatus 101.

- the controller 1701 may generate the second body marker 4330 by rotating the portion of the first body marker 4320 in a clockwise direction.

- the controller 1701 may generate a second body marker by rotating a first body marker in a counterclockwise direction toward a point where the drag gesture stops.
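
The direction and amount of rotation implied by a drag gesture can be derived from its start and end points, as in the sketch below. It assumes screen coordinates in which y grows downward, and the function name `drag_rotation` is hypothetical. Because only the end points are used, a drag that sweeps more than 180° would be read as the shorter rotation in the opposite direction.

```python
import math


def drag_rotation(center, start, end):
    """Return (direction, degrees) for a drag from start to end around center.

    Assumes screen coordinates (x to the right, y downward). With that
    convention a positive cross product corresponds to a clockwise drag.
    """
    ax, ay = start[0] - center[0], start[1] - center[1]
    bx, by = end[0] - center[0], end[1] - center[1]
    cross = ax * by - ay * bx
    dot = ax * bx + ay * by
    angle = math.degrees(math.atan2(cross, dot))
    if angle > 0:
        direction = "clockwise"
    elif angle < 0:
        direction = "counterclockwise"
    else:
        direction = "none"
    return direction, abs(angle)


# Drag from the right of the marker to directly below it on the screen.
direction, degrees = drag_rotation((0.0, 0.0), (1.0, 0.0), (0.0, 1.0))
```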

- FIGS. 10A to 10D are diagrams illustrating another example of the controller 1701 generating a second body marker, according to an exemplary embodiment.

- the controller 1701 may generate second body markers 4440, 4450, and 4460 by rotating a portion of a first body marker 4420 about a central axis of the first body marker 4420 according to a user input.

- FIG. 10A illustrates an example of a screen 4410 displaying the first body marker 4420.

- the user may select (e.g., tap) a point 4430 through which the central axis of the first body marker 4420 passes, and the controller 1701 may rotate the portion of the first body marker 4420 about the central axis thereof according to the selected point.

- FIGS. 10B to 10D illustrate that the first body marker 4420 is rotated about the central axis thereof in a counterclockwise direction, but the exemplary embodiments are not limited thereto.

- the controller 1701 may rotate the portion of the first body marker 4420 about the central axis thereof in the counterclockwise direction based on a predetermined rule.

- FIG. 10B illustrates an example of the second body marker 4440 generated by rotating the portion of the first body marker 4420 by 90° about the central axis thereof.

- For example, assume that the first body marker 4420 represents the fetus. When the user taps the point 4430 through which the central axis of the first body marker 4420 passes, the controller 1701 may rotate the portion of the first body marker 4420 by 90° about the central axis thereof in a counterclockwise direction.

- FIG. 10C illustrates an example of the second body marker 4450 generated by rotating the portion of the second body marker 4440 of the FIG. 10B by 90° about a central axis of the second body marker 4440.

- Assuming that the second body marker 4440 represents the fetus, the controller 1701 may rotate the portion of the second body marker 4440 by 90° about the central axis thereof in a counterclockwise direction.

- FIG. 10D illustrates an example of the second body marker 4460 generated by rotating the portion of the second body marker 4450 of FIG. 10C by 90° about a central axis of the second body marker 4450.

- the controller 1701 may rotate the portion of the second body marker 4450 by 90° about the central axis thereof in a counterclockwise direction.

- the controller 1701 may generate the second body markers 4440, 4450, and 4460 by rotating the portion of the first body marker 4420 about the central axis thereof. Also, the controller 1701 may store the second body markers 4440, 4450, and 4460 in the memory of the apparatus 101.

- the controller 1701 may rotate the portion of the first body marker 4420 about the central axis thereof according to taps performed by the user.

- the taps may be continuous or discontinuous, but are not limited thereto.
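
The tap-driven rotation of FIGS. 10B to 10D can be modeled as a fixed step applied once per tap. The sketch below is illustrative only; `rotate_on_tap` and the 90° default step are assumptions taken from the example in the text, which notes that the rule may differ.

```python
def rotate_on_tap(angle_deg, step_deg=90):
    """Advance the rotation about the central axis by one step per tap."""
    return (angle_deg + step_deg) % 360


# Four consecutive taps starting from the unrotated first body marker.
angle = 0
history = []
for _ in range(4):
    angle = rotate_on_tap(angle)
    history.append(angle)
# The first three entries correspond to FIGS. 10B, 10C, and 10D;
# the fourth tap returns the marker to its initial view.
```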

- the controller 1701 may rotate the first body marker 4420 about the central axis thereof in a clockwise direction. For example, when the user inputs a drag gesture in a clockwise direction from a first point to a second point, in which the distance between the first point and the second point is 1 mm, the controller 1701 may rotate the first body marker 4420 by 20° in a clockwise direction.

- the exemplary embodiments are not limited thereto. The distance of the drag gesture and a rotation degree of the first body marker 4420 may vary.
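
The example ratio above (a 1 mm drag producing a 20° rotation) amounts to a linear mapping from drag distance to angle. The sketch below assumes that linear mapping and a hypothetical `pixels_to_mm` helper for touch screens that report distances in pixels; neither the helper nor the linearity is stated in the source.

```python
def pixels_to_mm(pixels, dpi):
    """Convert a drag length in pixels to millimetres (1 inch = 25.4 mm)."""
    return pixels / dpi * 25.4


def rotation_from_drag(distance_mm, degrees_per_mm=20.0):
    """Map a drag distance to a rotation angle, wrapped to [0, 360)."""
    return (distance_mm * degrees_per_mm) % 360.0


one_mm = rotation_from_drag(1.0)        # the 1 mm example from the text
quarter_turn = rotation_from_drag(4.5)  # 4.5 mm at 20 degrees per mm
```

Keeping `degrees_per_mm` as a parameter reflects the statement that the drag distance and the rotation degree may vary between embodiments.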

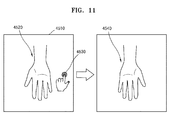

- FIGS. 11A and 11B are diagrams illustrating another example of the controller 1701 generating a second body marker, according to an exemplary embodiment.

- the controller 1701 may generate a second body marker 4540 by flipping a first body marker 4520 about the vertical axis according to a user input.

- FIG. 11A illustrates an example of a screen 4510 displaying the first body marker 4520.

- the controller 1701 may flip the first body marker 4520 about the vertical axis.

- FIG. 11B illustrates an example of the second body marker 4540 generated by flipping the first body marker 4520 about the vertical axis.

- the controller 1701 may flip the first body marker 4520 about the vertical axis to show an inverse image of the palm of the right hand.

- the controller 1701 may generate the second body marker 4540 by flipping the first body marker 4520 about the vertical axis. Also, the controller 1701 may store the second body marker 4540 in the memory of the apparatus 101.
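
If a body marker is stored as an outline of 2D points, flipping it about the vertical axis is a mirror of the x coordinates. The sketch below assumes that point-list representation and uses the horizontal midpoint of the outline as the default axis; both are illustrative choices, not details from the source.

```python
def flip_about_vertical_axis(points, axis_x=None):
    """Mirror outline points left-to-right about the vertical line x = axis_x.

    If axis_x is not given, the horizontal midpoint of the outline is used
    so that the flipped marker stays in the same place on the screen.
    """
    if axis_x is None:
        xs = [x for x, _ in points]
        axis_x = (min(xs) + max(xs)) / 2.0
    return [(2.0 * axis_x - x, y) for x, y in points]


# Hypothetical outline of a marker; the coordinates are arbitrary.
outline = [(0.0, 0.0), (4.0, 0.0), (4.0, 2.0)]
flipped = flip_about_vertical_axis(outline)
```

Applying the flip twice restores the original outline, so the same user input can toggle between the two views.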

- FIGS. 12A and 12B are diagrams illustrating another example of the controller 1701 generating a second body marker, according to an exemplary embodiment.

- the controller 1701 may generate a second body marker 4640 by flipping over a first body marker 4620 (i.e., rotating the first body marker 4620 by 180° about a central axis thereof in a clockwise or counterclockwise direction) according to a user input.

- FIG. 12A illustrates an example of a screen 4610 displaying the first body marker 4620.

- the controller 1701 may flip over the first body marker 4620.

- FIG. 12B illustrates an example of the second body marker 4640 generated by flipping over the first body marker 4620.

- the controller 1701 may flip over the first body marker 4620 to display the back of the right hand.

- the controller 1701 may generate the second body marker 4640 by flipping over the first body marker 4620. Also, the controller 1701 may store the second body marker 4640 in the memory of the apparatus 101.

- the controller 1701 may generate a second body marker that represents a single object by using a first body marker that represents a single object.

- a body marker may represent a plurality of objects. For example, when a medical image is obtained by capturing the uterus of a pregnant woman having twins, a body marker added to the medical image has to represent the twins (that is, a plurality of objects).

- the controller 1701 may generate a second body marker that represents a plurality of objects by using a first body marker that represents a single object.

- examples of the controller 1701 generating a second body marker that represents a plurality of objects will be described with reference to FIGS. 13A to 14B .

- FIGS. 13A and 13B are diagrams illustrating another example of the controller 1701 generating a second body marker, according to an exemplary embodiment.

- FIG. 13A illustrates an example of a screen 4710 displaying a first body marker 4720 that represents a single object. For convenience of description, it is assumed that the first body marker 4720 represents a fetus.

- the controller 1701 may change the number of objects represented by a body marker, according to a user input. For example, when the user selects (e.g., taps) an icon 4730 displayed at a predetermined location on the screen 4710, the display 1401 may display a pop-up window 4740 showing the number of objects that may be represented by a body marker. Accordingly, the user may set the number of objects to be represented by the body marker.

- the controller 1701 may generate a second body marker 4750 that includes 2 fetuses, as shown in FIG. 13B . Also, the controller 1701 may store the second body marker 4750 in the memory of the apparatus 101.
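
Generating a multi-object marker from a single-object marker can be sketched as copying a template object the requested number of times. The `MarkerObject` class, the `with_object_count` function, and the side-by-side placement with a fixed `spacing` are assumptions for illustration.

```python
from dataclasses import dataclass, replace


@dataclass(frozen=True)
class MarkerObject:
    """Hypothetical description of one object inside a body marker."""
    kind: str
    x: float = 0.0
    y: float = 0.0
    angle_deg: float = 0.0


def with_object_count(template, count, spacing=1.0):
    """Return `count` copies of the template placed side by side."""
    if count < 1:
        raise ValueError("a body marker must represent at least one object")
    return [replace(template, x=template.x + i * spacing) for i in range(count)]


single_fetus = MarkerObject("fetus")
# The user chooses 2 in the pop-up window, e.g. for twins.
twins = with_object_count(single_fetus, 2)
```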

- the controller 1701 may modify shapes, orientations, or positions of a plurality of objects included in a body marker. For example, when a medical image is obtained by capturing the uterus of a pregnant woman having twins, the fetuses may be oriented in different directions. Therefore, the controller 1701 may modify the shapes, orientations, or positions of the plurality of objects in the body marker to thus generate a body marker that accurately shows information about the objects.

- An example of the controller 1701 generating a second body marker by modifying the shapes, orientations, or positions of a plurality of objects included in a body marker will be described with reference to FIGS. 14A and 14B .

- FIGS. 14A and 14B are diagrams illustrating another example of the controller 1701 generating a second body marker, according to an exemplary embodiment.

- FIG. 14A illustrates an example of a screen 4810 displaying a first body marker 4820 that includes a plurality of objects.

- the first body marker 4820 includes twins.

- the controller 1701 may generate the first body marker 4820 by using a body marker that includes a single fetus.

- the controller 1701 may select an object from among objects included in a body marker. For example, when the user selects (e.g., taps) a fetus 4833 from fetuses 4831 and 4833 displayed on the screen 4810, the controller 1701 may determine the fetus 4833 as an object to be reshaped, reoriented, or repositioned. In this case, the display 1401 may display the fetuses 4831 and 4833 such that the fetus 4833 that is selected is distinguished from the fetus 4831 that is not selected (for example, change in thickness or line color). Thus, the user may easily recognize the selected fetus 4833.

- the controller 1701 may modify a shape, orientation, or position of the fetus 4833 according to a user input.

- the user input is the same as described above with reference to FIGS. 8A to 12B .

- the controller 1701 may rotate the fetus 4833 such that the head is directed toward the point 4850 where the drag gesture stops.

- the controller 1701 may generate a second body marker 4860 in which a location of the fetus 4833 of FIG. 14A is modified. Also, the controller 1701 may store the second body marker 4860 in the memory of the apparatus 101.
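
Modifying only the selected object while leaving the others unchanged can be sketched as below. The `Fetus` record and `rotate_selected` function are hypothetical, and the reference numerals 4831 and 4833 are reused as identifiers purely for readability.

```python
from dataclasses import dataclass, replace


@dataclass(frozen=True)
class Fetus:
    """Hypothetical record for one fetus drawn in a body marker."""
    ident: int
    heading_deg: float


def rotate_selected(objects, selected_id, new_heading_deg):
    """Return a new list in which only the selected object is re-oriented."""
    return [
        replace(obj, heading_deg=new_heading_deg % 360.0)
        if obj.ident == selected_id
        else obj
        for obj in objects
    ]


first_marker = [Fetus(4831, 0.0), Fetus(4833, 0.0)]
# The user taps fetus 4833 and drags until its head points at 135 degrees.
second_marker = rotate_selected(first_marker, 4833, 135.0)
```

Returning a new list instead of editing in place keeps the first body marker available, which fits the description of storing the second body marker separately in the memory.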

- Although the second body markers described above with reference to FIGS. 7A to 14B are 2-dimensional, the exemplary embodiments are not limited thereto. In other words, the controller 1701 may generate a 3-dimensional second body marker.

- FIG. 15 is a block diagram illustrating an apparatus 102 for generating a body marker, according to another exemplary embodiment.

- the apparatus 102 may include a controller 1702, a display 1402, and an input unit 1601. All or some of the controller 1702, the display 1402, and the input unit 1601 may be implemented as software modules, but are not limited thereto. Some of the controller 1702, the display 1402, and the input unit 1601 may be implemented as hardware. Also, each of the display 1402 and the input unit 1601 may include an independent control module.

- the controller 1702 may be the same as the controller 1701 of FIG. 4 .

- the display 1402 may be the same as the display 1401 of FIG. 4 .

- the input unit 1601 may be the same as the input unit 1600 of FIG. 2 . If the apparatus 102 is a component included in an ultrasound imaging device, then, in addition to the controller 1702, the display 1402, and the input unit 1601, the apparatus 102 may further include the ultrasound transceiver 1100, the image processor 1200, the communication module 1300, and the memory 1500 shown in FIG. 2 .

- the input unit 1601 may receive a user input for selecting a first body marker corresponding to an object in a medical image.

- the user input refers to an input selecting the first body marker from prestored body markers.

- the controller 1702 may select the first body marker based on the user input transmitted from the input unit 1601. Also, the controller 1702 may generate a second body marker by changing a shape of the first body marker according to a user input received after the user input for selecting the first body marker. Since examples of the controller 1702 generating the second body marker have been described above in detail with reference to FIGS. 8A to 14B , descriptions of the examples will not be repeated.

- FIGS. 16A and 16B are diagrams illustrating an example of an input unit receiving a user input for selecting a first body marker, according to an exemplary embodiment.

- a screen 5110 displays a plurality of body markers 5120 prestored in the apparatus 102.

- the body markers 5120 may be sorted into application groups 5130, as described above with reference to FIG. 5A .

- the user may select a body marker 5140 from among the body markers 5120 displayed on the screen 5110.

- when the input unit 1601 includes hardware components, such as a keyboard, a mouse, a trackball, and a jog switch, and a software module for driving the hardware components, the user may click the body marker 5140 from among the body markers 5120.

- when the input unit 1601 includes a touch screen and a software module for driving the touch screen, the user may tap the body marker 5140 from among the body markers 5120.

- the controller 1702 may select a first body marker based on the user input.

- the body marker 5140 selected by the user is selected as the first body marker.
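- a minimal sketch of how a click or tap position might be resolved to one of the displayed body markers is given below. The rectangle layout, the marker names, and the function `marker_at` are hypothetical and serve only to illustrate the selection step; they are not taken from the described apparatus.

```python
def marker_at(position, thumbnails):
    """Return the id of the thumbnail containing `position`, or None.

    `thumbnails` is a hypothetical list of
    (marker_id, (left, top, width, height)) entries in screen pixels.
    """
    x, y = position
    for marker_id, (left, top, width, height) in thumbnails:
        if left <= x < left + width and top <= y < top + height:
            return marker_id
    return None


if __name__ == "__main__":
    # Two thumbnails laid out side by side with a 10-pixel gap.
    screen = [
        ("fetus", (0, 0, 50, 50)),
        ("liver", (60, 0, 50, 50)),
    ]
    print(marker_at((10, 10), screen))  # inside the first thumbnail
    print(marker_at((55, 10), screen))  # in the gap between thumbnails
```

The same lookup serves both a mouse click and a touch-screen tap, since each yields a single screen position.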

- the display 1402 may display a first body marker 5160 on a screen 5150.

- the controller 1702 may select a body marker determined by the user as the first body marker.

- the exemplary embodiments are not limited thereto.

- the controller 1702 may select the first body marker based on a shape of an object shown in a medical image.

- an example of the controller 1702 selecting a first body marker based on a shape of an object will be described with reference to FIGS. 17, 18A, and 18B.

- FIG. 17 is a block diagram illustrating an apparatus 103 for generating a body marker, according to another exemplary embodiment.

- the apparatus 103 may include a controller 1703, a display 1403, and an image processor 1201. All or some of the controller 1703, the display 1403, and the image processor 1201 may be implemented as software modules, but are not limited thereto. Some of the controller 1703, the display 1403, and the image processor 1201 may be implemented as hardware. Also, each of the display 1403 and the image processor 1201 may include an independent control module.

- the controller 1703 may be the same as the controller 1701 of FIG. 4.

- the display 1403 may be the same as the display 1401 of FIG. 4.

- the image processor 1201 may be the same as the image processor 1200 of FIG. 2 .

- the apparatus 103 may further include the ultrasound transceiver 1100, the communication module 1300, the memory 1500, and the input unit 1600 shown in FIG. 2 .

- the image processor 1201 selects a portion of an object from a medical image. For example, the image processor 1201 may detect outlines of the object in the medical image, connect the detected outlines, and thus select the portion of the object. The image processor 1201 may select the portion of the object by using various methods, for example, a thresholding method, a K-means algorithm, a compression-based method, a histogram-based method, edge detection, a region-growing method, a partial differential equation-based method, and a graph partitioning method. Since the methods above are well known to one of ordinary skill in the art, detailed descriptions thereof will be omitted.
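- two of the listed methods, thresholding and region growing, can be sketched on a small intensity grid as follows. The grid values, the threshold level, the seed position, and the use of 4-connectivity are assumptions chosen for illustration; the description does not state which parameters the image processor 1201 would use.

```python
from collections import deque


def threshold(image, level):
    """Binary mask: 1 where the pixel intensity is at least `level`, else 0."""
    return [[1 if px >= level else 0 for px in row] for row in image]


def grow_region(mask, seed):
    """Collect the 4-connected foreground pixels reachable from `seed`."""
    rows, cols = len(mask), len(mask[0])
    if not mask[seed[0]][seed[1]]:
        return set()
    region = {seed}
    queue = deque([seed])
    while queue:
        r, c = queue.popleft()
        for nr, nc in ((r - 1, c), (r + 1, c), (r, c - 1), (r, c + 1)):
            inside = 0 <= nr < rows and 0 <= nc < cols
            if inside and mask[nr][nc] and (nr, nc) not in region:
                region.add((nr, nc))
                queue.append((nr, nc))
    return region


if __name__ == "__main__":
    image = [
        [0, 9, 9, 0],
        [0, 9, 0, 0],
        [0, 0, 0, 8],
    ]
    mask = threshold(image, 5)
    # Only pixels connected to the seed are kept; the bright pixel
    # in the bottom-right corner belongs to a separate region.
    print(sorted(grow_region(mask, (0, 1))))
```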

- the controller 1703 may select a first body marker based on information of the shape of the object. For example, from among a plurality of body markers stored in the apparatus 103, the controller 1703 may select a first body marker having a shape that is the most similar to the object.
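- one possible way to rank stored body markers by shape similarity is sketched below. The Jaccard overlap of pixel sets is an assumed similarity measure, the marker names are hypothetical, and the sketch presumes that the object and the markers are already aligned on a common grid; the description does not specify how similarity is measured.

```python
def jaccard(a, b):
    """Overlap of two pixel sets: |a & b| / |a | b| (1.0 if both are empty)."""
    a, b = set(a), set(b)
    union = a | b
    return len(a & b) / len(union) if union else 1.0


def select_first_marker(object_pixels, stored_markers):
    """Return the name of the stored marker whose shape overlaps the object most.

    `stored_markers` is a hypothetical mapping of name -> set of (row, col).
    """
    return max(
        stored_markers,
        key=lambda name: jaccard(object_pixels, stored_markers[name]),
    )


if __name__ == "__main__":
    object_pixels = {(0, 0), (0, 1), (1, 0)}
    stored = {
        "square": {(0, 0), (0, 1), (1, 0), (1, 1)},
        "dot": {(5, 5)},
    }
    print(select_first_marker(object_pixels, stored))
```

A production system would likely normalize scale and position before comparing, which this sketch leaves out.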

- the controller 1703 may generate a second body marker by modifying the shape, orientation, or position of the first body marker according to a user input received after the first body marker is selected. Since examples of the controller 1703 generating the second body marker have been described above in detail with reference to FIGS. 8A to 14B, descriptions of the examples will not be repeated.

- FIGS. 18A and 18B are diagrams illustrating an example of an image processor selecting a portion of an object from a medical image, according to an exemplary embodiment.

- a screen 5210 displays a medical image 5220 showing an object 5230.

- the image processor 1201 may select a portion of the object 5230 from the medical image 5220.

- the image processor 1201 may use any one of the methods described with reference to FIG. 17 to select the portion of the object 5230 from the medical image 5220.

- the controller 1703 may select a first body marker based on the shape of the object 5230. For example, from among the plurality of body markers stored in the apparatus 103, the controller 1703 may select a body marker 5240 having a shape that is the most similar to the object 5230 as the first body marker. Also, as shown in FIG. 16B , the display 1402 may display the first body marker 5240 on a screen 5250.

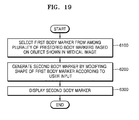

- FIG. 19 is a flowchart illustrating a method of generating a body marker, according to an exemplary embodiment.

- the method of generating the body marker includes operations sequentially performed by the ultrasound diagnosis systems 1000, 1001, and 1002 respectively shown in FIGS. 1A, 1B and 2 or the apparatuses 100, 101, 102, and 103 respectively shown in FIGS. 4 , 15 , and 17 . Therefore, all of the above-described features and elements of the ultrasound diagnosis systems 1000, 1001, and 1002 respectively shown in FIGS. 1A, 1B and 2 and the apparatuses 100, 101, 102, and 103 respectively shown in FIGS. 4 , 15 , and 17 apply to the method of FIG. 19 .

- a controller selects a first body marker from among a plurality of prestored body markers based on an object shown in a medical image.

- the plurality of body markers may be stored in a memory of an apparatus for generating a body marker.

- the controller generates a second body marker by changing a shape of the first body marker according to a user input.

- the controller may generate the second body marker by flipping the first body marker about a vertical axis according to the user input.

- the controller may generate the second body marker by rotating the first body marker about a central axis thereof according to the user input.

- the controller may generate the second body marker by rotating the first body marker in a clockwise or counterclockwise direction according to the user input.
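- the flip about a vertical axis and the clockwise or counterclockwise rotation can be sketched as transforms on the outline points of a marker. The point-list representation, the function names, and the mathematical convention that the y axis points up (so a positive angle is counterclockwise) are assumptions of this sketch, which covers only the flip and the in-plane rotation.

```python
import math


def flip_about_vertical_axis(points, axis_x):
    """Mirror each (x, y) point across the vertical line x = axis_x."""
    return [(2 * axis_x - x, y) for x, y in points]


def rotate_in_plane(points, center, degrees, clockwise=False):
    """Rotate points about `center`; counterclockwise unless `clockwise` is set.

    Assumes the y axis points up. On a screen whose y axis points down,
    the visual direction of the turn is reversed.
    """
    angle = math.radians(-degrees if clockwise else degrees)
    cx, cy = center
    c, s = math.cos(angle), math.sin(angle)
    return [
        (cx + (x - cx) * c - (y - cy) * s, cy + (x - cx) * s + (y - cy) * c)
        for x, y in points
    ]


if __name__ == "__main__":
    first_marker = [(1, 2), (3, 4)]
    print(flip_about_vertical_axis(first_marker, axis_x=2))
    print(rotate_in_plane([(1, 0)], (0, 0), 90))
    print(rotate_in_plane([(1, 0)], (0, 0), 90, clockwise=True))
```

Both transforms return a new point list, so the first body marker is left unmodified and the result can serve as the second body marker.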

- a display displays the second body marker.

- the display may display the first body marker and the second body marker on a single screen.

- a body marker that corresponds to a current location or direction of an object shown in a medical image may be generated. Also, the user may spend less time selecting the body marker that corresponds to the current location or direction of the object from among prestored body markers.

- exemplary embodiments can also be implemented through computer-readable code/instructions in/on a medium, e.g., a computer-readable medium, to control at least one processing element to implement any above described exemplary embodiment.

- the medium can correspond to any medium/media permitting the storage and/or transmission of the computer-readable code.

- the computer-readable code can be recorded/transferred on a medium in a variety of ways, with examples of the medium including recording media, such as magnetic storage media (e.g., ROM, floppy disks, hard disks, etc.) and optical recording media (e.g., CD-ROMs, or DVDs), and transmission media such as Internet transmission media.

- the medium may be such a defined and measurable structure including or carrying a signal or information, such as a device carrying a bitstream according to one or more exemplary embodiments.

- the media may also be a distributed network, so that the computer-readable code is stored/transferred and executed in a distributed fashion.

- the processing element could include a processor or a computer processor, and processing elements may be distributed and/or included in a single device.

Abstract

Description

- This application claims the benefit of Korean Patent Application No. 10-2014-0180499, filed on December 15, 2014.

- One or more exemplary embodiments relate to a method, an apparatus, and a system for generating a body marker indicating an object.

- Ultrasound diagnosis apparatuses transmit ultrasound signals generated by transducers of a probe to an object and receive echo signals reflected from the object, thereby obtaining at least one image of an internal part of the object (e.g., soft tissues or blood flow). In particular, ultrasound diagnosis apparatuses are used for medical purposes including observation of the interior of an object, detection of foreign substances, and diagnosis of damage to the object. Such ultrasound diagnosis apparatuses provide high stability, display images in real time, and are safe due to the lack of radioactive exposure, compared to X-ray apparatuses. Therefore, ultrasound diagnosis apparatuses are widely used together with other image diagnosis apparatuses including a computed tomography (CT) apparatus, a magnetic resonance imaging (MRI) apparatus, and the like.

- In general, a body marker is added to an ultrasound image when a user selects one of pre-generated body markers. However, it may be difficult to accurately determine a shape or an orientation of an object shown in the ultrasound image based on the body marker shown in the ultrasound image. Also, it may require a large amount of time for the user to select one of the pre-generated body markers.

- One or more exemplary embodiments include a method, an apparatus, and a system for generating a body marker indicating an object. One or more exemplary embodiments also include a non-transitory computer-readable recording medium having recorded thereon a program which, when executed by a computer, performs the method.

- Additional aspects will be set forth in part in the description which follows and, in part, will be apparent from the description, or may be learned by practice of the presented exemplary embodiments.

- According to one or more exemplary embodiments, a method of generating a body marker includes selecting a first body marker from among a plurality of prestored body markers based on an object shown in a medical image, generating a second body marker by modifying the first body marker according to a user input, and displaying the second body marker.

- The second body marker may include a body marker generated by flipping the first body marker about a vertical axis.

- The second body marker may include a body marker generated by rotating the first body marker about a central axis of the first body marker.

- The second body marker may include a body marker generated by rotating the first body marker in a clockwise direction.

- The second body marker may include a body marker generated by rotating the first body marker in a counterclockwise direction.