EP0248533A2 - Method, apparatus and system for recognising broadcast segments - Google Patents

Method, apparatus and system for recognising broadcast segments

- Publication number

- EP0248533A2 (EP87303968A)

- Authority

- EP

- European Patent Office

- Prior art keywords

- signature

- segment

- frame

- parametized

- segments

- Prior art date

- Legal status (The legal status is an assumption and is not a legal conclusion. Google has not performed a legal analysis and makes no representation as to the accuracy of the status listed.)

- Granted

Classifications

-

- G—PHYSICS

- G11—INFORMATION STORAGE

- G11B—INFORMATION STORAGE BASED ON RELATIVE MOVEMENT BETWEEN RECORD CARRIER AND TRANSDUCER

- G11B27/00—Editing; Indexing; Addressing; Timing or synchronising; Monitoring; Measuring tape travel

- G11B27/10—Indexing; Addressing; Timing or synchronising; Measuring tape travel

- G11B27/19—Indexing; Addressing; Timing or synchronising; Measuring tape travel by using information detectable on the record carrier

- G11B27/28—Indexing; Addressing; Timing or synchronising; Measuring tape travel by using information detectable on the record carrier by using information signals recorded by the same method as the main recording

-

- G—PHYSICS

- G06—COMPUTING OR CALCULATING; COUNTING

- G06V—IMAGE OR VIDEO RECOGNITION OR UNDERSTANDING

- G06V20/00—Scenes; Scene-specific elements

- G06V20/40—Scenes; Scene-specific elements in video content

-

- H—ELECTRICITY

- H04—ELECTRIC COMMUNICATION TECHNIQUE

- H04H—BROADCAST COMMUNICATION

- H04H60/00—Arrangements for broadcast applications with a direct linking to broadcast information or broadcast space-time; Broadcast-related systems

- H04H60/35—Arrangements for identifying or recognising characteristics with a direct linkage to broadcast information or to broadcast space-time, e.g. for identifying broadcast stations or for identifying users

- H04H60/37—Arrangements for identifying or recognising characteristics with a direct linkage to broadcast information or to broadcast space-time, e.g. for identifying broadcast stations or for identifying users for identifying segments of broadcast information, e.g. scenes or extracting programme ID

-

- H—ELECTRICITY

- H04—ELECTRIC COMMUNICATION TECHNIQUE

- H04H—BROADCAST COMMUNICATION

- H04H60/00—Arrangements for broadcast applications with a direct linking to broadcast information or broadcast space-time; Broadcast-related systems

- H04H60/56—Arrangements characterised by components specially adapted for monitoring, identification or recognition covered by groups H04H60/29-H04H60/54

- H04H60/58—Arrangements characterised by components specially adapted for monitoring, identification or recognition covered by groups H04H60/29-H04H60/54 of audio

-

- H—ELECTRICITY

- H04—ELECTRIC COMMUNICATION TECHNIQUE

- H04H—BROADCAST COMMUNICATION

- H04H60/00—Arrangements for broadcast applications with a direct linking to broadcast information or broadcast space-time; Broadcast-related systems

- H04H60/56—Arrangements characterised by components specially adapted for monitoring, identification or recognition covered by groups H04H60/29-H04H60/54

- H04H60/59—Arrangements characterised by components specially adapted for monitoring, identification or recognition covered by groups H04H60/29-H04H60/54 of video

Definitions

- This invention relates to the automatic recognition of broadcast segments, particularly commercial advertisements broadcast by television stations.

- real-time continuous pattern recognition of broadcast segments is accomplished by constructing a digital signature from a known specimen of a segment which is to be recognized.

- the signature is constructed by digitally parametizing the segment, selecting portions from among random frame locations throughout the segment in accordance with a set of predefined rules (i.e. different frames are selected for each segment, but the selection is always based on the same criteria) to form the signature, and associating with the signature the frame locations of the portions.

- the signature and associated frame locations are stored in a library of signatures. Each signature in the library is identified with a particular segment to be recognized.

- a broadcast signal is monitored and digitally parametized.

- For each frame of the parametized monitored signal, the library is searched for any signature that may be associated with that frame. Using the frame information stored with the signature, each of the potentially associated stored signatures is compared to the appropriate frames of the parametized signal. If a stored signature compares with the monitored data, a match is declared and the broadcast segment is identified using identification data associated with the signature.

- a digital keyword derived from a designated frame in the parametized segment is identified as associated with the segment signature, but the association of that keyword with a particular segment signature is nonexclusive -- i.e., the same keyword can be associated with more than one signature.

- a plurality of additional frames is selected from among random frame locations throughout the segment in accordance with a set of predefined rules and, in a library of signatures, the keyword representing the designated frame and the words representing the additional frames are stored together with the offsets of the additional frames relative to the designated frame.

- a broadcast signal is monitored and digitally parametized.

- For each monitored digital word of the parametized monitored signal, the library is searched for any signature associated with a keyword corresponding to that monitored word, and the additional words of any such signature are compared with those words of the parametized monitored signal which are separated from the monitored word by the stored offset amounts. If the stored signature compares with the monitored data, a match is declared and the broadcast segment is identified using identification data associated with the signature data.

- a system additionally has means for detecting the occurrence of artifacts within the monitored signal characteristic of potential unknown broadcast segments to be recognized, and means for classifying and identifying such potential unknown segments.

- One embodiment of such a system includes a plurality of local sites in different geographic regions for monitoring broadcasts in those regions, and a central site linked to the local sites by a communications network.

- Each local site maintains a library of segment signatures applicable to its geographic region, and each has at least means for performing the storing, monitoring, searching, comparing and detecting tasks described above.

- the central site maintains a global library containing all of the information in all of the local libraries.

- Classification and identification of potential unknown segments involves the generation at the local site of compressed audio and video information, a temporary digital signature, and parametized monitored signal information for a potential unknown segment not found in the library of the local site. At least the parametized monitored signal information and the temporary signature are transmitted to the central site over the communications network. At the central site, the temporary signatures, which are stored in a special portion of the global library, are searched, and the signatures stored in the main portion of the global library are also searched, and are compared with the transmitted parametized monitored signal information to determine if the potential unknown segment was previously received by another local site and is therefore already known to the system. If so, and if a global signature has already been generated, the temporary signature at the originating local site is replaced with the previously generated global signature.

- the present invention can identify a segment of a broadcast signal by pure continuous pattern recognition on a real-time basis. No codes need be inserted into the broadcast signal prior to transmission and no "cues" or “signalling events” (e.g., fades-to-black or scene changes) that occur in the broadcast need be used in the recognition process.

- the broadcast signal can be either a radio broadcast, for which audio information is obviously used for the recognition process, or a television broadcast, for which audio information, video information, or both, can be used, and can be an over-the-air signal, a cable television signal, or a videotape signal.

- the broadcast signal is parametized to yield a digital data stream composed, preferably, of one 16-bit digital word for every 1/30 of a second of signal.

- the audio data is synchronized with the video frame rate to form an "audio frame".

- the information is then processed in the same way whether it originated as audio or video.

- a digital signature is constructed for each segment to be recognized.

- the construction of the signature is discussed in more detail below. However, it preferably comprises 128 bits representing eight 16-bit words corresponding to eight frames of signal information selected from among random frame locations throughout the segment in accordance with a set of predefined rules.

- the incoming audio or video signal is digitized and parametized to yield, preferably, a 16-bit digital word for each frame of data. This also is discussed in more detail below.

- As the incoming signal is received, it is read into a buffer which holds, e.g., two minutes of signal data. Each word of this data is assumed to be the first word of an eight-word signature.

- offset information indicating the spacing (i.e., the number of frames) between each such word and the first signature word.

- the library of signatures of known segments to be recognized is searched for signatures beginning with that word. Using the offset information stored with the signatures, subsequent received words, already in the buffer, are compared to the remaining signature words to determine whether or not they match those remaining words of the signature.

- the system of the invention be capable of comparing, in real time, incoming frame signature data to many tens of thousands of segment signatures representative of as many different commercials.

- the number of signatures that can be stored and searched within the real-time limitation of 1/30 second and, hence, the number of broadcast segments which can be monitored can be increased to several hundred thousand using a designated frame keyword lookup data reduction method.

- When a signature is constructed for a known segment, one frame from the segment is chosen, using criteria to be discussed below, as the key frame, its digital parametized equivalent becoming the keyword.

- the signature is still, preferably, eight 16-bit words, but the stored offset information now represents spacing from the keyword rather than from the first signature word.

- the keyword can be one of the signature data words, in which case the offset for that word is zero, or it can be a ninth word.

- the keyword also need not temporally precede all of the other signature words and often will not.
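The keyword lookup described above can be sketched as follows. This is a minimal illustration rather than the patented implementation: the names `SegmentSignature`, `build_library` and `candidates_at` are hypothetical, the example uses three signature words instead of the preferred eight for brevity, and offsets are assumed to be signed frame counts relative to the keyword.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass(frozen=True)
class SegmentSignature:
    segment_id: str   # hypothetical identifier for the known segment
    keyword: int      # 16-bit word derived from the designated key frame
    words: tuple      # signature words (eight in the preferred form)
    offsets: tuple    # signed frame offsets of each word relative to the keyword


def build_library(signatures):
    """Index signatures by keyword; one keyword may map to several signatures."""
    library = defaultdict(list)
    for sig in signatures:
        library[sig.keyword].append(sig)
    return library


def candidates_at(library, stream, position):
    """For the word at `position`, return (signature, stream words at its offsets)."""
    found = []
    for sig in library.get(stream[position], []):
        indices = [position + off for off in sig.offsets]
        # Skip candidates whose offsets fall outside the buffered data.
        if all(0 <= i < len(stream) for i in indices):
            found.append((sig, [stream[i] for i in indices]))
    return found


# The keyword sits in the middle of the signature, so one offset is negative.
sig = SegmentSignature("ad-1", keyword=0xBEEF,
                       words=(0x1111, 0xBEEF, 0x2222), offsets=(-2, 0, 3))
stream = [0, 0x1111, 0, 0xBEEF, 0, 0, 0x2222, 0]
library = build_library([sig])
hits = candidates_at(library, stream, 3)
```

Only the signatures filed under the monitored word are examined, which is what keeps the per-frame work small; the words gathered at the stored offsets are then compared with `sig.words`.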

- the present invention need not rely during the recognition process on any cues, signalling events or pre-established codes in the broadcast signal. It simply monitors the incoming signal and performs continuous pattern recognition.

- Video information is parametized and digitized as described below. Although, in the description which follows, parametization is based upon signal luminance, it is to be understood that other or additional attributes of a video signal could be used for this purpose.

- a number of areas, preferably 16 (although more or fewer areas may be used), of the video field or frame are selected.

- the size of each area is preferably eight-by-two pixels, although areas of other sizes could be used.

- the luminance of each area is averaged to produce an absolute gray scale value from, e.g., 0-255. This value is normalized to a bit value of 0 or 1 by comparing the value to one of the following:

- the goal in selecting which comparison to make is to maximize entropy -- i.e., to minimize correlation between the areas.

- Correlation refers to the degree to which the luminance value of one area is related to or follows that of another area.

- the fourth comparison is preferred, with the previous frame being one to four frames behind the current frame.

- the distribution of the sixteen areas in the field or frame, as well as the sixteen areas in the previous field or frame, is preferably asymmetrical about the center of the field or frame, because it has been empirically determined that video frames are composed in such a way that there is too much correlation between symmetrically located areas.

- A sample partial distribution of areas within a frame is shown in FIG. 1.

- Frame 10 is actually a video field of sampled pixels.

- frame will be used to mean either field or frame.

- a bit value of 1 is returned if the luminance of the area in question exceeds the luminance of the comparison area. If the luminance of the area in question is less than or equal to the luminance of the comparison area, a bit value of 0 is returned.

- a 16-bit mask word is created along with each 16-bit frame signature.

- the mask word represents the reliability of the frame signature. For each bit in the frame signature, if the absolute value of the luminance difference used to calculate that bit value is less than a threshold or "guard band" value, the luminance value is assumed to have been susceptible to error (because of noise in transmission), and so the mask bit for that bit is set to 0, indicating suspect data. If the absolute value of the luminance difference is greater than or equal to the guard band value, the luminance value is assumed to be much greater than the noise level and the corresponding mask bit is set to 1, indicating reliable data. As discussed below, the number of ones or zeroes in the mask word is used in generating segment signatures and setting comparison thresholds.
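A minimal sketch of how a frame signature and its mask word could be derived from the luminance comparisons described above. The function name, the bit ordering (area 0 mapped to the least significant bit) and the guard band value of 8 are assumptions; the source does not give a numeric guard band.

```python
def frame_signature(area_luma, comparison_luma, guard_band=8):
    """Return (signature, mask) as 16-bit integers.

    area_luma, comparison_luma: 16 average luminance values each (e.g. 0-255).
    guard_band: assumed threshold below which a difference is treated as suspect.
    """
    if len(area_luma) != 16 or len(comparison_luma) != 16:
        raise ValueError("expected 16 areas")
    signature = 0
    mask = 0
    for bit, (a, c) in enumerate(zip(area_luma, comparison_luma)):
        if a > c:                      # 1 only if the area exceeds its comparison area
            signature |= 1 << bit
        if abs(a - c) >= guard_band:   # large difference: reliable data, mask bit 1
            mask |= 1 << bit
    return signature, mask


area = [100] * 16
comparison = [90, 100, 110, 97] + [100] * 12
signature, mask = frame_signature(area, comparison)
```

In this example only areas 0 and 3 are brighter than their comparison areas, and only areas 0 and 2 differ by at least the guard band, so `signature` is `0b1001` and `mask` is `0b0101`.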

- An audio "frame" signature, if such a signature is used, can be constructed in the same format as a video frame signature so that it can be processed in the same way.

- Such an audio signature may be used to recognize broadcast radio segments, or may be used to confirm the identification of a video segment the data for which includes a high percentage of suspect data.

- the use of audio signatures is not necessary to the invention.

- the parametized "frame signature" information is stored in a circular buffer large enough to hold, preferably, approximately twice as much signal information as the longest broadcast segment to be identified.

- When a segment signature is being compared to the frame signature data, for each frame that passes the observation point, eight comparisons between the segment signature data and the frame signature data are made, one word at a time, using the offset data stored with the segment signature to choose the frame signatures for comparison.

- the frame signature and segment signature words are compared using a bit-by-bit exclusive-NOR operation, which returns a 1 when both bits are the same and a 0 when they are different. The number of ones resulting from the exclusive-NOR operation is accumulated over all eight word comparisons.
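The exclusive-NOR comparison and accumulation can be illustrated with a short sketch. The helper names `xnor16` and `matching_bits` are hypothetical; the logic only assumes 16-bit words as stated in the text.

```python
WORD_MASK = 0xFFFF  # words are 16 bits wide


def xnor16(a, b):
    """Bitwise exclusive-NOR of two 16-bit words: 1 where the bits agree."""
    return ~(a ^ b) & WORD_MASK


def matching_bits(signature_words, frame_words):
    """Accumulate the number of agreeing bits over all word comparisons."""
    return sum(bin(xnor16(s, f)).count("1")
               for s, f in zip(signature_words, frame_words))
```

With eight words per signature the accumulated count ranges from 0 to 128; `matching_bits([0xFFFF] * 8, [0xFFFF] * 8)` gives 128, and each differing bit lowers the count by one.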

- Stable data means the word selected must have some minimum duration in the broadcast segment -- i.e., it is derived from a portion of the segment for which there are at least a minimum number (e.g., 20 or more) of frames of the same or similar data.

- Noise-free data are data having an associated mask word with a maximum number of unmasked bits. (Maximization of duration or stability, and minimization of masked bits or noise, can be two competing criteria.)

- Hamming distance refers to the dissimilarity of the digital data. It is desirable for more positive and reliable identification of broadcast segments that a signature for one broadcast segment differ from that for another broadcast segment by at least a minimum number of bits, known as the Hamming distance. In generating the second or any subsequent signature in the database, Hamming distance from existing signatures must be considered.

- Entropy refers to the desirability, discussed above, of having the minimum possible correlation between different portions of the data. For this reason, given the preferred signature data length (8 words, 16 bits per word), preferably no two signature words should be taken from points closer together in the segment than one second. This sets a lower limit on the length of broadcast segments which can be reliably recognized. If, e.g., eight words make up a signature, and they must be at least one second apart, the shortest segment that can be reliably recognized is a segment of approximately ten seconds in length.
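Two of the selection criteria above, Hamming distance and minimum spacing, lend themselves to a small sketch. The function names and the `min_distance` parameter are assumptions (the source does not state a numeric minimum distance); the 30 frames per second and one-second gap follow the text.

```python
def hamming_distance(words_a, words_b):
    """Number of differing bits between two signatures of 16-bit words."""
    return sum(bin((a ^ b) & 0xFFFF).count("1")
               for a, b in zip(words_a, words_b))


def is_distinct_enough(candidate, existing_signatures, min_distance):
    """True if the candidate differs from every stored signature by at least min_distance bits."""
    return all(hamming_distance(candidate, sig) >= min_distance
               for sig in existing_signatures)


def spacing_ok(frame_positions, frames_per_second=30, min_gap_seconds=1.0):
    """True if no two selected frames are closer together than the minimum gap."""
    ordered = sorted(frame_positions)
    return all(b - a >= frames_per_second * min_gap_seconds
               for a, b in zip(ordered, ordered[1:]))
```

A candidate signature would be accepted only if both checks pass; for instance `spacing_ok([0, 30, 60])` holds while `spacing_ok([0, 29])` does not.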

- the duration criterion and mask words are also stored with the segment signature for use in the recognition process.

- the duration parameter signifies that, because the frame data are stable for some time period, a certain number of successive matches on that data should be obtained during the recognition process. If significantly more or fewer matches are obtained, the matches are discarded as statistical anomalies.

- the mask data define a threshold parameter which is used during the recognition process to adjust the default number of matching bits required before a signature is considered to have matched the broadcast data. For example, a default threshold could be set for the system which, during an attempt to match a particular segment signature, is lowered by one-half the number of masked bits associated with that signature.
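The threshold adjustment in the example above can be written out as a short sketch. The function names and the default threshold of 120 in the usage line are hypothetical, and rounding down when the number of masked bits is odd is an assumption.

```python
def masked_bit_count(mask_words):
    """Count suspect bits (mask bit 0) across the 16-bit mask words of a signature."""
    return sum(16 - bin(m & 0xFFFF).count("1") for m in mask_words)


def match_threshold(default_threshold, mask_words):
    """Lower the default threshold by one-half the number of masked bits."""
    return default_threshold - masked_bit_count(mask_words) // 2


# Seven fully reliable words and one word with four suspect bits.
masks = [0xFFFF] * 7 + [0xFFF0]
threshold = match_threshold(120, masks)
```

Here four bits are masked, so the required number of matching bits drops from 120 to 118.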

- a keyword is chosen from within the segment. Having a buffer at least twice as long as the longest segment to be identified assures that sufficient broadcast signal history to make a recognition remains in the buffer at the time the keyword reaches the observation point.
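A circular buffer of frame words sized as described can be sketched as below. The class and function names are hypothetical, and addressing words by their delay behind the newest frame is one possible design, not one taken from the source.

```python
def capacity_for(longest_segment_seconds, frames_per_second=30):
    """Buffer length in words: twice the longest segment, one word per frame."""
    return 2 * longest_segment_seconds * frames_per_second


class FrameBuffer:
    """Fixed-size circular buffer of 16-bit frame signature words."""

    def __init__(self, capacity):
        self._words = [0] * capacity
        self._count = 0  # total number of words written so far

    def push(self, word):
        self._words[self._count % len(self._words)] = word & 0xFFFF
        self._count += 1

    def at_delay(self, delay):
        """Word written `delay` frames before the newest one (0 = newest)."""
        if not 0 <= delay < min(self._count, len(self._words)):
            raise IndexError("frame is not in the buffer")
        return self._words[(self._count - 1 - delay) % len(self._words)]


buf = FrameBuffer(4)
for word in range(1, 7):
    buf.push(word)
```

If the observation point is placed at a delay of half the capacity, frames on both sides of the keyword remain addressable, which is consistent with signature offsets that may be positive or negative. For a longest segment of 60 seconds, `capacity_for(60)` gives 3600 words.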

- the criteria used for choosing the keyword are similar to those for choosing the signature words -- it should be of stable duration and relatively noise free, augmented by the need for a "level list" -- i.e., there should be no more than about four signatures having the same keyword so that the necessary computations can be made within the 1/30 second real-time limit.

- Before a signature can be constructed for a segment for addition to the segment signature data base, it must be determined that a broadcast segment is being received.

- new segments may be played into the system manually -- e.g., where an advertiser or advertising agency supplies a video tape of a new commercial or commercials.

- the operator and the system know that this is a new segment, the signature for which is not yet stored in the segment signature library.

- However, it can happen that a new commercial is broadcast by a local broadcast station for which no videotape was earlier supplied and which, therefore, is not yet known to the system. Therefore, the present invention also can detect the presence in the incoming signal of potential segments to be recognized that are new and presently unknown.

- the present invention relies on the presence in the broadcast signal of signal artifacts characteristic of such potential unknown segments.

- artifacts can include fades-to-black, audio power changes including silence ("audio fade-to-black"), and the presence (but not the content) of pre-encoded identification data appearing, e.g., in the vertical intervals of video signals.

- These artifacts are not assumed to indicate the presence of a new, unknown segment to be recognized per se. Rather, they are used as part of a decision-making process.

- the parametized information derived from the unknown segment is saved along with audio and video information corresponding to the unknown segment.

- the signal artifacts that marked the unknown segment are not retained, nor is any record kept of what they were.

- the saved audio and video information is ultimately presented, in compressed form (as discussed below), to an operator for use in identifying the new and unknown segment and recording the identification in the segment signature library.

- the operator also records any special requirements needed to prepare a signature for the segment.

- some national commercials include two portions: a portion which is always the same irrespective of in which part of the country the commercial is to be broadcast (the "constant" portion), and a portion which differs depending on where the commercial is to be broadcast (the "variable" or "tag" portion). It is important to accurately identify different versions of such national commercials. If a commercial for a national product has a tag that is specific to one region, the commercial is considered different from the same commercial tagged for a different region. Therefore, the operator would note that the signature must include data from the tag portion of the segment, and that additional data must be taken from the tag. A signature is then generated automatically as described above.

- tags are used, depending on the type of tag associated with the commercial.

- One type of tag is known as a "live tag", which is a live-action audio/video portion of at least five seconds duration.

- Another type of tag is known as a "still tag", which is a still picture -- e.g., of text -- which has insufficient video content for generating a unique signature.

- Either type of tag can occur anywhere in the commercial.

- a separate signature is generated for the tag.

- the system logs both the commercial occurrences and the tag occurrences, and then merges that data with the help of pointers in the signature database.

- a flag or pointer associated with the commercial signature triggers a brute force video comparison of a frame of the tag with a frame of the reference tag or tags. This comparison is not necessarily done in real time.

- a system constructed in accordance with the present invention is preferably composed of one or more local sites and one central site. Utilizing the recognition methods described above, a local site scans one or more broadcast video and audio television signals looking for known broadcast segments. If a known segment is detected, the local site records time and date information, the channel being monitored, the identification of the segment, and other information in its database. Relying on signal artifacts, the local site also notes and records signals that it senses are likely to be new segments of interest which are not yet known to the system.

- the central site communicates, e.g., via telephone lines, with the local sites to determine what known broadcast segments have been detected and what potential but unknown segments have been seen and saved, and to disseminate to appropriate local sites segment signature and detection information corresponding to new broadcast segments not yet known to those local sites. It is anticipated that the ultimate identification of a new and unknown segment will require visual or aural interpretation by a human operator at the central site.

- FIG. 2 illustrates a block diagram of the system including a central site 22 and a plurality of linked local sites 21.

- Each local site 21 monitors broadcasting in its geographic region.

- the local sites 21 are located at cable television head end stations to assure a clear input signal.

- the local site 21 is capable of recognizing and identifying known broadcast segments and logging occurrences of such segments by date, time, duration, channel, identification, and other desirable information.

- the local site is also capable of recognizing the occurrence of potentially new and unknown segments, and of generating temporary key signatures for such unknown segments so that it can maintain a log of all occurrences of each such segment pending the identification of the segment by the central site 22.

- Local site 21 sends to central site 22 via communications channels 23a, b all log information for recognized known segments, plus information relating to all potential new and unknown segments, and receives in return software updates, signature library updates, and requests for additional information. If a new signature transmitted to and received by one local site 21 originated as an unknown segment originally transmitted to the central site by that local site 21, the local site 21 merges the temporary log into other data awaiting transmission to central site 22, and then purges the temporary signature from its database.

- This "central-local" site is identical to the other local sites 21 and serves the same function as the other local sites 21 for the broadcast market in which it and central site 22 are located.

- Because central-local site 21a is connected to central site 22 by direct high-speed data link 24, new segments monitored by central-local site 21a are quickly available to central site 22 for identification.

- the signatures for those new segments that are of national scope can then be quickly disseminated to the remote local sites 21 to minimize the number of times a local site 21 will classify a received segment as an unknown, and thereby to prevent communications channels 23a, b from being burdened unnecessarily with the transmission of signature and other data (compressed audio and video) corresponding to locally unknown national segments.

- Because the audio and video information corresponding to new and unknown segments must be transmitted to the central site 22 via communications channels 23a, b to be reviewed by an operator during the identification process, the data is gathered by local site 21 in a compressed format to reduce communications overhead and expense.

- Compression of the video data preferably is accomplished as follows. First, not every frame is captured. Preferably, only one frame every four seconds is captured to create a "slide show" effect, with an option at the request of an operator at central site 22 to capture frames more frequently. Second, as is well known, a television video frame consists of two interlaced fields occurring 1/60 second apart. In the "slide show", small motion between the fields causes a noticeable flicker. Therefore, only one field is captured, which still provides a recognizable image. Third, not all pixels are necessary for a sufficiently recognizable image. It has been found that 160 x 120 pixels is sufficient, with an option at the request of an operator at central site 22 to capture 320 x 240 pixels per field. Fourth, it is not necessary to capture color information.
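A rough size estimate for the "slide show" capture can be worked through as follows. The function name is hypothetical, and two inputs are assumptions not given in the source: 8 bits per monochrome pixel, and counting one captured field per full four-second interval.

```python
def slide_show_size(duration_s, width=160, height=120,
                    seconds_per_frame=4, bits_per_pixel=8):
    """Return (fields_captured, total_bytes) for one captured segment.

    bits_per_pixel is an assumption; the source only says color is not captured.
    """
    fields = int(duration_s // seconds_per_frame)
    total_bits = fields * width * height * bits_per_pixel
    return fields, total_bits // 8


low = slide_show_size(30)                          # default 160 x 120 option
high = slide_show_size(30, width=320, height=240)  # operator-requested option
```

Under these assumptions a 30-second segment yields 7 fields, about 134 kB at 160 x 120 and four times that at 320 x 240, which illustrates why the higher resolution is captured only on request.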

- Audio compression is more difficult to accomplish. Considerable information can be removed from video data while still maintaining a recognizable image. However, only a smaller amount of information can be removed from audio data without the remaining audio becoming difficult or impossible to understand, or unacceptably fatiguing for human operators.

- ADPCM: Adaptive Differential Pulse Code Modulation

- ADPCM techniques and circuitry are well known.

- the frame signature data and the temporary segment or key signature generated for that unknown segment, and the compressed audio and video information for that segment are stored.

- the local site 21 is polled periodically by central site 22 and at those times the stored frame signature data and the temporary signatures are transmitted to central site 22 for identification via communications channels 23a, b.

- the audio and video information are transmitted optionally, but these data are always stored by local site 21 until the identification and signature of the segment are returned by the central site 22.

- signature coprocessor 25 associated with central site 22 first checks the frame signature data against the global signature database, which contains all signatures from all local databases for all broadcast segments known to the system, to determine whether or not the "unknown" segment in fact previously had been identified and is already known to the system (perhaps because a different local site saw the broadcast segment before), but the signature for which had not yet been sent to all local sites 21 or had been identified as being of limited geographic scope and so sent to only some of local sites 21.

- the global signature for that segment (which may differ somewhat from the temporary signature) is transmitted back to the originating local site, and the originating local site 21 is instructed to replace the temporary key signature generated locally with the global key signature. If the frame signature data are not matched to a signature in the global database, the corresponding temporary key signature is added to a separate portion of the global database used to store temporary signatures.

- This "temporary global database" allows coprocessor 25 to carry out the grouping of like unknowns, previously discussed, because if the same unknown comes in from another local site 21 it should be matched by the temporary signature stored in the global database, thereby indicating to coprocessor 25 that it is probably the same segment which has already been sent by another local site 21.

- coprocessor 25 causes host computer 27 to request the compressed audio and/or video data from local site 21 if those data have not already been transmitted.

- audio and video data preferably are not initially transmitted from local sites 21 on the theory that the frame signature data may be identified as a known segment upon comparison to the global database.

- the audio and/or video data may also have already been sent for another segment in the group. Further, audio and video need not both necessarily be transmitted.

- the operator will request one set of data. In this case, unknown segment data are stored until the missing audio or video data arrive, and then queued to an operator workstation 26. If the operator determines that the audio (if only video was sent) or video (if only audio was sent) data are also needed, the unknown data are again stored until the missing information arrives, when the data are requeued. Similarly, the operator may determine that higher resolution video (320 x 240 pixels or more frames per second) is needed and the unknown data again are stored.

- In a case where high resolution video is requested, if local site 21 always captures the high resolution video and simply does not transmit it for purposes of economy, it can be transmitted quickly on request. However, the system may be implemented in such a way that local site 21 does not routinely capture the high resolution video, in which case it is necessary to wait until the segment is broadcast again in that locality.

- the operator may also determine that the information captured as a result of the signal artifacts is actually two segments (e.g., in a 30-second interval, two 15-second commercials occurred), or that two consecutive intervals were each half of a single segment (e.g., two 30-second segments represent one 60-second commercial). In such a case, the operator instructs the system to break up or concatenate the segments, recreate the frame signature and segment signature data, and restart the identification process for the resulting segment or segments.

- the frame signature data for each segment in the group must be aligned with the frame signature data for each other segment in the group before a global key signature can be constructed. Alignment is necessary because different segments in the group may have been captured by local sites 21 by the detection of different artifacts. Thus, the segments may not all have been captured identically -- i.e., between the same points. For example, a set of segment data in a group for a broadcast segment nominally 60 seconds in length may in fact, as captured, be only 55 seconds in length because the segment was broadcast incompletely. Or, a member segment of the group may be too long or too short because it was captured as a consequence of the detection of a fade-to-black artifact or of an audio power change artifact.

- Alignment is carried out by coprocessor 25.

- the frame signature data for the first segment in the group are placed in a buffer.

- data for a second segment are clocked word-by-word into another buffer and an exclusive-NOR between all bits of the two segments is continually performed as the second segment is clocked in.

- the aligned data then are used as a baseline to align the remaining segments in the group.
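
The alignment step can be illustrated in software. The following is a minimal sketch, not the coprocessor's actual implementation: it slides one segment's frame words against another, scores each shift by counting agreeing bits (the population count of the exclusive-NOR), and keeps the best shift. The function names, the 16-bit word width, and the use of a mean score per overlapping frame are assumptions made for illustration.

```python
def xnor_agreement(a: int, b: int, width: int = 16) -> int:
    """Count the bit positions in which two words agree (popcount of XNOR)."""
    mask = (1 << width) - 1
    return bin(~(a ^ b) & mask).count("1")


def best_alignment(reference, candidate, max_shift, width=16):
    """Return (shift, score) such that candidate[i + shift] best matches reference[i].

    The score is the mean number of agreeing bits per overlapping frame
    (hypothetical scoring rule; the source only describes the XNOR itself).
    """
    best = (0, -1.0)
    for shift in range(-max_shift, max_shift + 1):
        total = 0
        overlap = 0
        for i, ref_word in enumerate(reference):
            j = i + shift
            if 0 <= j < len(candidate):
                total += xnor_agreement(ref_word, candidate[j], width)
                overlap += 1
        if overlap:
            score = total / overlap
            if score > best[1]:
                best = (shift, score)
    return best
```

With a candidate that carries two extra leading frames, `best_alignment` reports a shift of 2. A practical version would probably also require a minimum overlap so that very short overlaps cannot win by chance.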

- the alignment process is time-consuming. However, it is not necessary that alignment be done on a real-time basis. Preferably, alignment is performed at night, or at some other time when system activity is at a low point.

- a best fit of both the frame signature data and the mask data is calculated by assigning to each bit position the value of that bit position in the majority of segments.
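
As a hedged illustration of the majority rule, the sketch below computes a best-fit word for each frame position across a group of already aligned segments. The names `majority_word` and `best_fit`, the 16-bit default width, and the choice to resolve ties to 0 are assumptions; the source does not say how ties are handled.

```python
def majority_word(words, width=16):
    """Build a word whose every bit is the majority value of that bit in `words`."""
    result = 0
    for bit in range(width):
        ones = sum((w >> bit) & 1 for w in words)
        if 2 * ones > len(words):  # strict majority; ties fall to 0 (assumption)
            result |= 1 << bit
    return result


def best_fit(aligned_segments, width=16):
    """aligned_segments: equal-length lists of words, one list per segment."""
    return [majority_word(frame_words, width) for frame_words in zip(*aligned_segments)]
```

The same routine could be applied separately to the frame signature words and to the mask words, since the text applies the majority rule to both.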

- the signature coprocessor 25 then generates a new global signature for the segment using the best fit parametized data.

- After the new global signature has been generated, it is compared to the frame signature data for all of the unknown segments in the group to which it applies to make certain that the data for each segment matches the signature. If the new global signature does not match each set of frame signature data, this signifies either that an error was made in selecting the keyword or another of the data words, or that one or more sets of data in the group are really not representative of the same segment. In such a case, an operator may have to view additional segments in the group.

- the signature is transmitted back to each local site 21 or to an appropriate subset of local sites if the newly identified segment is not of national scope.

- the respective local sites 21 then merge the data they had been keeping based on the temporary signatures and send it to central site 22, replace the temporary signatures they had been retaining with the new global signature in their local databases, and purge the audio, video, and frame signature data for that segment.

- a first preferred embodiment of a local site 30 is shown diagrammatically in FIG. 3, and includes an IBM PC personal computer 31 and two Intel 310 computers 32, 33, interconnected by conventional RS-232C serial links 34, 35.

- Personal computer 31 uses a circuit card 36 and a software program to extract video parameter data from incoming video signals.

- Computer 32 creates signatures for new and unknown segments and matches existing signatures maintained in a signature library against incoming frame signature data.

- Computer 33 logs the "hits" or matches made by computer 32.

- a frame grabber card 36 such as the PC Vision Frame Grabber by Imaging Technologies, in personal computer 31 generates 512 x 256 pixels for each frame of incoming video information. However, frame grabber 36 is utilized so that only one field per frame is sampled. Frame grabber 36 also provides a video field clock that is used by computer 31. Computer 31 processes the frame grabber output to produce a four-bit word representing each frame.

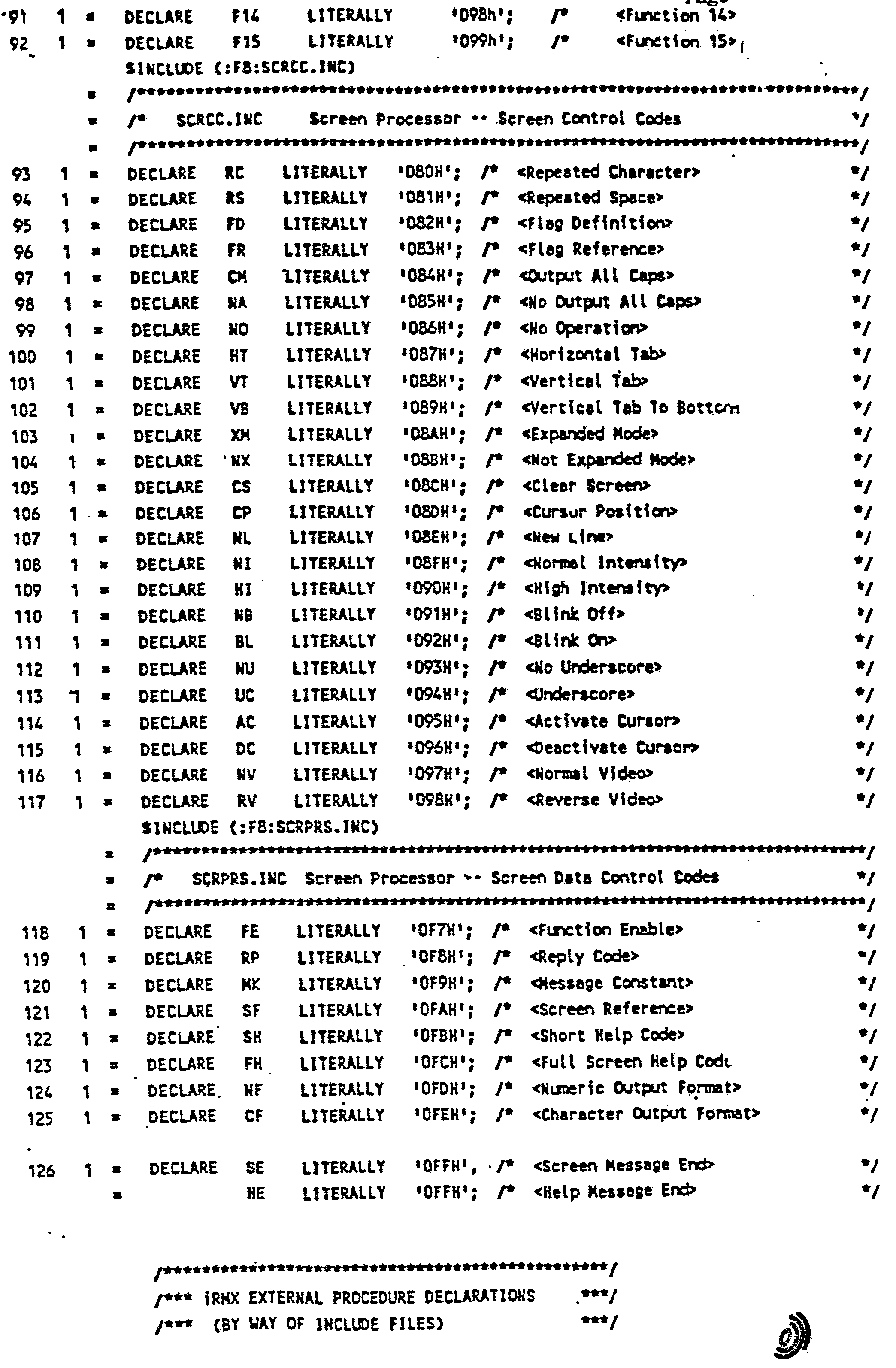

- a Microsoft MACRO Assembler listing of the program used in personal computer 31 is attached hereto as Appendix A and a flowchart, the blocks thereof keyed to the lines of the program listing, is shown in FIG. 4. The flowchart and program listing will be readily understood by those of ordinary skill in the art.

- the parametized data from personal computer 31 are transmitted over serial link 34 to computer 32 where they are clocked into a section of RAM partitioned, using pointers, as a modulo-2048 circular buffer.

- the buffer holds 34 seconds of data.

- frame grabber 36 and personal computer 31 are too slow to grab all of the frame data in real time (although in the second preferred embodiment, described below, this function is performed by hardware which does operate in real time). Resultantly, some data are lost.

- the data that are provided over serial link 34 are inserted into the buffer in their current real-time positions.

- Computer 32 "backfills" the buffer by filling in the empty positions with the next succeeding real time data.
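
The backfilling described above can be sketched as follows. This is an illustrative reading, not the PL/M code of the appendices: the buffer is treated as a simple list in time order (oldest first) rather than as a circular buffer, missing frames are represented by `None`, and the function name is hypothetical.

```python
def backfill(buffer):
    """Fill each empty slot with the next later value that was actually received.

    Scanning from newest to oldest lets each gap inherit the value that
    follows it in time. Gaps after the last received value stay empty.
    """
    filled = list(buffer)
    next_value = None
    for i in range(len(filled) - 1, -1, -1):
        if filled[i] is None:
            filled[i] = next_value
        else:
            next_value = filled[i]
    return filled
```

For example, `backfill([3, None, None, 7, None, 9, None])` returns `[3, 7, 7, 7, 9, 9, None]`; the trailing slot stays empty until later data arrive.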

- Computer 32 creates signatures and matches incoming frame signature data to a signature database as described above, without using the designated frame keyword lookup technique.

- a PL/M listing of the signature generation program is attached hereto as Appendix B and a flowchart, the blocks of which are keyed to the lines of the program listing, is shown in FIG. 5.

- a PL/M listing of the signature detection program is attached hereto as Appendix C and a flowchart, the blocks of which are keyed to the lines of the program listing, is shown in FIG. 6.

- the flowcharts and program listings will be readily understood by those of ordinary skill in the art.

- each segment is divided into nine sections -- a half-section at the beginning, followed by eight full sections, and a half-section at the end.

- the two half-sections are discarded to account for possible truncation of the beginning or end of the segment when it is broadcast.

- a word is then selected, using the previously described criteria of stable data, noise-free data, Hamming distance, and entropy, from each of the eight sections.
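
The sectioning arithmetic can be made concrete with a short sketch. It assumes one reading of the text: the segment spans nine section lengths in total, of which the first and last half-lengths are discarded, leaving eight full sections. The function name and the rounding of boundaries to whole frames are assumptions.

```python
def section_bounds(n_frames, full_sections=8):
    """Return (start, end) frame indices of the full sections, end exclusive.

    The segment is treated as (full_sections + 1) section lengths:
    half a section is skipped at each end, per the assumed reading.
    """
    unit = n_frames / (full_sections + 1)
    half = unit / 2
    bounds = []
    for k in range(full_sections):
        start = round(half + k * unit)
        end = round(half + (k + 1) * unit)
        bounds.append((start, end))
    return bounds
```

For a 30-second segment at thirty frames per second (900 frames), each section is 100 frames long, the first section covers frames 50 to 149, and the last ends at frame 849, so 50 frames are discarded at each end.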

- the signatures created are stored in the memory of computer 32 while the match data are transmitted over serial link 35 to computer 33 which keeps track of the matches.

- A listing of a PL/M program used in computer 33 is attached hereto as Appendix D and a flowchart, the blocks of which are keyed to the lines of the program listing, is shown in FIG. 7.

- the flowchart and program listing will be readily understood by those of ordinary skill in the art.

- Signatures are generated in the system of FIG. 3 by the computer 32. However, the generation of signatures is not initiated as earlier described and as implemented in the second preferred embodiment (described below). Rather, in the system of FIG. 3, an operator must "prime" the system by specifying the segment length, playing a tape into the system and manually starting the buffer filling process. Computer 32 then builds a signature automatically. However, there is no threshold or guard-band computed here as was earlier described, and as implemented in the second preferred embodiment (described below). In the second preferred embodiment, a threshold associated with the signature is modified by subtracting from the default threshold one-half the number of masked bits in the signature, while in the system of FIG. 3 the default threshold is augmented at the time of comparison by one-half the number of masked bits in the data being compared.
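
The two threshold adjustments can be written out as a small sketch. Several details are assumptions made only for illustration: that a 1 bit in a mask word marks a masked bit, that "one-half" is taken with integer division, and the function names themselves.

```python
def popcount(word: int) -> int:
    """Number of 1 bits in a non-negative integer."""
    return bin(word).count("1")


def threshold_second_embodiment(default_threshold, signature_masks):
    """Stored with the signature: default minus half the signature's masked bits."""
    masked = sum(popcount(m) for m in signature_masks)
    return default_threshold - masked // 2


def threshold_fig3(default_threshold, data_masks):
    """Applied at comparison time: default plus half the masked bits in the data."""
    masked = sum(popcount(m) for m in data_masks)
    return default_threshold + masked // 2
```

The point of the contrast is where the adjustment happens: once, when the signature is built, in the second embodiment, and on every comparison, using the incoming data's masks, in the system of FIG. 3.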

- A second preferred embodiment of the system of the invention is shown in FIGS. 8-12.

- FIGS. 8-11B show the details of local sites 21.

- FIG. 8 is a block diagram of a local site 21.

- the local site 21 is comprised of two major subsystems: the signal processing subsystem 80 and the control computer 81.

- the signal processing subsystem 80 receives incoming RF broadcast signals and performs three distinct tasks:

- the hardware of signal processing subsystem 80 includes matching module 82, compression module 83, and one or more tuners 84 (there is one tuner for each broadcast station to be monitored).

- Tuner 84 may be any conventional RF tuner; and in the preferred embodiment is Zenith model ST-3100.

- Matching module 82 and compression module 83 are built around the well-known Intel Corporation Multibus® II Bus Structure. Multibus® II is described in the Multibus® II Bus Architecture Databook (Intel Order No. 230893) and the Multibus® II Bus Architecture Specification Handbook (Intel Order No. 146077C), both of which are incorporated herein by reference. The first two tasks discussed above are performed in matching module 82. The third task is performed in compression module 83.

- Each of the two modules 82, 83 includes a Central Services Module (CSM) 90 which is a standard off-the-shelf item (such as Intel Order No. SBC-CSM-001) required in all Multibus® II systems.

- Each module also has an executive microcomputer board 91a, 91b which controls communications between the respective module and control computer 81. All components on each module are interconnected on public bus 92, while those components which require large amounts of high-speed data transfer between them are additionally interconnected by private bus 93.

- Matching module 82 further includes one channel card 94 for each channel to be monitored -- e.g., one for each commercial station in the local market.

- Channel cards 94 generate the 16 bits per frame of parametized signal data and the corresponding 16-bit mask for both audio and video in accordance with the methods earlier described.

- Channel cards 94 also signal the occurrence of signal artifacts which indicate, and are used to delineate and capture, potential unknown segments.

- Also contained within matching module 82 are two circular buffer memories 95.

- One buffer 95 is for audio and the other is for video. These buffers store on a temporary basis the incoming parametized frame signature data, as earlier described. In this preferred embodiment, the maximum length of broadcast segments to be recognized is assumed to be one minute.

- each buffer can hold 4,096 frames of video, which at a frame rate of thirty frames per second is about 136.5 seconds, or just over two minutes. 4,096 is 2^12, so the offset data associated with each signature (as earlier described) is 12 bits long. Parametized signal information is moved from channel cards 94 to the circular buffers 95 when requested by executive microcomputer 91a.
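
The relationship between the buffer size and the 12-bit offset can be shown with a minimal circular buffer sketch. The class and method names are hypothetical and the layout is only one plausible arrangement; the point is that with 4,096 slots, any position in the buffer is addressed by masking with 12 bits.

```python
BUFFER_FRAMES = 4096             # 2**12 frames, about 136.5 s at 30 frames/s
OFFSET_MASK = BUFFER_FRAMES - 1  # 0xFFF: twelve 1 bits


class CircularFrameBuffer:
    """Fixed-size ring of parametized frame words (illustrative layout)."""

    def __init__(self):
        self.words = [0] * BUFFER_FRAMES
        self.head = 0  # slot that the next incoming frame will overwrite

    def push(self, word):
        self.words[self.head] = word
        self.head = (self.head + 1) & OFFSET_MASK  # wrap with a 12-bit mask

    def frames_ago(self, age):
        """Return the word stored `age` frames before the newest (age 0)."""
        return self.words[(self.head - 1 - age) & OFFSET_MASK]
```

After pushing 5,000 frames numbered from 0, `frames_ago(0)` is frame 4999 and `frames_ago(4095)` is frame 904, the oldest frame still held.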

- Matching module 82 also includes correlation card 96, shown in more detail in FIG. 10.

- Correlation card 96 performs the task of recognizing incoming broadcast segments. Correlation card 96 accomplishes this by comparing incoming video and audio frame signature data with the locally stored database of key signatures to find matches.

- the local signature database is stored in memory 97 (up to 13 megabytes of conventional random access memory). Since correlation card 96 in the preferred embodiment may operate on both audio and video data (although, e.g., only video can be used), correlation card 96 must have sufficient capacity to process an amount of data equivalent to twice the number of channels to be monitored.

- Correlation card 96 performs all functions required to generate the raw data for the final correlation, or determination of a segment match.

- Executive microcomputer 91a accumulates the matches resulting from key signature comparisons done by correlation card 96, and then transmits the accumulated matches to control computer 81.

- correlation card 96 contains signature comparison processor (SCP) 101 and two independent interfaces 102, 103 to the Multibus® II public and private buses.

- SCP 101 is a programmable element which operates under command of executive microcomputer 91a. SCP 101 is responsible for the following primary functions:

- Control unit 104 of SCP 101 directs the operation of SCP 101.

- Control unit 104 includes memory which contains all microprogram instructions defining SCP operation. It also contains sufficient functionality to allow for the efficient sequencing of the required microinstructions. Control unit operation is directed, at an upper level, by instructions generated by executive microcomputer 91a.

- Logic unit 105 performs all address and data manipulations required to load both incoming and previously defined reference signatures into onboard buffers for comparison. Signature data is resident in external, offboard memory accessible by the private bus; logic unit 105 and control unit 104 together execute all private bus interface functions.

- Logic unit 105 also generates messages to and interprets messages from executive microcomputer 91a.

- An example of a message interpreted by logic unit 105 is a request to compare selected key signatures in the key signature database to the current, incoming segment signature.

- An example of a message generated by logic unit 105 is that which is generated at the end of a comparison which defines how well the incoming signature matches the reference (how many total correct or incorrect bits after comparison).

- the logic unit peripheral hardware module 106 is responsible for assisting logic unit 105 in performing signature comparisons. Specifically, the module contains logic which is intended to increase the processing capability of SCP 101. The features of module 106 are:

- Executive microcomputer 91a is a standard commercial microcomputer card (e.g., Intel Order No. SBC-286-100) based on an 80286 microprocessor. The function of this card is to:

- Compression module 83 includes (in addition to central services module 90 and executive microcomputer 91b) audio capture card 98a, video capture card 98b, and associated circular buffer memories 99a, 99b.

- the audio and video capture cards are shown in more detail in FIGS. 11A and 11B, respectively.

- Audio and video capture cards 98a, 98b each can monitor up to eight broadcast channels (monitoring more channels requires multiple cards).

- Video card 98b captures incoming video frames at a slide-show rate of one frame per second and digitizes and compresses the video data, while audio card 98a digitizes and captures audio continually, for each of the eight channels.

- the audio and video from up to eight channels are brought to these cards (as well as to the channel cards) from channel tuners 84 by coaxial cables.

- a conventional 1-of-8 multiplexer 110 (implemented in this embodiment as a T-switch circuit design using CMOS 4066 integrated circuits), selects one of the eight channels for capture.

- the audio from each channel is fed to one of up to eight combination filter/coder/decoder circuits 111, such as a National Semiconductor 3054 integrated circuit.

- In combination circuit 111, the audio signal is passed through a low-pass filter and then through a coder/decoder which performs a logarithmic analog-to-digital conversion resulting in a digital signal with a compressed amplitude (the audio signal is expanded again prior to being audited by an operator at central site 22).

- the compressed digital signal is then passed through an adaptive differential pulse code modulator (ADPCM) 112 similar to that used by the telephone industry -- e.g., a NEC 7730 integrated circuit -- for further compression.

- An Intel 80188 microprocessor 113 controls the movement of the compressed audio data from ADPCM 112 through bus interface 114 to private bus 93 of compression module 83, and thence to circular buffer memory 99a.

- a JEDEC-standard RAM/EPROM 113a contains programs and memory workspace used by microprocessor 113.

- common video circuit 115 uses the sync information contained in the received analog video signal to generate clock pulses and addresses for analog-to-digital conversion of the video signal.

- Circuit 115 outputs both address signals and digitized data signals representing the video at the rate of one field per second at 116 and 117, respectively.

- the data pass to arithmetic logic unit (ALU) 118 which further compresses the video data by reducing each video field or frame to 160 (horizontal) by 120 (vertical) pixels, each pixel averaging four-by-two pixel areas in size, using gray scale pixel averaging.

- the address data pass from common video circuit 115 to dither prom 119 which contains a table of pseudorandom numbers -- one for each video address output by circuit 115.

- This dithering technique, by which a low-information-content video signal can be visually improved, allows the video frame to be compressed to as few as 9600 bytes.
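
The figure of 9600 bytes is consistent with 160 x 120 = 19,200 pixels stored at four bits each. The sketch below illustrates one way the described stages could fit together: averaging four-by-two pixel areas, adding a per-address pseudorandom value, and keeping four bits per pixel. Everything beyond those figures is an assumption: the 640 x 240 input field of 8-bit gray values, the dither range of 0 to 15, adding the dither before truncation, and all names.

```python
import random

OUT_W, OUT_H = 160, 120    # compressed size given in the text
BLOCK_W, BLOCK_H = 4, 2    # each output pixel averages a 4-by-2 area


def make_dither_table(seed=0):
    """One pseudorandom value per output address (stands in for the dither PROM)."""
    rng = random.Random(seed)
    return [rng.randrange(16) for _ in range(OUT_W * OUT_H)]


def compress_field(field, dither):
    """field: 240 rows of 640 gray values (0-255). Returns 9600 packed bytes."""
    nibbles = []
    for y in range(OUT_H):
        for x in range(OUT_W):
            total = 0
            for dy in range(BLOCK_H):
                row = field[y * BLOCK_H + dy]
                for dx in range(BLOCK_W):
                    total += row[x * BLOCK_W + dx]
            average = total // (BLOCK_W * BLOCK_H)
            # Add the dither value, clamp, then keep only the top four bits.
            dithered = min(255, average + dither[y * OUT_W + x])
            nibbles.append(dithered >> 4)
    # Pack two 4-bit pixels into each byte.
    return bytes((nibbles[i] << 4) | nibbles[i + 1] for i in range(0, len(nibbles), 2))
```

Adding a small pseudorandom value before discarding the low bits spreads the quantization error across neighboring pixels, which is why a coarse gray scale can still look acceptable to an operator.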

- the dithered compressed video data are then passed to one of two line buffers 1101a, b (one buffer is filled while the other is being read and emptied), from which they are passed under the control of direct memory access controller 1102 to private bus 93 of compression module 83, and thence to circular buffer memory 99b.

- the audio and video data are placed in circular buffers 99a and 99b holding the most recent two minutes of data for that channel under the direction of control computer 81 and executive microcomputer 91b.

- Executive microcomputer 91b preferably is a standard commercial microcomputer card (e.g., Intel Order No. SBC-286-100) based on an 80286 microprocessor. This card provides an interface path to the control computer 81. All data to be transferred to the central site is requested by control computer 81.

- the signal processing subsystem 80 can be referred to as the "front end" of local site 21.

- Control computer 81 can be referred to as the "back end".

- Control computer 81 (FIG. 8) is preferably a commercially available MicroVAX II minicomputer manufactured by Digital Equipment Corporation (DEC), running the DEC VMS virtual memory operating system. Between one and nine megabytes of RAM memory are provided along with one DEC RA81 Winchester disk drive (with controller) having a capacity of 456 megabytes.

- a DPV11 synchronous interface connects the control computer 81 to communications channels 23a, b via a modem.

- a DRV11 direct memory access controller connects control computer 81 to signal processing subsystem 80.

- A function of control computer 81 is to determine, using detected signal artifacts, when new and as yet unknown broadcast segments may have been received. Control computer 81 also keeps track of the temporary log for such segments until the segments have been identified by central site 22. Another function of control computer 81 is to determine, based on duration and other criteria, such as time of day, whether or not matches accumulated by executive microcomputer 91a actually indicate that a segment has been recognized. (For example, during "prime time" no commercials are normally broadcast at, e.g., 7 minutes after the hour.) In performing these functions, control computer 81 exercises its ability to control all signal processing subsystem 80 functions via executive microcomputers 91a, 91b.

- Control computer 81 can instruct modules 82 and 83 to tune to a particular channel to capture audio/video data, to compare a pair of frame and key signatures, or to generate a local signature. These instructions can originate in control computer 81 itself or ultimately at central site 22. For example, in identifying a new segment, an operator may need higher resolution video information. In such a case, control computer 81 would act on instructions from central site 22 by requesting module 83 to capture the required data when the desired segment is next received. Control computer 81 also stores on disk a backup copy of the signature database stored in RAM memory 97.

- central system 22 includes central-local site 21a, coprocessor 25, mainframe "host" computer and memory 27 with associated mass storage device 28, communications processor 29 and workstations 26.

- Coprocessor 25 is used to assist with certain computationally intensive functions related to the signature processing requirements of the central site 22.

- the "front end" of coprocessor 25 is identical to matching module 82 of local site 21, earlier described, except for the omission from coprocessor 25 of channel cards 94. It is preferred, however, that the memory for the signature database within coprocessor 25 be expanded over that of the local site to ensure that all key signatures used at all local sites can be resident in coprocessor 25.

- the "back end" of coprocessor 25 preferably uses the same MicroVAX computer used by local sites 21 (FIG. 8), although a different type of computer could be used. Another alternative is that the coprocessor front end may be attached to communications processor 29 at the central site. It is also preferred that the coprocessor hardware be duplicated at central site 22 so that a backup system is available in case of a failure of the primary coprocessor.

- the coprocessor's signature generating and comparing functions are performed in the front end, while its checking and grouping functions are controlled by its back end utilizing the comparison capabilities of the front end as described above.

- Workstation 26 is the vehicle by which new and unknown segments are presented to, and classified by, human operators.

- a secondary function of workstation 26 is to act as a terminal in the maintenance of host resident databases.

- Workstation 26 is made up of the following:

- The heart of workstation 26 is the IBM 3270 AT computer.

- the 3270 AT is a blend of an IBM 3270 terminal and an IBM AT personal computer. By combining the power of these components, a very effective mainframe workstation is created.

- Workstation 26 allows easy communication with host 27 and has its own processing capability. Up to four sessions with host 27 can be started, as well as a local process, all viewed in separate windows on the console screen. A combination of host and local processes is used by the operator.

- the video card contained in the 3270 AT can display two different segments.

- Each video card consists of a pair of "ping-pong" (A/B RAM) memories for holding compressed gray scale video data.

- the ping-pong memories can be used in one of two ways. First, a segment can be pre-loaded into RAM A while RAM B is being displayed. Second, the ping-pong memories can be used to load the next frame of a segment while the other is being displayed. Gray scale data is returned to video format and smoothed before being passed through the video digital-to-analog converter.

- An audio control card is also provided in the 3270 AT.

- ADPCM circuits e.g., NEC 7730

- Workstation 26 supports the identification process of new commercial segments and contains self-test routines to check the status of the machine and components. It is possible for workstation 26 to operate in a somewhat stand-alone mode requesting needed information from host 27 and passing results back. Alternatively, host 27 could control the application and send audio/video requests to workstation 26. The operator at workstation 26 controls the audio and video cards to cause the audio and/or video portions of selected segments to be displayed or output.

- Host computer 27 is preferably an IBM mainframe of the 308X series, such as an IBM 3083.

- Host computer 27 maintains a copy of the global signature database and an archive of all parametized and compressed audio/video data from which the signature database was generated.

- the archive allows recreation of the signature database in the event, e.g., of an accidental catastrophic loss of data.

- Host computer 27 uses the following commercially available software packages:

- Host 27 also commands the workstation 26, coprocessor 25 and, ultimately, local site 21. For example, it may be determined that additional information is needed from a particular local site 21. Host 27 acts on that determination by instructing the appropriate local site control computer 81 to request that its signal processing subsystem 80 capture additional data, or upload some data previously captured, for example.

- Host 27 also uses information transmitted by the various local sites 21 as to the occurrences of segments to compile a log of occurrences and one or more reports requested by the system users.

- Communications channels 23a, b and communications processor 29 are shown diagrammatically in more detail in FIG. 12.

- coprocessor 25 has a separate control computer 121, although, as stated above, the functions of that computer could be handled by communications processor 29.

- All communications within central site 22 and local sites 21 are coordinated by Digital Equipment Corporation (DEC) equipment. Therefore, communications throughout the system are based on DEC's DECNet protocol.

- DECNet protocol is run on a local area network 120.

- An SNA gateway 122 manufactured by DEC is connected to local area network 120 to translate between DECNet and IBM's Systems Network Architecture to allow communications between host 27 and the rest of the system.

- An IBM 3725 communications control device 123 interfaces between SNA gateway 122 and host 27.

- An IBM 3724 cluster controller 124 interfaces between device 123 and workstations 26, allowing workstations 26 to communicate with host 27.

- Central-local site 21a is connected to local area network 120 by high-speed link 24 as earlier discussed.

- Communications control computer 29 is preferably a Digital Equipment Corporation VAX 8200.

- Coprocessor control computer 121 is the identical computer and in fact, as discussed above, its function can be performed by communications control computer 29 if desired.

- Communications control computer 29 interfaces between local area network 120 and local sites 21.

- Communication with local sites 21 for which high-speed or high-volume communications are necessary or desired can be by high-speed leased line 23b.

- a lower speed packet switching data network 23a running the well-known X.25 protocol is used.

- the X.25 protocol is transparent to DECNet and is available on such commercial data communications networks as Telenet or Tymnet.

- Communications lines 125 between individual local sites 21 and network 23a can be provided either by leased line or over the telephone dial-up network.

Abstract

Description

- This invention relates to the automatic recognition of broadcast segments, particularly commercial advertisements broadcast by television stations.

- Commercial advertisers are interested in log data showing when various commercials are broadcast on radio or television. This interest stems both from a desire to confirm that broadcast time paid for was actually provided and from an interest in monitoring competitors' advertising strategies.

- It would be advantageous to be able to provide an automated method and system for logging commercial broadcast data which does not rely for recognition on the insertion of special codes in the broadcast signal or on cues occurring in the signal.

- It is also desirable to provide such a method and system which can identify large numbers of commercials in an economic and efficient manner in real time, without resorting to expensive parallel processing or to the most powerful computers.

- It is an object of this invention to provide an automated method, apparatus and system for logging commercial broadcast data which does not rely for recognition on the insertion of special codes or on cues occurring in the signal.

- It is another object of this invention to provide such a method, apparatus and system which can identify large numbers of commercials in an efficient and economic manner in real time, without resorting to expensive parallel processing or to the most powerful computers.

- In accordance with the method and apparatus of this invention, real-time continuous pattern recognition of broadcast segments is accomplished by constructing a digital signature from a known specimen of a segment which is to be recognized. The signature is constructed by digitally parametizing the segment, selecting portions from among random frame locations throughout the segment in accordance with a set of predefined rules (i.e. different frames are selected for each segment, but the selection is always based on the same criteria) to form the signature, and associating with the signature the frame locations of the portions. The signature and associated frame locations are stored in a library of signatures. Each signature in the library is identified with a particular segment to be recognized. A broadcast signal is monitored and digitally parametized. For each frame of the parametized monitored signal, the library is searched for any signature that may be associated with that frame. Using the frame information stored with the signature, each of the potentially associated stored signatures is compared to the appropriate frames of the parametized signal. If a stored signature compares with the monitored data, a match is declared and the broadcast segment is identified using identification data associated with the signature.

- In another embodiment of a method and apparatus according to the invention, a digital keyword derived from a designated frame in the parametized segment is identified as associated with the segment signature, but the association of that keyword with a particular segment signature is nonexclusive -- i.e., the same keyword can be associated with more than one signature. A plurality of additional frames is selected from among random frame locations throughout the segment in accordance with a set of predefined rules and, in a library of signatures, the keyword representing the designated frame and the words representing the additional frames are stored together with the offsets of the additional frames relative to the designated frame. A broadcast signal is monitored and digitally parametized. For each monitored digital word of the parametized monitored signal, the library is searched for any signature associated with a keyword corresponding to that monitored word, and the additional words of any such signature are compared with those words of the parametized monitored signal which are separated from the monitored word by the stored offset amounts. If the stored signature compares with the monitored data, a match is declared and the broadcast segment is identified using identification data associated with the signature data.
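
The keyword lookup described in this embodiment can be sketched as a table keyed by keyword, where each entry carries the additional words and their offsets from the designated frame. This is a simplified illustration: words are compared for exact equality, whereas the system described compares bits against a threshold and uses mask data. The class name, method names, and data layout are assumptions.

```python
from collections import defaultdict


class SignatureLibrary:
    """Signatures indexed by keyword; one keyword may map to many signatures."""

    def __init__(self):
        self._by_keyword = defaultdict(list)

    def add(self, segment_id, keyword, extra):
        """extra: (offset, word) pairs, offsets relative to the keyword frame."""
        self._by_keyword[keyword].append((segment_id, list(extra)))

    def match(self, frames, position):
        """Return ids of signatures whose keyword equals frames[position] and
        whose additional words all appear at their stored offsets."""
        hits = []
        for segment_id, extra in self._by_keyword.get(frames[position], []):
            if all(
                0 <= position + offset < len(frames)
                and frames[position + offset] == word
                for offset, word in extra
            ):
                hits.append(segment_id)
        return hits
```

Because only signatures sharing the current word as keyword are examined, most of the library is never touched for a given frame, which is what makes a real-time search over a large library plausible without parallel hardware.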

- A system according to the invention additionally has means for detecting the occurrence of artifacts within the monitored signal characteristic of potential unknown broadcast segments to be recognized, and means for classifying and identifying such potential unknown segments. One embodiment of such a system includes a plurality of local sites in different geographic regions for monitoring broadcasts in those regions, and a central site linked to the local sites by a communications network. Each local site maintains a library of segment signatures applicable to its geographic region, and each has at least means for performing the storing, monitoring, searching, comparing and detecting tasks described above. The central site maintains a global library containing all of the information in all of the local libraries. Classification and identification of potential unknown segments involves the generation at the local site of compressed audio and video information, a temporary digital signature, and parametized monitored signal information for a potential unknown segment not found in the library of the local site. At least the parametized monitored signal information and the temporary signature are transmitted to the central site over the communications network. At the central site, the temporary signatures, which are stored in a special portion of the global library, are searched, and the signatures stored in the main portion of the global library are also searched, and are compared with the transmitted parametized monitored signal information to determine if the potential unknown segment was previously received by another local site and is therefore already known to the system. If so, and if a global signature has already been generated, the temporary signature at the originating local site is replaced with the previously generated global signature. Like potential unknown segments not found in the main portion of the global library are grouped together. Compressed audio and video for at least one of the segments in the group is requested from the local site and an operator at the central site plays it back to classify and identify it. After the operator has identified the segment, the system automatically constructs a signature which is added to the global library and to the appropriate local libraries.

- The above and other objects and advantages of the invention will be apparent upon consideration of the following detailed description, taken in conjunction with the accompanying drawings, in which like reference characters refer to like parts throughout, and in which:

- FIG. 1 is a diagrammatic representation of the areas of a video frame sampled to derive parametized signal data;

- FIG. 2 is a block diagram of a system according to the invention;

- FIG. 3 is a block diagram of an experimental local site according to the invention;

- FIG. 4 is a flowchart of a software program used in the system of FIG. 3;

- FIG. 5 is a flowchart of a second software program used in the system of FIG. 3;

- FIG. 6 is a flowchart of a third software program used in the system of FIG. 3;

- FIG. 7 is a flowchart of a fourth software program used in the system of FIG. 3;

- FIG. 8 is a block diagram of a local site according to the invention;

- FIG. 9 is a block diagram of the signal processing subsystem of FIG. 8;

- FIG. 10 is a block diagram of the correlator card of FIG. 9;

- FIG. 11A is a block diagram of the audio capture card of FIG. 9;

- FIG. 11B is a block diagram of the video capture card of FIG. 9; and

- FIG. 12 is a block diagram of the central site and system communications of the invention.

- The present invention can identify a segment of a broadcast signal by pure continuous pattern recognition on a real-time basis. No codes need be inserted into the broadcast signal prior to transmission and no "cues" or "signalling events" (e.g., fades-to-black or scene changes) that occur in the broadcast need be used in the recognition process. The broadcast signal can be either a radio broadcast, for which audio information is obviously used for the recognition process, or a television broadcast, for which audio information, video information, or both, can be used, and can be an over-the-air signal, a cable television signal, or a videotape signal.

- Whether audio or video information is used, the broadcast signal is parametized to yield a digital data stream composed, preferably, of one 16-bit digital word for every 1/30 of a second of signal. (In the case of audio information associated with video information, the audio data is synchronized with the video frame rate to form an "audio frame".) The information is then processed in the same way whether it originated as audio or video.

- A digital signature is constructed for each segment to be recognized. The construction of the signature is discussed in more detail below. However, it preferably comprises 128 bits representing eight 16-bit words corresponding to eight frames of signal information selected from among random frame locations throughout the segment in accordance with a set of predefined rules.

- The incoming audio or video signal is digitized and parametized to yield, preferably, a 16-bit digital word for each frame of data. This also is discussed in more detail below. As the incoming signal is received, it is read into a buffer which holds, e.g., two minutes of signal data. Each word of this data is assumed to be the first word of an eight-word signature. Associated with each word of the signature is offset information indicating the spacing (i.e., the number of frames) between each such word and the first signature word. As each received word reaches a predetermined observation point in the buffer, the library of signatures of known segments to be recognized is searched for signatures beginning with that word. Using the offset information stored with the signatures, subsequent received words, already in the buffer, are compared to the remaining signature words to determine whether or not they match those remaining words of the signature.
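
- The search described above can be illustrated with a minimal Python sketch. It is not taken from the specification: the names `SegmentSignature` and `find_matches` are hypothetical, the buffer is modelled as a plain list, and words are compared for exact equality, which is a simplification of the thresholded comparison the description gives later.

```python
from dataclasses import dataclass
from typing import List, Sequence


@dataclass
class SegmentSignature:
    segment_id: str      # hypothetical identification data for the segment
    words: List[int]     # eight 16-bit signature words
    offsets: List[int]   # frames between each word and the first word (offsets[0] == 0)


def find_matches(stream: Sequence[int],
                 library: List[SegmentSignature],
                 pos: int) -> List[str]:
    """Treat stream[pos] as the first word of a signature and return the ids
    of all library signatures whose remaining words also appear in the
    stream at the stored offsets (exact equality, an assumption)."""
    hits = []
    for sig in library:
        if sig.words[0] != stream[pos]:
            continue  # signature does not begin with the observed word
        if all(pos + off < len(stream) and stream[pos + off] == word
               for word, off in zip(sig.words, sig.offsets)):
            hits.append(sig.segment_id)
    return hits
```

Every signature in the library is visited for every received word in this sketch, which is the cost that limits library size when each comparison must finish within one frame time.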

- In order for the method to operate in real time, all library comparisons must be made in the time that a received word remains at the observation point. Because a television frame lasts 1/30 second, all comparisons for a given received word must take place within 1/30 second. If every segment signature in the library must be compared to the incoming frame signature data every 1/30 second, current computer speeds would allow comparison to only a few thousand library signatures. This would place an upper limit on the number of segments whose signatures can be stored in the library and the number of broadcast segments (e.g., commercials) which could be monitored without using expensive parallel processors or very powerful computers.

- In the United States, however, as many as 500 different commercials may be playing within a market region at any given time. Assuming a market having 6 stations to be monitored, in the aggregate there may be as many as 4,000 or more occurrences of these commercials each day. Moreover, as many as 40,000 different commercials may be airing nationwide at any given time, and as many as 90,000 or more new commercials may be introduced nationwide each year. Accordingly, it is desirable that the system of the invention be capable of comparing, in real time, incoming frame signature data to many tens of thousands of segment signatures representative of as many different commercials.

- The number of signatures that can be stored and searched within the real-time limitation of 1/30 second and, hence, the number of broadcast segments which can be monitored can be increased to several hundred thousand using a designated frame keyword lookup data reduction method. Using such a method, when a signature is constructed for a known segment, one frame from the segment is chosen, using criteria to be discussed below, as the key frame, its digital parametized equivalent becoming the keyword. The signature is still, preferably, eight 16-bit words, but the stored offset information now represents spacing from the keyword rather than from the first signature word. The keyword can be one of the signature data words, in which case the offset for that word is zero, or it can be a ninth word. The keyword also need not temporally precede all of the other signature words and often will not. If 16-bit words are used, there can be 2^16, or 65,536, possible keywords. Signatures are thus stored in a lookup table with 65,536 keys. Each received word that reaches the observation point in the buffer is assumed to be a keyword. Using the lookup table, a small number of possible signatures associated with that keyword are identified. As discussed below, one of the selection criteria for keywords is that on average four signatures have the same keyword. Typically, then, four signature comparisons would be the maximum that would have to be made within the 1/30 second time limit, assuming no data errors in the received signal. Four signatures multiplied by 65,536 keys yields 262,144 possible signatures for the system, meaning that the system has the capability of identifying in real time any of that number of broadcast segments.
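
- The keyword lookup can be sketched as a table keyed by the 16-bit keyword. This is an illustration under stated assumptions, not the implementation of the specification: `KeyedSignature`, `build_table` and `match_at` are hypothetical names, a Python dictionary stands in for the 65,536-entry table, and word comparison is by exact equality for brevity.

```python
from collections import defaultdict
from typing import Dict, Iterable, List, NamedTuple, Sequence, Tuple


class KeyedSignature(NamedTuple):
    segment_id: str            # hypothetical identification data
    keyword: int               # 16-bit word of the designated key frame
    words: Tuple[int, ...]     # eight 16-bit signature words
    offsets: Tuple[int, ...]   # frames relative to the key frame; may be negative


def build_table(signatures: Iterable[KeyedSignature]) -> Dict[int, List[KeyedSignature]]:
    """Group signatures by keyword; several signatures may share one keyword."""
    table: Dict[int, List[KeyedSignature]] = defaultdict(list)
    for sig in signatures:
        table[sig.keyword].append(sig)
    return table


def match_at(stream: Sequence[int], pos: int,
             table: Dict[int, List[KeyedSignature]]) -> List[str]:
    """Assume stream[pos] is a keyword and test only the signatures filed
    under it, reading the other words at pos + offset."""
    hits = []
    for sig in table.get(stream[pos], []):
        indices = [pos + off for off in sig.offsets]
        if all(0 <= i < len(stream) for i in indices) and \
           all(stream[i] == w for i, w in zip(indices, sig.words)):
            hits.append(sig.segment_id)
    return hits
```

Because only the signatures filed under the observed word are examined, the work per frame depends on how many signatures share a keyword (about four on average, per the text) rather than on the total library size.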

- Whether or not the designated frame keyword lookup technique is used, the present invention need not rely during the recognition process on any cues, signalling events or pre-established codes in the broadcast signal. It simply monitors the incoming signal and performs continuous pattern recognition.

- Video information is parametized and digitized as described below. Although, in the description which follows, parametization is based upon signal luminance, it is to be understood that other or additional attributes of a video signal could be used for this purpose.

- A number of areas, preferably 16 (although more or fewer areas may be used), of the video field or frame are selected. The size of each area is preferably eight-by-two pixels, although areas of other sizes could be used. The luminance of each area is averaged to produce an absolute gray scale value from, e.g., 0-255. This value is normalized to a bit value of 0 or 1 by comparing the value to one of the following:

- 1. The average luminance of the entire field or frame;

- 2. The average luminance of some other area of the field or frame;

- 3. The average luminance of the same area in some previous field or frame; or

- 4. The average luminance of some other area of some previous field or frame.

- The goal in selecting which comparison to make is to maximize entropy -- i.e., to minimize correlation between the areas. (Correlation refers to the degree to which the luminance value of one area is related to or follows that of another area.) For this reason, the fourth comparison, above, is preferred, with the previous frame being one to four frames behind the current frame. For the same reason, the distribution of the sixteen areas in the field or frame, as well as the sixteen areas in the previous field or frame, is preferably asymmetrical about the center of the field or frame, because it has been empirically determined that video frames are composed in such a way that there is too much correlation between symmetrically located areas.

- A sample partial distribution of areas within a frame is shown in FIG. 1. Frame 10 is actually a video field of sampled pixels. (Hereinafter, "frame" will be used to mean either field or frame.) The luminance areas 2n (n = 1-16) (not all sixteen shown) are the areas used for determining the parametized digital word for the current frame. The luminance areas 2n' (n = 1-16) (not all sixteen shown) are areas whose values are held for use as the "previous frame" data for a later frame.

- Whatever comparison is used, a bit value of 1 is returned if the luminance of the area in question exceeds the luminance of the comparison area. If the luminance of the area in question is less than or equal to the luminance of the comparison area, a bit value of 0 is returned. (It is to be understood that here, and in the discussion that follows, the assignments of ones and zeroes for data and mask values can be reversed.) By normalizing the data in this way, offset and gain differences between signals transmitted by different stations or at different times (caused, e.g., by different transmitter control settings), are minimized. The sixteen values thus derived create a parametized "frame signature".
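
- The bit assignment described above can be sketched in Python as follows. The function name `frame_signature`, the use of plain lists of average luminance values, and the bit order (first area in the most significant bit) are assumptions made for illustration; the specification does not prescribe them.

```python
from typing import Sequence


def frame_signature(current: Sequence[float], reference: Sequence[float]) -> int:
    """Pack sixteen comparison bits into one 16-bit word.

    current[i]   -- average luminance of area i in the current frame
    reference[i] -- average luminance of the comparison area chosen for
                    area i (for example, another area of a previous frame)
    """
    if len(current) != 16 or len(reference) != 16:
        raise ValueError("expected sixteen luminance values in each list")
    word = 0
    for cur, ref in zip(current, reference):
        bit = 1 if cur > ref else 0   # ties (less than or equal) yield 0
        word = (word << 1) | bit      # first area ends up in the top bit
    return word
```

Since each bit depends only on which of two luminance values is larger, applying the same positive gain and the same offset to both lists leaves the resulting word unchanged, which is the normalization effect the paragraph describes.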

- A 16-bit mask word is created along with each 16-bit frame signature. The mask word represents the reliability of the frame signature. For each bit in the frame signature, if the absolute value of the luminance difference used to calculate that bit value is less than a threshold or "guard band" value, the luminance value is assumed to have been susceptible to error (because of noise in transmission), and so the mask bit for that bit is set to 0, indicating suspect data. If the absolute value of the luminance difference is greater than or equal to the guard band value, the luminance value is assumed to be much greater than the noise level and the corresponding mask bit is set to 1, indicating reliable data. As discussed below, the number of ones or zeroes in the mask word is used in generating segment signatures and setting comparison thresholds.
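
- A minimal sketch of the mask word follows, under the same illustrative assumptions as before: `mask_word` is a hypothetical name, the luminance values are given as plain lists, the first area maps to the most significant bit, and the guard band value is supplied by the caller because the specification does not fix one at this point.

```python
from typing import Sequence


def mask_word(current: Sequence[float], reference: Sequence[float],
              guard_band: float) -> int:
    """Return a 16-bit mask: 1 marks a reliable signature bit, 0 a suspect one."""
    if len(current) != 16 or len(reference) != 16:
        raise ValueError("expected sixteen luminance values in each list")
    mask = 0
    for cur, ref in zip(current, reference):
        # A difference inside the guard band could be flipped by noise.
        reliable = 1 if abs(cur - ref) >= guard_band else 0
        mask = (mask << 1) | reliable
    return mask


def reliable_bit_count(mask: int) -> int:
    """Number of ones in the mask, i.e. how many signature bits are trusted."""
    return bin(mask & 0xFFFF).count("1")
```

The count of ones is the quantity the text says is later used when generating segment signatures and setting comparison thresholds.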

- An audio "frame signature," if such a signature is used, can be constructed in the same format as a video frame signature so that it can be processed in the same way. Such an audio signature may be used to recognize broadcast radio segments, or may be used to confirm the identification of a video segment the data for which includes a high percentage of suspect data. However, the use of audio signatures is not necessary to the invention.

- The parametized "frame signature" information is stored in a circular buffer large enough to hold, preferably, approximately twice as much signal information as the longest broadcast segment to be identified. When a segment signature is being compared to the frame signature data, for each frame that passes the observation point eight comparisons between the segment signature data and the frame signature data are made, one word at a time, using the offset data stored with the segment signature to choose the frame signatures for comparison. The frame signature and segment signature words are compared using a bit-by-bit exclusive-NOR operation, which returns a 1 when both bits are the same and a 0 when they are different. The number of ones resulting from the exclusive-NOR operation is accumulated over all eight word comparisons. It is not necessary actually to construct a "parameter signature" by in fact concatenating the offset parametized frame signature words in order to make the comparison. If the number of ones accumulated by the exclusive-NOR operation exceeds a predetermined default threshold, modified as discussed below, a match is considered to have occurred for the frame at the observation point.
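
- The exclusive-NOR comparison can be sketched as follows. The names `xnor16`, `match_score` and `is_match` are hypothetical, the circular buffer is modelled as a list indexed modulo its length, and the threshold is a caller-supplied value, since the specification leaves its default and its modification to later discussion.

```python
from typing import Sequence


def xnor16(a: int, b: int) -> int:
    """Bit-by-bit exclusive-NOR of two 16-bit words (1 where the bits agree)."""
    return ~(a ^ b) & 0xFFFF


def match_score(frame_words: Sequence[int], signature_words: Sequence[int]) -> int:
    """Accumulate the number of agreeing bits over all word comparisons."""
    return sum(bin(xnor16(f, s)).count("1")
               for f, s in zip(frame_words, signature_words))


def is_match(buffer: Sequence[int], pos: int,
             signature_words: Sequence[int], offsets: Sequence[int],
             threshold: int) -> bool:
    """Pick frame words from the circular buffer at pos + offset and declare
    a match when the accumulated agreement exceeds the threshold."""
    frames = [buffer[(pos + off) % len(buffer)] for off in offsets]
    return match_score(frames, signature_words) > threshold
```

With eight 16-bit words the score ranges from 0 to 128, so a threshold somewhat below 128 tolerates a few bit errors; the words are read directly from the buffer without being concatenated first.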

- Signatures are assigned to segments as follows: