Hyperspectral image classification method based on full convolution space propagation network

Technical Field

The invention relates to a hyperspectral image classification method based on a full convolution space propagation network, and belongs to the field of remote sensing image processing.

Background

A hyperspectral image contains both spectral information and spatial information, and has important applications in military and civil fields. However, the high dimensionality of hyperspectral images, the high correlation between bands, spectral mixing and similar properties make hyperspectral image classification a considerable challenge. In recent years, with the emergence of new deep learning techniques, hyperspectral image classification methods based on deep learning have made breakthrough progress. However, deep learning models typically contain a large number of parameters and therefore require a large number of training samples. Hyperspectral images have relatively few labeled samples, which can hardly satisfy the training requirements of deep learning models, so overfitting occurs easily.

The hyperspectral image classification problem is as follows: given an image in which some of the pixels are labeled, predict the object class of every pixel in the image by means of a suitable algorithm. Traditional hyperspectral image classification methods generally use hand-crafted features, such as SIFT, HOG and PHOG, to extract features from the hyperspectral image, and then classify the image with models such as a multilayer perceptron or a support vector machine. However, the design and selection of these hand-crafted features depend on expert knowledge, and it is difficult to select a feature that is generally applicable.

In recent years, with the rise of deep learning, deep neural networks, which are fully data-driven and need no prior knowledge, have shown prominent advantages in fields such as image processing and computer vision. Their applications range from high-level image recognition to mid-level and low-level image processing, including target recognition, detection and classification as well as image denoising, dynamic deblurring and reconstruction. Deep learning techniques have also been introduced into hyperspectral image classification, where they achieve classification results clearly superior to those of traditional methods. However, because the number of hyperspectral training samples is limited, the deep learning models applied to hyperspectral image classification are relatively shallow, even though a large number of experiments in computer vision show that an effective increase in depth is very beneficial to classification performance.

Disclosure of Invention

Technical problem to be solved

In order to overcome the shortcomings of the prior art, the invention provides a hyperspectral image classification method based on a full convolution space propagation network.

Technical scheme

A hyperspectral image classification method based on a full convolution space propagation network is characterized by comprising the following steps:

step 1: data pre-processing

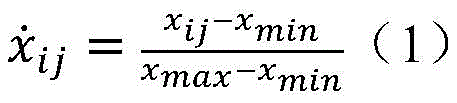

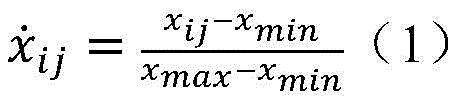

Firstly, data expansion is performed on the hyperspectral image data to be processed: the image is flipped up-down and left-right and rotated by 90 degrees, 180 degrees and 270 degrees, so that six hyperspectral images are obtained from one original hyperspectral image; maximum-minimum normalization is then performed on the obtained hyperspectral images:

$$\hat{x}_{ij} = \frac{x_{ij} - x_{\min}}{x_{\max} - x_{\min}} \tag{1}$$

wherein $x_{ij}$ is the raw data, $\hat{x}_{ij}$ is the normalized data, and $x_{\max}$ and $x_{\min}$ are respectively the maximum value and the minimum value in the raw data;

the normalized hyperspectral image is then divided according to a certain step length;
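As a non-limiting illustration, the data expansion and maximum-minimum normalization of step 1 might be sketched in NumPy as follows. The axis order (height, width, bands), the function names, and the reading that the six images are the original plus five transformed copies are assumptions made for this sketch and are not fixed by the text.

```python
import numpy as np

def augment(cube):
    """Return the original cube plus five transformed copies.

    `cube` is assumed to have shape (height, width, bands).
    """
    return [
        cube,
        np.flip(cube, axis=0),             # up-down flip
        np.flip(cube, axis=1),             # left-right flip
        np.rot90(cube, k=1, axes=(0, 1)),  # 90 degree rotation
        np.rot90(cube, k=2, axes=(0, 1)),  # 180 degree rotation
        np.rot90(cube, k=3, axes=(0, 1)),  # 270 degree rotation
    ]

def min_max_normalize(cube):
    """Scale values to [0, 1] with the global minimum and maximum.

    Assumes the cube is not constant, so that x_max > x_min.
    """
    x_min, x_max = cube.min(), cube.max()
    return (cube - x_min) / (x_max - x_min)

# Toy cube standing in for a hyperspectral image (hypothetical data).
cube = np.arange(2 * 3 * 4, dtype=np.float64).reshape(2, 3, 4)
images = [min_max_normalize(c) for c in augment(cube)]
```

Rotations by 90 and 270 degrees swap the two spatial axes, so a non-square image changes shape under those two transforms.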

step 2: data partitioning

The total number of labeled samples in the preprocessed hyperspectral images is counted, and 5% of the labeled samples are then selected as training data;

step 3: building a network model

The network structure comprises two parts in sequence:

1) A feature extraction part: the input data first passes in sequence through an asymmetric three-dimensional convolutional layer, an excitation function and a normalization layer; the asymmetric three-dimensional convolutional layer uses a three-dimensional convolution kernel with an asymmetric structure, whose size in the spectral dimension is larger than its size in the spatial dimensions; the excitation function is ReLU and the normalization is BN; after BN, the data passes in sequence through down-sampling modules with widths of 32, 64 and 128 to further extract deep features, wherein each down-sampling module is structured as follows: from the input end to the output end, the left trunk comprises in sequence convolutional layers with kernel sizes of 1 × 3 × 3 and 3 × 1 × 1, each followed by a ReLU excitation layer and a BN layer, so that the left trunk considers first the spatial direction information and then the spectral direction information in the hyperspectral image; correspondingly, the right trunk comprises in sequence convolutional layers with kernel sizes of 3 × 1 × 1 and 1 × 3 × 3, each followed by a ReLU excitation layer and a BN layer, so that the right trunk considers first the spectral direction information and then the spatial direction information;

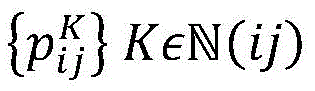

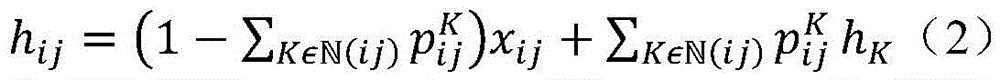

2) And a classification part: the part is composed of four convolutional layers with the widths of 128, 64, 32 and 32 respectively; the first three layers of convolution layers carry out convolution on the feature map to enable the feature map to be restored to the same size as the input image; the last convolution layer is used for linking each pixel by utilizing spatial information of a hyperspectral image, linear propagation operation is carried out on a 2D graph to construct a learnable graph, and a result graph containing probability classification of all categories is finally obtained; the linear propagation formula is as follows:

wherein h is

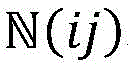

ij Representing surrounding pixels at pixel (i, j),

represents a series of weights at (i, j), K being the adjacent coordinate of (i, j), based on->

Is represented by

K Representing adjacent pixels;

step 4: training the network model

The training data is input in batches into the constructed full convolution space propagation network, and the network parameters are trained with a gradient descent algorithm, using the labeled categories as guide information, until the network converges; during training, 5% of the samples are repeatedly drawn at random from the training set, each draw forming a batch of training data; the data is input into the network, features are extracted and a prediction result is computed; the cross entropy between the prediction result and the actual result is taken as the loss function, the partial derivatives with respect to the network weights are computed, and the network parameters are updated with the gradient descent algorithm; one traversal of the entire training set constitutes one round of training; the whole training process runs for 80 rounds, with the learning rate set to 0.01 for the first 70 rounds and reduced to 0.001 for the last 10 rounds; the momentum term is set to 0.9 throughout the training process;
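As a non-limiting illustration, the cross-entropy loss of step 4 might be computed as sketched below. Restricting the loss to labeled training pixels through a boolean mask, the 0-based class indices, and all function and variable names are assumptions of this sketch; the text only states that the cross entropy between the prediction result and the actual result is the loss function.

```python
import numpy as np

def masked_cross_entropy(probs, labels, train_mask):
    """Mean cross entropy over the pixels selected by `train_mask`.

    probs      : (rows, cols, classes), per-pixel class probabilities.
    labels     : (rows, cols), integer class index per pixel (0-based here).
    train_mask : (rows, cols), True where a labeled training pixel exists.
    """
    picked = probs[train_mask]    # (n, classes) probabilities of training pixels
    target = labels[train_mask]   # (n,) true class of each training pixel
    p_true = picked[np.arange(target.size), target]
    return float(-np.log(p_true).mean())

# Toy example (hypothetical data): uniform prediction over 4 classes.
probs = np.full((2, 2, 4), 0.25)
labels = np.array([[0, 1], [2, 3]])
train_mask = np.array([[True, False], [False, True]])
loss = masked_cross_entropy(probs, labels, train_mask)
```

For a uniform prediction over four classes the loss equals ln 4, which is a convenient sanity check before training.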

step 5: generating classification results

Category prediction is performed on the target hyperspectral image data with the trained network model to obtain a classification result map.

Advantageous effects

The hyperspectral image classification method based on a full convolution space propagation network provided by the invention addresses the hyperspectral image classification problem by combining deep learning techniques, and applies a full convolution space propagation network to hyperspectral image classification for the first time. Traditional hyperspectral image classification methods based on convolutional neural networks classify the image pixel by pixel, which involves a large amount of repeated computation, and the size of the input image has a great influence on the classification result. The full convolution space propagation network reduces the repeated computation, accepts input images of any size, and makes full use of the spatial information of the hyperspectral image, thereby achieving high-precision classification of hyperspectral images under certain conditions.

Drawings

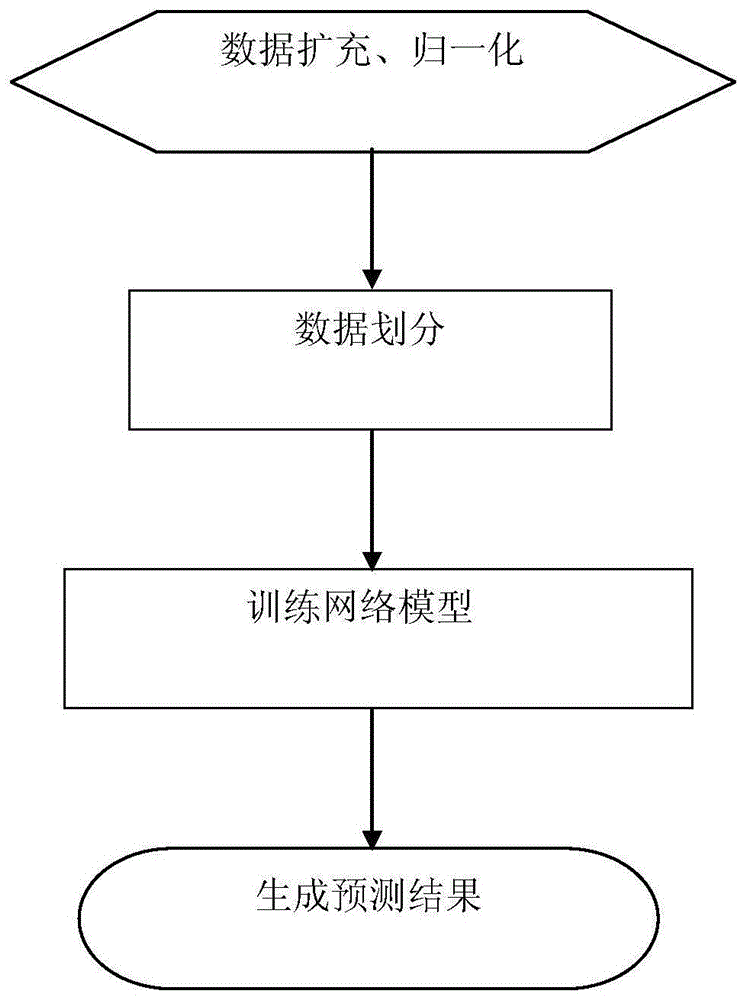

FIG. 1 is a flow chart of a hyperspectral image classification method based on a full convolution space propagation network

FIG. 2 is a block diagram of a downsampling process

FIG. 3 is a diagram of a full convolution space propagation network

Detailed Description

The invention will now be further described with reference to the following examples and drawings:

The method is a hyperspectral image classification method based on a full convolution space propagation network. An appropriate number of labeled samples is extracted from the hyperspectral image to be classified and used to train the full convolution space propagation network provided by the technical scheme, and the trained network model is then used to classify the whole hyperspectral image.

The technical scheme comprises the following specific measures:

Step 1: preprocessing data; data expansion and maximum-minimum normalization are performed on the hyperspectral data set to be processed.

Step 2: dividing data; the total number of labeled samples in the preprocessed hyperspectral images is counted, and 5% of the labeled samples are then selected as training data.

Step 3: constructing a network model; the network structure constructed by the invention is a space propagation network based on full convolution.

Step 4: training the network model; the constructed network model is trained with the training set, and features are then extracted with the trained network model; during training, the labeled categories are used as guide information and the network parameters are trained with a gradient descent algorithm until the network converges.

Step 5: obtaining a test result; based on the trained network model, category prediction is performed on all pixels in the target hyperspectral image data set to obtain a classification result map.

Example:

Step 1: preprocessing data; firstly, data expansion is performed on the hyperspectral image data to be processed: the image is flipped up-down and left-right and rotated by 90 degrees, 180 degrees and 270 degrees, so that six hyperspectral images are obtained from one original hyperspectral image. Maximum-minimum normalization is then performed on the obtained hyperspectral images, using the normalization formula shown in formula (1). The normalized hyperspectral image is then divided according to a certain step length, generally 20.
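As a non-limiting illustration, dividing the normalized image with a step length of 20 might be implemented as a sliding spatial window. The window size `patch_size`, the axis order (height, width, bands) and the function name are hypothetical; the text specifies only the step length.

```python
import numpy as np

def split_into_patches(cube, patch_size, step=20):
    """Slide a square spatial window over `cube` of shape (height, width, bands)."""
    height, width, _ = cube.shape
    patches = []
    for top in range(0, height - patch_size + 1, step):
        for left in range(0, width - patch_size + 1, step):
            # Each patch keeps every spectral band.
            patches.append(cube[top:top + patch_size, left:left + patch_size, :])
    return patches

# Toy image (hypothetical size): 100 x 100 pixels with 8 bands.
cube = np.zeros((100, 100, 8))
patches = split_into_patches(cube, patch_size=40, step=20)
```

With these toy sizes the window starts at offsets 0, 20, 40 and 60 along each spatial axis, which gives 16 overlapping patches.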

Step 2: dividing data; the total number of labeled samples in the preprocessed hyperspectral images is counted, and 5% of the labeled samples are then selected as training data.
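As a non-limiting illustration, selecting 5% of the labeled samples might be sketched as follows. The label encoding (0 for unlabeled pixels), the random selection over all classes jointly rather than per class, and the function name are assumptions of this sketch.

```python
import numpy as np

def select_training_pixels(labels, fraction=0.05, seed=0):
    """Pick a random subset of the labeled pixels.

    `labels` is a 2D integer map in which 0 marks unlabeled pixels
    (this encoding is an assumption).
    """
    rng = np.random.default_rng(seed)
    labeled = np.flatnonzero(labels.ravel() > 0)  # flat indices of labeled pixels
    n_train = max(1, int(round(fraction * labeled.size)))
    chosen = rng.choice(labeled, size=n_train, replace=False)
    train_mask = np.zeros(labels.size, dtype=bool)
    train_mask[chosen] = True
    return train_mask.reshape(labels.shape)

# Toy label map (hypothetical): 300 labeled pixels, 100 unlabeled.
labels = np.zeros((20, 20), dtype=int)
labels[:10, :] = 1     # 200 pixels of class 1
labels[10:15, :] = 2   # 100 pixels of class 2
train_mask = select_training_pixels(labels)
```

Here 5% of 300 labeled pixels yields 15 training pixels, and none of the unlabeled pixels can be selected.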

Step 3: constructing a network model; the network designed by the invention comprises two parts in sequence:

1) A feature extraction section; the input data first passes in sequence through an asymmetric three-dimensional convolutional layer, an excitation function and a normalization layer. The asymmetric three-dimensional convolutional layer uses a three-dimensional convolution kernel with an asymmetric structure whose size in the spectral dimension is larger than its size in the spatial dimensions, so that this processing module pays more attention to spectral information; for example, the convolutional layer may use a kernel with a spectral size of 5 and a spatial size of 1 × 1, with the layer width set to 32. In this module, ReLU is used as the excitation function and Batch Normalization (BN) as the normalization. After BN, the data is further processed by three down-sampling modules with widths of 32, 64 and 128; the overall structure is shown in FIG. 3. The specific structure of the down-sampling module is shown in FIG. 2: from the input end to the output end, the left trunk comprises in sequence convolutional layers with kernel sizes of 1 × 3 × 3 and 3 × 1 × 1, each followed by a ReLU excitation layer and a BN layer, so that the left trunk considers first the spatial direction information and then the spectral direction information in the hyperspectral image; correspondingly, the right trunk comprises in sequence convolutional layers with kernel sizes of 3 × 1 × 1 and 1 × 3 × 3, each followed by a ReLU excitation layer and a BN layer, so that the right trunk considers first the spectral direction information and then the spatial direction information.
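As a non-limiting illustration, the weight counts implied by these kernel sizes can be worked out with simple arithmetic. The single input channel of the first layer, the equal input and output widths of 32 inside one trunk, and the comparison against a full 3 × 3 × 3 kernel are assumptions made only for this comparison; kernel sizes are written as (spectral, height, width).

```python
def conv3d_weight_count(kernel, in_channels, out_channels):
    """Number of weights (biases excluded) in a 3D convolutional layer."""
    depth, height, width = kernel
    return depth * height * width * in_channels * out_channels

# First layer from the example: spectral size 5, spatial size 1 x 1, width 32.
# A single input channel is an assumption.
first_layer = conv3d_weight_count((5, 1, 1), in_channels=1, out_channels=32)

# One trunk of a down-sampling module: 1 x 3 x 3 followed by 3 x 1 x 1.
# Equal input and output widths of 32 are an assumption.
factorized = (conv3d_weight_count((1, 3, 3), 32, 32)
              + conv3d_weight_count((3, 1, 1), 32, 32))

# A single full 3 x 3 x 3 kernel with the same widths, for comparison only.
full = conv3d_weight_count((3, 3, 3), 32, 32)
```

Under these assumptions the factorized pair uses 12 weights per channel pair where a full 3 × 3 × 3 kernel would use 27.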

2) A classification section; this part consists of four convolutional layers with widths of 128, 64, 32 and 32 respectively; the first three convolutional layers convolve the feature map so that it is restored to the same size as the input image. The last convolutional layer links the pixels by using the spatial information of the hyperspectral image, performing a linear propagation operation on the 2D map to construct a learnable graph, and finally yields a result map containing the classification probabilities of all categories. The linear propagation formula is shown in formula (2), where $h_{ij}$ represents the surrounding pixels at pixel $(i, j)$, the weights at $(i, j)$ form a series indexed by $K$, $K$ being a coordinate adjacent to $(i, j)$, and $h_K$ represents the adjacent pixels.
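As a non-limiting illustration, one pass of a linear propagation over a 2D map might look like the sketch below. Formula (2) is not reproduced in this text, so the sketch assumes a common form from the spatial propagation literature, in which each pixel mixes its own input with three neighbours from the previous column; the scan direction, the three-neighbour connection, the zero padding at the border and all names are assumptions rather than the formula of the invention.

```python
import numpy as np

def propagate_left_to_right(x, weights):
    """One left-to-right linear propagation pass over a 2D map.

    x       : (rows, cols) input map.
    weights : (3, rows, cols), one weight per pixel for each of the three
              neighbours in the previous column (row above, same row, row below).
    """
    rows, cols = x.shape
    h = np.zeros_like(x, dtype=np.float64)
    h[:, 0] = x[:, 0]                       # first column has no predecessor
    for t in range(1, cols):
        prev = np.pad(h[:, t - 1], 1)       # zero-pad rows beyond the border
        neighbours = np.stack([prev[:-2], prev[1:-1], prev[2:]])  # above, same, below
        w = weights[:, :, t]
        # Assumed form: keep (1 - sum of weights) of the input, add weighted neighbours.
        h[:, t] = (1.0 - w.sum(axis=0)) * x[:, t] + (w * neighbours).sum(axis=0)
    return h

x = np.arange(12, dtype=np.float64).reshape(3, 4)
zero_w = np.zeros((3, 3, 4))
h_zero = propagate_left_to_right(x, zero_w)   # no propagation: output equals input
copy_w = np.zeros((3, 3, 4))
copy_w[1] = 1.0                               # full weight on the same-row neighbour
h_copy = propagate_left_to_right(x, copy_w)   # every column copies the first one
```

In a trained network the weights would be produced by a convolutional layer instead of being fixed by hand as in this toy usage.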

Step 4: training the network model; the training data is input in batches into the constructed full convolution space propagation network, and the network parameters are trained with a gradient descent algorithm, using the labeled categories as guide information, until the network converges; during training, 5% of the samples are repeatedly drawn at random from the training set, each draw forming a batch of training data; the data is input into the network, features are extracted and a prediction result is computed; the cross entropy between the prediction result and the actual result is taken as the loss function, the partial derivatives with respect to the network weights are computed, and the network parameters are updated with the gradient descent algorithm. One traversal of the entire training set constitutes one round of training. The whole training process runs for 80 rounds, with the learning rate set to 0.01 for the first 70 rounds and reduced to 0.001 for the last 10 rounds; the momentum term is set to 0.9 throughout the training process.
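As a non-limiting illustration, the learning-rate schedule and the momentum term of step 4 might be expressed as follows. The 0-based round index, the classical momentum update form and the function names are assumptions of this sketch; the text fixes only the values 0.01, 0.001, 0.9 and the 70/10 split of the 80 rounds.

```python
def learning_rate(epoch):
    """Learning rate for a 0-based round index: 0.01 for the first 70 rounds, then 0.001."""
    return 0.01 if epoch < 70 else 0.001

def momentum_step(weight, velocity, gradient, lr, momentum=0.9):
    """One gradient descent update with a momentum term (classical form, assumed)."""
    velocity = momentum * velocity - lr * gradient
    return weight + velocity, velocity

# Learning rates over the 80 rounds of training.
rates = [learning_rate(epoch) for epoch in range(80)]

# A single update on a toy scalar weight (hypothetical values).
new_weight, new_velocity = momentum_step(weight=1.0, velocity=0.0,
                                         gradient=2.0, lr=learning_rate(0))
```

The velocity carries 90% of the previous update into the next one, which is what the momentum term of 0.9 expresses.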

Step 5: generating a classification result; category prediction is performed on the target hyperspectral image data with the trained network model to obtain a classification result map.
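As a non-limiting illustration, a classification result map might be derived from the per-pixel class probabilities by taking the most probable class at each pixel. The use of the maximum-probability rule, the (rows, cols, classes) layout and the function name are assumptions of this sketch.

```python
import numpy as np

def classification_map(probs):
    """Turn a (rows, cols, classes) probability map into a (rows, cols) label map."""
    return probs.argmax(axis=-1)

# Toy probability map (hypothetical values): 2 x 2 pixels, 3 classes.
probs = np.array([[[0.7, 0.2, 0.1], [0.1, 0.8, 0.1]],
                  [[0.2, 0.2, 0.6], [0.5, 0.3, 0.2]]])
result = classification_map(probs)
```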

By constructing a full convolution space propagation network tailored to the characteristics of hyperspectral images, the method automatically extracts deep features of hyperspectral images and classifies them with high precision under the condition of limited training samples. Compared with existing hyperspectral image classification methods based on convolutional neural networks, the method achieves higher classification accuracy with fewer parameters and fewer operations.