CN108229552B - A model processing method, device and storage medium - Google Patents

- Publication number

- CN108229552B (CN201711475434.7A)

- Authority

- CN

- China

- Prior art keywords

- object feature

- library

- target library

- mapped

- model

- Prior art date

- Legal status (The legal status is an assumption and is not a legal conclusion. Google has not performed a legal analysis and makes no representation as to the accuracy of the status listed.)

- Active

Classifications

-

- G—PHYSICS

- G06—COMPUTING OR CALCULATING; COUNTING

- G06F—ELECTRIC DIGITAL DATA PROCESSING

- G06F18/00—Pattern recognition

- G06F18/20—Analysing

- G06F18/21—Design or setup of recognition systems or techniques; Extraction of features in feature space; Blind source separation

- G06F18/214—Generating training patterns; Bootstrap methods, e.g. bagging or boosting

-

- G—PHYSICS

- G06—COMPUTING OR CALCULATING; COUNTING

- G06N—COMPUTING ARRANGEMENTS BASED ON SPECIFIC COMPUTATIONAL MODELS

- G06N3/00—Computing arrangements based on biological models

- G06N3/02—Neural networks

- G06N3/08—Learning methods

Landscapes

- Engineering & Computer Science (AREA)

- Theoretical Computer Science (AREA)

- Physics & Mathematics (AREA)

- Data Mining & Analysis (AREA)

- Life Sciences & Earth Sciences (AREA)

- Artificial Intelligence (AREA)

- General Physics & Mathematics (AREA)

- General Engineering & Computer Science (AREA)

- Evolutionary Computation (AREA)

- Bioinformatics & Computational Biology (AREA)

- Computational Linguistics (AREA)

- Computer Vision & Pattern Recognition (AREA)

- Bioinformatics & Cheminformatics (AREA)

- Health & Medical Sciences (AREA)

- Biomedical Technology (AREA)

- Biophysics (AREA)

- Evolutionary Biology (AREA)

- General Health & Medical Sciences (AREA)

- Molecular Biology (AREA)

- Computing Systems (AREA)

- Mathematical Physics (AREA)

- Software Systems (AREA)

- Image Analysis (AREA)

Abstract

The present invention provides a model processing method, comprising: extracting a first object feature from a source library and a second object feature from a target library, wherein the first object feature and the second object feature are each a sample set of the database to which they belong; mapping the first object feature and the second object feature to the same feature space to obtain a mapped first object feature and a mapped second object feature; determining, according to the mapped first object feature and the mapped second object feature, the transformation matrix for which the difference between the source library and the target library satisfies a preset minimum-difference condition; converting the mapped first object feature with the transformation matrix to obtain a third object feature; and applying the third object feature and the corresponding labels to a model for classifying samples in the target library. The present invention also provides a model processing device and a storage medium.

Description

Technical Field

The present invention relates to electric digital data processing technology, and in particular to a model processing method, device and storage medium.

Background

With the advent of the big data era, large amounts of data have become easier to obtain. In addition, as the field of machine learning continues to develop, the questions of how to give computers the ability to generalize from one case to others, and how to put large amounts of data to better use, have become practical and valuable. Transfer learning was proposed to address these questions and has received growing attention.

Conventional machine learning rests on an important assumption: the samples of the source library and the samples of the target library must have the same distribution or come from the same feature space. In practice this assumption is hard to satisfy. Specifically, for a classification problem, if the samples of the source library and the samples of the target library do not have the same distribution (roughly, they do not come from the same library), the source library and the target library can be regarded as not sharing the same feature space. In image recognition, a model trained on one image library gives good recognition results on that library, but its results on other image libraries or in real environments are often unsatisfactory.

In summary, because the source library and the target library in the prior art do not share the same feature space, a model trained on the source library does not give good recognition results when applied to the target library.

Summary of the Invention

In view of this, embodiments of the present invention aim to provide a model processing method, device and storage medium that overcome the loss of model accuracy caused by the difference between the feature spaces of the source library and the target library.

To achieve the above purpose, the technical solution of the embodiments of the present invention is implemented as follows:

An embodiment of the present invention provides a model processing method, the method comprising:

extracting a first object feature from a source library and a second object feature from a target library, wherein the first object feature and the second object feature are each a sample set of the database to which they belong;

mapping the first object feature and the second object feature to the same feature space to obtain a mapped first object feature and a mapped second object feature;

determining, according to the mapped first object feature and the mapped second object feature, the transformation matrix for which the difference between the source library and the target library satisfies a preset minimum-difference condition;

converting the mapped first object feature with the transformation matrix to obtain a third object feature;

applying the third object feature and the corresponding labels to a model for classifying samples in the target library.

An embodiment of the present invention further provides a model processing device, the device comprising an extraction module, a mapping module, a determination module, a conversion module and an application module, wherein:

the extraction module is configured to extract a first object feature from a source library and a second object feature from a target library, wherein the first object feature and the second object feature are each a sample set of the database to which they belong;

the mapping module is configured to map the first object feature and the second object feature to the same feature space to obtain a mapped first object feature and a mapped second object feature;

the determination module is configured to determine, according to the mapped first object feature and the mapped second object feature, the transformation matrix for which the difference between the source library and the target library satisfies a preset minimum-difference condition;

the conversion module is configured to convert the mapped first object feature with the transformation matrix to obtain a third object feature;

the application module is configured to apply the third object feature and the corresponding labels to a model for classifying samples in the target library.

An embodiment of the present invention further provides a storage medium storing an executable program which, when executed by a processor, implements any one of the foregoing model processing methods.

An embodiment of the present invention further provides a model processing device comprising a memory, a processor, and an executable program stored in the memory and runnable by the processor, wherein the processor performs any one of the foregoing model processing methods when running the executable program.

The model processing method, device and storage medium provided by the embodiments of the present invention extract a first object feature from a source library and a second object feature from a target library, wherein the first object feature and the second object feature are each a sample set of the database to which they belong; map the first object feature and the second object feature to the same feature space to obtain a mapped first object feature and a mapped second object feature; determine, according to the mapped features, the transformation matrix for which the difference between the source library and the target library satisfies a preset minimum-difference condition; convert the mapped first object feature with the transformation matrix to obtain a third object feature; and apply the third object feature and the corresponding labels to a model for classifying samples in the target library. In this way, a model suited to the target library is trained from the source library, so the model gives good recognition results when applied to the target library, which improves its accuracy.

Brief Description of the Drawings

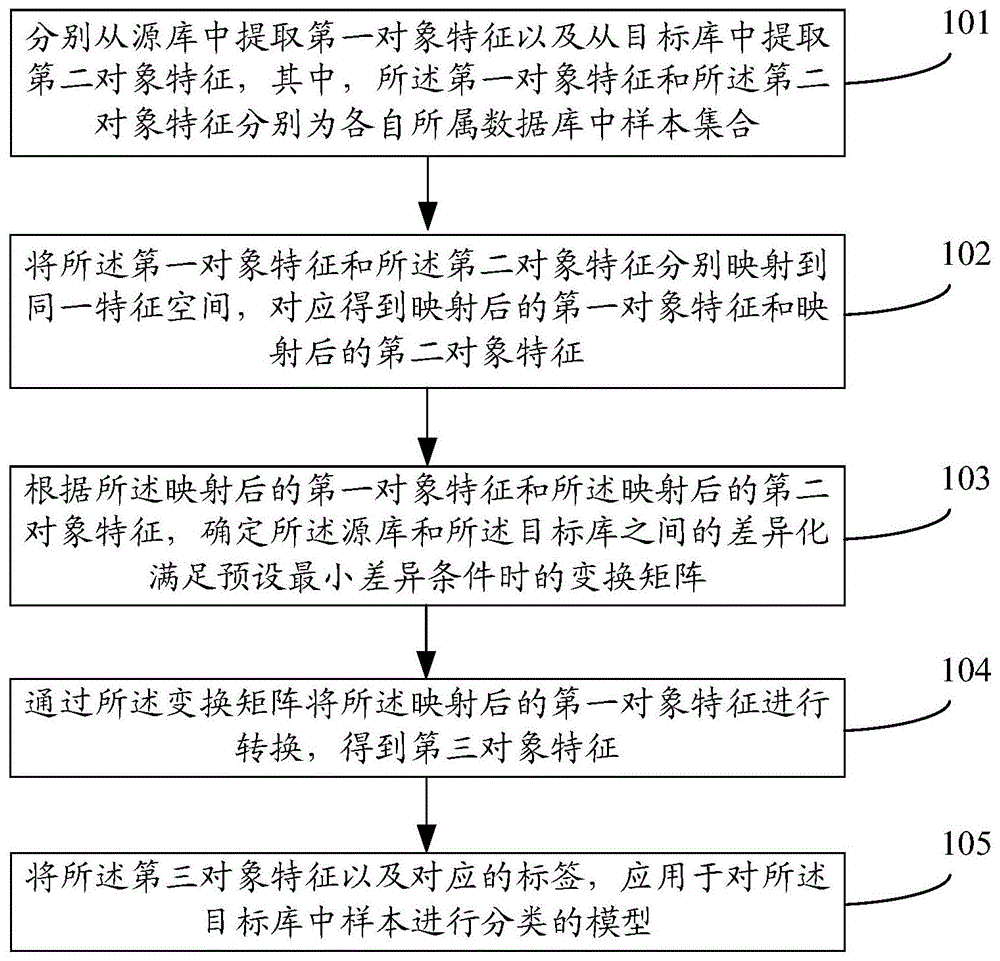

FIG. 1 is a schematic flowchart of the model processing method provided by an embodiment of the present invention;

FIG. 2 is a schematic flowchart of a specific implementation of the model processing method provided by an embodiment of the present invention;

FIG. 3 is a schematic diagram of facial landmark annotation provided by an embodiment of the present invention;

FIG. 4 is a schematic diagram of the Gabor space provided by an embodiment of the present invention;

FIG. 5 is a schematic diagram of the structure of the deep learning image classification model AlexNet provided by an embodiment of the present invention;

FIG. 6 is a schematic diagram of the composition of the model processing device provided by an embodiment of the present invention;

FIG. 7 is a schematic diagram of the hardware structure of the model processing device provided by an embodiment of the present invention.

Detailed Description

To give a fuller understanding of the features and technical content of the embodiments of the present invention, their implementation is described in detail below with reference to the accompanying drawings. The drawings are for reference and illustration only and are not intended to limit the present invention.

FIG. 1 shows a model processing method provided by an embodiment of the present invention. As shown in FIG. 1, the implementation flow of the model processing method may include the following steps:

Step 101: Extract a first object feature from a source library and a second object feature from a target library, wherein the first object feature and the second object feature are each a sample set of the database to which they belong.

In some embodiments, different types of object features may be extracted from the source library and combined to form the first object feature of the source library; likewise, different types of object features may be extracted from the target library and combined to form the second object feature of the target library.

Dimensionality reduction is then performed on the first object feature extracted from the source library and the second object feature extracted from the target library. The reduction may include: normalizing the first object feature and the second object feature separately; and extracting from each of them specific object features of a preset dimension, which serve as the to-be-mapped object features of the source library and the target library respectively. The preset dimension is smaller than the dimension before extraction. Extracting the specific object features of the preset dimension may include: from the eigenvectors corresponding to the first object feature and the eigenvectors corresponding to the second object feature, selecting the object features corresponding to the d largest eigenvalues as the respective specific object features.

Step 102: Map the first object feature and the second object feature to the same feature space to obtain a mapped first object feature and a mapped second object feature.

In some embodiments, to avoid the information loss caused by mapping the source library and the target library directly to the same feature space, the first object feature and the second object feature are each multiplied by a transformation matrix and projected into their respective subspaces. Spatial mapping thus yields a mapped first object feature and a mapped second object feature that lie in a space of the same dimension.

Step 103: According to the mapped first object feature and the mapped second object feature, determine the transformation matrix for which the difference between the source library and the target library satisfies a preset minimum-difference condition.

In some embodiments, a difference function representing the distance between the source library and the target library may be constructed with the mapped first object feature and the mapped second object feature as factors; the transformation matrix that satisfies the preset minimum-difference condition is then determined by minimizing this difference function. Here, the factor may be the difference between the product of the mapped first object feature and the transformation matrix, on the one hand, and the mapped second object feature, on the other; the difference function is constructed as a norm of this factor.

Step 104: Convert the mapped first object feature with the transformation matrix to obtain a third object feature.

In some embodiments, the transformation matrix aligns the mapped first object feature with the mapped second object feature: it converts the subspace coordinate system of the mapped first object feature into the subspace coordinate system of the mapped second object feature, which yields the third object feature.
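Steps 103 and 104 can be illustrated with a minimal sketch. It assumes, beyond what this passage states, that the difference function is the squared Frobenius norm of `x_s @ M - x_t` and that both subspace bases have orthonormal columns; under those assumptions the minimizer has the closed form `M = x_s.T @ x_t`. The random data and the names `x_s`, `x_t`, `S` and `third_feature` are hypothetical stand-ins, not values from the patent.

```python
import numpy as np

def alignment_matrix(x_s, x_t):
    """Minimizer of ||x_s @ M - x_t||_F when x_s has orthonormal columns."""
    return x_s.T @ x_t

rng = np.random.default_rng(0)
D, d = 6, 3

# Hypothetical orthonormal bases (D x d) standing in for the source and
# target subspaces; the reduced QR factor has orthonormal columns.
x_s = np.linalg.qr(rng.normal(size=(D, d)))[0]
x_t = np.linalg.qr(rng.normal(size=(D, d)))[0]

# Hypothetical source features: 40 samples in the original D-dim space.
S = rng.normal(size=(40, D))

M = alignment_matrix(x_s, x_t)       # step 103: transformation matrix
mapped_source = S @ x_s              # mapped first object feature
third_feature = mapped_source @ M    # step 104: aligned source feature
```

Because `M` moves the source coordinates into the target subspace coordinate system, a classifier trained on `third_feature` operates in the same coordinates as target samples projected with `x_t`.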

Step 105: Apply the third object feature and the corresponding labels to a model for classifying samples in the target library.

In some embodiments, a model may be trained on the third object feature and the corresponding labels. Specifically: the third object feature and the corresponding labels are taken as new samples; the update components of the model parameters with respect to the new samples are solved for; object features are extracted from the samples in the target library, and the model is used to calculate the probability that each target-library sample has each label; the label that satisfies the probability condition is selected as the label of the target-library sample.

In some embodiments, nearest-neighbor classification may be performed on the samples in the target library based on a similarity function that takes the third object feature as a factor, which yields the labels of the samples in the target library.
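The nearest-neighbor variant can be sketched as follows. The similarity function is not specified in this passage, so cosine similarity is used here purely as an assumption; the function name, the toy features and the labels are hypothetical.

```python
import numpy as np

def nearest_neighbor_labels(source_feats, source_labels, target_feats):
    """Give each target sample the label of its most similar source sample."""
    def unit_rows(a):
        # Scale every row to unit length; guard against zero-length rows.
        norms = np.linalg.norm(a, axis=1, keepdims=True)
        return a / np.maximum(norms, 1e-12)

    # Cosine similarity between every target row and every source row.
    similarity = unit_rows(target_feats) @ unit_rows(source_feats).T
    return source_labels[np.argmax(similarity, axis=1)]

# Toy data: two aligned source samples with labels, two target samples.
third_feature = np.array([[1.0, 0.0], [0.0, 1.0]])
labels = np.array(["happy", "sad"])
mapped_target = np.array([[0.9, 0.1], [0.2, 0.8]])

predicted = nearest_neighbor_labels(third_feature, labels, mapped_target)
```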

In some embodiments, when at least two types of model are applied, an output for each sample in the target library is determined by each model separately; the outputs of the models are then compared, and the label of the sample is determined from the comparison.

For example, when the outputs of all models are the same, the output of any one model is taken as the label of the sample in the target library; when the outputs differ and none of them is a specific label, the output of any one model is taken as the label; when the outputs differ and one of them is the specific label, the specific label is taken as the label of the sample.
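The three-case rule above can be written as a small function. This is only a sketch: the function name `fuse_labels`, the choice of the first model's output as "any one model", and the example labels are assumptions made for illustration.

```python
def fuse_labels(outputs, specific_label):
    """Combine per-model labels for one target sample.

    outputs: list of labels, one per model (at least two models).
    specific_label: the label that wins whenever the models disagree
    and at least one of them predicts it.
    """
    if len(set(outputs)) == 1:
        # All models agree: any output may be used.
        return outputs[0]
    if specific_label in outputs:
        # Models disagree and one predicts the specific label.
        return specific_label
    # Models disagree without the specific label: any output may be used;
    # the first model's output is taken here.
    return outputs[0]

agree = fuse_labels(["happy", "happy"], "fear")
with_specific = fuse_labels(["happy", "fear"], "fear")
without_specific = fuse_labels(["happy", "sad"], "fear")
```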

The specific implementation of the model processing method of the embodiments of the present invention is described in further detail below.

FIG. 2 is a schematic flowchart of a specific implementation of the model processing method of an embodiment of the present invention. As shown in FIG. 2, the method includes the following steps:

Step 201: Extract a first object feature from a source library and a second object feature from a target library, wherein the first object feature and the second object feature are each a sample set of the database to which they belong.

In this embodiment, the example is extracting a facial expression feature S from the face images of the source library and a facial expression feature T from the face images of the target library. Here, the facial expression feature S extracted from the source library is the first object feature, and the facial expression feature T extracted from the target library is the second object feature.

For example, different types of facial expression features may be extracted from the source library and combined to form the facial expression feature S of the source library; likewise, different types of facial expression features may be extracted from the target library and combined to form the facial expression feature T of the target library.

For the source library and the target library, the following two methods can be used to extract different types of facial expression features.

1) Gabor features g can be extracted:

For example, the face-alignment tool Intraface can be used for facial landmark annotation. Intraface marks 49 points on the eyebrows, eyes, nose and mouth, and these 49 points locate the facial features well; see FIG. 3 for an example. Other tools may also be used to annotate face images, and different tools use different numbers of landmark points; the embodiments of the present invention are not limited to the 49-point Intraface tool.

Features can then be sampled at the annotated points to obtain facial expression features. For example, after annotation, Gabor features can be extracted from the annotated regions and used to discriminate the basic emotions expressed by a face, such as anger, disgust, fear, happiness, sadness and surprise. A Gabor feature describes image texture; the frequency and orientation of Gabor filters are similar to those of the human visual system, which makes them particularly suitable for texture representation and discrimination.

The matrices $B_s$ and $B_t$ that represent the face images are convolved with the Gabor filter $g$ to obtain the Gabor features $S_g$ and $T_g$, respectively:

$$B_s * g = S_g; \quad B_t * g = T_g$$

The filter is calculated as follows:

where λ is the wavelength, θ is the rotation angle, ψ is the phase offset, σ is the standard deviation of the Gaussian function, γ is the spatial aspect ratio, and x and y are the pixel coordinates. From the above formula, the Gabor filter can be separated into a real part R and an imaginary part I, which after trigonometric transformation are:

The real part of the Gabor filter can be regarded as an edge-detection operator for each orientation; using this property, the Gabor space can be obtained, as shown in FIG. 4.
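As an illustration of how such a filter could be built, the sketch below assumes the widely used complex Gabor form: a Gaussian envelope multiplied by a complex sinusoid, evaluated on coordinates rotated by θ. That form is an assumption consistent with the parameters λ, θ, ψ, σ and γ listed above, not a formula quoted from this text; the kernel size and the parameter values are hypothetical.

```python
import numpy as np

def gabor_kernel(size, lam, theta, psi, sigma, gamma):
    """Return the real part R and imaginary part I of a Gabor kernel.

    size: odd kernel width in pixels; lam: wavelength; theta: rotation
    angle; psi: phase offset; sigma: Gaussian standard deviation;
    gamma: spatial aspect ratio.
    """
    half = size // 2
    y, x = np.mgrid[-half:half + 1, -half:half + 1].astype(float)

    # Rotate the pixel coordinates by theta.
    x_r = x * np.cos(theta) + y * np.sin(theta)
    y_r = -x * np.sin(theta) + y * np.cos(theta)

    envelope = np.exp(-(x_r ** 2 + gamma ** 2 * y_r ** 2) / (2 * sigma ** 2))
    phase = 2 * np.pi * x_r / lam + psi
    return envelope * np.cos(phase), envelope * np.sin(phase)

real_part, imag_part = gabor_kernel(size=9, lam=4.0, theta=0.0,
                                    psi=0.0, sigma=2.0, gamma=0.5)
```

Varying `theta` over several orientations gives a bank of real kernels, each responding to edges along one direction, which matches the edge-detection reading of the real part.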

2) Deep learning features can be extracted from the whole face. A convolutional neural network (CNN) can be regarded as a special type of feedforward network designed to imitate the behavior of the visual cortex, and CNNs perform well on visual recognition tasks. A CNN has special layers, called convolutional layers and pooling layers, that allow the network to encode certain image properties. With such a CNN model, the CNN features $S_c$ and $T_c$ can be extracted.

The deep learning image classification model AlexNet consists of an 11-layer CNN whose architecture is shown in FIG. 5.

The Gabor feature $S_g$ and the CNN feature $S_c$ extracted from the source library are concatenated to form a new feature, which serves as the object feature of the source library, that is, the facial expression feature S. Likewise, the Gabor feature $T_g$ and the CNN feature $T_c$ extracted from the target library are concatenated to form a new feature, which serves as the object feature of the target library, that is, the facial expression feature T. That is:

The facial expression feature of the face images in the source library is: $S = [S_g, S_c]$;

The facial expression feature of the face images in the target library is: $T = [T_g, T_c]$;

where $S_g$ and $S_c$ have the same number of rows (the number of images), and likewise $T_g$ and $T_c$ have the same number of rows.

Traditional features capture the fine details of a face well, while deep learning features capture its overall characteristics well; combining the two therefore characterizes facial expressions more effectively.

Step 202: Perform dimensionality reduction on the first object feature extracted from the source library and the second object feature extracted from the target library.

In this embodiment, the first object feature extracted from the source library and the second object feature extracted from the target library may each be normalized.

For example, consider dimensionality reduction of the facial expression feature S extracted from the source library and the facial expression feature T extracted from the target library. S and T can each be normalized. Because S and T have the same dimension, both lie in a given D-dimensional space. To obtain a more robust representation, and to be able to capture the difference between the two image libraries, both S and T can be converted into D-dimensional normalized vectors (for example, zero mean and unit standard deviation).
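A minimal sketch of this normalization follows, assuming the combined feature is formed by concatenating a Gabor block and a CNN block column-wise, as in step 201. The array sizes and random values are hypothetical placeholders for real extracted features.

```python
import numpy as np

def zscore(features, eps=1e-12):
    """Scale each feature column to zero mean and unit standard deviation."""
    mean = features.mean(axis=0)
    std = features.std(axis=0)
    # eps guards against division by zero for constant columns.
    return (features - mean) / np.maximum(std, eps)

rng = np.random.default_rng(0)
S_g = rng.normal(loc=3.0, scale=2.0, size=(20, 4))   # stand-in Gabor block
S_c = rng.normal(loc=-1.0, scale=0.5, size=(20, 6))  # stand-in CNN block

S = np.hstack([S_g, S_c])   # S = [S_g, S_c]: one row per image, D = 10
S_norm = zscore(S)
```

Normalizing S and T separately, each with its own column statistics, removes per-library offsets and scales before the subspaces are compared.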

In this embodiment, specific object features of a preset dimension may be extracted from the first object feature and from the second object feature, and serve as the to-be-mapped object features of the source library and the target library respectively. The preset dimension is smaller than the dimension before extraction. Extracting the specific object features of the preset dimension may include: from the eigenvectors corresponding to the first object feature and the eigenvectors corresponding to the second object feature, selecting the object features corresponding to the d largest eigenvalues as the respective specific object features.

For example, kernel principal component analysis (KPCA) with a Gaussian kernel can be used to select, for the source library and for the target library, the eigenvectors corresponding to the d largest eigenvalues.
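The selection of the d leading components with a Gaussian kernel can be sketched in plain NumPy. This is one standard formulation of KPCA, not necessarily the exact procedure of the patent; the kernel width `gamma`, the sample data and the function name are assumptions.

```python
import numpy as np

def gaussian_kpca(features, d, gamma):
    """Project samples onto the d leading kernel principal components.

    Uses the Gaussian kernel k(a, b) = exp(-gamma * ||a - b||^2).
    Returns the (n, d) embedding and the d largest eigenvalues.
    """
    sq_norms = np.sum(features ** 2, axis=1)
    sq_dists = sq_norms[:, None] + sq_norms[None, :] - 2 * features @ features.T
    K = np.exp(-gamma * np.maximum(sq_dists, 0.0))

    # Center the kernel matrix in the implicit feature space.
    n = K.shape[0]
    one_n = np.full((n, n), 1.0 / n)
    K_c = K - one_n @ K - K @ one_n + one_n @ K @ one_n

    # eigh returns eigenvalues in ascending order; keep the d largest.
    eigvals, eigvecs = np.linalg.eigh(K_c)
    order = np.argsort(eigvals)[::-1][:d]
    top_vals, top_vecs = eigvals[order], eigvecs[:, order]

    # Projection of training sample i on component k is sqrt(lambda_k) * v_k[i].
    embedding = top_vecs * np.sqrt(np.maximum(top_vals, 0.0))
    return embedding, top_vals

rng = np.random.default_rng(0)
S = rng.normal(size=(20, 10))
S_reduced, S_eigvals = gaussian_kpca(S, d=3, gamma=0.05)
```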

Step 203: Map the first object feature and the second object feature to the same feature space to obtain a mapped first object feature and a mapped second object feature.

In this embodiment, to avoid the information loss caused by mapping the source library and the target library directly to the same feature space, the first object feature and the second object feature are each multiplied by a transformation matrix and projected into their respective subspaces. Spatial mapping thus yields a mapped first object feature and a mapped second object feature that lie in a space of the same dimension.

For example, the eigenvectors serve as the bases of the source library and the target library, denoted $X_s$ and $X_t$ respectively ($X_s, X_t \in \mathbb{R}^{D \times d}$). Note that $X_s$ and $X_t$ are orthonormal, so $X_s^{\top} X_s = X_t^{\top} X_t = I_d$, where $I_d$ is the identity matrix of size $d$. To avoid the information loss caused by mapping the source library and the target library directly to the same feature space, the facial expression feature S and the facial expression feature T are each multiplied by a transformation matrix ($A_s, A_t \in \mathbb{R}^{1 \times D}$) and projected into their respective subspaces. Spatial mapping thus yields the mapped facial expression feature $X_s$ and the mapped facial expression feature $X_t$, which lie in a space of the same dimension.
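The construction of the two bases and the projection can be sketched as follows. Linear PCA on the covariance matrix is used here as a simple stand-in for the eigenvector selection, which is an assumption; the data and the names `pca_basis`, `S_proj` and `T_proj` are hypothetical.

```python
import numpy as np

def pca_basis(features, d):
    """Return a (D, d) basis: eigenvectors of the d largest covariance eigenvalues."""
    centered = features - features.mean(axis=0)
    cov = centered.T @ centered / (len(features) - 1)
    eigvals, eigvecs = np.linalg.eigh(cov)   # ascending eigenvalues
    order = np.argsort(eigvals)[::-1][:d]
    return eigvecs[:, order]

rng = np.random.default_rng(0)
D, d = 8, 3
S = rng.normal(size=(50, D))          # stand-in source features
T = rng.normal(size=(40, D)) + 0.5    # stand-in target features, shifted

X_s = pca_basis(S, d)
X_t = pca_basis(T, d)

# Each library is projected into its own d-dimensional subspace.
S_proj = S @ X_s
T_proj = T @ X_t
```

Both projections have d columns, so they lie in spaces of the same dimension even though the two subspaces themselves differ.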

Step 204: Based on the mapped first object feature and the mapped second object feature, determine the transformation matrix for which the difference between the source library and the target library satisfies a preset minimum-difference condition.

In this embodiment of the present invention, a difference function representing the distance between the source library and the target library can be constructed with the mapped first object feature and the mapped second object feature as factors. The transformation matrix for which the difference between the source library and the target library satisfies the preset minimum-difference condition is then determined by solving for the minimum of the difference function. Here, the factor can be the difference between the product of the mapped first object feature and the transformation matrix, on the one hand, and the mapped second object feature, on the other; a function that computes a norm of this factor is then constructed.

For example, with the mapped facial expression features $X_s$ and $X_t$ as factors, a difference function representing the distance between $X_s$ and $X_t$ can be constructed, and the corresponding transformation matrix M can be determined by minimizing the Bregman matrix divergence.

To this end, a subspace alignment approach can be used: the basis vectors are aligned by a transformation matrix M from $X_s$ to $X_t$, where M is learned by minimizing the Bregman matrix divergence:

$$F(M) = \lVert X_s M - X_t \rVert_F^2 \quad (4)$$

$$M^* = \operatorname{argmin}_M \big(F(M)\big) \quad (5)$$

where $\lVert \cdot \rVert_F$ is the Frobenius norm. Because $X_s$ and $X_t$ are generated from the d leading eigenvectors, they tend to be intrinsically regularized. Because the Frobenius norm is invariant under orthonormal operations, formula (4) can be rewritten as follows:

$$F(M) = \lVert X_s^{\top} X_s M - X_s^{\top} X_t \rVert_F^2 = \lVert M - X_s^{\top} X_t \rVert_F^2 \quad (6)$$

Formula (6) shows that the optimal $M^*$ is given by $M^* = X_s^{\top} X_t$. If the source library and the target library are the same, then $X_s = X_t$ and $M^*$ is the identity matrix.
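The closed-form solution can be checked numerically. The sketch below assumes the optimum has the form $M^* = X_s^{\top} X_t$ for orthonormal bases (consistent with the statement that $M^*$ is the identity when $X_s = X_t$); the helper names and the toy bases with D = 3 and d = 2 are hypothetical.

```python
def transpose(A):
    """Transpose a matrix stored as a list of rows."""
    return [list(col) for col in zip(*A)]

def matmul(A, B):
    """Multiply two matrices stored as lists of rows."""
    Bt = transpose(B)
    return [[sum(a * b for a, b in zip(row, col)) for col in Bt] for row in A]

def alignment_matrix(Xs, Xt):
    """Minimiser of ||Xs M - Xt||_F^2 when Xs has orthonormal columns: Xs^T Xt."""
    return matmul(transpose(Xs), Xt)

# Hypothetical orthonormal bases, one column per basis vector.
Xs = [[1.0, 0.0], [0.0, 1.0], [0.0, 0.0]]
Xt = [[1.0, 0.0], [0.0, 0.0], [0.0, 1.0]]

M_same = alignment_matrix(Xs, Xs)  # identical libraries give the identity
M_star = alignment_matrix(Xs, Xt)  # only the shared first direction survives
```

Here the second source basis vector is orthogonal to the target subspace, so the corresponding row of `M_star` is zero.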

Step 205: Transform the mapped first object feature with the transformation matrix to obtain a third object feature.

In this embodiment of the present invention, the transformation matrix aligns the mapped first object feature with the mapped second object feature, converting the subspace coordinate system of the mapped first object feature into the subspace coordinate system of the mapped second object feature. The result is the third object feature.

For example, the transformation matrix M converts the source image space coordinate system into the target image space coordinate system by aligning the mapped facial expression feature $X_s$ of the source library with the mapped facial expression feature $X_t$ of the target library. The converted facial expression feature is obtained by multiplying the mapped facial expression feature $X_s$ by the transformation matrix M. If a mapped facial expression feature in $X_s$ is orthogonal to the mapped facial expression feature $X_t$ of the target library, it is ignored; one that is well aligned with $X_t$ is given a high weight.
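A small worked example may make the weighting behaviour concrete. It is a sketch under assumptions: the alignment matrix is taken to be $X_s^{\top} X_t$, the bases and the source sample are invented toy values, and the helper names are hypothetical.

```python
def transpose(A):
    """Transpose a matrix stored as a list of rows."""
    return [list(col) for col in zip(*A)]

def matmul(A, B):
    """Multiply two matrices stored as lists of rows."""
    Bt = transpose(B)
    return [[sum(a * b for a, b in zip(row, col)) for col in Bt] for row in A]

# Hypothetical orthonormal bases (D = 3, d = 2).
Xs = [[1.0, 0.0], [0.0, 1.0], [0.0, 0.0]]
Xt = [[1.0, 0.0], [0.0, 0.0], [0.0, 1.0]]

M = matmul(transpose(Xs), Xt)  # assumed form of the alignment matrix
Xa = matmul(Xs, M)             # source basis expressed in target coordinates

source_sample = [[2.0, 3.0, 0.0]]          # one source feature vector (1 x D)
converted = matmul(source_sample, Xa)      # the "third object feature"
```

The first source direction coincides with a target direction and keeps its full weight, while the second source direction is orthogonal to the target subspace and contributes nothing to `converted`.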

Step 206: Apply the third object feature and its corresponding label to a model that classifies the samples in the target library.

In this embodiment of the present invention, the third object feature and its corresponding label can be used for model training. Specifically: the third object feature and its corresponding label are taken as new samples; the update components of the model parameters with respect to the new samples are solved for; object features are extracted from the samples in the target library, and the probability that each sample in the target library has each label is computed based on the model; and the label that satisfies a probability condition is selected as the label of the sample in the target library.

For example, a support vector machine (SVM) model can be selected, or another type of model such as a neural network can be trained instead. The converted facial expression features and their corresponding labels are taken as new samples and fed into the SVM model for training; the update components of the model parameters with respect to the new samples are solved for; facial expression features are extracted from the samples in the target library, and the probability that each sample in the target library has each label is computed based on the model; and the label that satisfies the probability condition is selected as the label of the sample in the target library.
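The final selection step can be illustrated as follows. The text does not define the probability condition, so this sketch assumes it means "the most probable label, provided it reaches an optional threshold"; the function name, the threshold parameter and the probabilities are hypothetical, and the trained classifier that would produce them is not shown.

```python
def select_label(probabilities, min_probability=0.0):
    """Return the most probable label, or None if it does not reach min_probability."""
    label = max(probabilities, key=probabilities.get)
    return label if probabilities[label] >= min_probability else None

# Hypothetical per-label probabilities for one target-library sample.
probs = {"happy": 0.62, "sad": 0.25, "fear": 0.13}
chosen = select_label(probs)
rejected = select_label(probs, min_probability=0.9)
```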

In this embodiment of the present invention, nearest-neighbor classification can be performed on the samples in the target library based on a similarity function that takes the third object feature as a factor, to obtain the labels of the samples in the target library.

For example, take a K-nearest-neighbor (KNN) model applied to classifying the samples in the target library. To compare $A_s$, which corresponds to facial expression feature S in the source library, with $A_t$, which corresponds to facial expression feature T in the target library, a similarity function $\mathrm{Sim}(A_s, A_t)$ is needed. $A_s$ and $A_t$ are projected into their respective subspaces $X_s$ and $X_t$, and the optimal transformation matrix $M^*$ is applied, so that the similarity function assists the model in nearest-neighbor classification. The similarity function can be defined as follows:

$$\mathrm{Sim}(A_s, A_t) = (A_s X_s M^*)(A_t X_t)^{\top} = A_s \,(X_s M^* X_t^{\top})\, A_t^{\top}$$

where $X_s M^* X_t^{\top}$ encodes the relative contributions of the different components of the vectors in their original space.

With the learned optimal $M^*$, the source images are transformed, and the similarity function Sim can be used directly as the metric of the nearest-neighbor classifier (KNN). This KNN requires no model training and classifies directly. Suppose the source library contains six expression classes, numbered 1 to 6, with 10 expression features in each class. When any sample from the target library is substituted into the Sim formula, each of expression classes 1 to 6 yields one value, and the label of the class with the largest value (that is, the most similar class) is taken as the expression label of the sample. The value of each expression class is the average of the values obtained by substituting its 10 expression features.
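The class-average rule described above can be sketched as follows. The sketch assumes Sim is the inner product of the aligned source projection and the target projection, as the preceding paragraphs describe; the function names, the two-class toy data and the identity bases are hypothetical and stand in for the six classes of 10 features each.

```python
def transpose(A):
    """Transpose a matrix stored as a list of rows."""
    return [list(col) for col in zip(*A)]

def matmul(A, B):
    """Multiply two matrices stored as lists of rows."""
    Bt = transpose(B)
    return [[sum(a * b for a, b in zip(row, col)) for col in Bt] for row in A]

def sim(a_s, a_t, Xs, Xt, M):
    """Assumed similarity: (a_s Xs M) . (a_t Xt), for 1 x D feature vectors."""
    aligned_source = matmul(matmul([a_s], Xs), M)[0]
    projected_target = matmul([a_t], Xt)[0]
    return sum(p * q for p, q in zip(aligned_source, projected_target))

def classify(a_t, source_by_label, Xs, Xt, M):
    """Average Sim per class over its source features; return the best class."""
    scores = {
        label: sum(sim(a_s, a_t, Xs, Xt, M) for a_s in feats) / len(feats)
        for label, feats in source_by_label.items()
    }
    return max(scores, key=scores.get), scores

# Hypothetical toy setup: identical 2-D bases, so M is the identity.
Xs = Xt = [[1.0, 0.0], [0.0, 1.0]]
M = matmul(transpose(Xs), Xt)
source_by_label = {
    "happy": [[1.0, 0.0], [0.8, 0.2]],
    "sad": [[0.0, 1.0], [0.1, 0.9]],
}
label, scores = classify([0.9, 0.1], source_by_label, Xs, Xt, M)
```

No training step appears anywhere in the sketch, which matches the statement that this classifier is applied directly.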

In this embodiment of the present invention, when the applied models are of two types (here assumed to be a K-nearest-neighbor model and an SVM model), an output for the samples in the target library is determined with each of the two models; the outputs of the two models are compared, and the labels of the samples in the target library are determined according to the comparison result.

For example, when the two models produce the same output, the output of either model is selected as the expression label in the target library. When the two models produce different outputs and neither is a specific expression label, the output of either model is selected as the expression label in the target library; here, the specific expression labels may include fear, sadness and disgust. When the two models produce different outputs and a specific label is among them, the specific label is selected as the expression label in the target library; here, the selection priority can be sadness, then disgust, then fear.
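The decision rule for two models can be written as a short function. The label strings and the function name are hypothetical; where the text allows either model's output to be chosen, this sketch arbitrarily takes the first.

```python
# Specific labels in the priority order given in the text: sadness, disgust, fear.
SPECIFIC_LABELS = ("sad", "disgust", "fear")

def fuse_labels(label_a, label_b):
    """Combine the outputs of two classifiers into one expression label."""
    if label_a == label_b:
        return label_a
    for specific in SPECIFIC_LABELS:  # highest priority first
        if specific in (label_a, label_b):
            return specific
    return label_a  # neither is a specific label: either output may be used

agree = fuse_labels("happy", "happy")
one_specific = fuse_labels("happy", "fear")
two_specific = fuse_labels("fear", "sad")
no_specific = fuse_labels("happy", "surprise")
```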

This decision rule is adopted because the expression labels happy and surprised are both relatively strong positive emotions and are easy to distinguish in the trained model, whereas the intuitively similar labels fear, sadness and disgust achieve only moderate recognition rates. Expressions such as fear, sadness and disgust are easily misrecognized as positive emotions such as happiness, so the error probability is high; the rule mitigates this bias of the classification model. With the transfer method described above, test accuracy is far higher than the accuracy obtained by applying a model trained on the source library directly to the target library.

To implement the above method, an embodiment of the present invention further provides a model processing apparatus. As shown in FIG. 6, the apparatus includes an extraction module 601, a mapping module 602, a determination module 603, a conversion module 604 and an application module 605, wherein:

The extraction module 601 is configured to extract a first object feature from a source library and a second object feature from a target library, where the first object feature and the second object feature are each the sample set of the database to which it belongs.

The extraction module 601 is specifically configured to: extract different types of object features from the source library and combine them to form the first object feature of the source library;

and extract different types of object features from the target library and combine them to form the second object feature of the target library.

The mapping module 602 is configured to map the first object feature and the second object feature to the same feature space, obtaining the mapped first object feature and the mapped second object feature, respectively.

The determination module 603 is configured to determine, based on the mapped first object feature and the mapped second object feature, the transformation matrix for which the difference between the source library and the target library satisfies a preset minimum-difference condition.

The determination module 603 is specifically configured to: construct a difference function that represents the distance between the source library and the target library, with the mapped first object feature and the mapped second object feature as factors;

and determine, by solving for the minimum of the difference function, the transformation matrix for which the difference between the source library and the target library satisfies the preset minimum-difference condition.

The determination module 603 is specifically configured to: use, as a factor, the difference between the product of the mapped first object feature and the transformation matrix and the mapped second object feature; and construct a function that computes a norm of the factor.

The conversion module 604 is configured to transform the mapped first object feature with the transformation matrix to obtain a third object feature.

The application module 605 is configured to apply the third object feature and its corresponding label to a model that classifies the samples in the target library.

The application module 605 is specifically configured to: take the third object feature and its corresponding label as new samples; solve for the update components of the model parameters with respect to the new samples; extract object features from the samples in the target library and compute, based on the model, the probability that each sample in the target library has each label; and select the label that satisfies a probability condition as the label of the sample in the target library.

The application module 605 is specifically configured to: perform nearest-neighbor classification on the samples in the target library based on a similarity function that takes the third object feature as a factor, to obtain the labels of the samples in the target library.

The apparatus further includes a dimensionality reduction module 606, configured to perform dimensionality reduction on the first object feature extracted from the source library and the second object feature extracted from the target library.

The dimensionality reduction module 606 is specifically configured to: normalize the first object feature extracted from the source library and the second object feature extracted from the target library, respectively;

and extract, from each, specific object features of a preset dimension, the preset dimension being smaller than the corresponding dimension before extraction.

The apparatus further includes a comparison module 607, configured to: when the applied models include at least two types, determine an output for the samples in the target library based on each model;

and compare the outputs of the models and determine the labels of the samples in the target library according to the comparison result.

The comparison module 607 is specifically configured to: when the outputs of the models are the same, select the output of any one model as the label of the sample in the target library;

when the outputs of the models differ and do not include a specific label, select the output of any one model as the label of the sample in the target library;

and when the outputs of the models differ and include a specific label, select the specific label as the label of the sample in the target library.

In practical applications, the extraction module 601, the mapping module 602, the determination module 603, the conversion module 604, the application module 605, the dimensionality reduction module 606 and the comparison module 607 can each be implemented by a central processing unit (CPU), a microprocessor unit (MPU), a digital signal processor (DSP), a field-programmable gate array (FPGA) or the like located on a computer device.

It should be noted that the division into program modules described above is only an example of how the model processing apparatus of the above embodiment performs model processing. In practical applications, the processing can be allocated to different program modules as required; that is, the internal structure of the apparatus can be divided into different program modules to complete all or part of the processing described above. In addition, the model processing apparatus provided by the above embodiment and the model processing method embodiments belong to the same concept; for the specific implementation process, see the method embodiments, which are not repeated here.

To implement the above model processing method, an embodiment of the present invention further provides a hardware structure of a model processing apparatus. The model processing apparatus that implements the embodiments of the present invention is now described with reference to the accompanying drawings. The apparatus may be implemented as a terminal device, that is, a computer device such as a smartphone, a tablet computer or a palmtop computer. The hardware structure of the model processing apparatus provided by the embodiments of the present invention is further described below. It can be understood that FIG. 7 shows only an exemplary structure of the model processing apparatus rather than its entire structure, and that part or all of the structure shown in FIG. 7 may be implemented as required.

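Referring to FIG. 7, FIG. 7 is a schematic diagram of the hardware structure of a model processing apparatus provided by an embodiment of the present invention, which in practice can be applied to the aforementioned terminal device running an application program. The model processing apparatus 700 shown in FIG. 7 includes at least one processor 701, a memory 702, a user interface 703 and at least one network interface 704. The components of the model processing apparatus 700 are coupled together by a bus system 705. It can be understood that the bus system 705 implements connection and communication among these components. In addition to a data bus, the bus system 705 includes a power bus, a control bus and a status signal bus. For clarity, however, all of these buses are labeled as the bus system 705 in FIG. 7.

The user interface 703 may include a display, a keyboard, a mouse, a trackball, a click wheel, keys, buttons, a touch pad, a touch screen or the like.

It can be understood that the memory 702 may be a volatile memory or a non-volatile memory, or may include both volatile and non-volatile memory.

The memory 702 in the embodiments of the present invention stores various types of data to support the operation of the model processing apparatus 700. Examples of such data include any computer program to be run on the model processing apparatus 700, such as an executable program 7021 and an operating system 7022. The program that implements the model processing method of the embodiments of the present invention may be contained in the executable program 7021.

The model processing method disclosed in the embodiments of the present invention may be applied to, or implemented by, the processor 701. The processor 701 may be an integrated circuit chip with signal processing capability. During implementation, the steps of the model processing method may be completed by integrated logic circuits of hardware in the processor 701 or by instructions in the form of software. The processor 701 may be a general-purpose processor, a DSP, another programmable logic device, a discrete gate or transistor logic device, a discrete hardware component, or the like. The processor 701 can implement or execute the model processing methods, steps and logic block diagrams provided in the embodiments of the present invention. The general-purpose processor may be a microprocessor or any conventional processor. The steps of the model processing method provided by the embodiments of the present invention may be embodied directly as being executed by a hardware decoding processor, or as being executed by a combination of hardware and software modules in a decoding processor. The software modules may be located in a storage medium, which is located in the memory 702; the processor 701 reads the information in the memory 702 and completes the steps of the model processing method in combination with its hardware.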

An embodiment of the present invention further provides a hardware structure of a model processing apparatus. The model processing apparatus 700 includes a memory 702, a processor 701, and an executable program 7021 that is stored in the memory 702 and can be run by the processor 701. When running the executable program 7021, the processor 701 implements:

extracting a first object feature from a source library and a second object feature from a target library, where the first object feature and the second object feature are each the sample set of the database to which it belongs; mapping the first object feature and the second object feature to the same feature space to obtain a mapped first object feature and a mapped second object feature, respectively; determining, based on the mapped first object feature and the mapped second object feature, the transformation matrix for which the difference between the source library and the target library satisfies a preset minimum-difference condition; transforming the mapped first object feature with the transformation matrix to obtain a third object feature; and applying the third object feature and its corresponding label to a model that classifies the samples in the target library.

In some embodiments, when running the executable program 7021, the processor 701 implements:

extracting different types of object features from the source library and combining them to form the first object feature of the source library; and extracting different types of object features from the target library and combining them to form the second object feature of the target library.

In some embodiments, when running the executable program 7021, the processor 701 implements:

performing dimensionality reduction on the first object feature extracted from the source library and the second object feature extracted from the target library.

In some embodiments, when running the executable program 7021, the processor 701 implements:

normalizing the first object feature extracted from the source library and the second object feature extracted from the target library, respectively; and extracting, from each, specific object features of a preset dimension, the preset dimension being smaller than the corresponding dimension before extraction.

In some embodiments, when running the executable program 7021, the processor 701 implements:

constructing a difference function that represents the distance between the source library and the target library, with the mapped first object feature and the mapped second object feature as factors; and determining, by solving for the minimum of the difference function, the transformation matrix for which the difference between the source library and the target library satisfies the preset minimum-difference condition.

In some embodiments, when running the executable program 7021, the processor 701 implements:

using, as a factor, the difference between the product of the mapped first object feature and the transformation matrix and the mapped second object feature; and constructing a function that computes a norm of the factor.

In some embodiments, when running the executable program 7021, the processor 701 implements:

taking the third object feature and its corresponding label as new samples; solving for the update components of the model parameters with respect to the new samples; extracting object features from the samples in the target library and computing, based on the model, the probability that each sample in the target library has each label; and selecting the label that satisfies a probability condition as the label of the sample in the target library.

In some embodiments, when running the executable program 7021, the processor 701 implements:

performing nearest-neighbor classification on the samples in the target library based on a similarity function that takes the third object feature as a factor, to obtain the labels of the samples in the target library.

In some embodiments, when running the executable program 7021, the processor 701 implements:

when the applied models include at least two types, determining an output for the samples in the target library based on each model; and comparing the outputs of the models and determining the labels of the samples in the target library according to the comparison result.

In some embodiments, when running the executable program 7021, the processor 701 implements:

when the outputs of the models are the same, selecting the output of any one model as the label of the sample in the target library; when the outputs of the models differ and do not include a specific label, selecting the output of any one model as the label of the sample in the target library; and when the outputs of the models differ and include a specific label, selecting the specific label as the label of the sample in the target library.

本发明实施例还提供了一种存储介质,所述存储介质可为光盘、闪存或磁盘等存储介质,可选为非瞬间存储介质。其中,所述存储介质上存储有可执行程序7021,所述可执行程序7021被处理器701执行时实现:An embodiment of the present invention further provides a storage medium, where the storage medium may be a storage medium such as an optical disc, a flash memory, or a magnetic disk, and may optionally be a non-transitory storage medium. Wherein, an

分别从源库中提取第一对象特征以及从目标库中提取第二对象特征,其中,所述第一对象特征和所述第二对象特征分别为各自所属数据库中样本集合;将所述第一对象特征和所述第二对象特征分别映射到同一特征空间,对应得到映射后的第一对象特征和映射后的第二对象特征;根据所述映射后的第一对象特征和所述映射后的第二对象特征,确定所述源库和所述目标库之间的差异化满足预设最小差异条件时的变换矩阵;通过所述变换矩阵将所述映射后的第一对象特征进行转换,得到第三对象特征;将所述第三对象特征以及对应的标签,应用于对所述目标库中样本进行分类的模型。Extracting the first object feature from the source library and extracting the second object feature from the target library respectively, wherein the first object feature and the second object feature are sample sets in the respective databases; The object feature and the second object feature are respectively mapped to the same feature space, and the mapped first object feature and the mapped second object feature are obtained correspondingly; according to the mapped first object feature and the mapped The second object feature is to determine the transformation matrix when the difference between the source library and the target library satisfies the preset minimum difference condition; the first object feature after mapping is converted by the transformation matrix, and the result is obtained The third object feature; the third object feature and the corresponding label are applied to the model for classifying the samples in the target library.

在一些实施例中,所述可执行程序7021被处理器701执行时实现:In some embodiments, when the

从所述源库中提取不同类型的对象特征,将提取的不同类型的对象特征组合后,作为所述源库的第一对象特征;从所述目标库中提取不同类型的对象特征,将提取的不同类型的对象特征组合后,作为所述目标库的第二对象特征。Extract different types of object features from the source library, and combine the extracted different types of object features as the first object features of the source library; extract different types of object features from the target library, and extract After combining the different types of object features, it is used as the second object feature of the target library.

在一些实施例中,所述可执行程序7021被处理器701执行时实现:In some embodiments, when the

将从所述源库中提取的第一对象特征、以及从所述目标库中提取的第二对象特征进行降维。Dimensionality reduction is performed on the first object feature extracted from the source library and the second object feature extracted from the target library.

在一些实施例中,所述可执行程序7021被处理器701执行时实现:In some embodiments, when the

将从所述源库中提取的第一对象特征、以及从所述目标库中提取的第二对象特征分别进行归一化处理;分别提取预设维数的特定对象特征,所述预设维数小于提取前对应的维数。The first object feature extracted from the source library and the second object feature extracted from the target library are respectively normalized; specific object features of preset dimensions are respectively extracted, and the preset dimension The number is less than the corresponding dimension before extraction.

在一些实施例中,所述可执行程序7021被处理器701执行时实现:In some embodiments, when the

基于以所述映射后的第一对象特征和所述映射后的第二对象特征为因子,构建表示所述源库和所述目标库之间距离的差异函数;以求解所述差异函数最小值的方式,确定所述源库和所述目标库之间的差异化满足预设最小差异条件时的变换矩阵。Based on the first object feature after mapping and the second object feature after mapping as factors, construct a difference function representing the distance between the source library and the target library; to solve the minimum value of the difference function way of determining the transformation matrix when the difference between the source library and the target library satisfies a preset minimum difference condition.

在一些实施例中,所述可执行程序7021被处理器701执行时实现:In some embodiments, when the

利用所述映射后的第一对象特征与所述变换矩阵的乘积,与所述映射后的第二对象特征的差值作为因子;构建基于所述因子计算范数的函数。Using the product of the mapped first object feature and the transformation matrix, and the difference between the mapped second object feature and the mapped second object feature as a factor; constructing a function for calculating a norm based on the factor.

在一些实施例中,所述可执行程序7021被处理器701执行时实现:In some embodiments, when the

以所述第三对象特征以及对应的标签为新的样本;求解模型参数相对所述新的样本的更新值分量;从所述目标库中样本提取对象特征,基于所述模型计算所述目标库中样本具有不同标签的概率值;选取符合概率条件的标签作为所述目标库中样本的标签。Taking the third object feature and the corresponding label as a new sample; solving the update value component of the model parameter relative to the new sample; extracting the object feature from the sample in the target library, and calculating the target library based on the model The probability value of the samples in the target library with different labels; select the labels that meet the probability conditions as the labels of the samples in the target library.

在一些实施例中,所述可执行程序7021被处理器701执行时实现:In some embodiments, when the

基于以所述第三对象特征为因子的相似性函数,对所述目标库中样本进行近邻分类,得到所述目标库中样本的标签。Based on the similarity function with the third object feature as a factor, the samples in the target library are classified as neighbors to obtain the labels of the samples in the target library.

在一些实施例中,所述可执行程序7021被处理器701执行时实现:In some embodiments, when the

当所应用的模型包括的类型为至少两个时,基于各模型分别确定针对所述目标库中样本的输出结果;将所述各模型的输出结果进行比较,根据比较结果确定所述目标库中样本的标签。When the applied models include at least two types, the output results for the samples in the target library are determined based on each model; the output results of the various models are compared, and the samples in the target library are determined according to the comparison results. Tag of.

在一些实施例中,所述可执行程序7021被处理器701执行时实现:In some embodiments, when the

When the output results of the models are the same, the output result of any one model is selected as the label of the sample in the target library; when the output results of the models differ and do not include a specific label, the output result of any one model is selected as the label of the sample in the target library; when the output results of the models differ and include the specific label, the specific label is selected as the label of the sample in the target library.
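The three comparison cases above can be sketched as a small decision function. The function name `fuse_labels`, the parameter names, and the choice of the first model's output wherever the text allows "any one model" are assumptions made for illustration.

```python
def fuse_labels(outputs, specific_label):
    """Combine per-model labels for one target-library sample.

    outputs: list with one predicted label per model (at least one entry).
    specific_label: the label that takes priority when the models disagree.
    """
    if not outputs:
        raise ValueError("at least one model output is required")
    # Case 1: all models agree, so any output will do.
    if len(set(outputs)) == 1:
        return outputs[0]
    # Case 3: the models disagree and one of them predicts the specific label.
    if specific_label in outputs:
        return specific_label
    # Case 2: the models disagree without the specific label; the text allows
    # any model's output, and this sketch arbitrarily takes the first.
    return outputs[0]


if __name__ == "__main__":
    print(fuse_labels(["a", "a"], "x"))  # models agree
    print(fuse_labels(["a", "b"], "x"))  # disagree, no specific label
    print(fuse_labels(["a", "x"], "x"))  # disagree, specific label present
```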

To sum up, in the model processing method, device, and storage medium provided by the embodiments of the present invention, a first object feature is extracted from the source library and a second object feature is extracted from the target library, where the first object feature and the second object feature are each the sample set of the database to which they belong. The first object feature and the second object feature are each mapped to the same feature space, yielding the mapped first object feature and the mapped second object feature. According to the mapped first object feature and the mapped second object feature, the transformation matrix is determined for which the difference between the source library and the target library satisfies the preset minimum difference condition. The mapped first object feature is converted through the transformation matrix to obtain a third object feature, and the third object feature and its corresponding label are applied to the model that classifies the samples in the target library. In this way, a model suited to the target library is trained from the source library, so that the model trained from the source library yields good recognition results when applied to the target library, which improves the accuracy of the model.

Those skilled in the art will appreciate that embodiments of the present invention may be provided as a method, a system, or an executable program product. Accordingly, the present invention may take the form of a hardware embodiment, a software embodiment, or an embodiment combining software and hardware aspects. Furthermore, the present invention may take the form of an executable program product implemented on one or more computer-usable storage media (including but not limited to disk storage and optical storage) that contain computer-usable program code.

The present invention is described with reference to flowcharts and/or block diagrams of methods, devices (systems), and executable program products according to embodiments of the present invention. It should be understood that each flow and/or block in the flowcharts and/or block diagrams, and combinations of flows and/or blocks in the flowcharts and/or block diagrams, can be implemented by executable program instructions. These executable program instructions may be provided to a processor of a general-purpose computer, a special-purpose computer, an embedded processor, or a reference programmable data processing device to produce a machine, such that the instructions executed by the processor of the computer or the reference programmable data processing device produce means for implementing the functions specified in one or more flows of the flowcharts and/or one or more blocks of the block diagrams.

These executable program instructions may also be stored in a computer-readable memory capable of directing a computer or a reference programmable data processing device to operate in a particular manner, such that the instructions stored in the computer-readable memory produce an article of manufacture including instruction means, the instruction means implementing the functions specified in one or more flows of the flowcharts and/or one or more blocks of the block diagrams.

These executable program instructions may also be loaded onto a computer or a reference programmable data processing device, such that a series of operational steps is performed on the computer or the reference programmable device to produce computer-implemented processing, whereby the instructions executed on the computer or the reference programmable device provide steps for implementing the functions specified in one or more flows of the flowcharts and/or one or more blocks of the block diagrams.

The above are merely preferred embodiments of the present invention and are not intended to limit its scope of protection. Any modification, equivalent replacement, or improvement made within the spirit and principles of the present invention shall fall within the scope of protection of the present invention.

Claims (22)

Priority Applications (1)

| Application Number | Priority Date | Filing Date | Title |
|---|---|---|---|
| CN201711475434.7A CN108229552B (en) | 2017-12-29 | 2017-12-29 | A model processing method, device and storage medium |

Applications Claiming Priority (1)

| Application Number | Priority Date | Filing Date | Title |
|---|---|---|---|
| CN201711475434.7A CN108229552B (en) | 2017-12-29 | 2017-12-29 | A model processing method, device and storage medium |

Publications (2)

| Publication Number | Publication Date |
|---|---|
| CN108229552A (en) | 2018-06-29 |
| CN108229552B (en) | 2021-07-09 |

Family

ID=62646962

Family Applications (1)

| Application Number | Priority Date | Filing Date | Title |
|---|---|---|---|
| CN201711475434.7A Active CN108229552B (en) | 2017-12-29 | 2017-12-29 | A model processing method, device and storage medium |

Country Status (1)

| Country | Link |
|---|---|
| CN (1) | CN108229552B (en) |

Families Citing this family (2)

| Publication number | Priority date | Publication date | Assignee | Title |
|---|---|---|---|---|
| CN109214421B (en) * | 2018-07-27 | 2022-01-28 | 创新先进技术有限公司 | Model training method and device and computer equipment |
| CN110097873B (en) * | 2019-05-14 | 2021-08-17 | 苏州沃柯雷克智能系统有限公司 | Method, device, equipment and storage medium for confirming mouth shape through sound |

Family Cites Families (14)

| Publication number | Priority date | Publication date | Assignee | Title |
|---|---|---|---|---|
| JPS62184586A (en) * | 1986-02-07 | 1987-08-12 | Matsushita Electric Ind Co Ltd | Character recognizing device |
| CN103093235B (en) * | 2012-12-30 | 2016-01-20 | 北京工业大学 | A kind of Handwritten Numeral Recognition Method based on improving distance core principle component analysis |
| CN104574638A (en) * | 2014-09-30 | 2015-04-29 | 上海层峰金融设备有限公司 | Method for identifying RMB |
| CN104616319B (en) * | 2015-01-28 | 2018-06-12 | 南京信息工程大学 | Multiple features selection method for tracking target based on support vector machines |
| CN104700089A (en) * | 2015-03-24 | 2015-06-10 | 江南大学 | Face identification method based on Gabor wavelet and SB2DLPP |
| CN104751191B (en) * | 2015-04-23 | 2017-11-03 | 重庆大学 | A kind of Hyperspectral Image Classification method of sparse adaptive semi-supervised multiple manifold study |
| CN105069447B (en) * | 2015-09-23 | 2018-05-29 | 河北工业大学 | A kind of recognition methods of human face expression |
| CN105139039B (en) * | 2015-09-29 | 2018-05-29 | 河北工业大学 | The recognition methods of the micro- expression of human face in video frequency sequence |
| CN105512331B (en) * | 2015-12-28 | 2019-03-26 | 海信集团有限公司 | A kind of video recommendation method and device |
| CN106548145A (en) * | 2016-10-31 | 2017-03-29 | 北京小米移动软件有限公司 | Image-recognizing method and device |
| CN106599854B (en) * | 2016-12-19 | 2020-03-27 | 河北工业大学 | Automatic facial expression recognition method based on multi-feature fusion |
| CN106803098A (en) * | 2016-12-28 | 2017-06-06 | 南京邮电大学 | A kind of three mode emotion identification methods based on voice, expression and attitude |
| CN107346434A (en) * | 2017-05-03 | 2017-11-14 | 上海大学 | A kind of plant pest detection method based on multiple features and SVMs |
| CN107292246A (en) * | 2017-06-05 | 2017-10-24 | 河海大学 | Infrared human body target identification method based on HOG PCA and transfer learning |

- 2017-12-29: CN application CN201711475434.7A (patent CN108229552B), status: Active

Also Published As

| Publication number | Publication date |

|---|---|

| CN108229552A (en) | 2018-06-29 |

Similar Documents

| Publication | Title | Publication Date |
|---|---|---|
| US12374087B2 (en) | Neural network training method, image classification system, and related device | |
| Johnston et al. | A review of image-based automatic facial landmark identification techniques | |
| US11138413B2 (en) | Fast, embedded, hybrid video face recognition system | |
| Wang et al. | Robust subspace clustering for multi-view data by exploiting correlation consensus | |
| CN109934173B (en) | Expression recognition method and device and electronic equipment | |
| US10395098B2 (en) | Method of extracting feature of image to recognize object | |
| US9323980B2 (en) | Pose-robust recognition | |
| EP3274921B1 (en) | Multi-layer skin detection and fused hand pose matching | |
| Rajan et al. | Facial expression recognition techniques: a comprehensive survey | |
| Wu et al. | Discriminative deep face shape model for facial point detection | |
| US10339369B2 (en) | Facial expression recognition using relations determined by class-to-class comparisons | |
| CN105488463B (en) | Lineal relative's relation recognition method and system based on face biological characteristic | |
| Rodriguez et al. | Finger spelling recognition from RGB-D information using kernel descriptor | |
| CN107463865A (en) | Face datection model training method, method for detecting human face and device | |
| Chang et al. | Intensity rank estimation of facial expressions based on a single image | |
| Mahdikhanlou et al. | Multimodal 3D American sign language recognition for static alphabet and numbers using hand joints and shape coding | |
| CN105550641A (en) | Age estimation method and system based on multi-scale linear differential textural features | |
| Otiniano-Rodríguez et al. | Finger Spelling Recognition from RGB-D Information Using Kernel Descriptor. | |
| Tran et al. | Disentangling geometry and appearance with regularised geometry-aware generative adversarial networks | |
| CN108229552B (en) | A model processing method, device and storage medium | |
| Zhang et al. | Convex hull-based distance metric learning for image classification | |
| Singh et al. | Genetic algorithm implementation to optimize the hybridization of feature extraction and metaheuristic classifiers | |
| Elsayed et al. | Hand gesture recognition based on dimensionality reduction of histogram of oriented gradients | |
| Mantecón et al. | Enhanced gesture-based human-computer interaction through a Compressive Sensing reduction scheme of very large and efficient depth feature descriptors | |
| Ding et al. | Learning representation for multi-view data analysis | |

Legal Events

| Date | Code | Title | Description |
|---|---|---|---|
| | PB01 | Publication | |
| | SE01 | Entry into force of request for substantive examination | |
| | GR01 | Patent grant | |