EP0140249B1 - Speech analysis/synthesis with energy normalization - Google Patents

Speech analysis/synthesis with energy normalization Download PDFInfo

- Publication number

- EP0140249B1 EP0140249B1 EP19840112266 EP84112266A EP0140249B1 EP 0140249 B1 EP0140249 B1 EP 0140249B1 EP 19840112266 EP19840112266 EP 19840112266 EP 84112266 A EP84112266 A EP 84112266A EP 0140249 B1 EP0140249 B1 EP 0140249B1

- Authority

- EP

- European Patent Office

- Prior art keywords

- frames

- frame

- energy

- speech

- parameters

- Prior art date

- Legal status (The legal status is an assumption and is not a legal conclusion. Google has not performed a legal analysis and makes no representation as to the accuracy of the status listed.)

- Expired

Links

- 238000010606 normalization Methods 0.000 title claims description 29

- 238000003786 synthesis reaction Methods 0.000 title description 12

- 230000015572 biosynthetic process Effects 0.000 title description 11

- 230000001629 suppression Effects 0.000 claims description 21

- 238000000034 method Methods 0.000 claims description 19

- 230000005284 excitation Effects 0.000 claims description 17

- 230000005405 multipole Effects 0.000 claims 1

- 230000006870 function Effects 0.000 description 7

- 230000004044 response Effects 0.000 description 5

- 230000003044 adaptive effect Effects 0.000 description 4

- 230000000630 rising effect Effects 0.000 description 4

- 230000000694 effects Effects 0.000 description 3

- 238000012545 processing Methods 0.000 description 3

- 210000000689 upper leg Anatomy 0.000 description 3

- 239000013598 vector Substances 0.000 description 3

- 238000010586 diagram Methods 0.000 description 2

- 238000005070 sampling Methods 0.000 description 2

- 238000012546 transfer Methods 0.000 description 2

- 230000001154 acute effect Effects 0.000 description 1

- 230000001668 ameliorated effect Effects 0.000 description 1

- 230000003139 buffering effect Effects 0.000 description 1

- 239000006227 byproduct Substances 0.000 description 1

- 238000006243 chemical reaction Methods 0.000 description 1

- 230000001419 dependent effect Effects 0.000 description 1

- 238000009795 derivation Methods 0.000 description 1

- 238000001514 detection method Methods 0.000 description 1

- 238000009472 formulation Methods 0.000 description 1

- 239000000203 mixture Substances 0.000 description 1

- 238000013139 quantization Methods 0.000 description 1

- 238000012552 review Methods 0.000 description 1

- 239000002699 waste material Substances 0.000 description 1

Images

Classifications

-

- G—PHYSICS

- G10—MUSICAL INSTRUMENTS; ACOUSTICS

- G10L—SPEECH ANALYSIS TECHNIQUES OR SPEECH SYNTHESIS; SPEECH RECOGNITION; SPEECH OR VOICE PROCESSING TECHNIQUES; SPEECH OR AUDIO CODING OR DECODING

- G10L25/00—Speech or voice analysis techniques not restricted to a single one of groups G10L15/00 - G10L21/00

- G10L25/78—Detection of presence or absence of voice signals

-

- G—PHYSICS

- G10—MUSICAL INSTRUMENTS; ACOUSTICS

- G10L—SPEECH ANALYSIS TECHNIQUES OR SPEECH SYNTHESIS; SPEECH RECOGNITION; SPEECH OR VOICE PROCESSING TECHNIQUES; SPEECH OR AUDIO CODING OR DECODING

- G10L21/00—Speech or voice signal processing techniques to produce another audible or non-audible signal, e.g. visual or tactile, in order to modify its quality or its intelligibility

- G10L21/02—Speech enhancement, e.g. noise reduction or echo cancellation

- G10L21/0316—Speech enhancement, e.g. noise reduction or echo cancellation by changing the amplitude

- G10L21/0364—Speech enhancement, e.g. noise reduction or echo cancellation by changing the amplitude for improving intelligibility

-

- G—PHYSICS

- G10—MUSICAL INSTRUMENTS; ACOUSTICS

- G10L—SPEECH ANALYSIS TECHNIQUES OR SPEECH SYNTHESIS; SPEECH RECOGNITION; SPEECH OR VOICE PROCESSING TECHNIQUES; SPEECH OR AUDIO CODING OR DECODING

- G10L25/00—Speech or voice analysis techniques not restricted to a single one of groups G10L15/00 - G10L21/00

- G10L25/78—Detection of presence or absence of voice signals

- G10L2025/783—Detection of presence or absence of voice signals based on threshold decision

- G10L2025/786—Adaptive threshold

-

- G—PHYSICS

- G10—MUSICAL INSTRUMENTS; ACOUSTICS

- G10L—SPEECH ANALYSIS TECHNIQUES OR SPEECH SYNTHESIS; SPEECH RECOGNITION; SPEECH OR VOICE PROCESSING TECHNIQUES; SPEECH OR AUDIO CODING OR DECODING

- G10L21/00—Speech or voice signal processing techniques to produce another audible or non-audible signal, e.g. visual or tactile, in order to modify its quality or its intelligibility

- G10L21/02—Speech enhancement, e.g. noise reduction or echo cancellation

- G10L21/0208—Noise filtering

- G10L21/0216—Noise filtering characterised by the method used for estimating noise

- G10L21/0232—Processing in the frequency domain

Definitions

- the present invention relates to voice coding systems and in particular to a voice mail system and a method of encoding speech as defined in the precharacterizing parts of claims 1, 5, 7 and 8.

- a speech encoding system of the type referred to in the preamble of claim 7 is disclosed in EP-A-47 589.

- voice coding systems including voice mail in microcomputer networks, voice mail sent and received over telephone lines by microcomputers, user-programmed synthetic speech, etc.

- the requirements of many of these applications are quite different from those of simple speech synthesis applications wherein synthetic speech can be carefully encoded and then stored in a ROM or on disk.

- high speed computers with elaborate algorithms, combined with hand tweaking can be used to optimize encoded speech for good intelligibility and low bit requirements.

- the speech encoding step does not have such large resources available. This is most obviously true in voice mail microcomputer networks, but it is also important in applications where a user may wish to generate his own reminder messages, diagnostic messages, signals during program operation, etc.

- a microcomputer system wherein the user could generate synthetic speech messages in his own software would be highly desirable, not only for the individual user but also for the software production houses which do not have trained speech scientists available.

- a particular problem in such application is energy variation. That is, not only will a speaker's voice intensity typically contain a large dynamic range related to sentence inflection, but different speakers will have different volume levels, and the same speaker's voice level may vary widely at different times. Untrained speakers are especially likely to use nonuniform uncontrolled variations in volume, which the listener normally ignores. This large dynamic range would mean that the voice coding method used must accommodate a wide dynamic range, and therefore an increased number of bits would be required for coding at reasonable resolution.

- Energy normalization also improves the intelligibility of the speech received. That is, the dynamic range available from audio amplifiers and loudspeakers is much less than that which can easily be perceived by the human ear. In fact, the dynamic range of loudspeakers is typically much less than that of microphones. This means that a dynamic range which is perfectly intelligible to a human listener may be hard to understand if communicated through a loudspeaker, even if absolutely perfect encoding and decoding is used.

- a further desideratum is that, in many attractive applications, the person listening to synthesized speech should not be required to twiddle a volume control frequently. Where a volume control is available, dynamic range can be analog- adjusted for each received synthetic speech signal, to shift the narrow window provided by the loudspeaker's narrow dynamic range, but this is obviously undesirable for voice mail systems and many other applications.

- analog automatic gain controls have been used to achieve energy normalization of raw signals.

- analog automatic gain controls distort the signal input to the analog to digital converter. That is, where (e.g.) reflection coefficients are used to encode speech data, use of an automatic gain control in the analog signal will introduce error into the calculated reflection coefficients. While it is hard to analyze the nature of this error, error is in fact introduced.

- use of an analog automatic gain control requires an analog part, and every introduction of special analog parts into a digital system greatly increases the cost of the digital system. If an AGC circuit having a fast response is used, the energy levels of consecutive allophones may be inappropriate.

- the sibilant /s/ will normally show a much lower energy than the vowel /I/. If a fast-response AGC circuit is used, the energy-normalized-word “six" is left with a sound extremely hissy, since the initial /s/ will be raised to the same energy as the /i/, inappropriately. Even if a slower-response AGC circuit is used, substantial problems still may exist, such as raising the noise floor up to signal levels during periods of silence, or inadequate limiting of a loud utterance following a silent period.

- a further general problem with energy normalization is caused by the existence of noise during silent periods. That is, if an energy normalization system brings the noise floor up towards the expected normal energy level during periods when no speech signal is present, the intelligibility of speech will be degraded and the speech will be unpleasant to listen to. In addition, substantial bandwidth will be wasted encoding noise signals during speech silence periods.

- the present invention solves the problems of energy normalization digitally, by using look-ahead energy normalization. That is, an adaptive energy normalization parameter is carried from frame to frame during a speech analysis portion of an analysis-synthesis system. Speech frames are buffered for a fairly long period, e.g. 1/2 second, and then are normalized according to the current energy normalization parameter. That is, energy normalization is "look ahead" normalization in that each frame of speech (e.g. each 20 millisecond interval of speech) is normalized according to the energy normalization value from much later, e.g. from 25 frames later. The energy normalization value is calculated for the frames as received by using a fast-rising slow-falling peak-tracking value.

- a novel silence suppression scheme is used.

- Silence suppression is achieved by tracking 2 additional energy contours.

- One contour is a slow-rising fast-falling value, which is updated only during unvoiced speech frames, and therefore tracks a lower envelope of the energy contour. (This in effect tracks the ambient noise level).

- the other parameter is a fast-rising slow-falling parameter, which is updated only during voiced speech frames, and thus tracks an upper envelope of the energy contour. (This in effect tracks the average speech level).

- a threshold value is calculated as the maximum of respective multiples of these 2 parameters, e.g. the greater of: (5 times the lower envelope parameter), and (one fifth of the upper envelope parameter).

- Speech is not considered to have begun unless a first frame which both has an energy above the threshold level and is also voiced in detected.

- the system then backtracks among the buffered frames to include as "speech" all immediately preceding frames which also have energy greater than the threshold. That is, after a period during which the frames of parameters received have been identified as silent frames, all succeeding frames are tentively identified as silent frames, until a super-threshold-energy voiced frame is found.

- the silence suppression system backtracks among frames immediately preceding this super-threshold energy voiced frame until a broken string subthreshold-energy frames at least to 0.4 seconds long is found. When such a 0.4 second interval of silence is found, backtracking ceases, and only those frames after the 0.4 seconds of silence and before the first voiced super-threshold energy frame are identified as non- silent frames.

- a waiting counter is started. If the waiting reaches an upper limit (e.g. 0.4 seconds), without the energy again increasing above T, the utterance is considered to have stopped.

- an upper limit e.g. 0.4 seconds

- these objects are achieved by means for normalising the energy parameter of each said speech frame, wherein said energy parameter of each frame is normalised primarily with respect to an energy parameter of a subsequent frame occurring at least 0.1 seconds after said each frame.

- the method of encoding speech as defined in the precharacterising part of claim 5 is characterised in that the energy parameters of each of said speech frames is normalised with respect to an energy parameter of a subsequent frame occurring later than each said respective frame by at least 0.1 seconds, with normalisation being done prior to encoding said speech parameters into the data channel.

- the speech encoding system as defined in the precharacterising part of claim 7 is characterised by means for normalising the energy parameter of each said speech frame with respect to the energy parameter of a subsequent frame occurring at least 0.1 seconds after said each frame, and wherein said silence suppression means identifies each said frame as silent or non-silent by comparing the energy parameter of each successive one of said frames against a function of first and second adaptively updated threshold values, said first adaptively updated threshold value corresponding to a multiple of an upper envelope of said successive energy parameters of successive ones of said frames and said second threshold value corresponding to a multiple of a lower envelope of said successive values of said frames.

- the voice mail system as defined in the precharacterising part of claim 8 is characterised by means for normalising the energy parameter of each said speech frame with respect to the energy parameter of a subsequent frame occurring at least 0.1 seconds after said each frame, and wherein said silence suppression means identifies each said frame as silent or non-silent by comparing the energy parameter of each successive one of said frames against a function of first and second adaptively updated threshold values, said first adaptively updated threshold value corresponding to a multiple of an upper envelope of said successive energy parameters of successive ones of said frames and said second threshold value corresponding to a multiple of a lower envelope of said successive values of said frames.

- the present invention provides a novel speech analysis/synthesis system, which can be configured in a wide variety of embodiments.

- the presently preferred embodiment uses a VAX 11/780 computer, coupled with a Digital Sound Corporation Model 200 A/D and D/A converter to provided high-resolution high-bitrate digitizing and to provide speech synthesis.

- a conventional microphone and loudspeaker, with an analog amplifier such as a Digital Sound Corporation Model 240 are also used in conjunction with the system.

- the present invention contains novel teachings which are also particularly applicable to microcomputer-based systems. That is, the high resolution provided by the above digitizer is not necessary, and the computing power available on the VAX is also not necessary. In particular, it is expected that a highly attractive embodiment of the present invention will use a TI Professional Computer (TM), using the built in low-quality speaker and an attached microphone as discussed below.

- TM TI Professional Computer

- the system configuration of the presently preferred embodiment is shown schematically in Figure 5. That is, a raw voice input is received by microphone, amplified by microphone amplifier, and digitized by D/A converter.

- the D/A converter used in the presently presently embodiment, as noted, is an expensive high-resolution instrument, which provides 16 bits of resolution at a sample rate of 8 kHz.

- the data received at this high sample rate will be transformed to provide speech parameters at a desired frame rate.

- the frame rate is 50 frames per second, but the frame period can easily range between 10 milliseconds and 30 milliseconds, or over an even wider range.

- linear predictive coding based analysis is used to encode the speech. That is, the successive samples (at the original high bit rate, of, in this example, 8000 per second) are used as inputs to derive a set of linear predictive coding parameters, for example 10 reflection coefficants k,-k, o plus pitch and energy, as described below.

- the audible speech is first translated into a meaningful input for the system.

- a microphone within range of the audible speech is connected to a microphone preamplifier and to an analog-to- digital converter.

- the input stream is sampled 8000 times per second, to an accuracy of 16 bits.

- the stream of input data is then arbitrarily divided up into successive "frames", and, in the presently preferred embodiment, each frame is defined to include 160 samples. That is, the interval between frames is 20 msec, but the LPC parameters of each frame are calculated over a range of 240 samples (30 msec).

- the sequence of samples in each speech input frame is first transformed into a set of inverse filter coefficients a k , as conventionally defined.

- a k inverse filter coefficients

- the a k 's are the predictor coefficients with which a signal S k in a time series can be modeled as the sum of an input Uk and a linear combination of past values S k - n in the series. That is:

- Each input frame contains a large number of sampling points, and the sampling points within any one input frame can themselves be considered as a time series.

- the actual derivation of the filter coefficients a k for the sample frame is as follows: First, the time- series autocorrelation values R, are computed as where the summation is taken over the range of samples within the input frame. In this embodiment, 11 autocorrelation values are calculated (R o R, o ). A recursive procedure is now used to derive the inverse filter coefficients as follows: for

- the presently preferred embodiment uses a procedure due to Leroux-Gueguen.

- the normalized error energy E i.e. the self-residual energy of the input frame

- the Leroux-Gueguen algorithm also produces the reflection coefficients (also referred to as partial correlation coefficients) k i .

- the reflection co- efficients k r are very stable parameters, and are insensitive to coding errors (quantization noise).

- the Leroux-Gueguen procedure is set forth, for example, in IEEE Transactions on Acoustic Speech and Signal Processing, page 257 (June 1977). This. algorithm is a recursive procedure, defined as follows:

- This algorithm computres the reflection co- efficients k, using as intermediaries impulse response estimates e k rather then the filter co- efficients a k .

- Linear predictive coding models generally are well known in the art, and can be found extensively discussed in such references as Rabiner and Schafer, Digital Processing of speech Signals (1978), Markel and Gray, Linear Predictive Coding of Speech (1976).

- the excitation coding transmitted need not be merely energy and pitch, but may also contain . some additional information regarding a residual signal. For example, it would be possible to encode a bandwidth of the residual signal which was an integral multiple of the pitch, and approximately equal to 1000 Hz, as an excitation signal. Many other well-known variations of encoding the excitation information can also be used alternatively.

- the LPC parameters can be encoded in various ways. For example, as is also well known in the art, there are numerous equivalent formulations of linear predictive co- efficients.

- LPC filter coefficients a k can be expressed as the LPC filter coefficients a k , or as the reflection co- efficients k, or as the autocorrelations R, or as other parameter sets such as the impulse response estimates parameters E(i) which are provided by the LeRoux-Guegen procedure.

- the LPC model order is not necessarily 10, but can be 8, 12, 14, or other.

- the present invention does not necessarily have to be used in combination with an LPC speech encoding model at all. That is, the present invention provides an energy normalization method which digitally modifies only the energy of each of a sequence of speech frames, with regard to only the energy and voicing of each of a sequence of speech frames.

- the present invention is applicable to energy normalization of the systems using any one of a great variety of speech encoding methods, including transform techniques, formant encoding techniques, etc.

- the present invention operates on the energy value of the data vectors.

- the encoded parameters are the reflection coefficients k 1 -k 1a , the energy, and pitch.

- ENORM is subsequently updated, for each successive frame, as follows:

- ENORM (i) is set equal to alpha times E(i)+(1-alpha) times ENORM(i-1);

- ENORM(i) is set equal to beta times E(i)+(1-beta) times ENORM(i-1), where alpha is given a value close to 1 to provide a fast rising time constant (preferably about 0.1 seconds), and Beta has given a value close to 0, to provide a slow falling time constant (preferably in the neighborhood of 4 seconds).

- the adaptive parameter ENORM provides an envelope tracking measure which tracks the peak energy of the sequence of frames I.

- This adaptive peak-tracking parameter ENORM(i) is used to normalize the energy of the frames, but this not done directly.

- the energy of each frame I is normalized by dividing it by a look ahead normalized energy ENORM * (i), where ENORM * (i) is defined to be equal to ENORM(i+d), where d represents a number of frames of delay which is typically chosen to be equivalent to 1/2 second (but must be at least 0.1 seconds.

- ENORM * (i) is defined to be equal to ENORM(i+d)

- d represents a number of frames of delay which is typically chosen to be equivalent to 1/2 second (but must be at least 0.1 seconds.

- E * (i) is set equal to E(i)/ENORM * (i). This is accomplished by buffering a number of speech frames equal to the delay d, so that the value of ENORM for the last frame loaded into the buffer provides the value of ENORM * for the oldest frame in the buffer, i.e. for the frame currently being taken out of the buffer.

- the falling time constant (corresponding to the parameter beta) is so long, energy normalization at the end of a word will not be distorted by the approximately zero-energy value of the following frames of silence.

- the silence suppression will prevent ENORM from falling very far in this situation. That is, for a final unvoiced consonant, the long time constant corresponding to beta will mean that the energy normalization value ENORM of the silient frames 1/2 second after the end of a word will be still be dominated by the voiced phonemes immediately preceding the final unvoiced consonant.

- the final unvoiced constant will be normalized with respect to preceding voiced frames, and its energy also will not be unduly raised.

- the foregoing steps provide a normalized energy E * (i) for each speech frame i.

- a further novel step is used to suppress silent periods.

- silence detection is used to selectively prevent certain frames from being encoded. Those frames which are encoded are encoded with a normalized energy E * (i), together with the remaining speech parameters in the chosen model (which in the presently preferred embodiment are the pitch P and the reflection coefficients k l -k lo ).

- Silence suppression is accomplished in a further novel aspect of the present invention, by carrying 2 envelope parameters: ELOW and EHIGH. Both of these parameters are started from some initial value (e.g. 100) and then are updated depending on the energy E(i) of each frame i and on the voiced or unvoiced status of that frame. If the frame is unvoiced, then only the lower parameter ELOW is updated as follows:

- the 2 envelope parameters ELOW and EHIGH are then used to generate 2 threshold parameters TLOW and THIGH, defined as:

- the current frame is a silient frame

- all following frames will be tentatively assumed to be silent unless a voiced super-threshold-energy (and therefore nonsilent) frame is deteched.

- the frames tentatively assumed to be silent will be stored in a buffer (preferable containing at least one second of data), since they may be identified later as not silent.

- a speech frame is detected only when some frame is found which has a frame energy E(i) greater than the threshold T and which "is voiced. That is, an unvoiced super-threshold-energy frame is not by itself enough to cause a decision that speech has begun.

- the unvoiced super-threshold-energy frames in the constant /s/ would not immediately trigger a decision that a speech signal had begun, but, when the voiced super-threshold-energy frames in the /i/ are detected, the immediately preceding frames are reexamined, and the frames corresponding to t /s/ which have energy greater than T are then also designated as "speech" frames.

- the end of the word i.e. the beginning of "silent" frames which need not be encoded

- a voiced frame is found which has its energy E(i) less than T

- a waiting counter is started. If the waiting reaches an upper limit (e.g. 0.4 seconds) without the energy ever rising above T, then speech is determined to have stopped, and frames after the last frame which had energy E(i) greater than T are considered to be silent frames. These frames are therefore not encoded.

- the energy normalization and silence suppression features of the system of the present invention are both dependent in important ways on the voicing decision. It is preferable, although not strictly necessary, that the voicing decision be made by means of a dynamic programming procedure which makes pitch and voicing decision simultaneously, using an interrelated distance measure.

- the actual encoding can now be performed with a minimum bit rate.

- 5 bits are used to encode the energy of each frame, 3 bits are used for each of the ten reflection coefficients, and 5 bits are used for the pitch.

- this bit rate can be further compressed by one of the many variations of delta coding, e.g. by fitting a polynomial to the sequence of parameter values across successive frames and then encoding merely the coefficients of that polynomial, by simple linear delta coding, or by any of the various well known methods.

- an analysis system as described above is combined with speech synthesis capability, to provide a voice mail station, or a station capable of generating used- generated spoken reminder messages.

- the encoded output of the analysis section, as described above is connected to a data channel of some sort.

- This may be a wire to which an RS 232 UART chip is connected, or may be a telephone line accessed by a modem, or may be simply a local data buss which is also connected to a memory board or memory chips, or may of course be any of a tremendous variety of other data channels.

- connection to any of these normal data channels is easily and conveniently made two way, so that data may be received from a communications channel or recalled from memory. Such data received from the channel will thus contain a plurality of speech parameters, including an energy value.

- the encoded data received from the data channel will contain LPC filter parameters for each speech frame, as well as some excitation information.

- the data vector for each speech frame contains 10 reflection coefficients as well as pitch and energy. The reflection co-efficients configure a tense-order lattice filter, and an excitation signal is generated from the excitation parameters and provided as input to this lattice filter.

- the excitation parameters are pitch and energy

- a pulse at intervals equal to the pitch period, is provided as the excitation function during voiced frames (i.e.

- the energy parameter can be used to define the power provided in the excitation function.

- the output of the lattice filter provides the LPC-modeled synthetic signal, which will typically be of good intelligible quality, although not absolutely transparent. This output is then digital-to-analog converted, and the analogue output of the d-a converter is provided to an audio amplifier, which drives a loudspeaker or headphones.

- such a voice mail system is configured in a microcomputer-based system.

- This configuration uses a 8088-based system, together with a special board having a TMS 320 numeric processure chip mounted thereon.

- the fast multiple provided by the TMS 320 is very convenient in performing signal processing functions.

- a pair of audio amplifiers for input and output is also provided on the speech board, as is an 8 bit mu-law codec.

- the function of this embodiment is essentially identical to that of the VAX embodiment described above, except for a slight difference regarding the converters.

- the 8 bit codec performs mu-law conversion, which is non linear but provides enhanced dynamic range.

- a lookup table is used to transform the 8 bit mu-law output provided from the codec chip into a 13 bit linear output.

- This microcomputer embodiment also includes an internal speaker, and a microphone jack.

- a further preferred realization is the use of multiple micro-computer based voice mail stations, as described above, to configure a microcomputer-based voice mail system.

- microcomputers are conventionally connected in a local area network, using one of the many conventional LAN protoacalls, or are connected using PBX tilids.

- the only slightly distinctive feature of this voice mail system embodiment is that the transfer mechanism used must be able to pass binary data, and not merely ASCII data.

- the voice mail operation is simply a straight forward file transfer, wherein a file representing encoded speech data is generated by an analysis operation at one station, is transferrd as a file to another station, and then is converted to analog speech data by a synthesis operation at the second station.

- the crucial changes taught by the present invention are changes in the analysis portion of an analysis/synthesis system, but these changes affect the system as a whole. That is, the system as a whole will achieve higher throughput of intelligible speech information per transmitted bit, better perceptual quality of synthesized sound at the synthesis section, and other system-level advantages.

- microcomputer network voice mail systems perform better with minimized channel loading according to the present invention.

- the present invention provides the objects described above, of energy normalization and of silent suppression, as well as other objects, advantageously.

Landscapes

- Engineering & Computer Science (AREA)

- Computational Linguistics (AREA)

- Signal Processing (AREA)

- Health & Medical Sciences (AREA)

- Audiology, Speech & Language Pathology (AREA)

- Human Computer Interaction (AREA)

- Physics & Mathematics (AREA)

- Acoustics & Sound (AREA)

- Multimedia (AREA)

- Quality & Reliability (AREA)

- Compression, Expansion, Code Conversion, And Decoders (AREA)

Description

- The present invention relates to voice coding systems and in particular to a voice mail system and a method of encoding speech as defined in the precharacterizing parts of

claims 1, 5, 7 and 8. - A speech encoding system of the type referred to in the preamble of claim 7 is disclosed in EP-A-47 589.

- A very large range of applications exists for voice coding systems, including voice mail in microcomputer networks, voice mail sent and received over telephone lines by microcomputers, user-programmed synthetic speech, etc.

- In particular, the requirements of many of these applications are quite different from those of simple speech synthesis applications wherein synthetic speech can be carefully encoded and then stored in a ROM or on disk. In such applications, high speed computers with elaborate algorithms, combined with hand tweaking, can be used to optimize encoded speech for good intelligibility and low bit requirements. However, in many other requirements, the speech encoding step does not have such large resources available. This is most obviously true in voice mail microcomputer networks, but it is also important in applications where a user may wish to generate his own reminder messages, diagnostic messages, signals during program operation, etc. For example, a microcomputer system wherein the user could generate synthetic speech messages in his own software would be highly desirable, not only for the individual user but also for the software production houses which do not have trained speech scientists available.

- A particular problem in such application is energy variation. That is, not only will a speaker's voice intensity typically contain a large dynamic range related to sentence inflection, but different speakers will have different volume levels, and the same speaker's voice level may vary widely at different times. Untrained speakers are especially likely to use nonuniform uncontrolled variations in volume, which the listener normally ignores. This large dynamic range would mean that the voice coding method used must accommodate a wide dynamic range, and therefore an increased number of bits would be required for coding at reasonable resolution.

- However, if energy normalization can be used (i.e. all speech adjusted to approximately a constant energy level) these problems are ameliorated.

- Energy normalization also improves the intelligibility of the speech received. That is, the dynamic range available from audio amplifiers and loudspeakers is much less than that which can easily be perceived by the human ear. In fact, the dynamic range of loudspeakers is typically much less than that of microphones. This means that a dynamic range which is perfectly intelligible to a human listener may be hard to understand if communicated through a loudspeaker, even if absolutely perfect encoding and decoding is used.

- The problems of intelligibility is particularly acute with audio amplifiers and loudspeakers which are not of extremely high fidelity. However, compact low-fidelity loudspeakers must be used in most of the most attractive applications for voice analysis/synthesis, for reasons of compactness, ruggedness, and economy.

- A further desideratum is that, in many attractive applications, the person listening to synthesized speech should not be required to twiddle a volume control frequently. Where a volume control is available, dynamic range can be analog- adjusted for each received synthetic speech signal, to shift the narrow window provided by the loudspeaker's narrow dynamic range, but this is obviously undesirable for voice mail systems and many other applications.

- In the prior art, analog automatic gain controls have been used to achieve energy normalization of raw signals. However, analog automatic gain controls distort the signal input to the analog to digital converter. That is, where (e.g.) reflection coefficients are used to encode speech data, use of an automatic gain control in the analog signal will introduce error into the calculated reflection coefficients. While it is hard to analyze the nature of this error, error is in fact introduced. Moreover, use of an analog automatic gain control requires an analog part, and every introduction of special analog parts into a digital system greatly increases the cost of the digital system. If an AGC circuit having a fast response is used, the energy levels of consecutive allophones may be inappropriate. For example, in the word "six" the sibilant /s/ will normally show a much lower energy than the vowel /I/. If a fast-response AGC circuit is used, the energy-normalized-word "six" is left with a sound extremely hissy, since the initial /s/ will be raised to the same energy as the /i/, inappropriately. Even if a slower-response AGC circuit is used, substantial problems still may exist, such as raising the noise floor up to signal levels during periods of silence, or inadequate limiting of a loud utterance following a silent period.

- Thus, it is an object of the present invention to provide a digital system which can perform energy normalization of voice signals.

- It is a further object of the present invention to provide a method for energy normalization of voice signals which will not overemphasize initial constants.

- It is a further object of the present invention to provide a method for energy normalization of voice signals which can rapidly respond to energy variations in a speaker's utterance, without excessively distorting the relative energy levels of adjacent allophones with an utterance.

- A further general problem with energy normalization is caused by the existence of noise during silent periods. That is, if an energy normalization system brings the noise floor up towards the expected normal energy level during periods when no speech signal is present, the intelligibility of speech will be degraded and the speech will be unpleasant to listen to. In addition, substantial bandwidth will be wasted encoding noise signals during speech silence periods.

- It is a further object of the present invention to provide a voice coding system which will not waste bandwidth on encoding noise during silent periods.

- The present invention solves the problems of energy normalization digitally, by using look-ahead energy normalization. That is, an adaptive energy normalization parameter is carried from frame to frame during a speech analysis portion of an analysis-synthesis system. Speech frames are buffered for a fairly long period, e.g. 1/2 second, and then are normalized according to the current energy normalization parameter. That is, energy normalization is "look ahead" normalization in that each frame of speech (e.g. each 20 millisecond interval of speech) is normalized according to the energy normalization value from much later, e.g. from 25 frames later. The energy normalization value is calculated for the frames as received by using a fast-rising slow-falling peak-tracking value.

- In a further aspect of the present invention, a novel silence suppression scheme is used. Silence suppression is achieved by tracking 2 additional energy contours. One contour is a slow-rising fast-falling value, which is updated only during unvoiced speech frames, and therefore tracks a lower envelope of the energy contour. (This in effect tracks the ambient noise level). The other parameter is a fast-rising slow-falling parameter, which is updated only during voiced speech frames, and thus tracks an upper envelope of the energy contour. (This in effect tracks the average speech level). A threshold value is calculated as the maximum of respective multiples of these 2 parameters, e.g. the greater of: (5 times the lower envelope parameter), and (one fifth of the upper envelope parameter). Speech is not considered to have begun unless a first frame which both has an energy above the threshold level and is also voiced in detected. In that case, the system then backtracks among the buffered frames to include as "speech" all immediately preceding frames which also have energy greater than the threshold. That is, after a period during which the frames of parameters received have been identified as silent frames, all succeeding frames are tentively identified as silent frames, until a super-threshold-energy voiced frame is found. At that point, the silence suppression system backtracks among frames immediately preceding this super-threshold energy voiced frame until a broken string subthreshold-energy frames at least to 0.4 seconds long is found. When such a 0.4 second interval of silence is found, backtracking ceases, and only those frames after the 0.4 seconds of silence and before the first voiced super-threshold energy frame are identified as non- silent frames.

- At the end of speech, when a voice frame is detected having an energy below the threshold T, a waiting counter is started. If the waiting reaches an upper limit (e.g. 0.4 seconds), without the energy again increasing above T, the utterance is considered to have stopped. The significance of the speech/silence decision is that bits are not wasted on encoding silent frames, energy tracking is not distorted by the presence of silent frames as discussed above, and long utterances can be input from an untrained speakers, who are likely to leave very long silences between consecutive words in a sentence.

- In a voice mail system according to the precharacterising part of claim 1 these objects are achieved by means for normalising the energy parameter of each said speech frame, wherein said energy parameter of each frame is normalised primarily with respect to an energy parameter of a subsequent frame occurring at least 0.1 seconds after said each frame.

- According to the invention the method of encoding speech as defined in the precharacterising part of

claim 5 is characterised in that the energy parameters of each of said speech frames is normalised with respect to an energy parameter of a subsequent frame occurring later than each said respective frame by at least 0.1 seconds, with normalisation being done prior to encoding said speech parameters into the data channel. - The speech encoding system as defined in the precharacterising part of claim 7 is characterised by means for normalising the energy parameter of each said speech frame with respect to the energy parameter of a subsequent frame occurring at least 0.1 seconds after said each frame, and wherein said silence suppression means identifies each said frame as silent or non-silent by comparing the energy parameter of each successive one of said frames against a function of first and second adaptively updated threshold values, said first adaptively updated threshold value corresponding to a multiple of an upper envelope of said successive energy parameters of successive ones of said frames and said second threshold value corresponding to a multiple of a lower envelope of said successive values of said frames.

- The voice mail system as defined in the precharacterising part of claim 8 is characterised by means for normalising the energy parameter of each said speech frame with respect to the energy parameter of a subsequent frame occurring at least 0.1 seconds after said each frame, and wherein said silence suppression means identifies each said frame as silent or non-silent by comparing the energy parameter of each successive one of said frames against a function of first and second adaptively updated threshold values, said first adaptively updated threshold value corresponding to a multiple of an upper envelope of said successive energy parameters of successive ones of said frames and said second threshold value corresponding to a multiple of a lower envelope of said successive values of said frames.

- The present invention will be described with reference to the accompanying drawings, which are hereby incorporated by reference and attested to by the attached Declaration, wherein:

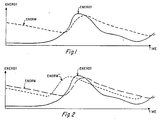

- Figure 1 shows one aspect of the present invention, wherein an adaptively normalized energy level ENORM is derived from the successive energy levels of a sequence of speech frames;

- Figure 2 shows a further aspect of the present invention, wherein a look-ahead energy normalization curve ENORM* is used for normalization;

- Figure 3 shows a further aspect of the present invention, used in silence suppression, wherein high and low envelope curves are continuously maintained for the energy values of a sequence of speech input frames;

- Figure 4 shows a further aspect of the invention, wherein the EHIGH and ELOW curves of Figure 3 are used to derive a threshold curve T; and

- Figure 5 shows a sample system configuration for practicing the present invention.

- The present invention provides a novel speech analysis/synthesis system, which can be configured in a wide variety of embodiments. However, the presently preferred embodiment uses a VAX 11/780 computer, coupled with a Digital Sound Corporation Model 200 A/D and D/A converter to provided high-resolution high-bitrate digitizing and to provide speech synthesis. Naturally, a conventional microphone and loudspeaker, with an analog amplifier such as a Digital Sound Corporation Model 240, are also used in conjunction with the system.

- However, the present invention contains novel teachings which are also particularly applicable to microcomputer-based systems. That is, the high resolution provided by the above digitizer is not necessary, and the computing power available on the VAX is also not necessary. In particular, it is expected that a highly attractive embodiment of the present invention will use a TI Professional Computer (TM), using the built in low-quality speaker and an attached microphone as discussed below.

- The system configuration of the presently preferred embodiment is shown schematically in Figure 5. That is, a raw voice input is received by microphone, amplified by microphone amplifier, and digitized by D/A converter. The D/A converter used in the presently presently embodiment, as noted, is an expensive high-resolution instrument, which provides 16 bits of resolution at a sample rate of 8 kHz. The data received at this high sample rate will be transformed to provide speech parameters at a desired frame rate. In the presently preferred embodiment the frame rate is 50 frames per second, but the frame period can easily range between 10 milliseconds and 30 milliseconds, or over an even wider range.

- In the presently preferred embodiment, linear predictive coding based analysis is used to encode the speech. That is, the successive samples (at the original high bit rate, of, in this example, 8000 per second) are used as inputs to derive a set of linear predictive coding parameters, for example 10 reflection coefficants k,-k,o plus pitch and energy, as described below.

- In practicing the present invention, the audible speech is first translated into a meaningful input for the system. For example, a microphone within range of the audible speech is connected to a microphone preamplifier and to an analog-to- digital converter. In the presently preferred embodiment, the input stream is sampled 8000 times per second, to an accuracy of 16 bits. The stream of input data is then arbitrarily divided up into successive "frames", and, in the presently preferred embodiment, each frame is defined to include 160 samples. That is, the interval between frames is 20 msec, but the LPC parameters of each frame are calculated over a range of 240 samples (30 msec).

- In one embodiment, the sequence of samples in each speech input frame is first transformed into a set of inverse filter coefficients ak, as conventionally defined. See, e.g., Makhoul, "Linear Prediction: A Tutorial Review", proceedings of the IEEE, Volume 63, page 561 (1975). That is, in the linear prediction model, the ak's are the predictor coefficients with which a signal Sk in a time series can be modeled as the sum of an input Uk and a linear combination of past values Sk-n in the series. That is:

- Each input frame contains a large number of sampling points, and the sampling points within any one input frame can themselves be considered as a time series. In one embodiment, the actual derivation of the filter coefficients ak for the sample frame is as follows: First, the time- series autocorrelation values R, are computed as

- These equations are solved recursively for: i=1, 2, ..., up to the model order p (p=10 in this case). The last iteration gives the final ak values.

- The foregoing has described an embodiment using Durbin's recursive procedure to calculate the ak's for the sample frame. However, the presently preferred embodiment uses a procedure due to Leroux-Gueguen. In this procedure, the normalized error energy E (i.e. the self-residual energy of the input frame) is produced as a direct byproduct of the algorithm. The Leroux-Gueguen algorithm also produces the reflection coefficients (also referred to as partial correlation coefficients) ki. The reflection co- efficients kr are very stable parameters, and are insensitive to coding errors (quantization noise).

-

- This algorithm computres the reflection co- efficients k, using as intermediaries impulse response estimates ek rather then the filter co- efficients ak.

- Linear predictive coding models generally are well known in the art, and can be found extensively discussed in such references as Rabiner and Schafer, Digital Processing of speech Signals (1978), Markel and Gray, Linear Predictive Coding of Speech (1976). It should be noted that the excitation coding transmitted need not be merely energy and pitch, but may also contain . some additional information regarding a residual signal. For example, it would be possible to encode a bandwidth of the residual signal which was an integral multiple of the pitch, and approximately equal to 1000 Hz, as an excitation signal. Many other well-known variations of encoding the excitation information can also be used alternatively. Similarly, the LPC parameters can be encoded in various ways. For example, as is also well known in the art, there are numerous equivalent formulations of linear predictive co- efficients. These can be expressed as the LPC filter coefficients ak, or as the reflection co- efficients k,, or as the autocorrelations R,, or as other parameter sets such as the impulse response estimates parameters E(i) which are provided by the LeRoux-Guegen procedure. Moreover, the LPC model order is not necessarily 10, but can be 8, 12, 14, or other.

- Moreover, it should be noted that the present invention does not necessarily have to be used in combination with an LPC speech encoding model at all. That is, the present invention provides an energy normalization method which digitally modifies only the energy of each of a sequence of speech frames, with regard to only the energy and voicing of each of a sequence of speech frames. Thus, the present invention is applicable to energy normalization of the systems using any one of a great variety of speech encoding methods, including transform techniques, formant encoding techniques, etc.

- Thus, after the input samples have been converted to a sequence of speech frames each having a data vector including an energy value, the present invention operates on the energy value of the data vectors. In the presently preferred embodiment, the encoded parameters are the reflection coefficients k1-k1a, the energy, and pitch. (The pitch parameter includes the voicing decision, since an unvoiced frame is encoded as pitch=zero).

- The novel operations in the system of the present invention begin at this point. That is, a sequence of encoded frames, each including an energy parameter and modeling parameters, is provided as the raw output of the speech analysis section. Note that, at this stage, the resolution of the energy parameter coding is much higher than it will be in the encoded information which is actually transmitted over the communications or storage channel 40. The way in which the present invention performs energy normalization on successive frames, and suppresses coding of silient frames, may be seen with regard to the energy diagrams of Figures 1-4. These show examples of the energy values E(i) seen in successive frames i within a sequence of frames, as received as raw output in the speech analysis section.

- An adaptive parameter ENORM(i) is then generated, approximately as shown in Figure 1. That is, ENORM(0) is an initial choice for that parameter, e.g. ENORM(0)=100. ENORM is subsequently updated, for each successive frame, as follows:

- If E(i) is greater than ENORM(i-1), then ENORM (i) is set equal to alpha times E(i)+(1-alpha) times ENORM(i-1);

- Otherwise, ENORM(i) is set equal to beta times E(i)+(1-beta) times ENORM(i-1), where alpha is given a value close to 1 to provide a fast rising time constant (preferably about 0.1 seconds), and Beta has given a value close to 0, to provide a slow falling time constant (preferably in the neighborhood of 4 seconds).

- It should be noted that in the software attached as appendix A, which is hereby incorporated by reference, the parameter alpha is stated as "alpha-up", and the parameter beta is stated as "alpha-down". Thus, the adaptive parameter ENORM provides an envelope tracking measure which tracks the peak energy of the sequence of frames I.

- This adaptive peak-tracking parameter ENORM(i) is used to normalize the energy of the frames, but this not done directly. The energy of each frame I is normalized by dividing it by a look ahead normalized energy ENORM*(i), where ENORM*(i) is defined to be equal to ENORM(i+d), where d represents a number of frames of delay which is typically chosen to be equivalent to 1/2 second (but must be at least 0.1 seconds. Thus, the energy E(i) of each frame is normalized by dividing by the normalized energy ENORM*(i):

- E*(i) is set equal to E(i)/ENORM*(i). This is accomplished by buffering a number of speech frames equal to the delay d, so that the value of ENORM for the last frame loaded into the buffer provides the value of ENORM* for the oldest frame in the buffer, i.e. for the frame currently being taken out of the buffer.

- The introduction of this delay in the energy normalization means that the energy of initial low-energy periods will be normalized with respect to the energy of immediately following high-energy periods, so that the relative energy of initial consonants will not be distorted. That is, unvoiced frames of speech will typically have an energy value which is much lower than that of voiced frames of speech. Thus, in the word "six" the initial allophone /s/ should be normalized with respect to the energy level of the vowel allophone /i/. If the allophone /s/ is normalized with respect to its own energy, then it will be raised to an improperly high energy, and the initial consonant /s/ will be greatly overemphasized.

- Since the falling time constant (corresponding to the parameter beta) is so long, energy normalization at the end of a word will not be distorted by the approximately zero-energy value of the following frames of silence. (In addition, when silence suppression is used, as is preferable, the silence suppression will prevent ENORM from falling very far in this situation). That is, for a final unvoiced consonant, the long time constant corresponding to beta will mean that the energy normalization value ENORM of the silient frames 1/2 second after the end of a word will be still be dominated by the voiced phonemes immediately preceding the final unvoiced consonant. Thus, the final unvoiced constant will be normalized with respect to preceding voiced frames, and its energy also will not be unduly raised.

- Thus, the foregoing steps provide a normalized energy E*(i) for each speech frame i. In the presently preferred embodiment, a further novel step is used to suppress silent periods. As shown in the diagram of Figure 5, silence detection is used to selectively prevent certain frames from being encoded. Those frames which are encoded are encoded with a normalized energy E*(i), together with the remaining speech parameters in the chosen model (which in the presently preferred embodiment are the pitch P and the reflection coefficients kl-klo).

- Silence suppression is accomplished in a further novel aspect of the present invention, by carrying 2 envelope parameters: ELOW and EHIGH. Both of these parameters are started from some initial value (e.g. 100) and then are updated depending on the energy E(i) of each frame i and on the voiced or unvoiced status of that frame. If the frame is unvoiced, then only the lower parameter ELOW is updated as follows:

- If E(i) is greater than ELOW, then ELOW is set equal to gamma times E(i)+(1-gamma) times ELOW;

- otherwise, ELOW is set equal to delta times E(i)+(7-delta) times ELOW,

- where gamme corresponds to a slow rising time constant (typically 1 second), and delta corresponds to a fast falling time constant (typically 0.1 second). Thus, ELOW in effect tracks a lower envelope of the energy contour of EI. The parameters gamma and delta are referred to in the accompanying software as ALOWUP and ALOWDN.

- If the frame I is voiced, then only EHIGH is updated, as follows:

- If E(i) is greater then EHIGH, the EHIGH is set equal to epsilon times E(i)+(1-epsilon) times EHIGH;

- otherwise, EHIGH is set equal to zeta times E(i)+(1-zeta) times EHIGH,

- where epsilon corresponds to a fast rising time constant (typically 0.1 seconds), and zeta corresponds to a fast falling time constant (typically 1 second). Thus, EHIGH tracts an upper envelope of the energy contour. The parameters ELOW and EHIGH are shown in Figure 3. Note that the parameter EHIGH is not updated during the initial unvoiced series of frames, and the parameter ELOW is not disturbed during the following voiced series of frames.

- The 2 envelope parameters ELOW and EHIGH are then used to generate 2 threshold parameters TLOW and THIGH, defined as:

- TLOW=PL times ELOW

- THIGH=PH times EHIGH,

- where PL and PH are scaling factors (e.g. PL=5 and PH=0.2). A threshold T is then set as the maximum of TLOW and THIGH.

- Based on this threshold T, a decision is made whether a frame is nonsilent or silent, as follows:

- If the current frame is a silient frame, all following frames will be tentatively assumed to be silent unless a voiced super-threshold-energy (and therefore nonsilent) frame is deteched. The frames tentatively assumed to be silent will be stored in a buffer (preferable containing at least one second of data), since they may be identified later as not silent. A speech frame is detected only when some frame is found which has a frame energy E(i) greater than the threshold T and which "is voiced. That is, an unvoiced super-threshold-energy frame is not by itself enough to cause a decision that speech has begun. However, once a voiced high energy frame is found, the prior frames in the buffer are reexamined, and all immediately preceding unvoiced frames which have an energy greater than T are then identified as nonsilent frames. Thus, in the sample word "six", the unvoiced super-threshold-energy frames in the constant /s/ would not immediately trigger a decision that a speech signal had begun, but, when the voiced super-threshold-energy frames in the /i/ are detected, the immediately preceding frames are reexamined, and the frames corresponding to t /s/ which have energy greater than T are then also designated as "speech" frames.

- If the current frame is a "speech" (nonsilent) frame, the end of the word (i.e. the beginning of "silent" frames which need not be encoded) is detected as follows. When a voiced frame is found which has its energy E(i) less than T, a waiting counter is started. If the waiting reaches an upper limit (e.g. 0.4 seconds) without the energy ever rising above T, then speech is determined to have stopped, and frames after the last frame which had energy E(i) greater than T are considered to be silent frames. These frames are therefore not encoded.

- It should be noted that the energy normalization and silence suppression features of the system of the present invention are both dependent in important ways on the voicing decision. It is preferable, although not strictly necessary, that the voicing decision be made by means of a dynamic programming procedure which makes pitch and voicing decision simultaneously, using an interrelated distance measure.

- The actual encoding can now be performed with a minimum bit rate. In the presently preferred embodiment, 5 bits are used to encode the energy of each frame, 3 bits are used for each of the ten reflection coefficients, and 5 bits are used for the pitch. However, this bit rate can be further compressed by one of the many variations of delta coding, e.g. by fitting a polynomial to the sequence of parameter values across successive frames and then encoding merely the coefficients of that polynomial, by simple linear delta coding, or by any of the various well known methods.

- In a further attractive contemplated embodiment of the invention, an analysis system as described above is combined with speech synthesis capability, to provide a voice mail station, or a station capable of generating used- generated spoken reminder messages. This combination is easily accomplished with minimal required hardware addition. The encoded output of the analysis section, as described above, is connected to a data channel of some sort. This may be a wire to which an RS 232 UART chip is connected, or may be a telephone line accessed by a modem, or may be simply a local data buss which is also connected to a memory board or memory chips, or may of course be any of a tremendous variety of other data channels. Naturally, connection to any of these normal data channels is easily and conveniently made two way, so that data may be received from a communications channel or recalled from memory. Such data received from the channel will thus contain a plurality of speech parameters, including an energy value.

- In the presently preferred embodiment, where LPC speech modeling is used, the encoded data received from the data channel will contain LPC filter parameters for each speech frame, as well as some excitation information. In the presently preferred embodiment, the data vector for each speech frame contains 10 reflection coefficients as well as pitch and energy. The reflection co-efficients configure a tense-order lattice filter, and an excitation signal is generated from the excitation parameters and provided as input to this lattice filter. For example, where the excitation parameters are pitch and energy, a pulse, at intervals equal to the pitch period, is provided as the excitation function during voiced frames (i.e. during frames when the encoded value of pitch is non zero), and pseudo-random noise is provided as the excitation function when pitch has been encoded as equal to zero (unvoiced frames). In either case, the energy parameter can be used to define the power provided in the excitation function. The output of the lattice filter provides the LPC-modeled synthetic signal, which will typically be of good intelligible quality, although not absolutely transparent. This output is then digital-to-analog converted, and the analogue output of the d-a converter is provided to an audio amplifier, which drives a loudspeaker or headphones.

- In a further attractive alternative embodiment of the present invention, such a voice mail system is configured in a microcomputer-based system. This configuration uses a 8088-based system, together with a special board having a TMS 320 numeric processure chip mounted thereon. The fast multiple provided by the TMS 320 is very convenient in performing signal processing functions. A pair of audio amplifiers for input and output is also provided on the speech board, as is an 8 bit mu-law codec. The function of this embodiment is essentially identical to that of the VAX embodiment described above, except for a slight difference regarding the converters. The 8 bit codec performs mu-law conversion, which is non linear but provides enhanced dynamic range. A lookup table is used to transform the 8 bit mu-law output provided from the codec chip into a 13 bit linear output. Similarly, in a speech synthesis operation, the linear output of the lattice filter operation is pre-converted, using the same lookup table, to an 8-bit word which will give an appropriate analog output signal from the codec. This microcomputer embodiment also includes an internal speaker, and a microphone jack.

- A further preferred realization is the use of multiple micro-computer based voice mail stations, as described above, to configure a microcomputer-based voice mail system. In such a system, microcomputers are conventionally connected in a local area network, using one of the many conventional LAN protoacalls, or are connected using PBX tilids. The only slightly distinctive feature of this voice mail system embodiment is that the transfer mechanism used must be able to pass binary data, and not merely ASCII data. As between microcomputer stations which have the voice mail analysis/synthesis capabilities discussed above, the voice mail operation is simply a straight forward file transfer, wherein a file representing encoded speech data is generated by an analysis operation at one station, is transferrd as a file to another station, and then is converted to analog speech data by a synthesis operation at the second station.

- Thus, the crucial changes taught by the present invention are changes in the analysis portion of an analysis/synthesis system, but these changes affect the system as a whole. That is, the system as a whole will achieve higher throughput of intelligible speech information per transmitted bit, better perceptual quality of synthesized sound at the synthesis section, and other system-level advantages. In particular, microcomputer network voice mail systems perform better with minimized channel loading according to the present invention.

- Thus, the present invention provides the objects described above, of energy normalization and of silent suppression, as well as other objects, advantageously.

Claims (15)

Applications Claiming Priority (4)

| Application Number | Priority Date | Filing Date | Title |

|---|---|---|---|

| US541497 | 1983-10-13 | ||

| US541410 | 1983-10-13 | ||

| US06/541,497 US4696039A (en) | 1983-10-13 | 1983-10-13 | Speech analysis/synthesis system with silence suppression |

| US06/541,410 US4696040A (en) | 1983-10-13 | 1983-10-13 | Speech analysis/synthesis system with energy normalization and silence suppression |

Publications (2)

| Publication Number | Publication Date |

|---|---|

| EP0140249A1 EP0140249A1 (en) | 1985-05-08 |

| EP0140249B1 true EP0140249B1 (en) | 1988-08-10 |

Family

ID=27066699

Family Applications (1)

| Application Number | Title | Priority Date | Filing Date |

|---|---|---|---|

| EP19840112266 Expired EP0140249B1 (en) | 1983-10-13 | 1984-10-12 | Speech analysis/synthesis with energy normalization |

Country Status (3)

| Country | Link |

|---|---|

| EP (1) | EP0140249B1 (en) |

| JP (1) | JPH0644195B2 (en) |

| DE (1) | DE3473373D1 (en) |

Families Citing this family (19)

| Publication number | Priority date | Publication date | Assignee | Title |

|---|---|---|---|---|

| FR2631147B1 (en) * | 1988-05-04 | 1991-02-08 | Thomson Csf | METHOD AND DEVICE FOR DETECTING VOICE SIGNALS |

| KR960005741B1 (en) * | 1990-05-28 | 1996-05-01 | 마쯔시다덴기산교 가부시기가이샤 | Voice signal coding system |

| FR2686183A1 (en) * | 1992-01-15 | 1993-07-16 | Idms Sa | System for digitising an audio signal, implementation method and device for compiling a digital database |

| JP3484757B2 (en) * | 1994-05-13 | 2004-01-06 | ソニー株式会社 | Noise reduction method and noise section detection method for voice signal |

| US5991718A (en) * | 1998-02-27 | 1999-11-23 | At&T Corp. | System and method for noise threshold adaptation for voice activity detection in nonstationary noise environments |

| US6314396B1 (en) * | 1998-11-06 | 2001-11-06 | International Business Machines Corporation | Automatic gain control in a speech recognition system |

| US6889186B1 (en) | 2000-06-01 | 2005-05-03 | Avaya Technology Corp. | Method and apparatus for improving the intelligibility of digitally compressed speech |

| GB2367467B (en) | 2000-09-30 | 2004-12-15 | Mitel Corp | Noise level calculator for echo canceller |

| CN1867965B (en) * | 2003-10-16 | 2010-05-26 | Nxp股份有限公司 | Voice activity detection with adaptive noise floor tracking |

| US7660715B1 (en) | 2004-01-12 | 2010-02-09 | Avaya Inc. | Transparent monitoring and intervention to improve automatic adaptation of speech models |

| US7529670B1 (en) | 2005-05-16 | 2009-05-05 | Avaya Inc. | Automatic speech recognition system for people with speech-affecting disabilities |

| US7653543B1 (en) | 2006-03-24 | 2010-01-26 | Avaya Inc. | Automatic signal adjustment based on intelligibility |

| US7925508B1 (en) | 2006-08-22 | 2011-04-12 | Avaya Inc. | Detection of extreme hypoglycemia or hyperglycemia based on automatic analysis of speech patterns |

| US7962342B1 (en) | 2006-08-22 | 2011-06-14 | Avaya Inc. | Dynamic user interface for the temporarily impaired based on automatic analysis for speech patterns |

| US7675411B1 (en) | 2007-02-20 | 2010-03-09 | Avaya Inc. | Enhancing presence information through the addition of one or more of biotelemetry data and environmental data |

| US8041344B1 (en) | 2007-06-26 | 2011-10-18 | Avaya Inc. | Cooling off period prior to sending dependent on user's state |

| US9443529B2 (en) | 2013-03-12 | 2016-09-13 | Aawtend, Inc. | Integrated sensor-array processor |

| US10204638B2 (en) | 2013-03-12 | 2019-02-12 | Aaware, Inc. | Integrated sensor-array processor |

| US10049685B2 (en) | 2013-03-12 | 2018-08-14 | Aaware, Inc. | Integrated sensor-array processor |

Family Cites Families (9)

| Publication number | Priority date | Publication date | Assignee | Title |

|---|---|---|---|---|

| AT347504B (en) * | 1975-04-18 | 1978-12-27 | Siemens Ag Oesterreich | DEVICE FOR AUTOMATIC VOLUME CONTROL |

| US4071695A (en) * | 1976-08-12 | 1978-01-31 | Bell Telephone Laboratories, Incorporated | Speech signal amplitude equalizer |

| US4280192A (en) * | 1977-01-07 | 1981-07-21 | Moll Edward W | Minimum space digital storage of analog information |

| FR2380612A1 (en) * | 1977-02-09 | 1978-09-08 | Thomson Csf | SPEECH SIGNAL DISCRIMINATION DEVICE AND ALTERNATION SYSTEM INCLUDING SUCH A DEVICE |

| US4351983A (en) * | 1979-03-05 | 1982-09-28 | International Business Machines Corp. | Speech detector with variable threshold |

| FR2451680A1 (en) * | 1979-03-12 | 1980-10-10 | Soumagne Joel | SPEECH / SILENCE DISCRIMINATOR FOR SPEECH INTERPOLATION |

| FR2466825A1 (en) * | 1979-09-28 | 1981-04-10 | Thomson Csf | DEVICE FOR DETECTING VOICE SIGNALS AND ALTERNAT SYSTEM COMPRISING SUCH A DEVICE |

| CA1147071A (en) * | 1980-09-09 | 1983-05-24 | Northern Telecom Limited | Method of and apparatus for detecting speech in a voice channel signal |

| JPS58171099A (en) * | 1982-03-31 | 1983-10-07 | 富士通株式会社 | Correction of voice parameter |

-

1984

- 1984-10-12 DE DE8484112266T patent/DE3473373D1/en not_active Expired

- 1984-10-12 EP EP19840112266 patent/EP0140249B1/en not_active Expired

- 1984-10-13 JP JP59215061A patent/JPH0644195B2/en not_active Expired - Lifetime

Also Published As

| Publication number | Publication date |

|---|---|

| EP0140249A1 (en) | 1985-05-08 |

| DE3473373D1 (en) | 1988-09-15 |

| JPH0644195B2 (en) | 1994-06-08 |

| JPS60107700A (en) | 1985-06-13 |

Similar Documents

| Publication | Publication Date | Title |

|---|---|---|

| US4696040A (en) | Speech analysis/synthesis system with energy normalization and silence suppression | |

| US4696039A (en) | Speech analysis/synthesis system with silence suppression | |

| EP0140249B1 (en) | Speech analysis/synthesis with energy normalization | |

| EP1159736B1 (en) | Distributed voice recognition system | |

| US6092039A (en) | Symbiotic automatic speech recognition and vocoder | |

| US6889186B1 (en) | Method and apparatus for improving the intelligibility of digitally compressed speech | |

| EP0785541B1 (en) | Usage of voice activity detection for efficient coding of speech | |

| GB2327835A (en) | Improving speech intelligibility in noisy enviromnment | |

| EP0814458A2 (en) | Improvements in or relating to speech coding | |

| JPH09152895A (en) | Measuring method for perception noise masking based on frequency response of combined filter | |

| US5706392A (en) | Perceptual speech coder and method | |

| Marques et al. | Harmonic coding at 4.8 kb/s | |

| JPH08254994A (en) | Reconfiguration of arrangement of sound coded parameter by list (inventory) of sorting and outline | |

| KR100498177B1 (en) | Signal quantizer | |

| JPH07199997A (en) | Processing method of sound signal in processing system of sound signal and shortening method of processing time in itsprocessing | |

| GB2336978A (en) | Improving speech intelligibility in presence of noise | |

| JP2003323200A (en) | Gradient descent optimization of linear prediction coefficient for speech coding | |

| JPH0345839B2 (en) | ||

| Holmes | Towards a unified model for low bit-rate speech coding using a recognition-synthesis approach. | |

| Xydeas | Differential encoding techniques applied to speech signals | |

| Babu et al. | Performance analysis of hybrid model of robust automatic continuous speech recognition system | |

| Yegnanarayana | Effect of noise and distortion in speech on parametric extraction | |

| Van Schalkwyk et al. | Linear predictive speech coding at 2400 b/s | |

| JPH0414813B2 (en) | ||

| JP2847730B2 (en) | Audio coding method |

Legal Events

| Date | Code | Title | Description |

|---|---|---|---|

| PUAI | Public reference made under article 153(3) epc to a published international application that has entered the european phase |

Free format text: ORIGINAL CODE: 0009012 |

|

| AK | Designated contracting states |

Designated state(s): DE FR GB |

|

| 17P | Request for examination filed |

Effective date: 19851023 |

|

| 17Q | First examination report despatched |

Effective date: 19861212 |

|

| GRAA | (expected) grant |

Free format text: ORIGINAL CODE: 0009210 |

|

| AK | Designated contracting states |

Kind code of ref document: B1 Designated state(s): DE FR GB |

|

| REF | Corresponds to: |

Ref document number: 3473373 Country of ref document: DE Date of ref document: 19880915 |

|

| ET | Fr: translation filed | ||

| PLBE | No opposition filed within time limit |

Free format text: ORIGINAL CODE: 0009261 |

|

| STAA | Information on the status of an ep patent application or granted ep patent |

Free format text: STATUS: NO OPPOSITION FILED WITHIN TIME LIMIT |

|

| 26N | No opposition filed | ||

| REG | Reference to a national code |

Ref country code: GB Ref legal event code: IF02 |

|

| PGFP | Annual fee paid to national office [announced via postgrant information from national office to epo] |

Ref country code: GB Payment date: 20030915 Year of fee payment: 20 |

|

| PGFP | Annual fee paid to national office [announced via postgrant information from national office to epo] |

Ref country code: FR Payment date: 20031003 Year of fee payment: 20 |

|

| PGFP | Annual fee paid to national office [announced via postgrant information from national office to epo] |

Ref country code: DE Payment date: 20031031 Year of fee payment: 20 |

|

| PG25 | Lapsed in a contracting state [announced via postgrant information from national office to epo] |

Ref country code: GB Free format text: LAPSE BECAUSE OF EXPIRATION OF PROTECTION Effective date: 20041011 |

|

| REG | Reference to a national code |

Ref country code: GB Ref legal event code: PE20 |